Abstract

Objective

Outbreak detection and disease control may be improved by simplified, semi-automated reporting of notifiable diseases to public health authorities. The objective of this study was to determine the effect of an electronic, prepopulated notifiable disease report form on case reporting rates by ambulatory care clinics to public health authorities.

Methods

We conducted a 2-year (2012-2014) controlled before-and-after trial of a health information exchange (HIE) intervention in Indiana designed to prepopulate notifiable disease reporting forms and deliver them to providers. We analyzed data collected from electronic prepopulated reports and “usual care” (paper, fax) reports submitted to a local health department for 7 conditions by using a difference-in-differences model. Primary outcomes were changes in reporting rates, completeness, and timeliness between intervention and control clinics.

Results

Provider reporting rates for chlamydia and gonorrhea in intervention clinics increased significantly from 56.9% and 55.6%, respectively, during the baseline period (2012) to 66.4% and 58.3%, respectively, during the intervention period (2013-2014); they decreased from 28.8% and 27.5%, respectively, to 21.7% and 20.6%, respectively, in control clinics (P < .001). Completeness improved from baseline to intervention for 4 of 15 fields in reports from intervention clinics (P < .001), although mean completeness improved for 11 fields in both intervention and control clinics. Timeliness improved for both intervention and control clinics; however, reports from control clinics were timelier (mean, 7.9 days) than reports from intervention clinics (mean, 9.7 days).

Conclusions

Electronic, prepopulated case reporting forms integrated into providers’ workflow, enabled by an HIE network, can be effective in increasing notifiable disease reporting rates and completeness of information. However, it was difficult to assess the effect of using the forms for diseases with low prevalence (eg, salmonellosis, histoplasmosis).

Surveillance is the cornerstone of public health practice. 1,2 Traditionally, health departments wait for hospital, laboratory, or clinic staff members to initiate most case reports. 3 However, passive approaches can be burdensome for reporters, resulting in incomplete and delayed reports, which can hinder assessment of disease in the community and potentially delay recognition of patterns and outbreaks. 4-6

Modern surveillance practice is shifting toward electronic transmission of disease information. The adoption of electronic health record (EHR) systems and health information exchange (HIE) among clinical organizations, 7-9 driven by policies such as the meaningful use program, 10 is creating an information infrastructure that public health authorities (PHAs) seek to leverage for improving surveillance practice. 11-14

According to the Centers for Disease Control and Prevention (CDC), health departments currently receive up to 67% of total laboratory-based reports for notifiable diseases electronically. 13 However, provider-based case reporting continues to be largely paper-based via fax machines, 15,16 except in a few jurisdictions, such as Massachusetts, where the health department has the capability to query EHR systems. 17 Yet even systems such as the one in Massachusetts have limitations: they may support only a few conditions, and they may require providers to spend substantial time and resources on electronic connectivity.

Policy makers who designed the current iteration of the meaningful use requirements 18 envision that EHR systems and HIE networks will facilitate electronic exchange of data on treatment, corollary results, and other details from providers that are not available from laboratory information systems. When this vision is fully realized, providers could receive automatically generated electronic case reporting (eCR) forms through their EHR system, which could be completed and sent to PHAs for case investigation. Currently, eligible providers can elect to initiate submission of eCR forms to PHAs as part of the Stage 3 meaningful use program. 19

Before the release of the eCR criteria for meaningful use, we conducted a trial to design, implement, and evaluate a decision support system that could leverage an existing electronic HIE network to facilitate generation of eCR forms. Our goal was to improve case reporting rates, completeness of case report information, and timeliness of case reports submitted by primary care providers to PHAs. We previously published details of the trial design 20 and the baseline rates of case reporting among providers and laboratories in our community. 21

The objective of this study was to determine the effect of an electronic, prepopulated notifiable disease report form on case reporting rates, completeness of data in submitted case reports, and timeliness of case reports by ambulatory care clinics to a PHA.

Methods

We performed a controlled before-and-after trial to examine the effect of an informatics intervention on case reporting, completeness, and timeliness for 7 representative diseases of varied incidence and consequence commonly investigated by local PHA staff members in Indiana. These diseases, all notifiable, were chlamydia, gonorrhea, hepatitis B, hepatitis C, histoplasmosis, salmonellosis, and syphilis. As in most states, providers and laboratories in Indiana are required to report each notifiable disease case they encounter.

System Context and Description

The intervention was implemented in the context of the Indiana Health Information Exchange, a large HIE network that delivers laboratory results, radiology results, and other clinical messages to more than 25 000 providers. 22,23 Using components within the HIE infrastructure, we developed a decision support system that prepopulates the official Indiana State Department of Health communicable disease reporting form with standard information for a notifiable disease case. A case is triggered when an electronic laboratory result message is examined by the Notifiable Condition Detector, 24,25 a system that inspects all incoming laboratory messages for potential cases. Using rules developed in partnership with the PHA, the detector determines whether a given laboratory result meets state criteria.

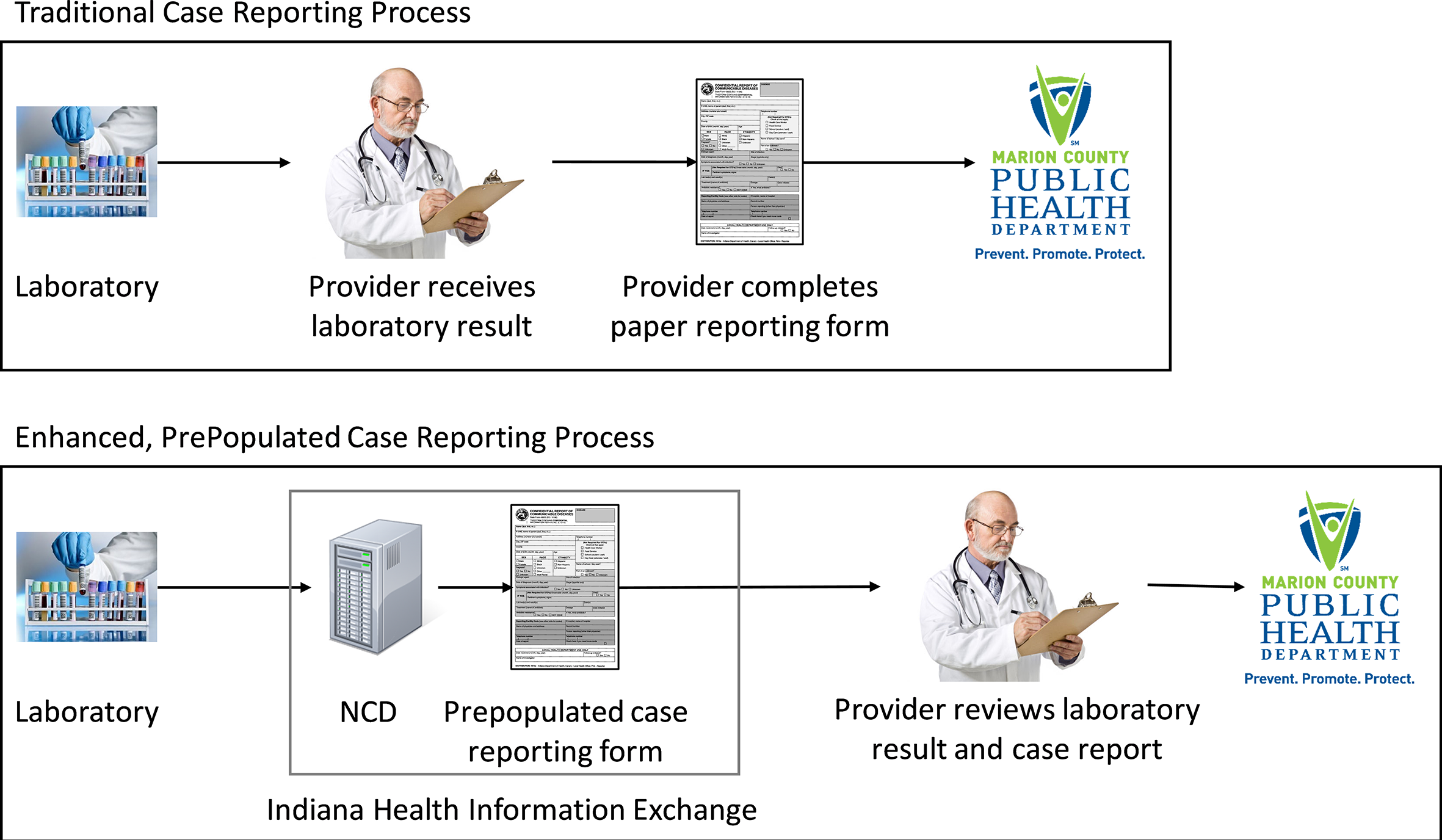

Once triggered by the detector, the system extracts data on the patient’s demographic characteristics, confirmatory test results, and provider information from the laboratory message, as well as from the patient’s medical records stored in the HIE. The system then electronically delivers the prepopulated form to an ambulatory care clinic using the HIE network (Figure). The report is delivered as a PDF document suitable for viewing in an EHR system or web browser. The prepopulated form acts as a clinical reminder that the provider has a notifiable condition to be reported to a PHA. After the provider or a clinic staff member reviews the form, it can be submitted to the PHA, usually by fax. Clinic staff members may edit prepopulated fields or add information in blank fields of the form. A detailed description of the intervention and study protocol is available elsewhere. 20

Figure. Comparison of the traditional, paper-based case reporting process (top) and the enhanced, prepopulated case reporting process (bottom) tested as the intervention in a trial, Marion County Public Health Department, Indianapolis, Indiana, 2010-2016. In the former process, the provider receives a result from the laboratory and manually completes a case reporting form that is then submitted to the local health department. In the latter process, the intervention, deployed within a health information exchange, detects the positive laboratory test result, prepopulates the case reporting form, and delivers both the laboratory result and the case report to the provider for review. Abbreviation: NCD, Notifiable Condition Detector.

Implementation and Site Information

The intervention was implemented in 7 ambulatory care clinics in central Indiana. Of the 228 health care providers in these 7 clinics, 94.3% (n = 215) were physicians and 4.8% (n = 11) were nurse practitioners. Sites were purposefully selected for their varied characteristics. Four sites provided primary care regardless of age or sex; 1 site specialized in primary care for adolescent girls and young women aged 13-19 years, especially sexually active adolescents; 1 clinic specialized in primary care for adults aged ≥18 years; and 1 clinic specialized in primary care for women. Six clinics were located in an urban, metropolitan setting. All but 1 clinic used fax machines to transmit notifiable disease reports to the county PHA. Control clinics included all other outpatient settings electronically connected to the HIE network during the study period (n = 312).

Data Sources and Collection Methods

To evaluate the effect of the system on case reporting, we gathered information from all electronic and paper-based notifiable disease reports submitted to the Marion County Public Health Department (MCPHD) in Indianapolis, Indiana (2015 population of 939 020). Provider reports are typically faxed to MCPHD by nurses or other nonphysician personnel in clinics and hospitals, including infection preventionists who are tasked with reporting notifiable disease information for integrated health systems. 26 The combined count of all cases submitted to MCPHD from all sources served as the denominator of reported cases occurring in the jurisdiction during the study period.

We collected baseline data before the start of the intervention. To ensure reasonable power, we varied the period for collecting baseline data for each disease according to the prevalence of each disease. We gathered reports for highly prevalent diseases (chlamydia and gonorrhea) during a 3-month period (May through July 2012), moderately prevalent diseases during a 6- to 8-month period (syphilis, December 2011 through July 2012; hepatitis C, February 2012 to July 2012), and less prevalent diseases (histoplasmosis, salmonellosis, and hepatitis B) during a 2-year period (August 2010 through July 2012).

The first intervention period occurred from October 7, 2013, through March 15, 2014, during which clinic start dates were staggered. After a 6-month washout period, a second intervention period occurred from September 15, 2014, through June 12, 2016. During this second intervention period, prepopulated case reporting forms included not only standard information but also corollary test results (eg, liver enzymes for hepatitis C patients) as well as data on symptoms, when present in the patient’s HIE-based medical records, for some diseases (eg, jaundice for hepatitis B). Each report was flagged as to whether it was submitted to MCPHD during an intervention period (ie, by a clinic during a time when the intervention was active for that clinic) or nonintervention period. Reports from all control clinics were considered nonintervention reports.
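The flagging rule described above can be sketched in a few lines of Python. This is a minimal illustration, not the study's actual procedure: the two intervention windows come from the text, whereas the clinic identifiers and the staggered start dates are hypothetical placeholders, because the text states only that start dates were staggered.

```python
from datetime import date

# Intervention windows as stated in the text.
INTERVENTION_PERIODS = [
    (date(2013, 10, 7), date(2014, 3, 15)),
    (date(2014, 9, 15), date(2016, 6, 12)),
]

# Hypothetical clinic identifiers and staggered start dates (assumed for
# illustration only; the actual start dates are not given in the text).
CLINIC_START = {
    "clinic_A": date(2013, 10, 7),
    "clinic_B": date(2013, 11, 4),
}


def is_intervention_report(clinic_id, submitted):
    """Return True if the report was submitted while the intervention
    was active for the submitting clinic."""
    start = CLINIC_START.get(clinic_id)
    if start is None:
        # Control clinics: every report is a nonintervention report.
        return False
    if submitted < start:
        # The clinic had not yet started the intervention.
        return False
    # Active only inside one of the two intervention windows,
    # which excludes the washout period between them.
    return any(lo <= submitted <= hi for lo, hi in INTERVENTION_PERIODS)
```

Under these assumptions, a report from an intervention clinic submitted during the washout period, or before that clinic's own start date, is treated as a nonintervention report.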

We grouped reports into unique cases (disease episodes) for the same patient by using CDC case definitions. 27 Given the lack of a master person index at the health department, 28 we linked available patient identifiers (eg, first name, last name, sex, date of birth, telephone number) by using probabilistic record linkage, with some human review to resolve questionable matches, to create unique patient identifiers. 29 A case therefore consisted of a set of reports for the same patient for the same disease episode.
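For readers unfamiliar with probabilistic record linkage, the following Python sketch shows the general idea in the Fellegi-Sunter style: each compared field contributes an agreement or disagreement weight, and the summed weight is classified as a match, a non-match, or a pair for human review. It is not the linkage software used in the study. The m- and u-probabilities, the thresholds, and the field keys are all assumed values chosen for illustration.

```python
import math

# Assumed (hypothetical) probabilities per field:
# m = P(field agrees | same patient), u = P(field agrees | different patients).
FIELD_PROBS = {
    "first_name": (0.95, 0.01),
    "last_name": (0.95, 0.005),
    "sex": (0.98, 0.5),
    "date_of_birth": (0.97, 0.001),
    "telephone": (0.80, 0.0005),
}


def match_weight(rec_a, rec_b):
    """Sum log2 likelihood-ratio weights over the fields present in both records."""
    total = 0.0
    for field, (m, u) in FIELD_PROBS.items():
        a, b = rec_a.get(field), rec_b.get(field)
        if a is None or b is None:
            continue  # a missing value provides no evidence either way
        if a == b:
            total += math.log2(m / u)              # agreement weight (positive)
        else:
            total += math.log2((1 - m) / (1 - u))  # disagreement weight (negative)
    return total


def classify(weight, lower=0.0, upper=15.0):
    """Assumed thresholds: pairs between them are routed to human review."""
    if weight >= upper:
        return "match"
    if weight <= lower:
        return "non-match"
    return "review"
```

Pairs classified as "review" correspond to the questionable matches that, in the study, were resolved by a person.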

Health department staff members extracted the information necessary for case investigation from each report and collated each report by disease. Trainees in public health informatics manually extracted the data and cross-validated them for accuracy. The process of cross-validation consisted of 1 trainee verifying data entered by another trainee. Data fields were recorded as blank when information was absent. Trainees entered dates as they appeared, either timestamped (ie, automatically by information systems) or handwritten onto reports, even when the received date at the public health agency occurred before the date the laboratory test was performed or the diagnosis was recorded. We chose to enter dates as they appeared because these inconsistencies in data reflect actual data in real-world case files. Although we captured data on these discrepancies, we excluded from analyses reports that showed negative time differences and values outside of expected ranges.

Data Analysis Methods

We calculated reporting rates by dividing the number of unique cases with at least 1 provider report by the total number of unique cases submitted to MCPHD. Provider reports, for the purpose of analysis, included both the official forms created by the state health agency for use by providers and other documentation (eg, scanned medical records) faxed to the health department from clinics. Some cases consisted of multiple provider reports, such as when infection control staff members at a hospital and an ambulatory care clinic both submitted a report. We measured completeness as the percentage of data fields containing values at the individual report level. Public health practitioners selected the fields for analysis as being critical for case investigation and reporting to state health authorities and CDC. We expected each case report to contain this minimum set of data.
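The two measures defined above reduce to simple proportions. The Python sketch below illustrates them under assumed data structures: a case is a dictionary with a hypothetical `provider_reports` count, and a report is a dictionary keyed by hypothetical field names. It is not the study's analysis code.

```python
def is_blank(value):
    """Treat missing values and empty or whitespace-only strings as blank."""
    return value is None or str(value).strip() == ""


def reporting_rate(cases):
    """Share of unique cases that have at least 1 provider report."""
    if not cases:
        return 0.0
    with_provider = sum(1 for c in cases if c["provider_reports"] >= 1)
    return with_provider / len(cases)


def report_completeness(report, fields):
    """Percentage of the selected fields that contain a value in one report."""
    filled = sum(1 for f in fields if not is_blank(report.get(f)))
    return 100.0 * filled / len(fields)


def field_completeness(reports, field):
    """Percentage of reports in which a single field contains a value."""
    filled = sum(1 for r in reports if not is_blank(r.get(field)))
    return 100.0 * filled / len(reports)
```

The first function corresponds to the reporting rate, the second to completeness at the individual report level, and the third to the per-field view used when completeness is compared separately for each field.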

Measurement of timeliness focused on the difference, in calendar days, between the test result date and either the date the case was reported by the provider or, if the report date was missing on the submitted form, the date the report was received by the local PHA (eg, ink stamp applied by staff member). A single case in which the received date was recorded as occurring before the date of the laboratory test was removed before analysis.
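The timeliness rule, including the fallback to the received date and the exclusion of negative intervals, can be expressed as a short Python function. The function and parameter names are illustrative assumptions, not names used in the study.

```python
from datetime import date


def days_to_report(test_result_date, report_date=None, received_date=None):
    """Calendar days from the test result date to the provider's report date,
    falling back to the date the PHA received the report when the report
    date is missing. Returns None when no interval can be computed or when
    the interval is negative (such records were excluded from analysis)."""
    end = report_date if report_date is not None else received_date
    if end is None:
        return None
    delta = (end - test_result_date).days
    if delta < 0:
        return None
    return delta
```

For example, a test result dated January 2 with a provider report dated January 12 of the same year yields an interval of 10 days.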

We adapted from our previous work the methods for establishing reporting rates, completeness of report data elements, and the timeliness of reports. 21,30,31 To estimate the effect of the intervention on reporting rates, we used a difference-in-differences (DID) design. The DID statistical technique compared reporting rates between the intervention clinics and the control clinics (all nonintervention sites that reported ≥1 disease to the local health department) by fitting a binomial generalized linear model (GLM) with a logit link function. We employed the NLEstimate macro from SAS using the estimated model parameters and their variance-covariance matrix. 32 The DID approach removes biases in post-intervention period comparisons between treatment and control groups that could result from permanent differences between those groups as well as biases from comparisons over time in the treatment group that could be the result of trends due to other causes of the outcome. 33
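To make the DID contrast concrete, the Python sketch below computes it from 4 cell counts, on both the probability scale and the logit scale. In a binomial logit model containing only group, period, and their interaction, the interaction coefficient equals the logit-scale contrast computed here. The counts are the pooled, all-disease counts reported in the Results (189 of 881, 261 of 507, 584 of 4729, and 994 of 9939); because the study stratified its DID models by disease and fit them in SAS, the output is illustrative and does not reproduce the published estimates or their standard errors.

```python
import math


def logit(p):
    """Log odds of a proportion strictly between 0 and 1."""
    return math.log(p / (1 - p))


def did(counts):
    """counts[group][period] = (cases with >=1 provider report, total cases).
    Returns the difference-in-differences on the probability scale and on
    the logit scale."""
    rate = {
        group: {period: r / n for period, (r, n) in periods.items()}
        for group, periods in counts.items()
    }
    iv, ct = rate["intervention"], rate["control"]
    did_prob = (iv["post"] - iv["pre"]) - (ct["post"] - ct["pre"])
    did_logit = (logit(iv["post"]) - logit(iv["pre"])) - (
        logit(ct["post"]) - logit(ct["pre"])
    )
    return did_prob, did_logit


# Pooled counts across all diseases, taken from the Results.
counts = {
    "intervention": {"pre": (189, 881), "post": (261, 507)},
    "control": {"pre": (584, 4729), "post": (994, 9939)},
}

did_prob, did_logit = did(counts)
print(f"DID (percentage points): {100 * did_prob:.1f}")
print(f"DID (log odds ratio):    {did_logit:.2f}")
```

Subtracting the change in control clinics from the change in intervention clinics is what removes time trends shared by both groups, which is the rationale given above for the DID design.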

Because the prevalence of each disease varied, we stratified comparisons of reporting rates by disease. Only chlamydia, gonorrhea, and hepatitis C were reported at a high enough frequency during the intervention periods for robust statistical comparisons.

We evaluated the difference in completeness, separately for each field, by fitting a binomial GLM with logit link function. We evaluated the difference in timeliness, measured as a count of days, between the date the laboratory test was performed and the date the result was reported to a PHA by fitting a negative binomial GLM with a log link function. For completeness and timeliness, we accounted for the potential clustering effect of multiple reports for the same case by using the NLEstimate macro, which employs generalized estimating equations. We performed all analyses using SAS version 9.4 34 with a threshold for significance at .05. This study received ethics approval from the institutional review board at Indiana University.

Results

We identified 16 056 unique cases across all periods. Of these, the 7 intervention clinics reported 1388 cases (8.6% of 16 056 cases). The most prevalent diseases reported by intervention clinics during intervention periods were chlamydia (65.1%; 330 of 507 cases) followed by hepatitis C (13.6%; 69 of 507 cases) and gonorrhea (11.8%; 60 of 507 cases) (Table 1). The intervention clinics did not report syphilis during either intervention period.

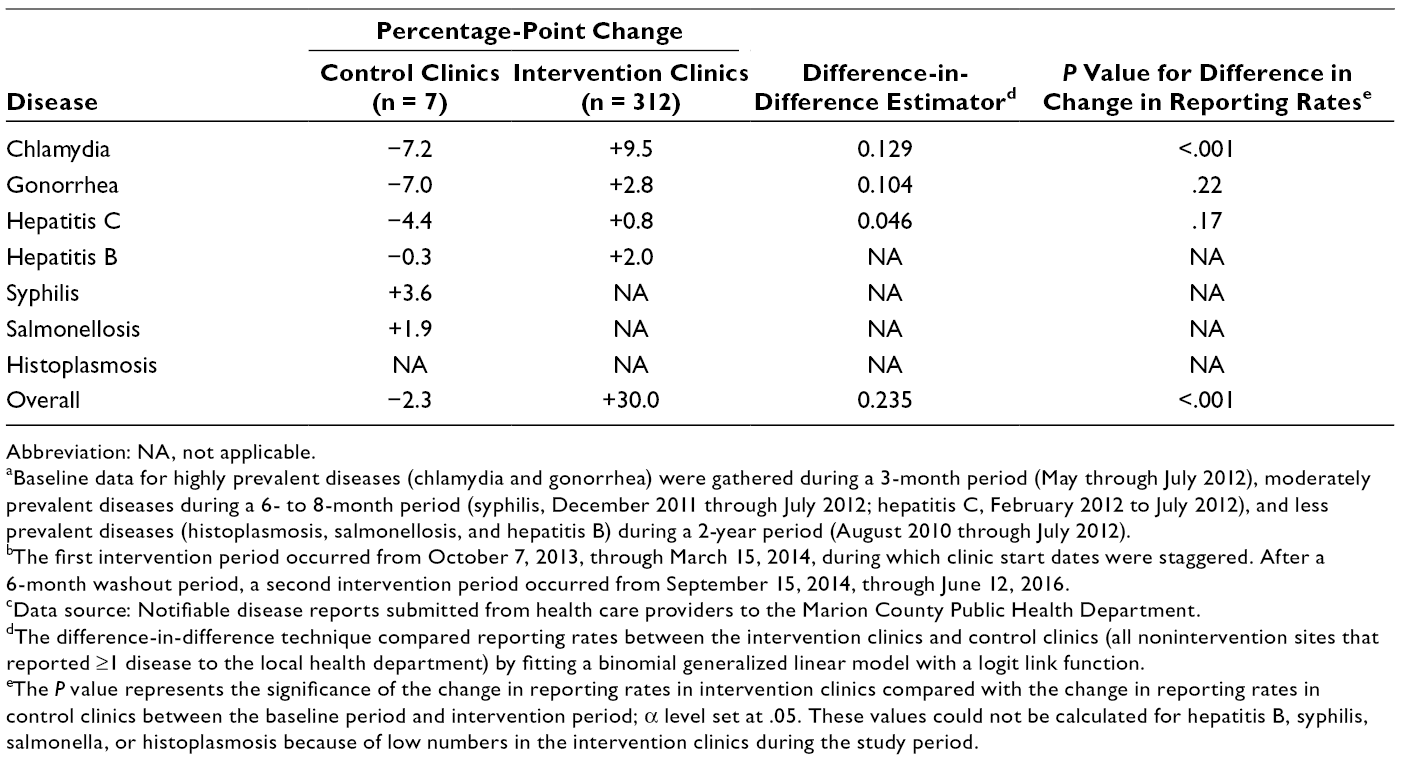

Table 1. Reporting rates during baseline period and study period stratified by notifiable disease and source of reports during various periods, Marion County Public Health Department, Indianapolis, Indiana, 2010-2016ᵃ

Abbreviation: NA, not applicable.

ᵃ Data source: Notifiable disease reports submitted from health care providers to the Marion County Public Health Department.

ᵇ Baseline data for highly prevalent diseases (chlamydia and gonorrhea) were gathered during a 3-month period (May through July 2012), moderately prevalent diseases during a 6- to 8-month period (syphilis, December 2011 through July 2012; hepatitis C, February 2012 to July 2012), and less prevalent diseases (histoplasmosis, salmonellosis, and hepatitis B) during a 2-year period (August 2010 through July 2012).

ᶜ The first intervention period occurred from October 7, 2013, through March 15, 2014, during which clinic start dates were staggered. After a 6-month washout period, a second intervention period occurred from September 15, 2014, through June 12, 2016.

ᵈ As in most states, providers in Indiana are required to report all notifiable diseases to the local public health authority.

ᵉ The combined count of all cases submitted to the Marion County Public Health Department from all sources in the jurisdiction during the study period.

Proportion of Reported Cases With at Least 1 Provider Report

Of all cases submitted to MCPHD across all periods, 12.6% (2028 of 16 056 cases) contained at least 1 report from a provider (Table 1). The reporting rate for intervention clinics was significantly higher for cases submitted during intervention periods (51.5%; 261 of 507) than for cases submitted at baseline (21.5%; 189 of 881) and for cases submitted by control clinics at baseline (12.3%; 584 of 4729) and during intervention periods (10.0%; 994 of 9939) (P < .001). Of the 261 cases submitted from intervention clinics during intervention periods, 197 (75.5%) cases included prepopulated forms generated by the information system.

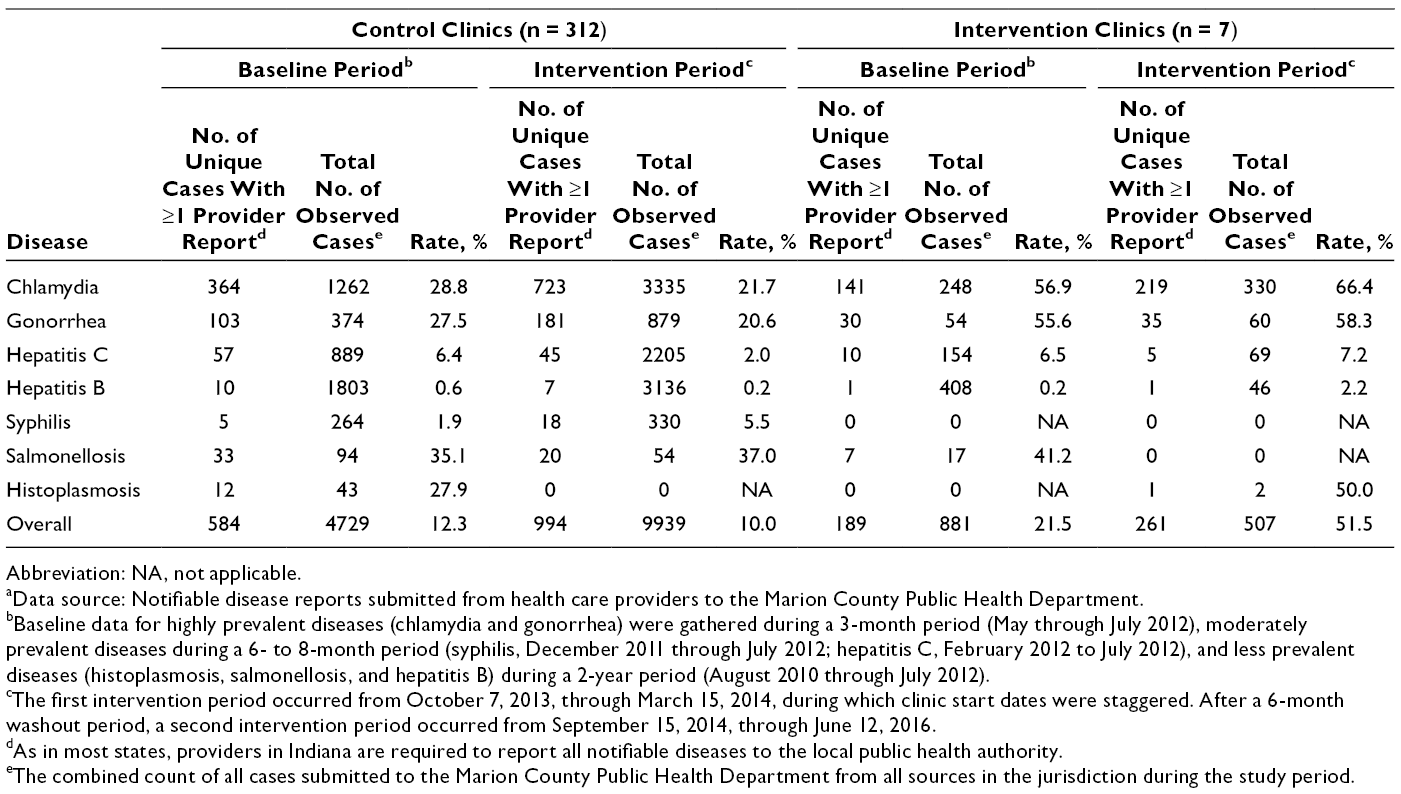

Reporting rates increased from baseline to intervention for chlamydia (from 56.9% to 66.4%), gonorrhea (from 55.6% to 58.3%), and hepatitis C (from 6.5% to 7.2%) in intervention clinics (Table 1). The increases were 9.5 percentage points for chlamydia, 2.8 percentage points for gonorrhea, and 0.8 percentage points for hepatitis C (Table 2). However, only the increase for chlamydia was significant (P < .001). Reporting rates for all 3 diseases decreased in control clinics during the same period (Table 2). Although the reporting rate for hepatitis B increased in intervention clinics from baseline to intervention (from 0.2% to 2.2%) (Table 2), small numbers of cases prevented statistical comparison. Reporting rates for syphilis and salmonellosis increased in control clinics from baseline to intervention: by 3.6 percentage points for syphilis and 1.9 percentage points for salmonellosis. Changes in rates for these diseases in intervention clinics could not be detected because of a lack of observable cases.

Abbreviation: NA, not applicable.

ᵃ Baseline data for highly prevalent diseases (chlamydia and gonorrhea) were gathered during a 3-month period (May through July 2012), moderately prevalent diseases during a 6- to 8-month period (syphilis, December 2011 through July 2012; hepatitis C, February 2012 to July 2012), and less prevalent diseases (histoplasmosis, salmonellosis, and hepatitis B) during a 2-year period (August 2010 through July 2012).

ᵇ The first intervention period occurred from October 7, 2013, through March 15, 2014, during which clinic start dates were staggered. After a 6-month washout period, a second intervention period occurred from September 15, 2014, through June 12, 2016.

ᶜ Data source: Notifiable disease reports submitted from health care providers to the Marion County Public Health Department.

ᵈ The difference-in-differences technique compared reporting rates between the intervention clinics and control clinics (all nonintervention sites that reported ≥1 disease to the local health department) by fitting a binomial generalized linear model with a logit link function.

ᵉ The P value represents the significance of the change in reporting rates in intervention clinics compared with the change in reporting rates in control clinics between the baseline period and intervention period; α level set at .05. These values could not be calculated for hepatitis B, syphilis, salmonellosis, or histoplasmosis because of low numbers in the intervention clinics during the study period.

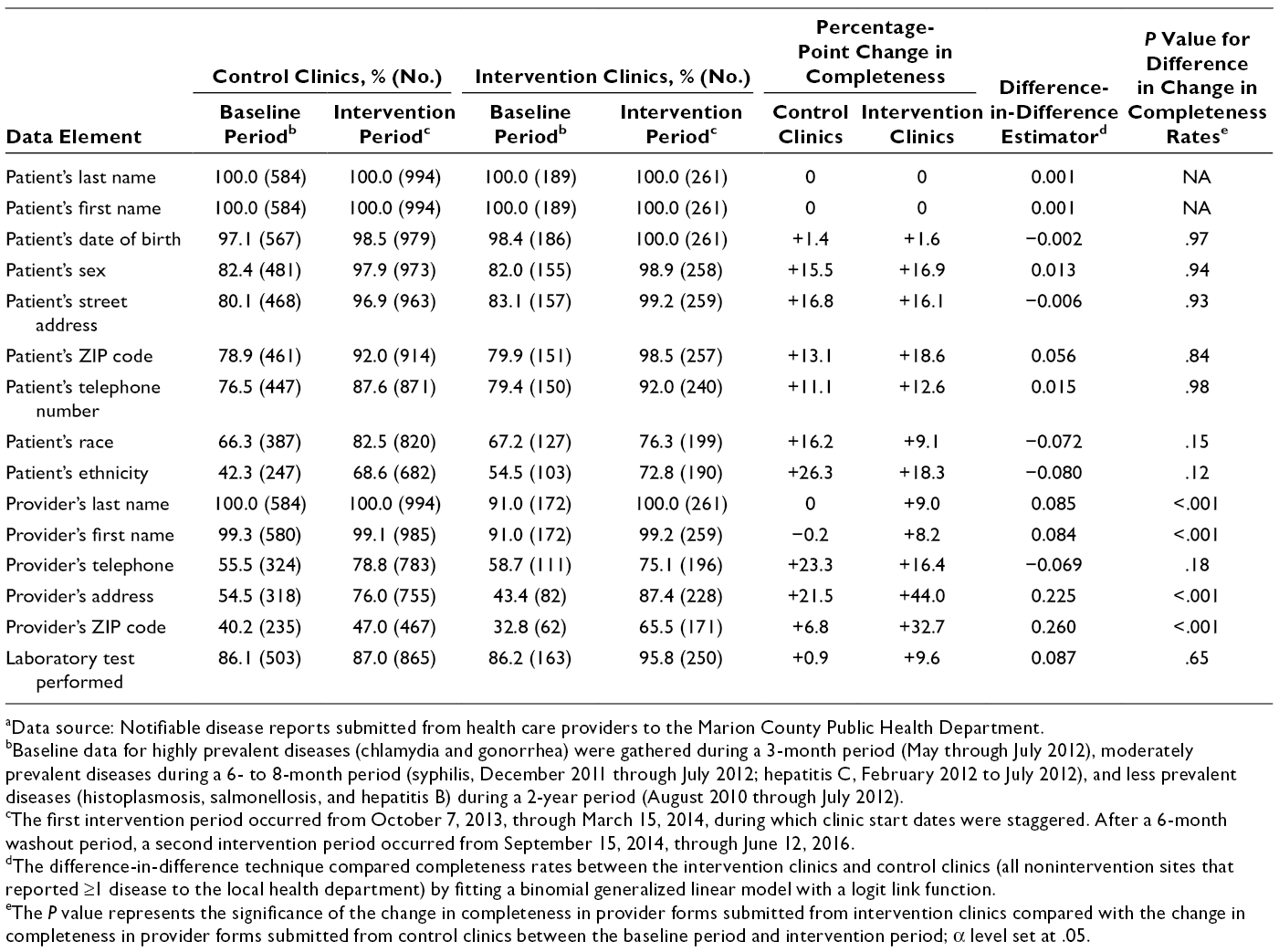

Completeness of Information in Provider Reports

Of the 15 fields examined in this study, 13 improved in completeness in intervention clinics and 11 improved in completeness in control clinics (Table 3). During the intervention periods only, 10 fields were more complete in reports submitted by intervention clinics than in reports submitted by control clinics (Table 3). Completeness in 4 fields improved significantly more in intervention clinics than in control clinics: physician last name, physician first name, physician address, and physician ZIP code. Even with improvements in completeness across control and intervention clinics, completeness levels for 5 fields were <90%: patient race, patient ethnicity, physician telephone, physician address, and physician ZIP code.

Table 3. Completeness of data in key fields in provider reports of 7 notifiable diseases sent during various periods to Marion County Public Health Department, Indianapolis, Indiana, 2010-2016ᵃ

ᵃ Data source: Notifiable disease reports submitted from health care providers to the Marion County Public Health Department.

ᵇ Baseline data for highly prevalent diseases (chlamydia and gonorrhea) were gathered during a 3-month period (May through July 2012), moderately prevalent diseases during a 6- to 8-month period (syphilis, December 2011 through July 2012; hepatitis C, February 2012 to July 2012), and less prevalent diseases (histoplasmosis, salmonellosis, and hepatitis B) during a 2-year period (August 2010 through July 2012).

ᶜ The first intervention period occurred from October 7, 2013, through March 15, 2014, during which clinic start dates were staggered. After a 6-month washout period, a second intervention period occurred from September 15, 2014, through June 12, 2016.

ᵈ The difference-in-differences technique compared completeness rates between the intervention clinics and control clinics (all nonintervention sites that reported ≥1 disease to the local health department) by fitting a binomial generalized linear model with a logit link function.

ᵉ The P value represents the significance of the change in completeness in provider forms submitted from intervention clinics compared with the change in completeness in provider forms submitted from control clinics between the baseline period and intervention period; α level set at .05.

Timeliness of Reports Submitted by Providers

Timeliness of provider reports improved in both intervention and control clinics. The overall time between the date a laboratory test was performed and the date the result was reported decreased from 10.1 days to 9.7 days in intervention clinics. This decrease was not significantly different from the decrease in control clinics, from 11.3 to 8.0 days (P = .16). When stratified by disease, timeliness of chlamydia reports similarly improved in both control and intervention clinics, but the decrease in days was significantly greater in control clinics (from 11.8 to 7.9 days) than in intervention clinics (from 11.5 to 9.5 days) (P = .01).

Discussion

This trial is the first to examine the effect of an HIE-based intervention on provider reporting rates, information completeness, and timeliness of reporting. Previous studies on HIE interventions largely focused on outcomes relevant to clinical operations, usability of HIE software by clinician users, patient-level outcomes, and laboratory reporting of notifiable disease. 35-37 A 2017 study on HIE-enabled surveillance focused on improving access to chronic disease information, 38 not improvements in routine notifiable disease surveillance. Therefore, this study adds evidence on the benefits of implementing interoperability between clinical and public health information systems in support of the work processes of PHAs.

With respect to our primary outcome measure of reporting rates, chlamydia reporting rates increased significantly in intervention clinics and fell slightly in control clinics. Reporting rates for gonorrhea and hepatitis C among intervention clinics increased modestly but not significantly. Yet reporting rates for these conditions fell modestly during the same period in control clinics. Despite the lack of significance, the change in direction was encouraging. Three-quarters of cases reported by intervention clinics contained a prepopulated form, indicating strong uptake of the intervention.

One provider confessed that before participating in this study, he did not realize he was required to report hepatitis C cases to the PHA. As in previous studies, 6,39 we found that provider awareness of reporting requirements may not be uniform and could be improved through education. An electronic intervention may not be sufficient for building awareness of requirements. Education is especially important in academic practices, where we learned from interviews of clinic personnel that turnover is a challenge to awareness of what should be reported. 26

Completeness of the information in provider reports also increased in our study, yet the increase was observed in both control and intervention clinics. Completeness in 4 fields improved significantly more in intervention clinics than in control clinics. These improvements can largely be attributed to prepopulation of case reporting forms using data available in the HIE, which receives updates on patients and providers through the myriad clinical messages it receives from multiple EHR systems. However, given that completeness also improved for many fields in reports from control clinics, confounders may exist, including a general quality improvement process at the state health department that examines completeness and works with report submitters to capture the full range of data asked for on case reporting forms. Moreover, the improvements in 2 fields (provider’s first name, provider’s last name) in reports from intervention clinics resulted in a completeness level equivalent to that observed in control clinics.

Improved completeness of reports could reduce burden on both clinic reporters and PHA case investigators, who have indicated needing to look in multiple systems to complete their case investigation. 26,40 Despite moderate improvement in the intervention clinics, several fields considered key to case investigation remained <90% complete. Furthermore, previous studies noted that some fields needed by PHA case investigators are not captured in clinical EHR systems. 30,31 Room for improvement exists for report completeness.

Although the time to submit a case to the PHA improved, improvements in timeliness among intervention clinics were not significantly better than those among control clinics. The intervention transmitted the prepopulated case reporting form in parallel with the laboratory report. We hypothesized that timeliness would improve. Yet the average time between laboratory result and PHA receipt of the clinic report remained around 10 days. Limited improvement in timeliness was most likely due to clinic processes involved in communicating results to the patient and starting the patient on treatment for the disease, which is information asked for by PHA investigators. Clinic staff members need time to reach the patient, communicate test results, and prescribe treatment before they can record these processes in the case reporting form submitted to the PHA. Interventions such as this one may not be effective at speeding up clinical workflow, even though they may alert clinic staff members that a case should be reported to the PHA.

A current pilot program is testing a “digital bridge” (ie, efforts by decision makers in public health, health care, and health information technology to address information exchange) between clinical and public health organizations for notifiable disease reporting. 41-43 Although the results from this program are not yet known, this initiative may heed lessons from our study, such as a focus on clinic reporters other than the physician. The initiative may also wish to recruit clinical sites with a high volume of diseases to test EHR-enabled reporting for a wider range of diseases encountered by PHAs. Finally, the initiative may work with its partners to examine clinical workflows to see whether timeliness can be improved along with reporting rates and information completeness.

A near-term future state for public health might involve a combination of forms-based and fully digital EHR-based case reporting solutions. Either process might result in similar workflows for the provider whose role is to review system-generated information before routing each form to the health department, and the output might be similar. The role and value of intermediaries such as an HIE are likely to be important until such time as all EHR vendors, both large and small, fully support the spectrum of public health functions such as case reporting. Current support for case reporting and similar functions is limited in the EHR marketplace.

Limitations

This study had several limitations. Although participating clinics were diverse in size, provider complement, and focus (eg, primary care, adolescent girls and young women aged 13-19 years), these clinics were not randomly assigned, nor were their underlying patient populations necessarily representative of the population in Indiana. In addition, it was challenging to observe a sufficient number of cases of some diseases (salmonellosis, histoplasmosis) to draw conclusions about the effect of the intervention on the full spectrum of notifiable conditions observed by PHAs. Incidence of many diseases was low in intervention clinic populations. These limitations could be overcome by expanding the intervention to a larger set of clinics, as well as extending the timeframe during which disease cases occur and are reported to the PHA.

Conclusions

Receipt of complete, timely information is critical to the work of public health. When disease investigators receive incomplete information, they must call providers’ offices or track down details through time-consuming and complex medical records review processes. This process is burdensome and costly for both clinical and public health organizations. Through better integration between clinical and public health information systems, initiatives such as this one as well as the emerging digital bridge continue to refine technical and workflow processes to make public health surveillance more efficient. In an efficient public health surveillance system, doing the right thing is also the easy thing for clinic reporters.

Footnotes

Acknowledgments

The “Improving Population Health Through Enhanced Targeted Regional Decision Support” study is a collaboration among Indiana University, the Regenstrief Institute, the Marion County Public Health Department, and the University of Washington. The authors acknowledge project team members who are not authors: Patrick T.S. Lai, MPH, and Uzay Kirbiyik, MPH, of the Indiana University Richard M. Fairbanks School of Public Health; Jennifer L. Williams, MPH, and Abby K. Church, MPH, of the Regenstrief Institute; Rebecca Hills, PhD, of the University of Washington; and Melissa McMaster, RN, of the Marion County Public Health Department. These persons contributed to the overall success of the study, including coordination of data access, data entry, and study documentation.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This project was supported by grant no. R01HS020909 from the Agency for Healthcare Research and Quality and grant no. 71596 from the Robert Wood Johnson Foundation. The Department of Veterans Affairs, Veterans Health Administration, Health Services Research and Development Service (CIN 13-416) further employs Dr. Dixon as a health research scientist at the Richard L. Roudebush Veterans Affairs Medical Center in Indianapolis, Indiana. The content is solely the responsibility of the authors and does not necessarily represent the official views of the Agency for Healthcare Research and Quality, the Robert Wood Johnson Foundation, or the Department of Veterans Affairs.