Abstract

The Item Wording Effect (IWE) in psychological testing describes how individuals respond differently to positively and negatively worded items. Previous IWE research has been hampered by measures whose items varied on more than item valence. This study addressed this problem by developing an inventory, the Positive and Negative Descriptor Inventory (PANDI), whose items vary solely on valence. Semantic framing was also manipulated to examine which factor (valence vs. framing) contributed more to the IWE. Using an online survey on Mechanical Turk, 336 Canadian participants responded to PANDI items in one of two experimental conditions. Results indicated that item valence had a larger effect on the IWE than semantic framing. PANDI-Good items in the Affirming Condition exhibited lower reliability, but higher means and lower intra-scale response variance, than the other groups, underscoring meaningful differences in how individuals interpret positively worded, negatively worded, and negating inventory items. This study recommends using negatively worded items sparingly and not using negating items at all.

Introduction

In the development of psychological inventories, equal numbers of positively worded items (e.g., I am good) and negatively worded items (e.g., I am bad) are used to minimize the effects of response biases such as acquiescence and naysaying (DeVellis, 2003) and to slow completion times: because negative words are processed more slowly than positive words (Wason, 1959), they give responders additional time to think about their answers (Podsakoff et al., 2003). Once negatively worded items are reverse scored, their scores should align closely with those of the positively worded items. Responders are presumed to answer positively and (reverse-scored) negatively worded items similarly because the items measure the same hypothetical construct (Nunnally, 1978).

An unintended consequence of this best practice has been the discovery that responders answer positively and negatively worded items differently; that is, the presumption of item response similarity is false. Studies show that positively and negatively worded items yield differences in mean scale scores (positive items produce higher means than negative items; Schriesheim & Hill, 1981; Weems et al., 2003), factor structure (positive and negative items often load on separate factors, making unidimensional constructs appear multidimensional; Bulut & Bulut, 2022; Marsh, 1996), reliability (positive items often yield greater Cronbach's alphas than negative items; Barnette, 2000; Eys et al., 2007), and structural equation models (positively worded items produce better-fitting models than negatively worded items; Gnambs & Schroeders, 2020; Tomas & Oliver, 1999). These differences have been found over decades of study, in student and community samples, with a variety of inventories, and across diverse cultures: they are robust. We refer to these differences collectively as the Item Wording Effect (IWE).

Despite decades of research, the exact nature of the IWE remains unknown. Studies have produced diverse empirical results that have led to a variety of distinct conclusions (Kam, 2018). The IWE is widely thought to be multifactorial; however, most researchers attribute it to inventory development decisions or methodological style (Horan et al., 2003). Others have found that the IWE correlates significantly with social desirability (Rauch et al., 2007), although this association was not replicated in other studies (DiStefano & Motl, 2006; Kam, 2018). Inventory developers who incorporate both positively and negatively worded items in their scales encounter these problems, whereas developers who opt for all positively or all negatively worded items avoid the IWE. Some researchers explain that the IWE occurs because responders have greater difficulty answering negatively worded items, which are more complex to interpret than positively worded items (Wason, 1959). Others point to the deleterious effect that emotion-laden negative words have on cognitive processing and categorization (Isen & Daubman, 1984).

Research has also identified trait characteristics, or substantive issues, related to the IWE, suggesting that it is more than an artifact of methodological style. Much of the work connecting personality and the IWE has focused on Rosenberg's self-esteem scale. Differences in responses to its positively and negatively worded items have led some to question whether the scale measures one dimension or two (Tomas & Oliver, 1999). Similar doubts have been raised about measures of optimism (Marshall et al., 1992; Plomin et al., 1992), anxiety (Vagg et al., 1980; Vautier et al., 2004), psychological wellbeing (Hystad & Johnsen, 2020), and affect (Watson et al., 1988). The strongest case for a substantive correlate of the IWE involves intelligence. Research shows the IWE is most discernible among responders with cognitive-developmental deficits and disabilities, and across studies, response differences lessen as intelligence increases (Gnambs & Schroeders, 2020; Marsh, 1996). Additional research has found that people with low reading achievement are more likely to agree with positively worded items, less likely to agree with negatively worded items, and have greater difficulty disagreeing with negatively worded items because of the complexity of the task (Bulut & Bulut, 2022; Gnambs & Schroeders, 2020; Greenberger et al., 2003).

The Present Study

Although the IWE has been the subject of decades of research, its causes and correlates are still unclear. We agree with the prevailing trends in the literature: the IWE likely stems from both stylistic and substantive factors, such that negative item valence and certain traits make it more pronounced. Unfortunately, research findings to date have been obscured by study measures that lacked consistent and clear distinctions between positively and negatively worded items. In most studies, inventory items differ in more than just semantic valence (whether the key descriptors in items are evaluatively positive or negative [e.g., I am kind vs. I am cruel]; Haigler & Widiger, 2001). Items also differ in semantic framing (affirmation vs. negation: "I am kind" vs. "I am not kind"; Schriesheim et al., 1991), affix adjustments (the use of prefixes and suffixes to turn positive items into negative ones, e.g., kind to unkind, meaningful to meaningless; Barnette, 2000), item complexity (e.g., "I am not discouraged by untrustworthy misbehavior"), response option valence (whether a response scale begins with a positive option [strongly agree] or a negative option [strongly disagree], or switches back and forth; Bors, Gruman, & Shukla, 2010), and the number of themes within an item (e.g., "I like to eat apples and bananas"; Kline, 1993).

To simplify the study design and the interpretation of results, we opted to test an inventory that maximizes the difference between positive and negative item valence without introducing any of the problems associated with semantic framing, affix adjustments, item complexity, response option valence, or multiple themes. We created our own inventory using single-word descriptors instead of the statements or questions that typically make up inventory items. To test the widely held hypothesis that negative wording is more difficult to understand than positive wording, we asked participants to complete equal numbers of positively and negatively worded items. We also chose to manipulate semantic framing (I am… vs. I am not… item stems) to compare its effects with those of item valence. Thus, we used a mixed-model design with item valence (positive vs. negative items) as a within-participants factor and semantic framing (I am vs. I am not) as a between-participants factor.

Hypothesis 1. Scale reliability and mean inter-item correlations would be positively affected by item valence and semantic framing: positively worded items and affirmatively framed items (I am…) would generate greater scale reliability and mean inter-item correlations than negatively worded and negatively framed items.

Hypothesis 2. Inventory mean scores would be positively affected by item valence and semantic framing: positively worded items and affirmatively framed item stems (I am…) would generate greater mean scores than negatively worded or negatively framed items.

Hypothesis 3. Intra-scale response variance, as measured by inter-item standard deviations (ISDs; Marjanovic et al., 2015), would be affected by item valence and semantic framing: positively worded items and affirming item stems (I am…) would generate smaller ISDs than negatively worded and negating items.

Method

Participants

Participants were recruited via an online questionnaire posted on Mechanical Turk; the original sample took an average of 11.77 min (SD = 19.93) to complete it. These non-student community members were recruited from Canada and paid a nominal participation fee of US$1.00. The original sample of 403 responders was reduced by exclusions for reasons including missing data (n = 12; 2.98%), indiscriminate or careless responding (n = 38; 9.43%), and administration times of less than three minutes (n = 35; 8.68%). The final sample comprised 336 responders: 246 men (73.21%) and 90 women (26.79%), with a mean age of 33.32 years (SD = 8.75). The final sample had a mean completion time of 13.30 min (SD = 21.38).

Measures

Demographics were assessed with two items querying age (in years) and biological sex (man, woman, other). The data were scrutinized for validity using three indicators.

1. Missing data. Participants who completed less than 90% of all items or failed to complete any one measure in full were eliminated from the final sample.

2. Conscientious Responders Scale (CRS; Marjanovic et al., 2014). The CRS is a 5-item validity scale that distinguishes conscientious responding (CR: answers generated systematically, which we presume to result from honest and accurate responding) from indiscriminate responding (IR: answers generated unsystematically and/or carelessly). CRS items were randomly embedded throughout the questionnaire so that they would not appear consecutively or be too obvious to participants. The CRS achieves its classification accuracy through instructional items: each item directs the responder exactly how to answer it (e.g., "Please select response option one (strongly disagree) to answer this item"). Responses congruent with the item instructions are therefore presumed to result from CR and scored as 1s, whereas incongruent responses are presumed to result from IR and scored as 0s. CRS sum scores from 0 to 2 are labelled IR, and scores from 3 to 5 are labelled CR. Research shows the CRS is an effective and widely used measure for authenticating the validity of questionnaire data (Marjanovic et al., 2019).

3. Questionnaire completion times. Although it is difficult to pin down an exact rule for flagging quick administration times, it is unreasonable to assume that questionnaires completed extremely quickly yield valid data (Huang et al., 2012; Wood et al., 2017). Prior to online administration, we had seven individuals complete the questionnaire to estimate how long it would take. Their mean administration time was a little over 12 minutes. To set a conservative cutoff, we decided a priori that participants who completed the questionnaire in less than 25% of our pilot group's mean administration time (i.e., about 3 minutes) would be eliminated from the sample.
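The three screening rules above can be combined into a single filter. The following Python sketch is illustrative only, not the procedure the authors ran: the `Responder` record and its field names are hypothetical, and the 12-minute pilot mean is an assumption standing in for "a little over 12 minutes," which puts the time cutoff at about 3 minutes.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical pilot mean; the text reports "a little over 12 minutes".
PILOT_MEAN_MIN = 12.0


@dataclass
class Responder:
    crs_followed: List[bool]     # one entry per CRS item: True if the instruction was followed
    completion_min: float        # total questionnaire time in minutes
    prop_items_completed: float  # proportion of all items answered (0.0 to 1.0)
    all_measures_complete: bool  # True if every measure was completed in full


def crs_score(crs_followed: List[bool]) -> int:
    """Score congruent responses as 1 and incongruent responses as 0, then sum."""
    return sum(1 if ok else 0 for ok in crs_followed)


def crs_label(score: int) -> str:
    """Sum scores 0-2 are labelled IR; 3-5 are labelled CR."""
    return "CR" if score >= 3 else "IR"


def passes_screens(r: Responder, pilot_mean_min: float = PILOT_MEAN_MIN) -> bool:
    # Indicator 1: missing data
    if r.prop_items_completed < 0.90 or not r.all_measures_complete:
        return False
    # Indicator 2: Conscientious Responders Scale
    if crs_label(crs_score(r.crs_followed)) == "IR":
        return False
    # Indicator 3: completion time below 25% of the pilot mean
    if r.completion_min < 0.25 * pilot_mean_min:
        return False
    return True


if __name__ == "__main__":
    sample = [
        Responder([True] * 5, 14.0, 1.00, True),                        # passes all screens
        Responder([True, False, False, True, False], 10.0, 1.00, True),  # CRS = 2, labelled IR
        Responder([True] * 5, 2.5, 1.00, True),                         # faster than 3 minutes
    ]
    print([passes_screens(r) for r in sample])  # [True, False, False]
```

Because the three rules are applied independently, a responder can be flagged by more than one of them.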

Study Measure

Positive and Negative Descriptor Inventory (PANDI; adapted from the theoretical model and items in Osgood et al., 1964). Developed for this investigation, the PANDI's Good and Bad subscales each contain 15 trait descriptors describing aspects of being a good or bad person (e.g., honest, deceitful), a strong or weak person (e.g., powerful, fragile), and an active or passive person (e.g., lively, lethargic). Items are answered on a 5-point scale ranging from 1 = Not At All to 5 = A Great Deal. All negatively worded PANDI-Bad items are reverse scored to align semantically with the PANDI-Good items. Consequently, higher scores on either subscale reflect higher trait levels of goodness, strength, and energy. The development of this inventory is described below.

Procedure

The questionnaire was part of a larger study that included personality and educational tests. The PANDI appeared at the end of the questionnaire, and its instructions contained a manipulation that placed responders in one of two experimental conditions.

Manipulation

Responders were randomly assigned to either the Affirming Condition (n = 165) or the Negating Condition (n = 171). Both groups' questionnaires were identical up to the last inventory, the PANDI, which began with these instructions: "Using the 5-point response scale beside each item, please indicate how much the following items describe you in a general way – the way you are most of the time. Please answer all items as honestly and as accurately as possible." Each of the 30 PANDI descriptors that followed was preceded by either an "I am" item stem (the Affirming Condition) or an "I am not" item stem (the Negating Condition). Once finished, and after reading a short debriefing statement, participants were thanked before leaving the questionnaire.

Development of the Study Inventory

We sourced our pool of descriptor items from Osgood, Suci, and Tannenbaum's work on semantic differential scales (SDSs; 1957; 1964). In SDSs, the item stem asserts some subject-verb combination (e.g., I am…) followed by a series of bipolar word pairs assessing attitudes about a social issue or topic (e.g., good . . . . . . . bad). The responder selects a point along the response continuum between the two poles to answer the item. Osgood et al. identified three factors that categorize attitudes: (1) Evaluation – whether a topic is good or bad; (2) Potency – whether a topic is strong or weak; and (3) Activity – whether a topic is active or passive. Together, these factors form the Evaluation-Potency-Activity (EPA) model of attitude structure, which has been applied in fields such as psychology (Friborg et al., 2006), political science (Abelson et al., 1982), information systems (Verhagen et al., 2015), and marketing (Themistocleous et al., 2019).

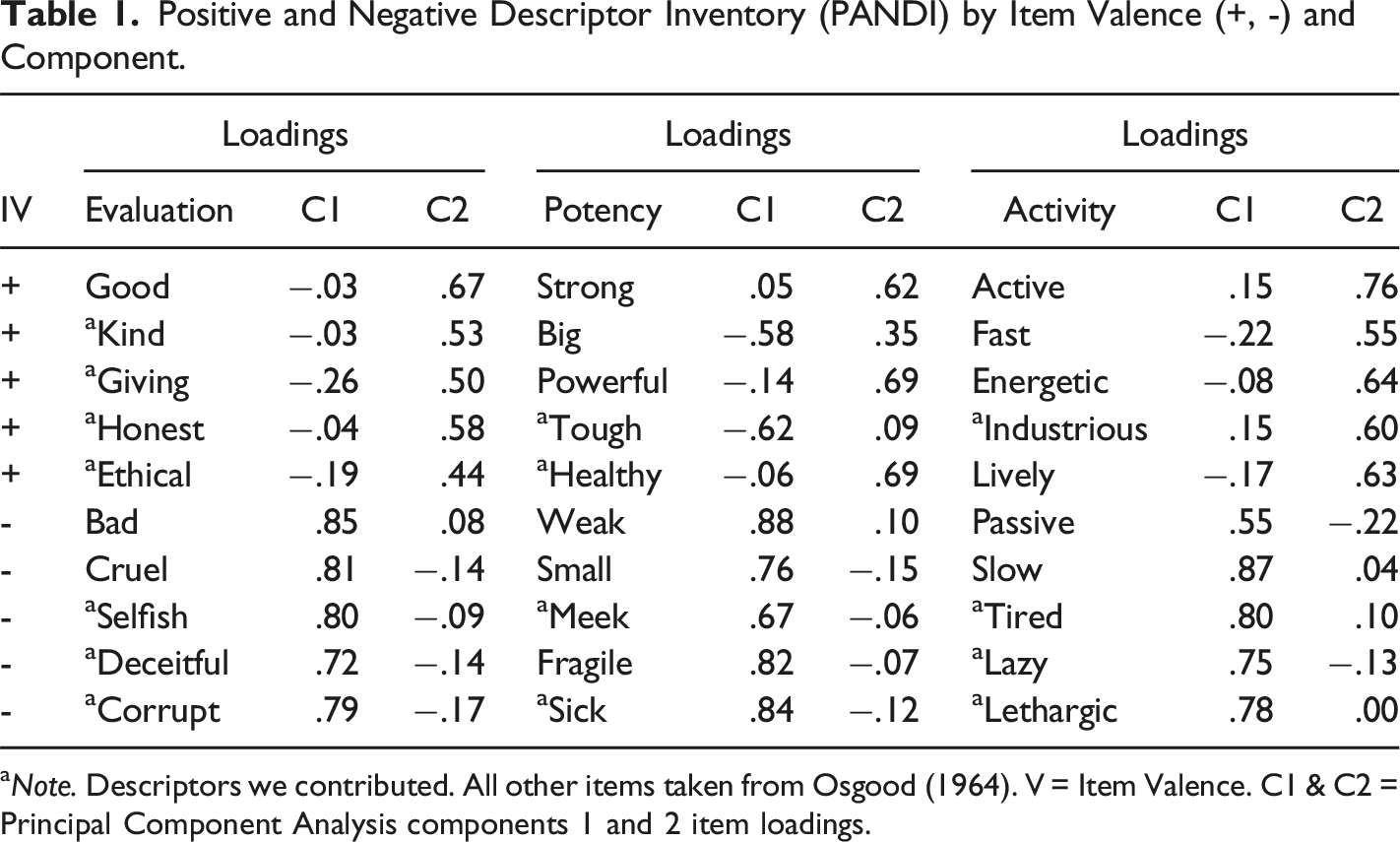

Positive and Negative Descriptor Inventory (PANDI) by Item Valence (+, -) and Component.

Note. ᵃDescriptors we contributed; all other items were taken from Osgood (1964). V = item valence. C1 and C2 = item loadings on principal components 1 and 2.

Results

Preliminary Statistics

All negatively worded PANDI items in the Affirming Condition (e.g., I am bad) and all positively worded PANDI items in the Negating Condition (e.g., I am not good) were reverse scored so that all PANDI data were aligned in the positive direction. After this, higher scores on all PANDI items, across Semantic Framing conditions, indicated greater levels of goodness, strength, and energy. An exploratory principal component analysis (PCA) with varimax rotation was conducted¹ to force the items into a two-component solution. All negatively worded items loaded onto component 1, which accounted for 47.22% of the variance in responding, and all positively worded items loaded onto component 2, which accounted for another 13.55% of the variance.²
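On a 1-to-5 response scale, reverse scoring maps a response x to 6 - x (in general, minimum + maximum - x). The Python sketch below applies that rule only to the item and condition combinations that the preceding paragraph says were reversed; the function names and the string labels for valence and framing are hypothetical.

```python
SCALE_MIN, SCALE_MAX = 1, 5  # 1 = Not At All, 5 = A Great Deal


def needs_reversal(valence: str, framing: str) -> bool:
    """True for 'I am <negative descriptor>' and 'I am not <positive descriptor>' items."""
    return (framing == "affirming" and valence == "negative") or (
        framing == "negating" and valence == "positive"
    )


def align_response(x: int, valence: str, framing: str) -> int:
    """Return the response aligned so that higher = more goodness, strength, and energy."""
    if not SCALE_MIN <= x <= SCALE_MAX:
        raise ValueError(f"response {x} outside {SCALE_MIN}-{SCALE_MAX}")
    if needs_reversal(valence, framing):
        return SCALE_MIN + SCALE_MAX - x  # 1<->5, 2<->4, 3 stays 3
    return x


# "I am bad" answered 5 becomes 1; "I am not good" answered 2 becomes 4.
print(align_response(5, "negative", "affirming"), align_response(2, "positive", "negating"))
```

Items such as "I am good" and "I am not bad" are left unchanged, because agreement with them already points toward the positive pole.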

Although the PCA showed that some items were psychometrically better than others, almost all items passed conservative rules for item retention, such as component loadings above .40 and a loading on the intended component at least double the loading on any other component (Kline, 1993). In the aggregate, there was little difference between versions of the scales with 30, 24, and 18 items. For the sake of simplicity, because most items had previously been vetted by Osgood (1964), and to avoid the potential for selectively choosing items to produce a desired outcome (i.e., p-hacking; Head et al., 2015), we retained all 30 items for the main analysis of this study.

Inferential Statistics

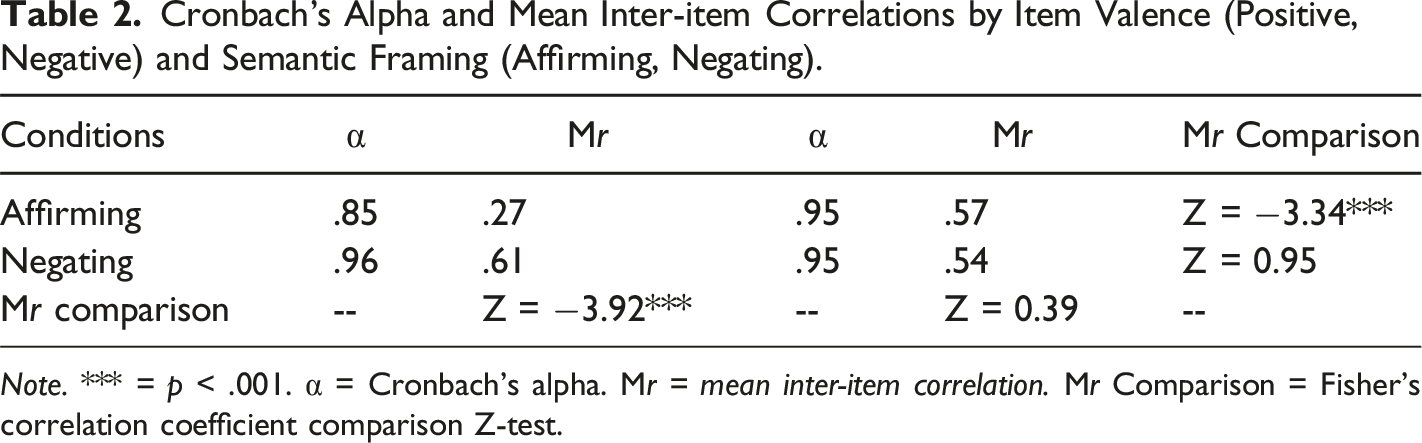

Cronbach’s Alpha and Mean Inter-item Correlations by Item Valence (Positive, Negative) and Semantic Framing (Affirming, Negating).

Note. *** = p < .001. α = Cronbach's alpha. Mr = mean inter-item correlation. Mr Comparison = Fisher's r-to-z test comparing correlation coefficients.
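The statistics in this table can be computed from a respondents-by-items matrix. The Python sketch below uses the standard formulas for Cronbach's alpha, the mean of the off-diagonal inter-item correlations, and Fisher's r-to-z test for two independent correlations. It is an assumption that the authors used this independent-samples form: it fits the between-participants comparison (Affirming vs. Negating), whereas PANDI-Good and PANDI-Bad scores come from the same responders, so a test for dependent correlations may be more appropriate there.

```python
import math

import numpy as np


def cronbach_alpha(X) -> float:
    """Cronbach's alpha for a respondents x items array."""
    X = np.asarray(X, dtype=float)
    k = X.shape[1]
    item_var_sum = X.var(axis=0, ddof=1).sum()  # sum of item variances
    total_var = X.sum(axis=1).var(ddof=1)       # variance of total scores
    return float(k / (k - 1) * (1 - item_var_sum / total_var))


def mean_inter_item_r(X) -> float:
    """Mean of the off-diagonal Pearson correlations between items."""
    R = np.corrcoef(np.asarray(X, dtype=float), rowvar=False)
    upper = R[np.triu_indices_from(R, k=1)]  # each item pair counted once
    return float(upper.mean())


def fisher_z_compare(r1: float, n1: int, r2: float, n2: int):
    """Two-tailed z-test for the difference between two independent correlations."""
    z = (math.atanh(r1) - math.atanh(r2)) / math.sqrt(1 / (n1 - 3) + 1 / (n2 - 3))
    p = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p
```

For example, `fisher_z_compare(0.5, 103, 0.3, 103)` gives z of about 1.70 and a two-tailed p of about .09; these inputs are arbitrary and are not values from the study.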

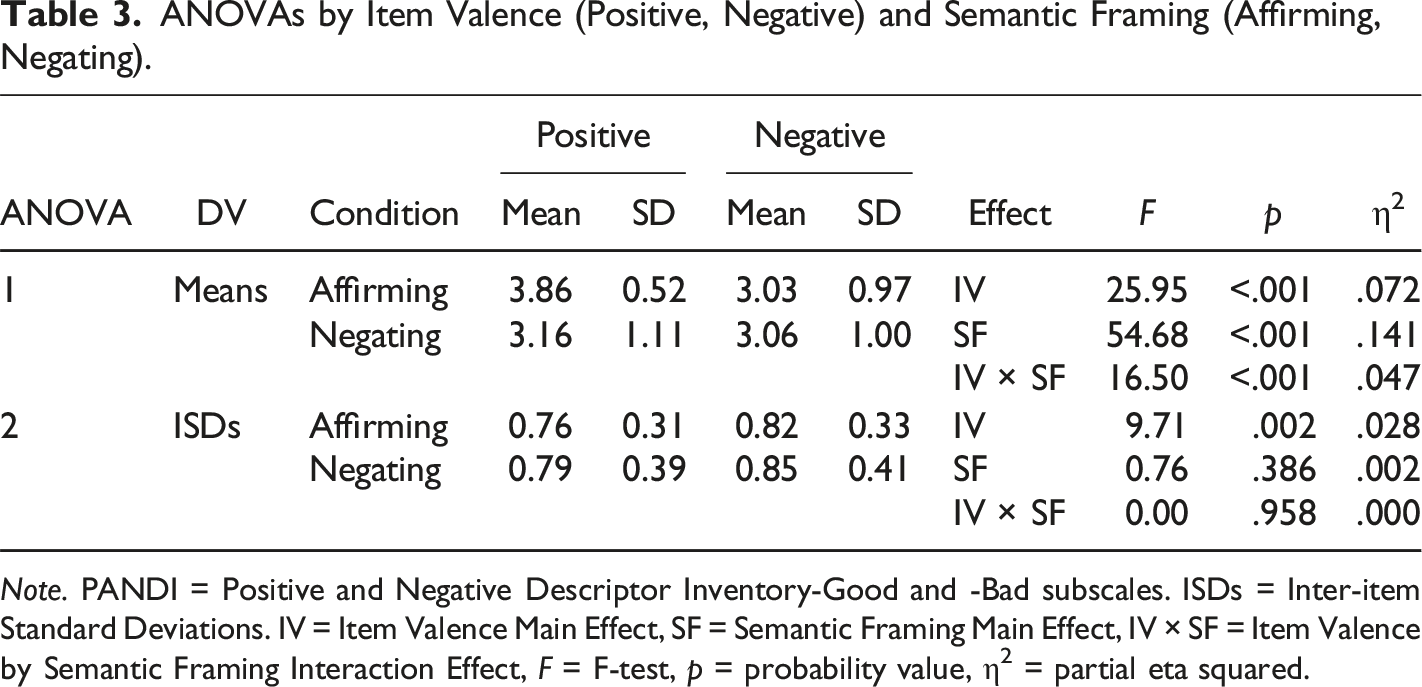

ANOVAs by Item Valence (Positive, Negative) and Semantic Framing (Affirming, Negating).

Note. PANDI = Positive and Negative Descriptor Inventory-Good and -Bad subscales. ISDs = Inter-item Standard Deviations. IV = Item Valence Main Effect, SF = Semantic Framing Main Effect, IV × SF = Item Valence by Semantic Framing Interaction Effect, F = F-test, p = probability value, η² = partial eta squared.

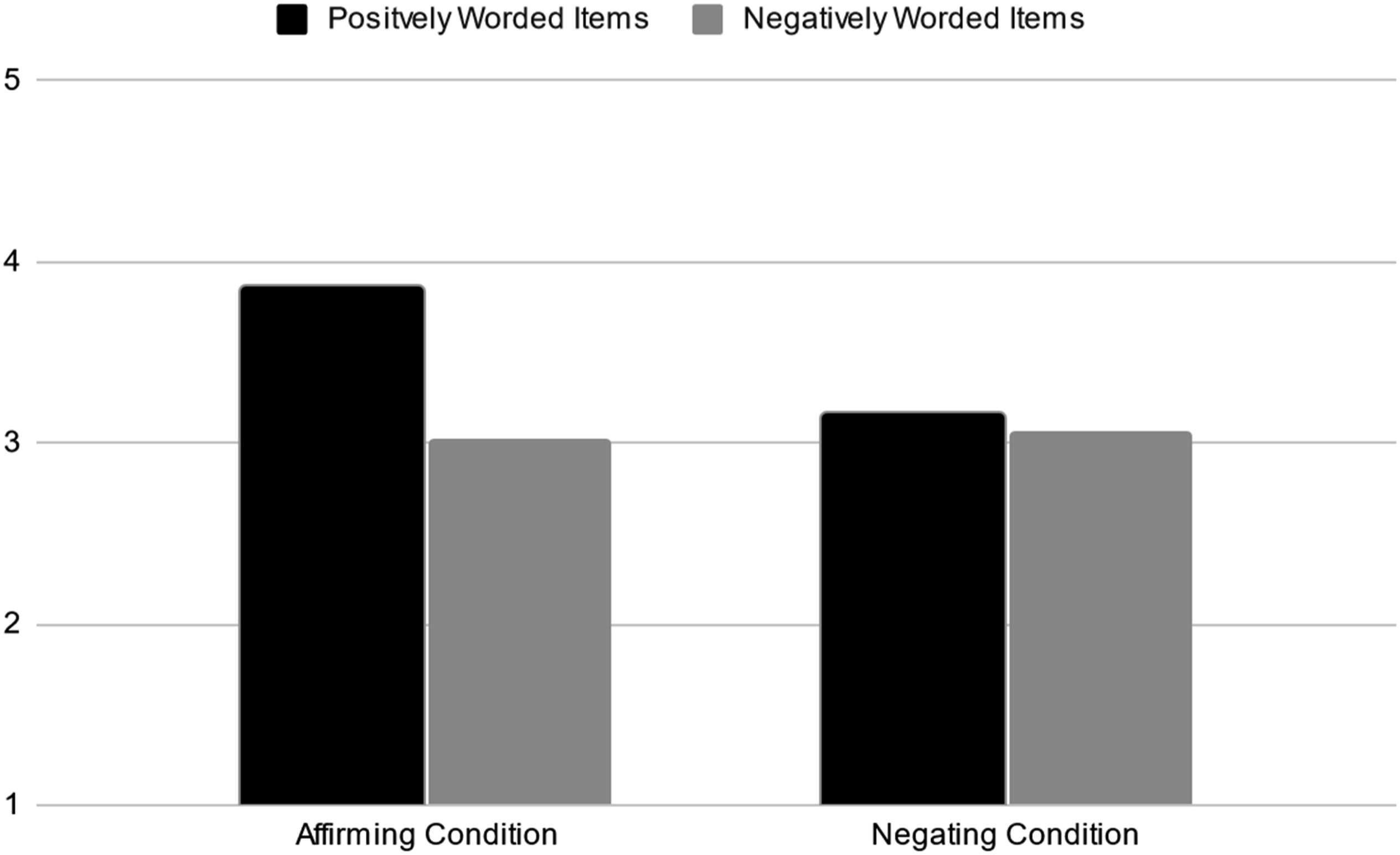

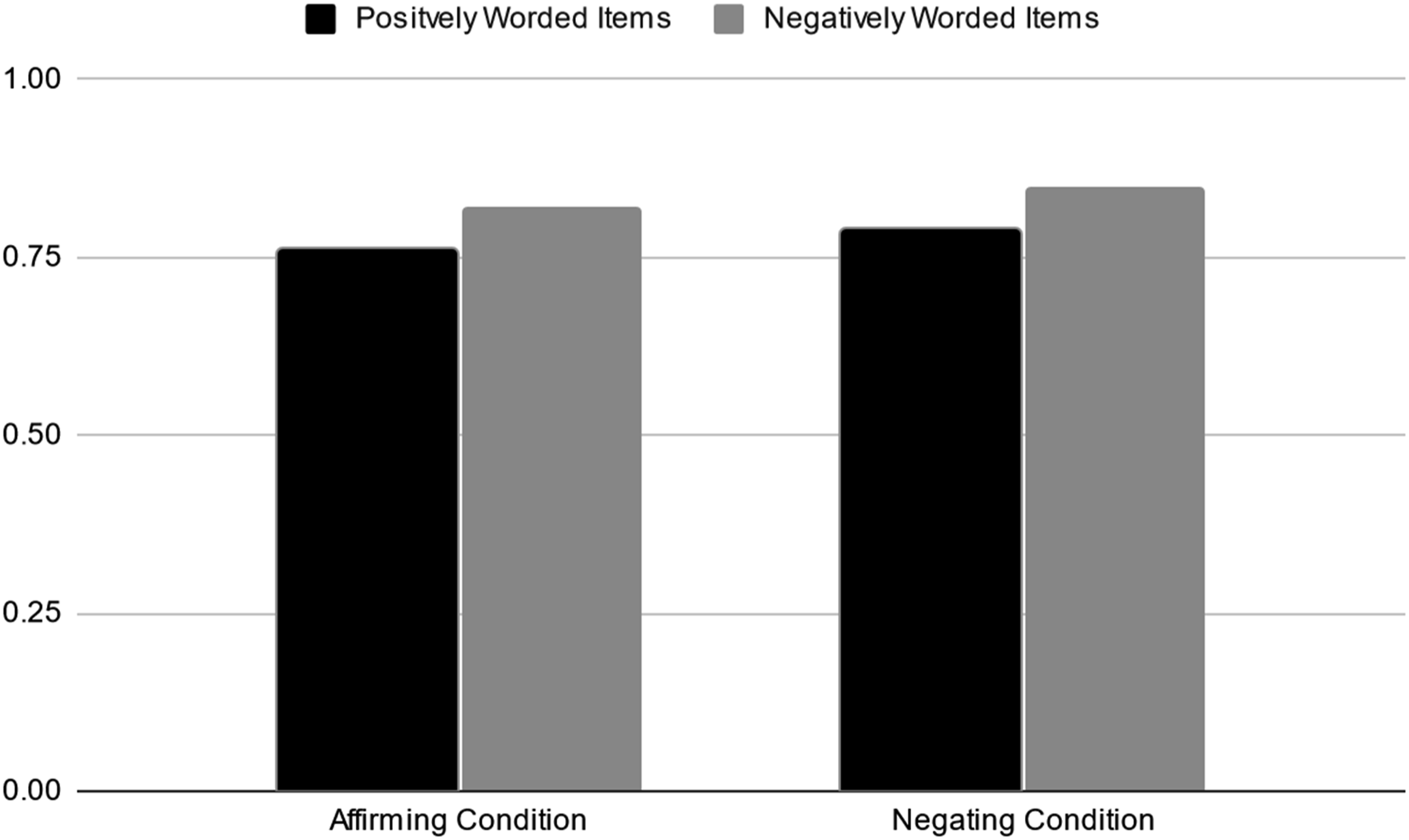

ANOVA 1 by item valence (positive, negative) and semantic framing (affirming, negating).

A second mixed-model ANOVA was conducted to test Hypothesis 3, that item valence and semantic framing affect intra-scale response variance. In this ANOVA, the outcome variable was the inter-item standard deviation (ISD), which quantifies the spread of a responder's answers across all the items of a scale. If all the items of an inventory gauge the same construct, a responder's answers to them should fall in a similar region of the response range, yielding a small ISD. We therefore expected the PANDI-Good subscale to produce smaller ISDs than the PANDI-Bad subscale, and the Affirming Condition to produce smaller ISDs than the Negating Condition.
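The ISD described above is computed per responder, from that responder's answers to the items of one scale. The Python sketch below assumes the sample standard deviation (k - 1 denominator) across the k items; readers should check Marjanovic et al. (2015) for the exact definition. The function names and the example data are hypothetical.

```python
import statistics
from typing import List, Sequence


def isd(responses: Sequence[float]) -> float:
    """Inter-item standard deviation: spread of one responder's answers across a scale's items."""
    if len(responses) < 2:
        raise ValueError("need at least two items")
    return statistics.stdev(responses)  # sample SD; the k - 1 denominator is an assumption


def scale_isds(rows: List[Sequence[float]]) -> List[float]:
    """One ISD per responder (rows = responders, columns = items of a single scale)."""
    return [isd(row) for row in rows]


# Two hypothetical responders on a 5-item subscale (already reverse scored where needed).
consistent = [4, 4, 5, 4, 4]  # answers cluster in one region of the range
scattered = [1, 5, 2, 5, 3]   # answers spread across the range
print([round(v, 2) for v in scale_isds([consistent, scattered])])  # [0.45, 1.79]
```

The resulting per-responder ISDs for each subscale would then serve as the repeated-measures outcome in the mixed-model ANOVA.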

In support of Hypothesis 3, results of the second ANOVA yielded a statistically significant main effect of item valence in the expected direction (Table 3 & Figure 2). PANDI-Good items produced less intra-scale response variance than PANDI-Bad items. The main effect for semantic framing was not statistically significant but was in the expected direction. In sum, the effect of item valence on intra-scale response variance was predictable, meaningful, and greater than the effect of semantic framing.

ANOVA 2: inter-item standard deviations by item valence (positive, negative) and semantic framing (affirming, negating).

Discussion

The Item Wording Effect is a psychological testing phenomenon wherein responders answer positively worded items (e.g., I am good) differently from negatively worded items (e.g., I am bad). Research shows meaningful differences in the reliabilities, mean scores, and factor structures of positively and negatively worded items. Beyond these findings, however, the IWE literature has been murky, due in part to researchers' use of study measures whose items differ not only in item valence but also in semantic framing, affix use, response option valence, item complexity, and number of themes.

The purpose of this study was to use a clarified inventory whose items differed on item valence alone. For this purpose, we developed a 30-item personality inventory, adapted in part from Osgood et al. (1964), comprising two 15-item subscales of positively and negatively worded descriptors, the PANDI-Good and PANDI-Bad, respectively. We also manipulated semantic framing to compare its effect with that of item valence and to determine which variable produced the larger IWE.

Hypothesis 1 findings were contrary to our expectations and inconsistent with the IWE literature: positively worded items in the Affirming Condition produced the lowest reliability of all four groups. We attribute this unexpected result to restriction of range. Table 2 shows that PANDI-Good standard deviations in the Affirming Condition were about half the size of those in the other three groups; that is, PANDI-Good means in the Affirming Condition were more narrowly distributed than PANDI-Bad and Negating Condition means. Because reliability coefficients such as Cronbach's alpha depend on between-person variance, such a restricted range would be expected to lower reliability. The wider spread in the other groups may reflect the jarring, slowing effect that negative items have on responders' completion times (Podsakoff et al., 2003).
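The restriction-of-range explanation can be illustrated with a toy simulation. The Python sketch below is not based on the study's data: it assumes a simple model in which each of 15 items equals a common true score plus independent noise, then compares Cronbach's alpha in the full simulated sample with alpha in a subsample whose true scores are restricted to the top quarter. The sample size, noise level, and cutoff are arbitrary choices.

```python
import numpy as np


def cronbach_alpha(X: np.ndarray) -> float:
    """Cronbach's alpha for a respondents x items array."""
    k = X.shape[1]
    item_var_sum = X.var(axis=0, ddof=1).sum()
    total_var = X.sum(axis=1).var(ddof=1)
    return float(k / (k - 1) * (1 - item_var_sum / total_var))


rng = np.random.default_rng(seed=0)
n_people, n_items = 2000, 15

true_score = rng.normal(size=n_people)                   # between-person trait variance = 1
noise = rng.normal(scale=1.0, size=(n_people, n_items))  # item-specific error variance = 1
X = true_score[:, None] + noise                          # each item = trait + error

alpha_full = cronbach_alpha(X)

# Keep only responders in the top 25% of the trait: a restricted range.
restricted = X[true_score > np.quantile(true_score, 0.75)]
alpha_restricted = cronbach_alpha(restricted)

print(f"alpha, full range:       {alpha_full:.2f}")
print(f"alpha, restricted range: {alpha_restricted:.2f}")
```

Under this model the full-range alpha is about 15(0.5) / (1 + 14(0.5)) = 0.94. The restricted subsample yields a noticeably lower alpha even though every item measures the same trait with the same error, because shrinking the between-person variance makes shared variance a smaller share of the total.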

Tests of Hypothesis 2 revealed notable effects of item valence and semantic framing on scale means. Because the relevant items were reverse scored (negatively worded items in the Affirming Condition and positively worded items in the Negating Condition) and all item content was conceptually aligned, mean scores should have been equivalent across groups. Yet responders seemed to process positively worded items in the Affirming Condition differently from items in the other three groups. Means for positively worded items in the Affirming Condition were well above the response scale midpoint, whereas means in the other three groups were closer to the midpoint. In sum, responders showed a positivity bias when completing positively worded, affirmingly framed items. This is consistent with positive biases found in self-referent attributions (Watson et al., 2007), worldview/outlook (Mezulis et al., 2004; Peeters, 1971), and language (Augustine et al., 2011; Dodds et al., 2015).

Tests of Hypothesis 3 showed that positively worded items produced less intra-scale response variance than negatively worded items, which we attribute to response hesitancy or a lack of certainty in responding to negatively worded items (Podsakoff et al., 2003). This explanation is consistent with research showing that responders answer positively worded items significantly more quickly than negatively worded items (Watson et al., 2007).

In sum, positively worded and affirming items produced the best results for means and intra-scale response variance, but the worst results for inventory reliability. Altogether, the IWE was meaningfully influenced by item valence and, to a lesser extent, by semantic framing. This finding violates a fundamental assumption of objective personality testing: that responders interpret items correctly (Kline, 1993; Watson et al., 1988). On the basis of these findings and previous research, we argue that this presumption is untenable and should be addressed.

Inventory developers may not be able to avoid negatively worded items entirely. The subject matter they measure is often dark, disturbed, or pathological (e.g., the dark tetrad), which necessitates negatively worded content. Nevertheless, developers would benefit from carefully choosing clarified items that vary on item valence alone, avoiding items that are negating, affixed, sesquipedalian (pun intended), or that contain multiple themes. This study showed that negative and negating items had meaningful deleterious effects on mean scores and intra-scale response variance compared with positively worded and affirming items. Because these findings are mostly consistent with the IWE literature, which suggests a systematic methodological biasing effect of negating and negatively worded items, researchers would be sensible to limit their use when possible. The main benefit lost by omitting negative and negating items (i.e., control of response biases) can be regained through the judicious use of data validity indices; for example, acquiescence and naysaying can be detected through extremely small ISDs (Marjanovic et al., 2015).

Limitations and Future Directions

Our data were restricted to online self-report questionnaires, which produced high rates of indiscriminate or careless responding. Although we were careful to screen the data to identify and expunge invalid responders, we believe some may nevertheless have gotten through our screens, possibly through the use of sophisticated bots and response-generating software, and contaminated our data (Dupuis et al., 2019). We believe this because of the large number of responders who completed the questionnaire in less than 3 minutes yet were still able to pass the validity scale and missing data cutoffs. This matters because data contaminated by indiscriminate responding can mistakenly yield factors made up of positively and negatively worded items (e.g., Schmitt & Stuits, 1985): the more indiscriminate responding (IR) a dataset contains, the more strongly it will show signs of bidimensionality. We therefore urge researchers to filter their data of impurities before commencing analyses.

In future research, the IWE and its suspected causes and correlates can be re-examined using clarified attitudinal and personality inventories such as the one used in this study. Existing measures like the Positive and Negative Affect Schedule (PANAS; Watson et al., 1988) are also promising for IWE research because their items can be segregated on the basis of item valence alone. For now, we advise researchers to be wary of inventories containing negating and negatively worded items and to avoid their use if possible. Despite the advantages these items offer researchers (e.g., reduction of response bias), their disadvantages are costly to ignore.

Footnotes

Acknowledgments

We thank Morgan van Morgan for her assistance throughout this project.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the SSHRC Insight Grant # 435-2019-0529.