Abstract

When using opt-out (vs. opt-in) policies, choice architects presume that people consent, rather than explicitly asking them to state their consent. While opt-out policies often increase compliance, they are also associated with managerial issues such as ethical considerations, legal regulations, limited public support, and increased no-show rates. This research demonstrates that choice architects can also establish presumed consent through the language they use, holding the opt-in policy constant. Seven studies in various health domains indicate that presumed-consent language (e.g., “a vaccine was arranged for you”), rather than explicit-consent language (e.g., “you can choose to get a vaccine”), increases persuasion (i.e., behavioral intentions and actual behaviors). This effect occurs through perceived endorsement: Decision-makers infer through the presumed-consent language that the desired health behavior (e.g., vaccination) is the recommended course of action. Furthermore, this research examines the proposed endorsement process under various conditions. When product tangibility is low (e.g., a flu shot), the effectiveness of presumed-consent language stems primarily from perceived endorsement rather than psychological ownership or perceived ease. In contrast, when product tangibility is high (e.g., a sunscreen lotion), the effect stems primarily from psychological ownership rather than perceived endorsement or perceived ease.

Opt-out (vs. opt-in) policies are a widely used choice architecture tool (Keller et al. 2011). Under opt-out policies, choice architects presume that decision-makers consent to a particular outcome, whereas under opt-in policies, decision-makers need to explicitly agree with a particular course of action. When choice architects presume that decision-makers consent (i.e., under an opt-out default policy), compliance is usually higher than when choice architects ask for explicit consent (i.e., under an opt-in default policy) (Jachimowicz et al. 2019; Last et al. 2021). Presumed-consent policies influence a variety of health behaviors, such as increasing organ donation (Johnson and Goldstein 2003), increasing flu vaccination (Chapman et al. 2010, 2016), decreasing drug prescriptions (Patel et al. 2016), and decreasing unnecessary imaging (Sharma et al. 2019). However, opt-out policies are also associated with significant managerial problems that impede their implementation, such as (1) ethical considerations (Keller et al. 2011); (2) privacy regulations (e.g., opt-out strategies are no longer allowed under the General Data Protection Regulation in Europe; D’Assergio et al. 2025); (3) limited public support (Felsen, Castelo, and Reiner 2013; Lemken, Wahnschafft, and Eggers 2023), as opt-out strategies are perceived to reduce freedom of choice (Jung and Mellers 2016); and (4) increased no-show rates. For example, in a study of default policies to encourage flu vaccination, participants in the opt-out condition were unlikely to cancel their appointments, and 71% of participants in that condition did not show up for their vaccination appointment, compared with a 0% no-show rate in the opt-in condition (Chapman et al. 2016). To address the challenges associated with presumed-consent policies (i.e., opt-out), we identify presumed-consent language as an alternative intervention that improves persuasion under an explicit-consent policy (i.e., opt-in).

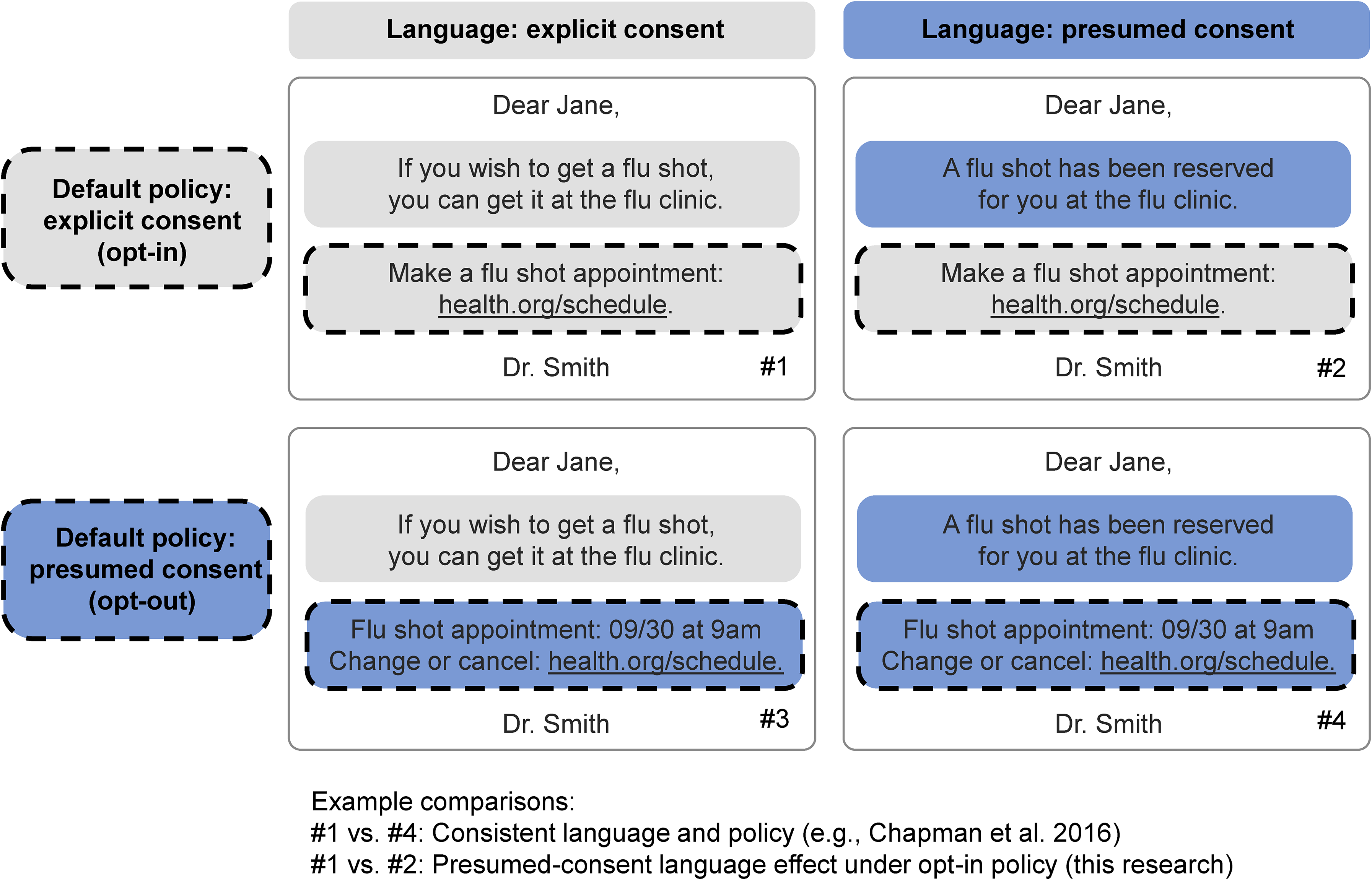

We distinguish presumed-consent policy effects from presumed-consent language effects. First, “presumed-consent policy effects” occur when choice architects use an opt-out policy (presumed consent) over an opt-in policy (explicit consent). If decision-makers do not specify a particular course of action (e.g., schedule an appointment for a flu shot), they will automatically obtain the outcome that is predetermined by the choice architect (i.e., the default policy, such as an automatically scheduled appointment). Second, “presumed-consent language effects” occur when choice architects use language that signals presumed consent rather than explicit consent. For instance, a message like “a flu shot has been reserved for you” presumes that decision-makers consent, that is, that they will agree with a particular course of action (i.e., the scheduled appointment).

In Figure 1, we illustrate the difference between language and policy in the context of a message that contains both an introductory text (the “language” part) and a web link (the “policy” part). When implementing a presumed-consent policy (i.e., opt-out), compared with an explicit-consent policy (i.e., opt-in), choice architects may intuitively keep the language and policy consistent (see cell #1 vs. cell #4 in Figure 1). For example, Chapman et al. (2016, p. 44) used the following stimuli, in which language and policy are consistent: “Patients in the opt-out condition received a letter informing them that the medical practice had prescheduled them for a flu shot during flu clinic hours … but they could reschedule or cancel the appointment if they chose. Patients in the opt-in condition received a letter informing them that they could make an appointment during flu clinic hours if they wished.” In this research, we argue that the language and the policy do not need to be consistent in terms of presuming consent. Importantly, we propose that presumed-consent language (vs. explicit-consent language) may improve persuasion even when holding the opt-in policy constant (see cell #1 vs. cell #2 in Figure 1).

Figure 1. Presumed-Consent Policy and Language Effects.

Language interventions constitute an interesting alternative for policy makers and marketers because they may address some of the managerial challenges associated with opt-out policies. To provide initial evidence for this assumption, we conducted a pilot study among 101 U.S.-based Prolific Academic participants who evaluated language and policy interventions on four measures: ethical considerations, privacy protection, preservation of freedom of choice, and intervention support (see Web Appendix A for measures). Participants first saw a message with an explicit-consent policy and explicit-consent language (#1) and then evaluated two messages that changed either the language to presumed consent (#2) or the policy to presumed consent (#3). The context was flu vaccination, and the message content was adapted from Figure 1 (see Web Appendix A). Compared with the presumed-consent policy intervention (i.e., opt-out), the presumed-consent language intervention scored higher on ethical considerations (Mpolicy = 3.51 vs. Mlanguage = 4.05; β = .53, t(100) = 2.29, p = .024), privacy protection (Mpolicy = 3.68 vs. Mlanguage = 4.07; β = .39, t(100) = 2.07, p = .041), preservation of freedom of choice (Mpolicy = 3.23 vs. Mlanguage = 3.78; β = .55, t(100) = 2.13, p = .036), and intervention support (Mpolicy = 3.02 vs. Mlanguage = 3.63; β = .60, t(100) = 3.15, p = .002). In summary, presumed consent via language may circumvent some of the challenges associated with presumed consent via policy (i.e., opt-out).
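The pilot comparisons are within-participant: every participant rated both the language and the policy message, and t(100) for 101 participants is what a paired test (df = n − 1) would give. The reported β values also match the differences between the condition means up to rounding (e.g., 4.05 − 3.51 = .54 vs. β = .53). The following minimal Python sketch of a paired t-test assumes that reading; the function name and the ratings in the example are hypothetical, and the authors' R analysis (posted on OSF) may use a different but related model.

```python
import math
from statistics import mean, stdev


def paired_t(policy_ratings, language_ratings):
    """Paired t-test of language-intervention ratings against policy-intervention ratings.

    Each list holds one rating per participant, in the same participant order.
    Returns (mean difference, t statistic, degrees of freedom).
    """
    if len(policy_ratings) != len(language_ratings):
        raise ValueError("ratings must be paired per participant")
    # Within-participant difference: language rating minus policy rating.
    diffs = [lang - pol for pol, lang in zip(policy_ratings, language_ratings)]
    n = len(diffs)
    mean_diff = mean(diffs)
    std_error = stdev(diffs) / math.sqrt(n)
    return mean_diff, mean_diff / std_error, n - 1


if __name__ == "__main__":
    # Hypothetical ratings for five participants (not the pilot data).
    policy = [3, 4, 2, 5, 3]
    language = [4, 4, 3, 5, 4]
    diff, t, df = paired_t(policy, language)
    print(f"mean difference = {diff:.2f}, t({df}) = {t:.2f}")
```

A positive mean difference indicates that the language intervention was rated higher than the policy intervention on the measure in question.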

This article makes three main contributions. First, we contribute to the literature on presumed consent (Rithalia et al. 2009; Steffel, Williams, and Tannenbaum 2019) by disentangling different types of presumed-consent effects (i.e., via language rather than policy) in persuasive communication. We operationalize the effectiveness of persuasive communication in terms of click behaviors, behavioral intentions, and actual vaccination uptake. We propose that presumed-consent language improves the effectiveness of persuasive communication over explicit-consent language, holding the policy constant. More specifically, we compare the effect of language within the context of opt-in policies because of the managerial challenges associated with opt-out policies (Chapman et al. 2016; D’Assergio et al. 2025; Jung and Mellers 2016; Keller et al. 2011). Recent literature on “defaultless defaults” used color and position to make one option appear like a default (Reeck et al. 2023). In this research, we pursue a similar objective while focusing on language instead of color or position.

Second, we contribute to the literature on the implicit interaction between decision-makers and choice architects (Krijnen, Tannenbaum, and Fox 2017). Prior literature shows that decision-makers make inferences about the choice architect's intentions: When confronted with choice options, decision-makers will try to make sense of why the choice architect has set a particular option as a default. Choice architects may “leak” information about their own attitudes, intentions, and beliefs when they designate an option as the default (i.e., the outcome when no action is taken by the decision-maker). Hence, default options come with an implicit endorsement from the choice architect (McKenzie, Liersch, and Finkelstein 2006). We contribute to this literature by identifying endorsement as a psychological mechanism and demonstrating that presumed-consent language leaks information about choice architects’ recommended course of action, holding the policy constant.

Third, we contribute to the literature on the underlying effectiveness of behavioral interventions by comparing different psychological processes. In general, our results suggest that presumed-consent language effects may operate through three processes identified in the literature on presumed-consent policy (Jachimowicz et al. 2019; Johnson and Goldstein 2003): (1) endowment (e.g., psychological ownership; Buttenheim et al. 2022; Dai et al. 2021; Rabb et al. 2022), (2) endorsement (McKenzie, Liersch, and Finkelstein 2006), and (3) ease (Nolte and Löckenhoff 2023). Importantly, we find consistent evidence that perceived endorsement explains presumed-consent language effects. Furthermore, we demonstrate that product tangibility moderates the relative strength of perceived endorsement over psychological ownership and perceived ease.

Conceptual Framework

Presumed-Consent Language Effects

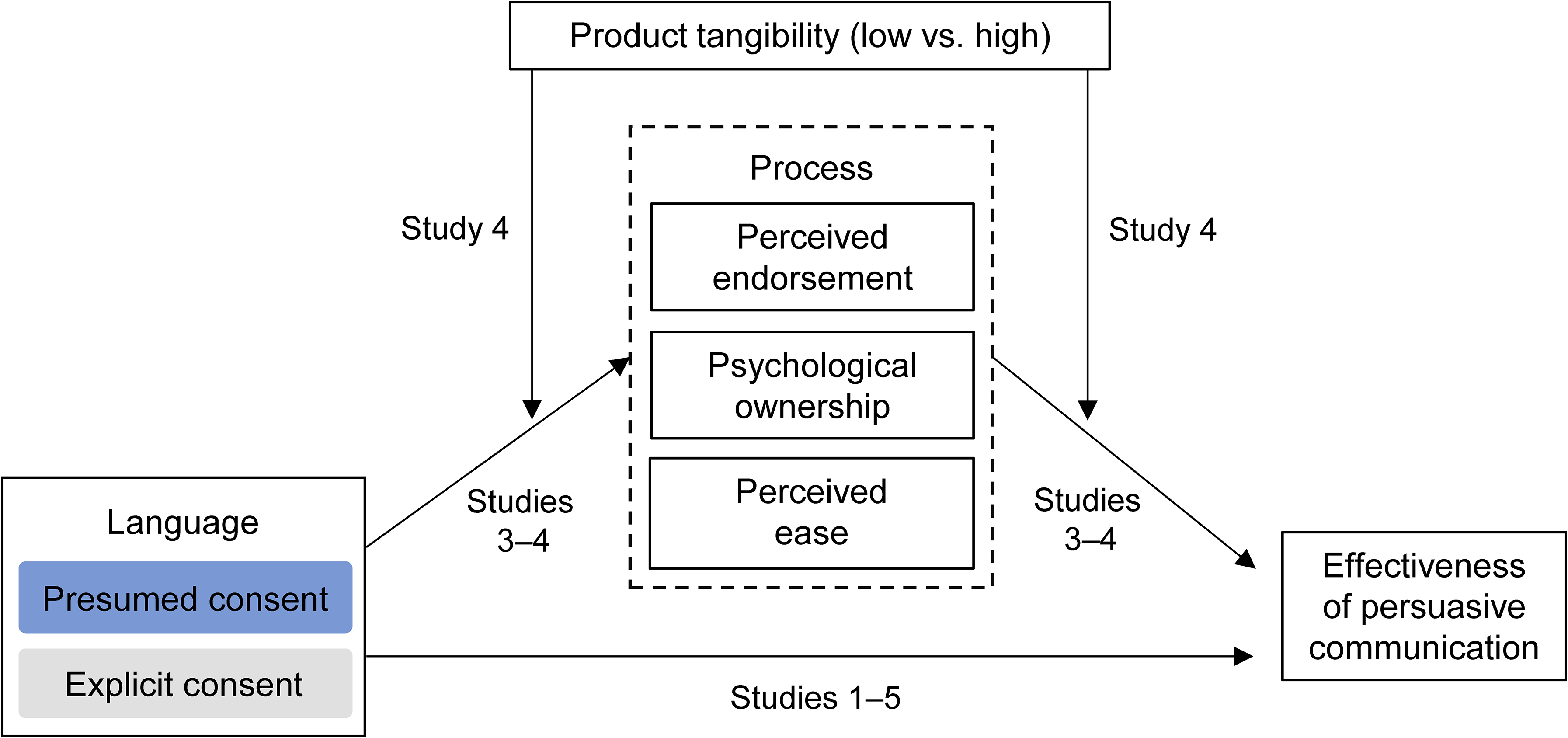

We conceptualize presumed-consent language effects building on the medical literature regarding “presumptive announcements” (Brewer et al. 2017). Correlational studies on patient–provider conversations show that patients are more likely to get vaccinated when providers assume readiness for the vaccination (e.g., “Your son is due for vaccines against meningitis & HPV. We’ll give them at the end of the visit.”) (Moss et al. 2016; Opel et al. 2013, 2015). Presumptive announcements may help individuals build on their favorable intentions to get vaccinated when the choice architect “presumes” that decision-makers wish to get vaccinated (Brewer et al. 2017). Given the correlational nature of these studies, we cannot rule out the possibility that doctors employ different language depending on their perceptions of patients’ attitudes regarding vaccination (e.g., a doctor feels the patient is reluctant, and therefore does not use a presumptive announcement). Building on the correlational evidence, we propose that presumed-consent language increases persuasion in a causal manner (see Figure 2). We hypothesize:

H1: Presumed-consent language (vs. explicit-consent language) increases persuasion.

Figure 2. Conceptual Framework and Study Overview.

Presumed-Consent Language Effects Mediated by Endorsement, Ownership, and Ease

While we acknowledge that multiple psychological processes could account for presumed-consent language effects, this research focuses on three families of processes as established in the literature on presumed-consent policies: perceived endorsement, psychological ownership, and perceived ease (Jachimowicz et al. 2019; Johnson and Goldstein 2003).

Perceived endorsement

Compared with explicit-consent language (e.g., “you can get a vaccine or not”), presumed-consent language (e.g., “a vaccine was arranged for you”) may be perceived as an endorsement, such that the message implies that the desired health behavior (e.g., vaccination) is the recommended course of action. Speakers and listeners rely on tacit assumptions and implicit principles (Grice 1975; Schwarz 1996), and a social sensemaking process governs the interaction between the choice architect and the decision-maker (Krijnen, Tannenbaum, and Fox 2017). For example, McKenzie, Liersch, and Finkelstein (2006) show that a choice architect's attitudes can be revealed through their choice of default (i.e., information leakage): When a desired health behavior is made the default policy, decision-makers perceive the default policy as an implicit recommendation. Presumed-consent language may therefore signal that the desired health behavior is the recommended course of action.

Psychological ownership

Prior literature in the domain of vaccination suggests an alternative process for the effectiveness of presumed-consent language. Compared with explicit-consent language (e.g., “you can get a vaccine”), presumed-consent language (e.g., “a vaccine is reserved for you”) may increase perceived psychological ownership (Buttenheim et al. 2022; Dai et al. 2021; Rabb et al. 2022). Research on the endowment effect shows that people value objects more when they experience a higher degree of psychological ownership (Morewedge 2021; Shu and Peck 2011). The increased feeling of ownership may increase the uptake of a vaccine. This process is similar to “endowing” decision-makers with a preselected option. Indeed, the more a decision-maker feels endowed with a preselected option, the more likely they are to stay with the default, as a consequence of loss aversion (Jachimowicz et al. 2019; Kahneman and Tversky 1979) or anticipated guilt (Theotokis and Manganari 2015). In sum, we theorize that presumed-consent language effects could also be explained by psychological ownership.

Perceived ease

Perceived ease could explain default policy effects in two ways. First, when an option is preselected, individuals might not evaluate each presented option separately. Instead, they may assess whether the default option satisfies them (Johnson et al. 2012) and stick with the default option if it is satisfactory. Second, different default policy designs vary in the ease with which decision-makers can deviate from the default. When switching from the preselected policy requires greater effort, decision-makers are more inclined to stick with the default (Jachimowicz et al. 2019). For instance, decision-makers may infer that it is easier to obtain a vaccine if they learn that “a vaccine has been reserved” for them. In sum, we theorize that presumed-consent language effects could be explained by an increase in perceived ease.

We suggest that the strength of these processes is context-dependent, which we further discuss in the next section.

Relative Mediating Strength of Endorsement and Ownership Moderated by Product Tangibility

The strength of each psychological process behind presumed-consent policy effects is likely to be context-specific (Dinner et al. 2011). In health contexts, we propose that presumed-consent language generally operates via perceived endorsement: Because consumers are not medical experts, they are likely to rely on endorsements and recommendations from medical professionals when facing a variety of health decisions. But even in health care contexts, other psychological processes may become more prominent, depending on whether the communication involves tangible products (e.g., “a dental cleaning pack has been reserved for you”) or intangible ones (e.g., “a dental cleaning appointment has been reserved for you”). More specifically, we predict that product tangibility moderates the relative strength of the underlying psychological processes. Tangible products can be experienced via the sense of touch, and they are more palpable and more material (Laroche, Bergeron, and Goutaland 2001). For example, a physical item (e.g., a medical record binder) is more tangible than its digital equivalent (e.g., a digital medical record), because the former can be physically touched, manipulated, or stored at home.

The materiality of tangible products increases their capacity to garner psychological ownership (Atasoy and Morewedge 2018; Peck and Shu 2009; Pierce, Kostova, and Dirks 2003). For instance, when considering a tangible product such as a toothbrush, consumers are more likely to experience psychological ownership when they learn that this product “has been reserved” for them. In contrast, consumers will have more difficulty experiencing ownership for intangible products (e.g., a skin check, a flu shot) because consumers cannot touch or store them. In sum, we posit that perceived endorsement plays a prominent mediating role in presumed-consent language effects, but that a process in terms of psychological ownership may become relatively stronger in the case of high product tangibility. We predict the following moderated mediation pattern:

H2a: The indirect effect of presumed-consent language (vs. explicit-consent language) on persuasion through perceived endorsement is stronger than through psychological ownership.

H2b: When product tangibility is high, the mediating role of psychological ownership becomes relatively stronger than that of perceived endorsement.

Overview of Studies

Our empirical evidence consists of five preregistered experiments and a study combining an experiment and a meta-analysis of randomized controlled trials (RCTs). The study overview is illustrated in Figure 2. The Internal Review Board of the first author’s university approved all experiments, and we obtained informed consent from all participants. Preregistrations for all studies, raw data, and R code are available at https://osf.io/qmjba.

We compare the effectiveness of presumed-consent language and presumed-consent policy in Study 1. We provide evidence for presumed-consent language effects (H1) with actual consent rates (Study 1), click-through behavior (Study 2), behavioral intentions (Studies 3–4), and secondary data on vaccination uptake (Study 5). The findings reveal that both perceived endorsement and psychological ownership mediate the effect of presumed-consent language on behavioral intentions, but the mediating role of perceived endorsement is significantly stronger (H2a, Studies 3a–b). Only when product tangibility is high (e.g., dental cleaning pack) do we find that the mediating role of psychological ownership is stronger than perceived endorsement (H2b, Study 4). Lastly, we provide evidence that presumed-consent language effects extend to real-world settings (H1, Studies 5a–b).

Study 1: Presumed-Consent Language and Policy Effects

Study 1 aims to provide evidence of presumed-consent language and policy effects in the context of a real skin cancer detection smartphone app. Following Johnson and Goldstein (2003), we use the effective consent rate as the dependent variable by comparing the number of people who opt in (in the explicit-consent policy condition) with the number of people who do not opt out (in the presumed-consent policy condition). Most importantly, we disentangle the effects of explicit versus presumed consent operationalized via policy and via language. Although we use a classic 2 × 2 design, we do not predict an interaction effect. Instead, we predict a positive conditional effect of presumed-consent language (vs. explicit-consent language) on the effective consent rate within the opt-in condition (H1). Additionally, we aim to replicate prior research on default effects (Jachimowicz et al. 2019); that is, we predict a positive main effect of presumed-consent policy (i.e., opt-out) over explicit-consent policy (i.e., opt-in). The study design and predictions were preregistered (https://osf.io/qmjba).

Method

We requested 1,200 and recruited 1,228 U.K.-based participants from Prolific Academic (753 female, 465 male, 10 nonbinary; Mage = 37.31 years). To increase realism given the smartphone app setting, we restricted participation to respondents accessing the study via their smartphones. We randomly assigned participants to one of four conditions in a 2 (language: explicit consent vs. presumed consent) × 2 (policy: explicit consent = opt-in vs. presumed consent = opt-out) between-participants design.

On the first page of the study, participants read about the skin cancer detection smartphone app, SkinScreener. We provided participants with an introduction including a visual explanation (see Web Appendix B). Below the explanation, we informed participants that “We are conducting a survey to better understand prospective patients,” and we added four filler questions to increase the realism of the study. Adapted from the literature on the Fitzpatrick scale (Eilers et al. 2013), we averaged four questions on hair color, skin color, freckle intensity, and sun sensitivity into a skin type scale (α = .70). Participants did not receive feedback on these skin type questions, such that the need for a skin check was unclear. We collected participants’ age and gender below the four filler questions.
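The skin type scale is an average of four items, and α reports their internal consistency (Cronbach's alpha). A minimal Python sketch of both steps follows. It assumes numeric item codes that all point in the same direction; the actual response options and any reverse coding are in Web Appendix B and are not reproduced here, and the function names and example responses are hypothetical.

```python
from statistics import mean, variance


def scale_scores(responses):
    """Average each participant's item responses into one scale score.

    responses: list of rows, one row per participant, one numeric code per item.
    """
    return [mean(row) for row in responses]


def cronbach_alpha(responses):
    """Cronbach's alpha = k / (k - 1) * (1 - sum of item variances / variance of totals)."""
    k = len(responses[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    items = list(zip(*responses))  # transpose rows into item columns
    item_var_sum = sum(variance(col) for col in items)
    total_var = variance([sum(row) for row in responses])
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    return k / (k - 1) * (1 - item_var_sum / total_var)


if __name__ == "__main__":
    # Hypothetical codes for hair color, skin color, freckle intensity, sun sensitivity.
    answers = [[1, 2, 1, 2], [3, 3, 2, 4], [4, 4, 4, 3], [2, 1, 2, 2], [5, 4, 5, 5]]
    print("scale scores:", scale_scores(answers))
    print(f"alpha = {cronbach_alpha(answers):.2f}")
```

Averaging rather than summing keeps the scale on the items' original response range; alpha is unaffected by that choice.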

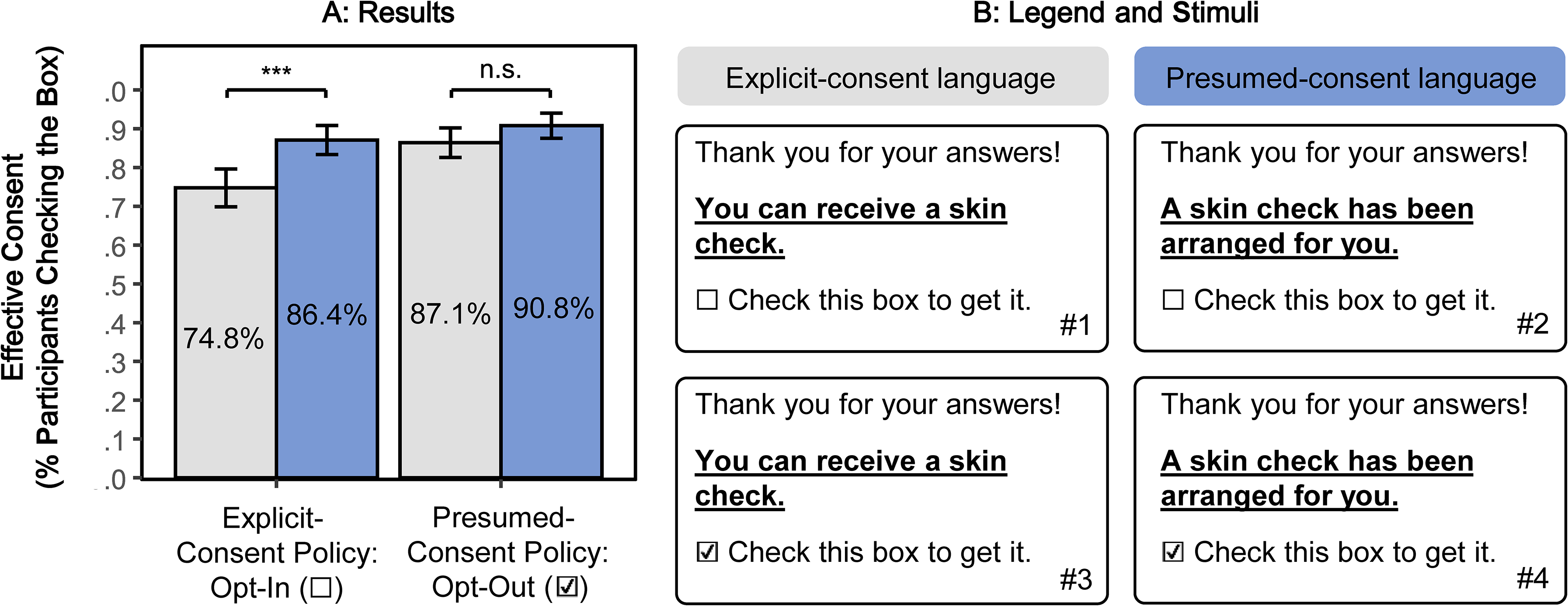

The second page of the study consisted of a message that included our key manipulations and the dependent variable (see Figure 3 and Web Appendix B). In the message, we thanked participants for their answers and informed them about the opportunity to get a skin check. In the explicit-consent language conditions, participants read, “You can receive a skin check.” In the presumed-consent language conditions, participants read, “A skin check has been arranged for you.” In the explicit-consent policy conditions, the checkbox next to “Check this box to get it” was unchecked, such that participants who wanted a skin check needed to check it (i.e., opt in). In the presumed-consent policy conditions, the checkbox was prechecked, such that participants who did not want a skin check needed to uncheck it (i.e., opt out). Our dependent variable was effective consent (i.e., whether the checkbox was checked or not), indicating whether participants agreed to get a skin check. Participants who consented received the link to download the SkinScreener app as well as an offer for a free skin check (https://skinscreener.com) on the next page. For exploratory purposes, we measured whether participants actually clicked on the link.

Figure 3. Presumed-Consent Language and Policy Effects (Study 1).

Results

Our main analysis consisted of a preregistered logistic regression with effective consent as the dependent variable (0 = no, 1 = yes) and language (−1/2 = explicit consent, 1/2 = presumed consent), policy (−1/2 = explicit consent: opt-in, 1/2 = presumed consent: opt-out), and their interaction as independent variables. We used ANOVA coding (rather than dummy coding) such that the coefficients for language and policy represent main effects (rather than conditional effects). First, we examined the main effects of presumed-consent policy and language. As predicted, and consistent with prior literature, there was a significant main effect of policy (β = .63, z(1,224) = 3.76, p < .001) such that effective consent was higher for presumed-consent policy (i.e., opt-out) than for explicit-consent policy (i.e., opt-in). Exploratory analyses revealed that the main effect of language was significant (β = .57, z(1,224) = 3.40, p < .001). Second, we further examined the conditional effects of presumed-consent language at different values of presumed-consent policy. As predicted, under an explicit-consent policy (i.e., opt-in), effective consent was significantly higher in the presumed-consent language condition than in the explicit-consent language condition (86.4% vs. 74.8%; β = .76, z(1,224) = 3.61, p < .001), supporting H1. However, exploratory analyses revealed that effective consent did not differ between the presumed-consent language condition and the explicit-consent language condition under a presumed-consent policy (i.e., opt-out) (90.8% vs. 87.1%; β = .38, z(1,224) = 1.45, p = .147), and that the language × policy interaction was not significant (β = −.38, z(1,224) = −1.15, p = .252). The results are illustrated in Figure 3.
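Because the 2 × 2 logistic model with the interaction term is saturated, its maximum-likelihood coefficients are exact functions of the four cell log-odds, which makes the ANOVA (±1/2) coding easy to check. The following Python sketch (function and key names are hypothetical) derives the main effects, the interaction, and the conditional language effects from cell proportions; plugging in the rounded cell percentages reported above recovers the reported coefficients to two decimals. Standard errors and z statistics would additionally require the cell sizes, which are not reported here.

```python
import math


def logit(p):
    """Log-odds of a proportion p (0 < p < 1)."""
    return math.log(p / (1 - p))


def effect_coded_logit(cells):
    """Coefficients of logit(P) = b0 + bL*L + bP*P + bLP*L*P with L, P coded -1/2 or +1/2.

    cells maps (language, policy) -> consent proportion, where language is
    "explicit" or "presumed" and policy is "opt-in" or "opt-out".
    """
    ee = logit(cells[("explicit", "opt-in")])
    pe = logit(cells[("presumed", "opt-in")])
    eo = logit(cells[("explicit", "opt-out")])
    po = logit(cells[("presumed", "opt-out")])
    lang_opt_in = pe - ee  # conditional language effect under opt-in
    lang_opt_out = po - eo  # conditional language effect under opt-out
    return {
        "intercept": (ee + pe + eo + po) / 4,
        "language": (lang_opt_in + lang_opt_out) / 2,  # main effect = average simple effect
        "policy": ((eo - ee) + (po - pe)) / 2,
        "interaction": lang_opt_out - lang_opt_in,
        "language | opt-in": lang_opt_in,
        "language | opt-out": lang_opt_out,
    }


if __name__ == "__main__":
    # Rounded cell percentages reported for Study 1.
    study1 = {
        ("explicit", "opt-in"): 0.748,
        ("presumed", "opt-in"): 0.864,
        ("explicit", "opt-out"): 0.871,
        ("presumed", "opt-out"): 0.908,
    }
    # Reproduces the reported betas to two decimals:
    # language .57, policy .63, interaction -.38, opt-in .76, opt-out .38.
    for name, value in effect_coded_logit(study1).items():
        print(f"{name}: {value:.2f}")
```

Under this coding, the language main effect (.57) is the average of the two conditional effects (.76 and .38), and the interaction (−.38) is their difference. This shows how a nonsignificant interaction can coexist with a significant language effect under opt-in and a nonsignificant one under opt-out.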

As a robustness check, we found that the effects held when we controlled for the skin type scale and that this scale did not moderate the effect of presumed-consent language or policy (see Web Appendix B). Lastly, exploratory analyses of the clicks on the download page link revealed no difference between the experimental conditions (see Web Appendix B).

Discussion

Study 1 provides initial support for the presumed-consent language effect under an explicit-consent policy (i.e., opt-in; H1). We also find that effective consent is higher under a presumed-consent policy (i.e., opt-out) relative to an explicit-consent policy (i.e., opt-in), consistent with prior literature (Jachimowicz et al. 2019). In sum, these results indicate that language and policy both contribute to increasing consent rates. Finally, we did not observe differences in click behaviors; however, it is important to emphasize that we only provided the download link to participants who had already indicated consent. The next study investigates the effect of presumed-consent language on click behaviors by providing the link to all participants.

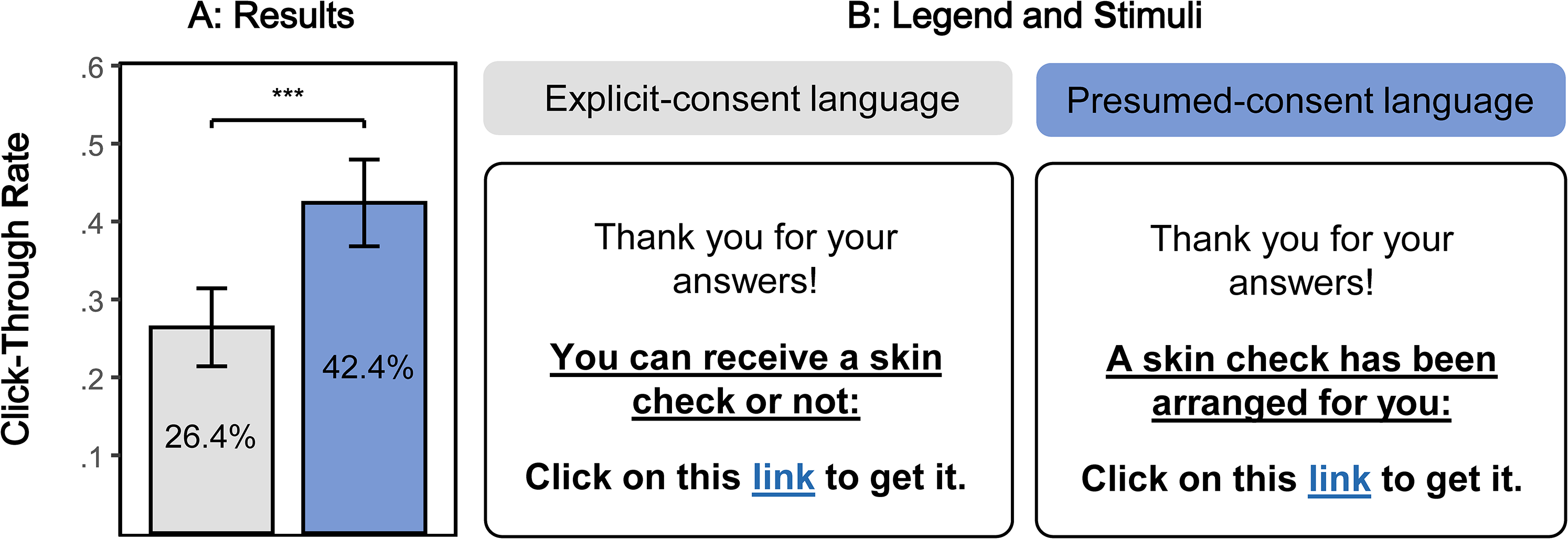

Study 2: Presumed-Consent Language Effect with Click-Through Behavior

The main goal of Study 2 is to replicate the effect of presumed-consent language (H1) with a realistic behavioral outcome. In this study, we use a pop-up message with the presumed-consent language manipulation, including a link to download the same skin cancer detection smartphone app used in Study 1. As a dependent measure, we surreptitiously measure how many participants click on the link. We predict that the click-through rate will be higher for presumed-consent language than for explicit-consent language. The study was preregistered (https://osf.io/qmjba).

Method

We requested 600 and recruited 601 U.K.-based participants from Prolific Academic (370 female, 228 male, 3 nonbinary; Mage = 39.20 years). As in Study 1, we again restricted participation to respondents accessing the study via their smartphones to increase realism given the app download setting. We randomly assigned participants to one condition of a two-cell (language: explicit-consent vs. presumed-consent) between-subjects design.

In the explicit-consent condition, participants read, “You can receive a skin check or not” (see Figure 4). We included “or not” to emphasize both choice options. Literature on active choice shows that when both choice options are explicit and equally salient (Keller et al. 2011), people are more likely to consent. Note that “or not” was absent in the explicit-consent conditions of Studies 1, 3b, and 4 and that its inclusion/exclusion did not affect the interpretation of our results in meaningful ways.

Figure 4. Presumed-Consent Language Effect with Click-Through Behavior (Study 2).

In the presumed-consent condition, we relied on multiple stimuli to ensure that our findings could be generalized (i.e., are not dependent on one idiosyncratic message). We randomly assigned participants to one of two messages (“A skin check has been arranged for you,” or “A skin check has been secured for you”). Because we did not preregister a comparison of the two messages, we present the results with the presumed-consent messages collapsed. Exploratory results by message are reported in Web Appendix C.

On the first page of the study, participants read about the skin cancer detection smartphone app SkinScreener, including an introduction and visual explanation (Web Appendix C). Below the explanation, we informed participants that “We are conducting a survey to better understand prospective patients,” and we added the skin type questions (α = .70) of Study 1 to increase the realism of the manipulation. Importantly, participants did not receive feedback on the skin type questions, such that the need for a skin check was unclear. Then, participants indicated their age and gender below the filler questions.

On the second page of the study, a pop-up message thanked participants for their answers and informed them about the opportunity to get a skin check, including a link to the smartphone app (see Figure 4 and Web Appendix C). The link led to the download page of the SkinScreener app, which offers a free skin check. Below the pop-up box, the “complete survey” button was displayed. The study ended as soon as participants either clicked on the link or clicked on the “complete survey” button. Our dependent variable is whether participants clicked on the link or not.

We performed a manipulation check with an independent sample of 100 U.K.-based Prolific Academic participants (59 female, 40 male, 1 nonbinary; Mage = 37.82 years). We assigned participants to the same between-subjects conditions as in the main study with one exception: On the pop-up message page, participants could not click on the link, and we asked them to evaluate perceived presumed consent (“The message seems to presume that I wish to follow the skin check recommendation,” “I feel like this message assumes that I already intend to get a skin check,” and “I feel like this message implies that getting a skin check is my default course of action”; 1 = “Not at all,” and 7 = “Very much”; α = .93; Mexplicit = 3.11, Mpresumed = 5.29; β = 2.18, t(99) = 7.16, p < .001).

Results

The preregistered analysis was a logistic regression with click (0 = did not click, 1 = clicked) as the dependent variable and language (0 = explicit consent, 1 = presumed consent) as the independent variable. As predicted, we found that presumed-consent language (vs. explicit-consent language) increased click-through rate (CTRexplicit = 26.4% vs. CTRpresumed = 42.4%; β = .72, z(599) = 4.09, p < .001). The results are illustrated in Figure 4. As a robustness check, we found that the effect holds when controlling for the skin type scale (β = .73, z(598) = 4.15, p < .001) and that there was no interaction between presumed-consent language and the skin type scale (p = .613).
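With a single 0/1 dummy predictor, the logistic regression is saturated as well, so β is simply the log odds ratio between the two observed click-through rates. A minimal Python check (the function name is hypothetical) using the rounded rates reported above:

```python
import math


def log_odds_ratio(p_treatment, p_control):
    """Logistic slope for a 0/1 dummy predictor: logit(p_treatment) - logit(p_control)."""
    return math.log(p_treatment / (1 - p_treatment)) - math.log(p_control / (1 - p_control))


if __name__ == "__main__":
    # Presumed-consent vs. explicit-consent click-through rates (Study 2).
    beta = log_odds_ratio(0.424, 0.264)
    print(f"beta = {beta:.2f}, odds ratio = {math.exp(beta):.2f}")
```

The result (β ≈ .72, odds ratio ≈ 2.05) matches the reported coefficient and implies that presumed-consent language roughly doubled the odds of clicking.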

Discussion

In Study 2, we find evidence for presumed-consent language effects by employing click-through behavior as a dependent measure (H1). In the next studies, we examine the underlying processes behind presumed-consent language effects.

Studies 3a–b: Presumed-Consent Language Effects Through Endorsement, Ownership, and Ease

The goal of Studies 3a–b is to compare the psychological processes underlying presumed-consent language effects. Relative to explicit-consent language (e.g., “you can get a flu shot or not”), presumed-consent language (e.g., “a flu shot has been made available to you”) may increase the effectiveness of persuasive communication through an increase in perceived endorsement, psychological ownership, and perceived ease. In contrast to the assumption that these messages operate via psychological ownership (Buttenheim et al. 2022; Dai et al. 2021; Rabb et al. 2022), we hypothesize that perceived endorsement will be a stronger psychological driver. Specifically, we predict that the indirect effect of presumed-consent language (vs. explicit-consent language) on behavioral intentions through perceived endorsement will be stronger than through psychological ownership (H2a) or perceived ease.

To investigate the mediating processes, we randomly allocate participants in Study 3a to rate only one of the potential mediators in a between-participants design. In Study 3b, we use a parallel mediation design and participants rate all potential mediators (in counterbalanced order). The two studies use different health domains (flu shot in Study 3a and skin check in Study 3b), different control messages (e.g., excluding “or not” in the explicit-consent condition), and samples from different populations (U.K.-based Prolific in Study 3a and U.S.-based Prolific in Study 3b). Studies 3a–b also extend presumed-consent language effects (H1) to a new dependent variable, behavioral intentions. Both studies were preregistered (https://osf.io/qmjba).
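Study 3b's design can be sketched as a standard parallel mediation model: for each mediator k, the indirect effect is a_k × b_k, where a_k is the effect of language (0 = explicit, 1 = presumed) on the mediator and b_k is the mediator's effect on behavioral intentions, controlling for language and the other mediators. H2a then concerns the difference between two indirect effects, which can be tested with a percentile bootstrap. The following is a minimal, dependency-free Python sketch under those assumptions (OLS paths, percentile bootstrap); the function names and simulated data are hypothetical, and the authors' preregistered R analysis may differ. It does not cover Study 3a, where each participant rated only one mediator.

```python
import random


def solve(a, b):
    """Solve the square linear system a @ x = b (Gaussian elimination, partial pivoting)."""
    n = len(b)
    m = [row[:] + [bi] for row, bi in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def ols(y, predictors):
    """OLS coefficients [intercept, b_1, ..., b_k] from the normal equations."""
    rows = [[1.0] + [p[i] for p in predictors] for i in range(len(y))]
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    return solve(xtx, xty)


def indirect_effects(x, mediators, y):
    """a_k (x -> mediator k) times b_k (mediator k -> y, given x and the other mediators)."""
    a = [ols(m, [x])[1] for m in mediators]
    b = ols(y, [x] + list(mediators))[2:]  # skip intercept and direct effect of x
    return [a_k * b_k for a_k, b_k in zip(a, b)]


def bootstrap_contrast(x, mediators, y, i=0, j=1, n_boot=1000, seed=1):
    """Percentile 95% bootstrap interval for indirect effect i minus indirect effect j."""
    rng = random.Random(seed)
    n = len(y)
    draws = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # resample participants with replacement

        def pick(values):
            return [values[k] for k in idx]

        ind = indirect_effects(pick(x), [pick(m) for m in mediators], pick(y))
        draws.append(ind[i] - ind[j])
    draws.sort()
    return draws[int(0.025 * n_boot)], draws[int(0.975 * n_boot) - 1]


if __name__ == "__main__":
    # Hypothetical simulated data; effect sizes are arbitrary, not the study's estimates.
    rng = random.Random(42)
    n = 300
    x = [float(rng.random() < 0.5) for _ in range(n)]  # 0 = explicit, 1 = presumed
    endorse = [0.8 * xi + rng.gauss(0, 1) for xi in x]
    own = [0.3 * xi + rng.gauss(0, 1) for xi in x]
    ease = [0.1 * xi + rng.gauss(0, 1) for xi in x]
    intent = [0.6 * e + 0.3 * o + 0.2 * s + rng.gauss(0, 1)
              for e, o, s in zip(endorse, own, ease)]
    meds = [endorse, own, ease]
    print("indirect effects:", [round(v, 3) for v in indirect_effects(x, meds, intent)])
    print("95% CI, endorsement minus ownership:",
          bootstrap_contrast(x, meds, intent, n_boot=500))
```

An interval for the endorsement-minus-ownership contrast that lies entirely above zero would be the pattern predicted by H2a.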

Method

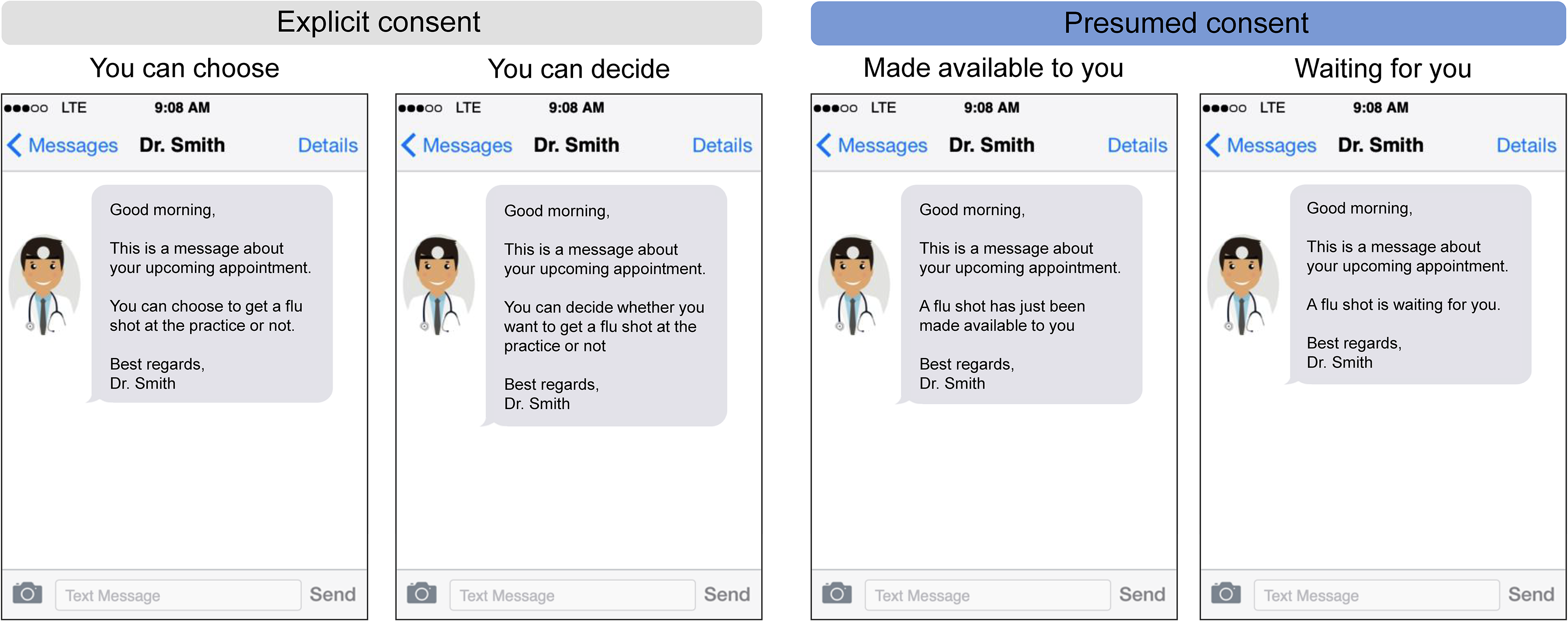

In this set of studies, we asked participants to imagine a situation in which they communicate with their physician “Dr. Smith” via text message (Study 3a) or email (Study 3b). To increase realism, we designed the text messages in Study 3a to look like actual text messages in a smartphone messaging app (see Figure 5). Full stimuli are presented in Web Appendices D–E.

Experimental Stimuli (Study 3a).

In Study 3a, we requested 600 and recruited 601 U.K.-based participants from Prolific Academic (345 female, 251 male, 5 nonbinary; Mage = 40.44 years). We randomly assigned participants to one condition of a 2 (language: explicit consent vs. presumed consent) × 3 (mediator: perceived endorsement vs. psychological ownership vs. perceived ease) between-participants design. In the presumed-consent conditions, we relied on two text messages based on prior research on vaccination uptake (Dai et al. 2021; Milkman et al. 2021, 2022). Participants in the presumed-consent conditions read one text message, randomly selected from a set of two messages: “A flu shot has just been made available to you” and “A flu shot is waiting for you.” In the explicit-consent conditions, participants also read one text message, randomly selected from a set of two messages: “You can decide whether you want to get a flu shot at the practice or not” and “You can choose to get a flu shot at the practice or not.” The stimuli are presented in Figure 5 and Web Appendix D.

In Study 3b, we requested 300 and recruited 306 U.S.-based participants from Prolific Academic (175 female, 129 male, 2 nonbinary; Mage = 36.07 years). We randomly assigned participants to one condition of a two-cell (language: explicit consent vs. presumed consent) between-participants design. In the presumed-consent condition, participants read the email message, “A skin check has been arranged for you,” and in the explicit-consent condition, “You can get a skin check.” The stimuli are presented in Web Appendix E.

To investigate the mediating processes in Study 3a, we randomly assigned participants to rate perceived endorsement, psychological ownership, or perceived ease in a between-subjects design to avoid common method bias (Podsakoff et al. 2003). This design allows us to measure the three psychological processes in an unconfounded manner (i.e., without spillover effects from one rating scale to the next). In Study 3b, we used a within-participants design and presented all potential mediators in random order.

We measured perceived endorsement with two items: “To what extent does the message make you feel that getting a flu vaccination/skin check is a favorable recommendation from the doctor?” and “To what extent does the message make you feel that the doctor recommends to get a flu shot/skin check?” (1 = “Not at all,” and 7 = “Very much”; Study 3a: α = .92, Study 3b: α = .88). The scale was adapted from Dinner et al. (2011) and Nolte and Löckenhoff (2023).

We measured psychological ownership with two items: “To what extent does the message make you feel that the flu shot/skin check is yours?” and “To what extent does the message make you feel that you own the flu shot/skin check?” (1 = “Not at all,” and 7 = “Very much”; Study 3a: α = .83, Study 3b: α = .83). The scale was adapted from Peck and Shu (2009).

We measured perceived ease with two items: “To what extent does the message make you feel that getting a flu shot/skin check is easy?” and “To what extent does the message make you feel that getting a flu shot/skin check is convenient?” (1 = “Not at all,” and 7 = “Very much”; Study 3a: α = .90; Study 3b: α = .86). The scale was adapted from Dinner et al. (2011) and Nolte and Löckenhoff (2023).

Next, we assessed our key dependent variable, behavioral intentions (“To what extent does this message make you want to get a flu shot/skin check?” and “To what extent does this message make you intend to get a flu shot/skin check?”; 1 = “Not at all,” and 7 = “Very much”; Study 3a: α = .95; Study 3b: α = .90). The correlations between all measures are presented in Web Appendix G.
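The reliability coefficients reported for each two-item scale are Cronbach's alpha values, and each scale score is the average of its items. As a minimal sketch using only the Python standard library, both could be computed as below; the function names and the list-of-lists data layout are illustrative assumptions, not the authors' analysis code.

```python
from statistics import pvariance


def cronbach_alpha(items):
    """Cronbach's alpha for k items.

    items: list of k equal-length lists; items[j][i] is respondent i's
    rating on item j (e.g., 1-7 scale points).
    """
    k = len(items)
    if k < 2:
        raise ValueError("alpha needs at least two items")
    n = len(items[0])
    # Summed scale score for each respondent
    totals = [sum(item[i] for item in items) for i in range(n)]
    # Sum of the separate item variances
    item_var_sum = sum(pvariance(item) for item in items)
    return k / (k - 1) * (1 - item_var_sum / pvariance(totals))


def scale_score(items):
    """Composite score per respondent: the mean of that respondent's item ratings."""
    k = len(items)
    return [sum(item[i] for item in items) / k for i in range(len(items[0]))]
```

Perfectly redundant items yield alpha = 1, and uncorrelated items yield alpha = 0, which brackets the .83 to .95 values reported above.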

Finally, we collected a manipulation check by measuring perceived presumed consent using the three-item scale from Studies 1–2 (Study 3a: α = .94; Mexplicit = 2.85, Mpresumed = 5.68; β = 2.82, t(599) = 24.15, p < .001; Study 3b: α = .86; Mexplicit = 4.14, Mpresumed = 5.74; β = 1.59, t(304) = 10.67, p < .001), as well as gender and age. Additionally, we included a measure of perceived reciprocity in Study 3b as an exploratory alternative account (see Web Appendix E).

Results

Separate mediation analyses

Following our preregistered plan, we estimated the indirect effect of presumed-consent language (vs. explicit-consent language) on behavioral intentions through (1) perceived endorsement, (2) psychological ownership, and (3) perceived ease in three separate mediation analyses (PROCESS Model 4, Hayes 2017). We label the independent variable to mediator relationship as a-path, the mediator to outcome as b-path, the indirect effect as a × b, and the independent variable to outcome as c-path (Hayes 2017).
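The a-, b-, and c-path labels map onto ordinary least squares regressions. The NumPy sketch below approximates what a single-mediator model in the spirit of PROCESS Model 4 estimates: a from regressing the mediator on the dummy-coded language condition, b and the direct effect c′ from regressing the outcome on both, the total effect c from regressing the outcome on the condition alone, and a percentile bootstrap interval for a × b. It is not the PROCESS macro itself; the helper names, the seed, and the default of 5,000 resamples are assumptions for illustration.

```python
import numpy as np


def ols(y, *predictors):
    """OLS coefficients [intercept, slope_1, slope_2, ...]."""
    X = np.column_stack([np.ones(len(y)), *predictors])
    return np.linalg.lstsq(X, y, rcond=None)[0]


def mediation_paths(x, m, y):
    """Single-mediator model X -> M -> Y with a 0/1 condition x."""
    a = ols(m, x)[1]                  # a-path: condition -> mediator
    _, c_prime, b = ols(y, x, m)      # direct effect c' and b-path mediator -> outcome
    c = ols(y, x)[1]                  # total effect c: condition -> outcome
    return {"a": a, "b": b, "c_prime": c_prime, "c": c, "ab": a * b}


def bootstrap_indirect_ci(x, m, y, n_boot=5000, level=0.95, seed=0):
    """Percentile bootstrap confidence interval for the indirect effect a * b."""
    rng = np.random.default_rng(seed)
    n = len(x)
    draws = np.empty(n_boot)
    for i in range(n_boot):
        idx = rng.integers(0, n, size=n)  # resample participants with replacement
        draws[i] = mediation_paths(x[idx], m[idx], y[idx])["ab"]
    tail = (1 - level) / 2
    return np.quantile(draws, [tail, 1 - tail])
```

With OLS on the same sample, the total effect decomposes exactly as c = c′ + a × b, which makes a convenient sanity check on any implementation. An indirect effect is typically called significant when the bootstrap interval excludes zero, as with the intervals reported below.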

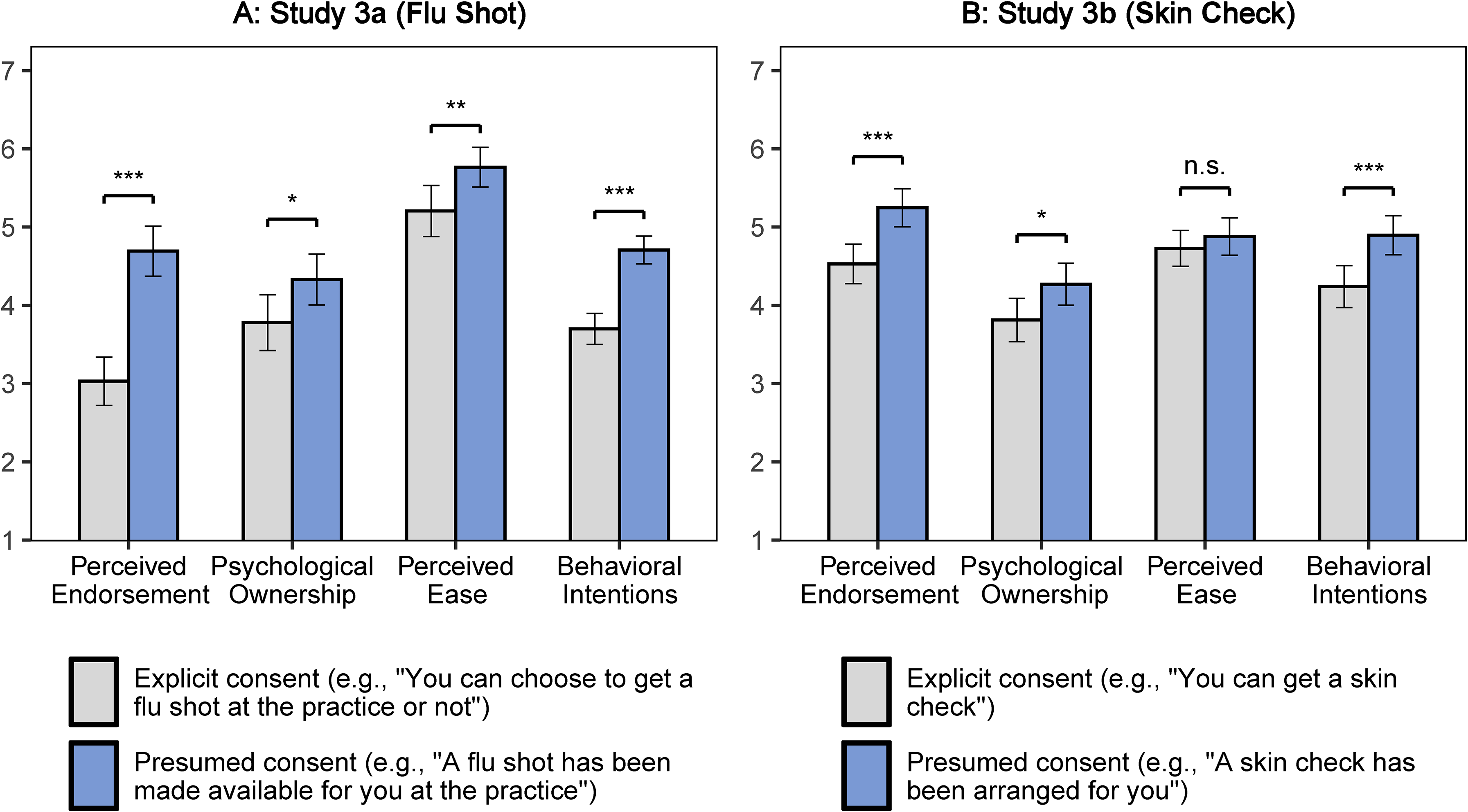

First, we found that presumed-consent language (vs. explicit-consent language) had a significant influence on perceived endorsement (Study 3a: Mexplicit = 3.03, Mpresumed = 4.69; aEND = 1.66, t(195) = 7.29, p < .001; Study 3b: Mexplicit = 4.53, Mpresumed = 5.25; aEND = .72, t(304) = 4.03, p < .001) and on psychological ownership (Study 3a: Mexplicit = 3.77, Mpresumed = 4.33; aOWN = .55, t(200) = 2.24, p = .026; Study 3b: Mexplicit = 3.81, Mpresumed = 4.27; aOWN = .46, t(304) = 2.33, p = .021). In Study 3a, there was also a significant effect on perceived ease (Mexplicit = 5.21, Mpresumed = 5.77; aEAS = .56, t(200) = 2.65, p = .009), though this effect was nonsignificant in Study 3b (Mexplicit = 4.73, Mpresumed = 4.88; aEAS = .15, t(304) = .91, p = .365). The findings are illustrated in Figure 6.

Average Psychological Reactions (Studies 3a–b).

Second, we found that the relationship between perceived endorsement and behavioral intentions was positive and significant (Study 3a: bEND = .67, t(194) = 12.40, p < .001; Study 3b: bEND = .64, t(303) = 13.34, p < .001), the relationship between psychological ownership and behavioral intentions was positive and significant (Study 3a: bOWN = .51, t(199) = 8.77, p < .001; Study 3b: bOWN = .45, t(303) = 9.47, p < .001), and the relationship between perceived ease and behavioral intentions was positive and significant (Study 3a: bEAS = .61, t(199) = 9.24, p < .001; Study 3b: bEAS = .68, t(303) = 13.49, p < .001).

Third, as predicted, the indirect effect through perceived endorsement was positive and significant (Study 3a: aEND × bEND = 1.11, 95% CI = [.78, 1.47]; Study 3b: aEND × bEND = .46, 95% CI = [.22, .70]). A similar analysis showed an indirect effect through psychological ownership (Study 3a: aOWN × bOWN = .28, 95% CI = [.03, .53]; Study 3b: aOWN × bOWN = .21, 95% CI = [.03, .40]). The indirect effect through perceived ease was significant in Study 3a (aEAS × bEAS = .34, 95% CI = [.10, .64]) and nonsignificant in Study 3b (aEAS × bEAS = .10, 95% CI = [−.12, .34]).

In an exploratory analysis, we also found mediating effects of perceived reciprocity, which was measured as an additional scale in Study 3b (see Web Appendix E for details; given the low internal consistency of the two items, we did not merge them: item 1: aRE1 × bRE1 = .23, 95% CI = [.10, .40]; item 2: aRE2 × bRE2 = .22, 95% CI = [.02, .44]).

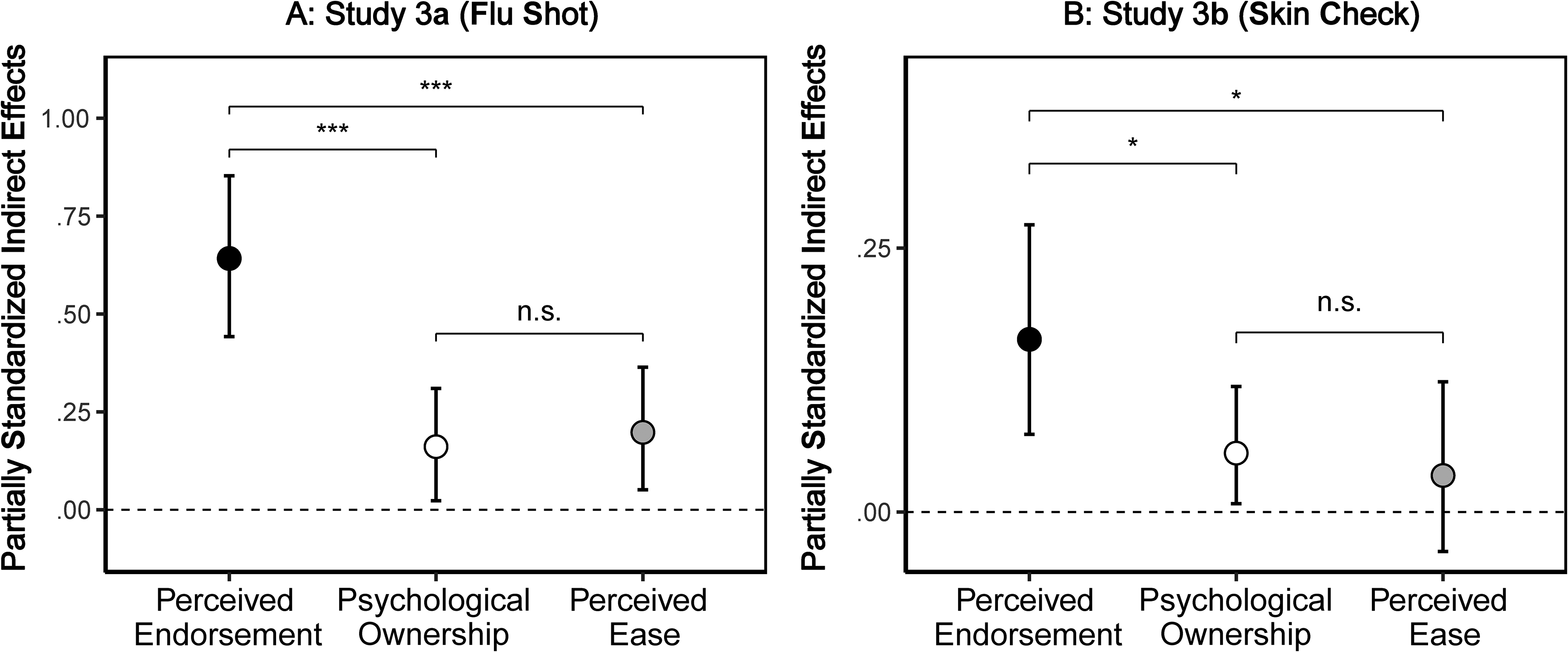

Comparing indirect effects

In a second preregistered analysis, because the mediators in Study 3a were measured in a between-subjects design, we compared the mediation paths using the pooled data with a moderated mediation model that included moderation on the a-, b-, and c-paths (PROCESS Model 59, Hayes 2017). Because every path is moderated, the indirect effects from this model are the same as those estimated separately by condition in the first step. To compare the indirect effects, we standardized all mediator and outcome measures before the analyses. Because the independent variable is binary and dummy-coded, the partially standardized indirect effects can be interpreted as the mediated change in the outcome in standard deviation units (Pieters 2017). As predicted, the partially standardized indirect effect through perceived endorsement was statistically stronger than that through psychological ownership (Δ = aEND × bEND − aOWN × bOWN = .48, 95% CI = [.24, .73]) and perceived ease (Δ = aEND × bEND − aEAS × bEAS = .44, 95% CI = [.19, .70]). The partially standardized indirect effects are plotted in Figure 7, Panel A.
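Two details in this analysis can be made concrete. First, with a 0/1 independent variable, z-scoring the mediator leaves a × b unchanged (a shrinks by the mediator's standard deviation while b grows by the same factor), so the partially standardized indirect effect is effectively a × b divided by the outcome's standard deviation. Second, because each mediator in Study 3a was rated by a different subsample, a difference between two indirect effects can be bootstrapped by resampling each subsample independently. The NumPy sketch below illustrates both under stated assumptions: it standardizes within each subsample and uses a stratified percentile bootstrap, which approximates, but is not identical to, the pooled PROCESS Model 59 analysis reported above. Function names are hypothetical.

```python
import numpy as np


def zscore(v):
    return (v - v.mean()) / v.std(ddof=1)


def ps_indirect(x, m, y):
    """Partially standardized indirect effect: x stays 0/1, m and y are z-scored."""
    m, y = zscore(m), zscore(y)
    ones = np.ones(len(x))
    a = np.linalg.lstsq(np.column_stack([ones, x]), m, rcond=None)[0][1]
    b = np.linalg.lstsq(np.column_stack([ones, x, m]), y, rcond=None)[0][2]
    return a * b


def diff_independent(g1, g2, n_boot=5000, seed=0):
    """ps_indirect(g1) - ps_indirect(g2) for two independent subsamples
    (e.g., participants who rated endorsement vs. those who rated ownership).
    Each g is a tuple (x, m, y); each subsample is resampled separately."""
    rng = np.random.default_rng(seed)

    def resample(g):
        n = len(g[0])
        idx = rng.integers(0, n, size=n)
        return [v[idx] for v in g]

    draws = np.array([ps_indirect(*resample(g1)) - ps_indirect(*resample(g2))
                      for _ in range(n_boot)])
    point = ps_indirect(*g1) - ps_indirect(*g2)
    return point, np.quantile(draws, [0.025, 0.975])
```

Rescaling the mediator (for example, multiplying it by 10) leaves `ps_indirect` unchanged, which is why only the outcome's scale matters for comparability across mediators.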

Presumed-Consent Language Effects Through Perceived Endorsement, Psychological Ownership, and Perceived Ease (Studies 3a–b).

For Study 3b, we preregistered a parallel mediation model including perceived endorsement, psychological ownership, and perceived ease, with standardized measures for the mediators and the outcome (PROCESS Model 4, Hayes 2017). Supporting our hypothesis, the partially standardized indirect effect through perceived endorsement was statistically stronger than that through psychological ownership (Δ = aEND × bEND − aOWN × bOWN = .11, 95% CI = [.01, .21]) and perceived ease (Δ = aEND × bEND − aEAS × bEAS = .13, 95% CI = [.02, .23]). The partially standardized indirect effects are plotted in Figure 7, Panel B.
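In a parallel mediation model, all three mediators enter the outcome regression together, so each b-path is estimated while holding the other mediators constant, and a contrast between two indirect effects is bootstrapped from the same resamples. A minimal NumPy sketch, assuming the mediators and outcome have already been z-scored (so the indirect effects are partially standardized) and using hypothetical function names:

```python
import numpy as np


def fit(y, *cols):
    """OLS coefficients [intercept, slope for each column in order]."""
    X = np.column_stack([np.ones(len(y)), *cols])
    return np.linalg.lstsq(X, y, rcond=None)[0]


def parallel_indirect(x, mediators, y):
    """a_j * b_j for each mediator when all mediators enter one outcome model."""
    a = np.array([fit(m, x)[1] for m in mediators])  # condition -> mediator j
    b = fit(y, x, *mediators)[2:]                     # mediator j -> outcome, controlling for x and other mediators
    return a * b


def contrast(x, mediators, y, j, k, n_boot=5000, seed=0):
    """Indirect effect via mediator j minus via mediator k, with a
    percentile bootstrap CI (participants resampled with replacement)."""
    rng = np.random.default_rng(seed)
    n = len(x)
    draws = np.empty(n_boot)
    for i in range(n_boot):
        idx = rng.integers(0, n, size=n)
        ind = parallel_indirect(x[idx], [m[idx] for m in mediators], y[idx])
        draws[i] = ind[j] - ind[k]
    ind = parallel_indirect(x, mediators, y)
    return ind[j] - ind[k], np.quantile(draws, [0.025, 0.975])
```

Computing the contrast within each resample, rather than comparing two separate intervals, accounts for the correlation between the two indirect effects that arises because they share the same participants.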

Discussion

Studies 3a–b provide evidence for the mediating role of perceived endorsement in the effect of presumed-consent language on behavioral intentions. While the mediation analyses also provide support for an interpretation in terms of psychological ownership, we find that perceived endorsement seems to be a more pertinent psychological reaction to presumed-consent language (H2a). Finally, we find mixed evidence for the mediating role of perceived ease. We contend that presumed-consent language effects may be less likely to be explained in terms of ease because we hold the policy constant across our studies (i.e., opt-in), while prior literature established that ease could differ between default policies (i.e., opt-in vs. opt-out).

Study 4: Moderated Mediation by Product Tangibility

The main goal of Study 4 is to test the prediction that the stronger mediating role of perceived endorsement (vs. psychological ownership) reverses as product tangibility moves from low to high (H2). Study 4 also generalizes presumed-consent language effects across different product categories. The study was preregistered (https://osf.io/qmjba).

Method

We requested 800 and recruited 799 U.K.-based participants from Prolific Academic (385 female, 404 male, 10 nonbinary; Mage = 39.91 years). We randomly assigned participants to one condition of a 2 (language: explicit-consent vs. presumed-consent) × 2 (product tangibility: high vs. low) between-subjects design.

Using a similar scenario as in Study 3b, we told participants to examine an email conversation with their medical practice. In the explicit-consent condition, participants read, “You can decide whether you want to get a [target] at the practice.” In the presumed-consent condition, participants read, “A [target] has been reserved for you at the practice.”

We manipulated product tangibility in three different health domains to increase external validity. In the low-tangibility (vs. high-tangibility) condition, we randomly assigned participants to one of the three targets: “dental cleaning treatment” (vs. “dental cleaning pack”), “skin check” (vs. “sunscreen lotion”), and “COVID-19 booster shot” (vs. “digital thermometer”). In the high-tangibility condition, a picture of the product was presented to participants to increase perceived tangibility. Stimuli are presented in Web Appendix F.

We used the same scales as in Studies 3a–b for all of our measures: Participants rated perceived endorsement (α = .92), psychological ownership (α = .89), and perceived ease (α = .84), presented on separate pages in counterbalanced order. Next, participants rated behavioral intentions (α = .94) and, finally, perceived presumed consent, which served as a manipulation check (α = .92; Mexplicit = 3.27, Mpresumed = 5.70; β = 2.43, t(797) = 24.59, p < .001). We collected participants’ gender and age at the end.

Results

The preregistered analysis was a moderated mediation model with (1) language as the independent variable (0 = explicit consent, 1 = presumed consent), (2) behavioral intentions as the dependent variable, (3) perceived endorsement, psychological ownership, and perceived ease as mediators, and (4) product tangibility (0 = low, 1 = high) as the moderator, using standardized measures for mediators and outcome. As preregistered, we modeled the moderation of product tangibility on all mediating paths (PROCESS Model 59 with 5,000 bootstrap samples, Hayes 2017). Because every path is moderated, the conditional indirect effects in this model are the same as the indirect effects estimated separately within each tangibility condition.
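Because tangibility moderates every path, the conditional indirect effects can be reproduced by fitting the parallel mediation model separately within each tangibility condition. The sketch below uses that equivalence to compute Δ = aEND × bEND − aOWN × bOWN in each condition and the difference Δintangible − Δtangible. The data layout, function names, and the choice to resample within each condition (a stratified bootstrap, which may differ from how PROCESS resamples) are assumptions for illustration.

```python
import numpy as np


def fit(y, *cols):
    """OLS coefficients [intercept, slope for each column in order]."""
    X = np.column_stack([np.ones(len(y)), *cols])
    return np.linalg.lstsq(X, y, rcond=None)[0]


def end_minus_own(x, end, own, eas, y):
    """Delta = a_END*b_END - a_OWN*b_OWN from a parallel model with three mediators."""
    mediators = [end, own, eas]
    a = np.array([fit(m, x)[1] for m in mediators])
    b = fit(y, x, *mediators)[2:]
    ind = a * b
    return ind[0] - ind[1]


def tangibility_index(data, w, n_boot=5000, seed=0):
    """Delta(low tangibility) - Delta(high tangibility).

    data: dict of equal-length arrays with keys x, end, own, eas, y
    w: 0/1 array, 0 = low tangibility, 1 = high tangibility
    """
    keys = ["x", "end", "own", "eas", "y"]
    groups = [[data[k][w == g] for k in keys] for g in (0, 1)]
    rng = np.random.default_rng(seed)

    def boot(g):
        idx = rng.integers(0, len(g[0]), size=len(g[0]))
        return [v[idx] for v in g]

    point = end_minus_own(*groups[0]) - end_minus_own(*groups[1])
    draws = [end_minus_own(*boot(groups[0])) - end_minus_own(*boot(groups[1]))
             for _ in range(n_boot)]
    return point, np.quantile(draws, [0.025, 0.975])
```

A positive index with an interval excluding zero corresponds to the predicted reversal: endorsement dominates under low tangibility and ownership dominates under high tangibility.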

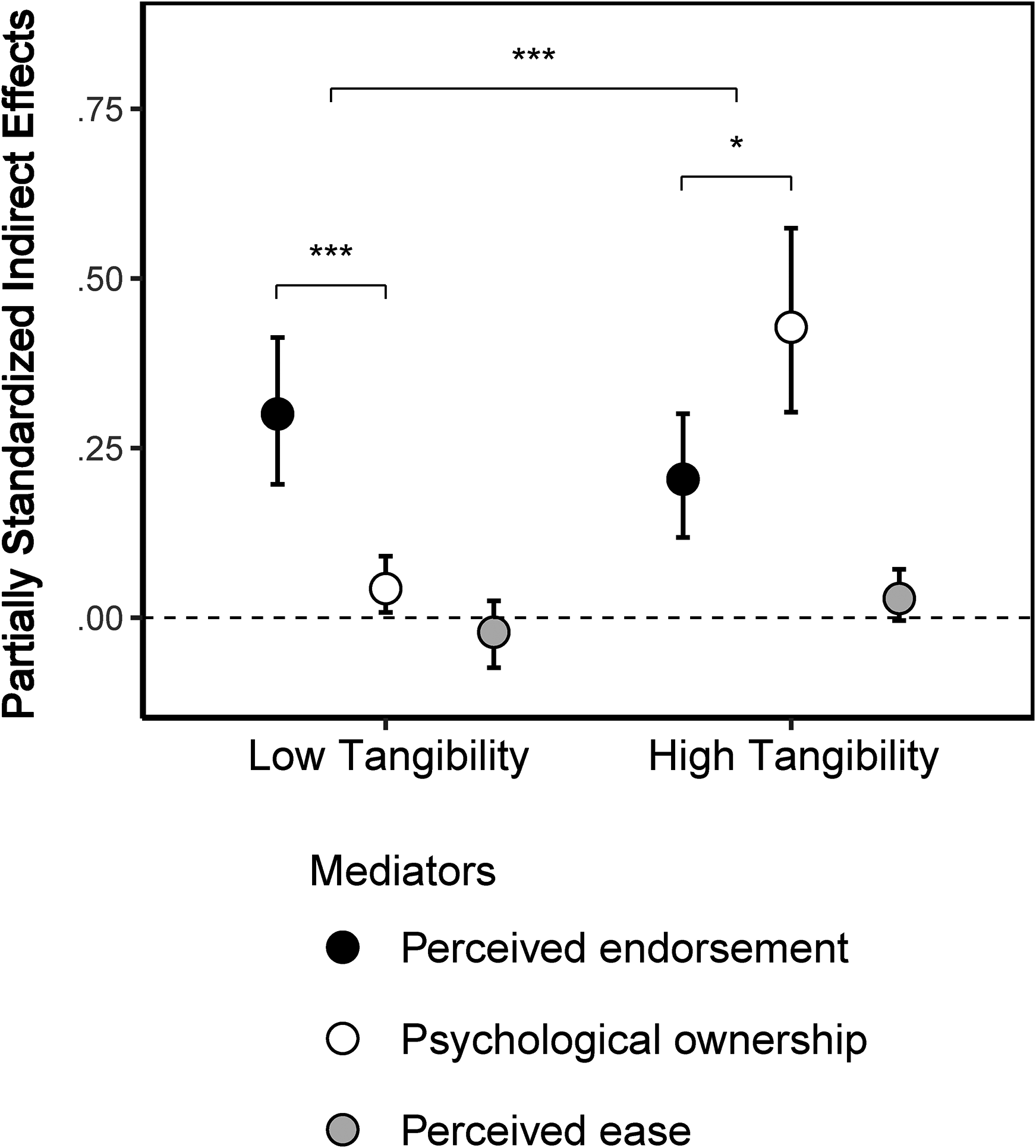

As predicted and consistent with H2, we found a significant reversal in the relative strength of perceived endorsement over psychological ownership (Δintangible − Δtangible = .48, 95% CI = [.27, .69]). The partially standardized conditional indirect effects from the preregistered analysis are plotted in Figure 8. We next unpack this moderated mediation within each tangibility condition.

Moderated Mediation by Product Tangibility (Study 4).

In the low-product-tangibility condition, the conditional indirect effect of presumed consent on behavioral intentions was larger through perceived endorsement (aEND × bEND = .30, 95% CI = [.20, .42]) than through psychological ownership (aOWN × bOWN = .04, 95% CI = [.01, .09]; Δintangible = aEND × bEND − aOWN × bOWN = .26, 95% CI = [.15, .38]). This is consistent with H2a and replicates Studies 3a–b.

In the high-product-tangibility condition, the conditional indirect effect of presumed consent on behavioral intentions was smaller through perceived endorsement (aEND × bEND = .20, 95% CI = [.12, .30]) than through psychological ownership (aOWN × bOWN = .43, 95% CI = [.30, .57]; Δtangible = aEND × bEND − aOWN × bOWN = −.22, 95% CI = [−.41, −.04]). This is consistent with H2b.

Exploratory analyses revealed that the pattern of indirect effects was similar in the dental and skin domains (Web Appendix F). However, the mediating role of psychological ownership did not differ between the COVID-19 booster shot and the digital thermometer; it was nonsignificant in both conditions. We speculate that this result may be due to the word “digital,” which could sound intangible, or to the fact that a thermometer is used less often than a toothbrush or sunscreen.

Lastly, we report additional analyses pooled across the tangibility conditions in Web Appendix F. First, we report the main effects of presumed-consent language (vs. explicit-consent language) on all dependent measures. Second, we report the indirect effects of presumed-consent language (vs. explicit-consent language) on behavioral intentions through each mediator.

Discussion

In Study 4, we demonstrate that the relative mediating roles of perceived endorsement and psychological ownership reverse from low product tangibility (e.g., dental cleaning treatment) to high product tangibility (e.g., dental cleaning pack). This pattern of results provides empirical support for H2. Note that in the high-tangibility condition, a picture of the product was presented to participants to increase perceived tangibility. Prior research suggests using pictures or symbols to make services seem more tangible (Koernig 2003), and researchers have manipulated tangibility through the presence versus absence of pictures (Darke et al. 2016; Ding and Keh 2017). However, presenting a picture in only two of the four experimental conditions could introduce a confound. Future research may further investigate the influence of presumed-consent language using different types of tangibility manipulations.

Studies 5a–b: Correlational Evidence of Presumed-Consent Language Effects in Field Settings

In the previous studies, we relied on experimental data to establish causal evidence for presumed-consent language effects, their underlying psychological processes, and a boundary condition. The goal of Studies 5a–b is to provide initial evidence that presumed-consent language effects (H1) matter in field settings. To do so, we combine an experiment on how 60 different text messages influence perceived presumed consent (Study 5a) with meta-analytic data on message-level effectiveness in terms of vaccination uptake (i.e., intervention arms with different text messages) from eight RCTs involving 1.16 million participants (Study 5b). We explore the relationship between the average message-level perceived presumed consent (Study 5a) and message-level effectiveness (Study 5b).

Method

We performed a systematic search on February 24, 2022, using the following bibliographic search engines: PubMed, ScienceDirect, and PsycInfo. Search terms are detailed in Web Appendix I. We reviewed the lists of titles and abstracts and used the inclusion criteria to select potentially relevant articles for full review. We back-searched the reference lists for additional studies. The PRISMA flowchart is presented in Web Appendix H. The screening database (including all articles and reasons for exclusion) is available on our OSF repository (https://osf.io/qmjba).

We included articles in the final list if they (1) included a patient-directed text messaging intervention, (2) included adult participants (e.g., we excluded studies on children), (3) reported vaccination rates (e.g., we excluded studies on vaccination intentions), (4) included an RCT in which participants were randomly assigned to a control or an intervention condition (e.g., we excluded observational studies), and (5) did not include a multifaceted intervention in which the message intervention was confounded with other interventions (e.g., face-to-face). Overall, we included eight RCTs from seven articles (Buttenheim et al. 2022; Dai et al. 2021; Herrett et al. 2016; Milkman et al. 2021, 2022; Rabb et al. 2022; Regan et al. 2017). Note that we identified 60 unique text messages used in 63 experimental arms because two text messages were used in several experimental arms (see Web Appendix I).

Study 5a: Experiment

We asked participants to rate the 60 text messages that we identified in the systematic review. We requested and recruited 1,500 U.K.-based participants from Prolific Academic (790 female, 703 male, 7 nonbinary; Mage = 41.87 years). Participants were randomly assigned to rate 2 of the 60 messages because we aimed to have 50 independent ratings of each of the 60 messages. We excluded 57 participants who spent less than 10 seconds on either of the two message evaluation pages. Thus, the final sample consisted of 2,886 evaluations from 1,443 participants. Note that the pattern of results is similar when we include all participants.

We mimicked a text message design and reframed the original messages with generic terms, so that, for instance, we did not mention medical locations (instead, we used the generic hospital name “Medical Care Hospital”) and referred to practitioners with the name “Dr. Smith.” As some messages of the database included the patient's name, we first asked participants to indicate their first name, which we mentioned would be deleted from the data for anonymity. We present details on how we created generic content and how we introduced the study in Web Appendix J.

We measured perceived presumed consent with the three-item scale used in our prior studies (“The message seems to presume that I wish to follow the flu recommendation,” “I feel like this message assumes that I already intend to get a flu vaccine,” and “I feel like this message implies that getting a flu shot is my default course of action”; 1 = “Not at all,” and 7 = “Very much”). After checking the scale reliability (α = .92), we computed perceived presumed consent as the average of the three items. Next, we estimated the message-level mean perceived presumed consent with a mixed-effects regression with random intercepts for participants.

Study 5b: Meta-analysis

In this meta-analysis, we evaluated the effect of the 60 text messages on actual vaccination uptake. Based on the aggregated vaccination data reported in the RCTs, we recreated the data at the individual level. For each RCT, we used the analytical procedure used in most of these RCTs, that is, an ordinary least squares (OLS) regression with robust standard errors and vaccination outcome (yes or no) as the dependent variable; the results are similar using logistic regression. For each RCT including K conditions (including the control), we included K − 1 dummies, leaving the control condition as the reference category. The estimated coefficients represent the percentage-point increase in vaccination rate from the control condition to each intervention message. All effect sizes are plotted in Web Appendix K. When the messages were used in several experimental arms (see Web Appendix I), we aggregated the experimental arms. Note that we used a weighted OLS regression for the RCT in Rabb et al. (2022) given its iterative block-level randomization design (see OSF repository for code and analyses; https://osf.io/qmjba). When articles presented several outcome measurement lengths (e.g., vaccination rate after 7 days vs. vaccination rate after 28 days), we selected the longest time frame because it yielded more conservative estimates (Dai et al. 2021).
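Recreating individual-level data from reported counts and regressing the 0/1 outcome on K − 1 condition dummies is a linear probability model, so each coefficient is simply the difference between an arm's vaccination rate and the control rate, expressed in percentage points once multiplied by 100. The NumPy sketch below illustrates this with heteroskedasticity-robust standard errors. The paper does not specify which robust-variance variant was used; HC0 is assumed here for simplicity (Stata's `robust` option, for example, applies the HC1 small-sample correction), and the input format and function names are hypothetical.

```python
import numpy as np


def expand(arms):
    """Rebuild individual 0/1 outcomes from aggregated counts.

    arms: list of (n_vaccinated, n_total); arms[0] is the control arm.
    """
    y, arm = [], []
    for k, (vaccinated, total) in enumerate(arms):
        y += [1.0] * vaccinated + [0.0] * (total - vaccinated)
        arm += [k] * total
    return np.array(y), np.array(arm)


def lpm_effects(arms):
    """OLS of vaccination (0/1) on K-1 arm dummies with control as reference.
    Returns effects and HC0 robust standard errors, both in percentage points."""
    y, arm = expand(arms)
    X = np.column_stack([np.ones(len(y))] +
                        [(arm == k).astype(float) for k in range(1, len(arms))])
    bread = np.linalg.inv(X.T @ X)
    beta = bread @ X.T @ y
    resid = y - X @ beta
    meat = X.T @ (X * resid[:, None] ** 2)       # sum of x_i x_i' e_i^2
    se = np.sqrt(np.diag(bread @ meat @ bread))  # HC0 sandwich estimator
    return 100 * beta[1:], 100 * se[1:]
```

For example, with made-up counts `lpm_effects([(300, 1000), (330, 1000)])` yields an effect of 3 percentage points (33% vs. 30%). In this saturated dummy model, the HC0 standard error equals the familiar unpooled standard error for a difference in proportions.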

We also coded for several control variables that may explain variation in effect sizes. Two covariates significantly explained heterogeneity in effect size (see Web Appendix L): (1) whether a single text message (vs. multiple messages) was sent, as prior research found higher effectiveness for messages sent on multiple days (Milkman et al. 2022), and (2) whether the intervention was launched early or late in the vaccination campaign (Rabb et al. 2022). In fact, Rabb et al. (2022) found that eight messages failed to improve vaccination uptake, and they speculate that this result is explained by the context of the study rather than the content of the messages. Whereas all other RCTs were launched at the beginning of the vaccination campaign, Rabb et al. launched their RCT when 75% of the adult population was already vaccinated against COVID-19 (600,000 out of 800,000 Rhode Island adults).

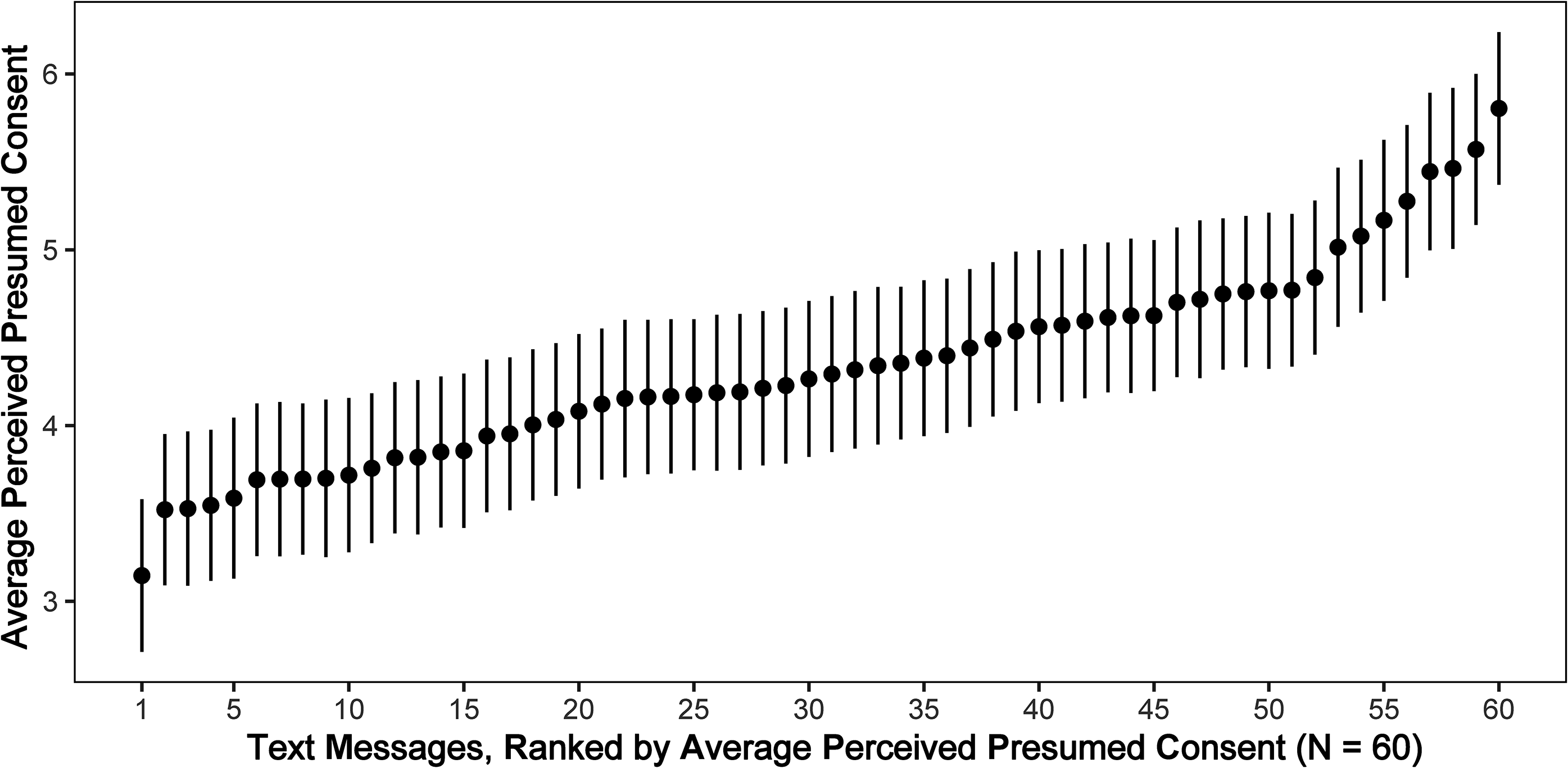

Results

First, we examined how the 60 text messages differed in perceived presumed consent as rated in Study 5a. A meta-analysis of the average perceived presumed-consent ratings of the 60 messages revealed high heterogeneity (I² = 84.15%, Q(59) = 372.11, p < .001). As illustrated in Figure 9, mean perceived presumed consent ranged from 3.15 to 5.80 across messages, with an average of 4.33 and a standard error of .07. For example, the message rated highest on perceived presumed consent read, “John, this is a reminder that a flu vaccine has been reserved for your appt with Dr. Smith. Please ask your doctor for the shot to make sure you receive it,” whereas the message rated lowest read, “Hi John! It's flu season. A National Institutes of Health study reveals that Americans who get flu shots are less likely to get the flu. You can get your flu shot at Walmart. Will you get your flu shot and be part of this group?” All messages are presented in Web Appendix I.
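The heterogeneity statistics follow the usual inverse-variance logic: Cochran's Q sums weighted squared deviations from the pooled estimate, and I² = (Q − df)/Q expresses the share of variability beyond what sampling error alone would produce. A minimal Python sketch with hypothetical function names:

```python
def cochran_q(estimates, std_errors):
    """Cochran's Q with inverse-variance (1 / SE^2) weights."""
    w = [1 / se ** 2 for se in std_errors]
    pooled = sum(wi * ti for wi, ti in zip(w, estimates)) / sum(w)
    return sum(wi * (ti - pooled) ** 2 for wi, ti in zip(w, estimates))


def i_squared(q, df):
    """Higgins-Thompson I^2 from Q, truncated at zero."""
    return max(0.0, (q - df) / q)
```

Plugging the reported Q(59) = 372.11 into this Q-based formula gives roughly 84.1%. Some software (for instance, R's metafor package) derives I² from the estimated between-study variance τ² rather than directly from Q, so small differences from the reported 84.15% are expected.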

Average Perceived Presumed Consent by Text Message (Study 5a).

Second, we explored the heterogeneity in effect sizes from Study 5b. Given that the effect sizes for different interventions were nested within RCTs, we used a three-level meta-analysis following the procedures in Harrer et al. (2021). The three-level model provided a significantly better fit than a two-level model with level-three heterogeneity constrained to zero (χ²(1) = 64.71, p < .001). The estimate from the three-level model was small, positive, and significant (β = .021, z = 4.29, p < .001); that is, the average effect size corresponded to a 2.11 percentage point (pp) increase in vaccination uptake from control to intervention (95% CI = [1.15, 3.08]). Importantly, heterogeneity in effect sizes was high (I² = 93.45%, Q(59) = 760.04, p < .001), stemming mostly from differences between RCTs (90.05%), with relatively small heterogeneity between messages (3.40%).
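In a three-level model, the total variance is commonly split into sampling error (level 1), heterogeneity between messages within an RCT (level 2), and heterogeneity between RCTs (level 3), using a "typical" sampling variance for level 1. The sketch below computes these shares from variance components that are assumed to come from an already-fitted REML three-level model (for example, one estimated with metafor's `rma.mv` in R); it does not fit the model itself, and the function names are illustrative.

```python
def typical_sampling_variance(variances):
    """'Typical' within-study sampling variance from inverse-variance weights."""
    w = [1 / v for v in variances]
    k = len(w)
    return (k - 1) * sum(w) / (sum(w) ** 2 - sum(wi ** 2 for wi in w))


def three_level_shares(sigma2_level2, sigma2_level3, variances):
    """Share of total variance at each level of a three-level meta-analysis.

    sigma2_level2: between-message (within-RCT) variance component
    sigma2_level3: between-RCT variance component
    variances: sampling variances of the individual effect sizes
    """
    v_tilde = typical_sampling_variance(variances)
    total = v_tilde + sigma2_level2 + sigma2_level3
    return {
        "level1_sampling": v_tilde / total,
        "level2_between_messages": sigma2_level2 / total,
        "level3_between_rcts": sigma2_level3 / total,
    }
```

Under this decomposition, total heterogeneity is the level-2 share plus the level-3 share, which is consistent with the reported 93.45% equaling 3.40% + 90.05%.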

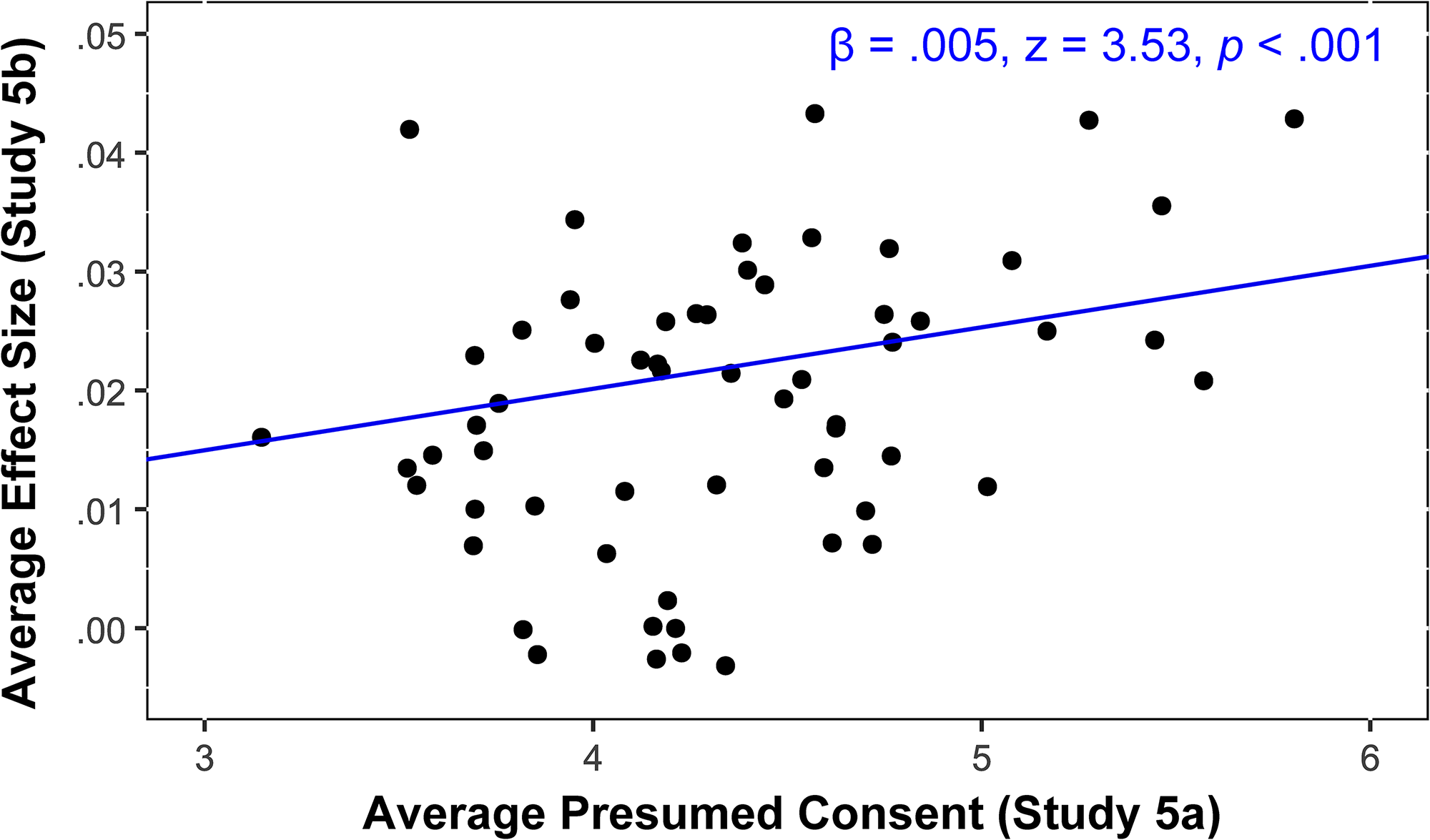

Third, we performed a three-level meta-analysis using the perceived presumed-consent ratings from Study 5a as the predictor variable and the effect sizes from Study 5b as the outcome variable (Column M1 in Web Appendix M). We found that the average effect size was positively associated with perceived presumed-consent ratings (β = .005, z = 3.53, p < .001), as illustrated in Figure 10. While the variance between messages is small (3.40%), the meta-analytical model explains 50% of it. For robustness, we also estimated a similar model controlling for the two main covariates in Study 5b (Column M2 in Web Appendix M). The perceived presumed-consent ratings positively predicted effect size even when controlling for the covariates (β = .004, z = 2.76, p = .006). The model including covariates explained 77% of the variance between messages and 19% of the variance between RCTs.
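Two quantities in this paragraph are easy to misread. Because the effect sizes are on the proportion scale, a slope of β = .005 corresponds to roughly a 0.5 percentage-point larger uptake effect per scale point of perceived presumed consent. The statement that the model "explains 50%" of the between-message variance is conventionally a pseudo-R², the proportional reduction in a variance component once the predictor is added. A minimal sketch, assuming this conventional definition (the computation is not spelled out here) and using hypothetical function names:

```python
def pseudo_r2(sigma2_without, sigma2_with):
    """Proportional reduction in a variance component after adding a predictor,
    truncated at zero when the component does not shrink."""
    if sigma2_without <= 0:
        return 0.0
    return max(0.0, (sigma2_without - sigma2_with) / sigma2_without)


def pp_per_point(beta):
    """Convert a slope on the proportion scale to percentage points per scale point."""
    return 100 * beta
```

Because the between-message component is only 3.40% of the total variance, explaining half of it still leaves most of the heterogeneity, which lies between RCTs, untouched.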

Relationship Between Perceived Presumed Consent (Study 5a) and Vaccination Uptake Effectiveness (Study 5b).

Discussion

Studies 5a–b provide correlational evidence for the effectiveness of presumed-consent language (H1) in field settings. These final studies indicate that messages adopted in prior research display considerable heterogeneity in perceived presumed consent and that this heterogeneity is positively associated with message effectiveness. We acknowledge important limitations of Studies 5a–b, such as the small between-message heterogeneity in Study 5b and the correlational and exploratory nature of the studies.

General Discussion

In this research, we propose that presumed-consent language improves the effectiveness of persuasive communication compared with explicit-consent language across various health domains. We demonstrate that presumed-consent language effects may operate in similar ways as presumed-consent policy effects (e.g., opt-out or default effects) and can be explained by several mechanisms such as perceived endorsement and psychological ownership. Importantly, we find that perceived endorsement has a stronger (vs. weaker) mediating role than psychological ownership when product tangibility is low (vs. high). We also show that presumed-consent language may explain, at least partially, why some messages are more effective in increasing vaccination uptake than other messages.

We do not claim that presumed-consent language is a silver bullet, but we propose that it may circumvent some of the managerial challenges associated with opt-out policies. Overall, our findings suggest that prior research may have overlooked the effects of presumed-consent language and that perceived endorsement should be considered an important lever in designing and evaluating messages that encourage health behaviors.

Theoretical Contributions

Our research provides three contributions. First, we contribute to the literature on presumed consent by distinguishing presumed-consent language from presumed-consent policy. While existing literature focuses primarily on default policies (Rithalia et al. 2009; Steffel, Williams, and Tannenbaum 2019), we argue that presumed-consent language may be an overlooked aspect of choice architecture. We propose that prior research may have measured the combined effects of policies and language rather than the isolated effect of different policies. Indeed, a message stating that a vaccine appointment has been made and that decision-makers need to cancel the appointment if they are not interested establishes presumed consent via policy as well as via language. To our knowledge, we are the first to argue that a presumed-consent effect may often consist of two independent effects: one driven by the status quo policy and one driven by language that signals presumed consent. For example, when decomposing the largest presumed-consent effect in Study 1 (16 pp increase, from 74.8% to 90.8%), we find that it consists of a 12.3 pp language effect (77.0%) and a 3.7 pp opt-out policy effect (23.0%). Future research could examine how this decomposition varies according to different factors, such as type of manipulation, context, and/or outcome. For instance, one could expect a greater share of the policy effect when opting in is effortful (e.g., a phone call) than when it is not (e.g., checking a box).
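The decomposition is simple arithmetic once the three relevant condition rates are known. The sketch below assumes a sequential decomposition (explicit-consent language under opt-in, then presumed-consent language under opt-in, then presumed-consent language under opt-out); the intermediate rate of 87.1% is not reported in this section and is only implied by 74.8% + 12.3 pp. The function name is hypothetical.

```python
def decompose(rate_explicit_optin, rate_presumed_optin, rate_presumed_optout):
    """Split a total presumed-consent effect into a language part and a policy part.

    Rates are in percent; effects are returned in percentage points, shares as fractions.
    """
    total = rate_presumed_optout - rate_explicit_optin
    language = rate_presumed_optin - rate_explicit_optin   # language change, policy held at opt-in
    policy = rate_presumed_optout - rate_presumed_optin    # policy change, language held at presumed consent
    return {
        "total_pp": total,
        "language_pp": language,
        "policy_pp": policy,
        "language_share": language / total,
        "policy_share": policy / total,
    }


result = decompose(74.8, 87.1, 90.8)
```

With these rounded inputs, the language share comes out at about 76.9% rather than the reported 77.0%, presumably because the reported shares were computed from unrounded rates.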

Second, we contribute to the literature on the implicit social interactions in choice architecture (Krijnen, Tannenbaum, and Fox 2017). On the one hand, doctor recommendations (e.g., recommendation for HPV vaccination) provide an explicit endorsement (Brewer et al. 2017). On the other hand, McKenzie, Liersch, and Finkelstein (2006) propose an information leakage mechanism such that presumed-consent policies may be perceived as an implicit endorsement from choice architects. We contribute to this literature by demonstrating that presumed-consent language leaks information about choice architects’ endorsement, even in the absence of a presumed-consent policy (i.e., opt-out).

Third, we contribute to the literature on the multiple processes behind the effectiveness of behavioral interventions. On the one hand, Dinner et al. (2011) demonstrate that presumed-consent policy effects may be explained by an endowment account based on reference dependence, but they find no evidence for a process in terms of endorsement or ease. In contrast, the meta-analysis of presumed-consent policy effects conducted by Jachimowicz et al. (2019) points to an interpretation in terms of endorsement and endowment, but not ease. The discrepancy in the relative strength of these processes likely stems from context-specific factors (Dinner et al. 2011). On the other hand, prior research suggests psychological ownership as a potential explanation for the effectiveness of message content on vaccination uptake (Buttenheim et al. 2022; Dai et al. 2021; Milkman et al. 2021, 2022; Rabb et al. 2022). We advance this stream of research by providing a more nuanced conceptual framework. First, we demonstrate that messages used in prior research (e.g., “the vaccine has been reserved for you,” “the vaccine is waiting for you,” “the vaccine has just been made available to you”) effectively establish presumed consent, which can subsequently lead to multiple psychological processes, including psychological ownership as well as perceived endorsement and perceived ease. Second, we propose that product tangibility moderates the relative strength of perceived endorsement and psychological ownership. Importantly, when product tangibility is low (e.g., dental cleaning treatment), the mediating role of perceived endorsement is stronger than psychological ownership. However, when product tangibility is high (e.g., dental cleaning pack), the mediating role of perceived endorsement is weaker than psychological ownership.

Practical Implications

Our findings provide practical insights for marketers (George et al. 2023; Huang and Lee 2023; Moorman et al. 2024) and policy makers (Milkman et al. 2024; Ruggeri et al. 2024). First, should managers choose presumed-consent language interventions over policy interventions? While presumed-consent policy interventions show robust results (Jachimowicz et al. 2019), they also suffer from important managerial problems such as (1) ethical considerations, (2) legal regulations, (3) limited public support, and (4) increased no-show rates. Therefore, presumed-consent language constitutes a relevant alternative, as it may not only alleviate some managerial problems associated with policies (see the pilot study) but also effectively improve persuasion (Studies 1–5).

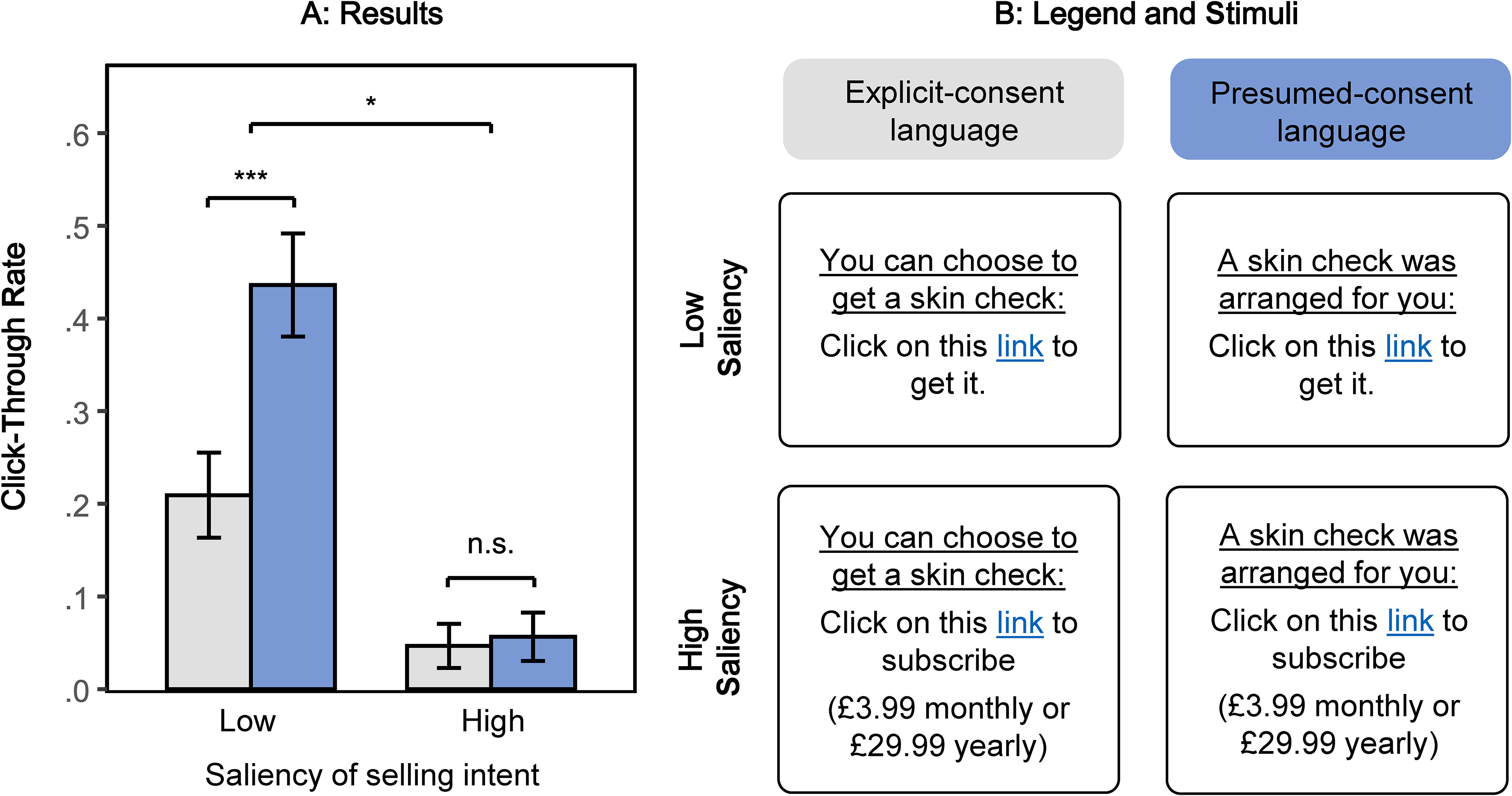

Second, how can managers design effective presumed-consent language? In complement to messages from the vaccination literature (“A flu shot has been made available for you,” “A flu shot is waiting for you,” “A flu shot has been reserved for you”; Dai et al. 2021; Milkman et al. 2021, 2022), Study 2 reveals that other phrases could also signal presumed consent (i.e., “A skin check has been arranged for you,” “A skin check has been secured for you”). Future research could investigate the impact of different types of presumed-consent language (e.g., “scheduled,” “organized”) outside of the health domain, such as appointment-based services (e.g., legal consultation, coaching session, tax preparation) or product trials for e-commerce websites and smartphone applications. Importantly, the results from Web Study W1 (N = 1,207, Web Appendix N) suggest that managers should not combine presumed-consent messages with salient selling intent (e.g., “click here to subscribe”), as it could lead to potential reactance effects. See Figure 11.

Moderation by Saliency of Selling Intent (Web Study W1).

Third, should managers adjust presumed-consent language to specific domains? We find that when product tangibility is low (e.g., dental cleaning treatment), the mediating role of perceived endorsement is stronger than that of psychological ownership. However, when product tangibility is high (e.g., dental cleaning pack), the mediating role of perceived endorsement is weaker than that of psychological ownership. Consequently, for highly tangible products, managers could use presumed-consent language that deliberately targets the ownership process through ownership-related words such as “purchase” or “belong” (e.g., “a test kit has been purchased for you,” “a voucher that belongs to you”).

Limitations and Future Research

We acknowledge several limitations and avenues for future research. First, despite its positive aspects, presumed-consent language may also have negative aspects (as may any other behavioral intervention). For example, could presumed-consent language lead consumers to overlook certain health risks or nudge them into unnecessary health procedures? While we do not have clear answers to these questions, we believe policy makers and managers should reserve presumed-consent language for important health decisions with clear health benefits and limited health risks (e.g., a flu shot). Similarly, is it ethically risky to nudge consumers with presumed-consent language, and should choice architects make assumptions about what people want at all? We encourage future research to examine these questions as part of the ongoing debate around libertarian paternalism (Thaler and Sunstein 2003) as well as dark nudges and sludges (Posner et al. 2023; Thaler 2018).

Second, future research could examine why increased endorsement influences health behaviors. We speculate that the positive effect of endorsement may be explained through both dimensions of health confidence: perceived benefit (e.g., vaccination effectiveness) and perceived safety (e.g., absence of side effects) (Brewer et al. 2017).

Third, we aimed to compare three psychological processes: perceived endorsement, psychological ownership, and perceived ease. We acknowledge limitations inherent to comparing measured psychological processes, such as differences in the response scales on which the processes are measured, as well as differences in the linguistic or semantic fluency of the scale items. We aimed to reduce these concerns by using standardized scores and a similar linguistic format for all items: “To what extent does the message make you feel that …?” Moreover, while we focused on three families of psychological processes drawn from the literature on defaults, we encourage future research to examine alternative mechanisms behind presumed-consent language, such as perceived reciprocity (Web Appendix E), implied selectivity (Bogard, Fox, and Goldstein 2021), comprehension (Gilbert 1991), attention processes (Dellaert et al. 2022; Sullivan et al. 2025), processing depth, sense of urgency, and paternalism.
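To illustrate why standardized scores matter when comparing mediators measured on different scales, the following is a minimal sketch of a parallel mediation model estimated by ordinary least squares on z-scored mediators and outcome. It is not the authors' analysis: the functions `zscore`, `ols`, and `indirect_effects`, the scale ranges, and the simulated data are all hypothetical, and the sketch omits the bootstrapped confidence intervals that mediation analyses typically report.

```python
import numpy as np


def zscore(x):
    """Standardize a measure to mean 0 and SD 1 (sample SD, ddof=1)."""
    x = np.asarray(x, dtype=float)
    return (x - x.mean()) / x.std(ddof=1)


def ols(y, *predictors):
    """Return OLS coefficients [intercept, b1, b2, ...] via least squares."""
    X = np.column_stack([np.ones(len(y))] + [np.asarray(p, dtype=float) for p in predictors])
    coefs, *_ = np.linalg.lstsq(X, np.asarray(y, dtype=float), rcond=None)
    return coefs


def indirect_effects(condition, outcome, mediators):
    """Hypothetical parallel mediation on standardized mediators and outcome.

    a_j: effect of condition on mediator j (M_j ~ X)
    b_j: effect of mediator j on outcome, controlling for X and the other mediators
    indirect_j = a_j * b_j
    """
    z = {name: zscore(m) for name, m in mediators.items()}
    names = list(z)
    a = {n: ols(z[n], condition)[1] for n in names}
    b_all = ols(zscore(outcome), condition, *(z[n] for n in names))
    # b_all[0] is the intercept, b_all[1] the direct effect of condition.
    return {n: a[n] * b_all[2 + i] for i, n in enumerate(names)}


# Hypothetical simulated data: mediators on deliberately different scales.
rng = np.random.default_rng(0)
n = 2000
condition = rng.integers(0, 2, n)  # 0 = explicit-consent, 1 = presumed-consent wording
endorsement = 4 + 0.8 * condition + rng.normal(0, 1.2, n)  # 7-point-style scale
ownership = 50 + 3 * condition + rng.normal(0, 20, n)      # 0-100 slider-style scale
ease = 4 + 0.1 * condition + rng.normal(0, 1.2, n)         # 7-point-style scale
intention = 3 + 0.9 * endorsement + 0.01 * ownership + rng.normal(0, 1, n)

effects = indirect_effects(
    condition, intention,
    {"endorsement": endorsement, "ownership": ownership, "ease": ease},
)
for name, value in effects.items():
    print(f"{name}: {value:.3f}")
```

Because every mediator and the outcome are expressed in standard-deviation units, the three indirect effects can be compared directly even though the raw measures use 7-point and 0–100 ranges; without standardization, the ownership coefficients would be scaled by its much larger raw variance.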

Fourth, because our work aimed at disentangling psychological processes, we have not examined whether individual differences moderate our proposed process. Given the polarization around vaccination, we expect the effectiveness of presumed-consent language to depend on preexisting opinions and beliefs about vaccination. While people strongly opposed to vaccination may not be persuaded by any text-based reminder, future research could explore the relative effectiveness of presumed-consent language across different consumer segments. This is particularly important because some vaccines are associated with higher perceived uncertainty than others (Zimmermann, Somasundaram, and Saha 2023). We hypothesize that presumed-consent language works primarily among individuals who are uncertain about vaccination, because their need for endorsement should be high. Exploring how to persuade decision-makers most effectively across the entire spectrum of attitudes toward vaccination is an important area for future research.

Lastly, while we demonstrate presumed-consent language effects for different health decisions, the effect plausibly extends to other domains. Building on the results from Study 4, we hypothesize that in more commercial domains, the effect of presumed-consent language could be driven primarily by psychological ownership.

Conclusion

To conclude, this research proposes that presumed-consent language may circumvent some of the managerial challenges associated with presumed-consent policies (i.e., opt-out) in health contexts. We find that, under an explicit-consent policy (i.e., opt-in), presumed-consent language improves the effectiveness of persuasive communication compared with explicit-consent language. These findings deepen theoretical understanding of presumed consent and offer practical guidance for health marketers.

Supplemental Material

Supplemental material, sj-pdf-1-jmx-10.1177_00222429251323885 for Beyond Opt-Out: How Presumed-Consent Language Shapes Persuasion by Romain Cadario, Jenny Zimmermann and Bram Van den Bergh in Journal of Marketing

Footnotes

Acknowledgments

The authors are grateful to the JM review team for their guidance. The authors thank the lunch club participants at the Rotterdam School of Management, the seminar participants at Tilburg University and KU Leuven, as well as Gretchen Chapman and Katherine Milkman for their feedback and comments.

Coeditor

Cait Lamberton

Associate Editor

Kelly Goldsmith

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) acknowledge support from the Erasmus Research Institute in Management for providing funding for data collection.

References
