Abstract

Customer care is important for its role in relationship building. This role has traditionally been performed by human customer agents; however, the emergence of interactive generative AI (GenAI) shows potential for using AI for customer care in emotionally charged interactions. Bridging practice and the academic literatures in marketing and computer science, this article develops an AI-enabled customer care journey, from accurate emotion recognition to empathetic response, emotional management support, and, finally, the establishment of an emotional connection. Marketing requirements for each of the stages are derived from in-depth interviews with top managers and a survey of chief marketing officers. By juxtaposing these requirements against the current feeling capabilities of GenAI, the authors highlight the technological challenges engineers must tackle. The article concludes with a set of marketing tenets for implementing and researching the caring machine. These include verifying emotion recognition accuracy using marketing emotion theories through multiple emotion signals and methods, utilizing prompt engineering to enhance GenAI’s emotion understanding, employing “response engineering” to personalize emotion management recommendations, and strategically deploying GenAI for emotional connection to simultaneously enhance customer emotional well-being and customer lifetime value.

Keywords

There is increasing attention in marketing practice to customer care, which goes beyond helping customers make consumption choices or solve product problems to build and strengthen long-term customer relationships. Information technology in general, and recently AI, have demonstrated the potential to facilitate the dynamic interaction and communication between service providers and customers (Caruelle et al. 2022; Huang and Rust 2018; Kopalle et al. 2022; Liu-Thompkins, Okazaki, and Li 2022; Rust and Huang 2014). Relationship building especially depends on the emotional connection with customers (Berry 2000), from which customers feel a sense of belonging and being understood. Customer care emphasizes marketing's role and responsibility to build emotional connections with customers. It is not just altruistic; if done well, it will result in a “win-win” that also increases firm profits, because emotionally connected customers are more valuable and loyal, bringing steady profit streams to the company (Magids, Zorfas, and Leemon 2015; Rust, Lemon, and Zeithaml 2004). Yet, there is doubt in marketing about whether AI can handle customer emotions, which is traditionally the territory of humans.

Science fiction author William Gibson, who coined the term “cyberspace,” once said, “The future is already here—it's just unevenly distributed” (The Economist 2001). Taking that idea to heart, we approached two very different groups of high-ranking marketing and AI executives. One was a group of four very high-ranking executives at top AI organizations, who are knowledgeable about the latest in AI. One of these executives was, until recently, chief marketing officer (CMO) of a company at the forefront of AI applications in business. This group was our “leader” group, which can suggest the future of AI application in customer care. This leader group believes that using AI for customer care is not an issue of “whether” but rather “how.” Those top executives state that AI can better recognize customer emotions than human agents by capturing displayed emotional cues, especially when customers are not able to articulate their emotions well; AI can “fake” empathy during interactions, like the emotional labor that human agents display that makes customers feel understood; AI can manage customer emotions by generating relevant solutions faster than humans from factual, customer, and brand data; and AI can develop an intimate relationship with customers, just like a smartphone with its user.

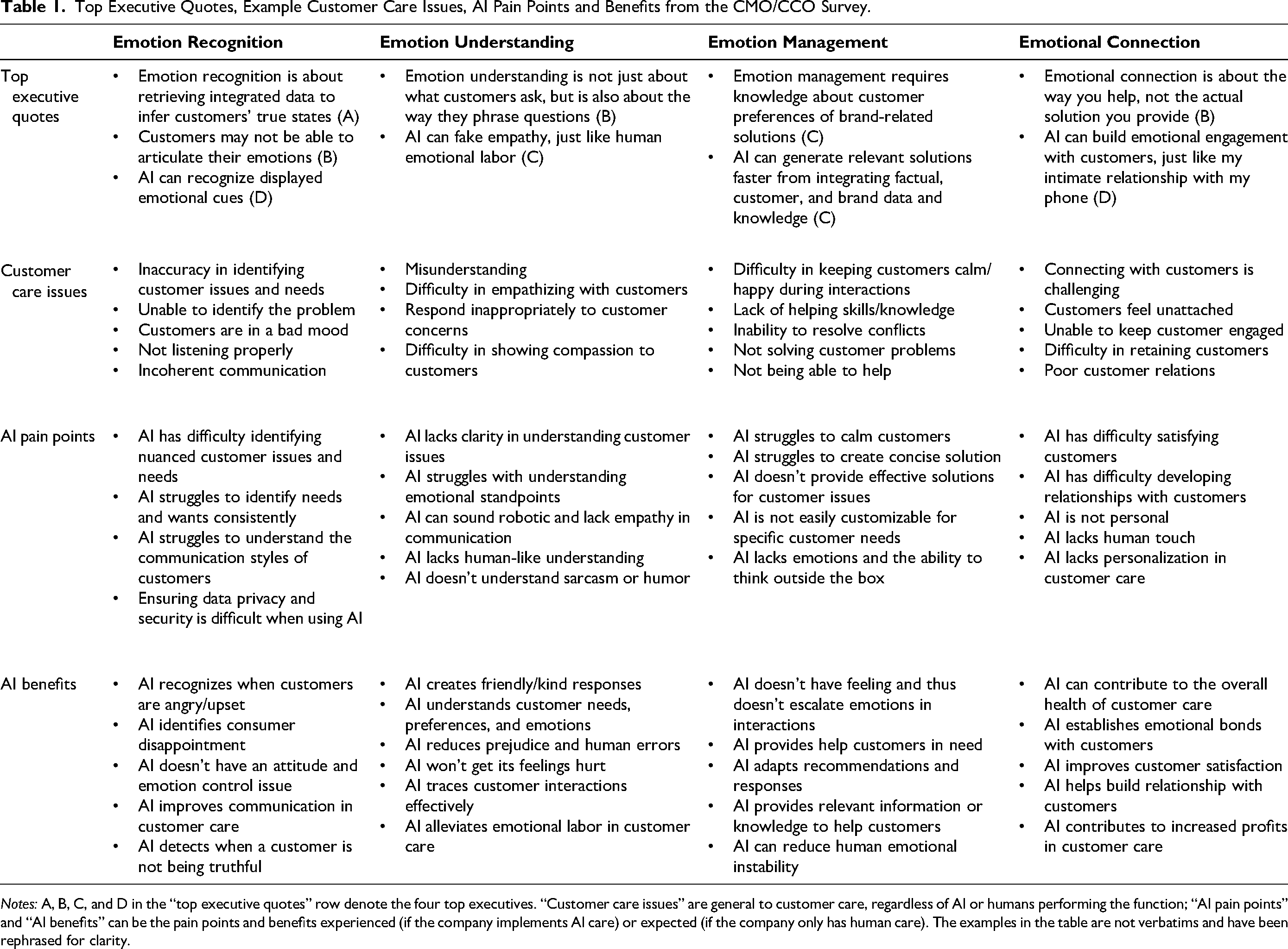

Table 1. Top Executive Quotes, Example Customer Care Issues, AI Pain Points and Benefits from the CMO/CCO Survey.

Notes: A, B, C, and D in the “top executive quotes” row denote the four top executives. “Customer care issues” are general to customer care, regardless of AI or humans performing the function; “AI pain points” and “AI benefits” can be the pain points and benefits experienced (if the company implements AI care) or expected (if the company only has human care). The examples in the table are not verbatims and have been rephrased for clarity.

The other group was a larger, more mainstream group of 305 CMOs and chief customer officers (CCOs), to give us a sense of the status quo of AI in customer care in a broader cross-section of companies (more detail about the data collection is given in Web Appendix B). The top five problems these executives face in customer care include (1) quality staff shortage, (2) unhappy and angry customers, (3) slow response time, (4) the need to communicate with and understand customers, and (5) the need to retain and satisfy customers. The staff shortage issue is directly related to slow response time. For “quality” staff, it was often mentioned that some staff lack the knowledge and right attitude to care about customers. The frequent mentions of unhappy or angry customers also highlight the emotionally charged nature of customer care, which causes problems of miscommunication and misunderstanding during interactions and can hurt eventual customer satisfaction and retention. Approximately half of the survey respondents indicated that their companies use AI for customer care (52.13%), among which 17 companies use ChatGPT, indicating that generative AI (GenAI) is already being used in service settings, although most of the respondents instead employ older generations of AI technology such as chatbots, which have more limited feeling capability. We also find that the broader cross-section knows about their current customer care issues but has a less clear idea of how AI can help.

These practitioner views reflect the need for a deeper understanding of how to use state-of-the-art AI for customer care: its positioning, its strengths and limitations, and the challenges ahead. As one top executive states, while there is great variation among the abilities of human workers (knowledge, empathy, communication), this variation is eliminated with the caring machine. These interviews and the survey motivate us to seek an interdisciplinary approach to using AI for customer care.

The emergence of interactive GenAI, such as OpenAI's GPT models, Microsoft's Bing, Google's Bard, and IBM's Watsonx, which can interact and communicate with customers directly due to their prompt–response design, shows great potential as a new technological solution to many of the customer care issues identified from the survey. Top leaders believe that “feeling AI” (AI that has the capability to communicate and interact with humans based on emotional understanding from analyzing emotion data) is already here to some degree, and some CMOs/CCOs are more ready than others to leverage the feeling capability of AI for customer care, as the previous William Gibson quote reveals.

The inputs from the practitioners and the rapid advancement of feeling AI (e.g., interactive GenAI) motivate the use of feeling AI to provide customer care. Drawing from both the marketing and the computer science literatures, we outline an AI-enabled customer care journey that addresses how feeling AI, exemplified by GenAI, can be used to move customers along the journey from emotion recognition, understanding, and management to connection. This journey bridges the marketing side and the technical side in the use of AI for customer care. The marketing side informs us what is required for customer care along the journey, whereas the computer science side deals with the technical challenges in developing the caring machine to meet the marketing requirements. Building a successful caring machine requires both the marketing side and technical side working together. We illustrate how GenAI can be used for customer care with a fictitious scenario along the customer care journey.

“Thanks. I’m going to Indiana for my mother's funeral. It's a tough time for me.” Mark sounds calm but looks sad. “I appreciate that,” Mark replies. “A quiet window seat, please,” Mark requests. “We’ll reserve a quiet window seat and offer priority boarding. Our bereavement fare policy may provide a discount for you,” Kathy suggests. “Please apply the discount,” Mark confirms his choice. “Thank you for your help and understanding. I really appreciate the support and care you have shown,” Mark expresses his appreciation. “You are welcome, Mark. Wishing you a safe and comfortable journey. Remember, we are just a message away if you need anything. Take care.” Kathy concludes the conversation.

From this AI-enabled customer care journey, we lay out a set of fundamental marketing tenets for marketing practitioners to use and for marketing researchers to study feeling AI for customer care. We start by identifying marketing requirements for the caring machine from the practitioner survey and the marketing literature. We then review and summarize what the state-of-the-art feeling AI, exemplified by GenAI, currently can and cannot do. We identify technical challenges from the gap that inform computer scientists how to develop feeling AI to meet the marketing requirements. Finally, we derive implications for marketing practitioners and researchers as marketing tenets about using and researching feeling AI for customer care.

This article contributes to a better understanding of customer care by proposing a conceptually novel customer care journey. The journey shows the importance of caring about customers by recognizing, understanding, managing, and connecting with them emotionally. This contrasts with typical customer service, which often focuses on solving specific customer problems. The existing literature on service quality (e.g., Parasuraman, Zeithaml, and Berry 1988) and customer relationships (e.g., Moorman and Rust 1999; Rust, Lemon, and Zeithaml 2004) combines what we would call customer service and customer care. Much customer service is the “brain” part of interacting with customers that mostly focuses on problem solving, whereas customer care is the “heart” part that focuses on attending to the customer's emotional needs. Both parts are often required for a satisfied customer relationship. The customer care journey informs service providers about how to care for customers’ emotional well-being along the journey, eventually increasing customer lifetime value.

This article provides a deeper understanding of how to build a caring machine that uses feeling AI for customer care. The existing marketing theories and practices have a deeper knowledge of using big data analytics to identify customer preferences for personalization (e.g., Chung, Rust, and Wedel 2009; Chung, Wedel, and Rust 2016; Wedel and Kannan 2016), yet knowledge about how AI can be used for customer care is limited. Huang and Rust (2018) consider AI for personalization to be thinking AI, because it focuses on what customers think, and AI for relationships to be feeling AI, because it focuses on customer communication and interaction. GenAI has recently revolutionized the way AI can be used for human interactions and communications. Our discussion and illustration about what GenAI can and cannot do along the customer care journey (e.g., prompt engineering, response engineering) enhance marketers’ understanding of and skill sets in the use of GenAI in marketing.

This article also contributes to affective computing by showing the technical challenges associated with GenAI for customer care. These are important tasks for computer science scholars to consider, informed by the marketing perspective. We contend that if feeling AI can infer unexpressed emotion signals (e.g., subjective feeling) from expressed signals (e.g., spoken language, facial expression images), it can recognize customer emotions more accurately. Feeling AI can express empathetic understanding about customer emotions if it leverages the customer input more (which is naturally perspective-taking) and has common sense to increase the appropriateness of its response. Feeling AI can provide more helpful emotion management recommendations if it is engineered by response (i.e., answers from the customer) rather than by prompt (i.e., questions asked by the customer). Finally, feeling AI can establish customer–brand emotional connections (rather than customer–AI connection) if it aligns customer emotional well-being with the company's strategic goals.

In the remainder of the article, we first provide an overview of the customer care journey. We then provide a brief historical sketch about the evolution of AI's feeling intelligence and GenAI as feeling AI. We discuss the current state from the marketing and the technical perspectives, respectively: from the CMO/CCO survey we derive the marketing requirements for using AI for customer care, and from the review of the state-of-the-art GenAI as feeling AI we show what it currently can and cannot do for customer care. We conclude with two sets of implications for the two perspectives, with technical challenges for the computer science side regarding how to build a caring machine to meet marketing needs, and with marketing tenets for the marketing side to know how to use the caring machine for customer care.

The Customer Care Journey

Customer Care Defined

We define customer care as “the process by which service providers seek to care for customer emotions, in addition to solving their service problems, during interactions and communications.” From effective customer care, an emotional connection is built, customer emotional well-being is improved, and customer lifetime value is increased.

The term “customer care” is currently more prevalent in marketing practice than in the academic marketing literature. Marketing practice uses this term to convey the goal of managing the interactions with customers to build emotional connections, from which satisfied customer relationships emerge (Berg et al. 2022; Kisielewska 2022). Customer care may be driven by individual customer problems, but often goes beyond to deepen customer relationships.

Our definition reflects the general idea of customer care in marketing practice, but further specifies four characteristics of customer care: its goal is customer emotional well-being, its context is customer interactions and communications, it improves customer emotional well-being by building emotional connections through solving customer problems and caring for the customer's emotions, and it is intended to result in a satisfied, long-term relationship.

In addition to solving problems, customer care aims to improve the customer's emotional well-being. Emotional well-being, also known as emotional wellness, involves the ability to (1) be aware of and understand one's own emotions and (2) manage (negative) emotions. It is “the ability to produce positive emotions, moods, thoughts, and feelings, and adapt when confronted with adversity and stressful situations” (Melkonian 2021) that involves “enhancing emotional awareness, regulation, and recovery” (Davis 2021).

Emotional well-being is important because many customer care communications and interactions are emotionally charged. Angry or frustrated customers can feel mistreated if their emotions are not addressed, which often results in customer churn, even if their problems are solved. Emotions influence how customers make consumption decisions and carry out consumption activities (Devezer et al. 2014). Service providers that help customers improve their emotional well-being, such as by enabling them to enjoy a sense of well-being, freedom, belonging, and security, not only maximize customer value (Magids, Zorfas, and Leemon 2015) but also improve customer service agents’ well-being.

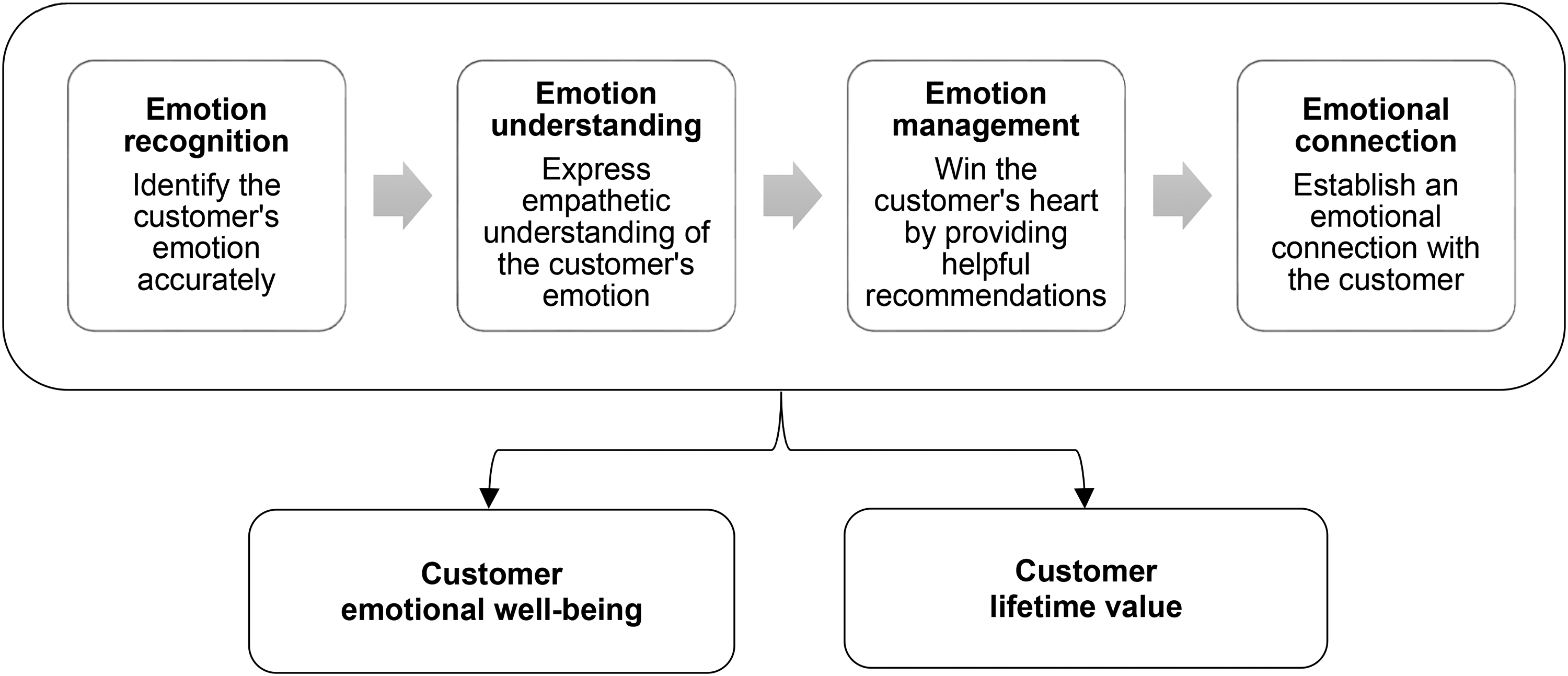

The Four-Stage Journey

We propose a novel customer care journey with four stages: emotion recognition, emotion understanding, emotion management, and emotional connection. Together, these stages move customers from initial contact to being understood, helped, and eventually emotionally connected with the service provider. Figure 1 illustrates this four-stage journey.

Figure 1. The Customer Care Journey.

Compared with the traditional customer journey, this customer care journey focuses on the feeling aspect, such as customer engagement, experience, and emotion, rather than the more typical thinking aspect, such as customer utility, choice consideration, or consumption decisions. A comprehensive review of various customer journey stages can be seen in Hamilton et al. (2021). In the following discussion, we focus on the emotion aspect, but also recognize that customer problems and emotions are often intertwined.

Emotion recognition

The major task of the emotion recognition stage is to accurately recognize the customer's emotion that needs care. Emotion recognition starts once contact, by the service provider or by the customer, is initiated. Our survey shows that accurately identifying customer problems and emotions is important to avoid miscommunications, which escalate customer emotions. Thus, accurately recognizing customer emotion is critical for deciding whether and how to provide care. In the fictitious scenario, AI needs to accurately sense that Mark is sad, based on potentially conflicting signals, and take the initiative to care. This is typically labeled in the customer journey as the stage of “awareness” (Chernev 2014; Court et al. 2017; Lemon and Verhoef 2016), “attention” (Howard and Sheth 1969), “problem recognition” (Belch and Belch 2015), or “need recognition” (Puccinelli et al. 2009).

Emotion understanding

After recognizing the customer's emotion that needs care, emotion understanding is to be empathetic about the customer's emotion. Emotion understanding thus is deeper than emotion recognition, because, in addition to accuracy, it requires empathy. Empathy is the “reactions of one individual to the observed experiences of another” (Davis 1983, p. 113). It is the service agent’s ability to understand the customer's emotions as if they were the customer and respond to the emotions appropriately (Allard, Dunn, and White 2020; Bagozzi, Brady, and Huang 2022; Bagozzi and Moore 1994; Rust and Huang 2021). This is traditionally labeled as the stage of “understanding” (Chernev 2014), “engaging” (Richardson 2010), or “exploring” (Forrester 2010) in the customer journey.

Emotion management

The major task of the emotion management stage is to provide helpful emotion management recommendations to assist the customer in managing the emotion. Generally, the recommendations should be specific to the customer's situation and related to the service provided by the company. A recent NPR podcast reported on a company that trains GenAI to display customer emotion information in real time for customer support, resulting in higher customer satisfaction. The company sees the customer care process as typically emotionally charged, and thus managing customer emotions is an important requirement for solving customers’ problems or winning their hearts (Wong and Ma 2023); for example, de-escalating negative emotions aroused by service failure is a major task (Herhausen et al. 2023). When customers find the customer care agent and/or the recommendation helpful, this can lead to increased preference toward the company. This is labeled as the stage of “preference” (Lewis 1908), “desire” (Strong 1925), or “decision” (Hamilton et al. 2021) in the traditional customer journey.

Emotional connection

The ultimate stage is to establish an emotional connection with the customer for improved emotional well-being and customer lifetime value. An emotional connection is established when the customer knows that the company cares about them and they care about the company in return. When an emotional connection is established, the customer feels special, personal, intimate, and close with the brand (Berry 2000; Rust et al. 2021) as well as attached, bonded, and connected to the brand (Thomson, MacInnis, and Park 2005). It builds the brand equity that a brand enjoys with a customer who has a positive emotional appraisal of a brand, above and beyond its rationally perceived value (Rust, Lemon, and Zeithaml 2004). An emotional connection results in the customer being willing to continue the relationship with the service provider over time. Magids, Zorfas, and Leemon (2015) show that emotionally connected customers are, on average, 52% more valuable and loyal to the companies than customers with high satisfaction levels. This is traditionally labeled the “enjoy” (Edelman and Singer 2015), “satisfaction” (Hamilton et al. 2021), “loyalty” (Court et al. 2017), or “complete” (Richardson 2010) stage.

The Feeling Intelligence of AI

Multiple AI Intelligences

Customer care has traditionally been performed by human agents, due to the relative advantage of humans over AI in emotional skills (Rust and Huang 2021) and the technological limitations of AI's feeling capabilities (termed “feeling AI” hereinafter). Existing studies thus emphasize collaborative care for using feeling AI to assist human customer care agents (Davenport and Miller 2022; Huang and Rust 2022; Wilson and Daugherty 2018).

Huang and Rust (2018) propose a novel multiple intelligences view that points out that AI, designed to mimic human intelligences, can similarly have the multiple intelligences of doing, thinking, and feeling. They term these intelligences mechanical (for routine, repetitive tasks), thinking (for analytical conclusions or decisions), and feeling (for human communications and interactions). Among AI’s three intelligences, mechanical intelligence is already quite mature, thinking intelligence is currently experiencing the most development, and feeling intelligence is on the rise. The progress of AI builds on previous developments. When AI reaches a more advanced level of intelligence, it usually retains or has the potential to retain all capabilities from the previous levels (Huang and Rust 2022).

The launch of generative AI (GenAI), such as ChatGPT, in late 2022, along with the follow-ups of other interactive GenAI, is quickly making feeling AI a reality. Feeling AI is a machine that has the capability to communicate and interact with humans based on emotional understanding from analysis of emotion data (e.g., Huang and Rust 2018, 2022; Huang, Rust, and Maksimovic 2019). This is AI's capability for engaging in reciprocal communication with customers (Puntoni et al. 2021). The computer science literature uses terms such as affective computing (Picard 1997) and artificial empathy (Asada 2015).

The previous generation of feeling AI can recognize customer emotions in different modalities, such as computer vision for recognizing facial emotions (Ekman 2003) and sentiment analysis for recognizing emotions in spoken or expressed language (Rust et al. 2021; Schuller 2018). Due to the lack of generative capabilities to respond to customer emotions, these technologies are not suitable for interactive communications. Thus, they provide customer care only partially, in the form of human–machine collaboration, in which AI provides support for human agents.

GenAI as Feeling AI

GenAI refers to advanced deep-learning models designed to generate new content. These models utilize the vast data they have been trained on, combined with specific user inputs, to generate output (OpenAI 2023). The dominant GenAI (e.g., OpenAI's GPT models, Google Cloud GenAI) is designed in a prompt–response paradigm that instructs GenAI to generate responses based on user prompts (inquiries). The pretraining learning from the huge amount of human-generated data enables GenAI to generate human-like responses, and the prompt–response design enables its interactive and communicative capabilities. Together, they make GenAI the new generation of feeling AI because it is designed for human interaction and communication; can recognize and express empathetic understanding of user emotions by analyzing the user's direct inputs; generates responses that demonstrate empathy, understanding, or support based on the context of the conversation; and provides information, suggestions, or recommendations that may be helpful to the user in addressing their emotional challenges. For example, Li et al. (2023) explore the emotional intelligence of large language models (LLMs) and find that the performance of LLMs can be enhanced by emotion prompts—that is, prompts with emotional stimuli (e.g., “this is very important to my career”). Tak and Gratch (2023) argue that GenAI has the potential to be a computational model of emotion because it demonstrates the ability to label emotions in prompts accurately.
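The idea of an emotion prompt can be sketched in a few lines of Python. This is an illustrative sketch only, not the procedure used by Li et al. (2023): the function name `add_emotional_stimulus` and the example customer message are hypothetical, and the only element taken from the text is the stimulus sentence quoted above. The resulting string would then be passed to whichever GenAI model is in use.

```python
def add_emotional_stimulus(prompt, stimulus="This is very important to my career."):
    """Append an emotional stimulus sentence to a base prompt.

    Hypothetical helper for illustration; the default stimulus is the
    example quoted in the text.
    """
    return f"{prompt.strip()} {stimulus.strip()}"


# Hypothetical base prompt asking a model to label a customer's emotion.
base_prompt = "Label the emotion in this customer message: 'My flight was cancelled again.'"
emotion_prompt = add_emotional_stimulus(base_prompt)
print(emotion_prompt)
```

Keeping the stimulus as a separate argument makes it straightforward to compare model responses with and without the emotional sentence.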

State-of-the-art GenAI consists of generative models that are trained on general, publicly available data; third-party data; and domain-specific data based on the transformer architecture. Transformers are considered foundation models (i.e., general-purpose models that are trained on general big data and can be fine-tuned to perform various downstream tasks; Bommasani et al. 2022). The most well-known models are OpenAI's GPT series and IBM's Granite foundation models that operate on the Watsonx.ai platform. They are designed to interact with a user query that prompts the machine to generate a response. This interactive and communicative nature of GenAI makes it feeling AI (i.e., AI for human interaction and communication).

GenAI is an autoregressive model that predicts the future values in a sequence based on the past values in that sequence (Li 2022). Such models require limited fine-tuning to perform specific tasks. Santhanam and Shaikh (2019) show that fine-tuning language-generation models using labeled emotion data can generate situationally appropriate emotional responses. Moradi et al. (2022) show that with few-shot learning (i.e., using only a few learning examples), the transformer architecture can achieve high levels of task-specific performance, especially when the fine-tuning domain is inside the pretraining domain.
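To make the autoregressive idea concrete, the following toy sketch predicts each next token from the tokens generated so far and appends it to the sequence. It is a deliberate simplification under stated assumptions: a bigram count table stands in for a transformer, the tiny corpus is invented for illustration, and the function names `train_bigram` and `generate` are hypothetical. Real GenAI models condition on the whole preceding context rather than on the last token alone.

```python
from collections import Counter, defaultdict


def train_bigram(tokens):
    """Count, for each token, which tokens follow it in the training sequence."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def generate(counts, start, max_new_tokens=5):
    """Greedy autoregressive decoding.

    Each new token is predicted from the sequence so far (here, only its
    last token) and appended before the next prediction is made.
    """
    sequence = [start]
    for _ in range(max_new_tokens):
        followers = counts.get(sequence[-1])
        if not followers:
            break  # no continuation was observed for this token
        sequence.append(followers.most_common(1)[0][0])
    return sequence


# Invented toy corpus; not data from the survey or any cited study.
corpus = "i am sorry for your loss . i am sorry for the delay .".split()
model = train_bigram(corpus)
print(" ".join(generate(model, "i", max_new_tokens=3)))
```

The loop structure is the point of the sketch: the output of one step becomes part of the input to the next, which is what "predicting future values from past values in the same sequence" means in practice.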

Marketing Requirements: The Current Customer Care Situation

We surveyed 305 U.S. CMOs and CCOs who were gathered for us by a U.S. marketing research company (see Web Appendix B for details). Respondents all have a job duty that is directly or indirectly related to customer care and are knowledgeable about AI (above the midpoint on a seven-point scale). Various industries and company sizes are represented. In three open-ended questions, we asked them to list the major problems their company faces with customer care, the main pain points of using AI for customer care, and the main benefits of using AI for customer care. From the survey, we identify marketing requirements based on the current situation. Table 1 illustrates example responses from the survey regarding the general customer care issues, the pain points, and the benefits of using AI for customer care. The marketing requirements row of Table 2 summarizes the discussion, denoted as MR1–MR4, along the four stages of the customer care journey.

Table 2. Marketing Requirements, Feeling AI (Illustrated by GenAI), Technical Challenges, and Marketing Tenets.

Disentangle Emotions from Problems

Customer emotions and problems are often intertwined, either because the problems result in (negative) customer emotions (e.g., a service failure) or because the nature of the problems is negative (e.g., a physician delivers bad news to a patient). The intertwined emotions and problems often make emotion recognition and problem identification difficult. In the CMO/CCO survey, recognizing customer concerns and needs accurately when they are emotionally charged is the major problem encountered at this stage. Emotions affect thinking, behavior, and interactions in marketing and consumption (Bagozzi, Gopinath, and Nyer 1999; Huang 2001; Nyer 1997). Customers may get confused themselves, and their emotions complicate the issues and make it difficult to identify their problems. For example, “irrational customers,” “customers are in a bad mood,” “unable to identify the problem,” “inaccuracy in addressing customer issues,” and “not listening properly” are commonly mentioned problems. Some studies have demonstrated the emotion recognition ability of early generations of feeling AI, such as analyzing customer anger from customer service chat transcript data (Crolic et al. 2022), using customer-generated keywords to understand their brand sentiments in social media (Rust et al. 2021), recognizing consumers’ happiness from watching movie trailers using facial action code (Liu et al. 2018), and using facial recognition to quantify salespeople’s display of basic emotions in sales presentations (Bharadwaj et al. 2022).

In the survey, marketing managers also mentioned that AI may not be able to identify and summarize complex or nuanced customer issues but may be good at assisting human agents in recognizing customer emotions (whether customers are angry, upset, or disappointed). Some also consider it a benefit that AI has no emotion (in common understanding) because it does not involve emotion control issues, compared with some customer service agents who may develop negative attitudes when handling emotionally charged customers. For example, Holthöwer and Van Doorn (2023) find that customers feel less judged by a robot (vs. a human) when engaging in embarrassing service encounters, such as being confronted with one's own mistakes by a frontline employee. The disentangled emotions and problems and the differential capabilities of AI in problem and emotion recognition show that feeling AI needs to be able to recognize emotions accurately from intertwined customer emotions and problems.

Disentangle Conflicting Emotion Signals

Customers may not want to reveal their true emotions, often are not fully aware of their own emotions, and may not be able to articulate their emotions. “Incoherent communication” is mentioned as a major issue in the survey. In the marketing literature, emotion is viewed as a multi-element experience, with some elements more observable than others. Recognizing emotion accurately thus requires taking multiple emotional elements into consideration. Bagozzi, Gopinath, and Nyer (1999) define emotions as a “mental state of readiness that arises from cognitive appraisals of events or thoughts; has a phenomenological tone; is accompanied by physiological processes; is often expressed physically (e.g., in gestures, posture, facial features); and may result in specific actions to affirm or cope with the emotion, depending on its nature and meaning for the person having it” (p. 184). This comprehensive definition summarizes the key elements of emotions: physical expression, physiological response, subjective experience, and cognitive appraisal.

This view of emotions reflects the appraisal theory of emotion (Lazarus 1982, 1991; Nyer 1997), in which physical reactions to an event are interpreted to determine the emotions elicited. Among the four elements, cognitive appraisal is the summary labeling of all other elements (i.e., customers conclude from their physical expressions, subjective experiences, and physiological responses what kind of emotions they are experiencing). When customers express their appraisal in some way (e.g., in written text or spoken language), feeling AI can recognize the emotion accurately. However, customers often send out conflicting emotion signals, either consciously or unconsciously. For example, in the fictitious scenario we presented, Mark sounds calm but looks sad, making emotion recognition more difficult.
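One simple way to picture the handling of conflicting signals is to score each modality separately, flag disagreement among the modalities, and then combine the scores. The sketch below is an assumption-laden illustration rather than a method proposed in this article or in the cited literature: the modality names, the scores for the Mark scenario, the equal default weights, and the function names `top_emotion` and `fuse_emotion_signals` are all hypothetical.

```python
def top_emotion(scores):
    """Return the emotion label with the highest score."""
    return max(scores, key=scores.get)


def fuse_emotion_signals(signals, weights=None):
    """Combine per-modality emotion scores by weighted averaging.

    signals: {modality: {emotion: score}}
    weights: optional {modality: weight}; equal weights are assumed if omitted.
    Returns (fused_label, conflicting, per_modality_labels).
    """
    weights = weights or {modality: 1.0 for modality in signals}
    total = sum(weights[modality] for modality in signals)
    fused = {}
    for modality, scores in signals.items():
        for emotion, score in scores.items():
            fused[emotion] = fused.get(emotion, 0.0) + weights[modality] * score / total
    labels = {modality: top_emotion(scores) for modality, scores in signals.items()}
    # Signals conflict when the modalities do not all point to the same emotion.
    conflicting = len(set(labels.values())) > 1
    return top_emotion(fused), conflicting, labels


# Hypothetical scores for the fictitious scenario: Mark sounds calm but looks sad.
signals = {
    "voice": {"calm": 0.7, "sad": 0.3},
    "face": {"calm": 0.2, "sad": 0.8},
    "text": {"calm": 0.1, "sad": 0.9},
}
label, conflicting, per_modality = fuse_emotion_signals(signals)
print(label, conflicting, per_modality)
```

Returning the conflict flag alongside the fused label matters for customer care: a disagreement among modalities is itself informative and could prompt the system to verify the emotion before responding.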

Customers Need to Feel Understood

The survey illustrated that misunderstanding is one of the major problems that CMOs/CCOs face. In the customer care journey, if customers do not feel understood within a few iterations of an interaction, they get frustrated and angry, and customer care agents can also become demoralized. Frequently mentioned problems include “misunderstanding,” “difficulty in effectively communicating with customers,” “unable to understand customer needs,” “has difficulty in empathizing with customers,” and “difficulty in showing compassion to customers.”

Understanding customer emotions and problems requires the capability of empathy. For example, customer service agents need to empathize with customers to calm them down if they are angry, physicians need to empathize with patients when they report bad news about a diagnosis, and psychiatrists need to empathize with patients to provide emotional solutions. Specifically, being empathetic requires two capabilities: (1) taking the customer's perspective to understand their emotions and problems and (2) reacting to the emotions and problems appropriately.

Perspective-taking means to “spontaneously adopt the psychological point of view of others” (Davis 1983, pp. 113–14), “sharing another's feelings by placing oneself psychologically in that person's circumstance” (Lazarus 1991, p. 287), putting oneself in the place of another and experiencing his or her feelings (Hoffman 1984), and understanding another person's cognitive-emotional experience as if it were affecting the observer directly (Allard, Dunn, and White 2020).

The marketing literature suggests that empathetic concern is one component of AI empathy (Bagozzi, Brady, and Huang 2022) that will influence customer and firm outcomes (Liu-Thompkins, Okazaki, and Li 2022). The CMO/CCO survey shows conflicting views about AI empathy. On the one hand, some respondents mentioned that AI may be perceived as cold and lacking empathy because AI agents are robots and do not have human-like understanding, AI does not understand sarcasm or humor, and it struggles with understanding emotional context. On the other hand, some mentioned that AI can generate kind responses and create friendly experiences for customers because it does not have prejudice against any customers. The conflicting views reveal that when feeling AI is used for the emotion understanding task, it needs to be able to express empathy, from which customers feel that they are understood.

Responses Need to Be Appropriate to the Context

In the CMO/CCO survey, respondents often mentioned that “providing appropriate responses to consumer concerns,” “inappropriate response tone,” and “maintaining courteous interactions” are a challenge in customer care. An appropriate reaction means being capable of responding to another person's emotions in the right and positive way (Bagozzi, Brady, and Huang 2022; Batson et al. 1983). This is termed “emotional labor” in service encounters (Ashforth and Humphrey 1993; Hennig-Thurau et al. 2006; Liu, Chi, and Gremler 2019), which is the act of displaying the appropriate emotion in service encounters. Appropriateness can be expressed in terms of compassion/pity (Bagozzi and Moore 1994), concern for others in the situation (Allard, Dunn, and White 2020), and caring and individualized attention (Parasuraman, Zeithaml, and Berry 1988).

Consistent with the emotional labor literature, such acting does not have to be consistent with the customer care agent's true feeling, but is displayed according to display rules of emotional labor to facilitate service encounters (Ashforth and Humphrey 1993). This requirement should be doable for feeling AI, because the focus is on expressed empathy, not empathy as if feeling AI actually experiences customer emotions in a human way. Feeling AI, trained with relevant and available emotion knowledge, can express understanding of the nature, causes, and control/regulation of emotion, or the way one identifies, predicts, and explains emotion in oneself and others (Harris 2008).

Need to Help Customers Manage Their Emotions

In the CMO/CCO survey, managers indicated that “managing to keep customers calm during interactions,” “keeping customer happy,” “being available for customers when they need support,” “lack of effective helpful skills,” and “not being able to help” are frequently encountered issues. These issues reveal the need to provide emotional management recommendations to restore or maintain customers’ happy feelings. This requirement is rooted in the customer service and service failure literature, in which de-escalating negative emotions aroused by service failure is a major task (Herhausen et al. 2023). DeWitt, Nguyen, and Marshall (2008) find that loyalty is a function of trust and emotion following service recovery, which implies the importance of managing customer emotions in service recovery. Dallimore, Sparks, and Butcher (2007) find that customers and service providers influence each other's emotions in a service failure event, potentially triggering a negative spiral, with angry customers causing service agents to display anger, which is negative for complaint management. Marketing managers suggest that, compared with human agents, AI does not appear to be a good customer care agent to calm customers down; at the same time, neither will it escalate customers’ negative emotions, because it doesn’t have its own emotions and thus won’t create a vicious cycle with unhappy customers.

Need to Solve Customer Problems

In the CMO/CCO survey, managers often mentioned “not solving customer problems,” and “lack of employee knowledge in handling customer care issues” as common issues. These issues fall into the category of not being able to solve customer problems. They also mentioned that “AI may not be able to solve a customer's problem,” “AI is limited to predefined problems and solutions,” and “AI has difficulty in fixing customer problems,” showing that AI needs to improve its problem-solving skills.

In some customer care cases, simply offering a sympathetic ear is sufficient, whereas other cases require solving customer problems, which often are the causes of the emotions. Huang and Rust (2018, 2021) propose a “relationalization” approach to solve customer problems in an emotionally charged interaction that uses the thinking capability of AI to provide personalized solutions and uses the feeling capability of AI to manage the interactions.

Improve Customer Emotional Well-Being

In the CMO/CCO survey, managers often mentioned customer care problems such as “connecting with customers,” “customers feel unattached,” “keeping customer engaged,” “ensuring customer satisfaction,” “poor customer relationship,” and “retaining customers.” These problems reveal the need to connect with customers to keep them engaged and to build good customer relationships for customer satisfaction and retention. They also indicate that “lacks human touch,” “is not personal,” and “lacks personalization” are barriers for AI to establish an emotional connection with customers. Whether AI can satisfy customers or build relationships depends on whether it is used alone or to assist human agents. When AI is used alone, customer managers tend not to believe that it can satisfy or develop relationships with customers, but if AI is used to assist human agents, they believe all these outcomes can be improved.

The marketing literature shows that a customer–brand emotional connection is established when customers perceive that the brand genuinely cares about them, and the customers in turn also care about the brand in the form of customer satisfaction, brand loyalty, and customer relationship. This requirement reflects several research streams in marketing. Berry (2000) considers that brand emotional connection is especially important for service brands, and when it is established, such superior customer experiences are difficult for competitors to imitate. This is the relationship equity in Rust, Lemon, and Zeithaml (2004) and Rust, Zeithaml, and Lemon (2000) in which a firm–customer tie is established that goes beyond the quality–cost ratio and brand reputation. It also reflects the service marketing literature by emphasizing the importance of dynamic interactions over time that deepen the service relationship (Rust and Huang 2014). A service brand can connect with its customers cognitively and/or emotionally, which is the overall relationship between a brand and a customer (Huang and Dev 2020). Thomson, MacInnis, and Park (2005, p. 78) develop a scale to measure consumers’ emotional attachments to brands. They define attachment as “an emotion-laden target-specific bond between a person and a specific object.” Emotional brand attachments include dimensions such as affection, connection, and passion. They show that emotional brand attachment is distinct from brand attitude, involvement, loyalty, and satisfaction, and that it can predict commitment to the brand and the willingness to pay a premium price for it.

Increase Customer Lifetime Value

In the CMO/CCO survey, managers stated that “AI can contribute to customer satisfaction,” “AI for customer care leads to stronger customer retention,” and “AI helps in customer retention.” These comments show the need to increase customer lifetime value. The marketing literature on customer lifetime value and customer equity suggests that a customer's value to the company needs to encompass the customer's entire future relationship with the company. This view looks at the long-term total profits that a customer can bring to a company (Rust, Zeithaml, and Lemon 2000; Venkatesan and Kumar 2004).

Thus, the emotional connection helps increase the customer's lifetime value. When customers establish an emotional connection with the right company, their emotional well-being can be improved from satisfaction and fulfilled needs, and the company can be more profitable because of the customer’s higher lifetime value. Magids, Zorfas, and Leemon (2015) argue that an emotional connection matters more than customer satisfaction. They show that connecting with customers at an emotional level and fulfilling their emotional needs is the most effective way to maximize customer value. In their study, across a sample of nine categories (online retailer purchase, hotel room stays, consumer banking products, etc.), they show that emotionally connected customers are 52% more valuable than those who are just highly satisfied.

GenAI as State-of-the-Art Feeling AI: What It Currently Can and Cannot Do

With the four sets of marketing requirements in mind for using AI to move customers along the customer care journey, in this section we identify the current strengths and weaknesses of state-of-the-art GenAI for performing the four functions of customer care. GA1–GA4 (denoting the four functional capabilities of GenAI in the four stages of the customer care journey) detail what GenAI is currently good at and not so good at as the status quo of feeling AI. The second row of Table 2 summarizes the feeling capabilities of GenAI.

Good at Recognizing Expressed Emotions Accurately

GenAI recognizes emotions based on user prompts and then generates responses from the pretraining data. This interactive prompt–response design has the advantage of modeling expressed emotions accurately, because the user's prompt is an expressed signal of emotion that is directly observable. When the emotions are expressed by the users themselves, they are the cognitive appraisal of the emotion that reflects accurately what the user experiences.

From the direct user input, GenAI predicts a user's emotions using the training data, which reflect how people describe or talk about emotions in the real world. The advantages of this approach are that it is unsupervised (not limited to any predefined emotions) and contextual (Frackiewica 2023). In contrast to the traditional approach of emotion recognition that relies on discrete or dimensional theories of emotions to represent emotions (e.g., Ekman 2003; Russell and Mehrabian 1977), GenAI does not recognize emotions based on any specific emotion theory. For example, for the text input, “I am feeling bad,” the emotion in the input would be recognized straightforwardly as the user feeling bad; GenAI does not classify the bad feeling into the categories of sadness, anger, or fear, as Ekman's basic emotion theory does (Ekman 2003). This gives emotion recognition high contextual accuracy, as emotions can be recognized in countless categories based on user input and the context of the conversation.

Not So Good at Accurately Recognizing Emotions if User Input Is Dishonest and Vague and if There Is No Relevant Emotion Knowledge to Be Used for Prediction

Nevertheless, there are two conditions for this approach to emotion recognition and problem identification to be accurate: it hinges on the user input being honest and clear, and relevant emotion knowledge is available for recognizing the emotion. When the two conditions are not met, GenAI can generate hallucinated responses. Ji et al. (2023) define hallucination in GenAI as generated output that is nonsensical or unfaithful to the input data (i.e., output does not exist in the source input), but it gives users the perception of being fluent and natural.

There are various contributors to GenAI hallucination. The two conditions we discuss here fall into the data source category. Prompts as the user input data source may not be honest or clear when users do not have the ability to express their problems and emotions, or may not want to reveal their emotions. GenAI is fine-tuned to follow human instructions via reinforcement learning (Bai et al. 2022); thus, it always strives to produce answers that comply with the user's inputs. As a result, if the user input is dishonest (for whatever reason not revealing the true emotion) or ambiguous (not expressing emotions clearly), the recognition results will be misleading. Pretraining data as the input data source may not contain relevant emotion knowledge to be used for generating the responses (i.e., output does not exist in the training data). If the training data do not contain relevant knowledge to recognize the emotion in the context of the conversation, GenAI may hallucinate the answers.

Good at Learning the User's Perspective from the User's Inputs Directly

GenAI can take the user's perspective to understand emotions by learning directly from the user's inputs. CNN reports a recently published study showing that a panel of licensed health care professionals prefer ChatGPT's responses to medical questions over human physician responses, because the machine's responses are more empathetic. Nearly half of responses from ChatGPT were perceived as empathetic (45%) compared with less than 5% of those from physicians. On average, ChatGPT scored 41% more empathetic (McPhillips 2023). This shows that GenAI is a natural perspective-taker, because it learns directly from the user's inputs. The more elaborate the inputs, the higher the degree of perspective-taking that can be achieved by learning more from the inputs. When the data are from the user directly, they naturally reflect the user's perspective, such as the user's emotional style and emotional preference. These are the ideal data for customer care. In this aspect, the prompt–response design of GenAI may give AI an advantage in perspective-taking. In training an empathetic conversation model, Rashkin et al. (2019) focus on using personal conversations in emotionally grounded personal situations, rather than using social media conversations, to proxy a one-on-one conversation context.

Not So Good at Response Appropriateness Due to Lack of Common Sense to Evaluate the Appropriateness

However, even though GenAI can be a good perspective-taker, its responses may not be appropriate. Whether a response is appropriate often hinges on cultural norms. Barrett et al. (2019) show that there is substantial variation in how people communicate emotions across cultures, situations, and even within a single situation. Due to the complexity and nuance involved, humans can easily judge whether a response is appropriate in a context based on commonsense knowledge; however, GenAI may not get it right without such knowledge. For example, the 2022 Amazon Mind Reader commercial portrays a chatbot that can accurately recognize unspoken emotions from multimodal emotional signals, but generates inappropriate, socially awkward responses based on the true emotions. Davis and Marcus (2015) refer to common sense as real-world knowledge—a rich understanding of the world. It is acquired and accumulated throughout a human's entire life experience. They consider that lacking common sense often results in AI having inappropriate responses in the domains of interacting with people.

Good at Providing Generic Recommendations Based on Learning from General Knowledge

The responses generated by GenAI tend to be generic. When GenAI generates responses from its pretraining data, those data are general knowledge, either from the public internet or from the GenAI service provider's data; neither is brand-specific. Thus, the recommendations tend to be generic and may apply to all companies (including competitors) in a similar situation. Although we can instruct GenAI to take the brand’s perspective (e.g., assume the role of a manager from Brand A), the generated recommendations will still be largely similar across brands because the recommendations are all generated based on the same generic pretraining data. Even if the recommendations vary to some degree across brands, they are limited to those publicly available brand data. While it is also possible to fine-tune with company proprietary data, given the disproportion between the size of the training data and the size of the fine-tuning data, fine-tuning may have limited effect if the fine-tuning data size is small.

Not So Good at Generating Personalized Recommendations

For recommendations to be helpful, they need to be personalized to the user's emotions in the specific context. Personalization is to tailor-make a brand's recommendations to fit a customer's personal situation (Huang and Rust 2017). Thus, the recommendation needs to be brand- and customer-specific.

Being brand-specific requires GenAI to be fine-tuned with brand-specific data or via third-party plug-ins. OpenAI recently offered fine-tuning GPT-3.5 Turbo and is experimenting with fine-tuning GPT-4 to custom use cases. These cases are alleged to enable the model to follow instructions better, generate consistent format responses, and have a custom tone; yet these improvements are peripheral compared with generating personalized recommendations to manage customer emotions. ChatGPT also offers third-party plug-ins that connect ChatGPT to third-party applications to enable users to access up-to-date information and use third-party services. For example, Expedia uses ChatGPT plug-ins to help travelers plan their trips using the Expedia app, and OpenTable uses the plug-ins to provide restaurant recommendations. However, both approaches (fine-tuning and third-party plug-ins) involve security and privacy concerns, which is undesirable for many companies.

Being customer-specific requires GenAI to follow the user's intentions in generating responses (Bai et al. 2022). The fine-tuning for GenAI to follow user intentions is more successful via reinforcement learning, but it still sometimes generates harmful responses (Ouyang et al. 2022). GenAI also tends to generate wordy recommendations that diffuse the focus of the user's problem. Microsoft explains that it is due to the model's (i.e., ChatGPT) enormous numbers of training data sets that lead it to cover a topic from different angles (Javaji 2023). When dealing with unhappy users, diffuse answers may not be personally helpful.

Good at Establishing User–AI Emotional Connection and Improving User Emotional Well-Being

GenAI models are designed as interactive systems and are fine-tuned based on reinforcement learning incorporating human feedback (Bai et al. 2022; Ouyang et al. 2022). When the models are used for direct one-to-one interaction between the AI and the user, such as a companion AI (e.g., ElliQ), even though the responses they generate are generic at the beginning, they can improve over time. The emotional connection deepens with more exchanges of prompts and responses between AI and the user. In the companion AI case, when the AI–user emotional connection is established, user emotional well-being is improved from the deepened relationship.

Not So Good at Ensuring Customer–Brand Emotional Connection

The emotional connection that GenAI establishes is between the AI and the user, not necessarily between the customer and the brand. Establishing customer–brand connections requires GenAI to have multiple perspectives, so that GenAI agents can distinguish themselves from the company (i.e., to know that they are agents of the company, not the company itself). This is distinct from the companion AI case, in which the machine acts as itself to follow the user's intentions. So far, GenAI does not show sufficient evidence that it has the capability to have multiple perspectives.

Building the Caring Machine: Technical Challenges

We identify technical challenges (denoted TC1–TC4) from the gap between the marketing requirements and GenAI's current feeling capabilities to inform computer scientists’ development of the caring machine. The technical challenges row of Table 2 summarizes the discussion.

Emotions are multimodal, and not all modalities are equally observable. When inputs are limited to one or two modalities (e.g., language, image), and when the input modalities convey conflicting or ambiguous messages for an emotion, recognition accuracy is hindered. Thus, GenAI needs to be multimodal in input and output and must infer unexpressed signals from expressed signals—even when the inputs are inconsistent, ambiguous, or biased—to prevent hallucination.

Be Multimodal in Input and Output

Being able to take multimodal inputs and generate multimodal outputs increases the chance of accurate emotion recognition and interpretation. Emotion is a holistic experience that involves multiple components of subjective experience, physiological response, physical expression, and cognitive appraisal (Bagozzi, Gopinath, and Nyer 1999). Some customers have better cognitive ability than others to summarize and express their emotions clearly. Thus, relying on a single modality (e.g., language) is likely to reduce the accuracy of emotion recognition. There have been recent attempts to expand the input or output modality to voice and image. For example, GPT-4 can accept both text and image inputs but can only output text. ChatGPT can interact with images and voices. Nvidia Corporation, a company that designs graphics processing units, is developing its own GenAI that allows users to input text or images and generate outputs that integrate text and image. Whether these models perform better or how soon they can perform better than traditional emotion recognition models (e.g., generative adversarial networks [GAN]; Hajarolasvadi et al. 2020) is unclear.

Infer Unexpressed Signals from Expressed Signals

GenAI needs to have inference ability when there are disagreements in the multimodal inputs. GenAI is good at predicting the next value, but it is not good at inferring relationships between pairs of values when they are not mutually consistent. Lee, An, and Thorne (2023) test LLMs’ inference ability by examining whether they can capture disagreements in user annotations (i.e., human labelers' conflicting opinions) and conclude that such models have only limited inference ability, hindering their accuracy.

Inference ability is also important to detect customers’ dishonest or vague inputs and identify bad training data. Customers may be pretending, or may not be able to express their emotions clearly. Bad training data include data that are biased, inaccurate, or irrelevant. Those data often reflect human biases in the real-world public data and should not be used to generate responses. Those data biases are common, since public data are reflections of human biases (Luccioni et al. 2023). If GenAI has strong inference abilities, it can better discriminate when the data it is trained on are biased. For example, if GenAI notices that certain types of inputs always lead to certain (bad) types of outputs, it might infer that there's a bias in the data. Bias detection mechanisms can then be implemented to address this.

Align with the Target Customer's (vs. the Public's) Intention

Even if the current GenAI can be good at taking the user's perspective by learning from the customer's input, it predicts responses from generic pretraining data that are generated by the public, weakening its perspective-taking capability. When GenAI does not follow a customer's instruction, this is referred to as the misalignment issue in the computer science literature, in which AI does not follow human intentions (Ouyang et al. 2022). Bai et al. (2022) apply preference modeling and reinforcement learning from human feedback (RLHF) to fine-tune language models. While this training approach has been applied to the current GenAI, it is mainly used to train GenAI to be helpful and harmless, not for better perspective-taking. Huang (in Dukach 2023) proposes that aligning GenAI with the user's intention involves integrating the marketing concept of “target customer.” The current training approach is to follow all users’ intentions (or an average user's intention), which does not consider customers’ heterogeneous intentions.

Have Commonsense Knowledge

Even if GenAI is pretrained using all available public data, it is not a knowledge model. Many of the response appropriateness issues come from whether GenAI has commonsense knowledge. In her TED Talk, Choi (2023) concludes that GenAI is incredibly smart yet shockingly stupid, because it has no common sense. She illustrates with the common observation that GenAI often hallucinates simple facts without knowing it is inappropriate. Lecun (2022) discusses common sense as world models that guide AI in discerning what is plausible and what is beyond possibility. He asserts that while LLMs appear to have an extensive amount of background knowledge extracted from written text, their understanding of commonsense knowledge is superficial because much of human common sense is not captured in textual form. As illustrated in the Amazon Mind Reader commercial example, when AI has no common sense, its response can be socially inappropriate.

There are some efforts to train GenAI to have common sense. Conceptually, Lecun (2022) considers that common sense in AI could emerge from acquiring world models that capture the self-consistency and interdependence of observations in the world, enabling AI to supplement missing information and recognize violations of its world model. Empirically, Bubeck et al. (2023) experiment with GPT-4 to see whether it can reason with commonsense knowledge about the world. They demonstrate that GPT-4 can solve novel and difficult tasks that span mathematics, coding, vision, medicine, law, psychology, and more, without needing special prompting, and its performance is close to human-level performance. However, given the preliminary nature of that study, some researchers believe that strong conclusions may be premature.

Response engineering involves gathering more information from customers about their preferred emotion management solutions, and a theory of mind involves reasoning about the customer's unobservable emotions and mental states to determine what solutions will restore the customer's happy feeling. The former asks customers about their preferred solutions directly, whereas the latter infers customer preferences.

Develop Response Engineering to Elicit Preferred Solutions from Customers Directly

In prompt engineering, the process is to create a prompting function f_prompt(x) that results in the most effective performance using input x to generate response y (Liu et al. 2023). This process is for the user to ask questions (i.e., develop prompts) and for GenAI to provide answers (i.e., generate responses). The prompt that can generate the intended response is typically improved through multiple rounds of iterations. In this process, the customer needs to know how and what to ask to get the preferred emotion management solution, which apparently does not work in the customer care context, because customers may not be in the mood to iterate multiple rounds with the caring machine to get preferred solutions.
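As a minimal illustration of the prompting-function idea, the Python sketch below treats a prompt template as a function that wraps the raw customer input x, and picks among candidate templates with a scoring function. The templates and the `toy_score` function are hypothetical stand-ins: in practice the score would come from evaluating the model's response y, which is not modeled here.

```python
from typing import Callable, Iterable


def make_prompt_fn(template: str) -> Callable[[str], str]:
    """Return a prompting function that inserts input x into a template."""
    def f_prompt(x: str) -> str:
        return template.format(x=x)
    return f_prompt


def select_prompt_fn(
    templates: Iterable[str], x: str, score: Callable[[str], float]
) -> Callable[[str], str]:
    """Pick the prompting function whose filled prompt scores highest."""
    fns = [make_prompt_fn(t) for t in templates]
    return max(fns, key=lambda f: score(f(x)))


def toy_score(prompt: str) -> float:
    """Hypothetical stand-in for evaluating the model's response quality."""
    return float("emotion" in prompt.lower())


# Hypothetical candidate templates, for illustration only.
TEMPLATES = [
    "{x}",
    "Customer message: {x}\nName the emotion expressed.",
]

best_fn = select_prompt_fn(TEMPLATES, "I am feeling bad", toy_score)
best_prompt = best_fn("I am feeling bad")
```

The iteration the text describes corresponds to repeating this selection with revised templates, which is the burden that falls on the customer under the current prompt–response design.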

We propose a response engineering approach that assigns the question-asking role to GenAI to elicit solution preferences from the customer directly. In contrast to prompt engineering, response engineering is the reverse process of training GenAI to improve its responses by iteratively probing the customer's preferences through multiple rounds of question-asking to solicit more representative responses from the customer. Through question-asking, feeling AI can learn more about the customer's preferences and thus can generate more personalized recommendations for managing the customer's emotion. There are some preliminary efforts to achieve response engineering (Liu et al. 2023; White et al. 2023) under the current prompt–response design. These methods can be considered workaround solutions, because GenAI is not designed to ask questions.
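The question-asking role can be sketched as a simple elicitation loop. This is only an illustrative sketch under stated assumptions, not an implementation of any cited method: the preference slots, the question wording, and the `ask` callback (which stands in for one exchange with the customer) are all hypothetical, and the cap on rounds reflects the point that unhappy customers may not tolerate many iterations.

```python
from typing import Callable, Dict

# Hypothetical preference slots and probing questions.
QUESTIONS: Dict[str, str] = {
    "remedy": "Would you prefer a refund, a replacement, or a credit?",
    "channel": "Would you like to continue by chat, phone, or email?",
}


def elicit_preferences(
    ask: Callable[[str], str],
    known: Dict[str, str],
    max_rounds: int = 3,
) -> Dict[str, str]:
    """Ask about missing preference slots, up to max_rounds questions."""
    prefs = dict(known)  # copy so the caller's dict is not modified
    rounds = 0
    for slot, question in QUESTIONS.items():
        if rounds >= max_rounds:
            break
        if slot in prefs:
            continue  # already known, so do not burden the customer
        answer = ask(question).strip()
        rounds += 1
        if answer:
            prefs[slot] = answer
    return prefs


# Scripted answers stand in for a live customer in this example.
scripted = iter(["refund", "email"])
prefs = elicit_preferences(lambda question: next(scripted), known={})
```

The collected preferences could then be passed into the prompt used to generate the emotion management recommendation.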

Develop a Machine Theory of Mind to Infer the Customer’s Preferred Solutions

To generate helpful emotion management recommendations, the literature suggests that feeling AI may need to have a theory of mind—that is, the ability to attribute others’ mental states (e.g., beliefs, emotions, desires, intentions, knowledge) and to understand how they affect behavior and communication (Carpendale and Lewis 2006; Harwood and Farrar 2006; Wellman 1992). Using the term “world model” (background knowledge about how the world works), Lecun (2022) proposes that if AI can learn its own world model, it can use the model to predict, reason, and plan in a self-supervised manner. When feeling AI has a theory of mind (or its own world model), it can reason why customers are happy or unhappy and provide recommendations to help customers manage their emotions.

One initial attempt to develop a machine theory of mind is to use chain-of-thought prompting (i.e., asking GenAI to generate a series of intermediate reasoning steps to address complex problems rather than just predict a final answer), which has been shown to improve the reasoning performance on various arithmetic, commonsense, and logical reasoning tasks (Weng et al. 2023). There are also some very preliminary efforts to test the theory-of-mind capabilities of GPT-4 and ChatGPT (those tests require AI to infer the mental states of others to answer questions). Bubeck et al. (2023) allege that AI can reason about the intentions and feelings of people in complex social situations. Kosinski (2023) ran similar tests and shows that models from GPT-3 onward can solve those tasks with high accuracy (90% for GPT-4). He concludes that theory-of-mind ability (so far considered to be uniquely human) may have emerged from those models due to improved language skills (i.e., the ability is not explicitly engineered into AI systems). These preliminary results are not without controversy. Whang (2023) questions the validity of the tests, as changing the prompts can easily alter the results.
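To make the contrast concrete, the sketch below builds a direct prompt and a chain-of-thought prompt for the same customer message. The wording of the reasoning steps is a hypothetical example and is not taken from the cited studies; the functions only construct prompt text and do not call any model.

```python
from typing import List

# Hypothetical intermediate reasoning steps for a customer care case.
REASONING_STEPS: List[str] = [
    "What event is the customer describing?",
    "What emotion does the customer most likely feel, and why?",
    "What outcome would most likely restore a positive feeling?",
]


def direct_prompt(message: str) -> str:
    """Ask only for the final answer."""
    return (
        f"Customer message: {message}\n"
        "Recommend one response to the customer."
    )


def chain_of_thought_prompt(message: str) -> str:
    """Ask for intermediate reasoning steps before the final answer."""
    steps = "\n".join(
        f"{i}. {step}" for i, step in enumerate(REASONING_STEPS, start=1)
    )
    return (
        f"Customer message: {message}\n"
        "Think step by step and answer each question in order:\n"
        f"{steps}\n"
        "Then recommend one response to the customer."
    )


example = chain_of_thought_prompt("My order arrived broken again.")
```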

Be Aware of the Multiple Perspectives of the Machine, the Customer, and the Firm

Establishing an emotional connection with the customer on behalf of the company requires GenAI to take multiple perspectives: that of AI itself, the customer, and the firm. Feeling AI needs to be self-aware, that is, to have its own thoughts and feelings to exert its own agency (i.e., being autonomous) (Sundar 2020). This has been an important requirement for autonomous vehicles, so that they can make critical decisions on the road (Osburg et al. 2022). Waken.ai (2023) experiments with a cognitive framework to evaluate ChatGPT's self-awareness capability and shows very preliminary evidence for this possibility. However, these findings did not replicate in the latest version of ChatGPT. When feeling AI acts on its own agency and has the theory of mind to attribute not only the customer's emotions and mental states but also the firm's mental models (Rust, Moorman, and Van Beuningen 2016), it can differentiate between itself, the customer, and the firm, from which emotional connections can be established between AI and customers (e.g., companion AI) or between firm and customers (i.e., AI as the agent of the firm).

Align Customer Emotional Well-Being with the Company's Strategic Goal

Aligning the firm's strategic goal (as the input) with the customer’s emotional well-being (as the output) in GenAI requires feeling AI to be agentic in optimizing between the two objective functions. Achieving one at the cost of the other would not be considered strategic. Kiron and Schrage (2019) suggest that a company can define its strategy by key performance indicators, and then AI can optimize these for firms. In model training, this requires alignment between inputting strategic goals (e.g., customer lifetime value) and outputting customer emotional well-being. Currently, GenAI is mainly used to augment decision makers’ strategic thinking, but not to the degree of acting in accordance with the company's strategic goals. Achieving this alignment may require reinforcement learning or some other modeling approach to model multiple goals simultaneously. Liu, Zhu, and Zhang (2022) review goal-conditioned reinforcement learning, which represents one such approach to multiple-goal reinforcement learning.
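
One simple way to illustrate optimizing between two objectives, without claiming it is how GenAI would be trained, is to score candidate responses on both goals, discard dominated options, and require a minimum level on each goal before trading them off. The sketch below assumes hypothetical, pre-computed scores in [0, 1] for customer emotional well-being and customer lifetime value; the class and function names, the weight, and the floor are all assumptions made for illustration. It uses weighted scalarization over a Pareto front, which is a stand-in for, not an instance of, the goal-conditioned reinforcement learning reviewed by Liu, Zhu, and Zhang (2022).

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Candidate:
    name: str
    well_being: float  # hypothetical customer emotional well-being score in [0, 1]
    clv: float         # hypothetical normalized customer lifetime value score in [0, 1]


def pareto_front(cands: List[Candidate]) -> List[Candidate]:
    """Keep candidates that no other candidate beats on both objectives."""
    front = []
    for c in cands:
        dominated = any(
            o.well_being >= c.well_being and o.clv >= c.clv
            and (o.well_being > c.well_being or o.clv > c.clv)
            for o in cands
        )
        if not dominated:
            front.append(c)
    return front


def pick(cands: List[Candidate], weight: float = 0.5, floor: float = 0.3) -> Optional[Candidate]:
    """Choose the best weighted trade-off among options meeting the floor on both goals."""
    eligible = [c for c in pareto_front(cands) if min(c.well_being, c.clv) >= floor]
    if not eligible:
        return None  # no option serves both goals well enough
    return max(eligible, key=lambda c: weight * c.well_being + (1 - weight) * c.clv)
```

The floor encodes the point that achieving one goal at the cost of the other is not strategic: an option that scores very high on one objective but below the floor on the other is never selected.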

The Caring Machine: Marketing Tenets

We derive marketing tenets (shortened as MT1–MT4) from the gap between marketing requirements and GenAI's current feeling capabilities for marketers to use and for academics to research feeling AI for customer care along the customer care journey. The tenets for practitioners focus on how marketing managers can leverage the current or near-term feeling AI for customer care, while the tenets for researchers focus on how marketing scholars can theorize the caring machine. In the interest of space, we focus on two major implications for practitioners and two major implications for researchers for each stage. The marketing tenets row of Table 2 summarizes the four tenets, implications for marketing managers, and implications for marketing researchers.

Emotion Recognition Tenets for Practice

Cross-verify recognition accuracy using multiple emotion signals

For practitioners, it is important to verify the accuracy of the responses. The CMO/CCO survey has shown that the ability to identify customer emotions and needs accurately is an important customer care issue. Tech companies are experimenting with or deploying various self-verification methods for GenAI to reduce hallucinated responses and build trust with their customers (Cavanell 2023; Nirmal 2023; Weng et al. 2023). In addition to the self-verification efforts by tech companies, marketing practitioners can apply the multiple elements of emotions identified in the existing marketing emotion theories, including physical expression, physiological response, subjective experience, and cognitive appraisal, for cross-validation.
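
As a minimal sketch of such cross-validation, suppose each of the four emotion elements has already been mapped to a discrete emotion label by some upstream method. The function below, whose name, input format, and agreement threshold are illustrative assumptions, takes the majority label and flags the case for further review when the signals disagree too much.

```python
from collections import Counter
from typing import Dict, Tuple


def cross_verify(signals: Dict[str, str], min_agreement: float = 0.75) -> Tuple[str, float, bool]:
    """Return (majority label, share of signals agreeing, verified flag).

    `signals` maps a signal name (e.g., "physical_expression") to the emotion
    label inferred from that signal. The 0.75 threshold is an arbitrary example.
    """
    if not signals:
        raise ValueError("at least one emotion signal is required")
    counts = Counter(signals.values())
    label, votes = counts.most_common(1)[0]
    agreement = votes / len(signals)
    return label, agreement, agreement >= min_agreement
```

A recognition result that fails verification could be routed to a human agent or trigger a clarifying question to the customer rather than being acted on directly.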

Balance between recognition accuracy and customer emotion data security and privacy

Using multiple emotion signals for cross-validation often requires using (i.e., fine-tuning) or allowing external access to (e.g., plug-ins) proprietary, internal customer emotion data. When applying this approach, keeping customer emotion data within the boundary of the company (e.g., in a private cloud) is critical. As Poremba (2023) puts it, “Anything in the chatbot's memory becomes fair game for other users.” This open sharing concern can be more severe for customer emotion data. Customer emotion data are the firm's competitive advantage and are more personal and sensitive to customers; thus, keeping them secure and private is an important consideration for the firm to be responsible to its customers and to remain autonomous from AI service providers. Some very big companies (e.g., Morgan Stanley) can leverage the benefits of GenAI by mining the data of their own customers, given that their large proprietary datasets are more effective for fine-tuning. However, smaller companies may not benefit from fine-tuning, and thus open sharing is often the more feasible approach. Some companies try to train their own models, while others focus on fine-tuning. This is a practical issue that practitioners need to consider when striving to improve the accuracy of emotion recognition without sacrificing customer emotion data security and privacy.

Emotion Recognition Tenets for Research

Use marketing emotion theories as the knowledge base for inference

For researchers, marketing emotion theories can be applied and adapted to capture the nuances of customer emotions in marketing contexts. Emotions do not occur in a vacuum, and emotional experiences vary across contexts and customers. Marketing emotions are mainly about customer emotional responses to marketing stimuli (e.g., advertisements) or to consumption (in consumption contexts). Huang (2001) reviews four theories of emotions borrowed from psychology and five marketing emotion theories and identifies three characteristics of marketing emotions. First, they tend to have a wide range. For example, Aaker, Stayman, and Vezina (1988) identify 31 feeling clusters, and Richins (1997) identifies 17 consumption emotions. Second, the intensity of the emotions tends to be milder. Thus, many marketing scholars prefer to use the term “feeling” or “affective” rather than “emotion” (Aaker, Stayman, and Vezina 1988; Batra and Holbrook 1990). Third, positive and negative feelings are independent but can co-occur (Burke and Edell 1989). Thus, for accurate inference, emotion theories developed in marketing serve as more relevant knowledge bases for verification. This theoretical approach to verification is important, as machine learning–based GenAI is data-driven and has limited inference capabilities.

Develop emotion theories in the AI–customer interaction context to facilitate inference

One AI pain point that emerged from the CMO/CCO survey is that AI has difficulty identifying nuanced customer issues and needs. For example, emotions experienced in a health care context, such as delivering a bad prognosis, can be very intense; thus, the strong emotions create a customer care context in which communications with patients and their families are important (Danaher et al. 2023; Ong et al. 1995). What are the characteristics of customer care emotions across different industries? What emotions are likely to be experienced in interactions with AI agents, and in which way? These are some important research questions to be addressed.

Empathy enables emotion understanding and can be facilitated by more and better human–AI interactions. Marketing practitioners can develop prompting skills to leverage the interaction capability of feeling AI to enable customers to reveal their thinking and feeling to the caring machine. Marketing researchers can broaden and deepen theoretical approaches to customer–AI interaction by identifying antecedents, mechanisms, and outcomes of empathy in such interactions.

Emotion Understanding Tenets for Practice

Train customer agents (humans or AI) and/or customers to develop prompting skills for mutual understanding

Practitioners can train customer agents, the caring machine, or even customers to develop better prompting skills for better mutual understanding. The more iterations in the interaction, the more thinking and feeling can be unveiled, and the higher the chance for the service provider to align with the customer's intentions and preferences. Prompt-based learning is a new approach to modeling prediction tasks (Liu et al. 2023). Better prompting skills entail knowing how to ask questions to get desirable answers. Prompt engineering facilitates adaptation of GenAI to ad hoc tasks (Bach et al. 2022) and has become a new skill for improving performance. Human agents who master prompting can know better what questions to ask to elicit customers’ thinking about their problems and resulting feelings. When the prompting is performed by the machine, marketers can use system prompts (i.e., “prompt templates” that automate prompting; Liu et al. 2023) to program AI with instructions (i.e., role, task, and demonstrations) and then have the customer interact with the prompted AI via user prompts to ease customer prompting, from which customer issues and emotions can be better understood.
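
The system prompt idea can be sketched as a small template that combines a role, a task, and a few demonstrations, and is then paired with the customer's own user prompt. The function names and the list-of-dictionaries message layout below are assumptions chosen for illustration; they are not tied to any particular vendor's interface, and the example wording is hypothetical.

```python
from typing import Dict, List, Tuple


def build_system_prompt(role: str, task: str, demonstrations: List[Tuple[str, str]]) -> str:
    """Combine role, task, and (customer, agent) example exchanges into one template."""
    parts = [f"Role: {role}", f"Task: {task}", "Demonstrations:"]
    for customer_turn, agent_turn in demonstrations:
        parts.append(f"Customer: {customer_turn}")
        parts.append(f"Agent: {agent_turn}")
    return "\n".join(parts)


def build_messages(system_prompt: str, user_prompt: str) -> List[Dict[str, str]]:
    """Pair the marketer-written system prompt with the customer's user prompt."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_prompt},
    ]
```

With this split, the marketer writes and maintains the system prompt once, while the customer only supplies the user prompt in ordinary language, which is what eases customer prompting.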

Form AI–human agent teams to supply human common sense for fine-tuning appropriate AI reactions

Given that current AI does not have common sense to evaluate response appropriateness, marketers can form AI–human agent teams to supply human common sense as fine-tuning data for AI to learn. The current practice of AI–human collaboration in customer service is for AI to handle simple, routine issues and for human agents to handle more complex issues. However, such a division of labor does not allow AI to learn from human common sense and thus is not ideal for improving the appropriateness of AI reactions. This implication suggests that GenAI's responses can be fine-tuned with common sense that human agents apply to understand customer emotions. Such common sense is context-specific and has potential for fine-tuning GenAI to demonstrate human-like appropriate responses.

Emotion Understanding Tenets for Research

Develop prompt engineering approaches for empathetic customer care

“Prompt marketing” can be expected to be a new buzz term for designing prompts to generate desirable marketing outputs. However, the current practice of prompting is more of an art than a science, with prompting skills learned in a trial-and-error manner. This creates difficulty in replicating good prompts across customer care scenarios and transferring them to different customer care agents (humans or machines). Thus, developing theoretical approaches for prompt engineering can facilitate systematic use of prompting for empathetic customer care.

Develop theories of empathy in customer–AI interactions

For researchers, this is a task of developing a theory of empathetic understanding in customer–AI interactions. Such a theory can improve our understanding and facilitate prediction about what variables improve or hinder empathy, what variables moderate the effects, and what dependent variables should be used to gauge such interactions. Customer–AI interactions may possess unique characteristics that are different from customer–human agent interactions. For example, respondents to the practitioner survey often questioned whether feeling AI has qualities of sincerity or authenticity. Customers also have different degrees of acceptance of interacting with feeling AI (e.g., Mende et al. 2019). Some recent studies have called for more research on leveraging the empathetic capability of AI in marketing (Caruelle et al. 2022; Liu-Thompkins, Okazaki, and Li 2022). Thus, understanding feeling AI's strengths and limits, as discussed in the state-of-the-art GenAI section, and understanding target customers’ acceptance of artificial empathy can help develop a customer–AI interaction theory that can explain and predict the processes and outcomes of customer–AI interactions and their boundary conditions.

Emotion Management Tenets for Practice

Use response engineering to learn customer preferences and reactions to brand recommendations

For practitioners, response engineering can help learn customers’ preferred emotion management solutions and their reactions to provided recommendations. When GenAI, as the customer care machine, is responsible for asking questions, the customer's preference and reaction can be learned from the interaction directly. In the fictitious scenario presented previously, after expressing empathy for Mark's sadness, GenAI agent Kathy asks about Mark's preferred flight arrangement to make the flight easier for him and then, based on Mark's response, provides a quiet window seat and priority boarding and refers him to the bereavement fare policy. The personalized set of recommendations requires combining knowledge of the customer (e.g., Mark is attending his mother's funeral and prefers a quiet seat) with knowledge of the brand (e.g., the flight still has a window seat available, the airline has a bereavement fare policy) to address the situation. When response engineering is used to learn about the customer and when the knowledge is matched with brand knowledge, the customer is more likely to consider the emotion management recommendation helpful.

Fine-tune responses by learning from customer response knowledge for helpful brand recommendations

Personalizing recommendations requires using response engineering to learn the customer's preferred brand recommendations. Customers’ response data accumulated from response engineering can be used to fine-tune responses such that machines predict better over time how the customer will react to certain brand recommendations, from which the brand recommendation could be refined and eventually considered helpful by the customer. For example, once Kathy has learned the preferred recommendations of Mark (and of customers like Mark in a similar situation), Kathy does not always have to do response engineering but can provide brand recommendations much faster if Kathy has been fine-tuned with customer response knowledge.

Emotion Management Tenets for Research

Develop marketing models for machine theory of mind for recommendation personalization

Researchers can develop marketing models for machine theory of mind for using AI to infer customer preferences and offer personalized recommendations. Such models need to be able to use AI to infer the mind of customers and then personalize emotion management recommendations accordingly. In marketing, various algorithms and analytical models have been developed to personalize product recommendations to customers adaptively (Chung, Rust, and Wedel 2009) to build customer lifetime value (Huang and Rust 2017). The marketing contexts in these studies do not involve customer emotions, yet they can serve as the starting point for developing such models in the new prompt–response AI paradigm and providing customer care. Thus, GenAI can be leveraged to better describe and predict helpful emotion management recommendations.

Develop theories for using AI to manage customer emotions