Abstract

A theory-first paradigm tends to be the dominant approach in much academic marketing research. In this approach, a theory is borrowed, refined, or developed and then tested empirically. In this challenging-the-boundaries article, the authors make a case for an empirics-first approach. “Empirics-first” refers to research that (1) is grounded in (originates from) a real-world marketing phenomenon, problem, or observation, (2) involves obtaining and analyzing data, and (3) produces valid marketing-relevant insights without necessarily developing or testing theory. The empirics-first approach is not antagonistic to theory but rather can serve as a stepping-stone to theory. The approach lends itself well to today’s data-rich environment, which can reveal novel research questions untethered to theory. The present article describes the underlying principles of an empirics-first approach, which consists of exploring a domain purposefully without preconceptions. Using a rich set of published examples, the authors offer guidance on how to implement empirics-first research and how it can lead to valuable knowledge development. Advice is also offered to scholars on how to report empirics-first research and to reviewers and to editorial teams on how to evaluate it. The ultimate objective is to pave a way for the empirics-first approach to enter the mainstream of academic marketing research.

A decade ago, a plea was made for marketing to free itself from the orthodoxy of deductive, theory-driven knowledge creation with the view that it was limiting discovery (Alba 2012; Lynch et al. 2012). Concurrently and since, pleas have been made to increase the relevance of marketing research to marketing’s stakeholders, including consumers, managers, public policy makers, and educators (Lehmann, McAlister, and Staelin 2011; Moorman et al. 2019; Reibstein, Day, and Wind 2009; Schmitt et al. 2022; Van Heerde et al. 2021). We see a connection between these pleas. In fact, concern over the state of marketing has recently been accompanied by calls for nondeductive approaches to knowledge creation (Lehmann 2020; Zeithaml et al. 2020). It is peculiar that our field has resisted departure from its dominant theory-driven paradigm while lamenting the lack of insights and relevance from that paradigm. In this challenging-the-boundaries article, we promote an empirics-first (EF) approach as an alternative to the dominant theory-first (TF) approach. “Empirics-first” refers to research that (1) is grounded in (originates from) a real-world marketing phenomenon, problem, or observation, (2) involves obtaining and analyzing data, and (3) produces valid marketing-relevant insights without necessarily developing or testing theory.

Our overarching objective is to provide a how-to guide for conducting, reporting, reviewing, and fostering EF research that will complement existing commentaries on the legitimacy of the EF approach (Alba 2012), the evaluation of EF research (Lynch et al. 2012), and the attractiveness and necessity of connecting marketing research to the real world (Van Heerde et al. 2021). A key target audience consists of junior marketing scholars who wish to follow the EF path, but we also address senior colleagues who set the rules for research and whose endorsement is essential.

Our use of “empirics-first” encompasses different nondeductive approaches, including induction and abduction. Another related notion is “grounded theory,” which refers to the collection of data in service of theory development (Eisenhardt, Graebner, and Sonenshein 2016; Zeithaml et al. 2020). Elsewhere, Bass (1995) distinguishes cases in which theory follows empirics from cases in which empirics follow theory. We use the term “empirics-first” because research that begins with empirics need not lead to theory. A key theme throughout our discussion is that none of the aforementioned approaches fully captures the impetus for EF research, the scope of its processes, or the diversity and strength of its outputs.

We do not deny the virtues of deductive research. In practice, however, a frequent characteristic of TF research is a lack of discovery and relevance (Eisenhardt, Graebner, and Sonenshein 2016; Lynch et al. 2012), due in part to demands to position the investigation within the literature and satisfy the rules of hypothesis testing. TF research often starts with the literature and a search for moderators, mediators, extensions, and applications of a reported effect. EF research is often sparked by “data” from the real world. This means that the research can start down a fresh path, with new questions that are unburdened by the demands of existing theory. Whereas relevance can be achieved by both EF and TF research, we argue that EF has a natural arc that bends more easily back to real-world implications. Another point of difference is that although TF research tends to be satisfied with establishing the direction of a (causal) effect, EF research also often aims to estimate effect sizes to inform marketing stakeholders about the economic and societal significance of the findings.

Still, we do not claim that EF research is relevant per se or that it is routinely more relevant than TF research. In fact, both TF and EF research can be highly relevant as well as highly irrelevant. Novel data may fail to inform marketing stakeholders, and EF research can lack impact when its findings are narrow or idiosyncratic to the specific context examined.

If our argument has merit, the obvious question is why EF research has failed to gain traction, especially in strategy and experimental consumer research, which our survey shows are most likely to abide by a TF approach. One possible reason is that EF research appears to lack rigor. In contrast to TF research, which is well represented in PhD education and consists of a series of well-defined steps, EF research tends to be open-ended and unstructured, which gives it a “bumbling” character. We do not dispute that EF research has lacked an established road map, but neither would we characterize it as uninformed, chaotic, or unscientific. In this article, we describe EF research in practice and formulate research steps to enhance its rigor and outcomes. We also argue that wider adoption of the EF approach will be contingent not only on a recognition of its inherent virtues but also on a willingness to accept the trade-offs that accompany those virtues. Thus, we target the Jekyll-and-Hyde contradiction in all of us as authors and reviewers: as authors we may prefer EF, but as reviewers we often demand TF.

In the remainder of this article, we elaborate on why the present is an especially opportune time for EF research. We then discuss differences between EF and TF that are bolstered by a survey of scholars’ attitudes and behaviors. We follow with a framework for conceptualizing EF research and then provide guidance on how to conduct EF research. We conclude with recommendations on how to report and evaluate EF research and a discussion of key implications for the research ecosystem.

EF: Why Now?

Aside from the mounting demand for relevance, several external developments contribute to the timeliness (and timelessness) of the EF approach. First, novel data and new methods increasingly offer new vistas on consumer, organizational, and market behavior. Data from online behavior are now routinely exploited, but the digitization of offline practices that accompany the proliferation of new business models, marketplaces, and media platforms offers many more opportunities. Similarly, consumer research can benefit from increasingly available panel data that facilitate longitudinal analysis (Chintagunta and Labroo 2020). In addition, web scraping offers unique opportunities to capture online marketplaces and behaviors (Boegershausen et al. 2022).

Accompanying the increasing volume of often unstructured data are new approaches such as machine learning that allow identification of potentially relevant independent variables (IVs) and more complex nonlinear relationships without relying on an extensive literature review for each variable in the data (Ma and Sun 2020; Wedel and Kannan 2016). Achieving superior prediction and identifying important variables and relationships could help validate or contradict prior knowledge and/or suggest insights and patterns that have not yet been explored, creating new areas of inquiry (e.g., Yarkoni and Westfall 2017).

Another reason to encourage a turn toward EF research arises from developments outside of marketing (Rust 2020). Marketing can (and should) rectify its belated response to critical issues of the day, including vaccine acceptance, climate change, disinformation, and consumer autonomy. For society’s sake, marketing scholars are obliged to tackle these issues regardless of the existence of guiding theories; for the sake of self-preservation, marketing scholars should rush to these intellectual frontiers to avoid being marginalized by practitioners and scholars from other disciplines (Wierenga 2021).

The EF approach could also help address the confidence crisis plaguing the social sciences, a prominent cause of which has been the failure to replicate. Although activities that lead to false-positive results are being addressed (Simmons, Nelson, and Simonsohn 2011), concern with HARKing (hypothesizing after results are known) persists. As we show in our survey, many marketing scholars suspect that some research tacitly follows an EF approach but is molded to fit the TF template in its reporting (with hypotheses formulated post hoc).

A kind interpretation of this behavior is that authors feel compelled to meet reviewer expectations about both science and the reporting of science. Indeed, it has been argued that a properly crafted manuscript tells a compelling story (Peracchio and Escalas 2008) via the classic TF or deductive schema (i.e., introduction → conceptual framework → hypotheses → method and results → discussion), with hypotheses playing a central role in the overall flow. The classic TF format may result in a more readable article than does a less structured EF format, in part because a guiding theory provides the reader with a logical schema for organizing multiple findings. We suspect that this readability rationale prompts some authors to adopt a TF format when reporting their research. However, we also fear a less kind interpretation, wherein authors deliberately misrepresent the scientific process to heighten the persuasiveness of their argument. Research integrity requires that EF research be reported as such.

TF and EF Research Differences

TF and EF research approaches differ along several dimensions. For efficiency, we summarize the differences in Table 1. In keeping with our esteem for both TF and EF research, we acknowledge the relative suitability of these approaches across various research projects and objectives. Indeed, the TF–EF distinction can be seen as a continuum, such that specific research projects blend positive features of both approaches (e.g., Chen et al. 2020).

Table 1. TF and EF Research Differences.

To ascertain that we do not stand isolated in our enthusiasm for EF research, we contacted some leading marketing scholars and asked, “In your portfolio of published papers, which ones would you characterize as being primarily EF rather than TF? A typical EF paper does not start with theory development and hypotheses, but rather starts with open-ended research questions and explores data with an open mind for discovery. The data can be based on experiments, observation, archival sources or company records.” Table 2 contains six EF studies that were recommended to us. To this list, we added six of our own studies, not because they are paragons of EF research but because we know the inspiration for these projects and the steps pursued in arriving at the final outcomes. This knowledge allows us to illustrate more concretely our subsequent recommendations on how to conduct and report EF research.

Table 2. Examples of EF Research.

Note to Table 2: An author of this study was among those we contacted for examples of EF research.

Table 2 shows that EF research occurs across all parts of the field and applies to diverse methods. Whereas some EF research gets at the “why” of the outcome (e.g., Zheng, Bolton, and Alba 2019), other studies primarily document what is transpiring without asking why (e.g., Rust et al. 2021; Van Heerde, Helsen, and Dekimpe 2007; Zhang and Wedel 2009). Also, whereas some EF research is more descriptive/predictive in nature (e.g., Fernandes, Lynch, and Netemeyer 2014; Golder and Tellis 1997; Stahl et al. 2012), other EF research has a more causal (e.g., Bolton, Warlop, and Alba 2003) or even normative (e.g., Zhang and Wedel 2009) focus. What these studies all share, however, is that their starting points are empirical observations rather than theory.

Survey on EF and TF Practices and Perspectives

We assessed the discipline’s current beliefs and behaviors regarding EF and TF research through a worldwide survey of 1,000 marketing scholars randomly selected from an American Marketing Association list of 4,162 unique email addresses (91 of which were undeliverable). We netted 140 responses (response rate of 15.4%) after excluding respondents who do not conduct empirical academic marketing research. The complete questionnaire and response summary are reported in the Web Appendix. Top-line results are reported subsequently; the differences we report are significant at p < .05 (see the Web Appendix for test results of these differences). Questions and response scales for these top-line results are reported in the Appendix.
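The reported response rate can be reproduced with a short calculation. The sketch below assumes that the 91 undeliverable addresses were among the 1,000 sampled scholars, so that the denominator is the number of delivered invitations; the variable names are illustrative only.

```python
# Figures come from the survey description; the choice of denominator is an assumption.
sampled = 1000       # scholars randomly selected from the email list
undeliverable = 91   # assumed to be among the 1,000 sampled addresses
responses = 140      # usable responses after exclusions

delivered = sampled - undeliverable
response_rate = responses / delivered

print(f"Delivered invitations: {delivered}")      # 909
print(f"Response rate: {response_rate:.1%}")      # 15.4%
```

Under this assumption the calculation matches the 15.4% stated in the text.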

Overall, marketing scholars believe that EF research has promise in three important ways: First, a much higher proportion of respondents view EF research as more likely to lead to real-world insights than TF research (48% vs. 14%; Question 5). This difference is strong among empirical modelers but not significant among strategy researchers. Second, respondents believe that EF research is more appropriate than TF research for investigating new phenomena (65% vs. 11%; Question 6). Third, EF research is viewed as more appropriate for serving marketing’s broader constituencies (45% vs. 18%; Question 6).

These positive views of EF are offset by some strongly negative sentiments. Consistent with TF orthodoxy, 64% of respondents agree that research should be based on a strong theoretical rationale (Question 3). Similarly, 43% agree or are neutral to the charge that research starting with data analysis is unscientific; 53% of strategy researchers but only 26% of empirical modelers share this view (Question 3).

The most common order in which scholars conduct and report research adheres to the TF template (Question 2). However, these modal responses mask substantial variation across respondents. In fact, many scholars (28%) develop hypotheses at later stages of research (reminiscent of HARKing), and many scholars (29%) collect data during earlier stages of research (consistent with EF; Question 2e). Empirical modelers (55%) are more likely to collect data in early stages than strategy researchers (29%) and experimental consumer researchers (5%; Question 2f). Across all types of marketing scholars, few report that they collect data during early stages (0%–6% depending on scholar type; Question 2f). A gap between research conduct and research reporting is most striking among editorial board members and editors, 36% of whom collect data during early stages but none of whom report doing so (Question 2f). Our conjecture is that successful people in our field have learned that TF studies receive a friendlier reception in the review process. Indeed, these senior scholars are likely to apply the TF template themselves when reviewing research.

We find these results disconcerting. EF is viewed positively in terms of characteristics we claim to value: real-world insight, relevance to stakeholders, and novel phenomena. Yet, the pull of TF orthodoxy is so strong that EF-derived findings are often portrayed as TF-derived. One culprit is PhD training, which is characterized as emphasizing a TF approach more so than an EF approach (63% vs. 20%). Strategy researchers (84% vs. 12%) and experimental consumer researchers (68% vs. 12%) agree with this TF emphasis in PhD education, whereas empirical modelers are more balanced (40% vs. 34%; Question 5). We surmise that over time and in several areas of our field (consumer and strategy research, in particular), the TF approach has become the dominant mode of conducting research. Scholars adhering to the TF approach in their own work teach their own PhD students well-honed TF principles without paying much (if any) attention to the appropriateness, process, and benefits of EF research.

Another culprit is the review process. A large majority of respondents (88%) believe journals have strong expectations about the structure of an article (Question 4), with a strong preference for TF over EF (75% vs. 8%; Question 5). Consequently, scholars find it easier to go through the review process with a TF paper than with an EF paper (70% vs. 10%); even empirical modelers share this view (65% vs. 8%; Question 5). Overall, these results substantiate our goal to level the TF–EF playing field in teaching, conducting, reporting, and reviewing research.

Stages and Strategies for EF Research

Our main objective is to offer guidance on how to conduct EF research. We emphasize at the start that we do not claim to be the arbiters of this process. Indeed, there is no routinized process for EF research, and no formal user manual will be offered. However, our accumulated experience over several decades and across most of the major areas of marketing has produced learnings that we hope will prove useful.

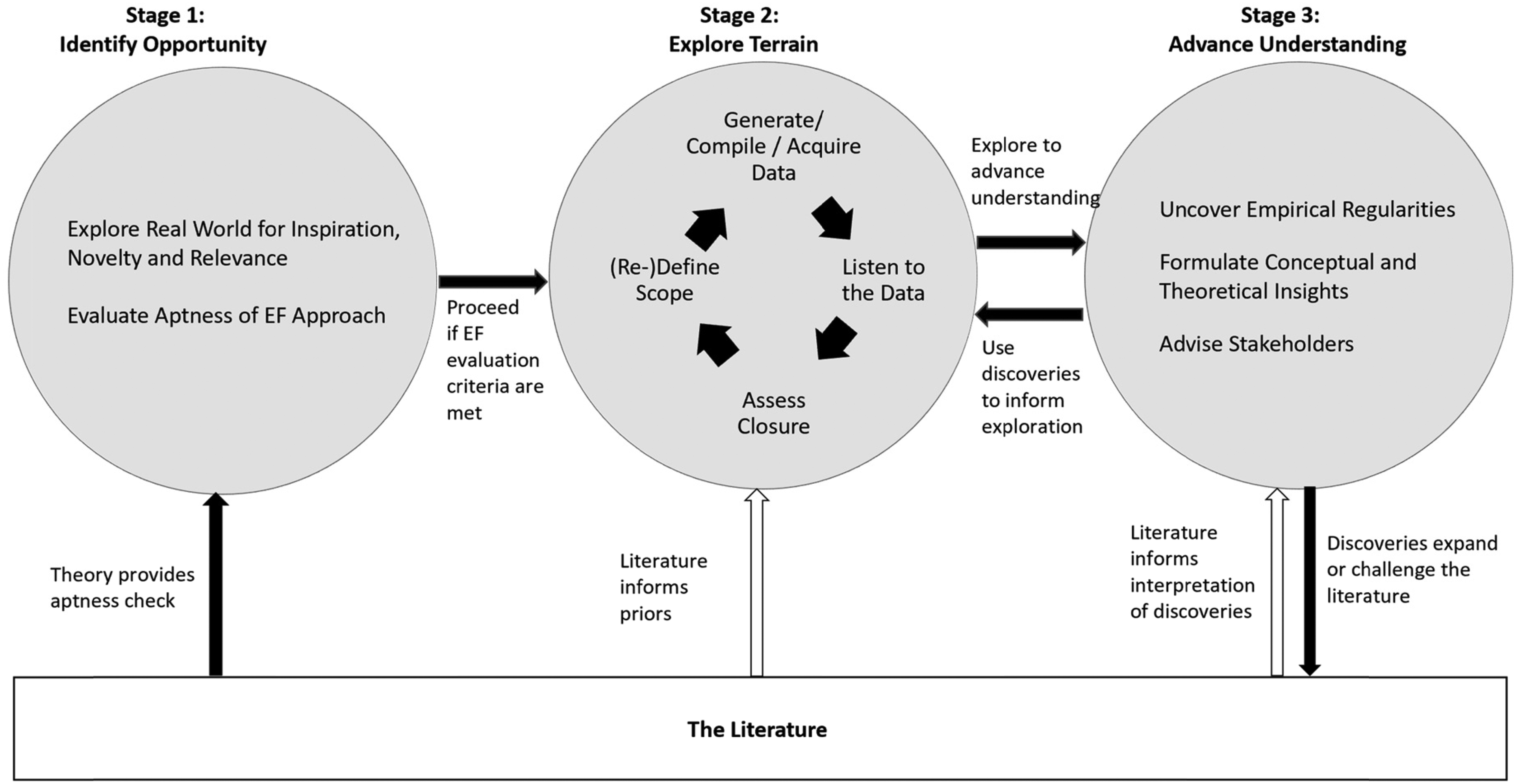

Figure 1 offers a sketch of the EF process along with its interactions with the literature. In the first stage, “Identify Opportunity,” the scholar selects a meaningful, real-world issue whose relevance extends beyond a lone marketing stakeholder to a broader audience. The research can proceed to the next stage when an EF approach is apt, which occurs when the literature provides little guidance. This second stage, “Explore Terrain,” is unquestionably less linear and more iterative than TF research. Finally, in the third stage, “Advance Understanding,” the scholar strives to provide outcomes (e.g., empirical regularities) not attainable through traditional hypothesis testing. A feedback loop exists between exploring the terrain and advancing understanding, as some discoveries that emerge may inform the scope of further empirical exploration.

Figure 1. EF Research.

Notably, the scholar does not stand apart from the literature in any of these stages. In the first stage, existing theory (or the lack thereof) provides an aptness (suitability) check, with lack of strong theory pointing to an opportunity for an EF approach. In the second stage, priors or hunches that guide EF research stem from the scholar’s accumulated experience (including previous exposure to the literature). In the final stage, the literature informs the interpretation of discoveries while the discoveries expand or challenge the literature.

The major components of EF research portrayed in Figure 1 are communicated in more detail in Table 3 and in the following sections. The discussion also illustrates why EF research does not fall neatly into narrower categories, such as induction and abduction. Indeed, the start of EF research often has multiple sources that are not mutually exclusive, and the outcomes of EF research may include many findings, which may or may not have theoretical implications.

Table 3. Stages and Strategies for EF Research.

Stage 1: Identify Opportunity

Explore the Real World for Inspiration, Novelty, and Relevance

A key feature of EF research emerges during the identification of research opportunities. Nobel laureate Paul Krugman relates his dissertation chair’s advice: Don’t reread the literature. Your head is already stuffed full of that material, and you’ll end up doing a small twiddle on someone else’s model. Instead, he urged me to read about real-world issues—read the Financial Times, the Economist, economic history, get your juices flowing. See what seems to be an interesting issue you think you can use. (Krugman 2002)

We likewise urge marketing scholars to examine emergent and underexplored real-world phenomena and to do so without strong preconceptions. One approach is to identify a marketing angle in the pressing problems facing society. For example, resistance to food technologies such as genetic modification that can alleviate health problems, hunger, and environmental harm is ultimately a question of consumer behavior (Kim, Kim, and Arora 2022). Insights that reduce such resistance speak to managerial and public policy, as would insights regarding the efficacy of interventions to discourage smoking (Wang, Lewis, and Singh 2021) or improve financial literacy (Fernandes, Lynch, and Netemeyer 2014).

Scholars can also obtain inspiration from needs and frustrations expressed by marketing stakeholders. For example, new products are ubiquitous, and managers need to understand the patterns and underlying drivers of sales takeoff (Golder and Tellis 1997) and the rewards associated with pioneering these new markets (Golder and Tellis 1993). Opportunities may also emerge from novel data sources, such as the data documenting a major product-harm crisis (Van Heerde, Helsen, and Dekimpe 2007), or from conflict between the marketing literature and the observed world, such as when the observed failure of many early entrants contradicted the prevailing accepted view of pioneer advantage (Golder and Tellis 1993).

When identifying EF research opportunities, scholars can increase the relevance of their research by asking whether an investigation will produce actionable advice to stakeholders. Relevance increases as stakeholder control over the scholar’s IVs increases and as the stakeholder finds the scholar’s dependent variables (DVs) more valuable. In the product-harm study, for example, the managers had control over the IVs (price and advertising) and a deep interest in the DV (brand performance; Van Heerde, Helsen, and Dekimpe 2007); similarly, the sales takeoff model provided managers with description, (partial) explanation, and prediction (Golder and Tellis 1997).

Evaluate Aptness of the EF Approach

The EF approach tends to be more apt (i.e., suitable for use) when any of the following conditions is met: theory is in short supply; the literature is equivocal; intuition leads to multiple plausible, yet conflicting, outcomes; observations taken from the world or opinions expressed in business reports do not align with theoretical predictions; the prevalence of an empirical effect has been examined scantly; or rich and newly emergent data allow the scholar to probe unexamined relationships.

For example, although prior research broached questions concerning consumer knowledge and reasoning about prices (Dickson and Sawyer 1990; Estelami, Lehmann, and Holden 2001; Kahneman, Knetsch, and Thaler 1986), research on the underlying drivers of price fairness was thin and insufficient to offer guidance regarding three potential reference points (past prices, competitor prices, and vendor costs) that form the core of an EF examination of price fairness (Bolton, Warlop, and Alba 2003). Likewise, the previous literature on market orientation failed to consider how organizations become market-oriented, which is addressed in an EF study (Gebhardt, Carpenter, and Sherry 2006). An EF approach was also apt in the study on sales takeoff (Golder and Tellis 1997), inasmuch as the data that supported the existing literature lacked completeness. The literature on product-harm crises, in turn, was underdeveloped (mostly lab studies and conceptual articles), making an empirical study based on real-world data (Van Heerde, Helsen, and Dekimpe 2007) highly relevant, especially given that the increasing complexity of products, the closer scrutiny by manufacturers and policy makers, and the higher demands by consumers were expected to lead to a considerably higher incidence of product-harm crises.

When EF criteria are met, exploration can commence, as discussed next and represented in the middle of Figure 1.

Stage 2: Explore Terrain

(Re)Define Scope

EF research is often initiated by open-ended research questions rather than directional hypotheses. For example, the market-pioneering study began with the question of “What are the rewards for actual pioneers?” (Golder and Tellis 1993), and the biological-causation research began with curiosity about the portrayal of willpower in terms of neural processes and structures that would influence perceptions of consumer control over undesirable behavior (Zheng and Alba 2021).

The scope of EF research can be characterized as purposefully expanding because the lack of theory-based guardrails may preclude a precise prespecification of the scope of the endeavor. In some instances, the investigator may have a rough sense of the breadth required to address the research questions and to achieve a priori objectives. In other instances, the scope may expand because one finding demands investigation of other questions or variables, in which case the scholar must assess the scope on a recurring basis. In such cases, higher-order insights may arise from new research questions and/or a scope unanticipated at the start. For example, in the study on self-regulation (Zheng and Alba 2021), there was little basis on which to anticipate the variety of portrayals of biological causation and dependent measures required to arrive at a generalizable conclusion.

In most cases, EF research will call for a wide scope to avoid overgeneralization from an individual finding or idiosyncratic setting. Thus, a research question that seems circumscribed at first may become broader (e.g., via different views of the problem) or deeper (e.g., via exploring process mechanisms) as it progresses. Still, initial research in an emerging domain can be relatively narrow (focused) if it can be broadened in follow-up research. For example, even though the data in Van Heerde, Helsen, and Dekimpe (2007) covered only one product-harm crisis, the research led to the higher-order conjecture that such a crisis does not just result in lower sales but also reduces a brand’s ability to recover through marketing and weakens the brand’s market position. This conjecture served as impetus for future research that expanded the empirical knowledge base with over 60 other product-harm crises (Cleeren, Van Heerde, and Dekimpe 2013), which, in turn, led to various empirical regularities and empirically tested contingency factors.

According to the logic of falsification, a narrow empirical finding has value in theory testing. However, one-off, contextually bound research does not represent the best of EF research. EF scholars are advised to be conscientious in their quest to falsify their findings, perhaps by exploring multiple contexts or by applying a plethora of robustness tests. A problem of marketing research generally is that findings are not aggressively pursued across laboratories. Thus, a finding can become lore even though it is valid only within the narrow confines of its discovery or is circumscribed by a single boundary condition. Properly pursued, wide-ranging EF research with honest attempts to falsify the empirical insights can avoid this dilemma. In EF research, failed robustness checks should be viewed as learning opportunities that lead to an even broader exploration that considers why a finding obtains in one context but not another.
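One simple way to make the multi-context robustness check concrete is to estimate the same effect separately in each context and flag the contexts in which its direction differs. The following is a minimal sketch under stated assumptions: the context names and outcome values are hypothetical, the "effect" is a plain difference in means, and a sign comparison is a screening device rather than a statistical test.

```python
from statistics import mean

def effect(treated, control):
    """Difference in mean outcome between treated and control observations."""
    return mean(treated) - mean(control)

def check_contexts(data):
    """Return per-context effects and the contexts whose sign departs from the majority."""
    effects = {ctx: effect(d["treated"], d["control"]) for ctx, d in data.items()}
    positives = sum(e > 0 for e in effects.values())
    majority_positive = positives >= len(effects) / 2
    dissenting = [ctx for ctx, e in effects.items() if (e > 0) != majority_positive]
    return effects, dissenting

# Hypothetical illustration data (not taken from any cited study).
contexts = {
    "context_a": {"treated": [5.1, 4.8, 5.4], "control": [4.0, 4.2, 3.9]},
    "context_b": {"treated": [3.9, 4.1, 4.3], "control": [3.5, 3.6, 3.4]},
    "context_c": {"treated": [2.8, 3.0, 2.9], "control": [3.4, 3.3, 3.5]},
}

effects, dissenting = check_contexts(contexts)
for ctx, value in effects.items():
    print(f"{ctx}: effect = {value:+.2f}")
print("Contexts to investigate further:", dissenting)
```

In this illustration, the flagged context is not discarded; it becomes the starting point for asking why the finding obtains in some settings but not others.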

Generate, Compile, or Acquire Data

The specific processes followed by the EF scholar will vary depending on the source of the data: (1) data generation, which entails experiments, surveys, and observation; (2) data compilation, which involves the collection of archival data or syndicated data sources; and (3) data acquisition, which consists of starting with a (preferably) unique data set from an external party (e.g., a participating industry partner). The key difference between the latter two approaches is that data acquisition begins with an established data set, whereas data compilation begins with some desired variables but no actual data. The data acquisition approach tends to be even more free-flowing than the data compilation approach, given that the latter requires at least some preconception about the initial variables that will be compiled.

Marketing scholars can use all three sources regardless of their scholarly domain, but there is some empirical correspondence between data generation, compilation, and acquisition and the familiar marketing domains of consumer behavior, strategy, and modeling, respectively. Of course, EF studies can also combine data sources (see, e.g., Fernandes, Lynch, and Netemeyer 2014).

Generate data

Of the three data sources, the least structured involves data generation, where “bumbling” was first acknowledged in marketing and elsewhere by behavioral scholars (Alba 2012). The clearest form of bumbling is when research proceeds as a progression, with each finding informing the next step. In the study of biological causation, for example, the failure to move the needle on perceived willpower with one operationalization of biological causation prompted examination of different and stronger operationalizations (Zheng and Alba 2021). In such instances, the ultimate scope may be poorly anticipated at inception. Data generation can also have a more hierarchical structure, as when the scholar appreciates, a priori, the imperative to sample heterogeneous contexts to avoid the risk of improper generalization and provide an opportunity for overarching insight. For example, the research on technology resistance involved deliberate sampling of multiple diverse technologies (Zheng, Bolton, and Alba 2019).

Compile data

In data compilation, initial data collection must be guided by broad research questions (e.g., “What do new product sales look like in the stage between initial commercialization and the later years covered in diffusion-model studies?”). Because some research questions cannot be answered with existing data and because the costs of data compilation can be high, it is important to assess data requirements early in the empirical investigation so either the scope can be adjusted or the project abandoned. For example, do existing data sources enable identification of the first year of sales and provide sales figures for every year thereafter? In both the market-pioneering study and the sales-takeoff study (Golder and Tellis 1993, 1997), data in a small number of categories were compiled initially to assess the feasibility of the study and guide additional data compilation. When public data are not available, pursuing private data (e.g., organization archives) may be feasible. However, some research opportunities may have to be explored through other approaches (e.g., data generation through surveys and/or interviews). Initial data collection and exploration are invariably helpful in refining broad research questions or adding complementary research questions.
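The early feasibility assessment described above can be sketched as a completeness check on a small pilot compilation. This is an illustrative sketch only: the category names, years, and sales values are hypothetical, and the first year present in the data is treated as the first year of sales, which would itself need to be verified against independent sources.

```python
def assess_category(sales_by_year, end_year):
    """Check for an unbroken annual sales series from the first observed year to end_year."""
    if not sales_by_year:
        return {"first_year": None, "missing_years": [], "usable": False}
    first_year = min(sales_by_year)
    missing = [y for y in range(first_year, end_year + 1) if y not in sales_by_year]
    return {"first_year": first_year, "missing_years": missing, "usable": not missing}

# Hypothetical pilot compilation for two product categories.
pilot = {
    "category_x": {1990: 10, 1991: 25, 1992: 60, 1993: 140},
    "category_y": {1985: 5, 1987: 9, 1988: 20},
}

report = {
    "category_x": assess_category(pilot["category_x"], end_year=1993),
    "category_y": assess_category(pilot["category_y"], end_year=1988),
}
for name, result in report.items():
    print(name, result)
```

A category with gaps, such as the second one here, signals either that additional sources must be found or that the scope of the project should be adjusted.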

Acquire data

Data acquisition often starts with a rich new data set that offers potential for novel insight. It is vital to leverage the strengths of the data set. If the data excel on the cross-sectional dimension (e.g., unprecedented breadth of brands, consumers, organizations, countries), the research questions should capitalize on this asset. If the main strength is in the longitudinal dimension (e.g., exceptional length of time), this feature offers the greatest potential. The strengths can also draw from marketing-related variables that were never previously observed or that are newly observed with an unprecedented level of granularity.

The cross-sectional breadth of the data will also determine the extent to which it is best to focus on the generalizability of recurring empirical patterns (potentially augmented with a contingency analysis) versus establishing the prototypicality of the data at hand (where one case can be argued to be a prototype of the problem). As the product-harm data set covered only one crisis, it did not offer the potential to uncover empirical regularities. Instead, the authors judged it prototypical of other major product-harm crises because of the severity of the crisis, the broad media coverage that it had received, and the fact that a well-known manufacturer had been involved. In line with that positioning, the article provided extensive detail on the institutional context and ensuing managerial implications (Van Heerde, Helsen, and Dekimpe 2007).

Listen to the Data

Once initial data have been generated, compiled, and/or acquired, the EF researcher proceeds by “listening” to the data. This step involves approaching the research with an open mind as to what the data may tell about the focal phenomenon. As discussed next, the recommended approach is to start with simple checks and analyses, add more breadth and depth anchored by the scholar’s knowledge, and, when possible, explore causal relationships, while staying neutral with a scholarly mindset.

Validate the data and offer model-free evidence

Reliance on data requires data validation regardless of which of the aforementioned three approaches is used. It is critical to use reliable, credible sources and to ensure that individual data elements are corroborated through equally reliable, credible sources. In the case of qualitative research, data from multiple sources (historical documents, interviews, and observation) can benefit from cross-validation among informants (Gebhardt, Carpenter, and Sherry 2006). In more quantitative realms, data should be organized chronologically (e.g., before vs. after a product-harm crisis) and/or cross-sectionally (e.g., takeoffs in newer vs. older product categories) so that insights, generalizations, and explanations can be discovered through qualitative and quantitative data analysis.

Data visualization is also an important way to listen to data, as is data simplification through methods such as text mining, cluster analysis, and factor analysis (Laurent 2013). For example, in the sales-takeoff study, initially plotting sales against time led to the surprising discovery that this early, unresearched period of sales was typically lengthy and consisted of a low level of flat sales that quickly transitioned into a period of sharply increasing sales (Golder and Tellis 1997). Starting with copious model-free evidence helps preestablish the face validity of later model results and increases confidence in those results. In some instances, model-free evidence may be as informative in providing managerial advice as subsequent model estimates (e.g., time to takeoff and price decreases at takeoff).
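As a hedged illustration of what such model-free evidence can look like, the following minimal Python sketch tabulates year-over-year growth for a sales series and flags the year with the sharpest increase. The sales figures and the function name are hypothetical, and the calculation is a simple descriptive summary; it is not the takeoff rule used in the sales-takeoff study.

```python
def yearly_growth(sales):
    """Return (year_index, growth_rate) pairs for consecutive years."""
    return [
        (t, (sales[t] - sales[t - 1]) / sales[t - 1])
        for t in range(1, len(sales))
    ]

# Hypothetical annual unit sales: a long flat period, then a sharp rise.
sales = [10, 11, 10, 12, 11, 30, 75, 160]

growth = yearly_growth(sales)

# The year with the largest proportional increase over the prior year.
sharpest_year, sharpest_rate = max(growth, key=lambda pair: pair[1])

for year, rate in growth:
    # A crude text bar makes the flat-then-steep pattern visible at a glance.
    bar = "#" * max(0, round(rate * 10))
    print(f"year {year}: {rate:+.0%} {bar}")
```

A summary of this kind requires no model and can be inspected before any estimation, which is the sense in which it helps establish face validity for later results.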

Incorporate scholars’ knowledge

Irrespective of the data source, prior knowledge plays an important role. EF scholars almost always have some initial idea of what they could look for and may have expectations about potential (causal) relationships, but those expectations tend to align more closely with hunches than with literature-driven hypotheses. An EF scholar is therefore not an analysis machine that merely computes covariances among variables in a raw data set. Instead, inclusion of the investigator’s prior knowledge and hunches is desirable (Bradlow et al. 2017; Dekimpe 2020). Prior knowledge will therefore inevitably guide the investigator’s attention to aspects of the data, enable comprehension and interpretation of the data, and allow for elaboration beyond the data (Alba and Hutchinson 1987). Consequently, the EF investigator may harbor hunches about processes and outcomes based on intuition or prior research, may be able to form hypotheses (albeit often two-tailed), may combine intermediate findings with knowledge to pursue additional avenues for exploration, and may use creativity to generate implications for theory and insights of interest to stakeholders.

Maintain scholar neutrality

Because of the bumbling nature of the EF approach, EF scholars should be open-minded and agenda-free. This requires the scholar to escape the human bonds of top-down processing and motivated reasoning. It is therefore crucial to let the data speak freely (Laurent 2013). Scholars will ultimately add their interpretations as they build the higher-order insights that make EF research attractive. Importantly, the discovery process is not a black-box data-mining exercise, but rather is guided by focal variables of interest and priors about where to look.

In that spirit, we feel some caution is advised when applying machine-learning methods to EF research. Machine learning tends to lack transparency and interpretability, especially at the causal level (Ma and Sun 2020). Machine learning cannot replace the EF scholar’s hunch or experience regarding where to look in the data, what relationships are meaningful to study, which additional data should be considered, and whether the results are coherent. So, although machine learning has its merits in discovery and prediction, and despite movement toward interpretable machine-learning methods (Murdoch et al. 2019), machine learning currently complements rather than replaces EF research.

Explore causality

Causality is not a requirement of EF research, but EF scholars recognize its virtues and are urged to go beyond mere associational relationships whenever possible. Even though associational relationships can be useful in providing an initial understanding of a novel phenomenon and motivating subsequent causal research, EF researchers should aim to identify causal effects by addressing endogeneity and selection issues and leveraging experimental variation (through lab or field experiments or quasi-experiments) when possible. Within the data generation approach, lab and field experiments allow for causal inferences. Within data compilation and data acquisition approaches that use observational data, researchers can capitalize on quasi-experimental variation in the data (Goldfarb, Tucker, and Wang 2022). Research investigating the causal effect of brand advertising on social distancing during the pandemic is a good example (Ghosh Dastidar, Sunder, and Shah 2022).

We further note that the EF approach, because of its exploratory nature, is more likely to reveal multicausal explanations. For example, the literature on technology adoption emphasized scientific literacy as the key driver, paying relatively little heed to visceral and values-based causes (Zheng, Bolton, and Alba 2019). Offering multiple causal pathways is a knowledge-expanding outcome that often is a closer reflection of reality than a singular causal pathway.

Determine investigative breadth and depth

The investigator’s hunches will point to initial relationships that can be probed. As the research proceeds, the investigation is likely to broaden by considering additional contexts (e.g., countries, industries, organizations) and deepen by considering moderators, mediators, and additional DVs. For example, the market-pioneering study expanded from 17 categories to 50 categories while also broadening the set of variables considered and outcomes investigated (Golder and Tellis 1993). Such deepening should especially be pursued when a focal relationship is not robust across conditions. Also, even though scholars’ priors (hunches) are likely to guide the initial data analysis and interpretation, it is important to listen with an open mind to the data so that the strength of the data guides posterior inferences.

Run resilience tests

All relationships that are identified should be assessed for resilience, for example, by testing different operationalizations and estimation methods. Control variables to rule out alternative explanations could be included, alternative endogeneity corrections could be considered, and robustness across subsamples and alternative measures could be explored. Failed resilience tests should be welcomed as an opportunity to learn more about the phenomenon by clarifying boundary conditions and moderators.

Assess Closure

As noted in Figure 1, the data exploration stage is iterative within the stage and is complemented by a feedback loop from the “Advance Understanding” stage. However, at some point, the data exploration stage needs to be terminated, which requires the scholar to establish closure criteria. In Table 3, we present these criteria as a set of questions pertaining to the utility of additional data collection, the insightfulness of the findings, the extent to which the findings shift a priori beliefs, and the practical utility of the findings for stakeholders, including economically, managerially, or socially meaningful effect sizes and robustness. Journals will also impose length constraints that further circumscribe the investigation. Finally, closure cannot be disentangled from the final element of EF research, which is to advance understanding.

Stage 3: Advance Understanding

Our advocacy for an EF approach would be on shaky ground without evidence of its unique virtues. In this section, we illustrate how the EF approach can lead to a wide variety of desirable outcomes that advance our understanding of marketing phenomena, including empirical regularities, conceptual and theoretical insights, and advice to stakeholders, in ways that would be difficult to attain in a typical TF investigation.

Uncover Empirical Regularities

EF research can result in empirical regularities (generalizations), which are patterns of regularity that “repeat over different circumstances and that can be described simply by mathematical, graphical, or symbolic methods” (Bass 1995, p. G7), not only in the direction of certain effects but also in the typical effect sizes (Hanssens 2018). Such regularities show the extent to which marketing phenomena are generalizable across contexts. In addition, whereas TF research is often focused on the direction of relationships (positive or negative) in clean (or controlled) cases, EF research often advances understanding by documenting effect sizes across a large number of heterogeneous cases. Theory rarely informs effect sizes, but effect sizes (and their variation) are exceedingly important to many marketing stakeholders. For example, the sales-takeoff study documented an effect size of a 4.2% increase in the probability of a new product’s takeoff for a 1% decrease in price (Golder and Tellis 1997).

If an EF investigation is sufficiently broad, a quasi meta-analysis can be conducted within the investigation, and various potential moderators can be explored (see, e.g., Datta et al. [2022] for a recent application). EF findings will also typically contribute to subsequent meta-analyses and should be reported with such future research in mind.
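One simple way to picture a within-investigation meta-analysis is to pool context-level effect estimates with inverse-variance weights. The Python sketch below uses a standard fixed-effect pooling formula together with Cochran's Q as a rough heterogeneity check. The effect sizes, standard errors, and function name are hypothetical, and the fixed-effect choice is an assumption made for brevity rather than a method prescribed in this article.

```python
import math

def pool_fixed_effect(effects, std_errors):
    """Inverse-variance (fixed-effect) pooling of per-context estimates.

    Returns the pooled effect, its standard error, and Cochran's Q.
    """
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    # Q grows when individual contexts deviate from the pooled effect by
    # more than their sampling error would suggest.
    q_stat = sum(w * (e - pooled) ** 2 for w, e in zip(weights, effects))
    return pooled, pooled_se, q_stat

# Hypothetical estimates of the same effect in four contexts
# (e.g., countries or product categories).
effects = [0.20, 0.35, 0.28, 0.10]
std_errors = [0.05, 0.08, 0.06, 0.10]

pooled, pooled_se, q_stat = pool_fixed_effect(effects, std_errors)
print(f"pooled effect = {pooled:.3f} (SE {pooled_se:.3f}), Q = {q_stat:.2f}")
```

A large Q relative to the number of contexts would suggest that the contexts differ systematically, which is where exploration of potential moderators becomes informative.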

Formulate Conceptual and Theoretical Insights

Ironically, some of the especially important outcomes of EF research align with the conceptual and theoretical penchants of TF research. For example, EF research can spawn new constructs (e.g., sales takeoff). Moreover, EF research can help resolve ongoing theoretical debates. For example, the market-pioneering study (Golder and Tellis 1993) found more support for dynamic theories opposed to a pioneer advantage, relative to static theories supporting a pioneer advantage. EF researchers need not specify underlying processes in an initial investigation, but speculation can and should be offered to motivate and direct subsequent TF efforts. For example, in the sales-takeoff study, researchers did not develop or test a specific theory, but the results did raise doubts about diffusion theory’s ability to explain the sales takeoff (Golder and Tellis 1997). Moreover, this insight was the impetus for subsequent research that showed support for informational cascades theory as an explanation for sales takeoff (Golder and Tellis 2004).

When a wide net is cast across disparate contexts and perspectives, entire frameworks can emerge that organize a domain. For example, a broad consideration of perceived price fairness led to a four-dimensional “transaction space” that integrated the drivers of perceived fairness along a temporal dimension (Bolton, Warlop, and Alba 2003). A broad consideration of technology resistance led to a three-dimensional resistance space that described any given consumer’s resistance in terms of the combined effects of cognitive, affective, and motivational influences (Zheng, Bolton, and Alba 2019). The grounded-theory approach of Gebhardt, Carpenter, and Sherry (2006) led to a four-stage model for creating a market orientation. In their exploration of host motivations for participating in sharing economy platforms, Chung et al. (2022, p. 817) offered a new perspective in discovering that Airbnb hosts are driven not only by monetary motivation but also by intrinsic motivations, such as “to share beauty” and “to meet people.”

In some cases, the research may result in a new theory grounded in the empirical investigation. For example, after observing multimodal pricing patterns across multiple product categories that differed significantly from theoretical predictions, Gangwar, Kumar, and Rao (2014) developed a new price promotion theory that better matched the empirical observations (see also Bradlow et al. 2017). Keep in mind, however, that theory development is not the raison d’être of EF research but rather one of several desirable outcomes.

For EF research to yield generalizable marketing knowledge, abstraction is a crucial element. Abstraction entails going from the empirical specifics to more generalizable ideas, concepts, or relationships. The scholar should aim to entertain the highest level of abstraction enabled by the empirical results. Abstraction can also happen through follow-up research, such as meta-analysis.

Advise Stakeholders

Irrespective of the nature of the discoveries, it is crucial to conclude the natural arc of EF research by returning to the stakeholders and the real-world issue that initiated the investigation. In the best case, the investigation will provide guidance to managers, consumers, policy makers, and educators, and it may even find an audience among the general public. For example, the empirical regularities uncovered across 50 product categories in the market-pioneering study (Golder and Tellis 1993) yielded clear advice for managers, educators, and policy makers, who should no longer believe that market pioneering is important in establishing long-term leadership. As a further example, Rust et al. (2021) show how a brand’s reputation not only can be monitored in real time and longitudinally but also can be actively managed by leveraging the relationships among its underlying brand, value, and relationship drivers.

Guidance for Reporting EF Research

The success of EF research rests not only on proper execution but also on effective communication. EF scholars should be mindful of the expectations of the review team (many of whom may be steeped in the TF tradition, according to our survey) and anticipate the consequences of telling a schema-incongruent story. Toward this end, we offer the following suggestions.

Explain Your EF Approach at the Outset

A first piece of advice is to be clear that the research follows the EF approach and to explain why the approach is appropriate for the research problem. For example, one can point to thin, scattered, or conflicting literature that necessitates an EF approach, a tactic recently adopted in Datta et al. (2022).

Be True to the EF Approach

EF research needs to be reported as EF research, not as TF research, but it can be challenging to communicate a nonlinear process. As a general principle, we believe that an EF manuscript need not explain all the twists and turns but should reflect its exploratory character. That is, authors should have latitude in constructing a narrative that is palatable and appealing to readers, even if the ordering of the studies differs from their chronological order (Bem 2003). However, finding significant relationships via an EF approach and then reporting them as hypotheses in a TF write-up (i.e., HARKing) is not acceptable.

Clearly Explain the Flow of the Article

The absence of a template for reporting EF research can make its communication trickier than that of template-abiding TF research. To overcome this challenge, we recommend paying special attention to communicating the article’s structure and narrative. Tools include providing a flowchart to orient the reader at the start; structuring the article according to the research questions; using informative section, table, and figure headings that explain to the reader what is going on (e.g., Hewett et al. 2016); and providing explanatory bridges between the steps in the process.

Offer Justification for Selected Variables

When proposing particular variables (e.g., different moderating or mediating variables) to study, the scholar needs to offer some justification for those that are selected. EF scholars should not simply include any available variable that is found to have some (correlational or predictive) merit, as this may simply reveal an idiosyncratic finding that does not generalize across different settings. The justification for selected variables need not stem from a single framework or theory, as long as there is some rationale or prior expectation for including them. EF scholars can convince readers that a study’s variables are worth considering by alluding to related literature, industry practice, general logic, or common sense.

Report Fishing Expeditions as Such

Many reviewers rightfully object to incompletely reported “fishing expeditions” that capitalize on chance by testing numerous variables and reporting only the significant catches. EF scholars are advised, in contrast, to document their hunches about where they looked, explain their exact steps, report what did and did not work, test robustness, and report the results fully. A good example of this candid approach is Chen et al. (2020), in which the authors discuss how theory and the data informed their approach.

Avoid Unsupported Generalizations and Unsubstantiated Stakeholder Advice

If the breadth of the empirical investigation has important limitations, the author has the obligation to avoid unsupported generalizations and unsubstantiated stakeholder advice. It is the task of the field to explore generalization and stakeholder advice by examining additional contexts in future studies.

Share Empirical Findings

Another step EF scholars could take to promote knowledge development is to share empirical findings that do not appear in the main article or even in a typical web appendix. To aid future research, this information should be adequately structured, for example, according to the various main effects that were considered, the interaction effects that were explored, potential theories addressed, and so forth. A structured template for reporting these analyses and results at the end of a typical web appendix would aid knowledge development and facilitate future meta-analyses (Lehmann 2020). It is particularly important to document how data are interpreted at the level of constructs, as this makes it easier to accumulate knowledge via meta-analyses examining similar IVs and DVs. Meta-analyses are not only helpful in integrating insights across EF studies and deriving meta-knowledge; they can also help identify remaining knowledge gaps (e.g., critical research questions that have yet to be answered or critical contingencies not yet accounted for; Hulland and Houston 2020).

To make our advice for EF scholars more concrete, Table 4 offers criteria and associated questions to evaluate EF research nearing completion. Although some of these questions also pertain to TF research, the mindset with which they are applied differs systematically, as explained in Table 1. It is unreasonable to expect EF articles to answer all questions in the affirmative; rather, considering these questions may offer additional pathways to increase the value of the work.

Table 4. Questions for Interrogating EF Research.

Guidance for Evaluating EF Research

Our emphasis thus far has been on the merits of EF research and the description of its conduct and reporting. As a practical matter, however, few scholars have a death wish. Even among reviewers who profess sympathy for EF research, understanding of the trade-offs inherent in EF research may be low. If EF research receives a chilly reception in the review process because it fails familiar TF evaluative criteria, scholars will either refrain from pursuing EF research or succumb to the temptation of HARKing.

To help rectify this problem, reviewers and editors should be open-minded and informed when evaluating EF research. Lynch et al. (2012) describe different criteria that are appropriate for contrasting research approaches. From our experiences (on both sides of the review process) with TF-focused reviewers and editors, we offer some additional advice for journal gatekeepers.

Do Not Demand an Overarching Theoretical Framework or a Single Theoretical Lens

Much EF research lacks a theoretical framework because none exists or because the phenomenon is multifaceted and cannot fit into a single framework. Reviewers who make allegations regarding the relevance of existing frameworks should be specific about how those frameworks explain and predict the EF findings.

Do Not Expect Perfection

A key role of reviewers is to make sure that the article is not wrong. However, some reviewers think all coefficients in a model with 20–30 parameters should have “expected” signs and significance that all conform to a specific story. The real world of marketing is far messier, and some of this messiness will be reflected in the estimates. If reviewers expect perfection, scholars will feel pressure to cut corners to provide selected results, thereby contributing to the crises in research ethics and replication described previously. In contrast, reviewers can encourage authors to pay special attention to—rather than discard—systematic outliers and parameter estimates with consistently wrong signs. Exceptions can be insightful, and these instances may lead to new knowledge that would remain concealed if scholars focused only on average effects (see, e.g., Wies, Moorman, and Chandy 2022).

Be Realistic with Robustness Checks

Reviewers should understand that particular robustness checks may be infeasible because additional data can be difficult or impossible to collect. For example, it may be unrealistic to ask the authors of a data-acquisition EF study to find a second industry partner that is willing to share proprietary data; however, asking for robustness checks within the realm of the available data is not unreasonable. Also, one should be open-minded if a robustness check does not confirm all findings. In TF research a failed robustness check is seen as a dent in the proposed theory, but in the EF mindset it represents a learning opportunity (Table 1). A promising development is the “specification curve analysis” of Simonsohn, Simmons, and Nelson (2020). This approach attempts to factorially enumerate all possible plausible specifications of one’s model—sometimes in the thousands—and to consider the percentage of plausible specifications for which the key result holds (see Todri, Adamopoulos, and Andrews [2022, their Figure 3], for a prime example of this approach).
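To make the logic of enumerating specifications concrete, the following is a minimal Python sketch of the idea, not the full procedure of Simonsohn, Simmons, and Nelson (2020). It crosses a few hypothetical analytic choices (outcome measure, subsample, and transformation), estimates a simple least-squares slope for each combination, and reports the share of specifications in which the slope is positive. The data, variable names, and the simplification of "the key result holds" to "the slope has the expected sign" are all assumptions made for illustration; a fuller analysis would also consider statistical significance.

```python
import math
from itertools import product

def ols_slope(xs, ys):
    """Slope of a simple least-squares regression of ys on xs."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx

# Hypothetical observations: one predictor, two candidate outcome measures.
rows = [
    {"x": 1, "segment": "a", "sales": 2.0, "share": 0.10},
    {"x": 2, "segment": "b", "sales": 2.5, "share": 0.30},
    {"x": 3, "segment": "a", "sales": 3.1, "share": 0.12},
    {"x": 4, "segment": "b", "sales": 3.4, "share": 0.28},
    {"x": 5, "segment": "a", "sales": 4.2, "share": 0.15},
    {"x": 6, "segment": "b", "sales": 4.4, "share": 0.27},
    {"x": 7, "segment": "a", "sales": 5.0, "share": 0.18},
    {"x": 8, "segment": "b", "sales": 5.6, "share": 0.25},
]

# Each dictionary or list is one dimension of plausible analytic choices.
outcomes = ["sales", "share"]
subsamples = {
    "all": lambda r: True,
    "segment_a": lambda r: r["segment"] == "a",
    "segment_b": lambda r: r["segment"] == "b",
}
transforms = {"raw": lambda v: v, "log": math.log}

def specification_curve(rows):
    """Estimate the slope under every combination of analytic choices."""
    results = []
    for outcome, (sub_name, keep), (tr_name, transform) in product(
        outcomes, subsamples.items(), transforms.items()
    ):
        subset = [r for r in rows if keep(r)]
        xs = [r["x"] for r in subset]
        ys = [transform(r[outcome]) for r in subset]
        results.append(((outcome, sub_name, tr_name), ols_slope(xs, ys)))
    # Sorting by estimate gives the "curve" that is usually plotted.
    return sorted(results, key=lambda item: item[1])

curve = specification_curve(rows)
share_positive = sum(1 for _, slope in curve if slope > 0) / len(curve)

for spec, slope in curve:
    print(spec, round(slope, 4))
print(f"share of specifications with a positive slope: {share_positive:.0%}")
```

In the EF mindset, the specifications in which the result fails (here, those restricted to one hypothetical segment) are not merely a dent in the finding but a pointer toward possible boundary conditions.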

Do Not Demand Traditional Theoretical Implications

Many TF articles use the discussion section to nest their work in existing theory. Although EF research often does not offer these traditional theoretical implications (e.g., because there is no literature into which they can be nested), EF research often offers new ideas, new concepts, and new relationships that represent less traditional (but equally valuable) forms of theoretical progress. In addition, EF research may pursue other valuable outcomes, including empirical regularities and stakeholder advice (see Figure 1 and Table 3).

Do Not Ask That EF Research Be Reported as TF Research

A cardinal sin is to demand that an EF manuscript be styled as a TF manuscript. Reviewer discomfort with nonlinearity and messiness is a poor rationale for HARKing.

Editors Must Support the EF Approach

Ultimately, editors are the linchpins. They set journal policy and oversee associate editors and reviewers. Unfortunately, overtures to pluralism in policy that editors offer when taking office often fail to find traction in practice. Editors need to promote EF research more vigorously and overrule misguided reviewers (and associate editors) who exhibit unjustified hostility toward it. In the end, editors are best positioned to address a basic question: How are the interests of marketing and the journal served by suppressing relevant and robust EF findings? Or, more positively, how much can the field and the journal benefit from publishing these findings?

General Discussion

Our overarching line of reasoning is composed of three arguments: (1) a large part of academic marketing research is dominated by the TF paradigm, (2) a well-conducted EF approach may serve as an alternative route for relevant knowledge generation, and (3) EF research lacks a how-to guide. In this article, we focus on the third of these arguments by offering advice for conducting, reporting, and evaluating EF research. Our advocacy does not imply that one needs to choose sides. We have engaged in both TF and EF research as the situation demanded. In the end, all science is a quest for truth, and scholars should adopt the approach that provides the best route in a particular context. In actuality, most research is situated on the continuum between pure EF and pure TF.

In the preceding sections, we have offered suggestions to authors, reviewers, and editors. We conclude by discussing implications for PhD training and for the broader science ecosystem.

Implications for PhD Training

As our survey indicates, TF advocacy can be found in PhD education. We urge educators to grant EF and TF research equal status, but we do so with some trepidation. If young scholars commit to EF research before journals are prepared to give it a fair hearing, these scholars are doomed. Thus, our advice to young scholars sympathetic to an EF mindset is contingent on reciprocity from editors and reviewers.

To facilitate adoption of the EF approach in PhD training, it is important that students be encouraged to observe the real world. For example, PhD students should engage with consumers, managers, and other marketing stakeholders to learn of their everyday marketing problems. In addition, whereas many current data-analysis courses follow a deductive paradigm (theory, hypotheses, data collection, testing), these courses could be designed to include, as well, an EF mindset. For example, students could be offered a rich new data set that can be explored to uncover novel marketing insights.

In addition, course content should explicitly include EF articles that have impacted the field, and advisors should communicate the virtues of an EF approach. During the research process itself, scholars can use the guidance in Table 4 as one way to check whether the research is on track for a strong EF contribution.

Implications for the Science Ecosystem

A saddening development across the entire realm of science is a crisis of confidence driven by a reporting of inflated effects (Ioannidis 2005). Whereas outright fraud is rare, selective reporting of analyses and findings has proven problematic. The current debate centers on the benefits and costs of study preregistration. A stimulating exchange regarding the merits of preregistration has recently appeared (Pham and Oh 2021a, b; Simmons, Nelson, and Simonsohn 2021a, b), a portion of which touches on the EF approach.

First, it has been argued that preregistration encourages pursuit of expectation-confirming studies. To our mind, properly conducted EF research is inherently agenda-free. EF research is not guided by hypotheses, and scholars can be agnostic with regard to particular outcomes.

Second, whereas preregistration may enhance replicability, advocates on both sides acknowledge that preregistration may do little to enhance generalizability or robustness. To our mind, properly conducted EF research is expressly concerned with generalizability and robustness. EF scholars grow confident when broad and deep investigation leads to convergence and/or a within-investigation meta-analysis. In addition, failures of generalizability and robustness are taken as potential opportunities. In TF research, expectation-confirming results have become favored over null results, creating incentives for confirmatory bias and p-hacking; in EF research, null results are informative, especially when they reveal instances in which an effect no longer obtains.

Third, there is disagreement over whether preregistration impedes exploration, relevance, and insight. We cannot settle this debate. Insofar as exploration is thwarted, preregistration runs counter to all that we advocate. We do agree that good science should be measured in terms of its trustworthiness as well as its contributions to knowledge, practice, and human welfare.

Fourth, a more technical matter is the question of the extent to which different types of data lend themselves to preregistration. Whereas many behavioral EF studies are amenable to preregistration, compiled or acquired data are less so (Pham and Oh 2021b; Simmons, Nelson, and Simonsohn 2021a). In terms of the spirit of open science, concerns over confidentiality and nondisclosure agreements demanded by data providers are nontrivial; yet, procedures have been put in place by several journals to balance the interests of various stakeholders (e.g., Desai 2013).

Finally, in the medical domain and other fields outside marketing, researchers have latitude to publish unexpected findings (e.g., a subset of the sample that does not react to the treatment). We believe that marketing journals should be open to post hoc results, which arise after a scholar realizes that additional tests could be conducted to explicate a theory or unanticipated results more fully. These post hoc results may provide impetus to others to investigate such findings further, particularly with an eye toward replicability.

Conclusion

Over half a century ago, academic research across several business disciplines came under criticism for falling short of scientific standards (see, e.g., Neslin and Winer 2014). One response from academia was a paradigm shift away from description and toward theory. This shift was entirely appropriate and broadly successful. The question now is whether the pendulum has moved too far, such that scientific legitimacy is viewed so narrowly that it suppresses real-world relevance and induces academic marketing to serve no constituency other than itself (Stremersch, Winer, and Camacho 2021). It may be time to entertain another paradigm shift in which theory is less revered and empirical evidence is more so. The EF path, when ambitiously and rigorously pursued, offers one way to address demands for relevance, novelty, replicability, and generalizability. Success requires changes in the mindsets of authors, a more even balance between TF research and EF research in PhD education, and a journal review process that understands and accepts the inherent trade-offs in pursuing EF research. We hope our article offers legitimacy for EF research and provides concrete guidance for scholars to produce high-quality EF research and for reviewers to evaluate it.

Supplemental Material

Supplemental material, sj-pdf-1-jmx-10.1177_00222429221129200 for Learning from Data: An Empirics-First Approach to Relevant Knowledge Generation by Peter N. Golder, Marnik G. Dekimpe, Jake T. An, Harald J. van Heerde, Darren S.U. Kim and Joseph W. Alba in Journal of Marketing

Appendix

Survey responses discussed in the “Survey on EF and TF Practices and Perspectives” section of this article are based on specific parts of Questions 2, 3, 4, 5, and 6 (see the Web Appendix for the complete set of survey questions and responses). Here, we present the response scales for the survey questions and results discussed in this article.

Question 2 asked respondents to indicate the sequence of seven research steps (review academic literature; develop theory and/or theoretical rationale (hypotheses); propose/identify research questions; design study; collect/acquire/gain access to data; analyze data; develop implications) under four different perspectives: (1) the order in which you typically conduct a research project, (2) the order in which you typically report a research project, (3) the order in which you suspect others typically conduct research projects in your research domain, and (4) the order you feel is expected by reviewers.

Questions 3 and 4 used a response scale with five levels: “Strongly disagree,” “Disagree,” “Neither agree nor disagree,” “Agree,” and “Strongly agree.” Percentages are based on the sum of “Strongly agree” and “Agree” responses or the sum of “Strongly agree,” “Agree,” and “Neither agree nor disagree” responses. Statements for (dis)agreement were (1) “Research should be based on a strong theoretical rationale,” (2) “Research that starts with analyzing data is unscientific,” and (3) “Journals have strong expectations on the structure of a paper.”

Questions 5 and 6 used a response scale with five levels: “Strongly agree (TF),” “Somewhat agree (TF),” “Neutral,” “Somewhat agree (EF),” and “Strongly agree (EF).” Percentages are based on the sum of “Strongly agree” and “Somewhat agree” responses for EF research versus the sum of “Strongly agree” and “Somewhat agree” responses for TF research; the remaining responses were neutral between EF and TF. Statements for agreement were (1) “[TF/EF] research is more likely to lead to real-world insights,” (2) “[TF/EF] research is more appropriate for investigating new phenomena such as AI, drones, the pandemic, etc.,” (3) “[TF/EF] research is more appropriate for serving our field’s broader constituencies (e.g., managers, consumers, public policy officials),” (4) “In my Ph.D. research training there was more emphasis on [TF/EF],” (5) “The field expects papers to follow the [TF/EF] approach,” and (6) “It is easier to go through the journal review process with a paper following the [TF/EF] approach.”
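The percentage calculations described for Questions 3 through 6 can be illustrated with a minimal sketch. The response lists below are invented purely for illustration and are not the survey data; the helper name `share` is likewise hypothetical and is not taken from the authors' analysis.

```python
from collections import Counter


def share(responses, levels):
    """Return the percentage of responses that fall in any of the given scale levels."""
    if not responses:
        raise ValueError("responses must not be empty")
    counts = Counter(responses)
    return 100.0 * sum(counts[level] for level in levels) / len(responses)


# Hypothetical answers to one Question 3/4 statement (illustration only).
q3_responses = [
    "Strongly agree",
    "Agree",
    "Agree",
    "Neither agree nor disagree",
    "Disagree",
]
# Sum of "Strongly agree" and "Agree".
agree_pct = share(q3_responses, ["Strongly agree", "Agree"])
# Sum of "Strongly agree," "Agree," and "Neither agree nor disagree".
agree_or_neutral_pct = share(
    q3_responses, ["Strongly agree", "Agree", "Neither agree nor disagree"]
)

# Hypothetical answers to one Question 5/6 statement (illustration only).
q5_responses = [
    "Strongly agree (EF)",
    "Somewhat agree (EF)",
    "Neutral",
    "Somewhat agree (TF)",
    "Strongly agree (EF)",
]
ef_pct = share(q5_responses, ["Strongly agree (EF)", "Somewhat agree (EF)"])
tf_pct = share(q5_responses, ["Strongly agree (TF)", "Somewhat agree (TF)"])
# The remaining responses are neutral between EF and TF.
neutral_pct = 100.0 - ef_pct - tf_pct

print(agree_pct, agree_or_neutral_pct, ef_pct, tf_pct, neutral_pct)
```

Under these made-up inputs, the EF and TF shares are computed separately from the two ends of the same five-level scale, which is why the neutral share is simply the remainder.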

Acknowledgments

The authors thank Rong Guo, principal research data engineer at the Tuck School of Business at Dartmouth College, for research support with survey responses, and especially the JM review team for their extremely constructive feedback and guidance. The authors also thank Donald R. Lehmann, John G. Lynch Jr., Oded Netzer, Roland T. Rust, John F. Sherry Jr., and Jie Zhang for providing examples of EF research.

Associate Editor

Christine Moorman

Author Note

The order of authorship is arbitrary.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.
