Abstract

Chatbots have become common in digital customer service contexts across many industries. While many companies choose to humanize their customer service chatbots (e.g., giving them names and avatars), little is known about how anthropomorphism influences customer responses to chatbots in service settings. Across five studies, including an analysis of a large real-world data set from an international telecommunications company and four experiments, the authors find that when customers enter a chatbot-led service interaction in an angry emotional state, chatbot anthropomorphism has a negative effect on customer satisfaction, overall firm evaluation, and subsequent purchase intentions. However, this is not the case for customers in nonangry emotional states. The authors uncover the underlying mechanism driving this negative effect (expectancy violations caused by inflated pre-encounter expectations of chatbot efficacy) and offer practical implications for managers. These findings suggest that it is important to both carefully design chatbots and consider the emotional context in which they are used, particularly in customer service interactions that involve resolving problems or handling complaints.

Keywords

The use of artificial intelligence (AI) in marketing is on the rise, as managers experiment with the use of AI-driven tools to augment customer experiences. One relatively early use of AI in marketing has been the deployment of digital conversational agents, commonly called chatbots. Chatbots “converse” with customers, through either voice or text, to address a variety of customer needs. Chatbots are increasingly replacing human service agents on websites, social media, and messaging services. In fact, the market for chatbots and related technologies is forecasted to exceed $1.34 billion by 2024 (Wiggers 2018).

While some industry commentators suggest that chatbots will improve customer service while simultaneously reducing costs (De 2018), others believe they will undermine customer service and negatively impact firms (Kaneshige and Hong 2018). Thus, while customer service chatbots have the potential to deliver greater efficiency for firms, whether—and how—to best design and deploy chatbots remains an open question. The current research begins to address this issue by exploring conditions under which customer service chatbots negatively impact key marketing outcomes. While many factors may influence customers’ interactions with chatbots, we focus on the interplay between two common features of the customer service chatbot experience.

The first feature relates to the design of the chatbot itself: chatbot anthropomorphism. This is the extent to which the chatbot is endowed with humanlike qualities such as a name or avatar. Currently, the prevailing logic in practice is to make chatbots appear more humanlike (Brackeen 2017) and for them to mimic the nature of human-to-human conversations (Luff, Frohlich, and Gilbert 2014). However, anthropomorphic design in other contexts (e.g., branding, product design) does not always produce beneficial outcomes (e.g., Kim, Chen, and Zhang 2016; Kwak, Puzakova, and Rocereto 2015). Accordingly, we examine circumstances under which anthropomorphism of customer service chatbots may be harmful for firms.

The second feature explored in this research is common in customer service interactions, irrespective of the modality: customer anger. Anger is one of the most prevalent specific emotions occurring in customer service contexts; estimates suggest that as many as 20% of call center interactions involve hostile, angry, complaining customers (Grandey, Dickter, and Sin 2004). Furthermore, the prevalence of anger increased during the COVID-19 pandemic (Shanahan et al. 2020; Smith, Duffy, and Moxham-Hall 2020), so a higher proportion of interactions are likely to be with angry customers. Thus, considering how customer anger interacts with chatbot anthropomorphism is practically relevant. It is also theoretically relevant, because anger evokes specific responses (e.g., aggression, holding others accountable) that may affect the efficacy of more humanlike technology.

Across five studies, including the analysis of a large real-world data set from an international telecommunications company and four experiments, we find that when customers in an angry emotional state encounter a chatbot-led service interaction, chatbot anthropomorphism has a negative effect on customers’ satisfaction with the service encounter, their overall evaluation of the firm, and their subsequent purchase intentions. However, this is not the case for customers in nonangry emotional states. The negative effect is driven by an expectancy violation; specifically, anthropomorphism inflates preinteraction expectations of chatbot efficacy, and those expectations are disconfirmed. Our findings suggest that it is important to both carefully design chatbots and consider the emotional context in which they are used, particularly in common types of customer service interactions that involve handling problems or complaints. This research contributes to the nascent literature on chatbots in customer service and has managerial implications both for how chatbots should be designed and for context-related deployment considerations.

Conceptual Framework

Anthropomorphism in Marketing

Deliberate marketing efforts have made anthropomorphism, or the attribution of humanlike properties, characteristics, or mental states to nonhuman agents and objects (Epley, Waytz, and Cacioppo 2007; Waytz, Epley, and Cacioppo 2010), especially pervasive in the modern marketplace. Product designers and brand managers often encourage customers to view their products and brands as humanlike, through a product's visual features (e.g., face-like car grilles; Landwehr, McGill, and Hermann 2011) or brand mascots (e.g., the Pillsbury Doughboy; Wan and Aggarwal 2015). In digital settings, advances in machine learning and AI have ushered in a new wave of highly anthropomorphic devices, from humanlike self-driving cars (Waytz, Heafner, and Epley 2014) to voice-activated virtual assistants with human names and speech patterns (e.g., Amazon's Alexa; Hoy 2018).

Extant research generally suggests that inducing anthropomorphic thought is linked to improved outcomes. “Humanized” products and brands are more likely to achieve long-term business success because they encourage a more personal consumer–brand relationship (Aggarwal and McGill 2007; Wan and Aggarwal 2015). Anthropomorphic product features can make products more valuable (Hart, Jones, and Royne 2013) and can boost overall product evaluations in categories including automobiles, mobile phones, and beverages (Aggarwal and McGill 2007; Labroo, Dhar, and Schwarz 2008; Landwehr, McGill, and Hermann 2011). Wan, Chen, and Jin (2017) found that anthropomorphized products increased consumers’ preference for, and subsequent choice of, those products.

Anthropomorphism of technology has also been shown to improve marketing outcomes. Humanlike interfaces can increase customer trust in technology by increasing perceived competence (Bickmore and Picard 2005; Waytz, Heafner, and Epley 2014) and make that trust more resistant to breakdowns (De Visser et al. 2016). Avatars (anthropomorphic virtual characters) can make online shopping experiences more enjoyable, and both avatars and anthropomorphic chatbots can increase purchase intentions (Han 2021; Holzwarth, Janiszewski, and Neumann 2006; Yen and Chiang 2021). Anthropomorphic digital messengers can be more persuasive than human spokespeople in some contexts (Touré-Tillery and McGill 2015) and can increase advertising effectiveness (Choi, Miracle, and Biocca 2001). Users can even come to regard anthropomorphized digital devices as friends (Schweitzer et al. 2019) and resist replacing them because doing so would feel disloyal (Chandler and Schwarz 2010), which leads to greater brand loyalty.

Although most evidence points to beneficial effects of anthropomorphism, there are drawbacks. For example, anthropomorphic helpers in video games reduce enjoyment of the gaming experience by undermining players’ sense of autonomy (Kim, Chen, and Zhang 2016). Other research shows that for agency-oriented customers, brand anthropomorphism exaggerates the perceived unfairness of price increases (Kwak, Puzakova, and Rocereto 2015) and hurts brand performance amid negative publicity (Puzakova, Kwak, and Rocereto 2013). Low-power customers perceive risk-bearing entities (e.g., slot machines) as riskier when the entities are anthropomorphized (Kim and McGill 2011). Further, research suggests that when customers are in crowded environments and want to socially withdraw, brand anthropomorphism harms customer responses (Puzakova and Kwak 2017). Thus, it would be overly simplistic to assume that anthropomorphism positively impacts customers’ encounters with brands, products, or companies. The consequences are more nuanced, with outcomes depending on both customer characteristics and the context (Valenzuela and Hadi 2017).

While customers’ downstream responses to anthropomorphism are mixed, one consistent consequence of anthropomorphism is that customers attribute more agency to anthropomorphic entities (Epley, Waytz, and Cacioppo 2007). “Agency” refers to the capacity to plan and act (Gray, Gray, and Wegner 2007). Because anthropomorphism leads customers to perceive a mental state in another entity, it increases individuals’ perception that the entity is capable of acting in a deliberate manner (Waytz et al. 2010). This increases expectations that the agent has abilities such as emotion recognition, planning, and communication (Gray, Gray, and Wegner 2007). These heightened expectations lead individuals to ascribe moral responsibilities to anthropomorphic entities (Waytz et al. 2010), to believe that the entity should be held accountable for its actions (De Visser et al. 2016), and to think the entity deserves punishment in the case of wrongdoing (Gray, Gray, and Wegner 2007).

Of course, anthropomorphic entities do not always perform in a manner consistent with the high levels of agency customers expect. In fact, some researchers suggest that one reason behind the “uncanny valley” (i.e., the tendency for a robot to elicit negative emotional reactions when it closely resembles a human; Mori 1970) is that robots do not perform in the agentic manner that their human resemblance would imply (Waytz et al. 2010). In other words, the robots’ behavior violates the expectations elicited by their highly anthropomorphic facade. Such violations arguably apply to current chatbots, given that their performance is not expected to reach believable levels of human intelligence before 2029 (Shridhar 2017). Thus, expectancy violations are likely to play an important role in chatbot-driven customer service settings.

Expectancy Violations and Customer Anger

Before using a product or service, customers form expectations regarding how they anticipate the target product, brand, or company will perform. Postusage, customers evaluate the target's performance and compare that to their preusage expectations (Cadotte, Woodruff, and Jenkins 1987). When performance fails to meet expectations, the negative disconfirmation is known as an expectancy violation (Sundar and Noseworthy 2016), which arises because (1) preusage expectations are high or (2) postusage performance is poor (Cadotte, Woodruff, and Jenkins 1987). Expectancy violations not only harm customer satisfaction (Oliver 1980; Oliver and Swan 1989) but also negatively impact other consequential downstream outcomes, including attitude toward the company (Cadotte, Woodruff, and Jenkins 1987) and purchase intentions (Cardello and Sawyer 1992; Oliver 1980). Importantly, customer responses to expectancy violations are highly influenced by their emotional states, particularly anger (Ask and Landström 2010).

Two theories help explain why anger increases customers’ negative responses to expectancy violations. The functionalist theory of emotion suggests that anger is an activating, high-intensity emotion with an evolutionary purpose: it promotes rapid decision making and reliance on heuristics so that people can react quickly to immediate threats (Bodenhausen, Sheppard, and Kramer 1994). Anger is often used as a strategy to respond to obstacles (Lerner and Keltner 2000; Martin, Watson, and Wan 2000) or retaliate against an offending party (Cosmides and Tooby 2000) because of its tendency to increase action and aggression, compared with other emotions that are deactivating (e.g., sadness; Cunningham 1988; Lench, Tibbett, and Bench 2016) or nonaggressive (Lerner and Keltner 2000).

This retaliation is also predicted by appraisal theorists, who suggest that even in situations of incidental anger, anger increases the tendency to hold others responsible for negative outcomes (Keltner, Ellsworth, and Edwards 1993) and to respond punitively toward them (Goldberg, Lerner, and Tetlock 1999; Lerner, Goldberg, and Tetlock 1998; Lerner and Keltner 2000). This is markedly distinct from emotions such as frustration or regret, which are more likely to manifest when people hold the situation or themselves responsible for negative outcomes, respectively (Gelbrich 2010; Roseman 1984). Thus, angry (vs. nonangry) customers are more likely to blame others and retaliate when another's performance falls short of expectations. This is particularly the case if their goals are obstructed (Martin, Watson, and Wan 2000), as angry customers especially feel the need to achieve a desirable outcome (Roseman 1984).

Linking Chatbot Anthropomorphism, Expectancy Violation, and Customer Anger

Drawing from the extant theories and research, we hypothesize that anthropomorphism heightens customers' preperformance expectations about a chatbot's level of agency and performance capabilities, which can result in expectancy violations. Further, angry customers are more likely to suffer from expectancy violations due to their need to overcome obstacles and to blame and hold others accountable, and, owing to their action orientation, they are more likely to respond punitively to such violations (e.g., by giving lower satisfaction ratings or poor reviews, or by withholding future business from the offending party). This logic would suggest that angry customers might be better served by nonanthropomorphic agents. Recent research supports this notion by demonstrating that in unpleasant service situations, reducing human contact (e.g., through technological barriers; Bitner 2001) can help attenuate customer dissatisfaction and limit negative service evaluations (Giebelhausen et al. 2014).

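Building on these arguments, we predict that individuals who enter a chatbot service interaction in an angry emotional state will respond negatively to chatbot anthropomorphism, whereas individuals in nonangry emotional states will not. While the most immediate negative reaction is likely to manifest in reduced customer satisfaction ratings of the service encounter with the chatbot, this can also carry over to harm more general firm evaluations and result in lower future purchase intentions, which are known consequences of dissatisfaction (Anderson and Sullivan 1993). Formally, we hypothesize the following:

H1: For customers who enter a chatbot-led service interaction in an angry emotional state, chatbot anthropomorphism has a negative effect on (a) customer satisfaction with the service encounter, (b) overall firm evaluation, and (c) purchase intentions.

H2: The negative effect of chatbot anthropomorphism for angry customers is driven by expectancy violations resulting from inflated preinteraction expectations of chatbot efficacy.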

Our proposed conceptual framework is illustrated in Figure 1. Across five studies, using a combination of real-world and experimental data, we test the different parts of our theorizing to collectively support our proposed framework. In Study 1, we analyze a large data set from an international mobile telecommunications company that captures customers’ interactions with a customer service chatbot. We use natural language processing (NLP) on chat transcripts and find that for customers exhibiting an angry emotional state during a chatbot-led service encounter, anthropomorphic treatment of the bot has a negative effect on their satisfaction with the service encounter (consistent with H1a). In Study 2, the first of four experiments, we manipulate chatbot anthropomorphism and customer anger and find that angry customers display lower customer satisfaction when the chatbot is anthropomorphic versus when it is not (consistent with Study 1 and H1a). Study 3 shows that the negative effect extends to company evaluations (H1b) but not when the chatbot effectively resolves the problem. Study 4 shows that the negative effect of chatbot anthropomorphism for angry customers extends further to reduce customers’ purchase intentions (H1c) and provides evidence that this effect is driven by inflated preinteraction expectations of chatbot efficacy (H2). Finally, Study 5 manipulates preinteraction expectations and demonstrates that the negative effect dissipates when people have lower expectations of anthropomorphic chatbots (further supporting H2).

Figure 1. Illustration of proposed model.

Study 1

Study 1 analyzes a real-world data set from an international mobile telecommunications company capturing customers’ interactions with a customer service chatbot. The chatbot was available via the company's website and mobile app and was a text-only bot driven by machine learning (specifically, advanced NLP). The chatbot was highly anthropomorphic: its avatar was a cartoon illustration of a young woman with long hair, makeup, and modern casual clothing. Her name appeared in the chat, and customers could visit a profile webpage with her bio describing her personality and listing some of her likes and dislikes.

The main purpose of the study was to examine how treating a chatbot as more or less human (i.e., higher or lower anthropomorphic treatment) impacted customer satisfaction with the encounter and, critically, whether this effect was moderated by customer anger (i.e., H1a). Because the chatbot's anthropomorphic design was fixed and could not be varied experimentally, we focused on customers’ anthropomorphic treatment of the chatbot. We assume that when a customer treats a chatbot in a more humanlike way, this reflects more anthropomorphic thought arising from perceiving the chatbot as more humanlike. Specifically, we operationalized anthropomorphic treatment as the extent to which customers used the chatbot's name in their text-based conversation. Because a name makes an object more anthropomorphic (Waytz, Epley, and Cacioppo 2010), use of the chatbot's name indicates treating it as more human and serves as a reasonable proxy for anthropomorphic treatment.

Data and Measures

Data were provided by a major international mobile telecommunications company. The data set covers 1,645,098 lines of customer text entries from 461,689 unique customer chatbot sessions that took place between September 2016 and August 2017 in one European country served by this company. At the end of each session, customers were given the option to rate their satisfaction with the chatbot encounter from one to five stars. Approximately 7.5% of sessions were rated (34,639 out of 461,689). In addition, for each line of customer text entered, there were metadata from the underlying chatbot NLP system that indicated the system's confidence in it having correctly “understood” each line of customer input, which was expressed as a percentage and termed the “bot recognition rate.” We used the 1–5 satisfaction rating as the dependent variable. The distribution of this variable is shown in Figure 2, and the mean (SD) satisfaction rating was 2.16 (.79). We controlled for quality of the chatbot experience using the bot recognition rate, drawing on the assumption that for a given chatbot session, a higher average and lower variance in recognition rate indicated that the chatbot consistently understood more of a customer's inputs, which likely meant that the customer had an overall better communication experience.

Figure 2. Distribution of user satisfaction ratings following interaction with anthropomorphic service chatbot.

We processed chat transcript data (i.e., unstructured text) using the dictionary-based Linguistic Inquiry and Word Count (LIWC) package (Pennebaker and Francis 1996) to classify each consumer text entry with respect to anger and to build our measure of the extent to which each customer treated the chatbot anthropomorphically.

Anger

In line with our theorizing, anger was the key emotion in customers’ inputs to the chatbot. Our measure of anger was the corresponding LIWC item (“anger”), which indicates the proportion of words in the input that are classified as being associated with anger. To arrive at the session-level measure, we averaged the LIWC anger value of each customer input within a given session. Note that this approach implicitly assumes that anger is exogenous, because it ignores the initial emotional state of the customer and the dynamics of the consumer–bot exchange. We subsequently examine the robustness of our results when relaxing this assumption.
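A minimal sketch of this session-level aggregation follows. It assumes the LIWC anger score for each customer input has already been computed (LIWC scoring itself is not reproduced here); the function name `session_anger` and the (session_id, anger_score) input format are hypothetical illustrations, not the authors' pipeline.

```python
from collections import defaultdict
from statistics import mean


def session_anger(entries):
    """Average line-level LIWC anger scores within each chat session.

    `entries` is an iterable of (session_id, anger_score) pairs, one per
    customer input; anger_score is the LIWC "anger" value for that input.
    Returns {session_id: mean anger score across that session's inputs}.
    """
    by_session = defaultdict(list)
    for session_id, anger_score in entries:
        by_session[session_id].append(anger_score)
    return {sid: mean(scores) for sid, scores in by_session.items()}


# Hypothetical line-level scores for two sessions
lines = [("s1", 0.0), ("s1", 12.5), ("s2", 0.0), ("s1", 0.0), ("s2", 5.0)]
print(session_anger(lines))
```

Averaging gives each input equal weight regardless of its length, which mirrors the description above; a word-weighted average would be a different (unreported) design choice.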

Anthropomorphic treatment

The chatbot was anthropomorphic because it had been endowed with extensive humanlike features (e.g., name, avatar, likes/dislikes). As we had no control over this, we instead aimed to measure anthropomorphic treatment, or the extent to which customers treated the chatbot in a humanlike manner.

Anthropomorphic treatment could be measured in several ways. We used a simple measure based on whether, and how often, the customer used the chatbot's name, assuming that a customer who used the name was treating the chatbot in a more humanlike manner than one who did not. Thus, our measure of anthropomorphic treatment was the total number of times a customer used the chatbot's name in their chatbot session. Repeated use of the chatbot's name may also be an implicit acknowledgment of the chatbot's agency, which is another key indicator of humanlike treatment. Examples are customer inputs such as “Hello [Bot Name], my name is [Customer Name], can you please help me with my bill?” and finishing a conversation with “Thank you [Bot Name].” The mean (SD) of this count was .032 (.178), ranging from zero to six uses of the bot's name per session.
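The name-count measure could be computed along the following lines. This is a sketch under stated assumptions: the helper `name_mentions` and the bot name "Ava" are hypothetical (the real bot's name is not reported here), and whole-word, case-insensitive matching is our assumption rather than a documented rule of the study.

```python
import re


def name_mentions(session_inputs, bot_name):
    """Count how often a customer uses the bot's name across one session.

    Matches whole words only and ignores case, so "ava" counts as a
    mention of the hypothetical name "Ava" but "avatar" does not.
    """
    pattern = re.compile(r"\b" + re.escape(bot_name) + r"\b", re.IGNORECASE)
    return sum(len(pattern.findall(text)) for text in session_inputs)


session = [
    "Hello Ava, my name is Sam, can you please help me with my bill?",
    "My avatar picture will not upload",
    "Thank you ava!",
]
print(name_mentions(session, "Ava"))  # 2
```

Word-boundary matching avoids counting the name when it appears inside longer words; misspellings of the name would be missed, which would make this a conservative count.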

Bot recognition rate

As noted previously, the chatbot's NLP system produced a confidence value, expressed as a percentage, of the likelihood that it correctly understood customer text input. The average and standard deviation of these values within a user session provide control variables for the quality and consistency of that customer experience. The mean (SD) of this value was 73.09 (23.6).

Number of interactions

As a control, we captured the number of times the customer interacted with the chatbot in each session (i.e., the number of customer inputs per session). The mean (SD) was 3.56 (4.21), with a range of 1 to 491.
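The recognition-rate controls and the interaction count could be derived per session as in the following sketch. It assumes one (session_id, recognition_pct) record per customer input; the function name is hypothetical. The population standard deviation is used so that single-input sessions (the minimum here is one input) get an SD of zero rather than an error; squaring it gives the within-session variance.

```python
from collections import defaultdict
from statistics import mean, pstdev


def session_controls(entries):
    """Per-session recognition-rate mean and SD, plus number of customer inputs.

    `entries` holds (session_id, recognition_pct) pairs, one per customer input,
    where recognition_pct is the NLP system's confidence (0-100) for that input.
    """
    by_session = defaultdict(list)
    for session_id, recognition_pct in entries:
        by_session[session_id].append(recognition_pct)
    return {
        sid: {
            "rec_mean": mean(recs),
            "rec_sd": pstdev(recs),  # 0.0 when the session has a single input
            "n_inputs": len(recs),
        }
        for sid, recs in by_session.items()
    }


print(session_controls([("s1", 80), ("s1", 60), ("s2", 95)]))
```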

Chatbot interaction type

Finally, we controlled for the type of interaction the customer had with the service chatbot. The chatbot itself assigned each incoming request to one of several broad types of service encounter, which were retained as metadata: General Dialog, Questions and Answers, Providing Links, Frequently Asked Questions, and Feedback. Examples of individual dialogs include “Invoices,” “SIM Card Activation,” and “PIN Recovery.”

Analysis

Our goal was to estimate the extent to which anthropomorphic treatment, anger, and their interaction affected customer satisfaction. Because satisfaction was measured on a 1–5 scale (with 5 being the highest level of satisfaction), with much of the distribution's mass at the scale midpoint and both endpoints, we treated it as an ordinal variable and analyzed it with an ordinal regression model, thereby allowing the distances between scale points to differ. Critically, we also addressed econometrically the clear potential for selection bias, because only 7.5% of all customer chat sessions in our data set included a satisfaction rating. Thus, our analysis is based on the 34,639 chat sessions for which we had a satisfaction measure, but we make use of all 461,689 chat sessions in our treatment of endogenous sample selection.

To account for the ordinal nature of our data and the sample selection, we used an extended ordinal probit model, which estimates the probit selection and the ordinal satisfaction ratings equations simultaneously and with correlated errors (Greene and Hensher 2010).
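To make the joint estimation concrete, the following is a minimal sketch of one session's log-likelihood contribution in an ordered probit with sample selection, assuming the standard formulation with bivariate normal errors (correlation ρ). It is an illustration, not the authors' code: the function names are hypothetical, parameters are taken as given, and the maximization of the summed log-likelihood over all sessions is omitted. The bivariate normal CDF is computed by one-dimensional Simpson integration so that only the standard library is needed.

```python
import math
from statistics import NormalDist

STD_NORMAL = NormalDist()  # mean 0, SD 1


def bvn_cdf(a, b, rho, n=2000):
    """P(X <= a, Y <= b) for a standard bivariate normal with correlation rho.

    Uses Phi2(a, b; rho) = integral from -inf to a of
    phi(x) * Phi((b - rho * x) / sqrt(1 - rho**2)) dx,
    evaluated with Simpson's rule on [-10, a]; n must be even and |rho| < 1.
    """
    if b == math.inf:
        return STD_NORMAL.cdf(a)
    if b == -math.inf or a <= -10.0:
        return 0.0
    scale = math.sqrt(1.0 - rho * rho)
    lo = -10.0  # probability mass below -10 is negligible
    h = (a - lo) / n
    total = 0.0
    for k in range(n + 1):
        x = lo + k * h
        weight = 1 if k in (0, n) else (4 if k % 2 else 2)
        total += weight * STD_NORMAL.pdf(x) * STD_NORMAL.cdf((b - rho * x) / scale)
    return total * h / 3.0


def loglik_obs(rated, rating, z_gamma, x_beta, cuts, rho):
    """Log-likelihood contribution of one chat session.

    rated   -- True if the customer left a rating (selection outcome)
    rating  -- observed category 1..J (ignored when rated is False)
    z_gamma -- selection index (systematic part of the selection equation)
    x_beta  -- rating index (systematic part of the rating equation)
    cuts    -- increasing thresholds mu_1 < ... < mu_(J-1)
    rho     -- correlation between the selection and rating errors
    """
    if not rated:
        # P(no rating) = 1 - Phi(z_gamma)
        return math.log(1.0 - STD_NORMAL.cdf(z_gamma))
    mu = [-math.inf] + list(cuts) + [math.inf]
    # P(rated and mu_(j-1) < latent rating <= mu_j) as a difference of bivariate CDFs
    upper = bvn_cdf(z_gamma, mu[rating] - x_beta, -rho)
    lower = bvn_cdf(z_gamma, mu[rating - 1] - x_beta, -rho)
    return math.log(upper - lower)
```

A useful sanity check is that, for any parameter values, the probabilities of "no rating" and of each of the five rating categories sum to one; setting ρ = 0 reduces the rated terms to Φ(zγ) times an ordinary ordered probit probability.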


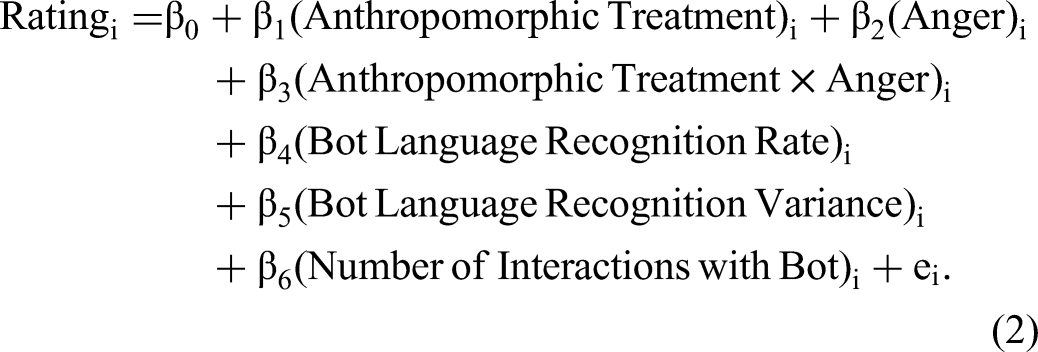

The first equation was a binary probit model for leaving a satisfaction rating (1) or not (0), as described in Equation 1 (with i denoting the chat session and ε_si the error term). The second equation was an ordered probit model, as shown in Equation 2 (with i denoting the chat session and ε_i the error term). The error terms in Equations 1 and 2 were correlated. Note that in Equation 1 we used bot interaction type as an exclusion restriction because it impacts the likelihood of leaving a rating, P(Feedback = 1)_i, but not the satisfaction rating, Rating_i.
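Because the equations appear only as an image in this version, the following is a plain rendering of the two-equation system as we read it from the surrounding text. The labels α2 (anger), α5 (number of exchanges), α6 (interaction type), and β1 to β3 match those reported in the results; the assignment of the remaining indices to the recognition-rate controls, and the inclusion of anthropomorphic treatment in the selection equation, are our assumptions. In the ordered probit the intercept is absorbed into the cutpoints μ_j, with μ_0 = −∞ and μ_5 = +∞.

```latex
\begin{aligned}
P(\text{Feedback} = 1)_i &= \Phi\Big(\alpha_0 + \alpha_1\,\text{Anthro}_i + \alpha_2\,\text{Anger}_i
  + \alpha_3\,\text{RecMean}_i + \alpha_4\,\text{RecVar}_i + \alpha_5\,\text{Interactions}_i
  + \textstyle\sum_{k} \alpha_{6k}\,\text{Type}_{ki}\Big) \qquad (1) \\[4pt]
\text{Rating}^{*}_{i} &= \beta_1\,\text{Anthro}_i + \beta_2\,\text{Anger}_i
  + \beta_3\,(\text{Anthro}_i \times \text{Anger}_i) + \beta_4\,\text{RecMean}_i
  + \beta_5\,\text{RecVar}_i + \beta_6\,\text{Interactions}_i + \varepsilon_i, \\
\text{Rating}_i &= j \iff \mu_{j-1} < \text{Rating}^{*}_{i} \le \mu_j, \quad j = 1, \dots, 5 \qquad (2) \\[4pt]
\operatorname{corr}(\varepsilon_{si}, \varepsilon_i) &= \rho
\end{aligned}
```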

Results

Table 1 reports descriptive statistics and correlations. Table 2 reports results from the model described previously. First, considering the selection model in Table 2, we see that the type of customer–bot interaction (which we use as an exclusion restriction) is a significant predictor of the likelihood of the customer providing feedback to the firm, especially when the bot is focused on eliciting feedback, relative to general conversation (the base case in the model; α6-feedback = 2.973, p < .001). Anger had a negative and significant effect on the likelihood of providing feedback (α2 = −.008, p < .05), whereas the number of exchanges with the bot during the session had a positive and significant effect (α5 = .033, p < .001).

Table 1. Descriptive Statistics in Study 1.

Notes: Boldface indicates p < .05.

Table 2. Probit Selection Model (Likelihood of Customer Providing a Rating After Interaction) and Ordinal Probit (Customer Rating) (Study 1).

Notes: †p < .10. *p < .05. **p < .01. ***p < .001.

Next, considering the main model for satisfaction ratings in Table 2, after accounting for the likelihood of providing feedback, both main effects of anthropomorphic treatment and anger were nonsignificant (β1 = −.055, n.s.; β2 = −.002, n.s.). However, their interaction was significant and negative (β3 = −.167, p = .05). This was after controlling for the technical performance of the bot during that session (recognition rate mean and variance) and the number of customer interactions in a session. Probing this interaction revealed the hypothesized effects across the distribution of consumer anger scores. When anger is higher (1 SD above the mean), the marginal effect of anthropomorphic treatment on satisfaction rating is significant and negative (β1 = −.350, p = .02), consistent with H1a. Interestingly, we also found that when anger was lower, but still present (1 SD below the mean), the marginal effect of anthropomorphic treatment on satisfaction rating remained negative, albeit with a smaller effect size than in the higher anger case (β1 = −.329, p = .02). Thus, it appears that the mere presence of anger can result in a negative relationship between anthropomorphic treatment and satisfaction. Further probing this interaction, we found that when anger was zero (i.e., not at all present), the marginal effect of anthropomorphic treatment on satisfaction rating was nonsignificant (β1 = .011, p = .32).

Robustness Checks

We were restricted in our analysis of Study 1 given that the data were provided as an outcome of the firm's operations and the conditions around each customer could not be assigned or manipulated. We could not control whether a customer provided feedback, the level of anthropomorphic treatment, or the customers’ level of anger upon entering the chatbot interaction. While the first two limitations were addressed with a selection model and by exploiting variance in exhibited behavior, the levels of anger were taken as a given.

As a robustness check, we instead treated anger as a binary treatment and estimated an additional augmented model that accounted for the ordinal nature of ratings, the selection bias in providing feedback, and customers’ anger as a treatment condition. The inherent weakness of this model is the loss of information from dichotomizing anger into a binary (angry/not angry) condition, which discards the nuance of how levels of anger interact with the level of anthropomorphic treatment. However, as a check, it shows that our results are robust to specifying anger as an endogenous component of the customer experience, with the drivers of anger estimated jointly and with correlated errors across the outcome, selection, and anger equations.

The model and results are presented in Web Appendix B. The endogenous anger treatment was positively but weakly correlated with the decision to provide feedback (r = .047) and negatively correlated with satisfaction rating (r = −.449). In the endogenous anger condition model, anthropomorphic treatment was positive and significant (γ1 = .289, p < .001). Critically, in the model for satisfaction rating, anthropomorphic treatment was nonsignificant for customers in the nonangry treatment (β1a = −.059, n.s.) and negative and significant for customers in the angry treatment (β1b = −.573, p = .04). We confirmed the significant difference between those two groups using a Wald test (χ²(2) = 6.62, p = .04), which highlights that even if we model anger as a binary outcome of an endogenous process, our conclusions remain essentially the same.
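As a quick arithmetic check of the reported Wald statistic: with 2 degrees of freedom, the chi-square upper-tail probability has the closed form exp(−x/2), so no statistics library is needed. The helper name below is ours.

```python
import math


def chi2_sf_df2(stat):
    """Upper-tail p-value of a chi-square statistic with 2 degrees of freedom.

    For df = 2 the chi-square survival function is exactly exp(-x / 2).
    """
    return math.exp(-stat / 2.0)


p = chi2_sf_df2(6.62)
print(round(p, 3))  # 0.037
```

The result (about .037) is consistent with the reported p = .04.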

In addition, we tested whether alternative negative emotions (anxiety and sadness) or a positive mood valence meaningfully explain our customer satisfaction ratings or interact with the degree of chatbot anthropomorphic treatment. A reestimated extended ordinal probit model containing additional emotions is presented in Web Appendix C. While sadness was a significant predictor in our first-stage model of feedback selection, no other negative emotions were significant. However, positive valence interacts meaningfully with anthropomorphic treatment (β = .0508, p = .001) in explaining satisfaction, consistent with prior research showing positive consequences of anthropomorphism. Importantly, the hypothesized interaction between anger and anthropomorphic treatment remains unchanged (β = −.1617, p = .05).

Discussion

By leveraging real-world data from customers actively engaging with a chatbot across numerous chat sessions, we find initial evidence in support of H1a. An increase in the average level of anger exhibited by the consumer during their session resulted in a lower level of satisfaction with the service encounter, but only when the chatbot was treated anthropomorphically. In situations where the bot was not treated anthropomorphically, higher levels of anger did not meaningfully affect consumer satisfaction. Of course, this study has limitations. First, all customers were presented with the same highly anthropomorphic chatbot, so we had to rely on the variance in customers’ anthropomorphic treatment of the bot, as opposed to variation in chatbot anthropomorphism, per se. Second, we initially assumed that customers entered the chat angry, independent from their exchange with the chatbot; however, our robustness checks confirm that anger is not strictly exogenous but also arose out of characteristics of the exchange with the bot (e.g., the number of exchanges, variance in language recognition). Finally, both anthropomorphic treatment and anger were measured from customer behaviors, rather than manipulated. These limitations motivated the four follow-up experiments.

Study 2

The purpose of Study 2 was to test our theory in a controlled experimental setting and further show that, for angry customers, chatbot anthropomorphism has a negative effect on customer satisfaction. Accordingly, this study manipulated both chatbot anthropomorphism (via the presence or absence of anthropomorphic traits in the chatbot) and customer anger, allowing us to infer a causal effect on satisfaction. In addition, careful selection of the chatbot's avatar and name enabled us to rule out idiosyncratic features of the Study 1 chatbot. Specifically, two of its features pose potential confounds when generalizing the results. First, it was clearly female, and previous research suggests that female service employees are more often targets of expressed frustration and anger from customers than are male service employees (Sliter et al. 2010). Second, she had a smiling expression, which is incongruent with the emotional state of angry customers, and such affective incongruity may cause a negative reaction and lower satisfaction.

Pretests

Avatar

We pretested avatars to select one that was both gender and affectively neutral. Twenty-five participants from Amazon Mechanical Turk (MTurk) evaluated a series of avatars on bipolar scales assessing both gender (“definitely male–definitely female”) and warmth (“extremely cold–extremely warm”) and indicated their agreement with one seven-point Likert item: “This avatar has a neutral expression.” Drawing on the results of this pretest, we used the avatar pictured in Web Appendix D for the anthropomorphic chatbot condition. Specifically, our analysis confirmed that this avatar was neutral in both gender and warmth, with scores that did not significantly differ from the corresponding scale midpoints (Mgender = 3.64, t(24) = −1.23, p = .23; Mwarmth = 3.80, t(24) = −1.16, p = .26) and agreement with the neutral expression item was significantly above the midpoint (M = 5.76, t(24) = 7.80, p < .001).
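The midpoint comparisons in these pretests are one-sample t-tests of each mean against the scale midpoint (4 on a seven-point scale). As a minimal sketch, assuming the raw ratings are stored as a Python list, the statistic can be computed with the standard library; the function name and ratings below are hypothetical and are not the pretest data.

```python
import math
import statistics


def one_sample_t(ratings, midpoint=4.0):
    """Return (mean, t, df) for a one-sample t-test of ratings against a scale midpoint.

    On a seven-point scale the midpoint is 4, so a mean that does not differ
    significantly from 4 is read as "neutral" on that dimension.
    """
    n = len(ratings)
    mean = statistics.mean(ratings)
    sd = statistics.stdev(ratings)      # sample SD (n - 1 denominator)
    se = sd / math.sqrt(n)              # standard error of the mean
    t = (mean - midpoint) / se
    return mean, t, n - 1


# Hypothetical ratings from 25 raters on the 1-7 gender scale (illustration only).
gender_ratings = [3, 4, 4, 5, 3, 4, 2, 4, 5, 3, 4, 4, 3, 5, 4, 3, 4, 2, 4, 5, 3, 4, 4, 3, 4]
mean, t, df = one_sample_t(gender_ratings)
print(f"M = {mean:.2f}, t({df}) = {t:.2f}")
```

With SciPy installed, `scipy.stats.ttest_1samp(ratings, popmean=4)` should return the same t statistic together with a two-sided p-value; the sketch stays in the standard library and therefore omits the p-value.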

We also wanted to choose a gender-neutral name for the anthropomorphic chatbot. Twenty-seven participants from MTurk evaluated a series of names on a seven-point bipolar scale assessing gender (“definitely male–definitely female”). From the results, we chose the name “Jamie,” which was not significantly different from the midpoint (M = 3.89, t(26) = −.68, p = .50).

Scenario

We created two customer service scenarios (neutral vs. anger) to use in this study (for the full scenarios used in both conditions, see Web Appendix E). In the neutral condition, the scenario described how the participant had purchased a camera for an upcoming trip, but upon receipt, the camera was broken. After searching the website, they diagnosed the issue as a problem with the lens and read about how to exchange the camera: it had to be mailed back to the company before a new camera would be sent, and the replacement was expected to arrive after they departed for the trip, the very reason they had wanted the camera.

In the anger condition, the scenario contained additional details designed to evoke anger: shipping of the original camera had been delayed, diagnosing the issue was time consuming, and they had already tried to contact customer service, only to be placed on hold and passed from one representative to another. To ensure that this scenario evoked more anger than the neutral scenario but did not differ in realism, we conducted a pretest on MTurk. Fifty participants were randomly assigned to read one of the two scenarios and then indicated how angry the scenario would make them feel (two items: “This situation would leave me feeling angry [frustrated]”; r = .65) and how realistic they found the scenario (two items: “How realistic [true-to-life] is this scenario?”; r = .61) on seven-point Likert scales (1 = “not at all,” 7 = “extremely”). Our analysis confirmed that those in the anger condition reported significantly greater feelings of anger than those in the neutral condition (Mneutral = 5.46 vs. Manger = 6.06; F(1, 48) = 4.31, p = .04). In contrast, scenario realism did not differ significantly (Mneutral = 5.48 vs. Manger = 5.69; F(1, 48) = 2.09, p = .16).
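The two-item measures in this pretest are summarized by the inter-item correlation r, averaged into a composite, and compared across the two scenarios with a one-way ANOVA, where F(1, N − 2) equals the square of a pooled-variance independent-samples t. A minimal standard-library sketch, assuming each item is stored as a list of scores in participant order; the function names and data are illustrative, not the authors' analysis code.

```python
import statistics


def pearson_r(x, y):
    """Pearson correlation between two items scored by the same participants."""
    mx, my = statistics.mean(x), statistics.mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def composite(item1, item2):
    """Average two items into one score per participant (e.g., angry + frustrated)."""
    return [(a + b) / 2 for a, b in zip(item1, item2)]


def one_way_f(*groups):
    """One-way between-subjects ANOVA; returns (F, df_between, df_within)."""
    all_scores = [s for g in groups for s in g]
    grand = statistics.mean(all_scores)
    ss_between = sum(len(g) * (statistics.mean(g) - grand) ** 2 for g in groups)
    ss_within = sum((s - statistics.mean(g)) ** 2 for g in groups for s in g)
    df_b = len(groups) - 1
    df_w = len(all_scores) - len(groups)
    return (ss_between / df_b) / (ss_within / df_w), df_b, df_w


# Hypothetical composite anger scores for the two scenario groups.
neutral = composite([5, 6, 4, 5], [6, 5, 5, 6])
anger = composite([7, 6, 6, 7], [6, 7, 6, 6])
print(one_way_f(neutral, anger))
```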

Anthropomorphism

We created two versions of the customer service chatbot (control vs. anthropomorphic). In the control condition, participants were told they would interact with “the Automated Customer Service Center,” and in the anthropomorphic condition, they were told they would interact with “Jamie, the Customer Service Assistant.” Furthermore, in the anthropomorphic condition, the chatbot featured the avatar selected from the pretest, and the chat text consistently used a singular first-person pronoun (i.e., “I”) and appeared in quotation marks.

To ensure that this manipulation was successful, we conducted a pretest on MTurk. One hundred one participants were randomly assigned to one of the two chatbots and then indicated how anthropomorphic the chatbot was on nine seven-point Likert scales (adapted from Epley et al. [2008] and Kim and McGill [2011]: “Please rate the extent to which [the Automated Customer Service Center/Jamie]: came alive (like a person) in your mind; has some humanlike qualities; seems like a person; felt human; seemed to have a personality; seemed to have a mind of his/her own; seemed to have his/her own intentions; seemed to have free will; and seemed to have consciousness”; α = .98). Analysis confirmed that those in the anthropomorphic condition reported significantly greater anthropomorphic thought (Mcontrol = 3.37 vs. Manthro = 4.82; F(1, 99) = 16.64, p < .001).
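The α values reported for multi-item scales, such as the nine-item anthropomorphism measure here, are Cronbach's alpha: α = k/(k − 1) × (1 − Σ item variances / variance of the summed score), where k is the number of items. A minimal sketch, assuming each item's scores are stored as a list in the same participant order; the example data are hypothetical.

```python
import statistics


def cronbach_alpha(items):
    """Cronbach's alpha for k items; `items` is a list of k score lists (same participants, same order)."""
    k = len(items)
    totals = [sum(scores) for scores in zip(*items)]        # each participant's summed score
    item_var = sum(statistics.variance(item) for item in items)
    return k / (k - 1) * (1 - item_var / statistics.variance(totals))


# Hypothetical responses from five participants to three anthropomorphism items.
items = [
    [2, 3, 5, 6, 4],
    [2, 4, 5, 6, 3],
    [1, 3, 6, 7, 4],
]
print(round(cronbach_alpha(items), 3))
```

Alpha approaches 1 when items move together across participants, which is why the very high values reported here (e.g., α = .98) indicate that the nine items can be averaged into a single anthropomorphism score.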

Main Study Design and Procedure

Two hundred one participants (48% female; Mage = 37.29 years) from MTurk participated in this study in exchange for monetary compensation. The study consisted of a 2 (chatbot: control vs. anthropomorphic) × 2 (scenario emotion: neutral vs. anger) between-subjects design. Participants were randomly assigned to read one of the aforementioned scenarios (neutral or anger). Then, participants entered a simulated chat with either “the Automated Customer Service Center” in the control chatbot condition or “Jamie, the Customer Service Assistant” in the anthropomorphic condition.

In the simulated chat, participants were first asked to explain, in an open-ended response, why they were contacting the company. In addition to serving as an initial chatbot interaction, this question also functioned as an attention check, allowing us to filter out any participants who entered nonsensical (e.g., “GOOD”) or non-English answers (Dennis, Goodson, and Pearson 2018). Subsequently, participants encountered a series of inquiries and corresponding drop-down menus regarding the specific product they were inquiring about (camera) and the issue they were having (broken and/or damaged lens). They were then given return instructions and indicated that they needed more help. Using free response, they described their second issue and answered follow-up questions from the chatbot about the specific delivery issue (delivery time is too long) and reason for needing faster delivery (product will not come in time for a special event). Finally, participants were told that a service representative would contact them to discuss the issue further. The interaction outcome was designed to be ambiguous (representing neither a successful nor a failed service outcome; we manipulate this outcome in Study 3). The full chatbot scripts for both conditions and images of the chat interface are presented in Web Appendices F and G.

Upon completing the chatbot interaction, participants indicated their satisfaction with the chatbot by providing a star rating (a common method of assessing customer satisfaction; e.g., Sun, Aryee, and Law 2007) between one and five stars, on five dimensions (α = .95): overall satisfaction, customer service, problem resolution, speed of service, and helpfulness. Lastly, participants indicated their age and gender and were thanked for their participation.

Results and Discussion

Four participants failed the attention check (entering a nonsensical response for the open-ended question), leaving 197 observations for analysis. Analysis of variance (ANOVA) results revealed a significant main effect of scenario emotion on satisfaction (i.e., averaged star rating on the five dimensions), in that those in the anger scenario condition were less satisfied than those in the neutral scenario condition (F(1, 193) = 33.45, p < .001). Importantly, we found a significant chatbot × scenario emotion interaction on customer satisfaction (F(1, 193) = 5.26, p = .02). Consistent with Study 1, a simple effects test revealed that participants in the anger scenario condition were less satisfied when the chatbot was anthropomorphic (M = 2.09) versus when it was not (M = 2.58; F(1, 193) = 4.13, p = .04). For those in the neutral scenario, chatbot anthropomorphism had no significant influence on satisfaction, but satisfaction was directionally higher in the anthropomorphic condition (Mcontrol = 3.16 vs. Manthro = 3.44; F(1, 193) = 1.46, p = .23). Figure 3 presents an illustration of means.
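The simple effects tests above share the full model's error degrees of freedom (193 = 197 − 4), which is what one obtains when each simple effect is tested against the within-cell error pooled over all four cells: F(1, N − 4) = (M₁ − M₂)² / [MSE × (1/n₁ + 1/n₂)]. A minimal sketch under that assumption; the cell labels, function names, and data are hypothetical.

```python
import statistics


def pooled_mse(cells):
    """Within-cell mean square error pooled over all cells of a between-subjects design."""
    ss = sum((y - statistics.mean(c)) ** 2 for c in cells.values() for y in c)
    df = sum(len(c) for c in cells.values()) - len(cells)
    return ss / df, df


def simple_effect_f(cells, a, b):
    """F test comparing cell a with cell b, using the full-model (pooled) error term."""
    mse, df_error = pooled_mse(cells)
    ya, yb = cells[a], cells[b]
    diff = statistics.mean(ya) - statistics.mean(yb)
    f = diff ** 2 / (mse * (1 / len(ya) + 1 / len(yb)))
    return f, (1, df_error)


# Hypothetical star ratings keyed by (scenario emotion, chatbot).
cells = {
    ("anger", "control"): [3, 2, 3, 2],
    ("anger", "anthro"): [2, 1, 2, 1],
    ("neutral", "control"): [3, 4, 3, 4],
    ("neutral", "anthro"): [4, 3, 4, 3],
}
print(simple_effect_f(cells, ("anger", "control"), ("anger", "anthro")))
```

Pooling the error over all cells gives each simple effect more error degrees of freedom than a separate two-group test would, which is a common default for between-subjects designs with homogeneous cell variances.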

Figure 3. The effect of chatbot anthropomorphism and anger on customer satisfaction (Study 2).

Whereas Study 1 provides initial support for the interactive effect of anthropomorphism and anger on customer satisfaction, Study 2 tests our theorizing in a controlled experimental design. This allowed us to more definitively conclude that when customers are angry, anthropomorphic traits in a chatbot lower customer satisfaction with the chatbot (consistent with H1a), and to rule out alternative explanations based on the chatbot’s gender or expression. Although it is not central to our main theorizing, we also ran an otherwise identical study manipulating sadness instead of anger. Both anger and sadness are negative emotions, but anger is an activating emotion, whereas sadness is a deactivating emotion (Cunningham 1988; Lench, Tibbett, and Bench 2016). We predicted that only angry customers would be activated to respond negatively to anthropomorphic chatbots, owing to their need to overcome obstacles, blame others, and respond punitively to expectancy violations (Goldberg, Lerner, and Tetlock 1999; Lerner and Keltner 2000; Lerner, Goldberg, and Tetlock 1998). Interestingly, participants in the sad condition were more satisfied when the chatbot was anthropomorphic than when it was not (Mcontrol = 1.90 vs. Manthro = 2.53; F(1, 188) = 8.49, p < .01), which is consistent with prior literature demonstrating the positive effect of anthropomorphic chatbots in other situations (Han 2021; Yen and Chiang 2021). Full details are available in Web Appendix I.

Study 3

There were three main goals of Study 3. First, while our previous study induced anthropomorphism via a simultaneous combination of visual and verbal cues (an avatar and first-person language, respectively), the current study aimed to show that the effect diminishes as anthropomorphic traits are removed. Thus, we removed the visual trait of anthropomorphism (i.e., the avatar) and expected the negative effect of anthropomorphism among angry customers to attenuate, which would provide further support that the degree of humanlikeness drives the effect. Second, we wanted to test H1b by exploring whether the negative effect of anthropomorphism for angry customers would extend to their evaluations of the company itself. Finally, we wanted to provide initial evidence that expectancy violations are responsible for these negative effects. To do so, we examined whether the outcome of the chat interaction, namely, whether the chatbot was able to unambiguously resolve the customer’s concerns, could serve as a boundary condition. We predicted that if the chatbot met the high expectations of efficacy, the negative effect of anthropomorphism should dissipate.

Pretests

Avatar

We selected a new avatar in this study to increase the robustness of our examination and generalizability of our findings. Twenty-six participants from MTurk evaluated a series of avatars as in the Study 2 pretest. Our analysis confirmed that the avatar (pictured in Web Appendix D) was considered neutral in both gender and warmth (Mgender = 4.04, t(25) = .12, p = .90; Mwarmth = 4.27, t(25) = 1.32, p = .20) and had a neutral expression (M = 5.12, t(25) = 4.35, p < .001).

Scenario

As in Study 2, we created two customer service scenarios (neutral vs. anger; for the full scenarios, see Web Appendix J). In the neutral condition, the scenario described a situation in which the participant was interested in buying a camera with a specific feature (advanced video stabilization) from the company “Optus Tech.” Even after searching the website, they found it difficult to tell whether Optus Tech’s camera had this feature. In addition, the delivery window was wide, so the camera might or might not arrive after they departed for a trip, the whole reason they wanted the camera.

In the anger condition, there were additional details designed to evoke anger: researching the feature was time consuming, they had already tried to contact customer service and had been placed on hold and passed from one representative to another, the representative could not answer their question, and they had to call a second time to ask about shipping. To ensure that this scenario evoked more anger than the neutral scenario, we pretested it with 48 MTurk participants, who were randomly assigned to read one of the two scenarios and then indicated both their anticipated feelings of anger and how realistic they found the scenario (as measured in the Study 2 pretest; r = .77 and r = .63, respectively). Indeed, those in the anger condition reported significantly greater anticipated feelings of anger than those in the neutral condition (Mneutral = 3.63 vs. Manger = 5.46, F(1, 46) = 18.00, p < .001), and there was no significant difference in how realistic participants found the two scenarios (Mneutral = 5.50 vs. Manger = 4.92, F(1, 46) = 2.54, p = .12). Only the anger scenario was used in this study; Study 4 used both scenarios.

Anthropomorphism

We created three versions of the customer service chatbot: control, verbal anthropomorphic, and verbal + visual anthropomorphic. The control and verbal + visual anthropomorphic chatbots were the same as in Study 2, except that the latter used the new avatar. The additional, verbal anthropomorphic chatbot used the verbal anthropomorphic traits (i.e., the bot introduced itself as Jamie and used first-person language in quotations) but not the visual trait (i.e., the avatar). One hundred twenty-one MTurk participants were randomly assigned to one of the three chatbots: the Automated Customer Service Center (control chatbot condition), Jamie without an avatar (verbal anthropomorphic condition), or Jamie with an avatar (verbal + visual anthropomorphic condition). They then indicated how anthropomorphic the chatbot was on nine seven-point Likert scales (as in Study 2; α = .97). We coded the control, verbal anthropomorphic, and verbal + visual anthropomorphic conditions as 0, 1, and 2, respectively, to represent the strength of the anthropomorphic manipulation. The linear trend was significant (Mcontrol = 2.38 vs. Mverbal = 4.14 vs. Mverbal + visual = 4.39; F(1, 118) = 39.16, p < .001).
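Coding the three conditions 0, 1, and 2 and testing a linear trend is equivalent to testing the contrast with weights −1, 0, +1 (the codes centered on their mean): F(1, N − k) = (Σ cⱼMⱼ)² / (MSE × Σ cⱼ²/nⱼ). A minimal sketch, assuming each group's ratings are stored as a list in order of manipulation strength; the function name and data are hypothetical.

```python
import statistics


def linear_trend_f(groups, codes=(0, 1, 2)):
    """Linear-trend contrast across ordered groups; returns (F, (1, df_error))."""
    mean_code = sum(codes) / len(codes)
    weights = [c - mean_code for c in codes]            # 0, 1, 2 -> -1, 0, +1
    means = [statistics.mean(g) for g in groups]
    ss_within = sum((y - m) ** 2 for g, m in zip(groups, means) for y in g)
    df_error = sum(len(g) for g in groups) - len(groups)
    mse = ss_within / df_error
    estimate = sum(w * m for w, m in zip(weights, means))
    se_sq = mse * sum(w ** 2 / len(g) for w, g in zip(weights, groups))
    return estimate ** 2 / se_sq, (1, df_error)


# Hypothetical anthropomorphism ratings: control, verbal, verbal + visual.
control = [2, 3, 2, 2, 3]
verbal = [4, 4, 5, 3, 4]
verbal_visual = [5, 4, 5, 4, 4]
print(linear_trend_f([control, verbal, verbal_visual]))
```

In the 3 × 2 main study below, the reported error df (359 = 365 − 6) reflects an error term pooled over all six cells, so reproducing those trend tests would mean computing the MSE across all six cells rather than across only the three groups passed in here.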

Main Design and Procedure

Four hundred nineteen participants (61% female; Mage = 38.50 years) from MTurk participated in this study in exchange for monetary compensation. This study consisted of a 3 (chatbot: control vs. verbal anthropomorphic vs. verbal + visual anthropomorphic) × 2 (scenario outcome: ambiguous vs. resolved) between-subjects design. First, all participants read the anger scenario and then entered a simulated chat with the chatbot. In the ambiguous outcome condition, participants encountered a series of questions and corresponding drop-down menus regarding the specific product (camera) and feature (advanced video stabilization) they were inquiring about. They were then given basic product information about the feature that was purposefully ambiguous. Then, participants indicated they needed more help. Using free response, they described their second issue and answered follow-up questions from the chatbot about the specific delivery issue (delivery window/timing) and reason for needing faster delivery (product will not come in time for a special event). Participants were told that a service representative would contact them to discuss the issue further. In the resolved outcome condition, there were two critical differences: participants were directly given the product feature information that resolved their query and explicitly informed of the specific delivery time information, which confirmed they would receive their delivery in time for their special event. The entire chatbot scripts for both conditions and images of the interface are presented in Web Appendices K and L.

Upon completing the interaction, participants evaluated the company, Optus Tech, on four seven-point bipolar items (α = .95): “unfavorable–favorable,” “negative–positive,” “bad–good,” and “unprofessional–professional.” As a manipulation check for the scenario outcome, participants responded to three items: “My question was sufficiently answered,” “My problem was appropriately resolved,” and “I got the help I needed” (1 = “strongly disagree,” 7 = “strongly agree”; α = .97). To assess whether participants knew they were interacting with a chatbot (vs. a human), we asked them to indicate the extent to which they felt they had interacted with a human versus an automated chatbot (1 = “definitely a real live human,” 7 = “definitely an automated chatbot”). Participants indicated demographics and were thanked for participating.

Results and Discussion

Fifty-two participants failed the attention check (entering a nonsensical response for the open-ended question), leaving 365 observations for analysis.

Scenario outcome manipulation check

Participants in the resolved condition indicated that their problem was more appropriately resolved (M = 6.36) than participants in the ambiguous condition (M = 4.32; t(363) = 12.40, p < .001), indicating a successful manipulation.

Main analysis

Unsurprisingly, ANOVA results revealed a significant main effect of scenario outcome on company evaluation, such that participants reported lower evaluations of the company when the outcome was ambiguous (M = 4.68) than when it was resolved (M = 5.36; F(1, 359) = 18.44, p < .001). There was no main effect of chatbot anthropomorphism (F(2, 359) = 1.38, p = .25). Importantly, there was a marginally significant chatbot anthropomorphism × scenario outcome interaction on company evaluation (F(2, 359) = 2.64, p = .07). A simple effects test revealed no significant difference between the chatbot conditions when the outcome was resolved (F < 1). This provides some evidence that effectively meeting expectations eliminates the negative effect, which is conceptually consistent with H2: if high preinteraction expectations of efficacy are met, there should be no resulting expectancy violation. However, there was a significant difference between the chatbot conditions when the outcome was ambiguous (F(2, 359) = 3.78, p = .02). Planned contrasts revealed that when the outcome was ambiguous, participants reported lower company evaluations when the chatbot was verbally and visually anthropomorphic (M = 4.28) than when it was the control (M = 5.06; t(359) = 2.75, p < .01), providing evidence in support of H1b. However, the verbal anthropomorphic condition (without an avatar) did not significantly differ from either the control (Mverbal = 4.69 vs. Mcontrol = 5.06; t(359) = 1.36, p = .17) or the verbal + visual anthropomorphic condition (Mverbal = 4.69 vs. Mverbal + visual = 4.28; t(359) = 1.48, p = .14). Because the verbal anthropomorphic condition fell between the two other conditions both theoretically and empirically, we tested whether our anthropomorphism manipulation demonstrated a linear trend. We coded the control, verbal anthropomorphic, and verbal + visual anthropomorphic conditions as 0, 1, and 2, respectively, to represent the strength of the anthropomorphic manipulation. The linear trend was not significant when the outcome was resolved (F < 1) but was significant when the outcome was ambiguous (F(1, 359) = 7.55, p < .01). These findings suggest that visual and verbal anthropomorphic traits produce an additive effect, in which multiple traits lead to greater anthropomorphic thought and, in turn, lower company evaluations (at least among angry consumers, the only group examined in this study). Figure 4 presents an illustration of means.

Figure 4. The effect of chatbot anthropomorphism and anger on company evaluation (Study 3).

Study 4

Study 4 serves two key purposes. First, we extend our investigation to an even further downstream negative outcome by examining purchase intentions (H1c). Second, we build on the findings of Study 3 and directly test our proposed underlying process: expectancy violations driven by preperformance expectations (H2). Specifically, we predict that anthropomorphism increases preperformance expectations that the chatbot will display greater agency and performance. Although people in a neutral state will also perceive the resulting expectancy violation, they are less motivated to retaliate or respond punitively. Angry people, in contrast, are motivated to punish the company by lowering their purchase intentions.

Design and Procedure

One hundred ninety-two participants (55% female; Mage = 37.31 years) from MTurk participated in exchange for payment. This study consisted of a 2 (chatbot: control vs. anthropomorphic) × 2 (scenario emotion: neutral vs. anger) between-subjects design. Participants were randomly assigned to read one of the neutral or anger information search scenarios pretested in the prior study. Then participants were told they were about to enter a chat with either the Automated Customer Service Center (control condition) or Jamie (anthropomorphic condition). At this point, all participants saw the brand logo for Optus Tech, but those in the anthropomorphic chatbot condition also saw the avatar.

Next, participants indicated their preinteraction efficacy expectations regarding the chatbot's upcoming performance on four seven-point Likert items (“I expect the Automated Service Center/Jamie to: do something for me; take action; be proactive in resolving my issues; say things to calm me down”; α = .89). Participants completed the same interaction as in the ambiguous condition from Study 3 and indicated their purchase intentions for the camera on two seven-point Likert items: “I would buy the camera from Optus Tech,” and “I would try to find a different company to buy the camera from” (the latter was reverse-coded; r = .65). Afterward, participants rated their postinteraction assessment of the chatbot's efficacy, on four seven-point Likert items that corresponded to the preinteraction items (“I felt the Automated Service Center/Jamie: did a lot for me; took action; was proactive in resolving my issues; said things to calm me down”; α = .92). Lastly, participants indicated their age and gender and were thanked for their participation.

Results and Discussion

Purchase intention

Twenty-one participants failed the attention check used in prior studies, leaving 171 observations for analysis. ANOVA results revealed a significant main effect of anger on purchase intentions, where participants in the anger scenario condition reported lower purchase intentions than those in the neutral scenario condition (F(1, 167) = 20.04, p < .001). Consistent with the pattern of results predicted in H1c, there was a significant chatbot anthropomorphism × anger scenario interaction on purchase intentions (F(1, 167) = 4.29, p = .04). A simple effects test revealed that participants in the anger scenario condition reported lower purchase intentions when the chatbot was anthropomorphic (M = 2.73) versus when it was not (M = 3.57; F(1, 167) = 5.79, p = .02). For those in the neutral scenario, the chatbot had no significant influence on purchase intentions. Figure 5 presents an illustration of means.

Figure 5. The effect of chatbot anthropomorphism and anger on purchase intentions (Study 4).

Expectancy violation

We predicted that encountering an anthropomorphic chatbot at the start of the service experience would increase participants’ preinteraction expectations about the efficacy of the chatbot, relative to the control chatbot. However, the postinteraction efficacy assessments of the chatbots should not differ because they performed equally, resulting in greater expectancy violations for anthropomorphic chatbots (H2).

To assess this hypothesis, we ran a repeated-measures ANOVA with chatbot anthropomorphism as the between-subjects variable and time (preinteraction expectations at Time 1 and postinteraction assessments at Time 2) as the within-subjects factor. We did not find a significant overall main effect of chatbot anthropomorphism on efficacy (F(1, 169) = .91, p = .34). Importantly, there was a significant interaction of chatbot anthropomorphism and time (F(1, 169) = 7.31, p = .01). Probing this interaction, as we expected, preinteraction expectations of the chatbot's efficacy at Time 1 were significantly higher in the anthropomorphism condition than in the control condition (Mcontrol = 4.94 vs. Manthro = 5.50; F(1, 169) = 6.91, p = .01), but there was no difference in the postinteraction assessments at Time 2 (Mcontrol = 4.09 vs. Manthro = 3.88; F < 1). These results are consistent with the logic that a greater expectancy violation is more likely in the anthropomorphism condition than in the control because of inflated preinteraction expectations of chatbot efficacy stemming from more humanized traits.

We also calculated an expectancy violation score for each participant by subtracting their postinteraction assessment score at Time 2 from their preinteraction expectation score at Time 1 (Madden, Little, and Dolich 1979). As we expected, an ANOVA with chatbot anthropomorphism and anger scenario as predictors and expectancy violation as the dependent variable produced only a significant main effect of chatbot anthropomorphism on expectancy violation (Mcontrol = .85 vs. Manthro = 1.62; F(1, 169) = 7.31, p = .01).
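The expectancy violation score is a per-participant difference (preinteraction expectation minus postinteraction assessment). With one two-level between-subjects factor and a two-level within-subjects factor, the group × time interaction F in a mixed ANOVA is algebraically the same as a between-groups F on these difference scores, which may explain why the same F(1, 169) = 7.31 appears for the chatbot × time interaction and for the chatbot effect on expectancy violation. A minimal sketch of the difference-score route, with hypothetical function names and data:

```python
import statistics


def violation_scores(pre, post):
    """Expectancy violation = preinteraction expectation - postinteraction assessment."""
    return [a - b for a, b in zip(pre, post)]


def two_group_f(g1, g2):
    """Between-groups F(1, n1 + n2 - 2), computed as the squared pooled-variance t."""
    n1, n2 = len(g1), len(g2)
    sp2 = ((n1 - 1) * statistics.variance(g1) + (n2 - 1) * statistics.variance(g2)) / (n1 + n2 - 2)
    t = (statistics.mean(g2) - statistics.mean(g1)) / (sp2 * (1 / n1 + 1 / n2)) ** 0.5
    return t ** 2, (1, n1 + n2 - 2)


# Hypothetical pre/post efficacy ratings for four participants per chatbot condition.
control = violation_scores(pre=[5, 6, 5, 4], post=[4, 5, 5, 4])
anthro = violation_scores(pre=[6, 6, 7, 5], post=[4, 5, 4, 4])
print(two_group_f(control, anthro))
```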

Mediation

Importantly, our theorizing suggests that anthropomorphism inflates preinteraction expectations of chatbot efficacy for all customers, yet angry customers are more motivated than nonangry customers to respond punitively by lowering their purchase intent. Accordingly, we performed a moderated mediation analysis based on 10,000 bootstrapped samples (Hayes 2013, Model 15). Although the index of moderated mediation did not reach significance (index = .0279; 95% confidence interval [CI]: [−.0778, .1562]), we examined the conditional indirect effects within each emotion condition based on our a priori predictions (Aiken and West 1991; Hayes 2015). In other words, while we had no predictions about what might drive purchase intention for those in the neutral condition, we did predict that for angry customers, inflated preinteraction expectations would explain the decreased purchase intention. Consistent with our theorizing, for individuals in the anger condition, preinteraction expectations mediated the effect of chatbot anthropomorphism on purchase intention (indirect effect = .0675; 95% CI: [.0012, .1707]). For participants in the neutral condition, however, the indirect effect was not significant (indirect effect = .0396; 95% CI: [−.0408, .1468]). These results suggest that, as predicted, the inflated preinteraction expectation of efficacy caused by the anthropomorphic chatbot is the underlying mechanism lowering purchase intentions for angry participants.
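The analysis above uses Hayes's Model 15, in which emotion (W) moderates both the mediator-to-outcome path and the direct effect of the chatbot. The PROCESS macro itself is not reproduced here; as a minimal standard-library sketch, assuming X (0 = control, 1 = anthropomorphic), M (preinteraction expectations), W (0 = neutral, 1 = anger), and Y (purchase intention) are equal-length lists, the conditional indirect effects and a percentile bootstrap interval can be computed as follows. The 0/1 coding, function names, and percentile method are assumptions for illustration. With W coded 0/1, the index of moderated mediation equals the difference between the two conditional indirect effects, which matches the reported values (.0675 − .0396 = .0279).

```python
import random


def ols(rows, y):
    """OLS coefficients from the normal equations (X'X)b = X'y, solved by Gauss-Jordan elimination."""
    p = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(p)]
    aug = [xtx[i] + [xty[i]] for i in range(p)]
    for col in range(p):
        # Partial pivoting: move the row with the largest |entry| in this column up.
        pivot = max(range(col, p), key=lambda k: abs(aug[k][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for k in range(p):
            if k != col:
                factor = aug[k][col] / aug[col][col]
                aug[k] = [a - factor * b for a, b in zip(aug[k], aug[col])]
    return [aug[i][p] / aug[i][i] for i in range(p)]


def model15_effects(x, m, w, y):
    """Conditional indirect effects for a Model 15-style specification.

    Mediator model: M = i1 + a*X
    Outcome model:  Y = i2 + c1*X + b1*M + c2*W + b3*M*W + c3*X*W
    Indirect effect of X through M at moderator value W: a*(b1 + b3*W).
    """
    a = ols([[1, xi] for xi in x], m)[1]
    rows = [[1, xi, mi, wi, mi * wi, xi * wi] for xi, mi, wi in zip(x, m, w)]
    _, _, b1, _, b3, _ = ols(rows, y)
    return {"index": a * b3, "w0": a * b1, "w1": a * (b1 + b3)}


def bootstrap_ci(x, m, w, y, key, n_boot=10_000, seed=1):
    """Percentile bootstrap 95% CI for one of the effects returned by model15_effects."""
    rng = random.Random(seed)
    n = len(x)
    draws = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]        # resample participants with replacement
        resampled = [[v[i] for i in idx] for v in (x, m, w, y)]
        draws.append(model15_effects(*resampled)[key])
    draws.sort()
    return draws[int(0.025 * n_boot)], draws[int(0.975 * n_boot) - 1]
```

PROCESS may differ from this sketch in implementation details, so its output should be treated as approximate; the pure-Python loop is also slow at 10,000 resamples, and a smaller `n_boot` is convenient for quick checks.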

Study 5

Study 4 demonstrated that anthropomorphic chatbots result in lower purchase intentions when customers are angry by elevating preinteraction expectations of efficacy. Yet, it is theoretically and managerially important to understand how this effect can be remedied. Some companies attempt to explicitly temper customer expectations of their chatbots. For example, Slack's chatbot introduces itself by explaining that, “I try to be helpful (But I’m still just a bot. Sorry!)” (Waddell 2017). Study 5 explores whether explicitly lowering customer expectations of anthropomorphic chatbots prior to the interaction effectively reduces negative customer responses.

Avatar Pretest

For the Study 5 pretest, 31 participants from MTurk evaluated a series of avatars as in the prior pretests. Our analysis confirmed that the avatar (see Web Appendix D) was considered neutral in both gender and warmth (Mgender = 5.55, t(30) = 1.52, p = .14; Mwarmth = 4.97, t(30) = −.19, p = .85) and had a neutral expression (M = 5.94, t(30) = 8.91, p < .001).

Design and Procedure

Three hundred two participants (52% female; Mage = 40.78 years) from MTurk participated in exchange for monetary compensation. The study consisted of a 2 (chatbot: control vs. anthropomorphic) × 2 (expectation: baseline vs. lowered) between-subjects design. All participants read the anger information search scenario. Afterward, participants were told that they would chat with either “the Automated Customer Service Center” in the control condition or “Jamie, the Customer Service Assistant” in the anthropomorphic chatbot condition. In the lowered-expectation condition, they also read, “The Automated Customer Service Center/Jamie, the Customer Service Assistant will do the best that it/I can to take action but sometimes the situation is too complex for it/me (it's/I’m just a bot) so please don't get your hopes too high.”

Participants then indicated their preinteraction efficacy expectations (as in Study 4; α = .89), completed the product information interaction and evaluated the company (as in Study 3; α = .97), rated their postinteraction assessment of the chatbot's efficacy (as in Study 4; α = .92), answered demographic questions, and were thanked for their participation.

Results and Discussion

Expectancy violation manipulation check

Consistent with our manipulation, there was a main effect of anthropomorphism on preinteraction expectations (F(1, 298) = 4.36, p = .04): participants had higher expectations when the chatbot was anthropomorphic (M = 4.53) than in the control condition (M = 4.18). There was also a main effect of the expectation manipulation on preinteraction expectations (F(1, 298) = 45.61, p < .001): as intended, lowering expectations resulted in lower preinteraction expectations (M = 3.78) than in the baseline expectation condition (M = 4.93). There was also a significant chatbot × expectation interaction on preinteraction expectations (F(1, 298) = 7.00, p < .01). Simple effects tests revealed that in the baseline expectation condition, participants in the anthropomorphic condition had higher expectations of chatbot efficacy than those in the control condition (Mcontrol = 4.53 vs. Manthro = 5.34; F(1, 298) = 11.35, p = .001). In the lowered-expectation condition, however, preinteraction expectations of efficacy did not differ between the anthropomorphic and control chatbots (Mcontrol = 3.83 vs. Manthro = 3.73; F < 1). For postinteraction assessments, consistent with predictions, there were no significant differences (i.e., no main effect of anthropomorphism, no main effect of expectation, and no interaction between anthropomorphism and expectation). This indicates that differences in expectancy violations are driven by preinteraction expectations rather than by postinteraction assessments.

Expectancy violations were calculated by subtracting postinteraction evaluations from preinteraction expectations, with higher numbers indicating greater violations. There was a main effect of anthropomorphism on expectancy violations (F(1, 298) = 7.38, p < .01): participants indicated greater expectancy violations when the chatbot was anthropomorphic (M = .65) than in the control condition (M = .10). There was also a main effect of the expectation manipulation on expectancy violations (F(1, 298) = 36.73, p < .001), with greater expectancy violations in the baseline expectation condition (M = .99) than in the lowered-expectation condition (M = −.24). Importantly, there was also a significant chatbot × expectation interaction on expectancy violations (F(1, 298) = 13.26, p < .001). As we expected, when participants had the baseline expectation (i.e., no information given), they experienced greater expectancy violations driven by preinteraction expectations when the chatbot was anthropomorphic than in the control condition (Mcontrol = .35 vs. Manthro = 1.63; F(1, 298) = 20.47, p < .001). Yet when participants were told to have lower expectations, there was no difference between the expectancy violations for the anthropomorphic chatbot and the control (Mcontrol = −.14 vs. Manthro = −.33; F < 1).
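Because each marginal mean in a 2 × 2 design is the average of two cell means (exactly so when cell sizes are equal, approximately otherwise), the reported marginal means for expectancy violations can be checked against the cell means, and the interaction can be expressed as a difference of differences. The sketch below uses the cell means reported above; the dictionary layout and function names are illustrative.

```python
# Study 5 expectancy-violation cell means, as reported in the text.
cells = {
    ("baseline", "control"): 0.35,
    ("baseline", "anthro"): 1.63,
    ("lowered", "control"): -0.14,
    ("lowered", "anthro"): -0.33,
}


def marginal(cells, position, level):
    """Unweighted marginal mean: the average of the cell means at one level of a factor."""
    vals = [v for key, v in cells.items() if key[position] == level]
    return sum(vals) / len(vals)


def interaction_contrast(cells):
    """Anthropomorphism effect under baseline expectations minus the effect under lowered expectations."""
    effect_baseline = cells[("baseline", "anthro")] - cells[("baseline", "control")]
    effect_lowered = cells[("lowered", "anthro")] - cells[("lowered", "control")]
    return effect_baseline - effect_lowered


print(round(marginal(cells, 1, "anthro"), 3), round(marginal(cells, 1, "control"), 3))
print(round(marginal(cells, 0, "baseline"), 3), round(marginal(cells, 0, "lowered"), 3))
print(round(interaction_contrast(cells), 2))
```

Here the anthropomorphism effect is 1.28 under baseline expectations and −.19 under lowered expectations, so the interaction contrast is 1.47; the unweighted marginal means (.65, .105, .99, −.235) agree with the reported values to within rounding.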

Company evaluation

The ANOVA results revealed a marginal main effect of anthropomorphism on company evaluation: participants reported marginally lower company evaluations in the anthropomorphic chatbot condition (M = 4.11) than in the control condition (M = 4.44; F(1, 298) = 2.86, p = .09). There was no main effect of expectation (F < 1). There was a significant chatbot × expectation interaction on company evaluation (F(1, 298) = 4.35, p = .04). Consistent with prior studies, a simple effects test revealed that participants in the baseline expectation condition rated the company lower when the chatbot was anthropomorphic (M = 3.90) than when it was not (M = 4.63; F(1, 298) = 4.13, p = .04). For those in the lowered-expectation condition, chatbot anthropomorphism had no significant influence on company evaluations (Mcontrol = 4.25 vs. Manthro = 4.32; F < 1), indicating that lowering customer expectations of anthropomorphic chatbots effectively mitigated the negative effect of anthropomorphism on angry customers’ company evaluations. Figure 6 presents an illustration of means, and Web Appendix M provides additional analysis.

Figure 6. The effect of chatbot anthropomorphism and expectations on company evaluation (Study 5).

General Discussion

The deployment of chatbots as digital customer service agents continues to accelerate as the underlying machine learning technologies improve and as the practice becomes more common across industries. Customers are increasingly interacting with firms through chatbots, and there has been a significant push for more humanlike versions of such bots. Prior research has begun to demonstrate some negative implications of anthropomorphism in specific situations, including video games (Kim, Chen, and Zhang 2016), gambling (Kim and McGill 2011), and overcrowded environments (Puzakova and Kwak 2017), as well as for some types of people (agency-oriented customers; Kwak, Puzakova, and Rocereto 2015). Yet, our research is the first to demonstrate the negative effect of anthropomorphism in the wider context of customer service and connect the use of these humanlike chatbots to negative firm outcomes.

We find, using a large data set of real-world customer interactions and four experiments, that anthropomorphic chatbots can harm firms. An angry customer encountering an anthropomorphic (vs. nonanthropomorphic) chatbot is more likely to report lower customer satisfaction, lower overall evaluation of the firm, and lower future purchase intentions. This negative effect is driven by expectancy violations due to inflated preinteraction expectations of efficacy caused by the anthropomorphic chatbot. Angry customers respond more punitively to these expectancy violations than nonangry customers do.

The decision to anthropomorphize a chatbot is a deliberate and strategic choice made by the firm. The current research shows that this choice has a significant impact on key marketing outcomes for a substantial group of customers, one that has grown during the pandemic (Shanahan et al. 2020; Smith, Duffy, and Moxham-Hall 2020): specifically, those who are angry during the service encounter. As such, firms should attempt to gauge whether a customer is angry either before or early in the conversation (e.g., using NLP) and deploy a chatbot with an appropriate level of anthropomorphism, or none at all. A less precise solution would be to assign nonanthropomorphic chatbots to customer service roles that tend to involve angry customers (e.g., customer complaint centers) while continuing to employ anthropomorphic agents in more neutral or promotion-oriented settings (e.g., searches for product information), given their previously documented beneficial effects (Han 2021; Yen and Chiang 2021) and the current empirical evidence (i.e., Study 1, Web Appendix I). This strategic deployment of chatbots should help firms deliver better chatbot-mediated service experiences. Moreover, appropriate chatbot deployment can improve immediate customer satisfaction, company evaluations, and future purchase intentions (e.g., customer retention).
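The two deployment options above can be sketched as a simple routing rule. This is a minimal illustration under stated assumptions, not the authors' procedure: it assumes a hypothetical upstream NLP classifier that returns an anger score between 0 and 1, and the 0.5 threshold and queue names are placeholders.

```python
# Hypothetical persona-routing sketch. Assumptions (not from the source):
# an upstream NLP classifier supplies an anger score in [0, 1]; the 0.5
# threshold and the queue names below are illustrative placeholders.
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Persona(Enum):
    ANTHROPOMORPHIC = "anthropomorphic"          # name, avatar, humanlike style
    NON_ANTHROPOMORPHIC = "non_anthropomorphic"  # plain, machinelike presentation


# Hypothetical queues for service roles that tend to involve angry customers.
COMPLAINT_QUEUES = {"complaints", "billing_dispute", "service_outage"}
ANGER_THRESHOLD = 0.5  # hypothetical cutoff on the classifier's score


@dataclass
class Conversation:
    queue: str
    anger_score: Optional[float] = None  # None if no early text was scored


def choose_persona(conv: Conversation) -> Persona:
    """Pick a chatbot persona for one conversation."""
    if conv.anger_score is not None:
        # Per-customer rule: avoid anthropomorphism for customers flagged as angry.
        if conv.anger_score >= ANGER_THRESHOLD:
            return Persona.NON_ANTHROPOMORPHIC
        return Persona.ANTHROPOMORPHIC
    # Less precise, role-based fallback when no anger estimate exists.
    if conv.queue in COMPLAINT_QUEUES:
        return Persona.NON_ANTHROPOMORPHIC
    return Persona.ANTHROPOMORPHIC


if __name__ == "__main__":
    print(choose_persona(Conversation("product_info", anger_score=0.8)).value)
    print(choose_persona(Conversation("complaints")).value)
```

In this sketch, a per-customer anger estimate, when available, takes precedence over the role-based fallback, mirroring the text's ranking of the precise option above the less precise one.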

Given our finding that the negative effect of anthropomorphism for angry customers is driven by an expectancy violation due to inflated preinteraction efficacy expectations, another practical implication is that marketers should consider how they frame their customer service chatbots to customers. As our final study shows, if an anthropomorphic chatbot is deployed to angry customers, it is best to downplay its capabilities. Some companies seem to have intuited this, as illustrated by the aforementioned Slack bot example. Similarly, the Poncho weather app told people, “I’m good at talking about the weather. Other stuff, not so good” (Waddell 2017). Explicitly informing customers that they are conversing with an imperfect chatbot lowers the preinteraction efficacy expectations that anthropomorphic traits inflate. Yet this is not obvious to all companies; there are plenty of examples of chatbots that inadvertently increase preinteraction efficacy expectations. For example, Madi, Madison Reed's chatbot, is labeled a “genius” (Murdock 2016), and Tinka, T-Mobile's chatbot, is given an 18,456 IQ (Morgenthal 2017). Of course, Study 3 shows that meeting high expectations for service can also reduce the negative impact of anthropomorphism. Thus, by using these strategies, firms can serve both angry and nonangry customers well via AI technology.
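One way to operationalize "downplaying its capabilities" is to gate an explicit capability caveat on the chatbot's design and the customer's emotional state. The sketch below is hypothetical: the wording, the bot name used in the example ("Ava"), and the `is_angry` flag (assumed to come from an upstream anger classifier) are illustrative and are not taken from the studies.

```python
# Hypothetical opening-message builder. Assumptions (not from the source):
# the wording, the bot name passed in, and the `is_angry` flag (e.g., from
# an upstream anger classifier) are illustrative only.

CAPABILITY_CAVEAT = (
    " I'm an automated assistant, so my abilities are limited: "
    "I can handle simple account questions, but not much else."
)


def opening_message(bot_name: str, anthropomorphic: bool, is_angry: bool) -> str:
    """Build the bot's first message, downplaying its capabilities when an
    anthropomorphic bot greets a customer flagged as angry."""
    greeting = f"Hi, I'm {bot_name}." if anthropomorphic else "Automated support assistant."
    if anthropomorphic and is_angry:
        # Explicit caveat intended to lower preinteraction efficacy expectations.
        return greeting + CAPABILITY_CAVEAT
    return greeting


if __name__ == "__main__":
    print(opening_message("Ava", anthropomorphic=True, is_angry=True))
```

The caveat is added only in the anthropomorphic-and-angry case, the one condition where the studies report a negative effect, so other interactions keep their default greeting.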

Alternatively, firms could transfer angry customers directly to a live human agent, thus avoiding an anthropomorphic chatbot–based expectancy violation entirely. Yet this option incurs additional costs and assumes that the human agent has greater agency and efficacy. While it is plausible that human agents will deliver higher-quality service, in practice they face constraints that limit their effectiveness. Thus, future research might address how people respond to chatbots compared with humans. It would be interesting to explore whether the higher expectations of quality and agency held for human agents would be offset by social norms of polite interaction and compassion for others.

In addition, anger may not be the only relevant emotion to consider managerially or theoretically. While our data indicate that anger is of primary importance and is the most commonly identified emotion in service contexts, it is possible that with more sophisticated language processing tools, other emotions, different sources of those emotions, and social conventions could become relevant. For example, it could be that anger remains relevant in customer service contexts but that the source of the anger, such as whether it arises from a lack of procedural or interactional fairness, also impacts the success of anthropomorphic bots (Blodgett, Hill, and Tax 1997). Thus, it is important for future research to continue to investigate how the complexities of emotion, sources of emotion, and social norms interact to influence the effectiveness of anthropomorphic digital customer service agents.

It is worth noting that there might be a point in the future when the conversational performance of AI becomes sufficiently advanced and its implementation so commonplace that expectancy violations simply cease to be a concern. In this future, chatbots might be capable of greater freedom of action, in addition to performing intuitive and empathetic tasks (Huang and Rust 2018). In approaching such a point, the difference between the reactions of angry and nonangry customers would likely diminish until the groups are nondistinct, and anthropomorphism might cease to conditionally influence customer outcomes. However, this future does not appear to be imminent (Shridhar 2017).

In the short and medium term, therefore, as firms experiment with conversational agents in a variety of customer-facing roles, it remains important to consider the anthropomorphic traits of the chatbot, including simple features such as naming (e.g., “Alexa”), language style, and the degree of embodiment, along with the specific customer contexts in which the interactions are likely to occur. Specific contexts can vary from traditional corporations to government (e.g., the Australian government chatbot), law (e.g., the “DoNotPay” chatbot), and psychotherapy (e.g., the “Woebot” chatbot). Firms should decide, and future research should explore, which chatbot is most appropriate for a given interaction, based on the chatbot's characteristics and the specific context.

Altogether, chatbots deliver a multitude of benefits to businesses (e.g., scalability, cost reductions, control over the quality of interactions, additional customer data). As such, they will continue to be a valuable tool for marketers as the technology matures. Here, we have shown that the unconditional deployment of humanized chatbots can lead to negative marketing outcomes, from dissatisfaction to lowered purchase intentions. However, with careful and conscientious implementation that considers the customer's emotional state (e.g., anger), firms can reap the benefits of this burgeoning technology.

Supplemental Material

sj-pdf-1-jmx-10.1177_00222429211045687 - Supplemental material for Blame the Bot: Anthropomorphism and Anger in Customer–Chatbot Interactions

Supplemental material, sj-pdf-1-jmx-10.1177_00222429211045687 for Blame the Bot: Anthropomorphism and Anger in Customer–Chatbot Interactions by Cammy Crolic, Felipe Thomaz, Rhonda Hadi and Andrew T. Stephen in Journal of Marketing

Footnotes

Acknowledgments

The authors thank Vit Horky and NICE (formerly Brand Embassy) for their generous help with providing data reported in this article and Jean-Charles Ravon and Teradata for helping with emotion recognition. The authors also thank the audience at the Theory + Practice in Marketing conference and seminar participants at Monash University, the University of Denver, Cornell University, and the University of Warwick for their suggestions and comments.

Associate Editor

Praveen Kopalle

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Future of Marketing Initiative (FOMI) at Saïd Business School, University of Oxford.
