Abstract

We examined how generalized and mathematics-specific language skills predicted the word-problem performance of students with mathematics difficulty. Participants were 325 third-grade students in the southwestern United States who performed at or below the 25th percentile on a word-problem measure. We assessed generalized language skills in word reading, passage comprehension, and vocabulary knowledge. In addition, we measured mathematics-specific vocabulary knowledge. To explore variation within the mathematics-difficulty population, we utilized unconditional quantile regression to determine how each of these skill sets predicted word-problem performance when controlling for computation and emergent bilingual status. Results revealed that mathematics-vocabulary knowledge significantly predicted word-problem performance at all but two quantiles (p < .001), with strongest predictive relations at the highest quantiles. Passage comprehension had an overall significant relation to word-problem performance (p < .05) that was also reflected in multiple quantiles. Neither word-reading accuracy nor generalized-vocabulary knowledge demonstrated a significant predictive relation to word-problem performance. Given the consistent relation between mathematics-vocabulary knowledge and word-problem performance across quantiles, researchers and practitioners should prioritize evidence-based mathematics-vocabulary instruction to support students’ word-problem-solving skills.

Word-problem solving represents a major component of overall mathematics proficiency, and students are often asked to demonstrate their content knowledge through solving word problems on high-stakes assessments (National Governors Association Center for Best Practices, & Council of Chief State School Officers, 2010; Powell et al., 2019). Students not only rely on knowledge of mathematics content to solve word problems, but they also employ language-based skills to read, understand, and represent these problems (Fuchs et al., 2018; Kintsch & Greeno, 1985; Powell et al., 2019). When students encounter challenges solving word problems, both mathematics and language-based barriers may, therefore, be responsible. In this study, we examined the roles of language-based skills in word-problem solving among students with mathematics difficulty (MD). By better understanding the relations between specific language skills and word-problem solving, researchers can design more targeted interventions for students who require word-problem support.

Word-Problem Solving

Word-problem solving requires students to identify quantitative relationships within text, represent those relationships numerically, and solve for an unknown value (Fuchs et al., 2015; Kintsch & Greeno, 1985). Word-problem solving is a core component of mathematics proficiency, reflected by its presence in national mathematics standards throughout the elementary grades (National Governors Association Center for Best Practices, & Council of Chief State School Officers, 2010). Students encounter increasingly complex word problems as they advance through the early elementary grades, building from one-step problems involving addition and subtraction to multistep problems requiring addition and subtraction as well as multiplication and division.

Word-problem solving requires a combination of mathematics and language skill sets (Fuchs et al., 2018, 2020). Kintsch and Greeno (1985) characterized students’ word-problem process through an extension of the Construction-Integration Model (Kintsch, 2018). Consider the following problem: Joe had four marbles. Joe also had two pencils. Samira had two fewer marbles than Joe. How many marbles did Samira have? This problem can be solved as 4 − 2 = 2 marbles. This translation of the word problem into a solvable number sentence involves a step-by-step process. First, if the problem is presented in written form, students must be able to decode the words within the problem. Next, students apply several comprehension-related skills, each of which relies on an understanding of word-problem vocabulary and syntax. These include (a) identifying needed information (i.e., about marbles), (b) ignoring irrelevant information (i.e., about pencils), and (c) determining quantitative relationships within the problem (i.e., finding the difference between Joe’s four marbles and Samira’s marbles; Powell & Fuchs, 2018; Powell et al., 2019). Finally, once students have an accurate representation of the problem, they can apply their knowledge of operations and the number system to compute a numerical answer to the problem. Word-problem solving is thus a complex process that taps not only mathematics skills but also language skills such as word reading, passage comprehension, and vocabulary knowledge.

MD and Word-Problem Solving

Word-problem solving can be challenging for students due to its complexity, particularly for students with MD. We define students with MD as students whose mathematics performance falls below grade-level expectations (Geary et al., 2012; Powell et al., 2019). Difficulty with mathematics impacts a large proportion of students in the United States, as evidenced by only 36% of fourth-grade students demonstrating proficiency on the National Assessment of Educational Progress (National Center for Education Statistics, 2022). Proficiency rates are even lower for many subgroups of fourth-grade students, including students eligible for the National School Lunch Program (20%), students with disabilities (16%), and emergent bilinguals (14%).

Students who show limited mathematics proficiency experience varying degrees of MD (Cowan & Powell, 2014). Some students have relatively mild MD, as evidenced by their positive response to less intensive (e.g., small group, Tier 2) intervention (Dennis, 2015; Powell & Fuchs, 2015). Other students with MD have more significant challenges and require more intensive, individualized instruction. With this in mind, researchers regularly divide samples of children with MD into those with more and less severe difficulty (e.g., those performing below the 10th percentile versus those performing between the 10th and 25th percentiles; Cowan & Powell, 2014; Mazzocco et al., 2011; Nelson & Powell, 2018).

There are several reasons students with varying levels of MD experience challenges with word-problem solving. Students with limited fact and procedural fluency may struggle with fact and computation demands embedded in word-problem solving (Andersson, 2008; Geary, 2004). Students may also have difficulty developing problem representations and problem-solving plans due to language-based challenges (Fuchs et al., 2020; Powell et al., 2019). For example, students may struggle to comprehend a problem’s text structure, recognize the mathematics-specific meanings of vocabulary terms, distinguish relevant from irrelevant information, and determine the word-problem label (i.e., what the problem is asking about; Fuchs et al., 2015; Powell et al., 2019). Students with more significant MD may encounter substantial challenges with several of these component skills, while those with relatively mild MD may demonstrate proficiency with some word-problem skills but not others.

Word-problem difficulty can also be especially prevalent among emergent bilingual students when participating in instruction in their second language. In this study, we defined emergent bilingual students as those still developing fluency in the English language, as identified by a state-administered English proficiency test. Emergent bilinguals tend to perform lower on language-heavy tasks than their monolingual-English speaking peers when measured in their second language (Alt et al., 2014; Xu, Lafay, et al., 2022). Relatedly, research indicates a relation between (a) English language development in listening, speaking, reading, and writing and (b) word-problem performance for emergent bilinguals with MD (King & Powell, 2023).

Given the myriad difficulties students experience with the language demands of word-problem solving, we focused this study on how language skill sets predict varying levels of word-problem performance. Specifically, we examined the predictive relations between word-reading accuracy, passage comprehension, generalized-vocabulary knowledge, and mathematics-vocabulary knowledge and word-problem performance. Within this study, we controlled for student status as emergent bilingual because of research indicating that language-heavy tasks in mathematics, such as word-problem solving, can be especially challenging for emergent bilinguals (Alt et al., 2014; Xu, Lafay, et al., 2022).

Word-Problem-Solving Instruction

Evidence-based word-problem instruction can effectively support students with MD in the elementary grades (Myers et al., 2022). Oftentimes, this support includes explicitly teaching a step-by-step problem-solving process and incorporating schema instruction (Fuchs et al., 2021; Powell et al., 2021). Students with less substantial challenges in word-problem solving may benefit from receiving evidence-based instruction in a small group (e.g., Tier-2 context), while those with significant word-problem difficulty may require more intensive, individualized intervention (Powell & Fuchs, 2015). Previous research also indicates emergent bilingual students with MD can benefit from evidence-based word-problem instruction in either their home language or second language (Orosco, 2014; Sanford et al., 2020; Swanson et al., 2019). Cultural identity and backgrounds must also be addressed in word-problem-solving instruction, for example, by incorporating problems referencing relatable situations and characters (Abdulrahim & Orosco, 2020).

Language Predictors

In this section, we discuss previous research on the relations between word-problem performance and each of our language variables of interest: word-reading accuracy, passage comprehension, generalized-vocabulary knowledge, and mathematics-vocabulary knowledge. We define language skills as an umbrella term that includes reading skill, listening comprehension, and vocabulary knowledge.

Word-Reading Accuracy

To understand and solve a word problem, students must first be able to read the individual words within a given problem (Wong & Ho, 2017). We define word reading as a student’s ability to accurately read individual words (e.g., through decoding or automatic recognition). Word reading is a primary avenue through which students access a word problem; even when problems are initially read aloud to students, word-reading skill provides students with continued access to the word-problem text (Fuchs et al., 2006). This access allows students to refer to the text as they identify needed information, construct a problem representation, and solve the problem numerically (Fuchs et al., 2006; Kintsch & Greeno, 1985; Powell et al., 2019). Word reading, therefore, serves as a prerequisite skill set to successful word-problem performance.

Previous research has established a relation between word-reading difficulty and MD, such that co-occurrence of these two profiles is estimated to be several times more likely than expected by chance (Martin & Fuchs, 2022; Willcutt et al., 2019). Given this relation, as well as the foundational role of word reading in word-problem solving, one could expect that word-reading accuracy predicts word-problem performance. However, evidence of this relation is equivocal (Bjork & Bowyer-Crane, 2013; Fuchs et al., 2020; King & Powell, 2023; Wong & Ho, 2017). In a study of 6- and 7-year-old children in the United Kingdom, Bjork and Bowyer-Crane (2013) determined word-reading accuracy did not significantly predict word-problem performance. In a quantile analysis of second-grade students in the United States, Fuchs et al. (2020) learned that word-reading accuracy significantly predicted word-problem performance for only certain quantiles of students. Specifically, word reading tended to have stronger predictive utility for students with higher and lower word-problem performance than for students with average word-problem performance. King and Powell (2023) reported that reading level for third-grade emergent bilinguals with MD correlated with word-problem performance. In this article, we add to the research base on word reading and word-problem solving by focusing our analysis on third-grade students with varying degrees of MD.

Passage Comprehension

To solve word problems, students must be able to comprehend verbally presented problems containing quantitative information (Fuchs et al., 2015). Particularly when word problems are presented in written form, students must read and understand the problem text to solve the problem. In this study, we refer to passage comprehension as one’s ability to read and understand a written passage (Fuchs et al., 2015; Lin, 2021). Numerous researchers have examined the relation between students’ passage comprehension skills and their mathematics word-problem performance (Boonen et al., 2014; Daroczy et al., 2015; Fuchs et al., 2015; Kintsch & Greeno, 1985; Lin, 2021). Kintsch and Greeno (1985) posited that passage comprehension was necessary to build an accurate schematic representation of a word problem, thereby playing a foundational role in the word-problem-solving process. Fuchs et al. (2015) corroborated this notion in their study of second-grade students in which they administered several cognitive and academic measures to participants, including passage comprehension, word-problem-specific language comprehension, and word-problem solving. Fuchs et al. determined that word-problem solving is a form of passage comprehension that requires understanding of word-problem-specific language, which presents unique complexity that can cause difficulties for students with MD (Daroczy et al., 2015; Powell et al., 2019).

Despite the passage comprehension demands embedded in word-problem solving, previous research does not conclusively indicate that passage comprehension significantly predicts word-problem performance. Trakulphadetkrai et al. (2020) reported a significant correlation between passage comprehension and word-problem solving for 9- to 10-year-old emergent bilinguals. Yet in a study with sixth-grade students in the Netherlands, Boonen et al. (2014) administered a passage comprehension measure and a word-problem measure in which problems were read aloud and presented in written form. They determined passage comprehension significantly predicted word-problem performance at the test level but not at the item level. Finally, in a meta-analysis of word-problem predictors for elementary school students, Lin (2021) determined that passage comprehension did not significantly predict word-problem outcomes. In this study, we extend the literature on this topic by examining the extent to which passage comprehension differentially predicts word-problem outcomes across varying levels of MD severity.

Generalized Vocabulary

Students must understand the vocabulary within a word problem to solve the problem successfully, regardless of whether the word problem is read aloud or presented in written form (Daroczy et al., 2015; Powell et al., 2019). Word problems contain both generalized-vocabulary terms (e.g., car) and mathematics-vocabulary terms (e.g., perimeter; Monroe & Panchyshyn, 1995; Powell et al., 2019). In this article, we refer to generalized-vocabulary terms as terms that do not have a meaning specific to the context of mathematics. The word car is an example of a generalized-vocabulary term because it holds meaning in everyday language rather than specifically within mathematics. Although students encounter generalized-vocabulary terms outside of the context of mathematics, these terms also appear in word problems (e.g., There are 20 cars parked at the bookstore and 10 cars parked at the pharmacy. How many more cars are parked at the bookstore than at the pharmacy?).

Some generalized-vocabulary terms have multiple meanings. In fact, many of these terms have mathematics-specific definitions that differ from their definitions in everyday language (Powell et al., 2019; Rubenstein & Thompson, 2002). For instance, consider the word foot, which refers to a body part in everyday language but a unit of measurement within mathematics (Rubenstein & Thompson, 2002). Because the word foot has both generalized and mathematics-specific meanings, we consider it to be both a generalized- and mathematics-vocabulary term. Students must understand both general and context-specific meanings of vocabulary terms to solve word problems, which can create challenges for students with MD (Powell et al., 2019).

Although students must identify word meanings to solve word problems, previous research indicates mixed results regarding the relation between students’ generalized-vocabulary knowledge and their word-problem performance (Chow & Ekholm, 2019; Purpura & Ganley, 2014). In some studies, researchers have identified significant correlations between vocabulary knowledge and various mathematics domains, including word-problem solving for both monolingual-English speaking students and emergent bilingual students (Purpura & Ganley, 2014; Vukovic & Lesaux, 2013; Xu, Burr, et al., 2022). However, in other studies, researchers have determined a limited role of generalized-vocabulary knowledge in problem solving and mathematics in general (Chow & Ekholm, 2019; Purpura & Reid, 2016). For instance, Purpura and Reid (2016) highlighted that generalized vocabulary was not a significant predictor of early numeracy skills when mathematical language performance was also considered. In this study, we add to the literature by including both generalized and mathematics vocabulary in our examination of language-based predictors of word-problem outcomes.

Mathematics Vocabulary

When solving word problems, students often encounter vocabulary terms with meanings that are unique to the context of mathematics (Fuchs et al., 2015; Kintsch & Greeno, 1985; Powell et al., 2019). We consider these terms mathematics-vocabulary terms. Some mathematics-vocabulary terms, such as integer and quadrilateral, have only mathematics-specific meanings; in a model of mathematics-vocabulary categories, Monroe and Panchyshyn (1995) label these terms technical vocabulary terms. Other mathematics-vocabulary terms are subtechnical vocabulary terms because they have multiple meanings that are context-dependent (e.g., foot as a unit of measurement vs. foot on a person’s body, as previously discussed). Moreover, Monroe and Panchyshyn explain that mathematics vocabulary also includes symbolic vocabulary, such as exponent and fraction notation. Students must comprehend technical, subtechnical, and symbolic mathematics vocabulary to successfully solve word problems, regardless of whether problems are read aloud or presented in written form.

Previous research indicates a consistent relation between mathematics vocabulary and word-problem performance (Lin, 2021; Peng & Lin, 2019). In a study of fourth-grade students in China, Peng and Lin (2019) determined that mathematics vocabulary contributed to word-problem performance after controlling for generalized vocabulary, working memory, processing speed, and IQ. In a meta-analysis of word-problem predictors, Lin (2021) further investigated the role of mathematics vocabulary and determined that it significantly predicted word-problem outcomes for students in third through fifth grades. Among third-grade emergent bilinguals with MD, Powell et al. (2020) noted limited mathematics-vocabulary knowledge relative to monolingual-English speakers; this difficulty with mathematics vocabulary was especially pronounced among those who also exhibited challenges with word problems and equation solving. In this study, we build on the research base examining the relation between mathematics vocabulary and word-problem solving by focusing on how this relation manifests across third-grade students with varying degrees of MD.

Purpose and Research Question

Given that students rely on language skills to solve word problems, we explored the extent to which four language skill sets predicted word-problem performance: word-reading accuracy, passage comprehension, generalized-vocabulary knowledge, and mathematics-vocabulary knowledge. To specifically gain insight into language-based barriers to word-problem solving, we included only students with demonstrated word-problem difficulties in our study. The sample of students in this study was from a randomized controlled trial measuring the efficacy of a word-problem intervention for third-grade students with MD (Powell et al., 2021). This study is a secondary analysis of data collected at pretest (i.e., prior to randomization) during the randomized controlled trial.

We were especially interested in examining (a) if any significant heterogeneity of language skills existed within the spectrum of students with MD and (b) how such heterogeneity could contribute to differences in word-problem outcomes. This examination stemmed from research that has explored addressing student needs across varying degrees of MD (Dennis, 2015; Powell & Fuchs, 2015). Given the role of language in word-problem solving, we sought to investigate how language variables could differentially predict word-problem performance of students with varying degrees of MD. Recognizing the potential for differential predictive relations dependent on the severity of students’ MD, we assessed predictors using unconditional quantile regression (UQR). The following research question guided our investigation: Which language skills differentially predict the word-problem performance of students with varying degrees of MD?

Method

Context and Setting

Prior to administering measures to participants, we obtained approval from our university’s Institutional Review Board and our school district partner to conduct research in public schools. The school district in which we worked was a large urban district in the southwestern United States with a population of more than 80,000 students. Among all students in the district, 55.5% were Hispanic or Latine, 29.6% were White, 7.1% were African American, and 7.7% belonged to other racial or ethnic categories. In addition, 52.4% of students in the district were economically disadvantaged, 27.1% were emergent bilingual students, and 12.1% received special education services. The district’s graduation rate was 90.7%.

Participants

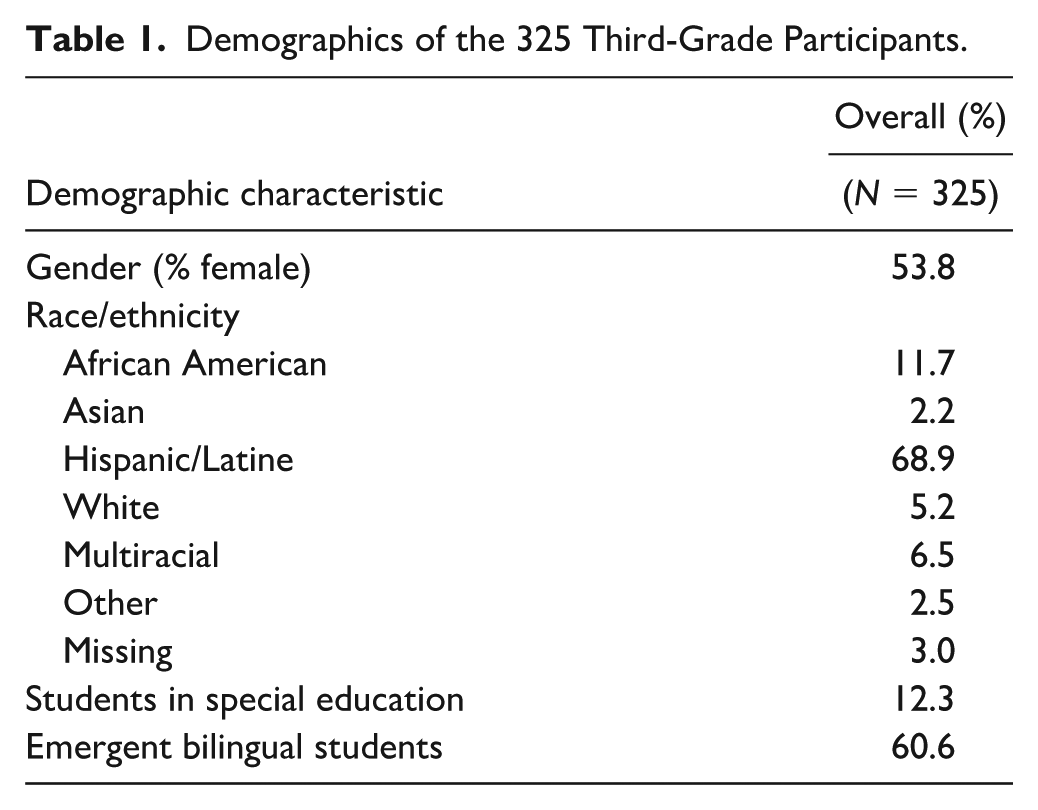

We recruited two cohorts of third-grade students for participation in this project. Across these two cohorts, we administered initial screening assessments to students at 13 schools in 103 third-grade classrooms. In total, we screened 1,734 third-grade students for MD. We identified 325 students with MD, based on performance at or below the 25th percentile on the Single-Digit Word Problems measure (Jordan & Hanich, 2000). We chose the 25th percentile as our cutoff because it is commonly used in research about students with MD (Geary et al., 2012; Nelson & Powell, 2018). Table 1 provides the demographics of the 325 students with MD.

Table 1. Demographics of the 325 Third-Grade Participants.

Research Team

Project staff administered all measures discussed in this study. All project staff were pursuing or had already obtained master’s or doctoral degrees in education-related fields. Across the two cohorts, 28 project staff members administered measures to participants. Of the project staff, 26 were female. Regarding project staff members’ racial backgrounds, 17 identified as White, 6 as Hispanic, 2 as African American, 2 as Asian American, and 1 as American Indian. In August and September of each school year, all project staff participated in three pretesting training sessions that lasted 3 hr per session, for a total of 9 hr of training.

Measures

To measure participants’ language and word-problem skills, we administered a combination of researcher-developed and standardized tests. Each measure is described in detail within this section.

Word-Reading Accuracy

To measure participants’ word-reading accuracy, we administered the Wide Range Achievement Test (WRAT-4) Word Reading (Wilkinson & Robertson, 2006). This measure assessed letter identification and word recognition on 55 items. Participants’ total scores reflected correct responses to both the letter identification items and word recognition items. Project staff terminated the test after a participant responded incorrectly to 10 consecutive items. Test-retest reliability of this measure was .86.

Passage Comprehension

We measured participants’ passage comprehension skills using the Woodcock-Johnson IV (WJ-IV) Passage Comprehension test (Schrank et al., 2014). On this measure, participants read short passages and identified missing keywords using the context provided in each passage. Project staff terminated the test after a participant provided six consecutive incorrect responses. Participants’ total scores reflected the number of correctly completed items. Test-retest reliability of this measure was .89.

Generalized Vocabulary

We administered the Wechsler Abbreviated Scale of Intelligence II (WASI-II) Vocabulary to evaluate participants’ generalized-vocabulary knowledge (Wechsler, 2011). This measure included three picture-based items and 22 verbal items. For the picture-based items, participants named the object presented in a picture. For the verbal items, participants defined words presented orally and visually. Project staff terminated the test after a participant provided five consecutive incorrect responses. The split-half reliability coefficient for this measure met or exceeded .90.

Mathematics Vocabulary

We measured participants’ mathematics-vocabulary knowledge using the Mathematics Vocabulary: Grade 3 assessment (Powell & Tran, 2016). This assessment is a revised and condensed version of a measure that was developed for use across Grades 3, 4, and 5 (Powell et al., 2017). Note that the multigrade measure was created in 2014 but published in 2017. The multigrade measure includes mathematics-vocabulary terms introduced in Grades 3, 4, and 5 throughout commonly used curricula in the United States: enVisionMATH, Everyday Mathematics, and GO Math!. In total, 133 terms were included in the multigrade measure.

When all terms introduced after Grade 3 were eliminated, 45 terms remained in the Mathematics Vocabulary: Grade 3 measure (Powell & Tran, 2016). All terms were included in Grade 3 glossaries, although some terms were introduced in prior grades (i.e., K, 1, or 2). Test questions included matching terms with pictures, drawing pictures to represent terms, and providing written responses. Participants had 12 min to answer as many questions as possible and received 1 point for each correct response. Cronbach’s α of the Mathematics Vocabulary: Grade 3 measure was .92. For additional information about this measure, see Powell et al. (2020).
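Because internal consistency is reported as Cronbach’s α for several measures in this section, a minimal sketch of how α is conventionally computed from item-level scores may help readers interpret those values. The function name `cronbach_alpha` and the small response matrix are hypothetical and introduced only for illustration; they are not the study data or the authors’ scoring code.

```python
import statistics


def cronbach_alpha(responses):
    """Cronbach's alpha for a students-by-items matrix of item scores.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores)
    """
    k = len(responses[0])  # number of items
    # Variance of each item across students (columns of the matrix).
    item_variances = [statistics.variance(col) for col in zip(*responses)]
    # Variance of each student's total score (row sums).
    total_variance = statistics.variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical responses: 5 students x 4 items, scored correct (1) or incorrect (0).
example_responses = [
    [1, 1, 1, 0],
    [1, 0, 1, 0],
    [0, 0, 1, 0],
    [1, 1, 1, 1],
    [0, 0, 0, 0],
]
alpha = cronbach_alpha(example_responses)
print(round(alpha, 3))
```

Values closer to 1 indicate that items covary strongly relative to their individual variances, which is the sense in which the coefficients reported for these measures indicate internal consistency.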

Word-Problem Solving

Three measures were administered to assess our outcome variable of interest, word-problem performance: Word Problems Test-Brief, Word Problems Test-Part 1, and Word Problems Test-Part 2 (Powell & Berry, 2015). Note that overlap was minimal between the researcher-developed mathematics-vocabulary measure and each of the word-problem measures. Word problems within all measures were intentionally designed to reflect a culturally inclusive set of situations and characters that could be relatable to students with a diverse range of backgrounds.

The Word Problems Test-Brief measure consisted of eight double-digit word problems: one total problem, three difference problems, and four change problems. Project staff read the problems aloud and gave students approximately 1 min to complete each problem. Upon request, project staff reread problems up to one time. Participants earned points for correct numerical and label responses, for a total of 16 possible points. Cronbach’s α for the Word Problems Test-Brief for the sample of students with MD was .83.

On the Word Problems Test-Part 1 measure (Powell & Berry, 2015), participants solved nine double-digit word problems: two total problems, one difference problem, four change problems, and two multischema problems. Two of these problems required interpretation of graphs. Participants earned points for correct numerical and label responses for a total of 18 possible points. Cronbach’s α for students with MD was .84.

The Word Problems Test-Part 2 measure (Powell & Berry, 2015) included nine double-digit word problems: two total problems, two difference problems, three change problems, one equal groups problem, and one multischema problem. One problem included irrelevant information, and three problems required interpretation of graphs. The total number of possible points on this measure was 18. Cronbach’s α for students with MD was .85.

Mathematics Computation

Mathematics computation served as a control variable in this study due to its relation with word-problem performance (Gilbert & Fuchs, 2017; Lin, 2021). To measure mathematics computation, we administered the Math Computation subtest of the WRAT-4 (Wilkinson & Robertson, 2006). This test consisted of 40 written computation problems that increased in difficulty. Students had 15 min to answer as many questions as possible and were awarded 1 point per correct answer. Students also received an additional 15 points for an oral arithmetic section; we did not administer this section to students because it was not necessary for our sample. The maximum possible score students could earn was thus 55. Cronbach’s α was .76.

Emergent Bilingual Status

In addition to mathematics computation, emergent bilingual status also served as a control variable in our analysis. To identify students as emergent bilinguals, we used teacher-provided data on students who completed the Texas English Language Proficiency Assessment System (Powell et al., 2022). This test is given annually to all students who are still developing fluency in the English language. The test measures listening, speaking, reading, and writing in English. Students who completed the test during the study were identified as emergent bilinguals in our analysis.

Fidelity of Assessment Implementation

Aside from the English proficiency test, all testing sessions were recorded. We randomly selected more than 20% of recorded sessions, which were evenly distributed across test administrators (i.e., project staff members). We assessed implementation fidelity within recorded testing sessions using a checklist. Average fidelity across sessions was 98.5% (standard deviation [SD] = 0.024).

Scoring

Two project staff members independently entered student responses to all items on all measures into an electronic database. Original scoring reliability was 99.9% across measures. The two project staff members and the project manager met to resolve all discrepancies. All participant responses were then converted to either correct (1) or incorrect (0) in the electronic database, ensuring full accuracy of scoring.

Data Analysis

We used unconditional quantile regression (UQR; Firpo et al., 2009) to explore whether the influence of mathematics language skills and generalized language skills on word-problem-solving performance varied for students with different levels of word-problem-solving ability when controlling for computation skill. Unconditional quantile regression is distinct from conditional quantile regression (Koenker & Hallock, 2001), in which the effects are conditional on the distribution of covariates. In contrast, UQR models the influence of predictors on the unconditional (marginal) quantiles of the outcome variable. In UQR, quantiles are defined prior to regression; therefore, the quantiles themselves are not influenced by any of the covariates in the model (Jiang & Yu, 2024).
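The contrast between the two approaches can be stated compactly. The notation below ($Y$ for the outcome, $X$ for the predictors, $\tau$ for the quantile level, $q_\tau$ for the marginal quantile, $f_Y$ for the marginal density, and the $\beta$ vectors) is introduced here for illustration only and follows the general formulation of Firpo et al. (2009) rather than notation from this article. Conditional quantile regression models a quantile of the outcome within levels of the covariates:

$$
Q_\tau(Y \mid X) = X^\top \beta^{\mathrm{CQR}}_\tau
$$

Unconditional quantile regression instead fixes $q_\tau$ from the marginal distribution of $Y$, with $F_Y(q_\tau) = \tau$, and regresses the recentered influence function (RIF) of that quantile on the covariates:

$$
\mathrm{RIF}(Y; q_\tau) = q_\tau + \frac{\tau - \mathbb{1}\{Y \le q_\tau\}}{f_Y(q_\tau)}, \qquad E\left[\mathrm{RIF}(Y; q_\tau) \mid X\right] = X^\top \beta^{\mathrm{UQR}}_\tau
$$

Because $E[\mathrm{RIF}(Y; q_\tau)] = q_\tau$, the coefficients in $\beta^{\mathrm{UQR}}_\tau$ can be read as describing how the marginal quantile $q_\tau$ would shift under a small change in the distribution of a predictor, assuming the linear specification holds.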

Unconditional quantile regression is particularly useful for investigating heterogeneous effects within a sample of students with MD. It provides a more comprehensive understanding of how predictor variables impact performance at various points along the achievement spectrum rather than solely focusing on mean outcomes even within a restricted range of word-problem-solving scores. This allows us to detect differential effects that might be obscured in traditional regression methods that focus on average relationships. Because more than 60% of our participants were identified as emergent bilingual, we also controlled for emergent bilingual status.

To implement the UQR approach, we chose the two-step UQR method introduced by Firpo et al. (2009), which consists of regressing a transformation of the outcome variable, the recentered influence function (RIF), on the explanatory variables. The first step was to create a binary variable relying on the RIF for each quantile of interest (here, .20, .30, .40, .50, .60, .70, .80, .90). We then fit ordinary least squares (OLS) regression models, replacing the original dependent variable with the RIF-based binary variable. This two-step process estimates the relationship between predictor variables and word-problem-solving performance and, more importantly, tests whether effects differ across the unconditional distribution of the dependent variable (Porter, 2015).
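The two-step procedure can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the study's Stata implementation. The data are simulated, the variable names (`math_vocab`, `computation`, `word_problems`) are hypothetical, and the density at the quantile is estimated with a Gaussian kernel and a rule-of-thumb bandwidth, which may differ from the defaults in rifhdreg. For the quantile q at level tau, the RIF of an observation y is q + (tau − 1[y ≤ q]) / f(q), where f(q) is the density of the outcome at q. The RIF therefore takes only two values, one for scores at or below q and one for scores above it.

```python
import math
import random
import statistics


def rif_quantile(y, tau):
    """Return (RIF values, sample quantile) for the tau-th quantile of y.

    RIF(y_i) = q + (tau - 1[y_i <= q]) / f(q), with f(q) estimated by a
    Gaussian kernel (rule-of-thumb bandwidth; an assumption of this sketch).
    """
    n = len(y)
    q = sorted(y)[max(0, math.ceil(tau * n) - 1)]  # empirical tau-th quantile
    h = 1.06 * statistics.stdev(y) * n ** (-0.2)   # rule-of-thumb bandwidth
    dens = sum(math.exp(-0.5 * ((v - q) / h) ** 2) for v in y)
    f_q = dens / (n * h * math.sqrt(2 * math.pi))  # kernel density at q
    return [q + (tau - (1.0 if v <= q else 0.0)) / f_q for v in y], q


def ols(X, y):
    """OLS coefficients from the normal equations (X'X) b = X'y.

    Each row of X must already contain a leading 1.0 for the intercept.
    """
    k = len(X[0])
    # Augmented matrix [X'X | X'y]
    A = [[sum(r[i] * r[j] for r in X) for j in range(k)]
         + [sum(r[i] * v for r, v in zip(X, y))] for i in range(k)]
    # Gauss-Jordan elimination with partial pivoting
    for c in range(k):
        p = max(range(c, k), key=lambda r: abs(A[r][c]))
        A[c], A[p] = A[p], A[c]
        for r in range(k):
            if r != c:
                m = A[r][c] / A[c][c]
                A[r] = [a - m * b for a, b in zip(A[r], A[c])]
    return [A[i][k] / A[i][i] for i in range(k)]


# Simulated (hypothetical) z-scored data, not the study's data
random.seed(0)
n = 300
math_vocab = [random.gauss(0, 1) for _ in range(n)]
computation = [random.gauss(0, 1) for _ in range(n)]
word_problems = [0.4 * m + 0.3 * c + random.gauss(0, 1)
                 for m, c in zip(math_vocab, computation)]
X = [[1.0, m, c] for m, c in zip(math_vocab, computation)]

for tau in (0.2, 0.5, 0.8):
    rif, q = rif_quantile(word_problems, tau)        # step 1: build the RIF
    intercept, b_vocab, b_comp = ols(X, rif)         # step 2: OLS on the RIF
    print(f"tau={tau:.1f}  q={q:6.2f}  vocab={b_vocab:5.2f}  comp={b_comp:5.2f}")
```

The sketch uses only the standard library so that it runs without additional packages. In applied work, a dedicated routine that also provides appropriate standard errors would be preferable.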

We evaluated three comparisons for each predictor: (a) the .20 versus .50 quantiles; (b) the .20 versus .80 quantiles; and (c) the .50 versus .80 quantiles. These quantiles were selected to represent the continuum of performance within our sample of students with MD. To ease interpretation, all variables were z-transformed prior to analysis. All analyses were conducted in Stata 15.1. The rifhdreg command (Rios-Avila, 2020) was used to create RIFs for the quantiles of interest.
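The logic of the three cross-quantile comparisons can be illustrated with a short Python sketch. The text does not state which test was used to compare coefficients, so the paired percentile bootstrap below is an assumption chosen for illustration. The data are simulated, the model has a single hypothetical predictor, and the function names (`zscore`, `rif_slope`, `bootstrap_diff`) are ours.

```python
import math
import random
import statistics


def zscore(values):
    """Standardize to mean 0 and SD 1 (sample SD)."""
    m, s = statistics.fmean(values), statistics.stdev(values)
    return [(v - m) / s for v in values]


def rif_slope(x, y, tau):
    """Slope from an OLS regression of RIF(y; q_tau) on one predictor x."""
    n = len(y)
    q = sorted(y)[max(0, math.ceil(tau * n) - 1)]   # empirical quantile
    h = 1.06 * statistics.stdev(y) * n ** (-0.2)    # rule-of-thumb bandwidth
    f_q = sum(math.exp(-0.5 * ((v - q) / h) ** 2) for v in y) / (
        n * h * math.sqrt(2 * math.pi))             # kernel density at q
    rif = [q + (tau - (1.0 if v <= q else 0.0)) / f_q for v in y]
    mx, mr = statistics.fmean(x), statistics.fmean(rif)
    sxy = sum((a - mx) * (r - mr) for a, r in zip(x, rif))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx


def bootstrap_diff(x, y, tau_lo, tau_hi, reps=200, seed=1):
    """Approximate 95% percentile interval for slope(tau_hi) - slope(tau_lo)."""
    rng = random.Random(seed)
    n = len(y)
    diffs = []
    for _ in range(reps):
        idx = [rng.randrange(n) for _ in range(n)]  # resample students
        xb, yb = [x[i] for i in idx], [y[i] for i in idx]
        diffs.append(rif_slope(xb, yb, tau_hi) - rif_slope(xb, yb, tau_lo))
    diffs.sort()
    return diffs[int(0.025 * reps)], diffs[int(0.975 * reps) - 1]


# Simulated (hypothetical) raw scores, z-transformed before analysis
random.seed(0)
raw_x = [random.gauss(10, 4) for _ in range(300)]
raw_y = [0.5 * a + random.gauss(0, 3) for a in raw_x]
x, y = zscore(raw_x), zscore(raw_y)

for tau_a, tau_b in ((0.2, 0.5), (0.2, 0.8), (0.5, 0.8)):
    lower, upper = bootstrap_diff(x, y, tau_a, tau_b)
    print(f"{tau_a:.1f} vs {tau_b:.1f}: 95% interval [{lower:.2f}, {upper:.2f}]")
```

An interval that contains zero gives no evidence that the slope differs between the two quantiles. Resampling students and refitting both quantiles on the same resample keeps the two estimates paired.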

Results

Heterogeneity of MD

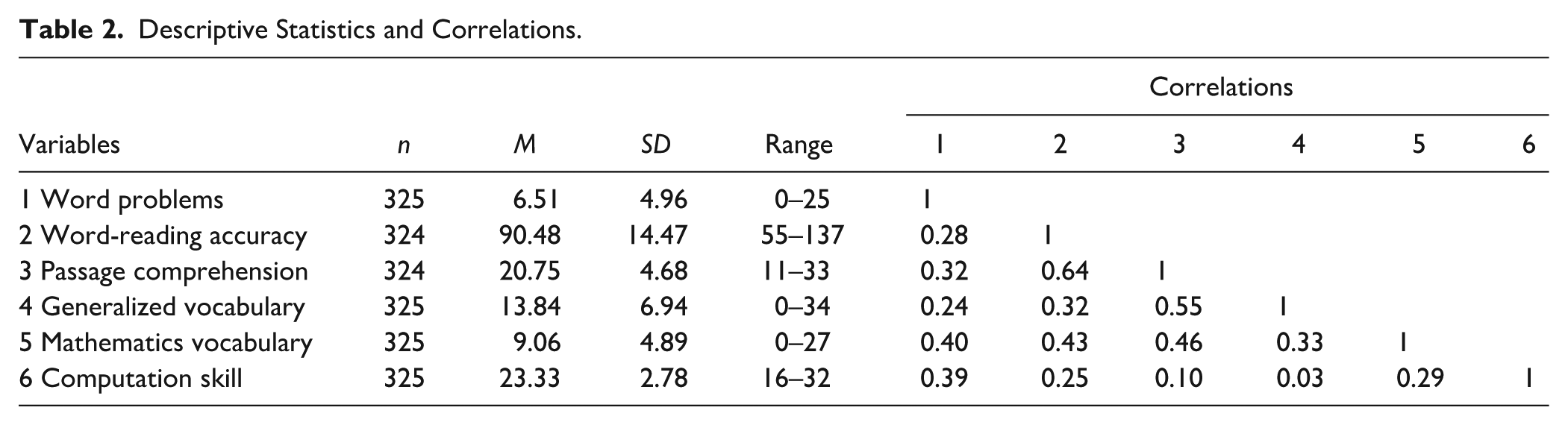

We first examined heterogeneity of our sample of students with MD. Table 2 includes results of this examination. On word-reading accuracy, scores ranged from 55 to 137 (M = 90.48, SD = 14.47). Scores on the passage comprehension measure ranged from 11 to 33 (M = 20.75, SD = 4.68). Scores on general vocabulary ranged from 0 to 34 (M = 13.84, SD = 6.94), and scores on mathematics vocabulary ranged from 0 to 28 (M = 9.06, SD = 4.89). Computation scores ranged from 16 to 32 (M = 23.33, SD = 2.78). Finally, word-problem scores ranged from 0 to 25 (M = 6.51, SD = 4.96).

Table 2. Descriptive Statistics and Correlations.

Predictors of Word-Problem Performance

Means, standard deviations, and bivariate correlations between the study variables are included in Table 2. Word-problem performance was moderately related to word-reading accuracy (r = .28), passage comprehension (r = .32), generalized vocabulary (r = .24), mathematics vocabulary (r = .40), and computation skill (r = .39). Passage comprehension proved to be strongly related to word-reading accuracy (r = .64) and generalized vocabulary (r = .55).

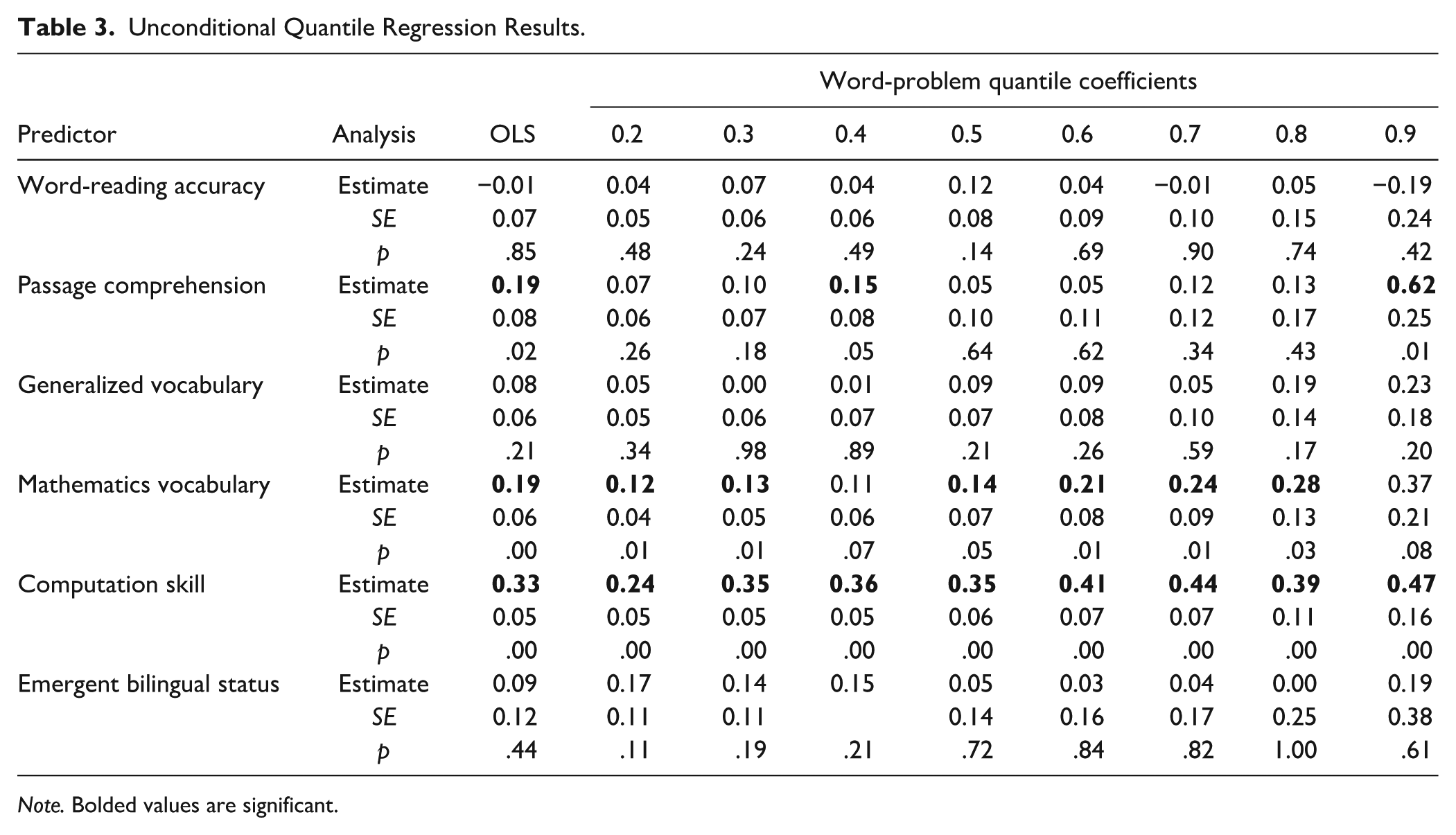

Results of OLS and UQR regression predicting students’ word-problem performance from generalized language skills and mathematics-vocabulary skill, controlling for computation skill and emergent bilingual status, are presented in Table 3. Results of the OLS regression indicated passage comprehension (β = 0.19, standard error [SE] = 0.08, p = .02), mathematics vocabulary (β = 0.19, SE = 0.06, p < .001), and computation skill (β = 0.33, SE = 0.05, p < .001) were significant predictors of word-problem performance. The overall model accounted for 27% of the variance, F(6, 307) = 19.40, p < .001.

Figure 1. Unconditional Quantile Regression Results.

Note. Bolded values are significant.

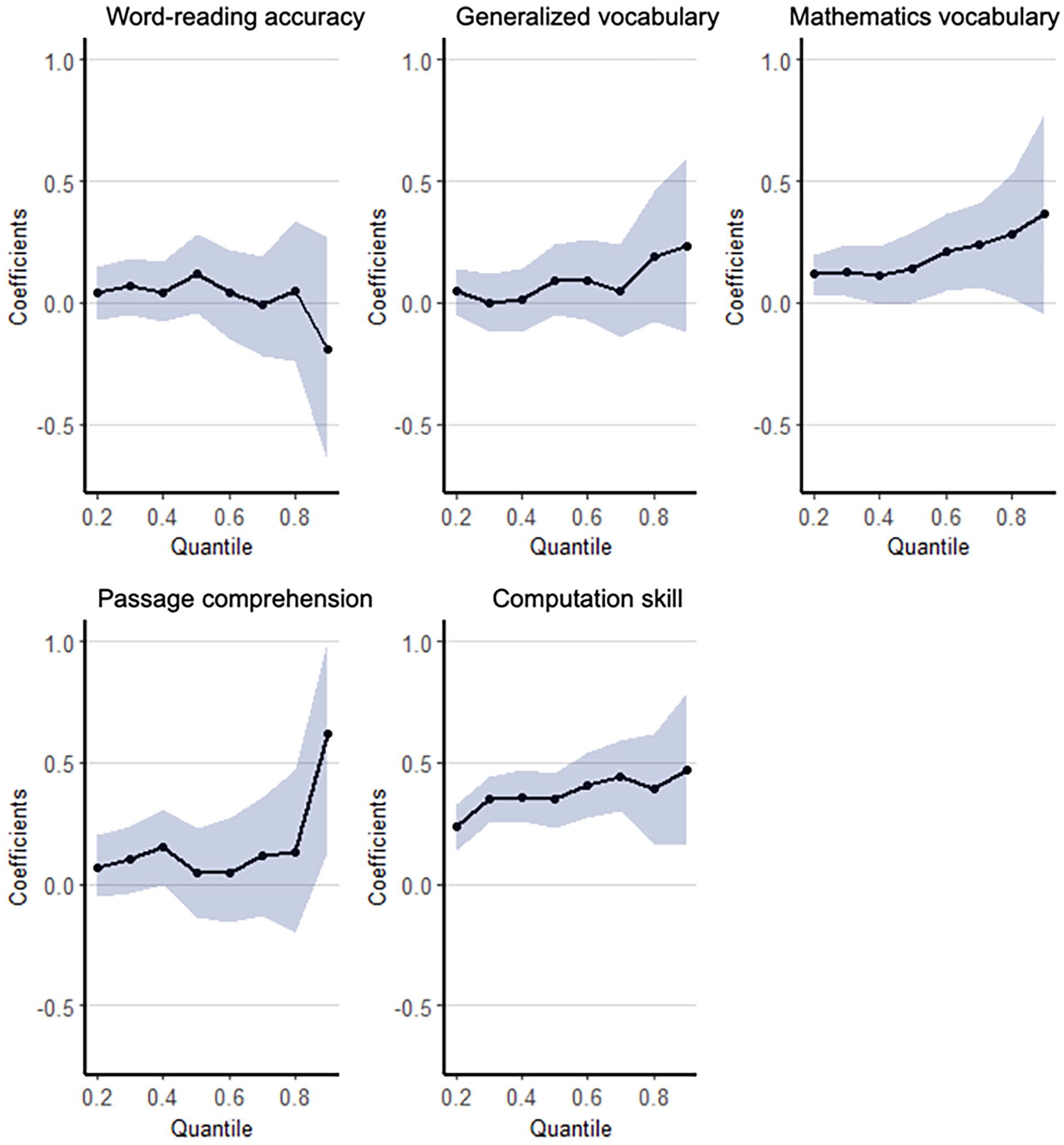

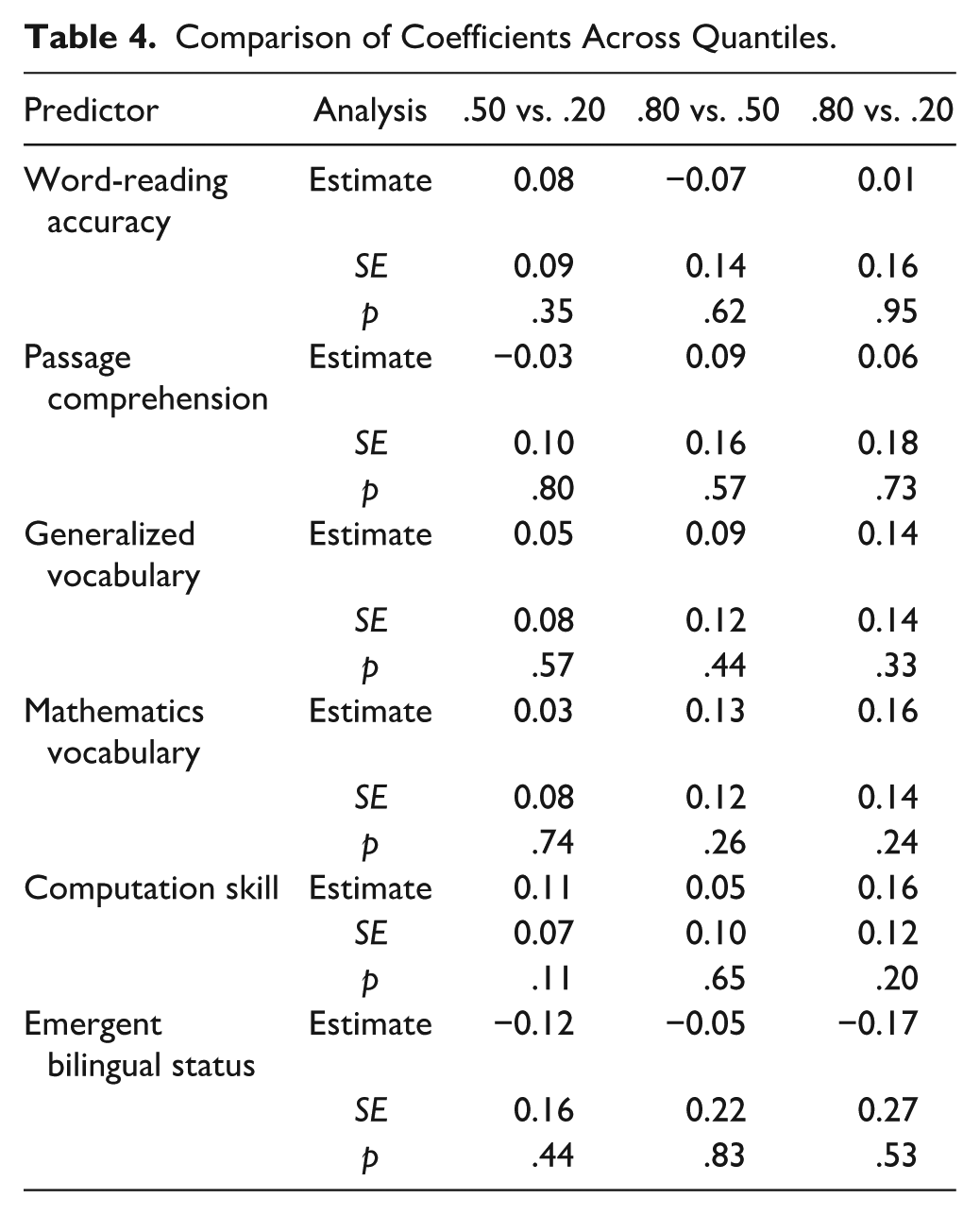

Results from the UQR analyses indicated mathematics vocabulary and computation skill were significantly associated with word-problem solving when controlling for the effect of other variables. This association was significant for computation skill across all quantiles, whereas for mathematics vocabulary, it was significant across all quantiles except the 40th (p = .07) and 90th (p = .08). The quantile-process plots in Figure 1 demonstrate that the unique predictive utility of mathematics vocabulary and computation skill for word-problem solving tends to increase from the 20th quantile (coefficient = 0.12 for mathematics vocabulary and 0.24 for computation skill) to the 90th quantile (coefficient = 0.37 for mathematics vocabulary and 0.47 for computation skill). Slope comparisons, however, revealed no significant differences across quantiles for either computation skill or mathematics vocabulary (refer to Table 4). Furthermore, controlling for the effects of other variables, passage comprehension was a significant predictor only at particular levels of word-problem performance: It was significantly related to word-problem solving at the .40 and .90 quantiles. Comparison between quantiles indicated passage comprehension’s predictive utility did not differ statistically across values of word-problem solving. Last, controlling for the effects of other predictors, word-reading accuracy and generalized vocabulary were not related to word-problem solving at any quantile.

Table 3. Unconditional Quantile Regression Coefficients for Predicting Word-Problem Performance.

Table 4. Comparison of Coefficients Across Quantiles.

Discussion

Solving word problems is a complex task that requires the successful integration of numeracy- and language-based skill sets (Fuchs et al., 2020; Kintsch & Greeno, 1985). In this study, we investigated the extent to which language skills differentially predicted word-problem performance among third-grade students with MD when controlling for computation skill and emergent bilingual status.

Language Predictors

Word-Reading Accuracy

Our analysis indicated word-reading accuracy did not predict word-problem performance of third-grade students with MD within any quantile. The results complement the studies of both Bjork and Bowyer-Crane (2013) and Wong and Ho (2017), which indicated no significant relation between word reading and word-problem performance among typically achieving students. Our results also partially corroborate those of Fuchs et al. (2020), who determined an inconsistent relation between word reading and word-problem solving (i.e., at only specific quantiles of performance). Although word reading is a prerequisite skill needed to access word problems presented in written form, it is possible that students rely more heavily on other language skills (e.g., passage comprehension) when they schematically represent and solve word problems (Kintsch & Greeno, 1985).

In addition, it is possible word-reading ability may differentially relate to word-problem performance depending on whether word problems are read aloud, presented in written form for students to read independently, or both. In this study, problems were presented in written form and read aloud; this was also the case in Fuchs et al.’s (2020) study and Wong and Ho’s (2017) study. More research is needed that isolates these format differences to provide insight into potential differential effects of word reading based on differences in test administration format, particularly for students with MD and emergent bilingual students.

Passage Comprehension

Our OLS analysis revealed a significant predictive relation between passage comprehension and word-problem performance (β = 0.19, SE = 0.08, p = .02). When examining these relations at individual quantiles, passage comprehension significantly predicted word-problem performance at the .40 and .90 quantiles. Interestingly, these were the two quantiles in which mathematics vocabulary did not significantly predict word-problem performance. That is, students at these particular quantiles did not follow the overall trends in our analysis and instead appeared to recruit generalized passage comprehension skills more so than mathematics-vocabulary skills to solve word problems. Our relatively small sample size may have contributed to this variation in results across quantiles.

Moreover, the inconsistent predictive relation between passage comprehension and word-problem solving complements some previous research results. For instance, in Boonen et al.’s (2014) study, passage comprehension was related to word-problem performance at the overall test level but not at the individual item level, and in Lin’s (2021) meta-analysis of word-problem predictors among elementary-age students, passage comprehension did not significantly predict word-problem performance. Additional research can further investigate the nuanced relations between passage comprehension and word-problem solving, especially using different administration formats for word-problem measures (i.e., problems only read aloud, only presented in written form, or both) and larger sample sizes.

Generalized Vocabulary

Generalized-vocabulary knowledge did not significantly predict word-problem performance at any quantile in our analysis. Initially, this result may seem surprising given the presence of generalized-vocabulary terms in word problems (e.g., words such as car, bookstore, and pharmacy in the following problem: “There are 20 cars parked at the bookstore and 10 cars parked at the pharmacy. How many more cars are parked at the bookstore than at the pharmacy?”). Moreover, researchers in some studies have determined significant relations between vocabulary knowledge and mathematics performance across domains, including word-problem solving (Purpura & Ganley, 2014; Vukovic & Lesaux, 2013). However, several other studies indicated this relation is nonsignificant (Chow & Ekholm, 2019), especially when mathematics language is included in the model (Purpura & Reid, 2016). The inclusion of a mathematics-vocabulary measure in our study may explain why generalized-vocabulary knowledge did not significantly predict word-problem performance in our analysis. Despite the presence of both generalized and mathematics-specific vocabulary in word problems, it is possible that only mathematics-vocabulary knowledge is significantly recruited in students’ problem-solving process (i.e., in the translation of a text-based problem to a solvable number sentence; Kintsch & Greeno, 1985). Future research should further investigate this pathway.

Mathematics Vocabulary

Our results indicated a predictive relation between mathematics vocabulary and word-problem performance across all but two quantiles. The relation between mathematics vocabulary and mathematics performance across domains, including word-problem solving, is substantiated in several other studies (Lin, 2021; Peng & Lin, 2019; Purpura & Reid, 2016). Moreover, within our analysis, the predictive utility of mathematics vocabulary tended to increase as students’ word-problem performance improved, although the differences between quantiles were not statistically significant (Table 4). This increasing trend may suggest that as students demonstrate more success with word-problem solving, they more actively rely upon mathematics-vocabulary knowledge to support their problem-solving process. Finally, when contrasted with the lack of predictive relation between generalized vocabulary and word-problem performance, mathematics vocabulary appears to be a unique predictor of word-problem solving that is distinct from generalized vocabulary (Purpura & Reid, 2016).

Implications for Research

Because our sample represented one school district, future research is warranted with larger samples and across other districts and geographic areas. In addition, researchers should further investigate language predictors of word-problem performance across students at multiple grade levels, students without MD, students with and without identified reading difficulty, and emergent bilingual students. Particularly given the substantial and increasing number of emergent bilingual students in the United States, it is critical to understand the nuances of how generalized and content-specific language skills shape problem-solving performance for this population (Powell et al., 2020). Large-scale analyses of differential relations between word-problem performance and varying levels of English proficiency can provide insight into these nuances. These insights can lead to increased breadth and depth of research-validated practices to support word-problem development across diverse student populations.

In addition to assessing the generalizability of our results, future research should focus on further development of mathematics-vocabulary measures. In particular, there is a need for standardized, grade-level-specific measures in this area. Given that researcher-developed measures have indicated relations between mathematics vocabulary and mathematics performance (Lin, 2021; Peng & Lin, 2019; Powell & Nelson, 2017; Purpura & Reid, 2016), it will be important to determine whether these results are substantiated across specific grade levels using standardized measures.

Implications for Practice

Given the relation between mathematics vocabulary and word-problem performance, practitioners should consider prioritizing mathematics-vocabulary instruction. Those with more severe MD may benefit from explicit instruction on applying mathematics-vocabulary knowledge to word-problem solving so they can successfully develop and recruit this skill set. Consistent with findings from intervention research with students with severe MD, this instruction should be provided within the context of intensive intervention that emphasizes individualization and frequent opportunities for student engagement (Dennis, 2015; Fuchs et al., 2017; Powell & Fuchs, 2015). Recommended practices in mathematics-vocabulary intervention include multiple exposures to vocabulary terms, use of visual and concrete representations, and schematic maps (Lariviere et al., 2024; Stevens et al., 2023). Although research on mathematics-vocabulary instruction is relatively sparse, these practices are also validated in the more established research base on general-vocabulary instruction (Marulis & Neuman, 2010; Marzano, 2004; Taylor et al., 2009).

In addition, practitioners should consider providing opportunities to build reading-comprehension skill within the context of mathematics word problems. Utilizing schema instruction and attack strategies (e.g., mnemonics that outline a problem-solving approach) can support students to comprehend the quantitative relationships within text-based problems (Powell & Fuchs, 2018). These tools can also help students distinguish relevant from irrelevant information in word problems (Powell et al., 2019; Powell & Fuchs, 2018).

Limitations

This study has several limitations to consider, the first of which centers on measurement. Each language construct included in the study was represented by a single measure. Utilizing multiple measures to capture each language construct would have provided more robust conclusions. In addition, because our word-problem measure was both read aloud to students and presented in written form for students to read themselves, we were unable to ascertain the extent to which students relied on each presentation format in their problem-solving process. Moreover, both the mathematics-vocabulary and word-problem measures were researcher-developed. Because we determined a consistent predictive relation between these skill sets, it would be helpful to assess the generalizability of our results using standardized measures.

In addition, the relatively small sample size of our study prevented us from examining how varying degrees of English proficiency among emergent bilingual students related to word-problem performance. We partially accounted for this limitation by controlling for emergent bilingual status. As noted in the implications for research, future large-scale studies can investigate these variations.

Finally, our full model, which included the four language predictors along with computation skill and emergent bilingual status, accounted for 27% of the variance in word-problem performance among third-grade students with MD. The majority of variance was, therefore, unexplained by our investigation and could perhaps be accounted for by a combination of cognitive, numeracy, and other language skills. For instance, Chow and Ekholm (2019) determined that receptive syntax, a language skill we did not include in our model, predicted mathematics performance among first- and second-grade students.

Conclusion

In this study, we determined mathematics vocabulary significantly predicts word-problem performance among third-grade students with MD at all but two quantiles. In addition, the predictive relation between mathematics vocabulary and word-problem performance tends to increase as students’ word-problem performance improves, although differences across quantiles were not statistically significant. Although there was a limited predictive relation between passage comprehension and word-problem solving, neither word-reading accuracy nor generalized-vocabulary knowledge predicted word-problem performance at any quantile. These results underscore the importance of developing and utilizing evidence-based practices to provide mathematics-specific vocabulary instruction to students with MD.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This research was supported in part by Grant R324A150078 from the Institute of Education Sciences in the U.S. Department of Education to the University of Texas at Austin. The content is solely the responsibility of the authors and does not necessarily represent the official views of the U.S. Department of Education.