Abstract

Cross-cultural research in social and behavioral sciences has expanded hugely over the past 50 years, but progress is currently hampered by a lack of appreciation of the profoundly differing principles and goals of two distinct traditions. The first is the main variant of cross-cultural psychology (CCP), focusing on how culture shapes individual psychological functioning. The second was pioneered by Hofstede. It studies societal differences, and we name it “comparative culturology” (CC). We explain how these two paradigms differ. CCP is grounded in psychology and typically looks for unobservable individual-level constructs, which supposedly exist independently of their measurement, to provide understanding of individual differences as affected by culture. CC is an interdisciplinary field whose roots and impact span sociology, anthropology, political science, economics, management studies, psychology, and beyond. CC measures cultural dimensions as group-level constructs created by researchers, which are best understood as ecological manifolds: conglomerates of conceptually and statistically associated variables (not necessarily held together by a single underlying factor) that collectively explain national (and other group) differences. Given these paradigmatic distinctions, the two fields need not, and cannot, use the same validation methods. They should co-exist and collaborate based on mutual appreciation of their differences, without attempts by either field to impose its idiosyncrasies on the other.

“Render to Caesar the things that are Caesar’s, and to God the things that are God’s.”

“Culturology is not cross-cultural psychology, though culturology’s findings are of crucial importance for improving the conceptual heft of studies in cross-cultural psychology.”

Introduction

How can one explain the obvious differences in skin color between human populations that have lived close to the equator for thousands of years and those at high latitudes? Researchers who are interested in causes within individuals might compare samples from both locations and discover different genetic variants that determine skin color via the agency of group genetic adaptation. Other researchers may be more interested in the ecological conditions that determine pigmentation. Their answer might be that darker skin provides protection against ultraviolet radiation, which is stronger around the equator, whereas lighter skin facilitates vitamin D synthesis in conditions of reduced sunlight at higher latitudes (Barsch, 2003). Although these two groups of researchers have approached the same question with very different methods and provided very different answers, neither of them is wrong.

Evidently, different scientific disciplines can study and explain the same reality differently, yet without necessarily contradicting each other. Unfortunately, this logic is not always appreciated. For example, an intense debate has flared up in recent years between proponents of measurement invariance testing (i.e., analyzing the similarity of individual-level factor structures across societies) in cross-cultural research (Fischer et al., 2023; Meuleman et al., 2023; Sokolov, 2018) and the detractors of such testing (Welzel et al., 2021, 2022). The former academic camp claims that invariance testing is necessary whenever a research instrument is administered to samples from different cultures, regardless of the level of analysis (individual or ecological) and the goal of the analysis (comparisons of individuals or comparisons of ecological units). The latter camp rejects this view. We believe that, before addressing a specific question of this type, one needs to discuss a more fundamental issue: the differences between the goals and nature of two academic fields. While, in a very broad understanding of the term “cross-cultural psychology” (CCP), both fields can be placed under that umbrella, the fact that their main interests and methods may diverge quite substantially justifies a distinction (Smith & Bond, 2022).

Thus, in this paper, we compare two fields. The first is the core, or classic, branch of CCP that compares individuals from different cultures on psychological constructs operationalized at the individual level. These constructs arguably can be classified under the broad umbrella of “personality,” including not only traits (also known as “basic tendencies”) but also “characteristic adaptations,” referring to self-esteem, values, attitudes, and more (McAdams & Pals, 2006; McCrae & Costa, 2008). The second, pioneered by Geert Hofstede (1980) and developed subsequently by Ronald Inglehart, Shalom Schwartz, Michael Bond, Christian Welzel, Michael Minkov, and other authors, compares whole societies on dimensions of culture usually obtained from aggregated item scores taken from individuals’ self-reports from those cultures. The similarity of item content used in both fields is a major source of misunderstandings concerning the differences between the two.

Hofstede’s field still does not have an official name. It has been called “culturology” (Minkov, 2013) and “nationology” (Akaliyski et al., 2021). However, these terms are too imprecise to capture accurately the scope of this branch of social science. Therefore, we suggest “comparative culturology” (CC): simultaneous comparisons of multiple modern societies (nations or other cultural entities) through dimensions of culture. CC differs from “culturology,” which usually focuses on single cultures, and from Murdock and White’s (1969) classic cross-cultural anthropology, which studies the cultures of pre-industrial ethnic groups, where many modern societal indicators (for instance, corruption, road death tolls, or educational achievement) are inapplicable.

The largest-scale CC projects that have produced dimensional models of culture are, in chronological order, those by Hofstede (1980, 2001), Schwartz (1994, 2008), Inglehart and Baker (2000), House et al. (2004), Inglehart and Welzel (2005), Welzel (2013), Minkov (2018), and Beugelsdijk and Welzel (2018). Smaller projects, such as the one led by Michael Bond (Chinese Culture Connection, 1987), have also yielded valuable insights into the dimensionality of national culture and its consequences. Although Gelfand et al. (2011) proposed only one dimension of culture, tightness-looseness, it has enjoyed great popularity.

Although the findings of CC have been used in diverse disciplines, they have been especially influential in CCP and cross-cultural management. According to Smith and Bond (2022), Hofstede’s pioneering work gave particular emphasis to the comparative focus of CCP, whereas Peterson (2003) noted that Hofstede’s first book shaped the basic themes, structures, and controversies of cross-cultural studies for over 20 years. The work of Ronald Inglehart, as well as of Shalom Schwartz, has also enjoyed great popularity across disciplines, as it demonstrates how cultural values shape the trajectories that societies follow.

Despite the influence of CC on CCP, we have observed in recent years an increasing tension between current proponents of these two fields, especially regarding questions of measurement. Certain followers of the CCP tradition have claimed that, as CC often uses the same type of data as CCP, viz., responses to questionnaire items provided by individuals, CC must treat its data in the same way as CCP (Fischer et al., 2023; Meuleman et al., 2023; Sokolov, 2018). This claim ignores the obvious fact that the same material can be used for very different research purposes. Historians, for example, would use a medieval manuscript to learn something about political developments in the society where it was written, whereas linguists would use it to find out how a particular language was used in the past. The goals of the two fields require different methods despite using the same data source. An interdisciplinary approach is also possible, and sometimes desirable, but it is not mandatory.

As human cultures are not simply mechanistic accumulations of human minds but products of the interactions of those minds, CCP and CC must realize that they operate in different domains. They can certainly collaborate, but they should not try to replace or subsume each other’s constructs, theories, and methods. Failure to appreciate that difference would be similar to claiming that because humans consist of cells, psychologists cannot properly understand humans without also being cytologists and using their research methods, whereas cytologists need training in quantum mechanics and must use the methods of that science, because all cells consist of atoms.

Structure of the Present Article

As proponents of the CC paradigm with varying relationships to CCP, we first explain how we see the difference between these two fields, focusing on their respective goals, as well as the forms and structures of data that each has available. Then, we explain what these differences imply for the two fields’ philosophical assumptions and for their methods. We explain the constructs of CC and compare them to those of CCP, partly drawing on Coltman et al.’s (2008) distinction between “reflective” and “formative” measurement models (see also Diamantopoulos et al., 2008). We believe that misunderstandings between CCP and CC stem in part from a lack of mutual appreciation of how the two fields position their constructs within that framework. However, we believe that a categorical distinction between reflective and formative paradigms is neither possible nor helpful here: both have their weaknesses, as they oversimplify a complex reality (VanderWeele, 2022).

As individualism-collectivism (IDV-COLL) is the best-known and perhaps most important dimension of national culture (Beugelsdijk & Welzel, 2018; Smith et al., 1996), we use examples related to this dimension throughout this paper.

Goals and Practical Constraints for Research in Cross-Cultural Psychology and Comparative Culturology

To help explain why researchers in CC and in mainstream CCP tend to define and measure their constructs in different ways, three differences between these fields are especially important: (1) Mainstream CCP treats individuals living in diverse societies or cultural communities as its focal objects of study, whereas CC treats societies or cultural communities that individuals inhabit as its main interest. (2) Mainstream CCP has traditionally placed greater emphasis on developing basic theories prior to application, whereas CC has traditionally given more emphasis to practical utility as a basis for developing its theories. (3) Researchers in mainstream CCP typically have at their disposal relatively few variables collected from many individuals, whereas researchers in CC typically have at their disposal many variables collected from few societies.

Individuals Versus Societies as Focal Objects of Study

As a sub-field of general psychology, the main focus of CCP is on comparing the minds and behaviors of individuals from culturally different societies. According to Greenfield (2000), “the methodological ideal of the paradigmatic cross-cultural psychologist is to carry a procedure established in one culture, with known psychometric properties, to one or more other cultures, to make a cross-cultural comparison” (p. 224). From this perspective, CCP’s “methodological ideal” refers to comparisons of individual-level patterns. The definitions of CCP cited by Berry et al. (1992) also indicate that CCP is the study of “subjects from two or more cultures” and “members of various culture groups” (p. 1).

In contrast, the work of Hofstede (1980, 2001), and his successors, suggests that CC is interested in individuals primarily to the extent that it is possible to extract information from them that can be used to understand whole cultural units, such as nations, national regions, and ethnic groups. As Hofstede (2001) famously wrote, “cultures are not king-size individuals. They are wholes, and their internal logic cannot be understood in the terms used for the personality dynamics of individuals. Eco-logic differs from individual logic” (p. 17).

To understand this distinction better, it may be helpful to compare the way that CCP and CC would treat the same set of respondents’ statements from multiple societies. A psychologist might be most interested in whether individuals who agree with statement S1 also agree with statement S2, but not S3, and whether the S1xS2 correlation justifies the conclusion that there is an underlying individual-level factor behind S1 and S2 that causes agreement with those statements and, in addition, predicts behavior B1. CCP might employ the same methodology and go a step further: it would test how similar these associations are in diverse societies. CC would use a very different approach, however. It would not necessarily search for any patterns at the individual level, but would address a different question: If a society has a relatively high percentage of respondents who agree with statement S1, does it also have a relatively high percentage of respondents who agree with statement S2, even if those two groups of respondents do not necessarily consist of the same persons? And how is variation among societies in these percentages linked to variation in relevant outcomes?

The logic of studying phenomena that are related at the societal level, but not necessarily at the individual level, may seem puzzling to those who are not used to ecological analyses. Yet, it is relatively simple: various societal outcomes can be explained in terms of aggregate scores of variables that are not correlated at the individual level. For instance, the values of humility and power are correlated positively at the national level and are both found within the “hierarchy” domain in Schwartz’s (2008) model of culture-level value priorities, although these values are unrelated at the individual level (Schwartz & Sagiv, 1995). This means that humility and power cohabit within a particular society: the more important it is for the powerful to maintain their power, the more likely they will be to instill humility and submission in the rest of the population, and they are more likely to be successful if their subjects are dependent on them. However, there is apparently no individual-level mechanism that associates power-seeking or social dominance orientation with humility.

Another example is available from Minkov et al. (2017) and Minkov et al. (2023). They found that conformism and social ascendancy (status-seeking) are two different dimensions at the individual level. Nevertheless, they are strongly associated at the national level. Therefore, they could be regarded as parts of the same construct: IDV-COLL. In that case, testing whether the nation-level construct is culturally invariant at the individual level would be illogical. It would be like expecting that the structure of a molecule should be replicated at the level of atoms or vice versa, simply because both molecules and atoms contain protons, neutrons, and electrons.

There are numerous examples of behavioral variables that are correlated at the national level but not at the individual level. Yet, the nation-level correlations can yield constructs that have strong predictive properties and are consistent with sound theory. For instance, Minkov and Beaver (2016) show that adolescent fertility and paternal absenteeism are correlated at the national level and yield a construct that can be explained in terms of life history strategy theory, predicting criminal violence rates across 51 nations. Adolescent fertility and paternal absenteeism measures cannot yield any pattern across individuals, as the former is a characteristic of women, whereas the latter is a characteristic of men. This example illustrates the inherent distinction between the ecological and individual levels of analysis.

Certain researchers who follow the CCP tradition sometimes argue that as societies consist of individuals, and CC collects its data from them, societal-level patterns must be validated through replication at the individual level in each society under study. This is what invariance testing is designed to address. For instance, in his criticism of the view that nation-level analyses do not require individual-level invariance testing, Sokolov (2018) states that values are important determinants of the individual actions that trigger political change, which, in his view, means that individual values matter. This position ignores the fact that what matters most for political change, or any other culture-related societal outcome, is usually not how values are combined within an individual but to what extent each particular value prevails in a society.

We provide an example from the latest (seventh) wave of the World Values Survey (www.worldvaluessurvey.org). It contains a section where the respondents select values or traits that they believe it is important to teach to their children. Two of these are “thrift” (Q13P) and “determination and perseverance” (Q14P). The Chinese Culture Connection (1987) found that when these are measured as personal values, they are correlated at the national level, forming the backbone of an important dimension of national culture, originally called “Confucian Work Dynamism,” which Hofstede (2001) later renamed “long-term orientation,” incorporating it in his model as a fifth dimension of national culture. Indeed, the World Values Survey dataset confirms the positive relationship between these two variables at the national level: r = .56 (p < .0001, n = 64 countries). Note that differences in response style cannot explain this fairly strong correlation, as the respondents freely choose items from a list, rather than rating them on a scale. Thus, a country that scores high on either of the two variables is likely to score high on the other one as well, and this ecological combination will result in a culture of self-discipline and orientation toward self-improvement activities, such as education, that pay off in the somewhat distant future (Hofstede, 2001).

However, the same two variables are associated negatively in the United States (r = −.106, p < .0001, n = 2,592 individuals) and in China (r = −.143, p < .0001, n = 3,022 individuals). This means that the ecological concept of a “long-term oriented” culture, characterized by a combination of thrift and determination, has no individual-level equivalent in some of the world’s most populous countries. Obviously, testing for cross-cultural measurement invariance of individual differences in a construct that does not exist at the individual level is a meaningless exercise.
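The sign reversal between the two levels can be reproduced with a deliberately simple sketch. The counts below are hypothetical and are not World Values Survey data: four fictitious countries are constructed so that no respondent selects both items, which makes every within-country correlation negative, while the national shares of selectors rise together. The names `pearson`, `make_country`, and the country labels are assumptions introduced only for illustration.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def make_country(n_only_first, n_only_second, n_neither):
    """Build hypothetical respondents as (item1, item2) pairs of 0/1 choices.

    By construction nobody selects both items, so the two groups of
    selectors never consist of the same persons.
    """
    return ([(1, 0)] * n_only_first
            + [(0, 1)] * n_only_second
            + [(0, 0)] * n_neither)


# Hypothetical counts per 100 respondents; NOT real survey data.
countries = {
    "A": make_country(10, 20, 70),
    "B": make_country(20, 30, 50),
    "C": make_country(30, 40, 30),
    "D": make_country(40, 50, 10),
}

# Individual level: correlation between the two choices within each country.
within = {
    name: pearson([r[0] for r in rows], [r[1] for r in rows])
    for name, rows in countries.items()
}

# Ecological level: correlation between the national shares of selectors.
share_1 = [sum(r[0] for r in rows) / len(rows) for rows in countries.values()]
share_2 = [sum(r[1] for r in rows) / len(rows) for rows in countries.values()]
between = pearson(share_1, share_2)

for name, r in within.items():
    print(f"country {name}: within-country r = {r:.3f}")
print(f"across countries: r of national shares = {between:.3f}")
```

In this constructed case the ecological correlation is positive although every individual-level correlation is negative, which is the same qualitative pattern as the one reported above for thrift and perseverance.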

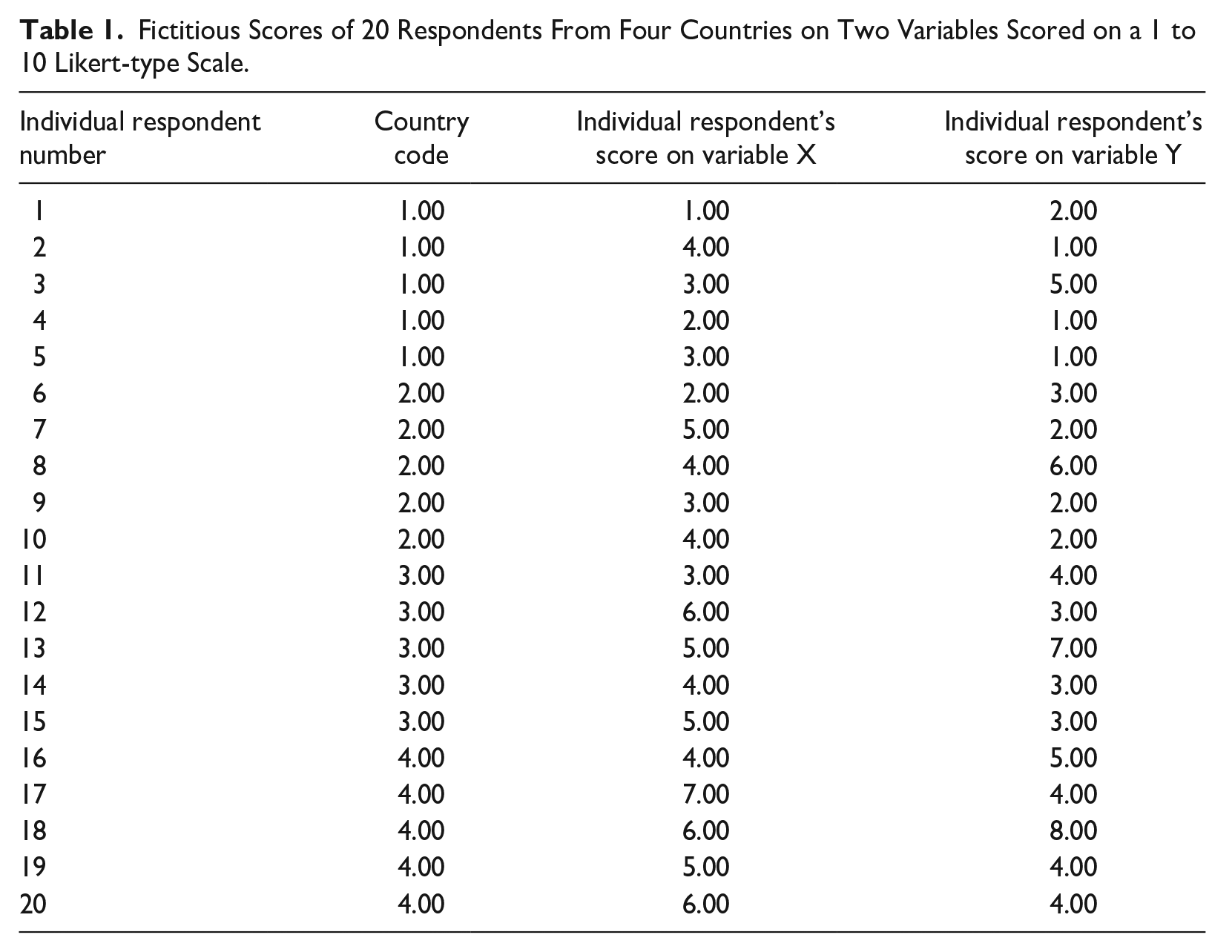

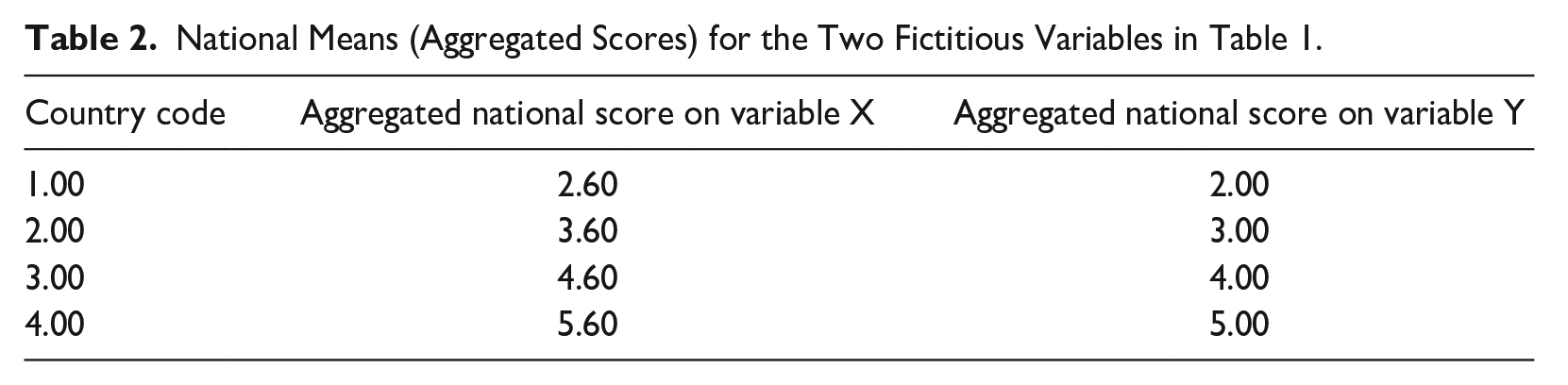

As an extreme illustration, note that it is also mathematically possible (even if realistically impossible) for a correlation to be exactly .00 at the individual level and exactly 1.00 at the ecological (national) level. Table 1 shows fictitious scores for 20 respondents from four countries on two variables: X and Y. Within each of the four countries, across the country’s five respondents, variables X and Y correlate at exactly 0.00, with p = 1.00. After aggregation by nation (calculation of each nation’s mean score on each of the two variables), we obtain the national scores in Table 2. Now, across the four countries, variables X and Y correlate at exactly 1.00, with p = exactly 0.00.

Table 1. Fictitious Scores of 20 Respondents From Four Countries on Two Variables Scored on a 1 to 10 Likert-type Scale.

Table 2. National Means (Aggregated Scores) for the Two Fictitious Variables in Table 1.

The two types of correlations are illustrated in Figure 1, where each respondent is represented by a dot, and the respondents of each country are colored differently and connected to the country mean points.

How is this possible? Within each country, there is presumably no underlying dimension of individual differences. However, we notice that each country’s scores on both variables fall within a particular band, and each next band (for each next country) is positioned higher than the previous: 1 to 5, 2 to 6, 3 to 7, and 4 to 8. As each country’s two mean scores are compressed into a specific and relatively narrow band, they are bound to be similar, hence the high (actually perfect) correlation between them.
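The banding argument can be checked numerically. The sketch below does not reproduce the scores in Table 1; it uses a hypothetical set of scores built on the same principle: one five-respondent pattern with zero correlation is shifted upward by one point per country, giving the bands 1 to 5, 2 to 6, 3 to 7, and 4 to 8. The helper `pearson` and the names `base_x`, `base_y`, and `country_1` to `country_4` are assumptions made for illustration.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Hypothetical within-country pattern: both lists are orderings of 1..5 whose
# centered cross-products (-2, 2, 0, 2, -2) sum to zero, so r is exactly 0.
base_x = [1, 2, 3, 4, 5]
base_y = [4, 1, 3, 5, 2]

# Shift the same pattern by 0, 1, 2, 3 to obtain the bands 1-5, 2-6, 3-7, 4-8.
countries = {
    f"country_{k + 1}": ([x + k for x in base_x], [y + k for y in base_y])
    for k in range(4)
}

# Individual level: correlation of X and Y within each country.
within = {name: pearson(xs, ys) for name, (xs, ys) in countries.items()}

# Ecological level: aggregate to national means, then correlate the means.
mean_x = [sum(xs) / len(xs) for xs, _ in countries.values()]
mean_y = [sum(ys) / len(ys) for _, ys in countries.values()]
between = pearson(mean_x, mean_y)

for name, r in within.items():
    print(f"{name}: within-country r = {r:.2f}")
print(f"national means of X: {mean_x}")
print(f"national means of Y: {mean_y}")
print(f"across countries: r = {between:.2f}")
```

Because shifting a country's scores by a constant leaves its internal correlation unchanged while moving its mean, all of the ecological association in this sketch comes from the positions of the bands and none from individual differences within them.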

CC is essentially the study of these bands. In reality, the bands never have clear-cut boundaries. They are more similar to clouds with fuzzy edges, as shown by Akaliyski et al. (2021). Therefore, CC researchers analyze the centroids or midpoints of the bands. One of the main challenges for comparative culturologists is to explain what accounts for the positions of these midpoints. This requires searching for external (non-cultural) ecological variables that are similarly banded, in the sense of having similar midpoints, and theoretically related to the cultural bands under investigation. Examples of such external variables are economic development (Hofstede, 2001), climatic zones associated with different precipitation patterns (Welzel, 2013), pathogen prevalence (Fincher et al., 2008), and type of agriculture and agricultural zones in the past (Minkov & Kaasa, 2021). As variables of this kind operate mainly at the ecological level, their effect is felt most strongly there, accounting for the bands and midpoints of the individual respondents’ scores. Within each country and its band, the ecological effect is relatively weak or absent. Individuals who live in a particular country are exposed to many effects of its level of economic development, such as poor public services, poor infrastructure, or a lack of welfare, more or less similarly, despite the existence of individual wealth differences. Likewise, they experience the country’s climate and the effect of local pathogens in more or less the same way. Therefore, these ecological variables are substantially controlled for as far as individual-level differences within a country are concerned, unless the country has an enormous territory with a lot of geographic and economic variability. Without such variability, what becomes prominent at the individual level are individual differences unrelated to ecological ones. Those individual differences are the focus of psychology, not CC.

We can now go further and show that the ecological and individual levels of analysis can vary in terms of complex structures, not just relationships between two variables. In other words, figuratively speaking, at both levels we observe houses made of the same bricks but combined very differently, which makes the two types of houses very different in terms of shape, function, and properties.

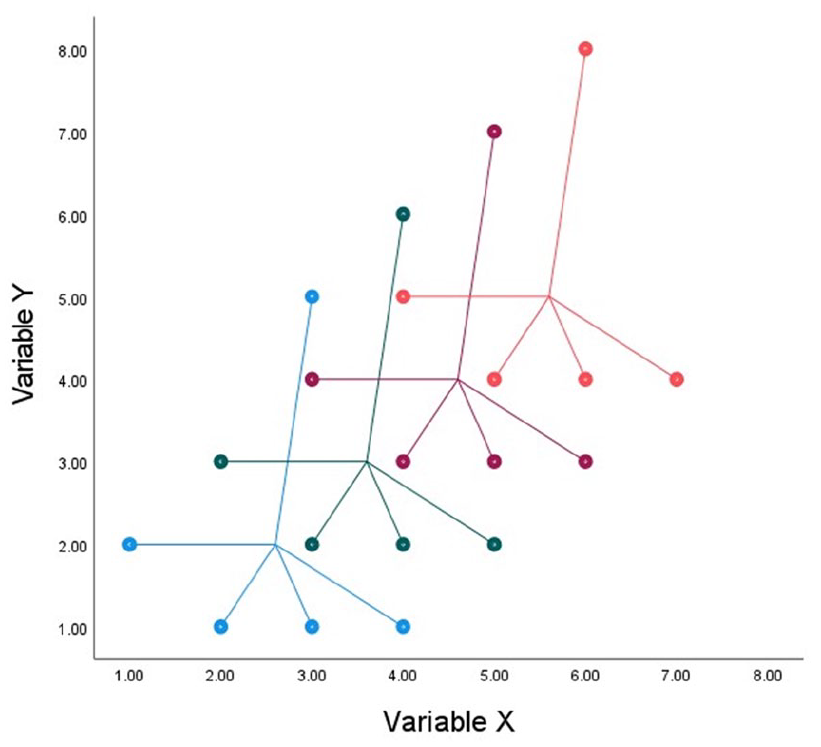

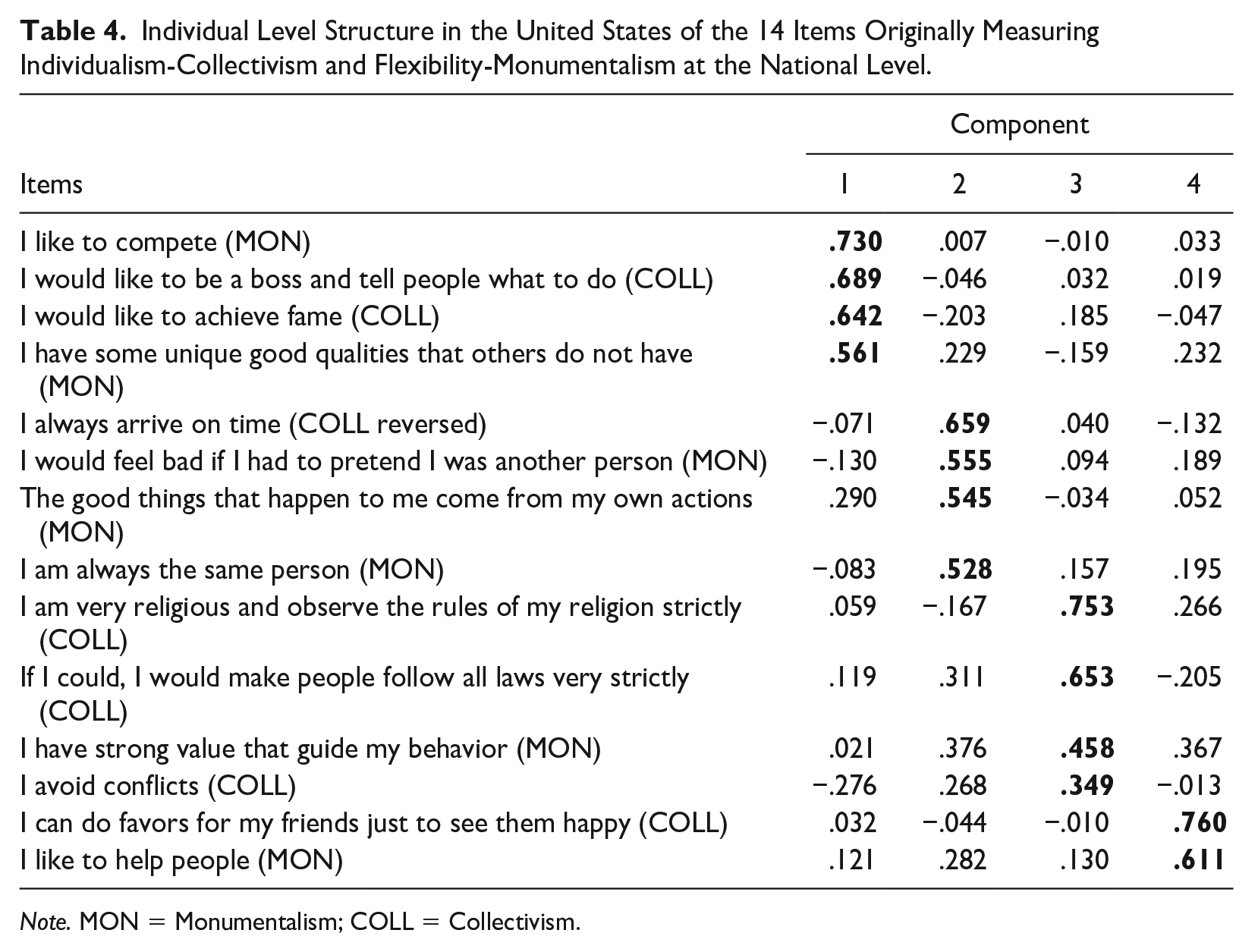

Minkov et al. (2023) conducted a principal components analysis of the 14 items previously used in two separate studies for separate extractions of two nation-level dimensions of culture: IDV-COLL (Minkov et al., 2017) and flexibility-monumentalism (FLX-MON) (Minkov, Bond, et al., 2018). Minkov et al. (2023) confirmed that these 14 items yield a two-component structure at the national level, consistent with IDV-COLL and FLX-MON theories. Table 3 shows that structure.

Table 3. National-Level Structure of 14 Items Measuring Monumentalism-Flexibility and Collectivism-Individualism at the National Level.

What is the structure of the same 14 items at the individual level? To test this, we used U.S. data from the same dataset as that of Minkov et al. (2017) and Minkov, Bond, et al. (2018). The United States is the best-represented nation in that dataset, with a sample of 2,809 respondents, closely reflecting the U.S. census in terms of gender, age, education, race, income, employment, and geographic origin. Details are available from the two previously cited publications. An individual-level principal components analysis of the 14 items across the 2,809 U.S. respondents yielded four components with eigenvalues of at least 1.00, together accounting for 47% of the variance. Table 4 provides the component structure. After each item (provided in a shortened form), we indicate in parentheses which dimension the item measures at the national level.

Table 4. Individual-Level Structure in the United States of the 14 Items Originally Measuring Individualism-Collectivism and Flexibility-Monumentalism at the National Level.

Note. MON = Monumentalism; COLL = Collectivism.

As we see in Table 4, the 14 items that measure IDV-COLL and FLX-MON at the national level yield four components at the individual level (specifically in the United States), not two as at the national level. Moreover, the items are now completely reshuffled. Some items that load on the same component at the national level load on different components at the individual level. Conversely, some items that load on different components at the national level load on the same component at the individual level. Because of this reshuffling, forcing the 14 items into just two individual-level components does not help recover the structure of the two nation-level dimensions. It is noteworthy that the reshuffled four-component individual-level structure is no less interpretable than the two-component national-level structure. High scorers on the first component are individuals who have a sense of personal superiority and wish to demonstrate it by competing, as well as to enhance it further by achieving fame and power. High scorers on the second component are individuals who have a strong and stable ego that is not affected by external circumstances. High scorers on the third component are individuals who have a religious devotion to law, order, and predictability. Finally, high scorers on the fourth component are motivated by a desire to be nice to others. Crucially, none of these four individual-level components corresponds either conceptually or empirically to the nation-level constructs IDV-COLL and FLX-MON.

The complete lack of correspondence in the structures of these same 14 items at the ecological and individual levels of analysis in the United States should be a sufficient illustration of the inappropriateness of any individual-level invariance testing when a researcher’s agenda is to provide ecological-level measures. Note that this is very different from the situation for a CCP researcher, who might be interested in studying patterns of cross-cultural variation in individual-level constructs. In that case, some type of invariance testing may be necessary to ensure that one is comparing individuals from different countries on a similar construct. Thus, the two levels have their own inherent logics, neither of which can be revealed through an analysis at the other level.

The examples that we have provided in this section support Schwartz (2011), who rejected the view that culture is located in people’s minds. “Culture” is an ecological pattern of relationships: for example, the co-existence of many people who believe that their children should be thrifty plus many people (not necessarily the same individuals) who believe that children should be perseverant. Another such pattern is the co-existence in a society of many individuals who have a high self-regard and many who have consistent selves. As these combinations are not typical of individuals, they are not located in their minds. Therefore, culture is obviously not a characteristic of individuals.

Crucially, we are not arguing that ecological and individual-level analyses will always yield highly different structures. Our point is that the validity of a dimension conceived and measured at one level of analysis does not depend on the presence or absence of isomorphism at another level. The individual-level patterns are known as “personality,” whereas the ecological patterns are known as “culture.” Personality is not culture and culture is not personality.

Prioritizing Basic Theory or Practical Utility

Any social scientific field would be well advised to heed the maxim popularized by Kurt Lewin that “there is nothing as practical as a good theory” (Lewin, 1943, p. 118). Theory and practice in all disciplines are inescapably connected. Still, statements by the founders of CCP emphasize theory. In a paper by Triandis and Brislin (1983), we read that “the benefits of cross-cultural research [in psychology] are largely concerned with better theory development” (p. 4). According to Miller (1997), “the goals of cross-cultural psychology include testing the generality of psychological theories in diverse cultural contexts” (p. 114). In a treatise on the goals of cross-cultural psychology, Berry et al. (2002) note that “perhaps the first and most obvious goal is the testing of the generality of existing psychological knowledge and theories” (p. 3) and cite other authors who share this view.

Although CCP is also interested in what its constructs predict, and hence how practically useful they might be (and some CCP research is primarily devoted to practical utility), there are nonetheless many examples of major CCP studies that provide aggregate national scores of psychological variables with no exploration of their nomological networks other than correlations with IDV-COLL or national wealth (Diener & Lucas, 2004; Kuppens et al., 2006; McCrae, 2002; McCrae & Terracciano, 2005; Schmitt & Allik, 2005; Schmitt et al., 2004, 2007; Schmitt & International Sexuality Description Project, 2004).

Hofstede (1980, 2001) never underestimated the value of theory. Yet, he pioneered CC as a response to the practical needs of a multinational company in a period of accelerating globalization, when it was becoming increasingly clear that international managers needed some knowledge of cultural differences to be able to function more effectively in diverse cultures. In line with this realization, Hofstede and his associates explicitly stated that practical utility should be the first priority of all social science and explained what they meant by that:

Social science should be oriented towards practice: its models should lead to valid predictions. A good theory is needed to explain these models as they may defy common sense. Yet, the merit of any model should be judged on the basis of its capability to statistically predict interesting and important phenomena (Minkov & Hofstede, 2011, p. 18)

In the view of Smith and Bond (2019), the strongest argument for retaining an interest in nation-level dimension scores is that they consistently predict relevant nation-level indices derived from independent sources. Of course, a strong correlation is useless unless there is a good theory to explain it. CC does not downplay the importance of theory even though it assigns it an auxiliary role.

As CC emphasizes empirical prediction, all major CC models of national culture have reported extensive nomological networks (i.e., relationships with external variables). Hofstede (2001) showed that some of his dimensions predict a wide range of national indicators. Although Inglehart and Baker (2000) were more interested in what explains cultural differences in values across the globe than what those value differences explain, Welzel (2013) showed that differences in cultural emancipation (statistically a merger of Inglehart and Baker’s two dimensions) predict differences in the successful implementation of democracy. Likewise, Schwartz (2008) published a monograph focusing on the implications of his model of culture. House et al. (2004) also provided nomological networks for Project GLOBE’s dimensions, whereas Minkov’s revisions of Hofstede’s model (Minkov et al., 2017; Minkov, 2018; Minkov & Kaasa, 2021) were concerned primarily with the predictive qualities of the revised Minkov-Hofstede dimensions. In summary, all major models of national culture strongly emphasize the prediction of independent indices, which is a test of their practical utility. 1

Availability of “Long” Versus “Wide” Data Structures

While the backbone of CC is testing the predictive properties of a construct with respect to an almost limitless array of national indicators and measuring the position of the same country with respect to others over and over again, this approach is inapplicable in psychology. Psychologists typically do not have the luxury of easily collecting data about the same individuals numerous times and from numerous sources (such as health records, criminal records, education achievement records, driving records, and so forth), whereas CC researchers have a huge amount of regularly refreshed, secondary nation-level data at their disposal. On the other hand, a sufficiently resourced psychologist will have the luxury of being able to sample large numbers of individuals for any given study, allowing for high statistical power and the possibility of testing highly complex statistical models, whereas even the best-resourced practitioners of CC using nations as units of analysis would have an upper limit of around 200 observations, even if they collected data from every country in the world.

Thus, by the nature of their subject matter, CCP is intrinsically better equipped to produce and analyze “long” datasets consisting of a larger number of observations of a smaller number of variables, whereas CC is intrinsically better equipped to produce and analyze “wide” datasets consisting of a smaller number of observations of a larger number of variables.
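The contrast between the two data shapes can be illustrated with a minimal sketch on simulated data. All sizes and variable names below are illustrative assumptions, not values from any study cited here. The sketch shows one practical consequence of a "wide" structure: when there are more variables than observations, the correlation matrix among the variables cannot have full rank, which is one reason why highly parameterized models are harder to estimate on nation-level data.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes, chosen only for illustration.
n_individuals, n_items = 5000, 20      # "long": many observations, few variables
n_nations, n_indicators = 60, 300      # "wide": few observations, many variables

long_data = rng.normal(size=(n_individuals, n_items))
wide_data = rng.normal(size=(n_nations, n_indicators))

def correlation_rank(data):
    """Rank of the variable-by-variable correlation matrix."""
    corr = np.corrcoef(data, rowvar=False)
    return np.linalg.matrix_rank(corr)

long_rank = correlation_rank(long_data)   # full rank: equals n_items
wide_rank = correlation_rank(wide_data)   # at most n_nations - 1
print(long_rank, wide_rank)
```

The rank ceiling in the wide case follows from the number of observations, not from anything specific to cultural data.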

The Nature and Measurement of Theoretical Constructs in Cross-Cultural Psychology and Comparative Culturology

The following discussion focuses on the nature of the predominant constructs in CCP and CC. We argue that psychology, including CCP, is characterized by a greater prevalence and understanding of reflective than formative constructs and measures (after Coltman et al., 2008). In contrast, the goals of CC, as well as the greater availability of wide rather than long data, make this field more suited to developing constructs and measures that may be partially or wholly formative in nature.

Before comparing types of constructs, we need to define IDV-COLL from the viewpoint of CC, as we will refer to this dimension of culture on multiple occasions. We stress that what we have in mind is the ecological-level IDV-COLL dimension first proposed by Hofstede (1980), later reconceptualized by Minkov et al. (2017), Beugelsdijk and Welzel (2018), and other authors. Importantly, we are not referring to the numerous homonymous individual-level measures, such as those of Triandis and Gelfand (1998) and others, as reviewed by Oyserman et al. (2002).

We provide a description of the COLL pole, whereas IDV is the same with a negative sign. National COLL has been conceptualized as having three facets (Minkov et al., 2017). The first is conformism: a society’s tendency to pressure its members into uniformity and submission. The second is social ascendancy: a society’s tendency to make its members prioritize acquisition of power and high social status. The third is exclusionism: a society’s tendency to promote group-based privileges and group-based exclusion. 2 The conceptual relationships between these concepts are explained further in this article.

Searching for Independently and Objectively Existing Factors Versus Designing Utilitarian Tools

Although various epistemological traditions are found in psychology, mainstream psychologists very often conceptualize their constructs as having an independent and objective existence, consistent with a philosophy of scientific realism (Cacioppo et al., 2004): the view that “scientific theories are approximations of universal truths about reality” (p. 213). Also, psychological dimensions are commonly (but not always) defined as reflective as opposed to formative (Coltman et al., 2008), meaning that the indicators measuring them are assumed to “reflect” (i.e., be caused by) underlying factors that exist objectively and are independent of their measures, rather than “forming” (i.e., constituting) the construct being measured. Big Five personality traits are a good example of reflective constructs, as they are thought to have a strong biological foundation (DeYoung et al., 2010; Soto, 2018), and according to some authors (Costa & McCrae, 2006), changes in their levels are mostly due to biological maturation. If we accept that “extraversion” has much to do with energy (McCrae & John, 1992), it is easy to imagine that trait as an objectively existing biological property, like a person’s body temperature. One’s total average energy level is the same, no matter how it is measured. An indicator of a person’s energy can be more or less accurate (for instance, less accurate if it is contaminated with something unrelated to energy), but a person’s true energy level remains unaffected, regardless of which indicator we use to measure it. A cross-cultural psychologist might be interested in finding out if a tool that supposedly measures such a reflective construct, for instance, a person’s energy or extraversion, can be used in different cultures to reveal the respondents’ “true levels” of the trait.

Although Hofstede never defined his constructs as reflective or formative, some of his views suggest he leaned toward a philosophy of scientific instrumentalism (Cacioppo et al., 2004): the view that scientific theories should first and foremost provide prediction and solve problems. This is consistent with the formative paradigm because he stated that his dimensions do not exist independently and objectively. They are creations of the researchers’ minds (Hofstede, 2002):

In the first session of a new student class, I used to write big: CULTURE DOESN’T EXIST. In the same way, values don’t exist, dimensions don’t exist. They are constructs, which have to prove their usefulness by their ability to explain and predict behavior. The moment they stop doing that, we should be prepared to drop them, or trade them for something better (p. 1359)

Hofstede’s views are shared, and perhaps made clearer by other scholars: “[Culture] exists only as a set of inferences or abstractions from observable, measurable behavior (including verbal reports)” (Rohner, 1984, p. 117). Hofstede’s acceptance of subjectivity is also consistent with Schwartz’s (2011) data reduction philosophy: “To work with values, we arbitrarily partition into broad value domains” (p. 308). “Arbitrarily” does not mean “randomly.” Schwartz (2008) evidently accepted that the indicators that define a CC construct should make sense theoretically, and that the resulting combination should predict relevant external variables. However, there is no requirement for a CC construct to reflect an objectively existing and defined phenomenon that has an independent existence (least of all in individuals’ minds) and is not a function of the way that it is measured.

This does not mean that CC constructs are entirely subjective. As an analogy, one might compare all potential indicators from which cultural dimensions can be extracted to the set of all celestial bodies visible to the naked eye. They exist objectively and their proximities and distances are also objective, but grouping them into constellations involves a high degree of subjectivity, as there can be no objective answer to the question of where one constellation ends and another starts. Ancient Chinese constellations (Xu, 2009) have little to do with the Babylonian zodiac that Western societies are familiar with, whereas the Paris Codex, containing the work of Mayan astronomers, suggests that the Maya had yet another way of partitioning the sky. It would be pointless to debate the question of which partitioning is the true one. Instead, a very different question should be asked: what practical benefits does a particular partitioning yield? For instance, is it a helpful guide for efficient navigation at sea?

The indicators used by CC can be placed on a map, such as Schwartz’s (2008) circumplex of values. The number of indicators is almost limitless, and at some point, that map can look so completely saturated that no clearly discernible constellations of items can be identified. This situation would be practically impossible in psychology, as one can hardly get the same respondents to answer thousands of questions (although huge sets of some data can now be collected through Google or Facebook). In contrast, CC has access to vast databases of secondary data, enabling the production of a nearly saturated map that necessitates some type of data-reduction. One can, and does, use statistical guidelines for that purpose, yet they are merely suggestions. Therefore, one can choose indicators for a construct, such as IDV-COLL, somewhat subjectively, yet pragmatically: the final product should be a theoretically defensible tool with strong predictive properties. According to Minkov and Hofstede (2011), to understand a CC construct properly, it is not enough to theorize about it or examine the items through which it has been operationalized. One also needs to analyze its nomological network: all external variables that are closely associated with it.

A CC construct, such as IDV-COLL, is based on objectively existing indicators. However, their combination into overarching themes is largely an act of creation. One variant of an IDV-COLL measure cannot be truer than another, just as there are no true and untrue constellations in the sky. One IDV-COLL measure can, however, be more useful than another if, first and foremost, it is a better predictor of important extraneous variables, such as democracy, economic freedom, transparency-corruption, gender equality, road death tolls, and more (Minkov et al., 2017). 3
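The idea of comparing two variants of a measure by their usefulness, not their truth, can be sketched as follows. This is a minimal illustration on simulated data, not the procedure of any cited study: the indicator values, the two hypothetical variants, and the choice of mean absolute Pearson correlation as a summary of predictive utility are all assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(1)
n_nations = 100  # hypothetical number of countries

# Simulated stand-ins for external national indicators (e.g., democracy, transparency).
shared = rng.normal(size=n_nations)
external = 0.8 * shared[:, None] + 0.6 * rng.normal(size=(n_nations, 4))

# Two hypothetical variants of the same construct, built from different item sets.
variant_a = shared + 0.3 * rng.normal(size=n_nations)
variant_b = shared + 2.0 * rng.normal(size=n_nations)

def mean_abs_correlation(index, indicators):
    """Average absolute Pearson correlation between an index and each indicator."""
    rs = [np.corrcoef(index, indicators[:, j])[0, 1]
          for j in range(indicators.shape[1])]
    return float(np.mean(np.abs(rs)))

utility_a = mean_abs_correlation(variant_a, external)
utility_b = mean_abs_correlation(variant_b, external)
print(round(utility_a, 2), round(utility_b, 2))
```

Under these assumptions, the variant with the higher summary value would be preferred on pragmatic grounds, provided that it is also theoretically defensible.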

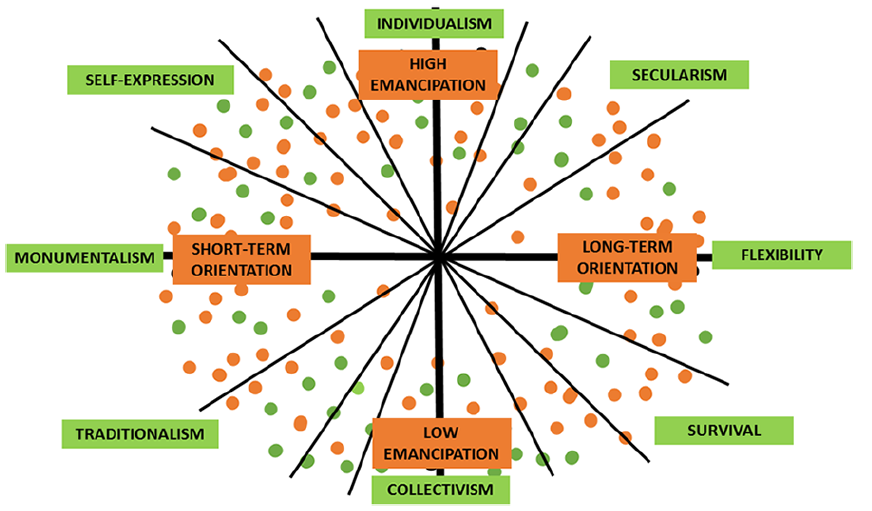

Figure 2, based on Kaasa and Minkov (2022) and Minkov and Kaasa (2021), illustrates this point. The first of these studies shows that the culture-related World Values Survey items form a circumplex like that of Schwartz (2008). As one adds more items, the circumplex will become denser, like the artificially created circumplex in Figure 2. It can be dimensionalized in accordance with Inglehart and Baker (2000) and Inglehart and Welzel (2005). In that case, one would obtain the familiar traditional-versus-secular dimension and a survival-versus-self-expression dimension, represented by the corresponding axes (diagonals) in Figure 2. But the dimensionalization can also be done in accordance with Minkov and Kaasa’s (2021) revision of Hofstede’s model, in which case the dimensions would be IDV-COLL and FLX-MON. Other dimensionalizations are also possible because the number of diagonals that one can draw across a circle or ellipse is infinite, and each of them will run close to a great (and potentially infinite) number of items, meaning that it is closely associated with all of them. Asking which of these dimensions captures slightly more variance than others becomes a practically irrelevant question. It is much more relevant to ask which dimensions are more compelling theoretically and more useful in terms of their predictive properties.

Figure 2. A Fictitious Plot of Nation-Level Measures of Subjective and Objective Culture With Potential Dimensionalizations.

In Figure 2, the green dots symbolize items from self-reports, yielding dimensions of so-called subjective culture, marked by green labels. The brown dots symbolize national behavioral indicators, such as road death tolls, gender equality, homicide rates, and more, analyzed by Minkov and Kaasa (2021), yielding dimensions of so-called objective culture, marked by brown labels. A useful way to dimensionalize the circumplex is to draw axes in such a way that they simultaneously stand for theoretically defensible dimensions of both subjective and objective culture. In Figure 2, the names of the objective culture dimensions (brown labels) are from Minkov and Kaasa (2021). High emancipation stands for a combination of transparency (versus corruption), democracy, gender equality, economic freedom, and safety, whereas long-term orientation stands for a combination of strong effort and excellent results in education, low adolescent fertility, low homicide rates, and paternal presence at home.

If researchers are interested in explaining precisely these phenomena, the most useful dimensionalization seems to be the one shown in Figure 2 by the thick axes that match IDV with high emancipation, whereas FLX is matched with long-term orientation. But if the researchers wish to explain something else, a different coordinate system may seem more useful, such as the Inglehart-Welzel dimensions of traditional versus secular values and self-expression versus survival values. Thus, a CC construct is a partly subjectively chosen combination of objectively existing measures associated with a theory.
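The point that many coordinate systems describe the same two-dimensional configuration equally well can be checked numerically. The following is a minimal sketch with simulated scores (the sample size, spreads, and rotation angles are assumptions for illustration). It shows that rotating a pair of orthogonal axes redistributes variance between the two axes but leaves their combined variance unchanged, so variance captured cannot by itself single out one dimensionalization.

```python
import numpy as np

rng = np.random.default_rng(2)

# Hypothetical nation scores on two orthogonal axes of a circumplex.
scores = rng.normal(size=(80, 2)) * np.array([1.5, 1.0])
scores -= scores.mean(axis=0)

def rotate(points, degrees):
    """Express the same points in a coordinate system rotated by `degrees`."""
    t = np.deg2rad(degrees)
    rotation = np.array([[np.cos(t), -np.sin(t)],
                         [np.sin(t),  np.cos(t)]])
    return points @ rotation

# Combined variance of the two axes under several alternative rotations.
total_variance = {deg: rotate(scores, deg).var(axis=0).sum()
                  for deg in (0, 30, 45, 75)}
print(total_variance)
```

Because the totals coincide for every angle, the choice among rotations has to rest on other grounds, such as theoretical coherence and predictive properties.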

Latent Causative Factors Versus Mutualism Within a Manifold

According to Bartholomew (2004), “If a set of test scores tends to be positively correlated among themselves, there is a prima facie case for believing that those correlations are induced by a common dependence on a latent variable” (p. 62). Following this logic, psychologists tend to think in terms of latent factors: unobservable mental entities that cause the respondents’ responses. If a respondent provides a self-description of a person who is frequently worried, depressed, upset, and angered, that is supposedly because all of these are caused by a single common underlying cause, called a neuroticism factor. In accordance with the same logic, a person can be seen as good at mental object rotation, word substitution, and digit span, because of an underlying factor of general intelligence that causes one’s performance on each of those tests.

However, explanations of item covariation through unitary latent factors have recently come under strong attack (Borsboom et al., 2021; VanderWeele, 2022). There is an alternative explanation of what accounts for item covariance. For instance, van der Maas et al. (2014) authored an article entitled “Intelligence is what the intelligence test measures. Seriously.” Those authors argue that instead of ridiculing that century-old statement, we can take it quite seriously under certain conditions:

The mutualism model, an alternative for the g-factor model of intelligence, implies a formative measurement model in which “g” is an index variable without a causal role. If this model is accurate, the search for a genetic or brain instantiation of “g” is deemed useless. This also implies that the (weighted) sum score of items of an intelligence test is just what it is: a weighted sum score. Preference for one index above the other is a pragmatic issue that rests mainly on predictive value. (p. 12)

How does the mutualism model explain the intercorrelations between the items of a given construct? A key concept in that model is a manifold (van der Maas et al., 2014): a system of indicators that are associated with each other through their interactions or interdependencies. A synonymous concept is a “psychological network” (Borsboom et al., 2021), whereas its equivalent in CC would be a “cultural network.” Taking this perspective, IDV-COLL does not stand for a latent factor. It is the name of a subjectively outlined constellation of variables whose statistical intercorrelatedness can be explained through a sound theory. The main reason for the merging of all selected variables into a single IDV-COLL measure is the need for simplification: it is easier for the human mind to think in terms of a single entity than in terms of three facets, let alone five or more indicators behind each facet.

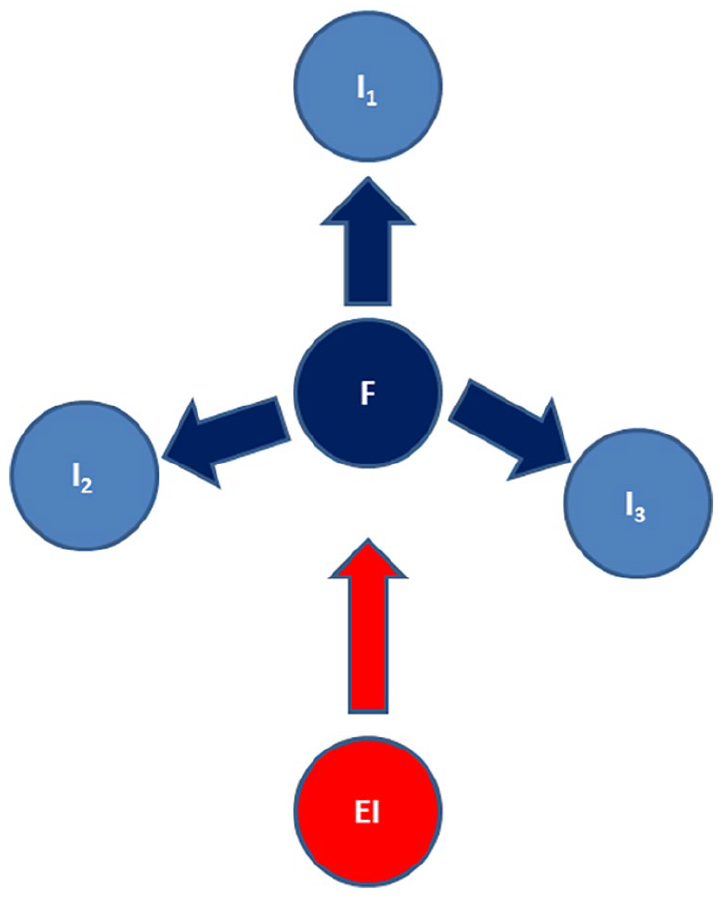

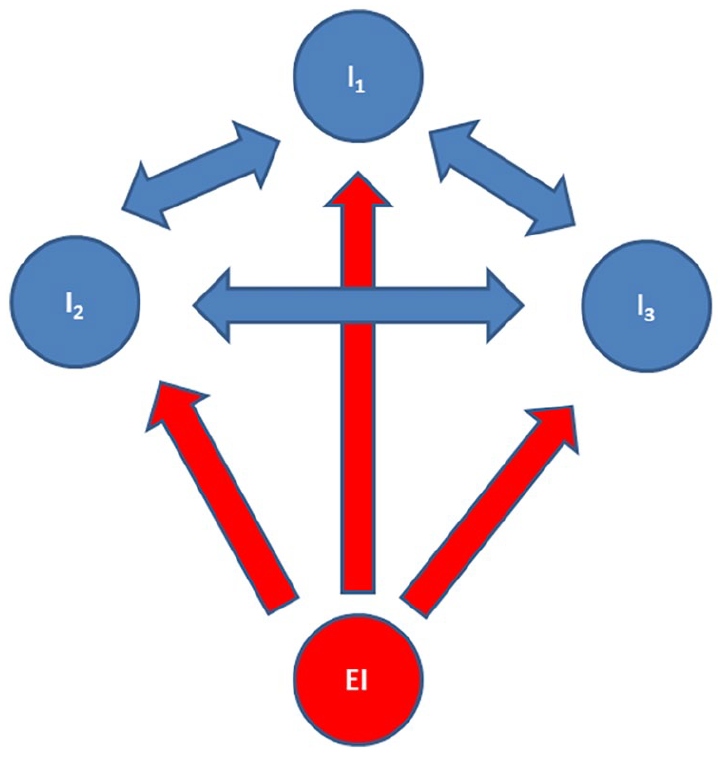

Figure 3 shows a reflective model involving external influences (EI) that cause a latent factor (F), which in turn causes indicators (variables) I1, I2, and I3: the indicators through which F is measured. Figure 4 displays a manifold in which there is no latent factor. External influences (one or many) cause each indicator (variable). In addition, the indicators may cause each other, albeit to various degrees. The perfect symmetries in Figures 3 and 4 may, of course, be exaggerations.

Figure 3. Visualization of a Latent Factor Model, With a Factor (F) Caused by External Influences (EI) and Causing Its Indicators (I).

Figure 4. Visualization of a Mutualistic Manifold Model, With External Influences (EI) Causing Indicators (I) Which Also Cause Each Other.
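The two causal structures can be contrasted in a small simulation. This is a minimal sketch under stated assumptions: the path weights, the coupling strength of 0.3, and the linear equilibrium form used for the mutualistic case are illustrative choices, not parameters taken from van der Maas et al. (2014) or any other cited source. It shows that both structures yield positively intercorrelated indicators, so a positive correlation pattern alone does not reveal which structure generated the data.

```python
import numpy as np

rng = np.random.default_rng(3)
n = 2000  # hypothetical number of simulated units

# Latent factor model: EI -> F -> I1, I2, I3.
external_influence = rng.normal(size=n)
factor = 0.7 * external_influence + rng.normal(size=n)
latent_indicators = 0.8 * factor[:, None] + rng.normal(size=(n, 3))

# Mutualistic manifold: each indicator has its own external influence,
# and the indicators also affect one another (no common factor).
own_influences = rng.normal(size=(n, 3))
coupling = 0.3 * (np.ones((3, 3)) - np.eye(3))      # assumed mutual effects
equilibrium = np.linalg.inv(np.eye(3) - coupling)   # solves x = coupling @ x + e
manifold_indicators = own_influences @ equilibrium.T

latent_corr = np.corrcoef(latent_indicators, rowvar=False)
manifold_corr = np.corrcoef(manifold_indicators, rowvar=False)
print(latent_corr.round(2))
print(manifold_corr.round(2))
```

In the second model the external influences are generated independently of one another, so the correlations among the indicators arise entirely from their mutual effects.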

One can easily explain why the facets of IDV-COLL are intercorrelated at the ecological level, and may cause each other, even in the absence of a single latent cultural factor that causes them. Exclusionism is synonymous with discrimination, which breeds frustration, which in turn creates a desire for wealth and power to achieve social privileges and avoid discrimination or other types of oppression. However, ruling elites who have usurped society’s wealth and power will not easily allow upward social mobility. Instead, they will enforce conformism: submission and conflict avoidance, so that the rich and powerful keep their privileges without being challenged by the lower classes. This creates inequality, which is synonymous with exclusionism. Thus, one can posit a mutualistic cause-and-effect relationship between the three facets of COLL. Of note, this mutualistic effect is detectable only at the ecological level, as the mutualistic dynamism has no analog at the individual level (Minkov et al., 2023). This is another example of the fact that ecological patterns may be radically different from those at the individual level.

A logical consequence of conceptualizing a CC construct as a manifold of interrelated variables, and not as a latent factor, is that factor analysis (including exploratory and confirmatory approaches) is not appropriate for determining the structure of CC constructs. The statistical rationale underlying factor analysis is that latent factors can be discovered by excluding variance that the indicators do not share and by focusing only on their shared variance, which is supposed to be accounted for by one or more latent factors. In contrast, there are alternative data reduction techniques, such as principal components analysis, multidimensional scaling, and hierarchical cluster analysis, that are not supposed to uncover latent factors and do not exclude any variance. They simply demonstrate how the indicators can be classified into groups (principal components or clusters), or arranged along dimensions, based on some measure of statistical similarity or distance, such as Pearson correlation or Euclidean distance. Network analysis can also be used in CC, as it shows the relationships between indicators of interest at a glance. If one adopts the manifold logic, then these classification and data reduction techniques make better sense, as they reveal how the indicators form a manifold without excluding any variance. 4 Moreover, any variants and derivatives of factor analysis are inapplicable in CC. This is especially true of any type of confirmatory factor analysis, be it single-group or multi-group, as this technique assumes the existence of a latent factor, which is incompatible with the manifold concept.
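The difference in how the two families of techniques treat variance can be made concrete with a short numerical sketch. The data are simulated and the sizes are assumptions. Squared multiple correlations are used here only as a conventional starting estimate of shared variance in common factor analysis, not as a full factor-analytic procedure.

```python
import numpy as np

rng = np.random.default_rng(4)

# Hypothetical nation-level indicators with some shared and some unique variance.
n_nations, n_indicators = 80, 6
common = rng.normal(size=(n_nations, 1))
data = 0.7 * common + rng.normal(size=(n_nations, n_indicators))

corr = np.corrcoef(data, rowvar=False)

# Principal components: eigenvalues of the full correlation matrix.
# They sum to the number of indicators, so no variance is set aside.
pca_eigenvalues = np.linalg.eigvalsh(corr)[::-1]

# Common factor analysis works with communalities instead. Squared multiple
# correlations are a conventional initial estimate of each indicator's shared variance.
smc = 1.0 - 1.0 / np.diag(np.linalg.inv(corr))

print(pca_eigenvalues.sum(), smc.sum())
```

The first total equals the number of standardized indicators, whereas the second is smaller because the variance that each indicator does not share with the others is left out.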

Of course, just as it is possible to model psychological constructs as networks without a latent factor (Borsboom et al., 2021), the opposite is also technically possible: modeling CC constructs as latent factors. However, latent factors may be a convenient idea in personality psychology, whereas in CC, they would be theoretically cumbersome. For instance, McCrae and Costa (1995) indicate that traits are “hypothetical constructs” (p. 236) and argue that they are not to be equated with observable behaviors, biological bases, or brain mechanisms. They are tendencies that “no imaging technique or anatomical dissection would ever find . . . among the neurons and neurotransmitters of the human brain” (p. 239). In that case, if traits do not have observable antecedents that can be detected with human senses or technology, viewing them as a “latent” (hidden) reality is a convenient solution. CC does not need to posit a hidden reality. Differences in IDV-COLL, for instance, are often ascribed not to anything hidden in people’s minds but to differences in the physical environment, causing differences in types of economy and level of economic development. There is no assumption here that these antecedents of the IDV-COLL package have the same effect on all of the ingredients of the package, causing one homogeneous whole. Moreover, the shared variance among these ingredients may be due as much to mutual influences among them as to shared underlying causes (see Figure 4). Modeling these ingredients with a latent variable may thus provide a pragmatically useful statistical simplification, but this should not be confused with believing in an objectively existing underlying latent factor. Note that the existence of objective causes of the IDV-COLL package does not make the construct objectively exist. What is to be included in that package is largely a subjective human decision.

Dealing With Non-Systematic Measurement Error

The issue of data quality is as old as the study of culture. For instance, it is clear that either Mead’s (1928) or Freeman’s (1983) Samoan respondents 5 told falsehoods to either of the two researchers as the two sets of mutually exclusive reports (sometimes by the same individuals) that they provided cannot both be true. It is also conceivable that respondents sometimes do not understand some of the items and, even if they do not wish to lie intentionally, they may provide nonsensical responses. Even if they understand the items, they may be incapable of providing a trustworthy self-assessment due to lack of experience with introspection or with a specific response format. All these are problems that CCP and CC should be equally concerned with. Unfortunately, neither discipline possesses a silver bullet to deal with these concerns. Invariance testing is certainly not a solution for CC in this respect because it tests structures at the individual level of analysis that are irrelevant in ecological analyses. If we assume that problems of this kind have been avoided during data collection (for instance, by discussing the sensitivity or intelligibility of the items with focus groups), CCP and CC would proceed in different directions from this point onward.

Classical test theory assumes that the variance in scores on a measure of a latent construct is a function of the true score plus error (MacKenzie et al., 2005). CCP usually agrees with this reflective paradigm, assuming that each indicator contains a substantive part, which is a true reflection of a latent construct, and an error element. Whatever the origin of the error, as it is unrelated to the construct, it must be partialed out by means of factor analysis. However, this data treatment may not be considered sufficient. Followers of the reflective paradigm in individual-level research may also suspect that despite the elimination of non-shared variance, some error is still present in the data, for instance, due to response bias such as acquiescence or because of social desirability. Thus, additional data-cleansing operations may be needed to arrive at the true, objectively existing construct.

CC is closer to the formative paradigm, which usually rules out the presence of error (Coltman et al., 2008; Diamantopoulos et al., 2008; VanderWeele, 2022). Indeed, in classic measurement theory, “error” and “truth” are conceptually a Yin and Yang: there can be no concept of “error” without a concept of “truth.” But as CC does not aim to uncover any true (objectively and independently existing) constructs, the concept of “error” becomes problematic within a CC paradigm.

CC does recognize a particular set of problems that arise from poor preparation of a study. These may include poor translation, poor instructions for the respondents, items that are unintelligible, irrelevant, or uncomfortable to the respondents, and a response format (such as Likert-type scales) that the respondents are not familiar with. Problems of this kind may be viewed as a source of error in terms of classic measurement theory. Still, in CC, the problem is not that the poor preparation of the study can yield something that deviates from a so-called “truth,” but that it produces meaningless variance. In the CC context, “meaningless” can be defined as “theoretically unsound and having poor empirically predictive properties.”

Once problems of these kinds have been minimized during the study preparation, the statements of supposedly honest and well-informed respondents, who have experience with introspection, are in principle (though not always) to be taken at face value and there is nothing to partial out of them, as one does not search for an objectively and independently existing factor. A CC construct is partly a matter of creative design, especially as it concerns item selection. If one rules out the problems discussed above, it would be a logical contradiction for the indicators not to measure the construct for which they have been chosen. From the viewpoint of CC, saying that the combination of statements S1, S2, and S3 is not the real IDV-COLL, even though IDV-COLL has been posited and operationalized as a combination of S1, S2, and S3, and that there is a more real IDV-COLL consisting of other statements, would be illogical. It would be like saying that the Eiffel Tower was not built properly because the real Eiffel Tower should look like the Statue of Liberty.

This position implies that CC operates partly under the umbrella of operationalism (Minkov & Hofstede, 2011), a research philosophy formulated by Nobel-prize winner Percy Williams Bridgman (Stanford Encyclopedia of Philosophy, 2019). It is based on the view that we do not know the meaning of a concept unless we have a method for measuring it. According to Bridgman, a concept is “nothing more than a set of operations: the concept is synonymous with the corresponding set of operations” (Stanford Encyclopedia of Philosophy, 2019, p. 1). Although this view is arguably extreme (Minkov & Hofstede, 2011), it does imply something acceptable to CC: IDV-COLL is what has been measured, not what somebody might think it is or should be. Like any other construct in CC, IDV-COLL emerges from its measures, and it cannot be more “true” or less “true,” no matter how it is measured. It is an altogether different point that a measure of any construct can be conceptually incoherent or practically irrelevant, for instance, by lacking a convincing nomological network. Although one measure of IDV-COLL cannot be truer than another, it can be more useful.

This implies that once a researcher has proposed a particular CC construct and given it a name, other researchers would be well advised not to create confusion by using the same name for constructs that are not empirically related to the original one. Unfortunately, this advice is not always heeded. Project GLOBE (House et al., 2004) used some of Hofstede’s (1980, 2001) construct names for their own measures in the absence of a close statistical relationship between them and even criticized Hofstede for how he conceptualized his dimensions. Apparently, they worked with a reflective research philosophy in mind, in which a dimension of culture is something like a chemical element on Mendeleev’s table: a real entity that one can measure properly or mistakenly. This type of reification is alien to the Hofstedean philosophy of CC, where a dimension of culture can only be measured in a more useful or less useful way, rather than truly or falsely.

Once respondents’ statements have been collected, both CCP and CC need to answer an important question. For CCP, that question is, “Do the statements reflect true attitudes of each respondent?” For CC, the question is formulated differently: “Are these statements usable to reveal something about the cultural norms to which the respondents have been exposed that is theoretically coherent and has predictive utility?” Naturally, if the respondents have lied or answered without understanding the items, or if the items were irrelevant to them, the responses are likely to be unusable. This can be established by showing that they have no internal coherence (they are not related conceptually and statistically) and no predictive power: they are not closely related to any relevant national indicators. Of course, finding a good nomological network for a CC construct is not enough for it to be validated. One needs a robust theory to explain all associations.

Social Desirability as Bias Versus Social Norms as Cultural Content

Followers of the CCP tradition may not be satisfied with the position of CC described in the previous section, and this may be a significant point of contention between CCP and CC. Rooted in a reflective tradition, CCP researchers might argue that, even if the respondents have understood the items and have answered honestly, their statements may be contaminated with something unrelated to the presumed latent factor, such as social desirability. In consequence, the predictive properties of the statements are unreliable (Sokolov, 2018). Even if statement S1 is strongly correlated with behavior B1 and there is a compelling theory to explain that relationship, the conclusion that S1 and B1 are closely related is premature because the correlation between them may be inflated by a third variable, C (such as social desirability), which is not part of either S1 or B1. One can imagine a culture where most people denounce homosexuality in surveys but, in private, have much more liberal attitudes. Thus, when respondents fill out questionnaires, their reported attitudes toward homosexuality are inflated or deflated by social desirability relative to their true attitudes.
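The statistical concern behind this argument can be illustrated with a partial correlation. The sketch below uses simulated data under an assumed scenario in which a statement and a behavior are unrelated except through a common third variable. The variable names, path weights, and sample size are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(5)
n = 5000  # hypothetical number of respondents

# Assumed scenario: S1 and B1 have no direct link; both depend on C.
c = rng.normal(size=n)                 # e.g., social desirability
s1 = 0.7 * c + rng.normal(size=n)      # survey statement
b1 = 0.7 * c + rng.normal(size=n)      # behavior

def partial_correlation(x, y, z):
    """Correlation between x and y after removing the linear effect of z."""
    r_xy = np.corrcoef(x, y)[0, 1]
    r_xz = np.corrcoef(x, z)[0, 1]
    r_yz = np.corrcoef(y, z)[0, 1]
    return (r_xy - r_xz * r_yz) / np.sqrt((1 - r_xz**2) * (1 - r_yz**2))

raw = np.corrcoef(s1, b1)[0, 1]
adjusted = partial_correlation(s1, b1, c)
print(round(raw, 2), round(adjusted, 2))
```

In this constructed case the raw correlation is clearly positive, while the correlation after controlling for the third variable is close to zero, which is the pattern that a researcher working in the reflective tradition would interpret as contamination.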

Once again, we emphasize that CC is not interested in tapping the truth in each individual’s mind. To a CC analyst, social desirability is another word for “social norm,” which is exactly what CC tries to discover. What is important to CC is not the individual reality in the respondents’ minds but the cultural reality concerning the social norm, which is finally what drives mass behavior and, hence, the course that societies are charting. If statements to the effect that homosexuality is unacceptable are strongly correlated with some indicator of discriminatory behavior against gay people, CC is satisfied that the social norm that has been deduced from the individuals’ statements is plausible because it has tangible societal consequences. One can certainly imagine individuals who privately admit that they have a positive attitude toward gay people but, under social pressure, they state (even in anonymous surveys) that they disapprove of homosexuality and, because of the same pressure, they would not hire a gay person in their company. Psychologists who are interested in “true” attitudes in people’s minds would see this discrepancy between private attitudes and exhibited behavior as a problem. In CC, it is not a problem at all, because the goal is to feel the pulse of the whole society, not of specific individuals. If there is social pressure that creates homophobic statements and homophobic behaviors, that means that the culture of the society in question is indeed homophobic, and it does not matter that, while some respondents make these statements and engage in the respective behaviors voluntarily, others do so under social duress.

Nonetheless, one must avoid the pitfall of taking all respondent statements at face value or interpreting them using Western logic. “Homosexuality” and “religious” probably have more or less close conceptual equivalents across the world, but other concepts may not travel so easily across cultural boundaries. For instance, Heine et al. (2008) conceive of Big Five conscientiousness as related to Western punctuality (“clocks on time”) and use this interpretation to claim that there is something amiss with self-reported national Big Five scores because they do not predict measures based on such Western concepts of conscientiousness. However, if a Middle Eastern nation scores higher on Big Five conscientiousness than most Western nations, that raises the question of how Middle Easterners interpret the questionnaire items. To an Egyptian, “I am a disciplined and dutiful person” may not evoke punctuality and driving like a Scandinavian, but avoiding pork and alcohol, praying every day, speaking reverently about religion, and adhering to a conservative dress code. This example underscores the need in both CCP and CC to avoid questionnaire items that contain concepts with idiosyncratic cultural overtones.

Response Style as a Common Concern for Both Fields

One important objection to the position that CC constructs are created from their indicators, and thus cannot be untrue, is that people’s responses to survey items can be affected by culturally normative response styles, which may alter item correlations in ways unrelated to the issue under investigation (Fischer et al., 2023).

Response style is an extremely complex phenomenon that we cannot address fully in this article. But as it is a relevant concern for both CCP and CC, we will consider it briefly. One can take the view that some instances of response style are not a concern in CC. Smith (2004, 2011) has demonstrated that response style shows consistent patterns of differences across cultural samples in different surveys. Response style is thus an element of culture and is not necessarily exogenous to dimensions of culture. As conflict avoidance is an integral part of COLL (Minkov et al., 2017), it is unsurprising that COLL societies have been found to induce acquiescence. Thus, saying that an indicator of COLL is contaminated with acquiescence would be like saying that water is contaminated with H2O. If acquiescence is closely and negatively correlated with IDV-COLL, it can be viewed as an alternative, non-obtrusive measure of conformism, which is part and parcel of COLL. 6

Likewise, respondents in societies that score high on monumentalism (Latin America, Africa, and the Middle East) have a tendency to state that they have strong values that guide their behaviors in most situations, whereas so-called flexible cultures at the other end of that continuum (East Asia) exhibit the opposite tendency: respondents tend to indicate that their behaviors are situation-driven, not value-driven (Minkov et al., 2018). Thus, it is more common for Latin Americans to state that most values are “very important” to them, but for East Asians to choose the “somewhat important” option, if available. In this case, these response styles need not be viewed as error: some respondents have simply stated their values more loudly than others.

A somewhat different situation arises when a particular response style is not related to the targeted dimension of culture. If acquiescence is very closely related to, and even part of, IDV-COLL, it cannot also be very closely related to FLX-MON as defined and measured by Minkov, Bond, et al. (2018), which is intended to be only moderately related to IDV-COLL. Therefore, if FLX-MON is measured through items that allow acquiescence, such as “[I agree that] I have a high opinion of myself,” the outcome could be problematic. The reason is not that this item would distance the FLX-MON measure from the “truth” (a supposedly objective latent factor), but that the predictive properties of this version of FLX-MON would be diluted, because such an item would shift the measure in the direction of IDV-COLL. In consequence, this FLX-MON measure would, for instance, be a poorer predictor of national differences in educational achievement, as that variable is not well explained by IDV-COLL. In this case, the response style would be a concern for CC. Whether it is called “an error term,” “noise,” or “nuisance” is simply a matter of terminology. But in no way does it imply the existence of an objectively existing latent factor that is mathematically equal to what has been measured minus an error term.
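The dilution argument can be illustrated with a toy calculation. The sketch below is not drawn from any of the cited studies: all six "country" scores are invented for illustration, the criterion is constructed to track the uncontaminated FLX-MON score perfectly, and the acquiescence component is constructed to be uncorrelated with it. The names `flx_mon`, `acquiescence`, and `achievement` are hypothetical.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

# Invented scores for six hypothetical countries (not real data).
flx_mon = [1, 2, 3, 4, 5, 6]          # dimension score without acquiescence
acquiescence = [2, -2, 0, 0, -2, 2]   # built to be uncorrelated with flx_mon
achievement = [10, 20, 30, 40, 50, 60]  # toy criterion that tracks flx_mon

# An item format that admits acquiescence adds that component to the score.
contaminated = [f + a for f, a in zip(flx_mon, acquiescence)]

r_clean = pearson(flx_mon, achievement)
r_contaminated = pearson(contaminated, achievement)
print(round(r_clean, 3), round(r_contaminated, 3))
```

In this constructed case the contaminated score predicts the criterion less well than the uncontaminated one, which is the sense in which the response style is a practical concern for CC. Nothing in the calculation requires assuming a latent factor: the comparison is made purely in terms of predictive properties.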

Some types of response style may generate other problems. Strong agreement with both “I am usually lazy” and “I am usually industrious” may suggest an ability to reconcile opposites (“Although I hate to work, I do work hard because I need to survive”), or it may be due to mindless responding. One possible strategy for addressing this problem is to combine these two statements in a single item and ask the respondents which way they lean, while also avoiding Likert-type scales (as in Minkov et al., 2017, 2018, as well as the WVS items about the justifiability of various behaviors). In CC, one does not need Likert-type scales to measure the intensity of endorsement of a given response. Percentages of respondents who have provided a particular response can be used to measure intensity at the ecological level on a scale from 0 to 100.
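As a minimal sketch of the percentage-based approach, the snippet below scores two hypothetical countries on a forced-choice item by the share of respondents choosing one option. The item wording, the country labels, and the function name `country_percentages` are assumptions made for illustration, and the responses are invented.

```python
from collections import defaultdict

def country_percentages(responses, target):
    """Return, per country, the percentage (0-100) of respondents choosing `target`."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for country, answer in responses:
        totals[country] += 1
        if answer == target:
            hits[country] += 1
    return {c: 100.0 * hits[c] / totals[c] for c in totals}

# Invented answers to a hypothetical forced-choice item:
# "Which way do you lean: usually lazy or usually industrious?"
responses = [
    ("A", "industrious"), ("A", "industrious"), ("A", "lazy"), ("A", "industrious"),
    ("B", "lazy"), ("B", "industrious"), ("B", "lazy"), ("B", "lazy"),
]

scores = country_percentages(responses, "industrious")
print(scores)  # {'A': 75.0, 'B': 25.0}
```

Each respondent gives only a categorical answer, yet the country-level score varies continuously between 0 and 100, so intensity is captured at the ecological level without an agreement scale.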

Nevertheless, as CC research frequently relies on secondary data sources, researchers will often need to work with data using response formats that were designed without considering cultural differences in response styles. This will call for creative treatment of the data on a case-by-case basis. An extensive range of approaches for addressing response styles in social scientific research is already available (Podsakoff et al., 2012). Researchers in both CC and CCP should make careful and judicious use of these approaches according to the needs of their study, when constructing their measures and interpreting patterns in data, while recognizing that the most suitable approaches may differ across the two fields because of their different goals, data sources, and epistemological emphases. We caution strongly against any dogmatic or “one-size-fits-all” approach to handling response styles.

Conclusions

We have highlighted the fundamental differences between the goals, assumptions, and limitations of CCP and CC, reaching the conclusion that these are two different disciplines, studying distinct phenomena (individual patterns versus societal patterns) in fairly different ways and for different purposes. These two types of patterns are not necessarily similar. Therefore, tests that compare the invariance of individual-level structures are at best irrelevant and at worst directly counterproductive in CC: they amount to looking for something that is not supposed to exist. It is like assuming that the principles of quantum physics will be applicable to the objects that we see with our eyes or vice versa.

We note that the dimensions-as-manifolds concept that we defend here is new to the CC literature. It was absent in the work of Hofstede (1980, 2001) and other prominent pioneers of CC. We believe that it is highly desirable that contemporary CC researchers embrace this novel concept, as it can bring much-needed clarity and logic to CC methodology.

Rather than continue a fruitless debate on invariance testing, we suggest that CCP and CC continue to look for ways to collaborate, such as exploring how findings from either discipline can help elucidate issues in the other. For example, Minkov et al. (2020) showed that the individual-level correlation between religiousness and the affective component of happiness is a function of a nation’s emancipative values or IDV-COLL score. Another example is the extensive literature (for instance, Schmitt et al., 2008) demonstrating that personality and value differences between men and women are larger in countries with greater gender emancipation and a higher IDV-COLL score. Thus, a country’s position on a CC construct can explain some individual-level phenomena within it (Smith & Bond, 2019). Conversely, countries can be scored on differences in individual-level patterns (such as the Big Five) and these measures can provide alternative measures of national differences. Although they would not be measures of culture, they could enrich our understanding of culture. As long as the two fields appreciate each other’s specificities, they can collaborate fruitfully in many domains.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The work of the first author was supported by the Basic Research Program of the Higher School of Economics, Russian Federation. The other authors received no financial support for the research, authorship and/or publication of this article.