Abstract

A formal model reveals how the information environment affects international races to implement a powerful, dangerous new military technology, which may cause a “disaster” affecting all states. States implementing the technology face a tradeoff between the safety of the technology and performance in the race. We compare three scenarios in which information about capabilities is unknown, private, or public. More decisive races, in which small performance leads produce larger probabilities of victory, are usually more dangerous. In addition, revealing information about rivals’ capabilities has two opposing effects on risk: states discover either that they are far apart in capability and compete less or that they are close in capability and drastically reduce safety to win. Therefore, the public information scenario is less risky than the private information scenario except under high decisiveness. Finally, regardless of information, the larger the eventual loser’s impact on safety relative to the eventual winner’s, the more dangerous the race.

Keywords

Introduction

Uncertainty is central to the study of arms races in the field of international relations. It underpins analyses of when arms races lead to conflict (Jervis 1976; Kydd 1997; Schelling 1980) and studies of the potential of treaties and other forms of international cooperation (Kydd and Straus 2013). In a world characterized by anarchy, it is likely the information environment will play a key role in determining the impact of emerging technologies and the competitions to develop them. We study the role of incomplete information in a setting that has been largely neglected in the international relations literature: races for powerful new technologies. Some scholars posit that races for such technologies may become a key feature of international politics in the coming decades, as states compete to be the first to develop new technologies such as advanced artificial intelligence (AI) or nanotechnology that could give them a sudden, significant increase in capability over other states.

Such races have important differences from competitions to build larger numbers of existing armaments. 1 An important feature of these races is that they are associated with different kinds of risk. Sometimes this risk is an exogenous feature of the international system that is exacerbated by an arms race. If a state’s technological development has the potential to cause a relative power shift, its rivals may attack to prevent such development (Fearon 1995). In other cases, which we focus on in the present work, the risk of negative externalities is inherent to the development and implementation process itself. For example, biological weapons development can produce pathogens that affect a broad range of actors beyond those involved in development. In April 1979, a research facility in the Soviet biological weapons program caused an anthrax outbreak in the city of Sverdlovsk that killed over 100 people. Likewise, genetic sequencing of the virus that caused the 1977–1978 influenza epidemic suggests that the virus likely came from a research laboratory (Rozo and Gronvall 2015). Biological weapons also carry a relatively high probability of infecting the user, which has been offered as one explanation for why there have been relatively few uses of such weapons despite the weakness of the Biological Weapons Convention’s provisions (Ord 2020). Indeed, some scholars posit that substantial global risks may result from such technology races (Cave and ÓhÉigeartaigh 2018; Stern 2002). Because of these risks, actors face an inherent safety-performance tradeoff: they must choose how to allocate resources between advancing the performance level of a technology, thereby increasing the probability of winning the race, and investing in the safety of the technology, which lowers the risk of disaster (Trager et al. 2021). Such allocations are determined by the strategic contexts in which the actors find themselves.
Actors’ information about their rivals’ capabilities interacts with this tradeoff in a number of ways. Overestimation of a rival’s technological capability may lead an actor to overinvest in capability, increasing risk relative to the complete information scenario (Stafford et al. 2021). In other cases, actors may learn that they are far behind in the race and choose to cede the prize to their opponent, lowering risk (Bimpikis et al. 2019).

Some of these dynamics are illustrated by the race for the first nuclear bomb during World War II, when physicists involved in the Manhattan Project expressed concerns over the safety-performance tradeoff. Edward Teller feared that a nuclear fusion reaction could ignite the atmosphere, ending life on Earth. He privately urged the U.S. government to delay development so that additional calculations and tests could be performed. Though the team was able to show that this outcome was improbable, Teller and his colleagues remained worried until after the Trinity test was conducted. Part of the reason development was favored over safety was the U.S. government’s uncertainty over the progress of Germany’s nuclear weapons program. Albert Einstein, whose 1939 letter to U.S. President Franklin Roosevelt had urged the government to pursue atomic research, later remarked, “Had I known that the Germans would not succeed in developing an atomic bomb, I would have done nothing for the bomb.” (Newsweek 1947). 2

A number of scholars believe that the development of advanced forms of artificial intelligence will exhibit similar strategic dynamics (Russell 2019; Yudkowsky 2011). Indeed, AI has already begun to shape international and domestic politics in profound ways, expanding the use of fully automated drones (Horowitz 2018) and heightening domestic surveillance in authoritarian regimes (Beraja et al. 2023). Progress in the field is also rapid. On average, experts in the field believe there is a 50 percent chance of developing an AI that surpasses human performance on all job tasks by 2060 (Zhang et al. 2022). Such advanced AI systems may carry enormous benefits to states, but they also pose distinct risks, ranging from AI outputs that are misaligned with human preferences to control of powerful AI systems by expansionary states or other dangerous actors (Brundage et al. 2018; Russell 2019). 3 Though AI development is currently led by scientists with a strong preference for open sharing of knowledge, as the rewards from advanced AI become more apparent, states may, as with nuclear weapons, have incentives to increase the pace and secrecy of development, with as-yet-unknown effects on risk.

Likewise, such a tradeoff is likely to become increasingly important in biological research for both military and civilian use. The field of synthetic biology has an explicit goal of reducing the level of tacit knowledge necessary to produce new biological agents (Mukunda et al. 2009), which could increase the technology’s accessibility to state and nonstate actors. The proliferation of terrorist actors, concurrent with the publication of gene sequences for Ebola, influenza, and other deadly pathogens on the Internet, led to an increased focus on preventing proliferation (Stern 2002). Moreover, the cost of genome sequencing, and thus of developing deadly biological agents, has been halving faster than every 2 years, making the development of weaponized agents accessible to an ever-increasing set of actors (Mukunda et al. 2009). Finally, accidental laboratory leaks can also cause deadly outbreaks, exacerbating risk (Lipsitch and Inglesby 2014; Rozo and Gronvall 2015). Such a rapidly evolving threat has forced political actors to consider a safety-performance tradeoff for biological research (Stern 2002).

We develop a formal model that captures many of these strategic considerations. We solve for the perfect Bayesian equilibria under three scenarios regarding information about capabilities: unknown, private, and public. First, we show that more decisive races, in which small leads in performance produce larger probabilities of victory in the race, are weakly more dangerous under most parameter values. Second, we show that revealing information about the capabilities of rivals has two opposing effects on disaster risk. The benefit is that actors may discover that they are sufficiently far apart in capability and will compete less. The cost is that actors may discover they are close in capability and thus engage in a dangerous race to the bottom, cutting corners on safety to win the race. As a result, the public information scenario is more dangerous than the private information scenario only under high decisiveness. As decisiveness decreases, the first effect dominates the second, so that public knowledge of capabilities is welfare-improving. Third, in all information scenarios, we find that the larger the impact of the eventual loser on safety, relative to the eventual winner, the more dangerous is the race due to a public-good effect.

Our work is organized as follows. The next section provides an overview of the interaction among information, investments, and risk in the arms race literature, finding that existing models fail to fully capture the strategic situation in which states find themselves when developing risky new technologies. The section after that describes our choice of model primitives, grounded in existing cases of technology races. The fourth section presents the base model, and the fifth describes the forces that generate risk under each information scenario and illustrates these forces in a series of historical examples. In the sixth section, we consider the role of safety sharing, enmity, and regime type on risk. The final section concludes.

Information, Arms Racing, and Risk

An extensive literature exists on how information affects the risk of conflict in arms races. The sorts of incomplete information that drive the risk of conflict appear to fall into three broad categories (Ramsay 2017). The majority of the literature has focused on uncertainty over actors’ costs of conflict (Kydd 1997). A second strand of literature focuses on psychological factors influencing states’ risk-taking, invoking such causes as states’ mutual tendency to be either overly optimistic about their own chances of winning a conflict (Wittman 2009) or overly pessimistic about the intent of a rival’s arms buildup (Jervis 1976). Finally, a third strand of literature, in which our work is situated, focuses on the role of uncertainty about the capabilities of rivals. Across literatures, the existence of a baseline bargaining model of conflict (Fearon 1995) has given scholars a framework with which to analyze the role of uncertainty in war. This has led to a number of robust analytical results, including that weaker types are less likely to initiate conflict (Powell 2004), that a higher variance over the distribution of types increases risk (Reed 2003; Wittman 2009), and that perfectly peaceful equilibria only obtain when the joint cost of war is large enough (Fey and Ramsay 2011).

Existing literature has studied the role of information in quantitative arms races, in which states accumulate arms but the level of technology remains fixed. 4 Kydd (2000) and Meirowitz and Sartori (2008) focus on situations in which states are able to arm in private before bargaining. Kydd shows that states perceived as having relatively low capabilities tend to arm in private in order to secure better bargaining outcomes. Meirowitz and Sartori (2008) endogenize the decision to disclose capabilities, arguing that states prefer to keep their capabilities private to secure better bargaining outcomes, even when the risk of war increases. A second class of models studies asymmetric arms races, in which a weaker state seeks to acquire new military capabilities to narrow the gap with a stronger state. Bas and Coe (2016) study a dynamic model in which a strong state obtains a noisy signal about an arming state’s level of capabilities, finding that the estimated time to completion of the arming is more predictive of preventive war than the mere existence of arming. 5

The empirical evidence on the influence of arms races on war is mixed. Early work by Richardson (1960) and Wallace (1979, 1982) finds a positive correlation between rapid accumulation of arms and the outbreak of war. Later work has qualified these results, finding that only some types of arms races are correlated with an increased outbreak of war. Horn (1987) finds that brief periods of rapid arming do not heighten the risk of war, while Sample (1997) finds that, while most arms races are associated with an increased probability of conflict, those involving nuclear weapons buildups or those between bitter rivals are not.

In contrast to the study of arms races and war, the study of qualitative arms races has been hindered by the lack of a standard model for thinking about such competitions. As a result, we argue, existing formalizations of arms races poorly describe the strategic environment in which states find themselves. First, the rewards to qualitative races may be inordinately large, perhaps leading to rapid, discontinuous power shifts between a state that develops a new technology and its geopolitical rivals. This view is epitomized by Russian President Vladimir Putin, who said with regard to military uses of AI: “the one who becomes the leader in this sphere will be the ruler of the world.” (AP 2017). As such, it makes little sense to view the outcome of the race in terms of bargaining over shares of a pie (Fearon 1995). Second, the risks resulting from such races may be quite different along a number of dimensions compared to quantitative arms races, whose main risks are war and an inefficient allocation of economic resources. 6 Instead, risks from technology races may be both far larger in consequence and far less likely to be realized than risks from quantitative races (Stern 2002). However, states have far greater control over the level of risk than they do over the occurrence of war. 7 For example, biological research can continue, albeit at a slower pace, even if a state were to prohibit the publication of most biological research and keep risk to a minimum. 8 This allows states to control the tradeoff between the risks from speeding up development and the risk of a geopolitical rival developing the technology first.

In this work, we develop a model that explicitly takes into account such features. This model contributes to a small but growing literature on qualitative arms races. Naude and Dimitri (2020) study an evolutionary model of a qualitative race within one country, showing that taxing technological development and using public procurement can incentivize cooperation and reduce risk. Stafford et al. (2021) employ a dynamic model to study the effects of a capabilities gap on risks in qualitative races, finding that risk is higher for a larger gap in players’ performance levels when enmity is high but is lower when enmity is low. They show that there exists a safety-performance tradeoff in which investments in safety and investments in research progress are complementary goods. Both of these models assume that research capability levels are common knowledge, an assumption that seems unlikely to hold in real-world races. Both the Manhattan Project and the Soviet bioweapons program, for example, were carried out under conditions of high secrecy. While Armstrong et al. (2016) compare risk in a technology race under public and private knowledge of capabilities, they make the strong assumption that states have perfect knowledge of the R&D process, such that the state with the highest performance wins the contest for certain. We generalize their model to account for uncertainty both about the research process and about rivals’ capabilities. It is to this uncertainty that we now turn.

Model Primitives

In our model, two states $i \in \{1, 2\}$ compete to build a significant military technology, such as a new biological weapon or powerful AI system. Nature endows each state with a research capability level $x_i$, which we can think of as determined exogenously by existing attributes such as GDP and military expenditures. 9 Depending on the information scenario, explained in detail below, $x_i$ may be unknown, privately known, or publicly known. Each state’s capability is drawn independently from a commonly known distribution $G(x_i)$, which we assume, for simplicity and without much loss of generality, to be uniform on $[0, \mu]$. Each state chooses a level of safety investment $s_i \in [0, 1]$. However, investing in safety research detracts from a state’s performance in the race in a linear fashion. We denote a state’s net performance level as $k_i = x_i - s_i$. If state $i$ wins the race, it then implements the technology and receives its military benefits; with probability $s_i$, implementation is successful, and with probability $1 - s_i$, a disaster is incurred. 10

For now, we assume that only the winner has a chance to implement the technology and thus contribute to the safety of the process, though later we relax this assumption and allow both states to affect the risk of disaster. We normalize the value of winning the race to 1 and the value of a disaster to 0. If i loses the race, its rival j has a chance to implement the technology. We assume that the disaster affects all states equally. 11

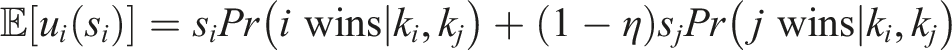

However, if a rival wins the race and implementation is successful, a state receives only an intermediate payoff (1 − η), where η ∈ (0, 1] represents enmity, or the opportunity cost of losing the race. In effect, then, we have the following utility ordering for each state i:

Finally, we assume that states’ performance levels k

i

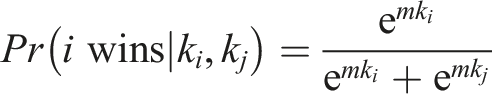

translate into success in the race according to a logistic contest success function (CSF):

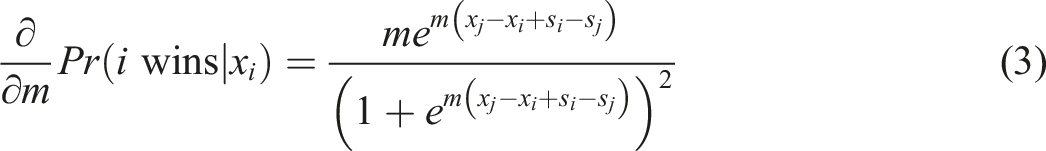
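As a worked restatement of the payoffs described above (with only the winner’s safety mattering), state $i$’s expected utility can be written as follows. Here $P_i(k_i, k_j)$ stands for $i$’s probability of winning under the CSF; the symbols $U_i$ and $P_i$ are introduced only for this illustration and may not match the paper’s own notation.

```latex
\begin{aligned}
U_i(s_i, s_j) &= P_i(k_i, k_j)\,\big[\, s_i \cdot 1 + (1 - s_i) \cdot 0 \,\big]
  + \big(1 - P_i(k_i, k_j)\big)\,\big[\, s_j\,(1 - \eta) + (1 - s_j) \cdot 0 \,\big] \\
&= P_i(k_i, k_j)\, s_i + \big(1 - P_i(k_i, k_j)\big)(1 - \eta)\, s_j,
  \qquad k_i = x_i - s_i,\; k_j = x_j - s_j .
\end{aligned}
```

Raising $s_i$ increases the value of winning but lowers $k_i$ and therefore $P_i$, which is the safety-performance tradeoff in compact form.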

Though contest success functions have been commonly used to model war outcomes (cf. Fearon 2018; Skaperdas 1998; Hirshleifer 1995), their use in a technology race warrants discussion. First, the use of a CSF allows us to model the uncertainty inherent in innovation. Even given a known performance level, research outcomes are the result of an inherently random process that involves a certain amount of luck as researchers seek to recombine existing knowledge in novel ways (Weitzman 1998). 12 Even if another research team has lower capabilities, it has a positive probability of making the discovery first. Thus, economists commonly model innovation races between firms using a contest success function (Baye and Hoppe 2003). Second, we choose the logistic CSF in particular because under it the probability of winning depends on the difference in performance levels, so the size of a state’s lead matters for success. This appears to be an important feature in determining risks from qualitative races. Both the Manhattan Project scientists and Soviet biologists stated that the potential of their respective rivals, Nazi Germany and the United States, to catch up in the race was a driving factor encouraging them to favor performance over safety (Ord 2022). 13

An important focus of our paper is the decisiveness parameter in the CSF, m ≥ 0. This determines the rate at which additional effort translates into success. At low values of decisiveness, progress is highly uncertain, such that even a state with relatively low capability may win the race due to “luck” or some other resource that is not accounted for by x, but as m → ∞, the state with the highest value of k wins the race with certainty. 14 In our context, m is inversely related to the level of uncertainty over the research process. 15

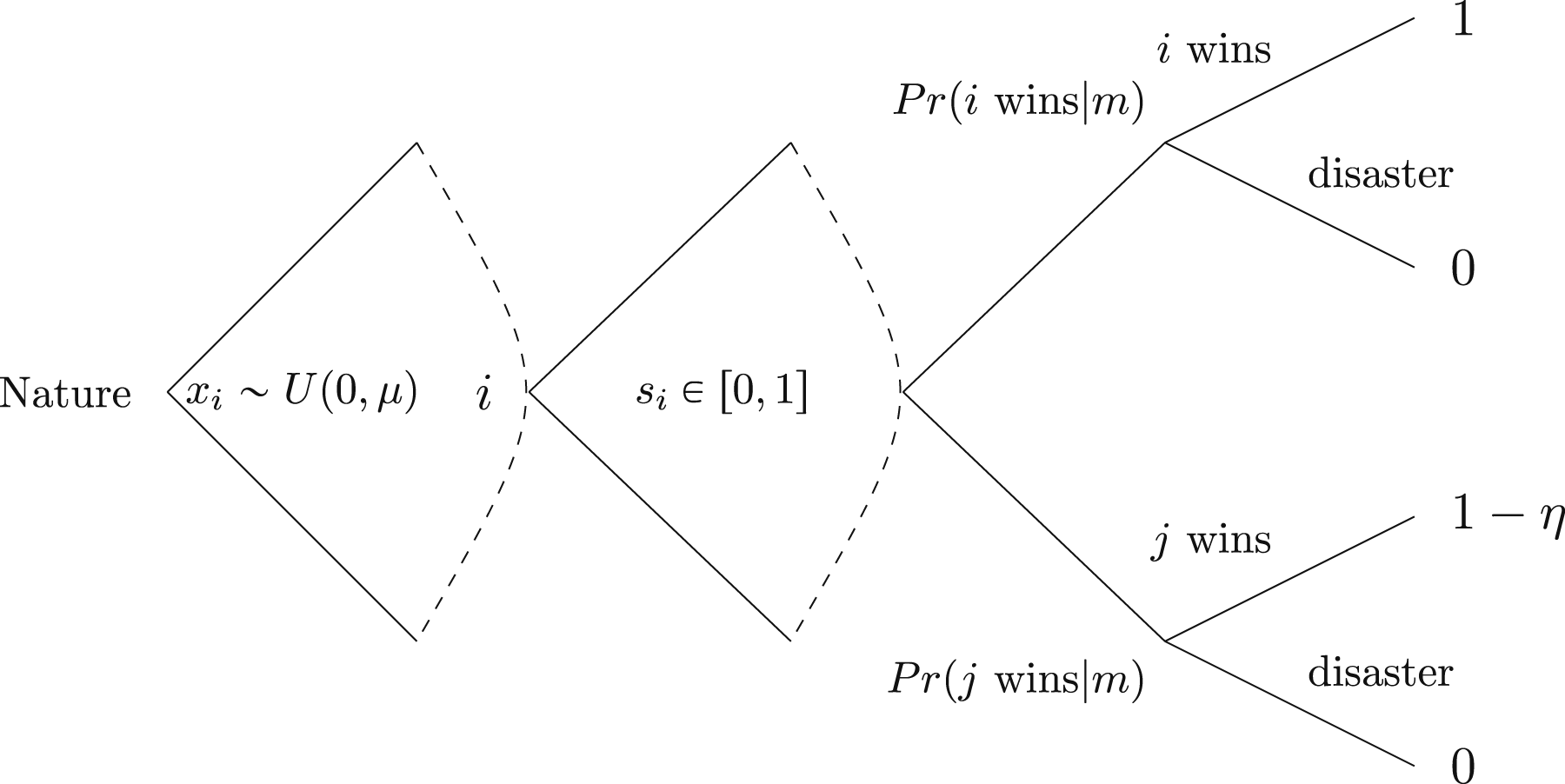

In cases when m is low, mastering the steps necessary to build a technology is likely to be difficult, requiring high levels of expertise or a search through a wide space of ideas in order to find one that “works.” In other cases, m is high, representing a high level of clarity about how research capabilities translate into success. This is likely to be the case if a new technology is an iteration of an existing one. Summarizing the above description, Figure 1 presents the structure of the game. The game tree.
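The effect of m can be illustrated numerically. The following is a minimal Python sketch, assuming the standard difference-form logistic CSF $P_i = 1/(1 + e^{-m(k_i - k_j)})$, which may be parameterized differently from the paper’s own expression; the function names `win_prob` and `marginal_return` are hypothetical. It reports the win probability of a state holding a fixed performance lead of 0.1, along with the marginal gain in win probability from one more unit of performance, $m P_i (1 - P_i)$, which equals $m/4$ when the states are tied.

```python
import math


def win_prob(k_i: float, k_j: float, m: float) -> float:
    """P(i wins) under an assumed difference-form logistic CSF with decisiveness m:
    1 / (1 + exp(-m * (k_i - k_j))), written to avoid overflow for large |m * (k_i - k_j)|."""
    z = m * (k_i - k_j)
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def marginal_return(k_i: float, k_j: float, m: float) -> float:
    """Derivative of P(i wins) with respect to k_i, equal to m * P * (1 - P)."""
    p = win_prob(k_i, k_j, m)
    return m * p * (1.0 - p)


if __name__ == "__main__":
    for m in (0.5, 2.0, 10.0, 100.0):
        print(f"m={m:>6}: P(lead of 0.1 wins)={win_prob(0.6, 0.5, m):.4f}, "
              f"marginal return at a tie={marginal_return(0.5, 0.5, m):.2f}")
```

Under this assumed form, a fixed lead converts into near-certain victory as m grows, and the gain from shifting effort out of safety at a tie rises linearly in m, which is the channel through which more decisive races invite corner-cutting.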

Base Model

To examine the risk that arises under different information structures, we begin by characterizing the unique symmetric Bayesian Nash Equilibrium of the game under no information, private information, and public information conditions. This will allow us to calculate the expected risk of the race given the distribution of states’ capabilities G(x). 16

No Information

We begin with the no information case. In this scenario, no state knows its own capability. This is distinct from the uncertainty that comes from decisiveness. With low values of decisiveness, states may know their own performance level and can channel their resources toward developing the technology, with uncertain results. Here, states also have no information about their own capability. This is a more fundamental source of uncertainty: does a state’s stock of resources even contribute to technological progress at all? Realistically, then, the no information case represents a lower bound on states’ knowledge, as in the real world states are likely to have at least some understanding of how to build a novel technology. Because states have the same prior beliefs over the type space, in the symmetric Nash equilibrium, each will choose the same strategy. Here we derive the equilibrium safety level of each player as well as the expected disaster risk over the distribution of states’ capabilities.

In the case in which states do not know their capabilities, the unique symmetric BNE strategy is given by

First, we note that the equilibrium outcome is written in terms of $F(c)$, where $C_i = X_i + V_i - V_j$ and $V_i \sim$ Gumbel(1,

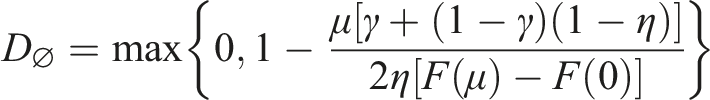
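The Gumbel noise terms $V_i$ fit naturally with the logistic CSF: the difference of two independent Gumbel variables with a common scale is logistically distributed, and the location parameter cancels in $V_i - V_j$. Below is a minimal Monte Carlo check in Python. Because the second Gumbel parameter is cut off in this excerpt, it assumes a scale of $1/m$, under which $P(k_i + V_i > k_j + V_j)$ should match the difference-form logistic CSF $1/(1 + e^{-m(k_i - k_j)})$. The helper names are hypothetical.

```python
import math
import random


def logistic_csf(k_i: float, k_j: float, m: float) -> float:
    """Assumed difference-form logistic CSF: 1 / (1 + exp(-m * (k_i - k_j)))."""
    return 1.0 / (1.0 + math.exp(-m * (k_i - k_j)))


def gumbel(loc: float, scale: float, rng: random.Random) -> float:
    """One Gumbel(loc, scale) draw by inverse transform: loc - scale * ln(-ln U)."""
    u = rng.random()
    while u == 0.0:  # random() lies in [0, 1); exclude 0 so that ln(u) is defined
        u = rng.random()
    return loc - scale * math.log(-math.log(u))


def simulated_win_prob(k_i: float, k_j: float, m: float,
                       n: int = 100_000, seed: int = 0) -> float:
    """Share of draws with k_i + V_i > k_j + V_j, where V ~ Gumbel(1, 1/m).

    The location 1 cancels in V_i - V_j; the scale 1/m is an assumption.
    """
    rng = random.Random(seed)
    wins = 0
    for _ in range(n):
        if k_i + gumbel(1.0, 1.0 / m, rng) > k_j + gumbel(1.0, 1.0 / m, rng):
            wins += 1
    return wins / n


if __name__ == "__main__":
    print(simulated_win_prob(0.6, 0.5, m=10.0), logistic_csf(0.6, 0.5, m=10.0))
```

With 100,000 draws, the simulated share should agree with the closed-form logistic value to roughly two decimal places.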

Now we turn to the disaster risk. This is the expected probability of disaster over the distribution of types G(x) who play their BNE strategies. Since all states are playing the same action, the expected risk of disaster is simply given by

In any distribution of capability levels the expected level of disaster risk is given by

This value represents the expected risk from a qualitative race to a neutral third party who cannot query states for their types. This provides a useful benchmark by which we can compare the level of risk even as states are permitted increased knowledge of their own or their opponent’s capabilities.
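The expected disaster risk can be approximated by simulation for a given strategy. The Python sketch below assumes the base model as described (only the winner’s safety matters, capabilities drawn independently from a uniform distribution on $[0, \mu]$) and the same assumed difference-form logistic CSF. The `strategy` argument is a hypothetical placeholder, not the paper’s equilibrium strategy. A constant function corresponds to the no information case, where states cannot condition on x, and a function of a state’s own capability mimics the private information case. When both states play the same constant safety level s, the winner’s safety is s whoever wins, so the estimate equals $1 - s$, which provides a simple check.

```python
import math
import random
from typing import Callable


def win_prob(k_i: float, k_j: float, m: float) -> float:
    """Assumed difference-form logistic CSF: 1 / (1 + exp(-m * (k_i - k_j))), overflow-safe."""
    z = m * (k_i - k_j)
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def expected_disaster_risk(strategy: Callable[[float], float], mu: float, m: float,
                           n: int = 50_000, seed: int = 0) -> float:
    """Monte Carlo estimate of expected disaster risk when both states use `strategy`.

    `strategy` maps a state's own capability x to a safety level s in [0, 1]
    (hypothetical placeholder). Each draw contributes
    P(i wins) * (1 - s_i) + P(j wins) * (1 - s_j), since only the winner's safety matters.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n):
        x_i, x_j = rng.uniform(0.0, mu), rng.uniform(0.0, mu)  # capabilities ~ U[0, mu]
        s_i, s_j = strategy(x_i), strategy(x_j)
        p_i = win_prob(x_i - s_i, x_j - s_j, m)  # net performance k = x - s
        total += p_i * (1.0 - s_i) + (1.0 - p_i) * (1.0 - s_j)
    return total / n


if __name__ == "__main__":
    # Constant safety (no information style): risk is 1 - s regardless of capabilities.
    print(expected_disaster_risk(lambda x: 0.7, mu=1.44, m=5.0))
    # Hypothetical type-dependent strategy (private information style).
    print(expected_disaster_risk(lambda x: min(1.0, x), mu=1.44, m=5.0))
```

The public information case needs strategies that depend on both capabilities, so it is not covered by this single-argument `strategy` interface.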

Private Information

In this section, we consider the case in which each state knows its own capability level but not its rival’s. This situation more closely resembles realistic qualitative races, which often involve closely guarded state secrets. Power-seeking states have a strong incentive to keep their technological capabilities hidden from rivals in order to win the race, as even proof-of-concept demonstrations could lead to increased competition (Bimpikis et al. 2019). The Soviet biological weapons program, for example, was conducted in so-called “closed cities,” which were not printed on most maps and which were off-limits even to Soviet citizens. In the private information case, each state $i$ can condition its safety level on its own capability, choosing a strategy $s^{\text{private}}(x_i)$. Before we establish the level of safety in this scenario, we begin with a lemma showing that performance, or capability less safety, is always increasing in $x_i$:

Let $k_i(x_i) = x_i - s_i(x_i)$. In the private information scenario, at any BNE, $k_i$ is strictly increasing in $x_i$.

Now we solve for the equilibrium safety level.

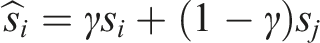

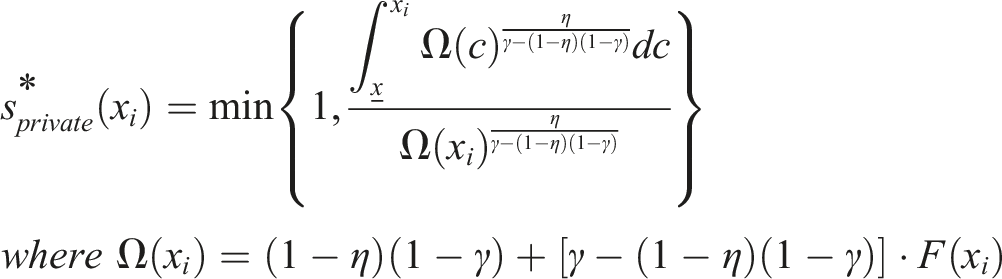

There exists a unique symmetric Bayesian Nash Equilibrium in pure strategies. The strategy is given by

Here, we see that states increase their safety levels as their capabilities increase. Because more capable actors are more likely to win the race, a state that knows it is at the high end of the distribution can trade off some performance for a higher level of safety. In addition, as in the no information case, we see that as the enmity level increases, states put less effort into safety. Finally, as before, the overall disaster risk is given by

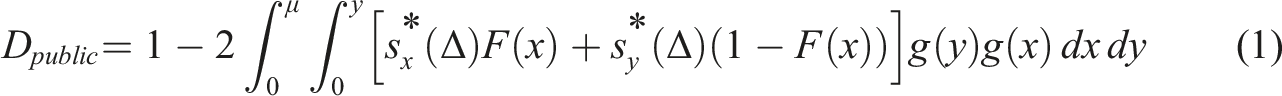

The disaster risk in the private information scenario is given by

Public Information

Now we solve for the case in which both states are fully aware of each other’s capabilities. While states are often incentivized to keep capabilities secret in order to impede rivals’ progress, in other cases, states may want to demonstrate their capabilities to deter potential rivals, as in the case of the U.S. and Soviet hydrogen bomb tests in 1952 and 1953, respectively. Alternatively, this scenario corresponds to states of the world in which espionage techniques make secret-keeping impossible. In this scenario, the difference in capabilities determines states’ safety choices and the risk of disaster. Here, we denote the leader’s capability as x and the laggard’s as y. Denote Δ ≔ x − y as the variable on which states condition their safety choices. We can then find a unique pure strategy Nash equilibrium.

There exists a unique pure strategy Nash equilibrium for the public information case for all values of m > 0.

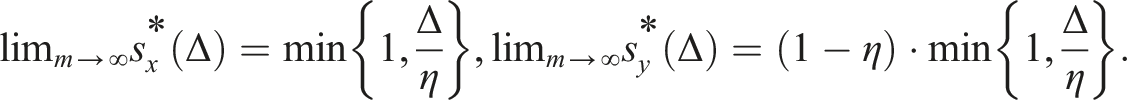

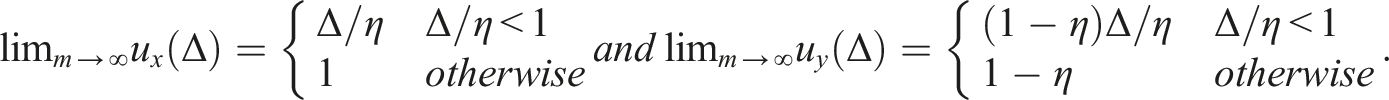

Denote the solution to this system of equations as s*(Δ). Except in the case where m → ∞, equilibrium strategies do not permit a closed-form solution. In order to give intuition, then, in the following two corollaries, we show payoff and strategy equivalence with Armstrong et al. (2016) in the limit.
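Because the public information equilibrium generally has no closed form, it can be approximated numerically. The Python sketch below is not the authors’ solution method. It restricts safety to a grid on [0, 1] and alternates best responses in the base-model payoff $P_i s_i + (1 - P_i)(1 - \eta) s_j$ (only the winner’s safety matters), using the same assumed difference-form logistic CSF. Function names and the grid resolution are hypothetical choices. Best-response iteration is not guaranteed to converge, so the function reports whether it reached a fixed point; a fixed point is a mutual best response among grid strategies.

```python
import math

GRID = [i / 100 for i in range(101)]  # candidate safety levels 0.00, 0.01, ..., 1.00


def win_prob(k_i: float, k_j: float, m: float) -> float:
    """Assumed difference-form logistic CSF, computed without overflow."""
    z = m * (k_i - k_j)
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def utility(s_own: float, s_rival: float, x_own: float, x_rival: float,
            m: float, eta: float) -> float:
    """Base-model expected payoff: win and succeed -> 1, rival wins and succeeds -> 1 - eta,
    disaster -> 0; only the winner's safety level matters."""
    p = win_prob(x_own - s_own, x_rival - s_rival, m)
    return p * s_own + (1.0 - p) * (1.0 - eta) * s_rival


def best_response(s_rival: float, x_own: float, x_rival: float, m: float, eta: float) -> float:
    """Grid point maximizing own payoff given the rival's safety (first maximizer on ties)."""
    return max(GRID, key=lambda s: utility(s, s_rival, x_own, x_rival, m, eta))


def public_equilibrium(x: float, y: float, m: float, eta: float, max_iter: int = 200):
    """Alternate best responses for the leader (capability x) and laggard (capability y).

    Returns (s_leader, s_laggard, converged). A converged pair is a mutual best
    response on the grid; convergence itself is not guaranteed.
    """
    s_x, s_y = 0.5, 0.5
    for _ in range(max_iter):
        new_x = best_response(s_y, x, y, m, eta)
        new_y = best_response(new_x, y, x, m, eta)
        if new_x == s_x and new_y == s_y:
            return s_x, s_y, True
        s_x, s_y = new_x, new_y
    return s_x, s_y, False


if __name__ == "__main__":
    print(public_equilibrium(0.8, 0.3, m=1.0, eta=0.9))    # low decisiveness
    print(public_equilibrium(0.55, 0.5, m=20.0, eta=0.9))  # close race, high decisiveness
```

Running `public_equilibrium` over several values of Δ = x − y and m gives a rough numerical picture of how safety choices depend on the gap and on decisiveness. A finer grid, or a root-finder applied to the first-order conditions, would be needed for accurate values.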

[Strategy equivalence] As m → ∞, strategies converge to the following expressions:

[Payoff equivalence] As m → ∞, states’ utilities converge to the following expressions:

From these limit expressions, we see that safety is positively associated with the difference between the leader and laggard and, as in the other scenarios, negatively associated with enmity. Unlike in the private information scenario, in which highly capable states always play higher levels of safety, here capable states will cut corners on safety if their rival is close in capability. If states can observe their rival’s capability, cutting corners on safety is rational only when the states are close enough in capability that doing so substantially increases a state’s chance of winning the race. We compute the associated disaster risk as follows:

Information and Risk

We now turn to comparisons of disaster risk under our three information scenarios. 18 We begin with a derivation of comparative statics in the model and then present empirical examples of these forces from real-world qualitative races.

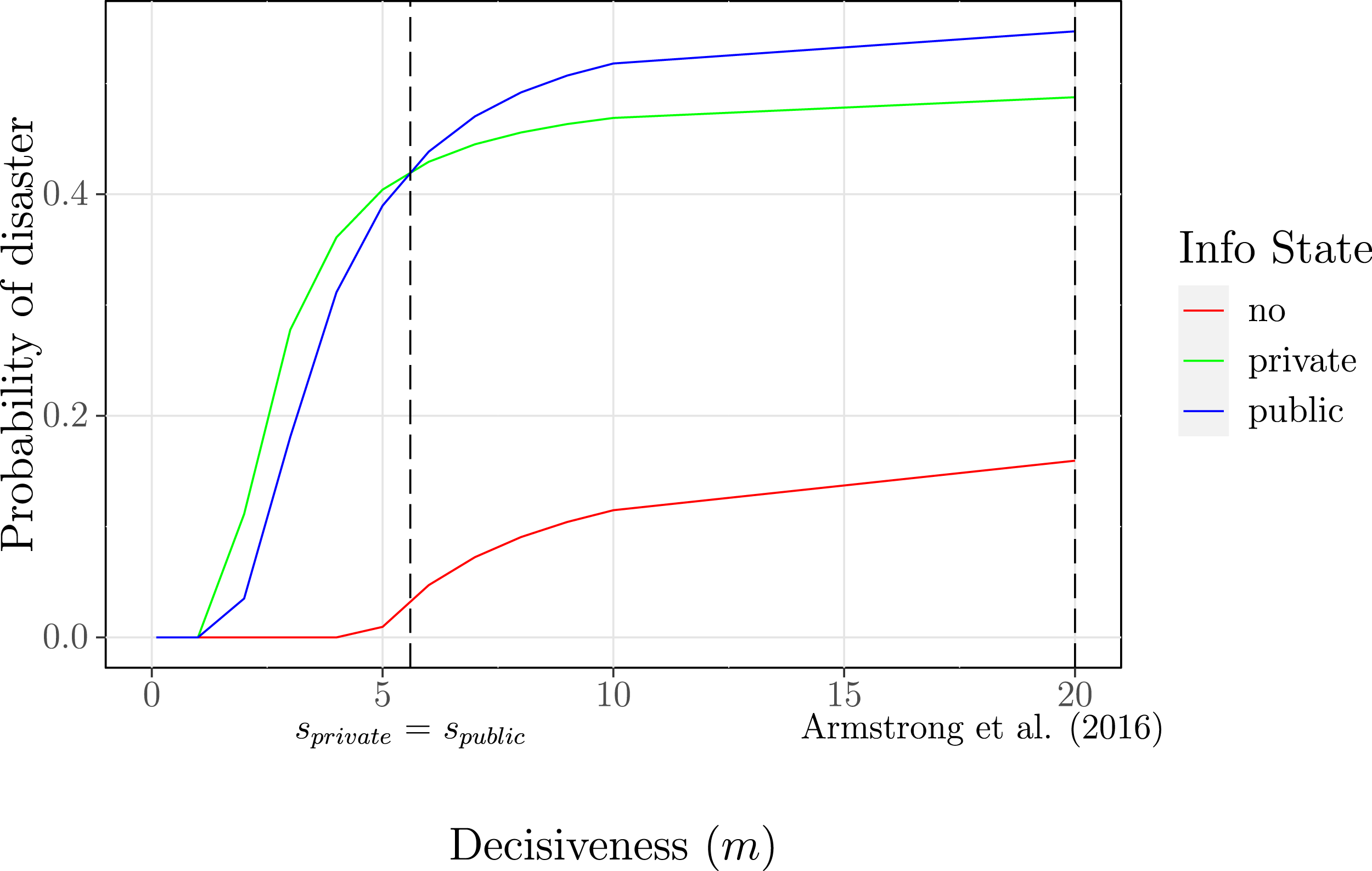

Information and Welfare

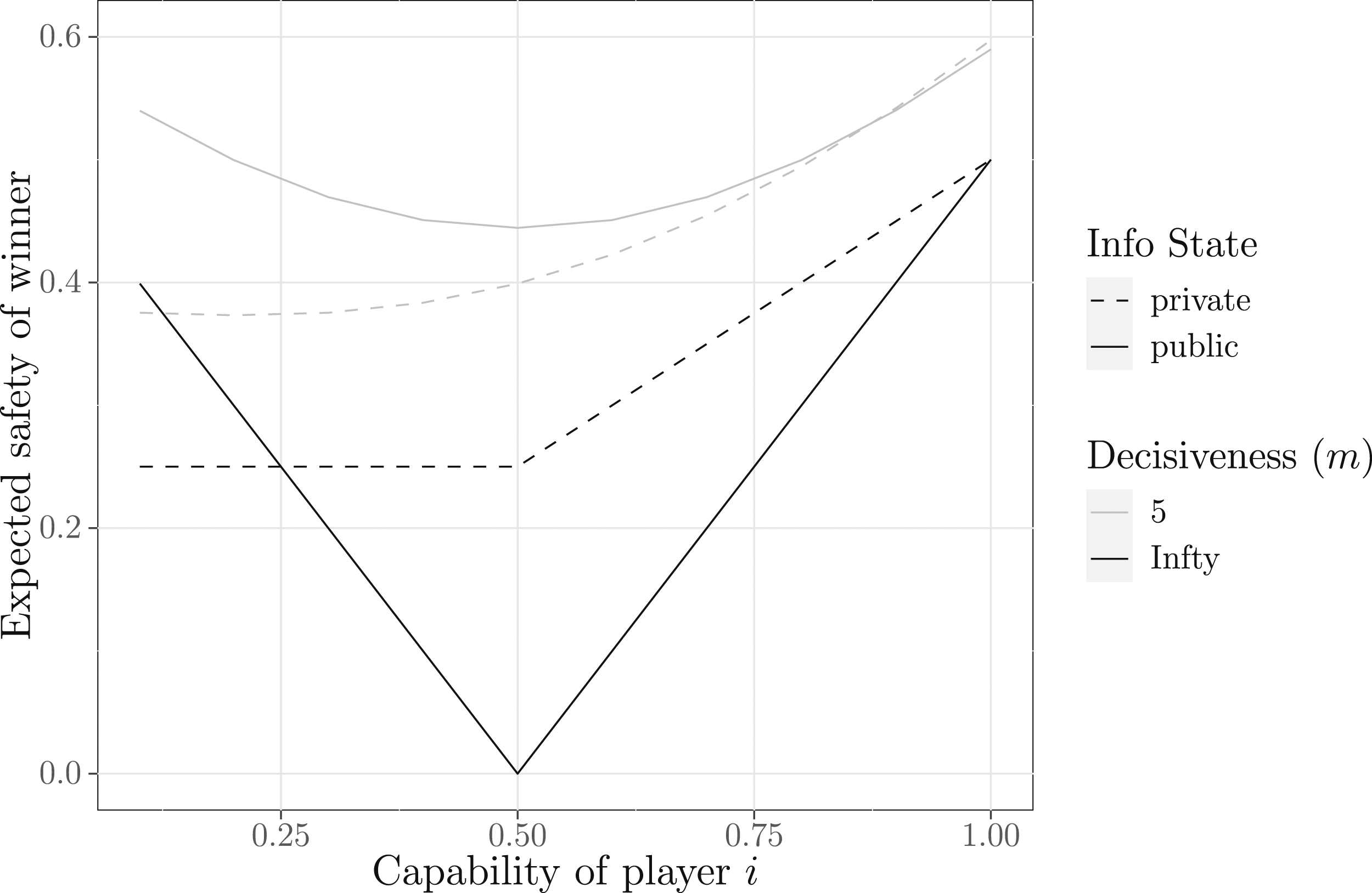

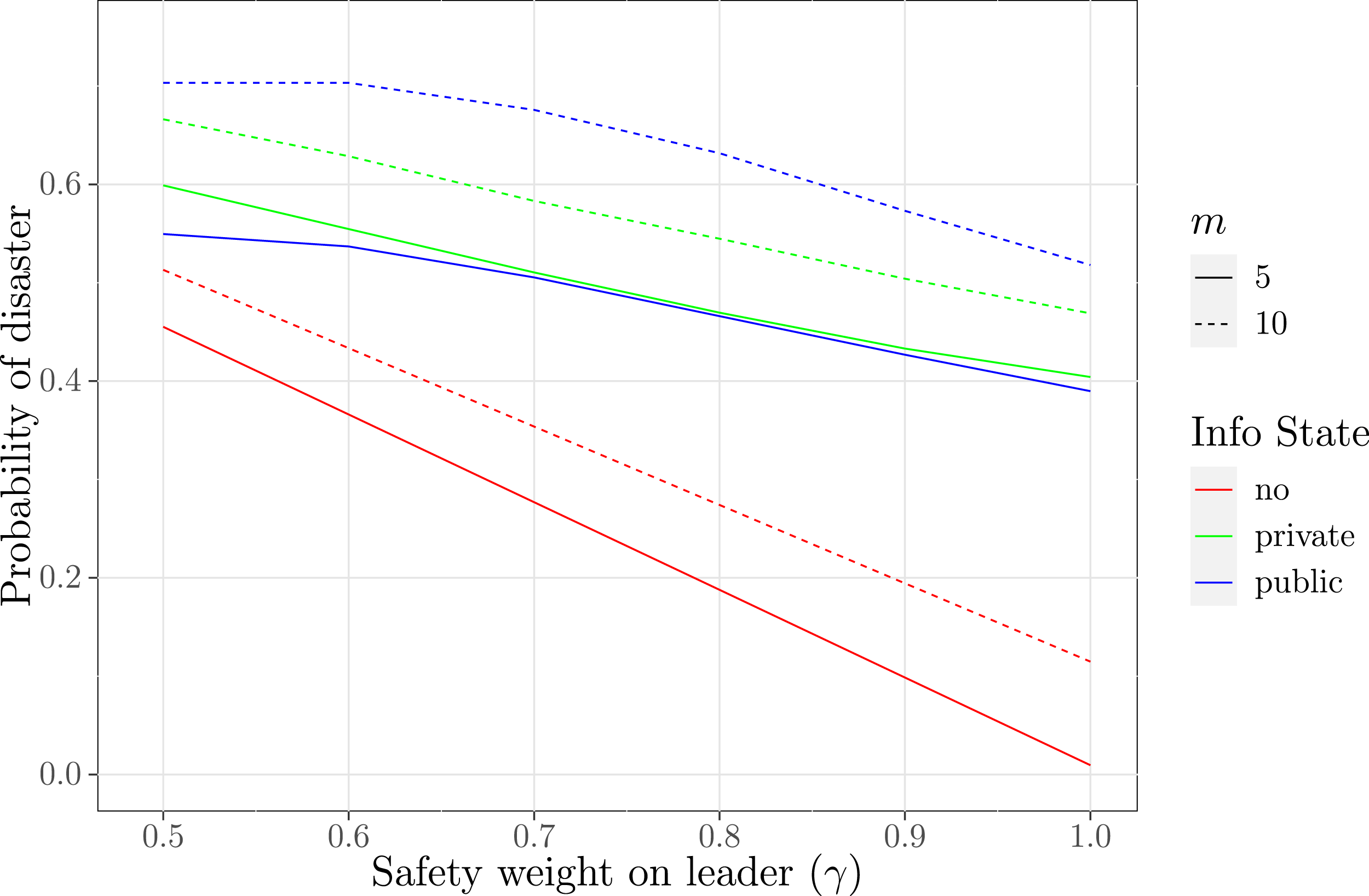

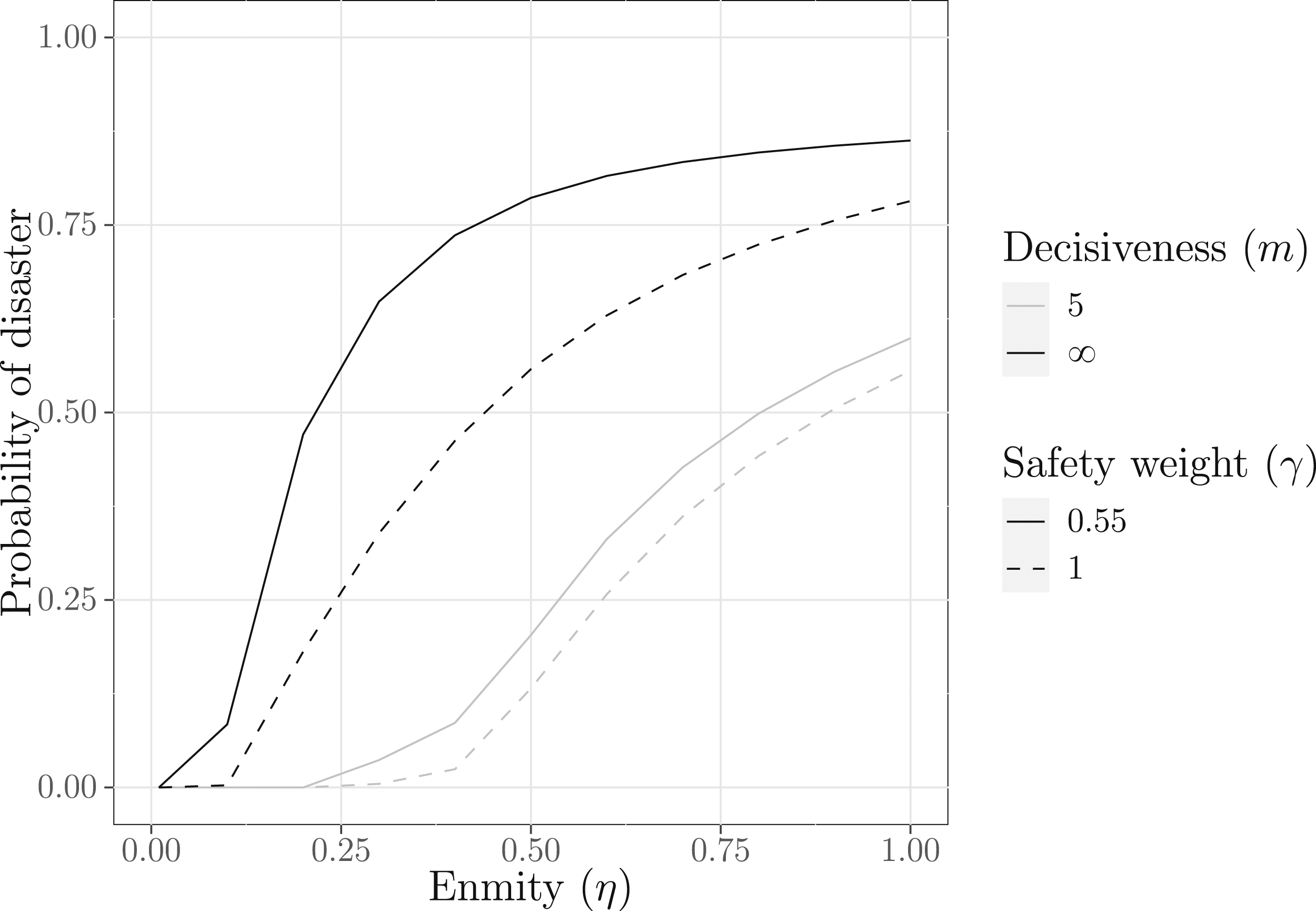

Changing the information scenario can make the race more dangerous; we seek to understand how this interacts with the decisiveness parameter m. We present two primary sets of results, both illustrated in Figure 2. First, across most parameter values, the expected disaster risk is increasing in m. We prove strong versions of this statement for the no information and private information cases and a weaker statement for the public information case. 19

Disaster risk (μ = 1.44, η = 0.9).

In the no information and private information scenarios, risk always increases with decisiveness, unless risk is 0. In the public information scenario, risk is higher as m → ∞ than as m → 0.

That is, if trading off safety for additional performance does not produce a high chance of winning the race, states will be reluctant to do so. As decisiveness becomes arbitrarily large, however, even the smallest additional unit of performance will determine for certain who wins the race, offering states a large incentive to cut corners. An observable implication is that in early stages of development, when progress is highly uncertain, states are likely to prioritize safe development over winning the race. In the early stages of nuclear weapons development, for example, U.S., Soviet, and German scientists published major insights on nuclear fission in publicly accessible physics journals. Only around 1940, when the prospect of succeeding in the race became more imminent, did U.S. scientists begin concealing their results and progressing without checking necessary calculations (Ord 2022).

Second, we want to know how the relative openness of the race interacts with decisiveness to influence risk. As shown in Figure 2, for much of the parameter space, the no information scenario is safer than the other two information scenarios. In fact, in Proposition 5 below, we show that the no information scenario is always at least as safe as the private information scenario.

The most interesting case, however, is the comparison between the public and private information scenarios. In quantitative arms race models, better information about rivals’ capabilities tends to reduce risks (Wittman 2009; Reed 2003). In contrast, in their study of a qualitative race, Armstrong et al. (2016) find that, given a high degree of enmity between states, the public information scenario is more risky than the private information scenario. However, we show that both effects are possible in a qualitative race. For large values of m and high enmity, we show that indeed public information produces higher risk than private information, producing an information hazard effect. However, as m declines, we see in Figure 2 that the public information scenario becomes safer than the private information scenario. Finally, as m tends to 0, we see that in both cases, players are unwilling to take any risk and implement at the maximum safety level. We present this result formally.

The no information scenario is always at least as safe as the private information scenario, while the relative safety of the public and private information scenarios depends on m.

These results are presented in Figure 2, where we see that the riskiest information scenario changes at m = 6. Two forces drive this result. Note that the decisiveness parameter m enters into the disaster risk function in two places: the contest success function and the equilibrium safety choices of the players. To see how these forces affect risk, consider the drivers of risk when m → ∞. The public information scenario is riskier than the private information scenario as long as

Expected safety of the race winner (μ = 1, η = 1, $x_j = 0.5$).

Finally, the no information case is weakly safer than the private information case. Thus, learning one’s own capability does not increase welfare. In the no information scenario, just as in cases with low decisiveness, states are quite uncertain about the returns to their own efforts. Here, the chance of being a laggard is exactly 1/2, so states are highly uncertain whether cutting corners on safety will benefit or harm them. Since they know their rivals will also face the same uncertainty, they will be quite unwilling to cut corners on safety. Thus, even for very high decisiveness, states are unwilling to take much risk, so states would be better off if they did not learn their type.

Empirical Illustrations

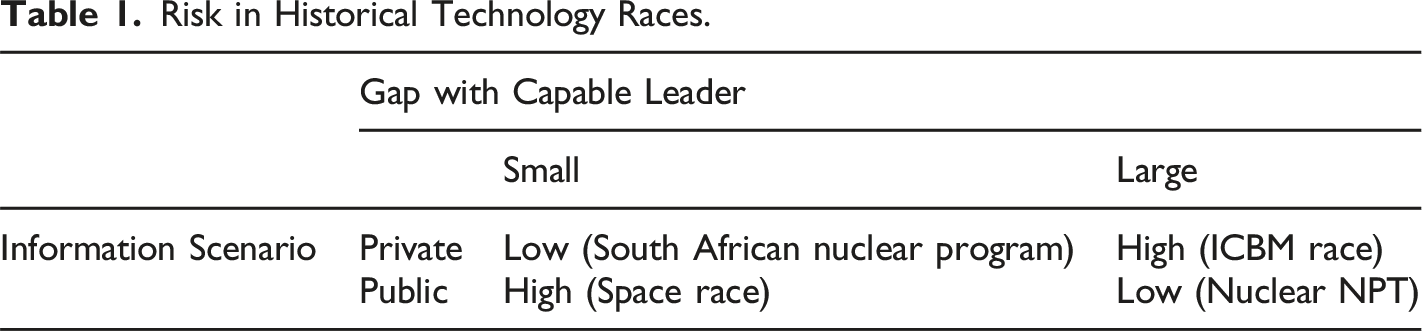

Risk in Historical Technology Races.

Information Scenarios

First, we compare the role of public and private knowledge when the gap between a capable leader and a laggard in a technology race is believed to be large. Consider the U.S.-Soviet race to develop intercontinental ballistic missiles (ICBMs) in the 1950s. At the time, the Soviet leadership attempted to exaggerate the size of its ICBM arsenal. In October 1957, Soviet leader Nikita Khrushchev declared that Soviet factories were “turning out missiles like sausages” (Ellsberg 2017). The USSR was reasoning along the lines of the public information scenario: if the U.S. could be persuaded that it was far behind in the race, it would abstain from a dangerous arms buildup and accede to Soviet foreign policy demands (Horelick and Rush 1966). However, the strategy backfired: instead of backing down, the U.S. engaged in a rapid buildup of ICBM capabilities, testing its first in 1958 and producing over 1000 by 1965 (Nuclear Threat Initiative 2022). Why? As our model highlights, the strategic logic is different when capabilities are private. By 1957, the U.S. Air Force privately estimated that the Soviet Union was far ahead in development, with hundreds of missiles developed as early as 1959 (Ellsberg 2017). The belief that the U.S. was relatively low in the distribution of capabilities, then, led it to pursue a risky development strategy, one which might not have occurred if the Soviet leadership had backed up its claims regarding its technological prowess (Mathers 1998). As a consequence, the U.S. and USSR engaged in a dangerous arms buildup. By 1961, when the U.S. learned that its estimates were mistaken, it had developed 40 missiles, while the USSR had only 4 (Lawler and Mahan 1961).

On the other hand, when states know they are behind in the race, they are often willing to develop safely. Consider the Nuclear Non-Proliferation Treaty. The weapons stockpiles of most nuclear powers are public information, and most states are willing to publicly reveal their own nuclear capabilities so that they can develop safe, peaceful nuclear technology under the treaty in exchange for technology transfers, rather than engage in a weapons development race that risks strong international retaliation (Fuhrmann and Lupu 2016). A deviation to a race would be futile, since the wide gap in the distribution of capabilities is public knowledge. Thus, even most states with the technical capability to build nuclear weapons, such as Argentina and Brazil, are not willing to run the risk of a race (Narang 2017). Only when enmity is high enough are some low-capability states, such as North Korea, willing to begin active development.

Our model predicts the opposite effect when states are close in capability: when information is public, states engage in a “race to the bottom” on safety, generating a far higher level of risk than in the private information scenario. The U.S. government, for example, began the Space Race after the Soviet Union’s public launch of Sputnik I. Prior to the launch, although each state knew the other had a satellite program, the U.S. underestimated Soviet progress, and President Eisenhower chose to deprioritize the speed of the U.S. program. Unlike in the ICBM race, the USSR did publicly demonstrate its technological capabilities. The launch of Sputnik I in October 1957 caught U.S. policymakers by surprise, prompting Eisenhower to re-prioritize the Vanguard project, which until then had been beset by delays (Barnhart 2021). The U.S. was a close second, launching its first satellite a mere 4 months later, in January 1958.

In contrast, by refusing to conduct public tests of a new technology, a state may be able to hide its level of technological prowess and avoid a costly race with its rivals. Consider South Africa’s nuclear weapons development. From 1971 until the program’s dismantling in 1990–1991, South Africa pursued what Narang (2017) calls a “hidden” development strategy, prioritizing secrecy above speed or deterrence. Besides the risks inherent in the production process, South Africa also faced risks of international isolation or retaliation should its program be discovered, as neither superpower wanted it to acquire nuclear weapons (Liberman 2001; Rabinowitz and Miller 2015). Although the U.S., suspicious of South Africa’s refusal to accept International Atomic Energy Agency inspections of its nuclear reactors, placed an embargo on nuclear fuel exports to South Africa, South Africa was able to assemble and then store its first nuclear weapon in 1979 and developed a further 5 by 1990 (Liberman 2001). Unlike the Space Race, South Africa’s secret development did not prompt further proliferation by rivals, who did not know how far behind they were, nor more than a moderate response from the international community, which did not realize the scale of South Africa’s technological advance. Indeed, a race was avoided despite a relatively high degree of enmity between South Africa’s government and the two superpowers.

Decisiveness

The second source of risk in our model is high decisiveness. Though quantifying this parameter is difficult, we note that states’ beliefs about m are likely to be positively correlated with how rapid they anticipate research progress to be. If states express a belief that research progress will happen quickly, or that they are certain about key technological parameters, it is likely they believe m to be large. By analyzing variation in actors’ expressions of certainty, then, we can analyze how changes in decisiveness affect states’ safety choices. For example, as R&D on the first atomic bomb progressed, U.S. and British politicians and scientists increasingly expressed certainty that such a weapon could soon be developed. Early in development, there was considerable uncertainty about the critical mass of uranium and the amount of infrastructure spending required to build a bomb. In 1939–1940, estimates of the critical mass ranged from 44 tons to 1 pound of uranium-235, indicating high uncertainty about the time required to produce sufficient enriched uranium (Ord 2022). In this period, decisiveness was arguably low: it was unclear by how much a marginal investment in capabilities would improve performance in the race. At this time, scientists and officials showed relatively little willingness to sacrifice safety for an advantage in the race, as U.S., German, and Soviet scientists continued to publish key results on nuclear fission in physics journals (Ord 2022). By 1942, the U.S. and U.K. governments came to believe that decisiveness was considerably higher. For one, uncertainty surrounding estimates of the critical mass had declined to such a degree that the U.S. was able to accurately forecast the remaining time to the development of uranium and plutonium bombs (Ord 2022). As uncertainty about the possibility of success decreased, the U.S. drastically increased its funding of capabilities: the Manhattan Project would end up totalling 0.4 percent of U.S. GDP by 1944. Likewise, U.S. spending on safety relative to performance began to decrease, as it pressed forward with the Trinity test despite Edward Teller’s fears that it could ignite Earth’s atmosphere (Ord 2020).
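To make the role of m concrete, the following minimal Python sketch shows how decisiveness turns a small performance lead into a larger probability of victory. This section does not reproduce the model’s contest success function, so the sketch assumes, hypothetically, the standard ratio (Tullock-style) form p = perf_i^m / (perf_i^m + perf_j^m), with m standing in for decisiveness; the function name `win_probability` is illustrative.

```python
def win_probability(perf_i: float, perf_j: float, m: float) -> float:
    """Probability that state i wins under a ratio-form contest success function.

    Hypothetical specification (not necessarily the paper's):
        p_i = perf_i**m / (perf_i**m + perf_j**m),
    where m plays the role of decisiveness. Performances must be positive.
    """
    if perf_i <= 0 or perf_j <= 0:
        raise ValueError("performance levels must be positive")
    a, b = perf_i ** m, perf_j ** m
    return a / (a + b)


if __name__ == "__main__":
    # A 10 percent performance lead under increasing decisiveness.
    for m in (1, 5, 10):
        print(m, round(win_probability(1.1, 1.0, m), 3))
    # Prints roughly: 1 0.524, 5 0.617, 10 0.722
```

Under this assumed form, the same 10 percent lead moves the leader’s chance of winning from about 52 percent at m = 1 to about 72 percent at m = 10, which is the sense in which more decisive races reward marginal gains in performance more heavily.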

Multiple Tests, Varying Enmity, and Varying Regime Type

Until now, we have made a number of simplifying assumptions: only the winner can implement the technology, enmity is fixed, and regime type is symmetric. In this section, we relax these assumptions to observe their effects on risk.

Multiple Tests and Overall Risk

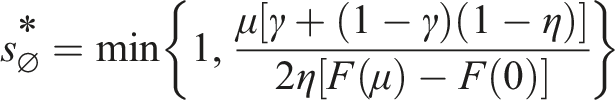

First, we analyze a game in which both states contribute to overall safety. Real-world qualitative races often proceed as a series of steps. During the Space Race, for example, the U.S. conducted a failed satellite test in December 1957 before succeeding 2 months later. If each test carries some risk, then the choices of both the winner and loser of the technology competition determine the overall risk level. This generalization raises important theoretical questions: (1) How does the likely winner’s safety investment change now that her optimal strategy depends on the likely loser’s safety investments? and (2) How does the structure of the safety provision burden affect states’ incentive to win the race and the overall level of risk? We explore these questions in each of the three information contexts of our baseline specification. Now, let the winner contribute a fraction γ ∈ [0.5, 1] to overall safety, while the loser contributes (1 − γ).
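The weighted-contribution rule just described can be made concrete with a short Python sketch. Only the γ-weighted average of the winner’s and loser’s safety comes from the text; treating the probability of disaster as one minus this overall safety, and the illustrative numbers (a leader with safety 0.9 that wins with probability 0.8, a laggard with safety 0.5), are assumptions. The sketch also holds both states’ safety choices fixed, so it isolates the mechanical selection channel and ignores the equilibrium (public-good) response analyzed below.

```python
def overall_safety(s_winner: float, s_loser: float, gamma: float) -> float:
    """Overall safety when the winner contributes gamma and the loser 1 - gamma."""
    if not 0.5 <= gamma <= 1.0:
        raise ValueError("gamma must lie in [0.5, 1]")
    return gamma * s_winner + (1.0 - gamma) * s_loser


def expected_disaster_risk(s_1: float, s_2: float, p_1: float, gamma: float) -> float:
    """Expected disaster probability, averaging over which state wins.

    Hypothetical assumption: P(disaster) = 1 - overall safety.
    p_1 is the probability that state 1 wins the race.
    """
    risk_if_1_wins = 1.0 - overall_safety(s_1, s_2, gamma)
    risk_if_2_wins = 1.0 - overall_safety(s_2, s_1, gamma)
    return p_1 * risk_if_1_wins + (1.0 - p_1) * risk_if_2_wins


if __name__ == "__main__":
    # Hypothetical leader (safety 0.9, wins w.p. 0.8) vs laggard (safety 0.5).
    for gamma in (1.0, 0.75, 0.5):
        print(gamma, round(expected_disaster_risk(0.9, 0.5, 0.8, gamma), 3))
    # Approximately: 1.0 -> 0.18, 0.75 -> 0.24, 0.5 -> 0.3
```

In this toy example, disaster risk rises linearly from about 0.18 to 0.30 as γ falls from 1 to 0.5, because the lower-safety laggard’s contribution receives more weight.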


In our base model, γ = 1 and only the winner conducts a risky test of the technology. However, as γ → 0.5, the eventual loser’s contribution to safety approaches the eventual winner’s. In each state’s utility function, then, the winner’s expected payoff is multiplied by

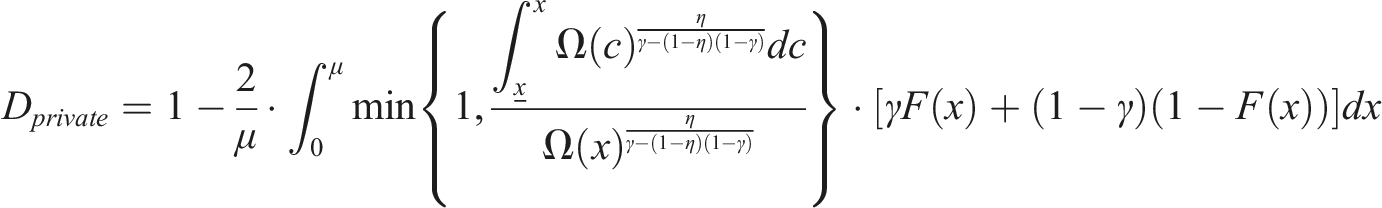

As before, we solve the model in all three information scenarios and present the results as propositions. In the no information case, the symmetric equilibrium dictates that both states exert the same level of safety effort as in the base model when γ = 1. When the loser’s safety effort, or lack thereof, also affects disaster risk, we find that the equilibrium safety effort unambiguously decreases.

In the no information case, the unique equilibrium level of safety efforts in a symmetric BNE of pure strategies is given by:

The expected level of disaster risk is then

The less safety is determined exclusively by the winner, the more the states are willing to sacrifice safety to increase the chance of winning the race. This is due to a public-good effect: as the share of the benefits of a state’s own safety investment falls relative to its cost, the state is less willing to invest in safety over performance. The amount by which safety effort decreases is linear in γ.

Turning to the private information scenario, states know their endowed capability and how rare it is relative to the general population, while remaining unaware of the opponent’s capabilities. In this case, the states condition their safety investment strategies on their capabilities. First, we establish the equivalent of Lemma 1 for γ < 1.

In the private information scenario when γ < 1, $k_i$ is always strictly increasing in $x_i$.

Proposition 7 then characterizes the equilibrium safety investments and disaster risk.

In the private information case, the equilibrium level of safety efforts in a symmetric BNE of pure strategies is unique up to a set

The disaster risk is given by:

Although the expression is too complicated to yield general intuition, we can gain insight into the effect of γ on equilibrium effort when enmity levels are extreme. As η → 0, so that the states do not mind whether they or their opponent successfully builds the technology, Proposition 7 dictates that

Due to the complexity of states’ utility functions in the public information case, we instead report numerical simulations of equilibria to compare with the no information and private information cases. Figure 4 presents the disaster risk for γ ∈ [0.5, 1] for the same parameter values used in the simulations in Figure 2 (η = 0.9, μ = 1.44), under high (m = 10) and moderate (m = 5) decisiveness. As in the no information and private information cases, disaster risk is monotonically decreasing in γ in the public information case. The same public-good and selection effects apply. As γ falls, the loser contributes more to overall risk. Since the loser is more likely than the winner to have low capabilities, and thus also to invest less in safety in order to win, this selection effect increases risk. Likewise, as other players contribute less to overall safety, each faces a temptation to shirk on its own safety investments: reduced investments in safety by others lower the expected return on safety, even for actors that are likely to win the race.

Varying safety contributions of the winner.

Risk and the Marginal Value of Winning

Until now, we have held fixed the parameter η, which we have termed enmity and which characterizes the opportunity cost of one’s rival winning the race. Enmity can be high for one of two reasons. First, enmity can be high because states are existing rivals. When states are more intense rivals, they are more willing to cut corners to develop a new technology. This is one reason the U.S. government ignored some of the concerns of J. Robert Oppenheimer, director of the Los Alamos Laboratory, over the development of the atomic bomb: it feared Germany winning the race.

Presumably, the U.S. would not have taken on the same level of risk had the U.K. been developing a nuclear bomb instead. Second, enmity can be high because losing the race may be quite harmful to one’s security, which is likely only if the increase in capability enabled by the technology is relatively large. States developing a next-generation fighter jet or tank are unlikely to cut corners heavily on safety, even when competing against a bitter rival, as the expected value of losing the race is only marginally smaller than that of winning. In this case, we expect η to be relatively low. Our model captures both of these intuitions. In all three information scenarios, states choose weakly lower safety levels when the level of enmity between them is higher. Results for the public information case are presented in Figure 5 for high and low values of decisiveness m and of γ. Higher values of m and lower values of γ increase the concavity of the curve. That is, in highly decisive races, or in races in which the winner and loser share the safety burden approximately equally, a race can quickly become maximally dangerous even when enmity is still low. We also see an interaction effect: for low γ and high m, enmity is more harmful than when only one of these conditions is met.
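A brute-force check of this enmity comparative static can be sketched in Python under heavily simplified, hypothetical assumptions: only the winner’s safety matters (γ = 1), winning is worth 1, the rival winning is worth 1 − η, a disaster is worth 0, performance equals capability times (1 − safety), and the win probability takes a ratio (Tullock-style) form with exponent m. The functions `utility` and `best_response` are illustrative names, not the paper’s notation, and the rival’s safety is held fixed rather than solved for in equilibrium.

```python
def win_probability(perf_i: float, perf_j: float, m: float) -> float:
    """Ratio-form contest success function (hypothetical specification)."""
    a, b = perf_i ** m, perf_j ** m
    return a / (a + b)


def utility(s_i: float, s_j: float, eta: float, m: float,
            x_i: float = 1.0, x_j: float = 1.0) -> float:
    """Hypothetical expected payoff for state i when only the winner's safety matters.

    Winning is worth 1, the rival winning is worth 1 - eta, a disaster is worth 0.
    Performance is assumed to be capability times (1 - safety).
    """
    p = win_probability(x_i * (1.0 - s_i), x_j * (1.0 - s_j), m)
    # If i wins, no disaster occurs with probability s_i; if j wins, with probability s_j.
    return p * s_i + (1.0 - p) * s_j * (1.0 - eta)


def best_response(s_j: float, eta: float, m: float, grid_size: int = 200) -> float:
    """Grid-search best response of state i to a fixed rival safety level s_j in (0, 1)."""
    # s_i ranges over [0, 1) so that state i's performance stays strictly positive.
    grid = [k / grid_size for k in range(grid_size)]
    return max(grid, key=lambda s: utility(s, s_j, eta, m))


if __name__ == "__main__":
    for eta in (0.0, 0.25, 0.5, 0.75, 1.0):
        print(eta, best_response(s_j=0.5, eta=eta, m=5))
```

Under these assumptions, a higher η shrinks the payoff from a rival’s safe victory, so the gain from raising one’s own safety has decreasing differences in η and the best response to any fixed rival safety level is weakly lower. A full analysis would iterate such best responses to an equilibrium and integrate over the capability distribution.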


Effects of enmity under public information (μ = 0.72).

Regime Type and Risk

Another parameter we have kept fixed is the value of a disaster, which, recall, is normalized to 0. However, the relative value of a disaster likely depends on a state’s regime type. In military conflict, for example, democracies tend to exhibit greater care to reduce casualties than autocracies (Gartzke 2001). Likewise, in technology races, we might expect democracies to value minimizing the chance of a disaster more highly than autocracies do. To formalize this intuition, let a disaster scale the value of the race outcome, relative to winning, by a factor $d_i$, drawn independently of a state’s level of capabilities from a distribution $d_i \overset{\text{iid}}{\sim} H(d)$. We can therefore view democratic states as having lower values of $d_i$. As expected, we find that if races are more likely among autocracies, as parameterized by a higher expected disaster value, risk increases in all information scenarios. Importantly, this is driven not only by races between autocracies but also by races between democracies and autocracies: in response to an autocratic rival’s corner cutting on safety, a democracy will choose a lower safety level than it would when racing against another democracy. The following proposition formalizes this result:

In all information scenarios, the expected safety of the race weakly increases as the average cost of a disaster rises.

Conclusion

In the introduction, we suggested that qualitative technology races present novel sources of risk that affect states’ national security. Given the rate of progress on a number of powerful, risky military technologies such as advanced artificial intelligence (Brundage et al. 2018; Dafoe 2017) and enhanced biological agents (Mukunda et al. 2009; Stern 2002), such risks will become increasingly important factors in states’ decision-making, even as they remain understudied in the international relations literature. We therefore develop a model to analyze the strategic forces influencing such risk. We find that the level of risk depends on states’ knowledge about each other’s capabilities as well as on the decisiveness of the race. When races are less decisive, as is likely during early stages of research or in novel fields, public knowledge is beneficial, preventing weak laggards from cutting corners on safety. However, at high levels of decisiveness, when the race is in its final stages or research is in a well-established field, private knowledge is safer, preventing a race to the bottom. Finally, as the eventual loser is allowed to conduct more powerful tests or as enmity between players rises, overall safety falls.

A clearer understanding of the strategic forces influencing states’ technology development can inform policymakers seeking to reduce the risk of an accident. First, our model implies that disclosure policies are important for novel technologies. As we have seen, public revelation of capabilities can produce a dangerous competition in which states steeply cut corners on safety to win. On the other hand, keeping capabilities private can induce risky behavior by states who believe they are lagging behind, as the U.S. did during the ICBM race. In that case, had the Soviet Union credibly shared its capabilities, it might have prevented a dangerous race. Taken together, these two results imply that there is room for policies, such as agreements to share technology or to provide transfers, that identify incapable laggards and prevent them from racing (Stafford and Trager 2022). Second, policymakers should be clear-eyed about the extent to which cutting corners on safety will produce additional gains in the race. As we have seen, public knowledge of capabilities and high levels of safety are optimal when decisiveness is low. Thus, giving in to hype about rapid, certain progress in a new technology may unnecessarily increase risk if such hype is not well-founded (Smith 2020). Rapid improvement in machine language processing via so-called large language models, for example, has produced media hype about the imminent arrival of human-level or otherwise transformative artificial intelligence (Bender and Koller 2020), even though experts believe that such progress remains decades away (Zhang et al. 2022).

We believe our model admits a number of extensions and suggests directions for future research. First, we assume that the information partitions are given exogenously. In many scenarios, however, states may choose to share information or close off development to increase their chances of winning a race. As in conventional arms races, states can signal their military superiority with a public display of weapons capability in an effort to deter would-be attackers. On the flip side, states may have an incentive to engage in espionage to uncover the capabilities of their rivals or gain access to their knowledge base. Soviet spying during the race for the atomic bomb likely accelerated the Soviet program by a year or two (Ord 2022). Studying a model in which states can disclose information voluntarily or spy on rivals may help elucidate how different information scenarios arise. Second, our model treats decisiveness as exogenous and common knowledge. Instead, we might expect it to vary over time; as our illustration suggests, the race for the atomic bomb became more decisive, and thus far more risky, as research progressed. Extending the model to a dynamic game in which decisiveness varies over the course of the race might yield insights about when races are most risky. Alternatively, we might extend the model so that states have private information about decisiveness. If a state believes its rival has a high value of decisiveness, it might be willing to cut corners on safety even if the state itself believes decisiveness to be low. Third, it may be important to analyze the effect of information in a dynamic context where agreements are possible. Here, information plays a different role, sometimes allowing states to increase general welfare by conditioning their strategies on each other’s behavior (Stafford and Trager 2022).
Fourth, to focus attention on states’ decisions to allocate their R&D budgets between capabilities and safety, we have assumed that budgets, and therefore entry into the race, are exogenous. Instead, we might consider a model in which states face a cost function and choose how much to spend on capabilities and safety, and in which the optimal choice may be to simply stay out of the race. After all, the majority of states have never invested in a nuclear weapons program, and such a model would help elucidate the reasons behind this empirical regularity.

In this paper, we have sought to bring together various strands of literature, on qualitative races and on risks from technologies such as nuclear weapons and bioweapons, in a single model. We hope to provide a framework upon which scholars can build as they seek to understand the strategic effects of and risks from emerging technologies. In particular, our model contributes to understanding the role of information and uncertainty in qualitative arms races, showing that the decisiveness of the race can change the qualitative and quantitative effects of information. We hope these insights are not only of theoretical interest but can also be used to improve policy decisions so that advanced technologies are developed for the benefit of all.

Supplemental Material

Supplemental Material - Uncertainty, Information, and Risk in International Technology Races

Supplemental Material for Uncertainty, Information, and Risk in International Technology Races by Nicholas Emery-Xu, Andrew Park, and Robert Trager in Journal of Conflict Resolution

Footnotes

Acknowledgments

For useful comments, we thank (in random order) Jeffrey Ohl, Jonas Mueller, Richard Jordan, Lennart Stern, and Paolo Bova, as well as two anonymous reviewers and conference participants at APSA 2021, the Conference on Great Power Competition in the 21st Century (2022), and the Politics of Emerging Technology Mini-Conference (2022). We thank the Stanford Existential Risks Initiative and the Open Philanthropy Project for financial and logistical support. All remaining errors are our own.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Open Philanthropy Project, the Stanford Existential Risks Initiative, and the UC Office of the President through its UC National Laboratory Fees Research Program (Award #LFR-18-547591).


Notes

References
