Abstract

This study investigates the features of fake news networks and how they spread during the 2020 South Korean election. Using actor–network theory (ANT), we assessed the network’s central players and how they are connected. Results reveal the characteristics of the videoclips and channel networks responsible for the propagation of fake news. Analysis of the videoclip network reveals a high number of detected fake news videos and a high density of connections among users. Assessment of news videoclips on both actual and fake news networks reveals that the real news network is more concentrated. However, the scale of the network may play a role in these variations. Statistics for network centralization reveal that users are spread out over the network, pointing to its decentralized character. A closer look at the real and fake news networks inside videos and channels reveals similar trends. We find that the density of the real news videoclip network is higher than that of the fake news network, whereas the fake news channel networks are denser than their real news counterparts, which may indicate greater activity and interconnectedness in their transmission. We also found that fake news videoclips had more likes than real news videoclips, whereas real news videoclips had more dislikes than fake news videoclips. These findings strongly suggest that fake news videoclips are more accepted when people watch them on YouTube. In addition, we used semantic networks and automated content analysis to uncover common language patterns in fake news, which helps us better understand the structure and dynamics of the networks involved in the dissemination of fake news. The findings reported here provide important insights on how fake news spread via social networks during the South Korean election of 2020. The results of this study have important implications for the campaign against fake news and ensuring factual coverage.

Introduction

Fake news, a phenomenon amplified exponentially by new network technologies, has emerged as a formidable societal challenge. This phenomenon extends its reach across critical domains, from the integrity of democratic elections to the management of public health crises, as witnessed during the recent COVID-19 pandemic (Rudgard, 2020). For example, during COVID-19, misperceptions were successfully created through simple content alterations and the addition of popular anti-COVID-19 hashtags such as #COVIDIOT and #covidhoax to otherwise valid Twitter content, thus encouraging the hesitant and skeptical minority to be open to commenting on, retweeting, liking, and sharing rumors about vaccines’ efficacy (Sharevski et al., 2022). The ubiquity of social media platforms that make such networks possible has helped individuals and groups spread disinformation and misinformation at unprecedented speed, reaching more network participants and remaining longer in the public domain (Rhodes, 2022). In the political landscape, digital platforms have become conduits for the dissemination of false information and propaganda during electoral processes, exerting influence over voter behaviors and posing a threat to democratic principles (Azis Prasetyo and Aisyah, 2018; Igwebuike and Chimuanya, 2021). The 2016 US presidential election serves as a stark illustration, where an inundation of fake news eclipsed authentic narratives, leading to a widespread acceptance of erroneous information (Budak, 2019). This exposure to fake news predisposes individuals to adopt various political misperceptions (Ognyanova et al., 2020), ultimately shaping their subsequent behavior, including voting decisions (Cantarella et al., 2023).

Digital networks that host fake news, misinformation, and other forms of disinformation exist in a context in which traditional news media and political institutions are viewed with a growing level of mistrust, and the “wisdom of the ordinary, non-specialist ‘hacker’ and/or purported secret information from supposed insiders is believed instead” (Bleakley, 2023). Recent research reveals that sources of fake news frequently attack mainstream media organizations, claiming that they are biased and incapable of doing their jobs properly. In one study, exposure to disinformation during the month leading up to the 2018 election predicted a 5% drop in media trust among participants. In addition, a discernible correlation emerges between consumption of fake news and diminished trust in mainstream media across all levels of political ideology (Ognyanova et al., 2020). Albright aptly characterizes the phenomenon, noting the rapid dissemination of emotionally charged messages on platforms like Twitter. This calculated distortion of attention hastens the spread of misinformation, giving rise to the establishment of alternative, often unfounded, narratives (Albright, 2017).

In this context, digital platforms readily embrace subversive and discriminatory claims, as they are swiftly reinforced by peers and prove challenging for authorities to counter effectively. Extant research clearly indicates that online social networks are usually formed among like-minded people who use digital platforms to both facilitate sharing among themselves and promote and bolster belief in both true/fake news within and beyond their groups (Bleakley, 2023). While individuals tend to seek out like-minded people, the algorithms of digital platforms have amplified this tendency through filtering challenging information and providing confirmatory information, which algorithms determine from platform users’ previous behaviors and choices (Nolin and Olson, 2016; Pariser, 2011). Albright (2017) emphasizes the necessity of scrutinizing the fake news ecosystem, which can be accomplished by tracing the flow of information across expansive networks of websites, profiles, and platforms.

Against this backdrop, it becomes imperative to turn our attention to South Korea, a nation experiencing a period of unprecedented political transformation. In 2017, the impeachment of a president and the ensuing political upheaval were accompanied by a surge of false and misleading information propagated through online channels (Yoo et al., 2022). This unique socio-political environment sets the stage for an in-depth exploration of how fake news influenced the political landscape during the pivotal 2020 general election in South Korea. This study aims to examine the dynamics of fake news dissemination within this distinctive context, offering valuable insights for navigating the challenges posed by digital networks in the South Korean political environment.

The substantial societal costs stemming from the misuse of digital news networks highlight the urgency of this inquiry. While previous studies have examined the spread of misinformation during the COVID-19 epidemic, the specific influence of fake news network structures in a country like South Korea remains a critical knowledge gap. Given South Korea’s unique political and social landscape, which differs markedly from that of Western countries, this study endeavors to elucidate how fake news impacted the country’s political terrain during the 2020 election.

The subsequent sections of this article are structured as follows. Section “Literature review” provides a comprehensive literature review, situating the phenomenon of fake news within the broader global and South Korean contexts. Section “Method: case study analysis” delineates the methodology, encompassing data collection and analytical approaches. Section “Results” presents the results, revealing the specific manifestations and consequences of fake news in South Korea. Section “Videoclip network” focuses on discussion, drawing connections between our findings and broader theoretical frameworks and practical context. Finally, in Section “Discussion,” we conclude with a comment on this study’s limitations and recommendations for future research.

Literature review

Misinformation in the South Korean context

Over the past several years, South Korea has experienced unparalleled political changes. In 2017, a president was impeached, and during this period of political unrest a slew of false and misleading information proliferated quickly through online and social media channels (Yoo et al., 2022). In such a strongly polarized environment, people who share similar ideologies can be expected to cluster together (Choi et al., 2020). People in these echo chambers frequently absorb information that supports their ideologies, even when it is false. According to the Reuters Institute for the Study of Journalism’s Digital News Report 2019, South Korean news consumers had the lowest level of trust in the news media among the 38 countries surveyed (Newman et al., 2019). Furthermore, approximately 40% of South Korean news consumers access news through YouTube, and the country ranked highly in terms of podcast usage (Newman et al., 2019).

The low levels of trust in, and approval of, the news media expressed by South Korean news consumers constitute a significant problem. According to a survey conducted by the South Korea Press Foundation in March 2018, 69.2% of the 1500 respondents had seen or heard about manipulated or false information in the form of news distributed on social media (Yoo et al., 2022). Furthermore, the networks have been used to disseminate fake news aimed at undermining legitimate political processes during national elections in South Korea, including news stories about the major presidential candidates (Park and Youm, 2019). For example, in 2017, following the candlelight demonstrations and the subsequent impeachment of South Korea’s former president, Park Geun-hye, fake news was widely distributed among supporters. In the aftermath of Park’s impeachment, South Korean political parties used fake news to mobilize their supporters in order to gain an advantage in situations involving political divisions and confrontations between the pro-impeachment, progressive young generation and the anti-impeachment, conservative senior generation. The communications asserted that US President Donald Trump had expressed opposition to impeachment and that North Korea was the mastermind behind the impeachment scheme (Go and Lee, 2020).

While various studies have looked at how misinformation spread during the COVID-19 epidemic (Freiling et al., 2023; Zhang et al., 2023), it is still unclear how the network structure of fake news influences its spread in a country like South Korea, which is politically and socially different from Western countries. Thus, this study examines how fake news influenced the political landscape of South Korea during the 2020 election.

Analytical framework: actor–network theory

The analytical framework for this study is based on actor–network theory (ANT). ANT challenges traditional sociological approaches that prioritize human agency and social structures. Instead, ANT treats both human and nonhuman actors as having agency and influence in shaping social phenomena. It emphasizes the importance of studying how networks are formed and how actors, both human and nonhuman, join and mobilize within these networks (Sharifzadeh, 2016). According to Latour (2007), the ANT incorporates a wide variety of actants, from tangible elements to abstract concepts like declarations and ideas. Interactions and networking among ANT’s actants are its primary concern. These distinguishable interactions reflect the network’s inscription. An actor network might represent a social network, so it comprises not just people interacting with one another, but also interactions with nonhuman actants. This is evident in the features of social media technology and legislation that mediate the relationship between humans (Labafi, 2020). Our research aims to uncover the differences between real news and fake news networks in terms of network density, geodesic distances, and centrality measures. These findings will contribute to ANT by illustrating how different actors and entities, including individuals, channels, and videoclips, are organized and interconnected within the network. This provides empirical evidence of the heterogeneous composition and structure of actor networks involved in the dissemination of real and fake news, highlighting the role of both human and nonhuman actors.

Fake news

The surge in deliberately fabricated false stories, often referred to as “fake news,” has become a pressing concern in today’s information landscape (Kar et al., 2023; Lazer et al., 2018). While some scholars express reservations about the term itself, citing its potential to erode the credibility associated with “news,” alternative descriptors like “misinformation,” “disinformation,” or “fabricated news” have been proposed (Allcott and Gentzkow, 2017; Kar et al., 2023). Nevertheless, empirical evidence suggests that public interest in the term “fake news” surpasses related terms like “misinformation,” “disinformation,” and “fabricated news” by a significant margin, indicating its enduring relevance (Ansar and Goswami, 2021).

The transformative role of social media platforms deserves special attention. Originally conceived as spaces for social interaction, they have evolved into influential information ecosystems. Social media now facilitate not only connections but also the seamless sharing and reception of information across borders, fundamentally altering the way individuals engage with content (Grover et al., 2022). This evolution has given rise to algorithm-driven content curation, potentially leading to the formation of echo chambers where individuals are predominantly exposed to information that aligns with their existing beliefs (Rodrigues da Cunha Palmieri, 2023). Consequently, there is a growing concern regarding the reinforcement of pre-existing opinions and the potential isolation of individuals from diverse perspectives (Diaz Ruiz and Nilsson, 2023).

While significant strides have been made in detecting fake news online, much remains to be uncovered (Chen et al., 2015; Conroy et al., 2015; Bastick, 2021). Studies examining the propagation of political fake news in South Korean online communities offer valuable insights into the dynamics at play. Choi (2014) and Choi et al. (2018) delved into political discussions, revealing a notable centralization and cliquishness in information flow. These discussions were characterized by heightened emotion, particularly anger, and participants tended to refer primarily to like-minded messages. Furthermore, those with more reciprocal relationships and higher popularity within the forum tended to maintain or create more discussion ties. It is important to acknowledge that the dynamics of online political discussions may not mirror those of fake news distribution networks. In the case of fake news, if widely disseminated by a motivated and like-minded group, its distribution network might exhibit higher levels of centralization and cliquishness compared with typical discussion networks.

In addition, the popularity of the author may hold greater sway in fake news distribution than the emotional content or social effects of the message itself (Choi, 2014; Choi et al., 2018). Likewise, a study involving 10,000 users and 555,684 tweets suggests that factors such as emotion stability, polarity stability, hashtag consolidation ratio, hashtag diversity, lexical diversity, favorites count, and friends count may influence the propagation of both misinformation and information (Kar and Aswani, 2021). Similarly, recent research by Aswani et al. (2019) delves into the management of misinformation in social media, shedding light on factors contributing to its rapid propagation. Their analysis of approximately 1.5 million tweets in cases involving misinformation highlights the role of emotions and polarity in determining content authenticity. Notably, tweets with a higher element of surprise combined with other emotions are more likely to be associated with misinformation. Furthermore, tweets featuring neutral content are less prone to virality when it comes to spreading misinformation.

Building on this foundation, the current study undertakes a comprehensive assessment of the structure and content of fake news distribution, employing network analysis and automated content analysis. This approach represents a critical stride toward a deeper understanding of the mechanisms underpinning the dissemination of fake news. However, it is imperative to highlight that while social media data analysis effectively describes the phenomenon, it often falls short in addressing the underlying cause-and-effect relationships. Thus, an empirical data analysis approach is adopted to shed light on how fake news has been disseminated, addressing South Korea-specific questions. In doing so, we recognize the need to look beyond American-centric concerns and approaches, as the United States does not offer a universal model, particularly in an Asian context. For instance, the prevalence of fake news on platforms like Kakaotalk distinguishes South Korea’s information landscape from that of other nations where distribution may be more prominent on platforms like YouTube, Facebook, and Twitter.

Having established the contextual backdrop, we now turn our attention to the methodology employed in this study to dissect the dissemination and characteristics of fake news surrounding the unfounded concerns about election fraud in South Korea’s 2020 general election.

Method: case study analysis

For this research, we selected as our case the unfounded concerns about election fraud in South Korea’s 2020 general election, because the allegations of electoral fraud gained a considerable amount of attention in South Korea (Kim, 2020). Although the electoral fraud issue is likely to stem from a political conspiracy, it was widely discussed on YouTube during the period of data collection and is likely to provide in-depth insights into the thematic genres and formation of fake news. During the data collection phase, we had to differentiate between “fake news” and “real news.” We classified content that supports or agrees with the electoral fraud argument as fake news, and content that refutes this argument as real news. Content that simply introduced the idea of electoral fraud was also classified as real news. We then addressed four research questions specific to the YouTube platform:

How South Korean fake news is disseminated;

Whether its dissemination exhibits different path characteristics from that of real news;

Whether this spread is driven by author effect, message effect, or social effect;

Which words commonly and frequently appear in fake news messages.

To address these questions, we conducted four discrete analyses: (1) a social network analysis (SNA), (2) natural language processing, (3) semantic network analysis, and (4) automated content analysis. We conducted the SNA using two distinct network models: a channel network and a videoclip network.

Social network analysis

SNA was chosen as a pivotal analytical approach due to its proficiency in elucidating the underlying structure and dynamics of social interactions within a digital platform like YouTube. By representing users, channels, and videoclips as nodes, and their interactions as edges, SNA enables us to visualize the patterns of engagement and identify key actors or content within the network. SNA also makes possible the calculation of various network indices, including measures of centrality, clustering, and density (Altuntas et al., 2022). These metrics offer valuable insights into the prominence and influence of specific nodes or channels, as well as the overall cohesion and connectivity of the network. This approach is particularly relevant when investigating the dissemination of information, as it illuminates how users and content are interconnected (Ulibarri and Scott, 2017). In SNA, nodes represent the participants in a network, and edges denote the connections between nodes; in social media, edges can represent followings, followers, mentions, and replies. Clusters are groups of heavily interconnected nodes that are rarely connected to other groups. Network density represents the average value of a tie across all possible pairs of nodes in the network. Denser networks contain more and/or more valuable relationships, thereby augmenting the average tie value (Eom et al., 2018).
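To make these terms concrete, the following minimal Python sketch represents a small directed, valued network and computes its density as the average tie value over all possible ordered pairs of nodes. The node names, tie values, and function names are hypothetical and chosen only for illustration; the sketch is not the software used in this study.

```python
from collections import defaultdict

# Hypothetical directed, valued edges: (source, target) -> tie value.
edges = {
    ("A", "B"): 3,
    ("B", "C"): 1,
    ("A", "C"): 2,
    ("C", "A"): 4,
}

def node_set(edges):
    """Collect every node that appears as a source or a target."""
    nodes = set()
    for source, target in edges:
        nodes.update((source, target))
    return nodes

def valued_density(edges):
    """Average tie value over all n * (n - 1) possible directed ties."""
    n = len(node_set(edges))
    possible = n * (n - 1)
    return sum(edges.values()) / possible if possible else 0.0

def valued_degrees(edges):
    """Sum of outgoing and incoming tie values for each node."""
    out_deg, in_deg = defaultdict(int), defaultdict(int)
    for (source, target), value in edges.items():
        out_deg[source] += value
        in_deg[target] += value
    return dict(out_deg), dict(in_deg)

density = valued_density(edges)
out_deg, in_deg = valued_degrees(edges)
```

Because ties are valued rather than binary, a density computed this way can exceed 1, which is consistent with the density values reported in the Results.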

Several alternative approaches could have been considered to complement or enhance the analysis of fake news dissemination in the context of South Korea’s 2020 general election. One potential alternative approach is the integration of sentiment analysis with SNA. This combined approach would enable the assessment of emotional or attitudinal aspects associated with interactions within the network, providing a more nuanced understanding of user engagement patterns and sentiment dynamics (Röchert et al., 2020). Another viable alternative approach is social media analytics, which focuses on extracting meaningful insights from large-scale social media data through techniques like text mining, sentiment analysis, and content categorization (Khan and Malik, 2022). While social media analytics provides valuable insights into content characteristics and sentiment trends, it may not capture the underlying network structures and relationships (Serrat and Serrat, 2017) that are essential in understanding the dissemination patterns of fake news. Given the focus on understanding how information is disseminated and the role of user interactions, SNA emerged as the most suitable analytical framework for unraveling the complexities of fake news distribution in the South Korean 2020 election context.

Content analysis

In addition to examining the structure of fake news dissemination, we investigated the content of fake news stories. Natural language processing was employed to identify frequently mentioned words and pairs of closely linked words. Semantic network analysis further revealed semantic relationships between words. Automated content analysis enabled the identification of linguistic features characteristic of fake news stories.
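As a simplified illustration of the first two steps, the Python sketch below counts word frequencies and within-document word co-occurrences. It assumes whitespace tokenization and uses made-up English sentences; Korean transcripts would in practice require a morphological analyzer, and the function names here are hypothetical rather than those of the tools used in this study.

```python
from collections import Counter
from itertools import combinations

def tokenize(text):
    """Placeholder tokenizer: lowercase and split on whitespace."""
    return text.lower().split()

def word_frequencies(documents):
    """Count how often each word appears across all documents."""
    counts = Counter()
    for doc in documents:
        counts.update(tokenize(doc))
    return counts

def cooccurrence_pairs(documents):
    """Count the documents in which each unordered word pair co-occurs."""
    pairs = Counter()
    for doc in documents:
        vocabulary = sorted(set(tokenize(doc)))
        pairs.update(combinations(vocabulary, 2))
    return pairs

# Hypothetical transcripts, for illustration only.
documents = ["ballot count fraud", "early voting fraud", "ballot fraud claim"]
frequencies = word_frequencies(documents)
pairs = cooccurrence_pairs(documents)
```

The co-occurrence counts can then serve as edge weights in a semantic network in which words are nodes.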

Data collection

Legislative elections were held in South Korea on 15 April 2020. The year 2020 had witnessed a surge in pandemic-driven misinformation, and the impending election also heightened the spread of fake news throughout the country (Jang et al., 2023). Participants ranging from political groups to individuals and potentially external entities were alleged to have manipulated public opinion and influenced voter behavior through the dissemination of false or misleading information (Choi, 2020; Ko, 2020). In order to comprehensively analyze this phenomenon, we collected YouTube data between 17 April and 10 August 2020 for the electoral fraud case using the YouTube API. We used keywords or terms such as “electoral fraud,” “Election Commission,” and “early voting manipulation” to search for relevant YouTube videoclips. After this data collection via the API, the researchers manually filtered out videoclips that were not relevant to the case. We also gathered information about the YouTube channels that uploaded those videoclips. We transformed the videoclips into text using Google’s Speech-to-Text (STT) service.

For SNA, we built models of two distinct networks: first, a channel network; and second, a videoclip network. In the channel network, nodes represent individual YouTube channels, while edges indicate interactions between channels. These interactions include elements such as subscriptions, mentions, or replies from one channel to another. This network model offers insights into the relationships among channels, highlighting which ones are more central or influential within the network. Similarly, the videoclip network comprises nodes representing individual videoclips, with edges denoting interactions between videoclips. These interactions were established when the same users replied to multiple videoclips, indicating a connection between them. For instance, if user A replied to videoclips X and Y, a link was formed between X and Y. Each link is valued by the number of users shared between the two videoclips. The links are directed according to the temporal sequence of replies, which provides further granularity in understanding the flow of interactions: if user A replied to X first and then replied to Y afterwards, we formed the link X → Y. Based on these networks, we calculated network density, geodesic path lengths, degree centrality, and other network indices. Additional statistical analyses were conducted to compare the differences in these indices in terms of fake/real and author/message/social factors.
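The construction of the videoclip network can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the study's actual pipeline: the reply records are hypothetical, each user's first reply to a videoclip is taken as the relevant time, and a link is formed for every earlier-later pair of videoclips a user replied to (not only consecutive ones).

```python
from collections import defaultdict
from itertools import combinations

def build_videoclip_network(replies):
    """Return {(earlier_clip, later_clip): number_of_shared_users}.

    `replies` is an iterable of (user, videoclip, timestamp) records.
    """
    # Keep only each user's earliest reply time per videoclip.
    first_reply = defaultdict(dict)
    for user, clip, timestamp in replies:
        seen = first_reply[user]
        if clip not in seen or timestamp < seen[clip]:
            seen[clip] = timestamp

    links = defaultdict(int)
    for clips in first_reply.values():
        # Order this user's videoclips by the time of the first reply.
        ordered = sorted(clips, key=lambda c: clips[c])
        # Each user adds 1 to every earlier -> later pair (an assumption).
        for earlier, later in combinations(ordered, 2):
            links[(earlier, later)] += 1
    return dict(links)

# Hypothetical reply records, for illustration only.
replies = [
    ("userA", "X", 1), ("userA", "Y", 5),
    ("userB", "X", 2), ("userB", "Y", 3), ("userB", "Z", 9),
    ("userC", "Y", 4), ("userC", "X", 7),
]
network = build_videoclip_network(replies)
```

In this toy example, two users replied to X before Y, so the link X → Y has a value of 2, while the single user who replied to Y first yields Y → X with a value of 1.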

Results

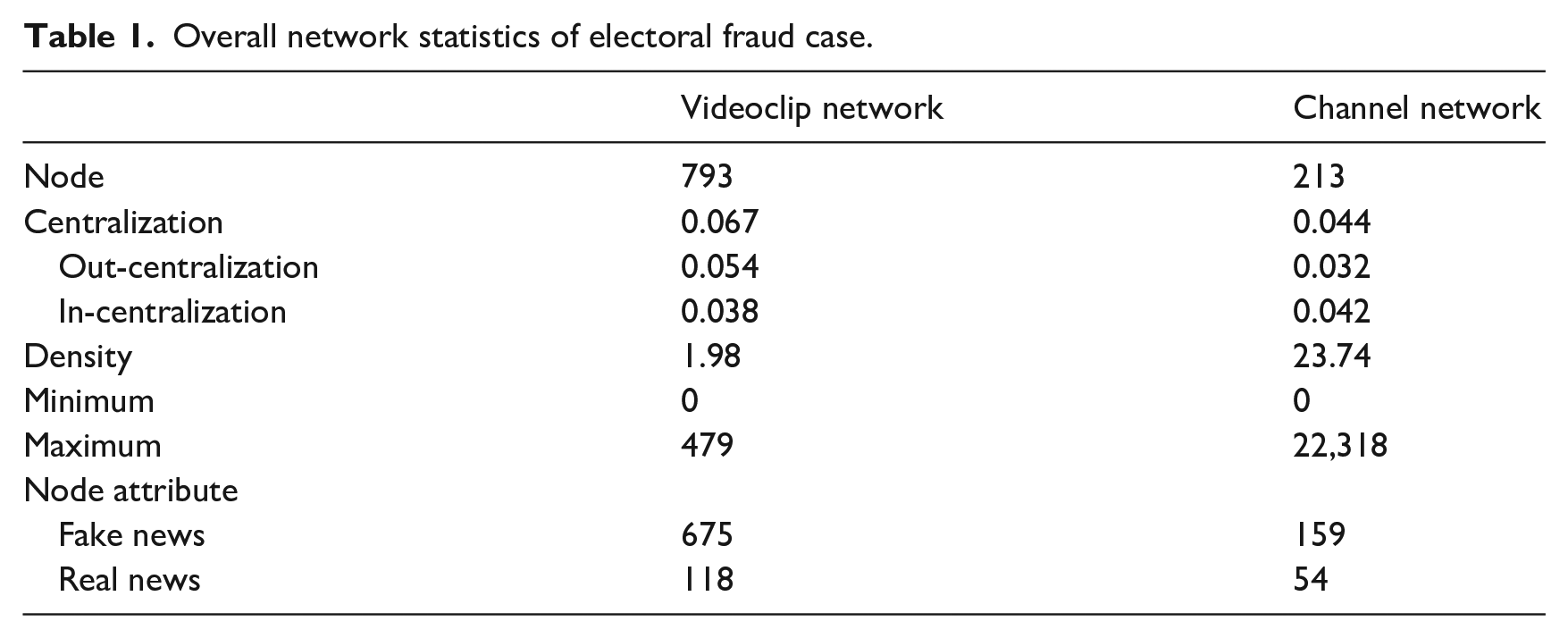

Overall network statistics

Table 1 summarizes the network statistics of the videoclip network and the channel network for the electoral fraud case. The videoclip network is composed of 793 nodes, one for each relevant videoclip. At the level of dyadic relationships, the number of users who moved from one videoclip to another ranged from 0 to 479. The network density was 1.98, which means that the average number of users who moved from one videoclip to another was 1.98. The network centralization value was 0.054 for out-degree (arrows heading out from the node in the network diagram) and 0.038 for in-degree (arrows heading into the node in the network diagram). These values, being close to zero, suggest that users were spread across the overall network rather than concentrated on a few videoclips. Of the 793 videoclips, 675 were classified as fake news.
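For readers unfamiliar with network centralization, the following Python sketch computes a Freeman-style degree centralization for a directed network. It assumes binarized ties and the normalization (n - 1)^2, under which a star network scores 1 and a network with equal degrees scores 0; the software used for the values in Table 1 may normalize differently, so this is an illustration of the concept rather than a reproduction of the reported figures.

```python
def degree_centralization(nodes, edges, direction="out"):
    """Freeman-style degree centralization for a directed, binary network.

    `edges` is an iterable of (source, target) pairs; self-loops are ignored.
    Values near 0 mean degrees are evenly spread; 1 means a perfect star.
    """
    nodes = list(nodes)
    n = len(nodes)
    if n < 2:
        return 0.0
    degree = {v: 0 for v in nodes}
    for source, target in set(edges):
        if source != target:
            degree[source if direction == "out" else target] += 1
    top = max(degree.values())
    return sum(top - d for d in degree.values()) / ((n - 1) ** 2)

# Hypothetical examples: a star (one hub links to all others) and a cycle.
star_nodes = ["hub", "a", "b", "c"]
star_edges = [("hub", "a"), ("hub", "b"), ("hub", "c")]
cycle_nodes = ["a", "b", "c"]
cycle_edges = [("a", "b"), ("b", "c"), ("c", "a")]

star_score = degree_centralization(star_nodes, star_edges, "out")
cycle_score = degree_centralization(cycle_nodes, cycle_edges, "out")
```

Read against these two extremes, centralization values such as 0.054 and 0.038 sit very close to the evenly spread case.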

Table 1. Overall network statistics of the electoral fraud case.

The channel network consists of 213 nodes, one for each relevant channel. At the level of dyadic relationships, the number of users who moved from one channel to another ranged from 0 to 22,318. The network density was 23.74, which means that the average number of users who moved from one channel to another was 23.74. The network centralization value was 0.032 for out-degree and 0.042 for in-degree. Among the 213 channels, 159 were classified as fake news.

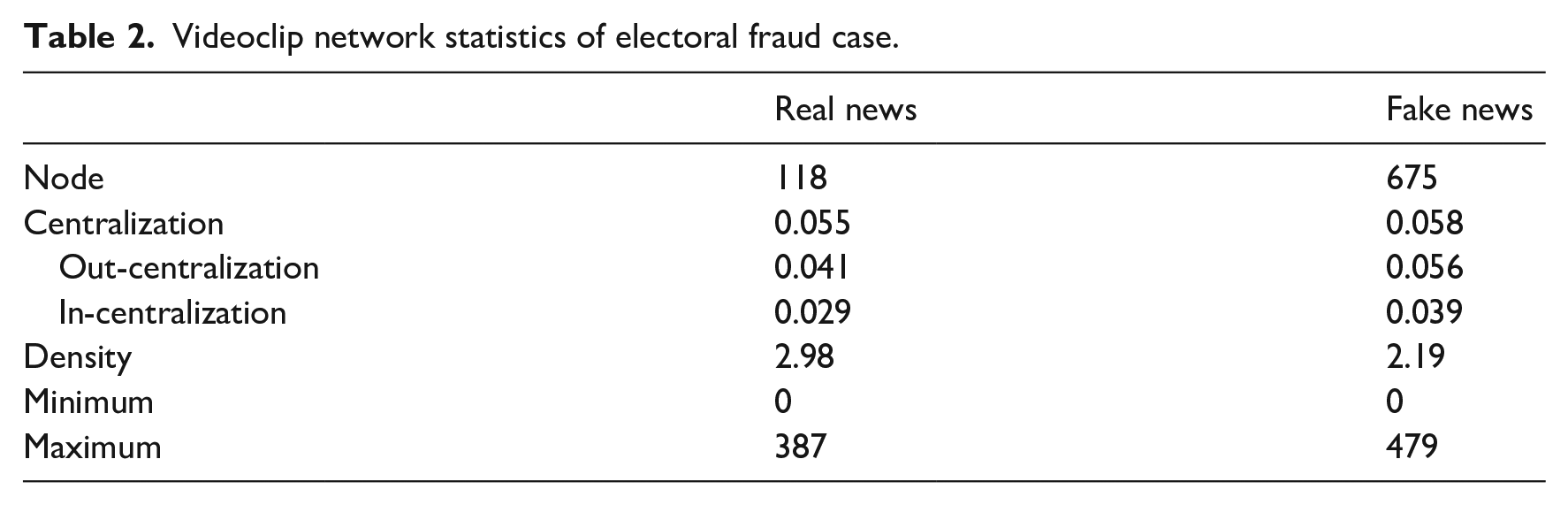

Fake news dissemination

While Table 1 shows the characteristics of the overall network, Table 2 shows the characteristics of the fake news network and the real news network, respectively. For real news, the videoclip network is composed of 118 nodes, one for each relevant videoclip. At the level of dyadic relationships, the number of users who moved from one videoclip to another ranged from 0 to 387. The network density was 2.98, which means that the average number of users who moved from one videoclip to another was 2.98. The network centralization value was 0.041 for out-degree and 0.029 for in-degree. These values, being close to zero, suggest that users were spread across the overall network rather than concentrated on a few videoclips.

Table 2. Videoclip network statistics of the electoral fraud case.

For fake news, the videoclip network consists of 675 nodes, one for each relevant videoclip. At the level of dyadic relationships, the number of users who moved from one videoclip to another ranged from 0 to 479. The network density was 2.19, meaning that the average number of users who moved from one videoclip to another was 2.19. The network centralization value was 0.056 for out-degree and 0.039 for in-degree. These values, being close to zero, suggest that users were spread across the overall network rather than concentrated on a few videoclips.

Overall, the videoclip network of real news had a relatively higher density than that of fake news. However, we cannot rule out the possibility that this difference stems from the fact that the former has fewer nodes than the latter, since a network with fewer nodes tends to have a greater density value than a network with more nodes. The difference in network centralization between the two networks was negligible.

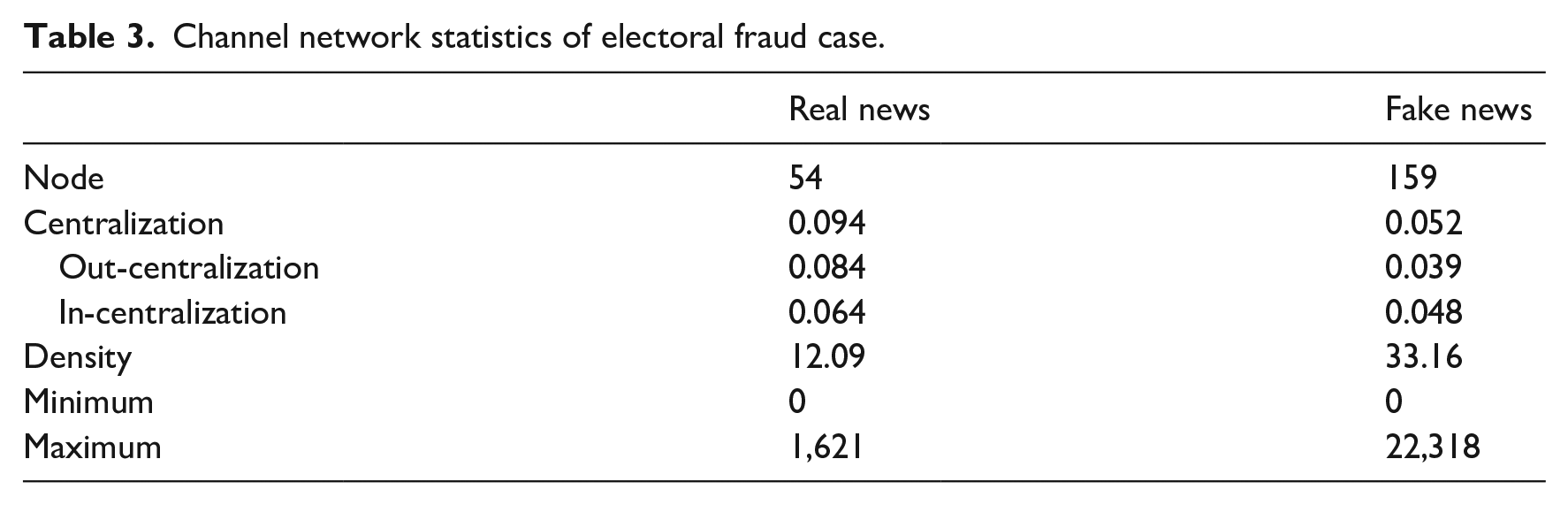

Table 3 summarizes the network statistics of the channel networks of real news and fake news, respectively. In our dataset, none of the channels had both real news videoclips and fake news videoclips. For real news, the channel network is composed of 54 nodes, one for each relevant channel. At the level of dyadic relationships, the number of users who moved from one channel to another ranged from 0 to 1621. The network density was 12.09, which means that the average number of users who moved from one channel to another was 12.09. The network centralization value was 0.084 for out-degree and 0.064 for in-degree. These values, being close to zero, suggest that users were spread across the overall network rather than concentrated on a few channels.

Table 3. Channel network statistics of the electoral fraud case.

For fake news, the channel network is composed of 159 nodes, one for each relevant channel. At the level of dyadic relationships, the number of users who moved from one channel to another ranged from 0 to 22,318. The network density was 33.16, which means that the average number of users who moved from one channel to another was 33.16. The network centralization value was 0.039 for out-degree and 0.048 for in-degree. These values, being close to zero, suggest that users were spread across the overall network rather than concentrated on a few channels.

Overall, the channel network of real news had relatively lower density than that of fake news, even though the former had fewer nodes. (For reference, it is highly likely that the network with a smaller number of nodes tends to have greater value of density than the network with a larger number of nodes.) This finding implies that the channel network of fake news is denser and more active than that of real news. The difference in terms of network centralization between the two networks was negligible.

Network visualization

We visualized the networks discussed in the previous sections. Network visualizations play a critical role in enhancing the accessibility and interpretability of complex network data. They provide a condensed overview of complex relationships and offer a visual narrative that complements the textual analysis, giving readers a more comprehensive understanding of the data (Unwin, 2020). To provide an overview of the network structures and characteristics elucidated in our research, we present truncated versions of the whole networks, which make the links among channels or videoclips easier to identify visually. In the truncated versions, we visualized nodes whose degree centrality is above the average or ranks within the top 25%. If more than 100 nodes remained after applying this rule, we visualized only nodes whose degree centrality ranks within the top 10%.
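One way to read this truncation rule is sketched below in Python. The function and parameter names are hypothetical, and the choice between the above-average and top-25% criteria is exposed as an argument because the text does not specify how it was made for each network; the sketch is an interpretation, not the procedure actually used.

```python
import math

def top_fraction(centrality, fraction):
    """Return the nodes ranked within the top `fraction` by centrality."""
    k = max(1, math.ceil(len(centrality) * fraction))
    ranked = sorted(centrality, key=centrality.get, reverse=True)
    return set(ranked[:k])

def select_nodes(centrality, rule="top_quartile", max_nodes=100):
    """Pick the nodes to draw in the truncated visualization.

    rule="above_average": keep nodes with above-mean degree centrality.
    rule="top_quartile":  keep nodes ranked within the top 25%.
    If more than `max_nodes` remain, fall back to the top 10%.
    """
    if not centrality:
        return set()
    if rule == "above_average":
        mean = sum(centrality.values()) / len(centrality)
        chosen = {v for v, c in centrality.items() if c > mean}
    else:
        chosen = top_fraction(centrality, 0.25)
    if len(chosen) > max_nodes:
        chosen = top_fraction(centrality, 0.10)
    return chosen

# Hypothetical centrality scores, for illustration only.
large = {f"v{i}": i for i in range(1000)}
small = {"a": 1, "b": 2, "c": 3, "d": 10}
large_selection = select_nodes(large)
small_selection = select_nodes(small, rule="above_average")
```

For the 1000-node example, the top 25% would leave 250 nodes, so the fallback to the top 10% applies and 100 nodes are kept.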

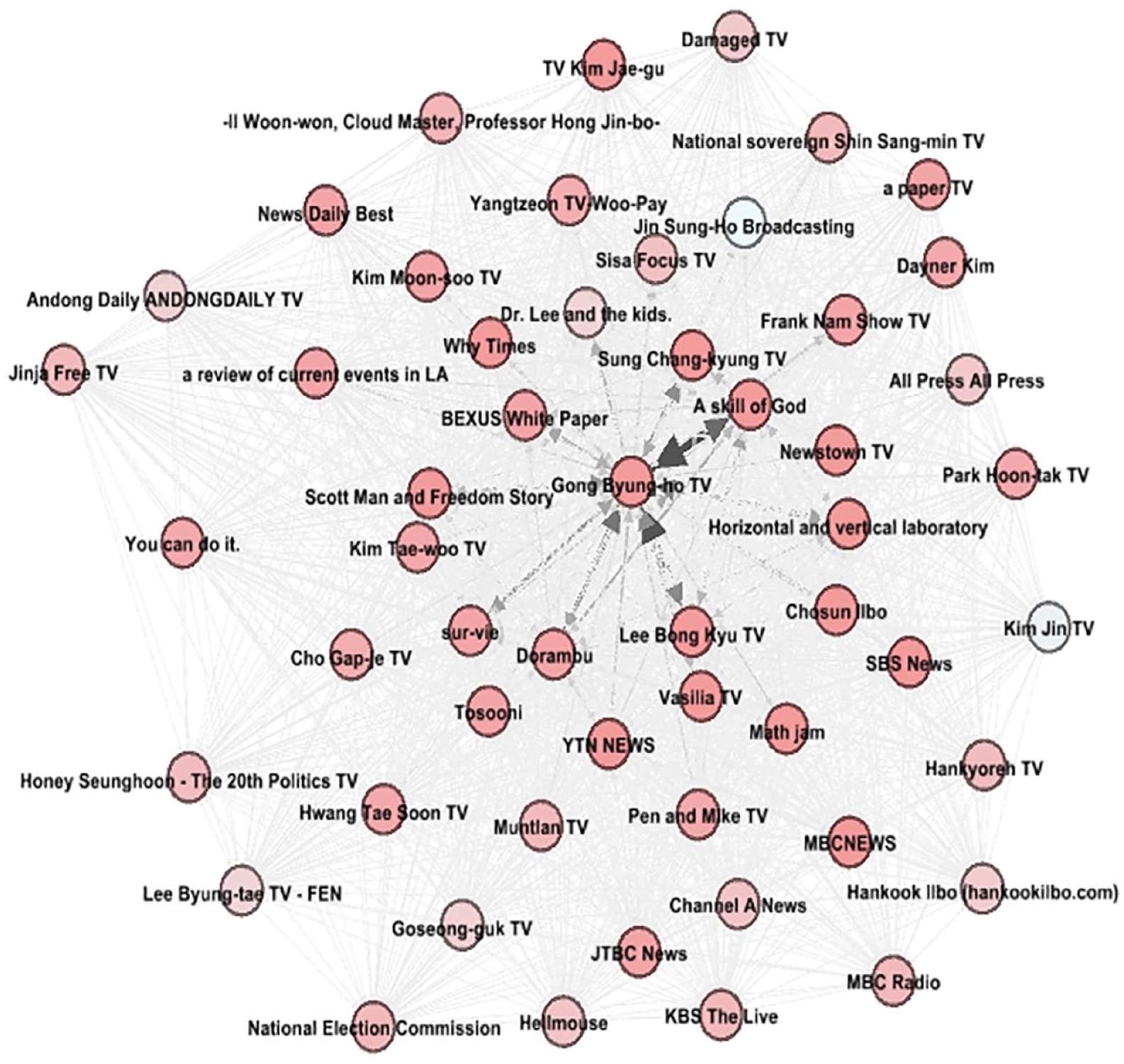

Channel network of real news

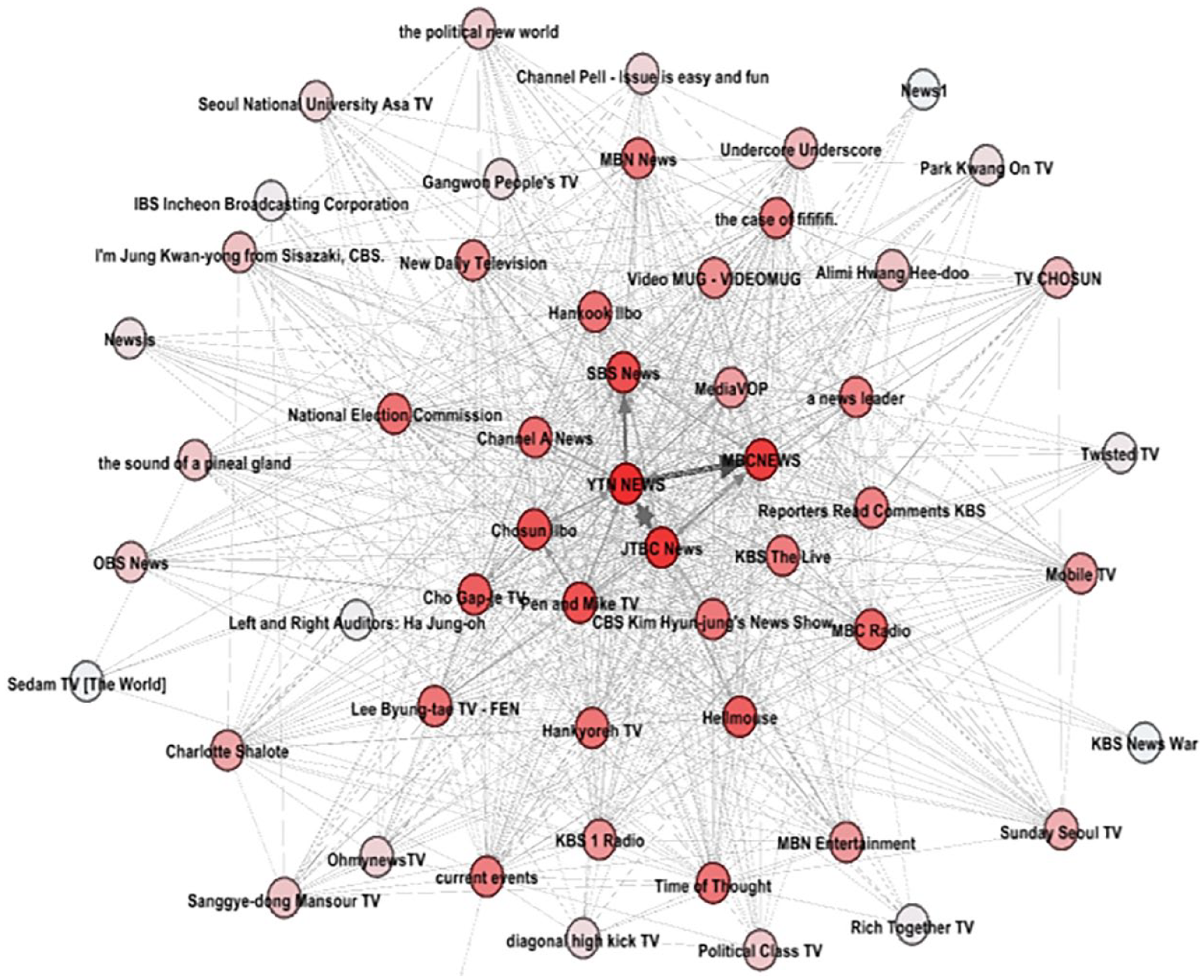

In the case of channel networks (see Figures 1 to 3) the higher the value of degree centrality, the darker the color of the node.

Figure 1. Overall channel network: Visualized nodes whose degree centrality ranks within the top 25%.

Figure 2. Channel network of real news.

Figure 3. Channel network of fake news: Visualized nodes whose degree centrality is above the average.

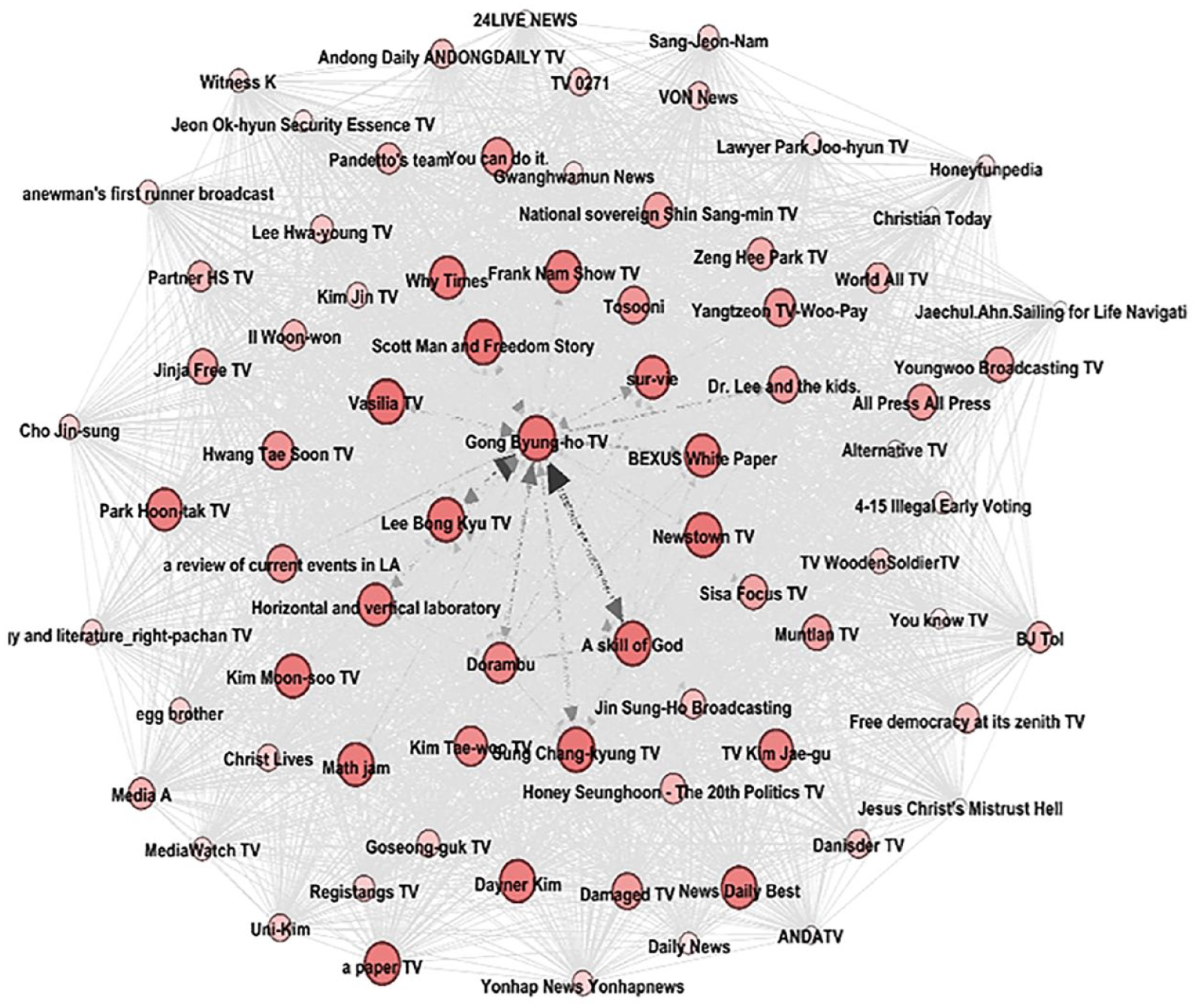

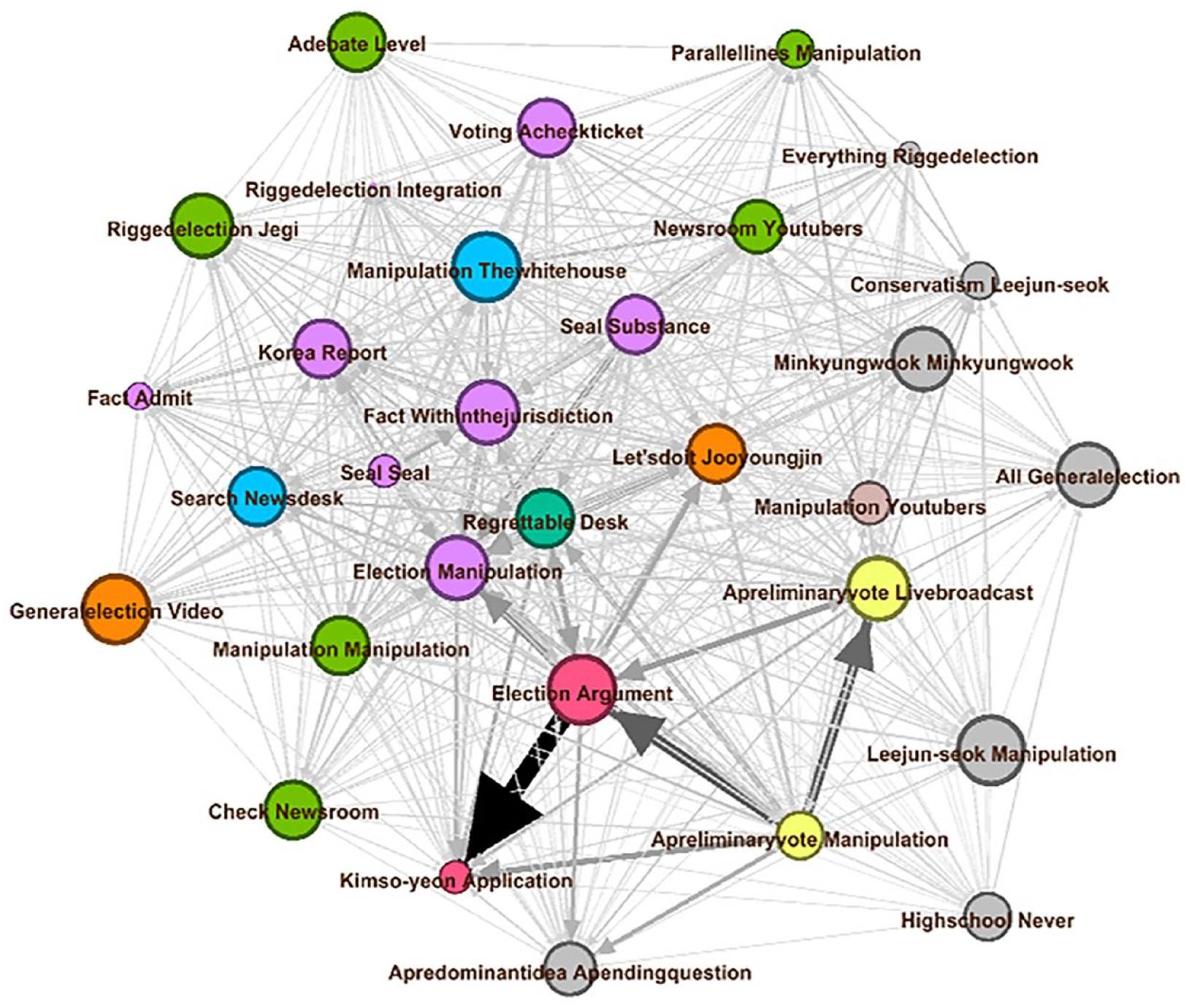

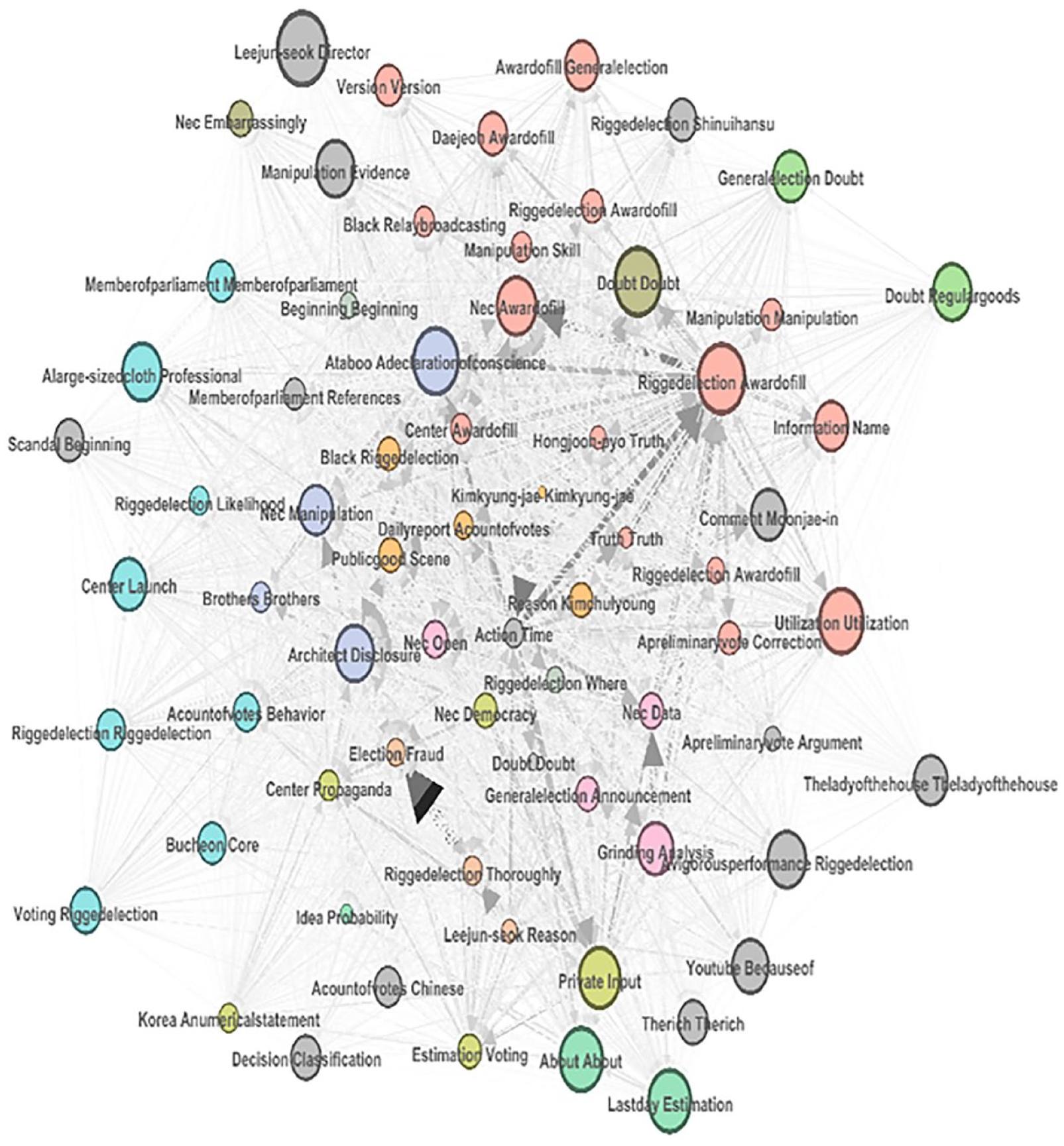

Videoclip network

In the case of the videoclip network (see Figures 4 to 6), the size of each node is proportional to its degree centrality: the higher the node’s degree centrality, the larger the node. Node colors differ according to the channels to which the videoclips belong; channels with relatively few videoclips are all colored gray. We labeled each node with one or two words extracted from the title of the videoclip, because full titles were too long to display legibly.

Overall videoclip network: Visualized nodes whose degree centrality ranks within the top 10%.

Videoclip network of real news: Visualized nodes whose degree centrality ranks within the top 25%.

Videoclip network of fake news: Visualized nodes whose degree centrality ranks within the top 10%.

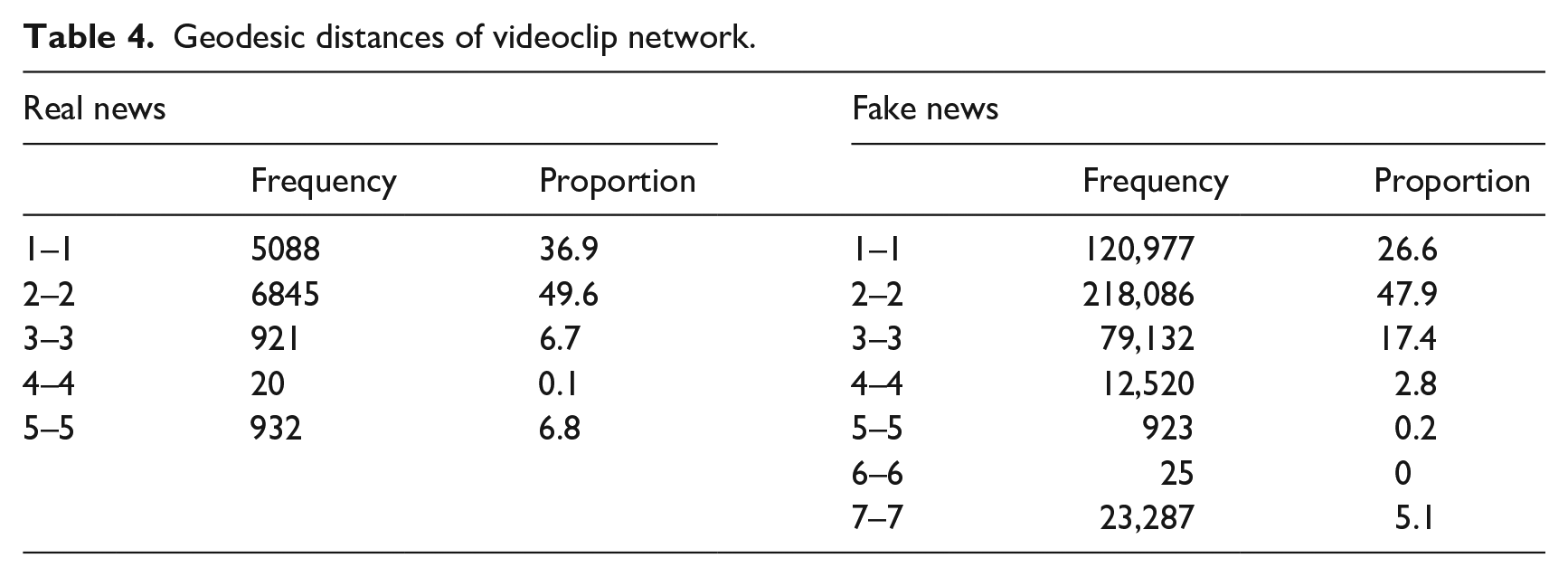

Geodesic distances

We calculated the geodesic distances (the length of the shortest path between two nodes) in the videoclip networks. When the geodesic distance between two nodes could not be calculated because they were not connected to each other, we assigned the pair a distance equal to the largest geodesic distance identified within the network plus 1. A geodesic distance of 1 represents a direct connection between two videoclips. As shown in Table 4, the geodesic distances between two nodes are mostly 1 or 2 in the videoclip network of real news. In contrast, in the videoclip network of fake news, a considerable number of dyads had a geodesic distance of 3. Moreover, the videoclip network of fake news had a larger maximum geodesic distance than that of real news.
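The geodesic-distance procedure, including the rule for unconnected pairs, can be sketched with a breadth-first search. The sketch assumes a directed, unweighted network and counts ordered pairs; the toy adjacency list and function names are hypothetical and are not taken from the study's data or software.

```python
from collections import Counter, deque


def shortest_paths_from(adjacency, source):
    """Breadth-first search: hop counts from source to every reachable node."""
    distance = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in adjacency.get(node, ()):
            if neighbor not in distance:
                distance[neighbor] = distance[node] + 1
                queue.append(neighbor)
    return distance


def geodesic_distribution(adjacency):
    """Count ordered pairs by geodesic distance; unreachable pairs get max + 1."""
    nodes = set(adjacency) | {t for targets in adjacency.values() for t in targets}
    finite, unreachable = [], 0
    for source in nodes:
        reached = shortest_paths_from(adjacency, source)
        for target in nodes:
            if target == source:
                continue
            if target in reached:
                finite.append(reached[target])
            else:
                unreachable += 1
    counts = Counter(finite)
    if unreachable:
        # Unconnected pairs receive the largest observed distance plus 1.
        counts[max(finite, default=0) + 1] += unreachable
    return counts


# Hypothetical videoclip network: a -> b -> c, with d isolated.
toy_network = {"a": ["b"], "b": ["c"], "c": [], "d": []}
distribution = geodesic_distribution(toy_network)
print(dict(distribution))
```

Here the largest observed distance is 2 (from a to c), so the nine unreachable ordered pairs are assigned a distance of 3.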

Geodesic distances of videoclip network.

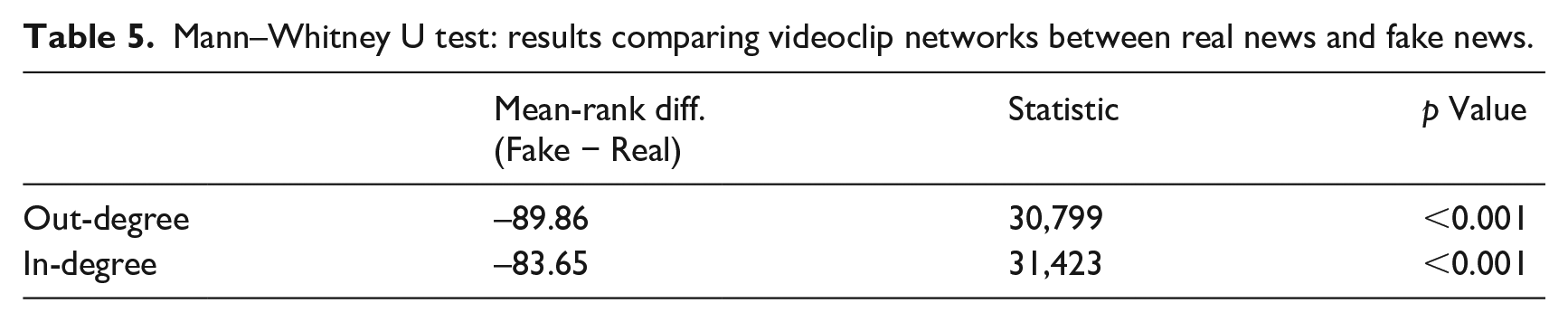

As shown in Table 5, we statistically compared the videoclip networks of real news and fake news. We normalized in-degree centrality and out-degree centrality to take into account the number of nodes in each network. Using the Mann–Whitney U test, we found statistically significant differences in these variables: the videoclip network of fake news tends to have smaller values than that of real news. Taken together, these results suggest that the spread of fake news videoclips has different network characteristics from that of real news videoclips.
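The comparison above can be illustrated with a small sketch of degree normalization followed by a Mann–Whitney U test. It assumes normalization by the maximum possible degree (n - 1) and uses a plain normal approximation without tie or continuity corrections; the study does not state which normalization or software it used, and the degree values below are invented for illustration.

```python
from statistics import NormalDist


def normalized_degree(degree, n_nodes):
    """Degree divided by the maximum possible degree in a network of n_nodes."""
    return degree / (n_nodes - 1)


def mann_whitney_u(x, y):
    """U statistics for both samples; a tied pair contributes 0.5 to each."""
    u_x = sum((xi > yj) + 0.5 * (xi == yj) for xi in x for yj in y)
    return u_x, len(x) * len(y) - u_x


def two_sided_p(u, n_x, n_y):
    """Normal approximation (no tie or continuity correction)."""
    mean_u = n_x * n_y / 2
    sd_u = (n_x * n_y * (n_x + n_y + 1) / 12) ** 0.5
    z = (u - mean_u) / sd_u
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical raw in-degrees from a 21-node fake news network and an 11-node real news network.
fake = [normalized_degree(d, 21) for d in (3, 5, 4, 2)]
real = [normalized_degree(d, 11) for d in (6, 8, 9)]

u_fake, u_real = mann_whitney_u(fake, real)
p_value = two_sided_p(u_fake, len(fake), len(real))
print(u_fake, u_real, round(p_value, 4))
```

For samples as small as this toy example an exact test would be preferable; the normal approximation is shown only because it is compact.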

Mann–Whitney U test: results comparing videoclip networks between real news and fake news.

Author effect, message effect, and social effect

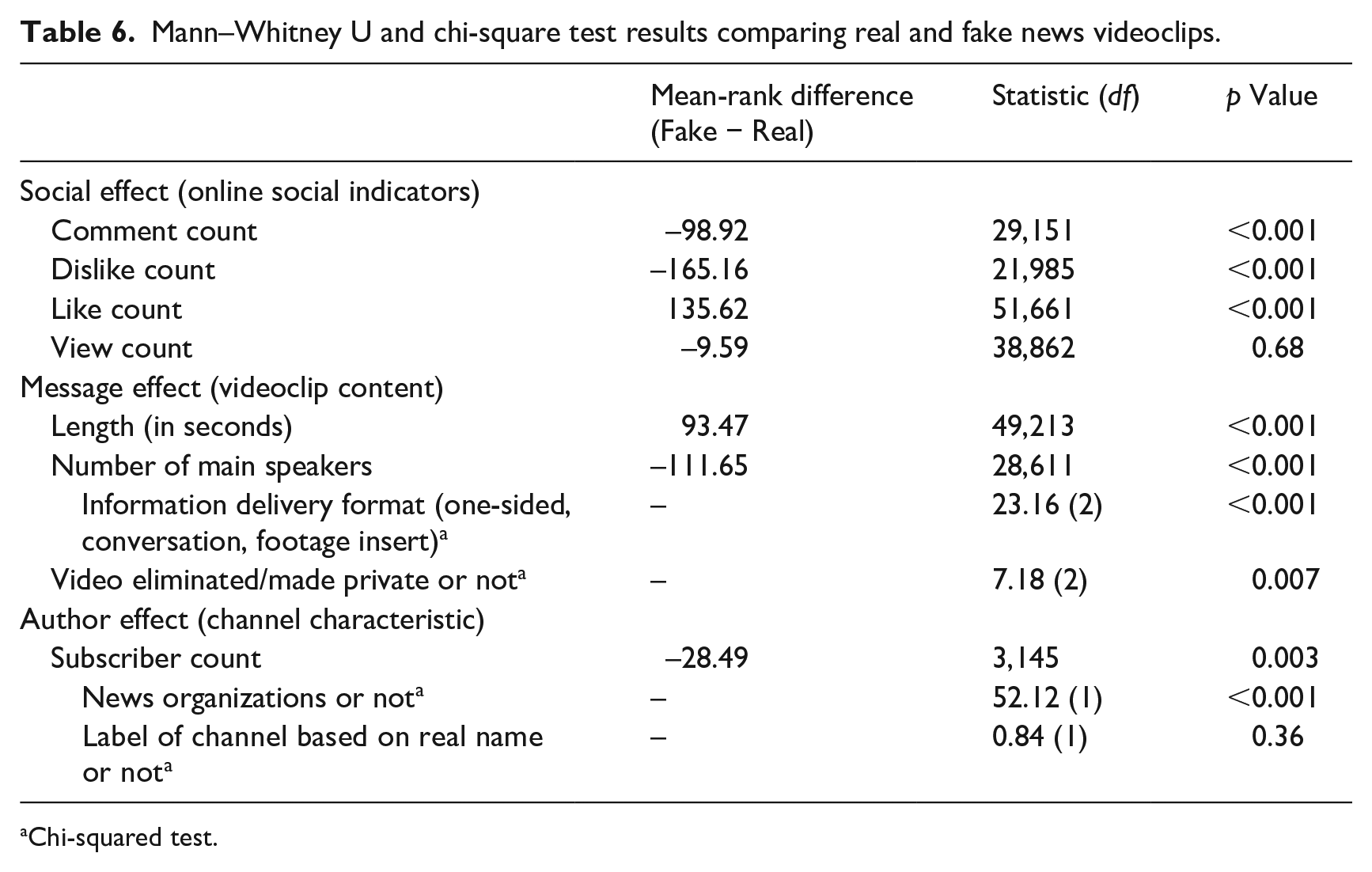

We compared real news videoclips and fake news videoclips in terms of author effect (i.e. YouTube channels), message effect (i.e. the content of videoclips uploaded by YouTube channels), and social effects (i.e. online social indicators such as the numbers of comments, likes, dislikes, and views that each videoclip has garnered; see Table 6). Regarding social effects, we found statistically significant differences between real news videoclips and fake news videoclips in the numbers of comments, likes, and dislikes. Specifically, fake news videoclips had a larger number of likes than real news videoclips, whereas real news videoclips had a larger number of dislikes than fake news videoclips. These findings strongly suggest that fake news videoclips are more welcomed than real news videoclips on YouTube.

Mann–Whitney U and chi-square test results comparing real and fake news videoclips.

Chi-square test.

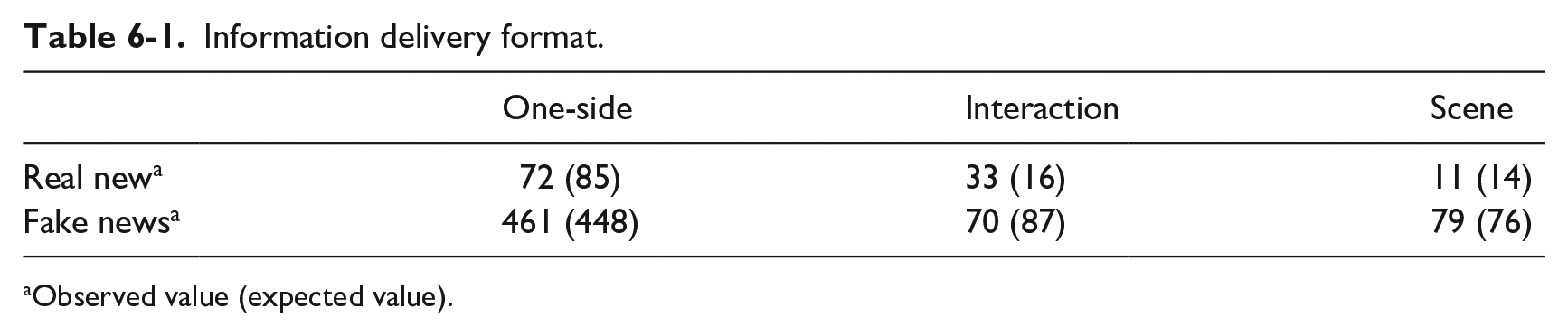

Information delivery format.

Observed value (expected value).

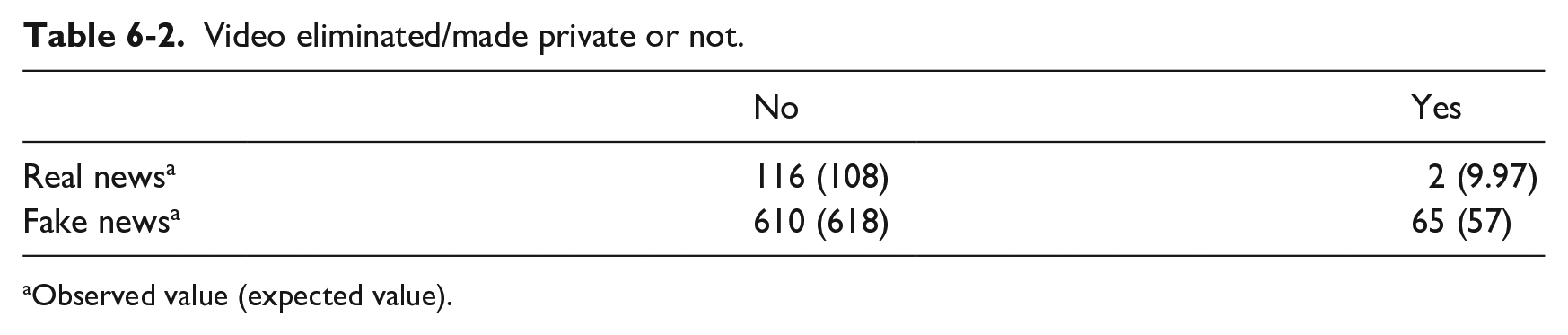

Video eliminated/made private or not.

Observed value (expected value).

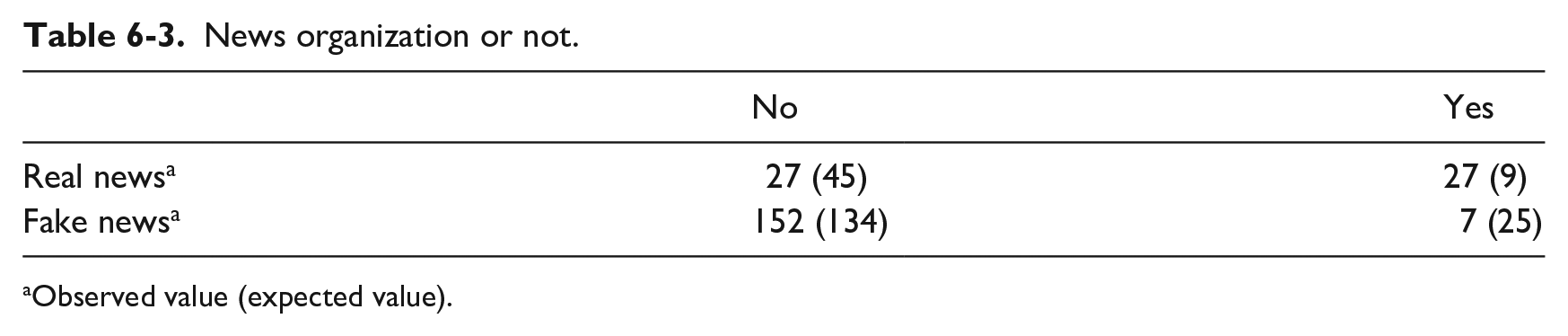

News organization or not.

Observed value (expected value).

Label of channel based on real name or not.

Observed value (expected value).

Regarding message effects, we analyzed the content of real news and fake news videoclips. The length of videoclips differed significantly between the two: fake news videoclips were on average 10 minutes and 37 seconds longer than real news videoclips. The number of main speakers also differed markedly; real news videoclips had an average of 0.48 more main speakers than fake news videoclips. Furthermore, the format of the videoclips, which we categorized as one-sided delivery, conversational format, or field-footage insert, differed greatly between the two. For fake news, the observed number of videoclips that used a conversational format was smaller than statistically expected, whereas the opposite was found for real news. We also checked whether videoclips uploaded and released during the data collection period had subsequently been eliminated or made private (i.e. not open to the public); this was checked on 12 January 2021. The number of videoclips eliminated or made private was greater for fake news, and the observed number was greater than statistically expected.
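The comparison of observed and expected counts mentioned above follows the standard chi-square logic, which can be sketched as below. The contingency table is hypothetical and does not reproduce the counts in Table 6; the function names are illustrative.

```python
def expected_counts(table):
    """Expected cell counts under independence: row total * column total / grand total."""
    row_totals = [sum(row) for row in table]
    column_totals = [sum(column) for column in zip(*table)]
    grand_total = sum(row_totals)
    return [[r * c / grand_total for c in column_totals] for r in row_totals]


def chi_square_statistic(table):
    """Sum over cells of (observed - expected)^2 / expected."""
    expected = expected_counts(table)
    return sum(
        (o - e) ** 2 / e
        for observed_row, expected_row in zip(table, expected)
        for o, e in zip(observed_row, expected_row)
    )


# Hypothetical counts. Rows: fake news, real news. Columns: removed or private, still public.
toy_table = [[30, 10], [20, 40]]

expected = expected_counts(toy_table)
statistic = chi_square_statistic(toy_table)
print(expected, round(statistic, 3))
```

In this toy table the first cell has an observed count of 30 against an expected count of 20, which is the kind of "greater than statistically expected" pattern the text describes.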

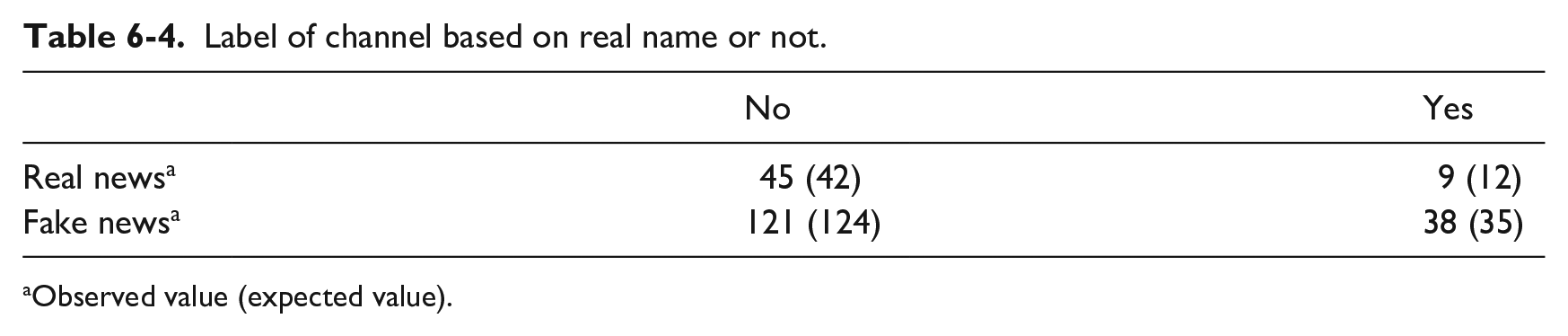

Regarding author effects, we focused on the channels that created and uploaded videoclips about the electoral fraud. The number of subscribers was a statistically significant factor differentiating channels that uploaded fake news videoclips from channels that uploaded real news videoclips: the latter had on average 203 more subscribers than the former. We also found that far more channels were news organizations in the case of real news. However, we found no statistically significant difference in whether channel labels were based on real names.

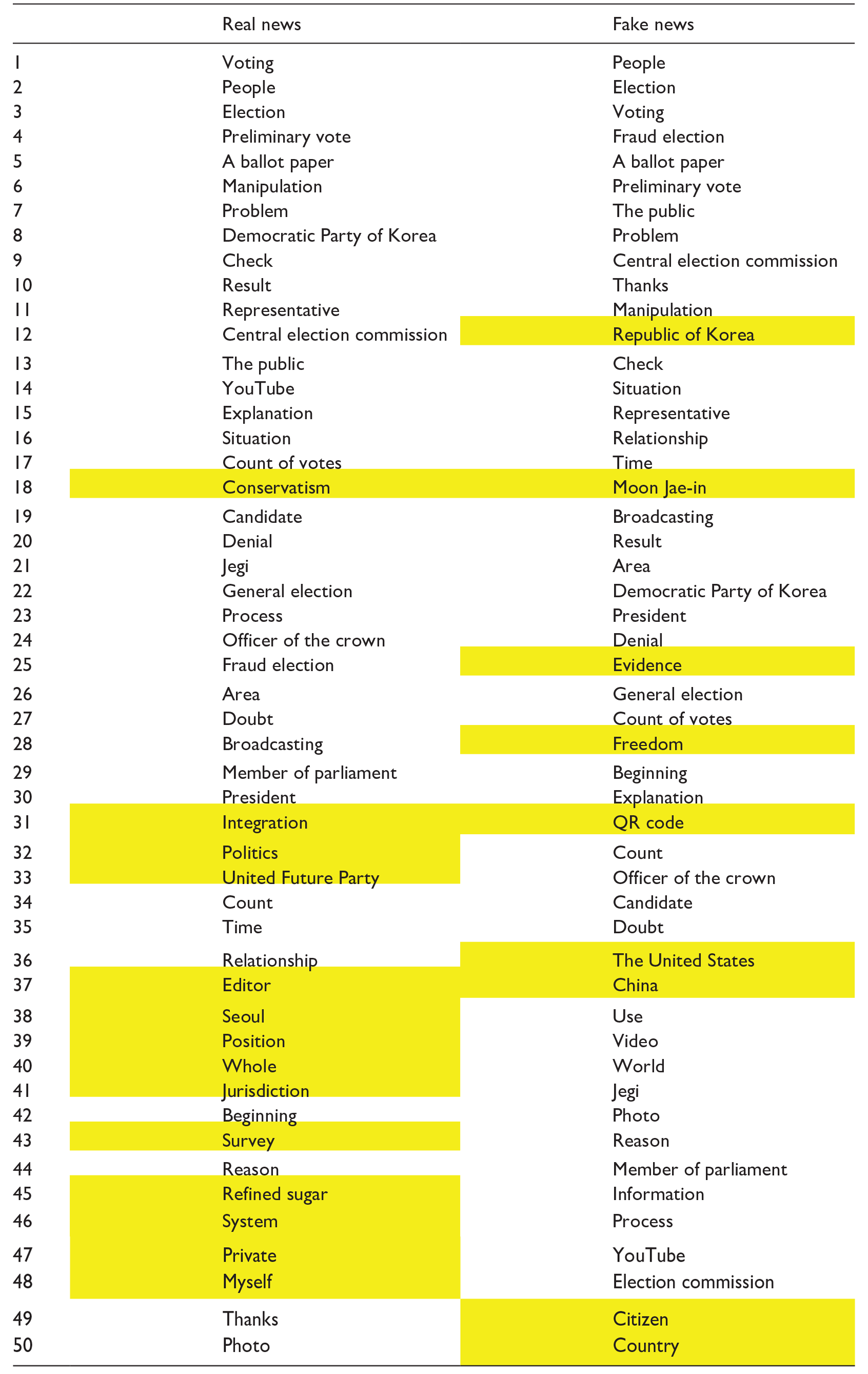

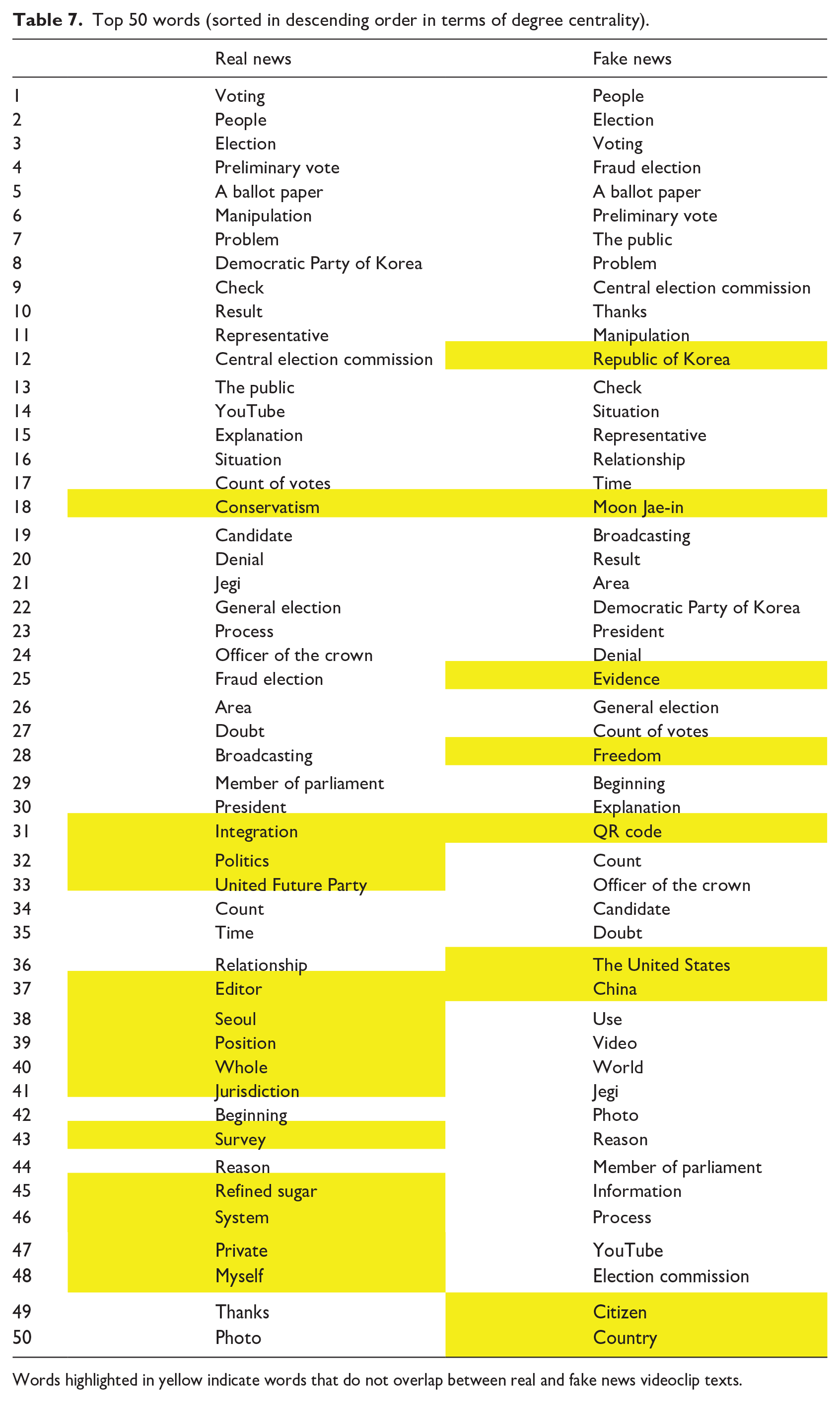

Fake news messaging

We applied natural language processing to the texts extracted from videoclips and retained only the nouns. We then formed semantic networks composed of those nouns, linking two nouns when they appeared in the same videoclip. For the semantic networks of real news and fake news, respectively, we calculated the degree centrality of each noun. Table 7 shows the 50 words (i.e. nouns) with the highest degree centrality. Unlike in real news, terms such as “the Republic of Korea,” “Moon Jae In” (i.e. the President of South Korea), “evidence,” “freedom,” “QR code,” “the United States,” and “China” were frequently mentioned in fake news.
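The construction of the semantic network can be sketched as a co-occurrence graph. This minimal illustration assumes that noun extraction has already been done (the Korean morphological analysis step is not shown) and that degree centrality is the number of distinct nouns a noun is linked to; the noun lists and function names are hypothetical.

```python
from itertools import combinations


def cooccurrence_network(noun_lists):
    """Link two nouns whenever they appear in the same videoclip text."""
    neighbors = {}
    for nouns in noun_lists:
        unique = sorted(set(nouns))
        for noun in unique:
            neighbors.setdefault(noun, set())
        for first, second in combinations(unique, 2):
            neighbors[first].add(second)
            neighbors[second].add(first)
    return neighbors


def degree_centrality(neighbors):
    """Number of distinct nouns each noun co-occurs with."""
    return {noun: len(linked) for noun, linked in neighbors.items()}


# Hypothetical noun lists, one per videoclip.
toy_texts = [
    ["ballot", "evidence", "server"],
    ["ballot", "evidence"],
    ["server", "QR code"],
]

degrees = degree_centrality(cooccurrence_network(toy_texts))
ranked = sorted(degrees.items(), key=lambda item: (-item[1], item[0]))
print(ranked)
```

Sorting by descending degree, as in the last step, is how a "top 50 words" list of the kind shown in Table 7 could be derived.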

Top 50 words (sorted in descending order in terms of degree centrality).

Words highlighted in yellow indicate words that do not overlap between real and fake news videoclip texts.

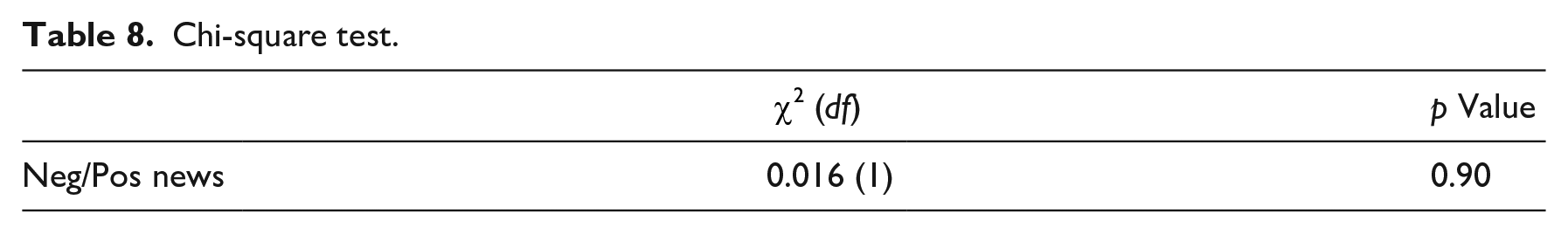

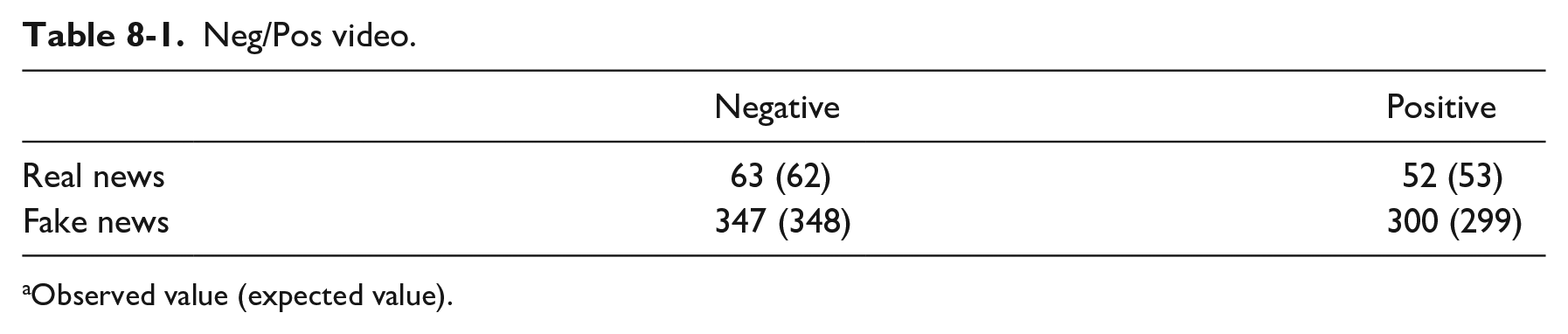

We also conducted sentiment analysis on the texts extracted from real and fake news videoclips. For this analysis, we used the KOSAC (Korean Sentiment Analysis Corpus) dictionary (Shin et al., 2012) and tagged each part-of-speech (POS) unit in the texts as positive or negative. We subtracted the number of negative POS from the number of positive POS and then divided the difference by the total number of POS in each text (to eliminate the effect of text length). When the resulting value was greater than zero, we classified the text of the videoclip as having positive sentiment on the electoral fraud; when it was less than zero, we classified it as having negative sentiment. We excluded 31 texts from this analysis because they had the same number of positive and negative POS or were not retrievable owing to the elimination of videoclips at the time of this analysis. As shown in Table 8, 53.6% of fake news videoclip texts were categorized as having negative sentiment, compared with 54.8% of real news videoclip texts. This difference in sentiment between real and fake news videoclip texts was not statistically significant.
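The length-normalized sentiment score described above can be written as a short sketch. The tag names ("positive", "negative", "neutral") and the idea of representing each text as a list of tags are assumptions made for illustration; the KOSAC lookup itself is not shown.

```python
def sentiment_score(tags):
    """(positive count - negative count) / total number of tagged units."""
    positive = tags.count("positive")
    negative = tags.count("negative")
    return (positive - negative) / len(tags)


def classify(tags):
    """Positive above zero, negative below zero, None for ties (which the study excluded)."""
    score = sentiment_score(tags)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return None


# Hypothetical tag sequence for one videoclip text.
toy_tags = ["positive", "negative", "negative", "neutral"]
score = sentiment_score(toy_tags)
label = classify(toy_tags)
print(score, label)
```

Dividing by the total number of units keeps long and short texts comparable, which is the stated purpose of the normalization.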

Chi-square test.

Neg/Pos video.

Observed value (expected value).

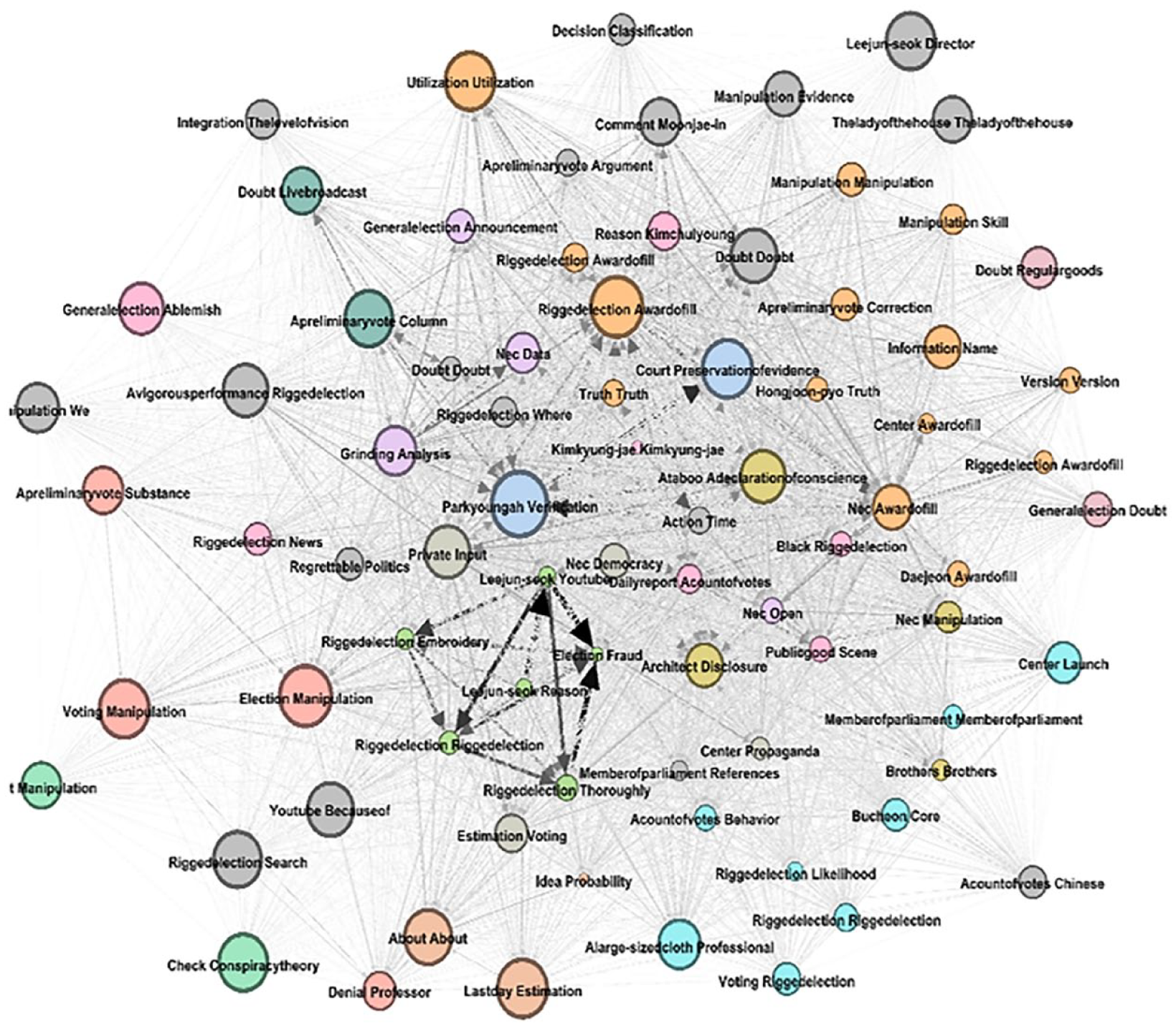

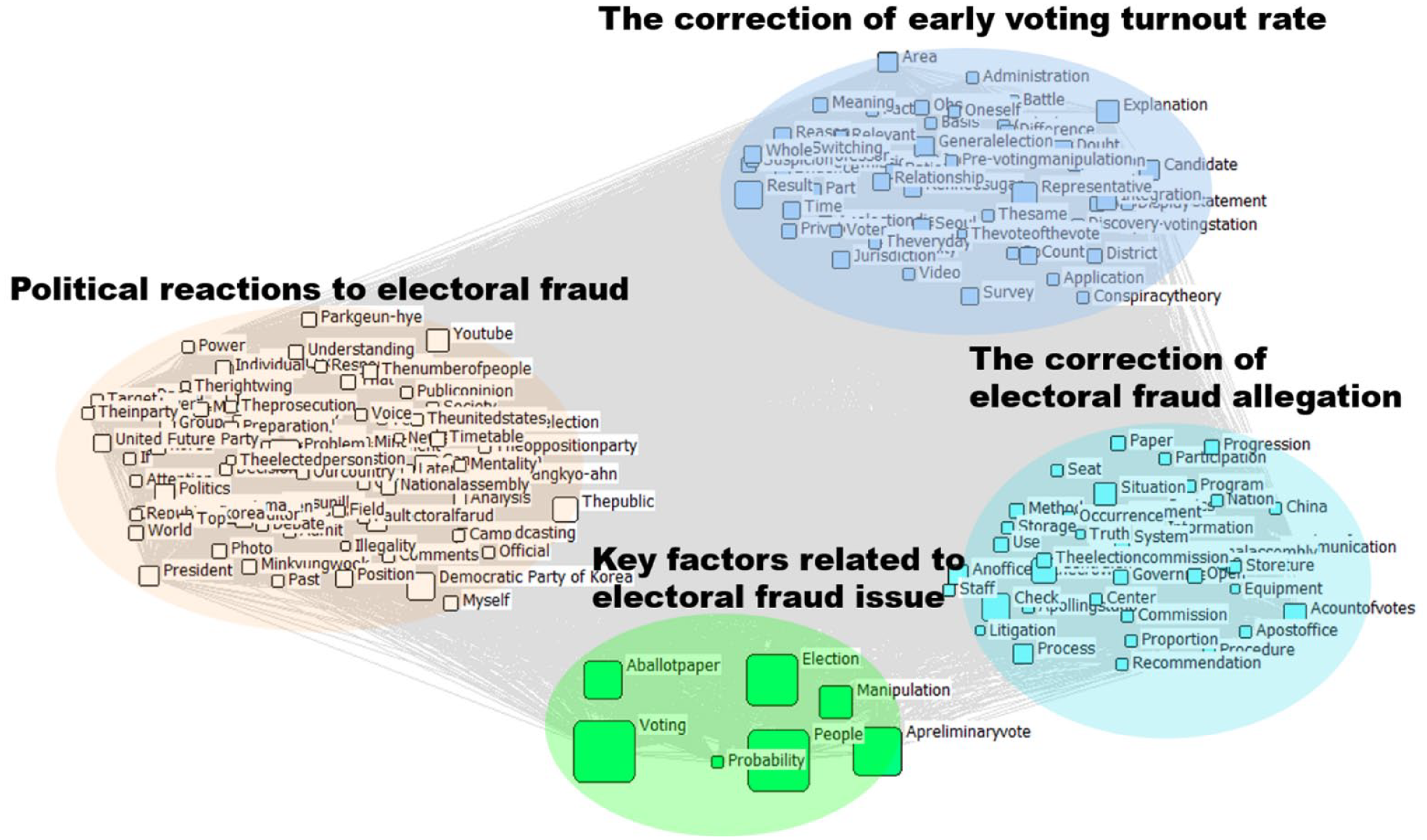

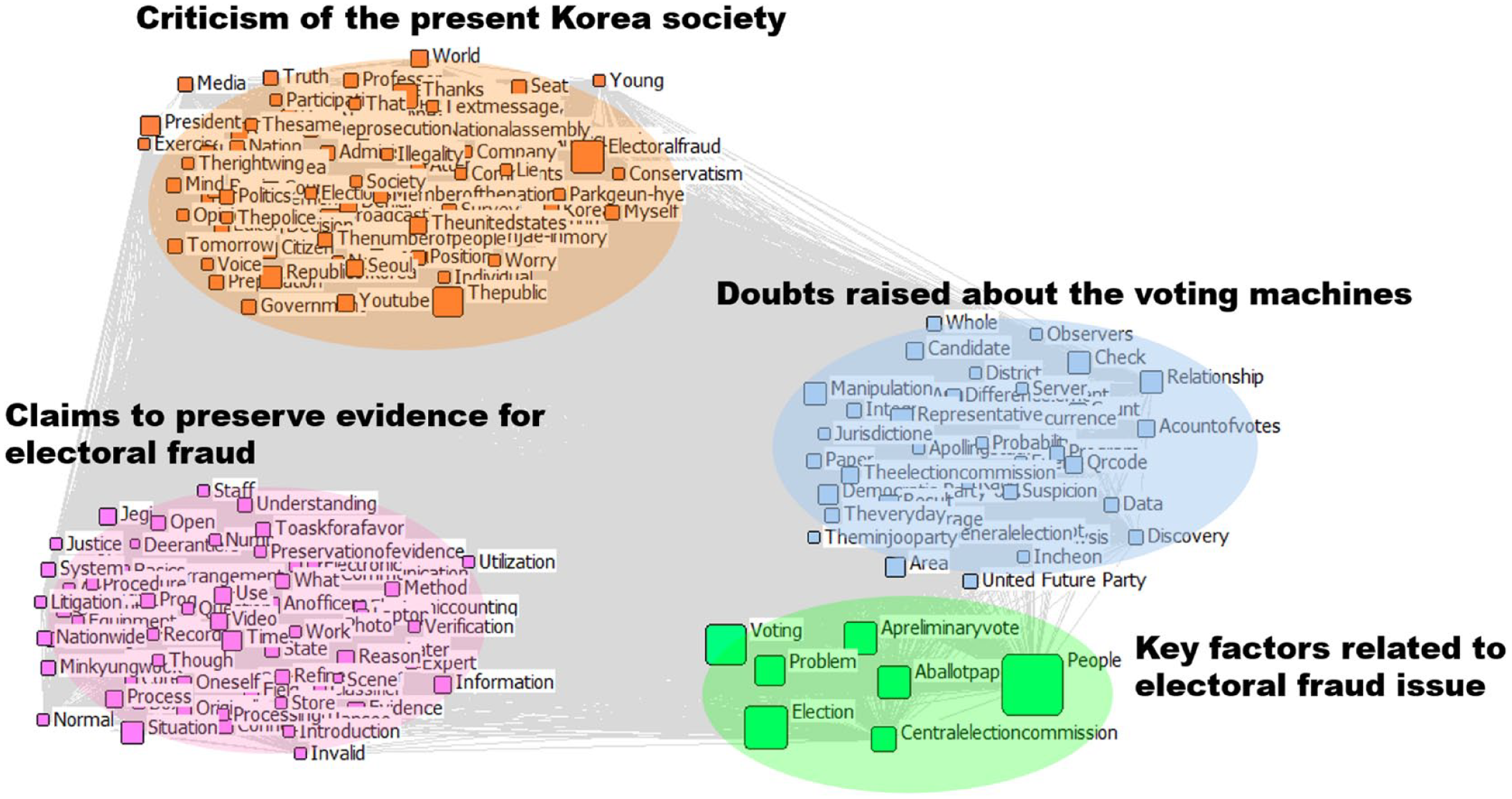

Finally, we conducted CONCOR (CONvergence of iterated CORrelations) analyses on the semantic networks of real and fake news videoclip texts. CONCOR allows us to cluster words based on their structural equivalence in the semantic network and enables us to point out the implications of the network’s thematic structure. For visualization purposes, we included only the top 200 words in terms of word occurrence frequency in the CONCOR analyses. The sizes of the nodes are proportional to their degree centrality. Figure 7 shows the CONCOR result of the semantic network of real news videoclip texts, while Figure 8 shows the CONCOR result of the semantic network of fake news videoclip texts.
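The core idea of CONCOR, repeatedly correlating the rows of a correlation matrix until every entry converges to +1 or -1, can be sketched as follows. This is a simplified illustration of a single two-block split on a tiny hypothetical adjacency matrix; full analyses usually repeat the split within each block, and the sketch does not reproduce the software or settings used in the study.

```python
from statistics import mean


def pearson(u, v):
    """Pearson correlation; returns 0.0 when either vector has no variance."""
    mean_u, mean_v = mean(u), mean(v)
    covariance = sum((a - mean_u) * (b - mean_v) for a, b in zip(u, v))
    variance_u = sum((a - mean_u) ** 2 for a in u)
    variance_v = sum((b - mean_v) ** 2 for b in v)
    if variance_u == 0 or variance_v == 0:
        return 0.0
    return covariance / (variance_u * variance_v) ** 0.5


def correlate_rows(matrix):
    """Correlation between every pair of row profiles."""
    return [[pearson(row_a, row_b) for row_b in matrix] for row_a in matrix]


def concor_split(adjacency, max_iterations=100, tolerance=1e-9):
    """Iterate correlations until all entries are +1 or -1, then split by sign."""
    correlations = correlate_rows(adjacency)
    for _ in range(max_iterations):
        if all(abs(abs(c) - 1.0) < tolerance for row in correlations for c in row):
            break
        correlations = correlate_rows(correlations)
    first_block = [i for i, c in enumerate(correlations[0]) if c > 0]
    second_block = [i for i, c in enumerate(correlations[0]) if c <= 0]
    return first_block, second_block


# Hypothetical word network: words 0 and 1 link only to words 2 and 3, and vice versa.
toy_adjacency = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [1, 1, 0, 0],
    [1, 1, 0, 0],
]
blocks = concor_split(toy_adjacency)
print(blocks)
```

Words 0 and 1 have identical tie profiles, as do words 2 and 3, so each pair is structurally equivalent and lands in its own block.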

CONCOR result of the semantic network of real news videoclip texts.

CONCOR result of the semantic network of fake news videoclip texts.

As shown in Figure 7, real news videoclips discussed issues such as the correction of the early-voting turnout rate (e.g. voter, suspicion, pre-voting manipulation), the correction of electoral fraud allegations (e.g. classifier, program, equipment), key factors related to the electoral fraud issue (e.g. preliminary vote, manipulation, ballot paper), and political reactions to electoral fraud (e.g. Democratic Party of Korea, United Future Party, politics).

As shown in Figure 8, fake news videoclips addressed criticism of current Korean society (e.g. prosecution, Korea, worry), claims to preserve evidence of electoral fraud (e.g. equipment, method, processing), doubts raised about the voting classifier (e.g. QR code, server, storage), and key factors related to the electoral fraud issue (e.g. preliminary vote, election, ballot paper).

Discussion

Practical implications

To explain the characteristics of fake news propagation, this study utilized SNA and content analysis. The results provide important insights into the structure and characteristics of these networks, which can be useful in curbing the spread of fake news and promoting the dissemination of factual information. The study’s contributions are particularly significant when placed in the context of the 2020 South Korean election. Because of the increased interest in the election at that time, it unfortunately became a prime target for the dissemination of disinformation and fake news. To evaluate the effects and potential implications of misinformation campaigns in future elections, it is essential to gain insight into the features of the networks involved in spreading fake news. Our study points to several practical implications that are important in the battle against misinformation, particularly in the context of electoral fraud cases.

First, the analysis of network statistics highlights the critical need for strategic targeting of high-influence nodes in the dissemination process. Nodes with higher out-degree centrality in the videoclip network played a pivotal role in spreading information. This finding highlights the importance of identifying and focusing efforts on these influential nodes for effective interventions, such as promoting fact-checking initiatives or implementing content moderation measures, to combat the spread of fake news (Budak, Agrawal, and El Abbadi, 2011; Pham et al., 2020; Zhen et al., 2023). According to Zhen et al. (2023), a viable strategy to address this issue may involve identifying influential users within a community who have a track record of disseminating false information. The authors suggest that it would be beneficial for social media platforms and governments to monitor the messages of these accounts and label them as potentially misleading, or even censor them outright. This targeted approach may prove considerably more precise and efficient than indiscriminate interventions (Zhen et al., 2023).

The identification of a substantial volume of misleading content emphasizes the urgency for a robust fact-checking infrastructure (Gradoń et al., 2021). The denser and more active channel network of fake news, coupled with the higher prevalence of direct links, signifies a concerted effort in disseminating false information. This highlights not only the urgency in implementing technical measures (e.g. extended algorithm) to counteract the spread of misinformation (Kumar and Geethakumari, 2014; Pham et al., 2020) but also the need for honest messaging to debunk the spread of misinformation (Schnackenberg and Tomlinson, 2016).

The abundance of direct links in the network indicates that video snippets were widely used to disseminate news across various media categories. However, the higher prevalence of fake news videoclips in this group suggests active and widespread disinformation dissemination. Consequently, establishing dedicated fact-checking teams within media organizations is critical for swiftly identifying and debunking false information. This proactive approach ensures a more accurate public discourse and prevents the rapid spread of misinformation. In addition, the identification of statistically significant differences in comments, likes, and dislikes between real and fake news videos emphasizes the need for robust fact-checking infrastructure to ensure accurate information dissemination (Li and Chang, 2023).

Textual analysis of the fake news videoclips reveals that terms such as “the Republic of Korea,” “Moon Jae In” (i.e. the President of South Korea), “evidence,” “freedom,” “QR code,” “the United States,” and “China” were frequently mentioned. Fake news videoclips addressed criticism of current Korean society (e.g. prosecution, Korea, worry), claims to preserve evidence of electoral fraud (e.g. equipment, method, processing), doubts raised about the voting classifier (e.g. QR code, server, storage), and key factors related to the electoral fraud issue (e.g. preliminary vote, election, ballot paper). This underscores the importance of online media literacy programs that equip individuals with the skills to critically evaluate content and discern between credible information and misleading narratives (Lee and Ramazan, 2021).

We further compared real news and fake news videoclips in terms of author effect (i.e. YouTube channels), message effect (i.e. the content of videoclips uploaded by YouTube channels), and social effects (i.e. online social indicators such as comments, likes, dislikes, and views; see Table 6). We identified statistically significant differences in comments, likes, and dislikes between real and fake news videoclips. Fake news videoclips received more likes, whereas real news videoclips had more dislikes. In terms of message effect, fake news videoclips averaged 10 minutes and 37 seconds longer than real news videoclips.

We also examined electoral fraud video channels for author effects. The number of subscribers was statistically significant in differentiating fake news channels from legitimate news channels. This finding (see Table 6) suggests that the number of subscribers a channel has can act as an important clue in distinguishing between fake and real news channels. Channels with a larger following are more likely to be linked with reliable and trustworthy news sources, while those with fewer subscribers may have a higher tendency to spread false information. Therefore, platforms and authorities should consider subscriber counts as one of the factors when assessing the credibility of social media channels. Channels with a larger audience may be given priority in monitoring and fact-checking efforts, as they are likely to have a wider impact on spreading information. To counteract the dissemination of fake news, it is important for authorities to consider engagement metrics, content characteristics, and channel attributes. This could involve targeted interventions aimed at addressing the specific features of fake news content and channels, along with strategies to promote media literacy and advanced detection methods (Zhang and Ghorbani, 2020). In addition, platforms and content creators should be vigilant in monitoring and addressing the spread of misinformation, taking into account these specific characteristics.

The observation that fake news networks exhibited higher density and active dissemination is a cause for concern. The result indicates that there was a concerted effort to spread fake news through videoclips and channels, aiming to manipulate public perceptions and shape election outcomes. The higher density indicates that there are more individuals involved in the propagation of fake news, which could amplify its reach and influence on public discourse. By identifying the linguistic elements, frequently used words, and content patterns prevalent in fake news videoclips, this study unpacks the strategies employed by those spreading misinformation during the election. This critical analysis of the content helps us to understand how false narratives were constructed and spread. However, it is important to note that the study does not delve into the specific motivations or intentions of the individuals or groups behind the dissemination of fake news.

Theoretical implications

ANT is a sociological framework that seeks to understand social phenomena by examining how human and nonhuman actors (entities that have agency and can influence outcomes) relate, interact, and work together to shape reality (Nawararthne and Storni, 2023). ANT is particularly interested in how these actors come together to form networks, how they negotiate their interests, and how they shape the course of events. One significant criticism of ANT pertains to its assertion of equal agency among all actors in a network (Hadden and Jasny, 2019; Whittle and Spicer, 2008). Despite this, some scholars have defended this assumption of equal agency (Law, 1992). Through SNA, our research provides empirical evidence that supports the notion that certain actors wield significant influence in the dissemination of information within a social network. The identification of statistically significant differences in the in-degree and out-degree centrality between real news and fake news networks contributes to ANT’s understanding of power dynamics and influence within actor networks. The lower values observed in the videoclip network of fake news suggest that certain actors connected to fake news may have limited influence compared with those involved in real news dissemination. This highlights the complexities of power relationships and the ways in which different actors mobilize resources and become visible within the network. Recognizing these influential actors allows individuals and organizations to effectively control the rapid spread of information and, in turn, address any barriers to the flow of information (Kolli and Khajeheian, 2020).

The findings also confirm that ANT is a suitable framework for examining the spread of fake news within the context of electoral processes and affirm the role of technological platforms and algorithms in shaping the characteristics of actor networks (Weikmann and Lecheler, 2023). The interconnectedness and dissemination patterns observed in the videoclip network indicate the role of nonhuman actors, such as social media platforms (YouTube) and recommendation algorithms that are inept at countering the spread of fake news. This aligns with ANT’s perspective on the agency of nonhuman actors and the reciprocal relationship between humans and technologies in network formation (Latour, 2007).

This study addresses a critical gap in the existing ANT literature by focusing on the contemporary issue of fake news propagation within the context of electoral processes. Earlier ANT studies have shown how a claim can be construed as fact or fiction through networks of human and nonhuman interactions (Pantumsinchai, 2018), how fake videos propagate in a fact-checking network (Weikmann and Lecheler, 2023), and how fake news exerts agenda-setting power in the political landscape (Vargo et al., 2018). To the best of the authors’ knowledge, however, this is one of the first studies to investigate the propagation of real and fake news in the South Korean election through the lens of ANT. It enriches the existing body of literature by showcasing the continued relevance and adaptability of ANT as a theoretical framework for understanding complex socio-technical systems.

Limitations and future research

Our analysis reveals the unique characteristics of the videoclip and channel networks in South Korea, emphasizing the dynamic transmission of fake news and its distinctive network patterns. Electoral processes can be better protected from the negative effects of fake news by taking advantage of the knowledge gained from a thorough understanding of these network properties. However, it is important to note that our findings are limited in the depth of their exploration of actor–network dynamics. The analysis primarily focuses on network characteristics and does not delve deeply into the controversies and power dynamics (Whittle and Spicer, 2008) or the existing discourses within the network (Pantumsinchai, 2018). Future research could expand on these dimensions to provide a more comprehensive contribution to ANT’s theoretical framework.

Also, in accordance with the inherent limitations of ANT as an analytical lens (Whittle and Spicer, 2008), this study primarily focused on network characteristics and content evaluation without explicitly examining the impact of fake news on the electoral processes. Thus, the study does not explore the extent to which this fake news influenced voter behavior or electoral outcomes. Further research and real-world evidence, such as qualitative interviews or experiments, are therefore needed to understand how voters act when they encounter fake news.

Another key factor to consider is the role of social media platforms and their algorithms in aiding the spread of fake news during the South Korean election. We did not examine the role of platforms like YouTube in facilitating the distribution of disinformation or the efficiency of content moderation procedures in limiting its spread (Ibrahim et al., 2023). Future research should examine the systemic variables that contribute to the spread of fake news, such as platform policies, algorithms, and user engagement dynamics.

Finally, given the rapid changes occurring in media and technology, future research might look at how emerging systems such as artificial intelligence (AI)-generated content contribute to the creation and propagation of fake news (Gutiérrez, 2023). It is critical to understand the potential risks and obstacles produced by these changes in order to design successful tactics to combat fake news in the future. Although the findings provide insights into the characteristics of real and fake news networks and the common patterns in their content, a more thorough analysis is still necessary. Future research should continue to conduct network analysis across various political and social situations to determine, first, whether the network characteristics and fake news dissemination patterns seen in the 2020 South Korean election are consistent or, second, whether there are variations due to cultural, political, or social circumstances.

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the Korea Foundation, Grant # 1024000-2769, Republic of Korea.