Abstract

The confluence of organizational coaching and artificial intelligence (AI), specifically generative AI, is set to permanently change how employees are developed and supported. This could result in significant benefits to individual and organizational learning, wellness, and performance. The benefits of organizational coaching are well documented through rigorous research, while the efficacy of AI coaching shows early signs of promise. The challenge now is how to optimally leverage AI in organizational coaching for exponential gains. In this article, I explore the current and potential future applications of AI coaching in organizations, what we must be cognizant of, and which concerns are overhyped.

Brief Background

Organizational coaching is a combination of applied psychology and adult learning where a one-on-one individual developmental process is facilitated by a human coach, with the aim of helping people increase their self-awareness and self-efficacy for their own and the organization's benefit. Artificial intelligence (AI) is the simulation of human intelligence using machines with the aim of mimicking human reasoning, problem-solving, decision-making, and even creativity. AI coaching is when these two worlds meet. More specifically, AI coaching is the imitation of human coaching expertise through the application of emulated human coaching knowledge.

Organizational coaching has been around for several decades. From a rather shaky and sometimes notorious past (Sherman & Freas, 2004), coaching is continuously maturing and professionalizing. This positive trend is evident in the exponential growth in credentialled, certified coaches through coaching bodies such as the International Coaching Federation (ICF) and the European Mentoring and Coaching Council (EMCC), and in the growing number of universities that offer postgraduate degrees in coaching, for example, Stellenbosch University, Henley, Oxford Brookes, and New York University, to name a few. An encouraging development in the professionalization of coaching over the last 10 years is the growing body of scientific evidence supporting this field (see, e.g., Athanasopoulou & Dopson, 2018; De Haan & Nilsson, 2023). Organizations are taking coaching seriously, and it has become an integral part of human resource development efforts (Terblanche, 2022).

AI has been around since the 1940s and has seen several peaks and “winters” because of inflated expectations (Haenlein & Kaplan, 2019). The holy grail of AI is the elusive “general AI,” where the AI is as intelligent as, and indistinguishable from, human intelligence. We have not yet reached that point; however, the recent arrival of generative large language models (LLMs) has propelled AI onto center stage. LLMs are large computational models, some consisting of trillions of parameters and trained on billions of data elements (Kasneci et al., 2023). LLMs form the basis of systems such as ChatGPT that can generate remarkably natural human language. LLMs and generative AI in general have created excitement, hype, and doomsday predictions, notably about the ability to generate very realistic fake content (Kalla et al., 2023). However, some scholars see the potential of generative AI to contribute to organizational change, including strategy development (Kanitz et al., 2023) and decision-making (Oswick, 2024).

Despite these concerns, this new class of technology holds immense potential for coaching and other helping professions that are language and conversation-based. While human coaching has a proven track record in individual and organizational development, there are several challenges such as high cost, sporadic availability, and lack of scalability. Generative AI could form the basis of a new form of coaching that could address some of these concerns by offering coaching to millions more people at a fraction of the cost of human coaching. Unsurprisingly, therefore, AI has already made its way into organizational coaching in various forms, causing excitement, confusion, and concern. Let's take a closer look at how AI is currently used in organizational coaching and what future applications could be possible.

Current and Future Applications of AI in Organizational Coaching

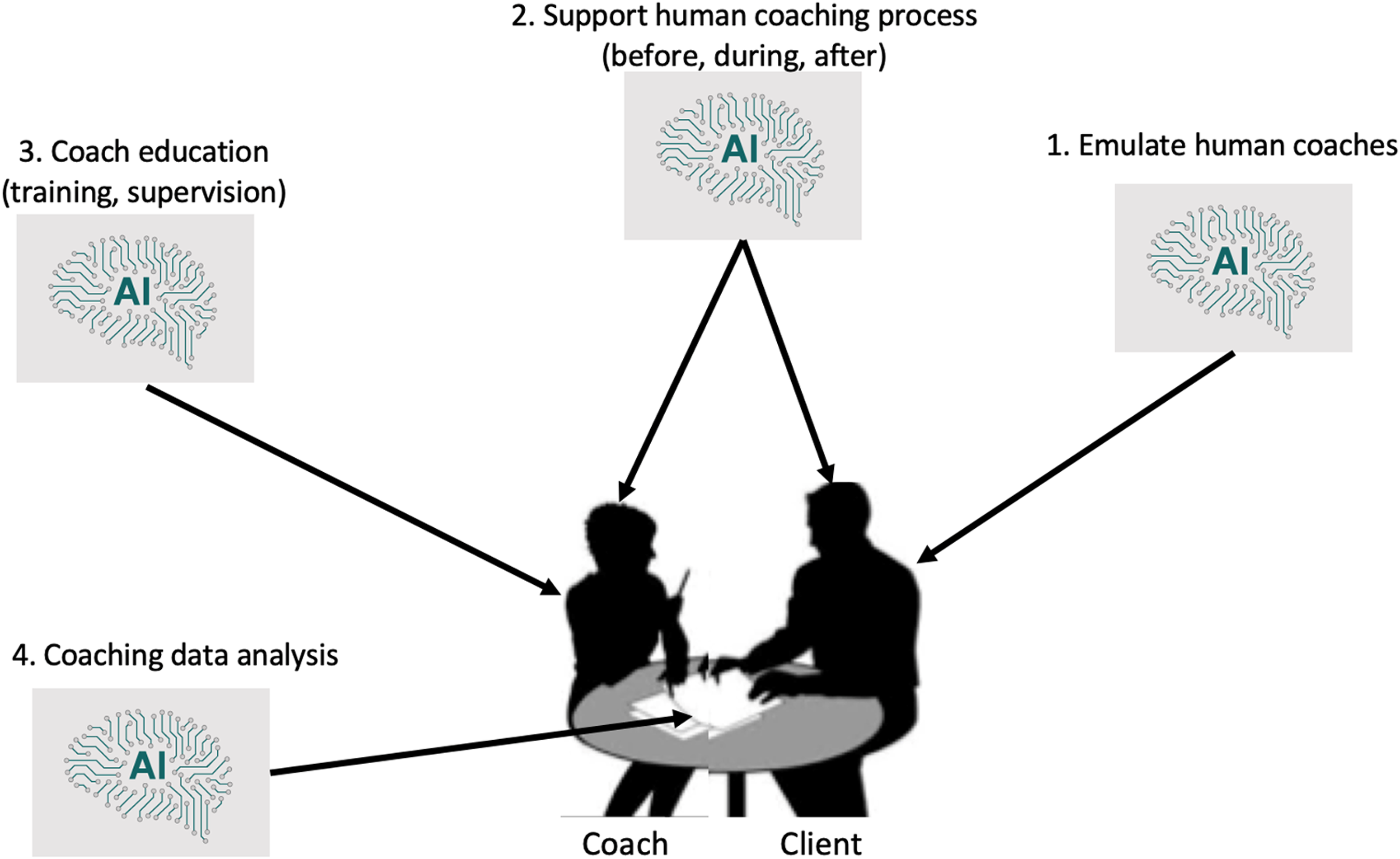

Although it is still early days for AI in coaching, there are already relatively mature AI coaching products available. This current use of AI in coaching also provides insights into potential future applications. I envisage four main areas where AI can play a role as depicted in Figure 1: Coach emulation, coach support, coach education, and coaching data analysis.

Main applications of artificial intelligence (AI) in organizational coaching.

Coach emulation is a euphemism for human coach replacement. In essence, these are AI coaches that can function autonomously and perform human coaching functions without any human assistance. A number of these AI coaches, in the form of chatbots, are currently commercially or freely available, such as Coach Vici (coachvici.com) and Aimy (https://aimy.coachhub.com/login). Early indications from a comparative study of human versus AI coaching (Terblanche et al., 2022) are that these stand-alone AI coaching chatbots are remarkably effective in certain coaching contexts. This application of AI coaching in organizations holds immense potential. Providing even a basic form of coaching to employees could scale the benefits of coaching far beyond its current application, which is largely limited to management levels. There is also a cost benefit, since AI coaches are orders of magnitude cheaper than human coaches and are available 24/7. With AI coach emulators, organizations will have no excuse not to provide a “coach” to everyone in the organization. One of the main advantages of AI coach emulators is that they can collect data with each client interaction. This data can be analyzed to provide valuable insights into employee sentiment, levels of engagement, recurring themes, and team performance. To mitigate confidentiality concerns, data can be reported at an aggregate level. This ability to extract insights from coaching addresses a long-standing concern about the opaqueness of human coaching in organizations.

The second application of AI in organizational coaching is to support human coaches before, during, and after the human coaching intervention. Before coaching starts, many coaches and organizations have a matching, screening, and onboarding process for new coaching interventions. AI coach assistants can be used to automate some of these repetitive tasks, such as administering intake surveys and having exploratory discussions about preferences, goals, and desired coaching outcomes. During human coaching interventions, which often have intervals of several weeks between sessions, AI coach assistants can check in and follow up with clients on what was discussed in the previous human coaching session. Such nudges and ongoing support are important aspects of goal theory and have been shown to promote positive coaching outcomes. Human coaching interventions are time-boxed, typically to between 4 and 10 sessions. When human coaching ends, AI assistants can be used to prolong the coaching effect by acting as a reflective sounding board, reminding clients about their coaching achievements, and helping them to keep working on distal goals. Organizations could therefore get more value for their money when employing AI coach assistants alongside human coaching interventions. A novel application of AI coaching assistants is the notion of triadic coaching. In this future scenario, a client could be coached by both a human and an AI coach at the same time. The human coach would lead the process and make use of AI interpretations of the human–human interactions to direct the process, provide in-the-moment suggestions for coaching questions, and detect client behavioral and cognitive patterns.

The third application of AI is to help coaches with skills development. In fact, coaching skills development goes beyond human coaches and includes teaching coaching skills to managers and employees at all levels of the organization. The notion of “manager as coach” or “leader as coach” has gained widespread traction in recent years as organizations realize the positive impact of coaching skills such as active listening, open-ended questions, reflective practice, and structured feedback (Adele et al., 2022). AI can be employed to train employees in these skills by, for example, analyzing recorded coaching sessions for the coaching competencies demonstrated and keeping track of progress in coaching skills development (Bridgeman & Giraldez-Hayes, 2023). Such applications are already available (see ovida.org).

Finally, AI could be used to analyze and detect patterns in human-only coaching interventions post facto. Many human coaches collect data during coaching sessions, including notes or recordings. Currently, organizations receive very little useful information from coaching interventions, and AI could assist in this regard. With the employees’ permission, human coaching data could be submitted for AI analysis to detect trends and patterns not only within one client intervention, but across the entire organization's coaching interventions. Currently, these patterns are difficult to detect in large-scale human coaching interventions where numerous coaches practice within an organization at the same time. AI could even be used to try to link individual and organizational changes to coaching interventions. AI can find patterns in data that would be impossible for humans to detect due to the large number of variables and permutations in large data sets.

Debunking Concerns About AI Coaching

It is a positive sign that a growing number of research studies on AI coaching are being published. These studies add to our much-needed understanding of how AI coaches should be created, when and where they should be used, and how effective they are, all of which helps to avoid a Wild West in AI coaching. Most of these studies, in good scholarly fashion, also cite potential concerns and pitfalls of AI coaching. However, there is perhaps a lack of creative human thinking about how to overcome these challenges. In this section, I would like to debunk some of the typical concerns about AI coaching and provide possible solutions.

One of the most commonly cited fears raised by AI coaching is that it will replace or displace human coaches. A recent study (Diller et al., 2024), for example, shows that coaches' fear of AI coaching leads to negative opinions and reduced curiosity about it. This is ironic, since another study (Terblanche et al., 2024) showed that clients welcome the use of AI coaching assistants provided that their human coaches endorse it. Coaches need to change their mindsets about AI coaching and embrace it as an inevitable part of the future. Coaches need to up their game and receive proper theoretically- and evidence-based coach training to increase their understanding of the human change process. Coaches should broaden their repertoire of coaching approaches to meet clients where they are, so that they can compete effectively with an AI coach that could analyze mountains of data in an instant to select an appropriate coaching approach. Human coaches must also realize that AI will make them more productive and efficient coaches.

Another often-cited concern with AI coaching is the potential bias in AI training data. This occurs when the data used to train an AI (for example, an LLM) contain cultural, racial, or gender biases. There are two solutions to this problem. Firstly, it can be argued that detecting bias in an AI is easier than detecting it in a human. Most (if not all) human coaches have biases. There is, however, no way an organization can detect such biases when these coaches interact with their employees. A human coach could, for example, be secretly anticapitalistic and influence clients to leave the organization. Similarly, a human coach's own experience of being bullied in the workplace could bias them toward projection or transference if a client brings up a similar situation. With AI coaches, it is much easier to detect and correct such biases by interrogating the AI algorithm. Secondly, the AI bias assumption is based on the fact that commercial LLMs are proprietary and the nature of their training data is not made public. To overcome this lack of transparency, it is possible to use open-source LLMs and customize them with known data sources whose biases can be checked beforehand. We have more control over the underlying data sources that drive AI coaches than we think.

A final concern about AI coaching is data security and privacy. It is true that information shared in a coaching conversation could be sensitive. In human coaching, confidentiality is a key ethical concern that coaches take very seriously. The concern often raised in this regard with AI coaching is that the coaching conversation data may be accessed unlawfully, with dire data breach consequences. One could argue that coaching data is less sensitive than people’s online banking, credit card, and medical history, yet most of us are happy to trust technology with this sensitive data. The technology exists to safeguard electronic data to the extent that the benefits significantly outweigh the risks. Surprisingly, in a study of AI chatbot coaching adoption factors (Terblanche & Kidd, 2022), risk was one of the least concerning aspects, implying that users are not that concerned about their data in an AI coaching context. We can mitigate data security and privacy risks by leveraging technology solutions from industries that have battled these issues for much longer.

What the Future of AI Coaching Needs Now

To harness the incredible potential of AI in coaching for the benefit of individuals and organizations, current stakeholders in coaching need to wake up and join the AI party. Human resources and other custodians of coaching in organizations need to embrace AI coaching and bravely experiment with how to apply it, in which contexts, and with which employees. Coaches need to address their fear of AI coaching, educate themselves on the nature of this new phenomenon, and look for ways to incorporate it into their practice. Coach training providers have a responsibility to include AI coaching as a standard and mandatory part of coach training. These providers should also investigate the AI tools available to help educate their students. Coaching bodies such as the ICF, the EMCC, and others should develop AI coaching standards, guidelines, and best practices to guide stakeholders on the responsible creation and use of AI coaching. AI coach developers must adhere to ethically acceptable coaching practices when they create AI coaches. They need to partner with coaching experts to ensure their creations are indeed in line with good coaching principles.

The bottom line is that there is a deep-seated human need for self-improvement. This need is what originally gave rise to human coaching. The fact that AI now makes it possible to emulate aspects of human coaching implies that its development, application, and growth are inevitable. Organizations making use of coaching and all stakeholders in the coaching fraternity have a responsibility to help guide this natural process.

Conclusion

AI coaching is here whether we like it or not. We need to understand how to responsibly develop and apply AI in coaching so that organizations, employees, coaches and, in fact, all of humanity stand to benefit from large-scale, cost-effective, and ubiquitous coaching support. While there are some risks involved, the potential benefits far outweigh these risks if we keep an open, creative mind and get involved in the evolution of this phenomenon. AI coaching can redefine what it means to develop people and enhance organizational performance. We need to stop being scared and suspicious of AI in organizational coaching and instead embrace this powerful technology to avoid the coaching industry being left behind, or worse, AI coaching being hijacked by people who don’t know what coaching is.

Footnotes

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.