Abstract

Background:

The purpose of this study was to develop and validate a measure of cultural responsiveness that would assist mental health practitioners across a range of disciplines in Australia to work with Indigenous clients.

Aim:

The Cultural Responsiveness Assessment Measure (CRAM) was developed to provide a tool for practitioners and students to evaluate their own culturally responsive practice and professional development.

Method:

Following expert review for face validity, the psychometric properties of the measure were assessed quantitatively from the responses of 400 mental health practitioners.

Results:

Confirmatory Factor Analysis yielded a nine-factor, 36-item instrument that demonstrated strong convergent and discriminant validity as well as test-retest reliability.

Conclusions:

It is anticipated that the CRAM will have utility as both a learning tool and an assessment measure, for mental health practitioners to ensure that services are culturally responsive for Aboriginal and Torres Strait Islander people.

Introduction

Cultural responsiveness is recognised as a core competency for all psychology students and practitioners in Australia, as well as in other countries such as the United States and Canada (American Psychological Association, 2015; Canadian Psychological Association, 2021). The Australian Psychology Accreditation Council (2019) has included cultural responsiveness as a requirement for all levels of psychology qualification and practice. Beyond psychology, the Australian Association of Social Workers (AASW) Practice Standards state that ‘social workers practice respectfully and inclusively with regard to culture and diversity’ (Australian Association of Social Workers, 2023, p. 10). Such requirements place importance on methods for both students and practitioners to monitor and assess their cultural responsiveness for working with Indigenous people. This group has found mainstream health and mental health services culturally unsafe (Kendall & Barnett, 2015) and its members suffer mental illness at higher rates than the wider community with a suicide rate that is double that of the rest of the Australian population (Silburn et al., 2014). Self-assessment measures can improve the accuracy of self-reflection across multifaceted competency domains and enable practitioners to identify and monitor gaps in practice, and facilitate professional development (Rice et al., 2022). To aid self-assessment of the core competency of cultural responsiveness, this research aimed to develop and validate a measure for mental health practitioners. Structured from the elements of cultural responsiveness found within the literature (P. Smith et al., 2021) and client interviews (P. Smith et al., 2023a, 2023b) the Cultural Responsiveness Assessment Measure (CRAM) provides a tool for systematic self-evaluation across nine domains of culturally responsive practice. 
It is anticipated that the CRAM will provide a tool for mental health practitioners in a range of disciplines to work in ways that will help to close the gap in mental health, an area in which mainstream services have been found to be culturally unsafe for Indigenous people (Kendall & Barnett, 2015; McGough et al., 2018).

Cultural responsiveness for practitioners is a recursive process that sets reflexivity at the centre of a dynamic and ongoing movement in which the reflexive self initiates exploration into the domains of learning, experience, and discovery for best outcomes for Indigenous people (P. Smith et al., 2022). A culturally responsive mental health workforce will help to decolonise psychology by setting aside the ethnocentric practices of the dominant culture and giving a place to Indigenous epistemologies and worldviews (Dudgeon & Walker, 2015). As a dynamic and ongoing process, cultural responsiveness requires that individuals continue to monitor their progress and consider areas of strength and deficit. A self-assessment instrument is therefore needed to inform sound professional practice.

A measure, scale, or assessment instrument can capture the depth and breadth of multifaceted constructs beyond the limits of a single item (Boateng et al., 2018). Cultural responsiveness is a complex and multifaceted construct that is an overarching term for multiple discrete domains that are also interrelated (P. Smith et al., 2021). This multifaceted construct requires an assessment instrument that is holistic and all-encompassing, enabling systematic self-evaluation across all domains. Previous measures that have sought to evaluate practitioner capabilities in cross-cultural or multi-cultural contexts have been mostly designed for populations outside of Australia, and have largely focused on knowledge, attitudes and awareness of culture and health rather than practitioner responsiveness or capabilities in working with Indigenous people (West et al., 2018). The CRAM is based on a framework that emerged from a concept analysis of cultural responsiveness found within the literature (P. Smith et al., 2021) and was subsequently developed into a model of reflexivity for teaching and learning (P. Smith et al., 2022). This model, with nine domains centred on the reflexive self, is recursive, non-binary and non-linear, prompting the need for ongoing practitioner self-evaluation. In a further study by the authors (P. Smith et al., 2023a), which sought to test this model with Indigenous former clients of mental health practitioners, knowledge and awareness were clear themes. Further, culturally safe practice that is free of racism and includes practitioner reflexivity was also identified in the responses from participants, indicating that current concepts of cultural responsiveness need to move forward to a more expansive definition and understanding of this construct.

The aim of the present study was to develop a psychometrically sound instrument to assist mental health professionals and students at a practical level, to work more effectively with Indigenous people. The study sets out to develop the CRAM and to assess the psychometric properties of the newly developed measure.

Method

The term Indigenous is used respectfully within this article as a term to identify both Aboriginal and Torres Strait Islander people who are the First Nations people of Australia, and the first author, a Gamilaroi First Nations researcher, was assisted with cultural advice and support from an Aboriginal advisory group that included Gamilaroi Elders.

There are three important steps to creating a scale that is valid and reliable: item development, scale development and scale evaluation (Boateng et al., 2018). Item development sets forth an initial set of items that are deemed to be representative of the construct that is to be measured. Scale development is the process of organising the items into a structure that is user friendly, clear, and logical. Scale evaluation seeks to ensure that the instrument is psychometrically rigorous by testing its validity and reliability and, in respect of construct validity, assuring that it measures constructs as hypothesised (Churchill, 1979).

Item development

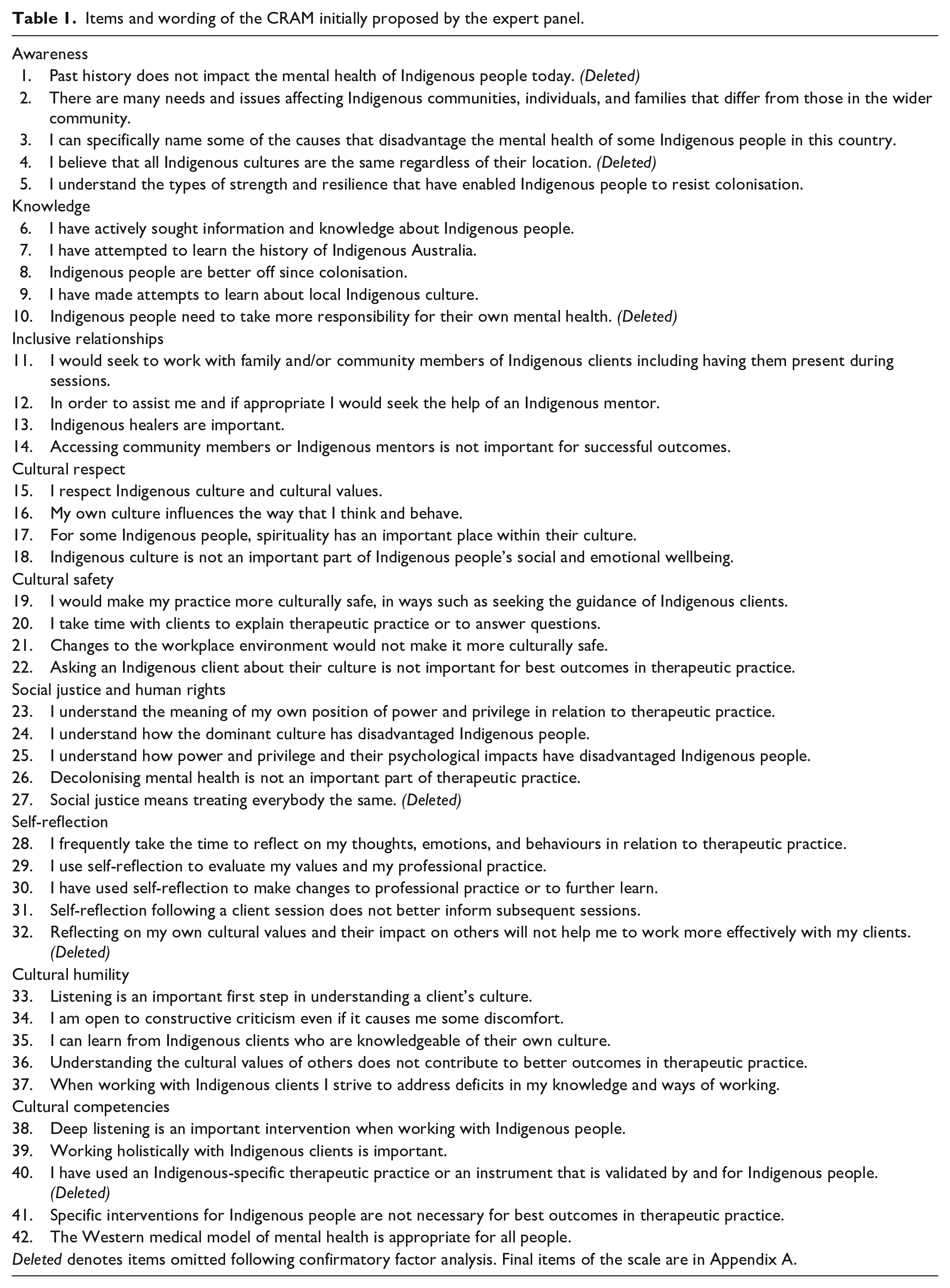

Item development commenced from the conceptual model of cultural responsiveness (P. Smith et al., 2022), which is based on the literature review (P. Smith et al., 2021) and qualitative interviews (P. Smith et al., 2023a, 2023b), using both deductive and inductive methodologies (Morgado et al., 2017). This meant that items were initially deductively generated based on the following themes of cultural responsiveness (P. Smith et al., 2022): Awareness, Knowledge, Inclusive Relationships, Cultural Respect, Cultural Safety, Social Justice/Human Rights, Self-Reflection, Cultural Humility, and Cultural Competencies. To strengthen the validity of the scale, an inductive analysis of data from a qualitative study (P. Smith et al., 2023a) was also considered with a view to discovering any additional emerging themes (Morgado et al., 2017). From these themes a pool of 50 items was generated to elicit responses for practitioners to evaluate their level of cultural responsiveness.

Scale development

To evaluate face validity of the item pool, a panel of academics and practitioners, both Indigenous and non-Indigenous, was consulted to provide feedback on the suitability and appropriateness of the initial 51 items, and whether they would adequately measure changes to dimensional aspects of cultural responsiveness. Responses from the thirteen panel members focused on specific items, and there were no recommendations to remove any of the domains, apart from a single comment that Knowledge and Awareness could be difficult for respondents to differentiate. Recommendations by individual panel members for changes to items and wording were incorporated after consensus by the research team, and this process resulted in a reduced pool of 42 items, with six domains retaining 5 items and three domains retaining 4 items, shown in Table 1. All panel members were consulted a second time to review the latest version of the instrument, which incorporated the panel’s suggested amendments, and to provide opportunity for any additional comments or suggestions. Responses from this second round were integrated following review and consensus by the research team. These responses indicated that the items, fifteen of which would be reverse scored, and the nine domains of the CRAM were deemed to encapsulate the construct of cultural responsiveness. The panel also approved the response format of a 7-point Likert scale, with choices ranging from ‘Strongly disagree’ to ‘Strongly agree’. Seven-point Likert scales possess greater sensitivity and accuracy than scales with fewer points, so the 7-point scale was adopted for this study (Finstad, 2010).
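Reverse scoring on a 7-point Likert scale can be illustrated with a minimal Python sketch. It assumes the conventional rule that a reversed response equals the number of scale points plus one, minus the raw response; the function names and the example responses are hypothetical and are not taken from the CRAM.

```python
def reverse_score(response: int, points: int = 7) -> int:
    """Reverse a Likert response so that 1 maps to `points`, 2 to `points - 1`, and so on."""
    if not 1 <= response <= points:
        raise ValueError(f"response must be between 1 and {points}")
    return points + 1 - response


def score_item(response: int, reverse: bool = False, points: int = 7) -> int:
    """Return the scored value of one item, reversing it when the item is reverse keyed."""
    return reverse_score(response, points) if reverse else response


# Hypothetical respondent: three raw answers, the second item being reverse keyed.
raw_responses = [7, 2, 6]
reverse_flags = [False, True, False]
scored = [score_item(r, flag) for r, flag in zip(raw_responses, reverse_flags)]
print(scored)  # [7, 6, 6]
```

Under this rule the scale midpoint (4) is unchanged by reversal, so a neutral response contributes the same score whichever way an item is keyed.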

Table 1. Items and wording of the CRAM initially proposed by the expert panel.

Scale evaluation

The second aim of this study was to evaluate the psychometric properties of the newly developed CRAM. Following ethics approval from the university’s Human Research Ethics Committee, this second stage of scale development consisted of a pilot study of the 42-item instrument, as an online survey. Participants were able to complete the survey anonymously or opt to provide an email address and complete the survey a second time, 1 month later for assessment of test-retest reliability.

Participants

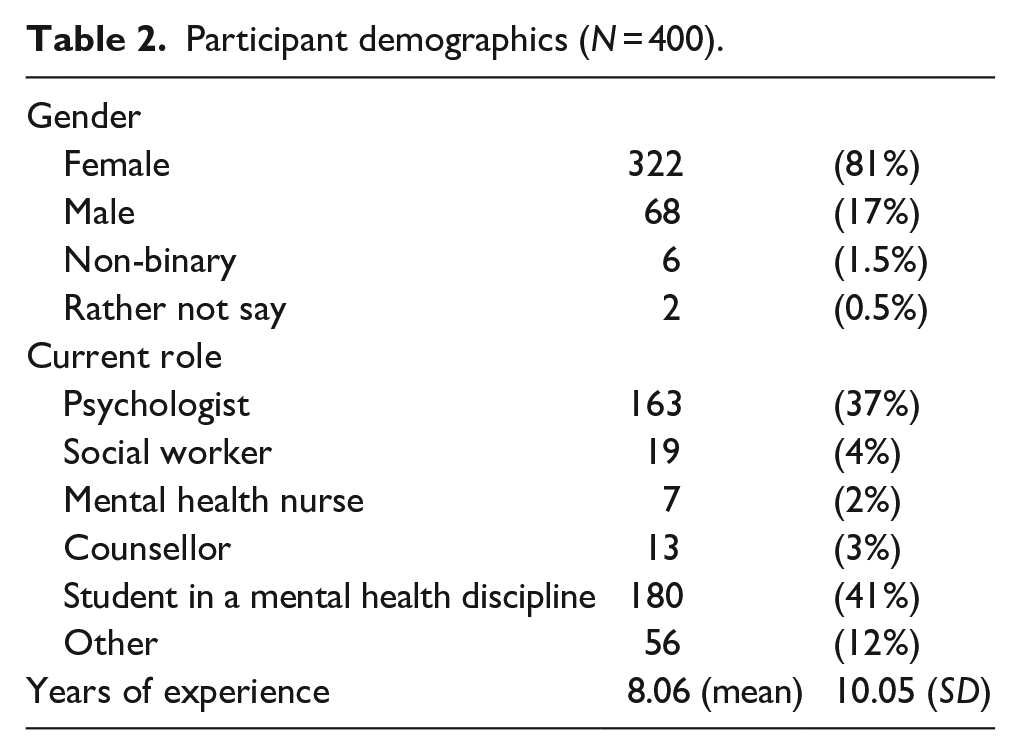

Mental health practitioners and trainees across a variety of disciplines were invited to participate through electronic advertisements and printed flyers, resulting in the participation of 400 mental health practitioners, whose demographics are shown in Table 2.

Table 2. Participant demographics (N = 400).

Procedure

Participants were asked to complete the survey, hosted on Qualtrics™ (2022; Provo, UT), and each participant provided online informed consent prior to undertaking the survey. Participants were also asked to provide their email address if they were willing to be contacted again to complete the survey a second time, one month later, for the purposes of test-retest reliability.

Materials

Cultural Responsiveness Assessment Measure (CRAM)

The psychometric qualities of the newly developed CRAM were the focus of the present study and as such are reported in the results section below.

Cultural Safety Training Questionnaire

As a measure of convergent validity, the survey also asked participants to respond to the items of the Cultural Safety Training Questionnaire (CSTQ; Ryder et al., 2017), which is a 15-item self-report scale. This instrument is a measure of cultural safety and evaluates attitude change across two factors, transformative unlearning and critical thinking, for students and academic staff involved in Aboriginal Health. This instrument was reported as having overall adequate reliability for the two thematic areas with Cronbach’s α > .70 (Boateng et al., 2018) and test-retest reliability with overall intraclass correlation (ICC) = 0.7 (Ryder et al., 2017). Reliability analysis for this sample indicated Cronbach’s α of .82.

Riverside Life Satisfaction Scale

To assess divergent validity, participants completed the Riverside Life Satisfaction Scale (RLSS; Margolis et al., 2019), a 6-item one-factor self-report scale that assesses contentment with life, desire for change and absence or presence of envy. It is considered to possess strong correlation (r = 0.95) with a similar measure, the Satisfaction with Life Scale (Diener et al., 1985), as well as high internal consistency (α = 0.75) and test-retest reliability (Margolis et al., 2019). Reliability for this sample was estimated with Cronbach’s α = .86.

Analysis

Exploration of the dimensions and content of factors is a necessary first step before assessment of validity and reliability can be conducted (Boateng et al., 2018). Confirmatory Factor Analysis (CFA) using Jamovi Project (2022) statistical software explored both the content and the relationships between factors of the CRAM. CFA was selected as there was already an a priori hypothesis of the factor structure, and CFA is used where the purpose is to test theory that has already been established and where factors have been determined (Kääriäinen et al., 2011). CFA seeks to test whether the measures of a construct, in this case cultural responsiveness, are consistent with a priori understandings from literature and qualitative data of that construct (Awang, 2015). The measure in this study was derived from the theoretical model (P. Smith et al., 2022) developed from the published literature review (P. Smith et al., 2021) and qualitative interviews (P. Smith et al., 2023a). Thus, for this study which was hypothesis or theory driven rather than data driven, CFA was deemed to be the best method because it tests the structure of existing theory, unlike inductive methodologies (Kääriäinen et al., 2011; Maruyama, 1998). Exploratory Factor Analysis (EFA) was not deemed satisfactory for this study because it is data driven and it is adopted in research where there is no a priori assumption of the associations of variables (Kääriäinen et al., 2011).

The total sample of 400 survey responses was included in the CFA, and this number is deemed to be a suitable sample size for CFA (Cattell, 1978; Gorsuch, 1983). Based on a rating system convention for sample sizes in factor analysis by Comrey and Lee (1992), this study’s sample would rate good (>300).
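The sample-size rating can be expressed as a simple lookup. The sketch below is illustrative only: it assumes the commonly cited Comrey and Lee bands (50 very poor, 100 poor, 200 fair, 300 good, 500 very good, 1,000 excellent) and treats each band as starting at its threshold, which is a simplifying assumption; the function and constant names are hypothetical.

```python
# Commonly cited Comrey and Lee bands, ordered from the highest threshold down.
# Treating each threshold as inclusive is an assumption of this sketch.
COMREY_LEE_BANDS = [
    (1000, "excellent"),
    (500, "very good"),
    (300, "good"),
    (200, "fair"),
    (100, "poor"),
    (50, "very poor"),
]


def rate_sample_size(n: int) -> str:
    """Return the band label for a factor-analysis sample of size n."""
    for threshold, label in COMREY_LEE_BANDS:
        if n >= threshold:
            return label
    return "below the lowest band"


print(rate_sample_size(400))  # good
```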

Results

Confirmatory factor analysis

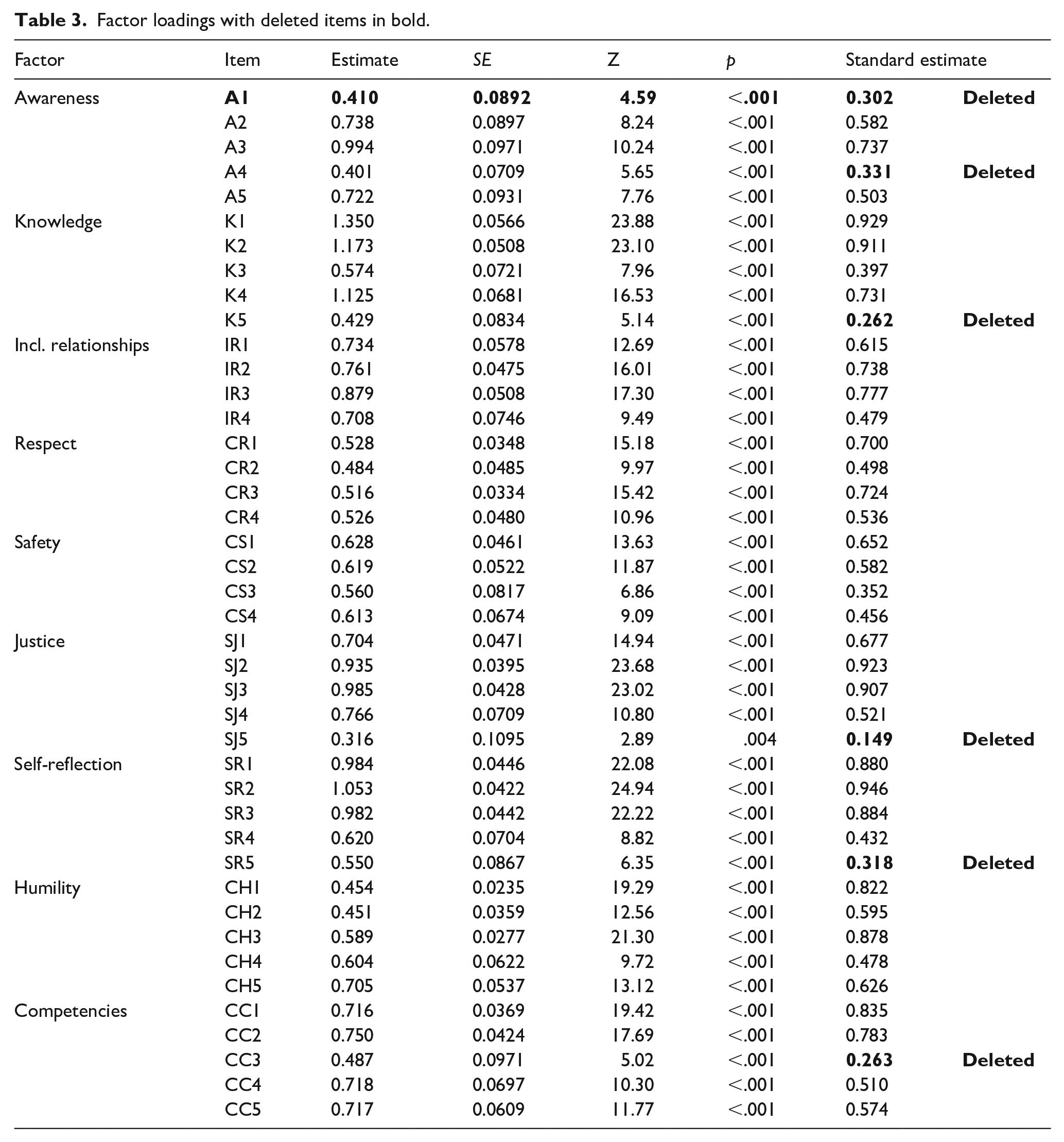

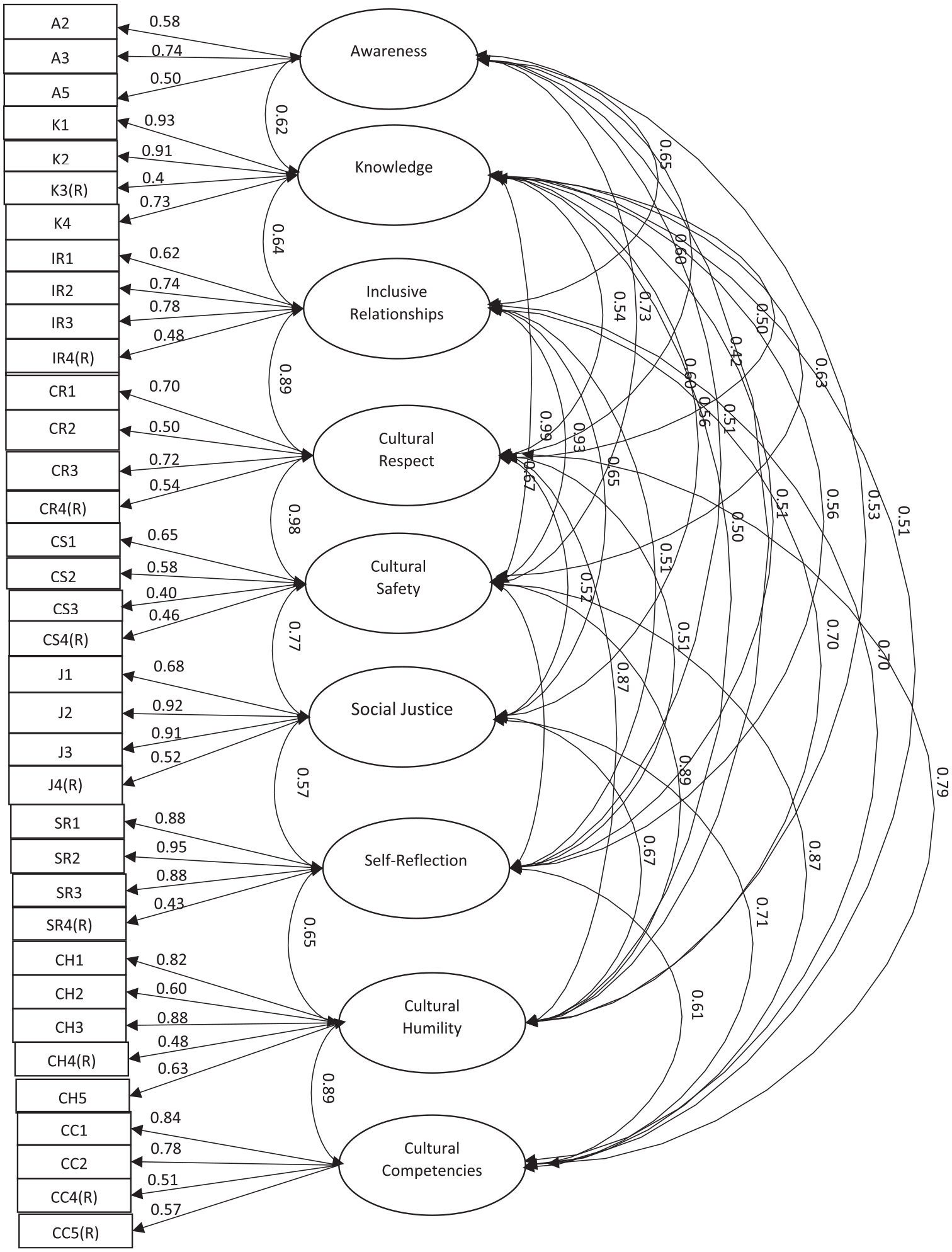

All items demonstrated positive factor loadings in the CFA (Table 3), and items with a standardised factor loading of <0.4 were deleted. This accords with the convention of Tabachnick and Fidell (2000), where a minimum loading of 0.4 accounts for more than 10% of the item’s variance and ‘as a rule of thumb, only variables with loadings of 0.32 and above are interpreted’ (p. 625). This process resulted in reduction of the instrument to 36 items, with all factors possessing at least 3 items. The 36 items, together with the scale instructions, are shown in Appendix A (Figure 1).
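The link between a loading cutoff and item variance follows from squaring the standardised loading. A minimal sketch of the retention rule is given here; the item names and loading values are hypothetical placeholders, not values from Table 3.

```python
def variance_explained(loading: float) -> float:
    """Proportion of an item's variance accounted for by its factor (squared standardised loading)."""
    return loading ** 2


def retain_items(loadings: dict[str, float], cutoff: float = 0.4) -> dict[str, float]:
    """Keep items whose standardised loading is at or above the cutoff."""
    return {item: value for item, value in loadings.items() if value >= cutoff}


# Hypothetical loadings for three items on one factor.
example_loadings = {"item_a": 0.72, "item_b": 0.38, "item_c": 0.41}
print(retain_items(example_loadings))       # item_b falls below the 0.4 cutoff
print(round(variance_explained(0.40), 2))   # 0.16, i.e. 16% of item variance
print(round(variance_explained(0.32), 4))   # 0.1024, i.e. roughly 10%
```

A loading of 0.32 corresponds to roughly 10% of item variance, whereas the 0.4 cutoff used here corresponds to 16%.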

Table 3. Factor loadings with deleted items in bold.

Figure 1. Final model structure of the CRAM. (R) denotes reverse scored items.

With 400 responses in the CFA for a 36-item instrument, a response-to-item ratio of 11 to 1 was achieved, indicating a suitable sample size for validity and stability of factor loadings (Boateng et al., 2018). CFA fit indices, which assist in understanding how well the data support or fit the instrument, returned mixed results. The Root Mean Square Error of Approximation (RMSEA) showed satisfactory Absolute Fit (0.08), meeting the <0.09 threshold suggested by McNeish et al. (2018), but the Comparative Fit Index (CFI) and Tucker-Lewis Index (TLI), as measures of Incremental Fit, were below the acceptance level of >0.90 at 0.79 and 0.77 respectively (Awang, 2015). These results cannot be discounted as irrelevant (McNeish et al., 2018). However, rather than asking whether there is exact fit, it can be more relevant to ask about the degree of lack of fit and what meaning this then has for the instrument, which in this case may not affect its validity (Browne & Cudeck, 1992; McNeish et al., 2018). Marsh et al. (2004) have cautioned against the universal application of cutoff values for fit indices, in that these can be affected by sample size (under approximately 500) and model complexity, such as the nine-factor structure of the CRAM. Nonetheless, the CFA fit indices indicated that the original a priori nine-factor model performed well overall and that a more parsimonious model, with fewer factors, was not indicated, supporting the structure of the cultural responsiveness model.
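For readers less familiar with these indices, the following sketch shows how RMSEA, CFI and TLI are conventionally computed from the chi-square statistics of the fitted model and of the baseline (independence) model. It is not the study's analysis: the chi-square values are not reported in this section, so the inputs below are hypothetical, and the use of N − 1 in the RMSEA denominator is one common convention (some software uses N).

```python
import math


def rmsea(chi2: float, df: int, n: int) -> float:
    """Root Mean Square Error of Approximation, using the N - 1 convention."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


def cfi(chi2_model: float, df_model: int, chi2_base: float, df_base: int) -> float:
    """Comparative Fit Index: model misfit relative to baseline-model misfit."""
    d_model = max(chi2_model - df_model, 0.0)
    d_base = max(chi2_base - df_base, 0.0)
    denominator = max(d_model, d_base)
    return 1.0 if denominator == 0 else 1.0 - d_model / denominator


def tli(chi2_model: float, df_model: int, chi2_base: float, df_base: int) -> float:
    """Tucker-Lewis Index, based on chi-square to degrees-of-freedom ratios."""
    ratio_model = chi2_model / df_model
    ratio_base = chi2_base / df_base
    return (ratio_base - ratio_model) / (ratio_base - 1.0)


# Hypothetical inputs chosen only to make the arithmetic easy to follow.
print(round(rmsea(200.0, 100, 101), 3))         # 0.1
print(round(cfi(200.0, 100, 1100.0, 100), 3))   # 0.9
print(round(tli(200.0, 100, 1100.0, 100), 3))   # 0.9
```

Because CFI and TLI compare the fitted model with a baseline model while RMSEA does not, the two kinds of index can disagree, as they do in the results reported above.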

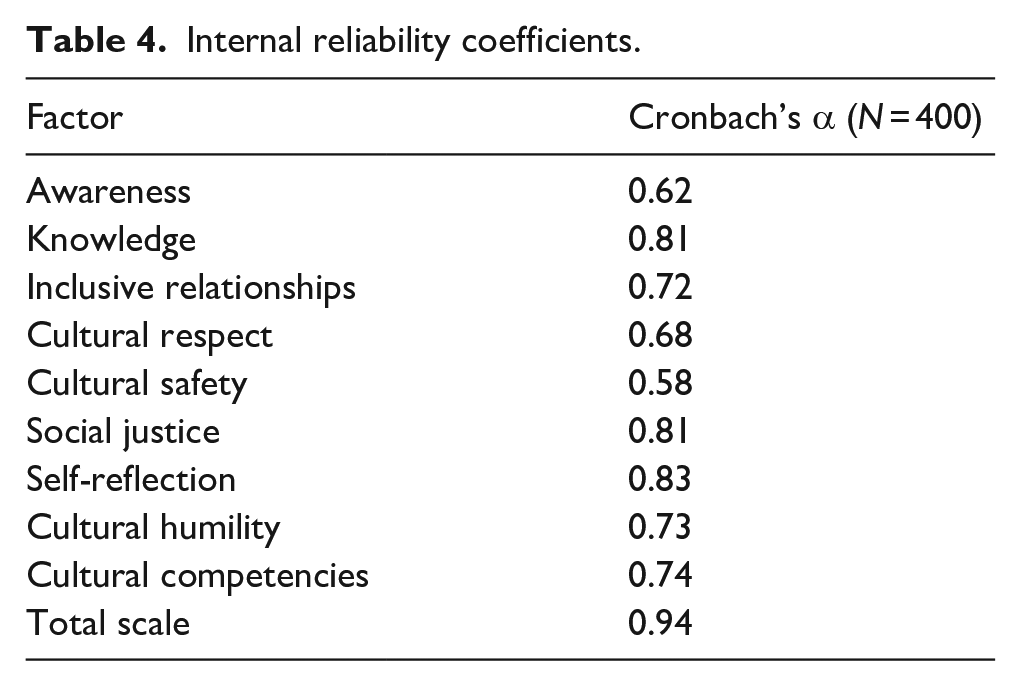

Internal reliability

The 36 items comprising the total CRAM demonstrated strong overall internal reliability (α = .94), and reliability coefficients for all factors are presented in Table 4. The individual factors in general showed acceptable reliability with α ⩾ .7 being acceptable (Boateng et al., 2018) apart from Awareness (α = .62) and Cultural Safety (α = .58) which were retained for reasons outlined in the discussion.
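As a reminder of what these coefficients summarise, Cronbach's α compares the sum of the item variances with the variance of the total score. The sketch below uses only the Python standard library; the response matrix is a small hypothetical example, not study data, and the function name is illustrative.

```python
from statistics import variance


def cronbach_alpha(rows: list[list[float]]) -> float:
    """Cronbach's alpha for a matrix of responses (one row per respondent, one column per item)."""
    k = len(rows[0])
    if k < 2:
        raise ValueError("alpha requires at least two items")
    # Sample variance of each item across respondents.
    item_variances = [variance([row[i] for row in rows]) for i in range(k)]
    # Sample variance of each respondent's total score.
    total_variance = variance([sum(row) for row in rows])
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


# Hypothetical two-item scale answered by four respondents.
responses = [[1, 2], [2, 1], [3, 4], [4, 3]]
print(round(cronbach_alpha(responses), 2))  # 0.75
```

Alpha tends to rise with the number of items, which is one reason a 36-item total score can show higher internal consistency than a subscale of three or four items.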

Table 4. Internal reliability coefficients.

Test-retest reliability

In respect of test-retest reliability, which measures the temporal stability of the instrument, 150 participants responded to the invitation to complete the measure a second time and were emailed a link to complete the survey again. There were 50 responses received at timepoint 2, which is deemed a suitable sample size within the minimal acceptable error rates proposed by McMillan and Hanson (2014). Additionally, a sample of 50 involved in 2 timepoint responses delivers a correlation with power = 90%, p < .05 with the null hypothesis at 0 (Bujang & Baharum, 2017). Generally, a response rate of 32% to electronic surveys is deemed average (Shannon & Bradshaw, 2002), and the response rate to this survey at timepoint 2 was 33%. This is considered a good response rate given that there are often diminishing returns for multiple surveys, attributed to what has been called survey fatigue (Porter et al., 2004). The mean total score on the CRAM at timepoint 1 was 6.46 and at timepoint 2 was 6.44. The Pearson product-moment correlation coefficient was used in this instance as a measure of reliability (Boateng et al., 2018), and a correlation coefficient of 0.5, p < .001, indicated a large effect size for test-retest reliability (Cohen, 1988), suggesting that the scale assesses a stable construct that shows some changeability.
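The test-retest calculation can be sketched as a Pearson correlation between each respondent's scores at the two timepoints, alongside the timepoint 2 response rate. The paired scores below are hypothetical; only the counts of 150 invitations and 50 responses come from the text above.

```python
import math


def pearson_r(x: list[float], y: list[float]) -> float:
    """Pearson product-moment correlation between two equal-length score lists."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical mean CRAM scores for five respondents at two timepoints.
time1 = [6.1, 6.5, 5.8, 6.9, 6.3]
time2 = [6.0, 6.6, 6.1, 6.7, 6.2]
r = pearson_r(time1, time2)
print(f"test-retest r = {r:.2f}")

# Response rate at timepoint 2, using the counts reported in the text.
response_rate = 50 / 150
print(f"response rate = {response_rate:.0%}")  # 33%
```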

Construct validity

For purposes of construct validity, measures of convergent and discriminant or divergent validity were used employing the total sample (N = 400). In terms of convergent validity total scores on the CRAM were compared using the Pearson product-moment correlation with the total scores on the Cultural Safety Training Questionnaire (CSTQ; Ryder et al., 2017), an instrument that was deemed to measure similar constructs as the CRAM. A correlation of 0.805, p < .001 indicated a large effect size (Cohen, 1988).

Evaluation of divergent validity was conducted by comparison with the Riverside Life Satisfaction Scale (Margolis et al., 2019), which measures constructs deemed to be conceptually distinct from those measured by the CRAM. Although both measures investigate personal beliefs and values, the foci of the two instruments differ. A result of 0.195, p < .001, indicated that there was a small correlation between the scores on these two instruments (Cohen, 1988), supporting the position that the CRAM is a novel instrument that is not simply reflecting other measures (Boateng et al., 2018), in this case a measure of a generally positive view of one’s life.
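The effect-size labels applied to these correlations follow Cohen's (1988) conventional benchmarks for r (.10 small, .30 medium, .50 large). A minimal sketch, with a hypothetical function name and each benchmark treated as an inclusive lower bound, applies them to the three coefficients reported in this section:

```python
def cohen_label(r: float) -> str:
    """Label the magnitude of a correlation using Cohen's (1988) benchmarks."""
    size = abs(r)
    if size >= 0.50:
        return "large"
    if size >= 0.30:
        return "medium"
    if size >= 0.10:
        return "small"
    return "negligible"


# Coefficients reported in the text: test-retest, convergent (CSTQ), divergent (RLSS).
for name, value in [("test-retest", 0.5), ("convergent", 0.805), ("divergent", 0.195)]:
    print(name, value, cohen_label(value))
```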

Discussion

The Cultural Responsiveness Assessment Measure (CRAM) has been developed as a tool for mental health practitioners to self-assess across all components of culturally responsive practice when working with Indigenous people. The CRAM is underpinned by a literature review (P. Smith et al., 2021), a conceptual model (P. Smith et al., 2022) and qualitative interviews with Indigenous clients of mental health practitioners (P. Smith et al., 2023a, 2023b). This paper detailed the development and initial psychometric evaluation of the CRAM, in accordance with recognised scale creation guidelines (e.g., Boateng et al., 2018). The initial results of psychometric testing, as presented above, suggest an instrument with strong face validity, strong internal reliability, and test-retest reliability. Item factor loadings were also considered suitable for the nine factors, supporting the a priori model of the instrument. Consideration was given to excluding the Awareness and Cultural Safety factors from the instrument due to low reliability, but these factors were retained because they did not detract from the CRAM’s overall reliability. These two factors also serve as a reminder to mental health professionals, in their self-assessment, that awareness and cultural safety are still important parts of culturally responsive practice, as identified in the related qualitative interview study of Indigenous clients of mental health practitioners (P. Smith et al., 2023a, 2023b). The CFA also indicated that there was some overlap between factors, and future psychometric evaluations may eventually indicate that a reduced factor structure for the CRAM is warranted. However, the current evaluation justified all domains of the conceptual model and retained the nine-factor structure of the CRAM.

The strong test-retest reliability calculated between the timepoint 1 and timepoint 2 data suggests stability of the instrument across two administrations, but unlike what would be found with a higher coefficient, it suggests that the measure also possesses elements of change and fluctuation. This would be expected with an instrument such as this, in that it assesses constructs for some people, particularly trainees, whose results from timepoint 1 to timepoint 2 may be influenced by a learning effect (Hart, 2014).

In relation to convergent validity, the strong correlation between the CSTQ and the CRAM suggests that the two measures partially assess a similar construct. In comparison, a small correlation with the RLSS, which was considered to be discriminant or divergent from the CRAM, supports the position that the two measures deemed to be conceptually diverse are also sufficiently statistically distinct. These two results support the construct validity of the CRAM and provide evidence to support its ability to measure the dimensions of cultural responsiveness.

It was the intention of the authors from the outset that the CRAM would be an instrument that would assist both students and practitioners within mental health to monitor their cultural responsiveness, and it is noted that 41% of survey respondents identified as students and participants were from a range of disciplines and with varied experience levels. It is possible that results from a sample with a higher percentage of experienced practitioners could deliver different results. However, the results of this study indicate both the utility and relevance of the CRAM as a novel instrument across a range of mental health disciplines and for a variety of experience levels. Most similar instruments, including the CSTQ, measure single or a limited number of components of what is now proposed as being definitive of cultural responsiveness. The CRAM provides a holistic conceptualisation of cultural responsiveness, based on the broad and multi-factor model identified in research (e.g., P. Smith et al., 2021, 2023a). This detailed model and measure provides a practical framework for practitioners, by identifying multiple factors and skills that can be specifically targeted in the development of cultural responsiveness. Thus, the CRAM provides a broad base for practitioners to assess relevant skills and attitudes when working with Indigenous peoples. This instrument moves mental health practice beyond competence models and the acquisition of knowledge and toward a more self-reflexive and collaborative approach.

This study was limited to mental health students and practitioners within the Australian context, and studies assessing the applicability of the CRAM to other locations are needed. Future cross-cultural studies may find that some modifications for local culture are necessary. For example, items specifically mentioning an Australian context could be modified to specify other cultural contexts for psychometric evaluation. Additionally, it is hoped that the CRAM could offer a foundation for an instrument to assist those who are involved in other disciplines beyond mental health. The scale and its factor structure may also offer a starting point that will have international applicability for practitioners whose work involves First Nations people. However, use of the CRAM with non-Indigenous minority or marginalised groups, such as refugees, immigrants, and ethnic minorities, would require major structural changes. For example, specific items about Elders, spirituality, colonisation, and traditional healers would need to be carefully reconsidered.

The results of the present study support the proposition that cultural responsiveness is a multi-faceted construct in terms of assessment and skill development. The CRAM total score demonstrated strong internal reliability and construct validity with acceptable test-retest reliability, and support for the nine-factor model of the instrument.

Conclusion

This study outlines the development of an instrument that may assist mental health students and practitioners to support Indigenous clients in ways that are culturally responsive. The CRAM, as a self-report instrument, offers mental health practitioners a way to understand and assess their own areas of strength as well as those areas that need further attention when they are working with Indigenous clients.

Appendix A

Acknowledgements

It is acknowledged that the work of this study took place on the traditional lands of the Gamilaroi and Anaiwan nations of northwest New South Wales, Australia, and respects are paid to Elders past, present and emerging. Acknowledgement is also given to the Aboriginal Advisory Group, which assisted with cultural advice and support.

Author contributions

All authors contributed to the research and all versions of the manuscript. PS conducted all stages of the research and prepared the draft version of the manuscript. KR, NS and KU contributed to all stages of the research and proofread draft manuscripts. Statistical analyses were reviewed by NS.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The first author (PS), a proud Gamilaroi man, is supported by a University of New England Aboriginal and Torres Strait Islander Higher Degree by Research scholarship under the Australian Government Research Training Program (RTP).

Data availability

Data may be made available on reasonable request to the corresponding author.