Abstract

Money laundering (ML) and terrorist financing (TF) are pernicious global challenges. Estimates suggest that ML represents 2 to 4 percent of global GDP, disrupting financial systems, hindering anti-corruption efforts, fuelling terrorism, and destabilizing governments and security institutions globally. Emerging technologies like artificial intelligence (AI) complicate detection and prosecution efforts, enabling anonymity in the movement of money. This article explores AI's role in anti-money laundering (AML) efforts and in countering the financing of terrorism (CFT), focusing on data analysis for financial intelligence units (FIUs) and private sector reporting entities. It addresses AI's uses, benefits, and risks in Canadian and international AML/CFT, explores opportunities and challenges of AI adoption, and proposes next steps for research and practical application. By examining AI's promises and perils in financial intelligence, this article aims to contribute to academic and policy-oriented efforts to understand and leverage AI for effective ML/TF prevention.

Money laundering (ML) and terrorist financing (TF) have been widely recognized as growing global challenges, prompting the international community to reprioritize their prevention. 1 By some United Nations (UN) and International Monetary Fund (IMF) estimates, international money laundering accounts for between 2 and 4 percent of global gross domestic product (GDP). 2 These illicit funds significantly impact the integrity of financial systems and the global economy; thwart efforts to combat graft, corruption, organized crime, and terrorism; and destabilize governments, institutions, and other national and international security and governance bodies.

Canada is not immune to these concerns. Although estimating the total amount of money laundered in Canada is challenging, a 2020 estimate from Criminal Intelligence Service Canada, a collaborative body that brings together the Canadian criminal intelligence community across all levels of government, suggests that between $45 billion and $113 billion is laundered annually across the country, with hundreds of millions of dollars laundered through trade to, and within, Canada each year, and a notable portion attributed to professional money launderers. 3 In the province of British Columbia alone, an expert panel on money laundering estimated that in 2018 over $7 billion in illicit funds were laundered. A range between $800 million and $5.3 billion was attributed to money laundering within the BC real estate market, contributing to an estimated 5 percent increase in housing prices. 4

The present challenge of ML/TF is compounded by emerging technologies like artificial intelligence (AI), which upend traditional responses, regulations, and policies. Criminals and terrorist financiers can exploit AI technologies to expand their operations, communicate anonymously, move funds, and avoid detection. 5 These technological advancements make it difficult to identify, investigate, and prosecute money launderers and terrorist financiers, “who shield a staggering amount of money in their varied illegal transactions” around the globe. 6

In response, national and international approaches used to counter the financing of terrorism (CFT) 7 and other anti-money laundering (AML) systems must better explore the use and utility of leveraging AI. So, too, must financial intelligence units (FIUs)—state-run organizations that receive, process, and analyze information on suspicious financial transactions and transmit intelligence to law enforcement and other government agencies—as well as the global institutions that support FIUs and the private sector entities whose financial transaction reports feed into the intelligence process. As in other domains of geopolitics, statecraft, intelligence, criminality, and warfare, emerging technologies are a double-edged sword: a challenge and an opportunity. Global experts are working to identify appropriate digital tools, determine the optimal timing for their implementation, and introduce and optimize them for AML/CFT purposes, as well as enhance data quality and assess the applications of machine learning and artificial intelligence in recognizing suspicious patterns hidden within it. 8 Sarah Paquet, the director and CEO of Canada's FIU, the Financial Transactions and Reports Analysis Centre of Canada (FINTRAC), and chair of the Egmont Information Exchange Working Group, announced in November 2023 the agency's modernization strategy, dubbed “FINTRAC in real-time,” which essentially leverages technologies, including AI, to expedite the entire AML/CFT process. Doing so, Paquet explains, will yield better identification and assessment of risks, support for Canadian businesses, interpretation and analyses of financial reporting, and financial intelligence for law enforcement and national security agencies “in real-time or as close to it as we can get.” 9

Despite the growing complexity of the relationship between AI and financial crime, there is scant academic research addressing the subject in any great detail. Exceptions tend to focus on a specific aspect of the puzzle, such as detailing the AI tools, techniques, and approaches being used from a technical, scientific, or engineering perspective, 10 or speculating on the legal or ethical ramifications of applying AI to financial systems and crimes. 11 Empirical studies on the topic are nearly non-existent. No overarching guiding research program has been proposed to advance the field of study of AI in AML/CFT, 12 13 and very few studies have approached the topic from an international affairs or geopolitical perspective, notwithstanding that AML and CFT are inherently linked to global security issues. 14 Canada, for its part, has not received any case study or illustrative treatment.

Our article builds on this literature to explore the promises and perils of using AI for financial intelligence towards the detection and prevention of money laundering and terrorist financing in Canada, focusing on data collection and analysis for reporting entities and FIUs. In sections one and two, we provide a contextual understanding of AI and ML/TF, respectively. Next, against a backdrop of the nascent and emerging literature tying AI and ML/TF together, we outline and describe the respective opportunities and challenges of adopting and implementing AI in Canadian and international AML/CFT efforts. Finally, in the article's concluding section, we discuss next steps for thinking about and better exploring AI in AML/CFT from a research, methodological, and practical perspective, providing scholars from a variety of fields with avenues for future scholarship.

An (all-too-brief) introduction of artificial intelligence

John McCarthy helped coin the term “artificial intelligence” in 1955 while organizing the first scholarly conference on the topic. 15 While numerous definitions of AI have emerged since then, McCarthy originally proposed the following meaning: “It is the science and engineering of making intelligent machines, especially intelligent computer programs.” He further explained that AI “is related to the similar task of using computers to understand human intelligence,” but it does not have to “confine itself to methods that are biologically observable.” 16 Conceptually, however, humanity's journey to discover whether machines can reason long predates McCarthy, and pulls together disparate actors, from philosophers to swindlers to authors. 17 For instance, the French philosopher-mathematician René Descartes, as illustrated by Masahiro Morioka, proposed that “human intelligence has a universal capability applicable to any surrounding situations, whereas machine intelligence is no more than a combination of abilities that are applicable only to certain situations that the creator could imagine when they built the automated machine.” 18 In 1770, the charlatan Wolfgang von Kempelen inspired exploration of the theoretical and mechanical possibilities of autonomous machines with his spurious “Mechanical Turk,” a machine he claimed was capable of playing chess against human opponents. 19 Science fiction writers E.M. Forster, Karel Čapek, Isaac Asimov, and Harlan Ellison, among others, dreamt up intelligent humanoid robots and other machines to explore future scenarios involving machine intelligence functioning within society, thereby spurring imaginations across the fields of AI, robotics, and computer science. 20

The contemporary debate surrounding the concept of artificial intelligence was marked by Alan Turing's 1950 foundational paper, “Computing machinery and intelligence.” 21 Turing, widely acknowledged as the “Father of Artificial Intelligence,” asked whether machines could think, and proposed his controversial Turing Test or imitation game to test whether a human interrogator can distinguish between a computer- and human-generated response. 22 In 1966, building on Turing's research on cognitive computing, Joseph Weizenbaum created an early natural language processing program, named ELIZA, which imitated the conversational patterns of users to give the impression that it understood their personal issues, even though it lacked the ability to truly comprehend and carry on a meaningful and seamless conversation. 23 In 1995, Stuart Russell and Peter Norvig published Artificial Intelligence: A Modern Approach, 24 which quickly became a hallmark of contemporary AI research by providing distinct AI goals and distinguishing computer systems based on logic, thinking, and action.

Today, machine learning, as a subfield of AI, focuses on the creation of algorithms and statistical models enabling computer systems to learn from data as well as make predictions or judgements based on data. 25 The science of AI has progressed beyond mere theory to real—and, at times, stunning—applications, in part thanks to concurrent and rapid technical growth and exponential increases in the collection and storage of huge volumes of data. Attendant challenges include risks associated with biased algorithms and/or data, societal disruptions caused by AI systems and flawed algorithmic decisions, privacy issues related to data collection and analysis, and security challenges stemming from fabricated content (e.g., disinformation, deepfakes) and cyberattacks employed by malicious state and nonstate actors. To address these risks effectively, policymakers, researchers, businesses, civil society, and industry stakeholders must collaborate in developing regulations, ethical guidelines, and best practices for responsible AI development and implementation. What follows is a detailed summary of AML and CFT and the promises and pitfalls of applying AI to them.

Exploring and defining money laundering and terrorist financing

Article 3 of the 1988 Convention Against Illicit Traffic in Narcotic Drugs and Psychotropic Substances defines ML as “the conversion or transfer of property, knowing that such property is derived from any offense(s), for the purpose of concealing or disguising the illicit origin of the property or of assisting any person who is involved in such offense(s) to evade the legal consequences of his actions.” 26 This UN convention was the first international treaty to tackle the issue of illicit funds and to require countries to establish money laundering as a criminal offence. The main purpose of ML is to create a series of complex financial transactions to make it exceedingly difficult to trace the origin of the funds.

The money laundering literature highlights the challenge of reaching consensus within the international community regarding a fixed predicate offence, making it exceedingly difficult to establish a uniform definition. As a result, there are several limitations when it comes to comparing money laundering practices across different countries. However, international cooperation is imperative in dismantling criminal operations and combatting illicit financial flows. 27 Money laundering does not correspond to a specific behaviour; instead, it can encompass various forms of predicate crimes, ranging from minor tax evasion to the trafficking of weapons of mass destruction. 28

Money launderers may be involved in simultaneous criminal activities. For example, a drug trafficker may also engage in human and/or weapons trafficking through their networks. Additionally, as Isabel Ana Canhoto explains, “money laundering may involve a varying number of actors, from sole traders to highly sophisticated organized crime groups with their own financial director.” 29 And, while ML is often associated with drug trafficking and perceived as a consequence of criminal activities, it was not always considered a criminal offence: in the United States, before 1986, for example, narcotics dealers retained their US assets and could access their profits upon leaving prison. 30 Back then, the primary concern was the drug trade, with money laundering being viewed as an unfortunate consequence. Today, however, ML is illegal worldwide and encompasses the proceeds of a wide range of other criminal activities. A majority of governments and global economies adhere to the definitions, rules, and regulatory systems established by multilateral organizations like the Financial Action Task Force (FATF), the United Nations Office on Drugs and Crime (UNODC), and the European Union (EU). These organizations define money laundering as “a process that helps criminals to disguise illegal profits without compromising the criminals’ benefits from the proceeds.” 31

The literature on ML usually describes three stages of activity: placement, layering, and integration. During placement, illicit funds are introduced into the financial system. The process typically entails breaking down large sums of money into smaller deposits, which are then placed into various financial institutions. Cash deposits or other means may be used to transform these sums into seemingly legitimate funds. In layering, illicit funds are converted into another form of asset, and complex layers of financial transactions are created, both nationally and internationally, to further obscure the process, trail, source, and ownership of the ill-gotten funds. With integration, illicit funds and laundered proceeds are reinvested into the economy to make them appear legitimate. This can involve purchasing additional assets such as real estate, securities, or luxury goods. ML is an ongoing process, with new “dirty” money regularly flowing into the financial system. 32

Similar stages are also present in terrorist financing schemes, apart from the third stage (integration), which, in the context of terrorist financing, involves the distribution of funds to terrorists and terrorist organizations for the purposes of other criminal offences. Though distinct, ML and TF are often interconnected issues. When law enforcement authorities detect and prevent money laundering, they may disrupt the flow of funds to terrorist activities. In a similar vein to money laundering, addressing terrorist financing is challenging and requires effective AML/CFT systems. Prior to al-Qaeda's 2001 attack on the US (i.e., 9/11), international legal frameworks for combatting terrorist financing were limited and sparingly funded. It was only in 1999 that the UN established an international convention against terrorist financing, known as the International Convention for the Suppression of the Financing of Terrorism. 33 The 9/11 terrorist attack compelled governments and international organizations to redirect and reenergize their focus and resources toward identifying and disrupting suspected terrorist operations, including their sources of financing. For example, in Canada, the scope of the Proceeds of Crime (Money Laundering) Act expanded to include provisions for combatting terrorist financing, leading to the development of the Proceeds of Crime (Money Laundering) and Terrorist Financing Act. At the international level, too, the FATF expanded its mandate to address issues related to the funding of terrorism and terrorist organizations. This expansion resulted in the issuance of the FATF Eight (later expanded to Nine) Special Recommendations against Terrorist Financing designed to help identify and combat various techniques and methods used in terrorist financing. 34

Although heterogeneous definitions of terrorism contribute to variation in state-level terrorist financing policies and legislation, the concept of terrorist financing is generally well established. The FATF has put forth a widely accepted definition of terrorist financing, describing it as involving the provision of funds or financial support to terrorists or terrorist organizations with the intent of furthering their activities. 35 This can include providing funds for terrorist violence, radicalization, recruitment, training, and the acquisition of weapons, among other activities that support terrorism more broadly. Conversely, debates and discussions on a comprehensive definition of terrorism often revolve around the criminalization of terrorist acts, designating these acts as criminal offences, and determining what precisely constitutes terrorism. 36

Terrorist financing usually consists of two sets of financial activities. The first involves providing the funds required for the execution of a terrorist operation, covering expenses such as food, transportation, textbooks, electronic equipment, explosives, weapons, and other materials. 37 The second category includes activities centred on collecting funds to support terrorist operations, training, and propaganda. 38 Unlike money laundering, terrorist financing can involve both legal and illegal sources: activities typically associated with traditional money laundering, such as human and drug trafficking, arson, and smuggling, may be used as methods to raise money for terrorist purposes, but terrorists may also receive funds from legitimate humanitarian and business organizations. Charities, which have historically been a significant source of funding for proscribed groups like al-Qaeda and ISIS, may, often unknowingly, channel their funds into terrorist activities due to corrupt individuals within these organizations. 39 Furthermore, according to a FATF report, crowdfunding has emerged as a method that terrorists and terrorist organizations employ to raise funds for financing their operations. 40 Some religiously-inspired terrorist organizations also use informal financial networks such as hawala 41 and similar service providers to transfer money. Terrorists mix legitimate funds with terrorist funds, making it exceedingly challenging for governments to trace and prevent terrorist financing within the formal financial system. Criminals are also now leveraging emerging technologies, such as artificial intelligence. For instance, scammers exploit those who are less technologically adept by using AI to alter and imitate the voices of a victim's relative, such as a grandchild, or by using AI to personalize phishing emails and messages by analyzing data from multiple sources, including social media, making them more convincing and harder for traditional spam filters to detect. 42

What follows is an assessment of how AI technologies may assist in emerging AML/CFT efforts.

Applying AI to the AML/CFT system

As criminals employ increasingly sophisticated methods to commit financial crimes, reporting entities (including companies subject to AML/CFT regulations) and FIUs should adopt emerging technologies to stay ahead of malicious actors. When it comes to tackling financial crime, traditional AML systems may no longer be effective: many detection systems are outdated and rely on protracted and time-consuming manual processing. In response, several institutions are exploring and implementing AI-powered technology to enhance their AML/CFT operations. In Canada, all the major banks have launched initiatives to integrate AI technologies into their businesses, including in AML/CFT. 43 Similarly, according to McKinsey's Global AI survey, approximately 60 percent of respondents from the financial services sector have adopted at least one AI capability. 44 Artificial intelligence can be applied to the AML/CFT system in two overarching settings: among reporting entities and within FIUs.

Among reporting entities, AI can be applied in several distinct ways. Traditionally, the first line of defence in combatting money laundering and terrorist financing involves knowing and understanding the customer. AI-powered AML/CFT systems can help reporting entities enhance their Know Your Customer (KYC) and Customer Due Diligence (CDD) obligations and processes. Reporting entities are required to undertake CDD and KYC processes when establishing new business relationships and conducting transactions above an established monetary threshold. 45 KYC occurs during customer onboarding and involves collecting information on the new customer, such as their full name, phone number, and ID documents. CDD, an ongoing process that occurs throughout the customer's lifecycle, includes collecting and evaluating customer information, establishing a baseline of typical activity, and assessing risks related to ML and TF. With the rise of online banking, external risks such as hacking, identity theft, and phishing have emerged, threatening the integrity of user accounts. Stolen customer information can be used to acquire unauthorized access and facilitate financial crimes. 46 Therefore, as Rashid Alhajeri and Abdulrahman Alhashem argue, “the best approach to regularly monitor all data is through autonomous AI systems that navigate millions of data points, analyze suspicious transactions, and note the trends and patterns of such transactions that might indicate malicious behavior.” 47 AI-powered AML/CFT systems can swiftly identify suspicious transactions (e.g., involvement of high-risk jurisdictions, atypical transactional activity, large transfers, transactions conducted at different physical locations, insufficient explanation for a source of funds, a series of complicated transfers of funds, or transactions occurring at the same time of day) related to suspected ML and TF activities. 
These systems use automated transaction monitoring to provide real-time detection, triggering immediate alerts and investigations, and can pool transactional data from various sources, including internal databases and payment systems, creating a comprehensive overview of an actor's financial activities. In essence, the power of AI is used to sift through troves of financial-related data in order to capture a snapshot of a steady state against which anomalies—potential indicators of criminal activity—can be identified, tracked, and further investigated. 48 According to some academic technical studies, AI-powered AML/CFT systems can efficiently monitor large volumes of data, reducing the occurrence of false positives from 90 percent to less than 50 percent in CDD and KYC processes, while simultaneously minimizing the traditional (i.e., manual) time required to conduct these reviews. 49 AI can monitor transactions against constantly evolving regulatory requirements in real time, and can be programmed to stay current with the latest regulations, automatically adjusting compliance processes accordingly to ensure that reporting entities remain compliant. 50
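The idea of a steady-state baseline against which anomalies are flagged can be illustrated with a deliberately simple sketch. It is not drawn from any system cited here: it uses a median-based (modified) z-score rather than a trained model, and the function name, the cut-off of 3.5, and the sample amounts are all hypothetical.

```python
from statistics import median

def flag_anomalies(history, new_amounts, threshold=3.5):
    """Flag amounts that sit far from a customer's historical baseline.

    Uses the modified z-score (median and median absolute deviation),
    which is less distorted by outliers than mean and standard deviation.
    The threshold of 3.5 is an illustrative assumption.
    """
    baseline = median(history)
    mad = median([abs(x - baseline) for x in history])
    if mad == 0:
        # No variation in the history: flag anything that differs at all.
        return [a for a in new_amounts if a != baseline]
    return [a for a in new_amounts
            if 0.6745 * abs(a - baseline) / mad > threshold]

# Hypothetical transaction amounts for a single customer.
history = [120.0, 95.0, 110.0, 130.0, 105.0, 115.0, 100.0]
flagged = flag_anomalies(history, [125.0, 9800.0, 90.0])
print(flagged)
```

In practice a baseline would likely cover many more features than the amount alone (counterparties, jurisdictions, timing), but the principle of comparing new activity against typical activity is the same.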

Reporting entities, particularly financial institutions, generate huge volumes of data, posing challenges for data quality management. AI-powered AML/CFT systems can automate data cleaning processes, such as identifying duplicate entries or missing values, thus minimizing human error along the chain. Further, reporting entities can utilize AI-powered AML/CFT systems to verify the authenticity of identity documents provided by customers, examining them for signs of tampering, forgery, or counterfeiting. Additionally, AI can extract key information, in real time, from large volumes of documents, and cross-check this data with known watchlists, internal risk databases, government sanctions, and adverse media. This enables it to assess high-risk customers based on their network connections, transactional history, sources of funds, and geographic locations. For instance, AI can help identify beneficial owners (individuals who directly or indirectly own or control about 25 percent of a corporation or business entity) by combing through the unstructured data, even including handwritten notes, that are used to identify them. 51 Such technology responds to 2022 FATF mandates for stronger global beneficial ownership standards whereby states must ensure authorities have access to accurate and up-to-date information on the true owners of these entities. 52
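Cross-checking customer names against a watchlist is one of the more mechanical steps described above. The following is a minimal sketch, assuming simple string similarity from Python's standard `difflib` module stands in for the far richer matching a production system would use. The function names, the 0.8 cut-off, and the watchlist entries are invented for illustration.

```python
import re
from difflib import SequenceMatcher

def normalize(name):
    """Lowercase, drop punctuation, and collapse whitespace."""
    cleaned = re.sub(r"[^a-z0-9 ]", "", name.lower())
    return " ".join(cleaned.split())

def screen_customer(name, watchlist, min_similarity=0.8):
    """Return (watchlist_entry, similarity) pairs at or above the cut-off."""
    target = normalize(name)
    hits = []
    for entry in watchlist:
        score = SequenceMatcher(None, target, normalize(entry)).ratio()
        if score >= min_similarity:
            hits.append((entry, round(score, 2)))
    # Strongest matches first, so an analyst sees them at the top.
    return sorted(hits, key=lambda hit: hit[1], reverse=True)

# Made-up watchlist entries; not real sanctioned parties.
watchlist = ["John Smith", "Acme Trading Ltd."]
hits = screen_customer("Jon A. Smith", watchlist)
print(hits)
```

A lower cut-off catches more spelling variants at the cost of more false positives, which is the same trade-off the article describes for transaction alerts.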

Financial intelligence units, responsible for collecting, analyzing, and disseminating financial intelligence to relevant authorities and law enforcement agencies, 53 can also benefit from the analytic power provided by AI. According to the FATF and the Egmont Group of Financial Intelligence Units, integrating AI brings benefits such as enhanced efficiency, improved quality, strategic resource allocation, and a more dynamic risk-based approach to identifying criminal behaviour. 54 As with reporting entities, FIUs can utilize AI to improve transaction monitoring systems by processing and analyzing large volumes of financial data in real time. AI algorithms can detect suspicious patterns and behaviours, including but not limited to atypical transactional activity, high-risk activities, and high-risk jurisdictions. They can also analyze complex networks of transactions and relationships between individuals, accounts, and entities, as well as intricate money laundering schemes that may escape detection by traditional rule-based approaches. 55 Rule-based or scenario-based triggers are criteria or conditions established by reporting entities or authorities. These pre-defined criteria (e.g., series of transactions below reporting thresholds to avoid detection, rapid movement of funds between accounts) automatically flag transactions or activities indicative of potential money laundering, terrorist financing, or other financial crimes. The benefits of using AI-based AML/CFT triggers, as opposed to traditional rule-based systems, include less time investigating false positives and the ability to process and analyze more complex data. 56
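A traditional rule-based trigger of the kind described above, a series of transactions kept below a reporting threshold, can be written down directly. This sketch is an assumption-laden illustration: the 10,000 threshold, the 24-hour window, and the function name are hypothetical and are not taken from any regulation or system discussed in this article.

```python
from datetime import datetime, timedelta

REPORTING_THRESHOLD = 10_000   # illustrative value only
WINDOW = timedelta(hours=24)   # illustrative look-back window

def flag_structuring(deposits, threshold=REPORTING_THRESHOLD, window=WINDOW):
    """Return True if two or more sub-threshold deposits within any
    window add up to at least the threshold.

    deposits: list of (timestamp, amount) tuples for one account.
    """
    small = sorted((t, a) for t, a in deposits if a < threshold)
    start = 0
    running = 0.0
    for end, (t_end, amount) in enumerate(small):
        running += amount
        # Drop deposits that have fallen out of the look-back window.
        while t_end - small[start][0] > window:
            running -= small[start][1]
            start += 1
        if end > start and running >= threshold:
            return True
    return False

base = datetime(2024, 1, 1, 9, 0)
same_day = [(base, 4000), (base + timedelta(hours=3), 3500),
            (base + timedelta(hours=8), 3000)]
spread_out = [(base, 4000), (base + timedelta(days=3), 4000),
              (base + timedelta(days=6), 4000)]
print(flag_structuring(same_day), flag_structuring(spread_out))
```

The rule is transparent and easy to audit, but it fires only on the exact pattern it encodes, which is the limitation that motivates the AI-based triggers discussed in this section.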

AI can likewise significantly enhance data cleaning processes within FIUs. For instance, it can standardize and normalize textual data found in suspicious transaction reports, suspicious activity reports, and other reports, making the information more readable; it can also eliminate unnecessary transactions and text and merge or flag duplicate records. It can assist in complex entity resolution, involving the identification and connection of multiple records (e.g., address, bank account, phone number) related to the same real-world entity (whether an individual or an organization) within one, or across many, disparate datasets. 57 According to Quantexa, a firm automating AML processes, “entity resolution is the best way to connect billions of data points spread across multiple systems into a single, accurate view.” 58 The objective is to reduce the need for human intervention in repetitive tasks, thereby enhancing data accuracy and allowing resources to be directed toward higher-priority analytical tasks. 59
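Entity resolution as described above, connecting records that share an address, bank account, or phone number, can be sketched with a union-find structure. This is a minimal illustration under strong assumptions (exact matching after trivial normalization, hypothetical field names and records). It does not represent Quantexa's or any FIU's actual method.

```python
from collections import defaultdict

def resolve_entities(records, keys=("phone", "account", "address")):
    """Group record ids that share any identifier, directly or transitively."""
    parent = {r["id"]: r["id"] for r in records}

    def find(x):
        # Follow parent links to the cluster root, compressing the path.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    first_seen = {}
    for r in records:
        for key in keys:
            value = r.get(key)
            if not value:
                continue  # missing identifiers cannot link records
            token = (key, value.strip().lower())
            if token in first_seen:
                union(first_seen[token], r["id"])
            else:
                first_seen[token] = r["id"]

    clusters = defaultdict(set)
    for r in records:
        clusters[find(r["id"])].add(r["id"])
    return sorted(sorted(c) for c in clusters.values())

# Hypothetical records from different reports.
records = [
    {"id": "r1", "phone": "555-0101", "account": "A1"},
    {"id": "r2", "phone": "555-0101", "account": "B2"},
    {"id": "r3", "account": "B2", "address": "12 Main St"},
    {"id": "r4", "phone": "555-0199", "address": "9 Elm Rd"},
]
entities = resolve_entities(records)
print(entities)
```

Here r1 and r3 share no identifier directly, yet both end up in the same cluster through r2. Real datasets would also need fuzzy matching to cope with misspellings and formatting differences.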

The FATF and Egmont emphasize that AI systems can automate a portion of the risk analysis process by leveraging extensive amounts of unstructured data. AI-based tools, they report, “may enable FIUs to identify emerging risks which do not correspond to already known profiles,” and can likewise help “verify and adjust findings prepared based on traditional risk analysis.” 60 Additionally, using historical data and trends analysis, AI-powered AML/CFT systems can assist intelligence analysts in predicting potential money laundering and/or terrorist financing activities among suspected subjects. By adopting this proactive approach, FIUs can implement preventive measures and disrupt criminal operations before they escalate or become entrenched. The use of AI for AML/CFT can enhance examination and supervision by establishing continuous feedback loops between FIUs and reporting entities. 61 For instance, when a reporting entity submits a suspicious transaction report or an attempted suspicious transaction report, an FIU can immediately leverage AI to assess the quality, validity, and relevance of the data, providing timely and detailed feedback to the reporting entity. In sum, both reporting entities and FIUs can benefit from incorporating AI into their organizations in various ways.

Despite these promises, the FATF and others acknowledge the challenges and risks of fully adopting AI in AML/CFT. 62 Several stand out. First, some financial institutions face significant obstacles and barriers when integrating AI-powered AML/CFT approaches within their legacy banking systems. The latter often manage various back-end activities, including account opening, setup, and transaction processing. 63 These legacy systems function well at what they do, but they were not designed to accommodate AI. The result is that properly integrating machine learning approaches may require a costly and complex retrofit or reinvention of an organization's internal processes.

Additionally, reporting entities often store their data across various silos, including within outdated web-based applications and different departments. The lack of a centralized, comprehensive database can result in significant duplication of efforts in their AML/CFT processes and can hinder the full adoption and implementation of AI-powered approaches. 64 Unfortunately, there is no straightforward or perfect method for implementing a comprehensive AI system. Many reporting entities and FIUs, irrespective of their size, struggle with inconsistent, incomplete, and poor-quality data. It is essential to recognize that with all applications of AI, whatever the domain or field, the intelligence or analysis one generates with machine learning will only be as good as the data against which it is used: garbage in, garbage out. High-quality and accurate data is crucial. Poor data quality can lead AI to unintended outcomes, inaccurate forecasts, and increased false positives, culminating in poor decision-making. 65

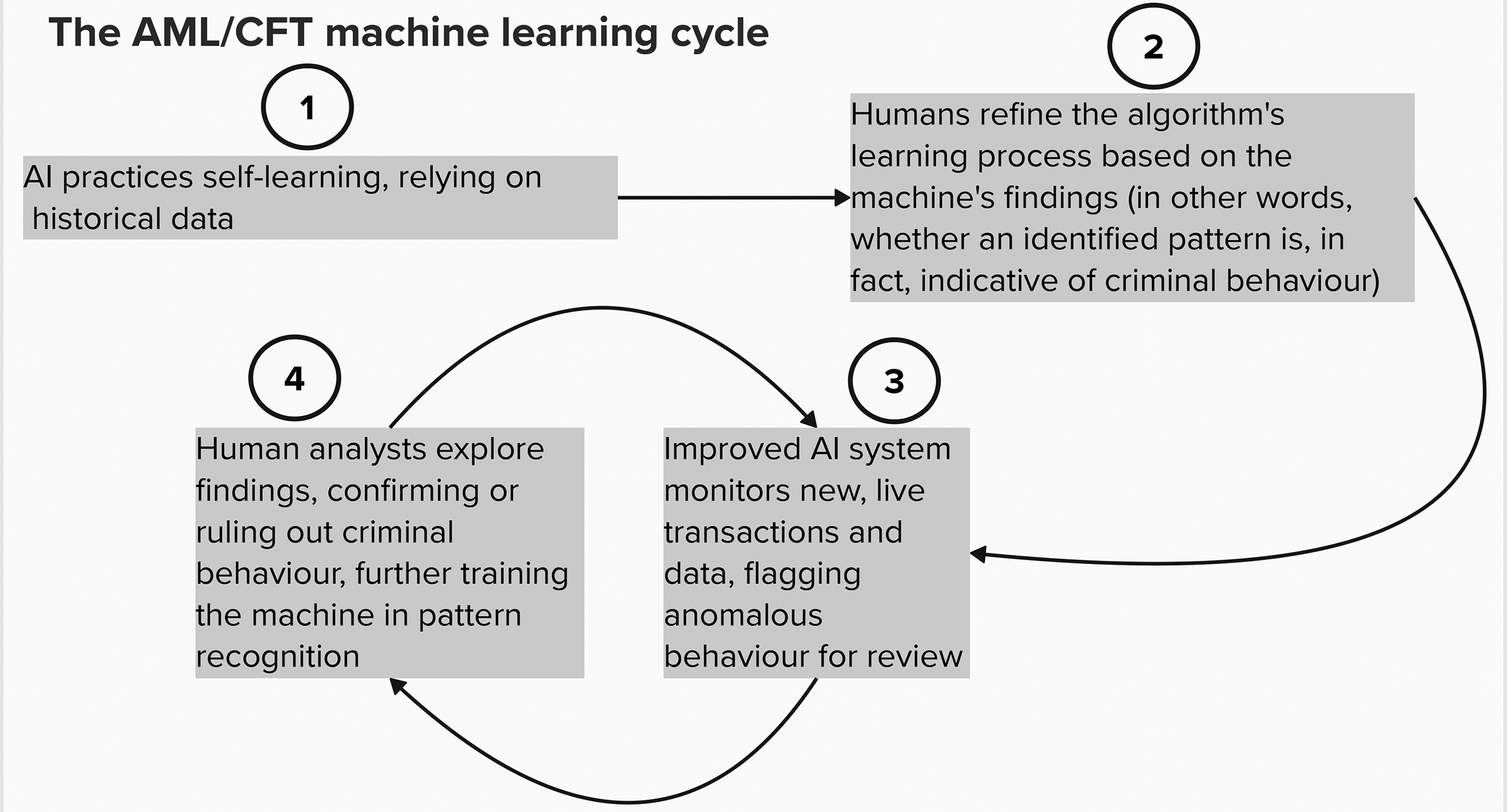

Striking a balance in implementing AI systems may involve autonomously spotting and identifying risks, and then having analysts review data and findings to make a fully-informed decision. Human oversight is critical. The analyst's input is crucial for AI to correctly identify abnormal behaviour as it occurs. Graphic One, below, illustrates how the process might evolve. These systems must recognize both low-risk, typical behaviour and high-risk, atypical behaviour. As analysts input their knowledge into the adaptive learning system, the AI can improve the precision and pertinence of its alerts, minimizing false positives and mitigating the risk of false negatives over time. 66 It is widely accepted among AI experts and observers alike that some level of oversight is necessary when implementing new technology, programs, or policies, regardless of their application or field.

Graphic One 67
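The analyst feedback loop discussed in this section can be made concrete with a small sketch. It assumes, hypothetically, that each past alert carries a risk score and an analyst's verdict, and it picks the highest alert threshold that still catches a required share of the confirmed cases. The function name, the scores, and the recall targets are illustrative and are not the mechanism shown in Graphic One.

```python
def tune_threshold(labelled_alerts, min_recall=0.9):
    """Pick the highest score cut-off that keeps recall at or above min_recall.

    labelled_alerts: list of (risk_score, analyst_confirmed) pairs.
    Returns None when no alert was confirmed by analysts.
    """
    positives = sum(1 for _, confirmed in labelled_alerts if confirmed)
    if positives == 0:
        return None
    best = None
    for threshold in sorted({score for score, _ in labelled_alerts}):
        caught = sum(1 for score, confirmed in labelled_alerts
                     if confirmed and score >= threshold)
        if caught / positives >= min_recall:
            # Recall only falls as the cut-off rises, so the last
            # qualifying value is the highest acceptable threshold.
            best = threshold
    return best

# Hypothetical analyst verdicts on past alerts.
reviewed = [(0.95, True), (0.80, True), (0.70, False), (0.65, True),
            (0.40, False), (0.30, False), (0.20, False)]
print(tune_threshold(reviewed, min_recall=1.0))
```

With these made-up verdicts, raising the cut-off from the lowest score to 0.65 removes three of the four false positives while still surfacing every case the analysts confirmed.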

Yet, even with a degree of built-in human-machine teaming and oversight, the results and findings AI produces may be difficult to understand. AI is sometimes described as a black box, an opaque system that cannot always easily or neatly explain how it identified the patterns in its findings. 68 Regulators are not just interested in the transactions AI has flagged as fraudulent, but also expect to learn the specific attributes and probabilities involved, and how and why these contribute to a suspicion of fraud. 69

Successfully adopting AI—or introducing any new and potentially disruptive technology, for that matter—into the AML/CFT system may likewise be influenced by the organizational culture inherent to the reporting entity or FIU. AI has made many employees, and employers alike, nervous about job displacement, which may slow its adoption. There is also a lack of familiarity with and sufficient training in AI tools. 70 A survey conducted by the Boston Consulting Group sheds light on the sentiments of nearly 13,000 individuals, including executives, middle management, and frontline employees in eighteen countries: the findings suggest that executives and organizational leaders tend to be more optimistic about incorporating AI into their work, while frontline employees express more concerns. 71 To help alleviate concerns and cultivate trust, reporting entities and FIUs should introduce AI gradually, provide essential training and justifications for its use, continually evaluate its worth to the organization, and build their employees’ confidence in using these new systems.

Other issues include global access to AI and technical insufficiencies. Global AI development expertise is concentrated in a few countries, leaving others with limited access to skilled professionals, infrastructure, and technology. As a result, some countries may face challenges in adopting and implementing AI solutions due to financial constraints and limited local expertise, hindering the equitable fight against global financial crimes. Moreover, there are certain gaps that AI may struggle to address, particularly in areas such as hawala and similar service providers, as well as cryptocurrency transactions. These informal and often legitimate financial systems present unique challenges for detecting ML/TF. While AI is constantly evolving and may improve over time, its current limitation in dealing with informal financial systems highlights the need for further research, development, and testing. 72

Moving the study of AI and AML/CFT forward

AI holds the potential to tackle the complexities and emerging challenges involved in detecting, preventing, and deterring a new era of financial crimes. Organized criminal and terrorist organizations are leveraging AI for their laundering needs, suggesting that both private and public sector actors at the forefront of global AML/CFT efforts must consider their options. Doing so may be essential to recalibrating the playing field and maintaining an upper hand to investigate, deter, and dismantle ML/TF schemes that cross jurisdictions and span the globe. But applying the technology to AML/CFT efforts in Canada and abroad is still in the early and exploratory stages. A program of study and practice has yet to be proposed. What follows below are considerations on the research, methodological, empirical, and practical needs of the emerging AI and AML/CFT research program.

From a research perspective, though AML/CFT scholarship has begun to address the nexus between AI (and other emerging technologies) and ML/TF, much theoretical and empirical thought and work still needs to be done. Scholars recognize that AI is rapidly advancing in this and many other domains, and have begun to piece together how both criminal entities and counter-crime organizations and agencies may leverage it to their advantage. Very little rigorous scholarship on the subject exists; much of this knowledge is anecdotal in nature, driven by media investigations, and, at times, informed by corporate interests in pitching and selling technological counter-measures. The literature is also largely atheoretical: it does not yet propose how to conceptualize the ways AI may shift the study of AML/CFT. In other words, there is no theory or framework of AI in AML/CFT. Many leading questions remain under-explored: How does AI shift criminal or terrorist motivation and behaviour, including in, but also beyond, ML/TF? Does AI attract a certain type of criminal or terrorist organization? How do criminal entities build trust with their AI tools? Do “off-the-shelf” AI systems purchased from private corporations, or other AI-for-hire schemes, lower the barrier to entry among criminal and terrorist organizations? What historical lessons can we pull from the way ML/TF have adapted to and adopted other forms of technology in the past (e.g., cryptocurrencies, the metaverse) for thinking about AI applications? 73 Given the increasing prevalence of state-sanctioned cyberattacks and ransomware that cross the traditional barrier between statecraft and international crime, there is a need to investigate and compare how state and non-state adversaries leverage AI for money laundering and terrorist financing purposes: how might different levels of analysis, from the individual to the state, inform the study of criminal use and application of AI to ML/TF?

Relatedly, empirical research on AI and ML/TF is also limited. No detailed Canadian exploration of the subject exists, for instance; nor do comparative national studies from other jurisdictions or comparative case studies across various jurisdictions and regulatory environments, including those within countries and regions, and among alliance partners. Several animating questions present themselves: What lessons might Canada pull from, or offer to, others in addressing ML/TF with AI? What legal, ethical, or governance considerations must Canada and its core allies and partners explore when applying machine learning and AI to local and cross-jurisdictional AML/CFT efforts? What are the different approaches, levels of effectiveness, and challenges faced by different countries and multilateral settings in addressing and countering AI use in ML/TF?

Further, AML/CFT studies like those proposed here will face a significant data access challenge. 74 Empirical insights and observations from relevant stakeholders, such as FIUs, regulatory government agencies, reporting entities, and law enforcement (and, where feasible, criminal and terrorist organizations), on their experiences testing and utilizing AI in countering (or facilitating) ML/TF must be gathered, assessed, and analyzed. But acquiring that data will prove difficult because of existing and persisting trade-offs that afflict most contemporary studies of AML/CFT. Jingguang Han and colleagues summarize the dilemma accordingly: “[T]here are no open-source data for money laundering research, due to the importance of maintaining client privacy. Therefore, data need to be provided by private institutions; this is a difficult task because releasing client data can compromise an institution's reputation and may not comply with data privacy governance.” 75 The conundrum complicates the study of AML/CFT, but perhaps AI can provide unique solutions. What innovative data collection, management, or analysis processes might be developed by AML/CFT studies to circumvent this dilemma when exploring the impact of AI? What proxy data might be collected, or synthetic data created, to facilitate the empirical study of AI in ML/TF? How might unique public-private-academic partnerships or consortia facilitate the necessary transfer of information and data for the rigorous study of contemporary AML/CFT?

Looking further ahead, it is also worth questioning whether AI could introduce new methods of money laundering and terrorist financing that we have not yet imagined. For instance, how might AI intersect with other emerging financial technologies, like blockchain-enabled decentralized autonomous organizations, to create as-yet unanticipated and surprising consequences for AML/CFT? 76 What might fully automated money laundering look like, and how do we prepare ourselves for such a scenario? What methodologies or approaches can we use to explore the future of AI in ML/TF five, or even ten, years from now? Could strategic foresight help scholars and lawmakers alike anticipate the future of automated AML/CFT, as it has with other concerns pertaining to the nexus between technology and security, governance, and diplomacy? 77

Finally, from a practical or policy-oriented perspective, we lack a comprehensive, global legal and regulatory architecture or framework that can properly govern the use of AI across domains and industries, including in AML/CFT. This gap raises ethical concerns and potential legal challenges, such as excessive surveillance, privacy breaches, and the potential for AI algorithms to (re)produce biased or unfair outcomes, particularly for specific demographic groups. 78 To mitigate these risks, stronger legislation, enhanced oversight, and increased awareness within both corporate and government sectors are essential. Governments and organizations must support AI's responsible use and bolster public trust. Countries and international governing bodies, including the United Kingdom, the US, Canada, Japan, Brazil, China, and the EU, are taking disparate approaches to regulating and/or developing standards in AI. Some countries are further ahead than others. The EU's Artificial Intelligence Act (2024), for instance, provides a groundbreaking legislative and legal framework that is much more robust than the soft law initiatives of the FATF and the Organisation for Economic Co-operation and Development (OECD). The Act could become the primary global comprehensive binding legal framework to categorize AI risks and impose transparency requirements. 79 Its objectives include ensuring transparency and explainability in processes and outcomes, incorporating human oversight, addressing cybersecurity concerns, upholding data protection standards, and respecting privacy. 80 In essence, the EU will impose obligations on AI system providers based on the power and risk level associated with their machines. The greater the risk to society, the more stringent the restrictions.

By contrast, the US is still working on its regulatory approach. It has presented plans and statements, such as the Blueprint for an AI Bill of Rights and the Biden Administration's Executive Order on the Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence. The executive order significantly contributes to accountability in the development and deployment of AI across organizations, 81 but, in contrast to the EU AI Act, these initiatives are nonbinding. And US President Donald Trump's second administration has yet to decide how it will approach AI in and beyond AML/CFT. Canada, too, is midstream in developing its own regulatory framework for AI. The Artificial Intelligence and Data Act (AIDA), part of the 2022 Bill C-27 Digital Charter Implementation Act, seeks to regulate the design, development, and deployment of AI systems within the private sector, focused on mitigating the risks associated with “high-impact” AI systems such as those in healthcare and emergency services or those processing biometric data for identification purposes. 82 If passed, Canada's law would require that businesses establish and adhere to new governance mechanisms and policies designed to assess and mitigate the risks associated with their AI systems, providing users with sufficient information to make informed decisions. 83 These proposed amendments are designed to bring AIDA in line with the EU's AI Act.

From a policy research perspective, how do these disparate approaches to regulating AI across the globe influence AI's future use in AML/CFT? How will the potential privacy risks of integrating AI to counter ML/TF be gauged against the potential benefit to society of doing so? What role do individual FIUs and other consortia, including the FATF, have in shaping AI regulations beyond the specifics of their relatively narrow mandates? How will existing members of various multilateral AML/CFT, policing, intelligence, and governance bodies grapple with diverging levels of capability in, and trust of, AI? And finally, is an “allied perspective” on AI in AML/CFT feasible? These and other research questions should help animate the study of AML/CFT for years to come.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Social Sciences and Humanities Research Council of Canada, Government of Canada (grant number 435-2020-0338).

Notes

Author Biographies

Corinne Ibalanky is a senior analyst with the Government of Canada and received her MA (International Affairs) from the Norman Paterson School of International Affairs, Carleton University.

Alex Wilner, PhD, is an associate professor of International Affairs at the Norman Paterson School of International Affairs at Carleton University, chair of Carleton's Cybersecurity Collaborative Specialization, and director of the Infrastructure Protection and International Security program.