Abstract

As robots are required to conduct versatile manipulations in unstructured space environments, traditional planning and control strategies may become cumbersome or even infeasible. To overcome this challenge, this paper presents imitation learning with inherent Lyapunov stability (IL2S), a novel framework for jointly learning a dynamical system and its accompanying Lyapunov function from demonstrations. We represent the robot motion policy as a nonlinear autonomous dynamical system that captures the invariant motion patterns from a handful of teaching examples. Neural networks are leveraged to simultaneously learn the motion model and the parametric control function, so that the generated movements closely follow the demonstrations, ultimately converge to the target, and respond instantly to unanticipated changes. Our approach reproduces trajectories that closely resemble the demonstrations on the handwriting dataset and is demonstrated extensively in real-world experiments, where the robot accomplishes two different satellite manipulation tasks, namely static grasping and dynamic docking.

Introduction

The last decade has witnessed rapid progress in space manipulation, encompassing docking, repair, refueling, assembly and orbit cleanup, thanks to its notable economic potential and strategic benefits.1,2 Accordingly, a variety of pertinent technologies have been advanced rapidly by the aerospace community worldwide.3,4 However, the intricate nature of missions in harsh space environments poses critical challenges for robot planning and control that have yet to be fully addressed.

Motion planning is one of the research hotspots in the space manipulation field. In structured environments, preprogramming strategies are commonly applied. Such methods require experts to design motion trajectories in advance for predefined tasks; the trajectories are then tested on the ground and stored in the on-board controller. When the space robot executes a task in orbit after deployment, it invokes the trajectory command corresponding to the specific task. Using this method, the Shuttle Remote Manipulator System (SRMS) completed on-orbit maintenance and repair missions, such as the inspection of the space shuttle and the repositioning of the payload. 5 To improve adaptation to dynamic environments, visual servoing and other sensor-based guidance were used to adjust offline trajectories. 6 For example, the Orbital Express spacecraft was equipped with different sensory devices to estimate the real-time state in proximity tasks. 7 Teleoperation also plays a significant role in space robot control, as it puts the operator directly into the control loop. The operator can use physical movements to command the robot directly, while perceiving the state of the space robot through vision and force feedback. The National Space Development Agency of Japan accomplished a teleoperation experiment based on predictive virtual environment technology on ETS-VII, realized ground-space bilateral operation with a delay of 7 s, and successfully implemented the slope tracking and socket experiments.8,9 Chen et al. 10 addressed the issue of bilateral teleoperation control for the networked space robotic system subject to multiple physical constraints. Nevertheless, with the growing complexity and versatility of tasks performed by space manipulators, two main issues arise. First, existing control schemes depend heavily on manual programming, which is difficult to use efficiently without a large amount of expertise and experience. Second, traditional approaches provide a limited level of autonomy and adaptation, which falls short of the requirements of complex operations.11–13

Propelled by advances in artificial intelligence and cybernetics, some of the concerns mentioned above could be alleviated from an imitation learning perspective.14–16 Imitation learning, commonly referred to as learning from demonstrations, is one of the most intuitive paradigms for learning a skill model that captures motion patterns from a collection of examples.17–19 It has been a thriving theme for decades, and three main strands stand out in this domain, namely, dynamical-system-based methods which learn the underlying motion dynamics,20–22 probabilistic approaches that aim to harness data variability and model uncertainty,23–26 and, more recently, neural networks which focus on generalization to unseen situations.27–29 Dynamical-system-based methods have been shown to be particularly promising for modeling robot motion, since the resulting motion reacts naturally to disturbances in dynamic settings instead of stubbornly mimicking the teaching examples.30,31 However, designing such a system is not trivial, given that the estimated dynamics must be furnished with a stability certificate that enables the robot to ultimately reach the desired goal from any initial point. As a result, a body of research is devoted to learning dynamical systems from demonstrations. The pioneering work was the Dynamic Movement Primitive (DMP), which estimated time-varying dynamics for a wide spectrum of robotic skills, ranging from walking and pouring to grasping.32–34 The Stable Estimator of Dynamical Systems (SEDS) learned a stable nonlinear autonomous dynamical system from human demonstrations using a Gaussian mixture model and a quadratic Lyapunov function. 20 A principal deficiency of SEDS is that the quadratic stability constraint is too restrictive to effectively mimic highly nonlinear trajectories. To enhance the precision, a more general task-oriented energy function was estimated using constrained optimization from a few demonstrations. 35 Figueroa and Billard 36 presented an alternative approach to derive an asymptotically stable model by a linear parameter varying reformulation. To address the “accuracy versus stability” dilemma, a diffeomorphism, often modeled by a neural network, was introduced to learn the stable dynamical system on a latent space.37–40 Despite extensive advancements, several challenges remain to be resolved: encoding and reproduction of the full robot pose,40,41 adaptation to unexpected changes, 42 and handling of sequential or dynamic tasks,43,44 among others.

Inspired by the preceding discussion, the paper resorts to the capabilities of deep learning and imitation learning to synthesize a motion policy from human demonstrations. Our work follows the line of dynamical-system-based learning from demonstrations. This approach exhibits desirable properties that enable the system to react swiftly to uncertainties in dynamic settings while guaranteeing that the resulting movements converge to the attractor. The core contributions of this study revolve around the following three axes:

(i) A data-driven modular motion planning framework is investigated that encodes the robotic manipulator movements into a nonlinear autonomous dynamical system at the kinematic level, dispensing with tedious hardcoding of robot skills;

(ii) In contrast to previous approaches that enforce stability via imposing predefined control constraints, we provide a more general paradigm to concurrently learn a dynamical system and corresponding Lyapunov function from demonstrations;

(iii) The efficacy of the proposed scheme is evaluated on the handwriting dataset as well as demonstrations collected from a real-world robotic system, demonstrating that our approach can accomplish the satellite manipulation tasks of static grasping and dynamic docking.

The remainder of this article is organized as follows. The second section formulates the dynamical system used to produce robot movements. The third section presents the mechanism for jointly learning the dynamical system and the Lyapunov function from demonstrations. The fourth section provides the results of simulated and real-world robot experiments. Finally, the paper closes with conclusions.

Problem formulation

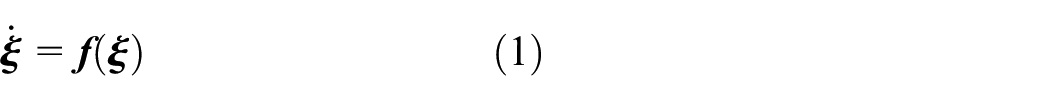

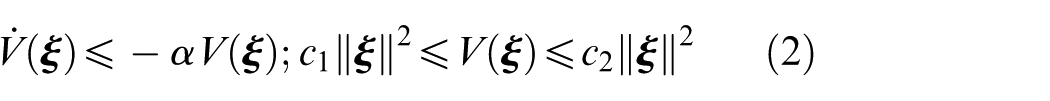

Assume that the motion of the robotic manipulator is characterized by a first-order autonomous ordinary differential equation, that is, time-invariant dynamical system

where

Given a set of demonstrations

The representative approach to achieving the aforementioned objective has two steps: a regression algorithm is first employed to learn an original dynamical system, and an auxiliary control term based on Lyapunov theory is then added to ensure system stability, as exemplified by methods such as NEUM-GPR 26 and CLF-GMR 35 . However, existing approaches still exhibit multiple limitations in terms of model structure, stability guarantees, and generalization capabilities. Most works implicitly assume that robot trajectories adhere to a Gaussian distribution, which to some extent constrains the reproduction accuracy and generalization ability of the learned model. Although data-driven energy functions are utilized to enhance model precision, their essential properties, namely a unique minimum, positive definiteness, and continuous differentiability, are not fully guaranteed. Moreover, previous imitation learning approaches rarely take into consideration the dynamic nature of space manipulation tasks. Therefore, a sound imitation learning algorithm that unifies accuracy and flexibility is needed for these tasks.

In this article, we focus on overcoming the key challenges of leveraging dynamical-system-based imitation learning for space robotic manipulation. This study distinguishes itself from prior research in two key aspects. On the theoretical front, we adopt the neural network with a special structure to simultaneously learn the dynamical system and the Lyapunov function, thereby striking a balance between stability and generalization. On the practical front, we conduct ground-based simulation experiments for satellite static grasping and dynamic docking, substantially expanding the application scope of imitation learning.

Proposed approach

This section presents a new approach to learning a skill model from demonstrations, referred to as imitation learning with inherent Lyapunov stability (IL2S). First, the composite stable dynamical system is derived by solving a constrained optimization problem. Then, harnessing the fitting capacity of neural networks, we concurrently estimate the movement dynamics and the associated Lyapunov function, such that the dynamics is stable by construction and satisfies the Lyapunov criteria. Finally, the exponential stability of the learned dynamical system is rigorously proven.

Stable dynamical-system-based robot motion

To clarify the narration, we begin the section with a lemma that presents an instructional criterion for analyzing the stability of a nonlinear dynamical system in the sequel. 45

for all

The dynamical system

We will learn the nominal system

for all

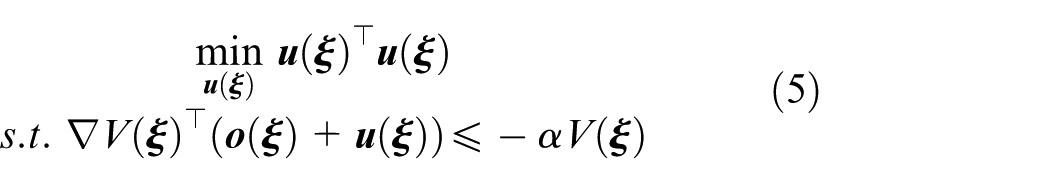

Moreover, we intend to adjust the nominal estimated dynamics model

It is apparent that problem (5) is convex, and its analytic solution can be derived as

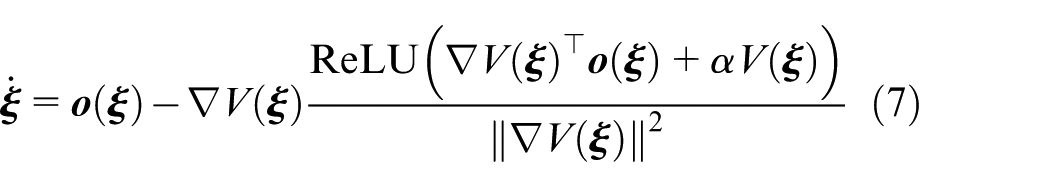

The stable dynamical system is eventually obtained as follows

which implies that, for all

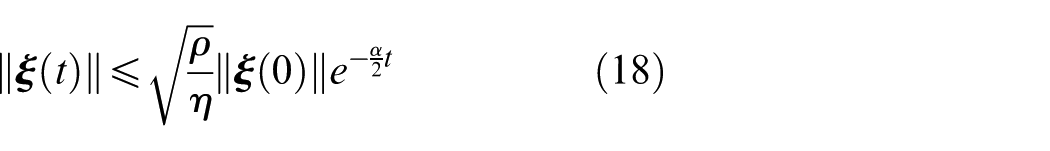

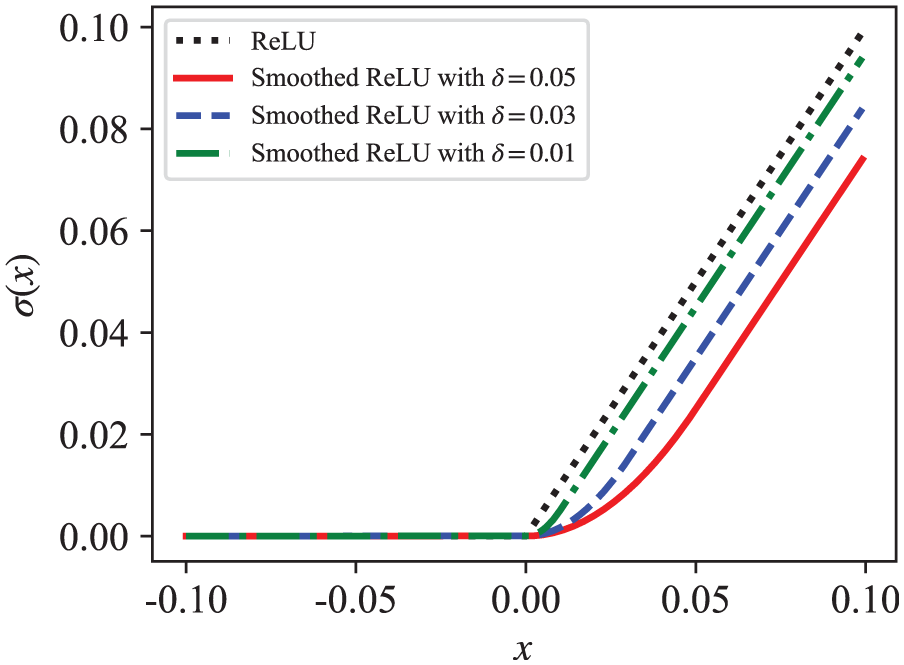

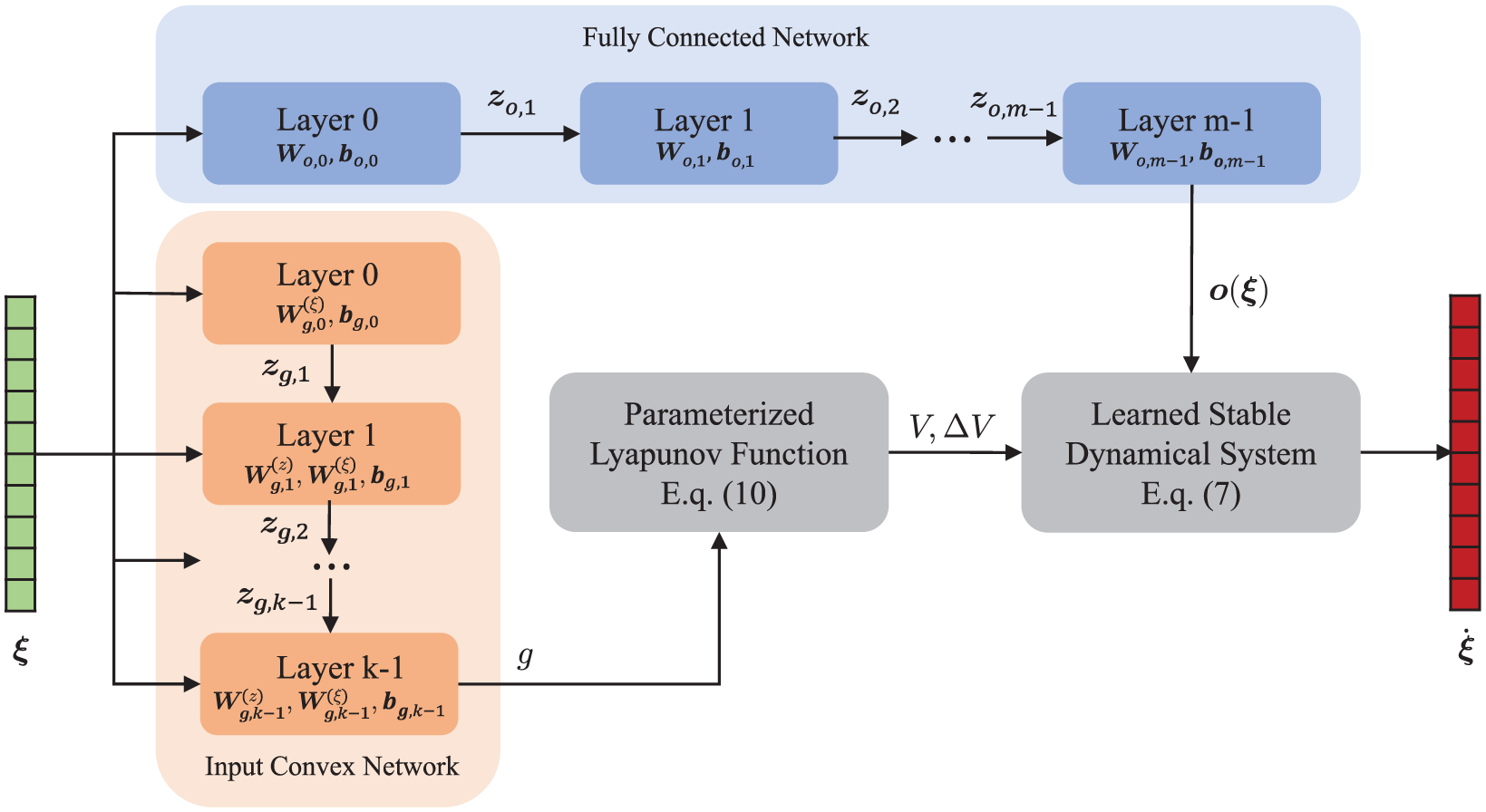
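The closed-form correction can be illustrated with a minimal sketch. Since the source equations are not reproduced here, the sketch assumes the stability condition takes the common form ∇V(x)ᵀf(x) ≤ −αV(x) and that the corrective term is the minimum-norm vector that enforces it; the names `stabilize`, `f_hat`, `V`, `grad_V`, and `alpha`, as well as the toy quadratic V, are hypothetical stand-ins rather than the paper's notation.

```python
import numpy as np

def stabilize(f_hat, V, grad_V, alpha):
    """Wrap nominal dynamics f_hat with a minimum-norm correction so that
    grad_V(x)^T f(x) <= -alpha * V(x) holds (assumed form of the constraint)."""
    def f(x):
        x = np.asarray(x, dtype=float)
        v = f_hat(x)                      # nominal velocity
        g = grad_V(x)                     # gradient of the Lyapunov candidate
        violation = g @ v + alpha * V(x)  # > 0 means the condition is violated
        gg = g @ g
        if violation <= 0.0 or gg == 0.0:
            return v                      # nominal dynamics already satisfy it
        # Project onto the half-space {f : g^T f <= -alpha * V(x)}
        return v - g * (violation / gg)
    return f

# Toy example: quadratic V and an unstable spiral as the nominal system.
V = lambda x: 0.5 * float(x @ x)
grad_V = lambda x: x
f_hat = lambda x: np.array([[0.5, -1.0], [1.0, 0.5]]) @ x
f = stabilize(f_hat, V, grad_V, alpha=1.0)

x = np.array([1.0, 0.0])
decrease = grad_V(x) @ f(x) + 1.0 * V(x)  # constraint is active, so this is ~0
```

The correction vanishes wherever the nominal dynamics already decrease V fast enough, which is why such a term is only active on part of the state space.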

The schematic architecture of our proposed learning and control framework is illustrated in Figure 1, comprising two distinct operational modes: online execution (green/blue modules) and offline training (orange modules). In the offline learning phase, we employ kinesthetic demonstrations

The schematic diagram of the designed learning and control scheme.

Joint learning of dynamics and Lyapunov function from demonstrations

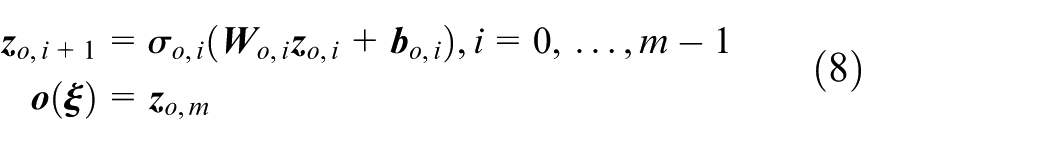

This subsection delves into the problem of how to determine the original dynamics

The

where

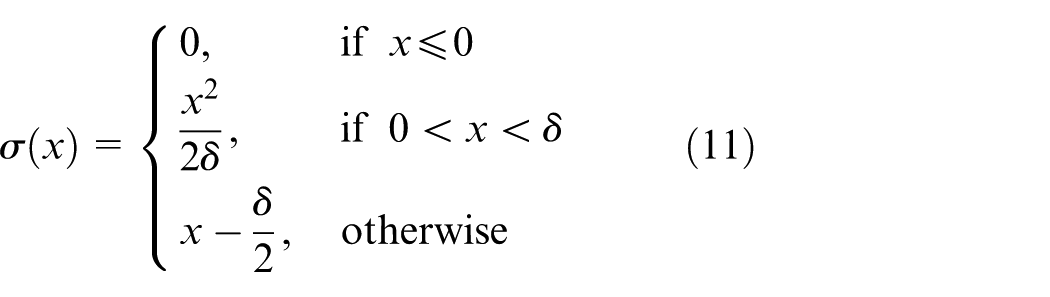

While the previous narration may appear to make the issue of learning a stable dynamical system apparent, the subtlety of this method is rooted in the parameterization of the control function

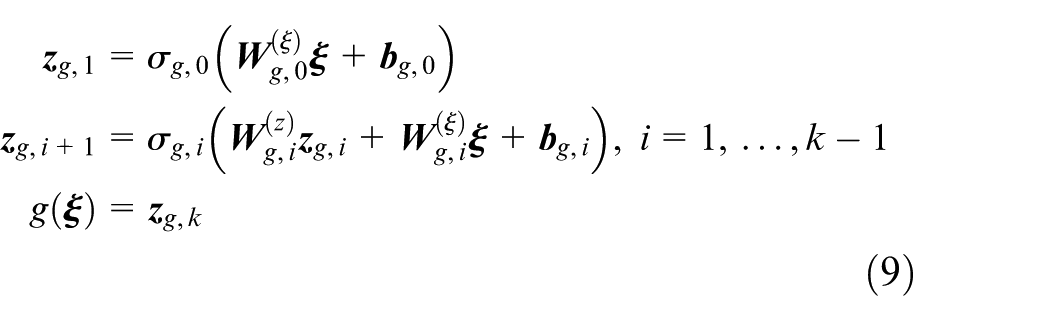

Firstly, as mentioned previously,

where

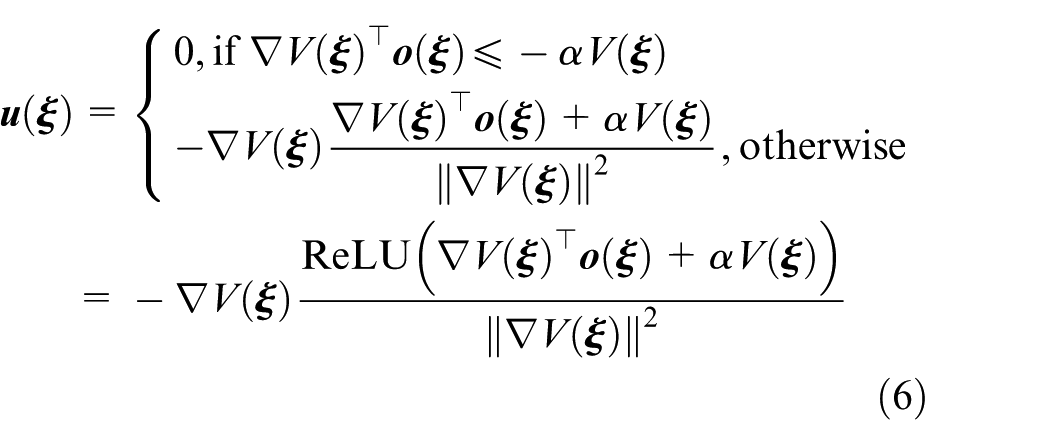

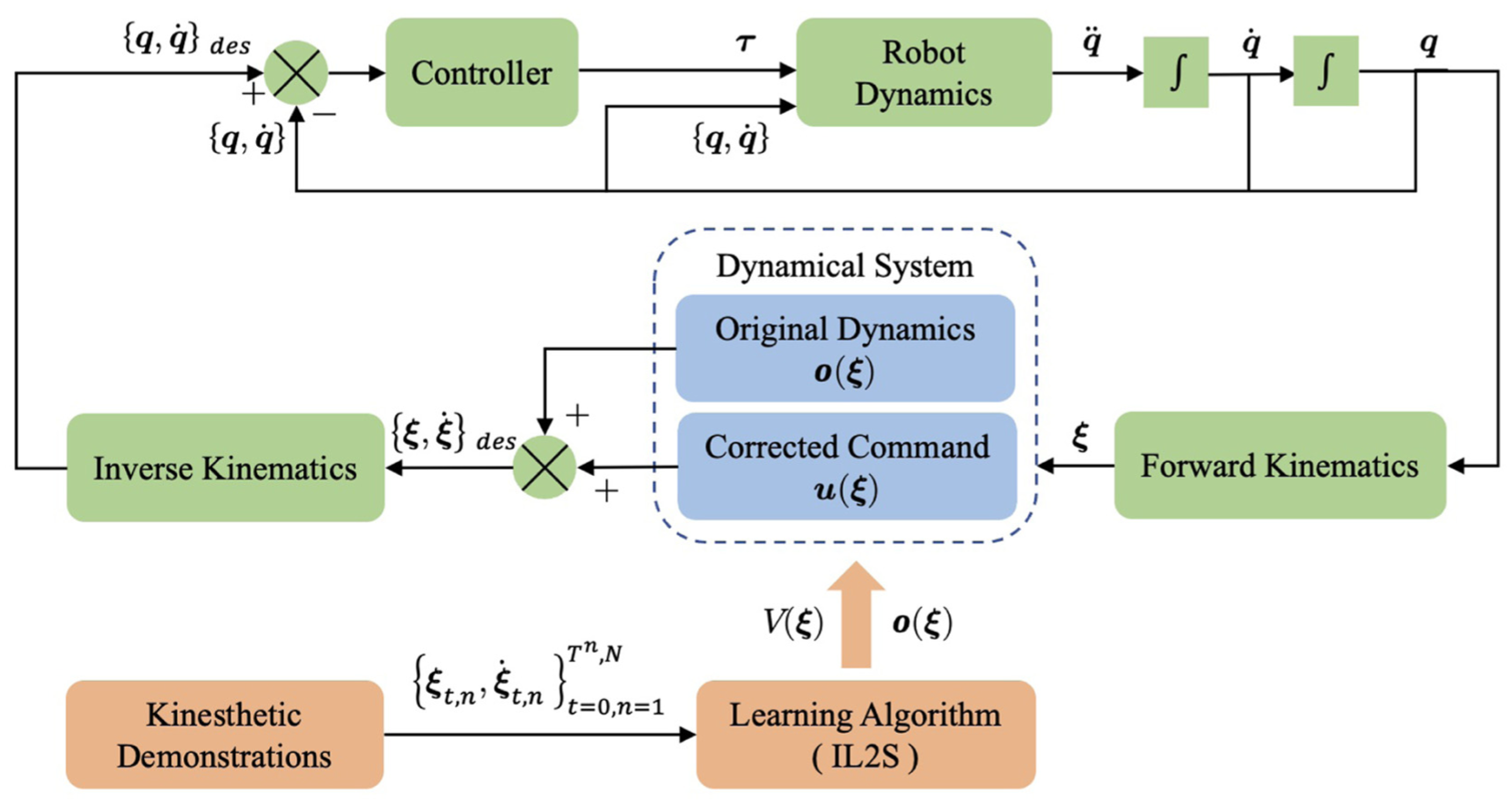

It could be found that a remarkable characteristic of ICNN lies in the incorporation of “passthrough” layers which establish a direct connection between the input

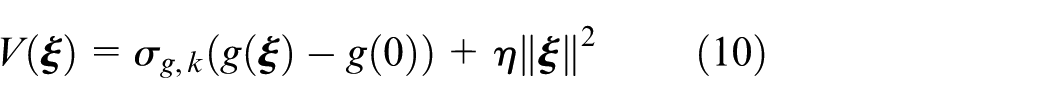

Furthermore, given that the Lyapunov function

where

Last but not least, the control Lyapunov function

where

Smoothed ReLU activation.

Figure 3 illustrates the comprehensive structure for joint learning of the dynamical system and the Lyapunov function from demonstrations. This framework addresses two critical aspects of stable imitation learning:

1) Original dynamics learning: the FCNN is employed to approximate the original dynamical system. This network learns the intrinsic characteristics of the demonstration trajectories by minimizing reproduction error through supervised training. Crucially, the network captures the desired motion primitives while preserving the smoothness and feasibility constraints inherent to the task.

2) Stability-guaranteeing Lyapunov learning: parallel to dynamics learning, the ICNN is utilized to synthesize a valid Lyapunov function. Specifically, the ICNN’s enforced convexity with respect to the system state ensures the learned function satisfies the fundamental Lyapunov conditions. This enables the derivation of a corrective control term that actively modulates the nominal dynamics.

The synergistic operation of these components yields a provably stable control policy. While the FCNN generates target-conforming motions, the ICNN-derived correction term guarantees global asymptotic stability by constraining the system evolution to progressively decreasing level sets of the Lyapunov function. This joint learning approach simultaneously achieves performance optimization (handled by the FCNN) and stability assurance (enforced by the ICNN), thereby mitigating limitations inherent in end-to-end learning methods that lack rigorous stability guarantees.
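The convexity argument can be made concrete with a small input-convex network. This is a sketch under stated assumptions, not the authors' architecture: layer sizes, random weights, the smoothing width `d`, the regularizer `eps`, and the construction V(x) = σ(g(x) − g(0)) + ε‖x‖² are illustrative choices, and `TinyICNN` is a hypothetical name. Convexity in x follows from non-negative weights on the hidden path together with a convex, non-decreasing activation.

```python
import numpy as np

def smooth_relu(s, d=0.1):
    """Convex, non-decreasing, continuously differentiable ReLU variant."""
    s = np.asarray(s, dtype=float)
    return np.where(s <= 0.0, 0.0,
                    np.where(s < d, s**2 / (2.0 * d), s - d / 2.0))

class TinyICNN:
    """Input-convex network: z_{i+1} = act(U_i z_i + W_i x + b_i), U_i >= 0."""
    def __init__(self, dim, hidden=(16, 16), seed=0):
        rng = np.random.default_rng(seed)
        sizes = list(hidden) + [1]
        # Passthrough weights from the input x to every layer (unconstrained).
        self.W = [rng.normal(scale=0.5, size=(h, dim)) for h in sizes]
        self.b = [rng.normal(scale=0.1, size=h) for h in sizes]
        # Hidden-to-hidden weights are kept non-negative to preserve convexity.
        self.U = [np.abs(rng.normal(scale=0.5, size=(sizes[i + 1], sizes[i])))
                  for i in range(len(sizes) - 1)]

    def g(self, x):
        x = np.asarray(x, dtype=float)
        z = smooth_relu(self.W[0] @ x + self.b[0])
        for U, W, b in zip(self.U, self.W[1:], self.b[1:]):
            z = smooth_relu(U @ z + W @ x + b)
        return float(z[0])

    def V(self, x, eps=1e-2):
        """Candidate Lyapunov function: zero at the origin, positive elsewhere."""
        x = np.asarray(x, dtype=float)
        shifted = self.g(x) - self.g(np.zeros_like(x))
        return float(smooth_relu(shifted)) + eps * float(x @ x)

net = TinyICNN(dim=2)
v_origin = net.V(np.zeros(2))            # exactly 0 by construction
v_away = net.V(np.array([0.3, -0.2]))    # strictly positive
```

In practice the weights would be trained jointly with the dynamics network; the random initialization here only serves to check the structural properties.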

The schematic that illustrates the joint learning of stable dynamics and Lyapunov function from demonstrations.

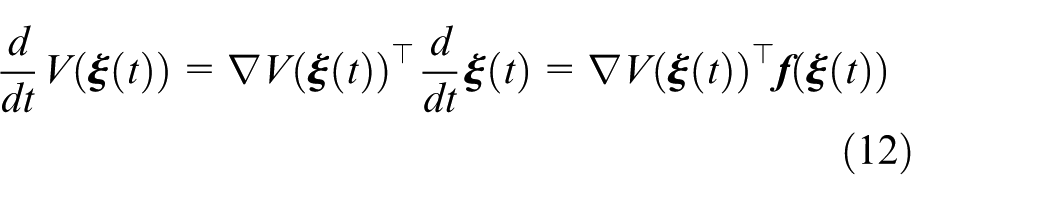

Stability analysis

In the following, we analyze the stability of the constructed model rigorously via Lyapunov theory. The inherent stability of the learned dynamics is declared in Theorem 1.

Considering (4), we obtain

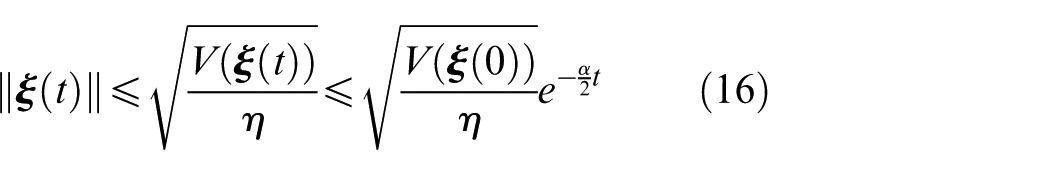

Integrating this inequality gives the upper bound as

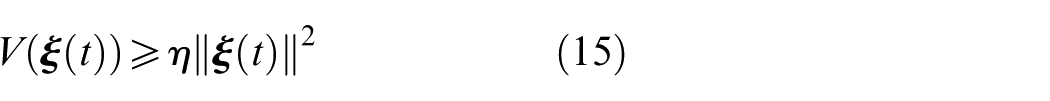

From the positive definite structure of

Combining (14) and (15) yields

Then, given the fact that the activation function

where

Taking (16) and (17) together, we have

Therefore, the dynamical system exponentially converges to the equilibrium. Theorem 1 is proven.
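The chain of inequalities behind this conclusion can be summarized as follows. This is a sketch of the standard argument, assuming the decrease condition has the form V̇ ≤ −αV and that V admits quadratic bounds on the region of interest; the symbols α, c₁, and c₂ are assumed here for illustration and may differ from the paper's notation.

```latex
\dot V\bigl(x(t)\bigr) \le -\alpha V\bigl(x(t)\bigr)
\;\Longrightarrow\;
\frac{d}{dt}\Bigl(e^{\alpha t}\, V\bigl(x(t)\bigr)\Bigr) \le 0
\;\Longrightarrow\;
V\bigl(x(t)\bigr) \le V\bigl(x(0)\bigr)\, e^{-\alpha t},
\qquad
c_1 \lVert x \rVert^2 \le V(x) \le c_2 \lVert x \rVert^2
\;\Longrightarrow\;
\lVert x(t) \rVert \le \sqrt{\tfrac{c_2}{c_1}}\; \lVert x(0) \rVert\, e^{-\alpha t / 2}.
```

Under these assumptions the state norm decays at rate α/2, half the decay rate of V itself.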

Experiments and results

This section demonstrates the capabilities of our approach through a series of experiments. We first validate the performance of the learned dynamical system in terms of stability and reproduction accuracy using the handwriting dataset. Subsequently, we conduct two satellite manipulation tasks: static grasping and dynamic docking.

Simulated experiments

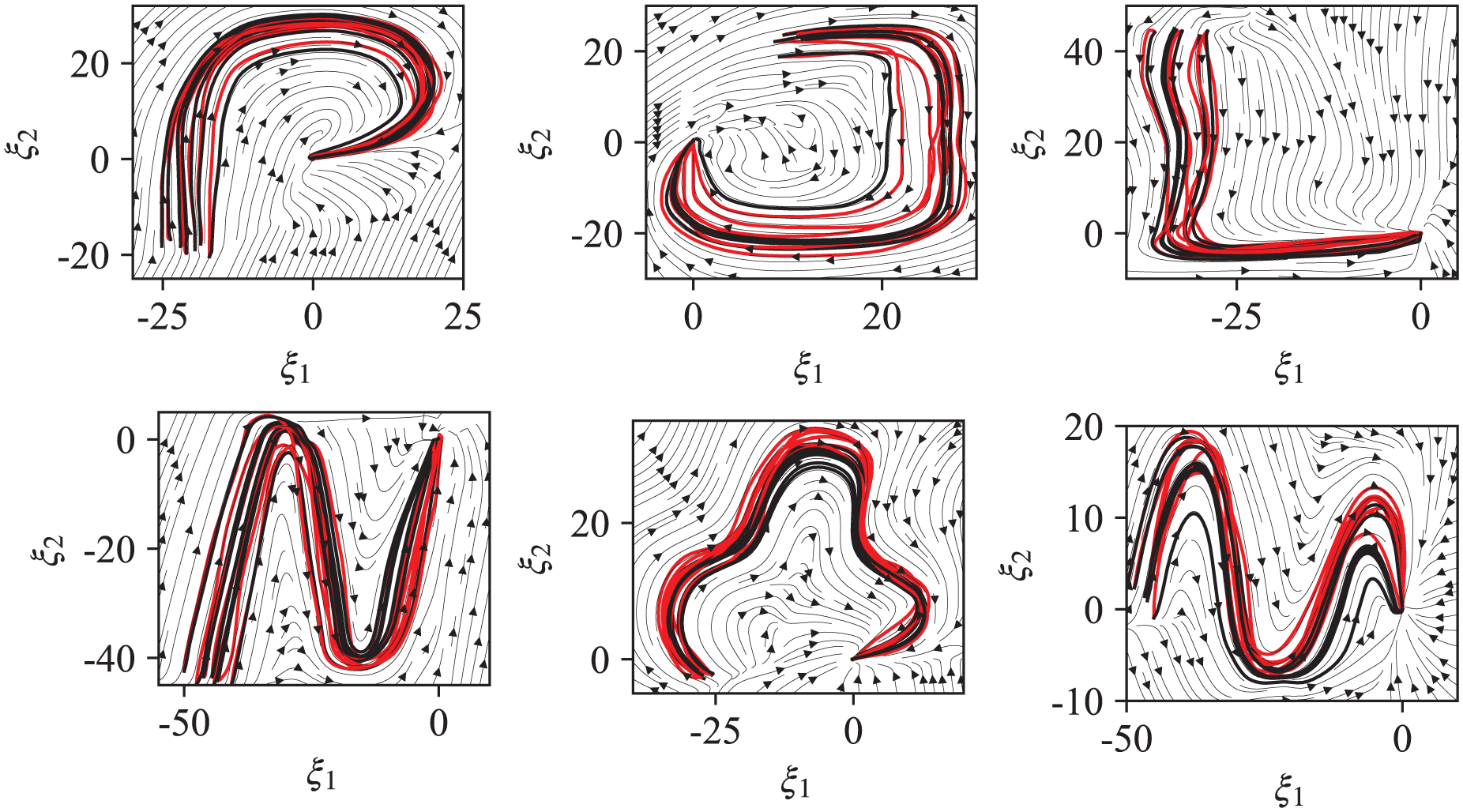

The performance of the presented framework is first gauged upon the LASA handwriting dataset. This dataset contains 30 patterns of 2D human handwriting motions, each with seven similar demonstrations starting from different initial points and ending at the identical goal. For each motion case, the model is constructed with neural networks as described in (8) and (9). Specifically, let

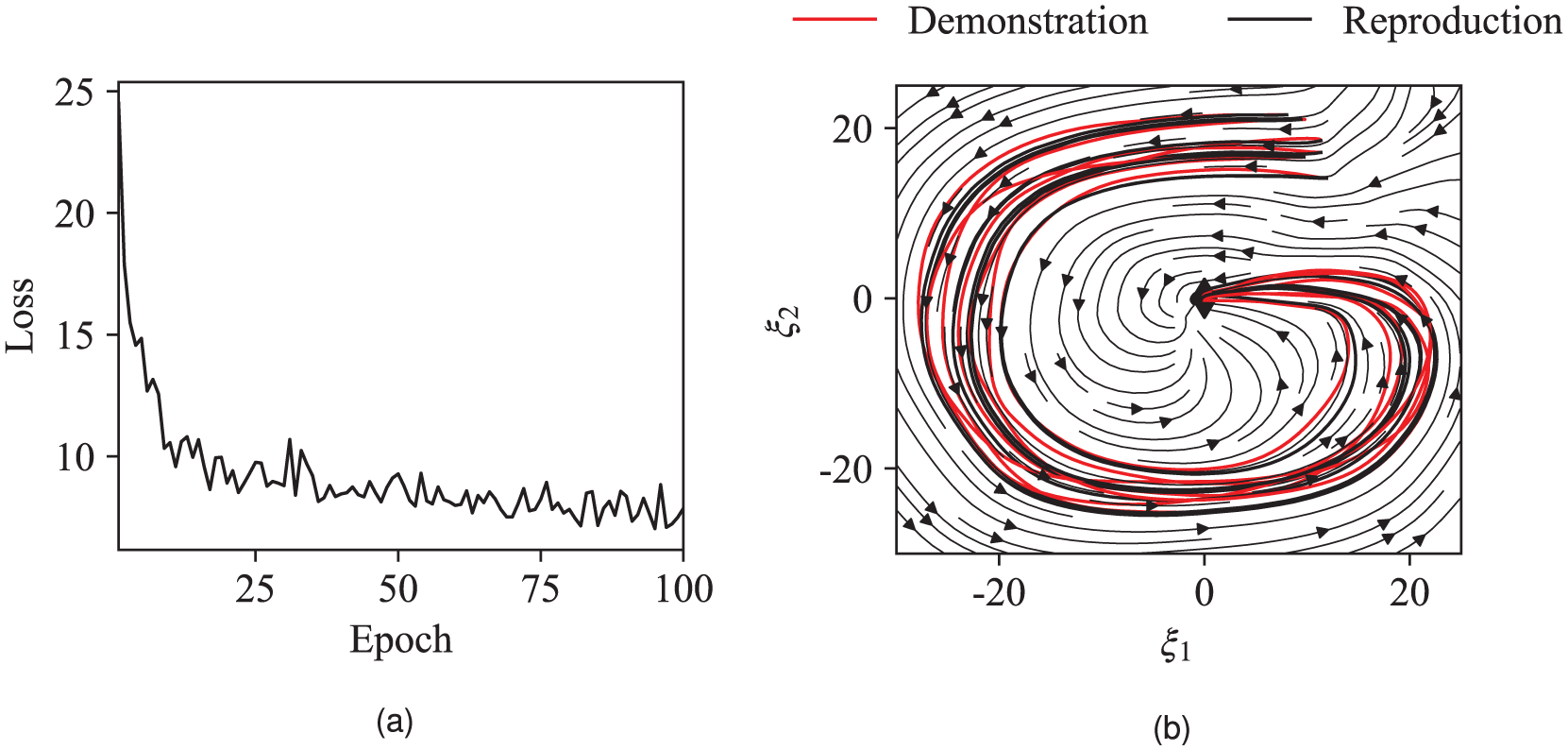

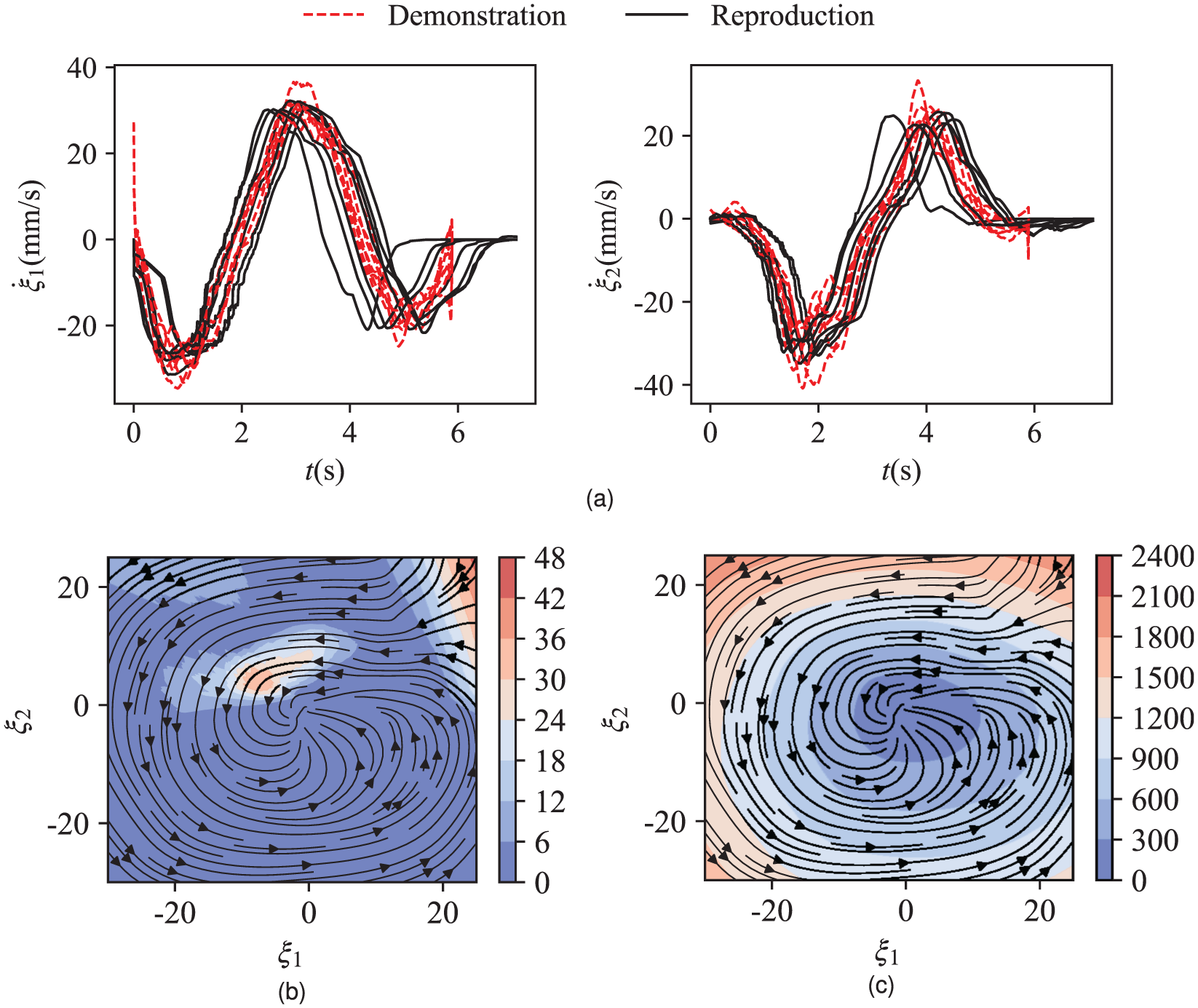

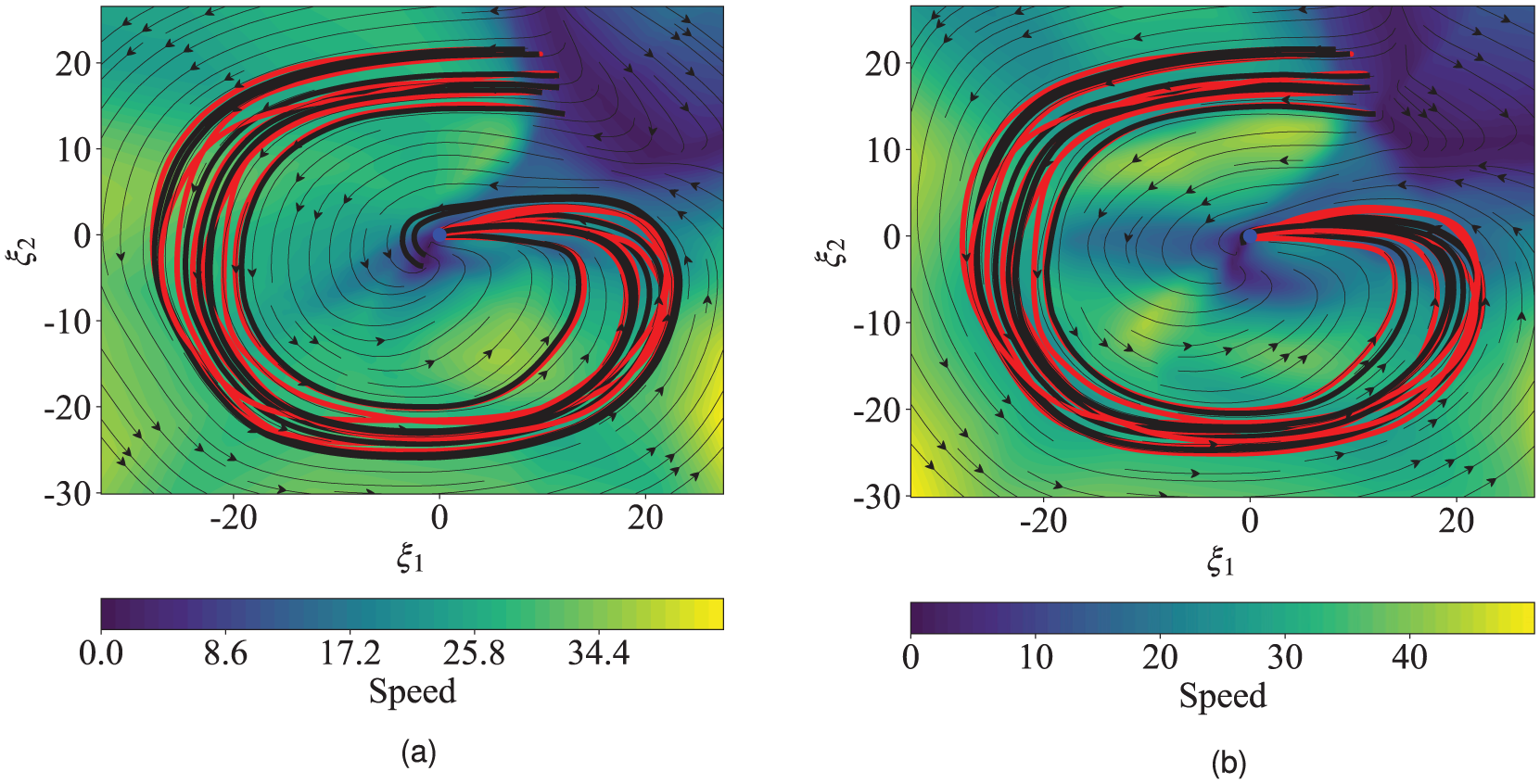

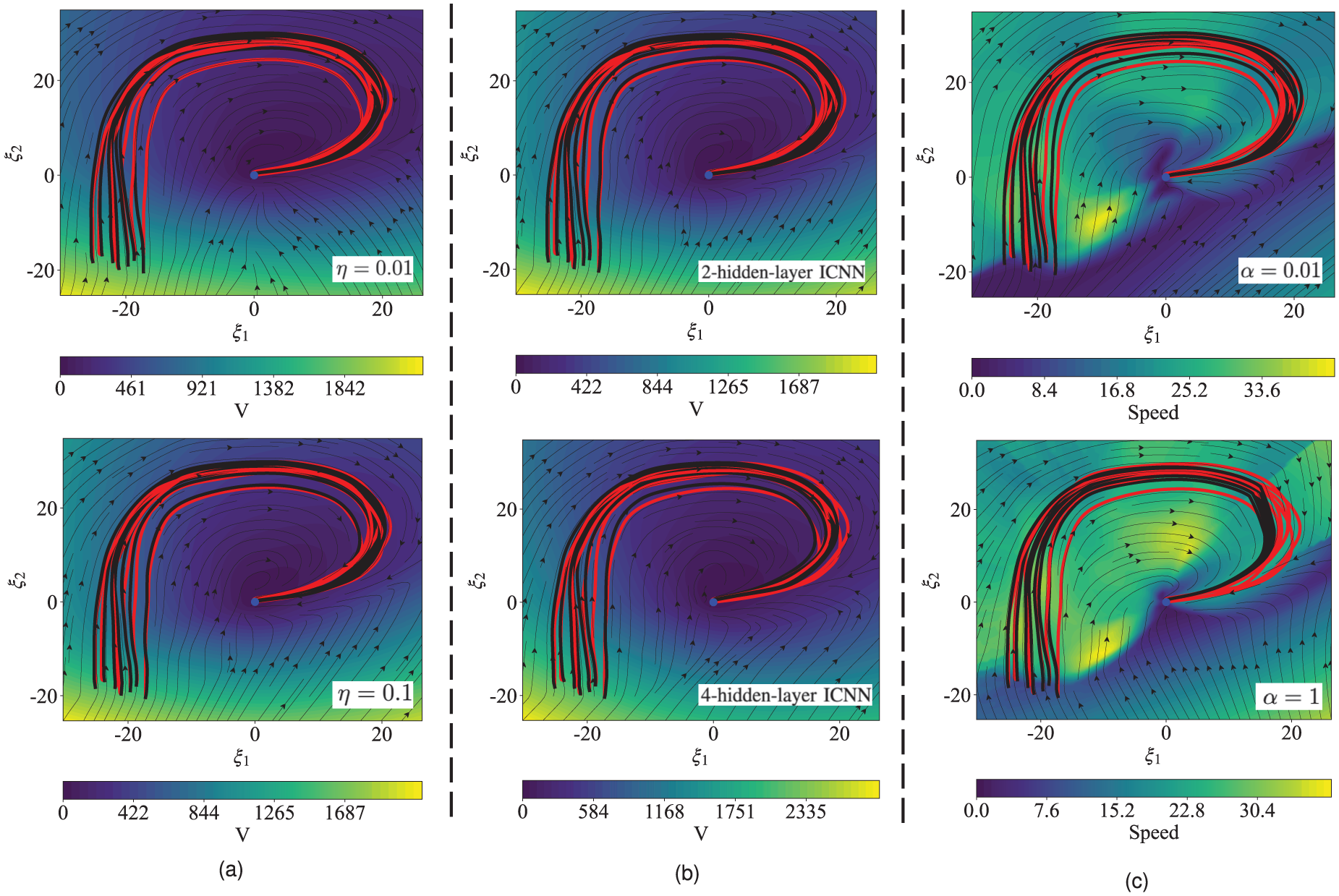

Figure 4(a) shows the evolution of the MSE loss throughout the training of the Gshape motion. The training loss decreases steadily and nearly converges at about 80 epochs. The plots in Figure 4(b) illustrate the demonstrated Gshape trajectories with red lines, the learned dynamical system as a light black vector field, and the reproduced trajectories with dark black lines. These trajectories are generated by forward integration of the resulting dynamical system, starting from the same points as the demonstrations. As can be seen, the presented IL2S learning system accurately reproduces the reference trajectories and captures the inherent motion pattern of Gshape over a broad area. Furthermore, all generated trajectories starting from different initial points ultimately converge to the target, which confirms the stability of the learned dynamical system. Figure 5(a) indicates that the reproduced trajectories have velocity profiles similar to those of the demonstrations, meaning that they follow similar dynamics.
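Forward integration of an autonomous system can be sketched with an explicit Euler scheme. The step size, stopping tolerance, and the linear stable spiral used as a stand-in for the learned network are assumptions for illustration; the integrator actually used in the experiments is not stated in the text.

```python
import numpy as np

def rollout(f, x0, dt=0.01, steps=2000, tol=1e-3):
    """Integrate x_dot = f(x) with explicit Euler until the state is within
    tol of the origin (the target) or the step budget is exhausted."""
    x = np.asarray(x0, dtype=float)
    traj = [x.copy()]
    for _ in range(steps):
        x = x + dt * f(x)
        traj.append(x.copy())
        if np.linalg.norm(x) < tol:
            break
    return np.stack(traj)

# Stand-in for the learned dynamics: a stable linear spiral toward the origin.
A = np.array([[-1.0, -2.0], [2.0, -1.0]])
f = lambda x: A @ x
traj = rollout(f, [1.0, 1.0])   # array of shape (n_points, 2)
```

Starting the rollout from each demonstration's initial point yields reproductions that can be overlaid on the demonstrations.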

The learned results of Gshape motion: (a) Training loss evolution. (b) Learned dynamical system.

The learned results of Gshape motion: (a) Velocity profile. (b) Norm of the corrected command. (c) Learned Lyapunov function.

Figure 5(b) showcases the norm of the corrected term for Gshape movement. It is evident that the corrected command is activated only on a small region to guarantee convergence to the goal, which implies that the learned Lyapunov function is, to a great extent, consistent with the demonstration trajectories. Moreover, activation of the corrected term in a fraction of trajectories demonstrates that

The learned dynamical system of six handwriting motion patterns.

To elucidate the role of the smoothed ReLU activation (11) in the learning framework, we perform a comparison with its canonical counterpart. As demonstrated in Figure 7, although the standard ReLU yields closer trajectory alignment with the expert demonstrations, it induces progressive divergence from the target near the end of the motion. This instability originates from the non-differentiability of the standard ReLU at the origin, which violates the smoothness prerequisite for constructing a valid Lyapunov function. In contrast, the smoothed ReLU formulation offers two advantages simultaneously: 1) it preserves demonstration-matching precision, and 2) it provides provable asymptotic stability by maintaining continuous differentiability across the operational domain. The empirical evidence, coupled with the theoretical stability guarantee, indicates that the ReLU modification is necessary for achieving both reproduction accuracy and convergence in the imitation learning framework.
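The differentiability issue can be illustrated with one common smoothed ReLU, which replaces the kink at the origin with a quadratic segment of width d. The exact form of (11) is not reproduced in the text, so both this piecewise definition and the value d = 0.1 are assumptions.

```python
import numpy as np

def smooth_relu(s, d=0.1):
    """0 for s <= 0, s^2 / (2d) for 0 < s < d, and s - d/2 for s >= d."""
    s = np.asarray(s, dtype=float)
    return np.where(s <= 0.0, 0.0,
                    np.where(s < d, s**2 / (2.0 * d), s - d / 2.0))

def smooth_relu_grad(s, d=0.1):
    """Derivative: ramps linearly from 0 to 1 over [0, d], so it is continuous."""
    s = np.asarray(s, dtype=float)
    return np.clip(s / d, 0.0, 1.0)

def relu_grad(s):
    """Derivative of the standard ReLU: jumps from 0 to 1 at the origin."""
    return (np.asarray(s, dtype=float) > 0.0).astype(float)

eps = 1e-9
jump_standard = float(relu_grad(eps) - relu_grad(-eps))               # 1.0
jump_smoothed = float(smooth_relu_grad(eps) - smooth_relu_grad(-eps))  # ~1e-8
```

The smoothed variant remains convex and non-decreasing, so it can be used inside an input-convex network without breaking convexity.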

Learned dynamical system of Gshape using two configurations of our method: (1) Canonical ReLU function. (2) Improved ReLU function.

To benchmark the proposed method, we evaluate IL2S against two approaches: NEUM-GPR 26 and CLF-GMR 35 . CLF-GMR uses Gaussian mixture regression (GMR) for dynamical system modeling and enforces stability through online trajectory correction regulated by a parameterized Lyapunov function, which is constructed as a weighted combination of asymmetric quadratic functions learned from demonstration data. In contrast, NEUM-GPR employs Gaussian process regression (GPR) to first learn the nominal dynamical system, and then augments the system with an online-optimized corrective term derived through a constrained neural control Lyapunov framework to ensure stability. To ensure a fair comparison, we adhere to the parameter configurations specified in the original implementations. Detailed parameter settings are documented in the respective publications.

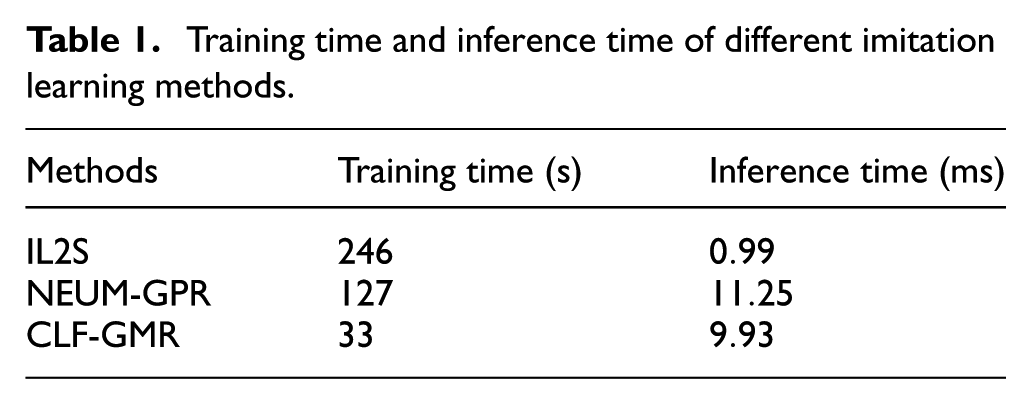

Training time and inference time serve as primary metrics for evaluating computational efficiency. As indicated in Table 1, IL2S requires marginally longer offline training than NEUM-GPR and CLF-GMR, but achieves a millisecond-scale inference time, which is significantly faster than comparative methods. This improvement stems from IL2S’s explicit integration of Lyapunov stability constraints during offline training, thereby eliminating the computationally intensive online optimization inherent to NEUM-GPR and CLF-GMR. These results robustly demonstrate the real-time performance advantage of IL2S, which is suitable for dynamic manipulation tasks.

Training time and inference time of different imitation learning methods.

Two metrics, Root Mean Squared Error (RMSE) and Dynamic Time Warping Distance (DTWD), are utilized to quantitatively assess the contour similarity between the reproduction and demonstration. The comparative results of six motion patterns are shown in Figure 8. We find that the proposed IL2S can achieve favorable performance in terms of both RMSE and DTWD, which highlights the accuracy and flexibility of reproduction.
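The two metrics can be sketched as follows. The exact normalization used in the paper is not stated, so this version assumes RMSE over pointwise Euclidean errors of equally sampled trajectories and the classic unnormalized dynamic-programming DTW with a Euclidean local cost; `rmse` and `dtw_distance` are illustrative names.

```python
import numpy as np

def rmse(a, b):
    """Root mean squared Euclidean error between two equally sampled
    trajectories of shape (T, dim)."""
    a, b = np.asarray(a, dtype=float), np.asarray(b, dtype=float)
    return float(np.sqrt(np.mean(np.sum((a - b) ** 2, axis=1))))

def dtw_distance(a, b):
    """Dynamic time warping distance between trajectories of shape (n, dim)
    and (m, dim), computed with the O(n*m) dynamic program."""
    a, b = np.asarray(a, dtype=float), np.asarray(b, dtype=float)
    n, m = len(a), len(b)
    D = np.full((n + 1, m + 1), np.inf)
    D[0, 0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost = np.linalg.norm(a[i - 1] - b[j - 1])
            D[i, j] = cost + min(D[i - 1, j], D[i, j - 1], D[i - 1, j - 1])
    return float(D[n, m])

demo = np.array([[0.0, 0.0], [1.0, 0.0], [2.0, 1.0]])
slow = np.repeat(demo, 2, axis=0)    # same path, traversed at half speed
d_same_shape = dtw_distance(demo, slow)
```

Because DTW aligns samples before accumulating cost, it is insensitive to timing differences along the same path, whereas RMSE penalizes them; reporting both separates shape error from timing error.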

Comparative results of the proposed IL2S against NEUM-GPR and CLF-GMR under two metrics with one standard deviation: (a) RMSE. (b) DTWD.

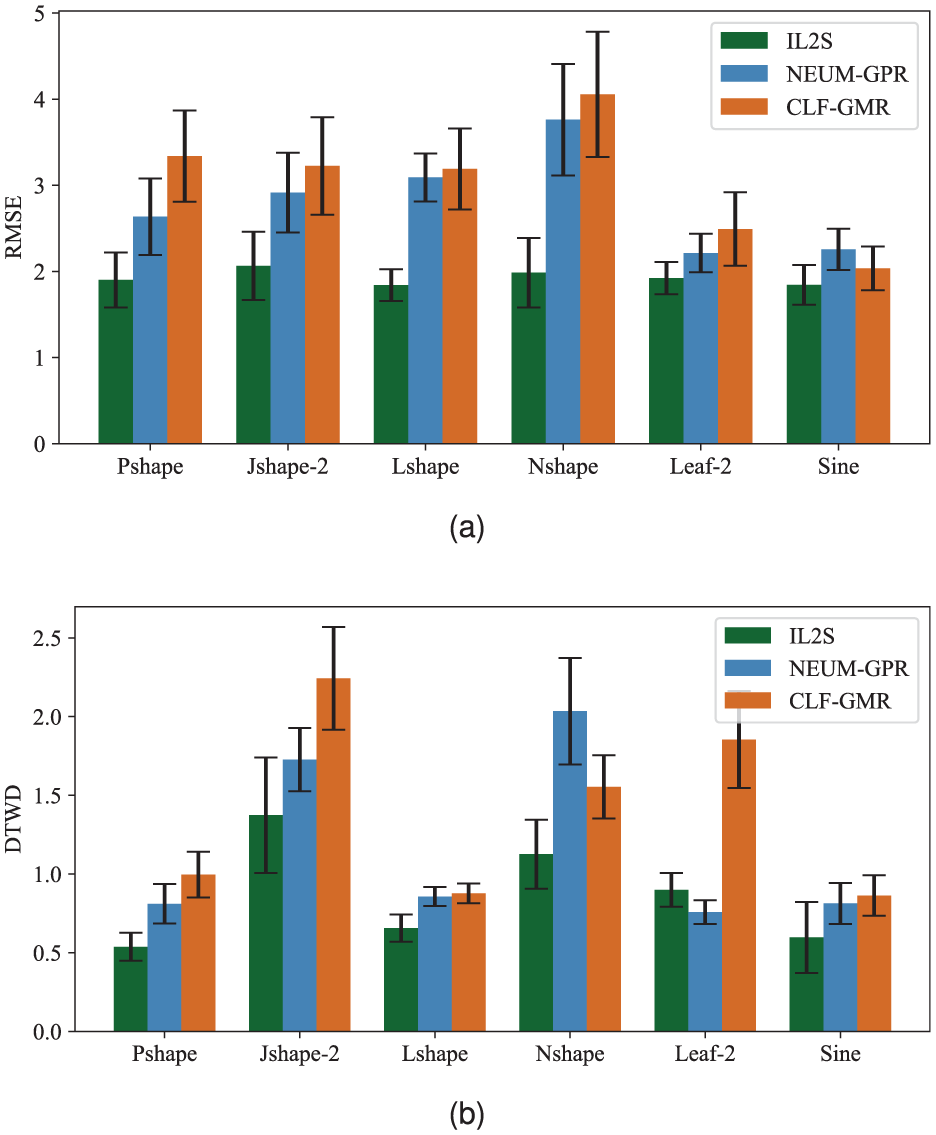

To systematically investigate the influence of parameters on the learned system behavior, we conduct a comprehensive ablation study based on the Pshape demonstration. Adhering to the single-variable principle, we rigorously control all system parameters except for the target variable according to the baseline specifications.

Figure 9(a) comparatively illustrates system behaviors under

Ablation results for the Pshape demonstration. (a) Different values of

Figure 9(b) displays the generated systems for two different ICNN depths. The results demonstrate that the convexity of the learned Lyapunov function is preserved regardless of network depth, while the resulting dynamical systems exhibit comparable performance in terms of generalization capability, reproduction accuracy, and stability. These findings indicate empirically that the stability property and the performance metrics are robust to changes in the architectural complexity of the ICNN.

The velocity modulation effect of the parameter

Robot experiments

To assess the designed control scheme in real settings, we implement two satellite manipulation tasks using a 6-DOF UR5e arm mounted with the Robotiq gripper: static grasping and dynamic docking.

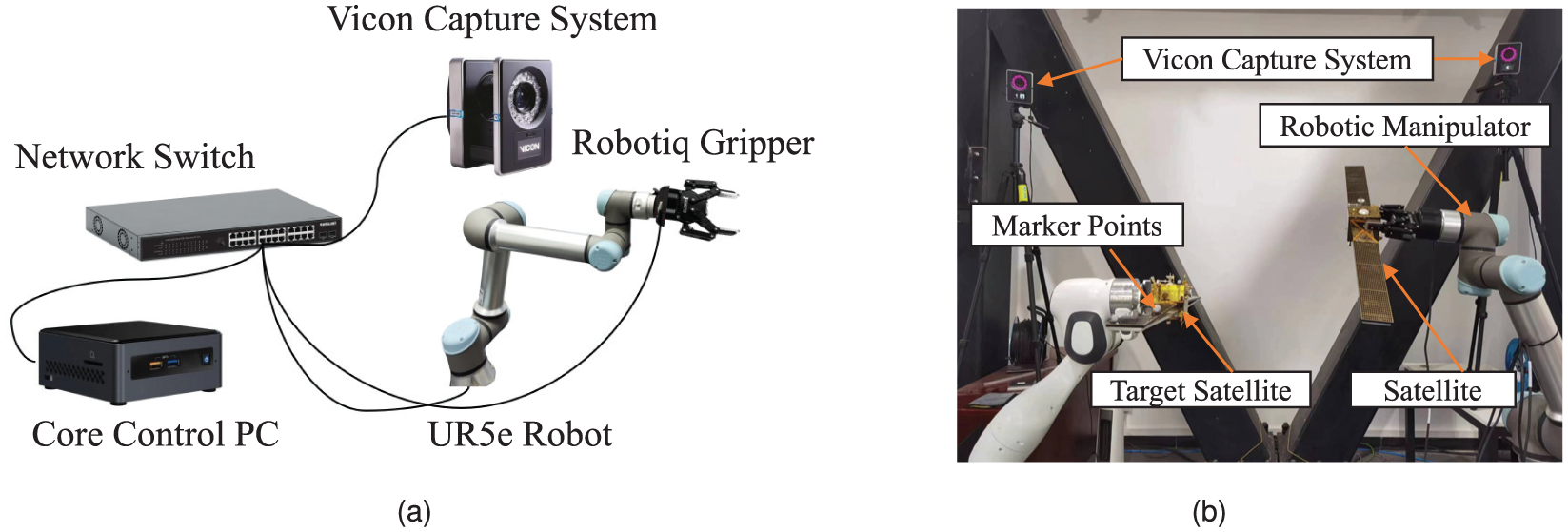

As shown in Figure 10, the robot experimental platform consists of six components: core control computer, robotic manipulator, Robotiq gripper, satellite model, Vicon motion capture system, and network switch. The developed learning algorithm is executed on the core computer under the Ubuntu 22.04 environment. The Vicon capture system is used to acquire the pose of the target in real time. The network switch establishes a communication link between the control computer and the other devices, ensuring consistent signal timing. For the UR5e robot, the application programming interface can be invoked directly to realize low-level control, which uses a PID approach internally.

The robotic experimental platform: (a) Hardware composition and connection. (b) Physical devices of satellite manipulation.

The demonstrations are collected by kinesthetic teaching with the robot in gravity compensation mode. The data is collected at a frequency of

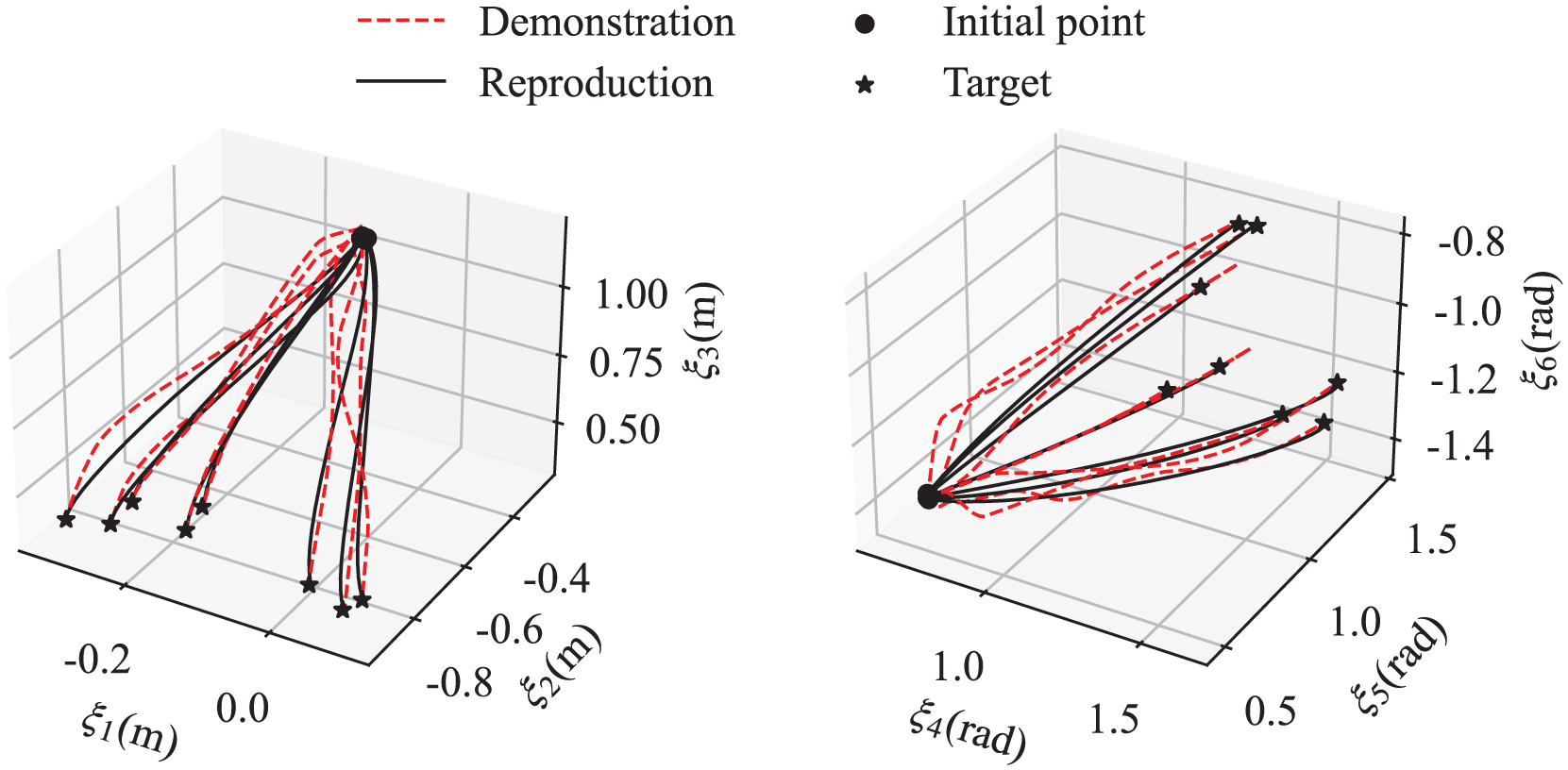

The resulting dynamical system, which is estimated by neural networks, is applied to represent the trajectories of the end-effector position

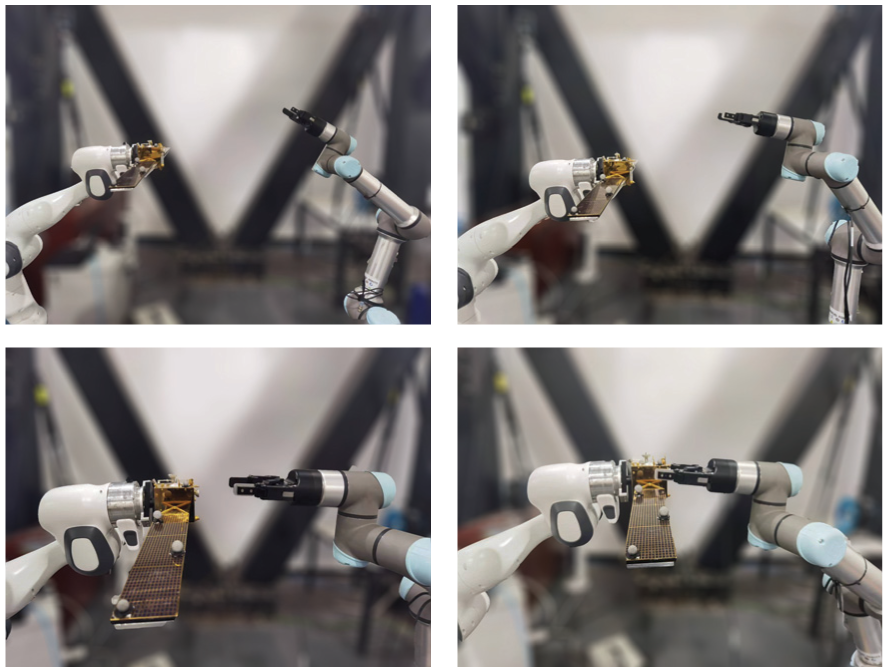

In the first scenario, the robotic arm is tasked with autonomously reaching and grasping a static satellite. Figure 11 presents the generated end-effector trajectories aimed at eight spatially distributed positions within the workspace. As anticipated, these trajectories preserve the characteristics of the human demonstration while guaranteeing asymptotic convergence to the target. Sequential snapshots in Figure 12 document the complete grasping procedure, highlighting the excellent capability to achieve the predefined target position and orientation during the final approach phase.

Reproduced trajectories starting from different points.

Snapshots of the static grasping.

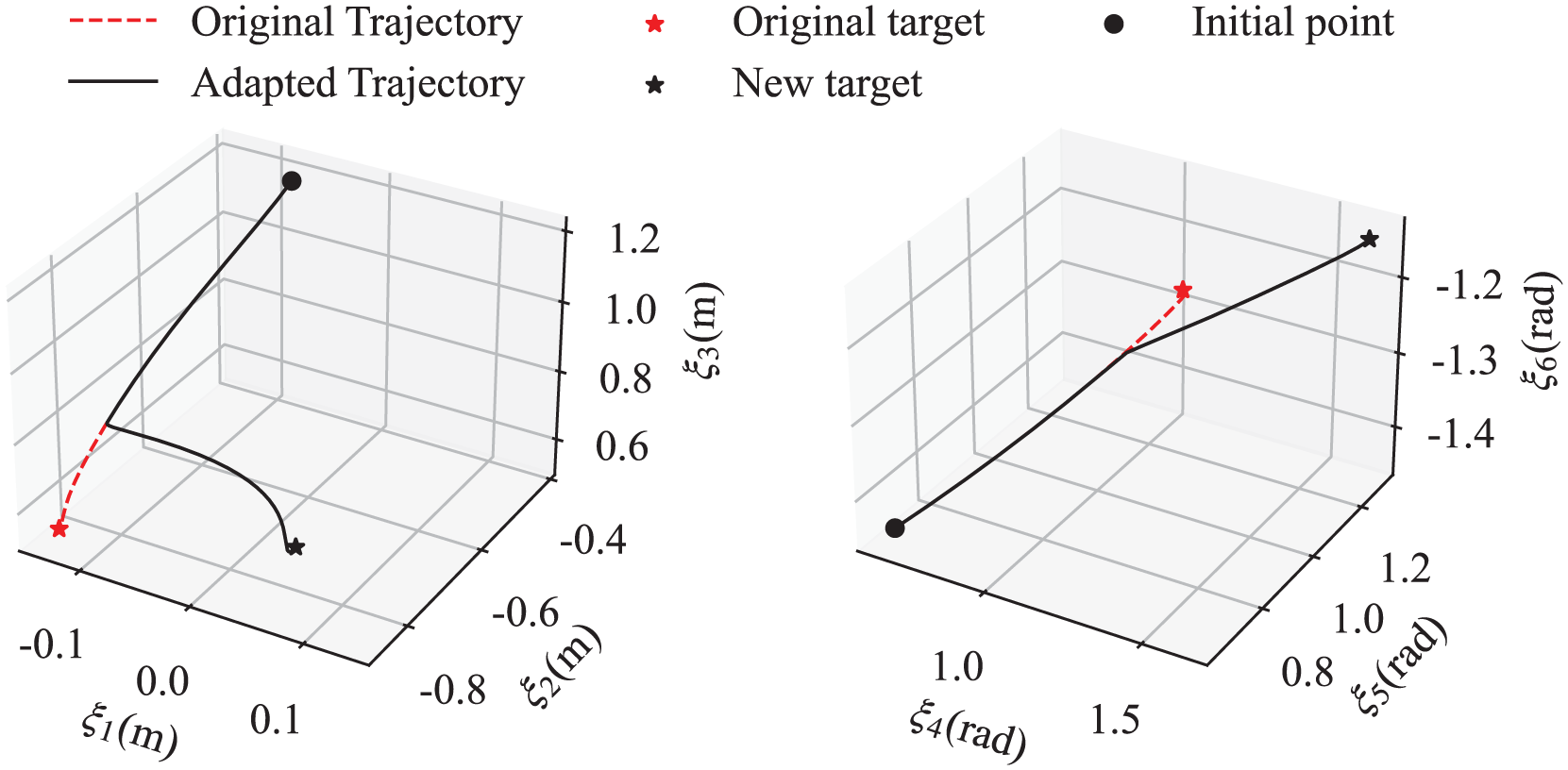

To evaluate the real-time adaptability of IL2S, we introduce an unexpected target perturbation during task execution. Specifically, at t = 1 s after the initiation of the movement, the target satellite is physically displaced to a new location. Figure 13 shows the response of the dynamical system: a new trajectory is generated online while satisfying the physical constraints. Critically, this adaptation relies on the online framework alone and does not require relearning the motion primitives. The replanned path reaches the displaced target with high positional and angular accuracy, validating the instantaneous adaptation capability of the presented method.
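The mechanism behind this kind of on-the-fly adaptation can be sketched by evaluating the dynamics on the state relative to the current goal, so that moving the goal changes the commanded velocity at the next control step. The linear stand-in dynamics, the goal coordinates, and the function name `track` are assumptions for illustration; only the switching time of 1 s is taken from the experiment.

```python
import numpy as np

def track(f, x0, goal_at, dt=0.01, T=8.0):
    """Integrate x_dot = f(x - goal(t)), re-reading the goal at every step."""
    x = np.asarray(x0, dtype=float)
    traj = [x.copy()]
    for k in range(int(round(T / dt))):
        goal = np.asarray(goal_at(k * dt), dtype=float)
        x = x + dt * f(x - goal)     # dynamics expressed in goal-relative coordinates
        traj.append(x.copy())
    return np.stack(traj)

# Stand-in for the learned stable dynamics (attractor at the origin).
f = lambda e: -2.0 * e

goal_a = np.array([0.5, 0.0, 0.3])   # hypothetical initial target position
goal_b = np.array([0.3, 0.2, 0.4])   # hypothetical displaced target position
goal_at = lambda t: goal_a if t < 1.0 else goal_b

traj = track(f, [0.0, 0.0, 0.0], goal_at)
final_error = float(np.linalg.norm(traj[-1] - goal_b))
```

No retraining is involved in this sketch: the same function `f` is queried with a shifted argument, which is what makes the response immediate.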

On-the-fly adaptation to a change in the target for the static grasping task.

Collectively, this scenario demonstrates the efficacy of our method in high-dimensional manipulation spaces: precise kinematic coordination is achieved through 6-DoF pose control. The demonstrated performance validates exceptional stability and robustness when operating in unstructured environments, confirming the capacity of IL2S to handle real-world uncertainties.

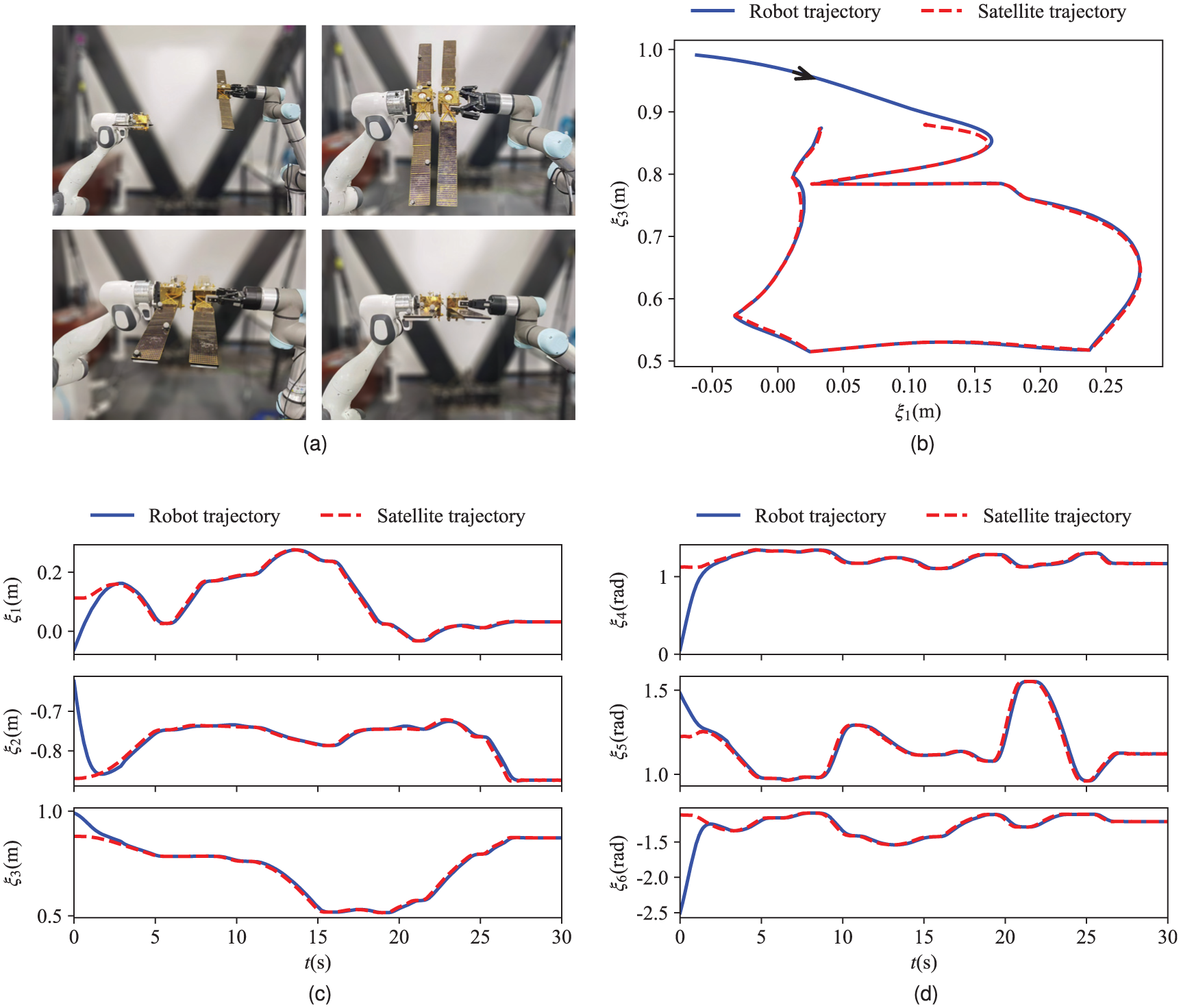

The second task involves manipulating the robotic arm to enable the grasped satellite to track and dock with a second, dynamically moving satellite. To accurately acquire the real-time position and orientation of this dynamic target, we affix eight optical markers to its surface. These markers are continuously tracked by a Vicon motion capture system, which provides high-fidelity pose estimation at a sufficient update rate. The captured pose data is then streamed in real time to the robotic controller, forming a closed-loop feedback system essential for dynamic tracking. Snapshots documenting the progression of this dynamic docking experiment are presented in Figure 14(a), visually illustrating the relative alignment of both satellites during the maneuver. Figure 14(b) depicts the motion trajectories of the target satellite and the robotic system in the

The task of dynamic docking: (a) Snapshots of the dynamic docking. (b) Tracking trajectories in the

Figure 14(c) and (d) provide quantitative analyses of the docking process, depicting the time histories of both the robotic end-effector pose and the dynamic satellite pose, all expressed relative to the robotic base frame. These curves reveal a clear trend: under the control of the proposed method, the relative pose error (comprising both positional displacement and angular misalignment) between the two satellites decreases consistently throughout the tracking phase. The error then converges and stabilizes at a low magnitude, confirming that docking is achieved and stably maintained. These results substantiate the adaptation and reactivity of the proposed IL2S method in challenging dynamic environments characterized by moving targets. The system’s ability to replan trajectories online and precisely execute the docking maneuver demonstrates its effectiveness for complex, time-critical manipulation tasks.

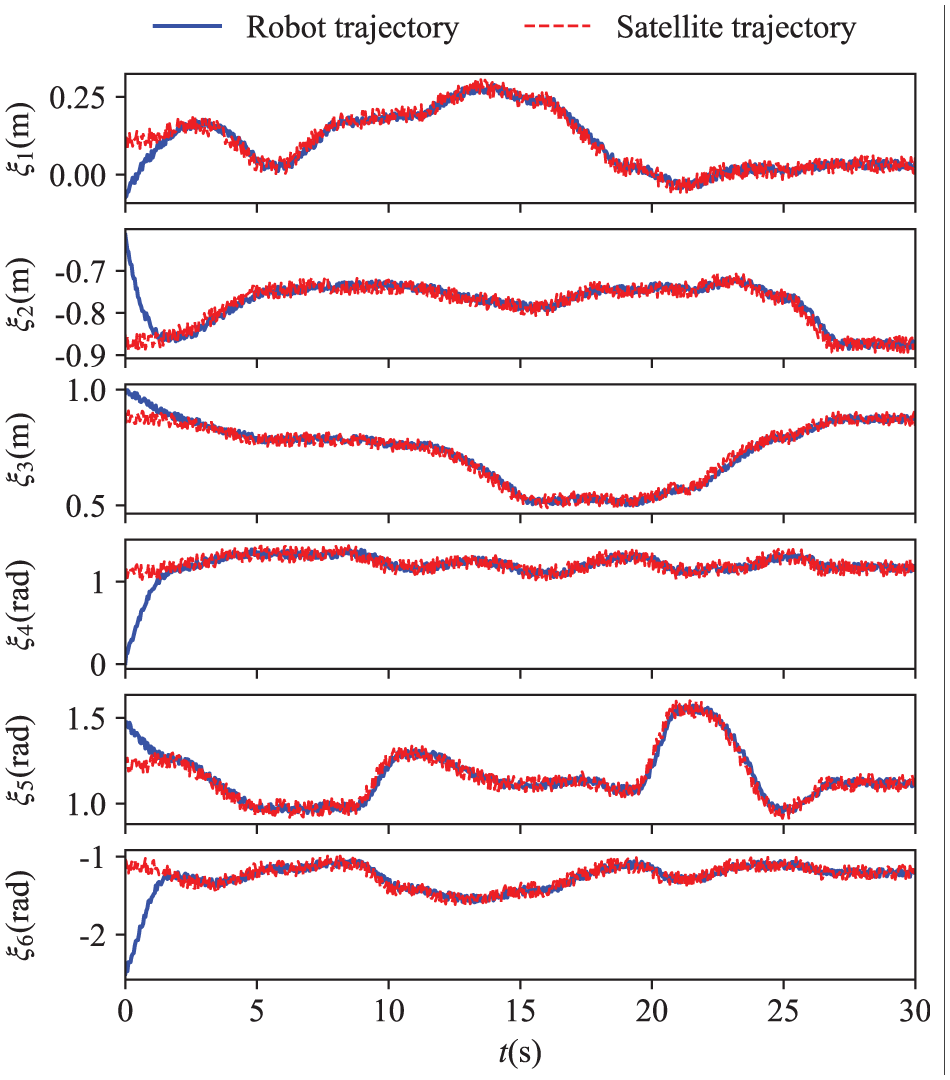

To validate the reliability of the proposed method in practical implementation, we test the system performance under position measurement noise. As shown in Figure 15, despite the noise in the target position, the robotic system achieves successful tracking of the target satellite trajectory within approximately 3 s using the IL2S scheme, whilst maintaining a smooth trajectory. This ensures the successful completion of the dynamic docking task, demonstrating the strong robustness of the IL2S scheme against measurement noise.

Tracking performance under position measurement noise.

Conclusions

In this paper, an imitation learning framework named IL2S is proposed for motion planning and control of robotic manipulation tasks. First, we model the robot movement as a first-order autonomous dynamical system that consists of the original dynamics and an additional stabilizing term. Based on demonstrations collected through kinesthetic teaching, the dynamical system and the control Lyapunov function are learned using a fully connected neural network and an input-convex neural network, respectively. The neural Lyapunov function, which has a unique minimum and is positive definite and continuously differentiable, serves as a certificate that the learned dynamical system converges to the target. We demonstrate that the proposed framework is able to learn stable dynamical systems exhibiting complex vector fields on handwriting motions. Furthermore, we evaluate the method in two different satellite manipulation scenarios, namely static grasping and dynamic docking. The experimental results show that the proposed strategy completes the tasks with high reproduction accuracy, convergence to the target, and adaptation to unexpected changes. As future work, we will focus on how the learned dynamical system can be used in conjunction with force to allow compliant control when in contact with dynamic objects.