Abstract

Detecting misfires in internal combustion engines (ICEs) is essential for maintaining engine health, reducing emissions, and improving performance. However, current misfire detection systems are often generic and cannot localize faults to specific cylinder banks. This limitation stems primarily from the high cost of piezoelectric sensors and the complexity of the associated data processing, which restricts widespread adoption, especially in cost-sensitive applications. To address this challenge, the present study explores the use of cost-effective microelectromechanical system (MEMS) sensors for real-time misfire detection. Vibration data from a production Hyundai Xcent MPFI engine are collected and analyzed using decision tree machine learning classifiers. Three types of features (statistical, auto-regressive moving average (ARMA), and histogram-based) are extracted from the MEMS data. The J48 decision tree classifier, when applied to selected histogram features, achieves 100.00% classification accuracy, demonstrating its effectiveness in detecting and localizing misfires. This result exceeds the performance reported in prior studies, whose classification accuracies average between 99.00% and 99.80%, including methodologies based on transfer learning models for similar applications. The approach offers a low-cost, high-performance solution suitable for on-board engine diagnostics and provides a framework for broader integration of advanced misfire detection in ICE applications.

Keywords

Introduction

Internal combustion engines (ICEs) rely on precise combustion processes, and misfires stemming from issues like improper ignition or fuel supply can adversely affect performance. Detecting misfires promptly is crucial to prevent prolonged damage, which calls for scalable active misfire detection systems. 1 This aligns with Industry 4.0 trends, enhancing condition monitoring in diverse combustion engine applications. Machine learning applied to thermo-mechanical vibration signals supports accurate misfire detection, contributing to informed decision-making and reduced downtime. Research in data-driven fault diagnosis using algorithms like logistic model tree (LMT) and J48 decision tree demonstrates the importance of feature selection for robust classification performance in various mechanical systems, further highlighting the versatility of machine learning in condition monitoring across industrial applications. The use of statistical features of vibration signals for gearbox fault diagnosis, using a discrete wavelet transformation (DWT) of the input signals and subsequent classification by the support vector machine (SVM) algorithm, was investigated in detail by Suresh et al. 2 Similarly, the use of ARMA features for the diagnosis of roller bearing faults via classification by an extreme learning machine (ELM) was investigated by Meng et al. and reported to be an effective combination for accurate bearing fault diagnosis. 3 The use of histogram features of vibration signals for monitoring the condition of face milling equipment was investigated in detail by Madhusudana et al. 4 This aspect is particularly relevant to the results of the current study.

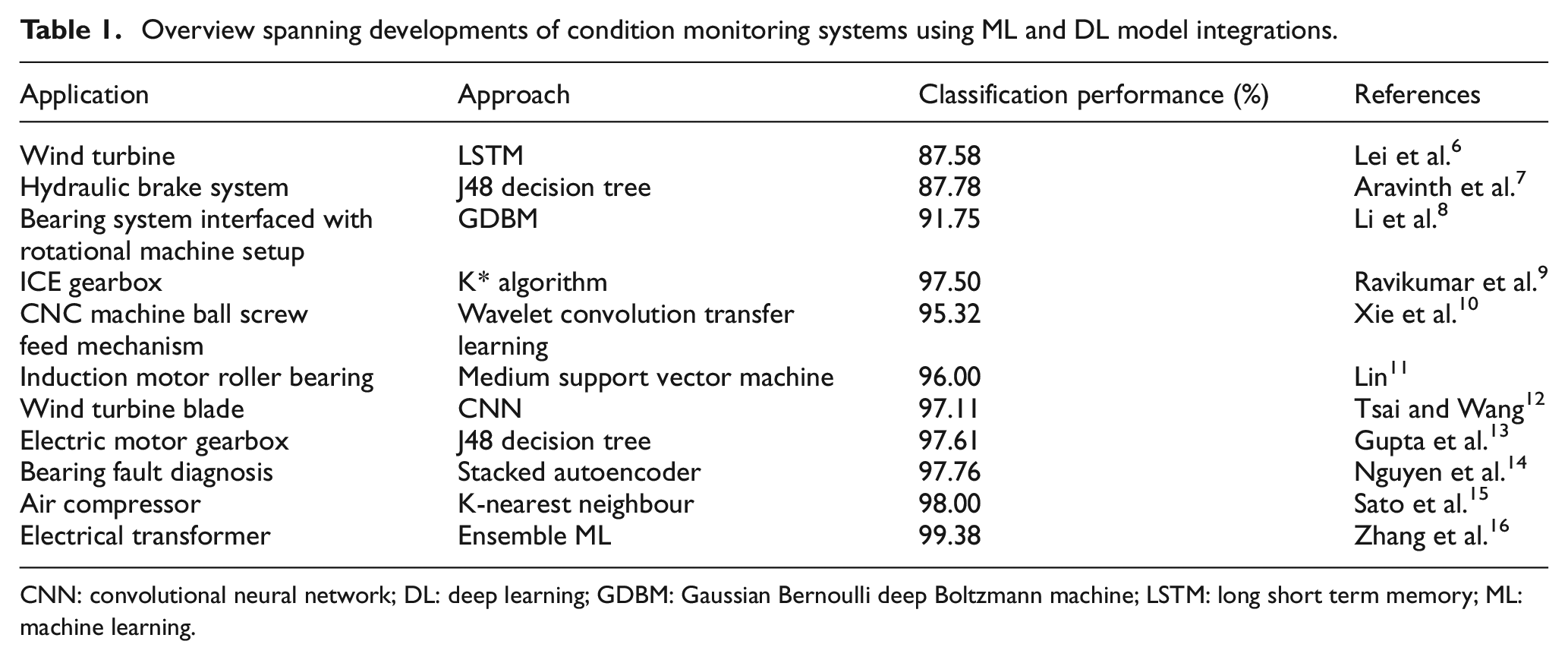

The study by Madhusudana et al., which utilized the K-star classifier for fault classification, achieved a good classification accuracy of 96.00%, validating the robustness of histogram features for machinery fault diagnosis. Since machine learning approaches using classifiers from the decision tree family render acceptable classification performance, such approaches have also been extended to the domain of misfire detection. This is largely because machine learning models can analyze patterns in vibration data well and therefore ‘learn’ from diverse datasets. ML algorithms excel at discerning subtle variations within these patterns, enabling the creation of robust models for accurate detection. Dorka et al. presented a condition monitoring framework that combines a MEMS setup with neural networks on a distributed IoT-sensor foundation to emulate industrial operating conditions on a reciprocating line-motion axes setup. 5 Additional works in the different domains of equipment condition monitoring and fault diagnosis utilizing vibration signals, along with associated performance results, are listed in Table 1 below.

Overview spanning developments of condition monitoring systems using ML and DL model integrations.

CNN: convolutional neural network; DL: deep learning; GDBM: Gaussian Bernoulli deep Boltzmann machine; LSTM: long short term memory; ML: machine learning.

An overview of developments specifically in misfire detection for ICEs is presented as follows. Devasenapati et al. utilized the statistical characteristics of misfire vibration signals to examine the performance of decision tree classifiers for misfire detection, again using piezoelectric sensors. 17 Babu et al. investigated the use of statistical features of vibration signals obtained using a piezoelectric accelerometer for training classifier algorithms such as AdaBoost, LogitBoost, J48, and Multiclass Classifier for detection of misfires in diesel engines. 18 A classification accuracy of 92.20% was achieved by Mulay et al. using the functional tree classifier, with ARMA features extracted from input signals sourced from a piezoelectric accelerometer on a dedicated engine test rig. 19 Arockia Dhanraj et al. deployed a K* classification approach to localize misfires to specific cylinder banks using statistical features of vibration signals sourced from a piezoelectric sensor. 20 This approach rendered a commendable accuracy of 98.00% over a time span of 0.24 seconds. Ghazaly et al. performed a detailed investigation into misfire localization using the Kohonen self-organizing map (SOM) over three operating conditions, rendering 93.55% accuracy in misfire detection. 21 The implementation of deep learning networks for misfire detection has also been explored.

A dual approach incorporating artificial neural networks (ANN) for misfire detection in gasoline engines was attempted by Firmino et al. The first approach involved collecting vibration data, performing a fast Fourier transform (FFT) on the input vibration signals, and deploying an ANN over the resulting data. The second approach followed the same process but utilized acoustic analysis of engine sound instead. The two approaches resulted in commendably high classification accuracies of 99.30% and 98.70%, respectively. 22 Naveen Venkatesh et al. examined a transfer learning approach for misfire detection in a gasoline (spark ignition) engine. Pre-trained networks including AlexNet, ResNet50, GoogLeNet, and VGG16 were deployed over vibration data from a piezoelectric accelerometer, with VGG16 achieving the highest classification accuracy of 98.70% under a tuned hyperparameter configuration. 23 Furthermore, a classification test accuracy of 87.00% was achieved by a cascading CNN model developed by Terwilliger and Siegel using acoustic signals. 24 From the review of prevalent works performed thus far, and with due reference to the additional literature works with corresponding results presented in Table 2, a number of critical observations motivating the current study have been made. In 2023, Gu et al. examined a method for detecting faults in a gyroscope setup using data collected with a four-mass vibration MEMS gyroscope and subsequent classification with a ResNeXt-50 model, relying on a neural framework for fault diagnosis. 25 The IoT-based deployment of MEMS sensors for condition monitoring was also examined by Gao et al., wherein a multi-channel MEMS vibration sensor was used as the perceptive basis for a low-power wide area network (LP-WAN) communicating with the respective controller using the LoRa protocol over a Google cloud server. 26 The use of MEMS sensors, with specific emphasis on MEMS-based vibration sensors, is thus highly forward compatible with advanced computing paradigms including cloud computation, wireless over-the-air (OTA) data transmission via an IoT foundation, and distributed computing for more effective use of hardware systems in resource-constrained environments.

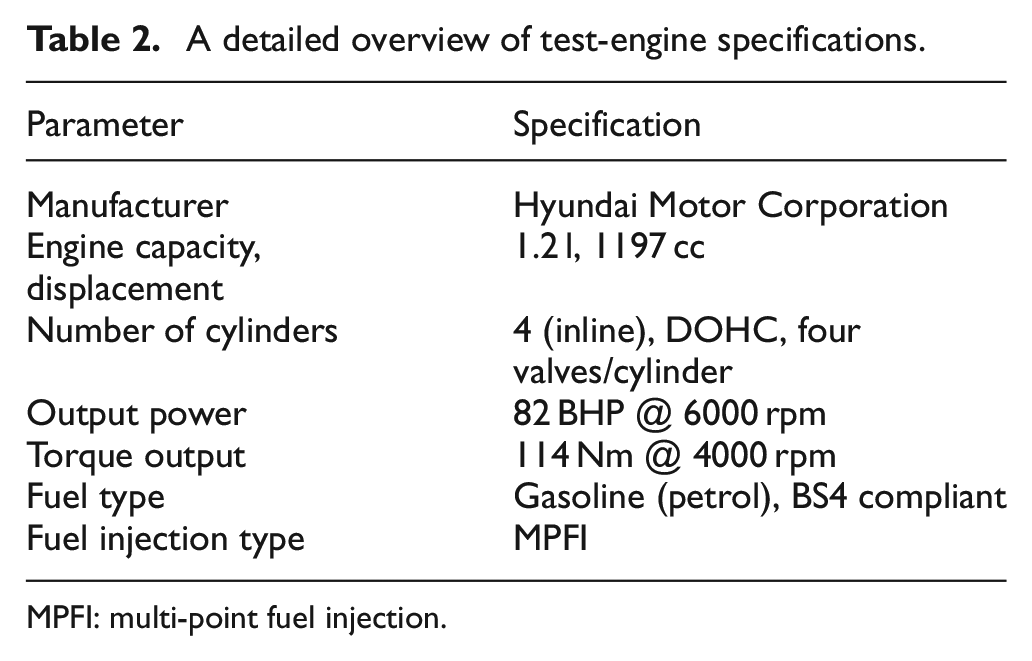

A detailed overview of test-engine specifications.

MPFI: multi-point fuel injection.

There has been a limited focus on scalable solutions for on-board misfire detection in resource-constrained vehicles compared to conventional fault diagnosis in other equipment. A lack of exploration into the viability of different feature types and their extraction along with selection for misfire detection before classifier model development has also been observed. In addition, the absence of sequential investigations into the use of a MEMS accelerometer for misfire detection with various feature types, especially concerning baseline performance further motivates the basis of this study. The study therefore aims to address these gaps systematically, contributing novel insights to the field of modular, and economical misfire detection systems. Therefore, the objectives and technical contributions of the current study are highlighted as follows:

Low-cost sensor deployment: Investigate the feasibility of a cost-effective misfire detection system using a MEMS accelerometer interfaced with an Arduino Uno DAQ system, offering an affordable alternative to conventional piezoelectric sensors.

Real-world experimental data collection: Perform data acquisition on a production-grade Hyundai Xcent MPFI engine under operational conditions contrasting with many prior works that rely on synthetic or laboratory-simulated environments.

Novel feature engineering strategy: Extract and benchmark three distinct classes of features namely, statistical, ARMA, and histogram features from the MEMS vibration data. This structured comparison is one of the first of its kind applied to MEMS-based misfire detection and informs effective feature selection for embedded applications.

Performance benchmarking across feature sets: Present a comparative analysis of classifier accuracy and computational efficiency (model build time) for the different feature types. The results demonstrate that histogram features when paired with the J48 classifier yield 100% classification accuracy with minimal computation time.

The remainder of this work is structured as follows. Section 2 details the experimental methodology, covering the setup, the data acquisition approach, and the hardware specifications of the signal source and the DAQ devices, including a brief background on capacitive-type MEMS sensors. The section then describes the feature engineering framework, covering the 12 statistical features together with the ARMA and histogram features, and outlines the extraction and selection processes. It also introduces the decision tree family of classifiers, highlighting the attributes of the 12 evaluated tree classifiers that act on the selected features to classify misfires into one of the cylinder banks of the ICE. Section 3 presents and discusses detailed results from the feature selection and classifier evaluation stages. Finally, section 4 concludes the work by summarizing these results, addressing the limitations of the present study, and suggesting potential avenues for future research.

Materials and methodology

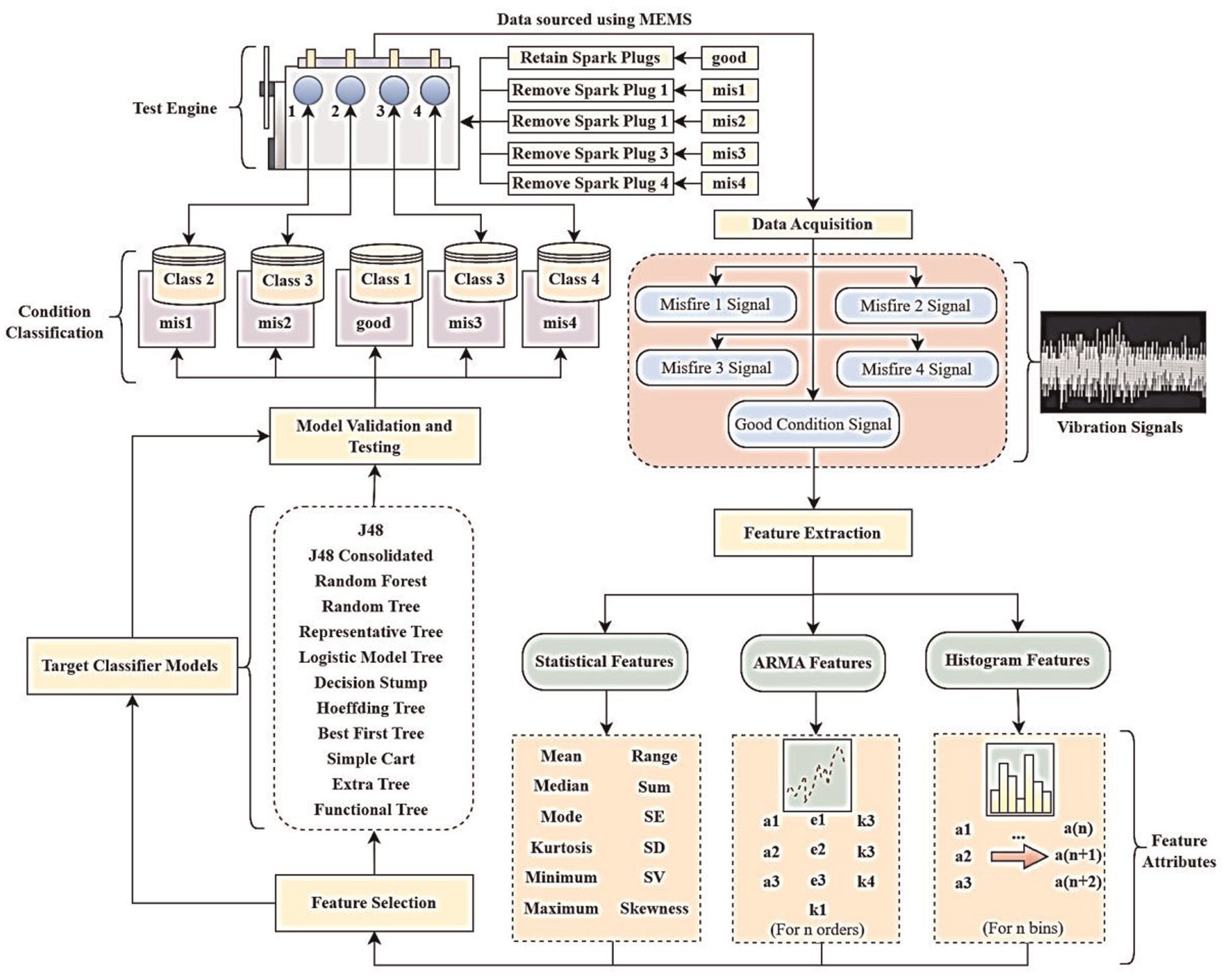

This study uses vibration signals from a traditional automotive internal combustion engine, recorded by a low-cost MEMS sensor. The goals are twofold: first, to validate the MEMS sensor for cost-effective misfire detection using machine learning algorithms, and second, to assess decision tree family algorithms across three feature categories (statistical, ARMA, and histogram).

Experimental setup

To conform with real-world requirements, the experiment supporting the current study was conducted using a 1.2 L four-cylinder multi-point fuel injection (MPFI) automotive gasoline engine. The detailed specifications of the test vehicle engine are presented in Table 2.

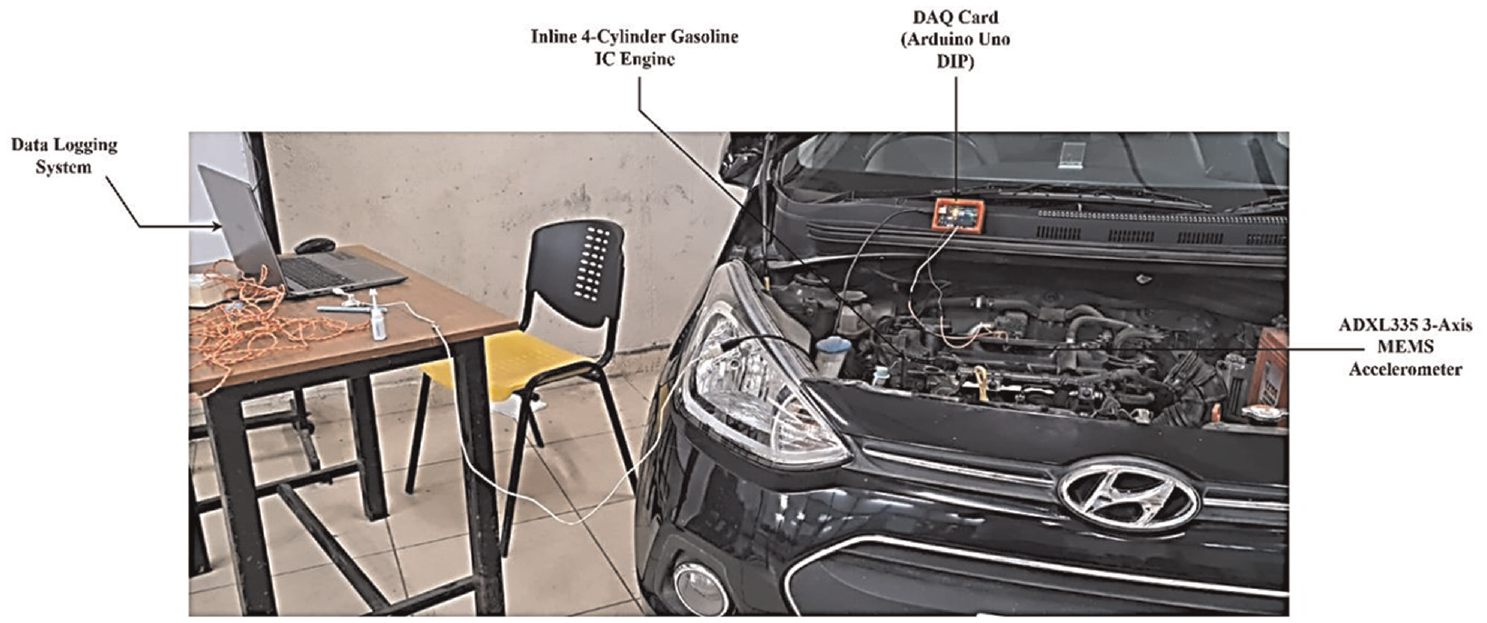

The experiment was conducted using a MEMS accelerometer bonded to the test vehicle’s main engine block and interfaced with an Arduino Uno MCU for data acquisition. Vibration signals corresponding to misfires were obtained by disabling and removing one spark plug at a time from the cylinder banks, with the setup depicted in Figure 1. This induced misfires in specific cylinders during the power stroke. Preparation of the setup involved a thorough cleaning of the test ICE, the use of unleaded fuel, and proper temperature regulation. The radiator fan’s automated thermoregulation response posed challenges during signal collection due to the vibrations it induced. To address this, the fan circuit was temporarily disabled, and external fans maintained an ambient operating environment. The engine’s condition was categorized into five classes (good, misfire 1–4), each with 100 input signals, generating individual CSV files for feature extraction (statistical, ARMA, and histogram). This resulted in 50 ARMA order files and 100 histogram bin files.

Experimental setup utilized for data acquisition for use in the current body of work.

Data acquisition (DAQ)

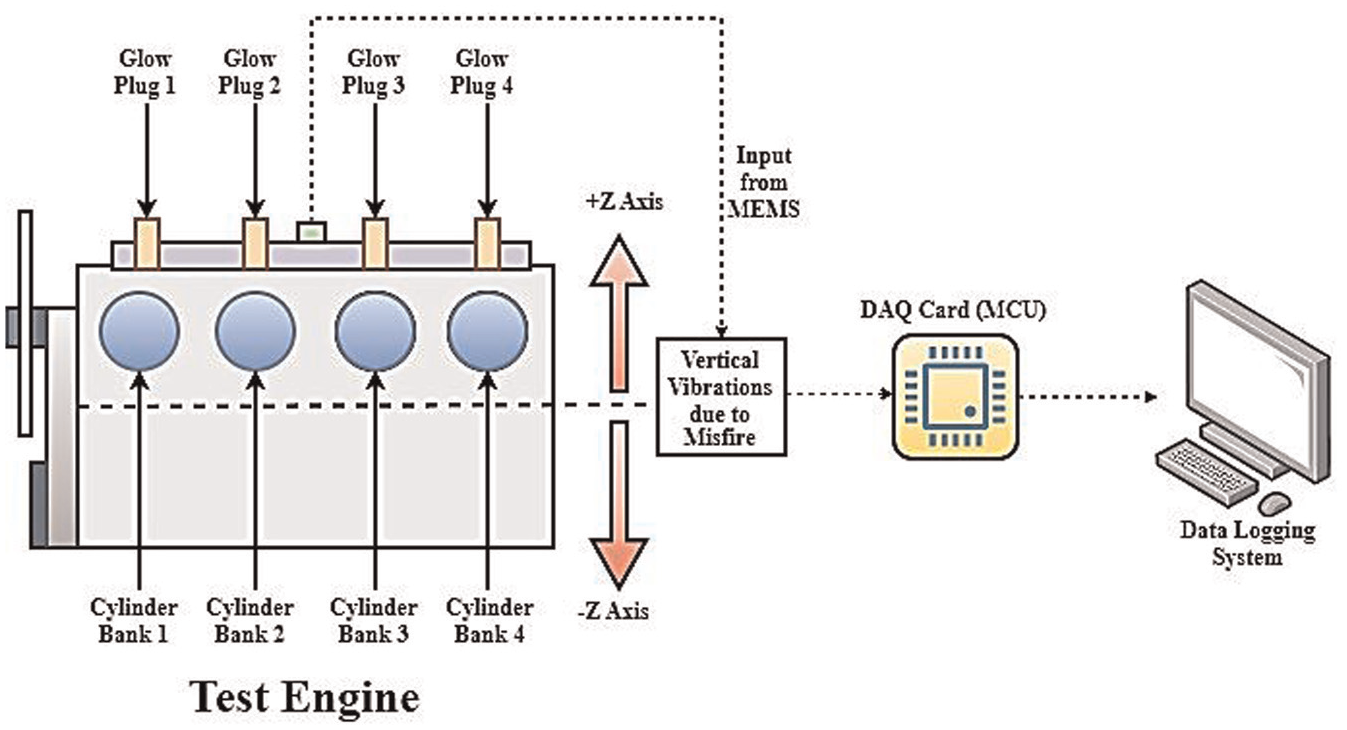

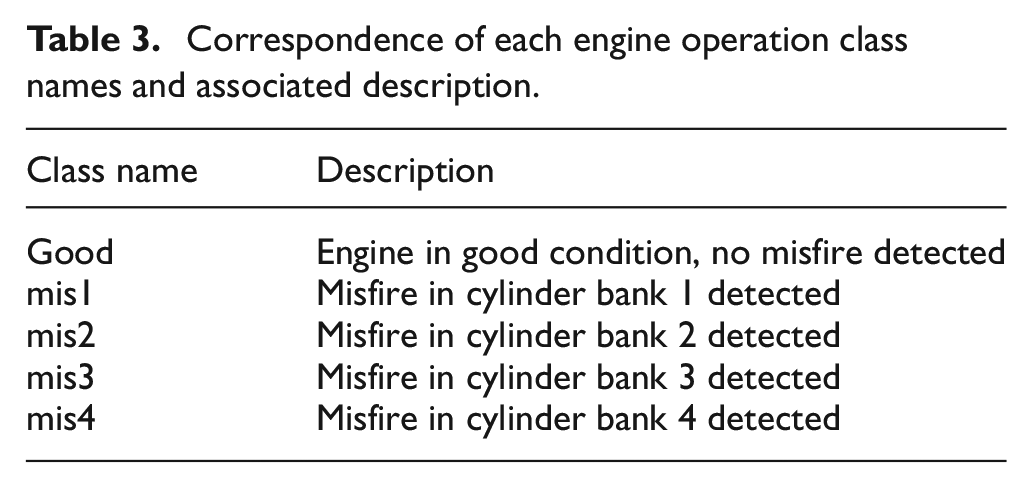

Vibration signals were captured along the Z axis (depicted in Figure 2) by an ADXL335 3-axis MEMS accelerometer with 300 mV/g Zout sensitivity, recording 10,000 sample points per signal at 9600 baud and a 24 kHz sampling frequency. MEMS sensors have been shown to be suitable for measuring mechanical vibrations. 27 Adhered to the engine’s cylinder head cover, the MEMS sensor monitors vertical vibrations during misfire induction in a four-cylinder inline engine, where one spark plug is removed at a time. The ADXL335 interfaces with an Arduino Uno MCU featuring an 8-bit ATmega328P microcontroller with 2 KB SRAM, 32 KB flash memory, and 1 KB EEPROM. Figure 2 also illustrates the data flow from engine-induced vibrations to signal acquisition, quantization, and logging in a central system. Five engine operating cases, namely good, mis1, mis2, mis3, and mis4, were thus included in the study. Table 3 lists each class label with its practical interpretation for such a four-cylinder inline engine. One hundred input signals for each class were collected using the Arduino Uno DAQ card and recorded as distinct signal files using the National Instruments (NI) LabVIEW data acquisition software running on a computer system. The experimental process is summarized in Figure 3.
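As a rough illustration of the quantization step, the sketch below converts raw ADC counts from the Z-axis channel into acceleration in g. The 300 mV/g sensitivity is taken from the text and the 10-bit resolution is that of the ATmega328P ADC; the 3.3 V reference voltage and the 1.65 V zero-g offset are assumptions made only for this example, and the function name `counts_to_g` is hypothetical.

```python
ADC_MAX = 1023               # full-scale count of a 10-bit ADC
SENSITIVITY_V_PER_G = 0.300  # Zout sensitivity quoted in the text (300 mV/g)


def counts_to_g(counts, vref=3.3, zero_g_volts=1.65):
    """Convert one raw ADC reading to acceleration in g.

    vref and zero_g_volts are assumed values, not taken from the study.
    """
    if not 0 <= counts <= ADC_MAX:
        raise ValueError("ADC count out of range for a 10-bit converter")
    volts = counts * vref / ADC_MAX          # counts -> volts
    return (volts - zero_g_volts) / SENSITIVITY_V_PER_G  # volts -> g
```

Under these assumed values the representable span is symmetric about zero, so a mid-scale reading corresponds to roughly 0 g.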

Data acquisition process map from engine to logging system.

Correspondence of each engine operation class names and associated description.

Experimental process flow to perform detection of misfire in an ICE using MEMS accelerometer for classification using decision tree family of classifiers.

Feature engineering

Misfire detection in internal combustion engines relies on extracting features from vibration signals including statistical metrics, ARMA modelling, and histogram characteristics. To streamline feature selection, 50 ARMA order files and 100 histogram bin files were prepared. Using the J48 decision tree algorithm, trees were configured on labelled datasets, and 10-fold cross-validation determined accuracy for each feature. Target attribute set files were chosen based on higher cross-validation accuracy, grouping top attributes for subsequent model building and training.

Statistical features

Statistical features like mean, standard deviation, skewness, and kurtosis summarize central tendency, dispersion, and shape in a dataset. In engine vibration data, they offer insights into average, variability, distribution asymmetry, and tail characteristics. Mean and standard deviation indicate average and variability, while skewness and kurtosis reveal distribution characteristics. Anomalies, like sudden changes in standard deviation or mean, function as indicators for potential issues such as misfires. Detecting misfires relies on identifying abnormal changes in these statistical features. The subsequent subsections provide an overview of attributes forming the statistical foundation for spotting deviations during misfires.

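Mean: The mean represents the average of a set of values and is calculated by summing all values and dividing by the total number of observations, expressed as $\bar{x} = \frac{1}{n}\sum_{i=1}^{n} x_i$, where $x_i$ are the observed values and $n$ is the number of observations.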

Standard error (se): Standard error measures the variability of sample means, providing an estimate of how much the sample mean might deviate from the true population mean, expressed as $se = s/\sqrt{n}$, where $s$ is the standard deviation and $n$ is the number of observations.

Median: The median is the middle value of a dataset when arranged in ascending or descending order, effectively dividing the data into two equal halves.

Mode: The mode is the value that appears most frequently in a dataset, indicating the most common observation.

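Standard deviation (sd): Standard deviation measures the extent of individual data points’ deviation from the mean, quantifying the overall variability of the dataset, expressed in its sample form as $s = \sqrt{\frac{1}{n-1}\sum_{i=1}^{n}(x_i - \bar{x})^2}$, where $\bar{x}$ is the mean of the $n$ observations $x_i$.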

Standard variance (sv): Standard variance is the square of the standard deviation, representing the average squared deviation from the mean, expressed as $sv = s^2$, where $s$ is the standard deviation.

Kurtosis: Kurtosis measures the sharpness or flatness of the peak of a distribution with reference to the data mean, expressed as Kurtosis =

Skewness: Skewness quantifies the asymmetry of a distribution, indicating whether the data is skewed to the left or right, represented by Skewness =

Range: The range is the difference between the maximum and minimum values in a dataset, providing a simple measure of variability.

Minimum: The minimum is the smallest value in a dataset, representing the lower boundary of the observed values.

Maximum: The maximum is the largest value in a dataset, representing the upper boundary of the observed values.

Sum: The sum is the total of all values in a dataset, providing an aggregate measure of the dataset’s magnitude.
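The twelve attributes listed above can be computed per signal with a short routine. The following is a minimal sketch using only the Python standard library; the function name `statistical_features` is hypothetical, the standard deviation uses the sample (n − 1) form, and kurtosis and skewness use plain moment ratios, which is an assumption since the exact conventions used in the study are not stated here.

```python
import math
import statistics


def statistical_features(signal):
    """Return the 12 statistical attributes for one vibration signal.

    Assumes len(signal) >= 2 and a non-constant signal (otherwise the
    moment ratios below would divide by zero).
    """
    n = len(signal)
    mean = statistics.fmean(signal)
    sd = statistics.stdev(signal)  # sample standard deviation (n - 1)
    # Central moments with 1/n normalisation (assumed convention).
    m2 = sum((x - mean) ** 2 for x in signal) / n
    m3 = sum((x - mean) ** 3 for x in signal) / n
    m4 = sum((x - mean) ** 4 for x in signal) / n
    lowest, highest = min(signal), max(signal)
    return {
        "mean": mean,
        "se": sd / math.sqrt(n),
        "median": statistics.median(signal),
        "mode": statistics.mode(signal),
        "sd": sd,
        "sv": sd ** 2,
        "kurtosis": m4 / m2 ** 2,   # non-excess kurtosis
        "skewness": m3 / m2 ** 1.5,
        "range": highest - lowest,
        "min": lowest,
        "max": highest,
        "sum": math.fsum(signal),
    }
```

Applying the function to each of the recorded signals would give one row of twelve values per signal, which can then be labelled with its engine class.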

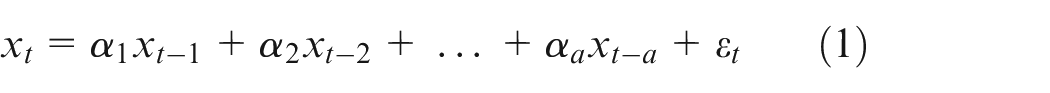

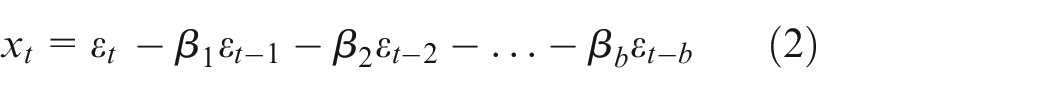

ARMA features (auto-regressive moving average)

ARMA features involve modelling time-series data with auto-regressive (AR) and moving average (MA) components. In engine vibration analysis, ARMA modelling captures temporal dependencies, revealing trends in the signal. For misfire detection, ARMA features identify patterns in time-series vibrations indicative of irregular engine behaviour. The model tracks changes in vibration patterns over time, offering a dynamic view of engine performance. Detecting deviations from expected ARMA patterns signals potential misfires or irregularities in engine operation. Represented as f (a, b), ARMA models use orders a and b for auto-regressive (AR) and moving average (MA) parts. Coefficients (α1, α2, …, αa) and (β1, β2, …, βb) were computed using methods like Yule–Walker equations or maximum likelihood estimation (equations (1) and (2) below):
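As one illustration of the Yule–Walker route mentioned above, the sketch below estimates only the auto-regressive coefficients of a signal by solving the Yule–Walker equations with the Levinson–Durbin recursion. It does not estimate the moving average part, which typically needs an iterative method such as maximum likelihood. The function names `autocovariance` and `levinson_durbin` are hypothetical and the code is not taken from the study.

```python
def autocovariance(signal, max_lag):
    """Biased autocovariance estimates r[0..max_lag] of a signal."""
    n = len(signal)
    mean = sum(signal) / n
    centred = [x - mean for x in signal]
    return [
        sum(centred[t] * centred[t + lag] for t in range(n - lag)) / n
        for lag in range(max_lag + 1)
    ]


def levinson_durbin(r, order):
    """Solve the Yule-Walker equations for AR coefficients.

    r is the autocovariance sequence r[0..order]. Returns the list of
    coefficients (alpha_1 .. alpha_order) and the residual variance.
    """
    coeffs = []
    error = r[0]
    for k in range(1, order + 1):
        # Reflection coefficient for the step from order k-1 to k.
        acc = r[k] - sum(coeffs[j] * r[k - 1 - j] for j in range(k - 1))
        kappa = acc / error
        coeffs = [coeffs[j] - kappa * coeffs[k - 2 - j]
                  for j in range(k - 1)] + [kappa]
        error *= 1.0 - kappa ** 2
    return coeffs, error
```

For a signal `x`, a call such as `levinson_durbin(autocovariance(x, a), a)` would return `a` coefficients that could serve as the AR portion of a feature vector.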

Histogram features

Histogram features visually represent vibration data distribution across intervals, capturing amplitude, and frequency patterns. Engine misfires, altering histogram shapes, manifest as spikes or shifts, indicating irregular behaviour. The quantitative analysis aids in detecting patterns related to normal and misfire engine operation. By offering a frequency-based perspective, histograms identify anomalies like unexpected peaks, crucial for misfire detection. The feature’s comprehensive representation of the vibrational spectrum proves valuable in discerning irregularities. The number of bins in histogram features correlates with the total data points per signal (equation (3)):

In equation (3), n represents the number of data points and k is the total number of requisite bins each comprising features of all input signals over all target classes.
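A histogram feature vector for one signal is simply the count of samples falling in each bin. The sketch below is a minimal illustration using equal-width bins over a fixed amplitude range; the function name `histogram_features`, the choice of equal-width bins, and the shared `lo`/`hi` range are assumptions for this example rather than details taken from the study.

```python
def histogram_features(signal, n_bins, lo, hi):
    """Count the samples of a signal in n_bins equal-width bins on [lo, hi].

    Samples outside [lo, hi] are ignored; a sample equal to hi is placed
    in the last bin.
    """
    if n_bins < 1 or hi <= lo:
        raise ValueError("need n_bins >= 1 and hi > lo")
    width = (hi - lo) / n_bins
    counts = [0] * n_bins
    for x in signal:
        if x < lo or x > hi:
            continue
        idx = min(int((x - lo) / width), n_bins - 1)
        counts[idx] += 1
    return counts
```

Using the same `lo` and `hi` for every signal keeps bin `k` comparable across classes, so each count can act as one attribute for the classifier.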

Decision tree family of machine learning classifiers

Decision trees, commonly used in machine learning, employ a tree-like structure with nodes representing decisions based on specific features. This IF-THEN rule-based approach ensures interpretability, making them accessible without extensive domain knowledge. Operating on unique features, decision trees accommodate complex decision-making and aid visualization. As non-parametric models, they adapt well to diverse datasets, capturing non-linear relationships effectively. During training, optimal decision rules are learned from labelled data, and prediction involves traversing the tree to a leaf node for the final result. Their simplicity, accuracy, and adaptability to non-linear relationships make decision trees suitable for various applications, including misfire detection in resource-constrained systems.
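The IF-THEN traversal described above can be sketched in a few lines. The tree below is a toy example: its structure, thresholds, and the helper name `classify` are hypothetical and are not the tree learned in this study; only the feature names and class labels echo those used in the text.

```python
def classify(node, features):
    """Walk a decision tree from the root to a leaf and return its label.

    Internal nodes hold a feature name and a threshold; the left branch
    is taken when the feature value is <= threshold, else the right.
    """
    while "label" not in node:
        value = features[node["feature"]]
        node = node["left"] if value <= node["threshold"] else node["right"]
    return node["label"]


# Toy tree with made-up thresholds, for illustration only.
toy_tree = {
    "feature": "kurtosis",
    "threshold": 3.0,
    "left": {"label": "good"},
    "right": {
        "feature": "mean",
        "threshold": 0.5,
        "left": {"label": "mis1"},
        "right": {"label": "mis2"},
    },
}
```

Because each prediction is a short chain of comparisons, the path taken for any sample can be read back directly as a rule, which is what makes such models easy to inspect.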

J48 classifier (J48): It is an implementation of the C4.5 algorithm, a versatile decision tree classifier known for its robustness and effectiveness in handling both categorical and numerical data.

J48 consolidated (J48C): J48C is an extended version of J48 that incorporates consolidation pruning to enhance decision tree accuracy and reliability.

Random forest (RF): RF is an ensemble learning method that constructs multiple decision trees and combines their outputs to improve predictive accuracy and reduce overfitting.

Random tree (RT): RT is a simpler variant of random forest, constructing a single decision tree using a random subset of features at each split for faster training and deployment.

Reduced error pruning tree (REPTree): REPTree is a fast decision tree learner that grows a tree using information gain (or variance reduction for regression) and then simplifies it with reduced-error pruning.

Logistic model tree (LMT): LMT is a hybrid decision tree algorithm combining decision trees with logistic regression models at leaf nodes to capture both linear and non-linear relationships in the data.

Decision stump (DS): Decision Stump is a simple decision tree with one level, often used as a weak learner in ensemble methods like boosting.

Hoeffding tree (HT): Hoeffding tree is designed for streaming data scenarios, using the Hoeffding bound to make statistically sound decisions with limited data.

Best first tree (BFT): BFTree classifier employs a best-first strategy during tree construction, dynamically selecting the best node to split based on heuristic evaluation for efficient decision tree building with limited computational resources.

Extra tree (ET): Extra tree or extremely randomized trees, is an ensemble learning method similar to random forest but with additional randomness in selecting thresholds for feature splits, providing improved robustness to noisy data.

Simple CART (SC): Simple CART, an implementation of the classification and regression trees (CART) approach, is a straightforward decision tree algorithm using Gini impurity as the splitting criterion, efficient for large datasets.

Functional tree (FT): Functional trees extend traditional decision trees by incorporating functional attributes, allowing the model to capture complex relationships in continuous and functional feature spaces.

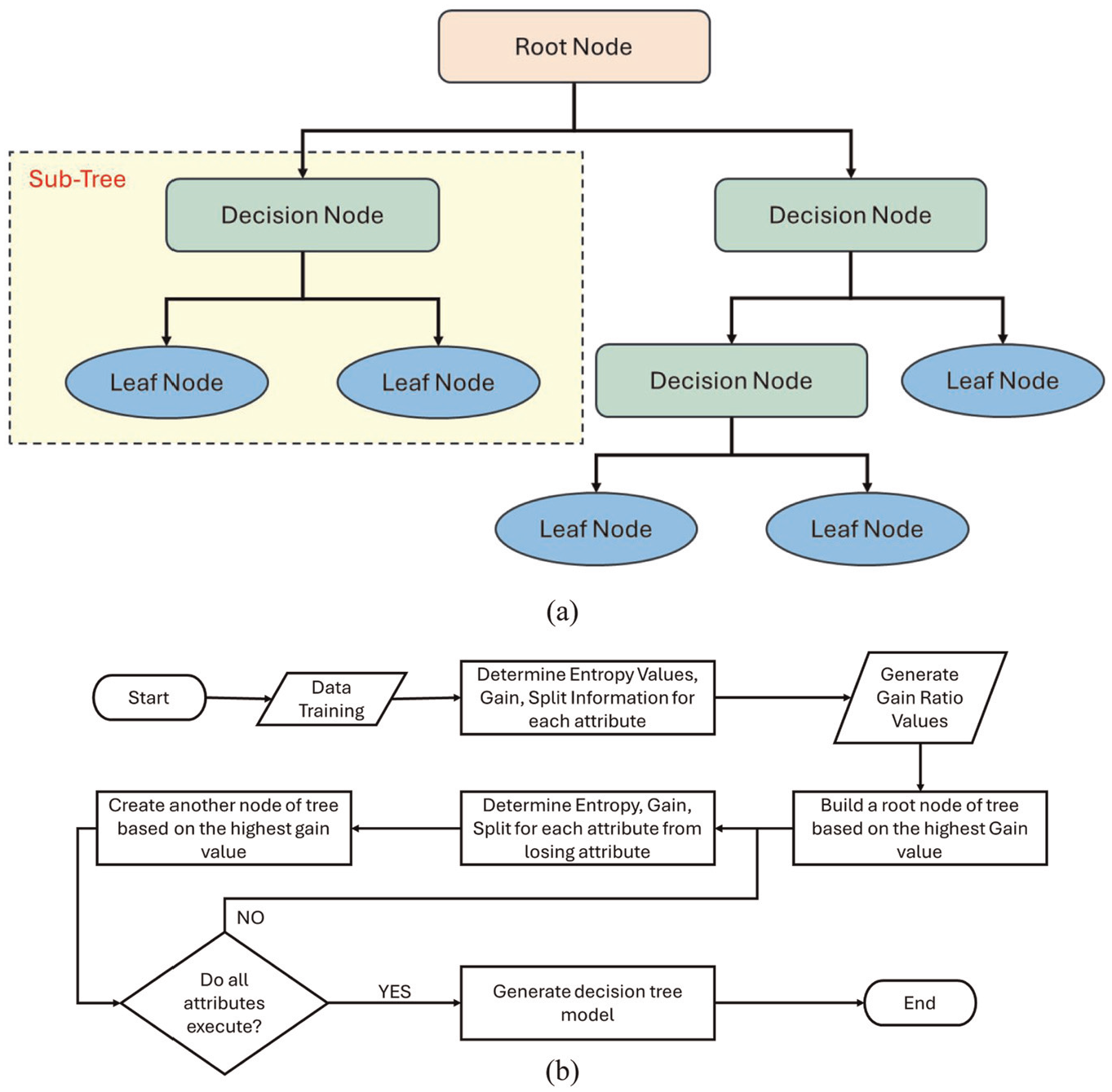

Among the decision tree algorithms considered, J48 was found to be the most accurate. The general workflow of decision trees and a flowchart of the J48 algorithm are presented in Figure 4.

An overview of (a) workflow of decision tree and (b) flowchart representation of the working of J48 algorithm.
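C4.5, which J48 implements, scores candidate splits with entropy-based measures. The sketch below is a simplified illustration of choosing a threshold on one numeric attribute by gain ratio; the function names are hypothetical, and C4.5 itself applies further refinements (for example pruning and handling of missing values) that are not shown.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())


def gain_ratio(values, labels, threshold):
    """Gain ratio of the binary split value <= threshold vs. value > threshold."""
    left = [y for x, y in zip(values, labels) if x <= threshold]
    right = [y for x, y in zip(values, labels) if x > threshold]
    if not left or not right:
        return 0.0  # a split that separates nothing carries no information
    n = len(labels)
    w_left, w_right = len(left) / n, len(right) / n
    gain = entropy(labels) - w_left * entropy(left) - w_right * entropy(right)
    split_info = -(w_left * math.log2(w_left) + w_right * math.log2(w_right))
    return gain / split_info


def best_threshold(values, labels):
    """Return the midpoint threshold with the highest gain ratio, or None."""
    distinct = sorted(set(values))
    midpoints = [(a + b) / 2 for a, b in zip(distinct, distinct[1:])]
    if not midpoints:
        return None  # attribute is constant, so it cannot be split
    return max(midpoints, key=lambda t: gain_ratio(values, labels, t))
```

Repeating this search over every attribute and recursing on the resulting subsets is the basic idea behind growing such a tree.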

Results and discussion

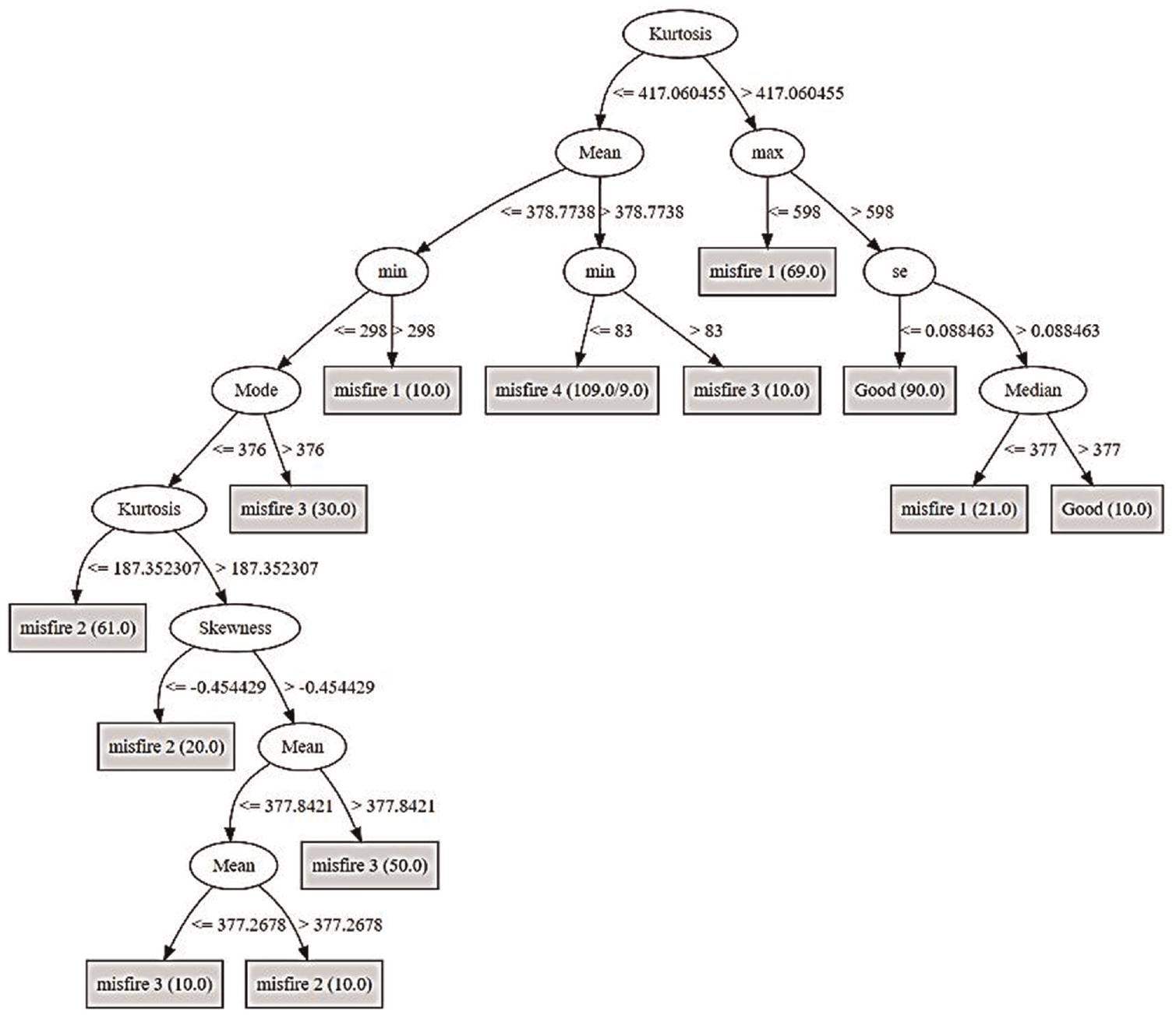

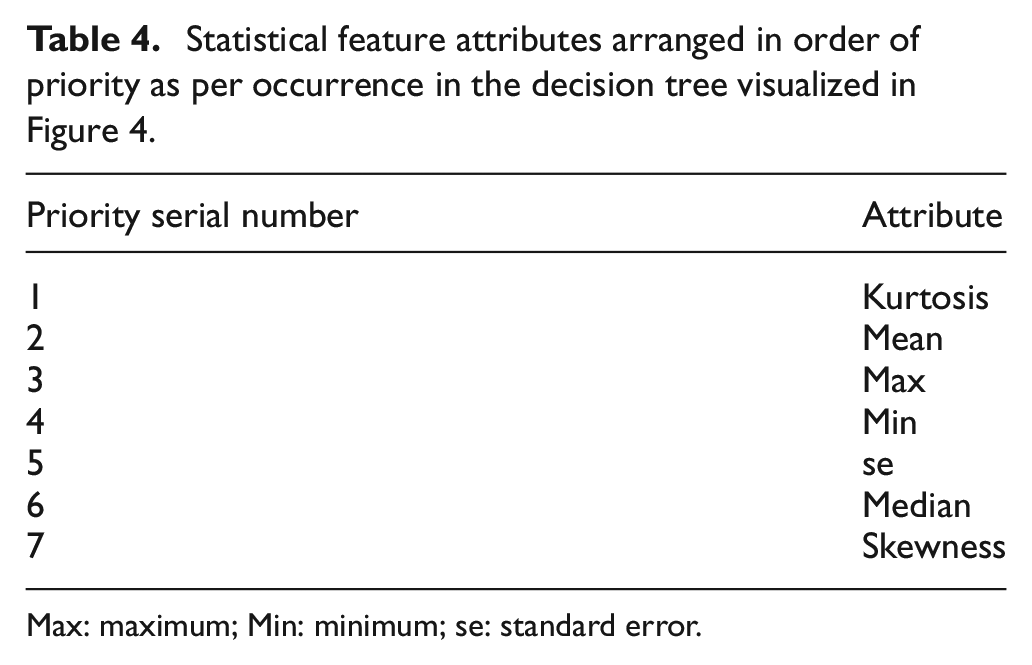

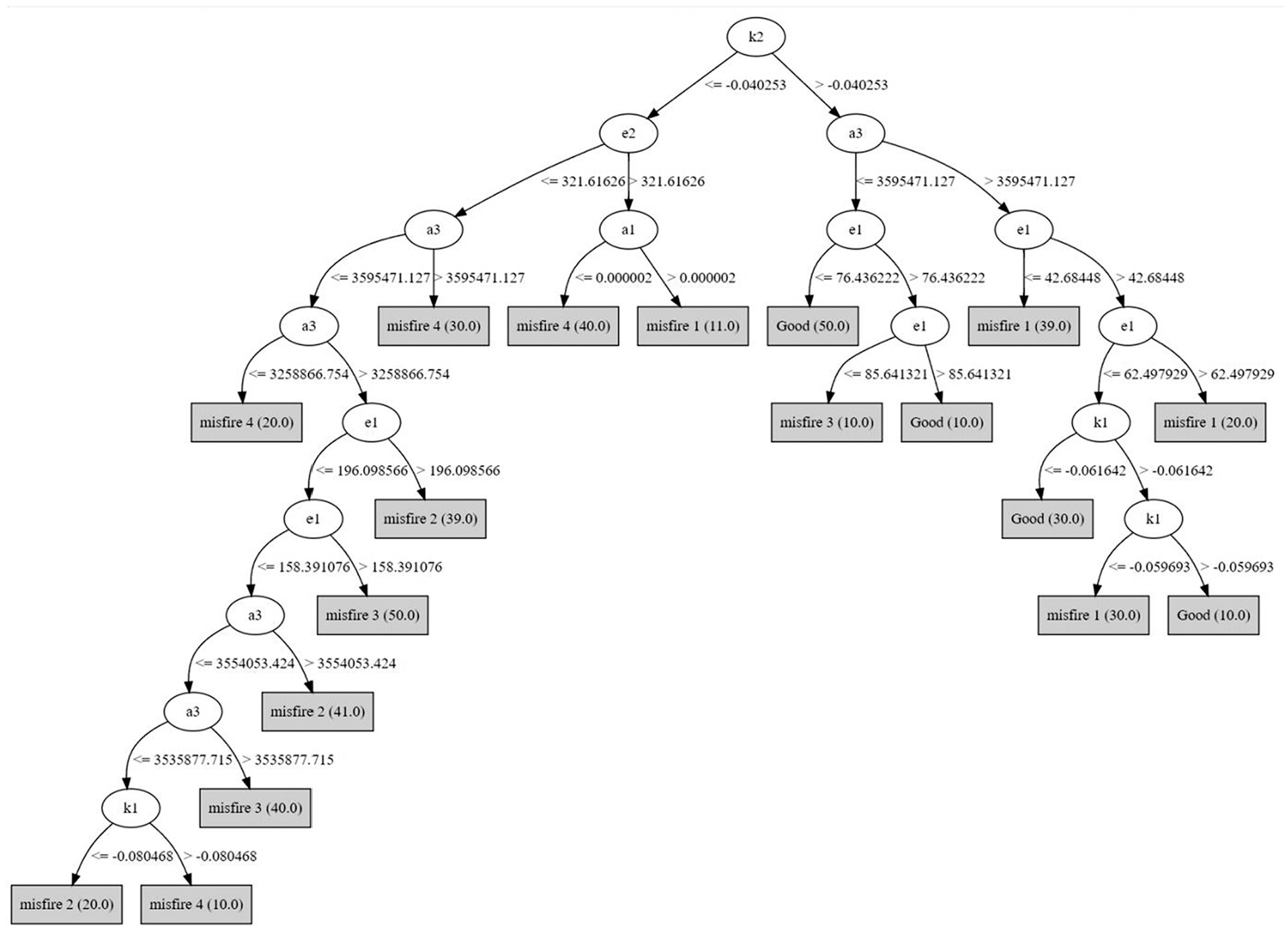

After feature engineering, the statistical, ARMA, and histogram feature-attribute sets are split into 0.8:0.2 ratios for training and testing. The 12 decision tree algorithms are evaluated for training, 10-fold validation, and testing accuracies, and the models are deployed on complete labelled feature data for accuracy assessments. Tuning key classification parameters sets a baseline for cross-comparing performance across feature types. Statistical, ARMA, and histogram features undergo extraction and attribute selection using a J48 classifier with specific configurations. The final set of feature attributes is determined based on J48 classifier performance. Subsequent sections present feature selection results and discuss decision tree classifier performance for each feature type. The J48 classifier is deployed on the consolidated statistical features, resulting in the decision tree shown in Figure 5. From Figure 5, it is apparent that out of a total of 12 attributes, only six attributes seemed to be included in the decision tree structure, implying that the thresholding of these parameters specifically impacts the performance of the classifier model used for feature selection, and for all other models evaluated over subsequent sections in this work. Table 4 presents the attributes in sequence, as depicted in Figure 5.
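The 0.8:0.2 split and the 10-fold validation partition can be sketched as index bookkeeping. The helper names below are hypothetical, and the per-class (stratified) split and the fixed random seed are assumptions for this example; the text does not state whether the study's split was stratified.

```python
import random


def stratified_split(labels, test_fraction=0.2, seed=0):
    """Split sample indices into train/test, keeping class proportions."""
    rng = random.Random(seed)
    by_class = {}
    for idx, label in enumerate(labels):
        by_class.setdefault(label, []).append(idx)
    train, test = [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_test = round(len(indices) * test_fraction)
        test.extend(indices[:n_test])
        train.extend(indices[n_test:])
    return sorted(train), sorted(test)


def k_fold_indices(n_samples, k=10, seed=0):
    """Partition range(n_samples) into k disjoint folds of near-equal size."""
    rng = random.Random(seed)
    order = list(range(n_samples))
    rng.shuffle(order)
    return [sorted(order[i::k]) for i in range(k)]
```

With five classes of 100 signals each, this yields 400 training and 100 testing indices, and each validation round holds out one fold while the other nine are used for training.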

Decision tree visualization for J48 classifier deployed on statistical feature data.

Statistical feature attributes arranged in order of priority as per occurrence in the decision tree visualized in Figure 5.

Max: maximum; Min: minimum; se: standard error.

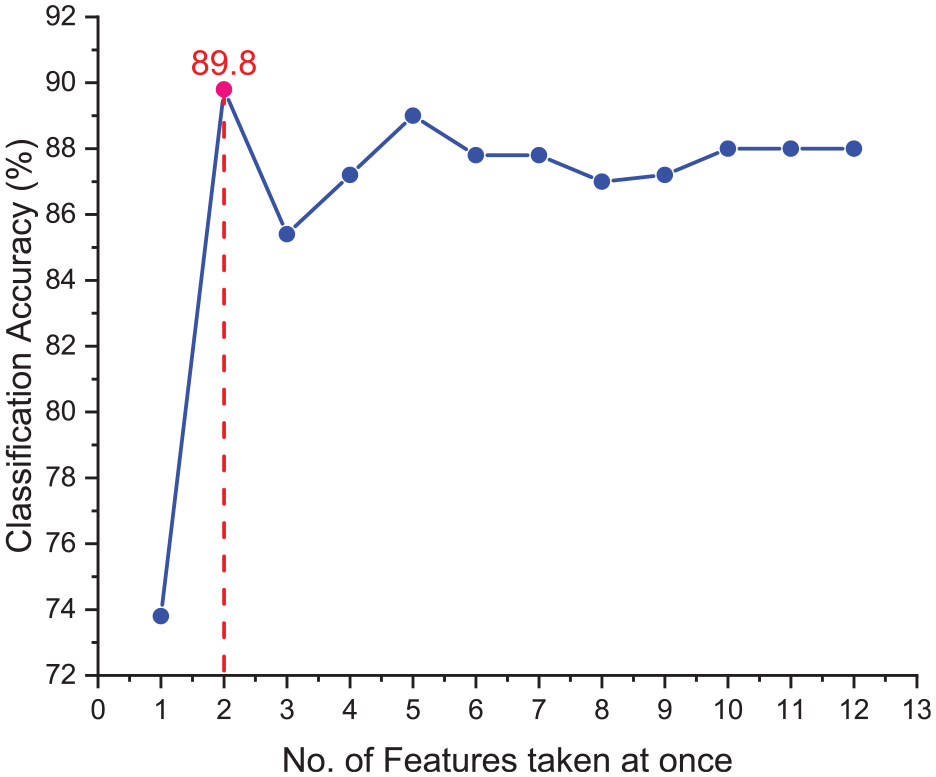

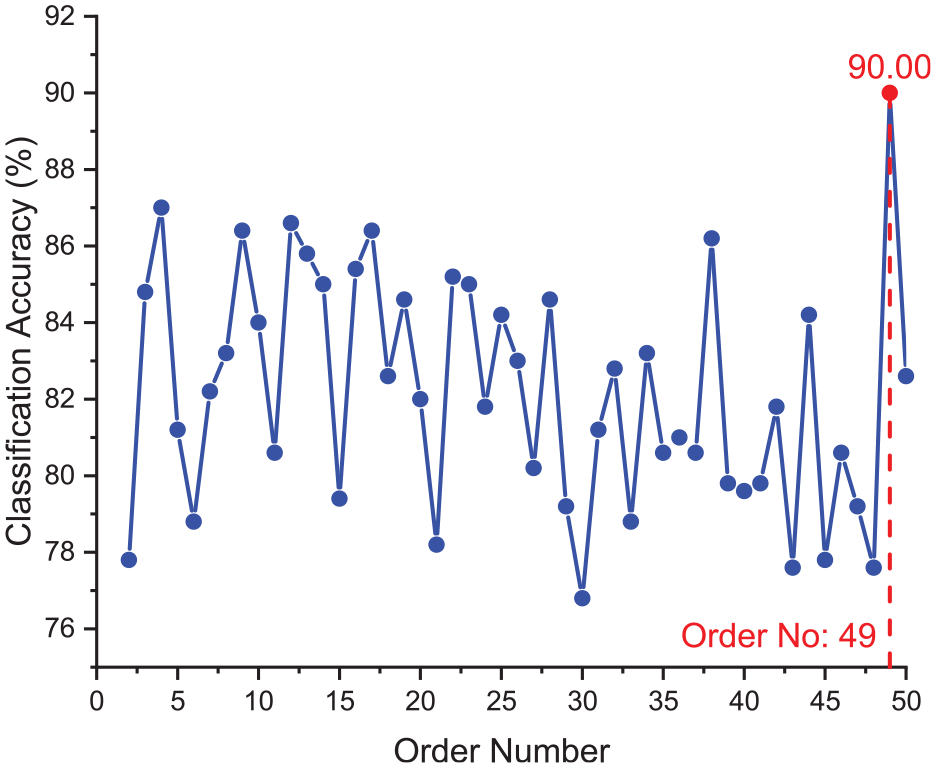

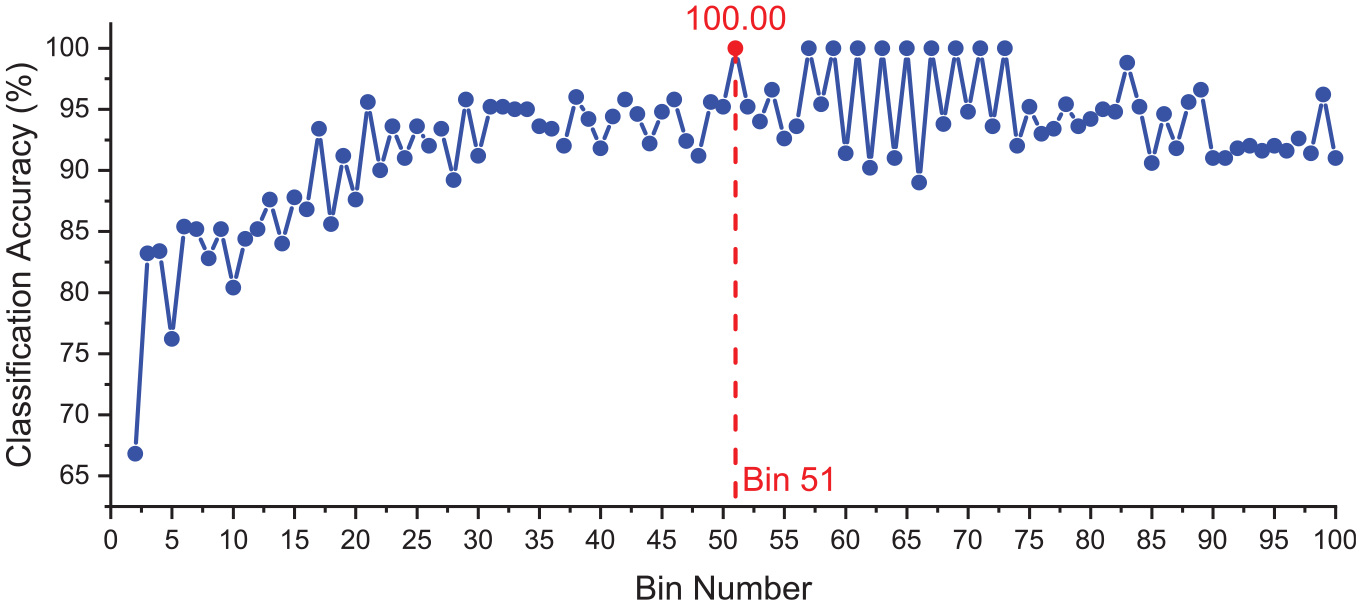

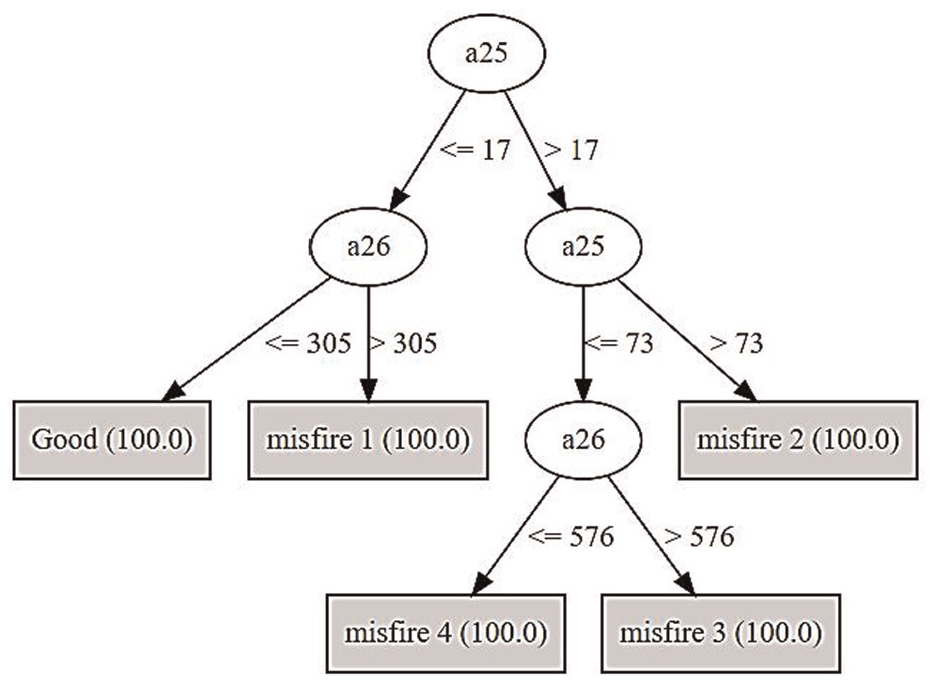

In Table 4, referencing the decision tree in Figure 5 for statistical features, the J48 algorithm identifies kurtosis as the key feature, followed by attributes like mean, max, min, standard error, median, and skewness. Each level of the tree establishes decision criteria; for example, if kurtosis is below or equal to 417.060455, the next decision relies on the mean; otherwise, it turns to the maximum. The sequential consideration of the top i attributes from Table 4 indicates that the combination of kurtosis and mean achieves the highest validation accuracy at 89.80%. This outperforms other attribute combinations, with validation accuracy stabilizing around 87.00%–88.00% from the sixth combination onwards (considering Kurtosis + mean + max + min + se + mode attributes), as also highlighted in Figure 6. The same process is applied to the ARMA and histogram features, with both cases employing the same J48 classifier to attain the end result. The order and bin number selections for the ARMA and histogram features are presented in Figures 7 and 8, while the corresponding decision trees derived from J48 are presented in Figures 9 and 10, respectively. The final set of feature attributes utilized for subsequent system evaluation is summarized in Table 5.
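The sequential consideration of the top i attributes amounts to scoring each prefix of the ranked attribute list and keeping the best one. The sketch below illustrates that loop; `best_top_i` and the `evaluate` callback are hypothetical names, and `evaluate` stands in for whatever routine returns a validation accuracy for a given attribute subset.

```python
def best_top_i(ranked_attributes, evaluate):
    """Score the top-1, top-2, ... attribute prefixes and keep the best.

    evaluate(subset) must return a number such as validation accuracy.
    Ties are resolved in favour of the smaller subset.
    """
    best_subset, best_score = None, float("-inf")
    for i in range(1, len(ranked_attributes) + 1):
        subset = ranked_attributes[:i]
        score = evaluate(subset)
        if score > best_score:
            best_subset, best_score = subset, score
    return best_subset, best_score


# Placeholder scores for illustration only; these are not study results.
placeholder_scores = {1: 0.5, 2: 0.9, 3: 0.8}
subset, score = best_top_i(
    ["kurtosis", "mean", "max"],
    lambda attrs: placeholder_scores[len(attrs)],
)
```

Preferring the smaller subset on ties keeps the resulting model as simple as possible, which suits embedded deployment.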

J48 model achieves the highest validation accuracy of 89.80% using the top two statistical feature attributes from Table 4.

ARMA feature order number selection using J48 decision tree algorithm.

Histogram feature bin selection using J48 decision tree algorithm.

Decision tree visualization for J48 classifier deployed on ARMA feature data.

Decision tree visualization for J48 classifier deployed on histogram feature data.

Results of feature (–attribute) selection process.

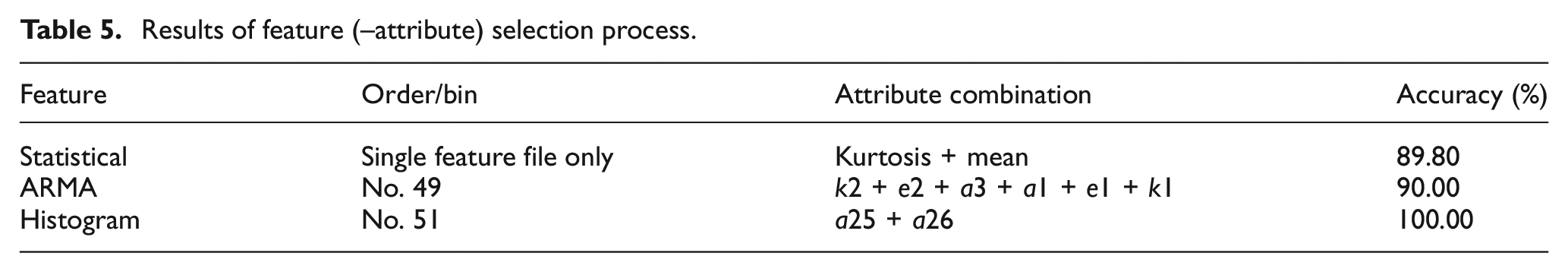

With the selection of those attributes characteristic of each feature resulting in higher J48 classifier validation accuracy as represented in Table 5, 12 different decision tree classifier algorithms are built on the final feature set corresponding to statistical, ARMA and histogram features. For each feature-classifier examination as represented in Tables 6 to 8, the corresponding classifier training, 10-fold validation, and testing accuracies along with associated model building times are recorded as well. These metrics set a comprehensive base for classifier-to-classifier performance comparison over the same final feature set. Table 6 consolidates the performance metrics of the 12 decision tree algorithms deployed over the statistical feature data set which after feature-attribute selection, comprises the kurtosis, and mean of the original vibration signals.

Overview of classification performance of 12 decision tree algorithms deployed on selected statistical feature-attribute data.

Boldfaced values represent the best performing model.

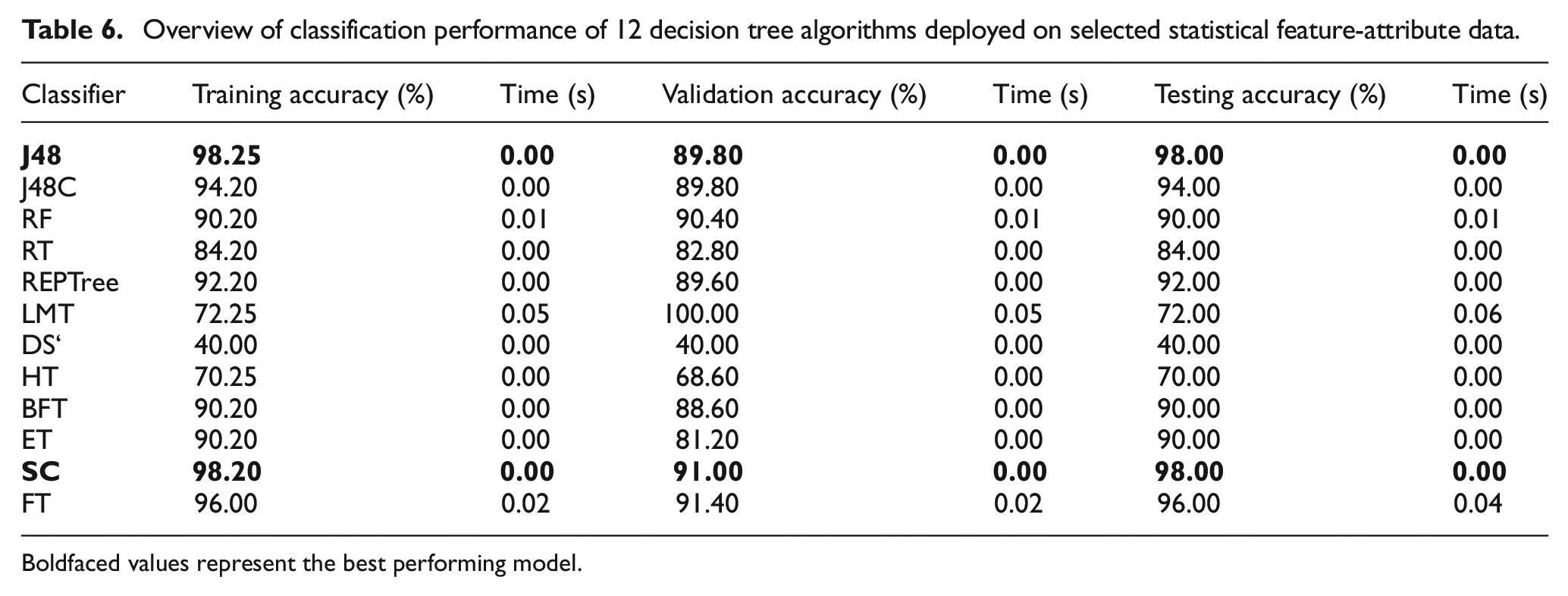

Overview of classification performance of 12 decision tree algorithms deployed on selected ARMA feature-attribute data.

Boldfaced values represent the best performing model.

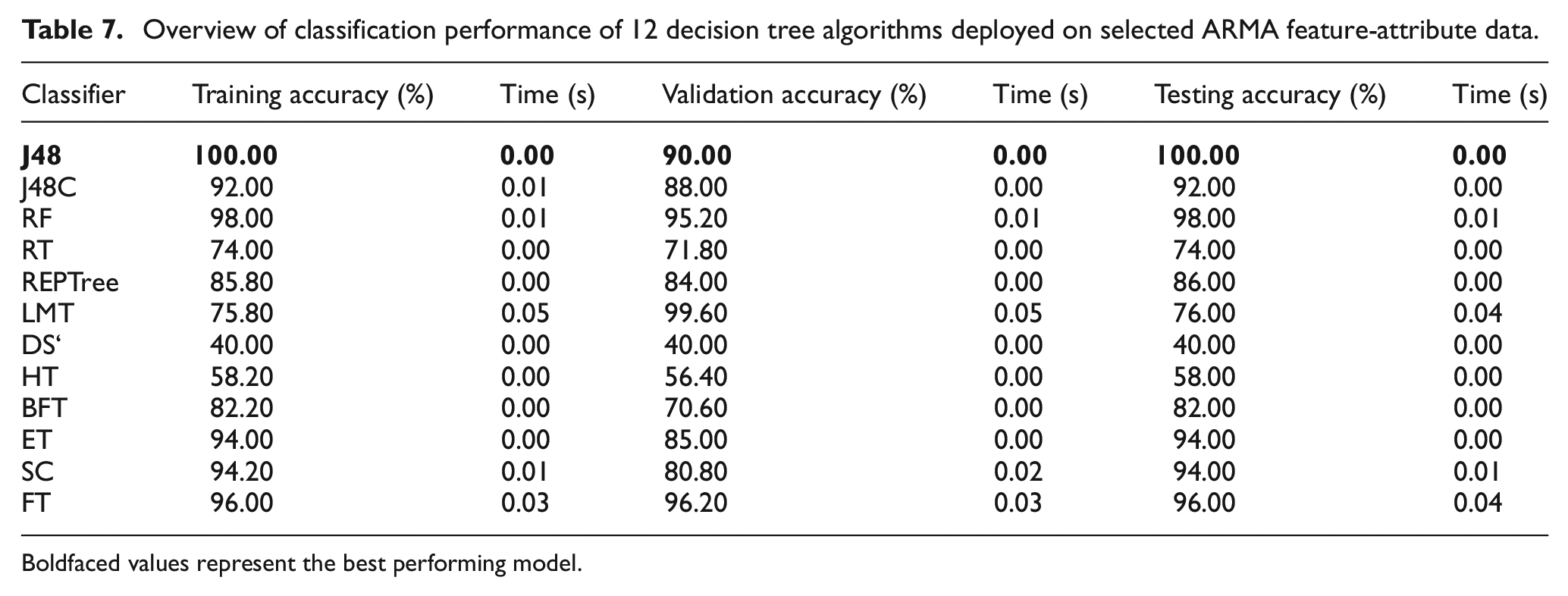

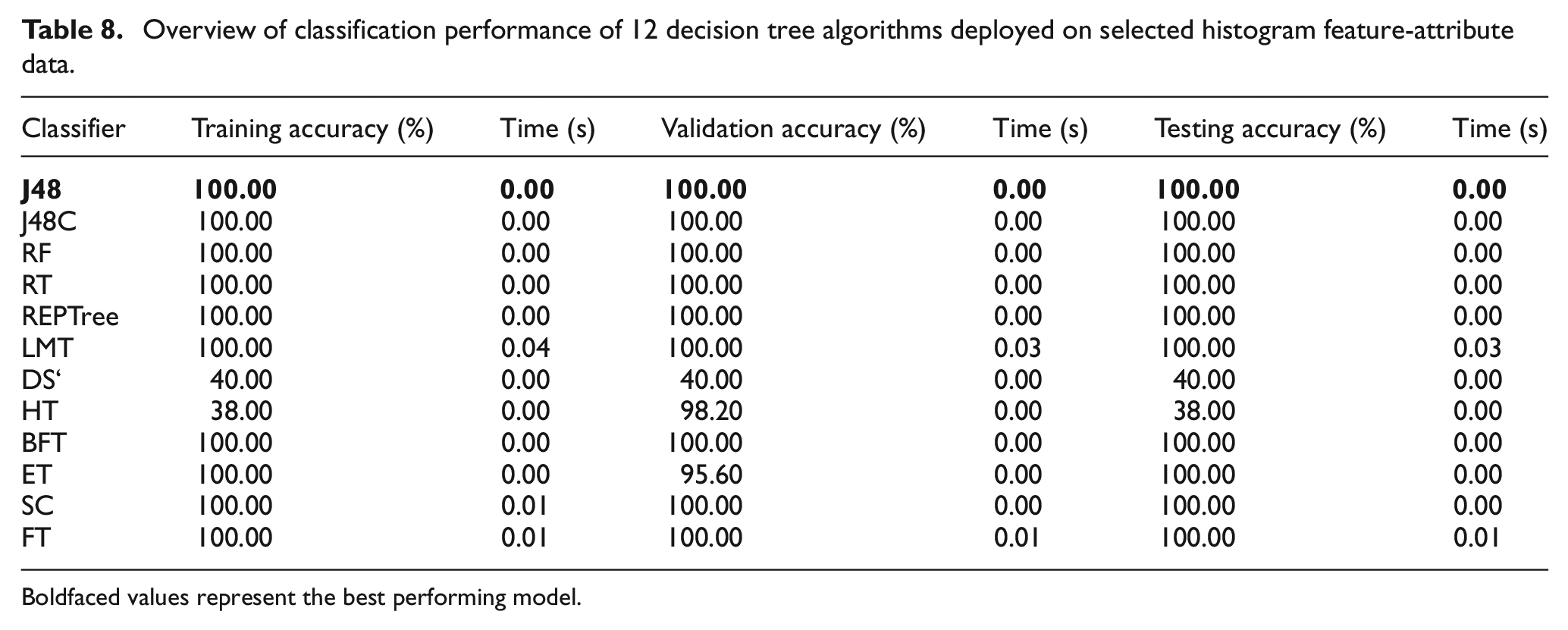

Overview of classification performance of 12 decision tree algorithms deployed on selected histogram feature-attribute data.

Boldfaced values represent the best performing model.

Table 6 highlights decision tree classifiers with strong performances above 90.00% in training, validation, and testing. Notably, Random Forest, Simple CART, and Functional Tree excel. J48 achieves impressive training and testing accuracies exceeding 98.00%, slightly trailing in validation at 89.80%. Simple CART matches J48’s testing accuracy but surpasses it in validation at 91.00%. The choice between models should prioritize testing and validation accuracies, despite J48’s slightly higher training accuracy of 98.25%. Simple CART stands out for statistical features, utilizing kurtosis, and mean attributes from vibration signals. In Table 7, considering selected ARMA attributes from Table 5, only J48 attains perfect testing and training accuracy, with 90.00% validation accuracy. The functional tree consistently performs well, but its model building time surpasses J48’s in training, validation, and testing processes.

Since in real-time it is imperative for machine learning models to minimize the time for result generation, the model of choice corresponding to results reported in Table 7 is the J48 classifier. Besides, the rationale behind the output of a J48 algorithm is sufficiently intuitive to analyze and backtrack using the respective decision tree to identify the source of misclassification if any. In analyzing the 12 classifier models built using the selected histogram attributes, intriguing results emerge. Nine out of twelve classifiers consistently demonstrate perfect classification accuracies across training, 10-fold cross-validation, and testing. This underscores the pivotal role of feature type and attributes, emphasizing that misfire detection performance is not solely dictated by the classifier’s inherent structure.

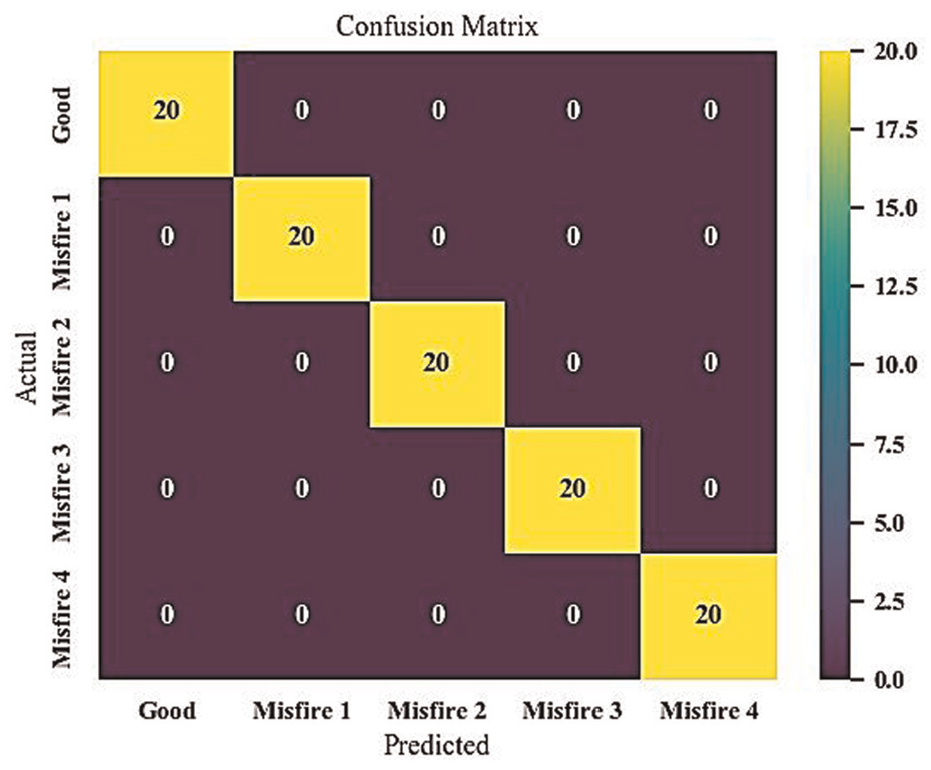

From Table 8, the decision stump classifier exhibits subpar performance, achieving a testing accuracy of only 40.00%. The Hoeffding tree performs even worse, with a testing accuracy of 38.00% despite a high validation accuracy of 98.20%. The extra tree classifier is excluded due to its relatively lower validation accuracy of 95.60%. Considering model-building time in a second-pass filtering approach, the logistic model tree (LMT), simple CART, and functional tree classifiers are omitted due to comparatively higher build time requirements. The remaining algorithms (J48, J48 consolidated (J48C), random forest, random tree, REPTree, and best first tree) all exhibit perfect training, validation, and testing accuracies. For the final comparison, the J48 classifier stands out as the top-performing model. Its simplicity in implementation and result interpretation makes it particularly appealing from an application perspective. Hence, for the selected histogram attributes, the J48 classifier is deemed the most effective machine learning algorithm for misfire detection and classification. The confusion matrix in Figure 11 illustrates the total occurrences of correct and incorrect classification instances when the J48 model is applied to the testing data corresponding to the chosen histogram attributes.

Confusion matrix for J48 performance on histogram feature attributes a25 and a26.

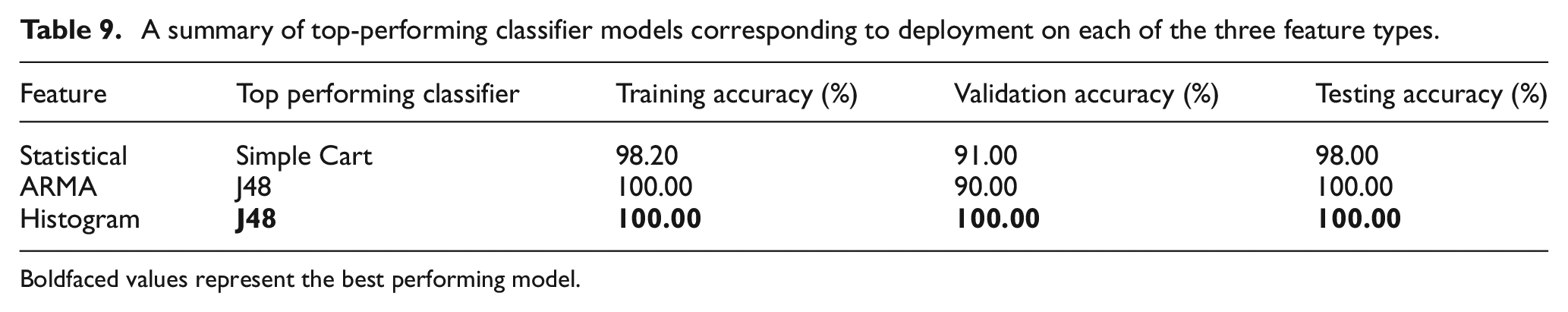
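For readers who wish to reproduce a matrix of the kind shown in Figure 11, the counts can be tallied as in the minimal sketch below. The class labels (`normal`, `misfire_c1`, `misfire_c2`) and the toy predictions are hypothetical and do not correspond to the study's data; the helper names `confusion_matrix` and `accuracy` are likewise introduced here for illustration.

```python
from collections import Counter


def confusion_matrix(actual, predicted, labels):
    """Return a matrix whose rows are actual classes and columns are predicted classes."""
    if len(actual) != len(predicted):
        raise ValueError("actual and predicted must have the same length")
    counts = Counter(zip(actual, predicted))
    return [[counts[(a, p)] for p in labels] for a in labels]


def accuracy(matrix):
    """Classification accuracy in percent: diagonal (correct) count over total count."""
    total = sum(sum(row) for row in matrix)
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    return 100.0 * correct / total if total else 0.0


# Hypothetical labels and toy predictions, for illustration only.
labels = ["normal", "misfire_c1", "misfire_c2"]
actual = ["normal", "normal", "misfire_c1", "misfire_c1", "misfire_c2", "misfire_c2"]
predicted = ["normal", "normal", "misfire_c1", "misfire_c2", "misfire_c2", "misfire_c2"]

matrix = confusion_matrix(actual, predicted, labels)
for label, row in zip(labels, matrix):
    print(label, row)
print(round(accuracy(matrix), 2))
```

A perfect classifier, as reported for the J48 and histogram combination, yields a matrix with nonzero entries only on the diagonal.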

The investigation into model performance concludes with a summary of the classification capabilities of the top-performing models assessed for statistical, ARMA, and histogram features individually. The results are summarized in Table 9, where it is evident that pairing the J48 classifier with the a25 and a26 attributes of the histogram features, which represent vibration signals obtained from a MEMS accelerometer, achieves flawless misfire detection. The combination of the J48 classifier with ARMA features also attains a notably high performance level. However, it falls short of the validation accuracy achieved by the J48 + histogram combination by a margin of 10.00%. In either case, considering testing accuracy, and particularly when compared with the testing performance of the simple CART model on statistical feature data, the J48 classifier emerges as the clear winner for misfire detection.

A summary of top-performing classifier models corresponding to deployment on each of the three feature types.

Boldfaced values represent the best performing model.

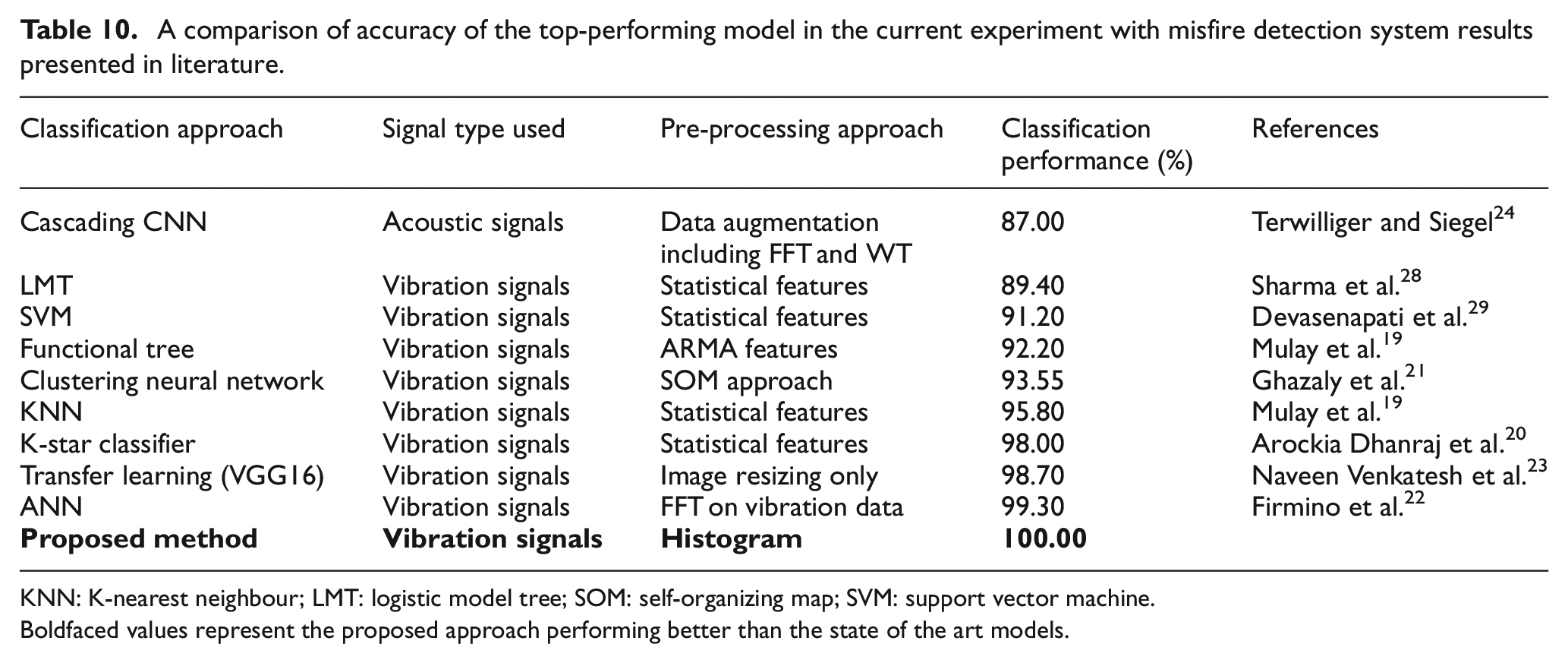

Classification was conducted in the WEKA data mining software developed by the University of Waikato, on a personal computer running Windows 11 with 16 GB of RAM. In addition to classification accuracy, the computational efficiency of the decision tree classifiers was evaluated by measuring the model build time for each feature type. The observed average model build times during training for statistical, histogram, and ARMA features were 0.006, 0.005, and 0.009 s, respectively. During testing, models for most feature types were built much more quickly, with computation times near zero. For the proposed combination of histogram features and the J48 algorithm, the training, validation, and test model build times were 0.01, 0.00, and 0.00 s, respectively. This supports the feasibility of deploying the proposed approach in real-time misfire detection systems using low-cost embedded hardware. A comparison of the proposed model-feature combination with results reported in the literature is summarized in Table 10, which also includes results for neural-network models that cannot be economically deployed in resource-constrained systems.
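The build times above are those reported by WEKA. For readers working outside WEKA, a comparable measurement could be sketched as below. The helper `timed_build` and the stand-in `build_stub_model` are assumptions made for illustration; they do not train a J48 tree, and the timings they produce are unrelated to the values reported in this study.

```python
import time


def timed_build(build_fn, repeats=5):
    """Call build_fn several times and return (last_model, mean_build_time_seconds).

    Averaging over repeats reduces the effect of timer jitter, which matters
    when a single build takes only a few milliseconds.
    """
    if repeats < 1:
        raise ValueError("repeats must be at least 1")
    durations = []
    model = None
    for _ in range(repeats):
        start = time.perf_counter()
        model = build_fn()
        durations.append(time.perf_counter() - start)
    return model, sum(durations) / len(durations)


def build_stub_model():
    """Stand-in for a real training call; returns a trivial threshold rule."""
    samples = [0.1 * i for i in range(1000)]
    return {"threshold": sum(samples) / len(samples)}


model, mean_seconds = timed_build(build_stub_model, repeats=5)
print(f"mean build time: {mean_seconds:.6f} s")
```

A monotonic high-resolution timer such as `time.perf_counter` is preferable here, since build times on the order of 0.01 s are easily lost when values are rounded to two decimal places.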

A comparison of accuracy of the top-performing model in the current experiment with misfire detection system results presented in literature.

KNN: K-nearest neighbour; LMT: logistic model tree; SOM: self-organizing map; SVM: support vector machine. Boldfaced values represent the proposed approach performing better than the state-of-the-art models.

It is apparent from Table 10 that very few studies propose a decision tree + histogram feature combination for misfire detection based specifically on vibration signals. Interestingly, a cascading CNN model developed by Terwilliger and Siegel achieves an accuracy of only 87.00%, 24 while the transfer learning approach and the ANN methodology adopted by Naveen Venkatesh et al. and Firmino et al., 22,23 respectively, each achieve commendably high accuracies. The K-Star classifier and statistical feature combination investigated by Mulay et al. is the only machine learning approach that comes close in performance to the proposed model combination. 19 Unlike the current study, however, the work by Mulay et al. does not evaluate the model across different feature types such as ARMA and histogram features.

Conclusions

Misfires in internal combustion engines pose significant threats to engine longevity, operational smoothness, and emission levels. Regardless of the engine’s stroke type or fuel, the possibility of a misfire is ever-present. This study presents a roadmap for an economical and robust misfire detection system employing a MEMS accelerometer, a low-cost data acquisition card, and processing software. The proposed system detects misfires in cylinder banks by monitoring vibration data. These data undergo feature extraction, feature-attribute selection, and final feature selection. The study identifies the J48 classifier with specific histogram attributes as the most effective model, yielding perfect training, 10-fold validation, and testing accuracies with minimal computation time. While the experimentation focussed on a gasoline engine, the proposed approach is suggested for misfire detection in combustion engines in general. Importantly, the study supports the viability of MEMS accelerometers as an alternative to piezoelectric ones without compromising detection fidelity.

Limitations and future scope for investigation

The present study has limitations, such as not exploring the impact of ignition delay or spark timing advancement on misfire occurrence. The measurements, conducted in real-world conditions, include noise from extrinsic activities, which is mitigated by robust feature engineering. A key assumption is that the extracted vibration signals account for only a limited range of external environmental noise; accounting for more of this noise in the sampled data points could further strengthen the robustness of the models. The vibration data do, however, include vibrations transmitted from other peripheral components of the ICE. Nevertheless, this study fulfils its predefined objectives, emphasizing lateral and longitudinal scalability across various engine types and fuels. Future studies could explore alternative classifiers and address misfires in multiple cylinder banks or misfires arising from various causes, working toward an optimized, generalized misfire detection system. Such work could also extend to field deployment on ICEs propelling a vehicle under actual driving conditions.

Footnotes

Author contributions

V.V.: conceptualization, data curation, formal analysis, investigation, methodology, software, validation, visualization, writing – original draft. N.V.S.: conceptualization, data curation, formal analysis, funding acquisition, investigation, methodology, resources, supervision, validation, writing – review and editing. P.A.B.: conceptualization, investigation, methodology, validation, supervision, writing – review and editing. S.V.: conceptualization, data curation, formal analysis, funding acquisition, investigation, methodology, resources, supervision, validation, writing – review and editing.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical Considerations

There are no human participants in this article and informed consent is not required.

Consent to participate

Not Applicable.

Consent for publication

Not Applicable.

Data availability statement

The datasets generated during and/or analyzed during the current study are available from the corresponding author on reasonable request.