Abstract

To address the challenges of detecting small unmanned aerial vehicles (UAVs) under low-altitude, low-light conditions, we propose an innovative and efficient UAV detection model. This model integrates the EnlightenGAN image enhancement network with our improved YOLOv8n named LL-YOLO, leveraging their combined strengths. Initially, we created the GUET-UAV-LL dataset, consisting of low-light UAV images captured in low-altitude environments. EnlightenGAN is employed to enhance the visual clarity and characteristics of UAV images in challenging lighting conditions. These enhanced images are then processed by LL-YOLO, which incorporates the SPD-Conv building block to boost the network’s ability to detect small targets. Additionally, we introduce the LSKA mechanism into the SPPF, optimizing feature map processing without significantly increasing model complexity. To further improve detection accuracy and reduce computational overhead, we replace the Bottleneck module in YOLOv8n’s C2f with FasterNet’s FasterBlock. Extensive experiments on both public and our custom datasets validate the effectiveness of our approach. Our model demonstrates a 13.8% improvement in Recall (R) and an 8.1% increase in mAP@0.5 compared to the original YOLOv8n, with no change in model size. These results highlight our model’s superior detection performance while maintaining lightweight and real-time capabilities, positioning it as a more effective solution than existing alternatives.

Introduction

In recent years, unmanned aerial vehicles (UAVs) have seen a surge in adoption across civilian, military, and scientific domains. This growth can largely be attributed to their compact size, exceptional maneuverability, and ease of control,1,2 which make them adaptable to a wide variety of settings. However, the rapid proliferation of UAVs in both civilian and commercial applications has introduced not only new opportunities but also significant security and safety concerns. Incidents such as unauthorized drone intrusions into restricted airspace, surveillance over private property, and accidents in densely populated areas have drawn increasing public and governmental attention. These emerging issues underscore the urgent need for efficient, long-range UAV detection systems. 3

Presently, UAV detection technologies can be classified into several broad categories: acoustic, radio, radar, and visual methods.4–6 Among these, visual detection methods, which utilize visible light, have gained significant attention due to their cost-effectiveness, high performance, and relatively simple implementation compared with other detection technologies. As such, visual-based UAV detection holds considerable promise, particularly as researchers strive to improve system efficiency and accuracy.7,8 However, visual detection methods still face inherent limitations, particularly in detecting small targets in challenging scenarios. In real-world environments, several factors can hinder their effectiveness. For instance, images that suffer from high levels of noise are often distorted or underexposed, leading to reduced clarity and impaired human visual perception. More critically, these issues pose substantial obstacles to object detection algorithms, which rely on clear, discernible features to function effectively. 9 In low-light conditions, the challenges intensify, as UAV features become less conspicuous and harder to extract. This can result in false positives, missed detections, or other inaccuracies that significantly undermine the reliability of UAV detection systems. These difficulties are particularly pronounced for small targets such as UAVs, which may be difficult to distinguish against complex backgrounds or under suboptimal lighting. 10

Given these challenges, it is clear that while visual-based methods show great potential, further research is needed to address their limitations in complex, noisy, and low-light conditions. Developing more robust algorithms capable of overcoming these environmental hurdles will be essential for improving the accuracy and reliability of UAV detection systems, paving the way for safer and more secure airspace management.

To address the aforementioned challenges in low-light UAV detection, we propose a cascaded model that synergistically combines EnlightenGAN with our LL-YOLO. While maintaining a non-redundant network structure, our approach significantly enhances the detection performance of small objects like UAVs in low-light environments. Our contributions can be summarized as follows:

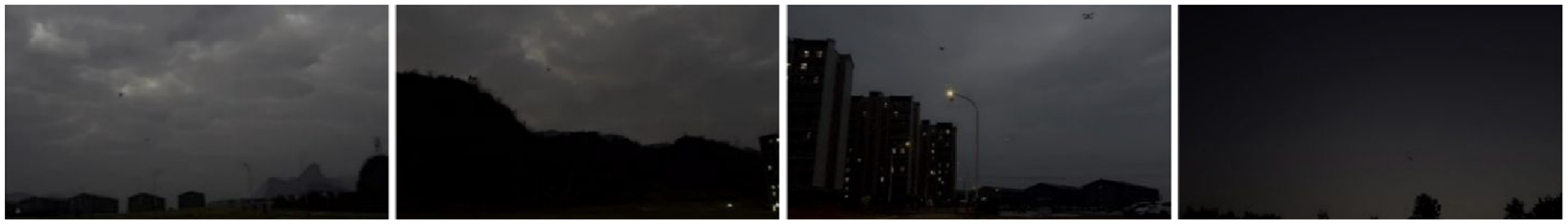

1. To address the severe scarcity of UAV detection datasets under low-illumination and complex background conditions, this study constructs a novel dataset by collecting 5000 high-resolution images (1920 × 1080) captured from real-world UAV flights. Each image contains between one and three UAVs, recorded in a variety of low-altitude, low-light environments featuring trees, buildings, mountains, and streetlights, which represent common but challenging background types for UAV detection.

In addition, to enhance the diversity and applicability of the dataset, 2746 relevant images were carefully selected from the publicly available Det-Fly dataset by removing images irrelevant to the objectives of this study. These selected samples were then integrated with the self-collected data to form the GUET-UAV-LL dataset, which provides a comprehensive and representative benchmark for low-light UAV detection tasks in realistic and complex environments.

2. To tackle challenges such as detail loss, poor feature representation, and low contrast in low-light UAV imagery, this paper proposes a two-stage detection framework tailored for low-illumination conditions. It integrates EnlightenGAN for image enhancement and a customized LL-YOLO detector. EnlightenGAN improves visibility and restores suppressed features, while LL-YOLO ensures accurate detection in complex scenes. The framework achieves a strong balance between visual quality and detection accuracy under degraded lighting environments.

3. To improve the detection of small, low-resolution targets such as flying UAVs under low-light conditions, we integrate the SPD-Conv module into the YOLO detection framework. Unlike standard convolutions, SPD-Conv is designed to mitigate information loss during feature extraction, which is critical for detecting small, low-contrast objects in visually degraded environments.

4. To alleviate the issue of target–background ambiguity under low-light conditions and mitigate interference from complex backgrounds, this study proposes a novel architectural design that integrates Large Separable Kernel Attention (LSKA) with SPPF. This hybrid mechanism captures rich contextual information, enhances spatial feature extraction, and suppresses background noise. By improving the model’s focus on target regions, the proposed approach constitutes a conceptual advancement in attention-guided feature modeling for robust object detection in visually degraded environments.

5. To reduce model parameters while preserving detection speed and accuracy, this study proposes the integration of FasterBlock from FasterNet in place of the conventional bottleneck module within the C2f structure of YOLOv8n. This design enhances efficiency without sacrificing performance, representing a novel contribution to the development of compact and high-speed object detection networks.

Related work

UAV detection

Limited computational power in early object detection research led to manually designed image features combined with machine learning algorithms, followed by joint training. The emergence of deep learning has revolutionized object detection, with one-stage detection algorithms exemplified by You Only Look Once (YOLO) 11 and Single Shot Multibox Detector (SSD), 12 and two-stage detection algorithms represented by Region CNN (RCNN), 13 Fast RCNN, 14 and Faster RCNN. 15 Dong et al. 16 proposed an approach that enhances the differentiation between UAVs and birds by incorporating a shallow feature pyramid network and an attention model into SSD. Que et al. 17 addressed the detection of “small, slow, and low” targets such as UAVs by augmenting a standard training dataset with varying levels of noise to construct a detection system based on the YOLOv3 algorithm. Zhou et al. 18 introduced a multi-layer fusion model based on YOLOv8 that enhances the detection neck to express multi-scale features and thereby restore the image details of small UAVs. However, images captured in low-light conditions often suffer from degradation, such as low visibility, low contrast, and uneven illumination, which significantly impacts target detection performance. Xiao et al. 19 proposed specialized feature pyramid and context fusion networks to enhance object detection performance in low-light scenes, building upon the RFB-Net model introduced by Liu and Huang. 20 Mainstream object detection algorithms, designed for well-lit conditions, may not yield satisfactory results in very low-light environments, which highlights the importance of improving low-light image quality. 21

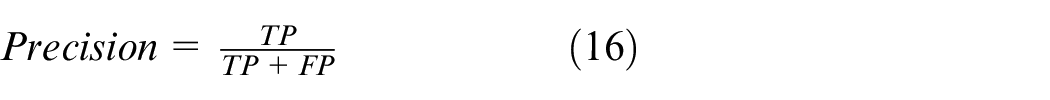

Image enhancement

Traditional enhancement methods, such as histogram equalization (HE) and its variants,22,23 can effectively expand the dynamic range of pixels. However, in complex situations, they may lead to overexposure or underexposure. Image enhancement methods based on Retinex can be divided into Single Scale Retinex (SSR) and various Multi Scale Retinex (MSR) methods.24,25 CNN-based image enhancement methods have significantly improved image quality. They can be categorized into supervised and unsupervised learning, and are effective in restoring image color with the right prior assumption, but may have the opposite effect without it. Supervised learning relies on paired data during training, which may ultimately produce unrealistic images and generalize poorly. 26 MBLLEN 27 is a supervised learning method that uses paired low-light and normal-light images for training. The model is optimized by minimizing the difference between the reconstructed image and the reference normal-light image using pixel-level loss functions such as mean squared error. Researchers have proposed unsupervised image enhancement algorithms to deal with the many datasets that are not paired. Zero-DCE 28 can perform image enhancement without paired training data. It enhances images by fitting a curve to the input image, directly producing a set of curve parameters used for non-linear per-pixel adjustments. Its primary focus is on brightness improvement, but it may become unstable in scenes with overexposure or severe shadows.

UAV detection with image enhancement

Deep-learning-based image enhancement methods combined with YOLO for object detection have emerged. Wang et al. 29 proposed DK YOLOv5, a low-light adaptive object detection model based on YOLOv5. It uses enhanced low-light images as input and achieves relatively good visual effects, enhancing target information and features to some extent. IAT-YOLO 30 is a lightweight Transformer network based on YOLOv3, focusing on improving image brightness and contrast. However, in extreme situations such as severe noise or complex lighting, its performance may be worse than that of specialized image enhancement methods. CPA-Enhancer 31 is a chain-of-thought prompted adaptive enhancement module for object detection under unknown degradation conditions, and it can be trained end-to-end with common detectors.

EnlightenGAN has achieved high-quality image generation from low-light to high-light scenarios through unsupervised learning and can be combined with YOLO to produce satisfactory results in various processing tasks.32,33

Methods

Image enhancing by EnlightenGAN

EnlightenGAN is an image enhancement network that utilizes a Generative Adversarial Network (GAN) framework. It demonstrates good performance in various scenarios without requiring a large dataset of low-light and normal-light images from the same scene for training, particularly suitable for processing images under low-light and complex lighting conditions. 32

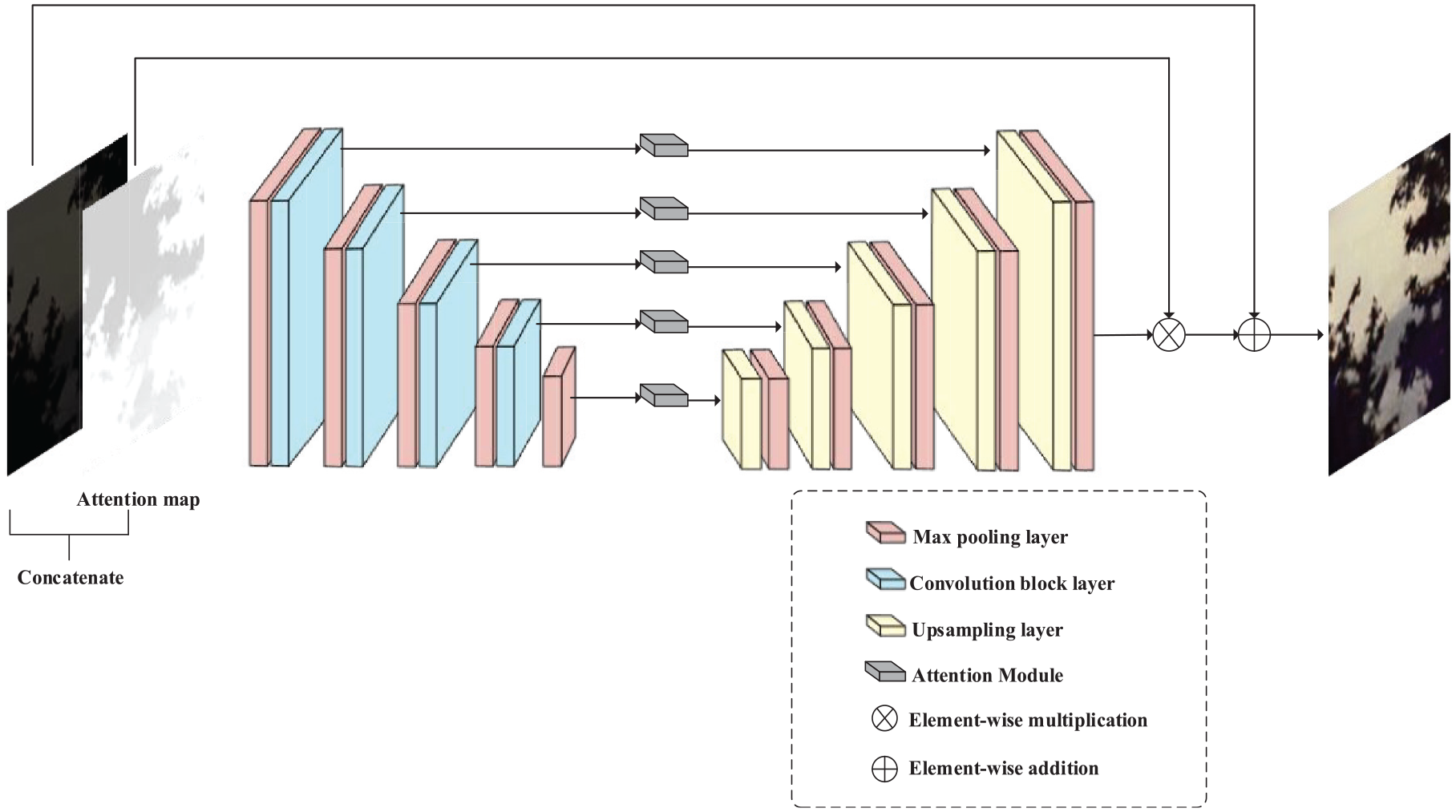

The overall architecture of EnlightenGAN is shown in Figure 1. It comprises two main components: the generator network and the discriminator network. The main structure of the generator network is U-net. To enhance dim areas more than well-lit regions and avoid overexposure or underexposure, the generator network takes weak light images and self-attention maps as input. Self-attention maps are generated based solely on the illumination intensity of the input RGB image. Subsequently, the attention maps are resized to match the size of each feature map and then multiplied with all intermediate feature maps and the output image to yield the enhanced image.
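The attention-map step described above can be sketched in a few lines. This is a minimal illustration, not the EnlightenGAN implementation: it assumes the map is one minus the normalized illumination, and the luminance weights and the function name `attention_map` are hypothetical choices made for this example.

```python
def attention_map(rgb):
    """Return a per-pixel attention map in [0, 1] for an RGB image.

    rgb: H x W nested list of (r, g, b) tuples with values in [0, 255].
    Dark pixels get values near 1, bright pixels get values near 0.
    """
    result = []
    for row in rgb:
        out_row = []
        for r, g, b in row:
            # Assumption: illumination is approximated with BT.601 luma weights.
            illumination = (0.299 * r + 0.587 * g + 0.114 * b) / 255.0
            # Inverting the illumination makes dim regions receive more weight.
            out_row.append(1.0 - illumination)
        result.append(out_row)
    return result
```

Because the map depends only on the input intensity, it needs no learned parameters; resizing it to each feature-map resolution is left out of this sketch.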

Overall architecture of EnlightenGAN.

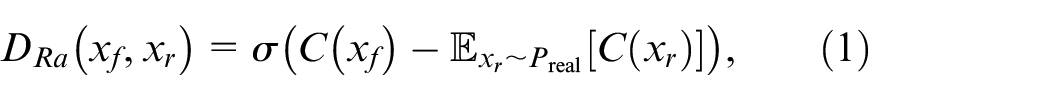

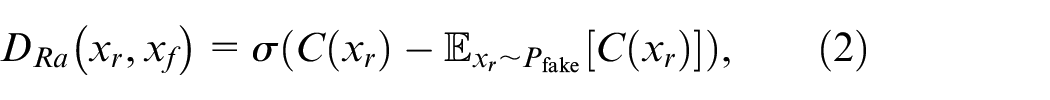

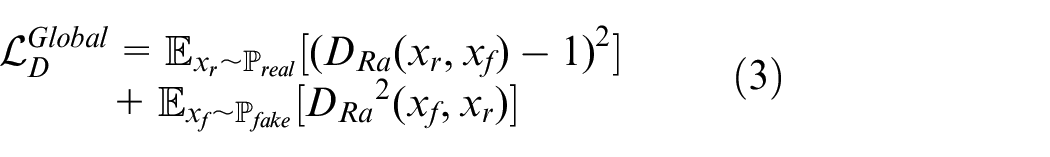

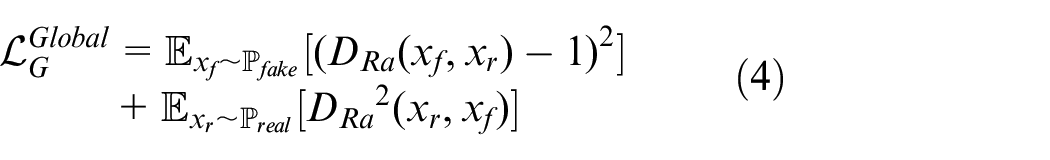

In the discriminator network, the enhanced image serves as the input. The discriminator acts as a binary classifier, consisting of a global discriminator and a local discriminator, which aims to distinguish between generated enhanced images and real images. The global discriminator is responsible for evaluating the entire image and assessing the overall lighting differences between the generated and real images. Its objective is to minimize adversarial loss based on global lighting characteristics, aiming to reduce the distance between the lighting distributions of real and output images. 34 The standard function of global discriminator can be represented as follows:
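For reference, the relativistic global discriminator of the original EnlightenGAN formulation is commonly written as below. The notation is an assumption taken from that formulation rather than from this section: $C$ denotes the discriminator network, $x_r$ and $x_f$ are samples from the real and generated distributions, and $\sigma$ is the sigmoid function. The two loss lines use the least-squares form in place of the sigmoid-based loss.

```latex
\begin{aligned}
D_{Ra}(x_r, x_f) &= \sigma\big(C(x_r) - \mathbb{E}_{x_f \sim \mathbb{P}_{\text{fake}}}[C(x_f)]\big), \\
D_{Ra}(x_f, x_r) &= \sigma\big(C(x_f) - \mathbb{E}_{x_r \sim \mathbb{P}_{\text{real}}}[C(x_r)]\big), \\
\mathcal{L}_{D}^{Global} &= \mathbb{E}_{x_r \sim \mathbb{P}_{\text{real}}}\big[(D_{Ra}(x_r, x_f) - 1)^2\big]
  + \mathbb{E}_{x_f \sim \mathbb{P}_{\text{fake}}}\big[D_{Ra}(x_f, x_r)^2\big], \\
\mathcal{L}_{G}^{Global} &= \mathbb{E}_{x_f \sim \mathbb{P}_{\text{fake}}}\big[(D_{Ra}(x_f, x_r) - 1)^2\big]
  + \mathbb{E}_{x_r \sim \mathbb{P}_{\text{real}}}\big[D_{Ra}(x_r, x_f)^2\big].
\end{aligned}
```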

However, the global discriminator alone is not sufficiently adaptive for images with bright regions in dark scenes. Therefore, a local discriminator is needed to assist the global discriminator.

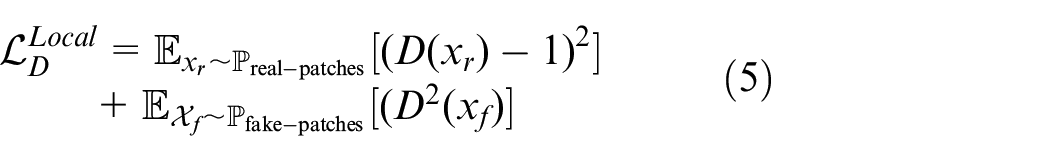

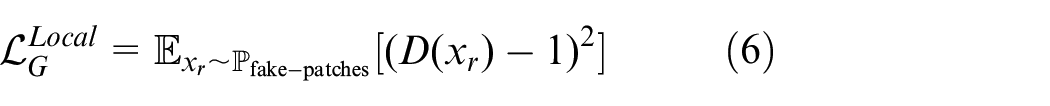

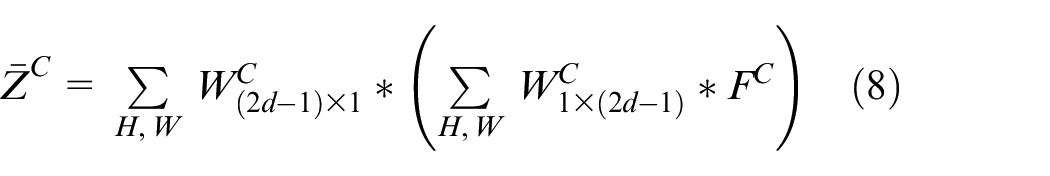

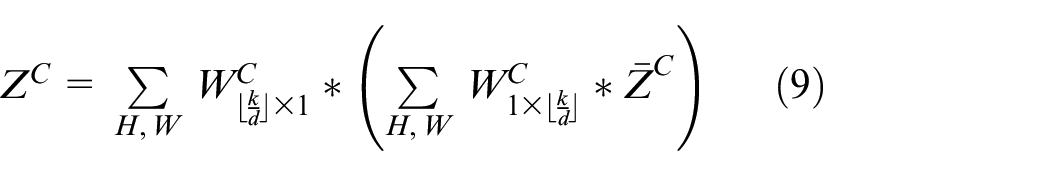

The local discriminator uses randomly cropped patches from the image for discrimination, aiming to assess the local detailed differences between the generated and real images and to improve the image’s detail features. The loss function can be represented as follows:

During the training, the overall loss function is:
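As a hedged reconstruction based on the original EnlightenGAN formulation, the local discriminator losses and the overall training loss can be written as follows. The symbols are assumptions not defined in this section: $D$ is the local discriminator applied to patches, $\mathcal{L}_{G}^{Global}$ is the generator's global adversarial loss, and $\mathcal{L}_{SFP}$ is the self feature preserving loss of that formulation, computed on the whole image (Global) and on cropped patches (Local).

```latex
\begin{aligned}
\mathcal{L}_{D}^{Local} &= \mathbb{E}_{x_r \sim \mathbb{P}_{\text{real-patches}}}\big[(D(x_r) - 1)^2\big]
  + \mathbb{E}_{x_f \sim \mathbb{P}_{\text{fake-patches}}}\big[(D(x_f) - 0)^2\big], \\
\mathcal{L}_{G}^{Local} &= \mathbb{E}_{x_f \sim \mathbb{P}_{\text{fake-patches}}}\big[(D(x_f) - 1)^2\big], \\
Loss &= \mathcal{L}_{SFP}^{Global} + \mathcal{L}_{SFP}^{Local}
  + \mathcal{L}_{G}^{Global} + \mathcal{L}_{G}^{Local}.
\end{aligned}
```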

LL-YOLO based on YOLOv8n

In recent years, YOLO has become increasingly popular as a leading real-time object detection method and has now evolved to YOLOv12. Within the YOLO series, YOLOv8 is known for its effective balance between detection speed and accuracy across a range of scenarios. 36

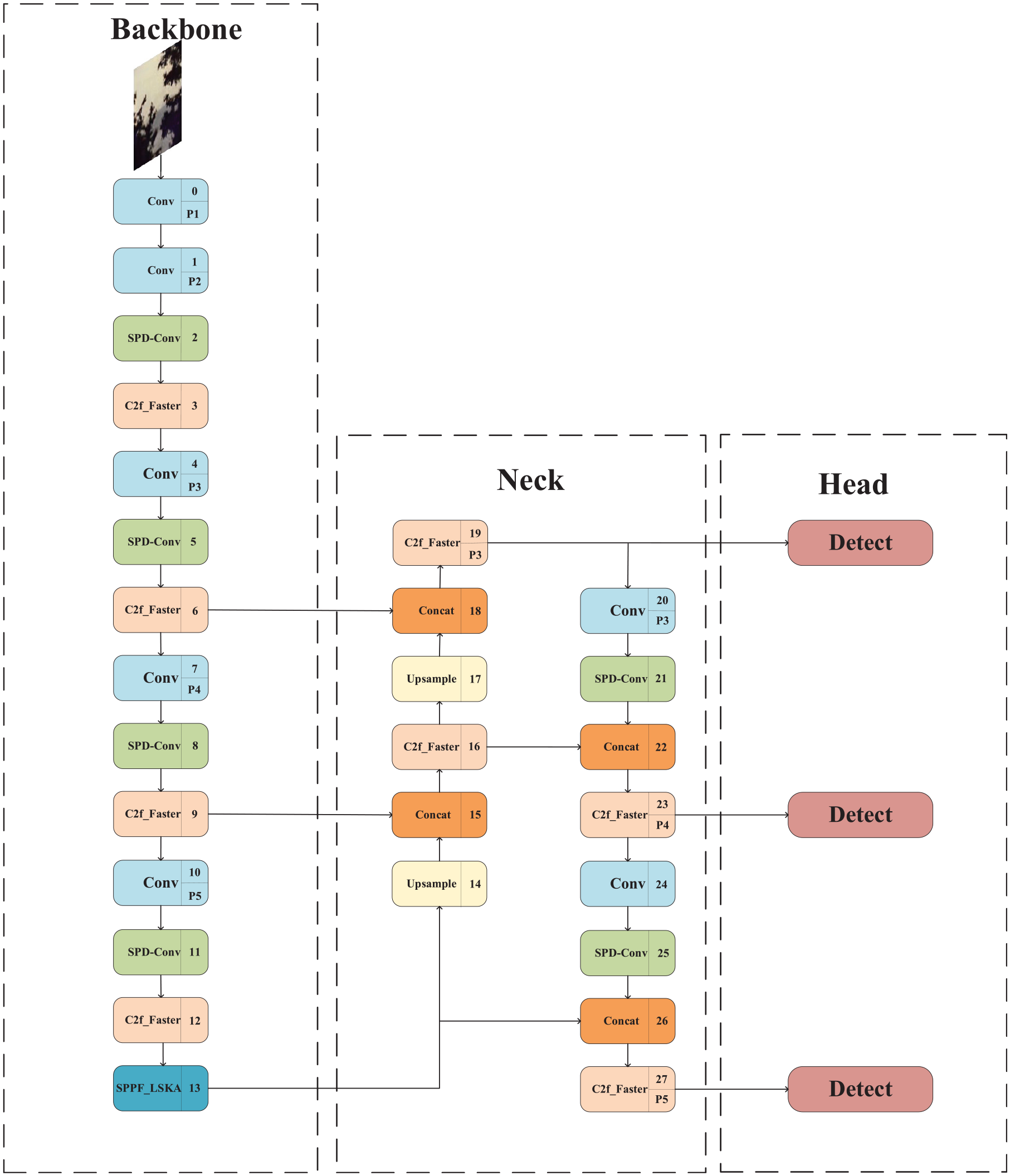

The YOLOv8 detection network comprises five distinct models. Among these, this paper employs YOLOv8n because of its favorable balance of model weight, inference speed, detection accuracy, and generalization capability. However, it still has limitations in detecting small objects under low-light conditions. We therefore refined it to develop LL-YOLO. The complete architecture of LL-YOLO is illustrated in Figure 2. The proposed improvements are as follows:

Complete structure of LL-YOLO.

SPD-Conv replaces standard convolutions to reduce information loss during feature extraction, especially for small UAV targets, ensuring better retention of critical features. LSKA is integrated into SPPF to enhance spatial feature extraction by capturing richer contextual information and suppressing background noise. The C2f structure is optimized by adopting FasterBlocks from FasterNet, reducing redundant computation while maintaining model capacity.

SPD-Conv

In traditional CNN architectures, pooling layers and strided convolutions progressively reduce the spatial resolution of the feature maps as the network depth increases. This design loses detailed information about small objects and leads to inefficient learning of feature representations, which can adversely affect the subsequent detection process. 37

UAVs are not only small in size but are also often captured from high-altitude perspectives, making them more susceptible to background clutter, significant scale variation, and motion blur. These factors increase the likelihood that critical edge and structural features will be lost during the downsampling process of traditional convolutional networks. This issue becomes even more pronounced under low-illumination conditions, where image quality further degrades and target contrast is significantly reduced. In such scenarios, conventional feature extraction methods often struggle to capture sufficient edge and texture information, leading to decreased detection performance.

To address these challenges, we replaced the traditional convolutions with SPD-Conv, a building block designed as a substitute for pooling layers and strided convolutions. 38 This modification enables downsampling that maintains the integrity of the feature maps, preserving learnable information.

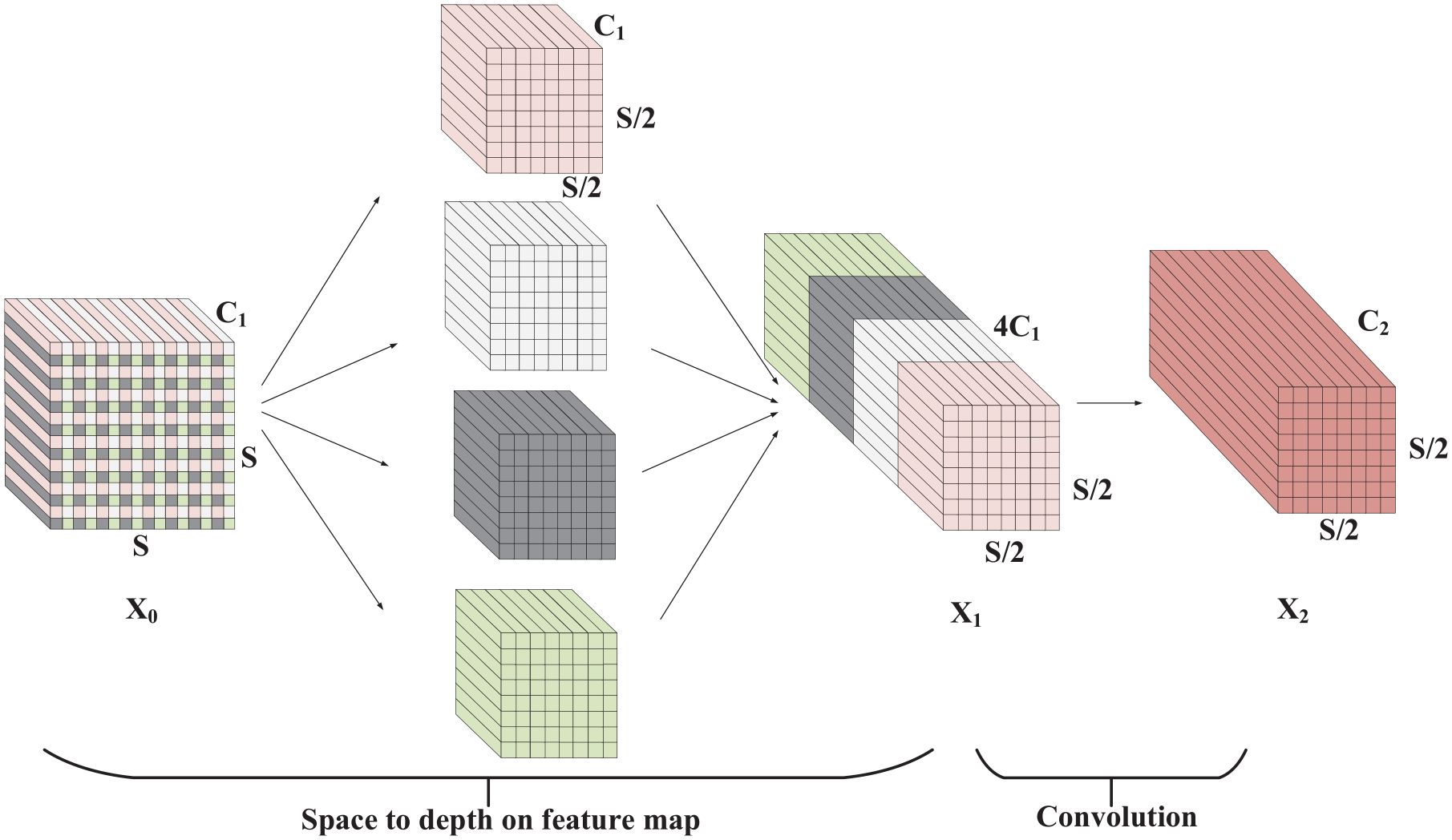

The SPD-Conv building block consists of an SPD (space-to-depth) layer followed by a non-strided convolutional layer. The SPD layer rearranges the spatial dimensions of the input feature map into the channel dimension while preserving the information within the channels. This is achieved by mapping each pixel or feature of the input feature map to a channel. During this process, the spatial dimensions shrink whereas the channel dimension grows.

The non-strided convolution (Conv) layer is a standard convolution with a stride of 1, applied after the SPD layer. Because it does not skip any positions as it slides across the feature map, it operates on every pixel or feature and performs no further downsampling. This avoids additional information loss after the SPD layer and retains more fine-grained information.

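When the scale is 4, the schematic diagram of the SPD-Conv building block is as shown in Figure 3. The input feature map is first transformed by the SPD layer. Starting from the initial feature map, the SPD layer splits it into sub-feature maps and concatenates them along the channel dimension, after which the non-strided convolution is applied.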

The schematic diagram of SPD-Conv building block.

In this way, the spatial information of UAVs that would otherwise be lost due to traditional convolution operations is instead transferred and preserved in the channel dimension through the proposed SPD-Conv. This effectively reduces information loss during feature extraction under low-illumination conditions and maximizes the preservation of small UAV target features.
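The space-to-depth rearrangement can be illustrated with a small pure-Python sketch. It is not the authors' implementation: the function name `space_to_depth`, the nested-list layout, and the default `scale=2` are assumptions chosen to keep the example short, and the non-strided convolution that follows the SPD layer is omitted.

```python
def space_to_depth(fmap, scale=2):
    """Move spatial samples into channels without discarding any value.

    fmap: list of C channels, each an H x W nested list, with H and W
          divisible by `scale`.
    Returns scale * scale * C channels, each (H / scale) x (W / scale).
    """
    out = []
    for dy in range(scale):          # row offset of the sub-sampling grid
        for dx in range(scale):      # column offset of the sub-sampling grid
            for channel in fmap:
                # Keep every `scale`-th row and column starting at (dy, dx).
                sub = [row[dx::scale] for row in channel[dy::scale]]
                out.append(sub)
    return out


if __name__ == "__main__":
    one_channel = [[[0, 1, 2, 3],
                    [4, 5, 6, 7],
                    [8, 9, 10, 11],
                    [12, 13, 14, 15]]]
    for sub_map in space_to_depth(one_channel, scale=2):
        print(sub_map)
```

Every input value appears exactly once in the output, which is the property that distinguishes this downsampling from pooling or a strided convolution.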

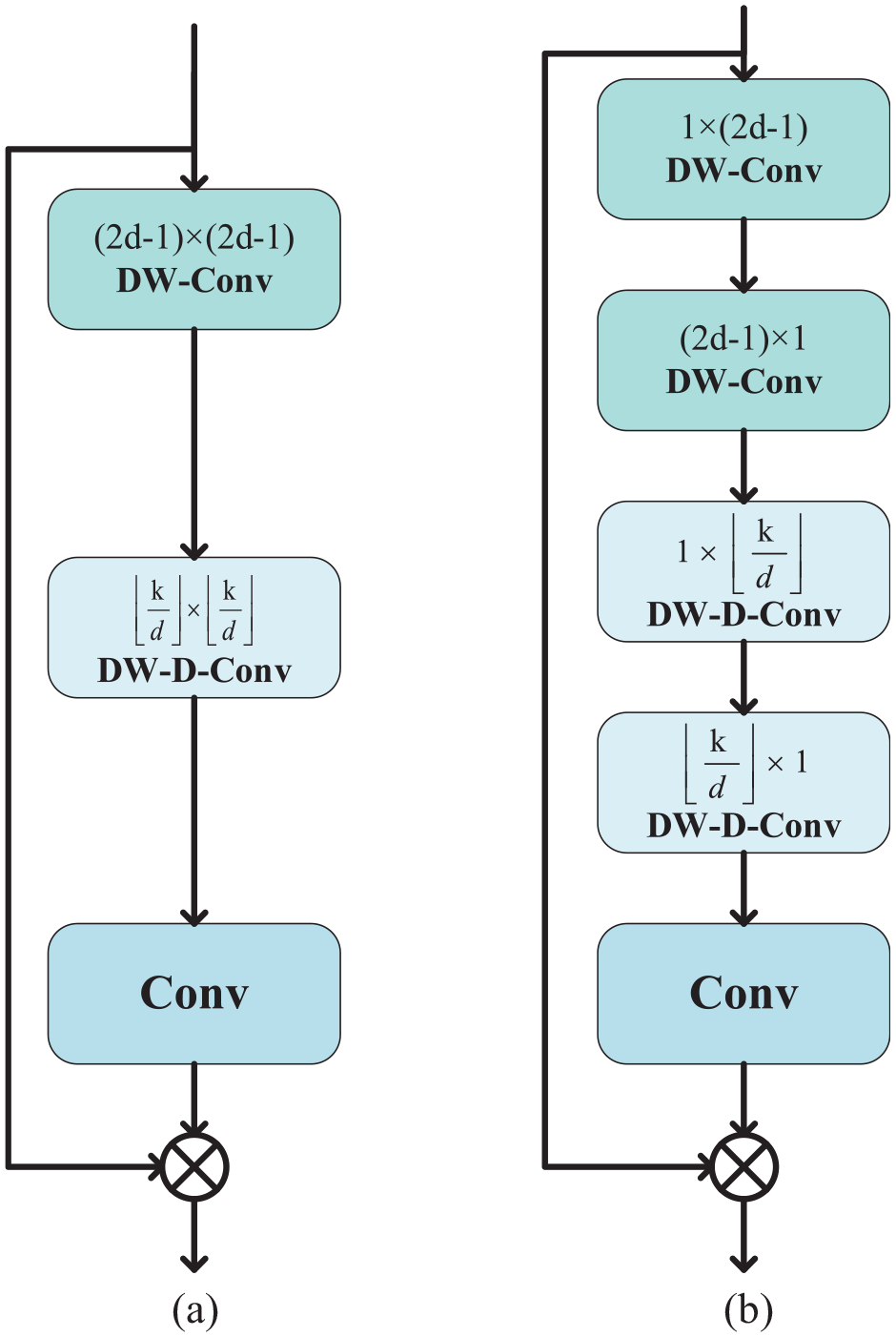

LSKA attention module

Attention mechanisms are often used to focus on the most important parts of the input image. Large Kernel Attention (LKA) is a spatial attention mechanism built from large-kernel convolutions. Visual Attention Networks (VAN) with LKA modules have been shown to outperform Vision Transformers (ViTs) in various vision-based tasks. 39 However, as the size of the convolutional kernels increases, the depth-wise convolutional layers in these LKA modules incur a quadratic increase in computation and memory consumption. To mitigate this issue, Large Separable Kernel Attention (LSKA) can be applied. 40 A comparison of the LKA and LSKA designs is shown in Figure 4.

Comparison on different designs of LKA module: (a) LKA design and (b) LSKA design.

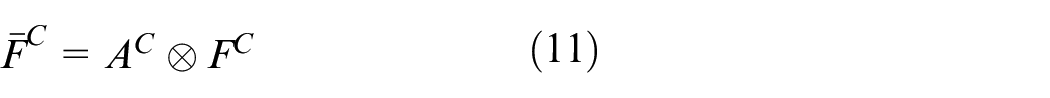

The output of LSKA can be expressed by the following formulas:

In these formulas, * and ⊗ represent convolution and Hadamard product, respectively.

The final output of the LSKA module, denoted as

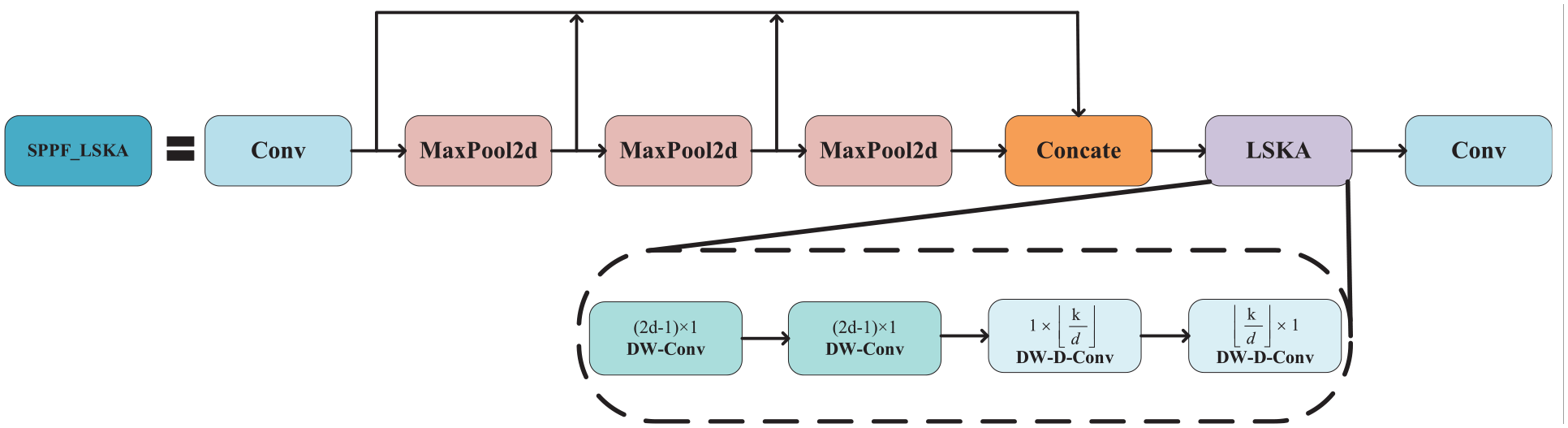

The structure of the SPPF_LSKA module is shown in Figure 5. In this design, the LSKA is integrated after each MaxPool2d operation and positioned before the second convolutional layer within the SPPF block. Specifically, LSKA utilizes large, separable convolutional kernels in combination with spatially dilated convolutions to effectively capture broader contextual dependencies. These operations generate a spatial attention map that highlights semantically important regions in the feature space. The generated attention map is subsequently used to adaptively reweight the original features, enhancing the network’s focus on critical spatial cues and improving the representation of salient regions in low-light conditions.

The structure of SPPF_LSKA module.

This design brings several key advantages to the SPPF module. First, it introduces an attention mechanism that compensates for the information loss typically associated with pooling operations, enabling the network to better retain and highlight critical features. Second, by utilizing large separable kernels and spatially dilated convolutions, LSKA not only performs efficient computation with minimal overhead but also enhances the network’s ability to focus on semantically important regions within the feature maps. This is particularly beneficial in low-light environments. Overall, integrating LSKA into SPPF significantly improves the network’s feature attention capability while preserving its lightweight characteristics.
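To make the "minimal overhead" claim concrete, the following sketch compares the weight counts of an LKA-style and an LSKA-style attention branch. It is an illustration under stated assumptions: biases are ignored, the function names are hypothetical, and the default kernel sizes (a 5 × 5 depth-wise kernel and a 7 × 7 dilated depth-wise kernel) are an assumed decomposition rather than values given in this section.

```python
def lka_attention_params(channels, local_k=5, dilated_k=7):
    """Weights in an LKA-style branch with full 2D depth-wise kernels."""
    # One k x k filter per channel for each of the two depth-wise convolutions.
    depthwise = channels * (local_k ** 2 + dilated_k ** 2)
    # 1 x 1 point-wise convolution mixing all channels.
    pointwise = channels * channels
    return depthwise + pointwise


def lska_attention_params(channels, local_k=5, dilated_k=7):
    """Weights in an LSKA-style branch with separable 1D depth-wise kernels."""
    # Each 2D kernel is replaced by a 1 x k and a k x 1 kernel.
    depthwise = channels * 2 * (local_k + dilated_k)
    pointwise = channels * channels
    return depthwise + pointwise


if __name__ == "__main__":
    c = 256
    print("LKA :", lka_attention_params(c))
    print("LSKA:", lska_attention_params(c))
```

The depth-wise term grows with the square of the kernel size in the 2D case but only linearly in the separable case, which is why the gap widens as kernels get larger.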

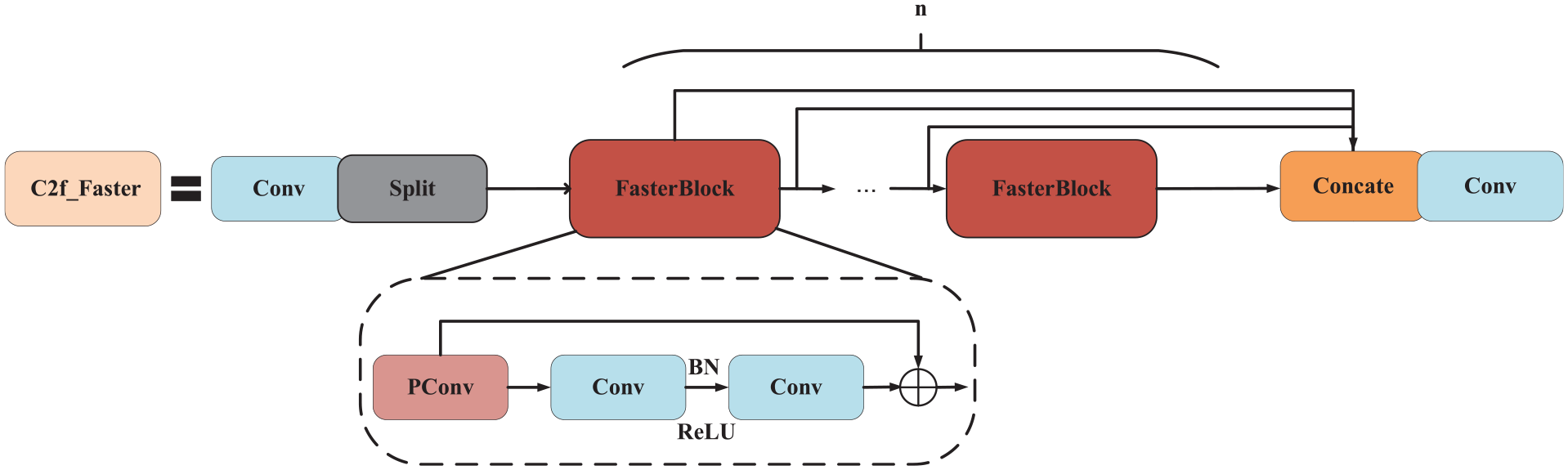

C2f_Faster: C2f using FasterBlock

Traditional CNN architectures suffer from the problem of redundant feature maps, in which feature maps from different channels exhibit high similarity. Although this issue has been addressed in some studies,41,42 there are few methods that effectively utilize it in a simple and efficient manner.

FasterNet was designed to reduce this redundancy while improving inference speed. It has demonstrated higher running speeds than other networks in various visual tasks, without compromising accuracy. 43 To reduce memory usage and enhance the computational efficiency of the detection network, we replaced the bottleneck blocks in C2f with FasterBlock from FasterNet, thereby speeding up YOLOv8n. The specific replacement locations of the modules are shown in Figure 6.

The structure of C2f_Faster.

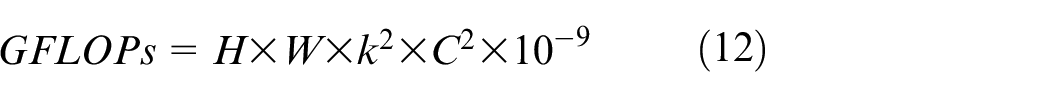

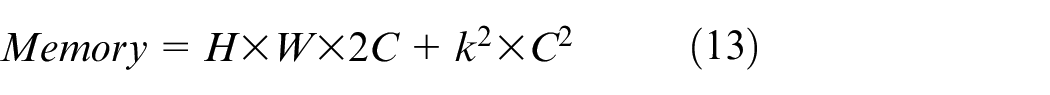

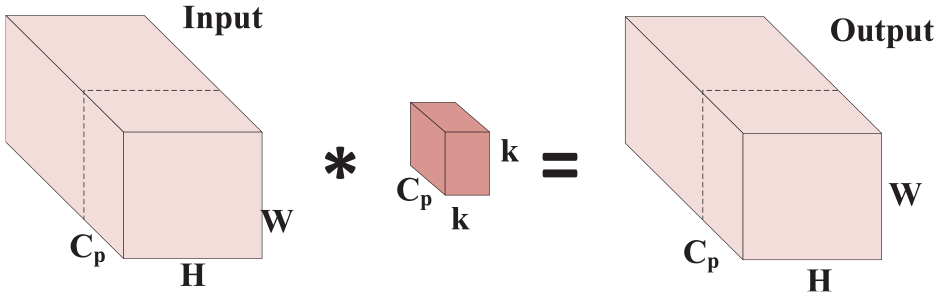

The key component of FasterBlock is partial convolution (PConv). The operation of PConv is illustrated in Figure 7. It applies a regular convolution to extract spatial features from only a subset of the input channels while keeping the remaining channels unchanged. For consecutive or regular memory access, the first or last consecutive Cp channels can be used as representatives of the entire feature map for the computation. Assuming that the input and output feature maps have the same number of channels and ignoring the addition operations in the convolution, the cost of the convolution is assessed in GFLOPs. In this case, the following formula can be derived:
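The cost comparison can be checked numerically with a short sketch. It follows the cost model usually given for PConv in the FasterNet formulation, which is an assumption here because the formula itself is not reproduced in this section: a regular convolution costs h × w × k² × c² multiplications, PConv costs h × w × k² × c_p², and memory access is approximated as h × w × 2c + k² × c². The function names and the default ratio c_p / c = 1/4 are illustrative.

```python
def conv_flops(h, w, k, c):
    """Multiplications of a regular k x k convolution with c in/out channels."""
    return h * w * k * k * c * c


def pconv_flops(h, w, k, c, ratio=0.25):
    """Multiplications of PConv, which convolves only c_p = ratio * c channels."""
    cp = int(c * ratio)
    return h * w * k * k * cp * cp


def conv_memory_access(h, w, k, c):
    """Approximate memory access: input and output maps plus the kernel."""
    return h * w * 2 * c + k * k * c * c


def pconv_memory_access(h, w, k, c, ratio=0.25):
    """Approximate memory access when only c_p channels are read and written."""
    cp = int(c * ratio)
    return h * w * 2 * cp + k * k * cp * cp


if __name__ == "__main__":
    h, w, k, c = 40, 40, 3, 64
    print(conv_flops(h, w, k, c) / pconv_flops(h, w, k, c))
    print(conv_memory_access(h, w, k, c) / pconv_memory_access(h, w, k, c))
```

With a ratio of 1/4, the multiplication count drops by a factor of 16 because the cost is quadratic in the number of convolved channels.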

The operation diagram of PConv.

If

Obviously, PConv demonstrates lower memory usage. However, if PConv is applied alone, only

Experiments

GUET-UAV-LL dataset

Currently, very few UAV detection datasets are available, and even fewer are tailored to low-light conditions. We captured 5000 images of UAVs at a resolution of 1920 × 1080, with 1–3 UAVs in each image, to create a new dataset. The images were captured under low-light conditions against different low-altitude backgrounds, such as trees, buildings, mountains, and street lamps. The distance between the UAVs and the camera was about 20–200 m. This dataset can serve as a benchmark for low-altitude, low-light UAV detection. Figure 8 shows sample images. Additionally, to enrich the application scenarios of the model, we removed images irrelevant to our research from Det-Fly, 44 and added the 2500 retained images to the self-built dataset.

Examples of GUET-UAV-LL dataset.

Simultaneously, we captured 6000 images of single or multiple UAVs flying under daylight conditions to create a training set for EnlightenGAN. Subsequently, we applied EnlightenGAN to enhance the low-light dataset. The results of enhancement are presented in Figure 9. The enhanced images exhibit more pronounced features of the UAV targets than the original low-light images.

The results of enhancement of GUET-UAV-LL dataset.

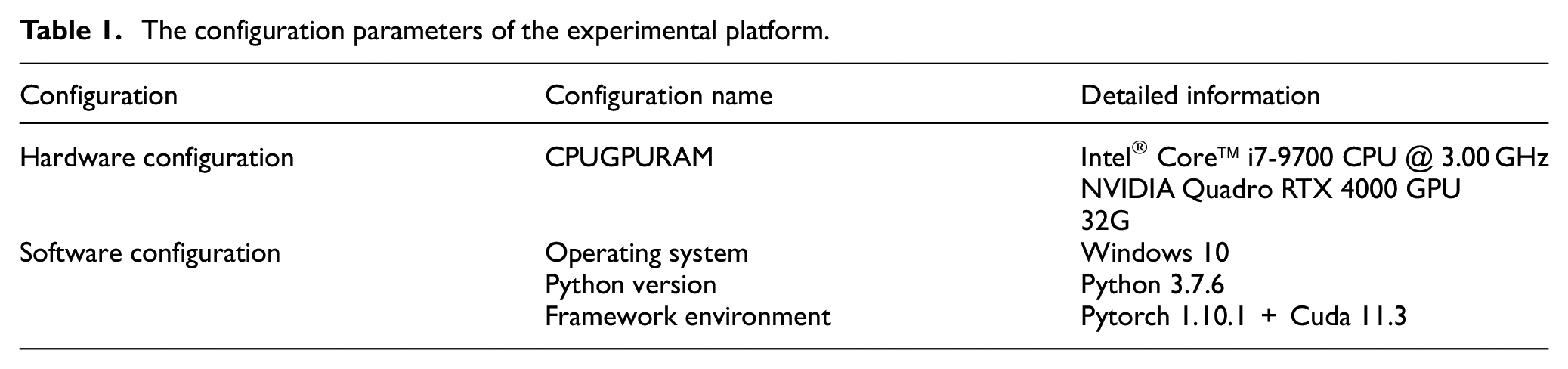

Experiment environment and hyperparameter

The configuration parameters of the experimental platform are presented in Table 1. Using this platform, we conducted training, comparative experiments, and ablation experiments. Finally, we validated the effectiveness of the adopted improvements and of our improved network on different datasets. The training hyperparameters are presented in Table 2.

The configuration parameters of the experimental platform.

Hyperparameters for training.

The training hyperparameters were chosen based on established literature and empirical evaluation on our dataset. AdamW was selected for its effectiveness in accelerating convergence and maintaining stability. An initial learning rate of 0.01 and a momentum of 0.937, consistent with the YOLOv8 defaults, were used because they balance convergence speed and model accuracy for various object detection tasks; preliminary experiments showed that a learning rate of 0.01 gives rapid but stable convergence across various architectures. To prevent overfitting, a weight decay of 0.0005 was used, a common regularization setting in YOLO implementations that, after tuning, provided a good balance between model complexity and generalization.

Given our GPU memory constraints and the need to balance training speed with stability, a batch size of 16 was selected. Larger batch sizes were tested but led to instability, whereas smaller ones significantly slowed convergence. The number of training epochs was set to 100, determined empirically by monitoring validation performance until the loss reached a plateau, so that the model could converge without overfitting to the training data.

In summary, the parameter settings were determined through a combination of theoretical considerations, prior empirical findings, and iterative tuning on our dataset. These settings were validated on our dataset, showing stable training dynamics and effective convergence.
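The settings described above can be collected in one place, as in the sketch below. The values come from the text; the key names follow the training-argument names used by the Ultralytics YOLOv8 trainer, which is an assumption because the training framework is not named explicitly here, and `TRAIN_CONFIG` is a hypothetical variable name.

```python
# Training hyperparameters as described in the text.
# Key names mirror Ultralytics-style training arguments (an assumption).
TRAIN_CONFIG = {
    "optimizer": "AdamW",    # chosen for fast, stable convergence
    "lr0": 0.01,             # initial learning rate
    "momentum": 0.937,       # consistent with the YOLOv8 default
    "weight_decay": 0.0005,  # regularization against overfitting
    "batch": 16,             # limited by GPU memory
    "epochs": 100,           # validation loss plateaus by this point
}

if __name__ == "__main__":
    for name, value in TRAIN_CONFIG.items():
        print(f"{name:>12}: {value}")
```

Keeping the values in a single dictionary makes it straightforward to reuse the same settings across the comparison and ablation runs.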

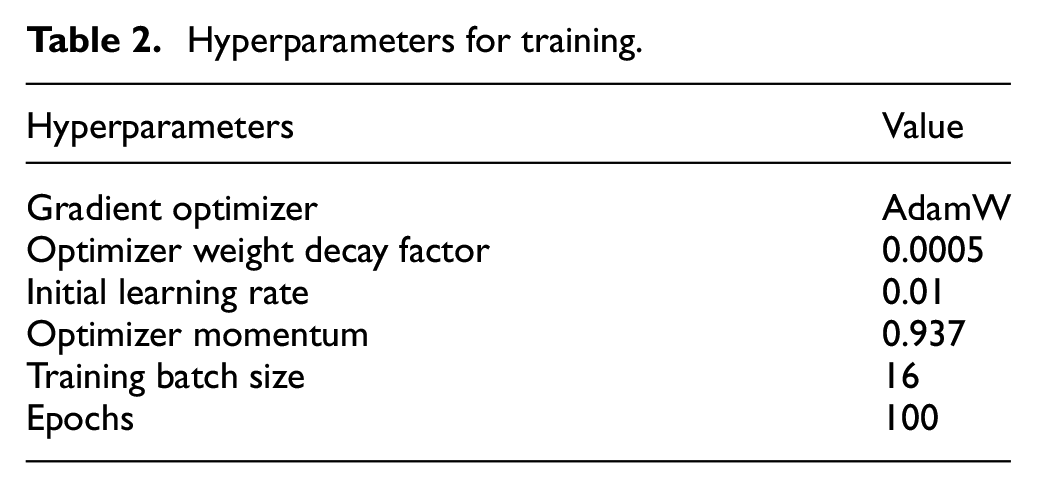

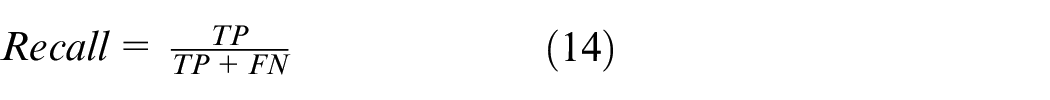

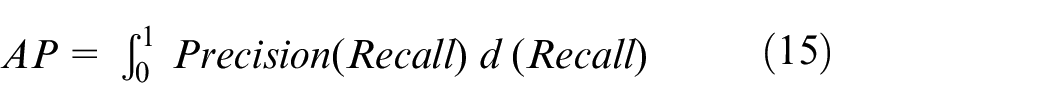

Evaluation metrics

In the experiment, we used two evaluation metrics, Recall (R) and mean Average Precision (mAP) to evaluate the performance of the algorithm. The higher the values of these two metrics, the better the performance of the detection algorithm. Recall (R) is defined as follows:

where TP (True Positive) is the number of UAVs correctly identified by the detection algorithm, FP (False Positive) is the number of background regions incorrectly classified as UAVs, and FN (False Negative) is the number of UAVs erroneously classified as background. The mAP is the mean of the Average Precision (AP) over all categories. When only a single object category is detected, the values of AP and mAP are equal. They are defined as follows:

mAP@0.5 and mAP@0.75 indicate the mAP values at IoU thresholds of 0.5 and 0.75, respectively. The values of R and the F1 score are defined as follows:
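The counting-based metrics can be sketched as follows, using the standard definitions of precision, recall, F1 score, and IoU. The function names and the `(x1, y1, x2, y2)` box layout are assumptions made for this example, and the matching of detections to ground truth that produces TP, FP, and FN is not shown.

```python
def iou(box_a, box_b):
    """Intersection over Union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def precision(tp, fp):
    """Fraction of detections that are real UAVs."""
    return tp / (tp + fp) if (tp + fp) > 0 else 0.0


def recall(tp, fn):
    """Fraction of real UAVs that were detected."""
    return tp / (tp + fn) if (tp + fn) > 0 else 0.0


def f1_score(p, r):
    """Harmonic mean of precision and recall."""
    return 2 * p * r / (p + r) if (p + r) > 0 else 0.0
```

A detection counts as a true positive at mAP@0.5 only if its IoU with a ground-truth box is at least 0.5, so the `iou` helper is what ties the threshold in the metric name to the TP and FP counts.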

Frames Per Second (FPS), the frame rate per second, is used to evaluate the processing speed of the model. 45 The calculation formula of FPS is:

Preprocess corresponds to the time taken for image preprocessing, inference refers to the inference time, and postprocess signifies the time spent on image post-processing.
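A minimal sketch of the FPS calculation is given below. It assumes the three stage times are per-image averages measured in milliseconds, which is how many detection toolkits report them; the unit and the function name `fps` are assumptions, since the formula is not reproduced here.

```python
def fps(preprocess_ms, inference_ms, postprocess_ms):
    """Frames per second from per-image stage times given in milliseconds."""
    total_ms = preprocess_ms + inference_ms + postprocess_ms
    if total_ms <= 0:
        raise ValueError("total processing time must be positive")
    # 1000 ms per second divided by the time spent on one image.
    return 1000.0 / total_ms


if __name__ == "__main__":
    # Illustrative timings, not measurements from the paper.
    print(fps(1.0, 7.0, 2.0))
```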

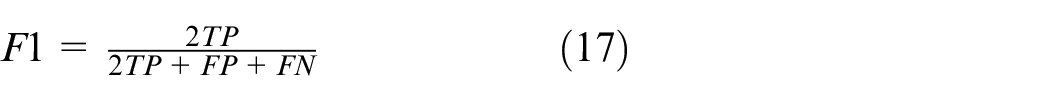

Comparisons of YOLO network

To compare the mainstream YOLO series and the different model sizes within YOLOv8, we first conducted comparative experiments on GUET-UAV-LL; the results are shown in Table 3. Table 3 shows that, within the YOLOv8 series, although the detection accuracy and parameter count of YOLOv8n are not the best, it achieves an effective balance between speed and accuracy. Moreover, among the smallest models of each series, no other network matches the detection performance of YOLOv8n.

The experimental results of mainstream YOLO.

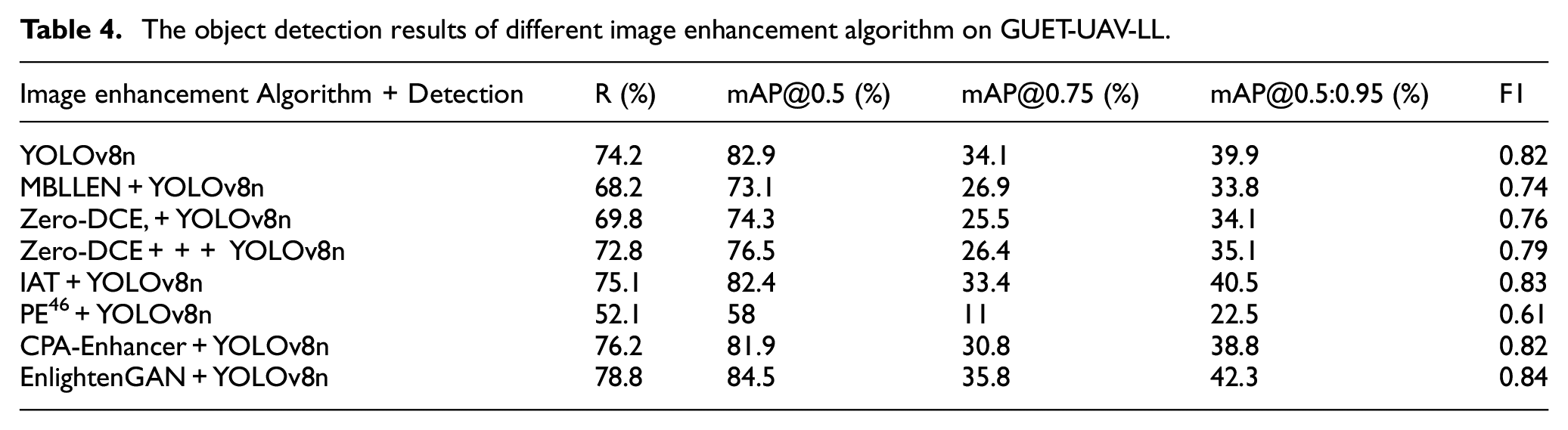

Comparisons of image enhancement algorithm

Applying a suitable image enhancement method can strengthen the features of UAV targets under low-light conditions, thereby aiding subsequent object detection. We investigated several image enhancement networks for enhancing GUET-UAV-LL. YOLOv8n was then trained and validated on each enhanced dataset. The experimental results are presented in Table 4. They show that not all image enhancement methods are effective. In some cases, certain methods can negatively impact detection performance.

The object detection results of different image enhancement algorithm on GUET-UAV-LL.

For example, the combination of EnlightenGAN with YOLOv8n yields superior results compared to using YOLOv8n alone. Specifically, this combined approach leads to a 4.6% increase in Recall, a 1.6% improvement in mAP@0.5, and a 0.02 increase in F1 score. However, it is important to note that such combinations do not always result in enhanced performance. In the case of the PE-YOLO framework, the integration of the PE image enhancement module with YOLOv8 led to a degradation in detection capability. This highlights the crucial importance of selecting the appropriate image enhancement network for improving the performance of object detection tasks. EnlightenGAN, in this context, demonstrates its ability to effectively assist in target detection tasks by enhancing the relevant features while maintaining or even boosting detection accuracy, making it a suitable choice for such applications.

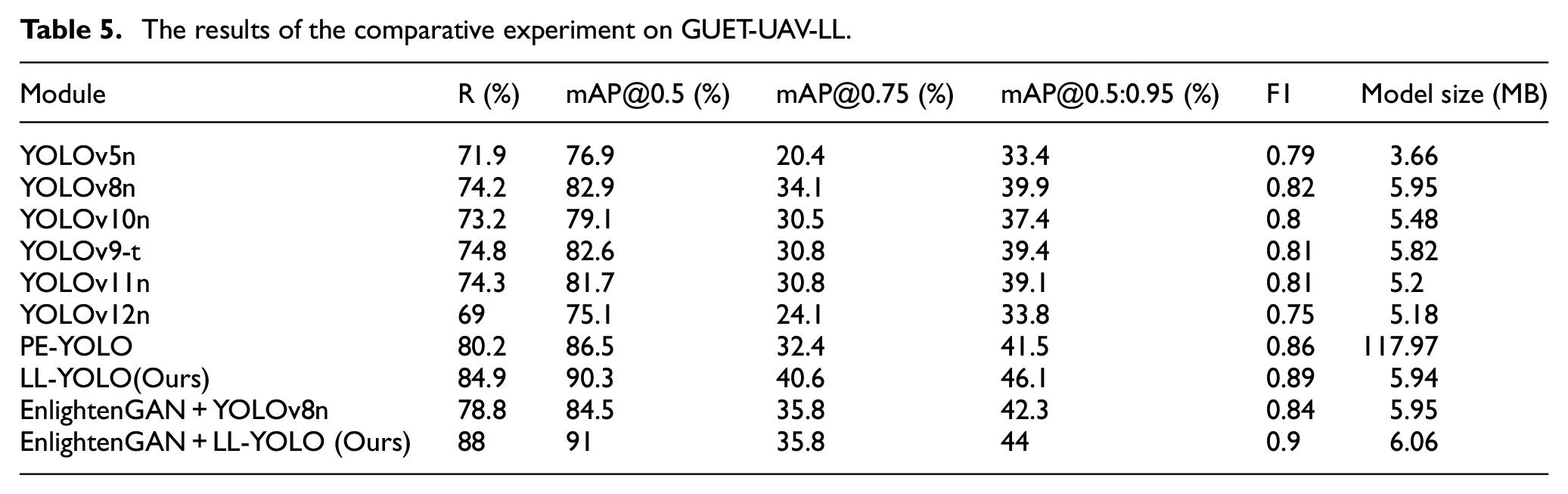

Experiment on GUET-UAV-LL

However, enhancing only the input low-light images did not yield the detection performance we need. Consequently, we further improved YOLOv8n to develop LL-YOLO. The final results on the test set are presented in Table 5. LL-YOLO surpasses the native YOLOv8n, with a 10.7% increase in R and a 7.4% improvement in mAP@0.5. Furthermore, when EnlightenGAN is also employed, R and mAP@0.5 reach 88% and 91%, respectively, an improvement of 13.8% and 8.1% over the original YOLOv8n results.

The results of the comparative experiment on GUET-UAV-LL.

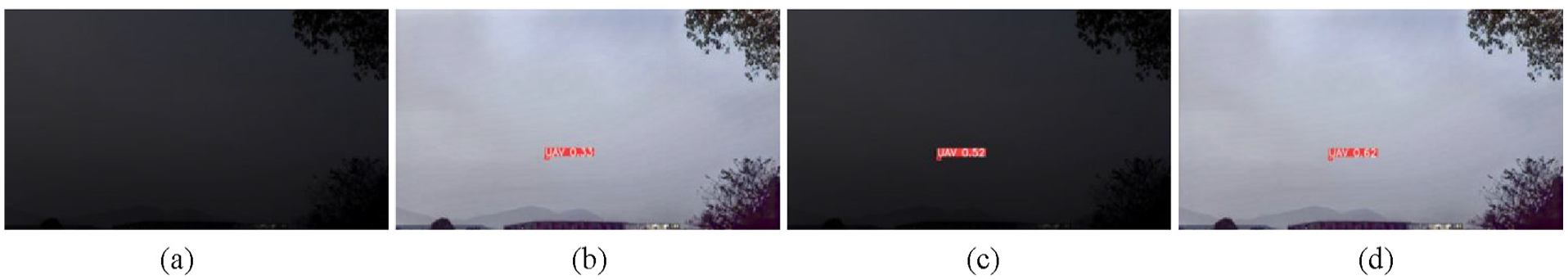

To better illustrate the detection performance of LL-YOLO, Figure 10 compares the detection results for a single UAV. In Figure 10(a), the target is not detected by the YOLOv8n network alone, resulting in a missed detection. In Figure 10(b), the EnlightenGAN image enhancement network is applied before detection, and the target is successfully detected. Notably, in Figure 10(c), our improved LL-YOLO directly detects the UAV flying under low-light conditions, with a much higher confidence than the EnlightenGAN + YOLOv8n combination. The best results are obtained when EnlightenGAN and LL-YOLO are used together, as shown in Figure 10(d).

The comparison of the single UAV detection results on GUET-UAV-LL: (a) YOLOv8n, (b) EnlightenGAN+YOLOv8n, (c) LL-YOLO, and (d) EnlightenGAN+LL-YOLO.

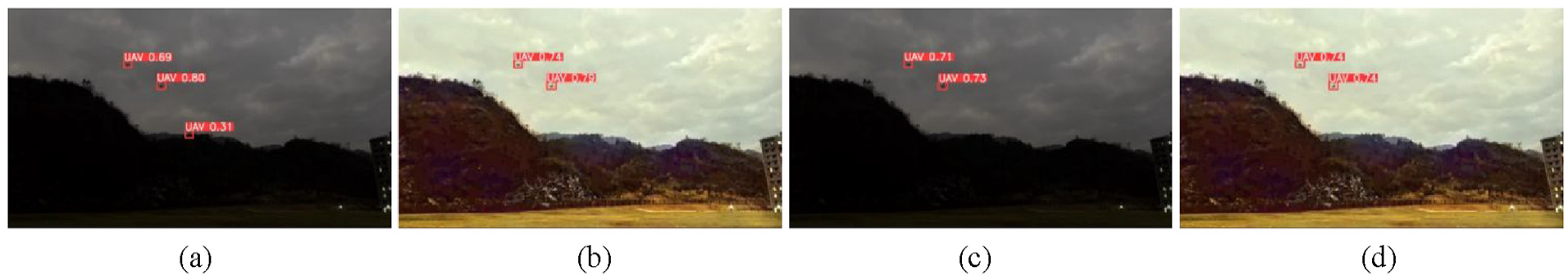

The results of detecting multiple UAVs in complex backgrounds are illustrated in Figure 11. In Figure 11(a), YOLOv8n reports one UAV instead of three, which is an incorrect detection result. In Figure 11(b), although the confidence increases after enhancing the image with EnlightenGAN before detection, missed detections still occur. Using LL-YOLO alone, as shown in Figure 11(c), the UAVs are correctly detected. In Figure 11(d), using EnlightenGAN and LL-YOLO together not only eliminates missed detections but also further improves the confidence score. LL-YOLO is therefore effective for UAV detection under low-light conditions and complex backgrounds, and the combination of EnlightenGAN + LL-YOLO yields the best results.

The comparison of the multiple UAVs detection results on GUET-UAV-LL: (a) YOLOv8n, (b) EnlightenGAN+YOLOv8n, (c) LL-YOLO, and (d) EnlightenGAN+LL-YOLO.

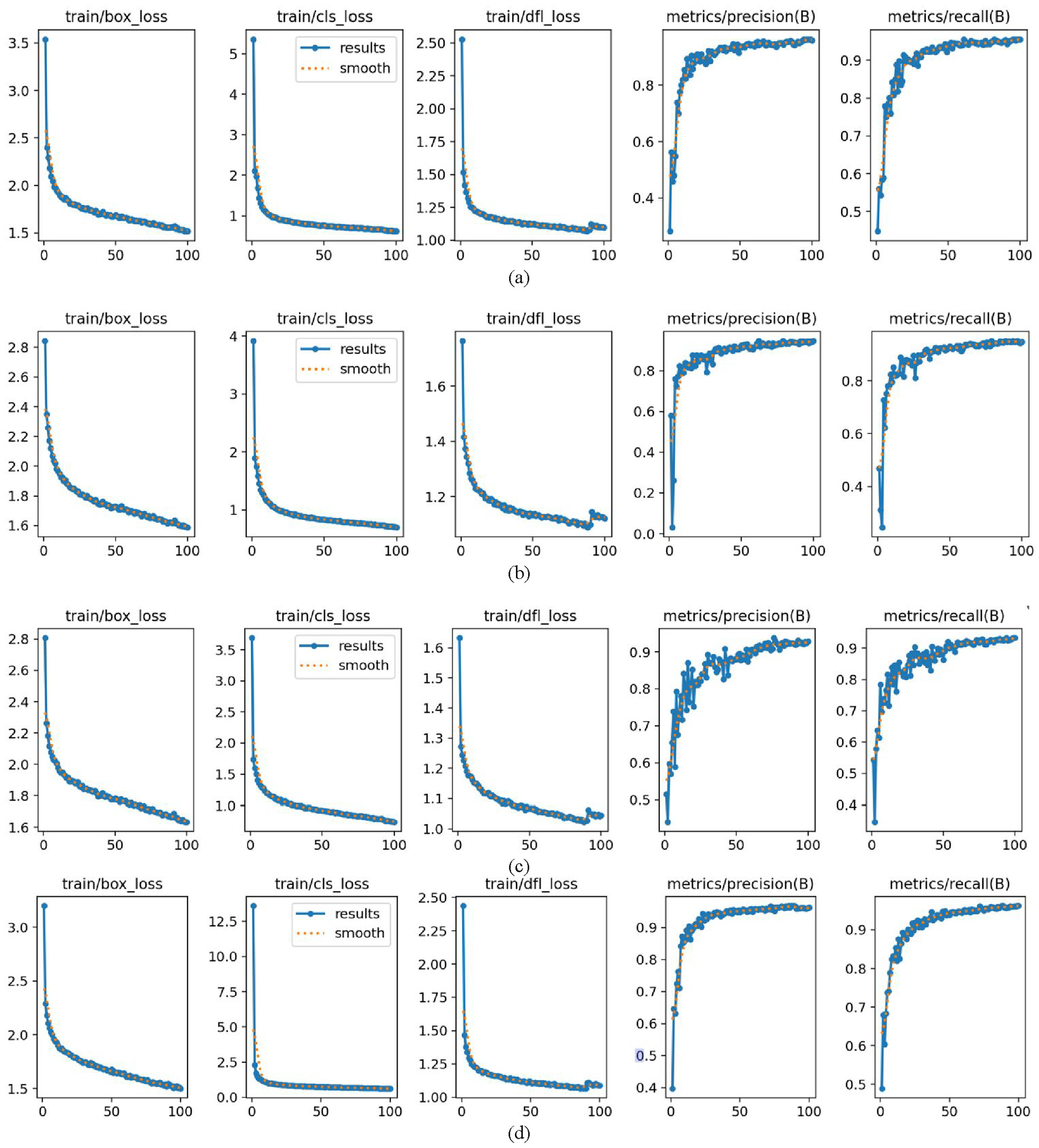

Comparison of training optimizers

The choice of optimizer plays a crucial role in the convergence behavior and final performance of deep neural networks, especially in prediction tasks such as object detection. Although most YOLO-based models adopt Adam or SGD by default, recent studies have highlighted the benefits of newer optimization methods such as AdamW and NAdam in improving stability and generalization. To better understand the impact of the optimizer on LL-YOLO, we conducted a comparative study using four widely adopted optimizers: SGD, Adam, NAdam, and AdamW. All experiments were conducted under identical training settings, and the results are analyzed in terms of convergence speed and detection accuracy.

As shown in Figure 12, AdamW (Figure 12(d)) demonstrated the most favorable behavior across all metrics, with a rapid and stable decline in loss functions and a consistently high precision and recall throughout training. The classification loss started higher than others but dropped quickly, indicating effective learning. Adam (Figure 12(c)) showed reasonably good convergence and smooth loss curves; however, its precision fluctuated in the mid-stage of training, suggesting some instability. NAdam (Figure 12(b)) exhibited relatively slower convergence in both box_loss and dfl_loss, and although its precision was fairly stable, its recall plateaued at a lower level, indicating suboptimal generalization. SGD (Figure 12(a)) performed steadily and showed minimal fluctuations, but its convergence was slower compared to the other optimizers. Among all, AdamW achieved the best trade-off between convergence speed and stability.

Training effect diagrams of different optimizers in LL-YOLO: (a) SGD, (b) NAdam, (c) Adam, and (d) AdamW.

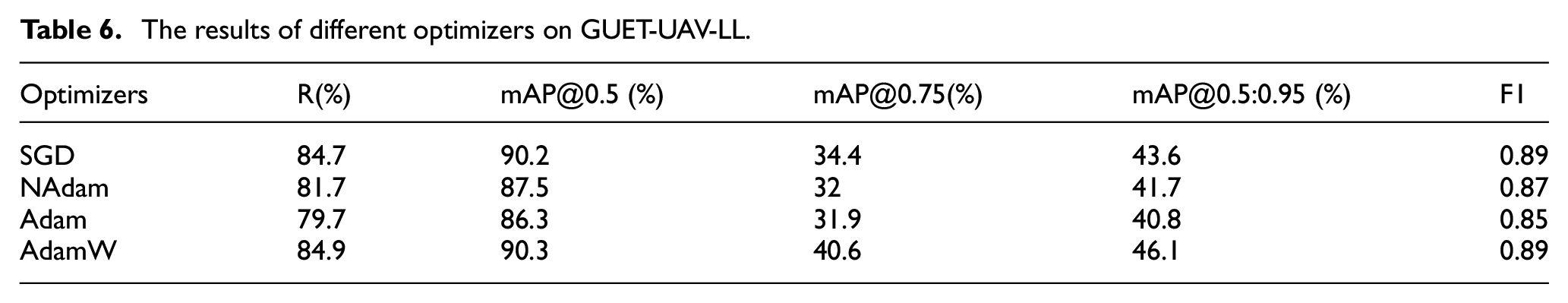

To further quantify the impact of different optimizers on detection performance, Table 6 summarizes the key evaluation metrics for each model trained with SGD, NAdam, Adam, and AdamW on the same test set. The metrics include R, mAP@0.5, mAP@0.75, mAP@0.5:0.95, and F1 score, offering a quantitative comparison under consistent evaluation settings. Consistent with the observations in Figure 12, AdamW achieved the highest detection performance. SGD showed solid performance with relatively high mAP. NAdam yielded moderate results, whereas Adam had the lowest overall metrics.
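As a rough illustration of how the metrics listed above relate to one another, the following minimal sketch computes IoU and then precision, recall, and F1 for a single image at one IoU threshold. The function names, the greedy matching rule, and the toy boxes are assumptions made for illustration; this is not the evaluation code used in this study. mAP additionally averages precision over recall levels (and, for mAP@0.5:0.95, over several IoU thresholds), which is omitted here.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1 = max(box_a[0], box_b[0])
    iy1 = max(box_a[1], box_b[1])
    ix2 = min(box_a[2], box_b[2])
    iy2 = min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def precision_recall_f1(predictions, ground_truths, iou_threshold=0.5):
    """predictions: list of (confidence, box); ground_truths: list of boxes."""
    matched = set()
    tp = 0
    # Visit predictions from most to least confident; each ground truth
    # may be matched at most once.
    for _, box in sorted(predictions, key=lambda p: p[0], reverse=True):
        best_iou, best_idx = 0.0, None
        for idx, gt in enumerate(ground_truths):
            if idx in matched:
                continue
            overlap = iou(box, gt)
            if overlap > best_iou:
                best_iou, best_idx = overlap, idx
        if best_idx is not None and best_iou >= iou_threshold:
            matched.add(best_idx)
            tp += 1
    fp = len(predictions) - tp
    fn = len(ground_truths) - tp
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Toy example (hypothetical boxes): three UAVs, two of which are found,
# plus one spurious detection.
gts = [(10, 10, 30, 30), (50, 50, 70, 70), (100, 100, 120, 120)]
preds = [(0.9, (11, 11, 31, 31)),
         (0.8, (52, 50, 72, 70)),
         (0.4, (200, 200, 220, 220))]
p, r, f1 = precision_recall_f1(preds, gts)
```

In this toy case two of three ground-truth boxes are matched and one prediction is spurious, so precision, recall, and F1 all equal 2/3.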

The results of different optimizers on GUET-UAV-LL.

Table 6 confirms that AdamW provides the best balance between accuracy and efficiency, and thus it is adopted as the default optimizer in our subsequent experiments.
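One well-known difference between Adam and AdamW is how weight decay enters the update: Adam with L2 regularization adds the decay term to the gradient before the adaptive scaling, whereas AdamW applies the decay directly to the weights. The sketch below implements a single scalar update step of each rule in plain Python. The function name and hyperparameter values are illustrative assumptions, not the settings used in our training runs, and whether this difference accounts for the results in Table 6 is not established here.

```python
import math


def adam_step(w, grad, m, v, t, lr=0.01, beta1=0.9, beta2=0.999,
              eps=1e-8, weight_decay=0.0, decoupled=False):
    """One scalar Adam update; decoupled=True gives the AdamW variant."""
    if not decoupled:
        # Adam with L2 regularization: decay is folded into the gradient.
        grad = grad + weight_decay * w
    m = beta1 * m + (1 - beta1) * grad
    v = beta2 * v + (1 - beta2) * grad * grad
    m_hat = m / (1 - beta1 ** t)  # bias-corrected first moment
    v_hat = v / (1 - beta2 ** t)  # bias-corrected second moment
    if decoupled:
        # AdamW: decay shrinks the weight directly, outside the
        # adaptive scaling.
        w = w - lr * weight_decay * w
    w = w - lr * m_hat / (math.sqrt(v_hat) + eps)
    return w, m, v


# With a zero loss gradient, only the weight-decay term moves the weight.
w_adam, _, _ = adam_step(1.0, 0.0, 0.0, 0.0, t=1, weight_decay=0.1)
w_adamw, _, _ = adam_step(1.0, 0.0, 0.0, 0.0, t=1, weight_decay=0.1,
                          decoupled=True)
```

In this toy step the L2 variant moves the weight by roughly the full learning rate, because the decay term is rescaled by the adaptive denominator, while the decoupled variant shrinks the weight by exactly `lr * weight_decay * w`.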

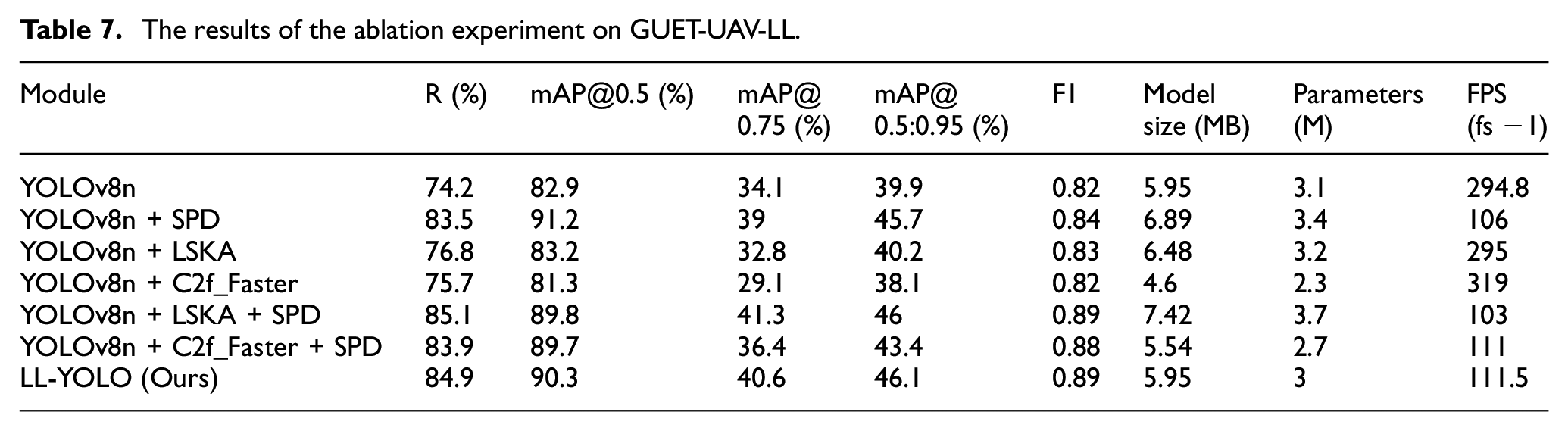

Ablation experiment

To analyze the specific functions of each improvement in LL-YOLO, we conducted additional ablation experiments. The experimental results are presented in Table 7.

The results of the ablation experiment on GUET-UAV-LL.

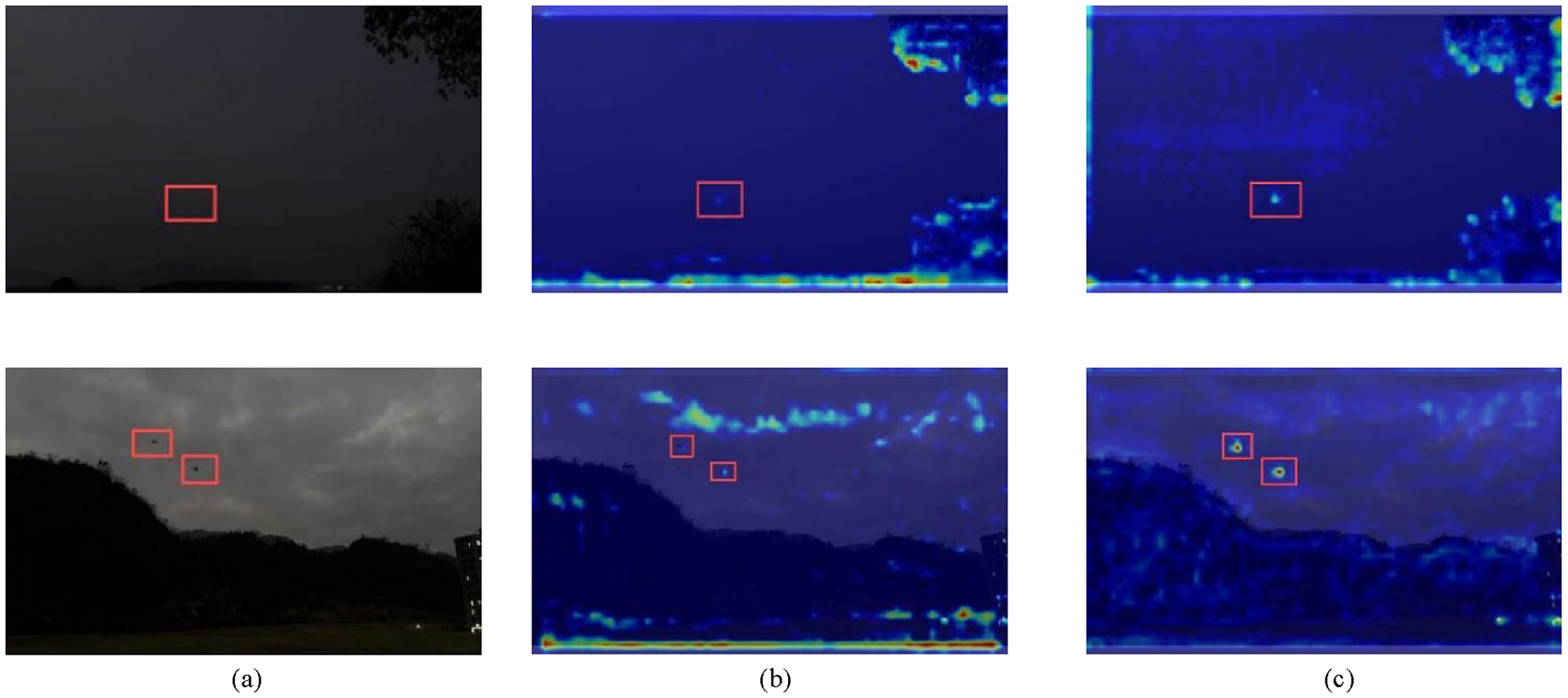

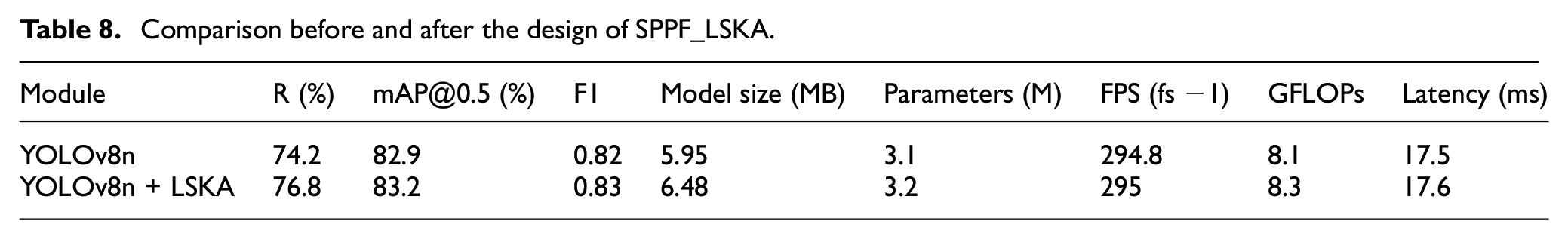

Following the introduction of SPD-Conv, Recall (R) increased by 9.3% compared to the original YOLOv8n, mAP@0.5 increased by 8.3%, mAP@0.75 increased by 4.9%, and mAP@0.5:0.95 increased by 5.8%. These results demonstrate that SPD-Conv significantly enhances the detection performance of YOLOv8n under low-light conditions. The use of LSKA alone resulted in a 2.6% improvement in Recall. To demonstrate the effect of LSKA on the model visually, we analyzed Grad-CAM heatmaps. Figure 13 shows that in the original YOLOv8n there is significant interference from the background at the bottom of the image, leading to false detections. LSKA helps YOLOv8n reduce background interference and focus more on the features of the target itself. We also analyzed how the LSKA design affects computational complexity alongside its benefits for detection accuracy. Table 8 compares the model before and after introducing SPPF_LSKA under identical validation and inference settings, and shows that LSKA enhances detection performance while incurring only a marginal increase in computational complexity.
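For readers unfamiliar with SPD-Conv, its space-to-depth step can be sketched as follows. A feature map is split into sub-maps by sampling every second pixel at each of the four offsets, and the sub-maps are stacked along the channel axis, so the spatial resolution halves without discarding any value; a non-strided convolution (omitted here) then follows. The nested-list representation, the function name, and the scale factor of 2 are assumptions for illustration, not the implementation used in LL-YOLO.

```python
def space_to_depth(feature_map, scale=2):
    """Rearrange a [C][H][W] nested list into
    [C*scale*scale][H/scale][W/scale] without dropping any value."""
    channels = len(feature_map)
    height = len(feature_map[0])
    width = len(feature_map[0][0])
    if height % scale or width % scale:
        raise ValueError("height and width must be divisible by scale")
    out = []
    # One group of sub-maps per (column offset, row offset) pair.
    for dx in range(scale):
        for dy in range(scale):
            for c in range(channels):
                sub = [[feature_map[c][y][x]
                        for x in range(dx, width, scale)]
                       for y in range(dy, height, scale)]
                out.append(sub)
    return out


# A single-channel 4x4 map becomes four 2x2 maps.
fmap = [[[0, 1, 2, 3],
         [4, 5, 6, 7],
         [8, 9, 10, 11],
         [12, 13, 14, 15]]]
spd = space_to_depth(fmap)
```

Unlike a strided convolution or pooling layer, which skips or merges pixels, this rearrangement keeps all 16 input values, which is the property that matters for targets only a few pixels wide.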

The comparison of Grad-CAM heatmaps: (a) input images, (b) YOLOv8n, (c) YOLOv8n + LSKA. The red box is artificially added for observation.
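The core Grad-CAM computation behind such heatmaps can be sketched in a few lines: each channel of a convolutional feature map is weighted by the spatial mean of the gradient of a target score with respect to that channel, the weighted channels are summed, and a ReLU keeps only positive evidence. The sketch below uses nested lists and toy values; the function name, the final normalization to [0, 1], and the inputs are illustrative assumptions, and the choice of layer and target score for a detector is not specified here.

```python
def grad_cam(activations, gradients):
    """activations, gradients: [K][H][W] nested lists for one layer
    and one target score. Returns an [H][W] heatmap."""
    height = len(activations[0])
    width = len(activations[0][0])
    # Channel weight = spatial mean of the gradient for that channel.
    weights = [sum(sum(row) for row in grad) / (height * width)
               for grad in gradients]
    heatmap = [[0.0] * width for _ in range(height)]
    for weight, act in zip(weights, activations):
        for y in range(height):
            for x in range(width):
                heatmap[y][x] += weight * act[y][x]
    # ReLU keeps only locations that increase the target score.
    heatmap = [[max(0.0, value) for value in row] for row in heatmap]
    # Normalization for display (an assumption, common in visualizations).
    peak = max(max(row) for row in heatmap)
    if peak > 0:
        heatmap = [[value / peak for value in row] for row in heatmap]
    return heatmap


# Toy example with two 2x2 channels.
acts = [[[1.0, 0.0], [0.0, 2.0]],
        [[0.0, 3.0], [1.0, 0.0]]]
grads = [[[1.0, 1.0], [1.0, 1.0]],        # mean  1.0
         [[-1.0, -1.0], [-1.0, -1.0]]]    # mean -1.0
cam = grad_cam(acts, grads)
```

In the toy example the second channel has a negative weight, so the locations where only it is active are suppressed by the ReLU and do not appear in the heatmap.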

Comparison before and after the design of SPPF_LSKA.

However, improving detection capability alone often compromises the network's real-time performance. Table 7 shows that employing SPD-Conv and the LSKA attention mechanism simultaneously increases the model parameters by 0.6M, making the detection model the largest among all evaluated models. Consequently, we introduced C2f_Faster to enhance network efficiency.

When C2f_Faster alone was applied to YOLOv8n, the Parameters decreased to 6.4 and the detection model size was reduced by 1.35 MB. When C2f_Faster was applied to speed up the combination of SPD-Conv and LSKA, R decreased by only 0.2% from the previous value of 85.1%, whereas mAP@0.5 increased by 0.5% from the previous value of 89.8%. The values of mAP@0.5:0.95 and F1 were the best among all models.
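Much of the saving from FasterBlock comes from FasterNet's partial convolution (PConv), which applies a regular convolution to only a fraction of the channels and passes the remaining channels through unchanged. The back-of-the-envelope sketch below compares weight counts for a regular k×k convolution and a PConv. The channel count of 64 and the 1/4 partial ratio are illustrative assumptions, not figures taken from Table 7, and bias terms and the pointwise layers that follow PConv inside a FasterBlock are ignored.

```python
def conv_weights(in_channels, out_channels, kernel_size):
    """Weight count of a regular convolution without bias."""
    return in_channels * out_channels * kernel_size * kernel_size


def pconv_weights(channels, kernel_size, partial_ratio=0.25):
    """Weight count of a partial convolution: only a subset of the
    channels is convolved, the rest are left untouched."""
    conv_channels = int(channels * partial_ratio)
    return conv_weights(conv_channels, conv_channels, kernel_size)


channels, kernel = 64, 3  # illustrative values, not taken from LL-YOLO
regular = conv_weights(channels, channels, kernel)
partial = pconv_weights(channels, kernel)
ratio = partial / regular
```

With a 1/4 partial ratio the convolved part needs 1/16 of the weights of the full convolution, which is why replacing Bottleneck with FasterBlock can offset the parameters added by other modules.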

Therefore, the combination of YOLOv8n, LSKA, SPD-Conv, and C2f_Faster, referred to as LL-YOLO, can maintain excellent performance while further reducing parameters and model size. The aforementioned ablation experiments underscore the significance of component design in enhancing the detection capabilities of UAVs under low-altitude and low-light conditions, thus validating the effectiveness of these measures in model optimization.

Model universality experiments

In detection tasks, generalization ability is an important criterion for evaluating a model. Because real scenarios are diverse and data distributions differ, a model must perform well across different datasets. To further verify the superiority of LL-YOLO, we conducted performance tests on existing public datasets.

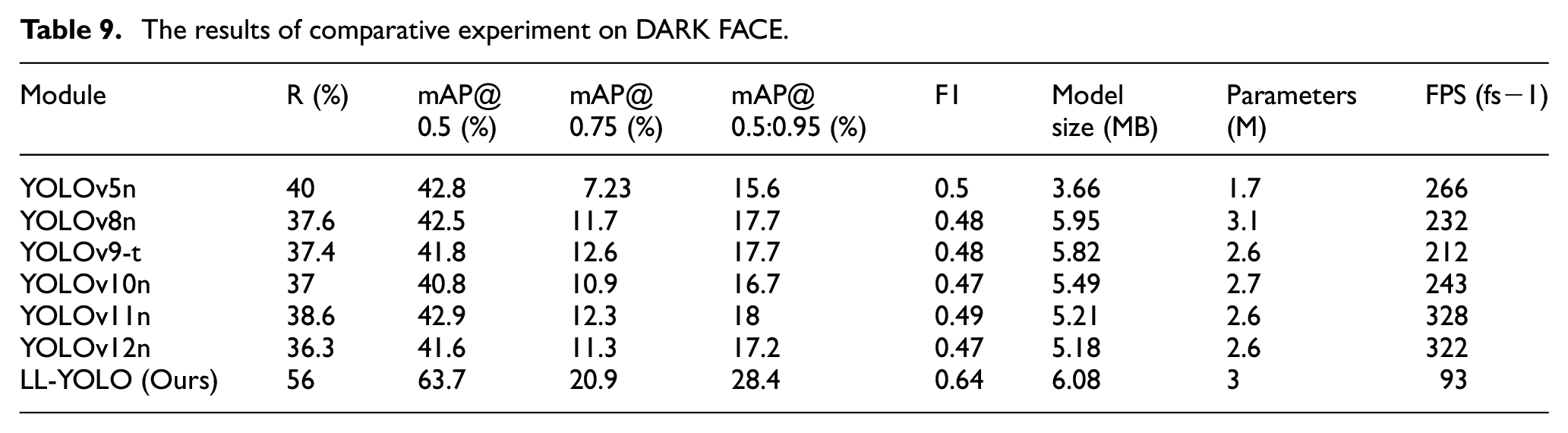

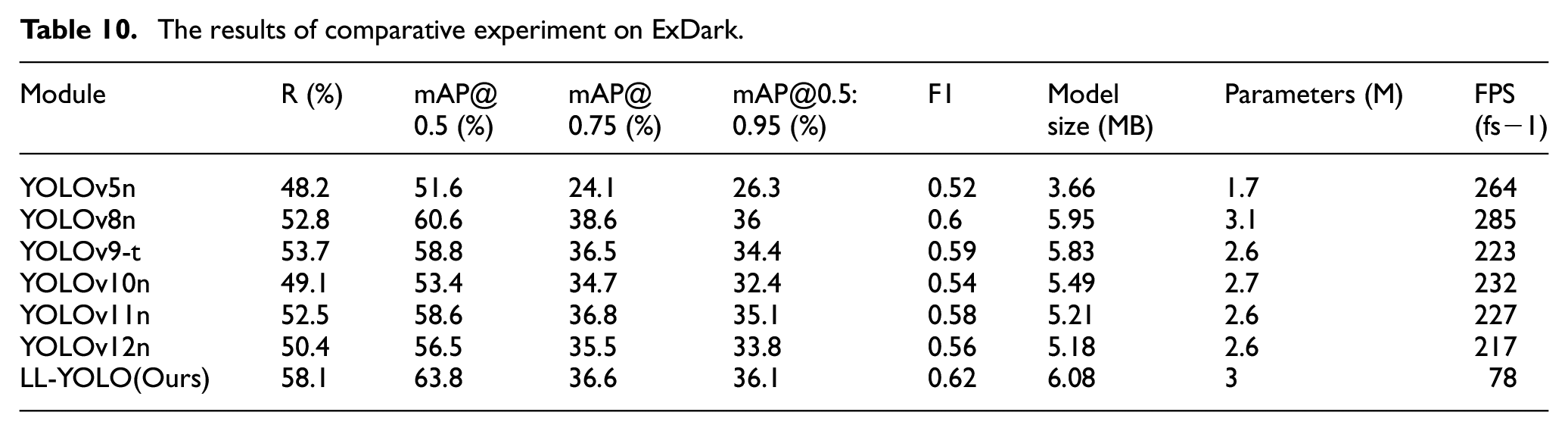

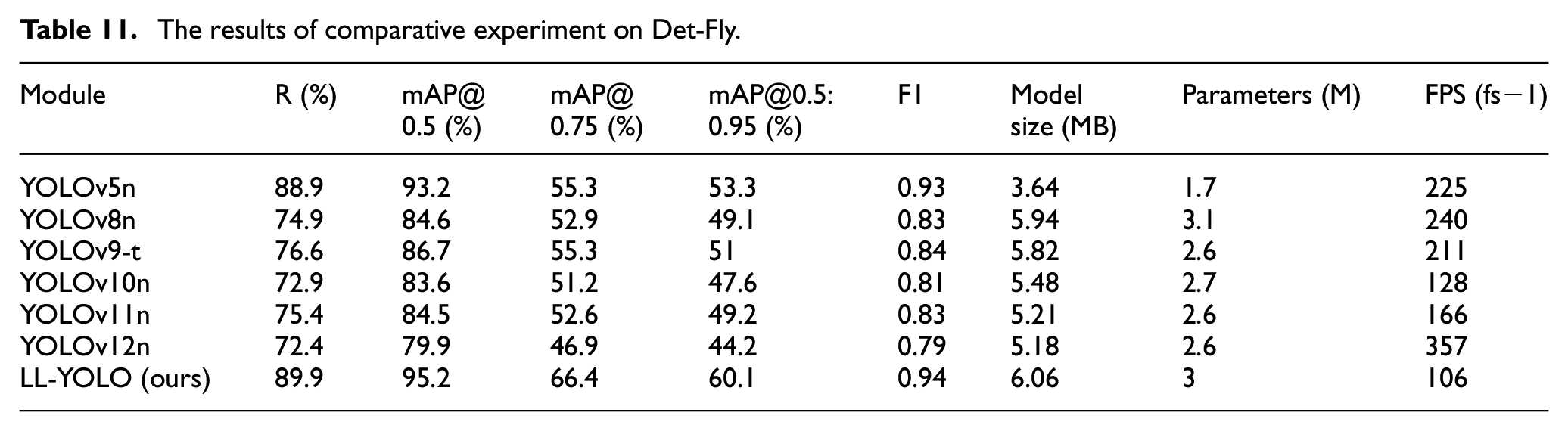

DARK FACE, 47 consists of 6000 low-light images captured at night in various locations, including educational buildings, streets, bridges, overpasses, and parks, all labeled with human faces. ExDark, 48 is used unchanged and encompasses 7363 low-light images across 10 different conditions and 12 object classes. Det-Fly, 44 is obtained by capturing micro drones with a monocular camera. The drones in its images are very small, and the dataset covers four different environmental backgrounds: sky, urban, rural, and mountainous areas. After removing all non-low-light images from the Det-Fly dataset, a total of 8401 images remained. The results of the experiments are presented in Tables 9 to 11. To avoid domain inconsistency, DARK FACE and ExDark were used strictly for testing and generalization analysis.

The results of comparative experiment on DARK FACE.

The results of comparative experiment on ExDark.

The results of comparative experiment on Det-Fly.

Figure 14 shows the detection results of different YOLO models on DARK FACE. LL-YOLO not only detects more faces but also achieves a certain improvement in confidence. As shown in Figure 15, despite the large size of the target, the performance of the original YOLO models is unsatisfactory, while LL-YOLO has the highest confidence. Unlike the original YOLOv8n model, LL-YOLO does not mistakenly detect the beach as a table. Figure 16 shows that the original YOLOv5n and YOLOv8n are prone to missed detections in low-light and complex background scenarios. YOLOv10n, although capable of correct detection, exhibits lower confidence. In contrast, LL-YOLO effectively eliminates background interference and achieves higher confidence while accurately detecting targets.

The comparison of different YOLO models on DARK FACE: (a) YOLOv5n, (b) YOLOv8n, (c) YOLOv10n, and (d) LL-YOLO.

The comparison of different YOLO models on ExDark: (a) YOLOv5n, (b) YOLOv8n, (c) YOLOv10n, and (d) LL-YOLO.

The comparison of different YOLO models on Det-Fly: (a) YOLOv5n, (b) YOLOv8n, (c) YOLOv10n, and (d) LL-YOLO.

Conclusion

In this study, a novel UAV detection model based on YOLOv8n was proposed, specifically designed to address the challenges of detecting UAVs in low-altitude, low-light, and complex environments. The framework uses EnlightenGAN to enhance dark images, which emphasizes the target features in the input before the images are processed by LL-YOLO. Additionally, the design of SPD-Conv effectively minimizes information loss during feature extraction, particularly for small targets like UAVs, ensuring the retention of critical features. Moreover, by integrating LSKA attention with SPPF, our framework captures a rich set of contextual information, which enhances the extraction of spatial features and reduces background interference. To further optimize computational efficiency and reduce memory usage, we replaced the Bottleneck block in C2f with the FasterBlock from FasterNet.

To validate the performance of the proposed method, we constructed the GUET-UAV-LL low-light dataset and conducted extensive experiments on this dataset as well as on publicly available datasets. The results demonstrated that the combination of EnlightenGAN and LL-YOLO led to significant improvements, with a 13.8% increase in Recall, an 8.1% increase in mAP@0.5, and a 0.08 increase in F1 score compared to the baseline YOLOv8n on the GUET-UAV-LL dataset. Additionally, the proposed model maintains lightweight and real-time detection capabilities, making it suitable for practical deployment in low-light environments.

Footnotes

Ethical considerations

This research does not involve human participants or animal experimentation.

Consent to participate

Not applicable.

Consent for publication

Not applicable.

Author contributions

Jun Ma: conceptualization, funding acquisition, methodology, supervision, review and editing. Zejie Sun: investigation, methodology and software, validation, visualization, and writing – original draft. Yuling Wu: investigation and visualization. Dongyang Jin: discussion and methodology.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Innovation Project of GUET Graduate Education (2024YCXS126).

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The data cannot be made publicly available upon publication because they contain sensitive personal information. The data that support the findings of this study are available upon reasonable request from the authors.