Abstract

In this study, we propose a monocular camera-based 3D detection technique that determines ship attributes, such as position, size, orientation, and relative distance, precisely in real time using visual sensor information. The detection algorithm leverages a keypoint detection network to predict the ship’s position, size, and heading direction. To enhance relative distance estimation accuracy, the network incorporates an explicit estimate of the pixel distance from the horizon line to the target ship. This design allows the network to exploit geometric cues more effectively and thereby improve distance estimation performance. To build the training data, AIS data and monocular camera images were collected synchronously in the vicinity of Busan Port. The AIS data were used to define ground truth annotations and label the training dataset. The experimental results confirm that the proposed method achieves a 5.4 percentage point improvement in 3D bounding box mAP and a 5.79 percentage point improvement in direction mAP over the baseline model. A novel contribution of this study lies in leveraging the pixel distance from the horizon line as a key geometric cue for improving monocular distance estimation. Furthermore, the integration of synchronously collected AIS data with visual imagery to generate high-quality training labels is a distinctive aspect of our approach.

Keywords

Introduction

In recent years, autonomous driving technology has advanced rapidly in the automotive and aviation sectors, ushering in revolutionary changes in society and industry. Currently, application of autonomous technology to the maritime sector is an active topic of research and development. Autonomous ship technology can be classified into perception, decision-making, and control technologies. Of these, perception technology is the most essential for autonomous functioning of ships. This involves detecting the surrounding environment using perception sensors and interpreting the data acquired from these sensors.

Commonly used sensors on autonomous ships include radars, automatic identification systems (AIS), Light Detection and Ranging (LiDAR) sensors, and cameras. These exhibit complementary relationships based on their strengths and weaknesses. Radar, which uses radio waves to measure the distance to an object, exhibits a wide detection range and robust operation with respect to varying weather conditions. However, despite its superior functionality, it exhibits relatively low resolution. AIS transmits and receives a ship’s basic and navigational information automatically, and is reputed for its highly accurate positional data. However, it features a slower data collection cycle than other sensors. LiDAR measures the distance to an object using light, and exhibits excellent accuracy. However, it is expensive and its detection range is relatively short. Finally, cameras capture images using light and acquire high-resolution images at low cost. However, they exhibit poor distance estimation performance, particularly in adverse weather conditions. A list of abbreviations and symbols used throughout this paper is provided in Table 1.

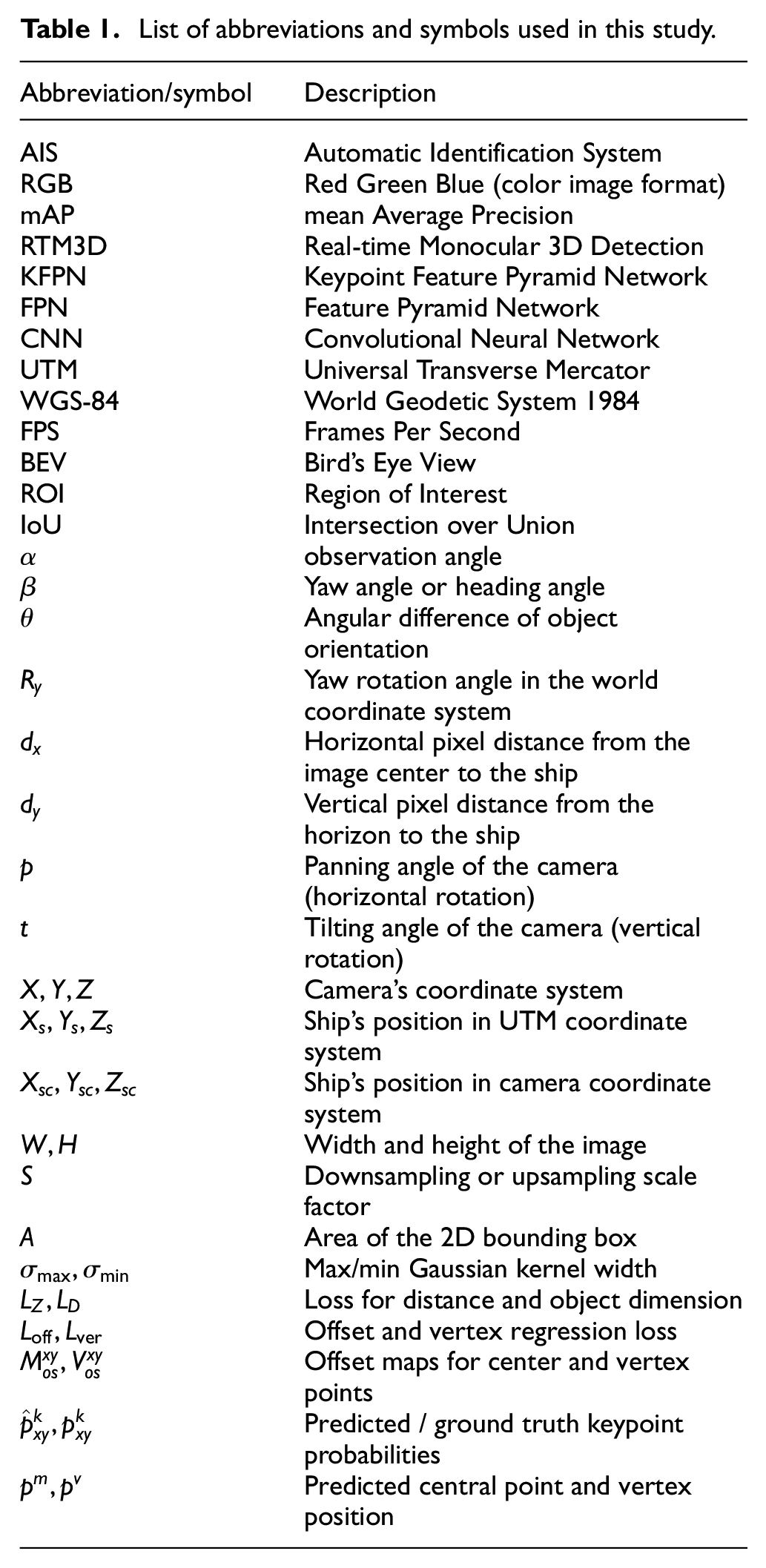

List of abbreviations and symbols used in this study.

Most early perception research relied on expensive LiDAR sensors, such as Velodyne units, to perceive the surroundings, which raised the implementation cost of practical systems. Consequently, interest has grown in vision-based perception systems using cameras, which offer a cost-effective alternative while still providing sufficient spatial awareness for many maritime and autonomous navigation applications. 3D object detection using a monocular camera has received significant attention as a promising way to reduce the implementation costs of such systems.1–4 This study presents a method for detecting other ships in the vicinity based solely on vision. To mitigate the limitations of monocular vision in relative distance estimation, AIS data were employed to initialize the labeling process. This approach enabled the generation of reliable training data for object detection, a core task in vision-based maritime perception.5–7 Object detection is the primary topic in research on camera-based perception. It involves localizing an object with a bounding box and classifying it. Object detection plays a crucial role in preventing collisions because it enables an autonomous ship to recognize and analyze the surrounding maritime environment and nearby ships in real time, thereby ensuring safe navigation. It can also optimize predicted collision courses in advance. This study proposes a ship detection system using a monocular camera that exhibits the aforementioned advantages.

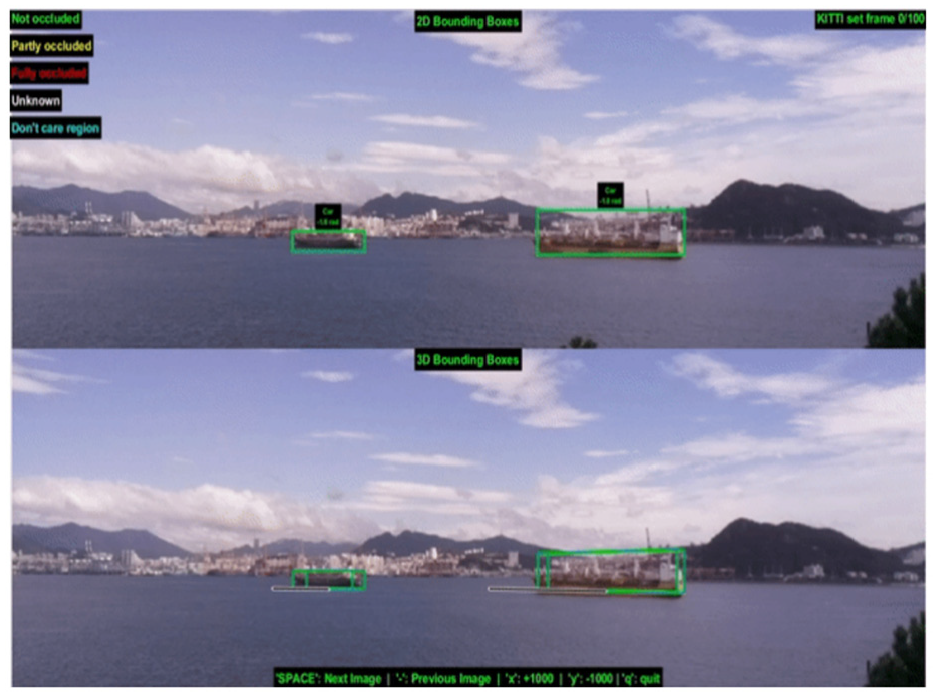

2D object detection technology is highly effective in identifying object locations and classes within images or videos accurately. However, such 2D methods are limited by their inability to fully understand actual 3D structures, locations, and relationships between pairs of objects. 3D object detection has garnered significant interest as a potential solution to this issue. 3D object detection not only predicts the presence and class ID of objects, like 2D detection, but also provides additional information, such as the object’s orientation, pose, and size. This technology is essential for understanding complex 3D structures of objects accurately and interacting with them more effectively in intricate environments. Figure 1 depicts the results of 2D and 3D object detection, displaying the results with 2D and 3D bounding boxes. In this study, we apply 3D object detection, which has traditionally been used on roads, to the maritime sector to detect nearby ships.

Comparison of 2D and 3D object detection results. The left image represents 2D bounding boxes, while the right image displays 3D bounding boxes, demonstrating the improved spatial awareness of the proposed method. 8

A potential issue with 3D object detection is erroneous depth prediction. This problem arises during the projection of a 3D bounding box onto a 2D screen, which is dependent on the accuracy of depth information. Errors occurring during this projection process serve as the primary cause of reduced detection performance, affecting the overall accuracy of the object detection results significantly.

To resolve this limitation, we employ an RTM3D network based on keypoint detection. 9 This network accurately discerns the relationship of the 3D bounding box corresponding to the object’s central point and predicts nine projected keypoints. This helps reduce the projection error substantially, leading to a marked improvement in the overall 3D object detection performance. This approach aims to compensate for projection errors in 3D object detection.

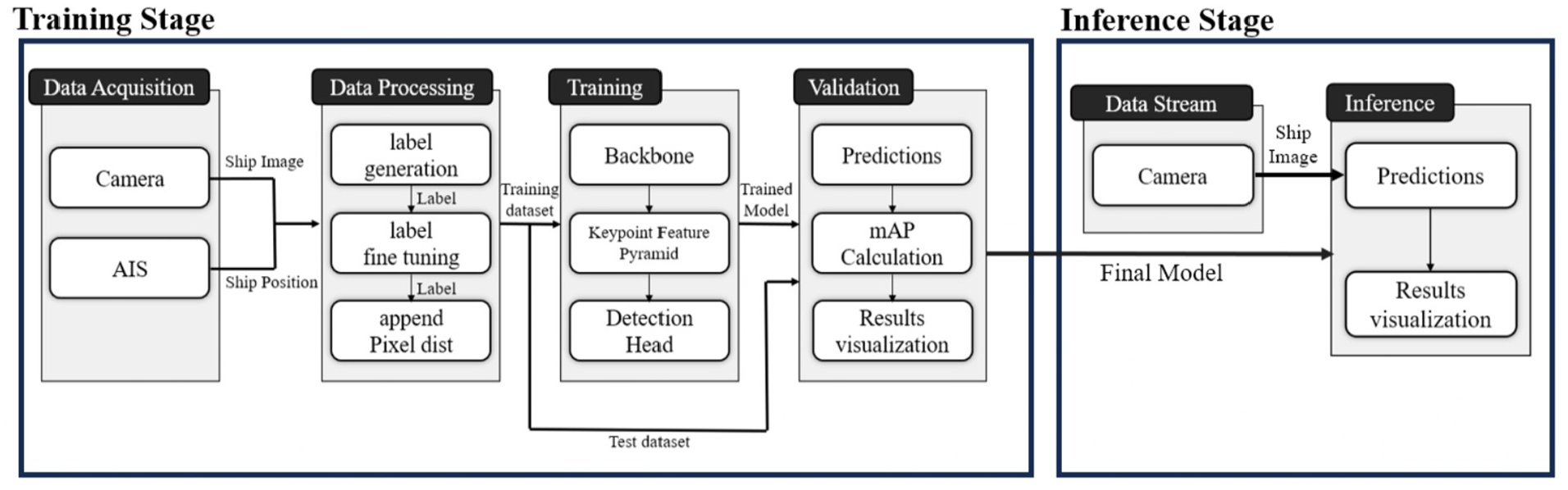

This study proposes a novel technique to improve the accuracy of ship detection in maritime environments. The proposed method is structured as shown in the flowchart in Figure 2. First, camera and AIS data are acquired, and the AIS data is used to label the position information of the ships. The labeled data is then fine-tuned by adjusting the size and orientation of the bounding boxes to generate more accurate labels. The final labeled data includes

Flowchart of the proposed 3D ship detection framework. The method consists of (1) data acquisition from cameras and AIS, (2) preprocessing through a three-step labeling process, and (3) model training using a Keypoint Detection Network. Performance evaluation is conducted using the mean Average Precision (mAP) metric, and the trained model is used for real-time 3D ship detection based on monocular camera images.

The remainder of the paper is organized as follows. Prior research that forms the foundation of our study is reviewed in Section 2. The keypoint detection network structure used in this study is described in detail in Section 3. The overall process, from data acquisition to the creation of training data, as well as the training process, is described in Section 4, along with the performance validation of the proposed method. Finally, the conclusions and future prospects of this study are presented in Section 5.

Related works

Object detection has evolved from 2D detection to 3D detection. This study focuses on 3D object detection using a monocular camera among these developments. In particular, it is based on 3D object detection that utilizes keypoint detection.

2D object detection

2D object detection technology has developed to detect the presence and location of various target objects and has been applied to marine environments for obstacle detection in autonomous ships. The introduction of deep learning has revolutionized research on 2D object detection, with Convolutional Neural Networks (CNNs) being utilized as a key technology for image classification and object detection. Based on this, various benchmarks have been developed, which researchers use to evaluate the performance of object detection and segmentation algorithms in diverse marine environments.

The SMD (Singapore Maritime Dataset) benchmark 10 provides a dataset for 2D object detection aimed at detecting ships and other marine objects in complex maritime environments. It includes sequences recorded under various weather conditions and times of day, making it suitable for evaluating the robustness of models in maritime settings. Additionally, the MODS (Marine Obstacle Detection Data) benchmark 11 focuses on comparing and evaluating various methods for marine obstacle detection and segmentation for unmanned surface vehicles (USVs). This benchmark comprises a dataset and a standardized evaluation protocol, through which the performance of 19 techniques is systematically analyzed. The MODD (Maritime Obstacle Detection Dataset) benchmark 12 employs a Markov Random Field framework to reflect the semantic structure of the marine environment. It features a constrained unsupervised learning approach for object segmentation and utilizes a highly efficient optimization algorithm that does not require the calculation of texture features in images. These benchmarks promote the advancement of object detection and segmentation technologies in maritime environments, providing researchers with standards to systematically compare the performance of various algorithms.

However, these 2D object detection methodologies only provide 2D information about the objects in the images and do not include information about the objects’ 3D position, orientation, or shape. To mitigate this challenge, research on 3D object detection is being conducted.

Horizon-based distance estimation in monocular maritime vision

Among various approaches to monocular distance estimation in maritime environments, the use of the horizon line has proven to be a particularly effective cue. Prior work in this area can be broadly categorized into two main strategies: geometry-based methods and deep learning-based methods. Geometry-based methods rely on known physical relationships, such as object dimensions and the position of the horizon in the image. For example, Gladstone et al. 13 introduced a geometric method that estimates the distance to marine vehicles by detecting the horizon line and the contact point of the vessel with the sea surface in video frames. By applying trigonometric calculations based on the camera’s mounting height and the angle between the horizon and the vessel, they achieved a mean absolute relative error of 7.1% in distance estimation. In contrast, deep learning-based approaches attempt to learn depth cues directly from visual data. For example, Vemula et al. 14 introduced DisBeaNet, a lightweight neural network that takes 2D bounding box features—such as size and vertical position—as input to estimate both distance and bearing. Although the horizon line is not explicitly fed into the network, the vertical position of the object—a feature inherently tied to the horizon—likely contributes to the model’s strong performance, especially in surface-level object detection. These studies suggest that both explicit and implicit utilization of horizon-related cues significantly contribute to improving monocular distance estimation at sea.
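The geometric idea behind horizon-based ranging can be illustrated with a minimal sketch. It assumes a flat sea surface, a roughly level camera, and a known focal length in pixels; the function name and parameters are hypothetical and are not taken from Gladstone et al. or from this study.

```python
import math


def distance_from_horizon_offset(dy_pixels, focal_length_px, camera_height_m):
    """Estimate the range to a waterline point from its pixel offset below the horizon.

    Flat-sea approximation (an assumption of this sketch): the horizon is
    treated as infinitely far away and the camera as level, so the depression
    angle below the horizon is atan(dy / f) and the range is h / tan(angle).
    """
    if dy_pixels <= 0:
        raise ValueError("the waterline must lie below the horizon (dy > 0)")
    depression = math.atan2(dy_pixels, focal_length_px)
    return camera_height_m / math.tan(depression)


if __name__ == "__main__":
    # Illustrative numbers only: 20 m camera height, 1000 px focal length.
    for dy in (5, 10, 20, 40):
        print(dy, round(distance_from_horizon_offset(dy, 1000.0, 20.0), 1))
```

Under these assumptions the range is inversely proportional to the vertical pixel offset, so ships close to the horizon line are the most sensitive to small pixel errors.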

Development of 3D object detection

3D object detection overcomes the limitations of 2D object detection by providing detailed information such as the direction, pose, and size of objects, significantly expanding the applicability of computer vision. This 3D object detection has become a key component in scene recognition and motion prediction in autonomous driving.15,16 The transformation brought about by this technology plays a crucial role in improving the accuracy of vessel recognition and environmental analysis, realized through the integration of various sensors and technologies. The S2S-sim benchmark 17 is a dataset developed for maritime environment awareness for autonomous ships, providing Unity3D simulation data rather than real maritime environment data. It is constructed by simulating navigation scenarios and LiDAR sensor parameters. Utilizing this dataset, a new region clustering fusion-based 3D object detection method has been proposed. However, a benchmark reflecting real maritime environment data is yet to be provided, leading researchers to use benchmarks designed for vehicle detection on roads for maritime studies.

The KITTI Dataset benchmark 18 is a large-scale benchmark dataset for autonomous driving research, including high-resolution images, LiDAR point clouds, GPS information, and more, collected in various driving scenarios. Due to the precision and consistency of the KITTI dataset format, it is also utilized in maritime environments, contributing to the research of autonomous technologies in these settings. Additionally, the OPV2V benchmark 19 provides a CARLA simulation dataset rather than real-world data. It is suitable for evaluating sensor-fused data and has been assessed using 16 different methods. A fusion pipeline has been proposed that maintains performance even when the data is compressed for transmission. This benchmark is also used for building sensor data sharing and perception systems in maritime environments.

Sensor-based approaches such as these have been limited in some applications due to the cost and complexity of the sensors. In contrast, 3D object detection using only single-camera data has emerged as a major research interest because it significantly reduces sensor cost and simplifies the processing steps.

Monocular 3D object detection

Compared to the various methods mentioned earlier, the majority of recent research focuses on detecting 3D objects using a single RGB image. 20 This approach has advantages such as simplifying the processing steps and reducing processing time.

The study by Dennis et al. 21 proposes a method for perceiving the position of 3D ships using only a monocular camera. Assuming that the ship is placed on a flat surface, this algorithm uses the principle of back-projection to restore depth information from a single image. It employs a hybrid approach that combines image detection, camera geometry, and back-projection. Additionally, it suggests a method for calculating the height of the object, facilitating the prediction of the ship’s size.

Despite these advantages, most studies on 3D detection for ship detection in real marine environments rely on data obtained through sensor fusion, and there has been little research on 3D object detection using monocular cameras. 3D object detection through sensor fusion increases system complexity and requires significant costs for installation and maintenance. Additionally, perception technology for autonomous ships has received less research interest compared to autonomous driving due to the lack of appropriate datasets. 17 As a result, there is currently no universally used benchmark for 3D object detection in marine environments.

This study proposes a method for 3D ship detection at sea using only a monocular camera. Additionally, AIS data is utilized as initial labeling data for camera-based detection to minimize prediction errors and enhance reliability. A dataset was also constructed by directly acquiring ship data at Busan Port. In this process, a labeling technique specialized for marine environments is proposed. This approach is expected to contribute to the advancement of maritime safety and the perception systems of autonomous ships.

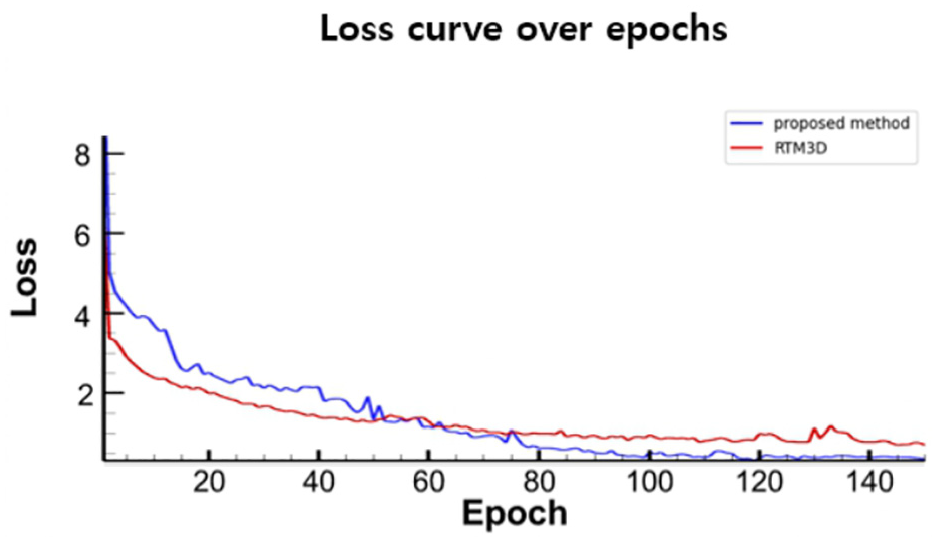

Network structure

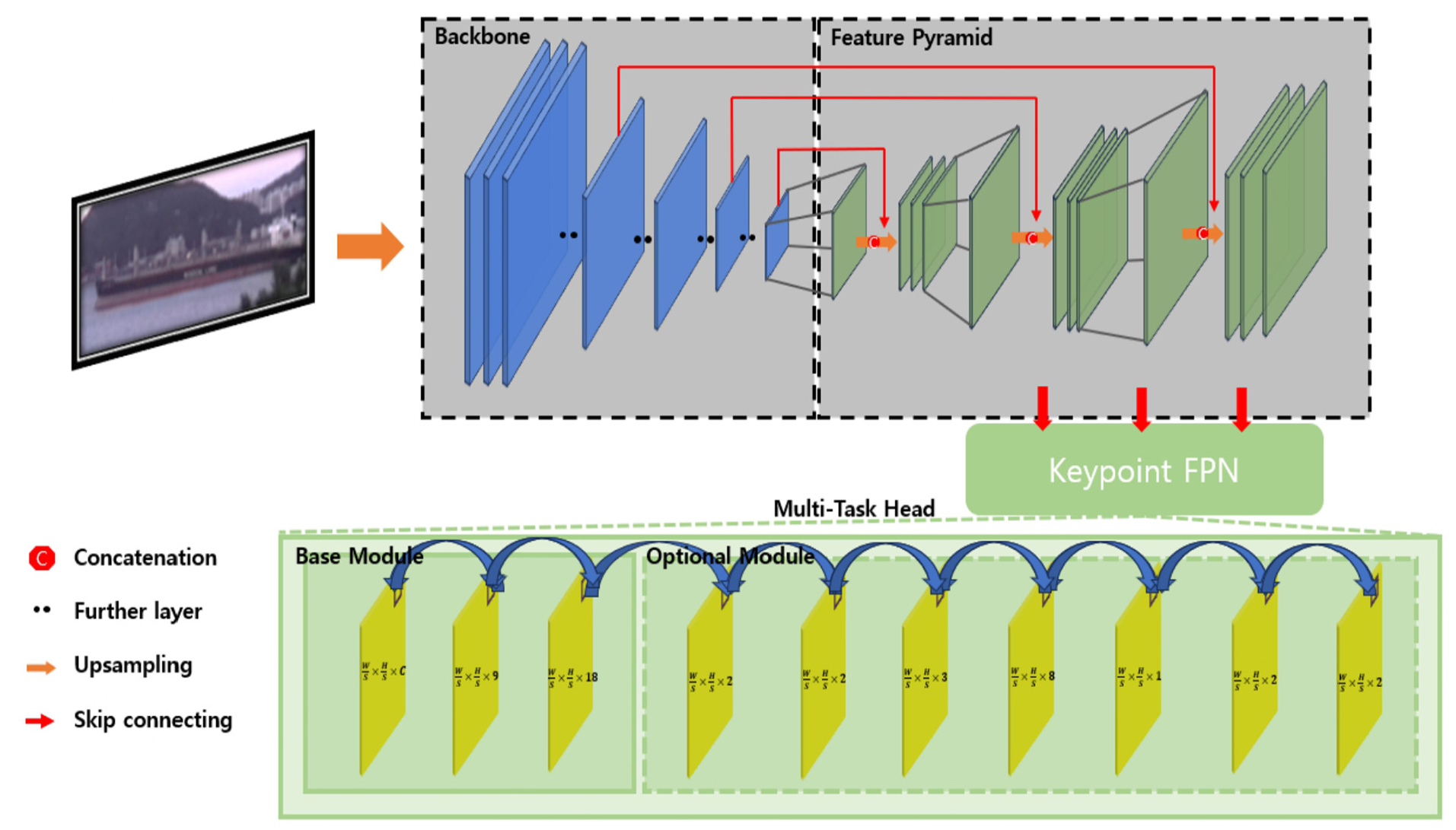

In this study, the neural network is built on RTM3D, a network grounded in keypoint detection. RTM3D was chosen because it first introduced the KFPN technique, which is optimized for keypoint detection, and is therefore expected to achieve more effective detection performance. The network takes RGB images captured by a monocular camera as input, predicts one center point and eight vertices of each 3D bounding box, and outputs these coordinates in the camera coordinate system. The network comprises a Backbone, Keypoint Feature Pyramid, and Detection Head, as illustrated in Figure 3.

Overview of the proposed Keypoint Detection Network. The input RGB image is processed through three main stages: (1) feature extraction via the Backbone, (2) multi-scale feature representation using the Keypoint Feature Pyramid Network, and (3) final object detection by the Detection Head. The network estimates 3D bounding boxes, and additional optional features can be integrated to enhance performance.
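To make the nine keypoints concrete, the following sketch builds the eight corners and the center of a 3D box and projects them with a pinhole model. It assumes the KITTI box convention (location at the bottom-center of the box, yaw about the camera y-axis, y pointing down); the function names and the intrinsic values are illustrative and are not taken from the RTM3D code.

```python
import math


def box_keypoints_3d(h, w, l, x, y, z, yaw):
    """Return the 8 box corners followed by the box center, in the camera frame.

    Assumed KITTI convention: (x, y, z) is the bottom-center of the box,
    y points down, and yaw is a rotation about the y-axis.
    """
    xs = [l / 2, l / 2, -l / 2, -l / 2, l / 2, l / 2, -l / 2, -l / 2, 0.0]
    ys = [0.0, 0.0, 0.0, 0.0, -h, -h, -h, -h, -h / 2]
    zs = [w / 2, -w / 2, -w / 2, w / 2, w / 2, -w / 2, -w / 2, w / 2, 0.0]
    c, s = math.cos(yaw), math.sin(yaw)
    points = []
    for px, py, pz in zip(xs, ys, zs):
        # Rotate about the y-axis, then translate to the box location.
        points.append((c * px + s * pz + x, py + y, -s * px + c * pz + z))
    return points


def project(points, fx, fy, cx, cy):
    """Project camera-frame points to pixel coordinates with a pinhole model."""
    return [(fx * X / Z + cx, fy * Y / Z + cy) for X, Y, Z in points]


if __name__ == "__main__":
    # Hypothetical ship: 10 m high, 8 m wide, 40 m long, 200 m ahead.
    keypoints = project(
        box_keypoints_3d(10.0, 8.0, 40.0, 0.0, 5.0, 200.0, 0.0),
        fx=1000.0, fy=1000.0, cx=612.0, cy=185.0,
    )
    for u, v in keypoints:
        print(round(u, 1), round(v, 1))
```

The network regresses these projected points directly; the sketch only shows the forward geometry that relates a 3D box to its nine image keypoints.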

Backbone

The backbone is predominantly used in machine learning and computer vision and represents the core structure of a neural network. It primarily refers to the fundamental networks utilized in deep learning models, particularly CNNs, for extracting features from images. This process involves identifying and extracting crucial features from input images, which are then employed in subsequent keypoint detection tasks.

ResNet-18 22 is selected as the backbone architecture used in this study. It exhibits fast training speed and supports stable learning in deep networks using residual connections. Despite having relatively few parameters, ResNet-18 exhibits outstanding performance.

The backbone accepts RGB images as inputs and performs downsampling on them. Downsampling reduces the image resolution while increasing the number of channels used to summarize information. This not only reduces computational complexity, but also enables the network to perceive information over a broader area. Subsequently, the bottleneck is upsampled three times, using three bilinear interpolations and a 1 × 1 convolution layer. Each bilinear interpolation step estimates new pixel values as a linear combination of neighboring pixel values, progressively restoring the spatial resolution of the feature map. The obtained feature map is then passed through another 1 × 1 convolution layer to reduce the channel dimensions, which brings the number of channels to 256, 128, and 64. 9 Finally, the resulting feature map is combined with low-level feature maps extracted during the initial stages of model operation. This allows the model to consider both high- and low-level information simultaneously.
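As a small illustration of the bilinear interpolation used in the upsampling stages, the sketch below samples a single-channel grid at fractional coordinates and upsamples it by an integer factor. It is a plain-Python illustration, not the network's implementation; the corner-aligned mapping in `upsample` is an assumption of this sketch, and deep learning frameworks offer other alignment conventions.

```python
def bilinear_sample(image, x, y):
    """Sample a 2D grid (list of rows) at continuous pixel coordinates (x, y)."""
    height, width = len(image), len(image[0])
    x = min(max(x, 0.0), width - 1.0)
    y = min(max(y, 0.0), height - 1.0)
    x0, y0 = int(x), int(y)
    x1, y1 = min(x0 + 1, width - 1), min(y0 + 1, height - 1)
    wx, wy = x - x0, y - y0
    # Blend horizontally on the two neighboring rows, then vertically.
    top = (1 - wx) * image[y0][x0] + wx * image[y0][x1]
    bottom = (1 - wx) * image[y1][x0] + wx * image[y1][x1]
    return (1 - wy) * top + wy * bottom


def upsample(image, factor):
    """Upsample by an integer factor, mapping output corners onto input corners."""
    height, width = len(image), len(image[0])
    out_h, out_w = height * factor, width * factor
    sy = (height - 1) / (out_h - 1) if out_h > 1 else 0.0
    sx = (width - 1) / (out_w - 1) if out_w > 1 else 0.0
    return [
        [bilinear_sample(image, c * sx, r * sy) for c in range(out_w)]
        for r in range(out_h)
    ]


if __name__ == "__main__":
    for row in upsample([[0.0, 1.0], [2.0, 3.0]], 2):
        print([round(v, 2) for v in row])
```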

The backbone with the aforementioned structure plays a crucial role in effectively extracting the necessary features from images. This enables the network to perform tasks, such as 3D ship detection, by utilizing the information extracted from the original RGB images.

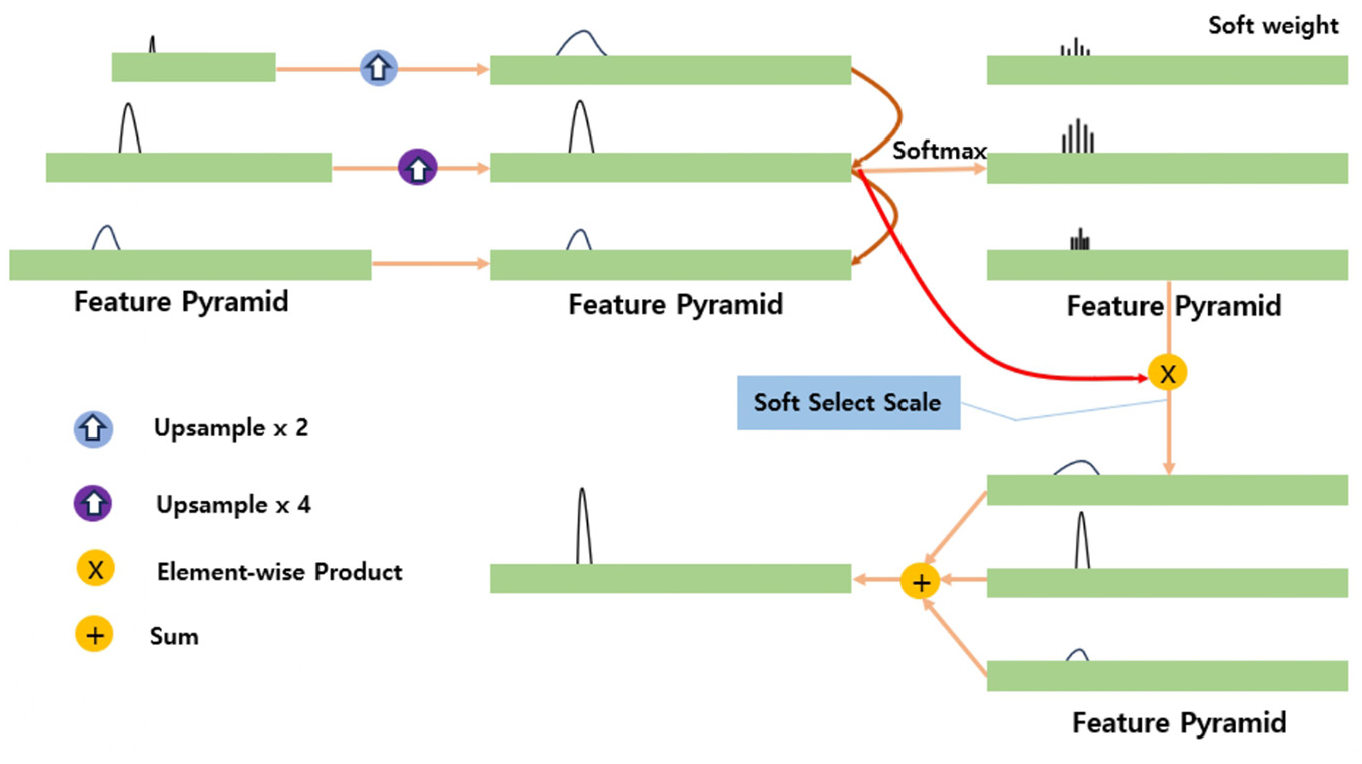

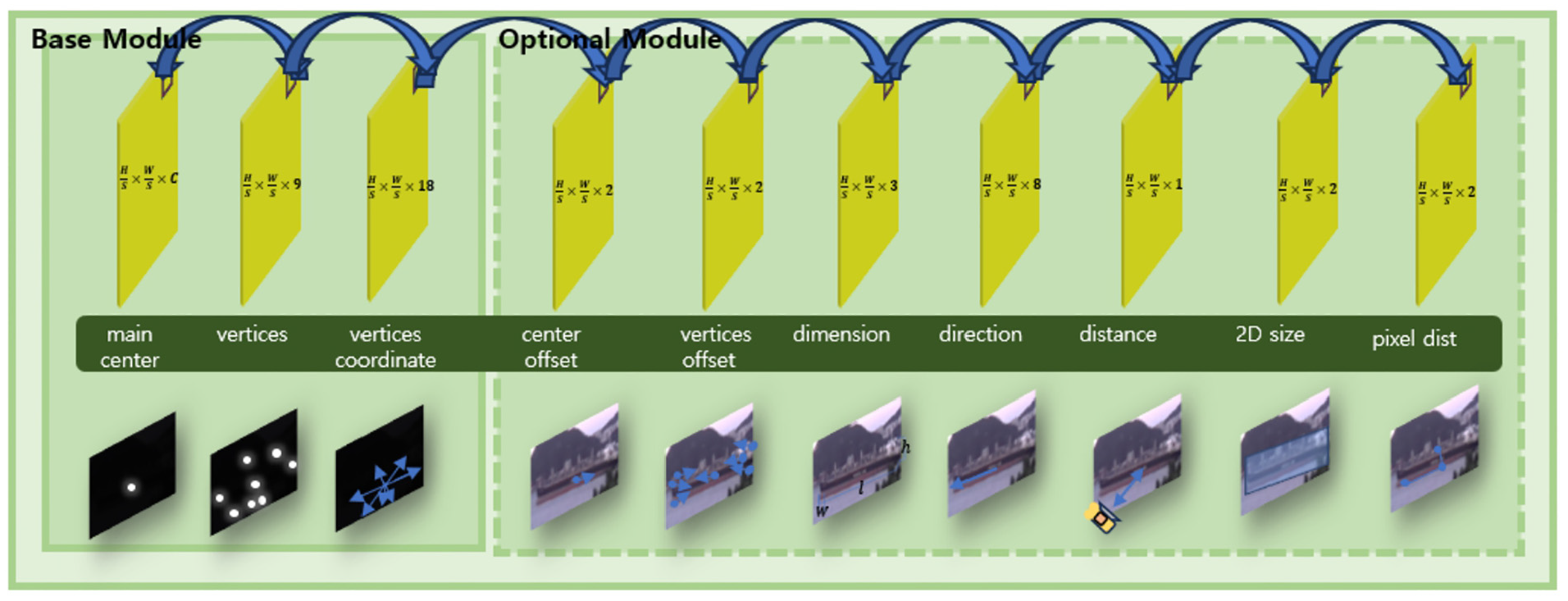

Keypoint feature pyramid network

A KFPN is designed for keypoint detection. As the size of the central points within an image is fixed, the traditional Feature Pyramid Network (FPN) method described by Lin et al. (2017), 23 which uses bounding boxes to detect objects of various sizes, is unsuitable for keypoint detection. Instead, KFPN is effectively utilized for the detection of scale-invariant keypoints.

The process of KFPN is illustrated in Figure 4. Each feature map,

Architecture of the Keypoint Feature Pyramid Network (KFPN), illustrating the multi-scale feature extraction process. Softmax-based weighting is applied to improve keypoint detection across different scales, ensuring robustness in ship detection tasks.

By adjusting feature maps of various scales, features obtained at different scales can be compared on a consistent basis, thereby enhancing the consistency of keypoint detection. Further, it enables the detection of scale-invariant keypoints. This is a crucial step because the same keypoint can appear differently at different scales. Therefore, using this approach, consistent keypoints can be detected from feature maps of various scales extracted from images, leading to the effective detection of 3D objects. The process for calculating the scale-space score is given by equation (1), where ⊙ represents element-wise multiplication.
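One reading of the softmax-weighted fusion described above is that, once all feature maps have been resized to a common resolution, each pixel's values across scales are weighted by a softmax over those same values and summed, that is, the score is the sum over scales of softmax(F) ⊙ F. The sketch below implements that reading for single-channel maps; the function name is hypothetical and the resizing step is assumed to have been done already.

```python
import math


def scale_space_score(resized_maps):
    """Fuse same-size feature maps with per-pixel softmax weights across scales.

    resized_maps: list of 2D grids (lists of rows), one per scale, all already
    resized to the same height and width (an assumption of this sketch).
    """
    height, width = len(resized_maps[0]), len(resized_maps[0][0])
    fused = [[0.0] * width for _ in range(height)]
    for r in range(height):
        for c in range(width):
            values = [m[r][c] for m in resized_maps]
            peak = max(values)  # subtract the max for numerical stability
            exps = [math.exp(v - peak) for v in values]
            total = sum(exps)
            # Element-wise product of the softmax weights and the values.
            fused[r][c] = sum(v * e / total for v, e in zip(values, exps))
    return fused


if __name__ == "__main__":
    coarse = [[0.1, 0.2], [0.0, 3.0]]
    fine = [[0.1, 2.0], [0.0, 0.5]]
    print(scale_space_score([coarse, fine]))
```

Because the weights come from a softmax, the scale with the strongest response dominates each pixel, while equal responses are simply averaged.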

Detection Head

Finally, the Detection Head utilizes the feature map generated by KFPN to detect 3D objects. Inspired by the CenterNet approach, our network predicts the central points of 3D objects. On this basis, additional characteristics of the 3D bounding box can be inferred simultaneously. This step involves determining whether each pixel in an image is a keypoint that distinguishes 3D objects from 2D images. A noteworthy feature of this technique is its ability to predict the location of an object as long as the central point of the 3D object lies within the image boundary, even partially. 9 These elements include the loss value for each predicted keypoint; the size, orientation, and relative distances of the 3D bounding boxes from the camera; the size of the 2D bounding boxes; the horizontal pixel distance between the image’s center point and the ship’s center of mass; and the vertical pixel distance between the horizon and the ship. The accuracy of 3D object detection can be enhanced by estimating these additional elements. In this study, we adopted learning options to predict all the additional elements.

3D ship detection

This section presents the proposed pixel distance learning process for 3D ship detection. To validate the proposed method, the results of training with the conventional KITTI dataset label format were compared with those obtained when the pixel distance was added to the labels.

Data acquisition process

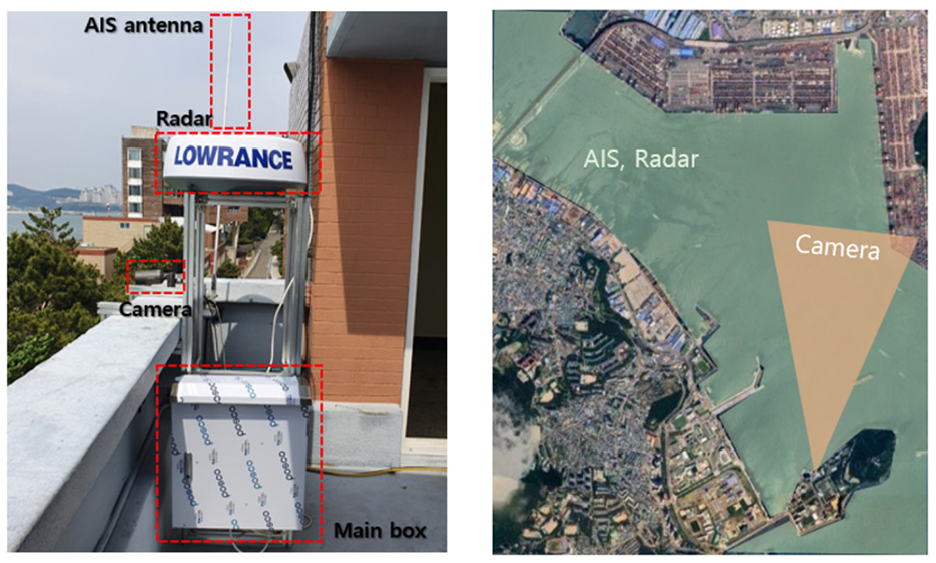

In this study, learning data were collected using ship data acquisition equipment installed at the Korea Maritime and Ocean University. This equipment, as illustrated in Figure 5, was installed facing the Gamman Pier direction to observe ships entering and exiting Busan Port and collect camera footage, AIS information, and radar images. This study uses camera footage and AIS data. The camera captured video data of ships moving through Busan Port at a resolution of 640 × 480. AIS provides both static information containing ship specifications and dynamic information containing navigation data. In this study, the location information of the ships moving through Busan Port obtained from AIS is used as the initial data for labeling.

Illustration of the data acquisition setup used in this study. The system comprises a monocular camera, AIS, and radar sensors, installed at Korea Maritime and Ocean University overlooking Busan Port. This study specifically utilizes camera and AIS data for 3D ship detection.

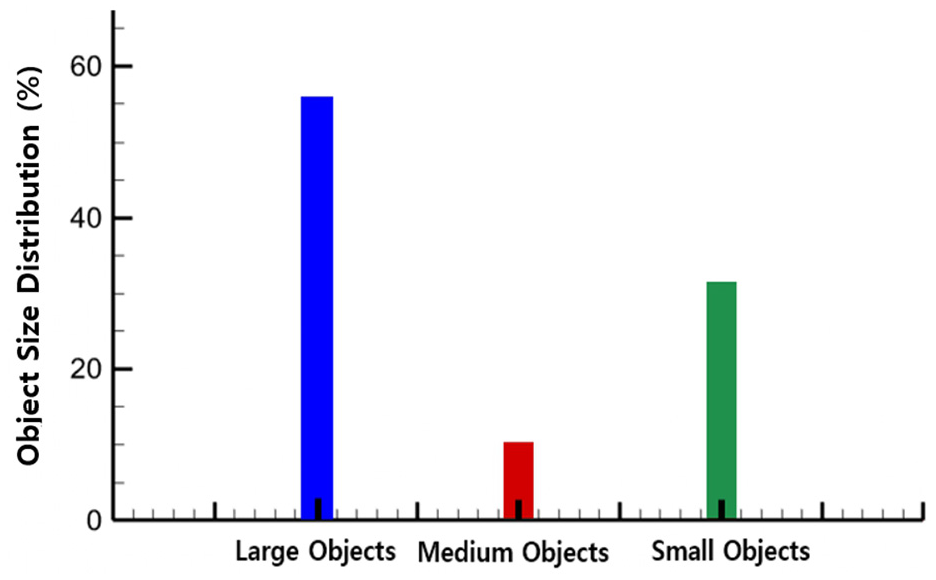

The images are resized during the preprocessing stage of training. The monocular camera images were resized to match the KITTI Dataset image size of 1224 × 370. During this process, the sky and land portions were cropped out, leaving only the sea area. This restricts the training data to the region of interest (ROI) where ships are located, which helps reduce noise and unnecessary information in the data. The acquired data consists of 2500 training images, 500 validation images, and 500 test images, which were used for training and performance evaluation. Figure 6 shows a graph illustrating the proportion of object sizes based on 2D bounding boxes in the dataset. A small object is defined as an object occupying less than 10% of the image width, a medium object occupies between 10% and 20% of the image width, and a large object occupies more than 20% of the image width.

Distribution of object sizes in the dataset, classified by the proportion of the image width occupied by each 2D bounding box. Small objects account for 32% of the objects, medium objects for 12%, and large objects for 56%.
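The size categories defined above can be expressed as a short helper. This is a sketch only: the function names are hypothetical, and treating the exact 10% and 20% boundaries as "medium" is an assumption, since the text does not say which side they fall on.

```python
from collections import Counter


def size_category(bbox_width_px, image_width_px):
    """Classify a 2D box as small, medium, or large by its share of the image width."""
    ratio = bbox_width_px / image_width_px
    if ratio < 0.10:
        return "small"
    if ratio <= 0.20:  # boundary handling is an assumption of this sketch
        return "medium"
    return "large"


def size_distribution(bbox_widths_px, image_width_px):
    """Return the fraction of boxes in each size category."""
    counts = Counter(size_category(w, image_width_px) for w in bbox_widths_px)
    total = sum(counts.values())
    if total == 0:
        return {"small": 0.0, "medium": 0.0, "large": 0.0}
    return {name: counts[name] / total for name in ("small", "medium", "large")}


if __name__ == "__main__":
    # Illustrative box widths in pixels for a 1224-pixel-wide image.
    print(size_distribution([100, 200, 400, 500], 1224))
```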

Creation of training labels

First, labeling data are generated in accordance with the KITTI Dataset label format, which reflects 3D information. Subsequently, additional information proposed in this study is incorporated to produce the final labeling data. Additionally, to optimize the model’s performance by focusing on the ship class, the classes have been limited to only one class, ships. The labeling data are generated via three stages, which are described below.

The first-stage labeling process utilizes AIS data to label the location information of ships. AIS data encompass various types of information, including basic static information about ships and dynamic information reflecting their current status. For this study, only ship location information is extracted from the AIS data. This selective use follows from the research objective, which is to detect ships in 3D based solely on vision. AIS therefore supplies only the minimum data needed to compensate for the limitations of the monocular camera, and this data serves as the initial input for labeling.

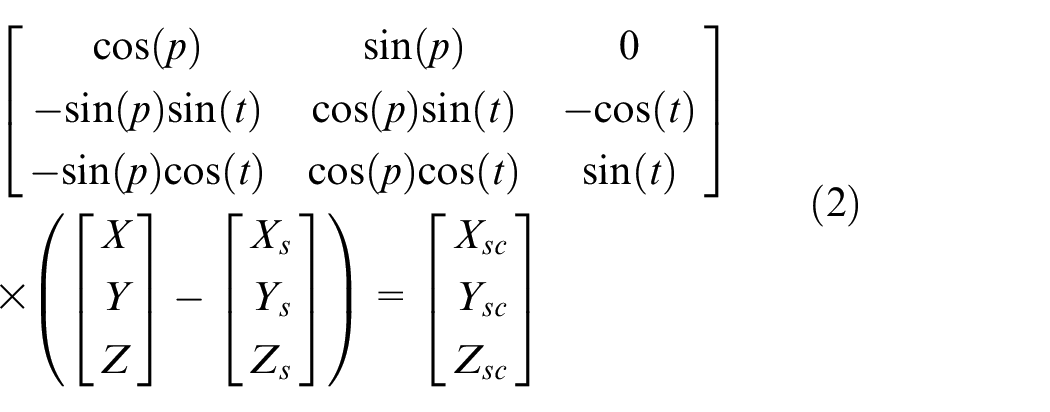

The ship location coordinates obtained from the AIS sensor are based on the WGS-84 (World Geodetic System 1984) coordinate system. The WGS-84 coordinate system considers the shape of the Earth and defines a coordinate system with the center of the Earth’s ellipsoid as its origin. These coordinates are converted into the Universal Transverse Mercator (UTM) coordinate system. The UTM system divides Earth into 60 longitudinal zones, each 6° wide, and applies a 2D planar coordinate system to each zone. By doing so, the UTM coordinate system facilitates the calculation of distance and direction between two points using the simple 2D planar coordinate system.
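As a small worked example of the zone layout, the standard UTM zone number follows directly from the longitude, with 60 zones of 6° each starting at 180°W. The sketch below ignores the special zone exceptions around Norway and Svalbard, which do not affect Busan; the function names are illustrative.

```python
import math


def utm_zone(longitude_deg):
    """Return the UTM zone number (1 to 60) for a longitude in degrees."""
    lon = ((longitude_deg + 180.0) % 360.0) - 180.0  # wrap into [-180, 180)
    return int(math.floor((lon + 180.0) / 6.0)) + 1


def central_meridian(zone):
    """Return the central meridian of a UTM zone in degrees east."""
    return (zone - 1) * 6 - 180 + 3


if __name__ == "__main__":
    busan_longitude = 129.08  # approximate longitude of Busan Port
    zone = utm_zone(busan_longitude)
    print(zone, central_meridian(zone))
```

For a longitude near 129°E this gives zone 52, whose central meridian is 129°E, so projection distortion around Busan Port is small.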

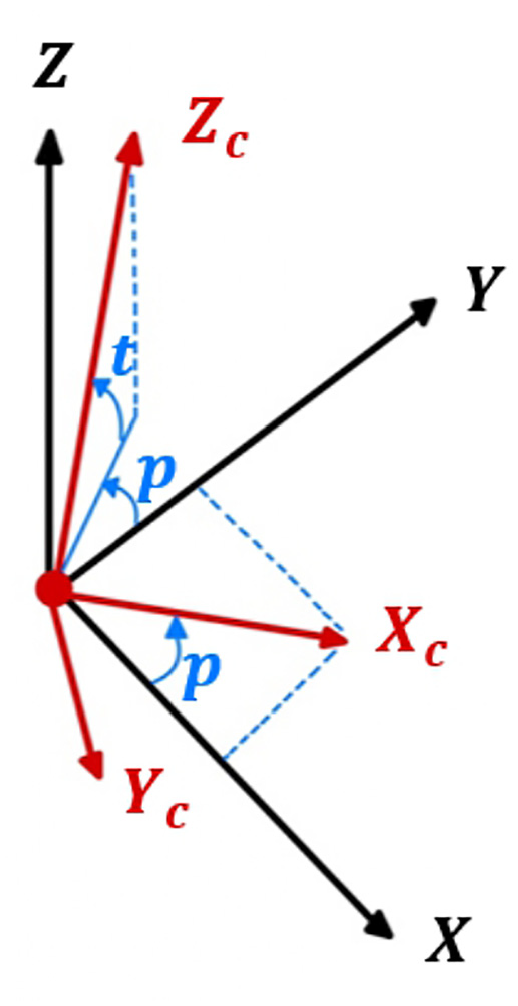

Subsequently, the ship’s location, which is represented in UTM coordinates, is converted into coordinates based on the camera’s coordinate system. As depicted in Figure 7, the Earth-defined coordinate axes correspond to the latitude and longitude on the horizontal plane, defining the x- and y-axes, respectively, with the z-axis pointing perpendicularly toward the horizon. In contrast, the camera coordinate system defines its axes using the camera’s location as the origin, the frontal direction of the camera as the z-axis, its right as the x-axis, and its bottom as the y-axis. Considering the pinhole camera model, the latitude and longitude coordinates of the target are converted to the ship’s location based on the camera’s coordinate system.

Comparison between the world coordinate system (WGS-84/UTM) and the camera coordinate system. The transformation process ensures accurate localization of ships within the camera’s field of view.
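The change from planar UTM coordinates to the camera frame can be sketched as a 2D rotation by the camera heading. This is an illustration under stated assumptions, not the exact formula used in the study: the camera is assumed level, its heading is assumed to be measured clockwise from grid north, grid convergence is ignored, and the function and parameter names are hypothetical.

```python
import math


def ship_in_camera_frame(camera_en, ship_en, camera_heading_deg, camera_height_m):
    """Convert a ship's UTM (easting, northing) to camera coordinates in meters.

    Camera frame: x to the right, y downward, z along the viewing direction.
    The sea surface is assumed to lie camera_height_m below the camera.
    """
    d_east = ship_en[0] - camera_en[0]
    d_north = ship_en[1] - camera_en[1]
    psi = math.radians(camera_heading_deg)
    x = d_east * math.cos(psi) - d_north * math.sin(psi)  # rightward offset
    z = d_east * math.sin(psi) + d_north * math.cos(psi)  # forward distance
    y = camera_height_m  # waterline is below the camera, and y points down
    return x, y, z


if __name__ == "__main__":
    # Illustrative values: camera looking due east, ship 300 m east and 40 m north.
    x, y, z = ship_in_camera_frame((0.0, 0.0), (300.0, 40.0), 90.0, 20.0)
    print(round(x, 1), round(y, 1), round(z, 1), round(math.hypot(x, z), 1))
```

With the camera looking east, a ship to the north appears at negative x, that is, to the left of the optical axis.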

The formula used to determine the relative position of a ship in meters is given by equation (2). Here,

The second-stage labeling process utilizes camera image data to make fine adjustments to the size and orientation of the ships. This process employs the 3D Labeling software presented in Figure 8, which enables adjustments to the size, position, and orientation of 3D bounding boxes. The 3D Labeling software is based on the Development Kit provided by the KITTI Dataset, which was constructed on MATLAB. This kit includes features that allow modifications to information related to 3D bounding boxes. In this study, functions in the Development Kit related to angle and orientation are modified to perform labeling suited to the ship images used during the learning process.

MATLAB-based 3D labeling software used for ship detection annotation. The interface allows fine-tuning of 3D bounding boxes, automatically updating corresponding 2D bounding box pixel coordinates.

This study utilizes the RTM3D network, which was designed to detect automobiles on roads, and adapts it for ship detection by modifying it to a network specialized for maritime environments. This adaptation involves a third-stage labeling process to incorporate horizon information, and the network is adjusted to learn from additional data.

The third-stage labeling process involves the addition of pixel distance data to the label data processed during second-stage labeling. The concept of pixel distance is used to construct a network with enhanced detection accuracy. The pixel distance comprises

Illustration of horizontal and vertical pixel distance calculations. The top image shows the horizontal pixel distance (dx) between the image center and the ship’s center of gravity, while the bottom image shows the vertical pixel distance (dy) from the horizon to the ship. These measurements contribute to improved distance estimation.
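The two pixel distances in the figure can be computed from a ship's image position and a horizon line. The sketch below is one possible formulation: the horizon is assumed to be given by two image points, the sign conventions (dx positive to the right of the image center, dy positive below the horizon) are assumptions, and the function names are hypothetical.

```python
def horizon_row_at(u, horizon_p1, horizon_p2):
    """Return the image row of the horizon line at column u (linear interpolation)."""
    (u1, v1), (u2, v2) = horizon_p1, horizon_p2
    if u1 == u2:
        raise ValueError("horizon points must have different columns")
    t = (u - u1) / (u2 - u1)
    return v1 + t * (v2 - v1)


def pixel_distances(ship_center, image_width, horizon_p1, horizon_p2):
    """Return (dx, dy): offset from the image center column and from the horizon."""
    u, v = ship_center
    dx = u - image_width / 2.0
    dy = v - horizon_row_at(u, horizon_p1, horizon_p2)
    return dx, dy


if __name__ == "__main__":
    # Illustrative values for a 1224 x 370 image with a slightly tilted horizon.
    print(pixel_distances((712.0, 150.0), 1224, (0.0, 98.0), (1224.0, 102.0)))
```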

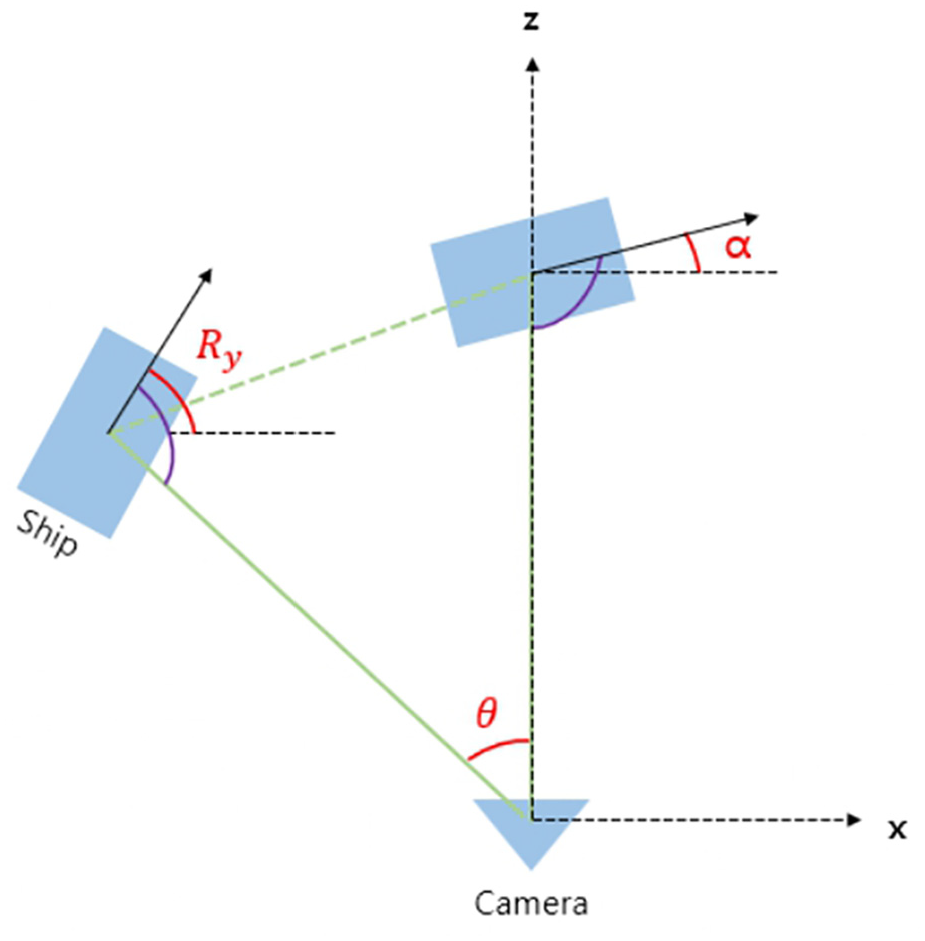

The final labeling data generated using the aforementioned process includes the object name, degree of occlusion and truncation of the object, pixel coordinates of the 2D bounding box, coordinates and size of the 3D bounding box in terms of the camera coordinate system, observation angle

As illustrated in Figure 10, the observation angle

Comparison of observation angle (

Loss function

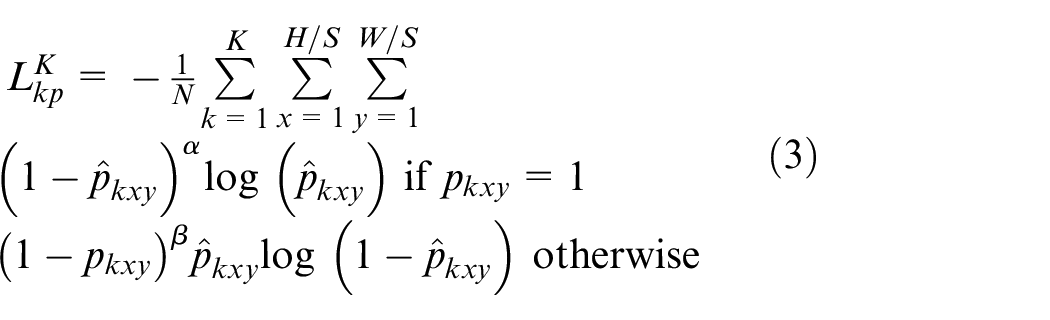

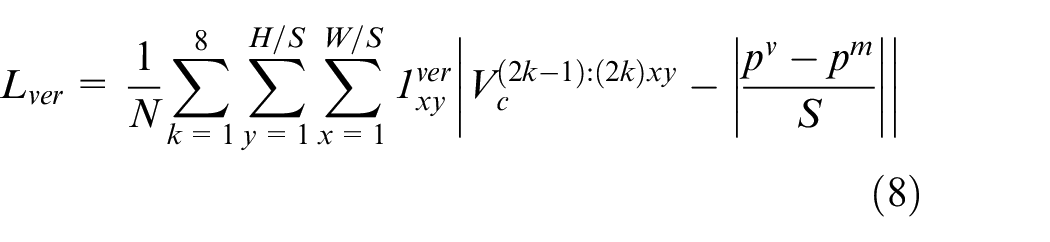

In this study, keypoint learning is conducted using the heatmap strategy proposed by Law and Deng 24 and Zhou et al. 25 The calculation of the keypoint loss value follows the process presented in equations (3)–(9). The focal loss of the keypoint is determined using the process given by equation (3), as per Lin et al. 26
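For orientation, the heatmap-based keypoint loss commonly used with this strategy is a penalty-reduced pixel-wise focal loss. The sketch below implements that general form in plain Python. The exponents alpha = 2 and beta = 4 are the values commonly reported for this loss and are an assumption here, as is the exact form, which may differ in detail from equation (3) of this study.

```python
import math


def keypoint_focal_loss(pred, target, alpha=2.0, beta=4.0, eps=1e-12):
    """Penalty-reduced focal loss between a predicted and a target heatmap.

    pred and target are 2D grids with values in [0, 1]; target equals 1 at
    keypoint locations and decays with a Gaussian around them.
    """
    total, num_positive = 0.0, 0
    for pred_row, target_row in zip(pred, target):
        for p, y in zip(pred_row, target_row):
            p = min(max(p, eps), 1.0 - eps)  # keep log() finite
            if y == 1.0:
                total += (1.0 - p) ** alpha * math.log(p)
                num_positive += 1
            else:
                # Pixels near a keypoint (y close to 1) are penalized less.
                total += (1.0 - y) ** beta * p ** alpha * math.log(1.0 - p)
    return -total / max(num_positive, 1)


if __name__ == "__main__":
    target = [[0.0, 0.6, 1.0, 0.6, 0.0]]
    good = [[0.01, 0.5, 0.95, 0.5, 0.01]]
    poor = [[0.4, 0.4, 0.4, 0.4, 0.4]]
    print(keypoint_focal_loss(good, target), keypoint_focal_loss(poor, target))
```

The loss is normalized by the number of keypoints, so images containing many ships do not dominate the gradient.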

Here,

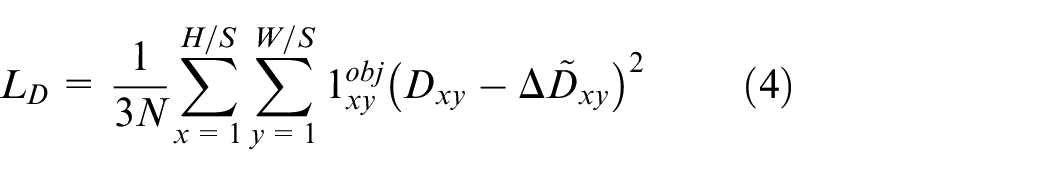

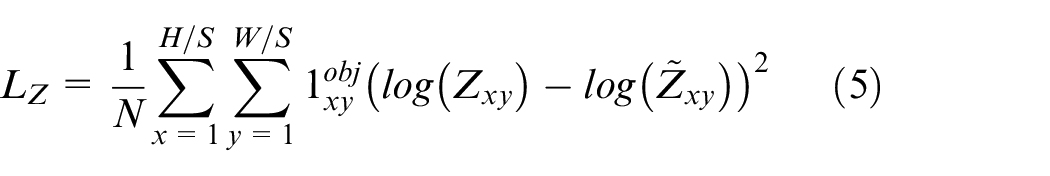

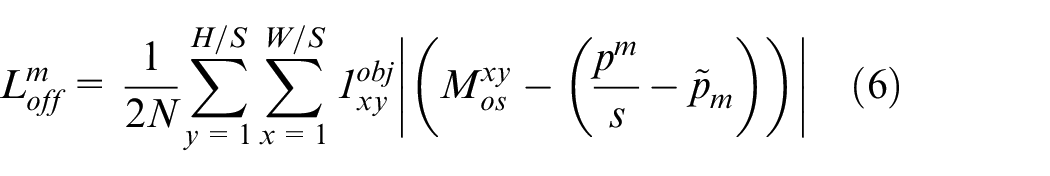

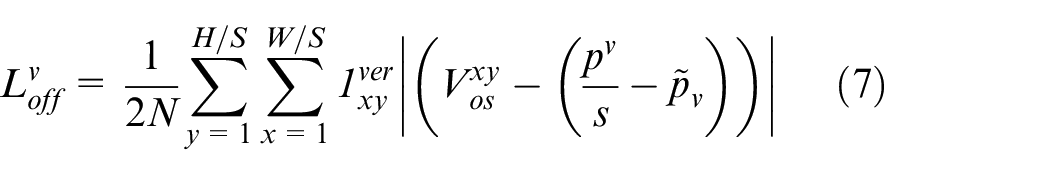

The loss related to the size of an object is obtained via the process given by equation (4), whereas the loss related to the distance of an object is derived via the process given by equation (5):

The offset losses related to the object’s central point and each vertex are calculated by following the processes given by equations (6) and (7), respectively, as per Zhou et al. 25

In this case,

The keypoint

Regression loss, that is, the

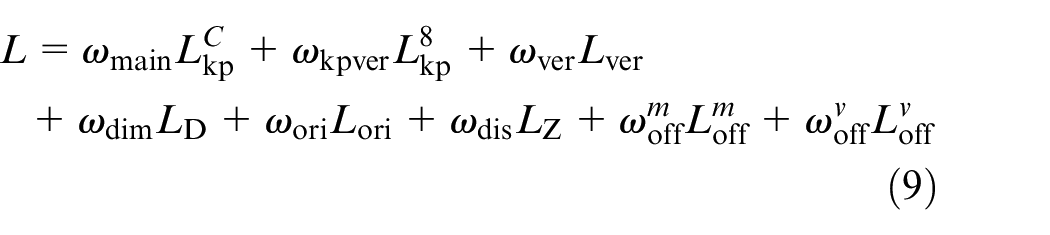

Figure 11 depicts the learning curves and compares the loss curves of RTM3D network training with those of the proposed method. The loss value of the proposed method is observed to converge more stably to zero than that of learning using the RTM3D network. The reduction in the value of the loss function indicates that the model’s predictions approach the actual values, thereby serving as a direct indicator of an improvement in the model’s performance. However, evaluating the performance of a model solely based on a loss graph has certain limitations. To address this issue, the performance evaluation metric, mAP, is utilized for a quantitative assessment of the model’s performance. mAP is a crucial indicator for evaluating the performance of object-detection models. It provides comprehensive evaluation by assessing the proportion of accurately detected objects. A higher mAP value signifies better performance, facilitating a more accurate understanding and assessment of the model’s performance in conjunction with the loss graph. The mAP evaluation metric is employed to compare the proposed method with the existing RTM3D method quantitatively, and the results are discussed in Section 4.5.

Training loss curve showing convergence behavior over epochs. The proposed method demonstrates a more stable loss reduction compared to the RTM3D baseline, indicating improved learning efficiency.

Training process

The network in this study was initially constructed using RTM3D as a reference and was subsequently modified to learn pixel information. Likewise, the initial labels followed the 3D label format of the KITTI dataset, and the final labels used for training added pixel information to that format. The input data comprised camera images of ships entering and leaving Busan Port, label data generated as explained in Section 4.2, and camera intrinsic parameters. Two sets of labels were prepared for each image: one includes the pixel distance proposed in this study, and the other follows the original KITTI label format without it.
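To make the two label variants concrete, the following sketch parses one label line in the standard 15-field KITTI object format and, optionally, two trailing pixel-distance fields. The first 15 fields follow the public KITTI convention. The position and order of the two extra fields, the `Ship` class name, and the names `ShipLabel` and `parse_label_line` are assumptions made for illustration, since the exact file layout is not specified here.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

@dataclass
class ShipLabel:
    obj_type: str
    truncated: float
    occluded: int
    alpha: float                              # observation angle [rad]
    bbox: Tuple[float, ...]                   # 2D box: left, top, right, bottom [px]
    dimensions: Tuple[float, ...]             # height, width, length [m]
    location: Tuple[float, ...]               # x, y, z in camera coordinates [m]
    rotation_y: float                         # yaw around the camera Y axis [rad]
    # Hypothetical extension: (horizontal offset from image center,
    # vertical offset from the horizon), both in pixels.
    pixel_distance: Optional[Tuple[float, float]] = None

def parse_label_line(line: str) -> ShipLabel:
    fields = line.split()
    if len(fields) not in (15, 17):
        raise ValueError(f"expected 15 or 17 fields, got {len(fields)}")
    v = [float(x) for x in fields[1:]]
    # The two extra values are present only in the extended (assumed) layout.
    pixel = (v[14], v[15]) if len(fields) == 17 else None
    return ShipLabel(
        obj_type=fields[0],
        truncated=v[0],
        occluded=int(v[1]),
        alpha=v[2],
        bbox=tuple(v[3:7]),
        dimensions=tuple(v[7:10]),
        location=tuple(v[10:13]),
        rotation_y=v[13],
        pixel_distance=pixel,
    )
```

Keeping the extra values at the end of the line means that a reader written for the original KITTI format can still consume the first 15 fields unchanged, which is one plausible reason for extending the format in this way.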

Both the base and optional modules introduced in Section 3.3 are trained. The factor

As illustrated in Figure 12, each module possesses distinct characteristics. The Base Module comprises the main center, vertices, and vertex coordinates. The main center, representing the heatmap of the object’s central point, is expressed as

Description of the model’s three base modules (center keypoint, vertices, and vertex coordinates) and seven optional modules, which enhance object detection by incorporating distance, size, orientation, and pixel-based measurements.

The Optional Module also includes a center offset, vertex offset, dimension, orientation, distance, 2D size, and pixel distance. The term “center offset” refers to the discrepancy between the actual and predicted centers of a 3D object and is represented by

Further, “dimension,” signifying the width, height, and depth of a 3D object, is expressed as

The term “distance” represents the degree of separation between the 3D object and the camera and is expressed as

Finally, pixel distance, which refers to the horizontal pixel distance between the image center and the position of the vessel, and the vertical pixel distance between the horizon and the vessel, is expressed as . This is achieved by multiplying the dimensionality of 2 by

In this study, the network was modified to enable learning of pixel distance information. The trained model predicts the distance from the detected ship to the horizon and the distance from the detected ship to the center of the image. This facilitates distance estimation for 3D ship detection using a monocular camera. Unlike the data generation process during training, the prediction process using the trained model utilizes only a monocular camera. Any distance prediction errors that may arise when using the monocular camera can be compensated for by predicting additional information.
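The reason the two pixel distances carry range and bearing information can be seen from simple pinhole geometry. The sketch below is not the learned estimator described in this study; it is a closed-form approximation that assumes a level camera, a flat sea surface, no lens distortion, and a known camera height and focal length. The function names and all numeric values are hypothetical.

```python
import math

def range_from_horizon_offset(pixels_below_horizon, focal_px, camera_height_m):
    """Approximate ground range to a waterline point (flat-sea, level camera).

    A point on the water at range d projects focal_px * camera_height_m / d
    pixels below the horizon line, so the range is the inverse relation.
    """
    if pixels_below_horizon <= 0:
        raise ValueError("the waterline point must lie below the horizon")
    return focal_px * camera_height_m / pixels_below_horizon

def bearing_from_center_offset(pixels_right_of_center, focal_px):
    """Approximate horizontal bearing in degrees (positive to the right)."""
    return math.degrees(math.atan2(pixels_right_of_center, focal_px))
```

With an assumed focal length of 2000 px and camera height of 20 m, a waterline 40 px below the horizon corresponds to about 1000 m and one at 20 px to about 2000 m. At the larger range a single-pixel error shifts the estimate by roughly 100 m, which suggests why an explicit, supervised pixel-distance output can be a helpful cue for distant ships.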

The training environment runs in a Docker container based on an Ubuntu 16.04 image, and the network is implemented in PyTorch.

The hyperparameters used in training are set as follows. The number of epochs, that is, the number of complete passes over the training dataset, is set to 150. The batch size, which denotes the number of training samples processed in each iteration (one weight update), is set to 2. Training is conducted using the Adam optimization algorithm with a base learning rate of 0.000125, which scales the size of each weight update, as per Diederik and Jimmy. 27 Adam adapts the step size for each parameter using running estimates of the first moment (mean) and second moment (uncentered variance) of the gradient, which promotes fast and stable convergence of the neural network.
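For readers unfamiliar with Adam, a single update step can be sketched as follows. Only the learning rate of 0.000125 comes from the text; the decay rates `beta1=0.9` and `beta2=0.999` and the constant `eps=1e-8` are the commonly used defaults and are assumed here. The function `adam_step` is a hypothetical stand-alone illustration, not the optimizer code used in the experiments.

```python
import math

def adam_step(params, grads, m, v, t, lr=1.25e-4,
              beta1=0.9, beta2=0.999, eps=1e-8):
    """Apply one Adam update to a list of scalar parameters.

    m, v : running first- and second-moment estimates (same length as params)
    t    : 1-based step counter, used for bias correction
    """
    new_params, new_m, new_v = [], [], []
    for p, g, m_i, v_i in zip(params, grads, m, v):
        m_i = beta1 * m_i + (1.0 - beta1) * g        # mean of gradients
        v_i = beta2 * v_i + (1.0 - beta2) * g * g    # mean of squared gradients
        m_hat = m_i / (1.0 - beta1 ** t)             # correct the zero-init bias
        v_hat = v_i / (1.0 - beta2 ** t)
        new_params.append(p - lr * m_hat / (math.sqrt(v_hat) + eps))
        new_m.append(m_i)
        new_v.append(v_i)
    return new_params, new_m, new_v
```

Because the update divides the mean gradient by the root of the mean squared gradient, the first step has a magnitude close to the learning rate regardless of the gradient scale, which is part of what makes the method comparatively insensitive to how the loss terms are scaled.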

The similarity between pairs of data points in the model is measured in terms of Gaussian kernels. The standard deviation of the Gaussian kernel controls its shape and sensitivity

Training results

The training outcomes are validated by calculating mAP for the 3D bounding box and for the direction. Here, mAP is a metric used to evaluate object detection models; it scores a model on how well it predicts both the location and the class of objects within an image.

In this study, we compare the results of training on labels in the KITTI dataset format with those of training on labels in the format proposed in this study, using the same training images in both cases. The KITTI labeling convention is well suited to autonomous driving research on roads; the purpose of this comparison is to show that the proposed labeling approach is more appropriate for autonomous ship research in maritime environments.

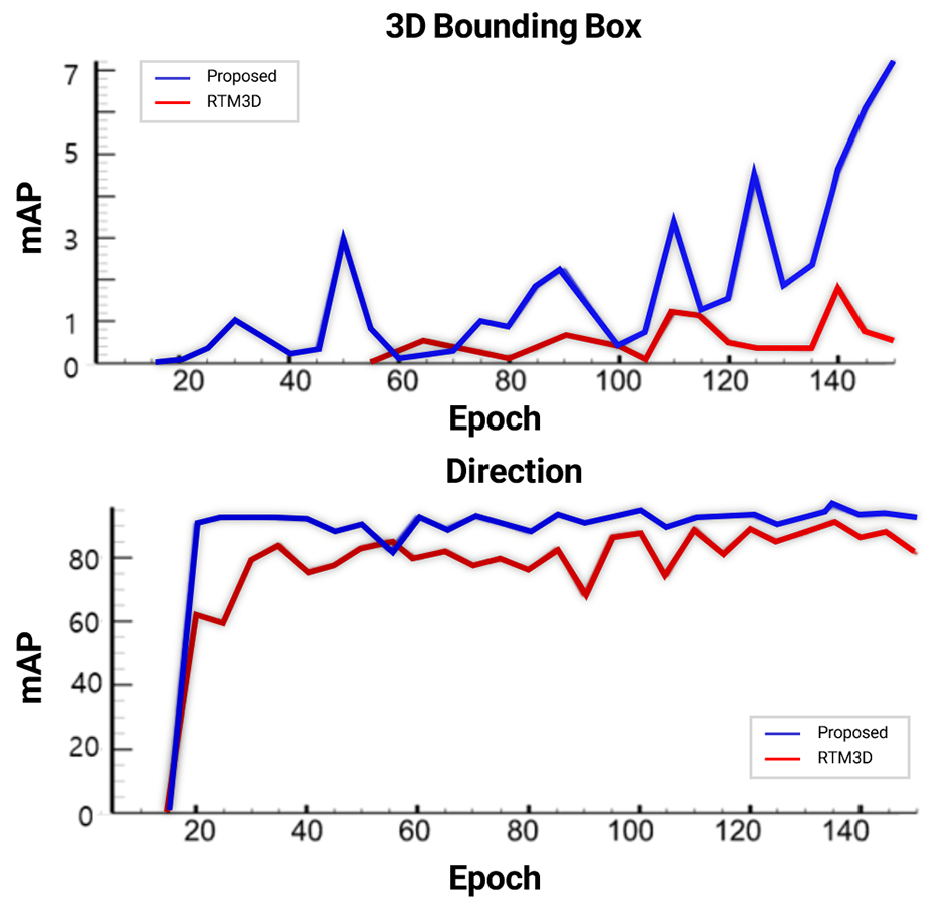

Figure 13 plots the variations in the 3D bounding box and direction mAP values as training progresses. The mAP value for the 3D bounding box is calculated with the IoU threshold set to 0.5. The mAP value for the direction is computed as the average direction similarity between the ground truth and the predicted direction of the detected ships, so it is only indirectly influenced by the IoU threshold: a higher threshold reduces the number of accepted bounding box predictions and, consequently, the number of predicted directions that can be matched to the ground truth. Therefore, the mAP value for the direction is higher than that for the 3D bounding box.

Training progress of the proposed model. The plot shows the variation of 3D bounding box and orientation mAP values over epochs, demonstrating improvements in detection accuracy.
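The two quantities discussed above can be sketched in a few lines. The example assumes that each detection has already been marked as a true or false positive by matching it to the ground truth at an IoU threshold of 0.5. The average precision below uses all-point interpolation, which is a simplification and may differ from the fixed-recall sampling of the KITTI benchmark, and the orientation similarity uses the KITTI-style term (1 + cos Δθ)/2. Whether the evaluation in this study uses exactly these definitions is an assumption.

```python
import math

def average_precision(detections, num_gt):
    """All-point interpolated AP.

    detections : list of (confidence, is_true_positive) pairs
    num_gt     : number of ground-truth objects
    """
    if num_gt == 0:
        return 0.0
    ranked = sorted(detections, key=lambda d: d[0], reverse=True)
    tp = fp = 0
    recalls, precisions = [], []
    for _, is_tp in ranked:
        if is_tp:
            tp += 1
        else:
            fp += 1
        recalls.append(tp / num_gt)
        precisions.append(tp / (tp + fp))
    # Replace each precision by the best precision at any higher recall.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    # Integrate precision over recall.
    ap, prev_recall = 0.0, 0.0
    for r, p in zip(recalls, precisions):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap

def orientation_similarity(delta_rad):
    """1.0 for a perfect heading match, 0.0 for an opposite heading."""
    return (1.0 + math.cos(delta_rad)) / 2.0
```

mAP is then the mean of the per-class AP values. Since the similarity term is evaluated only for detections that were matched to a ground-truth object, the direction score depends on the IoU threshold only through which detections count as matches.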

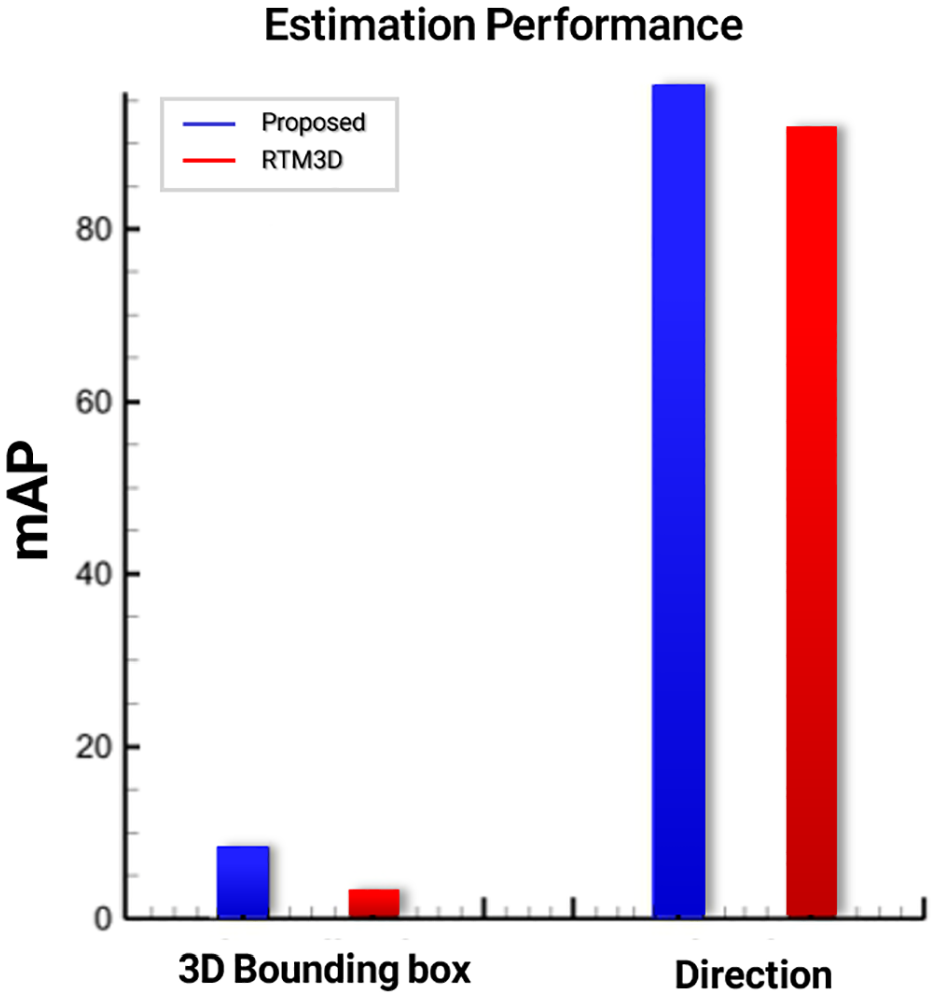

According to the experimental results, the proposed method outperformed the RTM3D approach, showing a 5.4 percentage point improvement in 3D bounding box mAP and a 5.79 percentage point increase in direction mAP. Figure 14 displays graphs of the 3D bounding box and direction mAP values at their peak performance. With the proposed method, the peak mAP value for the 3D bounding box increases by a factor of approximately 3.1, and the peak mAP value for the ship direction improves by a factor of approximately 1.09. In terms of computational efficiency, the proposed model achieves an inference speed of 36.93 FPS on an RTX 3080 GPU, demonstrating its suitability for real-time applications in maritime environments.

Performance of the proposed model. The plot compares peak performance, showing that the proposed method achieves a 5.4 percentage point improvement in 3D bounding box mAP and a 5.79 percentage point increase in direction mAP compared to RTM3D.

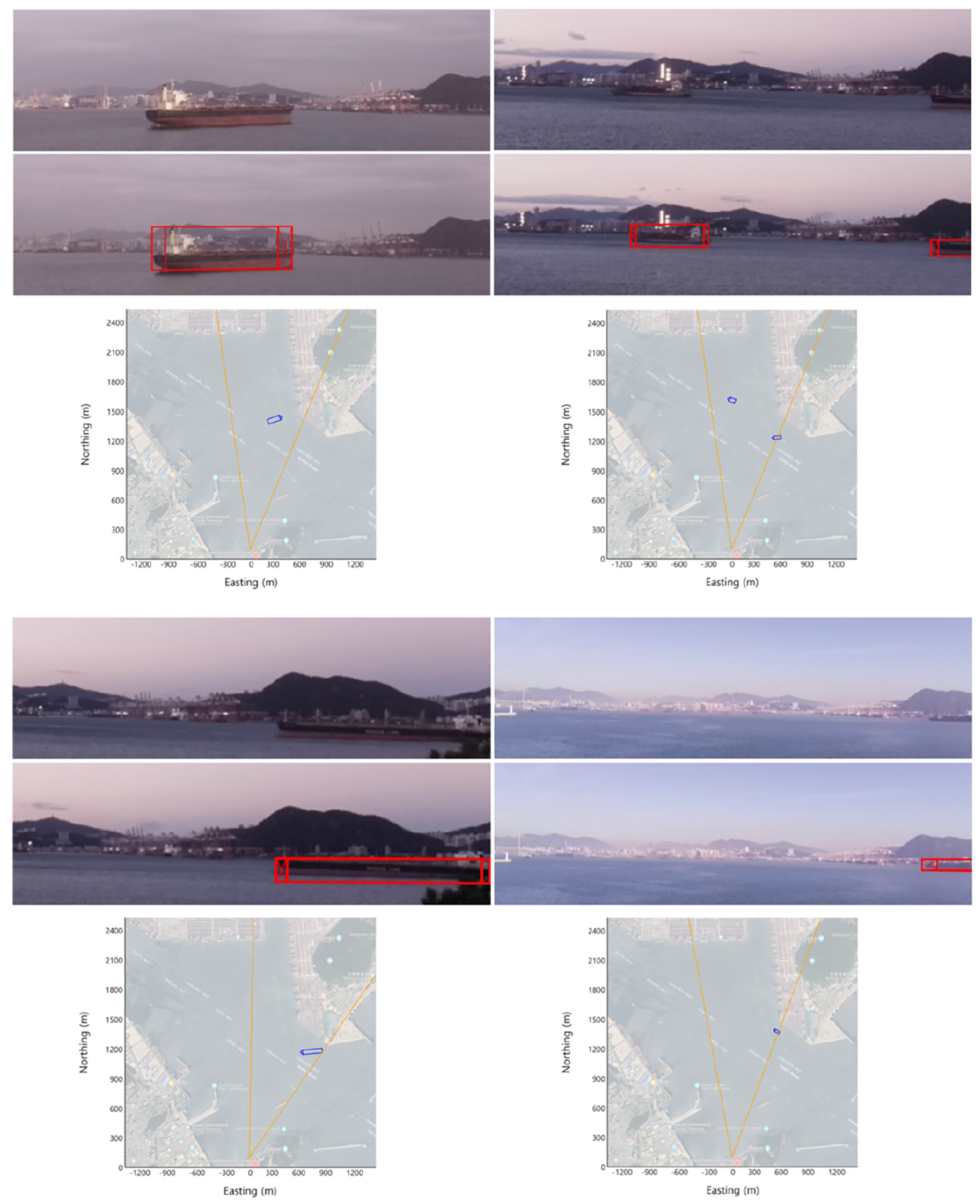

The visualization results for 3D ship detection are depicted in Figure 15, which displays the 3D bounding box created at the ship’s location. The prediction results for four scenarios are presented, with the original image at the top, the 3D detection visualization image in the middle, and the BEV detection image at the bottom. Each BEV detection image shows the camera’s origin position, the camera’s field of view, and the ship’s location. The camera’s field of view is set to approximately 35°. The visualization confirms that ships extending beyond the image frame can be detected. This showcases one of the key features of keypoint detection, which is capable of detecting objects that are off-screen or obscured. Further, test data in which the headings of anchored ships vary over time are used to verify the 3D localization capability and to confirm the direction prediction capability.

Visualization results of the 3D ship detection system. The first column shows the original images, the second column presents the detected ships with 3D bounding boxes overlaid, and the third column displays the corresponding bird’s-eye view (BEV) projections. The BEV images illustrate the estimated ship positions relative to the camera, validating the spatial accuracy of the detection algorithm.

Conclusions

In this study, we proposed a 3D ship detection model for maritime environments using monocular camera data only. To compensate for the depth estimation limitations of monocular vision, relative distance information from AIS data was incorporated to generate ground truth annotations. Furthermore, horizon and center point features were utilized to improve detection accuracy.

The training results of the proposed method exhibited a 5.4 percentage point increase in 3D bounding box mAP and a 5.79 percentage point enhancement in direction mAP, compared to the RTM3D network. On this basis, we developed a 3D ship detection network capable of estimating object 3D pose and size, which are difficult to obtain using conventional 2D object detection methods. A limitation of this study is that the ship detection performance was not verified under diverse weather conditions or across different geographic locations. Addressing this in future studies will enhance the robustness of the algorithm and facilitate the development of a highly reliable ship detection system.

In addition, we plan to use hybrid sensor fusion that integrates monocular vision with radar and LiDAR to improve detection robustness and accuracy under adverse weather and environmental conditions. This is intended to expand the scope of this research and enhance the precision and applicability of ship detection technology. Furthermore, we plan to extend this research beyond static environments to scenarios involving moving platforms, such as cameras mounted on vessels. In such cases, additional techniques, including adaptive calibration, may be required to accommodate the dynamic nature of the environment. The 3D ship detection method proposed in this study is expected to play a vital role in the precise estimation of the size, location, and travel direction of marine vessels. This information underpins the situational awareness that autonomous vessels require to navigate safely along intricate routes. Thus, this study is expected to contribute fundamentally to the future development of autonomous-ship technologies. To promote reproducibility and facilitate further research, the source code will be made publicly available at https://github.com/mimoklim/RTM3D_3DShipDetection.

Footnotes

Ethical considerations

Not applicable.

Consent to participate

Not applicable.

Consent for publication

Not applicable.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by Korea Institute for Advancement of Technology (KIAT) grant funded by the Korea Government (MOTIE; RS-2021-KI002493, The Competency Development Program for Industry Specialist) and Korea Institute of Marine Science & Technology Promotion (KIMST) funded by the Ministry of Ocean and Fisheries, Korea (RS-2024-00432366).

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

Data associated with the paper are available upon request from the corresponding author.