Abstract

Face recognition research has become an integral part of many engineering fields. Variation in the appearance of a human face makes reliable recognition difficult. Nevertheless, face identification is an important component of human identification systems, especially gender identification systems. This paper therefore aims to detect human gender from frontal facial features. We consider facial images showing five emotions: neutral, happy, sad, angry and surprised. To perform gender identification, frontal facial features are detected and extracted based on the Region of Interest principle. The Fast Fourier Transform and the Discrete Cosine Transform are then applied to transform the input data from the spatial domain to the frequency domain, and further operations on the resulting data accomplish the task of gender identification. This distinguishes the proposed algorithm from existing ones. In addition, an alternative approach to gender identification is proposed based on the shape and structure of human finger nails. This second method emphasizes the structural organization of finger nails in males and females, and it also succeeded in determining gender.

Introduction

Human beings can identify different faces through visual perception and understanding. Even after a long period of time, people can recognize a large number of similar faces that they saw only briefly, for example at a ceremony. Although researchers have developed many algorithms for detecting individual facial parts, it has not been possible to replicate the actual human perception system. Several methodologies have already been suggested to identify a face effectively. The objective of these algorithms is to detect and identify different components of a face, such as the eyes, nose, lips, chin and facial skin, as well as the shape and size of the face. Ongoing research on face recognition therefore fundamentally aims at developing systems that perform effectively in real-world applications. In this context, multiple techniques have been recommended to accomplish face recognition with a higher degree of accuracy. One such proposal is the use of three-dimensional (3D) faces instead of conventional two-dimensional (2D) facial images. One practical application is crime investigation, where gender must be identified from facial images that can be either 2D or 3D.

Any research related to facial images comprises two parts: verification and identification. Verification refers to confirming that a face matches a claimed identity, whereas identification refers to recognizing whose face it is among those known to the system. For identification, it is necessary to extract features from a facial image. The extracted features are then compared with the features stored in the image database to recognize the face. However, the reliability of these algorithms is unpredictable.

In this paper, we have incorporated the logic of fast Fourier transform (FFT) and discrete cosine transform (DCT) for the detection of human gender based on the extracted features of a facial image.

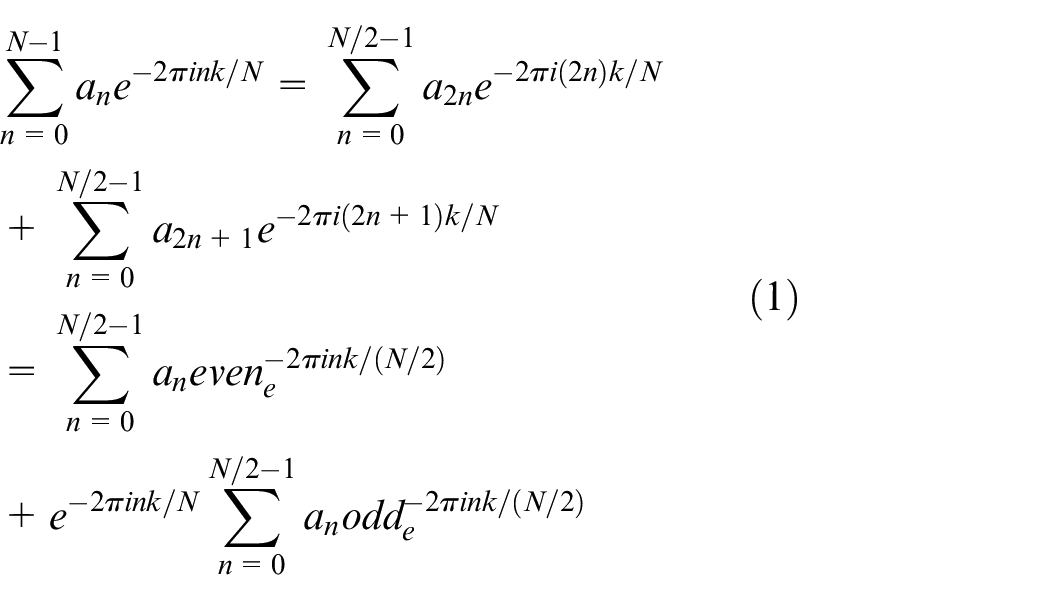

The FFT is an algorithm for computing the discrete Fourier transform that reduces the number of calculations for $N$ points from $2N^2$ to $2N \lg N$, where $\lg$ denotes the base-2 logarithm. The elementary idea is to divide a transform of length $N$ into two transforms of length $N/2$ using the formula shown in equation (1). 1

The FFT has a wide variety of applications in signal processing. It has proved beneficial for operations such as analyzing sound waves, and it can also be used for solving differential equations and for various kinds of frequency analysis. The algorithm is widely used in engineering and mathematics, in fields such as sound engineering, seismology and voltage measurement.
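The divide-and-conquer idea described above can be sketched in a few lines of Python. This is a minimal illustrative radix-2 implementation, not the routine used in the experiments; the function names `fft_radix2` and `dft_naive` are hypothetical, and the input length is assumed to be a power of two.

```python
import cmath

def dft_naive(x):
    """Direct O(N^2) discrete Fourier transform, used here only as a reference."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]

def fft_radix2(x):
    """Recursive radix-2 FFT; assumes len(x) is a power of two."""
    n = len(x)
    if n == 1:
        return list(x)
    even = fft_radix2(x[0::2])  # transform of the even-indexed samples (length N/2)
    odd = fft_radix2(x[1::2])   # transform of the odd-indexed samples (length N/2)
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle           # first half of the spectrum
        out[k + n // 2] = even[k] - twiddle  # second half reuses the same products
    return out

# Hypothetical sample values, chosen only to exercise the code.
samples = [1.0, 2.0, 3.0, 4.0, 0.0, 0.0, 0.0, 0.0]
fast = fft_radix2(samples)
slow = dft_naive(samples)
max_error = max(abs(a - b) for a, b in zip(fast, slow))
```

Both routines should agree up to floating-point rounding; the recursive version halves the problem at every level, which is where the $N \lg N$ cost comes from.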

Similarly, DCT can be defined as a technique which is used to convert the pixel values in spatial domain to values in frequency domain. In JPEG (Joint Photographic Experts Group) compression an image is divided into 8 × 8 blocks, and 2D DCT as explained below is applied to each of these 8 × 8 blocks. In JPEG decompression, the inverse DCT (IDCT) is applied on each of the 8 × 8 DCT coefficient blocks 1

Thus, DCT helps in separating an image into multiple components or spectral sub-bands with varying visual quality.
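As an illustration of the block transform described above, the following sketch applies an orthonormal 2D DCT-II to a single 8 × 8 block and then inverts it. It is a plain-Python sketch under stated assumptions: the helper names (`dct_matrix`, `dct2`, `idct2`) and the block contents are hypothetical, and the orthonormal scaling is one common convention rather than necessarily the one used by the authors.

```python
import math

N = 8  # JPEG block size

def dct_matrix(n):
    """Orthonormal DCT-II basis matrix C, so that Y = C X C^T."""
    rows = []
    for k in range(n):
        scale = math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)
        rows.append([scale * math.cos(math.pi * (2 * j + 1) * k / (2 * n))
                     for j in range(n)])
    return rows

def matmul(a, b):
    """Plain matrix product of two list-of-lists matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def transpose(a):
    return [list(col) for col in zip(*a)]

def dct2(block):
    """Forward 2D DCT: spatial domain -> frequency domain."""
    c = dct_matrix(len(block))
    return matmul(matmul(c, block), transpose(c))

def idct2(coeffs):
    """Inverse 2D DCT: frequency domain -> spatial domain."""
    c = dct_matrix(len(coeffs))
    return matmul(matmul(transpose(c), coeffs), c)

# Hypothetical 8 x 8 block of pixel values 0..63.
block = [[float(r * N + col) for col in range(N)] for r in range(N)]
coeffs = dct2(block)
restored = idct2(coeffs)
round_trip_error = max(abs(block[r][col] - restored[r][col])
                       for r in range(N) for col in range(N))
```

With this scaling the top-left (DC) coefficient equals the block sum divided by 8, and the inverse transform recovers the block up to rounding error.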

In our experiment, we calculated the length and width of the extracted features (forehead, eyes, nose, eyebrow, chin and upper lip) using the Euclidean distance formula. These values form an input matrix on which the proposed algorithm is implemented to obtain the end result. The role of the FFT and DCT methods in our experiment is to generate the coefficient matrices corresponding to the input matrix. A threshold value is then computed from the values of each coefficient matrix. The next step generates the corresponding binary matrix using the following logic: if a coefficient value in the coefficient matrix is greater than the threshold value, the entry in the binary matrix is “1”; otherwise it is “0.”

This paper also defines a second methodology for gender detection, based on the shape and structure of human finger nails. A human hand comprises five fingers: thumb, index, middle, ring and little finger. The nails of these fingers can have different shapes, such as (1) oval; (2) almond; (3) round; (4) square; (5) stiletto; (6) coffin/ballerina; (7) squoval; (8) flare; (9) mountain peak; (10) edge; (11) lipstick and (12) arrow-head. 2 Furthermore, experiments indicate that the shape and structure of male and female finger nails differ. For example, female nails are usually longer and narrower than male nails, which are comparatively shorter and broader. The area of a male nail is also larger than that of a female nail because of its broad structure. The proposed methodology uses this logic to determine the gender corresponding to a specific nail structure.

Related works

Face recognition plays a pivotal role in gender detection, which has important applications in crime investigation, face reconstruction, face detection and so on. Gender detection or identification determines the gender from facial images. Facial image analysis is widely applied in biometrics, human–robot interaction and computer perception. In biometrics, face recognition and authentication play an important role in various applications, many of which require gender determination. The existing methods for gender identification are divided into feature-based methods and appearance-based methods.

The objective of the appearance-based method is to extract features from a facial image, where the image is interpreted as a one-dimensional vector, and then to use a suitable classifier to perform gender classification. To achieve this, researchers primarily extracted pixel intensity values and fed them to the classifier. The method involves the following steps. The first is a preprocessing step in which face alignment, image resizing and illumination normalization are performed. In the next step, an image transformation is performed, typically to reduce the dimensions of the input image and to analyze its underlying structure. Finally, classification is performed using a suitable binary classifier such as a support vector machine. Other classifiers that can be applied are decision trees, neural networks and AdaBoost.3–6

A complete face analysis algorithm is proposed in Moghaddam and Yang, 7 that performed three different operations like gender, race and age determination in a single framework. It has been practically observed that deep convolutional neural networks (CNNs) yield outstanding results when used for different image recognition problems. This is explained in paper. 8

Furthermore, for a detailed overview of gender classification methodologies, the review paper by Khan et al. 9 can be consulted. In that paper, the authors performed tests on two databases, file exchange interface (FEI) 9 and a self-built database, and extracted different texture features from facial images. These texture features are extracted at three levels: global, directional and regional. A kernel-based support vector machine was used to perform classification. A related concept concerning gender and age is described in Modesto et al. 10 and Ping-Han et al. 11 Another paper 12 proposed a mechanism to achieve gender classification based on facial images. A hybrid system for gender and age classification has been presented in Khan et al. 12 In that paper, features are extracted through CNNs, and an extreme learning machine (ELM) algorithm is used for classification.

Proposed methodology

The methodologies described in this paper primarily aim at determining the gender of a person on the basis of different parts of the human body. The first technique analyzes the performance of the FFT and DCT algorithms in determining gender when applied to an input data set comprising frontal facial images. The second methodology enables an end user to identify gender based on the shape and structure of the finger nails, namely the thumb nail, index finger nail, middle finger nail, ring finger nail and little finger nail.

The first part of the experiment is executed in two facets. First, the primary features of a human facial image are extracted which includes the following: (1) forehead; (2) nose; (3) eyes; (4) chin; (5) eyebrow; (6) upper lip; (7) nose tip–upper lip. Second, Euclidean distance formula has been used to compute the length and width of the extracted features with respect to their positions in a human face.

Experiments indicate that the following characteristics can distinguish a male face from a female face:

(1) The forehead is more pronounced and larger in male than female.

(2) The eyes are more defined and larger with long eye lashes in female than male.

(3) A female has long and thin eyebrows whereas a male has shorter and thicker eyebrows.

(4) A female has a rounder face with short chin compared to a male whose face is predominantly square shaped with longer chin and a sharp and wide jawbone.

(5) The upper lip is thicker in a female face over a male face.

(6) The nose in a female face is comparatively shorter and narrower than in a male face.

(7) The region between the nose tip and the upper lip is wider in a male face over a female face.

These actualities have been effectively used in our experiment to identify and discriminate between a male and a female face.

Once the Euclidean distances including (1) forehead width; (2) eyes length; (3) eyebrow length; (4) eyebrow width; (5) nose length; (6) chin length; (7) upper lip width and (8) nose tip–upper lip width are obtained, a matrix is formed. In the matrix, each value represents the computed distance of the extracted features. This matrix is passed as an input to the FFT algorithm and DCT algorithm to obtain the coefficients in the frequency domain. On the basis of these coefficients a threshold value (T) is computed. This threshold value is used to identify the gender of the facial image in the following way.

For a given face,

If forehead width (FW) is >T; eye length (EL) <T; eyebrow length (EBL) <T; eyebrow width (EBW) >T; nose length (NL) >T; chin length (CL) >T; upper lip width (ULW) <T; nose tip−upper lip (NTUW) >T, then the predicted gender is male otherwise female. In this case, the value of the threshold (T) may vary with each computed distance.
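The rule above can be written as a small table-driven check. The sketch below is illustrative only: the function name `predict_gender`, the dictionary layout and all numeric values are hypothetical, and per-feature thresholds are assumed because the text notes that T may vary with each computed distance.

```python
# Relation to the per-feature threshold expected for a male face, as listed in the text.
MALE_RULE = {
    "FW": ">", "EL": "<", "EBL": "<", "EBW": ">",
    "NL": ">", "CL": ">", "ULW": "<", "NTUW": ">",
}

def predict_gender(values, thresholds):
    """Return 'male' only if every feature satisfies the male rule, else 'female'."""
    for name, relation in MALE_RULE.items():
        value, t = values[name], thresholds[name]
        satisfied = value > t if relation == ">" else value < t
        if not satisfied:
            return "female"
    return "male"

# Hypothetical measurements and thresholds, chosen only to exercise the rule.
thresholds = {"FW": 5.5, "EL": 2.5, "EBL": 3.5, "EBW": 0.8,
              "NL": 4.5, "CL": 3.5, "ULW": 1.2, "NTUW": 1.5}
face_a = {"FW": 6.0, "EL": 2.0, "EBL": 3.0, "EBW": 1.0,
          "NL": 5.0, "CL": 4.0, "ULW": 1.0, "NTUW": 2.0}
face_b = dict(face_a, EL=3.0)  # eye length now exceeds its threshold

result_a = predict_gender(face_a, thresholds)
result_b = predict_gender(face_b, thresholds)
```

Note that, as stated in the text, a single failed condition is enough to yield "female", so the rule is asymmetric between the two classes.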

The last section of the experiment aims at distinguishing between male and female genders based on the shape and structure of finger nails. Experiments indicate that male finger nails are considerably broader and larger than female finger nails, which are usually thinner and narrower. Furthermore, the surface area of a male finger nail is larger than that of a female finger nail. These facts have been utilized in the proposed methodology to ascertain gender. The proposed algorithm has been implemented in the following way.

At the first step, the length “l” and width “w” of individual finger nail are calculated. Then the length (l)/width (w) ratio of each finger is computed. In this case, the Euclidean distance formula has been used to perform the distance computation. At the conclusive step, the area of each finger nail is computed. Finally, these two enumerated values are used to detect and reveal the gender corresponding to the finger nail.

Design

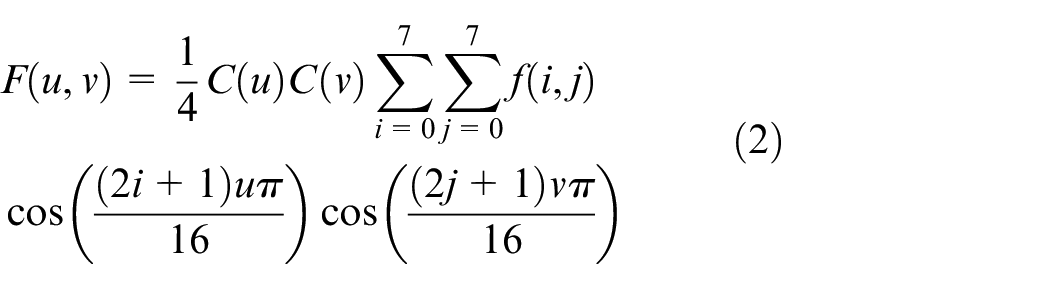

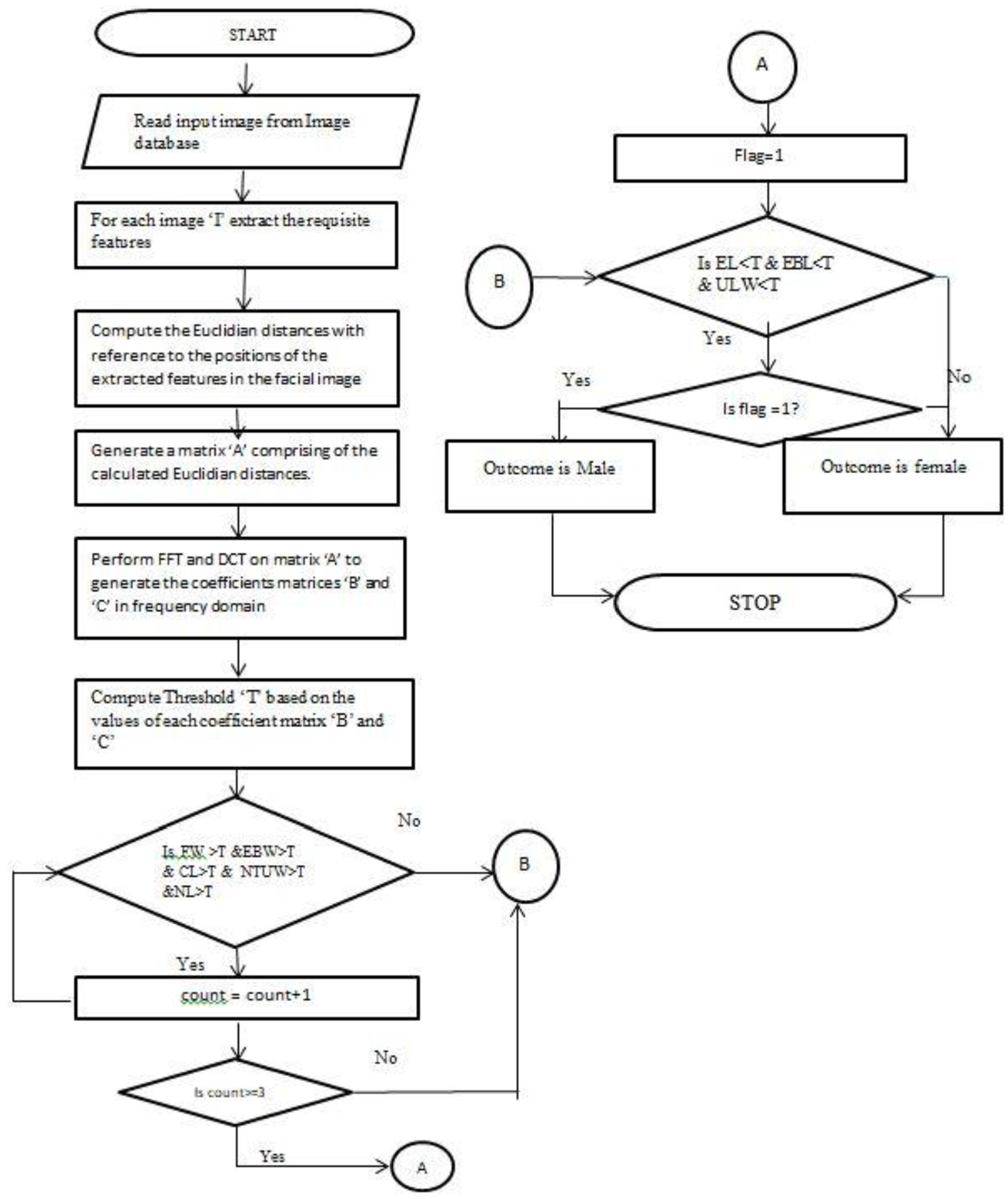

This section aims at depicting the diagrammatic representation of the proposed technique in the form of flowcharts as shown in Figures 1 and 2 which depict the flow of control or the logical sequence of the procedure.

Phase I—flowchart I

Diagrammatic representation of the flow of logic for gender detection based on the extracted features of frontal facial image.

Phase II—flowchart II

Diagrammatic representation of the flow of logic for gender detection based on the shape and structure of human finger nails.

Algorithm

In this section, the detailed algorithmic representation of the proffered technique is illustrated in the form of stepwise logical sequence of instructions.

Phase I—Algorithm I

Procedure GenderIdentify_FFT + DCT (Image Set)

//Perform gender detection based on the coefficient values obtained at frequency domain

Begin

(1) Read Individual image from Image Database

(2) Compute the size of the image database and assign to “n”

(3) for each image i = 1 to n (3.1) Obtain the grayscale Gray{i} for each RGB image

(4) end for

(5) for each image i = 1 to n (5.1) Perform the Histogram Equalization on each image and store the resultant image in HIS{i}

(6) end for

(7) for i = 1 to n (7.1) extract features ‘forehead’, ‘nose’, ‘eyes’, ‘eyebrow’, ’chin’, ’upper lip’, ‘nose tip-lip’ from stored images in HIS{i}. (7.2) Use Euclidean distance formula to compute the forehead width ‘FW’, nose length ‘NL’, eye length ‘EL’, eyebrow length ‘EBL’, eyebrow width ‘EBW’, chin length ‘CL’, upper lip width ‘ULW’ & nose tip – lip width ‘NTUW’. (7.3) Form a matrix ‘A’ comprising of the calculated values.

(8) end for

(9) Perform FFT on the matrix “A.” Form the resultant matrix “B” comprising of the coefficients in frequency domain.

(10) Perform DCT on the matrix “A.” Form the resultant matrix “C” comprising of the coefficients in frequency domain.

(11) for i = 1 to row // “row” indicates the number of rows in the coefficient matrix

(11.1) for j = 1 to col // “col” indicates the number of columns in the coefficient matrix

(11.2) Th = Call function Thresh (matrix B, row, col) // Compute the threshold values for matrix B

(11.3) Call function Thresh_Compute (Th, matrix B, row, col)

(11.4) end for

(11.5) end for

(12) for i = 1 to row

(12.1) for j = 1 to col

(12.2) Th = Call function Thresh (matrix C, row, col) // Compute the threshold values for matrix C

(12.3) Call function Thresh_Compute (Th, matrix C, row, col)

(12.4) end for

(12.5) end for

(13) Display Result

(14) End

Thresh (D[][], n, m) // Computes the threshold based on the coefficient matrix

Begin

(1) for i = 1 to n

(1.1) sum = 0

(1.2) for j = 1 to m

(1.3) sum = sum + D[i][j]

(1.4) end for

(1.5) Th[i] = sum/m // “m” is the number of columns

end for

End

Thresh_Compute (Th[], D[][], n, m)

Begin

(1) for i = 1 to n

(1.1) for j = 1 to m

(1.2) if (D[i][j] >= Th[i])

(1.3) E[i][j] = 1 // ‘E’ is a binary matrix whose value ‘1’ indicates male and ‘0’ indicates female

(1.4) else

(1.5) E[i][j] = 0

(1.6) end if

(1.7) end for

(1.8) end for

End
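The two helper procedures above translate directly into Python. This is a minimal sketch under stated assumptions: the coefficients are taken to be real-valued (FFT output is complex in general, and the text does not say whether the magnitude or the real part is compared, so that choice is left open), and the sample matrix is hypothetical.

```python
def thresh(d):
    """One threshold per row: the mean of that row of the coefficient matrix."""
    return [sum(row) / len(row) for row in d]

def thresh_compute(th, d):
    """Binary matrix: 1 where a coefficient is >= its row threshold, else 0."""
    return [[1 if value >= th[i] else 0 for value in row]
            for i, row in enumerate(d)]

# Hypothetical real-valued coefficient matrix with two rows and three columns.
d = [[4.0, 1.0, 7.0],
     [2.0, 2.0, 2.0]]
th = thresh(d)
e = thresh_compute(th, d)
```

Because the thresholds depend only on the matrix, they can be computed once rather than inside the nested loops of the calling procedure.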

Phase II—Algorithm II

Procedure GenderDetect_NailStructure (Image Set)

// Perform gender identification based on nail structure

Begin

(1) Read individual image from the Image Database

(2) Compute the size of the image database and assign to “n”

(3) for each image i = 1 to n (3.1) Obtain the grayscale Gray{i} for each RGB image (3.2) end for

(5) for each image i = 1 to n (5.1) perform the Histogram Equalization on each image and store the resultant image in HIS {i} (5.2) end for

(6) for each image i = 1 to n (6.1) Extract the respective nail structure from each finger. (6.2) Compute the length ‘l’ and the width ‘w’ of each finger nail. (6.3) Compute the l/w ratio of female finger nail, store the result in ‘R1’ and l/w ratio of male finger nail and store the result in ‘R2’. (6.4) Compute the area of female finger nail, store the result in ‘A1’ and the area of male finger nail, store the result in ‘A2’. (6.5) end for

(7) Form a matrix “M” comprising lengths “l,” widths “w,” and ratios “R1” and “R2.”

(8) for each i = 1 to col // “col” indicates the number of columns in matrix M

(8.1) for each j = 1 to row // “row” denotes the number of rows in matrix M

(8.2) T1 = Call function Thresh (M, col, row) // Computes the threshold value for the matrix M

(8.3) Call function Thresh_Compute (T1, M, col, row)

(8.4) end for

(8.5) end for

(9) Form a matrix A comprising of “A1” and “A2.”

(10) Call function thresh_compute(A, row) // “row” indicates the no of rows in matrix “A”

(11) Display Result

(12) end for

End

thresh_compute (A, n)

Begin

Step (1) a = 1

Step (2) for b = 1 to n

Step (2.1) if (A[a][b]> A[a][b + 1])

Step (2.2) E[a][b]= 1

Step (2.3) E[a][b + 1]= 0

Step (2.4) else

Step (2.5) E[a][b]= 0

Step (2.6) E[a][b + 1]= 1

Step (2.7) end if

Step (2.8) end for

End
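One possible reading of `thresh_compute` is a pairwise comparison of the female and male nail areas: the larger of each pair is flagged with 1 and the smaller with 0. The sketch below follows that interpretation, which is an assumption; the function name `compare_areas` and the area values are hypothetical.

```python
def compare_areas(a1, a2):
    """For each nail, flag the larger of (female area, male area) with 1."""
    flags = []
    for female_area, male_area in zip(a1, a2):
        if female_area > male_area:
            flags.append((1, 0))
        else:
            flags.append((0, 1))
    return flags

# Hypothetical areas for thumb, index and middle finger nails (arbitrary units).
a1 = [0.90, 0.70, 0.85]  # female
a2 = [1.20, 0.95, 0.80]  # male
flags = compare_areas(a1, a2)
```

Pairing the values explicitly avoids reading one element past the end of a row, which the index `b + 1` in the pseudocode would do on the final iteration.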

Experimental results

Data set

In our experiment, an image database comprising 240 images (120 male and 120 female) was generated, where each image is the frontal facial image of a male or female with a specific emotion, that is, neutral, happy, sad, surprised or angry. The input images were primarily obtained from the Yale face database available on the Internet. We also used other images available online to constitute our input image set.

In the second phase of the experiment, we have used altogether 150 images of human finger nails comprising of 75 male finger nails and 75 female finger nails.

Experimental procedure and result

The proposed methodology aims at accomplishing the following two tasks:

Gender discrimination based on coefficient values at frequency domain by applying FFT and DCT.

Gender detection and revelation based on the shape and structure of the human finger nail.

To achieve the end results, the experiments have been carried out in two stages.

Stage I

At this stage, the gender discrimination is performed on the basis of some features of a human face. The proposed algorithm is implemented in four phases.

Phase I

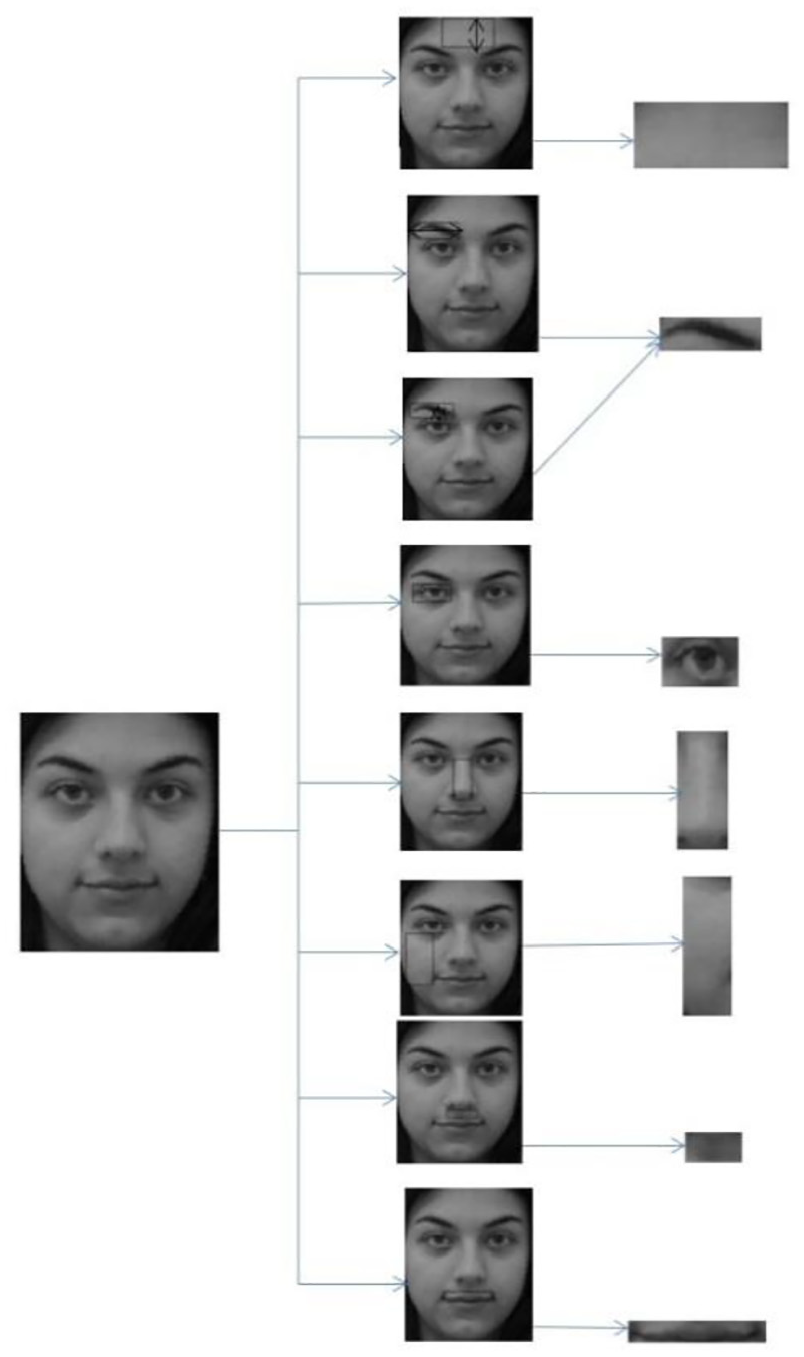

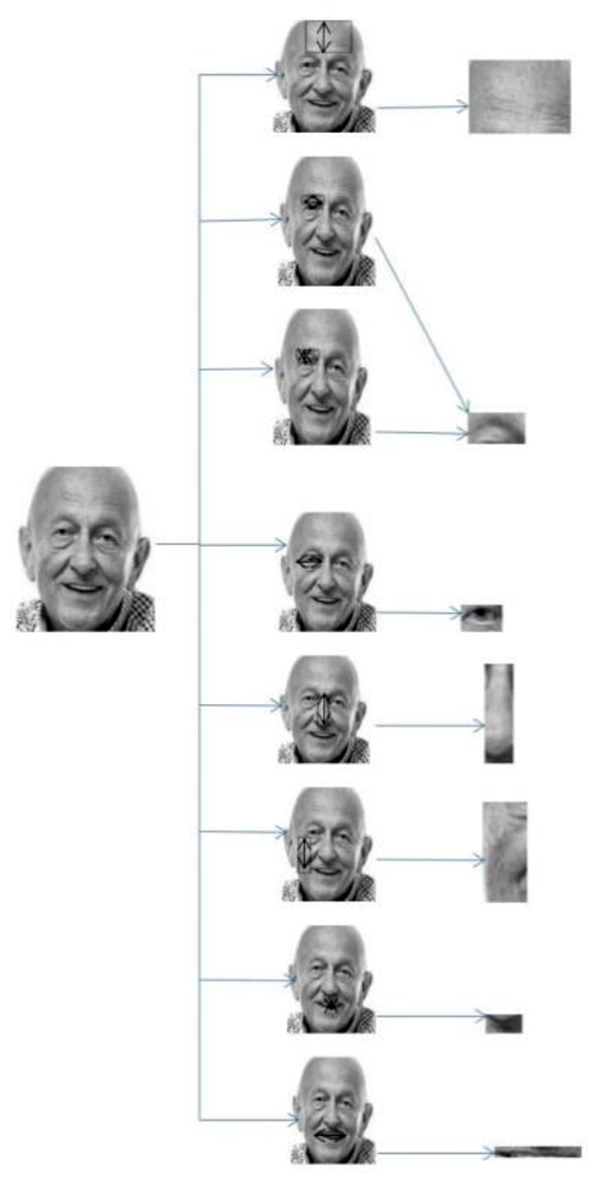

In this phase the aforementioned lineaments, that is, the forehead, eyebrow, eye, nose, chin and upper lip, are extracted. The extraction procedure is depicted in Figures 3 and 4. The Euclidean distance $ED = \sqrt{(x_2 - x_1)^2 + (y_2 - y_1)^2}$ is then calculated from the original positions of these extracted features on the facial image. This distance formula enabled us to compute the length and width of the extracted lineaments.
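The distance computation can be sketched as follows. The function name and the landmark coordinates are hypothetical; in practice the two points would be the end points of a feature's bounding region on the image.

```python
import math

def euclidean_distance(p1, p2):
    """Distance between two (x, y) points, matching the ED formula in the text."""
    return math.hypot(p2[0] - p1[0], p2[1] - p1[1])

# Hypothetical pixel coordinates of the two corners of an eye.
eye_left_corner = (10, 20)
eye_right_corner = (13, 24)
eye_length = euclidean_distance(eye_left_corner, eye_right_corner)
```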

Diagrammatic representation of extracted features from facial image with neutral expression.

Diagrammatic representation of extracted features from facial image with happy expression.

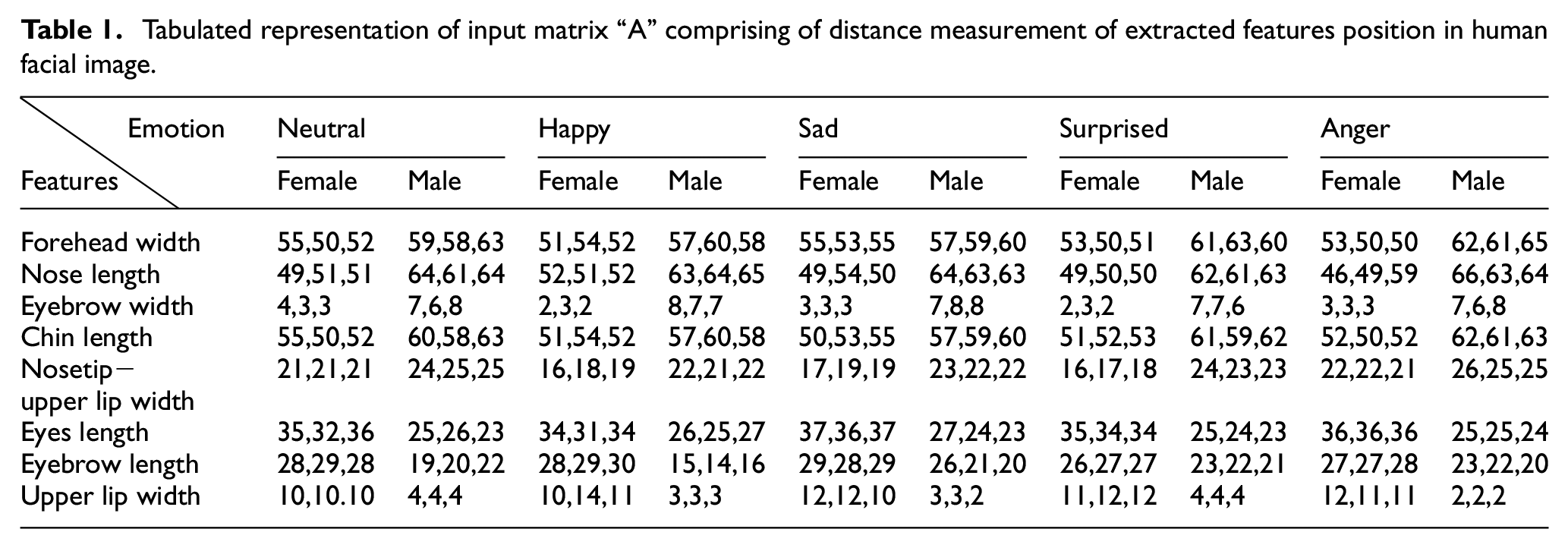

The experimental results obtained by implementing the above stated procedure are depicted in Table 1.

Tabulated representation of input matrix “A” comprising of distance measurement of extracted features position in human facial image.

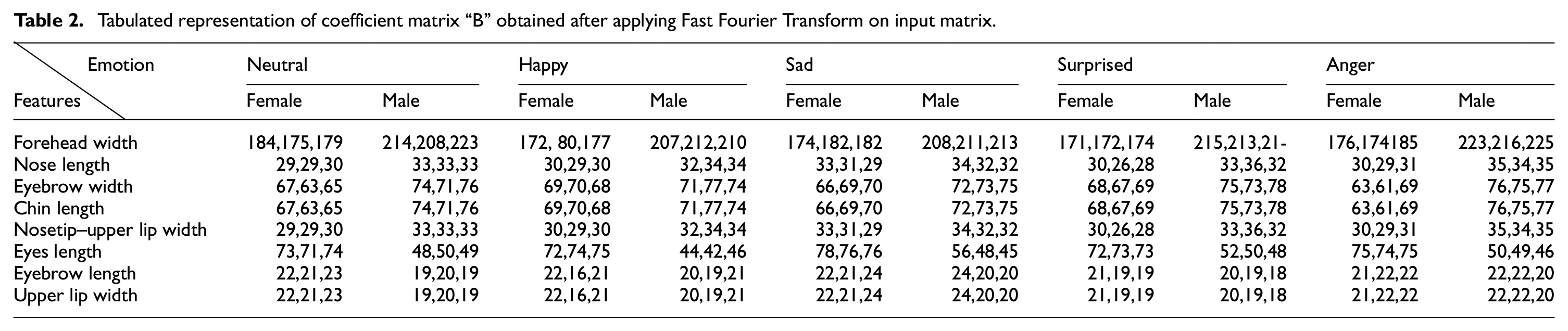

Phase II

In this phase, a matrix is generated comprising the observed or experimental data. The FFT algorithm is applied to this matrix and a coefficient matrix is obtained as the output. The coefficient matrix represents the values obtained by transforming the input values from the spatial domain to the frequency domain. Similarly, another coefficient matrix is obtained by applying the DCT algorithm to the same input matrix.

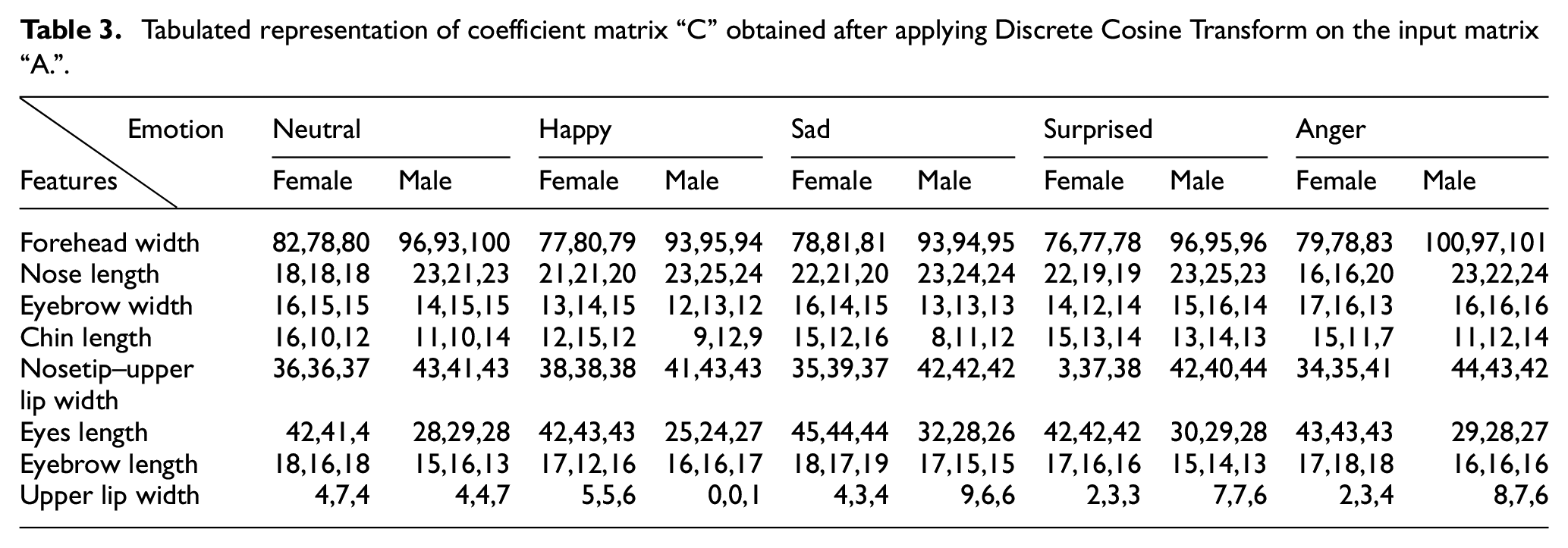

The experimental results obtained are shown in Tables 2 and 3.

Tabulated representation of coefficient matrix “B” obtained after applying Fast Fourier Transform on input matrix.

Tabulated representation of coefficient matrix “C” obtained after applying Discrete Cosine Transform on the input matrix “A.”

Phase III

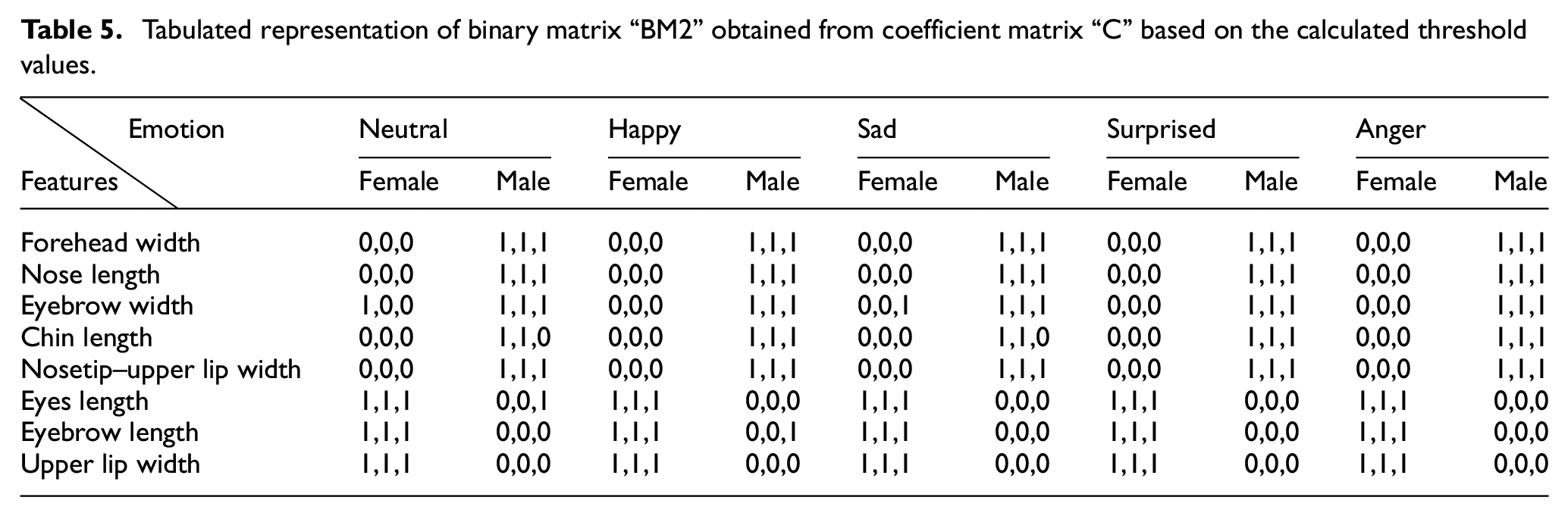

In this phase, a key computation is performed: the threshold calculation. The threshold value is one of the primary components that help in determining whether the final outcome is male or female. This threshold value is calculated using the following formula

where “m” refers to the number of rows and “n” refers to the number of columns.

Therefore

In our experiment, threshold value is computed distinctively for each extracted feature based on the values of the coefficient matrix. The role of the threshold in gender discrimination is described as follows.

Consider the coefficient matrix to be D[][] obtained after applying FFT/DCT.

Let the threshold computed for the feature “forehead” be “Th”

Therefore

$$E(i,j) = \begin{cases} 1, & D(i,j) \ge Th \\ 0, & \text{otherwise} \end{cases}$$

where $E(i,j)$ is the value at row “i” and column “j” in the binary matrix E[][] and $D(i,j)$ is the value of the coefficient at row “i” and column “j” in the coefficient matrix D[][].

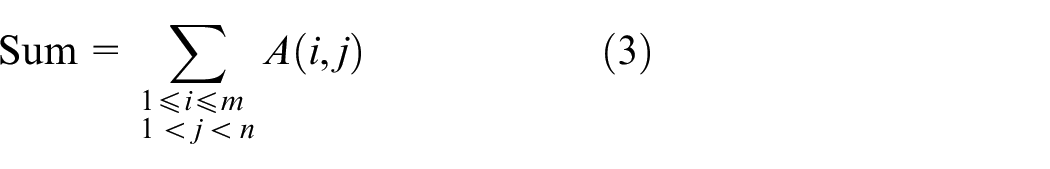

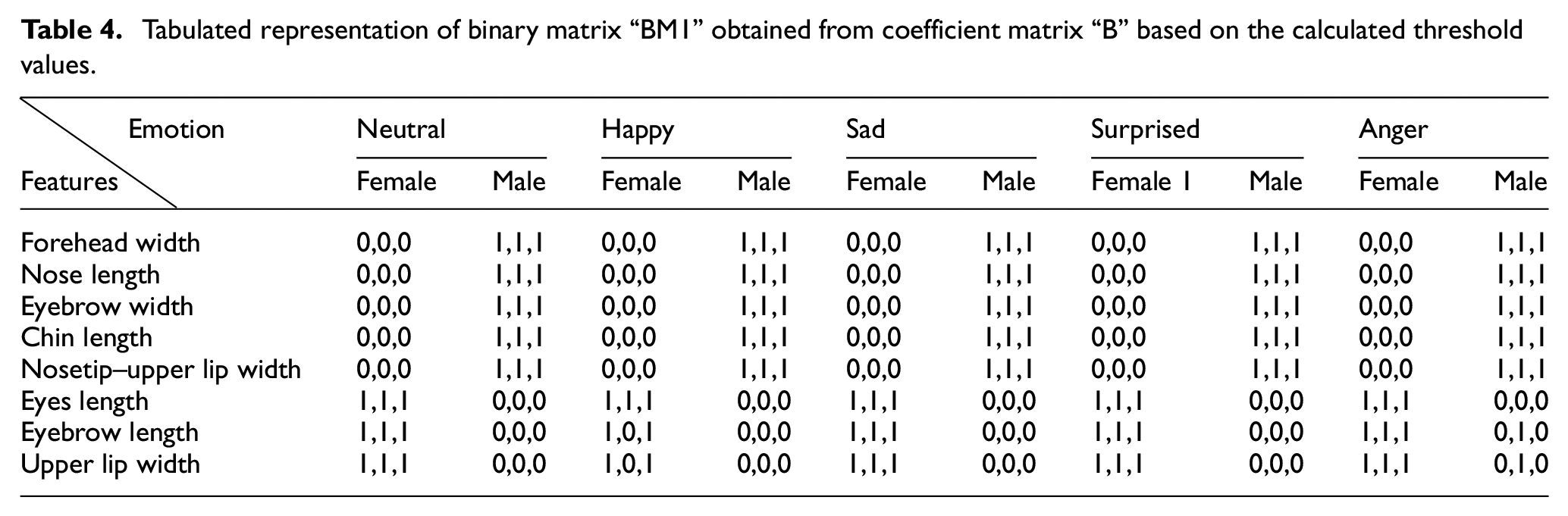

The inferred results obtained are represented in Tables 4 and 5.

Tabulated representation of binary matrix “BM1” obtained from coefficient matrix “B” based on the calculated threshold values.

Tabulated representation of binary matrix “BM2” obtained from coefficient matrix “C” based on the calculated threshold values.

In this way, a binary matrix is obtained for each coefficient matrix, where a value “1” at position (i, j) indicates that the coefficient in the coefficient matrix is >= the threshold value “Th,” while a value “0” indicates that the coefficient is < “Th.”

For example, consider the extracted feature “eyebrow.” Since experiments indicate that the eyebrow length is larger in females than in males, the binary matrix entry for the parameter “eyebrow length” will be “1” for every female and “0” for every male. Similarly, for the extracted feature “forehead,” the binary matrix will contain “1” for every male and “0” for every female, since the “forehead width” is considered to be larger in males than in females.

Phase IV

This is the concluding phase, in which final testing is performed: for each facial image, the values of the binary matrix are checked against all extracted features for that image. This is implemented by following the steps below.

Let E[][] be the binary matrix.

Then,

for x = 1 to row

for y = 1 to col

if ((E[x][y]forehead = 1) & (E[x][y]NoseLength = 1) & (E[x][y]EyebrowLength = 0) & (E[x][y]EyebrowWidth = 1) & (E[x][y]EyeLength = 0) & (E[x][y]ChinLength = 1) & (E[x][y]upperlipWidth = 0) & (E[x][y]Nosetip_LipWidth = 1))

then

Outcome is “Male”

end if

if ((E[x][y]forehead = 0) &(E[x][y]NoseLength = 0) &(E[x][y]EyebrowLength = 1) &(E[x][y]EyebrowWidth= 0) & (E[x][y]EyeLength = 1) & (E[x][y]ChinLength = 0) & (E[x][y]upperlipWidth = 1) &(E[x][y]Nosetip_LipWidth = 0))

then

Outcome is “Female”

end if

end for

end for
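The final test above amounts to matching each image's eight feature bits against two fixed patterns. The sketch below assumes that each row of the binary matrix holds the bits of one image in the order given by `FEATURES`; that layout, the function name `classify_row` and the "Undetermined" label for rows matching neither pattern are assumptions, since the pseudocode does not state an outcome for that case.

```python
FEATURES = ["forehead", "NoseLength", "EyebrowLength", "EyebrowWidth",
            "EyeLength", "ChinLength", "upperlipWidth", "Nosetip_LipWidth"]

# Bit patterns taken from the two conditions in the pseudocode.
MALE_PATTERN = [1, 1, 0, 1, 0, 1, 0, 1]
FEMALE_PATTERN = [0, 0, 1, 0, 1, 0, 1, 0]

def classify_row(bits):
    """Classify one image from its eight binary feature values."""
    if bits == MALE_PATTERN:
        return "Male"
    if bits == FEMALE_PATTERN:
        return "Female"
    return "Undetermined"  # assumption: neither full pattern matched

# Hypothetical binary matrix with one row per image.
binary_matrix = [
    [1, 1, 0, 1, 0, 1, 0, 1],
    [0, 0, 1, 0, 1, 0, 1, 0],
    [1, 0, 1, 1, 0, 1, 0, 1],
]
outcomes = [classify_row(row) for row in binary_matrix]
```

Because the two patterns are exact complements, an image that deviates in even one feature matches neither, so a practical system would need a tie-breaking rule such as a majority vote over the features.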

Stage II

At this stage the human gender is detected based on the shape and structure of finger nails which includes thumb nail, index finger nail, middle finger nail, ring finger nail and little finger nail. This task is accomplished by implementing the proffered algorithm as explained in the previous section in four phases.

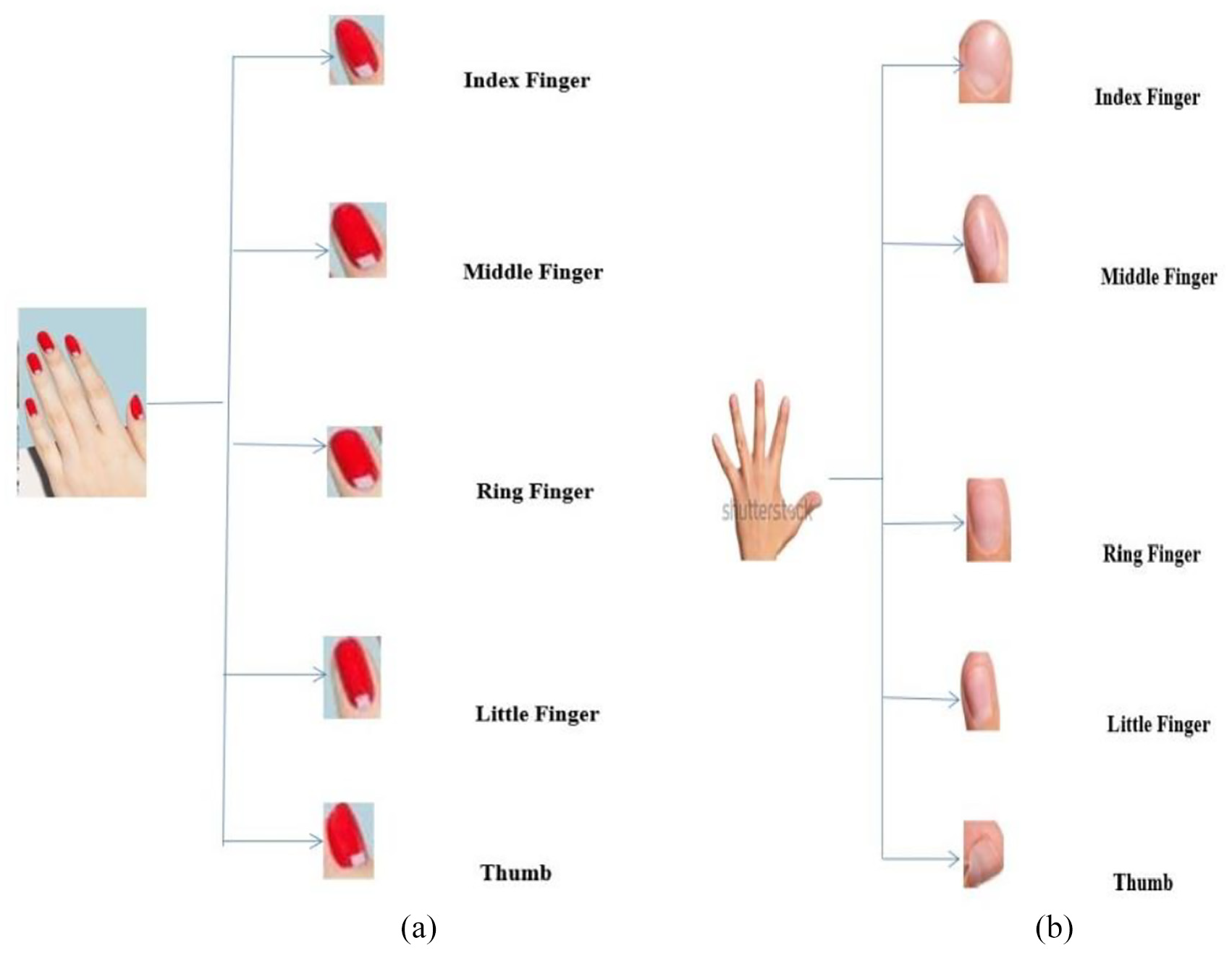

Phase I

At the inception, the nails of the five fingers are extracted from each hand and stored as depicted in Figure 5(a) and (b).

Diagrammatic representation of human hand and the extracted finger nails from respective hands: (a) Diagrammatic representation of the extracted female finger nails, (b) Diagrammatic representation of the extracted male finger nails. 13

Phase II

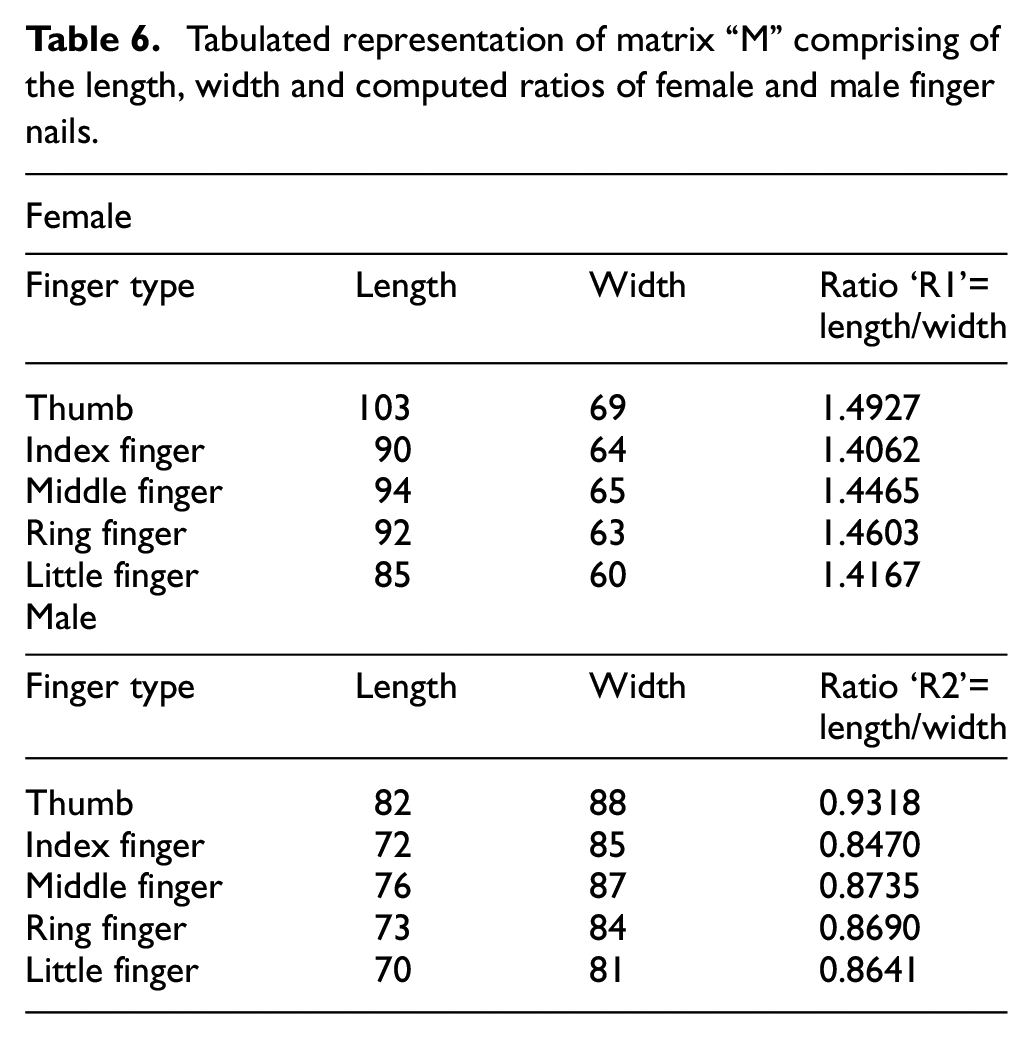

In this phase, the length and the width of each finger nail are calculated using the Euclidean distance formula as explained in Stage I of the experiment. The length/width ratio of each finger nail is then calculated and stored in arrays “R1” and “R2.”

The inferred result is depicted in Table 6.

Tabulated representation of matrix “M” comprising of the length, width and computed ratios of female and male finger nails.

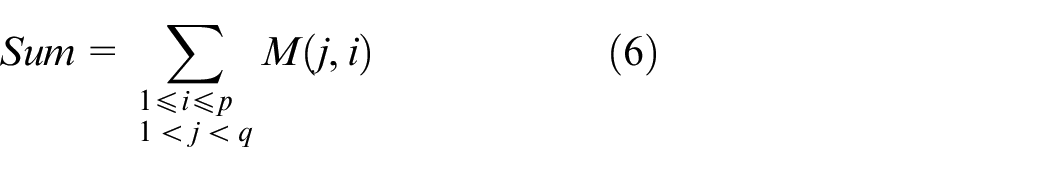

Among the stored result, a threshold value “T1” is obtained by following the equation as given below

Here, “p” refers to the number of rows and “q” refers to the number of columns of the matrix M.

Therefore

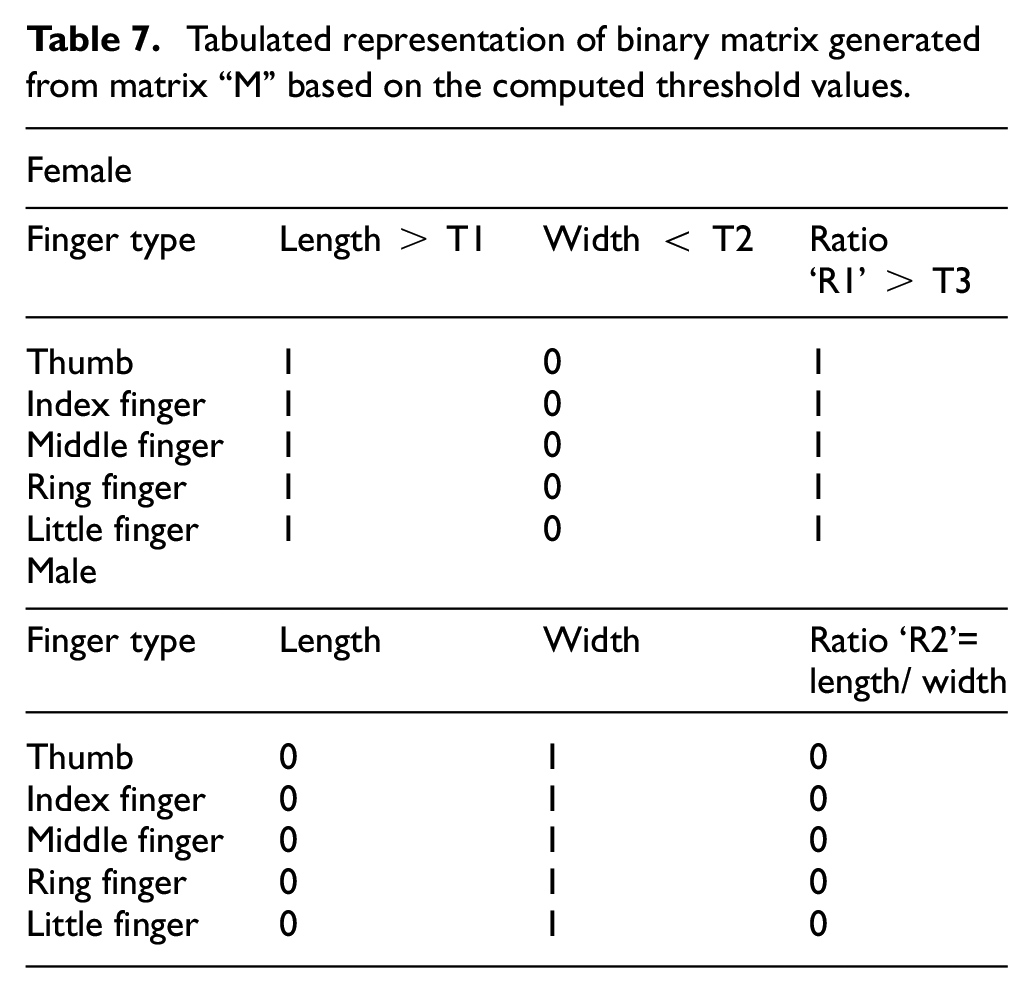

The inferred result is depicted in Table 7.

Tabulated representation of binary matrix generated from matrix “M” based on the computed threshold values.

Phase III

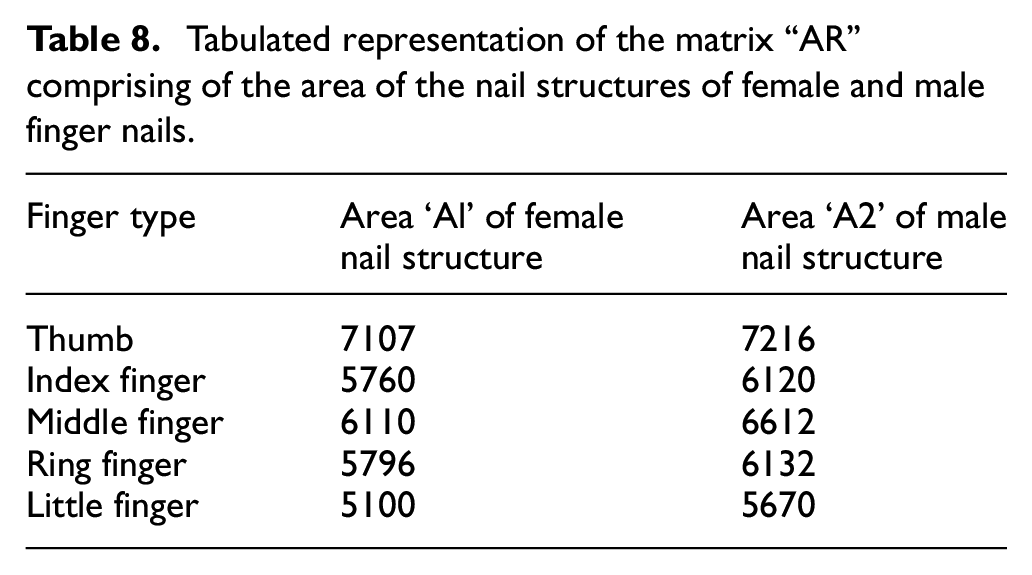

At this phase, the area of each finger nail for female and male is computed and stored in arrays “A1” and “A2.” Then, a matrix “A” is generated by concatenating “A1” and “A2.” The experimental results obtained are depicted in Table 8.

Tabulated representation of the matrix “AR” comprising of the area of the nail structures of female and male finger nails.

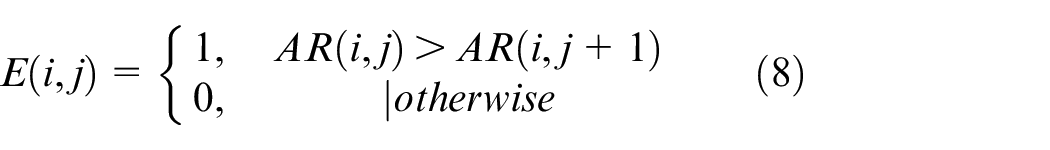

Based on the calculated values, a threshold value “T2” is obtained using Equation 8

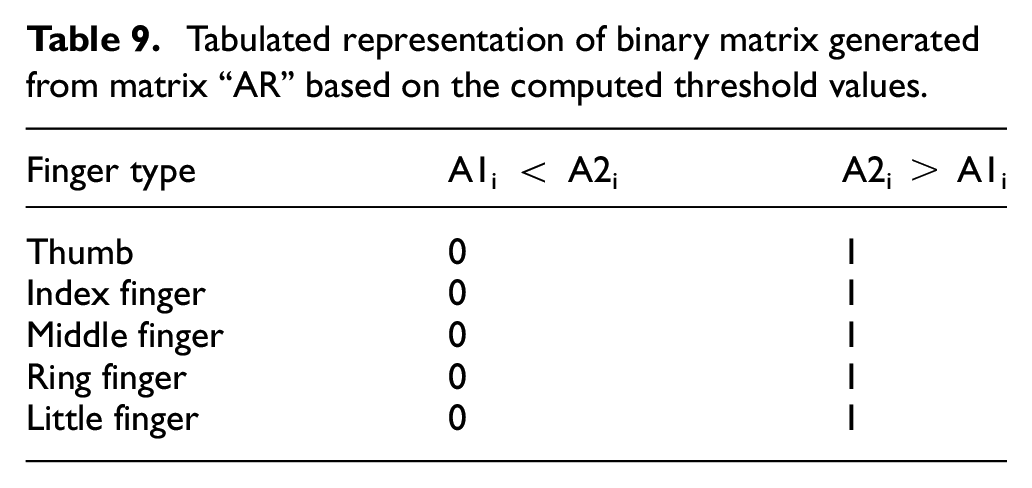

The experimental data are depicted in Table 9.

Tabulated representation of binary matrix generated from matrix “AR” based on the computed threshold values.

Phase IV

At the final phase, the gender identification and revelation is done in the following way.

That is

for each ‘i’th finger nail

if R1i > R2i & A1i < A2i then

The outcome is female

else

The outcome is male.
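The comparison above can be sketched as follows. Two points are assumptions rather than statements from the text: the nail area is approximated by the bounding-box product of length and width, and the rule is applied to a pair of nails, labelling the first one. The function names and measurements are hypothetical.

```python
def nail_descriptor(length, width):
    """Return (length/width ratio, area); area is approximated as length * width."""
    return length / width, length * width

def compare_nails(nail_1, nail_2):
    """Apply the rule: higher ratio and smaller area than the other nail -> female."""
    r1, a1 = nail_descriptor(*nail_1)
    r2, a2 = nail_descriptor(*nail_2)
    return "female" if (r1 > r2 and a1 < a2) else "male"

# Hypothetical (length, width) measurements in centimetres.
long_narrow = (1.2, 0.8)
short_broad = (1.1, 1.1)
label_first = compare_nails(long_narrow, short_broad)
label_swapped = compare_nails(short_broad, long_narrow)
```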

Conclusion

The human face is a reflection of personality, and it plays a pivotal role in determining the identity of a person. The identity may be with respect to gender, age, caste, creed, emotion and so on. Gender identification is thus a primary phase in the human identification process. Although there exist several algorithms that serve the purpose of gender detection, the methodologies proposed in this paper have also succeeded in determining gender. In the first phase of the experiment, various features of a human face were used as the parameters for experimentation. Frontal facial images with different emotions were considered because the emotional state of a person changes the facial expression, which has a direct impact on the facial features. This logic was used in computing the length or breadth of the extracted features based on their positions in the frontal facial image. Further, the computed values (which are the measured distances) were converted from the spatial domain to the frequency domain with the help of the FFT and DCT algorithms, with the objective of improving the result. The reason for the conversion is that the frequency domain helps to identify the sharpness of the features better than the spatial domain, which eventually helps in discriminating between genders. Furthermore, it can be used to identify the bandwidth of a region. This logic was used in the feature extraction process, where features are extracted based on the Region of Interest principle. In this case, the frequency domain helps in identifying the bandwidth of the boundary surrounding a particular feature, a computation that cannot be performed in the spatial domain.

In our experiment, the FFT algorithm yielded better results than the DCT algorithm on the input data set. A likely reason is that a DCT is equivalent to a DFT of roughly twice the length operating on real data with even symmetry, so it is better suited to larger input sets.

In the final phase of our experiment, an attempt has been made to identify the human gender based on the shape and structure of a human finger nail. However, in this part of the experiment we have made certain assumptions based on which the experimentation has been carried out. It includes the following:

The actual nail area has been considered for female/male excluding the extended part of the nail.

The size of the actual nail structure has been resized to a specific dimension before performing the computation since with different input images the size of the nail structure may vary.

For experimentation, the same hand, that is, either the left or the right, must be used throughout. In our experiment, we chose the left hand for both males and females, and all five of its finger nails were used in the computation.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: Mrs S.G. and Prof. S.K.S. have received the funding support from the Department of Computer Science and Engineering, University of Calcutta. The terms of this arrangement have been approved by the University of Calcutta in accordance with its policy on its objectivity in research.