Abstract

Providing security to citizens is one of the most important and complex tasks that governments around the world have to deal with. Street crime and theft are the biggest threats to citizens and their belongings. To provide security, there is an urgent need for a system capable of identifying a criminal in a crowded area. This paper proposes a facial recognition system that uses the Local Binary Patterns Histogram (LBPH) face recognizer mounted on a drone. Facial recognition is a key capability for a drone that must find or identify a person within a crowd. Mounting the system on a drone also lets it act as a surveillance platform that covers more area than a stationary system. As soon as the system identifies the desired person, it tags him and transmits the image, along with the coordinates of the location obtained from the mounted global positioning system (GPS), to the concerned authorities. The proposed system is capable of identifying a person with an accuracy of approximately 89.1%.

Introduction

The face is an inherent identity of a person. A system based on facial recognition is better suited to people who are unwilling to cooperate with other means of biometric identification, such as fingerprint, iris, or hand scans. Most of the time, culprits get away with their loot and there is no system to track them. With an image processing application such as facial recognition, it is possible to build a system that identifies the person committing a crime and, at the same time, alerts the concerned authorities so that they can take measures to apprehend him. Other applications of facial recognition include audio-visual scrutiny and safety measures. It is a biometric recognition process that relies on the face instead of the hand or fingers. Military organizations prefer facial recognition technologies to fingerprint or hand scans. Since the introduction of Artificial Intelligence (AI), facial recognition has become a valuable tool for applications such as this one. It is becoming increasingly popular among researchers around the world in fields such as medicine, engineering, and security.

The facial recognition algorithm proposed by Cheng et al. 1 introduces a deep sparse representation classifier to detect facial features and identify the face of a person. Schools have also introduced facial recognition for posing critical questions to specific students. 2 Kadambari et al. 3 proposed a system that takes attendance automatically using facial recognition. The Local Binary Patterns Histogram (LBPH) face recognizer is a facial recognition classifier that can identify a face once a sufficiently large dataset of that face is available for training.4–6 Most real-time applications use facial recognition algorithms that are already in use by security companies or military organizations around the world. 7 Applications such as remote monitoring, which operate over long distances, often require a hardware platform like the Raspberry Pi.8,9 Sharma and Jain 10 proposed a system that identifies faces using facial recognition for blind people. Over the years, researchers have used different algorithms for facial recognition, such as Haar cascade, TPLBP/HOG features, the DBN depth model, and Fisherface.11,12 For the security of ATMs, facial recognition can play an important role in authenticating the card holder. 13 Researchers have also used neural networks together with sparse auto-encoders to train models for facial recognition.14–16 Hsu and Chen 17 discussed facial recognition mounted on a drone along with its limitations, among which distance plays a major role in identifying a person accurately. The LBPH face recognizer can also trace and identify human actions along with their dominant features and classification.18,19

This paper proposes an embedded system based on drone technology that is capable of identifying a person using the LBPH face recognizer. The camera mounted on the drone captures frames in real time and identifies the target person. Security cameras are usually fixed at certain locations, and because they are stationary they cannot cover a wide area. Mounted on a drone (Quad-copter), the camera gains the ability to hover and cover much more area. We can control the drone manually from a remote location, or it can simply follow waypoints that we feed to it. An RF transceiver is also present, along with the global positioning system (GPS), to communicate with the drone and send it instructions for the next waypoint.

State of the art

This section presents some of the cutting-edge research into facial recognition technology using drones. Chen et al. 20 developed a drone facial identification system using principal component analysis (PCA) and Kernel Correlation Filter (KCF) algorithms. First, an onboard camera captures a target frame. Then, the algorithm uses the PCA method for face identification. Finally, the KCF algorithm performs the target tracking. Afterward, the algorithm estimates the position error and sends it to the control system using the MAVLink protocol, which adjusts the posture of the drone and completes the tracking and monitoring task. The reported results demonstrate the efficiency of that system.

In another recent study on facial identification using drones, Herrera and Imamura 21 offer a novel approach to facial recognition using deep learning. Their study concentrates on the facial recognition of people with criminal backgrounds. It trains a neural network using the Caffe framework, and the system runs on the NVIDIA Jetson TX2 board. The experimental results showcase the effectiveness of that system.

System hardware model

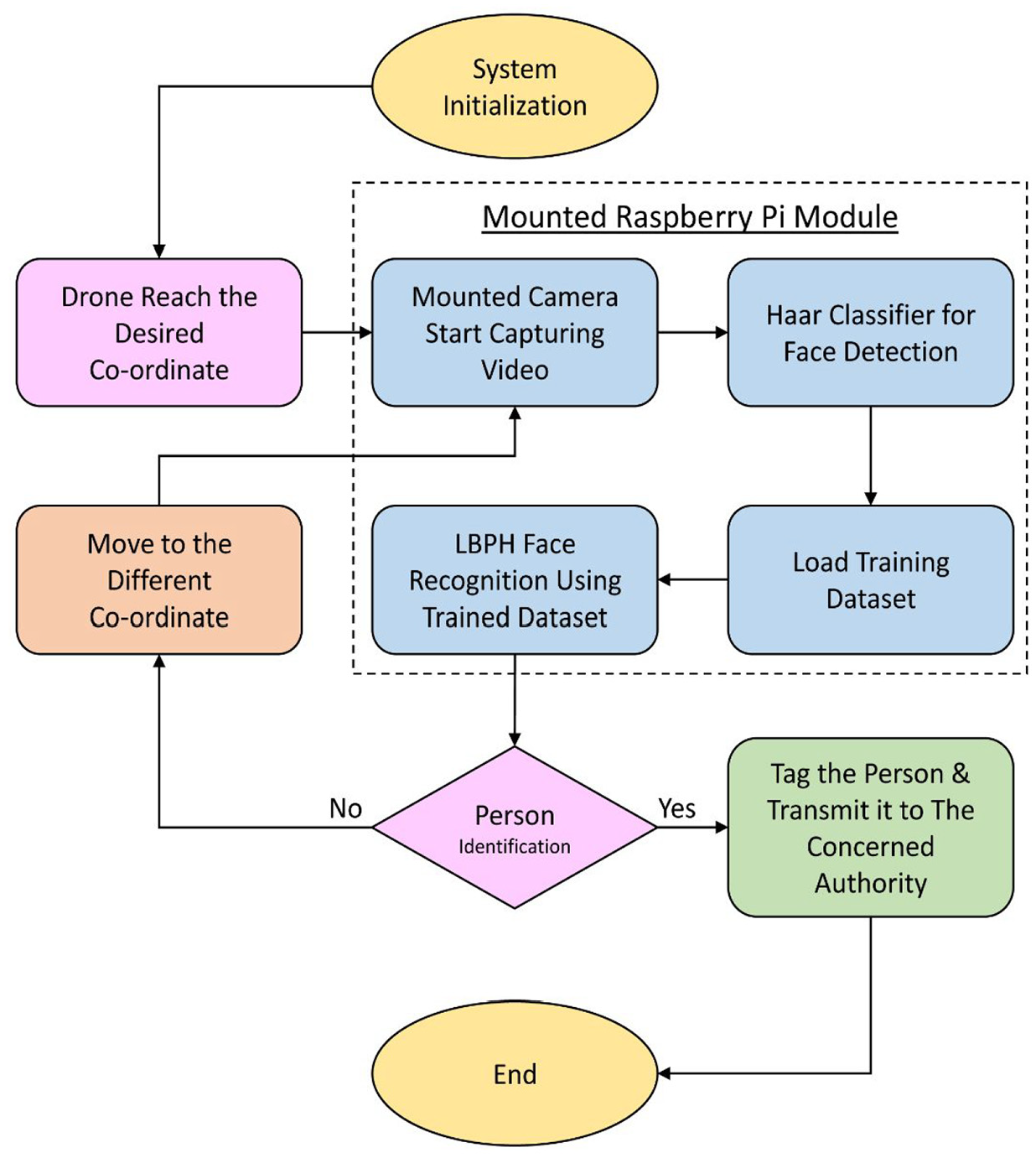

Figure 1 represents the system block diagram. After system initialization, the drone moves to the location provided to it in the form of coordinates. Either the RF transceiver sends these coordinates to the drone, or we load them beforehand. We can also control the drone manually if the need arises. If the drone identifies the target person, it informs the concerned authority by transmitting an image that displays the identified person. The authority can then take control of the drone to track the culprit manually.

Model of the Quad-copter used for the proposed system.

The camera mounted on the drone acts as the sensor through which the system detects or tracks a person. We use a Raspberry Pi module to interface with the camera. A camera with a good aspect ratio and resolution is necessary for better accuracy. The camera captures a frame, and the mounted Pi module applies Haar Cascade to detect the faces in the crowd. All the detected faces are cropped, and the LBPH face recognizer runs on each of them and compares it with the trained dataset. If the system identifies the desired person, it tags him with his name and captures the frame. It also transmits the captured frame to the concerned authority so that appropriate action can be taken. If the system does not detect the desired person in the frame, the drone moves to the next waypoint. We can also perform this step manually. The whole process then repeats at the new location, where the mounted camera starts capturing new frames while trying to detect the desired person. We propose Haar Cascade for the face detection process. It can also detect facial features such as the eyes and mouth.
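The capture, detect, recognize, and move-on cycle described above can be sketched as a small control loop. This is a minimal illustration rather than the authors' implementation: the function `patrol` and its callback parameters (`capture`, `detect_faces`, `recognize`, `notify`) are hypothetical names standing in for the camera, the Haar Cascade detector, the LBPH recognizer, and the link to the authorities. The 50% default threshold is the value quoted in the Results section.

```python
from typing import Callable, Iterable, List, Optional, Tuple

Waypoint = Tuple[float, float]        # (latitude, longitude), assumed format
Box = Tuple[int, int, int, int]       # (x, y, width, height) of a detected face
Match = Tuple[str, float]             # (person name, confidence in percent)


def patrol(
    waypoints: Iterable[Waypoint],
    capture: Callable[[Waypoint], object],
    detect_faces: Callable[[object], List[Box]],
    recognize: Callable[[object, Box], Match],
    notify: Callable[[str, float, Waypoint], None],
    threshold: float = 50.0,
) -> Optional[Tuple[str, Waypoint]]:
    """Visit waypoints in order until a face from the dataset is recognized."""
    for waypoint in waypoints:
        frame = capture(waypoint)                    # one frame at this location
        for box in detect_faces(frame):              # stand-in for Haar Cascade
            name, confidence = recognize(frame, box)  # stand-in for LBPH
            if confidence >= threshold:
                # Alert the authorities with the name and the location.
                notify(name, confidence, waypoint)
                return name, waypoint
        # No match at this location: continue to the next waypoint.
    return None
```

Passing the detector and recognizer as callbacks keeps the loop independent of any particular vision library, so the same logic can be exercised with simple stand-ins before it is connected to real hardware.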

Using a drone is the most efficient and cost-effective contribution of this study. A stationary camera can only tag or identify the desired person within its own vicinity. We chose the Raspberry Pi module as the on-board computer because of its light weight and its ability to perform complex operations such as image processing and machine learning. Because the proposed model uses a machine learning algorithm, the LBPH face recognizer, it is also an AI-based application. Figure 1 represents the model of the Quad-copter used for the proposed application. It can carry up to 4 pounds, excluding its battery weight (Figure 2).

System block representation.

Software model

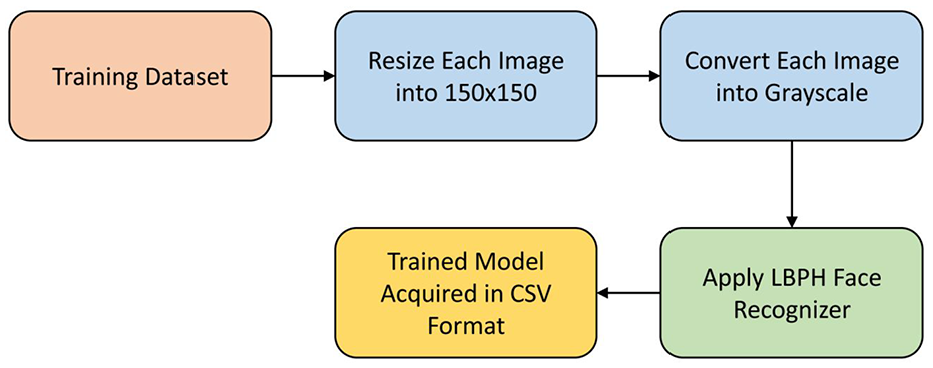

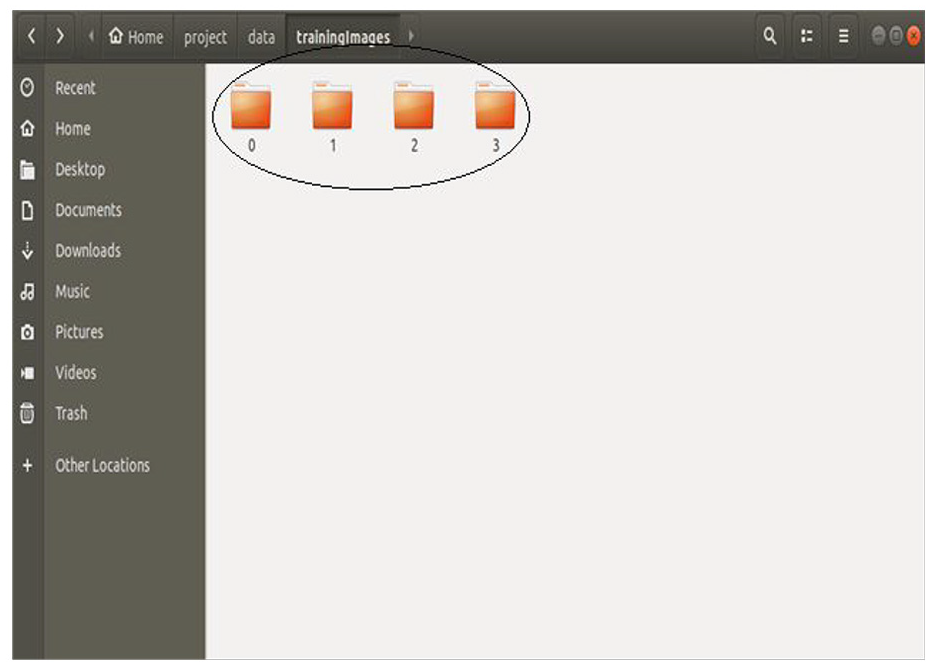

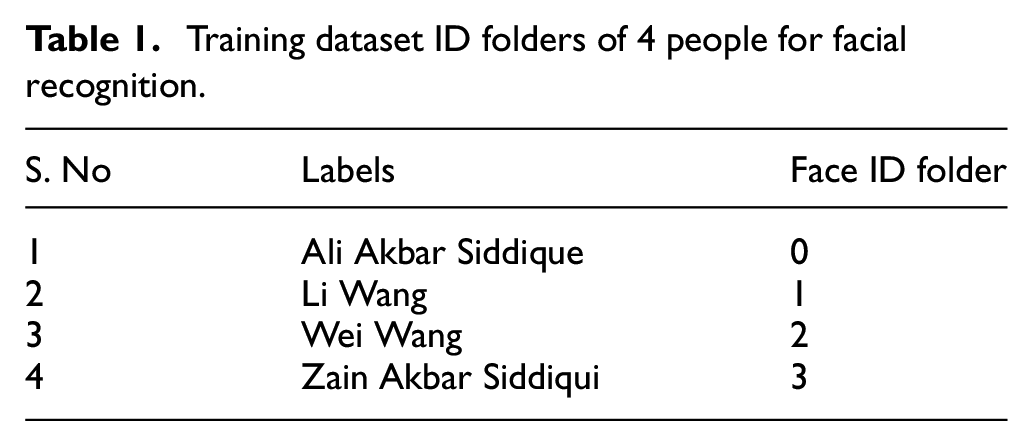

Applications based on machine learning, such as facial recognition, require a large dataset for training before we implement them. Figure 3 represents the training model with the LBPH face recognizer. The training dataset in this application contains four folders, as displayed in Figure 4, which means that the trained model is capable of identifying four people. The names and Face IDs of these people are given in Table 1. By extending the existing dataset, we can easily increase the number of people that we want to identify.

Training model for face recognition using LBPH face recognizer.

Training dataset of 4 people.

Training dataset ID folders of 4 people for facial recognition.

The algorithm resizes all the images in the folders to a resolution of 225 × 225 and converts them into grayscale format. This reduces the amount of information, because more information means more time to train the model. After the grayscale conversion, we use the LBPH face recognizer to train on the dataset. Training generates a csv file, which is used for testing. We then implement the model in a real-time application, which is shown in Figure 5.
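The two preprocessing steps can be illustrated with a short pure-Python sketch. It is not the authors' code: the helper names `to_grayscale` and `resize_nearest` are hypothetical, the luminance weights 0.299, 0.587, and 0.114 are the commonly used ones, and nearest-neighbor resampling is chosen only for brevity. The source does not state which interpolation method or library call was used.

```python
def to_grayscale(rgb):
    """Convert rows of (r, g, b) tuples into rows of gray values 0..255.

    Uses the common luminance weights; three channels become one.
    """
    return [
        [int(round(0.299 * r + 0.587 * g + 0.114 * b)) for (r, g, b) in row]
        for row in rgb
    ]


def resize_nearest(gray, size=225):
    """Resize a grayscale image to size x size by nearest-neighbor sampling."""
    height, width = len(gray), len(gray[0])
    return [
        [gray[y * height // size][x * width // size] for x in range(size)]
        for y in range(size)
    ]


# Example: a tiny 2 x 2 color image becomes a 225 x 225 grayscale image.
tiny = [[(255, 255, 255), (0, 0, 0)], [(255, 0, 0), (0, 0, 255)]]
face = resize_nearest(to_grayscale(tiny))
```

Every training image and every face cropped from a live frame would pass through the same two steps, so that the recognizer always compares inputs of identical size and format.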

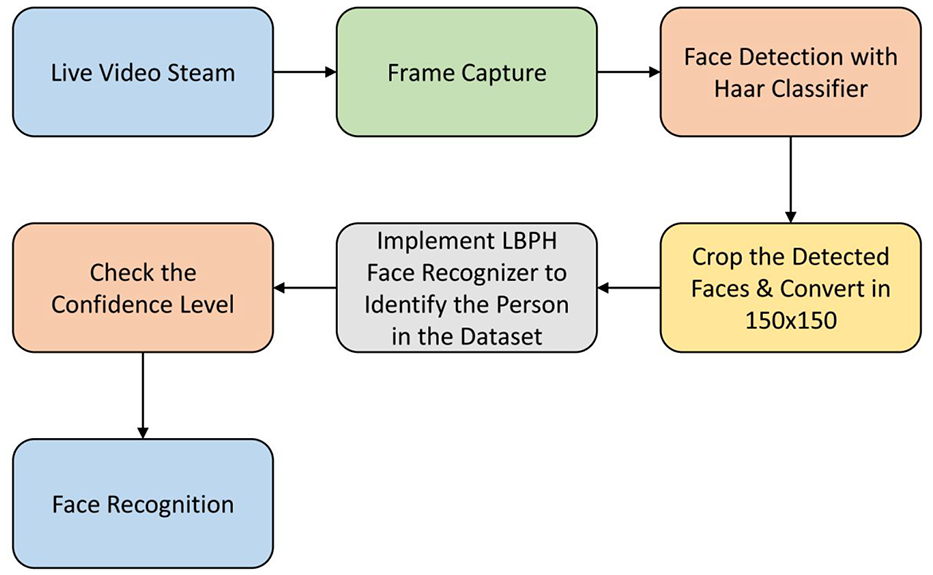

Implemented software model for facial recognition.

The algorithm captures a frame from the live video stream and passes it through the Haar cascade classifier for face detection. Haar cascade is a wavelet-based machine learning algorithm that uses the integral image concept to evaluate its features. It also uses the AdaBoost learning algorithm to select a small set of important features from a very large set for better classification. 22 It is essentially a pre-trained classifier for face detection that is able to detect multiple faces in an image.
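The integral image idea can be shown with a small sketch. Each entry of the integral image stores the sum of all pixels above and to the left of it, so the sum over any rectangle needs only four lookups. The functions below (`integral_image`, `rect_sum`, `two_rect_feature`) are illustrative names, and the two-rectangle feature is just one simple Haar-like feature, not the full cascade.

```python
def integral_image(img):
    """Return a (h+1) x (w+1) table where ii[y][x] = sum of img[0:y][0:x]."""
    height, width = len(img), len(img[0])
    ii = [[0] * (width + 1) for _ in range(height + 1)]
    for y in range(height):
        row_sum = 0
        for x in range(width):
            row_sum += img[y][x]
            ii[y + 1][x + 1] = ii[y][x + 1] + row_sum
    return ii


def rect_sum(ii, x, y, w, h):
    """Sum of the w x h rectangle whose top-left corner is (x, y)."""
    return ii[y + h][x + w] - ii[y][x + w] - ii[y + h][x] + ii[y][x]


def two_rect_feature(ii, x, y, w, h):
    """Haar-like feature: sum of the left half minus sum of the right half."""
    half = w // 2
    return rect_sum(ii, x, y, half, h) - rect_sum(ii, x + half, y, half, h)


img = [[1, 2, 3, 4], [5, 6, 7, 8], [9, 10, 11, 12], [13, 14, 15, 16]]
ii = integral_image(img)
center_sum = rect_sum(ii, 1, 1, 2, 2)        # 6 + 7 + 10 + 11
edge_response = two_rect_feature(ii, 0, 0, 4, 4)
```

Because each rectangle sum costs the same regardless of its size, a detector can evaluate many such features at many positions and scales per frame, which matters on a small on-board computer.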

The algorithm crops all the detected faces from the frame and resizes them to 225 × 225 resolution, just as we did for the training dataset. It is imperative to resize the cropped face images to the same resolution as the dataset. All the face images acquired from the frame pass through the LBPH face recognizer to determine which of them match the dataset features. The closer the match, the higher the confidence level. If the confidence level for any face image from the frame is above the required threshold, meaning that it matches the features of one of the four people in the dataset of Figure 3, then the algorithm tags that person’s face with his or her name as shown in Table 1.

LBPH face recognition

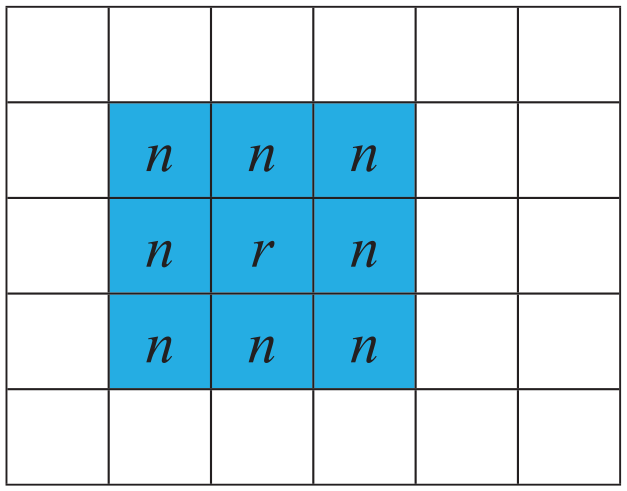

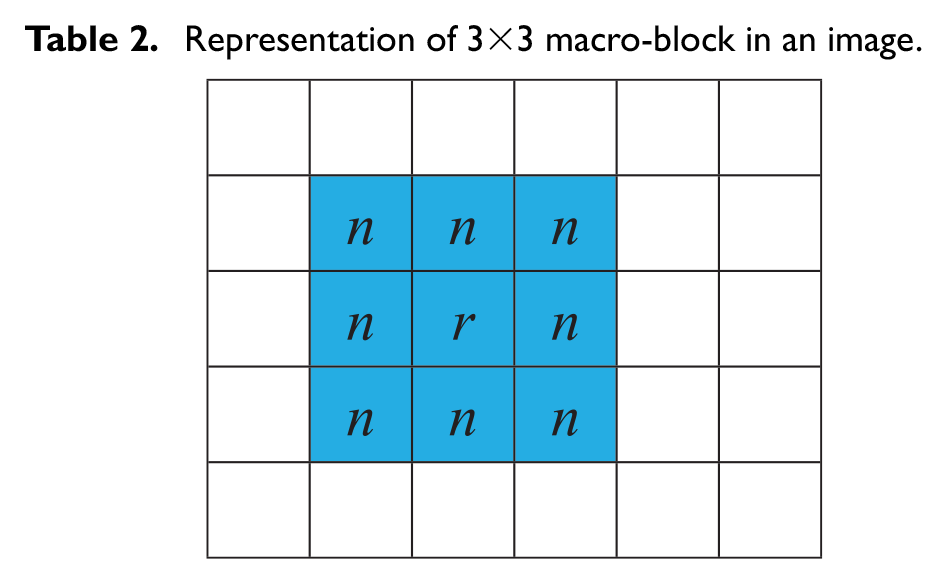

Since its introduction, LBPH has been an excellent feature for classifying textures such as faces. It requires four parameters to process an image: radius (r), neighbors (n), X-axis, and Y-axis. Table 2 gives the representation of (r) with respect to (n), where the highlighted area is a 3×3 macro-block. The X-axis and Y-axis parameters represent the dimensions of the feature grid in the horizontal and vertical directions, respectively. The first step is to train the algorithm, which requires a correct dataset containing facial images of the people that we need to identify. For the computational step, it is imperative to divide the image of a person into a set of 3×3 macro-blocks for better representation, as given in Figure 6. Doing so makes it possible to pinpoint every feature that exists on a person’s face. Each macro-block has 9 pixels, each with a value in the range 0 to 255 because the image is in grayscale format.

Representation of 3×3 macro-block in an image.

3×3 macro-block representation.

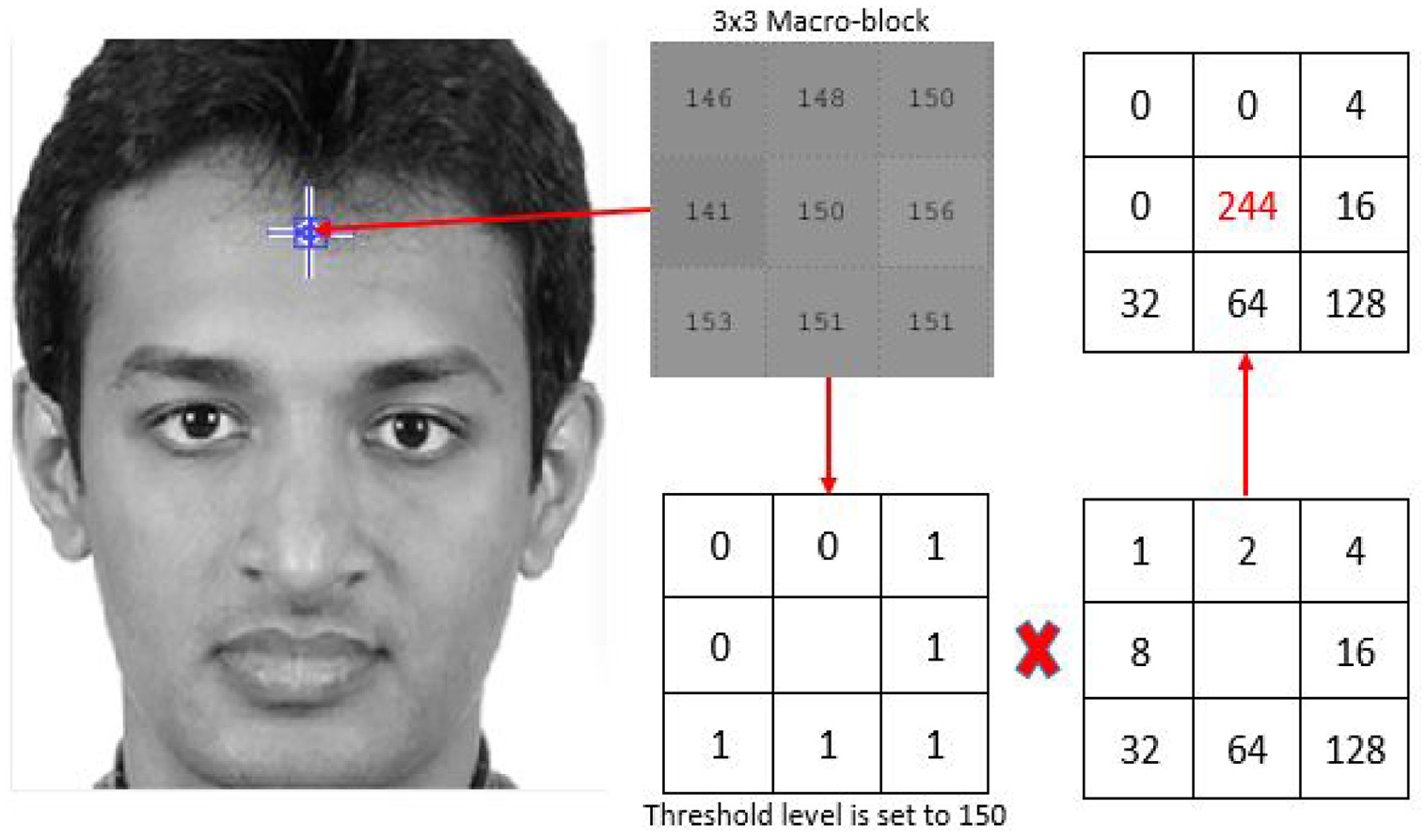

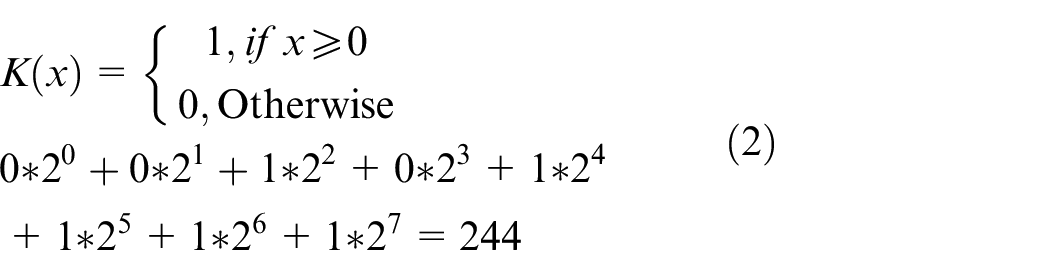

The next step is to convert the macro-block into a binary pattern. The value of the center pixel serves as the threshold for its neighbors. In the example of Figure 6, where the threshold is 150, any neighbor with a value equal to or greater than 150 is set to 1 and any neighbor with a smaller value is set to 0. The algorithm concatenates these binary values in clockwise order. This process is known as Local Binary Pattern (LBP). Equation (1) also expresses LBP, where N is the number of pixels excluding the center pixel. With a radius (r) of 1, the center pixel has 8 neighbors. The macro-block acts as a sliding window that is applied to each 3×3 neighborhood individually. When equation (1) is applied to the 3×3 macro-block, the block is transformed into a binary pattern with reference to the threshold, that is, the center pixel value. Equation (2) represents this transformation.
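As a reading aid, the LBP operator is commonly written in the following form, which is consistent with the thresholding rule described above. The symbols $i_c$, $i_n$, and $s(\cdot)$ are notation assumed for this sketch and may differ from the notation of the authors' equations (1) and (2):

$$
\mathrm{LBP}(x_c, y_c) = \sum_{n=0}^{N-1} s(i_n - i_c)\, 2^{n},
\qquad
s(x) =
\begin{cases}
1, & x \ge 0 \\
0, & x < 0
\end{cases}
$$

Here $(x_c, y_c)$ is the position of the center pixel, $i_c$ is its gray value, $i_n$ is the gray value of the $n$-th neighbor, and $N = 8$ for a radius of 1. A neighbor at least as bright as the center contributes its weight $2^{n}$ to the code, and a darker neighbor contributes nothing, so every 3×3 macro-block maps to a single integer between 0 and 255.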

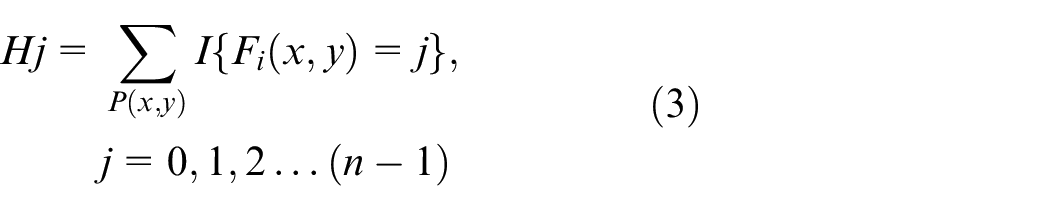

Figure 6 gives the matrix representation after the placement of each quantity using the binary pattern in equation (2). The algorithm then multiplies each bit of the binary pattern by its positional weight and sums the products to obtain the LBP code. After the LBP image is obtained, equation (3) gives its histogram representation. Figure 7 displays the LBP-converted image and its equivalent histogram. Each macro-block generates its own histogram, and in the end the algorithm concatenates them all into a cumulative histogram of all the macro-blocks present in the image.

(Left) gray scale image, (center) LBP image and (right) histogram of the LBP image.
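The steps of this section (thresholding against the center pixel, weighting the bits, and concatenating per-cell histograms) can be sketched in pure Python. This is an illustration under stated assumptions rather than the authors' implementation: the function names are hypothetical, the neighbors are read clockwise starting from the top-left pixel, the first neighbor is given the weight 2^0, and the sample pixel values are invented for the example.

```python
def lbp_code(block):
    """LBP code (0..255) of a 3x3 block, thresholded at its center pixel."""
    center = block[1][1]
    # Neighbors read clockwise, starting at the top-left pixel (assumed order).
    neighbors = [block[0][0], block[0][1], block[0][2], block[1][2],
                 block[2][2], block[2][1], block[2][0], block[1][0]]
    bits = [1 if value >= center else 0 for value in neighbors]
    # Multiply each bit by its positional weight 2^n and sum the products.
    return sum(bit << n for n, bit in enumerate(bits))


def lbp_image(gray):
    """Slide the 3x3 window over every interior pixel of a grayscale image."""
    height, width = len(gray), len(gray[0])
    return [
        [lbp_code([row[x - 1:x + 2] for row in gray[y - 1:y + 2]])
         for x in range(1, width - 1)]
        for y in range(1, height - 1)
    ]


def histogram(codes, bins=256):
    """Count how often each LBP code occurs in a 2D list of codes."""
    hist = [0] * bins
    for row in codes:
        for code in row:
            hist[code] += 1
    return hist


def lbph_descriptor(gray, grid_x=8, grid_y=8):
    """Concatenate the histograms of a grid_x by grid_y grid of cells."""
    codes = lbp_image(gray)
    height, width = len(codes), len(codes[0])
    descriptor = []
    for gy in range(grid_y):
        rows = codes[gy * height // grid_y:(gy + 1) * height // grid_y]
        for gx in range(grid_x):
            cell = [r[gx * width // grid_x:(gx + 1) * width // grid_x] for r in rows]
            descriptor.extend(histogram(cell))
    return descriptor


sample = [[200, 90, 160], [100, 150, 180], [150, 30, 170]]  # invented values
code = lbp_code(sample)  # bits 1,0,1,1,1,0,1,0 -> 1 + 4 + 8 + 16 + 64
```

A recognizer of this kind would then compare the concatenated histogram of an input face with the stored histograms of the training images and report the closest one.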

Results

A dataset of four different people is available for training. Figure 8 shows four sample images for each of the four people for whom a dataset is available. Each folder in Figure 3 contains 250 images, which means that 250 images are stored for each person to train the model.

Four sample images of 4 people for the training dataset (Row 1: Ali Akbar Siddique, Row 2: Li Wang, Row 3: Wei Wang, Row 4: Zain Akbar Siddiqui).

When the algorithm captures a frame from the live video stream, the Haar cascade detects all the faces in the frame, as shown in Figure 9. The algorithm crops all the detected faces for identification. Because the dataset contains images of 225 × 225 resolution, the cropped images must be resized to 225 × 225 as well. After resizing, the algorithm converts these images into grayscale format, which reduces the information in each image from three color channels to a single channel. Figure 10 shows the five detected faces in grayscale format.

Face detection using Haar cascade classifier.

Detected faces in grayscale format.

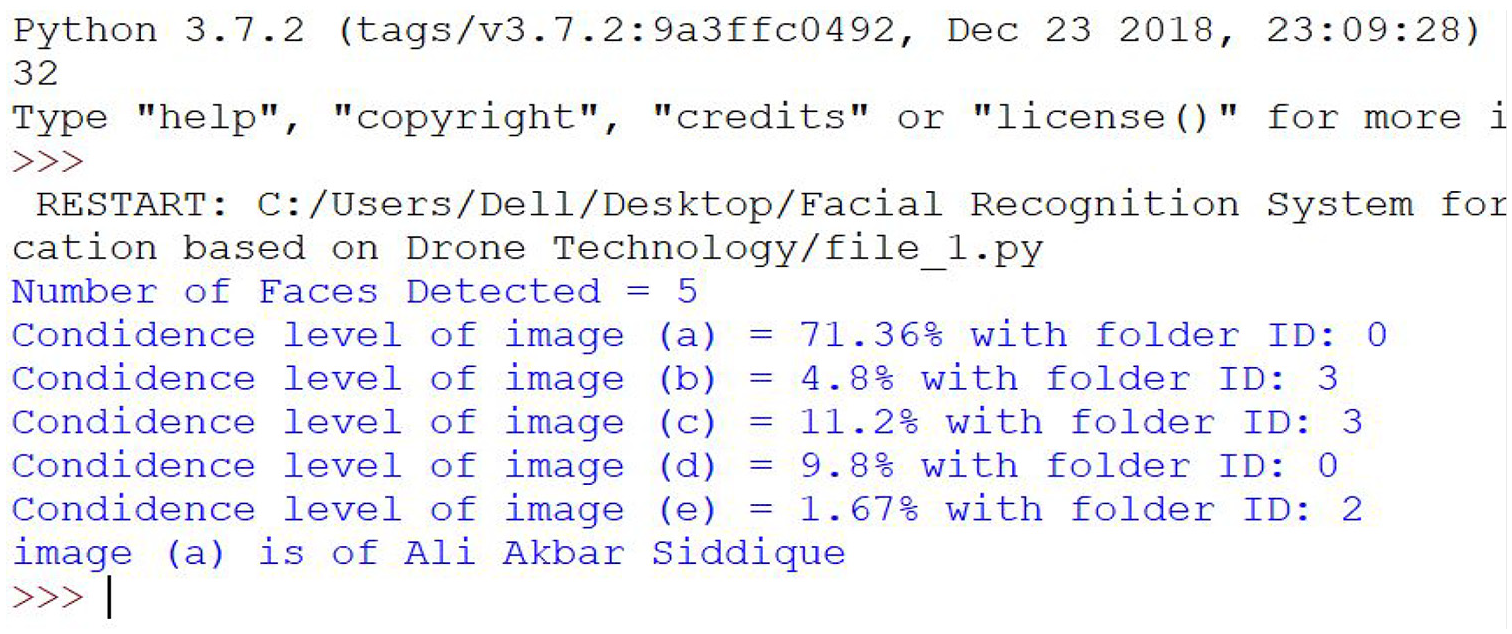

The confidence level is an important parameter for identification; it indicates whether the match between the input face and the dataset falls within the threshold range. In its raw form, a small value means that there is little difference between the two images, so we invert it to avoid confusing the reader. After the inversion, a larger confidence level indicates a closer match, and the greatest confidence level determines the identity of the person in an image. This is apparent in Figure 11, where the Haar cascade detected 5 faces and the LBPH face recognizer identifies the person in Figure 10 as Ali Akbar Siddique because his confidence level was 71% and the threshold for facial identification is set to 50%. In Figure 10, image (a) has a confidence level of 71% with folder ID: 0, and this folder contains the images of Ali Akbar Siddique from Table 1. Similarly, image (b) from Figure 10 has a confidence level of 4.8% with folder ID: 3, but the system does not identify this person because the confidence level is below the threshold. Some features of any input image will match images in the dataset, hence the small nonzero confidence level. The same applies to the rest of the images in Figure 10, images (c-d), whose confidence levels are below the set threshold.
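The inversion and the thresholding can be illustrated as follows. The source does not give the formula used for the inversion, so the linear mapping in `to_percent` (100 minus the raw distance, clipped to the range 0 to 100) is only an assumption for illustration, as are the function names and the raw distance values. Only the 50% threshold and the 71% and 4.8% confidence levels come from the text.

```python
def to_percent(distance, max_distance=100.0):
    """Invert a raw distance (lower = more similar) into a 0..100 percentage.

    Assumed linear mapping; the source does not state the actual formula.
    """
    clipped = min(max(distance, 0.0), max_distance)
    return 100.0 - 100.0 * clipped / max_distance


def identify(predictions, names, threshold=50.0):
    """Label each (face_id, distance) pair, or None if below the threshold."""
    results = []
    for face_id, distance in predictions:
        percent = to_percent(distance)
        label = names[face_id] if percent >= threshold else None
        results.append((label, percent))
    return results


# Hypothetical raw distances chosen to reproduce the 71% and 4.8% cases.
names = {0: "Ali Akbar Siddique", 3: "person with folder ID 3"}
labels = identify([(0, 29.0), (3, 95.2)], names)
```

With these inputs the first face is tagged with its name because 71% exceeds the 50% threshold, while the second face stays untagged even though the recognizer returned folder ID 3 as the nearest match.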

Identification of a face through confidence level.

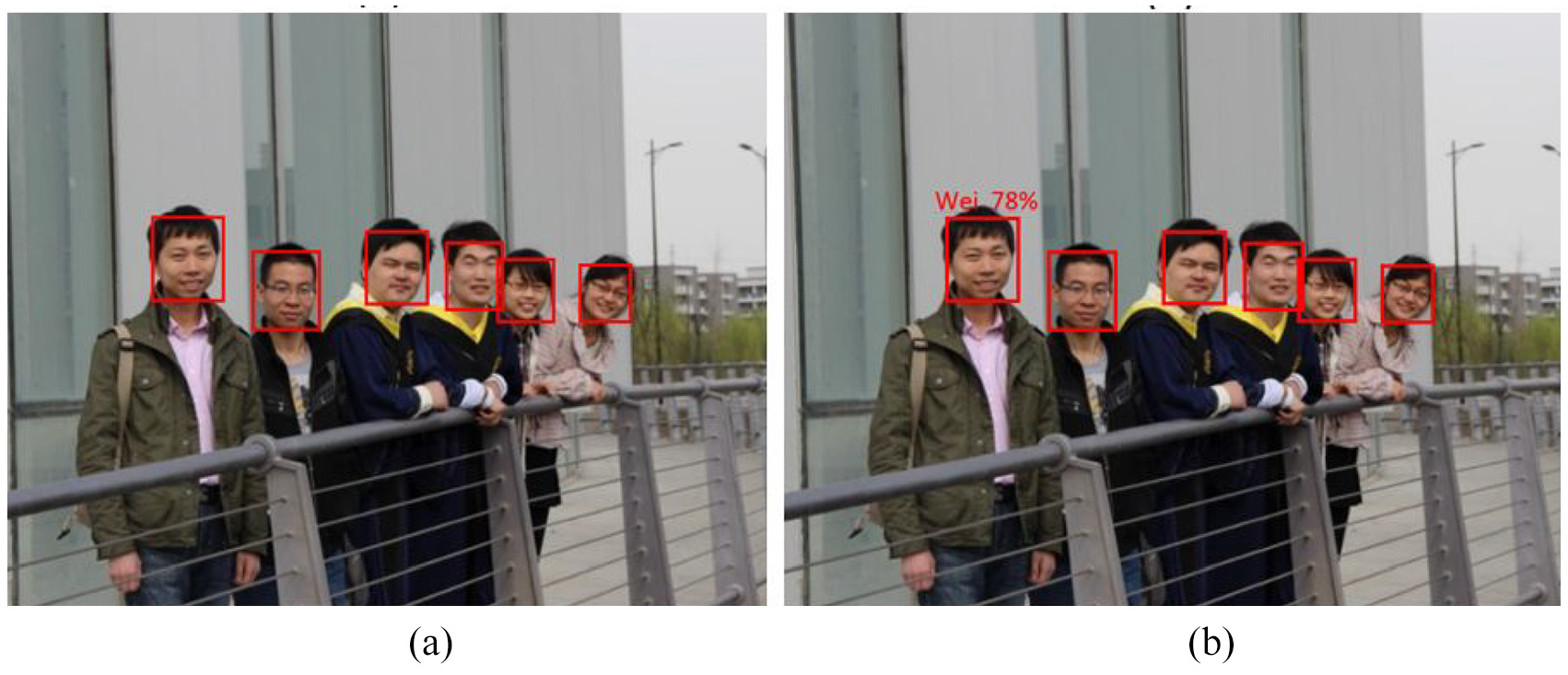

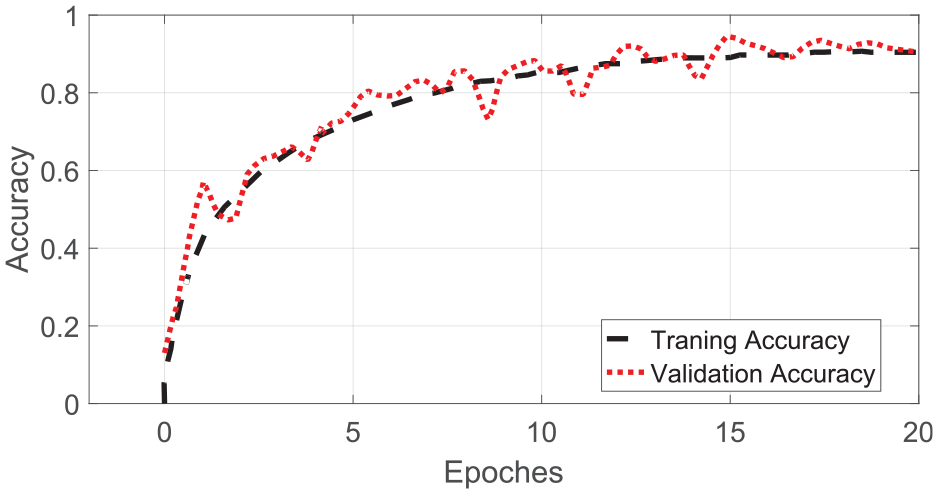

When the algorithm identifies the person in the frame, it tags him with his short name along with his confidence level, as in Figure 12. Out of five faces, the system identifies only one person because the other four people were not in the training dataset. The proposed system can identify multiple people in a single frame, provided that they are in the training dataset. Figure 13(a) and (b) show another sample of the algorithm identifying a person in an image. In this case, the algorithm identifies Wei Wang with a confidence level of 78.2%. Figure 14 represents the accuracy of the trained dataset with respect to the application applied in real time. The number of epochs is set to 20 because increasing it further does not improve the accuracy and requires more computational resources and training time. We also ran the same application with another well-known facial recognition algorithm, Eigenfaces. It uses PCA to extract feature vectors from the designated image. This vector is flattened so that the entire feature vector is normalized. After training with the same number of images and epochs, its accuracy was approximately 82.4%.

Tagged person with the confidence level of 71.36%.

(a) Face detection. (b) Tagged person with the confidence level of 78.2%.

Trained dataset vs validation accuracy.

Conclusion

Face recognition systems belong to the category of image processing applications, and their importance is steadily increasing. These systems are mostly applied in surveillance, personal verification, and other related security activities. The proposed system applies facial recognition by using a pre-trained LBPH face recognizer to identify the person in the acquired frame. A drone-mounted camera captures the live video stream, and an onboard Raspberry Pi module processes the acquired video. The system can identify the desired person with an accuracy of 89.1%. Increasing the size of the dataset further is expected to increase the accuracy as well. The proposed system can also be operated manually through the RF transceiver in order to track the identified person if needed. At the same time, the mounted GPS module allows the concerned authorities to be updated about the culprit’s location. The proposed system can prove highly beneficial for improving existing security systems.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: National Natural Science Funds of China (Grant No. 61901350); Research Funds of Xi’an Aeronautical University (Grant No. 2019KY0208).