Abstract

Postural inaccuracies during prolonged dental tasks have been linked to an upsurge in the prevalence of musculoskeletal disorders in dentists. This makes it imperative to limit awkward postures while working. Existing assessment techniques have shortcomings: self-reporting surveys yield biased results; wearable sensors involve discomfort, expense, and time consumption; and observational methods require expert opinion. Hence, a reliable, inexpensive technology is needed as a substitute to overcome these shortcomings. In this study, a markerless Kinect V2–based system was developed and compared with the conventional imaging technique for real-time postural assessment of seated dental tasks. The study assessed the angle parameters related to the dentist’s bodily movement of the upper arm, lower arm, wrist, neck, and trunk. Ten dentists from the local dental institution volunteered for the study. Dentists were monitored with both techniques while performing real-time dental procedures. The agreement between the techniques was assessed using Bland–Altman plots with 95% limits of agreement, Pearson’s (r1) and concordance (r2) correlation coefficients, mean difference, and percentage error. For conclusive agreement analysis, contingency coefficient, proportion agreement index, Cohen’s kappa, and Mann–Whitney U at 95% confidence interval (CI) were evaluated. Data acquired from both techniques were strongly correlated (r1 and r2 >0.90). Good agreement in Rapid Upper Limb Assessment data using Cohen’s kappa (0.67) on the standard Landis and Koch scale was also observed. Postural analysis of slow-motion tasks like dentistry using the Kinect V2 system proved to be unobtrusive and efficient. Dentists may use it for periodic postural checks. In future, a Kinect V2–based feedback system may be used to develop an assistive technology using predictive algorithms, which may help in reducing the probability of occurrence of work-related musculoskeletal disorders in dentists.

Introduction

According to the American Working Conditions Survey 1 and the European Working Conditions Survey, 2 as a consequence of occupational hazards, 40.5% and 44% of workers were exposed to tiring postures, respectively, whereas 75% and 62% of workers were susceptible to repetitive arm and hand motions. Various studies documented in the literature worldwide have reported a high incidence of musculoskeletal disorders (MSDs) among dental practitioners.3–5 The dental profession demands precision, good visual acuity, psychomotor skills, depth perception, and manual dexterity and concentration accompanied with narrow work area (oral cavity of the patient), resulting in inflexible awkward postures for a longer duration during dental task.6,7

Movement- and posture-related data of the worker are a prerequisite to evaluate the vulnerability to risk factors of MSDs with the subsequent aim of ergonomic intervention. 8 Depending on the measurement method, the available tools are categorized as (1) self-reporting, (2) direct measurements, and (3) observational.9,10 Mostly, self-administered questionnaires, checklists, interviews, and rating scales were used in various studies investigating the prevalence of work-related musculoskeletal disorders (WMSDs) in dentists.11–13 Direct measurement studies analyzed postural and biomechanical data in dentistry by making use of wearable sensors like goniometers, electromyography (EMG) electrodes, or markers for motion capturing in simulated dental environments.14,15 In actual dental work, direct methods may be difficult to implement as wearable devices usually cause discomfort and affect postural activity. Direct methods are unsuited to mass adoption because the sensors involved are intrusive, expensive, and time-consuming to use.16,17 Also, the high-contrast stickers used in marker technology may fall off during actual work.18,19 In dental ergonomics, studies have used observational methods of ergonomic evaluation such as Rapid Upper Limb Assessment (RULA) and Rapid Entire Body Assessment (REBA). These methods involve the judgment of bodily angles using images and video frames and thus require expert opinion for correct estimation of angles. 20

To rule out the drawbacks of the above methods, elbow, shoulder, and wrist (W) joint data from a field survey of videos during shoulder abduction in two dimensions (2D) were automatically picked up by using a graphic algorithm which controlled error within 12°. 21 However, to obtain body joint data in three dimensions (3D), in 2013, skeletal tracking system using Kinect V1 was integrated into the RULA method for 3D motion analysis, 22 and DHM Jack tool and Task Analysis toolkit module was used. 23 Choppin et al. 24 discussed the accuracy of Kinect; the maximum error, proportional error, median root mean square error (RMSE), and systematic bias were reported as 58.2°, 1.15°, 12.6°, and 4.38°, respectively, using iPiSoft algorithm and as 63.1°, 1.19°, 13.8°, and 3.16°, respectively, using NITE algorithm. The fully automatic, cheap, portable, non-intrusive, markerless, and high-frame-rate technology used by Kinect sensors justifies their robust applications and studies, covering healthcare, robotics, physical therapy, performing arts, natural user interface, virtual reality, fall detection and 3D reconstruction. 25 Novel materials, instruments, and technologies have almost revolutionized the contemporary clinical practice and have contributed significantly to the improvised treatment protocols.26–28 K2RULA, a semi-automatic software, was developed, which is capable of detecting awkward postures in real time using Kinect V2. 29 Kinect V2, according to its specifications and studies conducted, outperformed Kinect V1, being able to detect 25 body joints, robust to both natural and unnatural light sources, and more accurate to human body skeletal tracking.30,31 A marker-based study using Kinect V2 as a computational tool was conducted by Weidemann et al., 32 and upper body joint angle inclinations were highly accurate (deviation less than 7.2°) with lower accuracy in neck (N) angle (−31°± 9.1°) and upper body rotation across a longitudinal axis (24.0°± 3.5°). 
A real-time feedback system was developed by Mgbemena et al., 33 which can prompt workers to change an awkward sitting posture as it occurs. Yusuf et al. 34 captured static (lateral hand lift) and dynamic (lower arm (LA)) movements using the Kinect V2 sensor, with respective error rates of 2% and 5%. The above studies establish the feasibility of using Kinect V2 in real-time tasks and its promise as a tool for postural analysis.

Irrespective of the geographical location, dental practitioners are susceptible to WMSDs. 35 This creates a need to assess their postures while working. During long-duration dental tasks, it becomes difficult to analyze the posture of the dentist, and the presence of an ergonomist is unavoidable. Real-time postural evaluation of dentists, which may eliminate the need for ergonomists, remains a serious challenge. Also, ISO standard 1128-3:2007(E) can be considered as the basis to establish the workplace environment. A stable system for any workstation can be developed using depth sensors which monitor the body joint angles of a worker for an early check of exposure to WMSDs. This study aims to examine the use of the Microsoft Kinect V2 sensor in capturing real-time postural data of dental practitioners during their practice hours. The study focuses on the following: (1) examining the body joint angle data of dentists acquired by Kinect V2 while performing the actual dental task and comparing them with the data collected through the conventional imaging technique, and (2) calculating and comparing the final RULA scores from body joint data collected with both the Kinect V2 sensor and the imaging technique.

Methods

Subjects

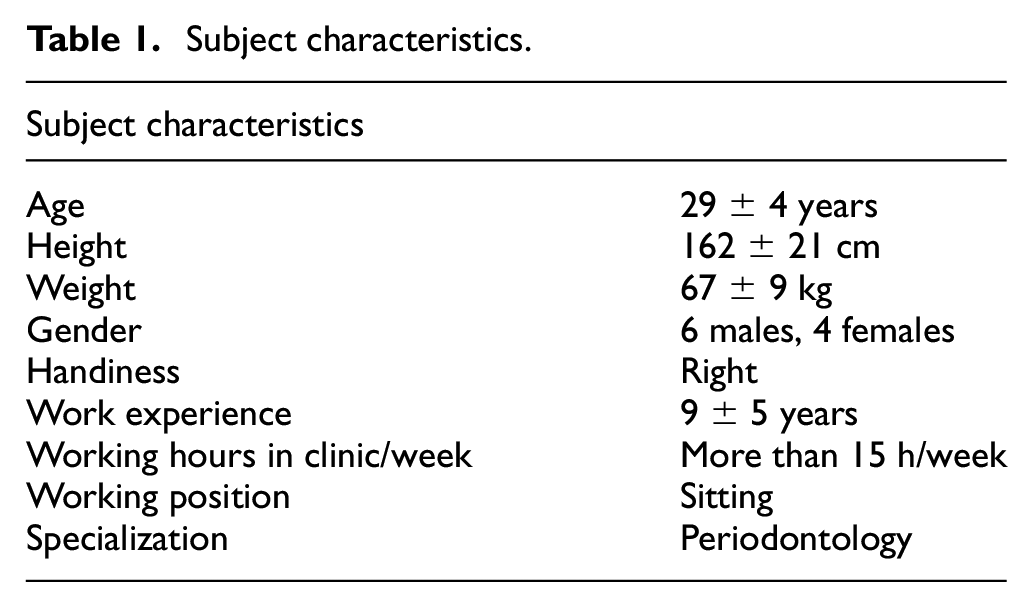

Dental practitioners and hygienists were enlisted from the regional dental institute. Subjects having any prior history of WMSDs or injuries in the hands, wrists, arms, neck, back, or shoulders were excluded from the study. Ethical permission for the study was obtained from Panjab University’s Ethics committee. Ten professional dental workers (six males and four females) voluntarily signed the written informed consent form. To maintain the consistency of data, it was confirmed that all participants were right-hand dominant and performed dental scaling with manual tools as their job. The study protocol and purpose were explained to participants in advance. Subject characteristics are presented in Table 1.

Table 1. Subject characteristics.

System overview

The automatic data capturing software was developed using Microsoft Kinect V2 for Windows on a PC running 64-bit Windows 8.1 with 8 GB RAM and an Intel Core i5 processor at 2.2 GHz. C# (.NET framework) and Microsoft Kinect for Windows SDK 2.0 were used as the programming platform to capture 3D depth images and skeletal joint coordinate data. A high-quality video camera (SONY HDR-XR550) was used to capture image streams simultaneously with the depth image data. The sampling frequency of both the Kinect V2 and the video camera was 30 fps. The SONY HDR-XR550 and Kinect V2 are shown in Figures 1 and 2.

Figure 1. SONY HDR-XR550 video camera.

Figure 2. Microsoft Kinect V2.

Experimental design

Data collection

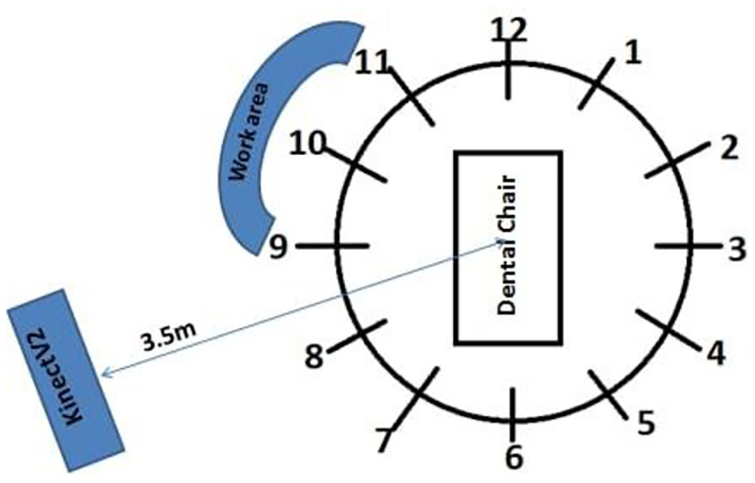

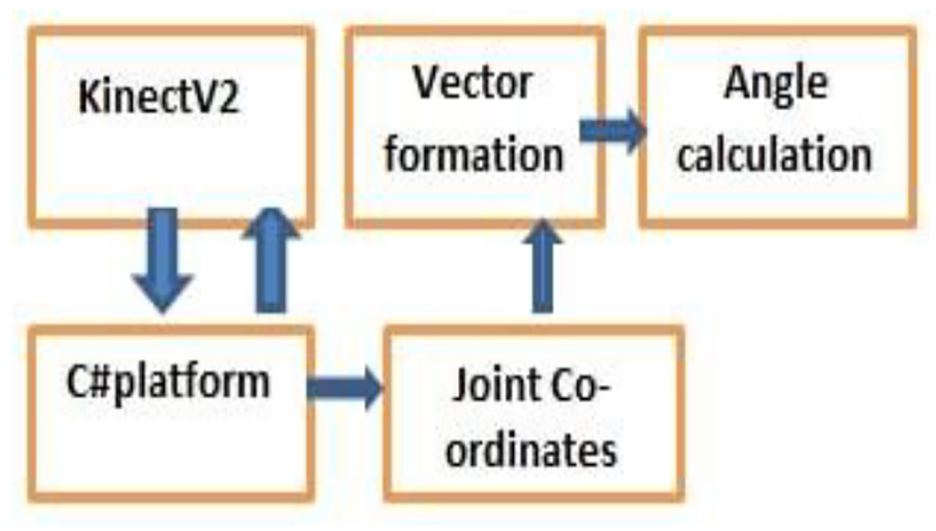

The postural data were collected from a typical dental workstation which included a modern Pelton and Crane chair arrangement. This study involved a manual dental scaling task, and to capture better body joint data (with the least occlusion), the hanging ultrasonic cleaning appliance was adjusted and its nozzles were kept at a distance from the Kinect. No other modification was made to the dental hospital’s workstation. Dentists performed the dental cleaning task in sitting postures as per the protocol of the study, using a dental stool with a backrest. The Kinect sensor was placed radially between the standard 8 and 9 o’clock positions of the dental chair setup at a distance of 3.5 m from the chair center and at a height of 1.2 m from the ground using a tripod. The tilt angle of the Kinect was kept at zero, so the optical axis of the sensor was parallel to the ground. The video camera was placed as close as possible to the Kinect sensor, at approximately the same position and direction (Figure 3). A digital clock was kept within the camera frame in such a way that it did not disturb the dentist. To avoid unnecessary skeleton tracking of the patient’s body, the patient was asked to lie on the dental chair before the Kinect was switched on, because the Kinect sensor then treats the patient and dental chair as a single merged body and does not generate a virtual skeleton for the patient. Dentists were instructed to stand still once in front of the Kinect for 3–4 s just before initiating the scaling task to allow the Kinect to detect human body joint coordinates. The data were collected continuously for 5 min while each dentist performed the real-time dental scaling task, sitting between the standard 9 and 11 o’clock dental chair positions as illustrated in Figure 4. Both the Kinect and the video camera were started simultaneously, and more accurate data synchronization was obtained using a digital clock and stopwatches. The procedure followed in this study is shown as a flowchart in Figure 5. The typical RGB (red/green/blue) image perspective while recording data from the Kinect and video camera orientation is shown in Figure 6.

Figure 3. Imaging and Kinect V2 camera arrangement when both were kept in close proximity to each other.

Figure 4. Kinect positioning and sitting orientation of dental practitioner based on standard dental clock positions.

Figure 5. Data acquisition and calculation of postural parameters.

Figure 6. RGB image frame while data recording.

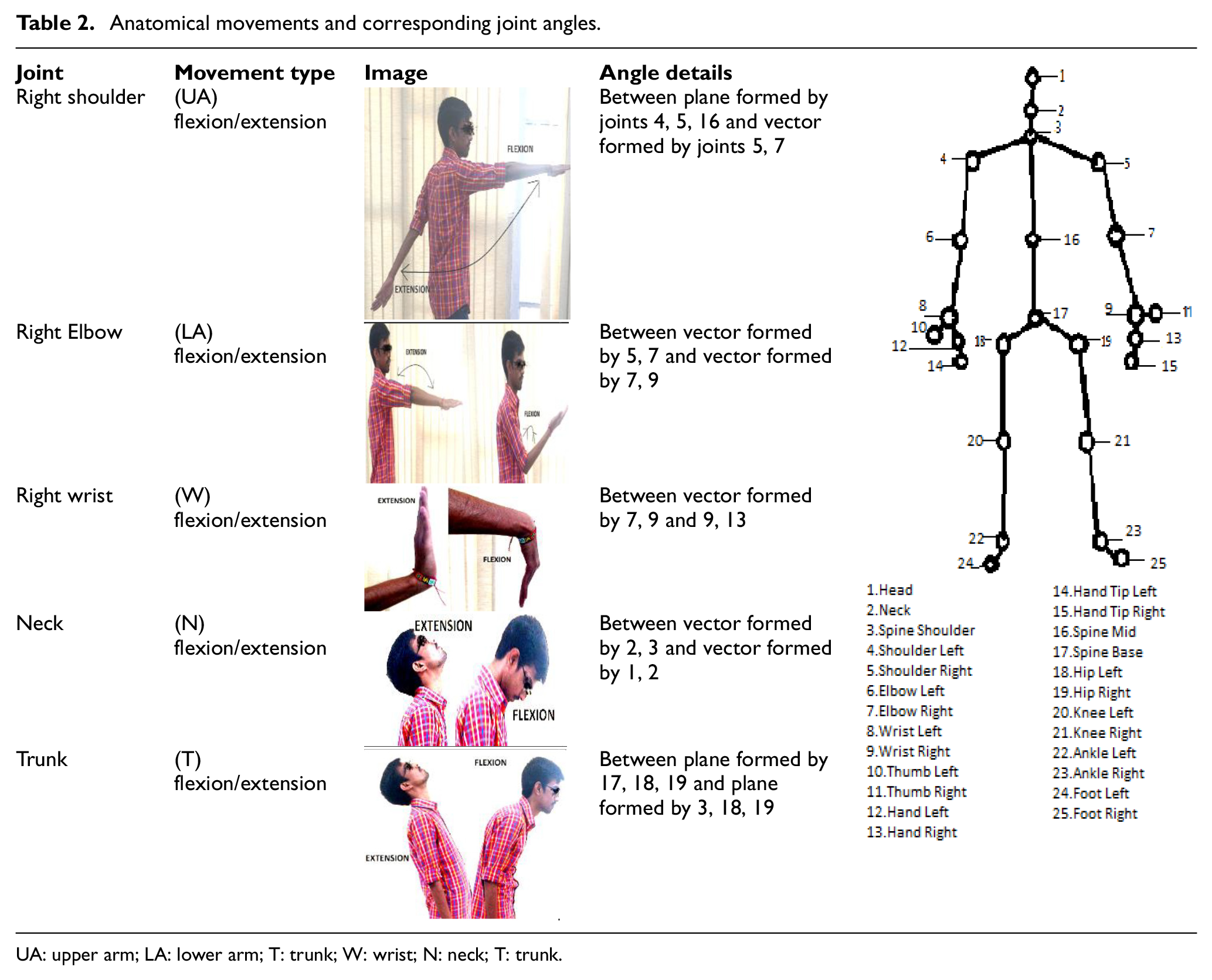

Data processing and analysis

The body skeletal information was transformed into a large set of features which were fed into a software program created in Visual Studio. The recorded joint coordinate data were used to create vectors, from which the final angle values were computed. The C# program computed the five prime body angles (dependent variables) corresponding to the flexion/extension movements at the joints. Upper arm (UA), lower arm (LA), wrist (W), neck (N), and trunk (T) movements were quantified by the joint angles at the right shoulder, right elbow, right wrist, neck, and trunk, respectively (shown in Table 2).

Table 2. Anatomical movements and corresponding joint angles.

UA: upper arm; LA: lower arm; W: wrist; N: neck; T: trunk.

Mathematical expressions used in vector analysis

Vector algebra was used as a basis to develop mathematical equations for the development of software used in this study.

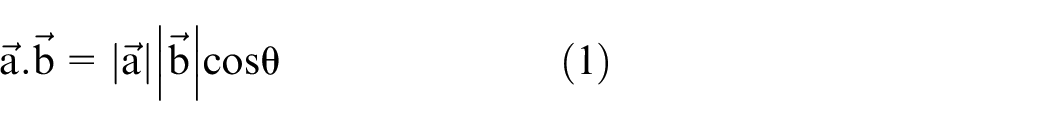

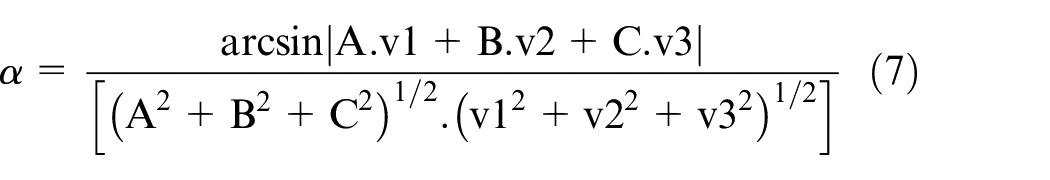

Angle between two vectors

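Vectors in 3D spatial geometry were created using the coordinate points corresponding to the human body joints. Since the vectors formed from joint coordinate data have both magnitude and direction, the dot product of the non-zero vectors corresponding to different body parts (taken as line segments) was used to derive angle values (as shown in equation (1))

$$\cos\theta = \frac{\vec{a}\cdot\vec{b}}{|\vec{a}|\,|\vec{b}|} \tag{1}$$

where $\vec{a}$ and $\vec{b}$ are the non-zero vectors along the two body segments meeting at the joint and $\theta$ is the angle between them.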

Figure 7. Illustration showing angle between two vectors.

The above-mentioned equation was used to calculate angle values at right elbow (for LA flexion/extension), right wrist (for wrist flexion/extension), and neck (for neck flexion/extension) joints.
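As a minimal sketch of the dot-product calculation described above, the following Python snippet computes the angle at a joint from three 3D joint positions. It is written in Python for illustration rather than the C# used in the study; the function names and the sample coordinates are hypothetical and are not taken from the study's software or data.

```python
import math

def angle_between(u, v):
    """Angle in degrees between two non-zero 3D vectors, via the dot product."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("vectors must be non-zero")
    # Clamp to [-1, 1] to guard against floating-point round-off.
    cos_theta = max(-1.0, min(1.0, dot / (norm_u * norm_v)))
    return math.degrees(math.acos(cos_theta))

def joint_angle(proximal, joint, distal):
    """Angle at `joint` between the segments joint->proximal and joint->distal."""
    u = tuple(p - j for p, j in zip(proximal, joint))
    v = tuple(d - j for d, j in zip(distal, joint))
    return angle_between(u, v)

# Hypothetical joint coordinates in metres (illustrative only).
shoulder = (0.0, 0.4, 2.0)
elbow = (0.0, 0.1, 2.0)
wrist = (0.3, 0.1, 2.0)
elbow_angle = joint_angle(shoulder, elbow, wrist)  # segments are perpendicular here
```

Clamping the cosine before calling `acos` is a defensive choice: nearly collinear segments can otherwise produce values marginally outside [-1, 1] through round-off.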

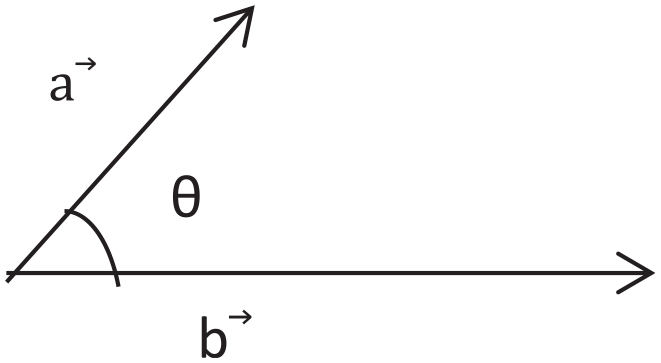

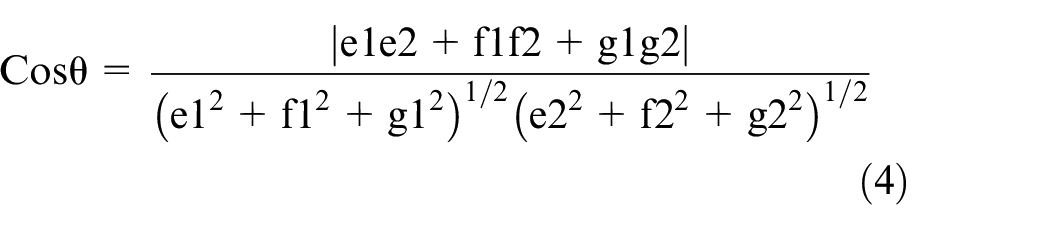

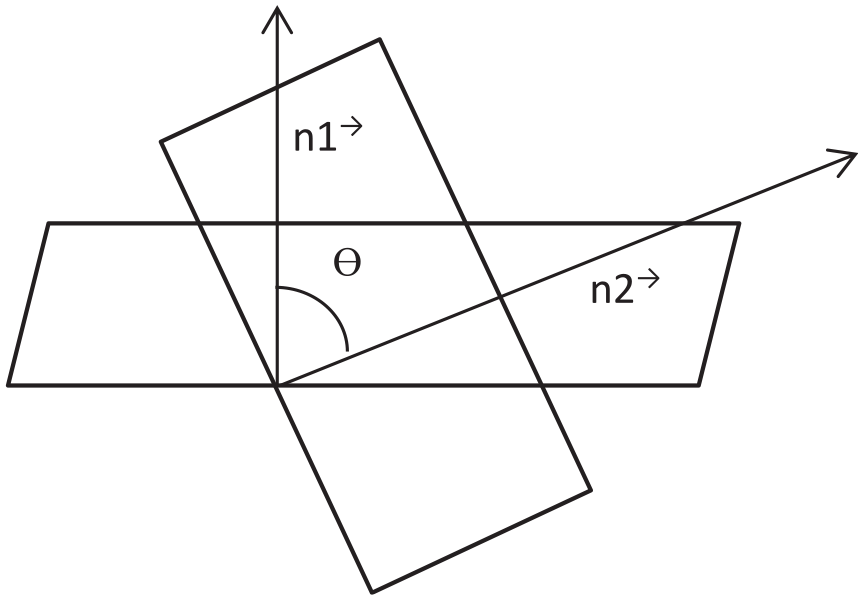

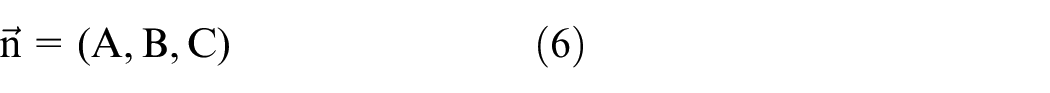

Angle between two planes

A plane is a flat 2D surface that extends infinitely in 3D space. To evaluate the angle between two planes using vector and Cartesian formulation, the angle between their corresponding normals is calculated. The equations of the two planes in Cartesian form are represented in equations (2) and (3)

$$e_1 x + f_1 y + g_1 z + h_1 = 0 \tag{2}$$

$$e_2 x + f_2 y + g_2 z + h_2 = 0 \tag{3}$$

where (e1, f1, g1) and (e2, f2, g2) represent the direction ratios of the normals to the two planes, and h1 and h2 are constants.

Furthermore, the cosine of the angle between the planes can be formulated using equation (4)

$$\cos\theta = \frac{|e_1 e_2 + f_1 f_2 + g_1 g_2|}{\sqrt{e_1^2 + f_1^2 + g_1^2}\,\sqrt{e_2^2 + f_2^2 + g_2^2}} \tag{4}$$

where θ represents the angle between the two planes, shown in Figure 8.

Figure 8. Illustration showing the angle between two planes.

In Figure 8,

The equation illustrated above is used to find out the flexion/extension movements corresponding to trunk and the change in angle parameter values.
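The plane-to-plane calculation can be sketched as follows, assuming each plane is defined by three non-collinear points and that the acute angle is wanted (hence the absolute value of the dot product of the normals). The function names and the example points are hypothetical; which joints the study used to define each plane is not stated here, so none are assumed.

```python
import math

def cross(u, v):
    """Cross product of two 3D vectors."""
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])

def plane_normal(p, q, r):
    """Normal (e, f, g) of the plane through three non-collinear points."""
    u = tuple(b - a for a, b in zip(p, q))
    v = tuple(b - a for a, b in zip(p, r))
    return cross(u, v)

def angle_between_planes(n1, n2):
    """Acute angle in degrees between two planes, from their normals."""
    dot = abs(sum(a * b for a, b in zip(n1, n2)))
    norms = (math.sqrt(sum(a * a for a in n1)) *
             math.sqrt(sum(b * b for b in n2)))
    if norms == 0:
        raise ValueError("normals must be non-zero")
    # min() guards against round-off pushing the ratio above 1.
    return math.degrees(math.acos(min(1.0, dot / norms)))

# Illustrative planes: a horizontal plane and one tilted about the x-axis.
horizontal = plane_normal((0, 0, 0), (1, 0, 0), (0, 1, 0))
tilted = plane_normal((0, 0, 0), (1, 0, 0), (0, 1, 1))
tilt_angle = angle_between_planes(horizontal, tilted)
```

The constants h1 and h2 do not appear in the code because translating a plane does not change its orientation; only the normals matter for the angle.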

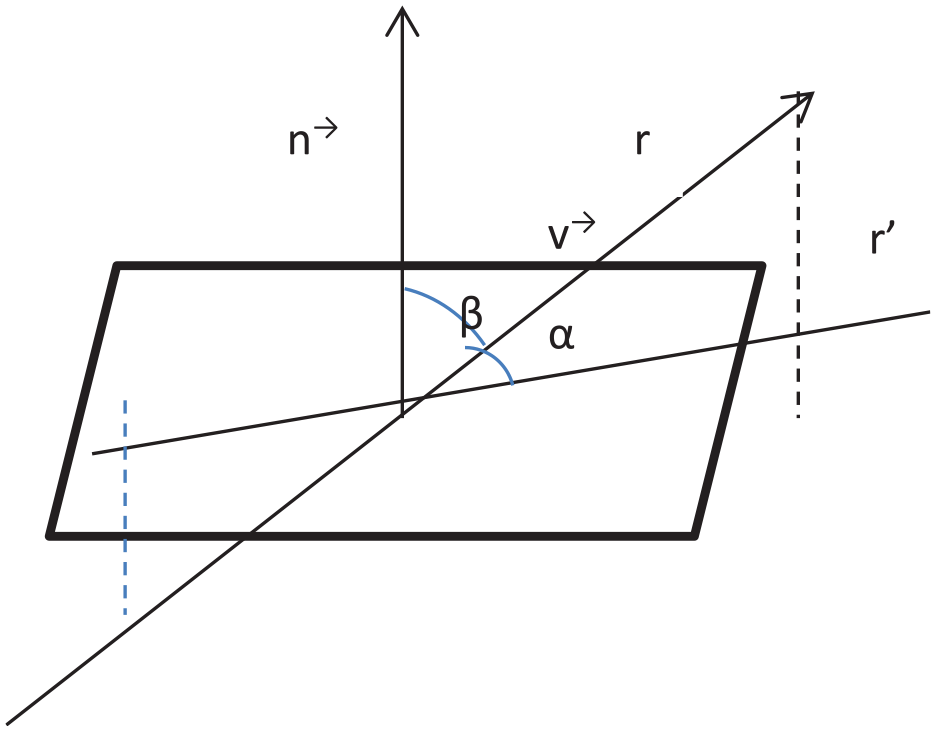

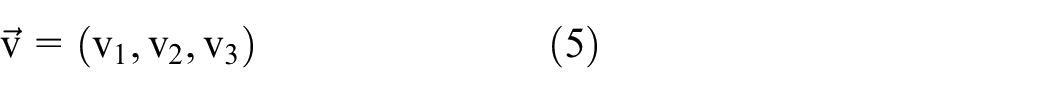

Angle between a plane and a vector

The angle between a plane and a line equals the complement of the acute angle between the normal vector of the plane and the direction vector of the line. The formulation for finding the angle between a plane and a vector is established in equations (5)–(7), as represented in Figure 9.

Figure 9. Illustration showing the angle between a plane and a vector.

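Here, the line vector is

$$\vec{b} = b_1\hat{i} + b_2\hat{j} + b_3\hat{k} \tag{5}$$

and the normal vector to the plane is

$$\vec{n} = n_1\hat{i} + n_2\hat{j} + n_3\hat{k} \tag{6}$$

so that

$$\sin\alpha = \frac{|\vec{b}\cdot\vec{n}|}{|\vec{b}|\,|\vec{n}|} \tag{7}$$

where α represents the angle between the line and the plane.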

The equation illustrated above was used to find out the flexion/extension movements corresponding to the neck and change in angle parameter values.
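A minimal Python sketch of the plane-to-vector calculation is given below. It relies on the complement relationship described above: the sine of the line-plane angle equals the absolute cosine of the angle between the line's direction vector and the plane's normal. The function name and the example vectors are hypothetical.

```python
import math

def angle_line_plane(direction, normal):
    """Angle in degrees between a line (direction vector) and a plane (normal)."""
    dot = abs(sum(a * b for a, b in zip(direction, normal)))
    norms = (math.sqrt(sum(a * a for a in direction)) *
             math.sqrt(sum(b * b for b in normal)))
    if norms == 0:
        raise ValueError("direction and normal must be non-zero")
    # asin gives the complement of the angle between direction and normal.
    return math.degrees(math.asin(min(1.0, dot / norms)))

# Illustrative case: a horizontal plane (normal along z) and three lines.
normal = (0.0, 0.0, 1.0)
in_plane = angle_line_plane((1.0, 0.0, 0.0), normal)       # line lies in the plane
oblique = angle_line_plane((1.0, 0.0, 1.0), normal)        # line inclined to the plane
perpendicular = angle_line_plane((0.0, 0.0, 2.0), normal)  # line along the normal
```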

The angle values corresponding to 30 relevant image frames from each cycle of the dental scaling work were extracted. The RULA method was applied for all the right side physiological features for both Kinect and image readings. Relevant values from both Kinect and conventional readings were considered after removing outlier values.
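To illustrate how joint angles feed into RULA, the sketch below maps flexion angles to base segment scores for three of the five segments, following the thresholds of the commonly published RULA worksheet. This is an assumption-laden illustration, not the study's scoring code: the function names are hypothetical, the handling of exact boundary values is a choice made here, and the worksheet's adjustment scores (abduction, shoulder raise, twisting, side bending) and the lookup tables that produce the final score are omitted.

```python
def upper_arm_score(flexion_deg):
    """Base RULA upper-arm score; negative angles denote extension."""
    if -20 <= flexion_deg <= 20:
        return 1
    if flexion_deg < -20 or flexion_deg <= 45:
        return 2  # more than 20 deg extension, or 20-45 deg flexion
    if flexion_deg <= 90:
        return 3
    return 4

def lower_arm_score(flexion_deg):
    """Base RULA lower-arm score from elbow flexion."""
    return 1 if 60 <= flexion_deg <= 100 else 2

def neck_score(flexion_deg):
    """Base RULA neck score; negative angles denote extension."""
    if flexion_deg < 0:
        return 4
    if flexion_deg <= 10:
        return 1
    if flexion_deg <= 20:
        return 2
    return 3

# Hypothetical angles for one frame (illustrative only).
frame_scores = (upper_arm_score(30), lower_arm_score(80), neck_score(25))
```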

The following hypotheses were selected to assess the agreement between the results of Kinect and conventional imaging techniques.

Hypothesis 1: Kinect V2 data values are in agreement with data collected with the conventional method.

Hypothesis 2: The final RULA score calculated using Kinect V2 data is in agreement with the RULA score calculated using conventional techniques.

IBM SPSS Statistics (version 20) software was used for statistical analysis of data.

To evaluate the agreement between Kinect and conventional imaging techniques, the Bland–Altman mean difference (bias) and 95% limits of agreement (LOA), defined as bias ± 1.96 SD, were plotted. The mean, mean difference, and standard deviation (SD) of the mean difference for each body angle parameter were recorded for both techniques. To assess the strength of association between angle parameters recorded using the Kinect and conventional imaging techniques, Pearson’s correlation coefficients (r1) were evaluated, and the corresponding p-values were used to assess statistical significance. Also, to evaluate the level of agreement between angle parameters recorded using both techniques, concordance correlation coefficients (r2) were evaluated. Percentage errors (PEs) were evaluated to assess the percentage difference between angle values for all body joints derived from both techniques.
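The agreement statistics described above can be sketched in plain Python as follows. The study itself used SPSS; this is only an illustration. The concordance coefficient is assumed here to be Lin's concordance correlation coefficient, the function names are hypothetical, and the sample angle values are invented for the example. Percentage error is left out because its exact formula is not given in the text.

```python
import math
from statistics import mean, stdev

def bland_altman(x, y):
    """Return (bias, lower LOA, upper LOA) with LOA = bias +/- 1.96 SD."""
    diffs = [a - b for a, b in zip(x, y)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample SD of the paired differences
    return bias, bias - 1.96 * sd, bias + 1.96 * sd

def pearson_r(x, y):
    """Pearson's product-moment correlation coefficient."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def concordance_ccc(x, y):
    """Lin's concordance correlation coefficient (population moments)."""
    n = len(x)
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y)) / n
    sxx = sum((a - mx) ** 2 for a in x) / n
    syy = sum((b - my) ** 2 for b in y) / n
    return 2 * sxy / (sxx + syy + (mx - my) ** 2)

# Invented example angles in degrees (not study data).
kinect = [10, 20, 30, 40]
imaging = [12, 21, 33, 42]
bias, loa_low, loa_high = bland_altman(kinect, imaging)
r1 = pearson_r(kinect, imaging)
r2 = concordance_ccc(kinect, imaging)
```

Unlike Pearson's coefficient, the concordance coefficient is penalized by any systematic offset between the two methods, so it never exceeds the absolute Pearson value for the same data.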

For more concrete and conclusive agreement analysis between two sets of calculated RULA values (Kinect and imaging), non-parametric statistical tests were performed. Differences in medians of RULA scores between two techniques were assessed using Mann–Whitney U-test at 95% CI to test the null hypothesis: no significant difference exists in the RULA values between Kinect and imaging techniques. To assess the strength of association between both systems based on final RULA scores, contingency coefficient (C) was evaluated using 2D contingency tables. Proportion agreement index (Po) was calculated to check the proportion of cases for which RULA scores for both techniques agree. For the sample-to-sample inter-rater agreement, Cohen’s kappa coefficient (k) 36 was calculated as a quality index using ordinal RULA scores for both recording techniques.
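The categorical agreement indices for RULA scores can be sketched as below. This assumes unweighted Cohen's kappa and Pearson's contingency coefficient, C = sqrt(chi-square / (chi-square + N)); whether the study used these exact variants is not stated. The function name and the example scores are hypothetical.

```python
import math
from collections import Counter

def agreement_indices(a, b):
    """Return (Po, Cohen's kappa, contingency coefficient C) for paired scores."""
    n = len(a)
    categories = sorted(set(a) | set(b))
    pairs = Counter(zip(a, b))      # cell counts of the contingency table
    row, col = Counter(a), Counter(b)

    # Observed proportion of exact agreement (diagonal of the table).
    po = sum(pairs[(c, c)] for c in categories) / n
    # Agreement expected by chance from the marginal totals.
    p_chance = sum(row[c] * col[c] for c in categories) / n ** 2
    if p_chance == 1:
        raise ValueError("kappa is undefined when only one category occurs")
    kappa = (po - p_chance) / (1 - p_chance)

    # Pearson chi-square statistic over cells with non-zero expected count.
    chi2 = 0.0
    for r in categories:
        for c in categories:
            expected = row[r] * col[c] / n
            if expected > 0:
                chi2 += (pairs[(r, c)] - expected) ** 2 / expected
    contingency = math.sqrt(chi2 / (chi2 + n))
    return po, kappa, contingency

# Invented RULA scores for eight frames (not study data).
kinect_rula = [3, 3, 4, 4, 5, 5, 3, 4]
imaging_rula = [3, 3, 4, 5, 5, 5, 3, 3]
po, kappa, contingency = agreement_indices(kinect_rula, imaging_rula)
```

In this invented example the two score lists agree on six of eight frames, so Po is 0.75, while kappa is lower because part of that agreement would be expected by chance.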

Results

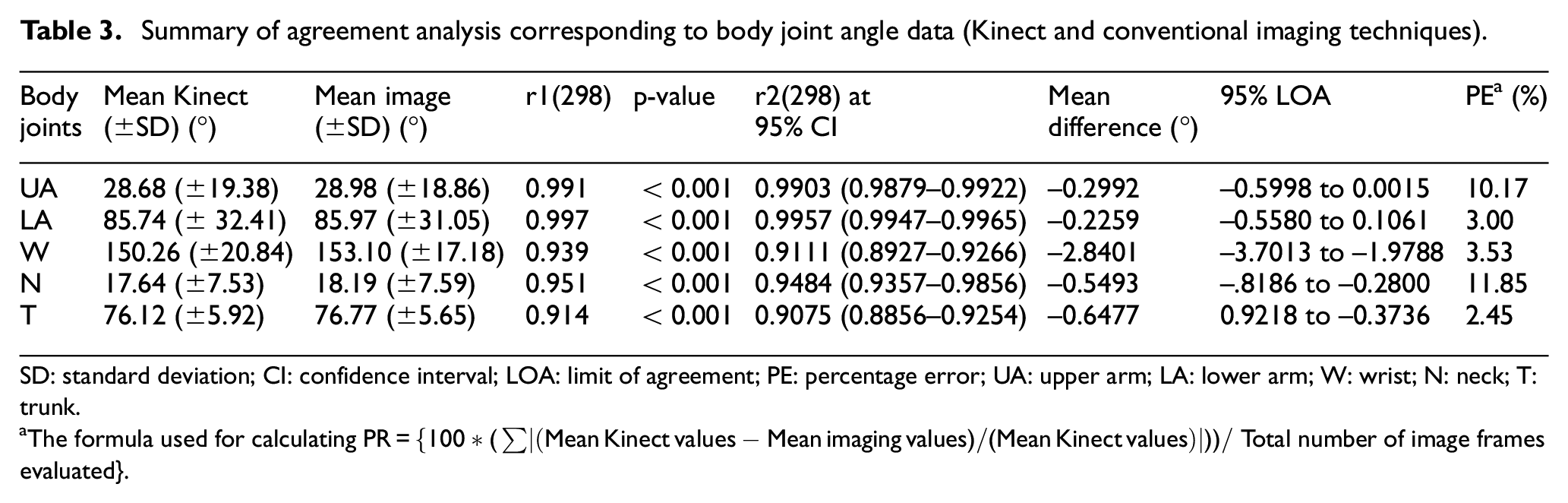

Agreement analysis between Kinect and conventional techniques was performed, and the resulting parameters such as mean (±SD) values corresponding to Kinect and imaging, mean difference (°), PE, Pearson’s correlation coefficient (r1), concordance correlation coefficient (r2), and 95% limits of agreement for body joint angle data from Bland–Altman plot are summarized in Table 3.

Table 3. Summary of agreement analysis corresponding to body joint angle data (Kinect and conventional imaging techniques).

SD: standard deviation; CI: confidence interval; LOA: limit of agreement; PE: percentage error; UA: upper arm; LA: lower arm; W: wrist; N: neck; T: trunk.

The formula used for calculating

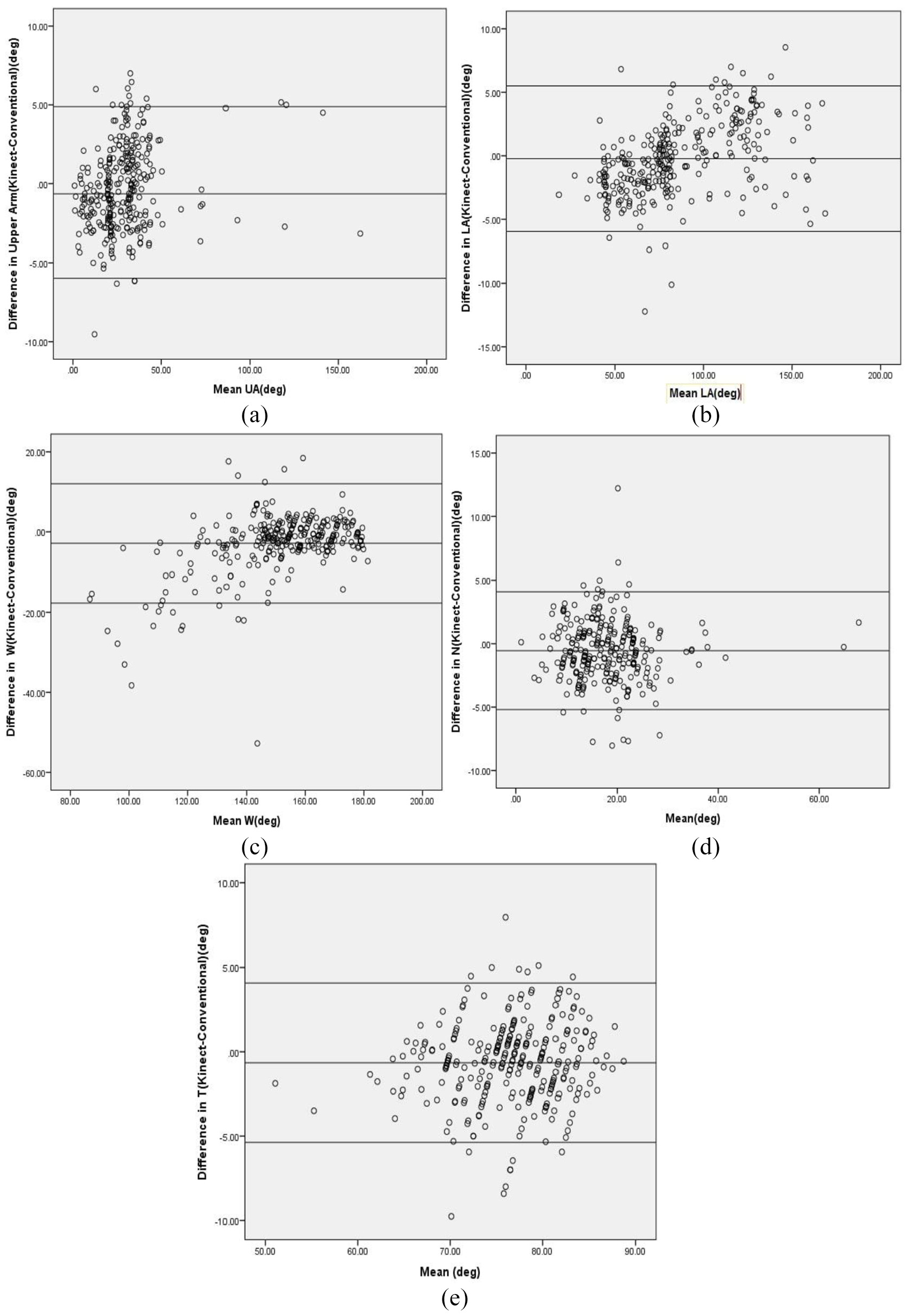

The graphs in Figure 10(a)–(e) illustrate the scatter plots of each respective body joint angle data using Kinect and conventional methods (the differences along the vertical axis against the mean values along the horizontal axis). Mean difference or bias and 95% LOA values are represented as horizontal lines on each graph. Bland–Altman test indicated that the data points were evenly and closely distributed across the horizontal bias line. For UA, LA, neck, and trunk, more than 95% of the method difference values were spotted within the defined LOA range, whereas in wrist, the presence of method difference values within LOA range was less than 95%. Also, comparatively higher bias value (systematic error=−2.84) was recorded in the case of wrist. In the case of LA, proportional bias was observed, as Kinect underestimated the LA angle values when the mean angle values were low and vice versa.

Figure 10. Bland–Altman plot with limits of agreement for postural parameters. The difference in angle reading between the two techniques (Kinect-Conventional imaging) is plotted on the y-axis and the mean value using both techniques is plotted on the x-axis: (a) Bland–Altman plot for angle corresponding to upper arm (UA), (b) Bland–Altman plot for angle corresponding to lower arm (LA), (c) Bland–Altman plot for angle corresponding to wrist (W), (d) Bland–Altman plot for angle corresponding to neck (N), and (e) Bland–Altman plot for angle corresponding to trunk (T).

Body angle data (for LA, UA, wrist, neck, and trunk) from Kinect and imaging techniques yielded high Pearson’s (r1(298) > 0.90, p < 0.001) and concordance (r2(298) > 0.90) correlation coefficients, indicating a significant positive correlation between the two techniques.

For LA, correlation coefficients had the highest values (r1(298) = 0.997, p < 0.001) and (r2(298) = 0.995) with lowest PE (3%) and mean difference (0.225°) values, inferring more association and lower differences between Kinect and imaging techniques, therefore indicating the closeness in values obtained from both techniques.

For UA and neck, correlation coefficient values were optimally higher (r1(298) = 0.991, p < 0.001), (r2(298) = 0.990) and (r1(298) = 0.951, p < 0.001), (r2(298) = 0.948), respectively, with relatively higher PE values (10.75% and 11.85%), showing Kinect data values have a good association with conventional values but with more error differences in the values.

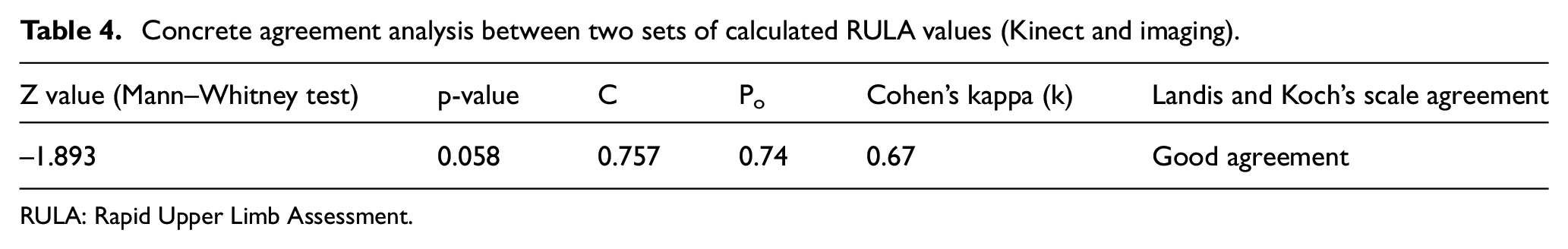

The concrete agreement analysis between the two sets of calculated RULA values (Kinect and imaging) is summarized in Table 4. The Z value (Mann–Whitney test at 95% CI), contingency coefficient (C), proportion agreement index (Po), Cohen’s kappa coefficient (k) for sample-to-sample inter-rater agreement, and agreement level on Landis and Koch’s scale for RULA scores were evaluated from the angle data of both techniques (Kinect and imaging). The results of the Mann–Whitney test were statistically non-significant (z = −1.893, p > 0.05). Therefore, the null hypothesis was not rejected, indicating no significant difference between the RULA scores from the two techniques. The value of the contingency coefficient (C = 0.757) shows a fairly high association between the two RULA score groups. The proportion agreement index (Po = 0.74) indicated that the two techniques agreed on 74% of the RULA values. Consistency among RULA values from the Kinect and imaging techniques was assessed by calculating Cohen’s kappa index, k = 0.67 (p < 0.001), 95% CI = 0.504–0.848. The value of the kappa index was evaluated on Landis and Koch’s scale, resulting in “good” agreement between the techniques. These results support Hypothesis 2: the final RULA score calculated using Kinect V2 data is in agreement with the RULA score calculated using conventional techniques.

Table 4. Concrete agreement analysis between two sets of calculated RULA values (Kinect and imaging).

RULA: Rapid Upper Limb Assessment

The cross-validation of results obtained from Kinect V2 and conventional techniques pointed out that the values derived from Kinect V2 were close to those derived using conventional techniques.

Discussion

This section discusses the results reported in this study and brings to light some limitations.

Main contributions

This study aimed at better understanding the body kinematics of dentist while performing the dental procedure and examining the feasibility of Kinect V2 in real-time postural analysis for slow-motion tasks. To the authors’ knowledge, this investigation was the first to use Kinect as a markerless technique to assess the dental practice ergonomically. In this study, considerable validity of recorded spatial parameters was observed between the values of joint angle data from markerless and imaging technique.

The accuracy of the imaging method is limited when capturing, in 2D, orientations that require depth information (such as at the wrist). The wide LOA in the wrist plot may be interpreted as a large measurement gap between the values computed by the two techniques, which may also be stated as lower correlation between the methods in calculating wrist data. The presence of occlusions while capturing the wrist data in certain postures using Kinect might also be responsible for inaccuracies. During the dental procedure, it was observed that the hand was dorsiflexed 90% of the time, which made it difficult to capture wrist joint data accurately. Also, the relatively high systematic error in the wrist produced a systematic difference between the results of the two methods. In the case of the wrist, observation of the Bland–Altman plot also suggested overestimation of joint angle values (more data points lay above the bias line). In general, no over- or underestimation of joint angle data points was observed in most of the data (w.r.t. UA, neck, and trunk). It is plausible that keeping the optical axis of the Kinect sensor parallel to the ground contributed to the precise capture of the body joint angles, namely, UA, neck, and trunk. This is in contrast to the study where overestimated body angle data were obtained, 37 which may be due to the adjustment of the Kinect’s tilt angle to capture the body joint skeleton. Nonhomogeneity of Kinect measurement error within the measurement volume was reported in a separate study, supporting the view that measurement errors along all three coordinate axes of the Kinect may be responsible for the results with proportional error. 38 The presence of significant outlier values in the case of the wrist may also be responsible for the lower association between the two methods. 39 A small measurement gap between the two methods was observed for UA, LA, neck, and trunk, indicating strong correlation between the values computed by the two techniques. In LA, the Kinect angle values were lower than the reference imaging values, which may be due to the inability of the Kinect system to compute true values when the joint data points were very close to each other, with the techniques not agreeing throughout the range of measurements.

As noticed, the values of correlation coefficients for body joint angle data from Kinect and imaging techniques ranged on the higher side (near to 1) unlike the study by other authors where the values of correlation coefficients ranged from 0.04 to 0.77. 40 This may be due to the less dynamic nature of dental work procedure when compared with the highly dynamic tasks which involve walking and jogging.

In this study, the PE values for neck and UA ranged on the higher side (10.75%–11.85%). Apart from recorded flexion and extension movements in neck and UA, the coexistence of other anatomical movements (lateral flexion or torsion in neck and abduction or adduction in the UA) in certain postures may be responsible for the large deviation of Kinect values from the imaging values.

The Kinect method has proved its potential for the precise spatial data recording of non-static tasks. In this study, spatial joint angle parameters involving slow-motion dynamic dental task had an overall PE (2.45%–11.85%) comparable with an overall PE (4.02%–7.73%) from spatial gait parameters in another study, 41 which involved the walking trials by backpack-carrying school children. The conventional imaging technique was considered as the gold standard for both this study and the study involving walking trials.

The value of the contingency coefficient (C) in this study is lower than the value obtained in an investigation by other authors, 29 and a plausible influencing factor is the real-time data collection in this case study. The authors suspect that occluded data during real-time ergonomic assessment were the determining factor for Cohen’s kappa reaching a good, rather than excellent, agreement level on the standard Landis and Koch scale.

The results of this study suggest that Kinect V2 may be considered for detecting reasonably accurate body angles, at least in less dynamically active but dexterity-demanding dental procedures. Furthermore, the body angles may provide information about awkward postures in real time, and in the long run, this may be useful in preventing MSDs in dentists. This study motivates the development of a real-time posture correction feedback system that is operationally compatible with dentists’ use and reduces the need for an ergonomic expert in routine postural assessment. In dental studies, ergonomic aspects of dentistry are taught theoretically in coursework, but currently there is no assessment tool to examine postural correctness in novice dentists such as students and interns. Therefore, a warning-cum-posture assessment system may be developed to serve as a teaching aid in dental colleges, with the aim of habituating young dentists to recommended working postures.

Limitations

Due to methodological standardization and some acceptable shortcomings of the Kinect device, the results of this study were constrained. The study was conducted in a controlled lighting environment where no other person or object was allowed between the Kinect and the dentist, which may have avoided further occlusions in the data. Furthermore, dentists and patients were made to follow the protocol of the study so that data could be captured in a standardized format, which may be difficult to follow during routine dental procedures. To avoid disturbing the dentists’ code of conduct, readings were taken while the dentists were in their normal daily wear and lab coats, which may have introduced some errors in angle calculation as Kinect scans the whole body surface for joint positioning. The role of working positions and isometric spinal loads in determining spine kinematics was not taken into consideration. The data recorded in this study did not contain any overhead or extremely awkward dentist postures, so appropriate prevalidation of Kinect is necessary for such data collection.

Conclusion

This article discussed the use of the Kinect V2 sensor in detecting postural data during actual dental procedures and the variation of its results from conventional imaging methods. Considering that postural variation in dental work is neither frequent nor quick, the proposed system appears to be an effective option for slow-motion real-time task assessment. Kinect data collection and assessment appeared to be quicker and easier than conventional methods. Despite the reported limitations, the results of this study are promising enough to support the Kinect method as a viable option for ergonomic evaluation of dental workstations. Hence, by keeping a check on dentists’ postural inaccuracies during work using the user-friendly and contemporary Kinect device, the long-term effect on biomechanical aspects can be limited.

Footnotes

Acknowledgements

The authors wish to thank the dental practitioners who volunteered and participated in the study.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.