I. How Do You Know What the Temperature Is?

A. Outline

Every day, at millions of locations around the world, people measure the temperature. Many people might wish for a new ‘device’ that would make the measurement easier, quicker or overcome a problem with their existing measurement technique. But very few people – probably actually none – complain that they cannot achieve their goals because of shortcomings with the definition of the units of temperature – the kelvin and the degree Celsius.

But the process leading to a redefinition of the units of temperature measurement is underway, 1 and a redefinition will almost certainly take place – probably in 2018. This article is about why, even though you probably will not notice, this is a good idea.

B. The two purposes of temperature measurement

There are two key requirements of a system of temperature measurement: reproducibility and precision. Thus, for example, if a certain process is found to take place in steel at a particular temperature, then good reproducibility of measurement enables the process to be replicated worldwide over many years. In addition to reproducibility, if a system of measurement enables small changes to be resolved precisely, then this will allow measurements made in different places or at different times to be meaningfully compared at the level of detail required by a user.

Thus, it is the combination of precision and reproducibility of temperature measurement that empowers technology. Accuracy of measurement is – perhaps surprisingly – less important in most cases. By accuracy, we mean the closeness between the number we associate with a particular environment – say T* – and the thermodynamic temperature, T, as used in physics and chemistry.

One extreme example of the separation of accuracy from reproducibility can be observed in the United Kingdom where domestic gas ovens do not have a temperature setting expressed in a conventional unit but instead use a ‘Gas Mark’ scale. The scale runs from ¼ to 9 and is highly non-linear. But it does enable reproducible setting of temperature. This example shows that even an arbitrary temperature scale that bears no relation to thermodynamic temperature – the ‘gas mark’ indications are merely historical tokens – can enable a wide class of activities to take place.

However, accuracy of temperature measurement is important in several scientific fields because there is a fundamental physical link between the temperature of a body and its internal energy. If the temperature measurement system produced estimates T* that were precise and reproducible, but significantly different from thermodynamic temperature, T, then the result would be reflected in apparent inconsistencies in physical laws and apparent anomalies in physical and chemical reference data. For example, quantities that varied linearly with thermodynamic temperature might be found to vary non-linearly with T*.

C. The International Temperature Scale of 1990

The International Temperature Scale of 1990 (ITS-90)2,3 represents a practical solution to the need for a system of temperature measurement which is highly reproducible, extremely precise and sufficiently accurate for all practical purposes. Importantly, it enables all this to be achieved reasonably quickly at modest cost. A thermometer calibrated according to ITS-90 produces a temperature estimate called T90, and the procedure has been constructed so that T90 is both extremely reproducible, typically at the level of 0.001 °C, and also close to T, typically within 0.01 °C.

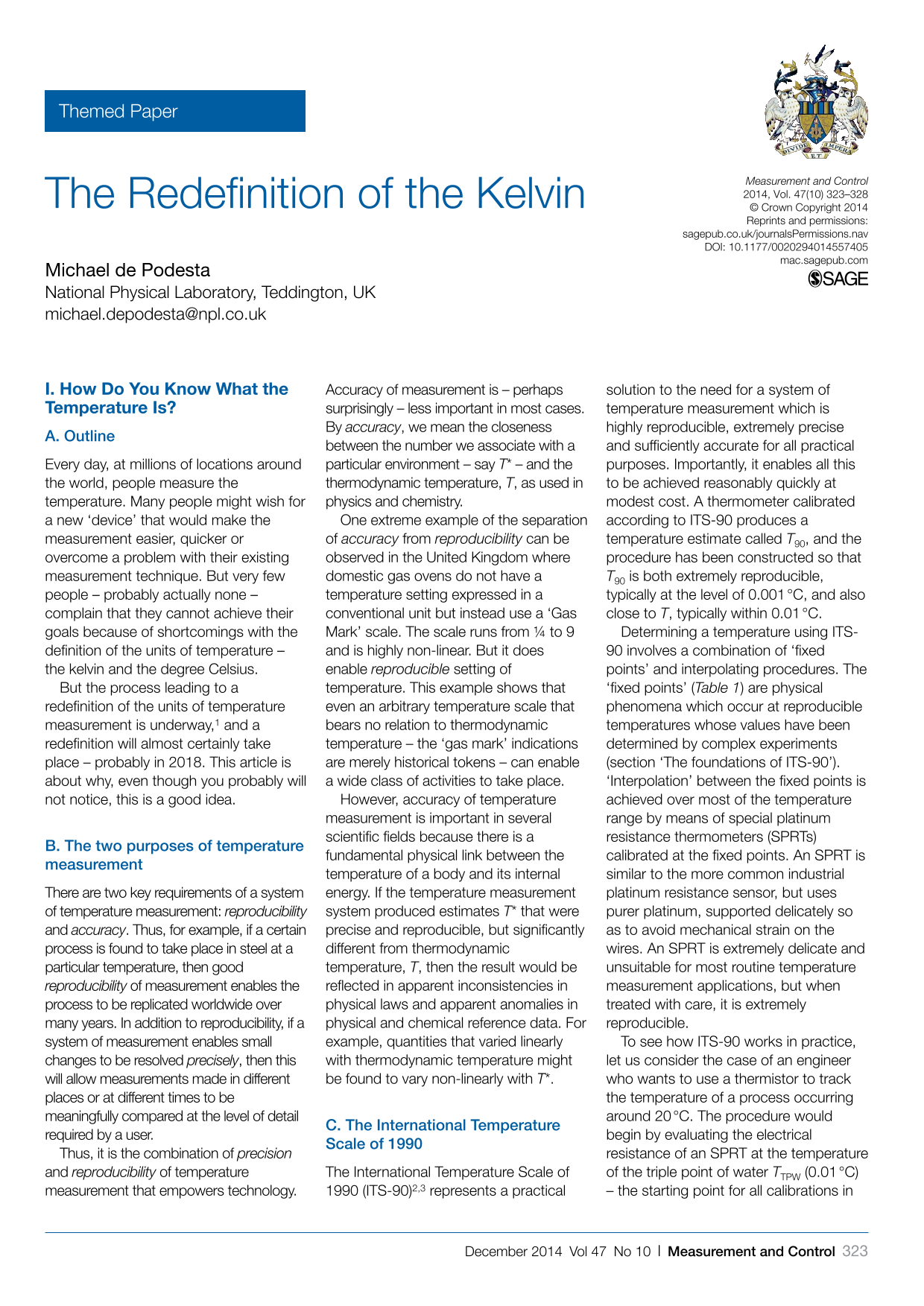

Determining a temperature using ITS-90 involves a combination of ‘fixed points’ and interpolating procedures. The ‘fixed points’ (Table 1) are physical phenomena which occur at reproducible temperatures whose values have been determined by complex experiments (section ‘The foundations of ITS-90’). ‘Interpolation’ between the fixed points is achieved over most of the temperature range by means of standard platinum resistance thermometers (SPRTs) calibrated at the fixed points. An SPRT is similar to the more common industrial platinum resistance sensor, but uses purer platinum, supported delicately so as to avoid mechanical strain on the wires. An SPRT is extremely delicate and unsuitable for most routine temperature measurement applications, but when treated with care, it is extremely reproducible.

Table 1. The fixed points of the International Temperature Scale of 1990 (ITS-90)

The freezing and melting points are at one standard atmosphere pressure. ‘Triple points’ refer to the unique temperature at which the three phases (solid, liquid and vapour) coexist at equilibrium.

To see how ITS-90 works in practice, let us consider the case of an engineer who wants to use a thermistor to track the temperature of a process occurring around 20 °C. The procedure would begin by evaluating the electrical resistance of an SPRT at the temperature of the triple point of water TTPW (0.01 °C) – the starting point for all calibrations in ITS-90. The electrical resistance would then be measured at the melting temperature of gallium TGa (29.7646 °C) and the ratio of these resistances (W) would be evaluated as WGa = RGa/RTPW. The melting temperature of gallium is the appropriate fixed point for the calibration because it is just above the maximum temperature that the engineer requires.

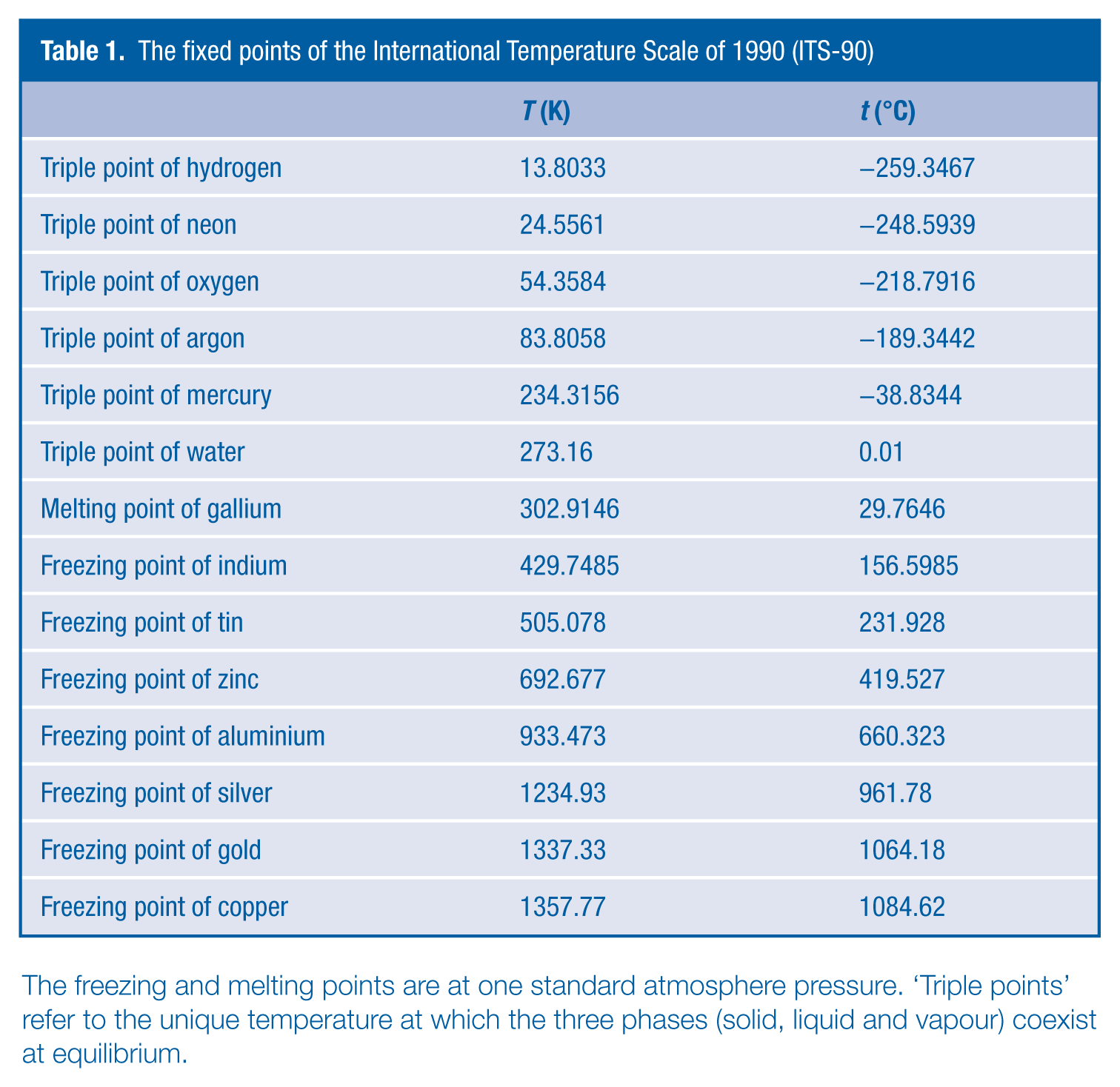

If WGa is sufficiently high (an indication of the purity of the platinum), then the SPRT would be considered of sufficient quality for the ITS-90 procedure to be valid. On the basis of extensive studies of the resistivity of pure platinum, the ‘ideal’ variation WREF(T) has been estimated, and a polynomial function allows a user to work out T90 for any value of W (Figure 1).

Figure 1. The electrical resistivity of platinum versus temperature plotted as a ratio to the resistivity at the triple point of water, WREF(T)

However, experimentally the measured resistance ratio WGa is unlikely to exactly match the reference ratio. So based on the small differences, the ITS-90 specifies a smooth function that describes how the particular SPRT differs from the ‘ideal’ SPRT at all temperatures between the points at which it has been calibrated. Once the engineer has a calibrated SPRT, more practical sensors (such as a thermocouple or a thermistor) can be compared with the SPRT with only a modest additional transfer uncertainty.
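The gallium calibration described above can be sketched numerically. This is a minimal illustration, not the full ITS-90 procedure: the resistance readings are hypothetical, the function names are invented for this example, and the two constants (the reference ratio at the gallium point and the minimum acceptable WGa) are quoted from the published ITS-90 text as best recalled and should be checked against it. The sketch assumes that, for the sub-range between the triple point of water and the gallium point, the 'smooth function' takes the single-coefficient form W − WREF = a(W − 1). The final step, converting the reference ratio to T90 with the ITS-90 polynomial, is deliberately omitted.

```python
# Hypothetical SPRT readings in ohms (illustrative values, not real calibration data).
R_TPW = 25.0000   # resistance at the triple point of water (0.01 degC)
R_GA = 27.9535    # resistance at the melting point of gallium (29.7646 degC)

# Constants quoted from the ITS-90 text as best recalled; verify before relying on them.
W_REF_GA = 1.11813889   # reference ratio W_REF at the gallium point
W_GA_MIN = 1.11807      # minimum W(Ga) for an acceptable SPRT


def resistance_ratio(r_t, r_tpw):
    """Ratio W of the resistance at temperature T to that at the triple point of water."""
    return r_t / r_tpw


def deviation_coefficient(w_ga):
    """Coefficient a in W - W_REF = a * (W - 1), fixed by the gallium calibration."""
    return (w_ga - W_REF_GA) / (w_ga - 1.0)


def reference_ratio(w, a):
    """Convert a measured ratio W into the 'ideal' reference ratio W_REF."""
    return w - a * (w - 1.0)


w_ga = resistance_ratio(R_GA, R_TPW)
is_acceptable = w_ga >= W_GA_MIN          # purity check on the platinum
a = deviation_coefficient(w_ga)

# A later reading taken near 20 degC (hypothetical resistance).
w_process = resistance_ratio(26.9900, R_TPW)
w_ref_process = reference_ratio(w_process, a)

print(f"W_Ga = {w_ga:.6f}, acceptable: {is_acceptable}")
print(f"a = {a:.3e}, W_REF at the process temperature = {w_ref_process:.6f}")
```

By construction the correction vanishes at the triple point of water (W = 1) and reproduces the reference ratio exactly at the gallium point, so the individual SPRT agrees with the 'ideal' SPRT at both calibration temperatures.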

Of course, in general, the engineer would be unlikely to possess a calibrated SPRT and the equipment to read it but would instead send the thermistor to a calibration laboratory. But it is through this procedure – and similar procedures extended to cover different temperature ranges – that the world measures temperature. If you care enough about knowing the temperature correctly to have your sensors calibrated, then you are benefiting from using ITS-90.

D. The foundations of ITS-90

Primary thermometry prior to 1990

The ITS-90 is based on thermodynamic temperature experiments known as primary thermometry carried out prior to 1990. It was these experiments which determined estimates for the temperatures of the fixed points (Table 1) and allowed us to deduce how the resistance of platinum varied between these fixed points (Figure 1).

Primary measurements of temperature must build on the unit definition which (since 1954) effectively defines the temperature of the triple point of (pure) water, TTPW, to be 273.16 K (0.01 °C) exactly: 4

The kelvin, unit of thermodynamic temperature, is the fraction 1/273.16 of the thermodynamic temperature of the triple point of water.

The degree Celsius is defined in terms of the kelvin by

$$ t/^{\circ}\mathrm{C} = T/\mathrm{K} - 273.15 $$

The task of a primary thermometer is to tell us how much hotter or colder an object is than TTPW. The triple point of water was chosen as the reference temperature because it can be relatively simply realised in a glass cell and is extraordinarily reproducible (typically at a level of 30 µK or only one part in 10⁷ of TTPW). This level of reproducibility is much better than can be achieved using the temperature of melting ice as a reference. 5

An SPRT is extremely reproducible and precise, but it is not a primary thermometer because we do not understand the basic physics of electron transport in metals well enough to say that when its resistance increases by (say) 10%, its temperature must have increased by a known amount.

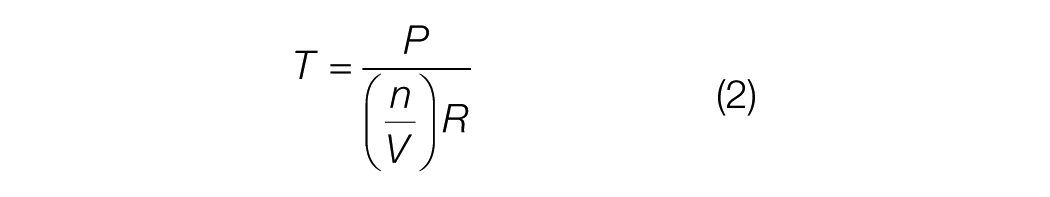

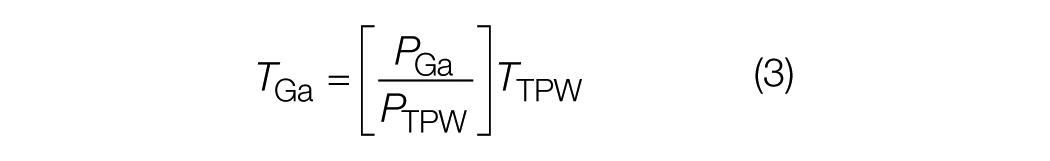

Instead, primary thermometers are based around simple physical systems, most commonly the properties of a low-density gas of weakly interacting molecules. If the ideal gas law were perfectly obeyed, then we could use the equation

$$ PV = nRT $$

where R is the molar gas constant.

Thus, if n moles of gas are trapped in a volume V, then we can deduce the temperature T by measuring the pressure P. So to infer an unknown temperature – such as the temperature at which gallium melts (TGa) – we would take a container of gas and measure its pressure PTPW at TTPW, then measure its pressure PGa at TGa. We could then infer that TGa was

$$ T_{\mathrm{Ga}} = T_{\mathrm{TPW}} \times \frac{P_{\mathrm{Ga}}}{P_{\mathrm{TPW}}} = 273.16\ \mathrm{K} \times \frac{P_{\mathrm{Ga}}}{P_{\mathrm{TPW}}} $$

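However, the ideal gas law is only obeyed by real gases in the limit of low density. So in the above example, the experiment would be repeated for successively lower molar densities (n/V) and the limiting low-density value of the pressure ratio would be used to infer the temperature:

$$ T_{\mathrm{Ga}} = T_{\mathrm{TPW}} \times \lim_{n/V \to 0} \frac{P_{\mathrm{Ga}}}{P_{\mathrm{TPW}}} $$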
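The low-density extrapolation can be illustrated with a short numerical sketch. Everything here is an assumption made for illustration: the gas is modelled with a single made-up second virial coefficient at each temperature, the 'true' gallium temperature is used only to generate synthetic pressures, and a straight-line least-squares fit stands in for whatever extrapolation a real experiment would use.

```python
R = 8.314          # molar gas constant in J/(mol K), rounded; it cancels in the ratio
T_TPW = 273.16     # kelvin, exact under the current definition


def simulated_pressure(density, temperature, b_virial):
    """Pressure of a slightly non-ideal gas: P = rho * R * T * (1 + B * rho)."""
    return density * R * temperature * (1.0 + b_virial * density)


def fit_intercept(xs, ys):
    """Ordinary least-squares straight line through (xs, ys); returns the value at x = 0."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x


# Made-up values used only to generate synthetic 'measurements'.
T_GA_TRUE = 302.9146   # kelvin
B_TPW = -2.0e-5        # m^3/mol, hypothetical virial coefficient at T_TPW
B_GA = -1.5e-5         # m^3/mol, hypothetical virial coefficient at T_Ga

densities = [5.0, 10.0, 20.0, 40.0]   # molar densities n/V in mol/m^3
ratios = [
    simulated_pressure(d, T_GA_TRUE, B_GA) / simulated_pressure(d, T_TPW, B_TPW)
    for d in densities
]

# Extrapolate the pressure ratio to zero density, then scale by T_TPW.
t_ga_estimate = T_TPW * fit_intercept(densities, ratios)

# For comparison: using the highest-density ratio directly, with no extrapolation.
t_ga_naive = T_TPW * ratios[-1]

print(f"extrapolated: {t_ga_estimate:.4f} K, single density: {t_ga_naive:.4f} K")
```

In this toy model the single-density estimate is off by several hundredths of a kelvin, while the extrapolated value recovers the input temperature to well within a millikelvin, which is the reason for repeating the measurement at successively lower densities.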

Primary thermometry since 1990

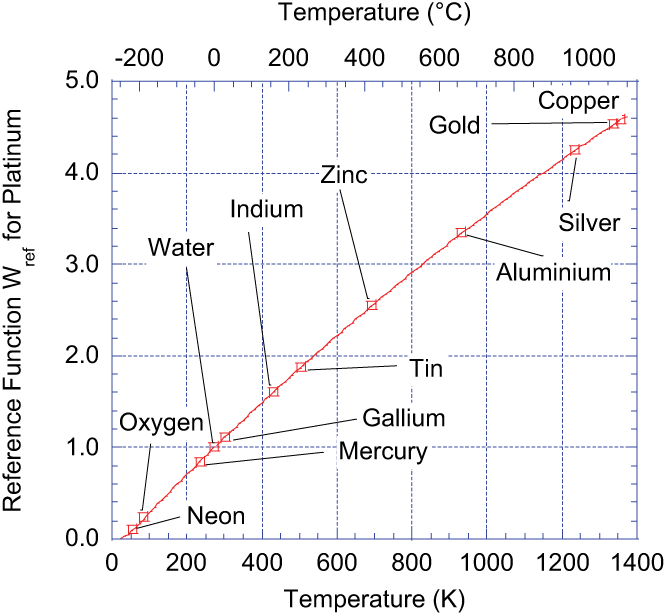

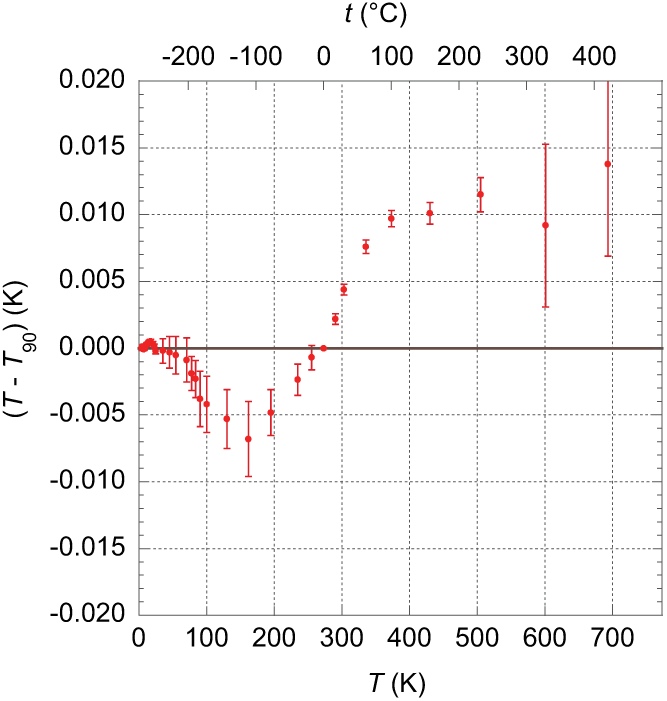

Since 1990, it has become apparent that some of the primary thermometry on which ITS-90 was based was in error. The differences between more modern estimates of thermodynamic temperature T and T90 (Figure 2) can be found in a review by Fischer et al. 6 and online at the International Bureau of Weights and Measures, BIPM. 7

Figure 2. Current estimates of T − T90 based on the work of Fischer et al. 6

Many people find it surprising that the result of a temperature calibration at a National Metrology Institute can result in the assignment of a ‘wrong’ temperature. But that is in fact the case. The hypothetical engineer we discussed in the previous section would find that the uncertainty in the calibration of their SPRT at TGa would be – if carried out at NPL – less than 0.001 °C (1 millikelvin or 1 mK). However, we now believe that TGa as estimated in ITS-90 is ‘wrong’ – that is, it differs from thermodynamic temperature – by approximately 4.5 mK. Crucially, though, it would be wrong by exactly the same amount in every calibration laboratory around the world.

It is important to understand that – from a philosophical perspective – the temperatures designated by ITS-90 are merely numerical labels. However, because of its careful design, the ‘labels’ (T90) assigned to temperatures by ITS-90 are close enough to the thermodynamic temperature T that for all practical purposes, they can be used interchangeably. I personally know of only one single scientific endeavour in which the difference between T and T90 is of any significance.

E. Summary

So the answer to the question raised at the start of this section ‘How Do You Know What the Temperature Is?’ is complex. It relies on a highly specified procedure (ITS-90) which has been adopted worldwide. This procedure is built upon a foundation of primary temperature measurements which involve the inference of temperatures based directly on the laws of physics and the basic definition of the unit of temperature.

Thus, the definition of the kelvin and degree Celsius can be considered as a key part of the ‘hidden architecture’ which underpins all temperature measurements. And as with any hidden structure – it occasionally needs examining to see if maintenance is necessary.

II. The Re-definition

A. Motivation

In section ‘How Do You Know What the Temperature Is?’, we saw that the current system of temperature measurement is complex, but that despite shortcomings, it does satisfactorily meet most users’ requirements. Why then should the conservative science of metrology undertake the radical step of re-defining the kelvin? There are three distinct reasons.

Reason 1: opportunity and context

First, we have the opportunity to do so. The problems with the definition of the kilogram and the ampere are serious enough to merit a review of the International System of Units (the ‘SI’) on their own. 8 In this context, it makes sense to look at all the unit definitions and consider their appropriateness for use in the 21st century and beyond.

As metrology has evolved, we have seen the advantages of making unit definitions that stem from the advice of James Clerk Maxwell, who in 1870 urged us ‘… not to seek the units of length, time and mass in the movement or mass of our planet but in the wavelength, frequency and mass of the imperishable, invariable and altogether similar molecules’.

Thus, the unit of time, the second, is defined in terms of the frequency of the natural oscillations of an atom of ¹³³Cs. And the unit of length, the metre, is based on the unit of time combined with a fundamental constant of nature, the speed of light in vacuum, c.

We hope to revise the definition of the kilogram so that it will be based on the units of time and length in combination with a fundamental constant of nature, the Planck constant, h.

Using these three unit quantities of mass, length and time, we can define a unit quantity of energy, the joule, which has the dimensions of [mass] × [length]² × [time]⁻².

The advantages of these redefinitions in general terms have been explained in accompanying articles. Here, we note two key features.

First, the abstraction implicit in these definitions should ensure the stability and reproducibility in time of the units. Although definitions in terms of artefacts (e.g. the kilogram definition) or specific physical properties (e.g. the kelvin definition) are more concrete and easy to visualise, they represent a weakness in the definition of the unit. For example, the definition of the unit of temperature had to be updated in 2005 to specify the precise isotopic content of what was meant by ‘pure’ water.

The second feature of the new form of the definitions is that each unit is defined in terms of other SI units and an associated physical constant. As we will see in the next section, the definition of the kelvin can be very simply expressed in terms of the unit of energy and a physical constant.

Reason 2: linking to the SI

As we take the opportunity to re-examine the definition of the kelvin, we immediately notice a curious aspect of the current definition: it contains no link to the other SI units. The unit of temperature is defined in terms of a standard arbitrarily chosen temperature.

This reflects the fact that historically the practice of thermometry was not directly linked to other aspects of physics. In part, this was because we learned to measure temperature and use the results before the fundamental significance of temperature was fully understood. 9

And this peculiarity in the definition of the unit has an unfortunate consequence. In every equation of physics in which a temperature is linked to atomic or molar quantities, it is always accompanied by a constant – either the molar gas constant R or the Boltzmann constant kB = R/NA where NA is the Avogadro constant.

The units of kB are joules per kelvin: that is, the constant serves to relate temperature to energy and its molecular effects. Now one might think that the Boltzmann constant would be ‘constant’, but because of the way temperature is currently defined, it is not: its value has to be determined experimentally and is periodically re-evaluated. Consider some molecules of a gas held at TTPW. From statistical mechanics, we know that the average energy associated with each degree of freedom is

$$ E = \tfrac{1}{2} k_{\mathrm{B}} T_{\mathrm{TPW}} $$

Because TTPW is fixed exactly by the current definition, any uncertainty in a measurement of E appears as an uncertainty in kB.

From a philosophical perspective, it would clearly make more sense to define an exact value for the Boltzmann constant and reflect the uncertainties in measurements of E as uncertainties in the dependent quantity T. Then TTPW would lose its privileged status, and like all the other fixed points (e.g. TGa), its temperature would have an associated uncertainty.
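The exchange of roles between kB and TTPW can be made concrete with a small sketch based on the equipartition relation E = ½ kB T. The numbers are illustrative assumptions: the Boltzmann constant is rounded to the digits quoted in this article, the relative uncertainty in E is a hypothetical one part in a million, and the function names are invented for this example.

```python
K_B_APPROX = 1.3806e-23   # J/K, rounded illustrative value of the Boltzmann constant
T_TPW = 273.16            # K, triple point of water
REL_U_ENERGY = 1.0e-6     # hypothetical relative uncertainty of a measurement of E


def energy_per_degree_of_freedom(k_b, temperature):
    """Mean thermal energy per degree of freedom, E = k_B * T / 2."""
    return 0.5 * k_b * temperature


def boltzmann_from_energy(energy, temperature):
    """Current definition: T is exact, so a measured E yields k_B."""
    return 2.0 * energy / temperature


def temperature_from_energy(energy, k_b):
    """Proposed definition: k_B is exact, so a measured E yields T."""
    return 2.0 * energy / k_b


energy = energy_per_degree_of_freedom(K_B_APPROX, T_TPW)

# Current definition: the measurement uncertainty lands on k_B.
k_b = boltzmann_from_energy(energy, T_TPW)
u_k_b = REL_U_ENERGY * k_b            # J/K

# Proposed definition: the same relative uncertainty lands on T_TPW instead.
t_tpw = temperature_from_energy(energy, K_B_APPROX)
u_t_tpw = REL_U_ENERGY * t_tpw        # K

print(f"E = {energy:.4e} J")
print(f"current:  k_B = {k_b:.4e} J/K +/- {u_k_b:.1e} J/K, T_TPW exact")
print(f"proposed: T_TPW = {t_tpw:.2f} K +/- {u_t_tpw * 1e3:.2f} mK, k_B exact")
```

The measurement of E is the same in both cases; only the quantity that absorbs its uncertainty changes. With the assumed one part in a million, the uncertainty in TTPW comes out at roughly a quarter of a millikelvin.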

So, simply defining an exact value for the Boltzmann constant would serve to define the kelvin. But the definition would now be fundamentally linked to the unit of energy and hence to the definitions of mass, length and time that form the foundations of the SI.

Reason 3: avoiding arbitrariness

The final reason for the redefinition involves the current choice of a particular temperature as a defining temperature. This makes every temperature measurement – either explicitly or implicitly – a comparison with the TTPW.

It might at first glance seem that a measurement made at 1500 °C using a radiation pyrometer makes no reference to the triple point of water, but in fact the reference is made. Typically, the pyrometer would be calibrated against radiation from a source at a ‘known temperature’ commonly the temperature of melting silver, TSilver. The temperature assigned to TSilver has been deduced based on primary thermometry that relates its temperature to TTPW. So – at least in principle – defining the unit of temperature in terms of a single arbitrarily chosen temperature might impede future advances in temperature measurements.

Commonly measured ‘high’ temperatures extend to a factor of 10 larger than TTPW and difficulties in determining the ratio represent an unnecessary impediment in the path of improved temperature measurement at high temperatures. The effect is actually more severe – though less commonly encountered – at cryogenic temperatures which can differ from TTPW by factors of more than 1000.

Summary

The review of the SI initiated by the need to redefine the kilogram has offered an opportunity to redefine the kelvin in terms of the Boltzmann constant and so directly link the unit of temperature to the basic physical quantity that it represents: energy.

A definition of this form is more abstract than the current definition but would avoid the need for any future modification. Indeed, if we had historically known what we know now, we might well have done it this way some time ago.

B. The proposal and its consequences

Possible wording

The wording of the new definition of the kelvin has not yet been finalised, but Mills et al. 8 have discussed the pros and cons of alternative wordings. One of their suggestions is that

The kelvin, unit of thermodynamic temperature, is such that the Boltzmann constant kB is exactly 1.380 6XX X × 10⁻²³ joule per kelvin.

If this is indeed the definition eventually adopted by CIPM, the headline definition will be accompanied by a document called the Mise en Pratique for the kelvin, 10 which will describe how the definition may be realised in practice.

Of course, it is essential that the magnitude of the ‘new kelvin’ be as close as possible to the magnitude of the ‘old kelvin’. In order to do this, the digits ‘XXX’ in the defined value of the Boltzmann constant must be chosen carefully. The choice is made by carrying out experiments which consist of taking a simple physical system (a primary thermometer) to the temperature TTPW which is defined exactly in the current SI. The experiments must then measure a physical property which can be directly related to the product kB TTPW, from which kB can be deduced.

Close to the time of the redefinition, the Committee on Data for Science and Technology (CODATA) will review the published estimates of kB and recommend a consensus estimate which will be used in the new definition. 14 The uncertainty of measurement of kB will most likely be slightly less than 1 part in 10⁶ and, when the redefinition has taken place, this will become the fractional uncertainty with which we know TTPW. In other words, the Boltzmann constant will be exactly specified, linking temperature measurements directly to energy measurements.

Consequences

The most significant anxieties around the redefinition concern the effects on practical temperature measurements in science and industry. In this case, it is possible to be definitive: there will be no immediate effect. This is because, as we saw in section ‘How Do You Know What the Temperature Is?’, all practical temperature measurements use ITS-90, and this procedure will not change as a result of the redefinition.

As previously mentioned, TTPW will no longer be exactly known but will instead have an associated uncertainty likely to be a little less than 1 part in 10⁶ of TTPW or ~0.27 mK. However, this is unlikely to have any profound consequences since this uncertainty is not considered in the ITS-90.

As the differences between T90 and thermodynamic temperature become clearer, it may be that a revision of ITS-90 is considered appropriate but that is unlikely in the present decade because ITS-90 is good enough for almost all users.

If a revision were undertaken, the values of all the fixed points would be reviewed and updated, and at this point, it is conceivable that TTPW may be assigned a slightly different value. This would be a sign that the old definition has passed into history. It would be reminiscent of the way that previously the boiling point of water was defined to be 100 °C, but in our current view, water at atmospheric pressure is estimated to boil at 99.985 °C.

In summary, the redefinition can be considered to be essential maintenance of the foundations of the system of temperature measurement, preparing it for centuries of future use by linking it directly to the three base quantities of the SI, mass, length and time. There is no reason why the value adopted for the Boltzmann constant need ever change.

One unexpected and positive consequence of the redefinition is that the need to re-measure the Boltzmann constant prior to the redefinition has stimulated research in primary thermometry.11,13 Several teams have extended the state of the art, 15 and since advanced measurement techniques rarely go un-exploited, we can expect to see developments in terms of improved thermometry in years to come.

It is quite conceivable that in decades or centuries to come, temperature measuring technology will evolve so that instead of using a procedure such as ITS-90 to calibrate sensors, they will instead be calibrated with lower uncertainty directly in terms of thermodynamic temperature. Indeed, this is already the case at very low and very high temperatures, where techniques of absolute noise thermometry and absolute radiometry are becoming established.

The definition of the kelvin represents an ultimate limit to measurement uncertainty. And the aim of the redefinition is to ensure that, in the face of centuries of future progress, perhaps using technology that we have not yet invented, the basic definition of the kelvin will not in any way impede progress in temperature metrology.

Acknowledgements

The author would like to thank Richard Rusby and Stephanie Bell for their considered reading of the manuscript.

Funding

This research received no specific grant from any funding agency in the public, commercial or not-for-profit sectors.