Abstract

Artificial intelligence (AI) is increasingly used to streamline hiring procedures, but it raises concerns about transparency and discrimination. The European Union (EU)’s Artificial Intelligence Act (AIA) is the first broad attempt to regulate AI, using a tiered approach based on levels of risk. This article asks whether and to what extent such an approach can adequately protect job applicants’ fundamental rights. Focusing on the EU as a global reference point, it shows how connecting risk management with rights protection can make regulation more effective. The authors argue that involving affected groups through stakeholder-driven standardization, fundamental rights impact assessments, and co-determination can turn compliance from a box-ticking exercise into meaningful accountability. Placing the AIA within the broader contexts of data protection, equality, and product safety law, the article offers practical lessons for jurisdictions worldwide seeking to align technological innovation with fundamental rights protection.

Keywords

In today’s datafied world, people are constantly evaluated by algorithms, and the hiring process is no exception. Employers increasingly use artificial intelligence (AI) systems such as résumé scanners, automated interview platforms, skill tests, and chatbots (Z. Chen 2023; Lee 2023; OECD 2023; Paliokaitė et al. 2025). While these technologies promise efficiency and objectivity, they often rest on biased or incomplete data (Williams, Brooks, and Shmargad 2018). Moreover, their social costs fall mainly on job applicants, who usually have less information and weaker bargaining positions (Dipboye 1994), limiting their autonomy and well-being.

As a result, discrimination, a long-standing concern in hiring, takes on new dimensions through the scale, speed, and opacity of AI systems (Zuiderveen Borgesius 2020; Nedzhvetskaya and Tan 2022). Seemingly small design choices, such as missing birth years in dropdown menus or job ads filtered by demographics, can replicate and intensify inequality, leading over time to more uniform and less diverse workplaces (Bodie, Cherry, McCormick, and Tang 2017; Ajunwa 2020).

Legally, AI in hiring exposes the weaknesses of current protections for job applicants. Adding to the complexity, several countries are adopting distinct strategies. For example, in the United Kingdom, data protection and non-discrimination law apply alongside context-specific, initially non-binding guidance, reflecting an effort to build a trusted yet innovation-friendly regime (Kelly-Lyth 2021; Roberts et al. 2023). In the United States, by contrast, a pro-business and anti-regulation stance prioritizes entrepreneurial freedom, resulting in a fragmented legal landscape (Donoghue, Huanxin, Moore, and Ernst 2024). In China, AI governance follows a cross-sector model aligned with the Party-State’s goals for economic, social, and political security (Donoghue et al. 2024; Liu, Zhang, and Sui 2024).

In the European Union (EU), Regulation 2024/1689, known as the Artificial Intelligence Act (AIA), introduces harmonized rules for AI, including in hiring, and is the first binding law on this technology. Promoted as a global model (Almada and Radu 2024), it builds on the EU’s strong internal market and its influence beyond its borders. Yet this standard-setting ambition, already visible in Brazil’s Bill 2.338/2023 (De Freitas Júnior, Zapolla, and Cunha 2024), coexists with critical limitations of the AIA, such as rigid risk classifications and reliance on self-assessment (Veale and Zuiderveen Borgesius 2021; Giraudo, Fosch-Villaronga, and Malgieri 2024; Prifti and Fosch-Villaronga 2024).

This article takes the EU as its central case study, treating it as a “best case” for evaluating which regulatory approaches hold the greatest promise for addressing broader governance challenges in AI. Its analysis also offers lessons for jurisdictions now designing AI governance regimes.

Against this background, we proceed in four steps. We begin by outlining key AI systems used in hiring, then present the AIA and its risk-based approach. Next, we situate it alongside core instruments on data protection, non-discrimination, and product safety and identify their synergies, overlaps, and frictions. Finally, we advance our theoretical contribution by reconceptualizing risk- and rights-based narratives in AI governance as potentially complementary, showing how co-governance mechanisms, particularly standardization and fundamental rights impact assessments (FRIAs), can embed participatory safeguards across the AI life cycle.

AI-Based Hiring Systems: Balancing Organizational Efficiency and Applicants’ Rights

AI-based hiring systems can be divided into four main groups (Bogen and Rieke 2018):

Tools for creating targeted job descriptions;

Systems that scan and process résumés, CVs, and motivation letters;

Interview software that analyzes facial expressions, movements, and vocal data;

Applications that assess skills and knowledge, including through game-based techniques.

Indubitably, such systems can optimize hiring by reducing time, costs, and effort (Sánchez-Monedero, Dencik, and Edwards 2020). This efficiency lies in outperforming humans at screening applications, extracting information swiftly, and balancing human intuition with analytical precision (Jarrahi 2018). Additionally, these technologies promise smoother coordination, fewer biases linked to personal characteristics, and freedom from errors caused by fatigue or disengagement, while offering greater traceability than human decision-making (Langer and Landers 2021; Lobel 2022; Dencik, Brand, and Murphy 2024). Yet human oversight remains essential at every stage, from design and data set curation to training, testing, and review, because these are precisely the stages at which social, institutional, and cognitive dynamics can embed bias (Kim 2022; Adams-Prassl, Binns, and Kelly-Lyth 2023).

It is therefore unsurprising, perhaps, that the literature highlights the adverse effects on job applicants, who are already vulnerable due to information asymmetry, unbalanced decision-making power, and economic dependence (Kingsley, Gray, and Suri 2015). AI systems process personal and sensitive data, raising privacy and data protection concerns (Ajunwa 2020) and potentially replicating social marginalization (Acikgoz, Davison, Compagnone, and Laske 2020; Abraha 2023). This may have cascading effects on job applicants’ social participation, economic circumstances, housing opportunities, and family dynamics, potentially leading to physical and mental health implications (Rigotti and Fosch-Villaronga 2024). AI systems may further infringe fundamental rights (Yam and Skorburg 2021), which are often challenging to quantify or link causally to individual outcomes. The opacity of AI-driven decisions compounds the problem, hindering scrutiny, undermining accountability, and dissuading presumed victims from pursuing claims and grievances.

Overall, AI-based hiring may expand inequalities, erode the socio-technical foundations of a democratic community, and reduce trust (Yeung 2019), while discouraging both individuals and organizations from using AI systems. Consequently, innovation may be hampered not only by reputational or legal risks but also by increasing reluctance to rely on technologies perceived as unfair or unreliable—ultimately undermining potential benefits for job applicants and employers.

The AI Act in a Nutshell: Promises and Dissonances

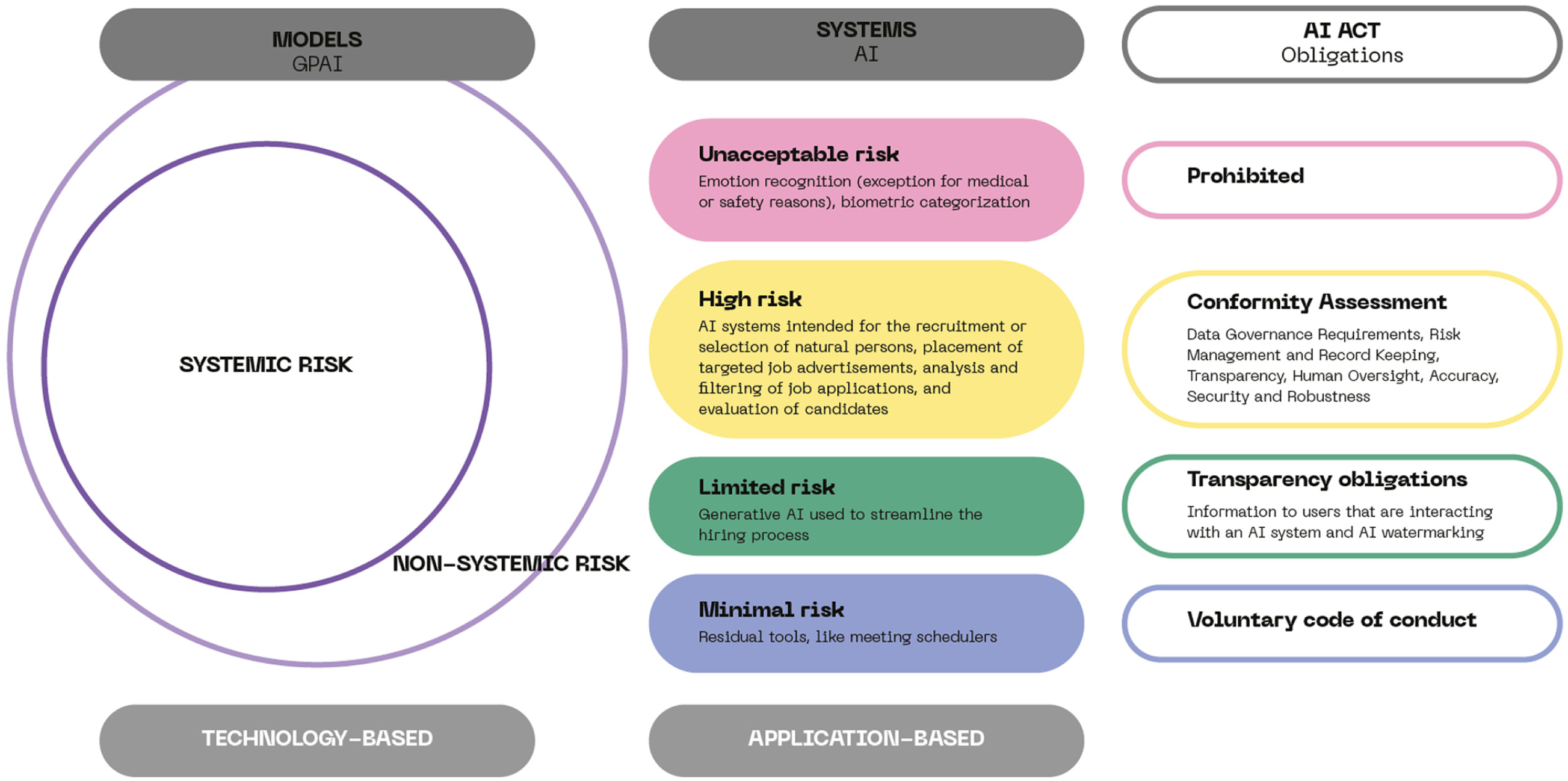

The AIA aims to “improve the functioning of the internal market and promote the uptake of human-centric and trustworthy (AI) while ensuring a high level of protection of health, safety, and fundamental rights . . . and supporting innovation” (Art. 1 AIA). To this end, it adopts a tiered, risk-based approach that classifies AI systems as posing unacceptable, high, limited, or minimal risk. General-purpose AI (GPAI) models are subject to two further tiers, depending on whether or not they pose systemic risk. (See Figure 1.)

AIA Risk-Based Approach

The AIA defines risk as “the combination of the probability of an occurrence of harm and the severity of that harm” (Art. 3.2 AIA) and uses this definition to prioritize, calibrate, and target enforcement action in proportion to the potential harm (Dunn and De Gregorio 2022). In other words, the risk-based approach, by setting categories of risk and associated compliance obligations, serves as the methodology for tailoring prohibitions and binding rules for AI to the functions or contexts in which the systems are deployed (AIA, Recital 27). It also operates as a yardstick for assessing risks and adverse impacts on fundamental rights, including those of job applicants.
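To make the definition concrete, the sketch below scores hypothetical harms on assumed three-point scales and multiplies them, which is one common way of "combining" probability and severity in risk matrices. The scales, the product rule, and the example harms are illustrative assumptions; the AIA prescribes none of them.

```python
from dataclasses import dataclass

# Hypothetical ordinal scales: the AIA defines risk only as a "combination"
# of probability and severity and prescribes no scale or formula.
PROBABILITY = {"rare": 1, "possible": 2, "likely": 3}
SEVERITY = {"minor": 1, "serious": 2, "critical": 3}


@dataclass
class Harm:
    description: str
    probability: str  # key into PROBABILITY
    severity: str     # key into SEVERITY


def risk_score(harm: Harm) -> int:
    """Combine probability and severity as a product (one common convention)."""
    return PROBABILITY[harm.probability] * SEVERITY[harm.severity]


def prioritize(harms: list[Harm]) -> list[Harm]:
    """Order harms so that the highest combined score is addressed first."""
    return sorted(harms, key=risk_score, reverse=True)


harms = [
    Harm("typo in an automated rejection e-mail", "likely", "minor"),
    Harm("CV filter penalizes career gaps linked to caregiving", "possible", "critical"),
]
for harm in prioritize(harms):
    print(risk_score(harm), harm.description)
```

With a product rule, a rare but critical harm (1 × 3) scores the same as a likely but minor one (3 × 1), a reminder of how much such scores compress.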

Prohibited AI Practices

Certain AI systems for hiring are considered to pose unacceptable risks and are prohibited as of February 2, 2025, under Article 5.1 AIA. This is the case for AI systems designed to infer individuals’ emotions in the workplace, such as tools monitoring candidates’ facial or vocal expressions during recruitment (Boyd and Andalibi 2023; Narayanan and Kapoor 2024). The ban stems from concerns about the questionable scientific foundations of such systems, their limited reliability and generalizability, and the variability of emotional expression, both across cultures and within individuals, which may lead to violations of fundamental rights. While the notion of “emotions” is to be interpreted broadly (European Commission 2025), it does not extend to physical states or “readily apparent expressions, gestures, or movements,” provided the AI system merely detects them without inferring emotional states (AIA, Recital 18).

However, Article 5.1(f) contains an important exception: AI systems intended for medical or safety reasons are permitted, provided they are strictly necessary, proportionate, and accompanied by safeguards (European Commission 2025). Examples include hiring software intended to provide reasonable accommodation for job applicants with disabilities, or systems that detect fatigue in drivers (Boyd and Andalibi 2023). Yet this exception may weaken the prohibition’s effectiveness, potentially allowing systems that assess candidates’ stress or attention levels under the guise of safety or reasonable accommodation (Marassi 2025). Such applications risk reintroducing emotional profiling through the back door, raising difficult trade-offs between safety, inclusion, and fundamental rights protection (Veale, Binns, and Ausloos 2018).

Article 5.1(g) AIA further prohibits “biometric categorisation systems that categorise individually natural persons based on their biometric data to deduce or infer their race, political opinions, trade union membership, religious or philosophical beliefs, sex life or sexual orientation.” This ban is relevant in workplaces where employers collect biometric data for other purposes, including authentication and performance prediction (Holland and Tham 2022). The provision is nevertheless worrying for two reasons: as explained below, the grounds for AI-driven discrimination are expanding to less conventional ones (Wachter and Mittelstadt 2019), and, although health data are a special category of personal data, health is absent from the list of prohibited inferences (Brown 2020). Because the list does not cover all the inferences these systems can draw, companies may exploit legal gaps.

High-Risk AI Systems

Most AI systems for hiring fall under the high-risk category referred to in Article 6.2 AIA. This stems from Annex III, point 4(a), which includes, “AI systems intended to be used for the recruitment or selection of natural persons, in particular to place targeted job advertisements, to analyse and filter job applications, and to evaluate candidates.” According to Recital 57, this classification is justified by these systems’ potential impact on career prospects, livelihoods, and workers’ rights, and the risk of perpetuating historical patterns of discrimination. However, an important exception appears in Article 6.3 AIA, excluding systems that pose no significant risk to health, safety, or fundamental rights, and that do not materially influence decision-making outcomes. It applies to AI systems intended to perform a narrow procedural task; to improve the result of a previously completed human activity; to detect patterns or deviations from prior decision-making without replacing or influencing the human assessment; or to perform a preparatory task. Profiling systems, including those used for hiring, are not covered by these exceptions.
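The classification logic described above can be expressed as a short decision function. This is a simplified sketch under stated assumptions: the attribute names are hypothetical, and it omits other routes to high-risk status as well as the procedural duties attached to relying on Article 6.3.

```python
from dataclasses import dataclass


@dataclass
class HiringSystem:
    # Hypothetical attributes; names are illustrative, not AIA terminology.
    recruitment_or_selection_use: bool  # Annex III, point 4(a)
    performs_profiling: bool
    narrow_procedural_task: bool = False
    improves_completed_human_activity: bool = False
    detects_patterns_without_replacing_human_review: bool = False
    preparatory_task: bool = False


def is_high_risk(system: HiringSystem) -> bool:
    """Simplified reading of Art. 6.2 and 6.3 AIA for hiring systems."""
    if not system.recruitment_or_selection_use:
        return False  # outside Annex III, point 4(a); other routes not modeled
    if system.performs_profiling:
        return True   # profiling systems cannot rely on the Art. 6.3 exceptions
    exception_applies = (
        system.narrow_procedural_task
        or system.improves_completed_human_activity
        or system.detects_patterns_without_replacing_human_review
        or system.preparatory_task
    )
    return not exception_applies


cv_ranker = HiringSystem(recruitment_or_selection_use=True, performs_profiling=True)
pdf_to_text = HiringSystem(recruitment_or_selection_use=True, performs_profiling=False,
                           narrow_procedural_task=True)
print(is_high_risk(cv_ranker), is_high_risk(pdf_to_text))  # True False
```

The profiling check runs before the exceptions, so a CV ranker that scores candidates stays high-risk even if it is also flagged as merely preparatory.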

Classification as a high-risk system imposes obligations on both providers and deployers,¹ including the requirement to establish, implement, document, and maintain a risk management system. This must function as “a continuous iterative process” carried out by providers throughout the entire life cycle of the high-risk AI system (Art. 9.2 AIA). The requirement applies only to risks that can be “reasonably mitigated or eliminated through the development or design of the high-risk AI system, or the provision of adequate technical information” (Art. 9.3 AIA). The process comprises four steps:

Identify and analyze known and reasonably foreseeable risks to health, safety, or fundamental rights when the AI system is used in accordance with its intended purpose.

Estimate and evaluate risks that may emerge when the AI system is used in accordance with its intended purpose and under conditions of reasonably foreseeable misuse.

Evaluate other risks that may arise, based on the data resulting from the post-market monitoring system.

Adopt risk management measures to address the identified risks.

These measures shall be appropriate, targeted, and effective in mitigating risks, balancing the requirements of the risk management system (Art. 9.4 AIA). Once adopted, any residual risk should be “judged to be acceptable” (Art. 9.5 AIA).

The AIA further specifies the factors to consider in identifying the most appropriate risk management measures. These include 1) elimination or reduction of risks as far as technically feasible through adequate design and development of the AI system; 2) adequate mitigation and control measures addressing unavoidable risks; and 3) provision of required information and training to deployers.
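The iterative logic of Article 9 can be sketched as a risk register reviewed in repeated passes. In this sketch, each risk carries a hypothetical numeric level; design measures are applied before mitigation and control measures, following the order in which the AIA lists them, and the information and training duty is not modeled. The scale and the acceptability threshold are illustrative assumptions: the AIA only requires that residual risk be “judged to be acceptable” and sets no number.

```python
from dataclasses import dataclass

# Hypothetical threshold: Art. 9.5 AIA only requires residual risk to be
# "judged to be acceptable" and sets no numeric value.
ACCEPTABLE_RESIDUAL = 2


@dataclass
class Risk:
    name: str
    level: int              # hypothetical score before any measure is applied
    design_reduction: int   # reduction achieved through design and development
    control_reduction: int  # reduction achieved through mitigation and control measures
    source: str = "intended use"  # or "foreseeable misuse", "post-market monitoring"


def residual(risk: Risk) -> int:
    """Apply design measures first, then mitigation and control measures."""
    after_design = max(0, risk.level - risk.design_reduction)
    return max(0, after_design - risk.control_reduction)


def review(register: list[Risk]) -> list[str]:
    """One pass of the iterative process: names of risks still judged unacceptable."""
    return [r.name for r in register if residual(r) > ACCEPTABLE_RESIDUAL]


# First pass: risks identified and evaluated before deployment (steps 1 and 2).
register = [
    Risk("gender bias in CV ranking", 8, 4, 2),
    Risk("recruiters treating scores as final decisions", 6, 0, 5,
         source="foreseeable misuse"),
]
print(review(register))  # []

# Later pass: post-market monitoring surfaces a new risk (step 3).
register.append(Risk("accent penalty in video interviews", 7, 1, 2,
                     source="post-market monitoring"))
print(review(register))  # ['accent penalty in video interviews']
```

Choosing the reduction values corresponds to step 4; any risk that `review` still returns sends the provider back through the cycle.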

Systemic and Non-Systemic Risks in GPAI Models

Special provisions apply to GPAI models, which rose to prominence during the AIA’s legislative process. A GPAI model is “trained with a large amount of data using self-supervision at scale, that displays significant generality and is capable of competently performing a wide range of distinct tasks regardless of the way the model is placed on the market and that can be integrated into a variety of downstream systems or applications” (Art. 3.63 AIA). In hiring, GPAI includes generative AI tools for tasks such as writing emails or job vacancy notices. These GPAI models can be “official,” that is, provided by a company to its users, or “unofficial,” such as ChatGPT, which can be freely used.

GPAI providers are subject to distinct obligations that follow a tiered risk classification separate from the “main” one described above:

This three-tier classification system for GPAIs has been criticized for being both over- and under-inclusive, failing to consider the specificities of downstream applications (Novelli et al. 2024). The categorization is rigid and ignores the context of use, as it depends only on computational power. Whether a model is used in high-risk sectors or in low-risk areas is irrelevant to its classification, which undermines tailored risk assessment and mitigation. This approach is particularly problematic when large language models (LLMs) are used for résumé/CV screening optimization or automated virtual interviews, cases in which bias risks are higher and human oversight is weaker.
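The critique can be made concrete with a deliberately simplified sketch of the presumption in Article 51.2 AIA, under which a GPAI model is presumed to have high-impact capabilities when the cumulative compute used for its training exceeds 10^25 floating-point operations. The function name and example values are hypothetical, and the sketch omits the other routes by which a model can be classified; its point is that the deployment context never enters the calculation.

```python
# Art. 51.2 AIA presumption: cumulative training compute above 10^25 FLOP.
SYSTEMIC_RISK_THRESHOLD_FLOP = 1e25


def presumed_gpai_tier(training_flop: float, deployment_context: str) -> str:
    """Simplified sketch: the presumed tier depends on training compute only.

    deployment_context is accepted but deliberately ignored, mirroring the
    criticism that use context plays no role in the presumption.
    Other classification routes are not modeled.
    """
    del deployment_context  # unused by design
    if training_flop > SYSTEMIC_RISK_THRESHOLD_FLOP:
        return "systemic risk (presumed)"
    return "no systemic risk presumed"


# A hypothetical model trained with 5e24 FLOP, used in two very different settings.
for context in ("drafting internal e-mails", "ranking applicants' CVs"):
    print(context, "->", presumed_gpai_tier(5e24, context))
```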

“Other” Risks

Some AI features used in hiring, such as chatbots assisting with job applications, may fall under the category of systems that interact directly with people, triggering transparency obligations. Under Article 50.1 AIA, providers must ensure that affected persons are informed they are interacting with AI, unless this is obvious from the perspective of a “reasonably well-informed, observant and circumspect” person, taking the context into account. AI tools, including GPAI systems, are also used to generate synthetic audio, image, video, or text content. In this case, Article 50.2 AIA requires providers to ensure that outputs are marked in a machine-readable format and detectable as artificially generated or manipulated. The technical solutions used must be effective, interoperable, robust, and reliable as far as technically feasible, and the obligation does not apply to systems performing an assistive function for standard editing or not substantially altering the input data. Deployers of emotion recognition or biometric categorization systems must likewise inform the persons exposed to them and process their personal data in compliance with data protection law (Art. 50.3 AIA). In all cases, the information must be provided clearly, recognizably, and accessibly, at the latest at the time of first interaction or exposure (Art. 50.5 AIA).
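The conditions in Article 50 can be read as a checklist. The sketch below encodes a simplified version using hypothetical feature flags for a hiring tool. It omits exceptions not discussed here and is meant as an illustration, not a compliance tool.

```python
from dataclasses import dataclass


@dataclass
class HiringTool:
    # Hypothetical feature flags; names are illustrative, not AIA terms.
    interacts_with_people: bool = False
    ai_use_obvious: bool = False            # to a well-informed, observant and circumspect person
    generates_synthetic_content: bool = False
    assistive_editing_only: bool = False    # standard editing, no substantial change to input
    emotion_or_biometric_categorization: bool = False


def article_50_duties(tool: HiringTool) -> list[str]:
    """Return the (simplified) Art. 50 AIA transparency duties a tool triggers."""
    duties = []
    if tool.interacts_with_people and not tool.ai_use_obvious:
        duties.append("50.1 provider: inform persons they are interacting with AI")
    if tool.generates_synthetic_content and not tool.assistive_editing_only:
        duties.append("50.2 provider: mark outputs as AI-generated, machine-readably")
    if tool.emotion_or_biometric_categorization:
        duties.append("50.3 deployer: inform exposed persons; comply with data protection law")
    return duties


application_chatbot = HiringTool(interacts_with_people=True)
grammar_checker = HiringTool(generates_synthetic_content=True, assistive_editing_only=True)
print(article_50_duties(application_chatbot))
print(article_50_duties(grammar_checker))  # []
```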

During hiring, HR practitioners often rely on AI systems such as meeting schedulers and other general-use tools (Rigotti, Fosch-Villaronga, and Rafnsdóttir 2025), typically classified as “low risk” under the AIA. This rigid classification fails to consider that AI systems can be repurposed beyond their originally intended functions, potentially affecting hiring outcomes. Despite their low(er)-risk classification, such tools can transfer bias, causing discrimination and other violations of fundamental rights.

Critical Remarks on the Risk-Based Approach

AI-based hiring systems may pose significant risks to fundamental rights in the workplace (Z. Chen 2023), perpetuating biases, violating data protection, and affecting individual safety and society. In what follows, we critically examine the risk-based approach of the AIA with a view to reconceptualizing it and aligning it with more effective fundamental rights protection.

Informed by product safety legislation, the AIA adopts an anticipatory, risk-based approach. The only individual rights it explicitly provides are the right to lodge a complaint with the relevant market surveillance authority (Art. 85 AIA) and, for those affected by decisions based on high-risk AI systems listed in the Act’s Annex III, the right to receive clear and meaningful explanations of the system’s role in the decision and its main elements (Art. 86 AIA). Conversely, data protection and anti-discrimination laws are chiefly tasked with protecting fundamental rights. They aim to address existing power and information asymmetries within society, including those between employers and HR practitioners on the one hand, and job applicants and workers on the other. Combining two distinct approaches, namely risk-based and rights-centered, may be an uphill task, as their underlying logics and purposes differ (Almada and Petit 2023; Aloisi and De Stefano 2023).

The liberalizing thrust of the AIA permits the design and commercialization of AI systems that could affect fundamental rights, “tolerating” high risks to these rights as long as they are eliminated or, where elimination is not possible, effectively prevented or mitigated. Thus, the effort to integrate market logic with fundamental rights protection may have nefarious consequences: systems with discriminatory features, detected but only partially mitigated, may continue to proliferate (Poulsen, Fosch-Villaronga, and Søraa 2020). In the best scenario, risks are “known unknowns” that can be identified and anticipated through risk assessment. The problem arises with “unknown unknowns,” risks that the standards developed to operationalize providers’ obligations failed to identify.

In essence, the AIA conflates the dynamic balancing required for fundamental rights protection with the binary logic of technical standards, which are typically either met or not met, offering limited scope for revisiting risk categories and mitigation measures over time. This limitation could lead to routinized compliance, whereby regulatory frameworks are followed mechanically without meaningful engagement, reducing them to box-ticking exercises. Risk management often overlooks the nuances of different types of risk and can thereby become a barrier to meaningful change. This approach focuses too narrowly on formal adherence rather than on the deeper, less quantifiable impacts on individuals (Waldman 2021), and it undervalues intangible harms that cannot be quantified in financial terms.

The separation of compliance channels for providers and deployers under Article 26 AIA further exacerbates this problem, as deployers’ obligations “are without prejudice to other deployer obligations under Union or national law.” In the context of AI-based hiring systems, employers acting as deployers may benefit from a compliance scheme that overlaps only partially with other procedural obligations and guardrails (in the areas of equality, data protection, and, broadly speaking, labor law). However, since the AIA complements other legislation, complying with it alone does not absolve employers of their broader obligations. As explored below, these overlapping duties call for a more integrated and participatory approach to AI governance. More bottom-up methods should be tested in work ecosystems, and compliance mechanisms should be multidimensional, integrating complementary approaches to enhance the chances of designing and deploying AI systems that uphold fundamental rights, rather than merely streamlining risk identification and mitigation bureaucratically.

No Law Is an Island: Situating the AIA within a Broader Legal Framework

Besides the AIA, a set of legislation protects job applicants, aiming to safeguard their fundamental rights and address power imbalances. If understood not as punitive constraints but as enabling guardrails, such rules can facilitate the design and deployment of AI systems that are error-resistant and less susceptible to contestation, thereby fostering trust. As we argue below, however, the interplay of these laws is fraught with mismatches, potentially leaving job applicants under-protected.

Data Protection

AI-based hiring systems process massive volumes of personal data to create training data sets or perform specific tasks (Albassam 2023). They are often trained on data from past HR practices and used to analyze, categorize, score, or make decisions about job applicants. This process poses risks such as increased surveillance and information asymmetry (Ebert, Wildhaber, and Adams-Prassl 2021). Furthermore, the Article 29 Working Party (A29WP), since succeeded by the European Data Protection Board, highlighted the tension between workers’ rights and employers’ interests and the typical power imbalance in their relationship, which modern technology exacerbates. Consequently, it noted that recruitment data should be “deleted as soon as it becomes clear that an offer of employment will not be made or is not accepted by the individual concerned” (A29WP 2017), a requirement at odds with AI systems that rely on such data to generate predictive patterns.
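This tension can be illustrated by applying the A29WP deletion trigger to a hypothetical applicant store. The record structure and outcome labels are assumptions: the guidance prescribes no data model, and the retention of hired applicants' data, which is governed by other rules, is not modeled here.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    PENDING = "pending"
    NO_OFFER = "no offer will be made"
    OFFER_DECLINED = "offer not accepted"
    HIRED = "hired"


# Outcomes that, per A29WP (2017), trigger deletion of recruitment data.
DELETION_TRIGGERS = {Outcome.NO_OFFER, Outcome.OFFER_DECLINED}


@dataclass
class Application:
    applicant_id: str
    outcome: Outcome


def apply_retention_rule(store: dict[str, Application]) -> dict[str, Application]:
    """Return the store without applications whose deletion trigger has occurred."""
    return {k: a for k, a in store.items() if a.outcome not in DELETION_TRIGGERS}


store = {
    "a1": Application("a1", Outcome.PENDING),
    "a2": Application("a2", Outcome.NO_OFFER),
    "a3": Application("a3", Outcome.OFFER_DECLINED),
    "a4": Application("a4", Outcome.HIRED),
}
retained = apply_retention_rule(store)
print(sorted(retained))  # ['a1', 'a4']
```

Once the rule runs, the unsuccessful applications a predictive model would learn from are exactly the records that have been removed.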

The right to data protection, anchored in Article 8 of the Charter of Fundamental Rights of the EU (CFREU), is primarily upheld by the General Data Protection Regulation (GDPR). Yet its application in work environments is imperfect, as the EU has not established uniform rules for workers’ data protection (Ogriseg 2017), leaving this prerogative to member states. Specifically, Article 88 GDPR allows member states to introduce specific rules to safeguard employees’ data in the employment context. This discretion has led to concerns that such national reforms may contradict the objectives of the GDPR, resulting in further fragmentation, legal uncertainty, and inconsistent enforcement (Abraha 2022).

The literature has extensively examined the ambitions and limitations of the GDPR in regulating AI systems for HR practices (Abraha 2023). Specifically, Article 5 GDPR outlines a set of key principles that could serve to safeguard job applicants’ and workers’ fundamental rights and mitigate various risks associated with data processing (Hacker 2018; Aloisi 2024). For example, a broad interpretation of the accuracy principle could require data sets to be representative of society (Fundamental Rights Agency 2019), aligning with anti-discrimination law. Article 5.1(a) also mandates transparency, requiring controllers to inform job applicants and workers about data processing in clear, accessible language, which can be implemented by design (Felzmann, Fosch-Villaronga, Lutz, and Tamó-Larrieux 2020). However, given that AI systems often operate as opaque “black boxes” beyond full human control (Zuiderveen Borgesius 2020), implementing these principles may be challenging.

Employers are required to carry out ex ante assessments such as data protection impact assessments (DPIAs) under Article 35 GDPR (Yam and Skorburg 2021; Abraha 2023; Aloisi 2024). Despite criticism (Calvi 2023), DPIAs represent an efficient mechanism for safeguarding individuals’ fundamental rights, creating a participatory channel for evaluating risks. They can demonstrate proactive steps taken to identify and mitigate potential risks to workers’ rights, including discrimination. Unfortunately, there is no repository in which companies can share their DPIA practices, although one could reduce risks and hasten the development of policy guidelines based on lessons learned (Fosch-Villaronga and Heldeweg 2018).

To combat discrimination, the GDPR prohibits the processing of “special categories of personal data,” including information on race, politics, religion, or health, among others (Art. 9 GDPR). While certain exceptions apply, such as when processing is necessary in the field of employment (Art. 9.2 GDPR), the AIA adds a new layer to this framework. Under Article 10.5 AIA, processing sensitive data may be allowed when necessary to reduce bias in high-risk AI systems. This exception creates a tension between the AIA’s aim to improve fairness and the GDPR’s restrictions on sensitive data use (Deck et al. 2024). The concern is further heightened by Article 5.1(g) AIA, which prohibits AI systems that use biometric data to infer certain personal characteristics but notably omits health data from its scope, despite their relevance and risks in workplace contexts (Brown 2020).

The GDPR mandates the provision of comprehensive information to job applicants and workers regarding the existence, logic, significance, and potential consequences of certain forms of automated processing (Arts. 13 and 14) (Ebert et al. 2021; Abraha 2023). Article 22 is generally considered to impose a prohibition on data controllers regarding automated decision-making (Bygrave 2020). Nevertheless, the exceptions outlined in Article 22.2, including contractual necessity and explicit consent, should be critically assessed in light of the power dynamics inherent in employer–employee relationships (A29WP 2017). Furthermore, and notably, Article 22.3 GDPR requires data controllers to adopt measures protecting data subjects’ rights, such as the right to obtain human intervention and to contest decisions.

The enforcement measures outlined in the GDPR can be crucial in mitigating the risks that AI systems pose to job applicants’ fundamental rights. National data protection authorities (DPAs) can verify the existence of bias identification and mitigation strategies by requisitioning pertinent information, accessing personal data, and conducting audits (Art. 58.1 GDPR). Moreover, they can compel the implementation of bias identification and minimization strategies through their corrective powers (Art. 58.2 GDPR), including by imposing administrative fines (Art. 83 GDPR).

Nonetheless, the claim that the GDPR effectively mitigates AI-related risks in hiring has faced criticism. For example, while the GDPR requires employers to implement safeguards ensuring fair, transparent, and non-discriminatory use of data, it has been argued that these safeguards should be negotiated jointly with worker representation bodies rather than decided unilaterally (Todolí-Signes 2019). Salient enforcement deficits are also present: DPAs are under-staffed and under-resourced, and they are not experts in labor law (Abraha 2023).

More broadly, criticisms have been leveled at the GDPR’s individual-centric approach to harms, which assumes that granting individuals control over their personal data can effectively mitigate them. This perspective overlooks the limitations of individual control in the face of AI systems and neglects collective and societal harms. Worker representatives could be better placed to voice data protection issues, given their interest and legitimacy, although they may also lack expertise and resources. In AI-driven hiring, a job applicant’s experience may be significantly influenced by data about other candidates rather than their own, which calls for collective data rights for workers. Yet activating such rights at the recruitment stage remains highly complex, as job applicants often lack solidarity networks, collective representation, or institutional embeddedness.

More specific provisions on the processing of personal data in the workplace are laid down in Directive (EU) 2024/2831 on improving working conditions in platform work. This instrument specifies how GDPR provisions apply to algorithmic management systems used by digital labor platforms, making explicit reference to the hiring stage (Aloisi, Potocka-Sionek, and Ratti 2025). This regulatory approach may become a useful blueprint for new instruments that go beyond the narrow confines of the platform economy.

Anti-discrimination

In the EU, the potential of anti-discrimination law to mitigate risks associated with the adoption of AI systems is extensively debated (Wachter, Mittelstadt, and Russell 2021; Rigotti and Fosch-Villaronga 2024). The right to non-discrimination is a fundamental right, enshrined in instruments such as the Treaty on the Functioning of the EU (Art. 19) and the CFREU (Art. 21). This broad prohibition is further elaborated upon in several directives, including Directive 2000/43/EC against discrimination on the grounds of race and ethnic origin, Directive 2000/78/EC establishing a general framework for equal treatment in employment and occupation, and Directive 2006/54/EC on equal treatment for men and women in matters of employment and occupation.

These instruments protect against both direct and indirect discrimination. Direct discrimination occurs when an individual is treated less favorably than others in similar circumstances on the basis of a protected characteristic. While exceptions may exist (Adams-Prassl et al. 2023), it is commonly assumed that AI systems are unlikely to factor in job applicants’ legally protected characteristics directly, as isolating a single factor reduces technical performance (Hacker 2018; Aloisi 2024). Conversely, indirect discrimination arises when ostensibly neutral criteria or practices disadvantage some individuals or groups compared to others, unless they are justified by a legitimate aim pursued through necessary and proportionate means. Indirect discrimination is particularly relevant for AI, which primarily uses facially neutral metrics. Nonetheless, the effectiveness of this protection is frequently questioned, primarily because of the opacity of the technology and the difficulty of recognizing AI-driven discrimination (Zuiderveen Borgesius 2020). Moreover, AI-based hiring systems can produce unconventional and abstract classifications that are not clearly associated with existing protected grounds (Wachter et al. 2021).
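Because indirect discrimination turns on a disparate effect rather than on the criterion used, a first check on an AI screening tool is to compare selection rates across groups. The sketch below does this on hypothetical counts. EU non-discrimination law fixes no numerical threshold for a particular disadvantage, so the ratio is only an indicator that may call for justification, not a legal test.

```python
from collections import Counter


def selection_rates(records):
    """Map each group to the share of its applicants who were selected.

    records: iterable of (group, selected) pairs, where selected is a bool.
    """
    applied, selected = Counter(), Counter()
    for group, was_selected in records:
        applied[group] += 1
        selected[group] += int(was_selected)
    return {g: selected[g] / applied[g] for g in applied}


def rate_ratio(rates, group, reference):
    """Selection rate of `group` relative to `reference` (1.0 means parity)."""
    return rates[group] / rates[reference]


# Hypothetical outcomes of an automated CV filter: 100 applicants per group.
records = ([("group_a", True)] * 40 + [("group_a", False)] * 60
           + [("group_b", True)] * 20 + [("group_b", False)] * 80)
rates = selection_rates(records)
print(rates)                                    # {'group_a': 0.4, 'group_b': 0.2}
print(rate_ratio(rates, "group_b", "group_a"))  # 0.5
```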

Much of the literature on AI-driven discrimination centers on the concept of “proxy discrimination,” which occurs when AI systems use seemingly neutral information as a substitute for prohibited grounds (Kim 2016; Xenidis 2020); the concept could strengthen legal protection for job applicants. An example is asking for a job applicant’s address to infer their race or ethnicity (Bertrand and Mullainathan 2004). While proxies are not new, AI systems are even more prone to using them (X. Chen 2024). Being programmed to identify correlations between input data and target variables, they can autonomously spot characteristics predictive of these variables. Consequently, when direct predictive traits are unavailable due to legal restrictions, AI systems may seek proxies, thereby posing complex legal challenges (Xenidis 2020).
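The mechanism can be shown with a toy example on hypothetical data. Even if the protected attribute is removed from the inputs, a neutral feature such as a postcode may still reveal it. The sketch compares how accurately group membership can be guessed without and with knowledge of the postcode; the data, the anonymized labels, and the majority-guess measure are illustrative assumptions, not a standard audit method.

```python
from collections import Counter, defaultdict


def guess_accuracy(pairs):
    """Return (baseline, with_feature) accuracy of guessing a protected attribute.

    pairs: list of (neutral_feature, protected_attribute) tuples.
    baseline: always guess the most common attribute overall.
    with_feature: guess the most common attribute within each feature value.
    """
    n = len(pairs)
    overall = Counter(attr for _, attr in pairs)
    baseline = overall.most_common(1)[0][1] / n

    by_feature = defaultdict(Counter)
    for feature, attr in pairs:
        by_feature[feature][attr] += 1
    correct = sum(counts.most_common(1)[0][1] for counts in by_feature.values())
    return baseline, correct / n


# Hypothetical applicant pool: postcode vs. (anonymized) ethnic group.
pairs = ([("1000", "group_x")] * 45 + [("1000", "group_y")] * 5
         + [("2000", "group_x")] * 10 + [("2000", "group_y")] * 40)
baseline, with_postcode = guess_accuracy(pairs)
print(baseline, with_postcode)  # 0.55 0.85
```

A model that learns outcomes correlated with group membership could exploit the postcode in the same way, which is why deleting the protected attribute does not by itself prevent proxy discrimination.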

AI-driven discrimination extends beyond established power imbalances, affecting groups that are not traditionally protected, such as single parents, homeless people, or those with similar online behaviors (Wachter 2022). Another challenge relates to intersectional discrimination (Xenidis 2020), whereby multiple characteristics interact to produce distinct harms. AI systems can amplify these harms and introduce new dimensions of bias by analyzing data sets organized around intersecting axes of social inequality (Yemane 2020).

Note, too, that job applicants face hurdles in proving discrimination through private litigation, particularly in establishing a causal link between the harm and the protected characteristic (Sargeant 2025). Procedurally, presenting facts from which discrimination may be presumed is sufficient to trigger evidentiary simplifications that benefit the presumed victim. Despite this simplified evidentiary regime, obstacles persist: limited awareness and understanding of algorithmic complexity, and the costs and unpredictability of litigation. By promoting AI legibility and accountability, the DPIA could supply material to rebut the evidence a respondent puts forward to discharge the burden of proof (Aloisi 2024). Compliance with transparency and other trustworthiness obligations under the AIA can likewise support job applicants in exercising their right to non-discrimination. However, the AIA’s reliance on self-assessed conformity limits output-based safeguards (Meding 2025).

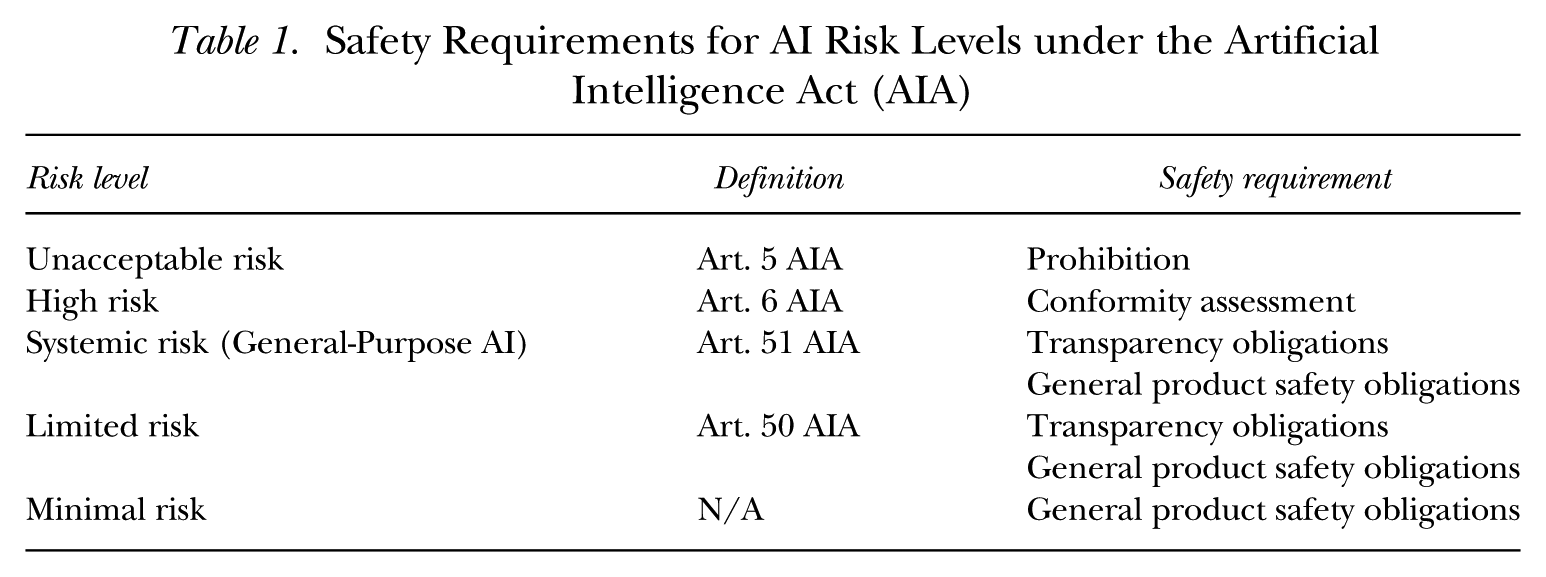

Product Safety

The AIA aims to ensure that AI systems are safe, with the required level of safety proportionate to the risks they pose to society (see Table 1). Vague provisions within the AIA, however, complicate appropriate risk classification (Veale and Zuiderveen Borgesius 2021). As noted, the high-risk category in Annex III applies only to systems that “pose a significant risk of harm to the health, safety or fundamental rights of natural persons,” yet the AIA does not clarify what constitutes such a risk, which may vary depending on context and users. In the asymmetrical structure of hiring, for instance, it is difficult to determine how significant the risk is, for whom, and who should decide (Ponce Del Castillo 2021). This determination matters because many AI systems can exploit oversight vulnerabilities, operate unpredictably in new circumstances, learn by trial and error, and behave in ways that are difficult to monitor or correct. Since this factor determines high-risk labeling, we find it surprising that no further guidance is provided. The AIA notes that Regulation (EU) 2023/988 on general product safety (GPSR) will serve as a safety net.

Table 1. Safety Requirements for AI Risk Levels under the Artificial Intelligence Act (AIA)

In establishing harmonized rules on consumer product safety, the GPSR, adopted in 2023, reflects ongoing discussions about whether the traditional focus on physical risks, such as mechanical hazards, adequately addresses emerging AI risks, particularly those affecting psychological well-being, emotional integrity, or human dignity (Poulsen et al. 2020). It also addresses intersectional aspects, specifying that “when assessing whether a product is safe . . ., the categories of consumers using the product, in particular by assessing the risk for vulnerable consumers such as children, older people and persons with disabilities, as well as the impact of gender differences on health and safety” should be considered (Art. 6 GPSR). Article 8 GPSR further includes the state of technology as a factor in assessing product safety, ensuring that novelty cannot be used as an excuse to overlook risks. In other words, the explicit inclusion of GPAI in the AIA would not have made a significant difference.

Still, even if AI systems are objectively safe, they may have unanticipated effects on various communities (Poulsen et al. 2020). Recruiters report that these technologies risk relying on non-inclusive or outdated data, which leads them to perceive the technology as immature and as struggling to deliver reliable results for complex job searches (Horodyski 2023); such shortcomings could make these systems unsafe for applicants. Since AI systems rely on data rather than knowledge and lack human judgment, it remains unclear whether the safeguards in the AIA or GPSR suffice to ensure job applicants’ safety.

Thinking Collectively Outside of the (Black) Box: Co-governance Methods for AI Systems

To bridge the disconnect between risk management and fundamental rights protection, this article proposes integrating risk- and rights-based approaches in the regulation and design of AI-based hiring systems. We argue that participatory mechanisms, particularly those grounded in co-governance, are far from a mere procedural formality: by embedding job applicants’ perspectives into regulatory design and oversight, they can enhance AI systems’ legitimacy, transparency, and fairness. The underlying logic is to enshrine involvement as a paramount principle when addressing sophisticated technologies, drawing on European traditions of social dialogue and co-determination (Bernhardt, Kresge, and Suleiman 2023; Doellgast, Wagner, and O’Brady 2023; Özkiziltan and Landini 2025).

Co-regulation operates at the intersection of top-down legislative measures and self-regulation, allowing legislators to delegate policy objectives to recognized actors in the field, including nongovernmental organizations, standardization bodies, or industry associations (European Commission 2023). Its underlying principle is also well established in industrial relations (in the EU, Articles 154–155 TFEU provide for a formal legislative role for the social partners). Involving worker representatives in decision-making helps to identify and address discriminatory risks more effectively (Dencik et al. 2024).

Individual applicants may lack the resources or awareness to detect bias, making collective representation essential for revealing evidence and supporting legal discovery. Trade unions, works councils, and shop-floor delegates facilitate knowledge-sharing and help level the playing field in hiring, especially for those without technical or legal expertise. Trade unions have called for strengthening co-determination over technological change, modernizing training, and safeguarding employee data against digital surveillance (Özkiziltan 2024). Collective bodies have also played a significant role in negotiating company-level agreements on technology introduction, including securing veto rights over AI deployment (Chagny and Blanc 2024; Haipeter, Wannöffel, Daus, and Schaffarczik 2024). Their growing role is also visible in the case law and legislation of some countries. In France, for instance, the Nanterre Court of Justice held that deploying AI tools, even in a pilot phase, requires prior consultation with the works council when employees will significantly interact with the system (Sedaei and Martin 2025). In Germany, the Works Council Modernization Act (in force since June 2021) has strengthened co-governance rights in cases of AI introduction. In particular, works councils can co-determine selection guidelines, including the criteria used in recruitment. They can also consult an external expert on AI implementation at the employer’s expense.

More generally, co-governance can enable continuous engagement among regulators, developers, users, and affected individuals, including their representatives, to align technological design with diverse expectations, needs, and rights. However, this approach is often met with caution due to concerns over legitimacy and the risk of regulatory capture by dominant commercial or political interests. While industry participation in the AIA’s operationalization is both necessary and inevitable, given the extensive obligations placed on developers, co-governance in AI-driven hiring must ensure that private influence does not eclipse job applicants’ fundamental rights. When structured inclusively, co-governance could help redress power asymmetries by embedding diverse expertise, stakeholder input, and mechanisms of accountability into the regulatory process.

In the AIA, certain provisions stand out as more explicitly co-governance-oriented, most notably Article 56 on codes of practice, exemplified by the AI Office’s ongoing effort to draft guidance for general-purpose AI in consultation with nearly 1,000 stakeholders. Our analysis, however, centers on harmonized standards (Art. 40) and the FRIA (Art. 27), as these embody the tension between the AIA’s risk-based logic and its fundamental rights aspirations. We contend that this friction can be more effectively addressed through inclusive participation and shared decision-making. In this regard, the AIA could draw valuable lessons from both the strengths and shortcomings of the regulatory frameworks already analyzed in this article, namely, data protection, anti-discrimination, and product safety law.

At the same time, we acknowledge a major limitation: interpretations and degrees of multi-stakeholder engagement in co-governance vary widely, which can lead to the term being misused. In some cases, co-governance may merely involve stakeholders in design and pilot phases that are ultimately controlled by AI developers or employers. Stakeholders often lack real influence, with researchers or developers setting agendas and controlling analysis and outcomes (Martinez-Vargas 2022). Furthermore, co-governance projects typically operate under resource and time constraints, prompting trade-offs between inclusivity and the need to achieve specific goals, often measured by ex ante indicators (e.g., stakeholder diversity and quotas) and ex post outcomes (e.g., deliverables or milestones) (Costanza-Chock 2020). Multi-stakeholder engagement then risks becoming a superficial exercise that overlooks the complex, context-specific interactions key to meaningful collaboration.

Harmonized Standardization as a Collaborative Approach

As a general feature of EU product safety legislation, products must meet essential legal requirements before they can be placed on the market. While developers can interpret these requirements independently, the European Commission can task European Standardization Organizations (ESOs) with developing harmonized standards, adherence to which gives manufacturers a presumption of compliance with the corresponding legal requirements.2 In principle, this form of co-governance allows legislation to keep pace with ever-evolving technological advancements.

This approach is relevant because the AIA requires providers and deployers of high-risk AI systems to comply with essential legal requirements, either through their own interpretation of those requirements or by adhering to harmonized standards. This process has been criticized for private bodies’ lack of legitimacy, industry self-interest, and questionable competence in translating legal principles into standard practices (Veale and Zuiderveen Borgesius 2021; Laux, Wachter, and Mittelstadt 2024). To address these concerns, the AIA foresees multi-stakeholder governance to ensure balanced representation and effective participation of all relevant actors (Art. 40.3 AIA). The European standardization framework, in turn, requires both the encouragement of adequate representation and the effective participation of relevant stakeholders (Art. 5.1 ESO), who include, among others, undertakings, universities, and research centers (Art. 5.2 ESO).

Generally, making standardization more pluralistic could help address structural asymmetries in labor market relations, now amplified by the growing influence of deployers and providers on outcomes affecting job applicants. This shift would contribute to reconciling the tension between the AIA’s risk-based approach and its fundamental rights protection goal (Ho-Dac 2025). Standardization should be understood as an ongoing endeavor that continuously seeks better ways of addressing workplace discrimination, embracing differences, and learning from them. In this light, the ethical requirement of “fairness, non-discrimination, and diversity” (AIA, Recital 27) should be viewed not as a challenge to overcome but as a catalyst for cultivating trustworthy AI among employers, HR practitioners, and job applicants. While no single blueprint for inclusive standardization in this domain can be proposed, dedicated mechanisms and adequate resources must allow smaller entities and marginalized groups to participate.

Fundamental Rights Impact Assessment (FRIA)

Critics of the AIA rightly highlighted during drafting and consultation that its original text could not adequately protect fundamental rights. In response, the European Parliament proposed, in its 2023 compromise text, an obligation for deployers of high-risk AI systems to conduct a FRIA. Despite some resistance, the FRIA is now enshrined in Article 27 AIA (Mantelero 2024). It requires public bodies and private entities providing public services to describe, before using a high-risk AI system, the system’s purpose, the affected groups, the reasonably foreseeable impacts on fundamental rights, and the specific risks of harm. Although high-risk AI-based hiring systems do not fall explicitly under this obligation, the FRIA could serve as a tool to increase accountability and ensure the co-governance of fundamental rights.

The assessment includes a detailed description of the system’s intended use, the affected populations, and the potential risks, as well as an outline of human oversight mechanisms and contingency plans to address materialized risks. It must be conducted before initial deployment and updated as necessary. To facilitate compliance, the AI Office will provide a template for this process (Art. 27.5 AIA). Although helpful, the template’s effectiveness depends on expert knowledge (Mantelero 2024), and reliance on experts often excludes diverse voices capable of advocating for marginalized groups while privileging employers’ interests (Marsiglia, Kulis, and Lechuga-Peña 2021). Consequently, stakeholder participation can appear performative, undermining its legitimacy and impact (Canto Moniz 2025).

As mentioned, the most important shortcoming of the AIA in this respect is that it does not require a FRIA for all AI systems; such a requirement could have yielded insightful information about impacts and mitigation strategies in various contexts of application, including AI-driven hiring. Nevertheless, elements of the FRIA might still be integrated into the broader risk-management obligations for high-risk AI systems. In this context, employers acting as providers must identify and analyze both known and reasonably foreseeable risks to health, safety, and fundamental rights (Chiara and Galli 2024).

Under Article 27 AIA, the FRIA’s scope is the “impact on fundamental rights.” Specifically, point (d) requires the identification of specific risks of harm likely to affect the individuals or groups identified, taking into account the information that providers must give deployers under the AIA’s transparency obligations (Art. 13 AIA). This raises a persistent challenge: assessing fundamental rights impacts without reducing them to quantifiable risks. Quantitative approaches often interpret risks to fundamental rights through probabilistic analyses of tangible harms, detectable facts, or quantifiable losses. While these methods offer predictability, they fail to capture the full scope of fundamental rights impacts, particularly as experienced individually or collectively (Malgieri and Santos 2025). Fundamental rights should not be commodified; they serve as normative boundary markers that safeguard human dignity and respect as equals, regardless of any additional harm, thereby avoiding a hierarchy of rights (Giraudo et al. 2024).

Consider, for instance, the right not to be discriminated against in hiring. An AI system may map job applicants’ skills and “cultural fit” to streamline hiring (Horodyski 2023). Quantitative assessments might focus on measurable outcomes such as productivity gains, instances of potential unfairness, or structural inequalities. Yet such a focus overlooks the subtler effects on candidates’ dignity and agency, as well as on HR practitioners (Renkema 2022). AI-driven hiring can also create a pervasive sense of “dehumanization,” leading to stress, anxiety, and reduced autonomy among candidates (Jarota 2023). This psychological and emotional toll is difficult to capture, since candidates may be unaware of AI use or unsure how they feel about it. For recruiters, AI-driven hiring can degrade the work environment, reduce job satisfaction, and increase turnover.

Addressing these concerns requires a more qualitative approach to the FRIA, emphasizing the broader implications of AI systems for human dignity and job applicants’ experiences. In every jurisdiction, it is crucial to develop methodologies that go beyond numerical data to include narratives, testimonies, and contextual analyses capturing the full normative impact on fundamental rights. In this respect, stakeholder engagement in the FRIA can foster self-determination and community empowerment (Delgado, Yang, Madaio, and Yang 2021; Birhane et al. 2022), thereby helping to safeguard human dignity. A structured system of consultation and social dialogue between worker representatives and employers is essential for this process, giving workers greater control over technology design and deployment.

Following FRIA completion, Article 27.3 AIA requires deployers to notify the market surveillance authority of its results. Unlike the GDPR, which obliges data controllers to identify and implement technical and organizational measures to mitigate risks (Art. 35.7), the AIA provides little guidance on how to operationalize such obligations. Consequently, developers are entrusted with decisions that carry significant normative weight, particularly when it comes to balancing competing interests and upholding job applicants’ fundamental rights. To mitigate the risk that developers may prioritize their own interests (Chiara and Galli 2024), thus undermining the credibility of this exercise, it is essential to ensure the active involvement of affected communities and their representatives, as well as a broader range of actors, including equality bodies, consumer protection agencies, social partners, and DPAs. These actors can voice concerns of communities disproportionately impacted by AI harms, including undocumented individuals, who may be more likely to experience—or feel compelled to tolerate—violations of their rights (Nedzhvetskaya and Tan 2022).

Finally, developers of high-risk AI systems are under no statutory obligation to publish FRIA outcomes. This lack of transparency hampers job applicants’ ability to scrutinize potential risks and engage meaningfully in oversight. Developers could make FRIAs publicly available, at least in summary form, to cultivate trust and support informed stakeholder engagement. Moreover, a repository of FRIAs related to AI-based hiring systems could serve as a key mechanism for knowledge-sharing and the exchange of best practices.

Conclusions

With the growing adoption of AI in hiring, and the mix of risks, benefits, and diverse regulatory approaches it entails, the EU case proves particularly instructive. In particular, the AIA integrates participatory safeguards into its architecture, including stakeholder input in standardization, fundamental rights impact assessments, and workers’ consultation.

Crucially, co-governance is embedded in black-letter law through binding AIA obligations and the governance of standards, complemented by GDPR duties, equality law, and EU information-and-consultation directives. Together, these create enforceable levers for worker and civil-society participation. Realizing this promise requires concrete operationalization through creative arrangements that update the classical repertoire of industrial relations (e.g., algorithmic clauses in collective agreements; data-access rights; co-designed FRIAs; participatory standard-setting; enforceable veto points in high-risk deployments).

This article illustrates how risk-based logic can be recalibrated through rights-based and participatory mechanisms, offering lessons for jurisdictions worldwide. The EU framework thus serves less as a model to copy wholesale than as a “living laboratory” exposing both the possibilities and pitfalls of comprehensive AI governance.

Our analysis supports co-governance as a general principle for regulating AI in high-stakes contexts such as hiring. Embedding affected groups and their representatives throughout the AI life cycle improves transparency, recalibrates power asymmetries, and transforms compliance from box-ticking into substantive accountability. Co-governance also counterbalances the limits of quantitative risk logic by integrating qualitative judgments about dignity, equality, and collective harms. Robust participation can redirect AI adoption away from labor-substituting and control-oriented applications toward complementarities that enhance work, strengthen worker voice, and embed innovation within safeguards (Doellgast et al. 2025). Where representative bodies are present and involvement is substantive, AI adoption tends to deliver higher-quality outcomes.

To align risk-based controls with fundamental-rights guarantees in hiring, we recommend 1) extending FRIAs to private deployers of high-risk hiring systems and requiring public summaries; 2) tightening joint accountability between providers and deployers through documentation hand-offs and audit rights; 3) resourcing DPAs, equality bodies, and market-surveillance authorities for coordinated oversight; 4) operationalizing workers’ information, consultation, and co-determination rights with enforceable veto or iteration points in high-risk deployments; 5) ensuring pluralistic participation in standardization, with resourced seats for worker and civil-society representatives; and 6) creating repositories for DPIAs/FRIAs and post-market incident reports to enable learning, benchmarking, and scrutiny.

Footnotes

This article is part of an ILR Review Special Issue on AI and the Future of Work: Institutions. The article is also part of the ILR Review’s ongoing Policy Paper Series.

Rigotti and Fosch-Villaronga thank the Horizon Europe BIAS Project, which funded this research and has received funding from the European Union under Grant Agreement Number 101070468. Aloisi’s contribution is made within the framework of the project PID2023-149184OB-C43 granted by MCIU / AEI / 10.13039/501100011033 and the FSE+ and forms part of the research activities of the Pérez-Llorca/IE Chair at the IE University Law School.

For general questions as well as for information regarding the data and/or computer programs, please contact the corresponding author, Carlotta Rigotti, at

1

Briefly, Articles 3(3)–(4) AIA define a provider as any natural or legal person, public authority, agency, or body that develops or places an AI system or GPAI model on the market under its name or trademark, and a deployer as any such entity using an AI system under its authority, except for personal, non-professional use.

2

Regulation (EU) No 1025/2012 on European standardization recognizes the European Standardization Organizations. These are the European Committee for Standardization (CEN); the European Committee for Electrotechnical Standardization (CENELEC); and the European Telecommunications Standards Institute (ETSI).