Abstract

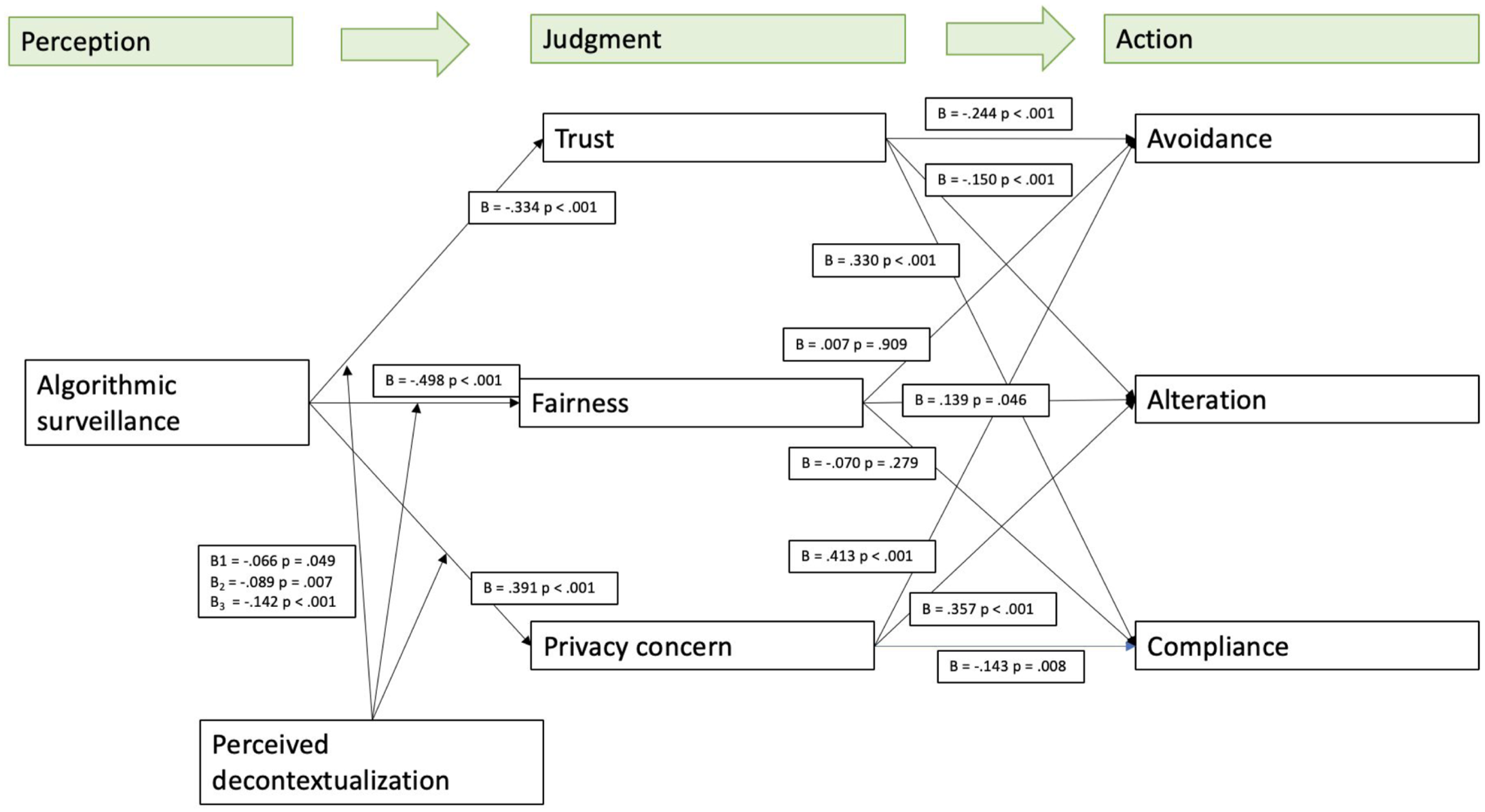

How do workers decide to comply with, alter, or resist algorithmic surveillance? We argue that decontextualization is a key, yet overlooked, mechanism that shapes workers’ responses to algorithmic surveillance. Research has widely critiqued algorithmic surveillance, focusing on diminished worker control and agency. However, the control-resistance mechanisms related to algorithmic surveillance are undertheorized and underexplored. We draw on socio-technical systems theory and micro-level legitimacy to examine mechanisms of surveillance and resistance in online crowdwork. Our findings, based on three-wave data from 435 European online crowdworkers, show that perceived algorithmic surveillance undermines trust and fairness, while increasing privacy concerns, which in turn inform workers’ intentions to comply with, alter, or resist algorithmic surveillance. Perceived decontextualization moderates these relationships, exacerbating the adverse effects on trust and fairness while mitigating the effects on privacy concerns. These findings extend the view that individual outcomes are shaped by social and technical factors alone by demonstrating that perceived decontextualization and micro-level legitimacy judgments (that is, trust, privacy concerns, and fairness) are important socio-technical mechanisms that also affect workers’ compliance. By highlighting the overlooked role of decontextualization in shaping resistance and compliance, this study challenges dominant control-centric narratives and offers a new lens on algorithmic governance.

Keywords

As crowdwork is becoming an established form of online work, worries regarding how this type of work should best be designed to optimize technical and social systems have not subsided (Guest et al., 2022). Gig work is defined by three characteristics: it is (1) task- or project-based, (2) term-limited, and (3) outside organizational structures (Caza et al., 2022), and it is typically facilitated by digital platforms (Alacovska et al., 2025; Pulignano et al., 2023). This article specifically focuses on crowdwork, which involves remote microtasks through platforms like Amazon Mechanical Turk. Hence, crowdwork is a subset of gig work, alongside other forms of gig work like capital platform work (e.g., Etsy) and app-based work (Duggan et al., 2020), which includes ride-hailing and freelancing (Caza et al., 2022).

Controversy surrounding the nature and conditions under which online crowdwork is conducted is well-documented (e.g., Caza et al., 2022; Gegenhuber et al., 2021; Kincaid and Reynolds, 2024; Myhill et al., 2021; Tirapani and Willmott, 2023; Vallas and Schor, 2020). One common underlying problem relates to the technological innovation of labor processes and algorithmic workforce management (Bucher et al., 2021; Cini, 2023; Gandini, 2019; Guest et al., 2022; Newlands, 2021). This managerial ideology represents a problematic techno-normative form of control, where workers are subjected to ranking and rating systems that relentlessly surveil their performance and impose strict control mechanisms (Gandini, 2019), while “workers’ agency is doomed to be negligible or completely absent” (Cini, 2023, p. 126). The surveillance of workers based on data and rankings stimulates them to justify and improve their performance (Pulignano et al., 2023), and is used to manage tasks, scheduling, and compensation, but at the same time, it raises questions about whether and how workers may comply with or resist algorithmic surveillance (Jaser and Tourish, 2024; Kellogg et al., 2020).

The tensions between control and resistance in the context of algorithmic management are widely theorized (e.g., Kellogg et al., 2020). Moreover, researchers have noted that current empirical work overemphasizes control while downplaying worker resistance (Gandini, 2019; Howcroft and Bergvall-Kåreborn, 2019; Pignot, 2023). As a result, so far, “platform worker resistance has been under-researched and under-theorized” (Joyce and Stuart, 2021, p. 158), and the underlying mechanisms linking control with resistance too often remain “black boxes” (Wiener et al., 2023). We seek to remedy this problem by suggesting that responses to algorithmic surveillance of online crowdworkers likely encompass different types of behavioral intentions, which vary from subjection or compliance to resistance (Ettlinger, 2018). Hence, although workers often cannot choose whether they are monitored (Purcell and Brook, 2022) under hegemonic surveillance (Jaser and Tourish, 2024), workers are not passive recipients of algorithmic systems either (Jarrahi et al., 2021). For instance, while some workers comply by adopting behaviors rewarded by algorithms, others subvert control by avoiding unappealing tasks or finding workarounds (Bankins et al., 2024; Kellogg et al., 2020).

Currently, what is lacking is theorization that begins to capture how and when algorithmic surveillance is met with compliance or resistance intentions by online crowdworkers. This is important because it helps push our knowledge beyond the fear and hype narrative of algorithmic systems and provides insights into the implications of algorithmic surveillance systems beyond their technical definition (Dourish, 2016; Huysman, 2020; Wiener et al., 2023). Specifically, we propose the following refinement that is likely to advance our understanding of algorithmic surveillance-resistance dynamics in online crowdwork. We integrate socio-technical systems (STS) theory (Emery, 1982; Trist, 1981; Trist and Bamforth, 1951, and recently Guest et al., 2022) with micro-level legitimacy judgments (Bitektine et al., 2020) to examine online crowdworkers’ intentions to comply with, alter, or resist algorithmic surveillance. The central premise of STS theory (Guest et al., 2022; Trist and Bamforth, 1951) is that technological and social systems should be jointly optimized, which moves the analysis beyond deterministic and coercive aspects of algorithmic control. We further bring legitimacy judgments into view, where legitimacy refers to “a generalized perception or assumption that the actions of an entity are desirable, proper, or appropriate within some socially constructed systems of norms, values, beliefs” (Suchman, 1995, p. 574). Specifically, we suggest that micro-level legitimacy (Bitektine and Haack, 2015) provides a critical lens to understand how crowdworkers evaluate and respond to algorithmic surveillance and the reductionist logic embedded in such technological systems (Newman et al., 2020).

Together, these theories help us move beyond deterministic assumptions of surveillance and open up the black-boxed algorithmic surveillance-compliance/resistance relationships by identifying important socio-technical moderators and mediators. We approach this study as a theory elaboration effort (Fisher and Aguinis, 2017), aimed at advancing both STS theory and micro-level legitimacy theory. While prior work has applied these perspectives separately to algorithmic management, our integration offers three key theoretical refinements. First, we structure specific relationships by identifying trust, fairness perceptions, and privacy concerns as sociotechnical mechanisms (Parent-Rocheleau and Parker, 2022) that shape legitimacy judgments of algorithmic surveillance. Furthermore, we suggest that perceived decontextualization is an important contextual factor that influences the extent to which legitimacy judgments explain the surveillance-compliance/resistance dynamic. Decontextualization refers to how algorithms fail to adequately account for a worker’s broader context when considering their work activities through limited and crude data traces (Newman et al., 2020). Second, we categorize the outcomes of these judgments into three distinct behavioral intentions: compliance, alteration, and avoidance, thereby extending legitimacy theory beyond binary acceptance-resistance models. Third, we apply these refined constructs in the context of online crowdwork, elaborating the explanatory power of both STS and legitimacy theory in highly decontextualized, digital labor settings. This is important because it informs how algorithmic management can be designed to balance social and technical systems, providing theoretically grounded findings to humanize work in online crowdwork (Guest et al., 2022).

Theory

Algorithmic surveillance in online crowdwork is often conceptualized as a deterministic force of control, where algorithmic control, such as real-time performance tracking, automated task allocation, and rating-based reward systems, systematically regulates worker behavior (Newlands, 2021; Purcell and Brook, 2022). Rahman (2024) highlights how algorithms create a tight system of control that is totalizing and largely invisible, making resistance difficult. In online crowdwork, algorithmic surveillance operates by continuously monitoring worker activity through computer screenshots, tracking mouse movements and clicks, recording keyboard inputs, automatically logging communications between workers and requesters, measuring task completion times, and applying online rating schemes (Gegenhuber et al., 2021; Jarrahi et al., 2021; Liang et al., 2023; Wood et al., 2019). This invasive and seemingly inescapable grip of algorithms could lead workers to accept technological judgment despite its flaws (Jaser and Tourish, 2024).

However, algorithmic surveillance has proven far more complex, representing a “contested terrain of control” shaped by control and resistance dynamics (Kellogg et al., 2020). Unfortunately, too often, research glosses over the processes mediating algorithmic surveillance and worker responses, assuming that surveillance either coerces compliance or provokes resistance without understanding the socio-technical mechanisms that shape these outcomes. Drawing on a moderate voluntarist perspective (as opposed to a deterministic viewpoint), we integrate STS theory and the micro-level legitimacy framework to identify several socio-technical moderators and mediators that shape algorithmic surveillance and workers’ compliance and resistance intentions.

Moderate voluntarism challenges both technological determinism and extreme worker agency perspectives (Leonardi, 2012), asserting that the effects of algorithmic surveillance depend on the interaction among technological constraints, organizational strategies, and worker agency (Parent-Rocheleau and Parker, 2022; Strohmeier, 2009). Specifically, moderate voluntarism aligns with the STS theory by recognizing that algorithmic surveillance is not an autonomous or fixed structure, but one whose consequences are shaped by how it is designed, implemented, and interpreted within specific work arrangements or contexts. At the same time, it complements theorizing on legitimacy as perception (Suddaby et al., 2017) by explaining why algorithmic surveillance does not uniformly lead to compliance or resistance—instead, workers’ responses are (somewhat) contingent on how they perceive the legitimacy of these systems.

STS helps us to understand how technology in action produces varied outcomes across individuals and contexts (Leonardi, 2012), while legitimacy judgments help to identify specific mechanisms linking control practices to behavioral intentions (Kellogg et al., 2020). We specifically focus on compliance and resistance. In the context of workplace surveillance, compliance stems from a fear of being exiled while resistance stems from the need to limit one’s exposure (Ball, 2022; Hafermalz, 2021). Compliance entails workers’ intentions to accept the presence and usage of algorithmic surveillance without attempting to alter or avoid it. Resistance, on the other hand, is slightly more complex and may take various forms as workers attempt to wrest control back from technology (Pollock, 2005). Specifically, resistance entails workers’ “actions that subvert an organization’s control system” (Cameron and Rahman, 2022, p. 39), and Ball (2010) notes that resistance in most cases entails workers altering or avoiding the monitoring process in ways that provide a little more freedom on the job. In addition, Mettler (2024) posited that “knowing that one is being monitored and appraised by a machine every second of the workday can lead to the counter-productive effect of actively resisting, evading, or tricking the system” (p. 557). Following Spitzmüller and Stanton (2006), we examine two dimensions of resistance, namely alteration and avoidance. Alteration refers to actions by workers such as altering the system’s settings to avoid surveillance (Kassim and Marzukhi, 2014; Spitzmüller and Stanton, 2006). For instance, workers can use data obfuscation tools to alter which data is evaluated (Newlands, 2021), such as using VPN clients to evade work location restrictions imposed by algorithms. Another way to resist algorithmic surveillance is to try to evade the control mechanisms altogether. For instance, in the context of high-skilled crowdwork (e.g., Upwork), workers are found to avoid the platform to circumvent transaction fees, maintain privacy, and preserve autonomy (Jarrahi et al., 2020).

We argue that a socio-technical moderator (i.e., perceived decontextualization) and mediators (i.e., micro-level legitimacy judgments) provide important missing explanatory mechanisms. While perceived decontextualization reinforces technical constraints by standardizing control, micro-level legitimacy judgments mediate whether these constraints are perceived as coercive or appropriate, ultimately shaping worker behavior. Hence, by integrating STS theory and legitimacy judgments, we develop a theoretically grounded model for understanding when and why algorithmic surveillance results in compliance or resistance intentions.

Bonezzi and Ostinelli (2021) argue that a fundamental belief underlying algorithms is that they decontextualize decision-making in organizations, as they neglect the unique characteristics of the individual being scrutinized. Hence, perceived decontextualization refers to the perception that algorithms fail to accurately capture certain qualitative attributes of work performance (Faraj et al., 2018; Van Zoonen et al., 2024), thereby reducing complex labor processes into quantifiable, machine-readable data (Newman et al., 2020). Hence, despite the proclaimed efficiencies of algorithms for organizational control and surveillance (Alaimo and Kallinikos, 2021), the impersonal, data-driven nature of algorithmic management has raised concerns regarding its capacity to fully comprehend and incorporate the nuanced, context-specific aspects of human labor (Newlands, 2021; Newman et al., 2020; Zhang et al., 2025). This reductionist logic prioritizes calculation and measurement principles over holistic and contextually rich evaluation (Alaimo and Kallinikos, 2021).

In particular, the reliance on machine-readable data exposes workers to vulnerabilities in the platform design (Wu and Huang, 2024) as it limits opportunities to make accurate and fair managerial decisions (Newlands, 2021). This raises critical questions about bias, justice, and fairness, overall, prompting a re-evaluation of legitimacy judgments about algorithmic surveillance. From a psychological perspective, perceived decontextualization has the potential to heighten distrust (Wu and Huang, 2024) and intensify algorithmic management’s adverse effects by alienating workers (Bucher et al., 2024), as it makes algorithmic surveillance appear arbitrary. The notion that algorithmic processes and evaluations may seem disconnected from reality may amplify fairness concerns. Overall, workers may feel that important aspects of their work effort, identity, and competencies are systematically ignored under strict algorithmic surveillance (Zhang et al., 2025).

As algorithmic control (including surveillance) reduces complex labor into rigid, performance-based metrics, thereby stripping away situational context and reinforcing opacity and power asymmetry (Gegenhuber et al., 2021; Jarrahi et al., 2021; Schüßler et al., 2021), understanding workers’ responses remains incomplete without considering how workers perceive and react to these algorithmic structures. We suggest that micro-level legitimacy judgments serve as pivotal mediating mechanisms (Bitektine and Haack, 2015; Wiener et al., 2023), providing a nuanced understanding of whether algorithmic surveillance is accepted, contested, or resisted. Drawing on previous research on legitimacy as perception (Suddaby et al., 2017) and on algorithmic surveillance and decision-making, we conceptualize micro-level legitimacy judgments as perceptions of trust, fairness (Lee, 2018), and privacy concerns (Liang et al., 2023; Wiener et al., 2023). These three factors shape legitimacy judgments because they entail an overall assessment of the social acceptability of specific algorithmic practices. Workers’ responses to algorithmic surveillance, then, are not purely dictated by the technology itself, but emerge through their evaluations of the legitimacy of these control mechanisms (Spitzmüller and Stanton, 2006; Wiener et al., 2023). Hence, integrating STS and the micro-level legitimacy judgment framework is essential for a more nuanced understanding of control-resistance dualities, which are too often painted with a broad brush.

Hypotheses’ development

Trust judgments

We argue that trust is an important micro-level legitimacy

Indeed, trust development in technological contexts is often obstructed, especially when technological processes are opaque (Grimmelikhuijsen, 2023). Several studies have suggested that constant surveillance of workers in the gig economy may signal that the organization distrusts its workers (e.g., Möhlman and Zalmanson, 2017). For instance, research on trust in algorithms has focused on workers’ confidence, faith, and/or hope in algorithms and their decisions (Shin et al., 2022), suggesting that opaque functioning and heightened power imbalances foster little confidence in the positive outcome of algorithmic processes (Cabiddu et al., 2022) and might evoke fear and mistrust in technology (Alacovska et al., 2024). Thus, trust may be eroded in particular when individuals no longer feel that they have control over decisions, rendering them mere recipients of algorithmic processes (Cabiddu et al., 2022) and limiting their ability to derive insights for improvement (Cui et al., 2024).

Furthermore, earlier research suggested that algorithmic surveillance is mainly manifested by automated information collecting and observational monitoring that does not directly involve the crowd workers (Wang et al., 2022) and could therefore lead to distrust and disengagement (Li et al., 2024). In this regard, Lee (2018) added that with algorithms, “establishing the right level of trust, or how much people believe in the reliability and accuracy of the technology’s performance, can be a challenge, which can deter the adoption and efficacy of the technology” (p. 4). The lack of actionable information and opportunities for contestation places crowd workers in a vulnerable position in which they may have no choice but to rationalize and cope with the unpredictability of the algorithms (Lata et al., 2023). This emphasizes the potential inequality that leaves workers highly vulnerable to coercion and control via algorithmic surveillance and manipulation (Athreya, 2020).

Hence, algorithmic surveillance exposes online crowdworkers to heightened vulnerability to exploitation by subjecting them to opaque, data-driven evaluations, often resulting in arbitrary penalties or biased outcomes (Newlands, 2021; Rahman, 2024). While workers may accept this vulnerability as an unavoidable condition of platform labor—engaging in performative consent to maintain access to work (Jaser and Tourish, 2024)—the resulting erosion of trust in algorithmic governance fosters resentment and, ultimately, fuels resistance against the system’s perceived unfairness (Ball, 2010; Duggan et al., 2020; Zhang et al., 2025). Therefore, building on the micro-level legitimacy judgments framework (Bitektine and Haack, 2015) and the empirical findings shared above, we hypothesize:

Privacy concerns

Privacy is endemic to workplace surveillance (Ball, 2010) and a salient concern in an age where personal data have become a valued commodity (Fast and Jago, 2020). Indeed, data-gathering technologies have led to increased workplace surveillance accompanied by challenges such as bias and discrimination, as well as a loss of privacy and lack of transparency (Nguyen, 2021). Broadly, privacy refers to an individual’s ability to control information about oneself, and it has become an increasingly important challenge in the digital age (Ball, 2010).

Not surprisingly, scholars have long recognized the delicate relationship between workplace surveillance solutions, such as computerized performance monitoring systems (Grant and Higgins, 1991), and workers’ right to privacy (Kling and Allen, 1996). Hence, while computerized surveillance is not new (Mettler, 2024), the rise of algorithmic surveillance and ‘bossware’ has sparked a renewed interest in privacy concerns for workers (Do et al., 2024). The key problem of algorithmic surveillance in online crowdwork is that it is unrelenting, omnipresent, and invasive, often including screen recordings, mouse tracking, webcam activation, location/IP tracking, and keystroke recording (Sannon et al., 2022). Hence, under the guise of productivity enhancement, algorithmic surveillance may impose upon workers’ privacy (Do et al., 2024). Particularly, when workers feel that algorithmic surveillance limits their control over personal information, they are likely to feel their privacy has been invaded (Alge, 2001; Chory et al., 2016).

Importantly, while people have historically cared about their right to privacy, Fast and Jago (2020) cautioned that the widespread diffusion and convenience of algorithms could be “systematically eroding people’s capacity and psychological motivation to take meaningful action” (p. 44). Hence, it seems opportune to examine to what extent workers take

In an experiment that manipulated monitoring intensity among crowdworkers on Amazon MTurk and Prolific, Liang et al. (2023) found that when monitoring intensity increased, workers became less likely to accept monitored jobs (i.e., increased workaround actions) because of elevated privacy concerns. In addition, in the context of Uber, lower privacy concerns were found to reduce workaround use and improve compliance (Wiener et al., 2023). These findings suggest that when workers feel their privacy is safeguarded, their intention to resist algorithmic surveillance or control through workarounds is lower. Following this logic, we assume that when surveillance raises privacy concerns by reducing workers’ perceived control over their personal data (Alge, 2001), workers will be more motivated to resist surveillance through alteration or avoidance strategies, and less likely to comply with the surveillance system. Hence, we hypothesize:

Fairness

Traditional discussions of organizational justice (for an overview, see Greenberg, 1987) have long recognized the importance of fairness in both organizational procedures and outcome distributions (e.g., Procedural Justice Theory: Thibaut and Walker, 1975; Equity Theory: Adams, 1965). While a detailed review of organizational justice theories is beyond the scope of this study, we argue that fairness judgments are central to understanding workers’ responses to algorithmic surveillance systems (Alder and Ambrose, 2005; Alge, 2001; Stanton, 2000).

Fairness is often considered a global perception of appropriateness, shaped by procedural and distributive justice (Colquitt and Zipay, 2015). Procedural fairness, in particular, focuses on the perceived legitimacy and transparency of organizational processes rather than just the outcomes they produce (Leventhal, 1980; Thibaut and Walker, 1975). In algorithmic management, procedural fairness is particularly salient, as surveillance and decision-making processes occur through automated systems that can obscure the rationale behind workplace decisions (Newman et al., 2020; Starke et al., 2022). The inability of workers to challenge or appeal algorithmic assessments has been identified as a key concern that undermines procedural fairness perceptions (Jabagi et al., 2024).

Arguably, the algorithmic management systems these platforms deploy highlight the power imbalance and information asymmetries in the triadic relationship between the platform, requesters, and the workers, thereby impeding fairness assessments (Duggan et al., 2020; Shanahan and Smith, 2023). The platform organization is the only party in this relationship with full access to and control over the data, processes, and rules of the platform (Jabagi et al., 2019). Indeed, perceived unfairness related to online crowdwork platforms and their algorithmic governance styles has been repeatedly documented (e.g., Jabagi et al., 2024). Shanahan and Smith (2023) found that workers subjected to algorithmic surveillance sought to maintain fair exchanges with the platform by flouting the rules, pooling their experiences, and sharing tricks that could help circumvent platform surveillance.

Research on computer-based monitoring and surveillance in organizational contexts has consistently highlighted the role of fairness in shaping worker responses (e.g., Ball, 2001, 2022; Spitzmüller and Stanton, 2006). We argue that workers’ assessments of procedural fairness—the extent to which surveillance is consistent, transparent, and offers recourse—largely determine their responses (Leventhal, 1980). Procedural unfairness, particularly in algorithmic surveillance, has been linked to increased worker resistance (Ball, 2021). Studies suggest that when workers perceive algorithmic surveillance as unfair due to its opaque or inconsistent decision-making, they are more likely to engage in resistance behaviors, including altering or avoiding surveillance mechanisms (Cameron and Rahman, 2022). While crowdworkers may have limited direct avenues for challenging surveillance, perceptions of procedural fairness shape the extent to which they intend to comply with or resist algorithmic surveillance. Hence, we hypothesize:

Perceived decontextualization

Algorithmic surveillance may be particularly problematic when perceived decontextualization strengthens its eroding effects on trust and fairness (Lee, 2018). Ironically, perceived decontextualization may simultaneously mitigate privacy concerns by rendering algorithmic surveillance less personal and invasive. As algorithmic surveillance intensifies, workers may perceive highly decontextualized systems as more detached from their identities, thereby reducing the perception of surveillance as an intrusive, hyper-individualized form of control.

Hence, we argue that perceived decontextualization is important in understanding surveillance-resistance dynamics by illuminating conditions under which surveillance triggers stronger micro-level legitimacy judgments. While workers accustomed to technological management may accept algorithmic judgment despite its limitations (Jaser and Tourish, 2024), their awareness of algorithmic flaws, such as decontextualization, may give rise to a re-evaluation of the legitimacy of algorithmic surveillance. When algorithmic surveillance is perceived by workers as decontextualized from qualitative and contextual work practices, it may amplify the negative impact of surveillance on trust and fairness perceptions, ultimately fostering greater resistance intentions. However, high perceived decontextualization can also create a perceived layer of depersonalization, paradoxically leading workers to feel less personally scrutinized, softening their privacy concerns, and reducing resistance intentions. Taken together, we propose that perceived decontextualization moderates the relationship between algorithmic surveillance and workers’ perceptions of trust, privacy concerns, and fairness. Specifically:

Methods

Sample

To recruit online crowdworkers, we adopted a purposive sampling strategy, recruiting them in their digital work environments. In this case, respondents were recruited among crowdworkers registered on the Europe-based online labor platform Clickworker. In the first wave (T1), we collected data on workers’ perceptions of algorithmic surveillance and decontextualization. In the second wave (T2), 1 month later, we assessed workers’ legitimacy judgments—that is, trust, privacy concerns, and fairness. In the third wave (T3), conducted 1 month after T2, we examined workers’ behavioral intentions, measuring compliance, alteration, and avoidance. We refer to T1, T2, and T3 to denote the measurement occasion in the section on measurement instruments.

To the best of our knowledge, no earlier research has explicitly determined the optimal time lag for examining micro-level legitimacy judgments based on algorithmic surveillance in crowdwork. Notably, legitimacy judgments are cognitive evaluations that require reflection and may evolve (Tost, 2011). We considered 1-month lags to be sufficient based on studies on related topics. For instance, Santuzzi and Barber (2018) found that a 1-month lag effectively captured the relationship between technological demands and subjective work judgments. Furthermore, Jiang et al. (2024) observed that perceptual and behavioral responses to workplace internet monitoring (akin to surveillance practices) emerged after 1 month. Finally, methodologically, longer intervals risk study attrition and may introduce confounds (Dormann and Griffin, 2015).

We posted our survey as a task on Clickworker and received 828 completed surveys from the respondents at T1. These respondents were re-invited to participate in T2 (

Measurements

Table 1 reports all measured items (scored on 7-point Likert scales) alongside their corresponding factor loadings and standard errors. At T1,

Measurement instruments and statistics.

All factor loadings are significant at

Unit loading indicator constrained to 1.

At T2, we measured legitimacy judgments, including trust, privacy concerns, and fairness.

At T3, we measured behavioral intentions by examining individuals’ intentions to comply with, alter, or avoid monitoring based on Kassim and Marzukhi (2014).

Analytical approach

We used Structural Equation Modeling in AMOS 27 to examine our hypothesized model. First, we conducted a Confirmatory Factor Analysis (CFA) to probe the reliability and validity of the measurement instruments. After establishing adequate reliability and validity, we moved on to testing our hypotheses by estimating a fully latent structural model using algorithmic surveillance and perceived decontextualization as our predictors (exogenous constructs; the first as the independent variable and the second as the moderator), with trust, privacy concerns, and fairness as mediators (endogenous constructs), and with avoidance, alteration, and compliance intentions as outcomes (endogenous constructs). The hypothesized moderation was probed using the Johnson-Neyman technique. The models were estimated using a maximum likelihood (ML) estimator, and bias-corrected model parameters were obtained using bootstrapping, extracting 10,000 bootstrap resamples. Overall model fit was assessed using various model fit indicators. We report the χ2/df, with values below 5 typically indicating a good model fit. In addition, we report the standardized root mean square residual (SRMR) and the root mean square error of approximation (RMSEA), with values below 0.05 and 0.08 indicating a good model fit. Finally, the Comparative Fit Index (CFI) and Tucker-Lewis Index (TLI) were examined, with values above 0.95 considered indicative of a good model fit.
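To illustrate the logic of the Johnson-Neyman technique mentioned above, the following is a minimal sketch in Python rather than the AMOS procedure used in this study. It assumes a simple slope of the form b1 + b3·W (with W the mean-centered moderator), a normal critical value of 1.96, and purely hypothetical coefficients and sampling variances; none of the numbers or function names come from our data or software.

```python
import math


def simple_slope_z(b1, b3, var_b1, var_b3, cov_b13, w):
    """z-statistic of the simple slope (b1 + b3*w) at moderator value w."""
    slope = b1 + b3 * w
    variance = var_b1 + 2 * w * cov_b13 + w ** 2 * var_b3
    return slope / math.sqrt(variance)


def johnson_neyman_bounds(b1, b3, var_b1, var_b3, cov_b13, crit=1.96):
    """Moderator values at which the simple slope is exactly at |z| = crit.

    Solves (b1 + b3*w)^2 = crit^2 * (var_b1 + 2*w*cov_b13 + w^2*var_b3),
    which is a quadratic equation in w.
    """
    a = b3 ** 2 - crit ** 2 * var_b3
    b = 2 * (b1 * b3 - crit ** 2 * cov_b13)
    c = b1 ** 2 - crit ** 2 * var_b1
    if a == 0:
        # Degenerate case: the equation is linear (or has no solution).
        return [] if b == 0 else [-c / b]
    disc = b ** 2 - 4 * a * c
    if disc < 0:
        return []  # no transition points: significance never changes
    root = math.sqrt(disc)
    return sorted([(-b - root) / (2 * a), (-b + root) / (2 * a)])


# Hypothetical estimates (assumptions for illustration only).
B1, B3 = -0.30, -0.07                        # main effect and interaction
VAR_B1, VAR_B3, COV_B13 = 0.01, 0.0009, 0.0  # sampling (co)variances

bounds = johnson_neyman_bounds(B1, B3, VAR_B1, VAR_B3, COV_B13)
for w in bounds:
    print(f"transition at W = {w:.2f}")
```

The returned values mark where the conditional effect of the predictor switches between significant and non-significant; evaluating `simple_slope_z` on either side of a boundary shows which region is which.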

Results

Measurement model

The CFA indicated adequate model fit as evidenced by the χ2/

Reliability and validity statistics.

CR: composite reliability; MaxR(H): maximum reliability H; AVE: average variance extracted; MSV: maximum shared variance. Values in bold on the diagonal represent the square root of the AVE, indicating that the Fornell-Larcker criterion is met. Reliability and validity statistics are only provided for latent constructs in our model. Significant correlations are flagged *.

Gender was coded 1 = male, 0 = female.
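For readers less familiar with the reliability and validity statistics named in the table notes above, the sketch below shows the standard formulas for composite reliability (CR) and average variance extracted (AVE) from standardized factor loadings, along with a Fornell-Larcker check. It is an illustration under stated assumptions: the loadings and correlations are hypothetical, the helper names are ours, and the values reported in the article were obtained from the authors' own CFA rather than from this code.

```python
import math


def composite_reliability(loadings):
    """CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances),
    with error variance 1 - loading^2 for standardized loadings."""
    total = sum(loadings)
    error = sum(1 - l ** 2 for l in loadings)
    return total ** 2 / (total ** 2 + error)


def average_variance_extracted(loadings):
    """AVE = mean of the squared standardized loadings."""
    return sum(l ** 2 for l in loadings) / len(loadings)


def fornell_larcker_met(ave_by_construct, correlations):
    """True if, for every pair of constructs, the square root of each
    construct's AVE exceeds the absolute correlation between the two.

    correlations maps (construct_a, construct_b) tuples to a correlation.
    """
    for (a, b), r in correlations.items():
        if abs(r) >= math.sqrt(ave_by_construct[a]):
            return False
        if abs(r) >= math.sqrt(ave_by_construct[b]):
            return False
    return True


if __name__ == "__main__":
    # Hypothetical standardized loadings, for illustration only.
    trust_loadings = [0.82, 0.79, 0.85]
    fairness_loadings = [0.74, 0.80, 0.77]
    ave = {
        "trust": average_variance_extracted(trust_loadings),
        "fairness": average_variance_extracted(fairness_loadings),
    }
    print("CR (trust):", composite_reliability(trust_loadings))
    print("AVE:", ave)
    print("Fornell-Larcker met:",
          fornell_larcker_met(ave, {("trust", "fairness"): 0.55}))
```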

Structural model

The structural model fitted the data well (χ2/

Results of hypotheses tests.

B represents unstandardized regression weights; beta indicates standardized regression weights. BC 95% CI indicates the 95% bias-corrected confidence intervals associated with the regression weights.
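The bias-corrected (BC) confidence intervals referred to in the note above can be illustrated with a short sketch. It assumes that a list of bootstrap estimates of one parameter is already available and applies the usual bias-correction step: the percentile cut-offs are shifted according to the share of bootstrap estimates that fall below the original point estimate. The nearest-rank percentile rule and the function names are simplifying assumptions of ours; the exact computation in AMOS may differ in detail.

```python
from statistics import NormalDist


def percentile(sorted_values, p):
    """Nearest-rank style percentile on an already sorted list (assumed rule)."""
    n = len(sorted_values)
    idx = min(n - 1, max(0, int(round(p * (n - 1)))))
    return sorted_values[idx]


def bias_corrected_ci(boot_estimates, point_estimate, alpha=0.05):
    """Bias-corrected bootstrap confidence interval for one parameter."""
    ordered = sorted(boot_estimates)
    n = len(ordered)
    below = sum(1 for e in ordered if e < point_estimate)
    # Keep the proportion strictly inside (0, 1) so inv_cdf is defined.
    prop = min(max(below / n, 1 / (n + 1)), n / (n + 1))
    std = NormalDist()
    z0 = std.inv_cdf(prop)  # bias-correction constant
    p_lo = std.cdf(2 * z0 + std.inv_cdf(alpha / 2))
    p_hi = std.cdf(2 * z0 + std.inv_cdf(1 - alpha / 2))
    return percentile(ordered, p_lo), percentile(ordered, p_hi)


if __name__ == "__main__":
    # Hypothetical bootstrap estimates, for illustration only.
    boot = [x / 100 for x in range(-60, -10)]
    print(bias_corrected_ci(boot, point_estimate=-0.37))
```

When exactly half of the bootstrap estimates fall below the point estimate, the correction constant is zero and the interval reduces to the ordinary percentile interval.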

Regression model—Legitimacy process model.

Indirect effects

Hypothesis 1 assumes that algorithmic surveillance is associated with compliance intentions through trust judgments. Results indicated that algorithmic surveillance is negatively associated with trust (B = −.369 95% CI [−.527; −.232],

Hypothesis 2 posited that algorithmic surveillance increases privacy concern, which in turn affects compliance. Algorithmic surveillance is positively associated with privacy concerns (B = .421 95% CI [.280; .590],

Hypothesis 3 proposed that algorithmic surveillance is related to compliance through fairness. The results show that algorithmic surveillance is negatively associated with fairness (B = −.498 95% CI [−.670; −.344],

Moderation

Finally, Hypothesis 4 proposed that the influence of algorithmic surveillance on legitimacy judgments—that is, trust, privacy concerns, and fairness—is influenced by perceived decontextualization. Using the Johnson-Neyman technique to probe moderation, we examined the interaction effect of algorithmic surveillance and perceived decontextualization on trust (Hypothesis 4a), privacy concerns (Hypothesis 4b), and fairness (Hypothesis 4c). Results demonstrated a significant negative moderation effect (B = −.066 95% CI [−.132; −.001],

Hypothesis 4b predicted that the positive relationship between algorithmic surveillance and privacy concerns is weaker when perceived decontextualization is higher. The results indicated that perceived decontextualization significantly negatively moderates the relationship between algorithmic surveillance and privacy concerns (B = −.142 95% CI [−.215; −.070],

Finally, we hypothesized that the relationship between algorithmic surveillance and fairness is moderated by perceived decontextualization. There was a significant negative interaction effect (B = −.089 95% CI [−.153; −.025],

Discussion

Using STS (Emery, 1982; Trist, 1981) and micro-level legitimacy (Bitektine and Haack, 2015), we examined crowd workers’ responses to algorithmic surveillance. We found that forms of compliance and resistance can be explained through micro-level legitimacy judgments and perceived decontextualization. Our study highlights that algorithmic surveillance erodes trust and fairness and heightens privacy concerns among workers. These problems are exacerbated by the reductionist logic (i.e., perceived decontextualization) embedded in algorithmic surveillance, highlighting the need for more nuanced and context-aware algorithmic management practices. We further highlight how workers’ legitimacy judgments may lead to decreased compliance and increased tendencies to alter or avoid surveillance mechanisms. These findings have several implications for theory and practice.

Theoretical implications

This study extends previous research that conceptualizes algorithmic control, including surveillance, as a sociotechnical assemblage where technologies and social actors co-construct control mechanisms (Alacovska et al., 2024; Jarrahi et al., 2021; Krzywdzinski et al., 2024; Orlikowski and Scott, 2015; Pulignano et al., 2023; Sarker et al., 2019; Sutherland et al., 2020). Overall, our empirical work makes three distinct theoretical contributions by elaborating (Fisher and Aguinis, 2017) both STS theory and micro-level legitimacy theory. First, we combine STS and legitimacy perceptions in an integrated framework to explain how crowdworkers make sense of, and respond to, algorithmic surveillance. This integration bridges macro-level perspectives on sociotechnical design with micro-level mechanisms of normative evaluation, thereby providing a cross-level theoretical synthesis that advances our understanding of how control and resistance dynamics unfold in online crowdwork. Second, we specify and split the outcome space of legitimacy judgments by showing that worker resistance is not a monolithic response. Instead, we theorized and empirically distinguished between alteration and avoidance in addition to compliance. Overall, this nuanced view, along with the identified socio-technical mechanisms linking surveillance and forms of resistance, addresses long-standing critiques that resistance remains under-theorized (Joyce and Stuart, 2021) and expands the application of micro-level legitimacy perceptions as an underlying mechanism. Third, by applying and extending these theoretical notions in the crowdwork context, we horizontally contrast their assumptions and constructs in a setting characterized by decontextualized algorithmic governance, expanding their empirical scope and relevance. In doing so, we move beyond treating digital labor platforms as exceptional cases and instead demonstrate their value for elaborating foundational organizational theories in novel settings.

Specifically, by combining STS and legitimacy perceptions in an integrated framework (Suddaby et al., 2017), we structure the underlying mechanisms that shape processes of compliance and resistance related to surveillance (Jarrahi and Sutherland, 2019; Wiener et al., 2023). In line with an STS perspective (Emery, 1982; Trist, 1981), we demonstrate that algorithmic surveillance diminishes trust and perceptions of fairness while increasing privacy concerns among workers, which in turn affect workers’ compliance, alteration, or resistance intentions. In addition, we split the outcome of interest (Fisher and Aguinis, 2017) into three distinct behavioral intentions, thereby moving beyond binary acceptance/resistance outcomes of legitimacy perceptions. Together, this highlights that the social context of algorithmic surveillance shapes worker reactions, reinforcing that algorithmic management is not a neutral, deterministic force but an evolving sociotechnical construct. Moreover, we underscore the role of perceived decontextualization as a key moderator. This highlights the importance of incorporating contextual and technological richness into surveillance practices to mitigate negative impacts, emphasizing that both social and technical dimensions must be considered (Dolata et al., 2022; Sarker et al., 2019).

We show that surveillance practices, especially when deprived of rich contextual information (i.e., perceived as decontextualized), reduce trust and increase privacy concerns, making compliance intentions less likely and triggering alteration or avoidance strategies among workers. More specifically, algorithmic surveillance benefits from quantifying and decomposing tasks into small parts and evaluating and monitoring worker performance through ratings and rankings. However, simplifying complex behaviors and contexts into quantifiable metrics strips away context and disregards nuance. In the context of people analytics, earlier research recognized that objective data is an illusion, and neutral representations of work and workers are difficult to obtain (Weiskopf and Hansen, 2022). We demonstrate that if workers perceive the process through which evaluations are determined as inadequate in considering the broader context of work, the adverse effects of algorithmic surveillance become more pronounced.

These findings also provide critical insights for developing governance policies that align with ethical standards and enhance the overall legitimacy of algorithmic management systems (Newlands, 2021). More broadly, in line with Gandini (2019), we highlight that worker compliance with, or resistance to, techno-normative control mechanisms is shaped by legitimacy judgments. Specifically, we confirm that workers may seek and exploit ways to regain control when under strict technological control (Kellogg et al., 2020) and add that this is more likely when algorithmic surveillance leads to lower legitimacy judgments and is perceived as decontextualizing workers’ performance.

While algorithms make surveillance more pervasive and inescapable (e.g., hegemonic surveillance; Jaser and Tourish, 2024), workers’ habituation to these systems may make them more aware of the limitations and flaws of these technologies (e.g., perceived decontextualization). Ultimately, rather than accepting surveillance as a fact of life, workers may view this as a liminal space in which to evaluate the legitimacy of these control dynamics. Our findings highlight that perceived decontextualization may move the needle for workers in their calculus of trust, privacy concerns, and fairness judgments. Future research could use longitudinal designs to examine how habituation to algorithmic surveillance makes workers more aware of the limitations of these practices and thereby affects their judgments, for example, by drawing on privacy calculus theory (i.e., risk-benefit assessments regarding technology use; Bhave et al., 2020).

Moreover, we contribute to theorizing about micro-level legitimacy judgments by highlighting the importance of trust, privacy concerns, and fairness as mediating mechanisms shaping worker responses to algorithmic surveillance. However, it is worth noting that this only applies to trust and privacy concerns. Counter to our hypothesis, fairness was positively associated with alteration intentions. This finding can be explained by the notion that fairness promotes proactive behavior, encouraging workers to attempt to make positive changes that benefit themselves or the organization (Ren et al., 2022). If algorithmic surveillance is perceived as fair, it is possible that workers proactively adjust their behavior to positively influence, or evade, surveillance metrics, aligning with perceived expectations, preempting potential penalties, or maximizing individual outcomes (i.e., pacifying the algorithm: Bucher et al., 2021). Interestingly, this aligns with earlier counterintuitive findings about surveillance responses. For instance, Spitzmüller and Stanton (2006) reported that organizational identification was positively associated with surveillance avoidance intentions.

Taken together, these contributions move the conversation from whether workers comply with or resist algorithmic surveillance to understanding the conditions, mechanisms, and forms through which legitimacy perceptions shape behavioral intentions. Rather than treating workers as passive recipients of technological systems, we demonstrate that they actively assess legitimacy across multiple dimensions, and that these perceptions differentially shape their willingness to comply with, alter, or avoid surveillance systems. This not only enriches theorizing about algorithmic management but also advances a more human-centered, sociotechnical perspective on the future of work.

Practical implications

Our findings offer practical implications for platform governance by demonstrating that workers’ intentions to comply with or resist algorithmic surveillance are shaped not by control alone but also by their legitimacy judgments, specifically how fair, trustworthy, and respectful of privacy they perceive these systems to be. Based on our work, we conclude that as platforms develop their managerial ideology, they should adopt a legitimacy-based approach rather than a control-based one. In other words, they should focus on designing algorithmic systems as participatory, transparent, and context-sensitive sociotechnical arrangements. Our study highlights two areas where platforms can facilitate change. First, the use of intensive algorithmic surveillance raises privacy concerns (Liang et al., 2023). Therefore, we argue that adopting data minimization principles, that is, collecting only the data strictly necessary for task performance and communicating this policy to workers, could partly mitigate these concerns and help increase workers’ perception of fairness (Newman et al., 2020). Additionally, creating a clear privacy statement and giving workers the ability to opt in to or opt out of certain types of data collection could help to increase perceived fairness and reduce privacy concerns.

Second, despite platform workers’ profound distaste for highly intensive surveillance techniques (e.g., Liang et al., 2023, p. 316), our findings suggest that adding more context to platform management can help reduce its negative implications on workers’ legitimacy judgments and subsequently result in less resistance. Some possible avenues for platforms to add more contextual factors to crowdwork could include management collaborating with surveillance system designers and data scientists to integrate contextual variables (e.g., worker location, task complexity, prior experience) into algorithmic evaluations, rather than relying solely on reductive metrics such as task completion time or rating scores. To implement this practically, platforms could develop algorithms that adjust task expectations based on individual workers’ profiles or the complexity of the tasks at hand, rather than using a one-size-fits-all model. Additionally, introducing regular worker surveys or feedback loops could help provide real-time data on task-related challenges and contextual factors, which can be used to fine-tune algorithmic assessments and reduce perceived unfairness. In their efforts to mitigate perceived decontextualization, platforms could introduce feedback mechanisms to enable workers to contest, clarify, or contextualize algorithmic decisions (e.g., appeal processes, human audits), thereby moving toward more sustainable and legitimate forms of digital labor governance. This could include an “appeals” system, where workers can challenge algorithmic decisions with supporting evidence, which would be reviewed by a human supervisor.

Limitations and future research

Several limitations of this study warrant consideration in future research. First, the study relies on data from European crowdworkers on a single online labor platform. Clickworker was selected because it is the largest European labor platform, claiming approximately 6 million registered workers, of whom 30% reside in Europe. This study examined the experiences of European workers on this platform, which limits the generalizability of our findings beyond the European context or this specific platform. Future research is needed to jointly consider the roles of culture and individual differences, such as judgment evaluations, within different situational contexts. The Culture × People × Situation (CuPS) approach (Leung and Cohen, 2011) might be a fruitful avenue in this regard, particularly when integrated with an STS perspective. From an STS viewpoint, algorithmic surveillance can be understood as embedded within broader social and technical interactions, where cultural and situational factors shape how workers interpret and respond to technology. According to the CuPS framework, individual differences carry varied meanings across cultures, suggesting that workers may experience distinct outcomes even in similar technological situations. The CuPS approach thus highlights an important social context that may inform our hypothesized model. We expect that societal and organizational levels of culture will interact with perceived decontextualization to moderate relations with compliance and resistance intentions.

To further enhance the generalizability of findings, following Morgan et al. (2023), future studies could sample from multiple platforms and embed surveys within a wider variety of tasks, including translation tasks, sentiment analysis, or data labeling. In addition, Aguinis et al. (2021) outlined important recommendations that may help create “more robust, reproducible, and trustworthy” research based on various samples of online workers (p. 823).

Second, our study relies on self-report data, which introduces the potential biases associated with self-reports. While workers are ideally positioned to reflect on their legitimacy judgments, future studies could deploy other techniques to better understand surveillance-compliance dynamics. For instance, interviews and observations may provide richer descriptions of the situations and considerations underlying workers’ actions. Third, this study focused on the implications of algorithmic surveillance and perceived decontextualization. However, research on algorithmic management, both generally (Parent-Rocheleau et al., 2024) and specifically in the gig economy (Duggan et al., 2020), has highlighted the multifaceted nature of algorithmic governance practices. Specifically, certain aspects of algorithmic management (e.g., matching) may yield more positive outcomes than others (e.g., control) (Van Zoonen et al., 2024). In addition, we focused on perceived decontextualization as an important aspect of the reductionist logic underlying algorithmic management. However, other aspects of reductionism, for example, quantification (Newman et al., 2020), may also play a role and warrant attention in future efforts to understand the impact of algorithmic management, specifically surveillance.

We also encourage future researchers to follow recent recommendations that advocate for definitional and conceptual clarity in AI-related scholarship (Stollberger et al., 2025). To facilitate cumulative knowledge-building on algorithmic management, it is essential to specify the nature and capabilities of the systems under study, distinguish between types of AI technologies, and reflect on how evolving technical features and human attitudes may shift their meaning and impact over time.

Conclusion

This study advances theoretical understanding of algorithmic surveillance by elaborating both STS theory and micro-level legitimacy theory. First, we clarify how algorithmic control shapes worker responses, not through deterministic force but via legitimacy judgments centered on trust, privacy, and fairness. These mechanisms structure the relationship between surveillance and worker intentions, distinguishing between compliance, alteration, and avoidance. Second, we show that perceived decontextualization undermines these legitimacy judgments, intensifying resistance rather than fostering compliance. Third, by introducing and specifying these mechanisms and outcome types, we extend STS theory to account for individual perceptions and agency in algorithmic environments and expand the scope and explanatory power of legitimacy-as-perceptions within digital labor contexts. In doing so, we challenge the narrative that crowdworkers are passive under algorithmic governance and demonstrate that compliance is contingent, not automatic. As algorithmic systems continue to govern platform labor, our findings underscore the need for design principles that respect sociotechnical complexity, foreground legitimacy, and support fair and context-aware management practices.

Acknowledgements

The authors would like to thank Professor Ronald Rice for providing insightful comments and constructive feedback on earlier drafts of this paper.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research is supported by funding from the Research Council of Finland (Kulttuurin ja Yhteiskunnan Tutkimuksen Toimikunta; grant number 356143).