Abstract

Performance management systems are intended to positively influence employee behaviour but do they also motivate significant gaming? This concern is increasingly noted in the literature yet research into gaming and how it arises has been very limited. Using data collected from 65 semi-structured interviews with academics working in 13 research intensive business schools/schools of management in the United Kingdom, this article demonstrates how performance management systems can encourage employees to engage in a range of behaviours termed gaming in order to navigate performance management systems. It categorises gaming behaviours into five types: gratuitous proliferation, hoarding performance, collusive alliances, playing safe and cooking the books. The article then examines the distinctive features of each type and illustrates how it arises as a response to performance management systems. Given the widespread use of performance management systems and the close similarities in the way they are implemented in different public and private sector organisations, the derived categories are relevant to contexts beyond the university setting.

Introduction

Performance management (PM) systems currently constitute a key function in human resource management and are considered indispensable tools for organisations to improve employees’ motivation, performance and productivity (Denisi, 2011). PM has no single definition but is generally defined as managing employees’ performance of their work duties (Schleicher et al., 2018). While there is a widespread assertion that organisations implementing PM systems do better than those that do not (Aguinis, 2014), research exploring the relationship between PM and employees’ performance has been inconclusive (Poister et al., 2013). Not only do PM systems rarely lead to their intended outcomes but they can also lead to employees engaging in gaming behaviours that hamper the achievement of certain goals organisations seek to reach (Hood, 2006; Smith, 1995). Examples of highly problematic gaming behaviours have been noted in settings including health care (Conrad and Uslu, 2012), schools (Figlio and Getzler, 2006), financial institutions (Graham et al., 2005), car manufacturing (Ordóñez et al., 2009), social welfare services (Koning and Heinrich, 2013), laboratory studies (Schweitzer et al., 2004) and higher education institutions (Bedeian et al., 2010).

The term gaming is frequently used to describe instances where employees exploit the inadequacies and loopholes in PM systems to better their career but its usage is often broad and unclear. With the exception of a few empirical studies (e.g. Bevan and Hood, 2006; Kelman and Friedman, 2009) and a small number of conceptual papers that propose an initial analysis of gaming as an undesirable outcome of PM systems, very few attempts have been made to understand this topic (Franco-Santos and Otley, 2018). Generally, gaming can help employees to achieve their PM targets and so when judged in terms of certain performance metrics, gaming might lead to positive outcomes for employees and organisations. However, the conceptual ambiguity about gaming and lack of empirical studies make it difficult to move from the notion that PM systems can lead to gaming to more detailed explanations of what exactly gaming is and the different types of gaming arising in response to PM systems.

This article contributes to the literature by identifying employee gaming behaviours that result from PM systems, categorising gaming behaviours into distinct types and articulating the features of each type. We start by reviewing existing work on the intended outcomes of PM systems and on employee gaming. We then discuss PM systems in the higher education sector in the UK. Next, we present our findings about how PM systems in our sampled universities motivated employee gaming related to academic research publications.

The intended outcomes of PM systems

For many years PM was defined as the micro-management of employees’ behaviour but gradually it has evolved and become a broad term that signifies many practices and serves multiple purposes (Smith and Goddard, 2002). As a result, there is now ambiguity about the functions and intended outcomes of the systems (Mckenna et al., 2011). Although it is difficult to distil the intended outcomes of PM systems into a definitive set, there are certain commonalities regarding the expected outcomes of PM systems. PM research typically uses models that include outcomes such as employees’ affective organisational commitment, engagement, performance, perception of fairness, job satisfaction and motivation. These models indicate researchers’ propensity to view PM systems as enabling organisations to achieve two key outcomes. The first is to improve employees’ efforts to support the aims of the organisation and the second is to improve employees’ performance (Aguinis, 2014).

PM systems are expected to increase employees’ efforts in various ways; for example, by getting employees to participate in goal setting (Murphy and Cleveland, 1995), providing performance ratings (Pulakos, 2009), providing performance feedback (Schleicher et al., 2018), offering career opportunities and rewarding good performance (Aguinis, 2014).

Performance improvement is viewed as the ultimate goal of PM systems. PM systems are often seen as the panacea for poor performance (Pulakos, 2009). The PM system for individual employees typically sits within larger organisational systems and is shaped by organisational goals. However, individual PM systems remain key because employees often interact with organisational goals at an individual level; for example, in performance appraisals (Murphy and Cleveland, 1995). The focus on individual employees’ experience of PM is also reflected in the stream of research on employee performance and one-to-one performance meetings, and so to understand PM systems it is important to consider how they affect employees, which is the focus of this article.

The extent to which PM systems achieve their intended outcomes is questionable. PM systems have inspired much research and criticism because they often do not lead to the intended outcomes proposed by their advocates. In fact, PM systems have been found to lead to gaming and while gaming can lead to meeting certain individual and organisational targets, it can also frustrate the attainment of other key organisational aims.

Gaming: An unintended outcome of PM systems

Gaming has been predominantly noted in the area of performance measurements, especially in public sector organisations and typically refers to behaviour used to achieve performance targets that leads to undesirable outcomes for employees, organisations or both (Graf et al., 2019). However, as the findings in this article show, when academics’ gaming behaviour facilitated publishing in top journals and enabled academics to meet their performance targets, this could be viewed as a positive outcome for them and their universities because, for example, good performance in the Research Excellence Framework (REF) (a national evaluation exercise for assessing research quality in UK higher education institutions, discussed in the next section) raises academics’ profile and enables their universities to attract predictable income from the government. Nevertheless, the use of gaming as a means of achieving this outcome was problematic because as academics reported, it negatively affected their ability to deliver quality research. Hence, to understand the effects of PM systems on employees, it is important to distinguish between the outcomes of the systems, the means of achieving those outcomes and whether the means conflict with other academic and institutional values/concerns not specified by PM systems.

Different definitions of gaming are available in the literature. Smith (1995) described gaming PM systems as the process of changing behaviour to gain a competitive advantage. On the other hand, Bevan and Hood (2006: 521) defined gaming as ‘reactive subversion such as “hitting the target and missing the point” or reducing performance where targets do not apply’. Graf et al. (2019) argued that gaming is a voluntary form of negative deviant workplace behaviour displayed by employees that harms organisational performance. In a few cases, gaming is also perceived to potentially lead to both desirable and undesirable outcomes for individuals performing the gaming behaviours and their organisations.

Further definitions include a variety of classifications and distinctions. For example, Kelman and Friedman (2009) made a distinction between effort substitution and gaming, the former referring to employees improving their performance in measured areas at the expense of areas that are not measured and the latter referring to employees making their performance look better than it is. Drawing on the experience of the Soviet Union, Bevan and Hood (2006) identified three types of gaming resulting from PM targets: ratchet effects, threshold effects and output distortion. Ratchet effects arise when the following year’s goals are set on the basis of employees’ performance in the preceding year; as a result, employees may be incentivised to under-perform in the current year or not to surpass their current goals. Threshold effects arise when the same target is given uniformly to everyone. This may pressurise poor performers to meet their goals but it gives little motivation for employees to excel (Hood, 2006). Output distortion refers to attaining measured goals at the expense of non-measured ones (also called effort substitution, myopia, cream skimming, goal displacement and tunnel vision elsewhere) (Bevan and Hood, 2006).

A few studies have shown that employees who engage in gaming can also benefit their organisations. For example, in 2001, the UK Government set a goal for hospital Accident and Emergency departments to treat all patients within four hours (Bevan and Hood, 2006; Kelman and Friedman, 2009). Hospitals were required to provide data about waiting times during an annual one-week period set by the government. Hospitals meeting the goal would be rewarded with extra funding and hospitals falling short would be investigated (Kelman and Friedman, 2009). Many hospitals engaged in gaming to improve waiting times only during the government assessment week. For example, patients were left waiting in ambulances outside hospitals until the Accident and Emergency department believed they could be treated within the four-hour time limit, which started upon entering the hospital (Hood, 2006; Kelman and Friedman, 2009). In PM terms, many organisational goals were met and employees’ gaming behaviours benefited hospitals through, for example, an uninterrupted or increased stream of government funding. However, meeting targets in this way constitutes dubious performance improvement because the gaming behaviours may have compromised public health.

A focus on metrics was found to have repeatedly led employees to engage in undesirable behaviour (Conrad and Uslu, 2012). For example, in the 1960s, Ford Motor Company set challenging targets for employees to manufacture an affordable small car to compete with foreign manufacturers. Faced with tight deadlines, employees approved safety checks that were never carried out. Resulting design flaws led to 53 deaths, numerous injuries and lawsuits (Ordóñez et al., 2009). In addition to incurring financial costs, gaming can damage company reputations and market value (Graf et al., 2019).

Some researchers likened gaming to cheating; however, while overlapping, they differ conceptually. Pollitt (2013) describes gaming as ‘bending the rules’ whereas cheating is ‘breaking the rules’. Similarly, one of Hood’s (2006) interviewees described gaming as tax avoidance and cheating as tax evasion because the former involves creative interpretation of data and the latter involves data falsification. However, distinguishing between cheating and gaming is not clear cut. For example, in academia, the common practice of academics selectively reporting their findings is described as a ‘grey area’ because researchers do not falsify data in terms of changing or overwriting text or adding quantitative values but the practice is certainly ethically questionable (Butler et al., 2017).

The above analysis shows the importance of discriminating between the value of an outcome and the behaviour used to achieve the outcome. We therefore define gaming as deliberate, opportunistic and strategic behaviours performed by employees in response to PM systems with the intention of achieving performance targets that lead to desirable outcomes for individual employees and their organisations; however, the means (i.e. the gaming behaviours) used to achieve the targets breach other non-measured ethical values/codes of conduct and if exposed would risk employee and organisational reputational damage. Given that our article examines gaming in British universities, we posit that gaming goes against core academic values noted variously by researchers as transparency, research integrity, important intellectual contributions, advancing scholarship and developing innovative ideas (Alvesson and Spicer, 2016; Biagioli et al., 2018; Tourish and Craig, 2020). For example, Kerr (1998: 209) argued that as an academic, one has an ‘obligation to communicate one’s work honestly and completely’ as a fundamental ethical principle of science.

PM systems in British universities

British universities are a suitable context to examine PM gaming because in the last few decades they have experienced important changes whereby the spirit of collegiality has been challenged by increasing managerialism (Shattock and Horvath, 2019). Most prominently, in 1986 the British Government introduced an exercise to evaluate research in universities, currently known as the REF, that takes place every six to seven years (Mingers and Willmott, 2013). Universities receiving high research assessment ratings have been given larger amounts of funding (Chandler et al., 2002). REF funding now typically constitutes about 6% of a UK university’s overall income (Jump, 2015), with publication output being the most heavily weighted REF outcome (60% in the REF 2021) (Pinar and Unlu, 2020). While the amount of income from the REF is small, league tables based on research evaluations are used by universities as an important indicator of institutional prestige (Deem et al., 2007). Alongside these changes, concerns have grown about gaming in academic research (Tourish and Craig, 2020). Accordingly, this article examines how academics game publication goals in British universities.

While there are differences in how individual universities and departments implement PM systems, UK universities are subject to a number of similar national frameworks such as the REF (Shattock and Horvath, 2019). PM systems vary within and between universities and departments depending on factors such as the broad targets of universities, the agendas of heads of schools, departments, deans and directors of research, the availability of resources and the outlook of the department. However, overall government interventions still play a key role in shaping the way universities manage the performance of academics (Shattock and Horvath, 2019). Interviews and focus groups conducted by Deem et al. (2007) showed that only a handful of academics believed that their performance was managed only by their department because their work had become largely externally controlled.

In response to government initiatives to manage academics’ research, universities have created internal PM systems (e.g. journal lists) that are arguably more restrictive than government measures (Agyemang and Broadbent, 2015). Journal lists such as the Chartered Association of Business Schools (CABS) Journal Guide and the Financial Times Research Rank have become influential in recruitment and promotion decisions, and have started to determine the nature, composition and structure of academic research (Mingers and Willmott, 2013). Consequently, academics are increasingly faced with ambitious publication targets, urged by their universities to publish in top journals and support their universities in enhancing league table ratings (Tourish and Craig, 2020).

Owing to the new public management style of assessing research, certain academics have adopted a more instrumental attitude, becoming more competitive rather than collaborative and capitalising on PM systems to benefit their career (Chandler et al., 2002). Agyemang and Broadbent (2015) noted that PM systems encouraged academics to achieve desirable outputs but perhaps not desirable outcomes. Academics may produce large quantities of publications and obtain a high number of paper citations but these publications may be of a low standard and not make original or meaningful contributions to their field (Biagioli et al., 2018). Tourish and Craig (2020) explained that gaming occurs because academics’ work is primarily autonomous and it is difficult to monitor what they do. Furthermore, the environment provides academics with the tools, opportunity and motivation to engage in gaming so when they engage in cost/benefit considerations over how to approach their work, the benefits of engaging in gaming to achieve better outcomes may far outweigh the costs of not doing so (Tourish and Craig, 2020). Indeed, not engaging in gaming behaviours can put one at a competitive disadvantage compared with those who do (Biagioli et al., 2018).

In addition to research, universities have various goals such as providing quality education, engaging with industry and responding to the needs of businesses, widening access to education, enhancing students’ employability, making an impact on society and contributing to the national and regional economies (Nedeva, 2007). Universities are in various ways also held accountable in these areas. For example, a relatively recent but now key performance measure is ‘impact’ (introduced in the REF 2014), meaning that for the REF 2021, 25% of the evaluation is based on the impact of academics’ research beyond academia (Pinar and Unlu, 2020). However, we focus on research output in the form of publications for three reasons. First, research output constitutes the largest proportion of the REF (60%); second, gaming publication goals is the area most prominently noted in the literature and by our participants; and third, all academics with research contracts are evaluated by the REF in terms of their publications, whereas only a small proportion of academics (about 10%) are required to give serious consideration to impact by producing impact case studies. Therefore, while academics’ job roles include various other responsibilities (e.g. teaching, mentorship, obtaining research funding and management responsibilities) that may influence how much attention they can dedicate to research, overall in research universities, academics’ publication output remains a key performance dimension (Shattock and Horvath, 2019).

This article focuses on how PM systems can inadvertently put employees in a position where they feel the need to engage in gaming in order to fulfil publication expectations set by their university. In so doing, this research complements studies on academic gaming in response to PM systems found in other countries, such as Graf et al. (2019) in Germany and the study of US business schools by Bedeian et al. (2010) in which the publication gaming behaviours identified included plagiarism, acceptance of undeserved authorship credit, fabrication of data and selective reporting. These papers show how academics’ behaviour may sometimes not support universities in producing quality research. The research questions are: first, what are the types of employee gaming behaviour and second, what are the features of gaming behaviours that arise from PM systems?

Methods

This research adopts an inductive methodology focusing on academics as the unit of analysis because employees who are on the receiving end of PM systems often have different views about the systems than those who design them (Murphy and Cleveland, 1995). We selected a sample of 13 schools of business and management in research intensive universities in the top quartile of the REF 2014 ranking table because, as Mingers and Willmott (2013) noted, business schools have become some of the largest departments in universities and academics working in them are often subject to a high degree of pressure. For example, the use of journal ranking lists such as the CABS list is deeply embedded in business schools and strongly influences the research performance expectations placed on academics to publish in top rated journals. Furthermore, evidence from previous studies indicates that gaming in academic research takes place in schools of business and management (Alvesson and Spicer, 2016; Clarke and Knights, 2015; Tourish and Craig, 2020).

Data collection

In each university, three to seven academics were interviewed depending on the size of their business schools/schools of management and their willingness to participate. In total, 65 academics were interviewed with a response rate of 15% (65 out of 431). The interviews lasted 45 minutes to 2 hours and 30 minutes. A balanced spread across gender and seniority was attempted. Our sample comprised 22 lecturers, eight senior lecturers, 10 associate professors and 25 professors. Thirty-six academics were male and 29 were female. Forty-eight were on a permanent contract and 17 (lecturers) were on probation. Sixty-three worked full time and two worked part time. Their contact details were found on their universities’ websites and they were invited via email to participate in a study exploring the unintended outcomes of PM systems. Data collection took place between May 2016 and February 2017. Academics were asked to report either on their experience with the systems or on the experience of their colleagues in their department. The interview questions included open questions such as ‘What effects do PM systems have on you?’, ‘How do PM systems influence the way you go about your work?’, ‘What do you do or observe your colleagues doing to meet the publication goals stipulated by your institution?’, ‘What led you to take this action?’, ‘What do you think of this action?’, ‘Can you think of a time when you had a bad experience with PM systems? How did you respond?’, ‘Can you think of a time when you had a good experience with PM systems? What happened and how did it affect you?’, ‘If there were no PM systems in place, would you have worked differently? How?’, and ‘How do you think PM systems influence the advancement of scholarship in your area?’.

Data analysis

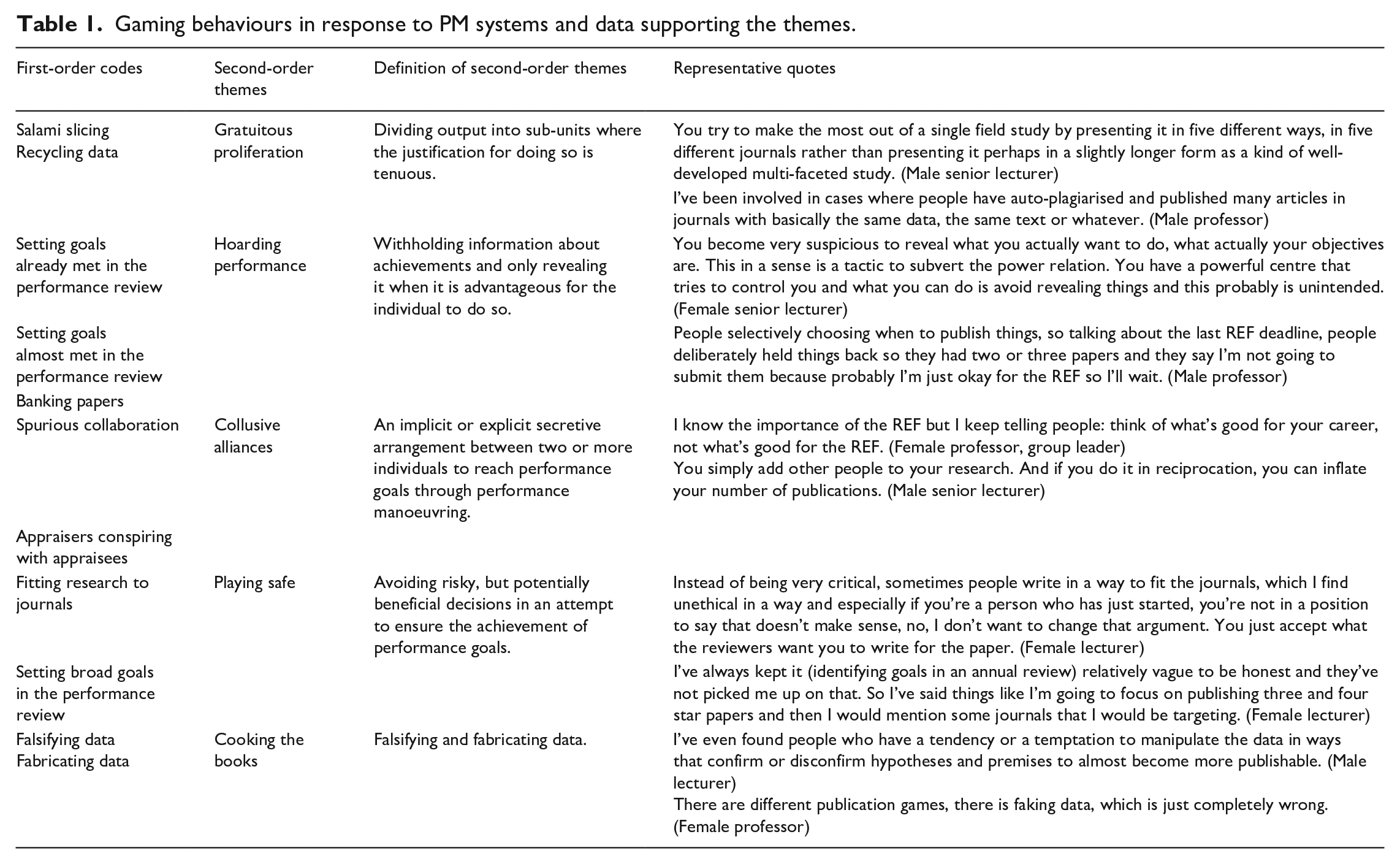

Out of the 65 interviews, 62 were face-to-face and three were via Skype. The first author conducted the interviews and analysed the data. All interviews were recorded and transcribed verbatim. The coding and supporting statements were extensively discussed with the second author. Data analysis started once the first few interviews had been conducted. Overlapping data collection and analysis aided the analysis by capitalising on the flexibility of data collection in qualitative research (Eisenhardt, 1989). The data was analysed iteratively using thematic analysis, moving from descriptive (referred to as first-order codes) to interpretative coding (referred to as second-order themes) (see Table 1).

Table 1. Gaming behaviours in response to PM systems and data supporting the themes.

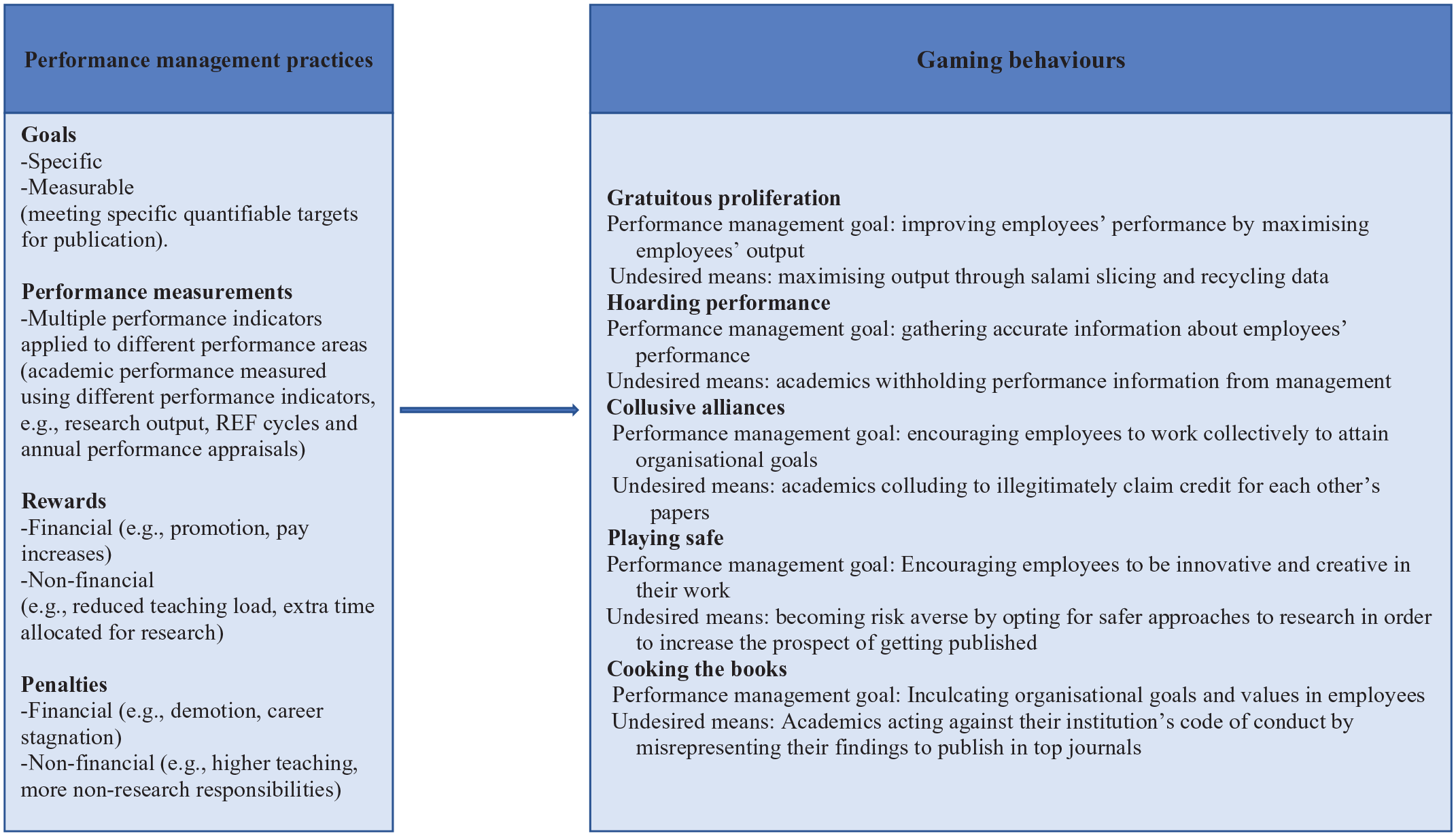

The analysis focused on how academics perceived PM systems in their institution and how they responded to them. After the first few interviews, discontent about the systems was evident and it became apparent that gaming was a key behaviour engaged in by academics to deal with the systems. When discussing gaming, academics repeatedly related it to the PM practices of goals, measurements and rewards (see Figure 1). However, while academics reported having engaged in gaming or having observed others doing it, they also criticised the use of gaming to achieve performance goals and therefore considered it undesirable.

Figure 1. Gaming behaviours resulting from PM practices.

Figure 1 shows the links between PM systems and the gaming types found in the data. The PM practices, gaming types and undesired means of achieving performance goals in Figure 1 are based on our data whereas PM goals are derived from the literature and were also mentioned by our participants or readily inferred. Below, we report academics’ views on and value judgements about PM systems. We start by showing how gaming was presented in broad terms by interviewees and then identify the different types of gaming and the specific features of each type. For each gaming type, we discuss the first-order codes and show how the second-order themes arose from PM systems.

Findings

The 13 universities in our sample all measured academics’ research performance based on clear performance measures. A major difference lay in the extent to which universities intervened in the choice of journals in which academics could publish. Some universities exhorted academics to publish in journals rated within the 4 rating categories in the CABS list (expressed as 4 and 4* in the CABS journal list, with 4* journals being of higher standing) while others required academics to publish in 3 rated journals and beyond in the CABS list, with a strong preference for 4 and 4* journals. Other institutions also had their own shortlist of journals consisting of a sample of the 4 and 4* journals. This did not mean that academics could not publish in other journals but having papers in 1, 2 or 3 rated journals without also having papers in 4 and 4* rated journals for the REF would likely be perceived as low performance by universities.

The similarities in the management of academics’ performance might be owing to our sampling strategy because research intensive universities were purposely selected and such universities generally compete based on similar PM indicators. As a result, the gaming behaviours found occurred in all the sample universities and across all academic groups.

Gaming: Undesirable but ubiquitous

The term gaming was used pervasively by academics to describe their response to PM systems. In almost every interview, academics used phrases such as ‘this is a game’ or ‘you need to play the game’ or ‘you need to know the rules of game’. For example, a male professor who was head of group explained: ‘There is the game, there are the rules of the game. We don’t like it, but that doesn’t take away the reality of that game. You’re in that game. I’m afraid you’re one of the players, a very important actor.’

Similar negative views were expressed by many of our participants when they spoke about gaming and described their experiences. Playing by the rules of a game does not, in its literal meaning, necessarily carry negative connotations: it could simply mean that, as in every game, there are rules by which players should abide. In the interviews, however, academics did not use gaming in this sense. As in the literature (e.g. Conrad and Uslu, 2012), academics used the term gaming to describe acting in inappropriate ways to meet performance goals.

Not all academics reported engaging in gaming but almost every academic reported observing or hearing about gaming behaviours in their departments. The way academics described gaming resembled its description in the literature in that when an individual engages in gaming, they deviate from an ‘appropriate form of conduct’ (Smith, 1995). Overall, academics described gaming as commonplace, as diminishing the scope and quality of academic research and as an almost inevitable response to PM systems. A professor said: ‘Everybody does gaming. The only people who don’t do it, don’t have academic jobs any more. I think some level of gaming if you want to be even half successful, you are going to have to do it.’

Table 1 shows the gaming behaviours academics reported. These are discussed below under the gaming themes of gratuitous proliferation, hoarding performance, collusive alliances, playing safe and cooking the books. The labelling of the five themes reflects the sentiments of our participants. As noted below, all the gaming behaviours discussed enabled academics to meet their organisationally set performance goals; however, the behaviours conflict with core academic values noted earlier in this article such as integrity, transparency and advancing scholarship. Some of the gaming types also constitute more substantial breaches of ethical research conduct than others (we return to this point in the discussion).

Gratuitous proliferation

Proponents of PM systems argue that the systems motivate employees to increase their output (Pulakos, 2009). The interviews showed that academics were focused on increasing their publication output to meet their performance goals. However, they reported that this was often achieved by salami slicing and recycling data. We term this behaviour gratuitous proliferation. Gratuitous proliferation could be considered desirable in that it enabled academics and their employing universities to meet research targets but undesirable because of the questionable academic merit of the papers produced.

Salami slicing was described by participants as deliberately dividing research into distinct papers where the justification for doing so was not supported scientifically. A female associate professor who aimed to publish different papers from one data set said: ‘I diluted the research into two, three papers rather than one paper, you have to do that . . . it’s damaging in this way in the sense that it’s encouraging us to dilute the content of our work. You could have one very good paper. No, go for three mediocre papers.’

A male professor provided a rationalisation of how salami slicing occurs. He said: ‘They are kind of companion pieces. That’s how I would think about it. They’ve got a data set or whatever they have done, a series of interviews, and then rather than saying “Okay, can I somehow distil this into one piece that really presents what I consider to be the most interesting findings?” they’ll say, “Well, actually no, there’s this angle here, and then there’s another angle here, and there’s another angle here”, and so on, so they can get four or five or three things out of one and certainly, you see that going on. Whether that’s salami slicing, I suppose it’s a version of it.’

Recycling data is similar to but different from salami slicing. When academics recycled their data, they employed the same data used for other publications to generate additional publications by reusing and repackaging existing data in a different way. A female professor explained: ‘There are different publication games, there is using data more than once, squeezing it up, reusing it, which I think is wrong because it makes it look as if there are two different papers, two different sets of data supporting this.’

Another male professor described the popularity of recycling data and explained how this practice did not support the advancement of scholarship. He stated: ‘It may be basically reporting the same study in a slightly different way in another journal. These are things that don’t ultimately add much to the pursuit of knowledge or science but simply for someone to get more publications in better journals. There’s a lot, a lot of that around.’

Generally, when academics recycled their data, they sent their papers to different journals to disguise the similarities between papers so that their chances of getting published and attaining their performance targets were increased. Academics reported that because their universities quantitatively measured their publication outputs, they had to focus on publishing enough papers to meet their performance targets, which also enhanced their universities’ publication profiles. However, they did so at the expense of other important research goals that are not easily measured; for example, developing scholarship, doing innovative research and making noteworthy contributions to their field. These types of behaviours are similar to the notion of output distortion discussed by Bevan and Hood (2006) (see definition above).

On the other hand, a small number of academics explained that some of the behaviours listed here as examples of gratuitous proliferation enhanced the quality of their papers. For example, they sometimes had to divide their research into different papers because journal editors and/or reviewers asked them to concentrate their current paper on a specific segment of their work. It was acknowledged that this was sometimes an appropriate division of their work as it enabled them to produce more focused research papers. We distinguish this from gratuitous proliferation, which involves a decision at the outset to create more than one paper from research that could be presented in a single paper, in order to meet performance targets that require the publication of a number of papers.

Hoarding performance

A key aim of PM is to enable organisations to collect performance information about employees to better manage their performance (Aguinis and Pierce, 2008). Academics knew they were expected to continually publish papers in top journals and would likely be rewarded for doing so or otherwise penalised. Consequently, they often responded by engaging in a practice we term hoarding performance. Hoarding performance can be viewed as desirable in that it helps academics to meet their future performance targets but undesirable because it motivates them to conceal their work and curtails the advancement of scholarship.

When academics had more papers ready or nearly ready for publication than was required by their PM assessments, they sometimes did not disclose that information in their current performance assessment so that they had enough output to present in their next assessment. For example, academics chose when to submit their papers to journals based on whether they had achieved the REF requirements. When their REF goal was reached, they banked any surplus papers, typically by delaying publishing them. A male professor noted: You need to make sure there’s stuff in the pipeline that’s going to actually appear before the REF. And some people bank papers. If they’ve got a lot of papers from the previous REF, they’ll keep some in reserve to make sure that they’ve got some for the next REF.

In their performance reviews, academics also set goals that were almost or already met. A female senior lecturer explained: I’m very strategic and I’m not ambitious. I write objectives that I’m almost sure I have almost completed. So the appraisal is in September, I put things that I know quite well that I will have completed by December. Just in case there are delays, I know that I will still have nine months to complete.

Another male senior lecturer who followed a similar approach said: ‘In that review, what I’m trying to do is to describe things which I’m planning to do or which I’m already close to finishing hopefully in a way that they would look at least moderately challenging goals.’

Hoarding performance enables academics to provide a regular supply of publications and so helps them to meet their current and future performance targets. However, delaying submission until the next REF may prevent new and useful knowledge from being accessible to others in the available literature and may thereby limit the advancement of scholarship. Withholding performance information has been highlighted in research on dysfunctional work behaviours and it generally refers to instances when employees deliberately conceal performance information, benefiting themselves while disadvantaging the employer (Warren, 2003). Hence, withholding information has been negatively viewed in the literature.

Academics reported concealing their goals because they could not predict whether their papers would be positively received by journals or whether they would have enough output for their next assessment. To guard themselves against unsuccessful publication attempts and missing their targets in what they perceived to be rigid PM systems that emphasised meeting performance metrics over scholarly value, academics felt they had to make use of the information asymmetry between them and their managers, who were usually unaware of the progress of academics’ work. This information asymmetry has been an important criticism of PM systems in public services (Jackson, 2011).

Hoarding performance has some parallels with what Bevan and Hood (2006) described as the ratchet effect in the Soviet Union (see definition above). As in the Soviet Union, PM systems in the sampled universities involved a review of past and future performance. However, unlike employees in the Soviet Union who reduced their performance after attaining their goals, participants in the sample hoarded their performance so that their performance would not be poorly rated if their future goals were unachieved.

Overall, academics felt that publishing in top journals was not entirely within their control and so hoarding performance was used as a way of responding to their universities’ demands to regularly get published in top rated journals across ongoing assessment cycles.

Collusive alliances

Employees working collectively to attain organisational goals is important for the functioning of organisations. The assessment of team performance is usually accompanied by an assessment of individuals’ performance and their contribution to their team (Aguinis and Pierce, 2008). When employees disproportionately contribute to a task, the credit received or lack thereof should be commensurate with their input. In our sample, we found that academics sometimes made arrangements to benefit from each other’s work by claiming credit as contributing authors of papers to which they had not or had minimally contributed. While this practice enables academics to meet the demands of their PM systems to publish different papers in top journals, it is undesirable because it conflicts with core academic values such as integrity and transparency. We term this practice collusive alliances.

In the interviews, academics were generally in favour of collaboration. One reason given was that they found PM goals challenging and working with co-authors helped to surmount publication difficulties. A male professor explained: ‘I think it is very difficult (the REF goal). For me, the only way I can do something like that is because I work with other people and I have done historically.’ However, on occasion collaboration turned into collusion when academics either did not make any contribution to papers in which they were named as co-authors or contributed so little that co-authorship was viewed as unearned credit. This practice helped academics to boost their publication records. A male professor said: Quite a lot of people seem to be moving towards more collaborative publications, which is good when they’re genuinely collaborative publications because it’s a team effort but sometimes you wonder whether the collaborative authorship isn’t a little artificial. People plotting, thinking, we work in three different universities, therefore our articles can be counted in three different REF submissions.

A male professor also described how his colleague co-authored a paper where she made no contribution other than taking it to print: There are people that are professors – I’m telling you this because I know – that have carried the paper for printing and put it in. In a team of five people that have published 10 articles and he or she is the fifth author and basically was carrying the paper for printing, and that’s a professor, and I know a few of them.

Collaboration also took place between appraisers and appraisees during the performance reviews but was conducted in a manner distinct from the way such collaboration is prescribed in the literature. In theory, although rarely in practice, collaboration in performance reviews involves a manager objectively assessing their appraisee and rewarding them accordingly (Murphy and Cleveland, 1995). However, in the interviews, we found that appraisers colluded with appraisees by, for example, showing them how to complete their annual reviews without necessarily seeking to improve performance. A female lecturer said: She (her appraiser) gave me some tips on wider engagement just sort of saying things, it is a bit like a wishy-washy goal . . . She just told me to put down things that I would be doing anyway but put it as a goal. I think that was useful.

Overall, the collusive behaviour mostly took place because many appraisers said that they did not believe in their PM systems yet had to conduct performance reviews they opposed. Appraisers also reported that they wanted to help their appraisees navigate what they perceived to be challenging PM systems and so they offered appraisees guidance on how to meet their performance goals. In terms of publications, some academics stated that they found academic work a solitary activity and preferred to work collectively. These kinds of collaborations that focused on genuine teamwork and development were positively perceived by participants and are not what is described here as collusive alliances.

Playing safe

PM systems are often thought to encourage employees to be innovative in their work and help them to maximise their contribution to organisational goals (Aguinis, 2014). In the interviews, academics reported having to become risk averse and less exploratory in their research in order to get papers published, which was viewed as undesirable because it hampered their ability to do innovative research. We describe this practice as playing safe.

A number of academics thought that PM systems constrained their ability to do quality exploratory research because they had to ensure that their papers fitted the preferences of highly ranked journals. A female senior lecturer explained: ‘The problem with the publication game is that you always write papers and topics in a way that would suit any of the three and four star journals in the ABS list.’ Another male professor described how in his view, academics do research in the wrong order: We observe gaming. I think the REF has changed the way that research is done so again research now is governed by rankings of journals in the sense that rather than write a paper and then look for a journal, you look for a journal and write a paper so it’s the other way around.

Academics were also less willing to learn new skills or develop existing ones. When they lacked a skill, they collaborated with others who had it. A female reader explained: I don’t come from a very quantitative background so four years ago I did a summer school in econometrics because I wanted to learn. I think that’s wrong. That’s the wrong thing to do because it develops my expertise as a researcher but it’s not rewarded, it’s a route that is not rewarded. The most efficient thing to do is to team up with someone who is in econometrics and do things together rather than learn it yourself. So I think this is the most efficient way to go about it, about this pressure and to meet the requirements.

Academics also did not take risks in performance reviews, often minimising their goals and phrasing them in broad terms on their review forms. For example, if an academic aimed to publish a paper (the goal), the written goal might be to submit a paper for publication (i.e. the step leading to the goal). A female professor group leader explained: You keep them open [goals] and then that’s where you start taking it as a useless game. Somebody [the management] comes with something stupid like ‘you need to set up goals’ and then we all know that it’s stupid but we don’t say that it’s stupid and we pretend that we do it.

Academics set broad goals because, in addition to being unable to ensure successful publication of their work in top journals, they did not trust PM systems. A female senior lecturer said: The person who chairs the appraisal and the head of school have to tick boxes and say she’s met the objectives. But this information is not confidential to the department - it goes to human resources. So if you haven’t met the objective, it’s stored somewhere in the human resources office.

Another female professor explained that she avoided setting specific goals in order to account for all eventualities. She said: ‘I try to be as non-specific as possible so that I’m not creating any hostages to fortune if I don’t achieve them’.

Academics believed that if they missed their goals, this information would be documented and could be used against them should their institution wish to do so. Broad goals were viewed as easier to achieve, an outcome that goes against the tenets of goal setting theory, which exhorts employees to have specific, difficult goals at work (Locke and Latham, 2006), and also goes against the view that appraisees need to set stretched goals that motivate improved performance (Murphy and Cleveland, 1995).

Generally, academics’ scepticism about PM motivated them to take a cautious attitude to PM systems; for example, in the way they presented their goals in performance reviews and in the way they managed risks associated with publication by doing research that they considered less adventurous but more likely to be published and so meet performance targets.

Cooking the books

It is widely acknowledged that PM systems are powerful tools by which organisations can inculcate their goals and values in employees and make positive changes to employee behaviour (Pulakos and O’Leary, 2011). However, our interviewees stated that PM systems sometimes encouraged academics to resort to research practices that we term here cooking the books.

Interviewees reported being aware of academics fabricating, misrepresenting or falsifying their research findings to get published in top journals. Fabricating data is defined as ‘making up data or results and recording and reporting them’, whereas falsifying data is defined as ‘manipulating research materials, equipment, or processes, or changing or omitting data or results such that the research is not accurately represented in the research record’ (Bedeian et al., 2010: 716–717).

As in the literature, falsifying or fabricating data was viewed by the interviewed academics as bad research practice. A female professor stated: ‘I know there are people who actually fake data and do all kinds of questionable stuff. I can’t name all the names any more but there have been a few high-profile cases.’ However, despite participants saying that such activities often took place, not many reported having seen their colleagues falsifying or fabricating data, or having heard of their colleagues being caught doing so.

A male professor gave examples of how researchers can manipulate their data. He stated: ‘Other game playing is more at a micro level around publication, like HARKing and dropping participants or increasing participants in certain kinds of studies.’ HARKing (hypothesising after the results are known) refers to the practice of academics changing their hypotheses after data are analysed (Kepes and McDaniel, 2013). Another female lecturer criticised PM systems for motivating academics to manipulate their data. She said: When I analyse data, it would be very, very easy for some cases to go missing and you have totally different findings. So while I then put the data on the side and say, oh I can’t publish my findings, if you would delete a few cases, something totally different would come out. I don’t think that would be ethical but I think the REF would encourage that because the REF obviously they want you to publish a lot so you might instead of not using that data, you might.

Consequently, academics had become sceptical about published data. A male professor explained how PM systems encouraged academics to become ‘selfish’ and publish papers that did not truly represent their data. He stated: One thing is people may be trying to make a story when there isn’t one in a paper and I’m not talking about deliberate faking or anything like that. I’m talking about maybe just reporting the results that are favourable to the story rather than the others, which is something that I think is quite common. It’s not good science and I’ve seen it in my department of course. I’m also an associate editor at a journal and it can be spotted sometimes but not always when people submit articles.

At its extreme end, cooking the books is similar to the concept of cheating (Pollitt, 2013). However, as was reported in the literature, we found that cooking the books took different forms. Academics misrepresented their data using a range of techniques, some of which are subtle forms of research misbehaviour, and therefore whether certain behaviours could be categorised as definite academic misconduct is debatable (Butler et al., 2017). For example, there is an ongoing debate in the literature about how and when the practice of HARKing is acceptable and unacceptable (Tourish and Craig, 2020). While HARKing is to some extent deceptive, as without any mention to the contrary readers are likely to infer that the authors developed their hypothesis prior to data analysis (Tourish and Craig, 2020), both the literature and some of our participants showed some reluctance to describe it as cheating (Butler et al., 2017). For example, as is shown in the quote above, our interviewee describes the practice of distorted reporting as ‘not good science’. Labelling distorted reporting as such indicates the problematic nature of this behaviour because certain academics may see it as legitimate. This perhaps also explains why researchers continue to find these kinds of behaviours in abundance in universities (Bedeian et al., 2010; Graf et al., 2019).

Overall, academics found performance goals hard to consistently achieve and reported that PM systems gave academics an incentive to misrepresent their data. However, it is also possible that because what is permissible and impermissible is not always clear in research, academics might unknowingly engage in what is described here as cooking the books.

Having categorised and explained gaming, we now articulate the PM practices that we found to be associated with gaming.

How PM practices link to the gaming types

In the interviews, academics repeatedly referred to the PM practices of goal setting, performance measurements and rewards as incentives for gaming. They are presented separately below, although in the interviews, participants often discussed goals, measurements and rewards together in relation to gaming behaviours.

Goal setting

Goal setting across the universities was primarily operationalised in the form of very specific and measurable goals typically requiring four research outputs in top journals for the REF. Publishing papers of the calibre set by their universities was considered by academics to be an important goal that significantly determined career trajectories and institutional success. Academics often labelled themselves as ‘REF-able’, meaning REF returnable or ‘Non REF-able’, meaning non REF returnable. A male reader stated: We’re talking about REF REF REF. It’s become a fruit or vegetable that you take all the time. If you really care about your job, you feel that you need to do what’s required and have to contribute to the institution.

Performance measurement

Goal setting was followed by universities monitoring academics’ performance. Academics’ performance was measured at different points; for example, in annual performance reviews, during the REF cycles and informally through occasional meetings. For example, in one university, in addition to annual reviews, academics had a one-to-one meeting every year with their director of research in which they had to demonstrate that they were on track to meet their departments’ publication goals. A female senior lecturer explained how doing quality research was time consuming and performance measurements did not account for the time the publication process can take. She said: ‘Everything is measured in one year, four years. The REF is every six years, the appraisal is every year, so everything is really short-term and this is not good for academia when things need a long time to develop.’ Academics also believed that their universities strictly assessed their performance based on the performance measures they set. Hence, faced with great pressure to meet university performance measures, academics sometimes turned to gaming.

Rewards/penalties

Rewards were a critical part of the academics’ PM models. When academics met the performance measures in their institution, they were able to reap greater rewards. Universities rewarded academics in different ways; for example, some of them attached financial rewards to the outcomes of performance reviews, others rewarded academics through promotion and others through more favourable workloads. When academics’ performance was measured unfavourably, a range of ramifications typically followed. For example, in addition to rewards being withdrawn from academics, they also faced a stagnant career, the prospect of higher teaching loads and greater administrative duties, and the possibility of contract termination. A female lecturer said: The university here sent this really interesting email where they basically started congratulating us on doing such a great job so far and performing so well and kind of meeting the REF requirements from the last round. And then kind of wove in the fact that we’re introducing new performance management systems and people who don’t comply, they’re going to be on a capability procedure, which is a very serious thing to highlight basically . . . when you use the words capability procedure, you’re very much introducing the conversation that could eventually lead to termination.

In another university, a female senior lecturer stated that her university punishes academics who do not meet their research targets by not allowing them to earn extra income. She said: Part of our job role is if we are up to our workload then we choose to do additional teaching . . . then we get paid for that teaching on top of our salary. If we choose to, we don’t have to but we can choose to but one of the things they’ve done recently is that they’ve determined that if you don’t perform to an adequate standard in the research exercise, they’re not going to allow you to do any additional teaching so there’s this kind of almost a punishment really for not publishing quickly.

Academics were therefore aware of the implications of not performing well in PM systems. While they did not view academic gaming as appropriate, they still devised a range of gaming responses. The existing PM model was strongly criticised by academics because they viewed PM practices as blunt instruments that were insensitive to the nature of academic work and that also motivated academic gaming. While this approach to rewarding or punishing performance does not excuse gaming, it provides a logical explanation as to why some academics may engage in gaming. Graf et al. (2019) argued that gaming occurs because PM systems reward academics for meeting performance targets but do not reward them for engaging in ethical conduct.

Differences in gaming between universities

While there were many similarities in the way the sampled universities assessed academics’ performance, there were some differences in their overall strategies. As our aim was to examine how academics on the receiving end handled PM systems, we cannot determine whether there were major differences in the way university executives wished to implement the systems or comment on the way they wanted academics to understand them. However, we can report on how academics perceived the PM systems used by their institution.

All the universities had PM systems in place but the extent to which the systems were aggressively enforced varied across universities and this, in turn, influenced gaming. For example, universities differed in the extent to which they tolerated academics missing performance targets and gave them other opportunities to address the perceived performance deficiencies. Interestingly, we did not find major differences in the way academics responded to PM systems in these various institutions because academics in all the selected universities aspired to meet their PM goals to provide highly rated papers for the REF. Even in places where academics felt that their institution might offer them the opportunity to address underperformance (for instance, by extending their probation period if their position was yet to be confirmed), the uncertainty of what could happen to their position strongly motivated them to meet their targets. As a result, we found that academics in all the sampled institutions reported engaging in and/or witnessing gaming in their institutions. However, overall, gaming was more pronounced in universities that were heavily metrics driven and aggressively enforced their PM systems relative to those that did so less. Specifically, the extent to which universities implemented the PM practices of goals, measurements and rewards influenced academics’ propensity to engage in gaming. This is because it was made clear by these institutions that meeting performance targets was expected and academics had very little doubt regarding the undesirable implications if their performance targets were not achieved.

On the other hand, institutions that tended to have higher performance expectations and rigid PM systems in place also provided more research related support to academics. For example, some institutions provided more funding to support academics’ research, others provided additional financial rewards (to be added to academics’ research pots) for those who were able to publish in certain journals, others linked the outcomes of annual reviews to financial rewards and others used all or some of the above. However, while the possibility of gaining rewards was generally positively received by academics, the availability of rewards coupled with the desire to avoid adverse consequences motivated gaming.

Discussion

This article presents a systematic account of the gaming behaviours displayed by academics in response to PM systems within an elite group of British universities. We organise our discussion around the main contributions of the article: offering a refined analysis of gaming behaviours, exploring the conditions under which gaming is more likely to arise, discussing the perceived desirability and undesirability of gaming and providing further evidence of a changing academic ethos. This is followed by the practical implications of the research.

First, we found important distinctions in the way academics game PM systems. Making such distinctions is important because, as shown above, the systems gave rise to different gaming behaviours and so unless a more specific understanding of gaming is achieved, it will remain difficult to understand how gaming manifests in organisations and identify corrective actions to prevent it. Gaining a nuanced understanding of various types of employee gaming is also important because some of the gaming types constitute more significant breaches of ethical research conduct (‘cooking the books’) than others (‘playing safe’). For example, certain gaming behaviours if exposed could have more serious legal and/or reputational consequences for employees and their universities whereas others could simply be described as what some researchers call ‘grey areas’ or ‘questionable practice’. By articulating the main features of gaming, this research contributes to the small set of studies detailing gaming in PM systems (e.g. Bevan and Hood, 2006; Graf et al., 2019; Kelman and Friedman, 2009).

An important point to note is that judging the gaming behaviours we presented above is not always clear cut. While we believe we have captured the sentiments of our participants, our labelling of the gaming types could be couched in more positive terms depending on whose perspective is taken. For example, employers and employees could view what we described as collusive alliances as teamwork, playing safe could be seen as managing risk, hoarding performance could be seen as dealing with uncertainty and performance outcomes beyond one’s control, gratuitous proliferation could be seen as producing more focused papers and cooking the books could be seen as dealing with the PM demands to continuously achieve hard goals. Establishing the extent to which a behaviour breaches ethical values/codes of conduct is therefore likely to be contested and dependent on one’s viewpoint.

Second, this article contributes to research on the shortcomings of PM systems. Our findings are in line with the view that implementing fool-proof PM systems that lead to their intended outcomes is difficult to achieve (Pulakos and O’Leary, 2011). However, unlike previous studies that focus on identifying deficiencies in the design and implementation of PM systems, this research explores the propensity of the systems not to work as intended in practice by leading to employee gaming. Our findings show that the extent to which organisations pursue PM in overly intensive ways increases the likelihood of gaming. In our data, overly intensive was characterised as goals verging on being beyond the reach of most academics and excessive rewards/penalties (e.g. promotion or the threat of job loss).

These findings contribute to calls to better understand the relationship between PM practices and inappropriate work behaviours (Ordóñez et al., 2009). A number of studies have argued that goal setting can have undesirable effects as employees’ overriding concern to achieve their performance goals may interfere with ethical conduct (Barsky, 2008). Barsky (2008) argued that an organisation that aggressively implements goal setting can also be interpreted by employees as having given them a licence to engage in suboptimal behaviours.

Third, our findings reveal why gaming behaviours are both desirable and undesirable to employees and organisations, depending on the criteria used. In showing that gaming helps to meet certain performance goals but violates other standards, this article offers a more nuanced analysis beyond considering gaming as simply undesirable to one or both parties (e.g. Smith, 1995; Franco-Santos and Otley, 2018). Employees who engage in gaming may be able to meet their performance goals and in this case gaming could be seen as desirable but if gaming violates academic values, it could also be seen as undesirable. As goals measured by the organisation are clear and specific and are attached to rewards/penalties, whereas academic values such as transparency, innovation and advancing scholarship are unlikely to be measured, this may explain why gaming behaviour is inadvertently incentivised in organisations.

Given that gaming brings some positive outcomes to organisations, one may question the extent to which it benefits or harms organisations. In our sample, when academics successfully gamed the systems and published top rated papers, both academics and their institutions benefited but the behaviours academics engaged in to achieve these beneficial outcomes were problematic. Hence, the benefits accrued from individuals meeting organisational targets through gaming need to be balanced against the possible undesirable outcomes that may follow from the means used to achieve targets. Whether gaming is ultimately beneficial or harmful to organisations will be determined by factors such as whether the undesirable consequences of gaming (e.g. reputational damage) ever become revealed and perhaps also the kinds of gaming behaviours employees perform because as we noted above, certain gaming behaviours if exposed could have more serious consequences than others for employees and organisations.

In our data, gaming was not an unknown phenomenon to management. However, as Bevan and Hood (2006) noted, while the presence of gaming is widely acknowledged, it is not necessarily in the interest of decision makers to prevent it. It is also likely that those implementing the systems have some degree of influence on their design. For example, when a government imposes performance targets on an organisation, it may be more concerned with seeing that the targets are met than with questioning the means by which they are met.

Fourth, we contribute to PM research in the higher education sector. The gaming behaviours displayed by academics to do well in PM systems challenge existing arguments about academic work being a vocation pursued for its intrinsic value (Alvesson and Spicer, 2016). This is particularly relevant in light of evidence showing how academics responded to managerialism in business schools by placing career advancement over academic values (Clarke and Knights, 2015) and increasingly resorting to gaming (Alvesson and Spicer, 2016). Furthermore, academics’ gaming responses indicate some form of conformity to PM systems, suggesting a change in the ethos and values of academics (Kallio et al., 2015).

This research has a number of practical implications. Managers need to consider both employee output and how the output is achieved (Pulakos, 2009). This is not a new observation; however, we found that employees meeting performance targets often took precedence over ethical conduct and we therefore call for practitioners and researchers to consider both the outcomes and the means employees use to achieve them. This might necessitate organisations monitoring how employees achieve their targets and proactively searching for anomalies in behaviour that might indicate gaming. We do not suggest that organisations add more targets to the means by which employees reach their targets as this may lead to an even greater incentive to engage in gaming. We simply suggest that where an organisation suspects gaming behaviour is taking place, it takes steps to rebalance its PM system away from a narrow focus on performance metrics (which from our analysis was found to motivate gaming) and instead to encourage core organisational values, academic in this case, such as autonomy regarding where one publishes and an appreciation of an article’s intellectual qualities rather than using the journal in which it is published as the marker of quality. Managers could also consider focusing on developmental goals for employees rather than adopting narrowly defined metrics because, like other research (Schweitzer et al., 2004), we found that these kinds of metrics/goals can lead to gaming.

The findings are relevant to universities and other organisations in the public and private sector in western economies. While the UK is notable for its excessive PM systems, there are important parallels in the management of public institutions across western countries (Kallio et al., 2015). Furthermore, the gaming behaviours discussed in this article could similarly be performed by employees in the private sector. Hence, managers in various kinds of organisations could make use of the gaming analysis presented in this article.

Limitations and suggestions for future research

Our article focused on the PM systems used in a group of research intensive universities. However, many UK universities describe themselves as more teaching focused (Shattock and Horvath, 2019). These universities tend to place less emphasis on academics’ research outputs relative to research focused universities and so have different PM systems. Our research was also limited to a specific group of research universities that scored highly in the REF 2014 and so it is likely that they have more stringent PM systems than other research universities. Future research could compare the PM systems used by different groups of universities and how different PM models may relate to gaming.

In the sampled universities, gaming was widely discussed by academics. However, this might be owing to the period in which we collected our data, which was around the middle of the REF cycle. It is likely that PM targets were pursued more intensively by universities during that period and academics were more concerned with their publications as they needed to allow two to three years for their papers to get published in top journals by 2020 (the end of the REF cycle). A longitudinal study could interview the same group of academics across the REF cycle and see whether their responses to PM systems vary over the cycle.

This article focused on academics’ research performance. However, academics perform many other important tasks such as teaching, external engagement and citizenship behaviours. The sampled academics sometimes also held other positions (e.g. directors of research, heads of subject groups, mentors and appraisers) so, as Deem et al. (2007) noted, academics can be faced with conflicting priorities and demands. As a result, some academics reported sometimes having to make trade-offs and prioritise tasks they deemed important. Future research could investigate whether conflicting work demands affect academics’ propensity to engage in gaming, not just in research but also in other areas (e.g. teaching).

Academics sometimes reported engaging in gaming themselves, sometimes reported seeing colleagues doing it, and sometimes were less clear about whether or not they engaged in gaming. Social desirability bias may have led academics to report others engaging in gaming rather than themselves. If responses were subject to social desirability, this would suggest our findings under-represent the scale of gaming at the sampled universities. Like other qualitative studies (e.g. Graf et al., 2019), our research is not best suited to determine precisely how much gaming behaviour is taking place or the extent to which it is attributable to organisational factors versus individual factors. Future research could use other methodologies to assess the scale of gaming, such as anonymous questionnaire designs, and quantitatively examine causal relations between organisational and individual factors and their links to gaming behaviours.

Conclusion

Gaming behaviours assisted academics in accomplishing their performance targets. However, academics considered gaming to be undesirable as it conflicts with academic and ethical values and also presents a reputational risk to employees and institutions. Despite warnings, the popularity of PM systems is on the increase and organisations continue to perceive them as a means of reaching ambitious business goals. Therefore, it is important that the research community scrutinises PM systems and gives more attention to how they may inadvertently incentivise gaming.

Footnotes

Acknowledgements

We would like to thank our interviewees for being very generous with their time and taking part in our study. We would also like to thank the three anonymous reviewers for their input and helpful comments, and the editor, Melanie Simms, for her guidance and valuable observations.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.