Abstract

Digital work platforms are often said to view crowdworkers as replaceable cogs in the machine, favouring exit rather than voice as a means of resolving concerns. Based on a qualitative study of six German medium-sized platforms offering a range of standardised and creative tasks, we show that platforms do provide voice mechanisms, albeit to varying degrees and at varying levels. We find that all platforms in our sample enabled crowdworkers to communicate task-related issues in order to ensure crowdworker availability and output quality. Five platforms proactively consulted crowdworkers on task-related issues, and two also did so on platform-wide organisation. Differences in the ways in which voice was implemented were driven by considerations about costs, control and a crowd’s social structure, as well as by platforms’ varying interest in fair work standards. We conclude that the platforms in our sample equip crowdworkers with ‘microphones’ by letting them have a say on workflow improvements in a highly controlled and easily mutable setting, but do not provide ‘megaphones’ for co-determining or even controlling platform decisions. By connecting the literature on employee voice with platform research, our study provides a nuanced picture of how voice is technologically and organisationally enabled and constrained in non-standard, digital work contexts.

Introduction

Over the past decade, outsourcing tasks to online crowds via digital platforms has become increasingly common. Digital work platforms bring together clients needing to solve a problem with a crowd of workers willing to attend to it (Howcroft and Bergvall-Kåreborn, 2019; Kaganer et al., 2013). By matching and administering the labour capacities of geographically diffuse crowdworkers, digital platforms play a central role in coordinating labour markets, often on a global scale (Kenney and Zysman, 2016; Lehdonvirta et al., 2018). Despite not being formally classified as employers (e.g. Prassl and Risak, 2016), platforms significantly shape the work relations between clients and crowdworkers by setting rights and obligations, defining task distribution, and determining whether and how crowdworkers can communicate with each other (Fieseler et al., 2019; Gandini, 2018; Schörpf et al., 2017; Wood et al., 2019). Scholars in various research fields therefore suggest studying crowdwork as a new form of ‘non-standard’ work (Duggan et al., 2020; Kalleberg and Dunn, 2016; Kuhn and Maleki, 2017) and considering platforms as quasi-employers (De Stefano, 2016).

Crowdwork is often associated with problems such as low wages, economic and legal insecurity, repetitiveness, and a lack of communication, representation and collective organising (Berg, 2016; Fieseler et al., 2019; Irani and Silberman, 2013). Suggestions for improving these conditions revolve around the need to strengthen worker representation and voice (Johnston and Land-Kazlauskas, 2018; Vandaele, 2018), i.e. providing opportunities to speak up and seek change, rather than silently accept, or exit from, an objectionable state of affairs at work (Farrell, 1983; Hirschman, 1970). In the employment relations literature, however, voice is tied not only to notions of workplace democracy and worker empowerment, but also to employers’ strategic interests such as enhancing worker motivation and preventing turnover (Marchington, 2007; Spencer, 1986; Wilkinson et al., 2013). Both of these issues matter for platforms which, despite often drawing on a global labour pool, face turnover problems and challenges in motivating platform workers towards high performance (Acar, 2019; Deng and Joshi, 2016; Langner and Seidel, 2015; Zheng et al., 2011). Although extant literature has examined the role of regulators and trade unions in facilitating crowdworker representation and voice, few studies examine why and how platforms themselves may integrate voice channels into their governance systems and technical infrastructures. The studies that do exist focus either on the lack of voice opportunities on ‘micro task’ platforms (e.g. Irani and Silberman, 2013) or on ways in which crowdworkers can be motivated and retained in more creative, ‘macro task’ contexts (e.g. Boons et al., 2015).

Against this backdrop, this article examines why and how different kinds of platforms provide channels for crowdworker voice. We draw on case studies of six German digital work platforms covering a range of micro and macro tasks, using data from covert participant observation, document analysis and interviews with CEOs or community managers. We find that all platforms create voice regimes, albeit varying ones, thereby challenging the common view that micro task platforms in particular ignore crowdworkers’ concerns. Dominated by communication (crowdworkers can report issues to the platform) and consultation (crowdworkers can give input on issues defined by the platform), these voice regimes allow crowdworkers to address task-related issues and sometimes issues related to platform-wide work organisation, but not issues concerning corporate development or strategy. Analysing voice regimes in terms of their governance decisions and technological building blocks, we conclude that platforms give crowdworkers microphones, i.e. limited voice on pre-defined issues that can be muted, but refrain from giving up control by providing megaphones, i.e. enabling crowdworkers to speak up freely and co-determine platforms’ decisions.

These findings contribute to current debates in the voice literature as well as the platform and crowdwork literatures in the following ways. First, by bringing the voice literature into debates on digital platforms, we are able to draw a more refined picture of crowdworker voice regimes: rather than crowdworker concerns being taken seriously only by certain platforms, such as those in creative macro task contexts (e.g. Boons et al., 2015; Gol et al., 2019; Jabagi et al., 2019; Langner and Seidel, 2015), crowdworker voice is present but limited in degree and level on all platforms we examined. Second, responding to calls in the employment relations literature to theorise and empirically research the challenges that digital work platforms pose for employee voice and participation (Wilkinson et al., 2018, 2020), we detail the ways in which voice in digital, non-standard work contexts is organisationally and technologically provided, yet constrained. Third, we contribute to growing debates on crowds’ social structure and voice dynamics (Dahlander and Frederiksen, 2012; Gegenhuber and Naderer, 2019) by identifying two potentially conflicting approaches. On the one hand, platforms understand that crowdworkers do not necessarily speak with one voice because of their varying interests. To avoid reacting solely to a loud minority, platforms use tools such as surveys to capture a broad range of voices. On the other hand, some platforms increase heterogeneity by establishing support crowds of loyal crowdworkers, who are likely to have a louder voice as they ‘reside’ closer to platform management.

Worker voice – and why platforms might care about it

In his exit-voice-loyalty framework, Hirschman (1970: 30) defines voice as ‘any attempt at all to change, rather than to escape from, an objectionable state of affairs’. Farrell (1983) argues that this framework can illuminate how workers organise their behavioural options when confronted with dissatisfying situations at work. Dissatisfied workers can exit the firm, stay loyal (or neglect the issue) for a period of time, or opt for voice: speaking up and attempting to change an employer’s practices, policies or outputs to reduce their dissatisfaction. Voice can thus be an important mechanism for organisations to retain workers (Morrison, 2011; Spencer, 1986). Mechanisms, structures and practices related to voice endow workers with varying amounts of knowledge and power (Wilkinson et al., 2013). Crowdworkers find many voice opportunities in external forums (Irani and Silberman, 2013; Salehi et al., 2015; Wang et al., 2017), which act as bottom-up voice channels and may substitute for the lack of top-down channels provided by a platform. In this article, however, we focus on voice opportunities provided by platforms themselves.

Voice options may vary by degree, i.e. the extent of worker influence they provide. The escalator model of Wilkinson et al. (2013) depicts degrees of employee participation ranging from simply being informed about decisions, through communication with and consultation by management, to co-determination and, finally, control. Each step indicates a distinct extent to which workers can become involved in work-related decisions. In adapting this model to the platform context, we are interested in four degrees of voice: communication denotes crowdworkers reporting issues to the platform, consultation refers to platforms gathering crowdworker input on pre-defined issues, co-determination denotes formal decision authority in certain areas, and control denotes exclusive decision-making power within specific domains. Voice opportunities also vary in terms of level: voice can affect decisions and processes at the task, department, firm or corporate level. As with degree, the level of voice reflects the extent of power that accompanies voice. In the platform context, this means that platforms can involve crowdworkers in decisions exclusively affecting the organisation of specific tasks, but also in those affecting the entire work organisation, or even a platform’s strategic direction.

In contrast to traditional employers that have enduring work contracts with their workers, platforms engage their crowdworkers in an open, flexible relationship with few ‘strings’ attached. Digital platforms cannot oblige crowdworkers to show up for work; they manage a large-scale and distributed workforce of crowdworkers who voluntarily choose to respond to a call based on a self-selection mechanism. From a legal viewpoint, platforms and crowdworkers engage in a series of spot contracts, whereby crowdworkers are classified as independent contractors (e.g. Prassl and Risak, 2016). Platforms extensively nurture this viewpoint by framing themselves as mediators between clients and crowdworkers (Healy et al., 2017). Although this situation does not oblige platforms to provide institutionalised forms of voice for crowdworkers, such as the works councils prescribed by some countries’ industrial relations laws, it does not preclude them from providing voice mechanisms voluntarily (Dundon et al., 2004; Spencer, 1986; Wilkinson et al., 2013).

Crowdwork platforms are commonly distinguished by the kinds of tasks they offer, ranging from routine, standardised support tasks requiring little or no prior knowledge (‘micro tasks’) to knowledge-intensive, creative tasks that require specialised knowledge (‘macro tasks’) (Brabham, 2013; Gerber and Krzywdzinski, 2019; Gol et al., 2019). To date, these contexts have largely been analysed separately, resulting in conflicting views regarding platforms’ disposition to provide voice channels for crowdworkers.

One stream of research invokes the metaphor of micro task platforms as ‘digital sweatshops’, arguing that platforms refrain from deploying voice mechanisms because they see themselves as neutral market agents that establish a distanced, technocratic relationship to crowdworkers. A prototypical example of this category is Amazon Mechanical Turk (AMT), which prioritises profit over working conditions (Deng and Joshi, 2016; Deng et al., 2016; Ellmer, 2015) and can ignore crowdworker relations owing to the ‘sheer size of the potential pool’ (Gol et al., 2019: 189; Howcroft and Bergvall-Kåreborn, 2019). Fieseler et al. (2019) report that crowdworkers using such platforms perceive themselves as having low influence, autonomy or voice.

Another stream of research revolves around innovation and creative platforms and discusses how platforms can sustain crowdworkers’ commitment and motivation, for instance through elaborate community management (Boons et al., 2015; Gol et al., 2019; Jabagi et al., 2019; Langner and Seidel, 2015; Meijerink and Keegan, 2019; Troll et al., 2018). This literature does not explicitly look at voice, but suggests that platforms do have an interest in actively engaging with crowdworkers, as the tasks concerned require specific skills and meeting clients’ needs is imperative. Assuming, then, that at least some platforms may be interested in providing voice channels for crowdworkers, how might platforms enable voice in technical and organisational terms?

How platforms manage voice: Voice governance and technical building blocks

The platform voice regime is a core instrument designed to directly and indirectly shape crowdworkers’ actions on the platform. We analytically distinguish between two mechanisms for establishing a platform voice regime: making organisational decisions regarding platform governance, and deploying technical building blocks in the IT infrastructure and interface (de Vaujany et al., 2018).

In general, platform governance encompasses activities for managing relationships between the platform and the crowd such as defining membership and career options (e.g. who can become a worker, as well as talent- and metric-based workforce structuring), distributing and evaluating tasks, setting incentive and feedback mechanisms (e.g. financial and non-monetary remuneration), and setting the general rules for the involved parties (e.g. clarifying crowdworker rights and responsibilities) (Forte et al., 2009; Kirchner and Schüßler, 2019; Kornberger, 2017; Malhotra and Majchrzak, 2014). In terms of governing voice, platforms can decide the degrees and levels of voice for different crowdworkers.

When platforms deploy technical building blocks, they construct the material foundation of digital work platforms and worker management (Leonardi and Rodriguez-Lluesma, 2013; Leonardi and Vaast, 2017). Although technical building blocks can also encompass a platform’s underlying hardware and software (such as servers or algorithms), we focus our analysis on building blocks that allow crowdworkers to ‘speak up’ and that manifest in parts of the platform accessible online via web-based interfaces (e.g. message boxes, rating systems, discussion forums, blogs, support chats, etc.) (Jabagi et al., 2019; Kornberger, 2017).

Governance and technological building blocks are tightly interwoven on platforms. Their mutual configurations may afford (i.e. enable courses of action) and constrain (i.e. prevent a particular action) agency (Faraj et al., 2011; Leonardi, 2011). Nevertheless, it makes sense to treat each as analytically distinct (Leonardi and Rodriguez-Lluesma, 2013), particularly when examining crowdworker voice. For instance, in deploying an open discussion forum as a technological building block, a platform grants crowdworkers a space to ‘publicly’ raise their voice and form opinions based on peer interaction. Yet whether and how a platform processes the forum input and discussion critically affects the scope and vigour of this opportunity as a voice mechanism. When a platform lacks the resources to closely attend to the forum, for example, issues raised by crowdworkers may remain unanswered (Dobusch et al., 2017).

Crowdwork platforms specialising in micro tasks can standardise their operations and leverage the efficiency of centralised governance and control. In such a system, interaction and communication take place between crowdworkers and the platform or clients, but not among crowdworkers (Gol et al., 2019). For instance, AMT deploys algorithms to structure and evaluate the crowd but made the governance decision to disregard community management (e.g. hiring sufficient staff to respond to crowdworkers’ concerns). It also lacks building blocks such as a live chat to contact the platform or other crowdworkers (Fieseler et al., 2019; Howcroft and Bergvall-Kåreborn, 2019; Irani and Silberman, 2013). By contrast, platforms offering macro tasks may decentralise governance to some extent. For instance, they might enable exchange amongst users by providing features such as commenting on others’ contributions, a community forum, or proactive community management to improve quality and increase identification with the platform (Boons et al., 2015; Gol et al., 2019; Langner and Seidel, 2015).

Methods

Research design and case selection

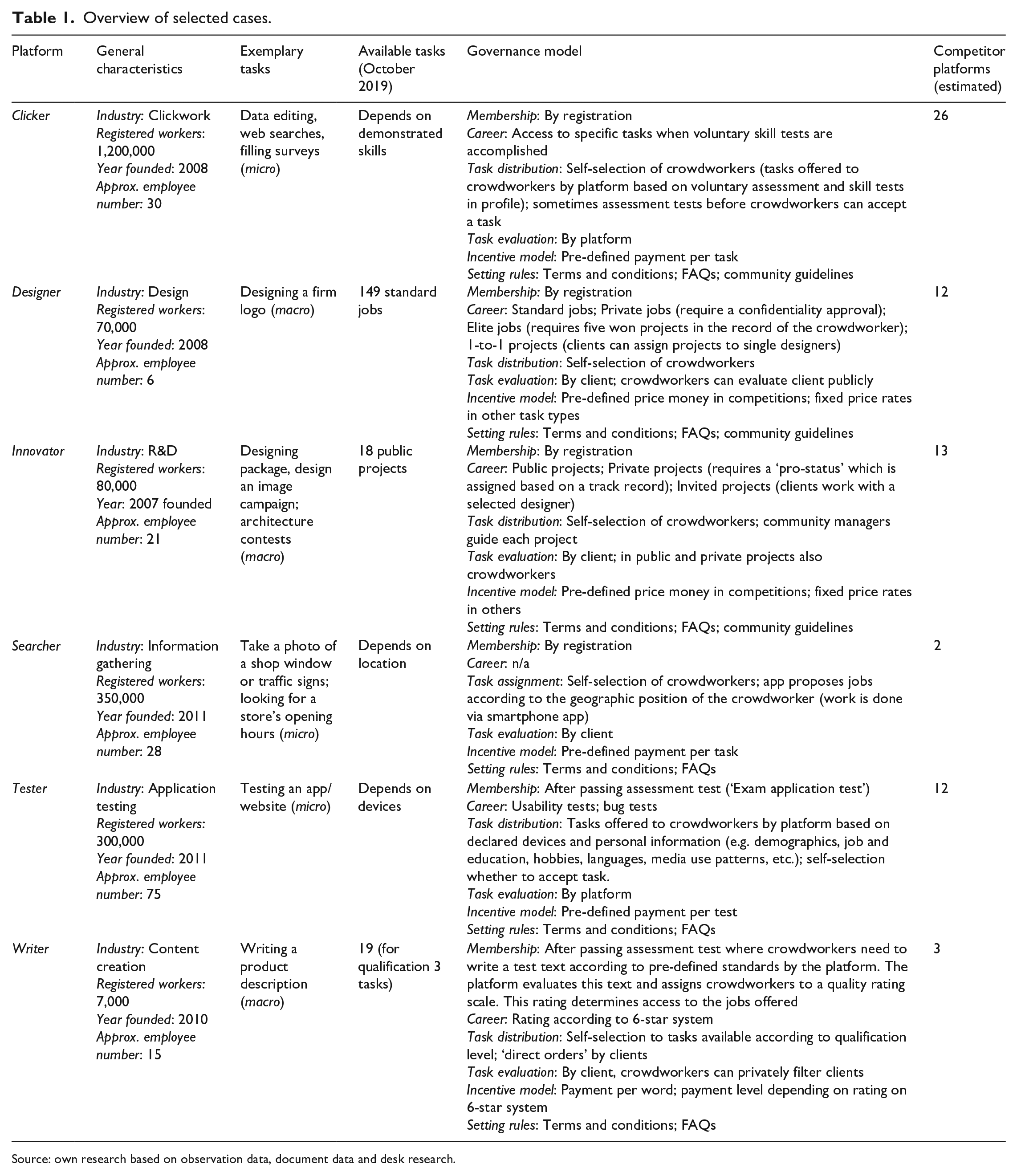

To analyse and synthesise similarities and differences in crowdworker voice regimes across various platforms, we deployed a comparative case study design (Eisenhardt and Graebner, 2007; Yin, 2014), with the platforms and their voice systems as the main units of analysis. We chose to study six crowdwork platforms in Germany (Table 1 offers an overview of the selected cases; note that we use pseudonyms for the platforms). Two considerations guided our case selection. First, we aimed to keep the platforms’ institutional context constant, because macro-level institutions are paramount in enabling and shaping the dimensions of voice (Kaufman, 2015; Wilkinson et al., 2013). Germany, for instance, has a strong tradition of employee voice regulated in the Betriebsverfassungsgesetz (industrial relations law), which guarantees, amongst other stipulations, the right to constitute a works council with influence on a range of corporate issues. Although these rights do not apply to crowdworkers, this institutional environment might still exert a normative influence on platforms. Second, given that extant literature suggests that voice mechanisms differ according to platforms’ task complexity, we aimed to capture this variance by examining platforms ranging from micro work to macro work. Searcher and Clicker are at the micro work end of the spectrum, followed by Tester, which offers somewhat more complex tasks. Innovator represents the most creative end of the continuum, followed by Designer and Writer, which offer more standardised, yet still knowledge-intensive tasks.

Table 1. Overview of selected cases.

Source: own research based on observation data, document data and desk research.

Data collection

We collected our data in two phases. In the first phase, we chose an integrated approach based on covert participant observation (Roulet et al., 2017) and document analysis (Bowen, 2009) to gain an ‘in situ’ understanding of the platform regimes and associated voice mechanisms. We created crowdworker identities and actively conducted work on each platform from August 2017 to February 2018. We took this approach because some platforms rely on a centralised work organisation (i.e. communication takes place primarily between platform and crowdworker), and disclosing our identities as researchers might have yielded unreliable data or none at all.

As we concealed our researcher identities from the platforms, we departed from the practice of obtaining participants’ informed consent (Ho, 2009; Kozinets, 2002). Nevertheless, we consider our approach justifiable for the following reasons. First, we conducted research at the platform level, which means these data are in principle available to everyone who signs up for the platform. We neither disclose data about individual subjects nor reveal any proprietary information (Ho, 2009; Levina and Vaast, 2015). Second, we created accounts in accordance with the platforms’ terms and conditions, and actively worked on the platforms (i.e. created value). Third, we limited our interaction with the platforms to what was necessary for our research interests.

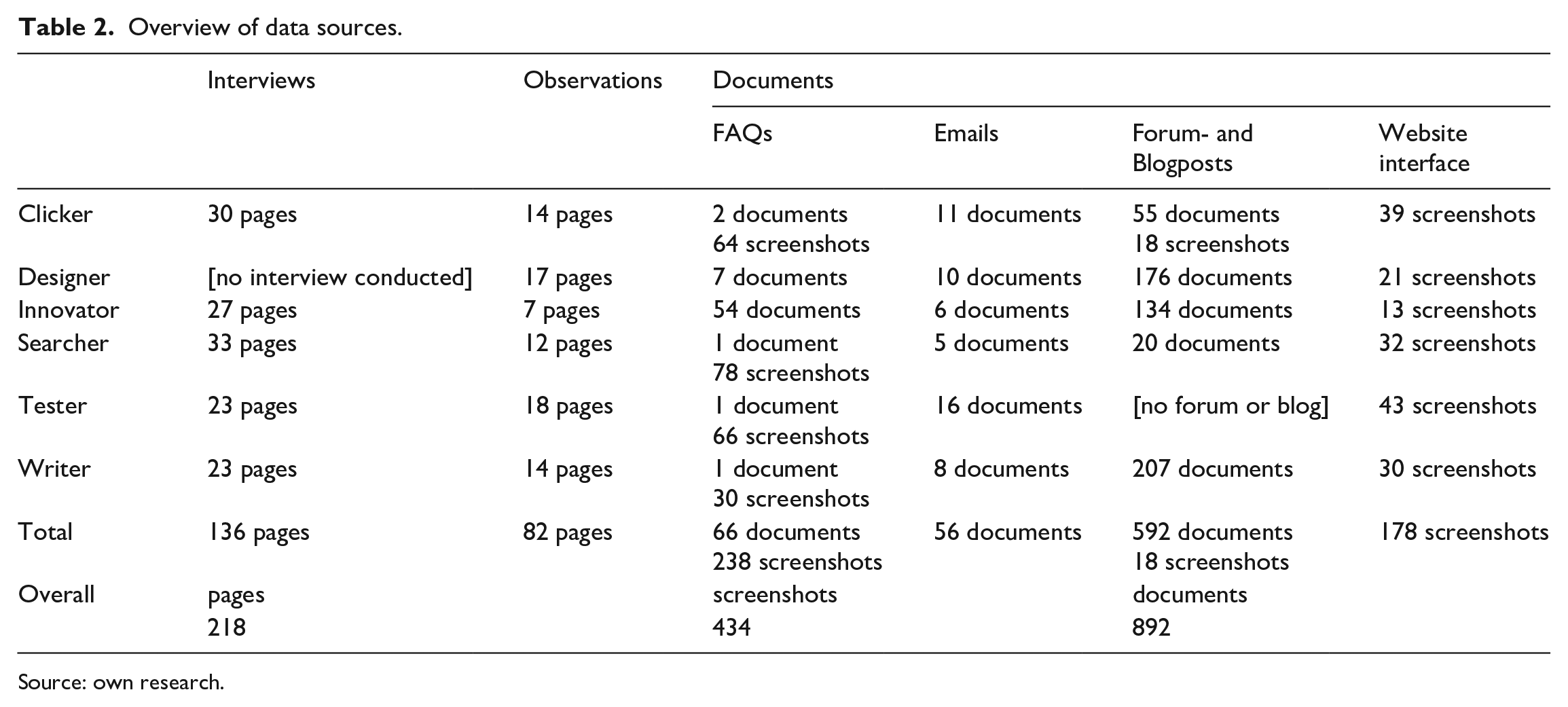

To ensure comparable observation reports for data analysis, we stipulated a ‘path’ through each platform and summarised the information obtained in an observation guide. The results of this data collection effort were six extensive platform observation journals, which include background information on the platforms, individual steps in the work processes, descriptions of the voice tools in place, and notable examples from, summaries of, and reflections on our experiences in the data collection process. Following the advice of Kozinets (2002), we both generated data through our observations and directly copied data from the platforms, which we treat as documents (Levina and Vaast, 2015; Reischauer and Mair, 2018). These include FAQs and blogs, as well as overviews of forum threads where applicable. We also collected newsletters and informational emails sent out by the platforms. In total, we gathered 218 pages of interview and observation data and 892 documents (including an additional 434 screenshots) for our analysis (see Table 2).

Table 2. Overview of data sources.

Source: own research.

In the second phase, between April and May 2019, we interviewed the CEOs or community managers of five platforms (all except Designer) to corroborate our understanding of how platforms approach voice and to gain a deeper understanding of why certain voice channels are (not) provided. We interviewed CEOs because they manage the two-sided business model and make key voice-related decisions, such as staffing or approving resources for developing new features. In one case (Innovator), we interviewed the head of community management, who, owing to her long tenure, was highly knowledgeable about the platform’s voice strategy. The observational and document data enabled us to ask targeted questions, both checking and extending insights generated in the first phase of data collection. Each interview took place at the respective platform’s headquarters and lasted 56 to 62 minutes. After each site visit, the interviewer (first author) immediately wrote up a research diary entry (totalling 7.5 pages). Comparing the observational and interview data allows us to triangulate the management view on voice with the actual work experience on a given platform.

Data analysis

In our analysis, we sought a balance between rigour and the strengths of qualitative research: harnessing interpretative insights and remaining open to discovering the unexpected. We analysed our data in three steps. In the first step, the first two authors engaged in coding sessions in which they openly coded and discussed various themes related to voice in our observation journals and documents.

Based on this initial coding round, we inductively developed categories related to voice and then turned back to the literature. Drawing on the escalator model of Wilkinson et al. (2013), we recognised that platforms provided various degrees of voice, including ‘communicate’ (i.e. crowdworkers can report issues to the platform) and ‘consult’ (i.e. platforms gather crowdworker input on issues the platform defines). However, we found no platform that used ‘co-determination’ (i.e. formally involving crowdworkers by giving them decision-making authority in critical areas), let alone ‘control’ (i.e. crowdworkers having exclusive decision-making power within specific domains). For the various levels of voice, we created the higher-order categories ‘task-based processes’, ‘platform-wide work organisation’ and ‘corporate strategy and development’. Task-based processes include voice opportunities immediately related to work on the tasks the platform offers, such as task descriptions and work procedures. Platform-wide work organisation refers to voice regarding a platform’s work organisation structure, e.g. work modes or terms and conditions. Corporate strategy and development relates to content concerning platforms’ future plans and strategy-making, such as business model changes. For example, when we encountered a building block such as a ‘suggestion box’, it was assigned to the category ‘communication’; its use for clarifying legal issues related to contributions was assigned to the category ‘task-based processes’.

Second, we analysed the interview data both deductively and inductively. While we applied our existing codes (e.g. coding an interview passage about enabling 1:1 communication between platform and crowdworker as ‘communication’), we also identified themes such as concerns about crowdworker availability, client satisfaction and fair work standards, as well as issues of costs (i.e. staffing to maintain voice channels), control (i.e. shaping the amount and direction of voice) and crowd heterogeneity (i.e. crowdworkers’ varying interests). For instance, we coded Writer’s statement that it deletes insulting comments on its Facebook page as an issue of ‘control’. We further realised that the insights from the observations and the interviews allowed us to meaningfully distinguish between technological building blocks (e.g. a forum) and associated governance decisions (e.g. refraining from using a forum due to control concerns). In both phases, joint coding sessions between the first and second authors served to sharpen our interpretative sensitivity. The third author played devil’s advocate, challenging and questioning the other authors’ interpretations (Creswell, 2007).

In the third step, we sought to increase the credibility of our analysis through triangulation across our various data sources whenever possible (Flick, 1992). For instance, Tester told us that it surveys its crowdworkers after a project’s completion (i.e. task-based process consultation). Accordingly, we re-checked our observation guides and documents; indeed, we had received an email requesting participation in a survey after finishing a test. In the supplementary data table (see Appendix 1, available online), we list all main constructs with exemplary statements from our three data sources: interviews, observations and documents. Additionally, we conducted a ‘member check’ (Lincoln and Guba, 1985) at two German union meetings, held in early December 2018 and June 2019, which brought together workers and platform representatives (CEOs and community managers), and in November 2019 we clarified some of their statements with two platforms.

Findings

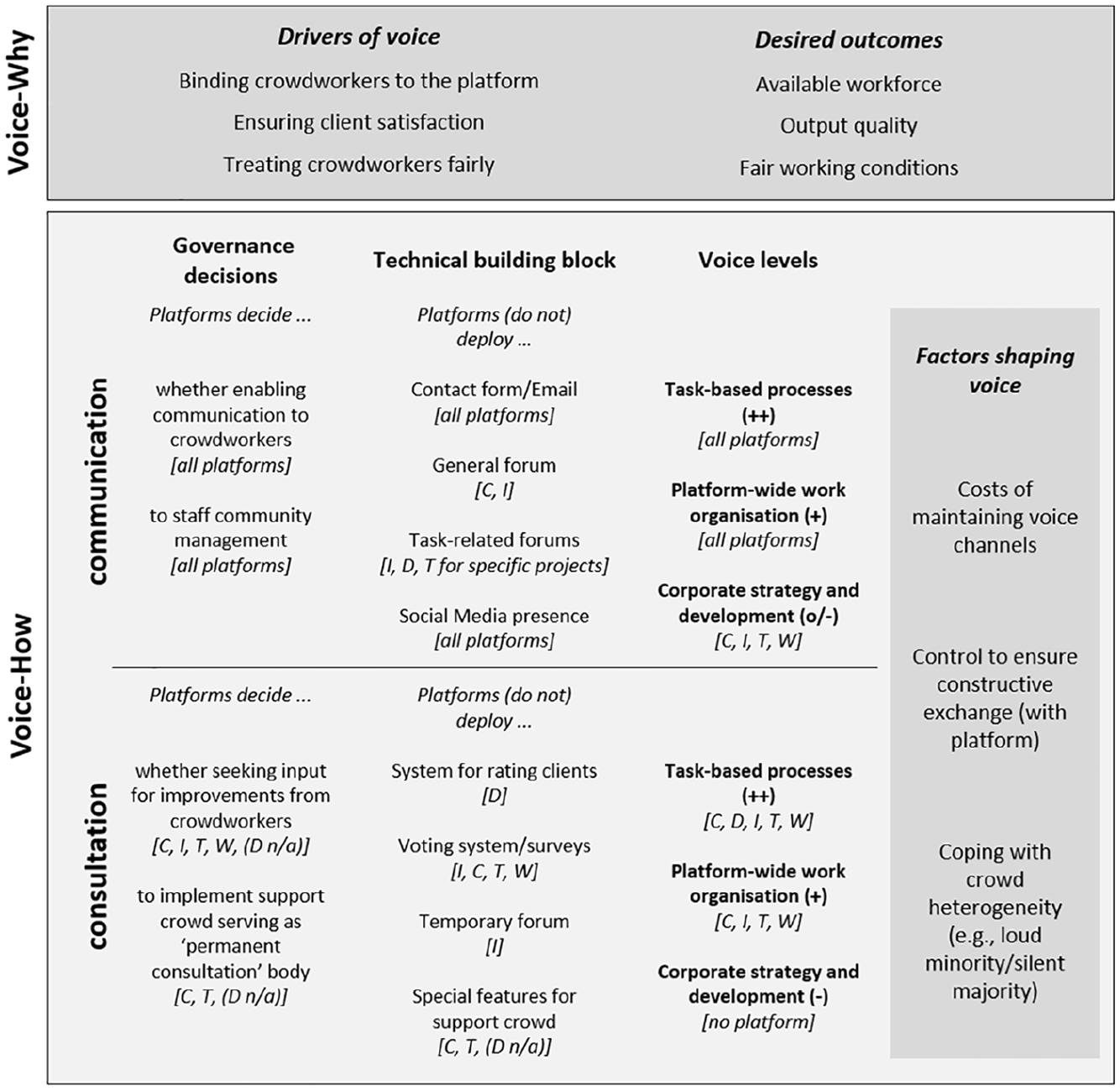

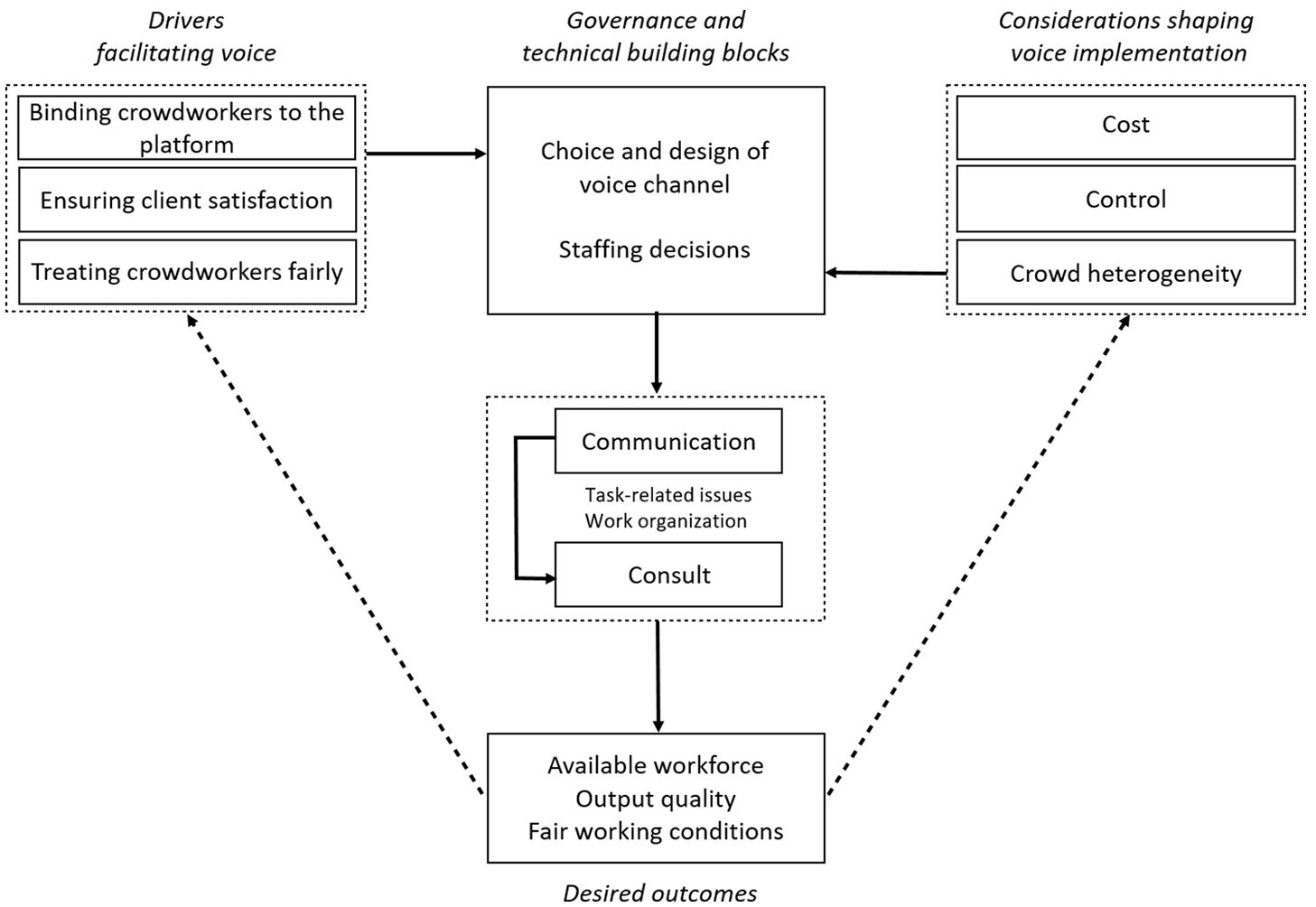

We present our findings in two parts. First, we account for why voice appears to matter to platforms; second, we detail how platforms manage voice (i.e. its degree and level). Figure 1 provides an integrated overview of our findings.

Figure 1. Overview of findings.

Why platforms enable worker voice

Our interviews reveal that platforms offering micro and macro tasks alike recognise that they operate in a context in which they face the challenge of binding crowdworkers to the platform. Platforms understand that the flexibility of crowdsourcing has the following implication: for each task, they must be able to mobilise sufficient workers, who may only perform the task if they have ‘idle capacities’ (Writer interview). Hence, voice is relevant for ensuring that the supply side of a platform’s business model operates seamlessly by keeping crowdworkers on board even when there is not enough work for everyone.

All platforms reported in the interviews that their workforce structure follows a core-periphery logic: a smaller core crowd completes most tasks, whereas a larger peripheral crowd sporadically completes a minor share of tasks. Consider the following statement from Searcher’s CEO, explaining the need to also retain less active crowdworkers: In principle, we could do the majority of tasks with our 1000 core users, but for some tasks, particularly in rural regions and/or special tasks, I also need people who are less active, who do a task maybe once a week or once a month.

Thus, platforms face the challenge of keeping both core and peripheral workers tied to the platform in case suitable tasks come up. Moreover, competition exacerbates turnover, as crowdworkers can switch to other platforms and work on multiple platforms simultaneously.


As the CEO of Searcher, a platform offering micro tasks, explains: If you do not provide a certain service level, users perceive platform communication as a one-way street on which their enquiries are unheard. Such a perception hurts our business. I am paid only if crowdworkers perform our tasks. Without their performance, I will not be paid. In the media, there is the perception that I can choose crowdworkers and replace them easily; that is wrong. There is a fight over crowdworkers. Crowdworkers vote with their feet. That means if the crowdworker does not find a platform environment that works for him/her, he/she will go elsewhere.


Searcher’s CEO further contends that particularly active crowdworkers ‘know all existing platforms and may use them’. Clicker seeks to distinguish itself from platforms such as AMT, where workers are ‘treated like a small cog in a big machine’. Clicker’s CEO declares that they ‘need to ensure that crowdworkers prefer our platform over others’, since there is ‘no fixed contractual relationship’. Clicker recognises crowdworkers’ need for support, as they are ‘alone at home, and not part of a company where you can go to a colleague’s office and ask a question’ (Interview Clicker). Macro task platforms echo this sentiment and likewise see relationships with crowdworkers as a means of retaining them. As an example of a platform offering macro tasks, consider Innovator: At 99designs creatives are just a number. On our platform, we seek to treat everyone as personal as possible. This distinguishes us from our competition, and we feel this is also a reason why creatives stick around.

Another reason why voice matters to the platforms in our sample is ensuring client satisfaction. A platform’s business model rests on crowdworkers not only attending to tasks, but also performing them well. Accordingly, platforms use mechanisms such as assessment tests for vetting crowdworkers (e.g. Clicker requires crowdworkers to take tests for various skills), career systems (e.g. Writer offers professional users more lucrative tasks), rating mechanisms (e.g. Designer’s clients rate crowdworkers), feedback mechanisms (e.g. Tester distributes feedback polls to clients) and input controls (e.g. Innovator invites only crowdworkers who demonstrate the requisite skills to work on certain projects).

Four of the platforms regard responding to crowdworker concerns as pivotal for improving quality and thus ensuring client satisfaction, as this statement from Clicker exemplifies: That crowdworkers get support, that we rapidly respond to their enquiries and try to solve their problems, that we seek to treat them fairly – these measures result in crowdworkers being more likely to work on tasks and stay on the platform instead of going to the competition. It helps with mobilising them and [emphasis added] getting them to deliver a higher quality. This is imperative, since it is essential to meet client expectations.

Likewise, Tester’s CEO says that without satisfied workers they would be unable to maintain the quality of their service. Macro task platforms share this assessment. Innovator’s community manager argues that providing building blocks permitting communication enables: . . . [a] closer connection to the creatives, allowing for better feedback which then results in better creative outputs . . . Having a closer relationship with our creatives . . . has created a personal involvement on their side that makes them excited to work with us and motivates them to provide the best quality outputs.

Writer’s CEO also acknowledges that responding to workers’ enquiries is vital for keeping good workers on the platform. Innovator’s community manager goes further and points to an indirect effect of regularly harnessing crowdworkers’ feedback: it can be used to improve the platform, which in turn increases the quality of the platform’s product. For instance, Innovator had a rather unstructured system for uploading ideas: ‘It made it really tough to evaluate ideas and compare them to each other which creates some unfair element in itself.’ Because some of the prize money was distributed through peer-to-peer voting, this lack of structure triggered criticism from the crowd. Hence, Innovator changed the way in which crowdworkers upload their ideas: ‘We introduced a one-page submission system, after which was the moment when everybody said ‘this is great’ . . . But the idea for this change came from them, from their feedback.’

This also resulted in improvements for Innovator’s clients. The new system made it easier to ‘evaluate ideas against each other, which allows the client to pick the best ideas more easily’.

Searcher views its platform as guided by a ‘market-based process’, and our observations and documents indicate that Designer embraces a similar viewpoint. However, four of the platforms reveal motivations that go beyond a simple cost–benefit analysis (Clicker, Tester, Innovator, Writer). They see treating workers fairly as fitting their values, as Tester emphasised: It is important for us founders, and we are proud, that people have positive experiences with us. That they say, ‘the support is great, I am treated in a friendly way, I get answers to my enquiries, and I am heard’. This makes us proud; this is also our personal goal.

Innovator emphasises its mission to create a professional community. Writer’s mission includes protecting crowdworkers from large corporations’ unfair contracting practices towards individual freelancers. Clicker presents itself as enabling self-determined work worldwide under fair conditions, but argues it is limited by financial constraints: Our lack of manpower is a bottleneck to the crowd’s involvement. We are a very small team . . . we do not have $50 million in funding to allow us to think about new and beautiful things. Our medium-sized, German company’s orientation is that revenues and profit drive our actions. This disadvantageous lack of financing prevents us from hiring staff to involve the crowdworkers – it would be nice to improve the platform in this manner. We would certainly like to do more in worker involvement.

In sum, all platforms are interested in opening voice channels to bind crowdworkers to the platform and increase output quality. Additionally, four platforms have expressed an interest in treating crowdworkers fairly, which has an impact on the degree and level of voice they provide, as we will now demonstrate.

How platforms organise crowdworker voice

To illuminate how platforms organise voice, we structure this section according to the two degrees of voice prevalent in our sample: communication and consultation.

Communication

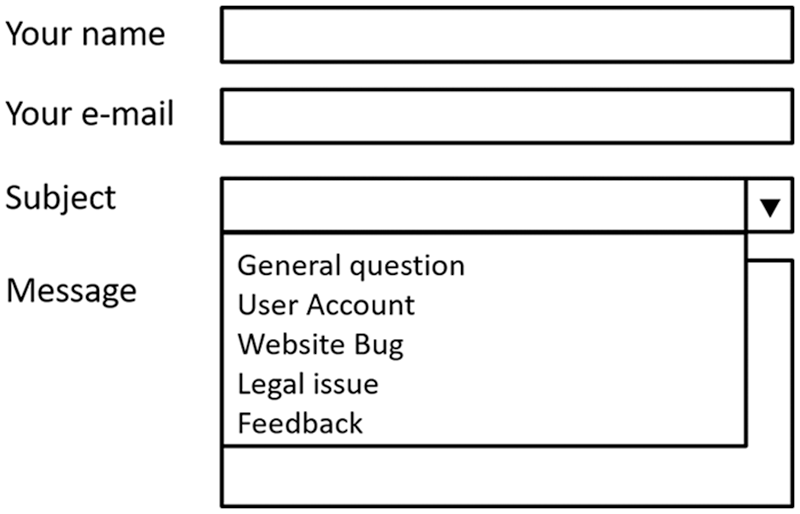

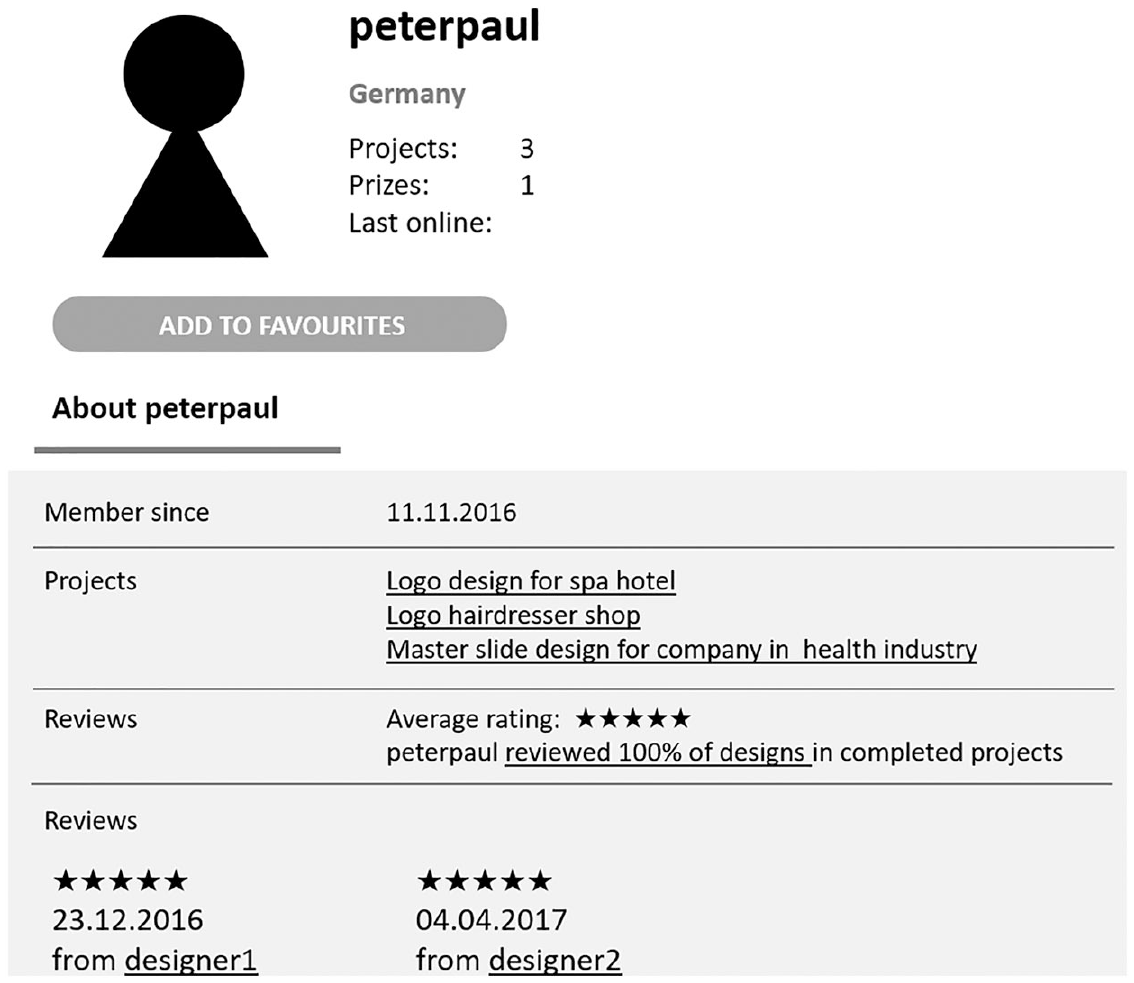

Communication allows crowdworkers to contact the platform (e.g. to report issues or ask questions). All platforms in our study decided to have a community management function (as a separate department or within project management) and accordingly deployed technical building blocks enabling communication. Through our observation, we found contact forms (which translate into email), messaging systems and chat boxes to be the primary technical building blocks for the communication between platforms and crowdworkers. In terms of contact forms, Designer and Tester provide drop-down menus in their message box from which crowdworkers can select ‘feedback’ as their message subject (see Figure 2), and Writer even uses ‘feedback for us’ as the general headline on its contact form. Writer’s CEO states: We are open and human; people can talk to us. We have two full-time positions in community management. They know the crowdworkers well, particularly those who contact us quite often. Our goal is to answer email that reaches us by noon on the same day, and messages we receive after noon by the next day.

Figure 2. Rebuilt example of a contact form as found on platforms.

Through our observation, we wanted to find out whether and how platforms answer crowdworkers’ enquiries. We sent three questions from three separate worker accounts to each of the six platforms. One question concerned task-based processes (e.g. potential earnings), one platform-wide work organisation (e.g. concerning assessment tests), and one corporate strategy and development (e.g. the platform’s future plans). Each message was tailored to the respective platform’s work system. In addition to these questions, we integrated a suggestion in each message to see how platforms would react to our attempts to exert influence on platform-wide work organisation.

All platforms responded to our questions concerning task-based processes and platform-wide work organisation within one day. Consider the following example from Searcher. A typical task on Searcher is taking pictures of pre-defined objects in public and sending them to the client, so we asked: ‘Could I face any legal problems if I take a photo and a person appears in the background?’ and ‘What happens if I have an accident while taking photos for a client?’ We added the suggestion, ‘It would probably be helpful to provide a written guide with this information to all [Searcher]!’, as this information was not available in the platform’s FAQs. Searcher responded: Dear [crowdworker pseudonym], In general, it is no problem when another person appears on the photos, since most jobs take place in public spaces (e.g. streets or super markets). Some jobs, however, require that there should be no identifiable person in the photos (e.g. take a photo when they turn their back to you). Regarding insurance: an accident would not be covered by us, because you are not employed by our company. This would be a case for your health or accident insurance because you do the job in your spare time. I hope that helps you out! Best, [support worker name]

As the message above shows, Searcher did not address our suggestion, and neither did Clicker, Innovator or Writer. Tester indirectly addressed our suggestions by referring to a function in the user profile. In the case of Designer, we asked what we could do in response to unfair client reviews, adding the suggestion that the platform could install an arbitration system. Designer answered, ‘We don’t plan to install an arbitration system. However, there is also no need for one’.

To pose questions related to corporate strategy and development, we used a separate worker account to send all platforms the following questions: Because I plan to invest more time in working for your platform, I wanted to ask how you will proceed in the long-term. Is your business growing? What do you have planned for the next few years?

The answers concerning our questions on corporate strategy took longer and were more restrained. Tester answered that they were growing and continuously hiring new staff to process more projects. Clicker’s support replied that they were not authorised to provide any details on corporate strategy or development. Writer wrote generally that their business was growing. Innovator invited us to join a dialogue. Searcher and Designer did not respond to our strategic questions.

Although some platforms ignored some of our suggestions in their answers and others did not respond to our messages containing strategic questions, our interviews reveal that issues crowdworkers raise may still matter to the platforms. First, enquiries may reach upper echelons of the organisation. In the interview, Tester’s CEO mentioned a crowdworker complaint that moved from community management to him. He communicated personally with the complaining crowdworker, attempting to explain the ‘founder perspective’. Second, accumulated concerns may trigger platform action, particularly if the timing is right and the issue matches community managers’ observations. As Innovator reported: I received something and maybe I have seen something happening on the projects anyways and something was on my mind; or sometimes we think, ‘okay we have a solution’. Then we hold community management meetings and I bring it up and say, ‘this is what happened’. And then we say, ‘okay, let’s table it and take it on later’, or we make this happen. It doesn’t matter how nor who says stuff, if it’s the right moment, it makes sense, and we have the time to do it, we will use the information.

Third, the volume of enquiries makes a difference to whether platforms respond. For instance, Tester states, ‘If, for example, several people are asking how to add new devices for testing to their profile and we realise there is a problem, then we fix it’. Similarly, Clicker reports in the interview that if they receive 10 messages about a similar issue, they know something is going on. Fourth, as we elaborate in the next section, communicated concerns may trigger a platform to consult crowdworkers.

Aside from general contact forms, we found that some platforms deploy forum-like building blocks to enable communication, but with different governance decisions motivating them. Clicker has an external forum, which is moderated by crowdworkers rather than by the platform. The forum’s purpose, according to the CEO, is ‘self-help and helping each other. This forum is not steered by us’. With Designer and Innovator, we identified permanent task-related discussion channels as platform-specific tools. Task-related discussion channels are mini-forums where crowdworkers can make suggestions and discuss matters related to the project. At Designer, these mini-forums are not moderated by the platform, and serve as a communication channel between crowdworkers and clients. In Innovator’s mini-forums, crowdworkers can also ask questions to a community manager guiding the project. Innovator’s community manager says that such open mini-forum discussions . . . came out of nowhere. And maybe that was the issue; for the community it was exciting because all of the other creatives were there and they could hold a conversation there. But for the guides it was terrible because it was like, ‘okay let’s stay focused’, and for the clients it was also terrible because they didn’t care, because they just wanted their project to run and they were like, ‘why is this conversation happening?’

This reflection shows that voice consumes community managers’ attention (i.e. costs), and discussing non-project matters may alienate clients. Innovator also implemented an external forum, but this too proved suboptimal. Community management tried to drive the conversation there, but it did not ‘catch on’; Innovator invested too little effort in ‘getting it going’. They believe that crowdworkers do not want to ‘leave the platform [for an external forum website]. They want to stay here, and if that is impossible then they would rather not speak up’. As Innovator sees the advantage of a forum as supporting community-building, the platform is experimenting with implementing a better forum on the platform so that crowdworkers ‘can communicate outside of projects, but still be a part of the platform’. Tester also runs task-related forums for some projects where it considers open communication amongst testers useful for the task. Tester’s CEO says that they debated whether to implement a platform-wide forum: We have discussed this several times. Maybe it might happen at some point. It is a mixed bag when weighing the benefits against costs needed to maintain such a forum. We do not think that this is the channel where we would get a lot of feedback, nor that our crowdworkers really want that feature.

Searcher has no forum. When asked why it does not implement one, the CEO made clear that enabling voice follows a strict cost–benefit analysis: It is possible, maybe useful. People can get in touch with us. So I do not think it is necessary to have a function that user A communicates with user B. I am not sure why we would need that, unless I can reduce the cost of my community management through such a feature.

Similarly, Writer decided against a forum, raising the issue that such a forum requires staff to moderate it (i.e. additional costs) in order to maintain control (i.e. ensuring constructive exchange): We decided to not have a forum because we saw on other platforms that they develop a life of their own. People write in the forum, and may publicly shame clients or whatever instead of working on tasks. If I have a forum, I need staff to moderate it and make sure it stays civilised.

Through our observations, we also found that all platforms have a social media presence on Facebook. Clicker and Searcher see social media channels as ‘outgoing-media’ (Clicker interview), for instance to promote jobs or other activities. Searcher says Facebook is no place for ‘constructive dialog’. Similarly, Writer says that: ‘Facebook is a strange environment. We try to maintain control over communication. If a comment on our Facebook page is weird or insulting, which can happen quickly, we do not approve it.’

In sum, our findings suggest that all platforms provide opportunities for communicating directly with the community management. The focus is more on task-based processes than on platform-wide work organisation or strategic issues. If platforms have open forums, they see them more as a means to facilitate community-building than as appropriate channels for raising voice. Platforms refraining from deploying such building blocks are reluctant because of maintenance costs and concerns about creating an unproductive atmosphere. The latter also applies to social media channels, which platforms view as inappropriate for engaging in constructive dialogue.

Consultation

Platforms consult crowdworkers to provide input on specific issues. We identified three ways in which platforms enabled consultation: through standardised technological building blocks enabling rating or voting; through temporary forums or surveys directed at specific issues; and by establishing ‘permanent consulting’ roles for crowdworkers, formally installing a support crowd that identifies relevant issues. Searcher did not use any form of consultation, and Designer relied mostly on standardised rating and voting tools for giving workers voice in task-related processes.

On Designer, crowdworkers’ evaluations of clients (5-star rating including verbal descriptions) are publicly accessible to everyone on the platform (see Figure 3). Following a marketplace logic, such a system helps weed out bad clients. In its FAQs, Designer states that, ‘At the end of each project, you [only the winner of a contest or a directly hired creative] can review the client’s handling of the project and data transfer. In this way, other designers can orientate themselves by your experiences. Please formulate your review truthfully, politely, and objectively’. In the case of contests, Designer is only interested in the opinion of the winner, thereby neglecting the voice of the many ‘unsuccessful’ crowdworkers. The evaluation system on Writer works in a private setting: crowdworkers can mark good clients and block bad clients, which affects the project list on the crowdworker’s interface (e.g. omitting negatively flagged clients from the individual crowdworker’s list).

Figure 3. Rebuilt client profile on the platform Designer.

Platforms may use voting to provide input for decision-making in task-based processes. Across platforms, we found two voting-based voice opportunities. Innovator has a community award where crowdworkers distribute multiple prizes based on peer voting in open innovation contests. However, the client distributes the majority of the competition prize pool money. Designer invites crowdworkers to vote for a ‘design of the month’, which it publishes in a newsletter that registered crowdworkers receive. The winner receives a small monetary reward, suggesting that the vote serves as entertainment rather than as a voice instrument.

The second way in which platforms enable crowdworker consultation is through temporary forums and surveys, sometimes combined with another building block (e.g. a blog) to share how the platform handled the crowdworkers’ input. Most platforms focus here on task-specific issues: Clicker uses surveys to understand why a project runs well or poorly, or to gain user insights and feedback; Tester regularly conducts surveys on similar issues. Innovator uses surveys to collect crowdworker feedback on invited projects. Designer also runs surveys for improving task-specific issues, and asks (in case of contests, only the winners) after projects for: ‘. . . concrete feedback concerning the processes on the platform. This feedback helps us to facilitate further optimisations and will not be available publicly.’

Two of the macro task platforms further expanded the voice level to platform-wide work organisation. Through our observations of Writer, we found a rare example where it sought crowd input through a survey combined with a blog post publishing and discussing the results. The survey asked questions regarding crowdworkers’ satisfaction with the present evaluation system, the result being that the majority preferred the established system. Writer’s CEO explains that this initiative occurred in response to the crowd raising this issue when communicating with the platform. This indicates that communication may trigger consultation, and that surveys are an instrument to cope with a crowd having various interests (i.e. crowd heterogeneity): We had a peak in complaints. But we kind of neglected it: apart from 10 complaints, 5000 other tasks were completed without any problem. Nevertheless . . . I thought, we need to find out how big the problem actually is – how many are unhappy and how many are just quietly happy with the system. So we said, ‘let’s do a survey and find out how the crowdworkers see the issue’. Overall, the survey showed that people are generally content with the current system.

Communication also triggered consultation in the case of Innovator, albeit in a less immediate way. As the community manager puts it: ‘Hearing that the rating system is not fair for quite some time motivated us to find a solution for this problem’. In response, the platform opened a temporary forum to engage in a specific dialogue with its crowdworkers. When the platform asked how it could improve the distribution of the community prize, which is based on crowdworker voting, an intensive discussion followed, after which the platform yielded to critical voices calling for a reduction of the community prize pool to shift more money to client prizes and other prizes.

The third way platforms can organise consultation is to delegate or ‘outsource’ part of the community management to core loyal crowdworkers. Two of the micro task platforms that expressed interest in worker concerns, Clicker and Tester, used this option. These platforms formed a small, dense core of crowdworkers by formally implementing a support crowd. Clicker ‘outsourced’ a large part of voice management to its crowd by enlisting approximately 100 experienced workers to answer other crowdworkers’ questions submitted through the contact form. Clicker thereby extended its existing practice of outsourcing quality management, in which crowdworkers are paid to check others’ work. Enlisting a support crowd for co-managing voice is feasible, as ‘80% of all enquiries are basic questions’, i.e. related to task-based processes. Support crowdworkers are paid for each ticket they answer, and sign a special contractual agreement (e.g. to ensure confidentiality). In terms of voice, the support crowd collects and filters crowdworker feedback to report directly to the community management. Clicker endows the support crowd with a special building block not available to regular crowdworkers: a live chat allowing them to talk with their support peers and granting them privileged access to community managers. Clicker’s support crowd provides an ‘early warning system’ (support crowdworkers can detect errors in task descriptions or other issues before they unfold) and allows the platform to respond flexibly to rising or falling numbers of enquiries without incurring fixed costs. Tester is experimenting with a similar system, because it feels that some crowdworkers want to take on ‘more responsibility’. Currently, a few crowdworkers have project management responsibilities, receive higher pay, and are tasked with giving Tester ‘direct feedback’.

In sum, we find that most consultation revolves around task-related topics but, in the cases of Writer and Innovator, also addressed platform-wide work organisation. Furthermore, Clicker and Tester involved a support crowd in handling crowdworker concerns. These four platforms are the ones that expressed an interest in fair work standards. The different deployment of these tools can be explained by cost, control and crowd heterogeneity considerations. Regarding costs, it seems that Clicker and Tester are able to build on their existing centralised IT infrastructure to establish such a support crowd. Writer, a much smaller platform, may lack the resources to do so. Innovator, in turn, has an elaborate and visible community management in place, and a support crowd may not fit the platform’s orientation (e.g. professional creatives may have little interest in answering standard enquiries). From a control perspective, consulting through surveys in 1:1 communication allows platforms to remain in charge. However, the macro task platforms Writer and Innovator seem to be willing to cede some control when consulting the crowdworkers, but still only on pre-decided issues using suitable building blocks (e.g. surveys or temporary forums). Regarding crowd heterogeneity, we identify two potentially conflicting trends: platforms consult crowdworkers to capture varying voices, whereas the installation of a support crowd elevates core workers’ voice.

Discussion and conclusion

Drivers, boundary conditions and consequences of platform voice regimes

Our findings illuminate the questions of why and how platforms enable crowdworker voice by establishing varying platform regimes based on governance decisions and technical building blocks. Building on a reflection of these findings (summarised in Figure 4), we advance three contributions at the interface of the voice and the platform literatures.

Figure 4. Overview of conditions and considerations shaping functional voice and its (intended) outcomes.

First, we show that supply-side and demand-side considerations motivate all the platforms we studied to enable crowdworker voice. Platforms depend on crowdworkers for economic success, as they must be able to match client demand with crowdworker supply. Yet, a platform’s workforce is fragile for various reasons (Bauer and Gegenhuber, 2015; Berg et al., 2018). For instance, crowdworkers may cease crowdwork activity because of changes in life situations or discovering that the work is unattractive, or they register on multiple platforms to reduce the risk of income dependency (Möhlmann and Zalmanson, 2017). The workforce’s fragility increases when a task requires specific attributes (e.g. location, demographics) that can only be met by more peripheral users. Given this highly competitive situation, platforms must continuously expand their crowd and bind existing crowdworkers to the platform, building both breadth (i.e. network size and diversity) and depth (i.e. having an experienced user-base delivering high-quality contributions), to ensure client satisfaction. Deploying staff to personally interact with crowdworkers (rather than using algorithms) allows platforms to establish a more motivating and encouraging work environment, while reducing recruitment costs. 7

Whereas the crowdwork literature portrays micro task platforms as refraining from offering voice opportunities (Gol et al., 2019; Howcroft and Bergvall-Kåreborn, 2019), our findings suggest that both micro task and macro task platforms care about voice. Why did prior studies miss this parallel? One reason might be that studies typically examined only one platform, thereby neglecting facets that appear when considering multiple platforms in comparison. Relatedly, research and media treat AMT, a platform where crowdworkers arguably remain ‘voiceless’, as an over-representative ideal type of crowdwork. To avoid a similar overgeneralisation of our results, we note that the voice patterns we identified are likely bounded by the specific attributes of our sample, which comprises medium-sized platforms facing multiple competitors and focusing mainly on a national market (see Table 1).

Second, we contribute to the voice literature by shedding light on how platform voice regimes differ and what considerations shape these regimes. While binding crowdworkers to the platform and ensuring client satisfaction motivates all platforms to consider voice, platforms, like other organisations, differ in the extent to which they have an interest in treating crowdworkers fairly (Franca and Pahor, 2014; Gilman et al., 2015; Helfen and Schüßler, 2009). Platforms that declared an interest in fair work standards consult crowdworkers more extensively, either by gathering feedback on platform-wide issues (Innovator and Writer) or by deploying a support crowd (Clicker and Tester). Platforms self-identifying as a marketplace refrained from such consultation practices. The identified platform voice regimes thus exceed what the voice literature would imply. Wilkinson et al. (2018: 714) note that ‘many organisations that rely on precarious work offer no opportunities for voice’. In contrast, the platforms in our study do embrace voice, but largely in a functional way and within the confines of improving and optimising organisational performance (Barry and Wilkinson, 2016; Dundon et al., 2004). Such a functional voice regime allows organisations to remain in control and mute voices if necessary, thus providing microphones rather than megaphones that could increase crowdworker voice in unpredictable ways.

Decisions regarding the choice and design of voice channels were influenced by considerations regarding costs (i.e. resources needed to maintain voice), control (i.e. the platforms’ ability to shape voice direction) and crowd heterogeneity (i.e. balancing the interests of a crowd with diverse viewpoints and backgrounds). Voice channel maintenance is costly, as it requires corresponding staffing and IT infrastructure. Each additional building block enabling voice increases complexity and communication, resulting in potential information overload (Dobusch et al., 2017). In order to retain control, platforms enable crowdworkers to exercise voice primarily via direct communication, as forums may also be an arena for issuing criticism and ‘letting off steam’ (Marchington, 2007; Spencer, 1986). If forums are used for the purpose of leveraging voice for community-building, they are either meant to be work-related (e.g. task-based forums), set up temporarily (e.g. opening a forum to debate a specific issue) or deployed with constraints (e.g. using Facebook primarily for PR, and deleting inappropriate posts). Crowd heterogeneity considerations shape the ways in which platforms deploy standardised and semi-standardised voice channels. Although non-standardised building blocks yield ad-hoc qualitative insights, crowdworkers may simply lack time to speak up, or refrain from speaking up in a forum while a louder minority effectively pressures an organisation or uses the forum as a ‘venting’ space (Etter et al., 2019; Gegenhuber and Naderer, 2019). Here, communication triggers consultation: a combination of semi-standardised and non-standardised channels (i.e. a survey combined with a discussion on their blog) yields the advantage of capturing voices beyond a ‘loud minority’ while at the same time increasing inclusion and commitment (Deng et al., 2016; Dobusch et al., 2018; Faraj and Johnson, 2011).

Third, by highlighting that crowd heterogeneity also matters for crowdworker voice, our study illuminates the relationship between a crowd’s social structure and voice dynamics (Dahlander and Frederiksen, 2012; Gegenhuber and Naderer, 2019). As mentioned above, platforms recognise the need to manage a crowd’s heterogeneity, but use potentially conflicting approaches to dealing with it. Although a survey gives more equal voice to all crowdworkers, the establishment of a support crowd as a ‘permanent’ consultation body with special interface functions provides selected crowdworkers with richer opportunities to speak up compared to ‘standard’ workers. Each support crowd is larger than the community management department, scalable because of variable costs (i.e. payment per support ticket), creates a stronger bond to loyal crowdworkers (i.e. providing additional ‘career’ opportunities), and serves as a distributed filter for discerning regular crowdworker enquiries warranting a platform’s attention. Our insights on how platforms differentiate crowds are also relevant for ‘offline’ contexts, where organisations struggle with simultaneously addressing full-time employees and temporary workers or where access to voice is shaped by socio-demographic characteristics such as gender and sexual orientation (Wilkinson et al., 2018).

Conclusions, limitations and avenues for further research

In sum, our study shows that platforms deploy functional rather than democratic voice regimes (Barry and Wilkinson, 2016; Wilkinson et al., 2018), as they do not offer the voice degrees of co-determination (e.g. formally involving crowdworkers through accountable voting on critical decisions) and control (e.g. offering crowdworkers exclusive decision-making power within specific domains; see Wilkinson et al., 2013). Such regimes equip crowdworkers with a microphone: the platform addresses individual crowdworkers and can balance and adjust crowdworker voice, if not mute it. This is far from a megaphone, which could amplify crowdworker voice in unpredictable ways and thus cede control to crowdworkers. With this finding, we establish that ‘traditional’ and digital non-standard work contexts have something in common: management seeks to remain in control over voice structures (Wilkinson et al., 2004). Our contribution to both the voice and the platform literature lies in showing how the combination of platform governance and technological building blocks enables platforms to create such a functional, centralised, and highly controlled platform voice regime.

Certainly, our study suffers from the usual limitations associated with a case study approach. As such, we consider our study a vantage point for future enquiries into how voice mechanisms affect platform dynamics. Going beyond a managerial perspective, future research should attend more to clients’ and workers’ perspectives. How do clients perceive voice mechanisms for crowdworkers or to what extent do clients encourage or manage voice themselves? We also need more data on the crowdworkers’ assessment of various voice mechanisms (Dundon et al., 2004), most importantly in relation to their heterogeneity as well as crowdworkers’ perceptions of fair work standards. Moreover, how do different technical building blocks affect crowdworkers’ propensity and ability to organise collective action (e.g. Salehi et al., 2015; Schwartz, 2018)? Another avenue is unearthing the consequences of voice for each party. How do community managers manage voice in the day-to-day operations (e.g. navigating tensions with other departments)? What are (unintended) consequences of consultation (e.g. escalation of inclusion expectations; Hautz et al., 2017)? Relatedly, what are the consequences of establishing a support crowd for these workers (e.g. over-identification with management) as well as for the platforms? To understand these social dynamics, longitudinal research designs are needed, which can also shed further light on how one degree of voice (e.g. communication) may trigger other ones (e.g. consultation), as our findings indicate.

Future research may also capture contextual factors more extensively, such as the role of venture capital (e.g. favouring or impeding voice), institutional embeddedness (e.g. Thelen, 2018; Uzunca et al., 2018) and different types of platforms (with different sizes and market positions). For instance, one might expect different dynamics in spatially bound platform work: gig workers in branded uniforms working in shift-based systems cannot easily switch between platforms, which weakens their position (see Thelen, 2018; Vandaele, 2018).

Lastly, another fruitful avenue would be to study under what conditions platforms embrace democratic voice as a right to exert some control over managerial decision-making. We have alluded to the possibility that 'mainstream' platforms seeking substantial profits are less likely to embrace democratising voice than new forms of platform organising, such as platform cooperatives (Scholz, 2017; Schor and Attwood-Charles, 2017). Our focus on voice also excludes other worker-related issues. For instance, enabling voice does not automatically entail fair payment or less precarious working conditions, which are certainly topics worth addressing in the future (Frenken et al., 2019).

Supplemental Material

Gegenuber_supp_mat – Supplemental material for Microphones, not megaphones: Functional crowdworker voice regimes on digital work platforms

Supplemental material, Gegenuber_supp_mat for Microphones, not megaphones: Functional crowdworker voice regimes on digital work platforms by Thomas Gegenhuber, Markus Ellmer and Elke Schüßler in Human Relations

Acknowledgements

We are indebted to the editor Catherine Connelly and the three anonymous reviewers for their helpful suggestions and constructive criticism that tremendously helped us to shape the arguments in this article. Moreover, we are grateful to Robert M. Bauer, the members of LOST (Leuphana University Organization Studies Group), and the attendees at various conferences (e.g. Reshaping Work Conference Amsterdam, Academy of Management Conference Boston, EGOS Colloquium Tallinn, International Human Resource Management Conference Madrid) for providing feedback and vital input. We also thank Claudia Scheba for her invaluable research assistance, as well as Lilith and Kathryn Dornhuber for aiding in preparing the manuscript. Lastly, our thanks go to the Hans-Böckler-Foundation and the IG Metall for supporting our research.

Funding

The authors disclosed receipt of the following financial support for the research, authorship and/or publication of this article. This work was supported by the Hans Böckler Stiftung (Project Number 2017-291-2).

References
