Abstract

Objective

This systematic review evaluates the use of cognitive load measurement methods in usability testing across diverse software interfaces. It provides guidance for researchers and practitioners by proposing a framework for selecting appropriate cognitive load measurement techniques.

Background

Cognitive Load Theory (CLT) offers insights into software usability by addressing users’ mental effort during task performance. Although cognitive load measurement methods are increasingly used in usability testing, no comprehensive analysis has examined their use across different types of software interfaces.

Method

We systematically analysed 87 experimental studies published between 2001 and 2025. Databases searched included IEEE, ACM, ScienceDirect, SpringerLink, and Scopus. Inclusion criteria focused on studies applying cognitive load measurement to usability testing for different types of software interfaces.

Results

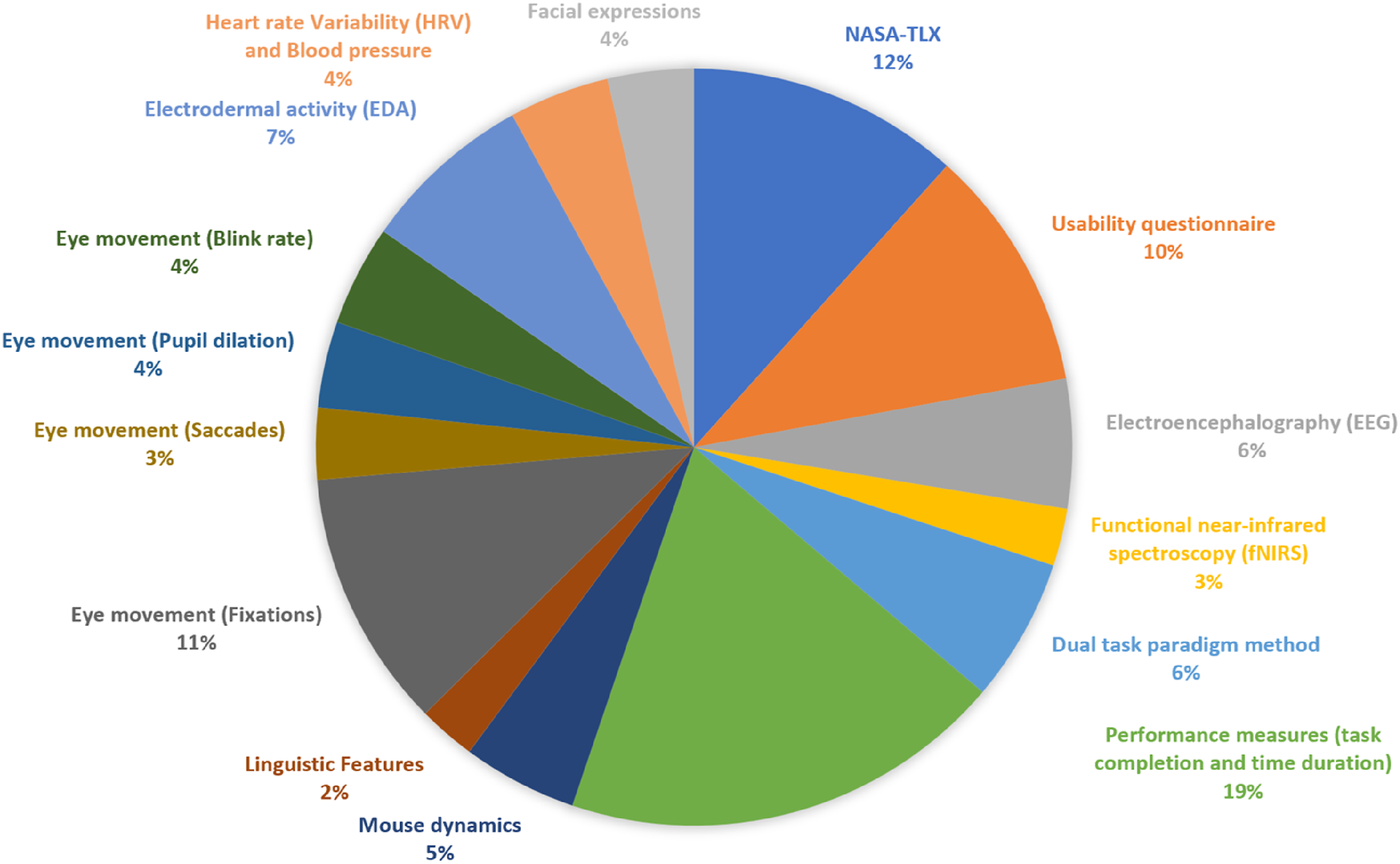

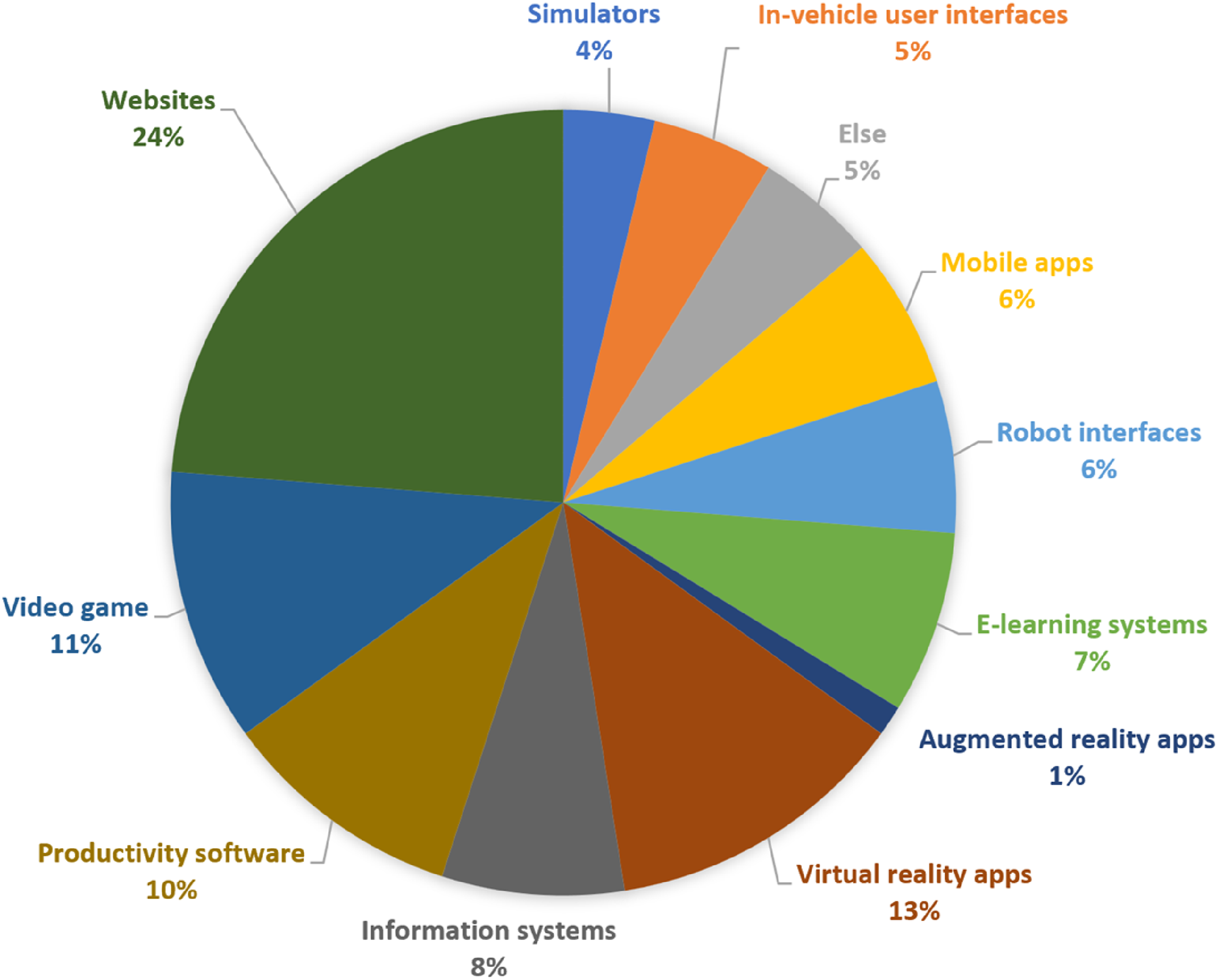

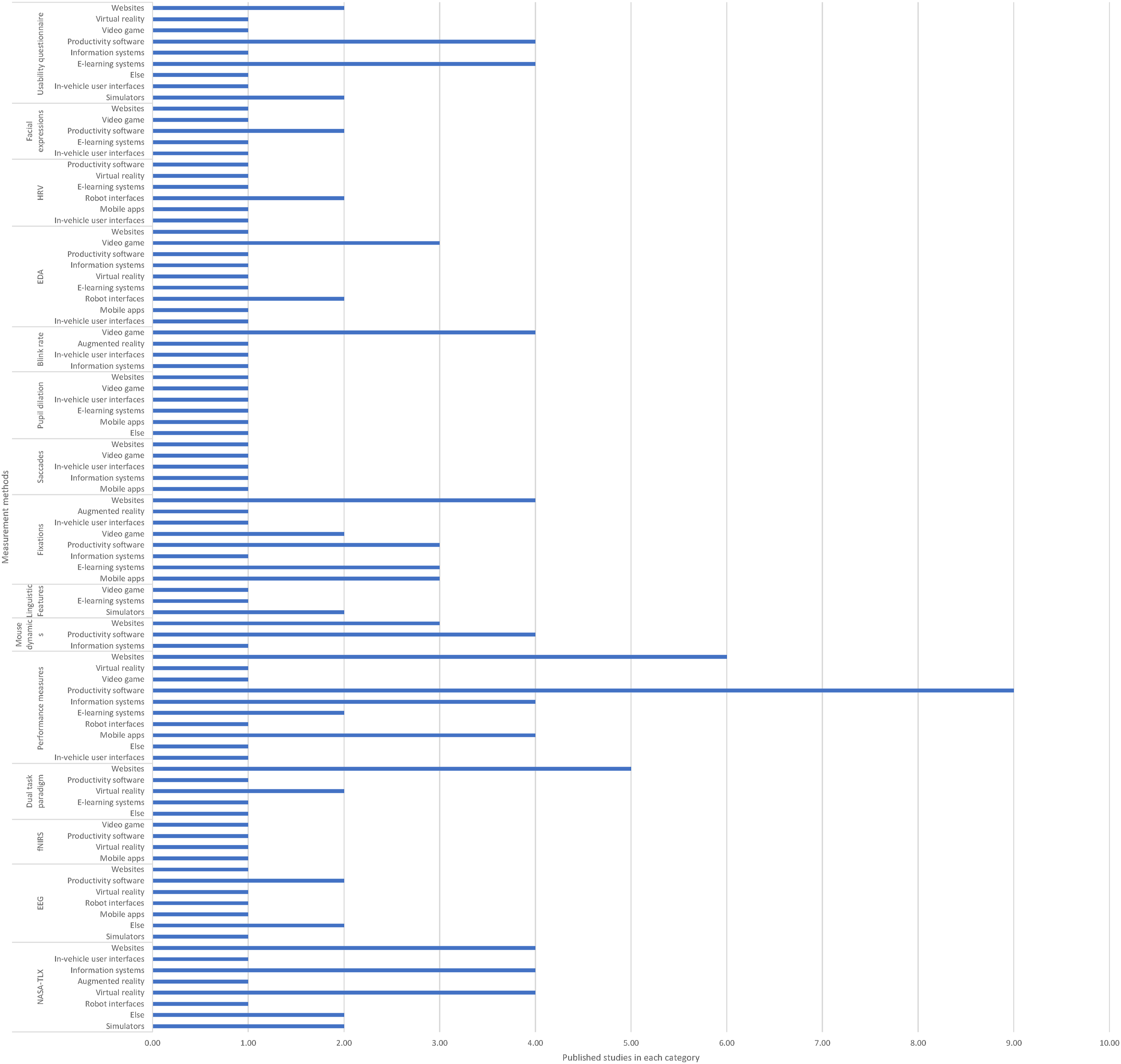

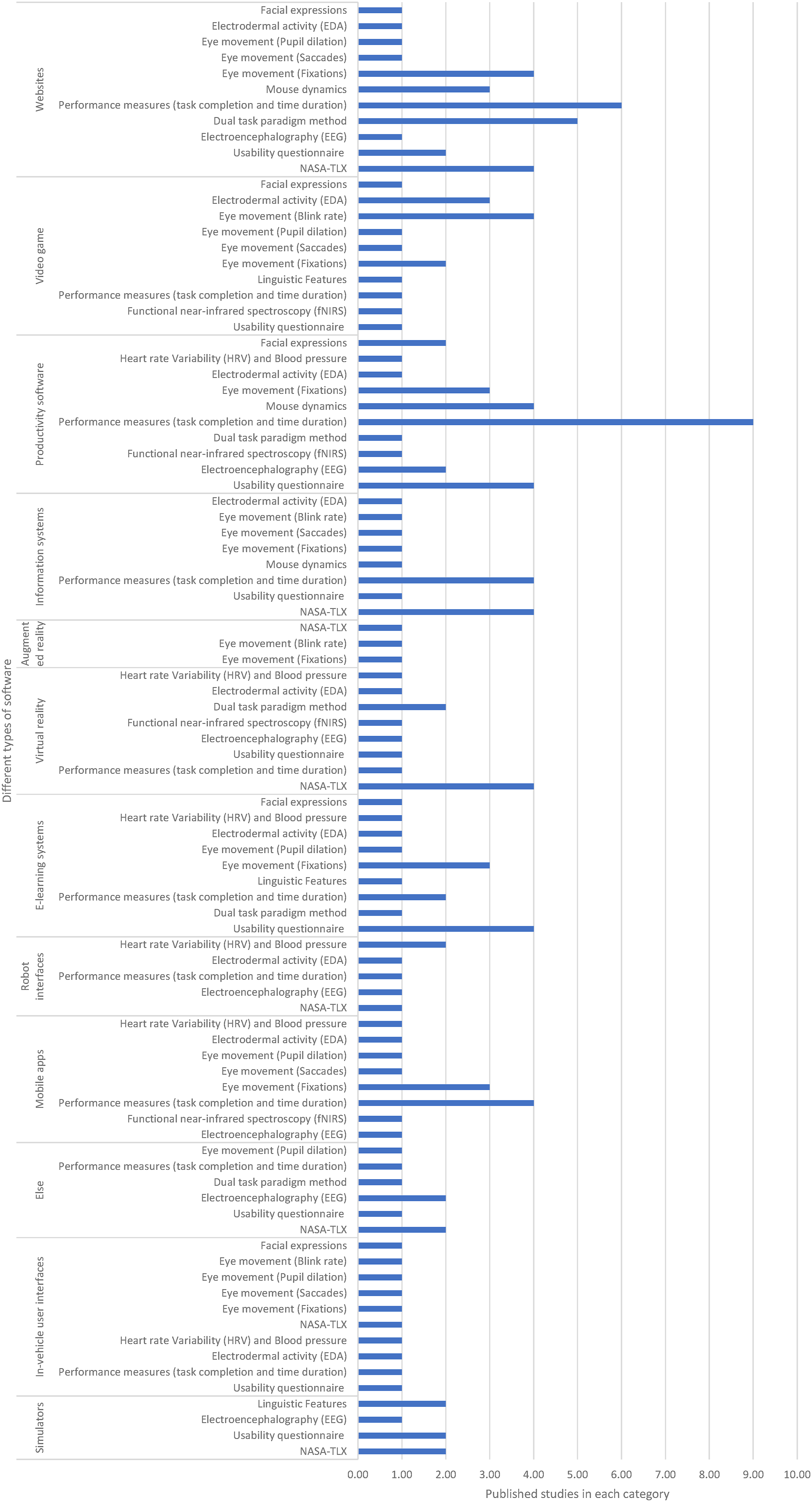

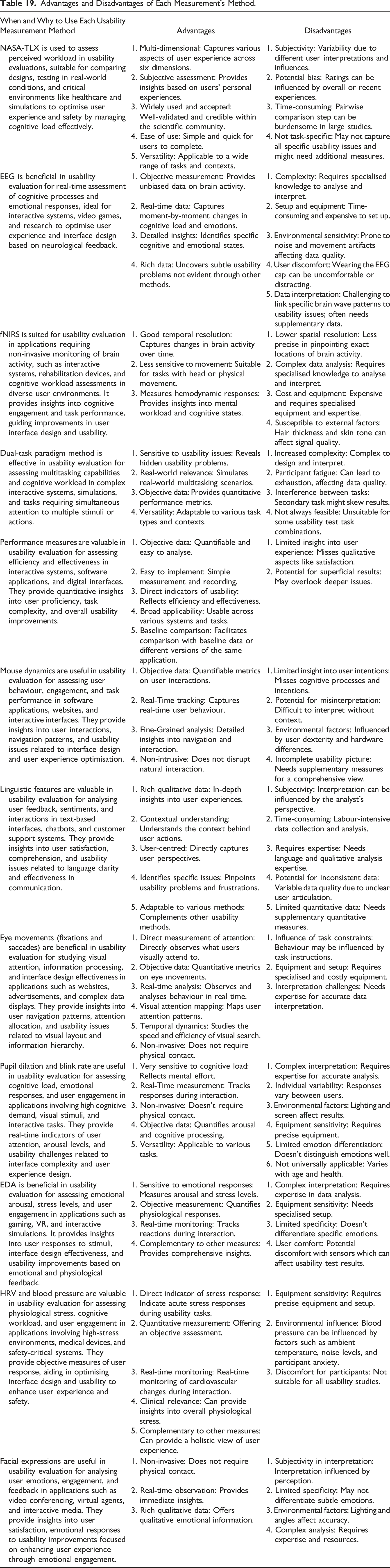

Cognitive load measurement methods were categorised as subjective (e.g., NASA-TLX and self-reports) or objective (e.g., EEG, eye-tracking, dual-task paradigms, and physiological sensors). The most frequently used methods were performance measures (19%), NASA-TLX (12%), and eye fixations (11%). Commonly evaluated platforms included websites, virtual reality systems, and productivity tools. Each method’s applicability, strengths, and limitations were identified.

Conclusion

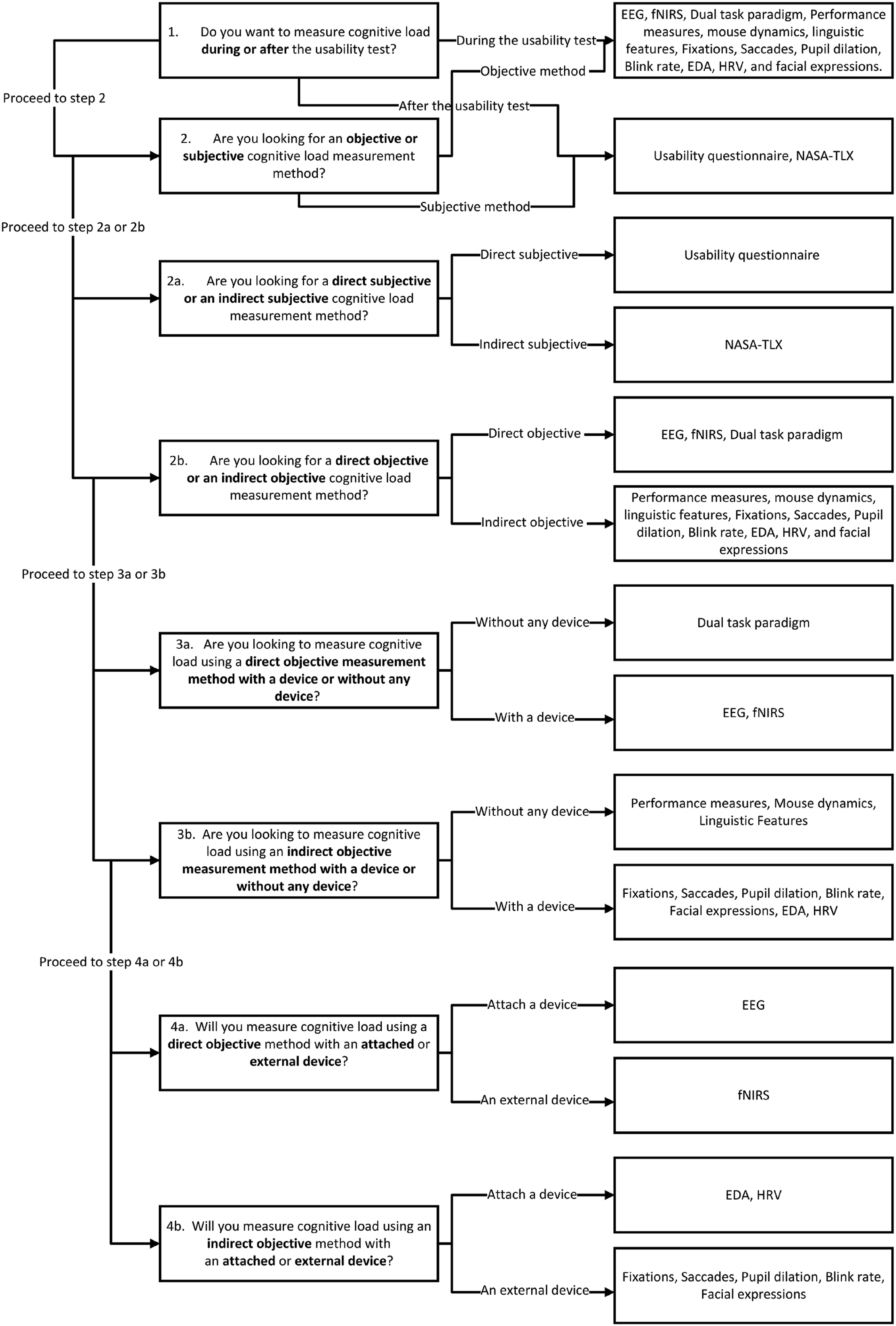

The review synthesises the relative merits of cognitive load measurement methods in usability evaluations and introduces a framework to guide the selection of techniques based on interface type and evaluation goals.

Application

The proposed framework operationalises CLT to support targeted, user-centred usability testing. It facilitates the selection of effective cognitive load measurement strategies, enhancing evaluation accuracy and informing better software design.

Keywords

Introduction and Theoretical Background

This paper reviews various cognitive load measurement methods used to evaluate the usability of different types of user interfaces. It also discusses the advantages and disadvantages of each method to identify which techniques are most effective for assessing cognitive load in usability contexts. Cognitive load theory (CLT) was introduced and developed by educational researchers to facilitate learning (Sweller, 1988). It provides instructional guidance based on human cognitive architecture (Sweller, 2025) and our knowledge of evolutionary psychology (Sweller, Ayres, & Kalyuga, 2011; Sweller, Van Merriënboer, & Paas, 2019). Cognitive load is defined as the cognitive resources required to acquire a concept or learn a procedure (Sweller, 1988, 2011). CLT emphasises the limitations of working memory when processing new information during learning or problem solving (Paas, Renkl, & Sweller, 2004; Sweller, 1988; Sweller, Ayres, & Kalyuga, 2011). For instance, when users interact with an unfamiliar software interface or learn to operate a new device, they must hold and process multiple elements of information simultaneously, such as icons, navigation paths, and the system structure. This places demands on their working memory and can lead to cognitive overload if the interface is poorly designed.

While CLT was originally developed within educational contexts, its principles extend beyond learning environments and can be applied to other domains involving complex cognitive activity (Engström, Johansson & Östlund, 2005; Huttunen et al., 2011). Interface usability is one of the areas that can benefit significantly from CLT, since the structure of an interface can affect both software usability and users’ cognitive load (Hu, Ma, & Chau, 1999; Saadé & Otrakji, 2007). Interface usability can be improved by measuring users’ cognitive load and optimising the interface to address the issues that affect it (Müller et al., 2008).

Usability is one of the central concepts of human–computer interaction (HCI) and is defined as the extent to which a user can work with a product effectively, efficiently, and with satisfaction (Bevan et al., 2015). Usability testing is important for evaluating whether the product can be used easily by its target users (Nielsen, 1994; Silva et al., 2021). In software interface design, usability depends on how well the system accommodates the characteristics of its target users and the specific tasks they perform. To design highly usable applications, developers must gain a deep understanding of users’ needs, contexts, and interaction behaviours (Sharp, Preece, & Rogers, 2019). Accordingly, cognitive load measurement methods can serve as effective tools for conducting accurate and systematic usability evaluations. This review paper is expected to be relevant to researchers and practitioners interested in cognitive load measurement within user interface contexts. The intended audience includes usability experts, user experience (UX) designers, HCI researchers, and computer scientists who evaluate or design interactive systems. The findings may assist professionals involved in assessing and improving the usability of information systems, e-learning platforms, video games, immersive technologies such as virtual and augmented reality applications, healthcare devices, manufacturing systems, smartphone applications, and websites by guiding their selection of suitable cognitive load measurement methods and interpretation of results.

In the upcoming sections, we delve into the human cognitive architecture and explore the different types of cognitive load. We subsequently provide a detailed description and analysis of various measurement methods for cognitive load, encompassing subjective and objective approaches. These methods are specifically pertinent to the area of usability evaluation for diverse software interfaces. Following that, the discussion section presents a comparative analysis of these measurement methods, evaluating their strengths and limitations. This analysis is followed by the introduction of our proposed framework, which aims to assist usability testers in selecting an appropriate cognitive load measurement method for conducting precise usability evaluations. The framework takes into account various criteria to ensure the selection of the most suitable method.

Human Cognitive Architecture and Cognitive Load Theory

Based on evolutionary psychology, information processed by the human cognitive system can be divided into biologically primary or secondary categories (Geary, 2008, 2012; Geary & Berch, 2016). The primary category consists of information that over countless generations we have evolved to acquire easily and unconsciously. An example is learning to listen to and speak our native language. Biologically secondary information consists of a category that we need for cultural reasons but require instruction and conscious effort to acquire. Learning to read and write provides an example as does learning to use a computer program. Cognitive load theory is principally concerned with biologically secondary information.

There is a specific cognitive architecture that allows humans to acquire, process, and use biologically secondary information (Sweller, 2025). Within the framework of CLT, information acquisition refers specifically to how learners obtain biologically secondary knowledge that demands deliberate cognitive processing and the engagement of working memory. Such information can be acquired either through individual problem solving, where new knowledge is actively constructed through exploration, or through direct instruction and transmission from others (Geary, 2008; Sweller, 1988; Sweller, van Merriënboer & Paas, 2019). These two modes of acquisition differ from other well-established learning mechanisms, such as observational learning, or probabilistic inference, which are typically associated with biologically primary learning processes that do not impose the same working-memory limitations emphasised in CLT (Bandura & Walters, 1977; Sutton & Barto, 2018).

Once information is acquired, it must be processed within the constraints of our human cognitive architecture. Novel information from the environment is first temporarily held and processed in working memory, which has limited capacity and duration, before being encoded into long-term memory for later retrieval. Once information is stored in long-term memory, it can be retrieved through memory retrieval processes and temporarily held in working memory to guide action appropriate to the current context. For example, when a user interacts with an unfamiliar software interface, they must consciously attend to each step, such as locating menu options, interpreting icons, and remembering procedural sequences, placing a heavy demand on working memory. With repeated use, these actions become automated and integrated into long-term memory, allowing the user to navigate the interface efficiently with minimal cognitive effort.

Although working memory is capacity-limited when processing novel information (typically 7 ± 2 elements; Miller, 1956), these limits can be effectively bypassed when dealing with familiar information that has been automated and stored in long-term memory. Within the CLT framework, this limitation is considered theoretical rather than absolute: for such information, working memory is assumed to operate as if its capacity were no longer limited (Paas, Renkl, & Sweller, 2004; Sweller, 2025). In such cases, information is retrieved as integrated “chunks,” reducing the load on working memory (Sweller, 2010). This cognitive architecture provides the foundation for CLT and underpins how mental effort is managed during complex learning and problem-solving tasks.

Beyond this cognitive architecture, it is also important to situate CLT within the broader landscape of psychological constructs concerned with mental effort and performance. In broader cognitive psychology, CLT is closely related to, but distinct from, the construct of Mental Workload (MWL). Both address the allocation of limited cognitive resources during task performance, but they differ in theoretical orientation and application. MWL originates from human-factors and ergonomics research and is often operationalised through subjective scales (Cain, 2007; Hart & Staveland, 1988). While both frameworks share methodological overlap in measurement, CLT places stronger emphasis on learning outcomes and schema acquisition, whereas MWL focuses on performance efficiency and operator strain. Understanding their complementarity helps clarify why cognitive load measures are increasingly adopted in usability research that bridges both perspectives.

Different Types of Cognitive Load

There are two types of cognitive load, intrinsic and extraneous, which interact to produce the total cognitive load (Sweller, 2010).

Intrinsic Load

Intrinsic cognitive load is defined as the natural complexity level of a specific instructional topic or an entity such as a mathematical concept, software, or a device. It is fixed and cannot be changed, except by altering the entity design or knowledge level of the learner. For example, statistical analysis and 3D modelling applications involve multiple interdependent steps and complex visual–spatial relationships, which inherently increase element interactivity and contribute to a higher intrinsic cognitive load. Intrinsic load is determined by the number of information elements that must be processed simultaneously and the extent to which they interact to achieve a learning goal (Sweller, 1994).

In cognitive load theory, element interactivity is an index to measure the complexity of a learning topic and depends on the learners’ prior knowledge, nature of the materials, and the relationship between the concepts that users should connect and process simultaneously (Chen et al., 2015; Marcus, Cooper & Sweller, 1996; Sweller, 1994, 2010). Elements with low interactivity can be learnt in isolation, with minimal or no reference to other learning elements, for example, learning the features like bold, italic, and underline in MS Excel. Low interactivity elements impose a low working memory load because each element can be processed independently of every other element. However, elements with high interactivity consist of different integrated elements that cannot be learnt in isolation, for example, typing functions in MS Excel or drawing a chart (Sweller, 2010). High levels of element interactivity impose a heavy working memory load because all the elements must be processed simultaneously. Levels of intrinsic cognitive load should be optimised to ensure learning is maximised without overloading working memory.

Although intrinsic load can sometimes appear to be influenced by system design, it fundamentally reflects the inherent complexity of the task or information being processed. For example, interacting with a multi-step data-visualisation tool may impose a high intrinsic load because the user must coordinate several interdependent elements to complete the task. Likewise, even a simple or well-designed interface can impose a high intrinsic load when it represents operations that are conceptually complex in nature. In both cases, the source of difficulty stems from the intrinsic complexity of the material or task requirements rather than from the interface design itself.

Extraneous Load

Extraneous cognitive load is related to the difficulty imposed by the method used to present instructional materials or the complexity level of an interface or a device as a result of the way they have been designed. In contrast to intrinsic load, which is tied to the essential complexity of a task or information, extraneous cognitive load stems from preventable design inefficiencies that add unnecessary mental effort beyond what is required by the task at hand. Examples include cluttered layouts, inconsistent iconography, or unclear navigation paths that force users to expend cognitive resources on interface management rather than the task itself. Intrinsic and extraneous load differ in origin: intrinsic load reflects inherent task difficulty, whereas extraneous load arises from avoidable design-induced barriers.

Some instructional procedures increase element interactivity and so unnecessarily increase extraneous load. Common examples of extraneous load include redundant information such as repeated links, menus, or decorative graphics on websites, which are non-essential to the task at hand and may in fact be distracting and consume limited cognitive resources (Bus et al., 2015; Jin, 2012; Lehmann, Hamm, & Seufert, 2019). Split attention is another common type of extraneous load, where users have to split their attention between different screens, windows, or parts of an interface in order to perform a task or understand a website or interface. The user then needs to use cognitive resources to mentally integrate information that is physically separated and that is non-essential to the task at hand (Al-Shehri & Gitsaki, 2010; Sweller, Kalyuga & Ayres, 2011; Schroeder & Cenkci, 2018).

In the context of interface usability, extraneous cognitive load may refer to the design of the user interface, tooltips, and software help system that are intended to guide users while working with the interface. In contrast to intrinsic load, which reflects task complexity, extraneous load results from the design and presentation of the interface and instructional materials (Van Merriënboer & Sweller, 2005). For example, websites are generally not difficult in nature, but a poor interface design can make a website difficult to learn. Conversely, a good help system designed for complex software can make learning the software easier, even if the software is difficult in nature.

Designing an interface based on principles of good design and providing an appropriate help system can enhance learning performance by increasing available working memory resources and reducing extraneous load (Chandler & Sweller, 1991). When the intrinsic load is high, total cognitive load can be decreased by decreasing extraneous load. Learning is enhanced by minimising mental resources that are allocated to deal with a user interface and teaching materials so that working-memory can use its maximum resources to deal with the instructional topic (Sweller, 2010). Therefore, when the nature of the software or the associated interface are cognitively demanding, a good user interface design or an appropriate and comprehensive help system become important ways to decrease extraneous cognitive load.

Review Methodology

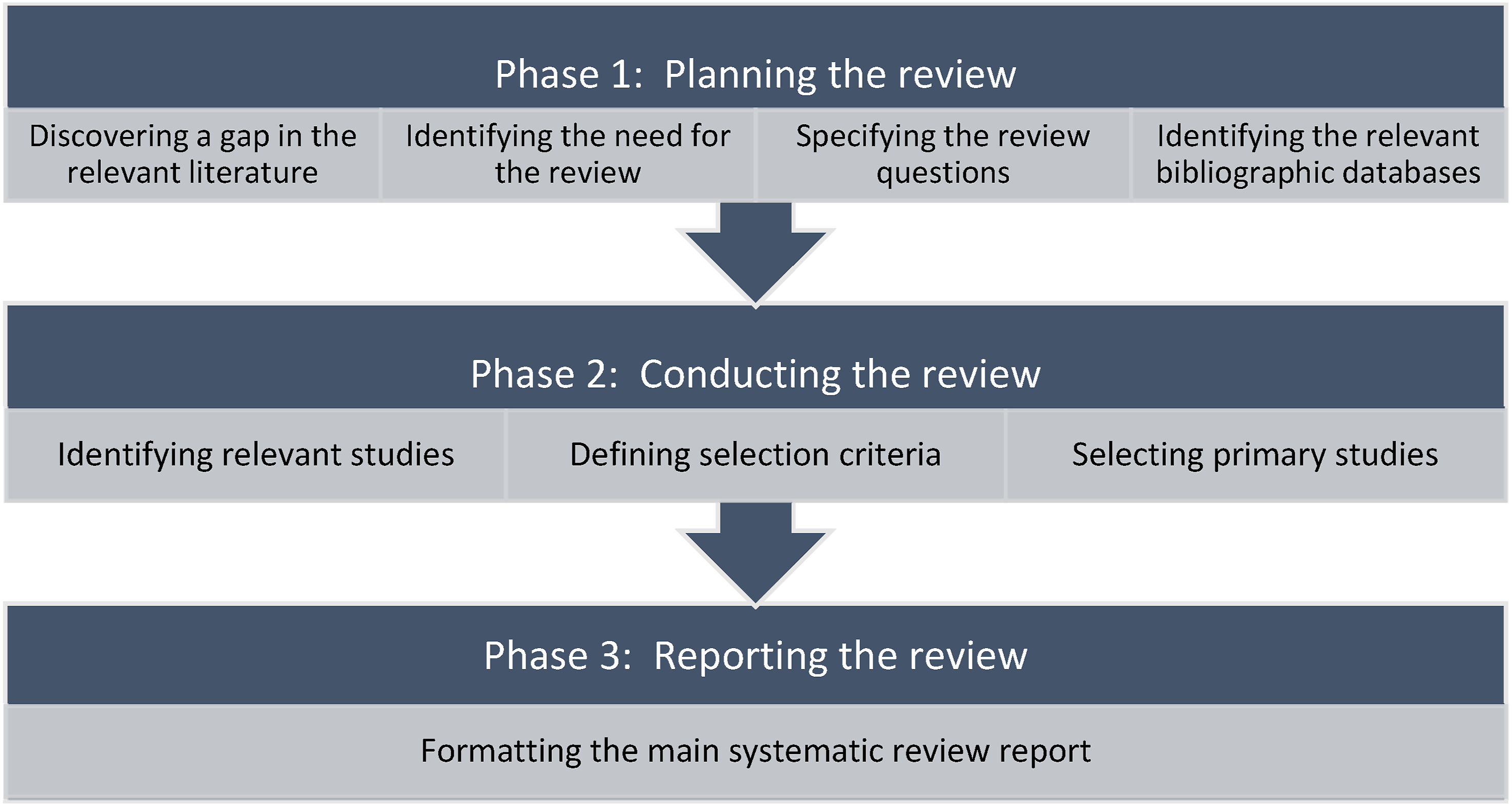

We used the Kitchenham and Charters (2007) methodology, which is a widely adopted approach in computer science and human–computer interaction research. We did not use other frameworks, such as the PRISMA protocol, because PRISMA is primarily designed for systematic reviews involving quantitative meta-analyses and intervention-based studies, particularly in health and medical research (Page et al., 2021). In contrast, the present review aimed to qualitatively synthesise methodological patterns and theoretical perspectives on cognitive load measurement in usability evaluation rather than to aggregate statistical outcomes. Therefore, the Kitchenham and Charters approach was more suitable for achieving the objectives of this study, providing a structured yet flexible framework for identifying, categorising, and analysing relevant literature within the context of software usability and cognitive load research. We carried out this review in three main phases: (a) planning of systematic mapping, (b) conducting the review, and (c) reporting the review. Systematic mapping is a structured literature review approach used to classify and summarise existing research within a broad topic area, providing an overview of research trends, gaps, and methodological characteristics rather than quantitative synthesis.

The phases of this systematic review and the related activities are shown in Figure 1.

Phases of conducting this systematic review.

Phase 1: Planning the Review

Discovering a Gap in Relevant Literature

In this step, a comprehensive search was performed on web databases to locate studies relevant to cognitive load measurement methods that are used in the area of evaluating software usability. Although there are a few studies that reviewed different cognitive load measurement methods in the area of computer science, to the best of our knowledge, none have comprehensively listed and compared the full range of cognitive load measurement approaches, including subjective self-report, physiological, behavioural, and performance-based methods, specifically within the context of software usability, nor identified which techniques are most suitable for evaluating the usability of different types of user interfaces.

For example, Duran, Zavgorodniaia and Sorva (2022) conducted a systematic review to identify how CLT has been used across a number of computing education research forums. Frazier, Pitts and McComb (2022) examined how cognitive load is evaluated in studies with a focus on human-automated knowledge work. They compared the studies that evaluated the impacts of automation on cognitive load of operators.

In a recent study, Kosch et al. (2023) conducted a systematic review of cognitive load measurement methods used in HCI. Their focus was on defining and interpreting cognitive workload within HCI and exploring its broader applications in interactive computing systems. While their review covered various cognitive load measurement methods, it provided a broad overview rather than a detailed analysis of their applicability to usability evaluation across different user interfaces. Similarly, Suzuki, Wild, and Scanlon (2024) examined the use of cognitive load measurement methods specifically in the context of augmented reality applications. In contrast, this paper offers a more targeted examination of cognitive load measurement methods, focussing on their advantages and disadvantages in assessing usability across diverse user interfaces within HCI. By narrowing our scope, we aim to provide a practical framework for selecting the most suitable cognitive load measurement techniques for specific interface evaluations.

Identifying the Need for a Review

Evaluating the usability issues of computer software, websites, and mobile apps by considering users’ cognitive load is an efficient method as it considers users’ cognitive structure, and it can involve both qualitative and quantitative measures. However, we are unaware of any review that summarises and compares all the possible cognitive load measurement methods that are available and can be used in different areas of software usability.

Specifying the Review Questions

The questions we aimed to examine in this review sought to identify and summarise all cognitive load measurement methods that can be used in software usability evaluation. In addition, given the diversity of identified methods, we also considered their validity and practical applicability so that the review contributes more than a descriptive catalogue of methods. The questions are listed below:

• Q1 What are the existing cognitive load measurement methods that have been effectively used in the area of software usability?
• Q2 What are the most common cognitive load measurement methods that can be used in the area of software usability?
• Q3 What types of software have been evaluated by using cognitive load measurement methods?
• Q4 What types of software have been evaluated by using each cognitive load measurement method?
• Q5 What cognitive load measurement methods have been used for evaluating the usability of each type of software?
• Q6 How valid are the identified cognitive load measurement methods, particularly in terms of convergent validity, for evaluating software usability?

Identifying the Relevant Bibliographic Databases

To answer our questions and find the relevant studies, we selected bibliographic databases that cover the majority of journals and conference papers in the fields of computer science and HCI. The following relevant bibliographic databases were identified: ACM, IEEE, SpringerLink, ScienceDirect, ProQuest, Scopus, Wiley InterScience, and Google Scholar. The search included articles published from 2001 up to February 2025.

Phase 2: Conducting the Review

This is the main phase of our systematic review with the purpose of selecting related studies as well as synthesising data.

Identifying Relevant Studies

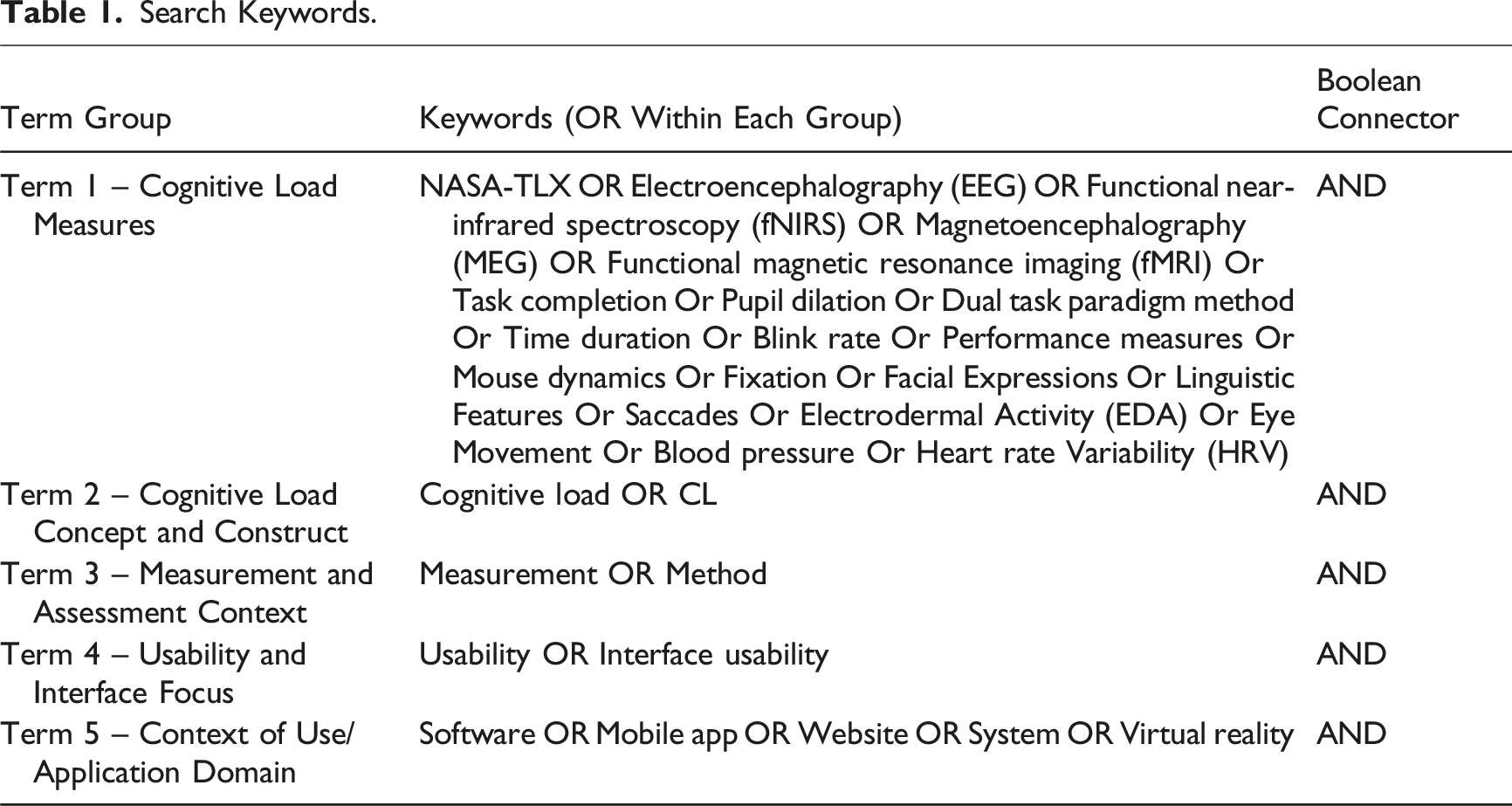

Search Keywords.

Summary of the Search in Bibliographic Databases.

Terms 2–3 were used to narrow the search to studies that applied the cognitive load measurement methods identified in Term 1 specifically for measuring cognitive load. Terms 4–5 added an additional layer of criteria, further refining the search to include studies focussing on software usability across different types of applications.

Preliminary test searches conducted without Term 1 retrieved largely overlapping results with fewer unique studies, indicating that the inclusion of known measures enhanced recall without limiting discovery. Additional root terms such as web, internet, and online were also tested during pilot queries but did not substantially expand the result set, as most relevant papers already used the terms “website,” “software,” or “interface” in their metadata.
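The layered structure of the search terms can be illustrated with a minimal sketch. It assumes that alternatives within a term group are joined with OR and that the groups are joined with AND, which is a common convention but is not stated explicitly in the text. All keyword strings and the `build_query` helper are hypothetical placeholders; the actual keywords are those listed in the search keyword table.

```python
# Placeholder term groups. The review's real keywords are in its search-keyword
# table and are not reproduced here; every string below is hypothetical.
TERM_GROUPS = {
    "term_1_known_measures": ["NASA-TLX", "EEG", "eye tracking"],
    "terms_2_3_cognitive_load": ["cognitive load", "mental effort"],
    "terms_4_5_software_usability": ["usability", "software", "interface"],
}


def build_query(groups):
    """OR the alternatives inside each group, then AND the groups together.

    The OR/AND combination is an assumption about how the terms were layered.
    """
    clauses = []
    for terms in groups.values():
        quoted = " OR ".join(f'"{term}"' for term in terms)
        clauses.append(f"({quoted})")
    return " AND ".join(clauses)


query = build_query(TERM_GROUPS)
print(query)
```

Dropping the first group from `TERM_GROUPS` would correspond to the pilot searches run without Term 1.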

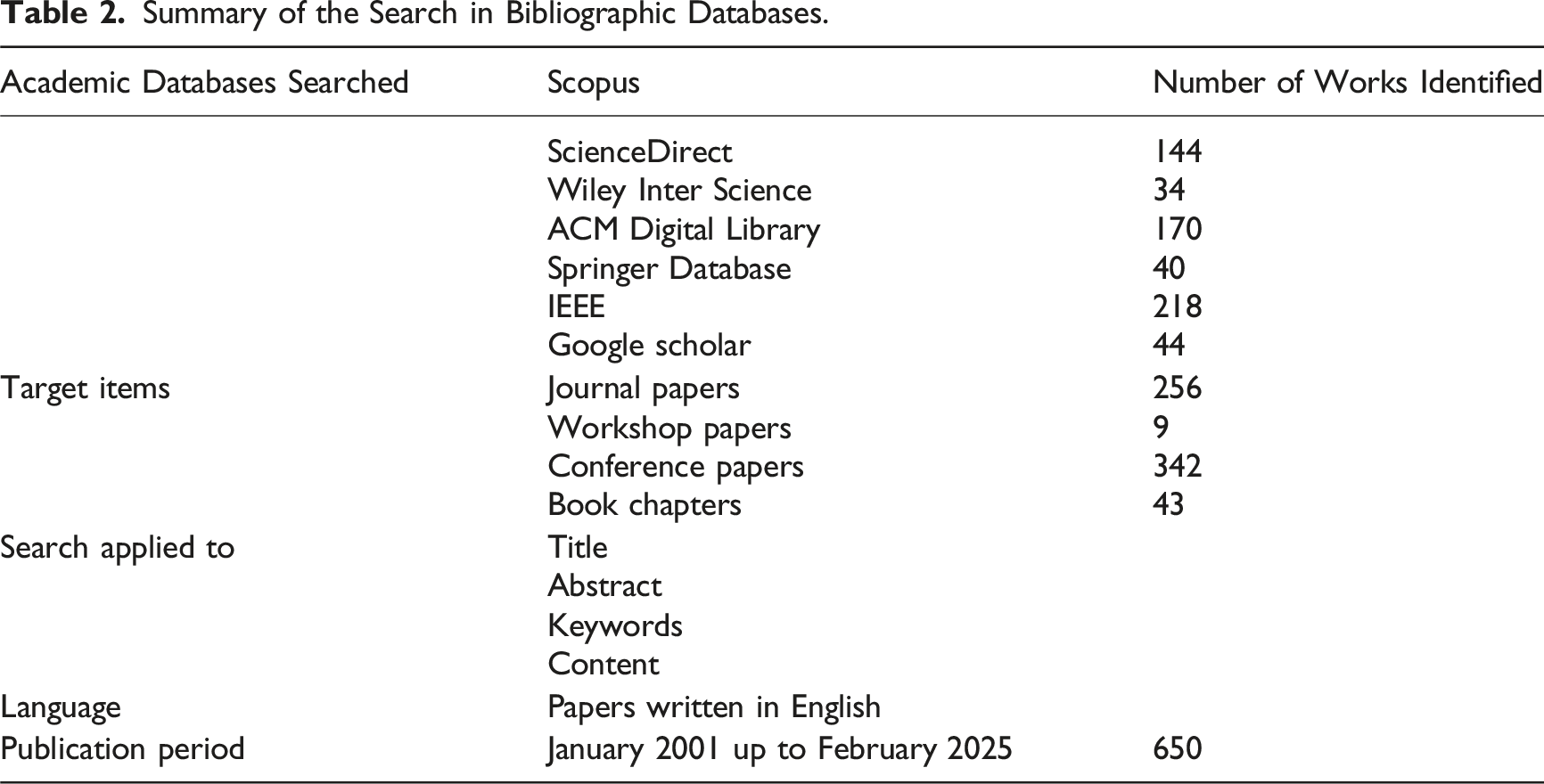

Two additional search strategies were applied to retrieve the maximum number of relevant papers. The first strategy reviewed the reference list of selected papers to find more related papers. The second strategy involved searching for the authors of the selected studies in Google Scholar to identify additional related research. A summary of the search in bibliographic databases is presented in Table 2.

Defining Selection Criteria

In order to select the primary papers, we defined the following criteria based on the purpose of our study.

Inclusion criteria: (1) Studies that measured users’ cognitive load to evaluate the usability of any type of software including computer software, websites, mobile applications, virtual reality applications, and video games. (2) Studies that were published between January 2001 and February 2025.

Exclusion criteria: (1) Studies that measured cognitive load in a context other than software. (2) Non-experimental papers. (3) Papers available only in the form of an abstract or presentation. (4) Papers not written in English.

Selecting Primary Studies

The titles and abstracts of the retrieved papers were screened according to the predefined inclusion and exclusion criteria. Studies meeting at least one inclusion criterion and none of the exclusion criteria were retained for further review. When eligibility could not be determined based solely on titles and abstracts, full texts were examined. Screening and selection were conducted by two reviewers. Any disagreements were resolved through discussion and consensus, and when consensus could not be reached, a third reviewer was consulted.

The database search across ACM, IEEE, SpringerLink, ScienceDirect, Scopus, Wiley InterScience, ProQuest, and Google Scholar initially yielded 650 records. After removing 35 duplicates, 615 papers remained for screening. Of these, 344 were excluded for being unrelated to usability or cognitive load. The remaining 271 full-text articles were assessed for eligibility based on methodological completeness and relevance to software usability evaluation. Following this assessment, 184 papers were excluded because they did not include a usability evaluation, measured cognitive load outside a software context, or lacked sufficient methodological detail. The final 87 studies met all criteria and were included in the review.
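The record counts reported above can be checked with a short arithmetic sketch. The counts are taken directly from the text; the `ScreeningStage` class, the `run_flow` helper, and the stage labels are illustrative names introduced here, not part of the review protocol.

```python
from dataclasses import dataclass


@dataclass
class ScreeningStage:
    label: str      # illustrative label for the exclusion step
    excluded: int   # number of records removed at this step (from the text)


def run_flow(initial, stages):
    """Return (label, records remaining) after each exclusion step."""
    remaining = initial
    counts = []
    for stage in stages:
        if stage.excluded > remaining:
            raise ValueError(f"{stage.label}: cannot exclude more than remain")
        remaining -= stage.excluded
        counts.append((stage.label, remaining))
    return counts


STAGES = [
    ScreeningStage("duplicates removed", 35),
    ScreeningStage("unrelated to usability or cognitive load", 344),
    ScreeningStage("excluded at full-text assessment", 184),
]

flow = run_flow(650, STAGES)
for label, remaining in flow:
    print(f"{label}: {remaining} records remain")
```

The three steps leave 615, 271, and 87 records respectively, matching the figures reported in the paragraph.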

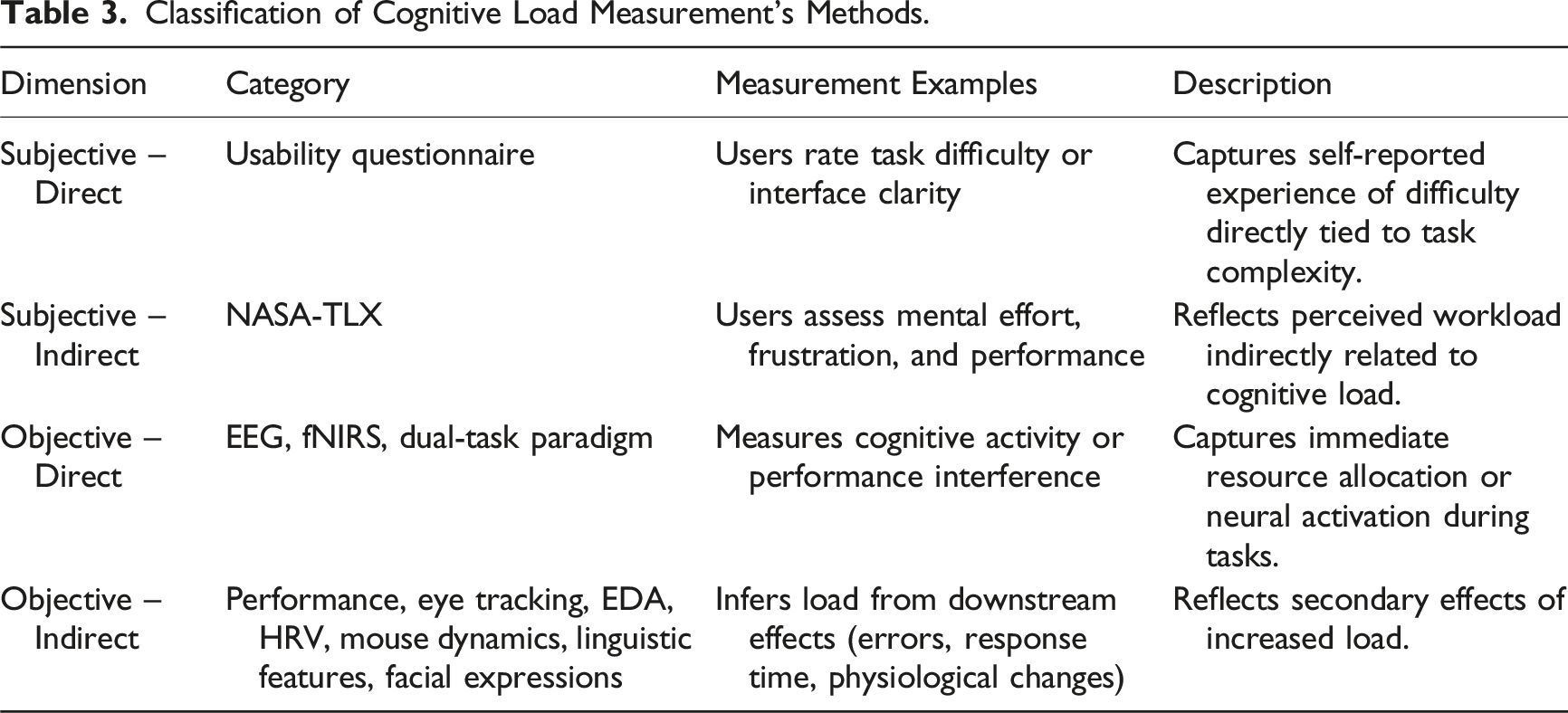

Phase 3: Reporting the Review

In reporting the results of this study, we used a taxonomy that builds on the dual-dimension framework proposed by Brunken et al. (2003), which has been widely applied in cognitive load research to distinguish measurement methods according to their level of objectivity and causal proximity to cognitive processing. In line with this framework, the reviewed studies were categorised along two dimensions to ensure conceptual consistency and comparability across different types of measures: (a) the objectivity of the measure (subjective vs. objective) and (b) the causal relationship between the measure and cognitive load (direct vs. indirect).

The objectivity dimension differentiates between subjective approaches, which rely on self-reported data such as questionnaires, and objective approaches, which depend on behavioural observations, physiological signals, or performance outcomes.

The causal-relations dimension distinguishes between direct and indirect measures of cognitive load according to their proximity to cognitive processing. Direct measures capture indicators that respond immediately to mental activity or cognitive effort, offering fine-grained temporal resolution of users’ mental states during task performance. Examples include self-reported task difficulty or mental effort ratings, neurophysiological methods such as EEG and fNIRS, and the dual-task paradigm, where secondary-task performance directly reflects the cognitive resources consumed by a primary task.

Indirect measures, by contrast, infer cognitive load from its downstream effects or outcomes, such as task accuracy, error rate, completion time, or reaction time. Physiological responses like pupil dilation, HRV, EDA, or facial temperature are also indirect indicators because they can be affected by factors beyond cognitive demand, including emotion or fatigue. Because many indicators overlap conceptually (e.g., arousal or motivation), the distinction between direct and indirect measures is treated pragmatically rather than absolutely.
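The two-dimensional taxonomy described above can be sketched as a small data structure. The sketch classifies only measures that the preceding paragraphs place explicitly in a cell; the class and function names are illustrative assumptions, and the full classification is the one given in the review's classification table.

```python
from dataclasses import dataclass
from enum import Enum


class Objectivity(Enum):
    SUBJECTIVE = "subjective"
    OBJECTIVE = "objective"


class Causality(Enum):
    DIRECT = "direct"
    INDIRECT = "indirect"


@dataclass(frozen=True)
class Measure:
    name: str
    objectivity: Objectivity
    causality: Causality


# Only examples named in the text; not the complete classification.
MEASURES = [
    Measure("task difficulty rating", Objectivity.SUBJECTIVE, Causality.DIRECT),
    Measure("EEG", Objectivity.OBJECTIVE, Causality.DIRECT),
    Measure("fNIRS", Objectivity.OBJECTIVE, Causality.DIRECT),
    Measure("dual-task paradigm", Objectivity.OBJECTIVE, Causality.DIRECT),
    Measure("task completion time", Objectivity.OBJECTIVE, Causality.INDIRECT),
    Measure("error rate", Objectivity.OBJECTIVE, Causality.INDIRECT),
    Measure("pupil dilation", Objectivity.OBJECTIVE, Causality.INDIRECT),
    Measure("HRV", Objectivity.OBJECTIVE, Causality.INDIRECT),
    Measure("EDA", Objectivity.OBJECTIVE, Causality.INDIRECT),
]


def group_by_cell(measures):
    """Group measure names by their (objectivity, causality) cell."""
    cells = {}
    for m in measures:
        cells.setdefault((m.objectivity, m.causality), []).append(m.name)
    return cells


cells = group_by_cell(MEASURES)
for (objectivity, causality), names in cells.items():
    print(f"{objectivity.value} / {causality.value}: {', '.join(names)}")
```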

Classification of Cognitive Load Measurement Methods.

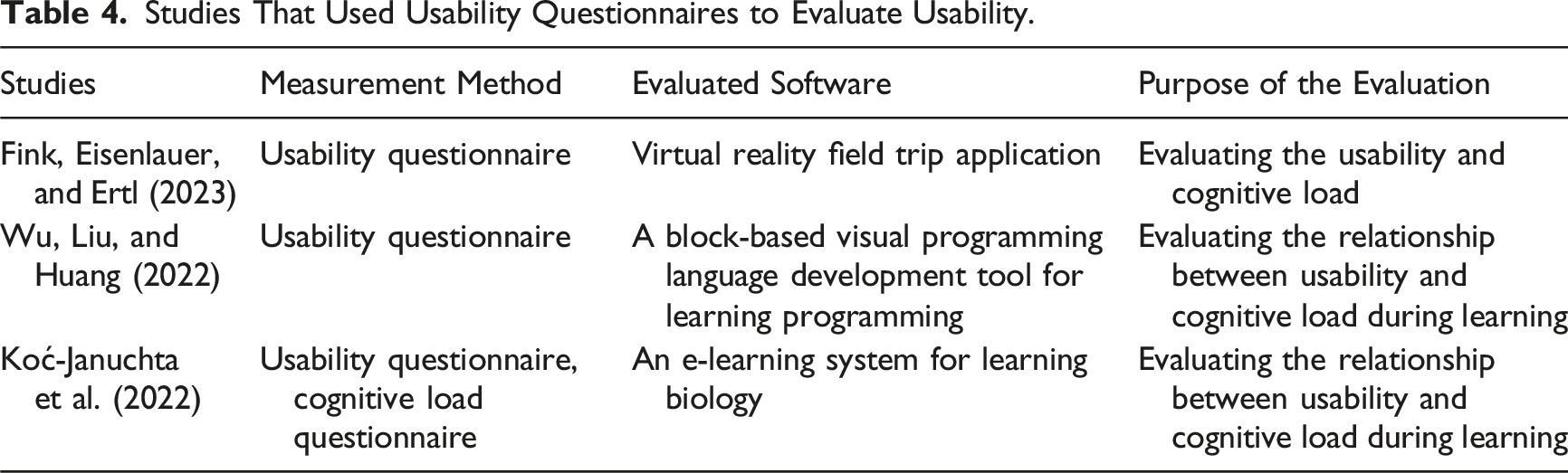

Studies That Used Usability Questionnaires to Evaluate Usability.

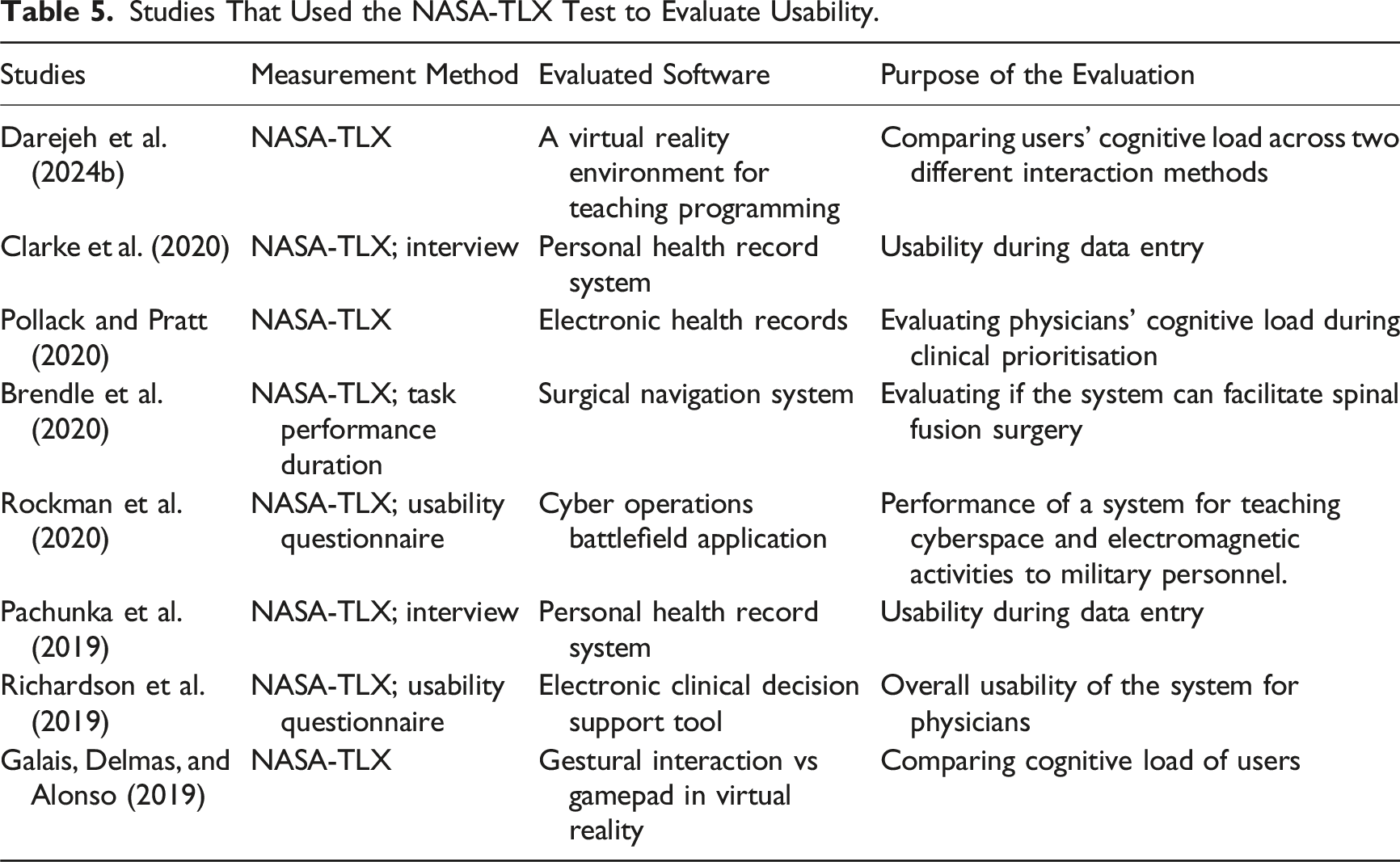

Studies That Used the NASA-TLX Test to Evaluate Usability.

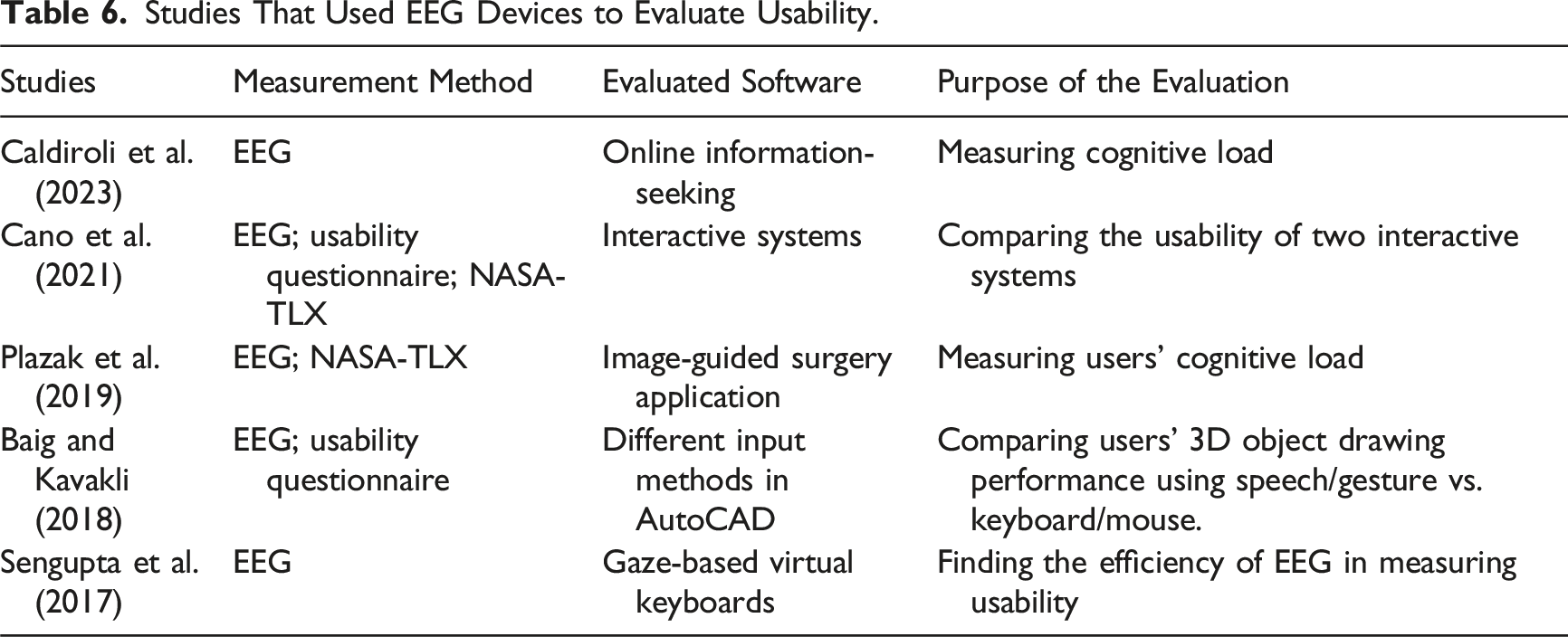

Studies That Used EEG Devices to Evaluate Usability.

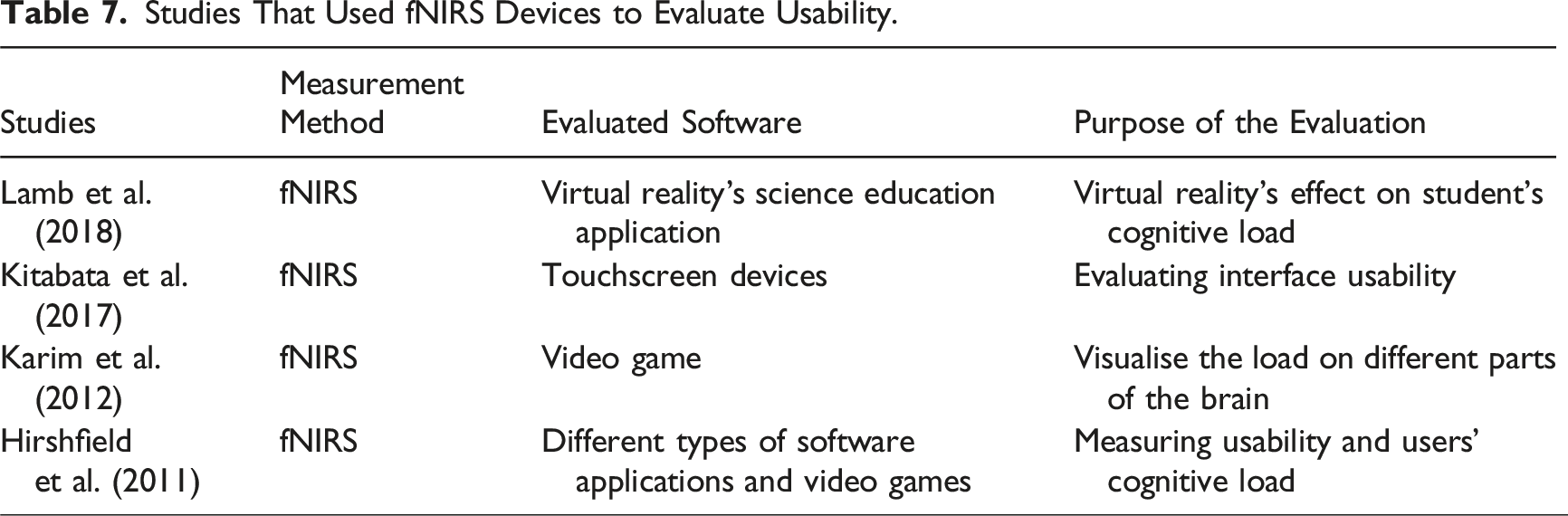

Studies That Used fNIRS Devices to Evaluate Usability.

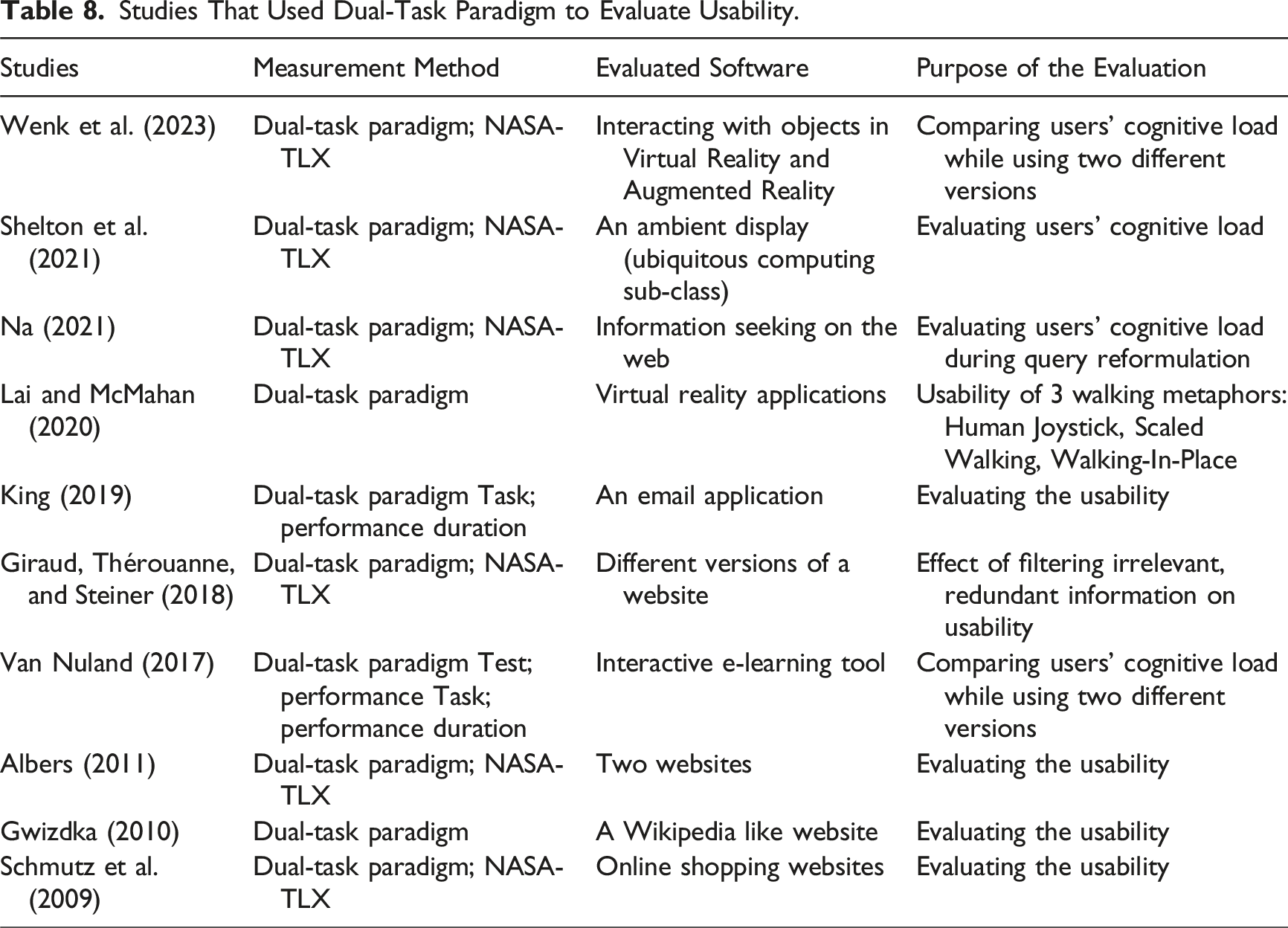

Studies That Used Dual-Task Paradigm to Evaluate Usability.

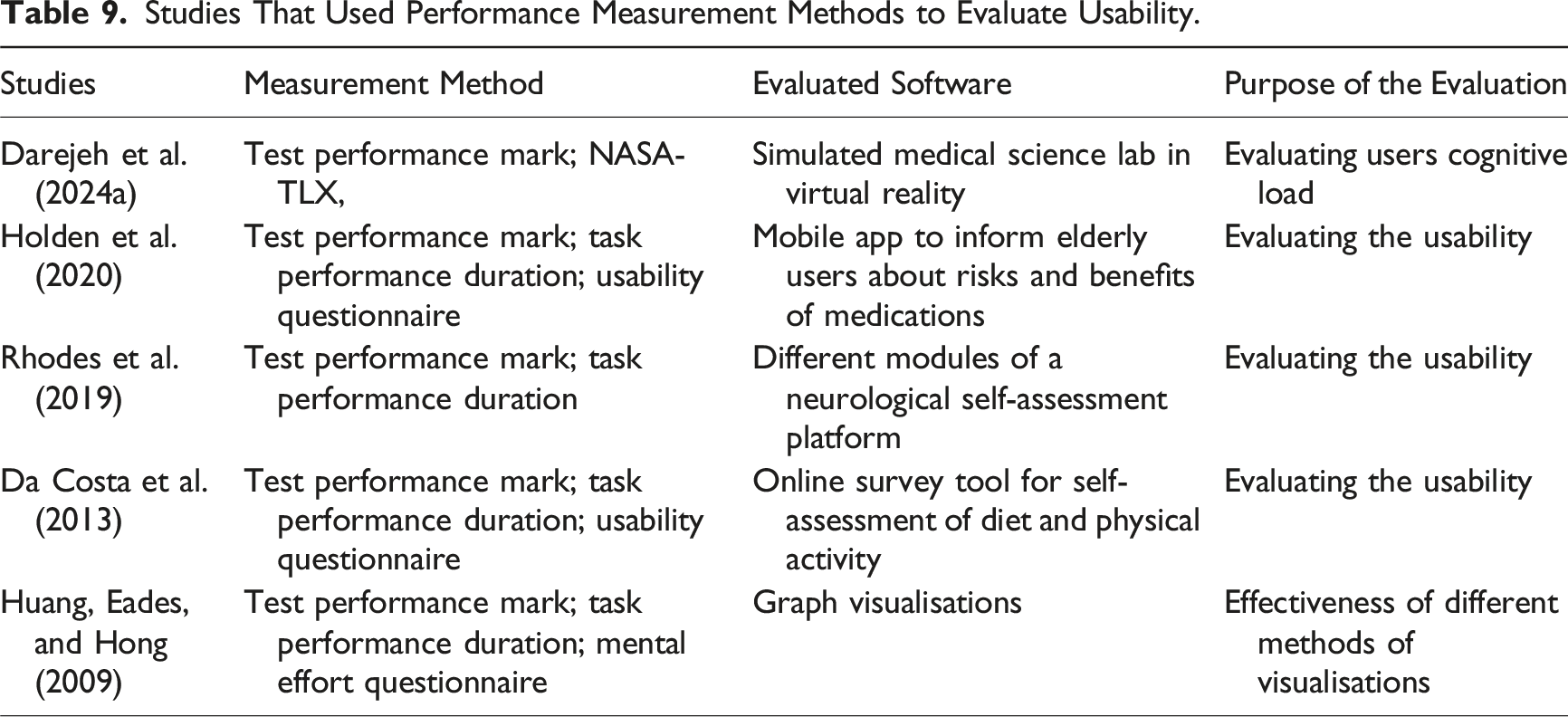

Studies That Used Performance Measurement Methods to Evaluate Usability.

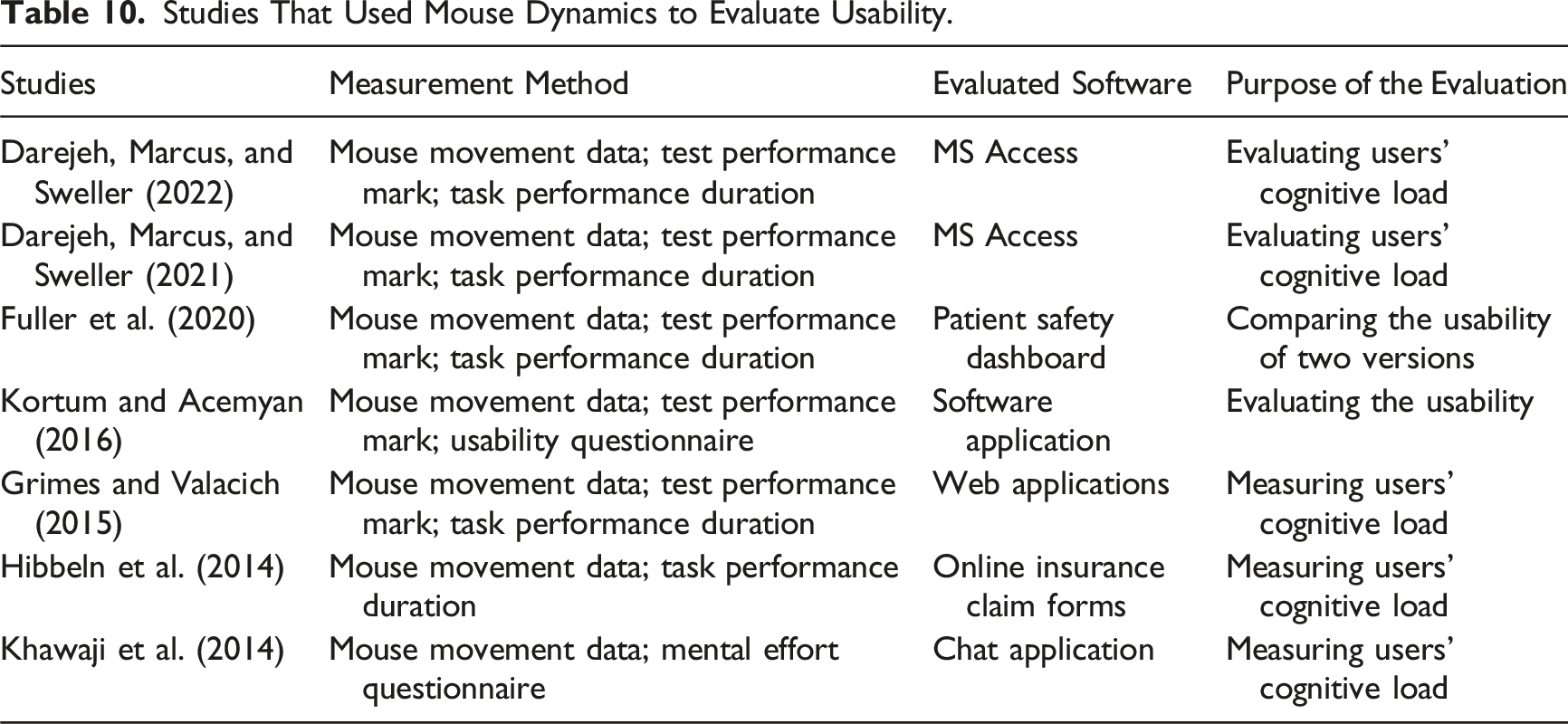

Studies That Used Mouse Dynamics to Evaluate Usability.

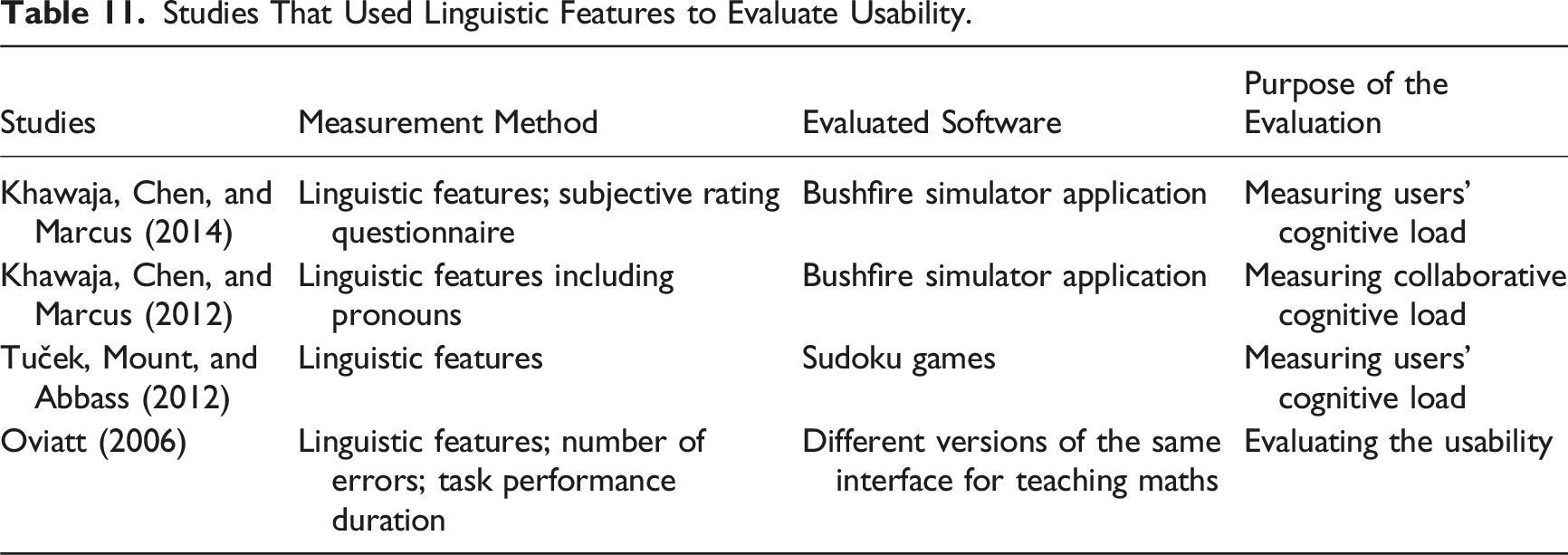

Studies That Used Linguistic Features to Evaluate Usability.

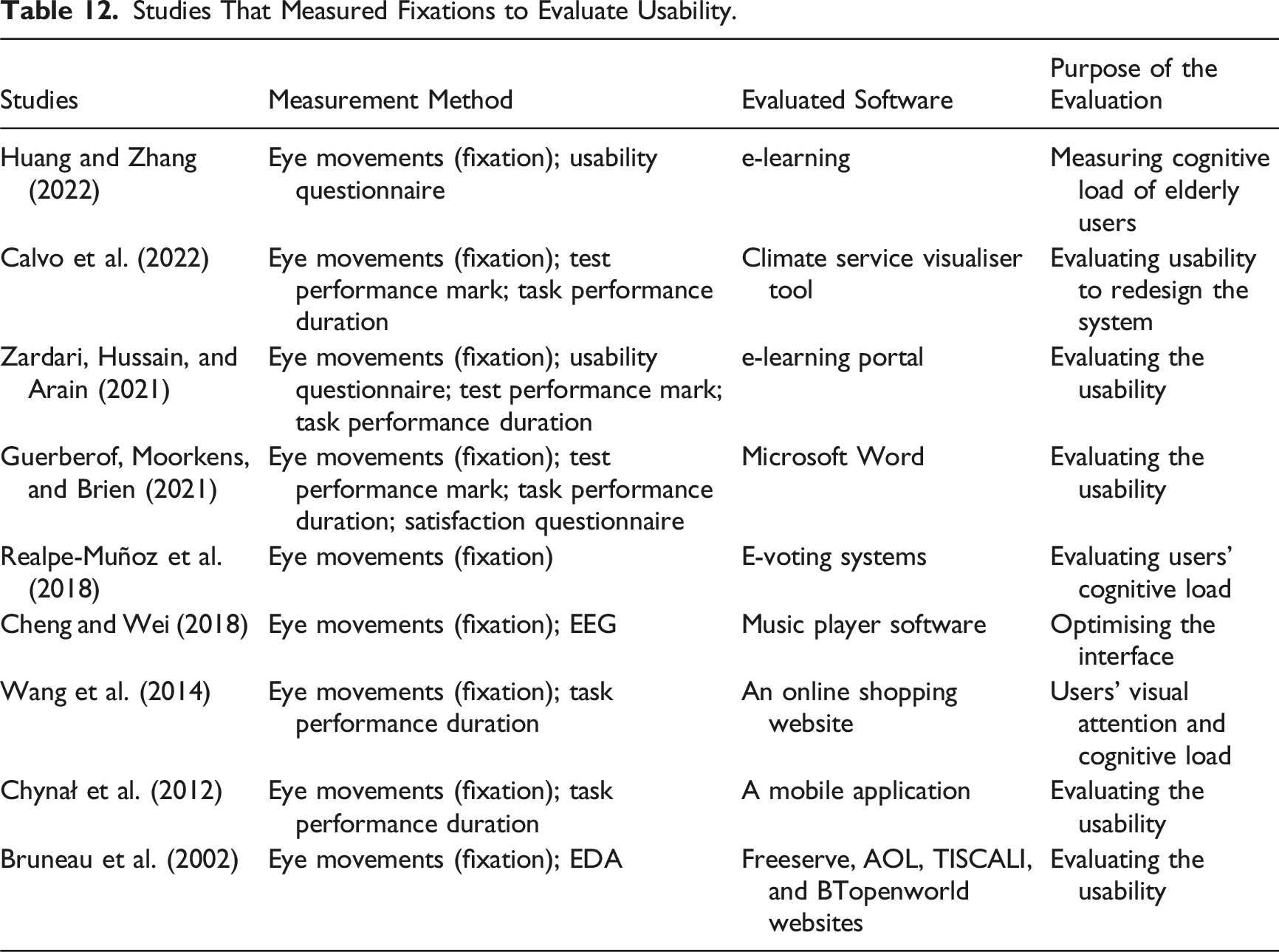

Studies That Measured Fixations to Evaluate Usability.

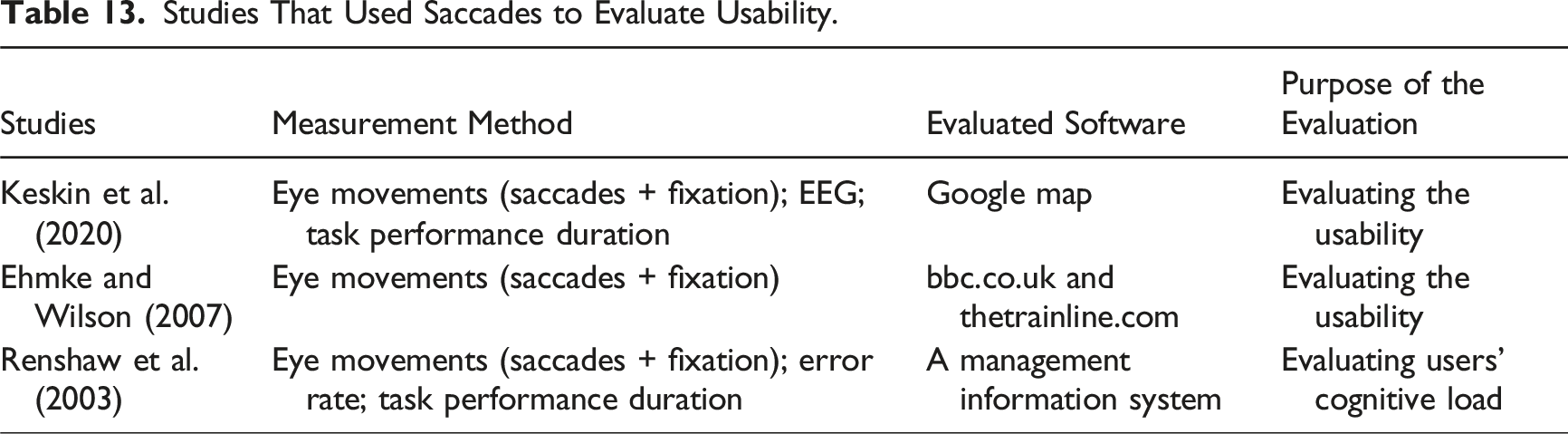

Studies That Used Saccades to Evaluate Usability.

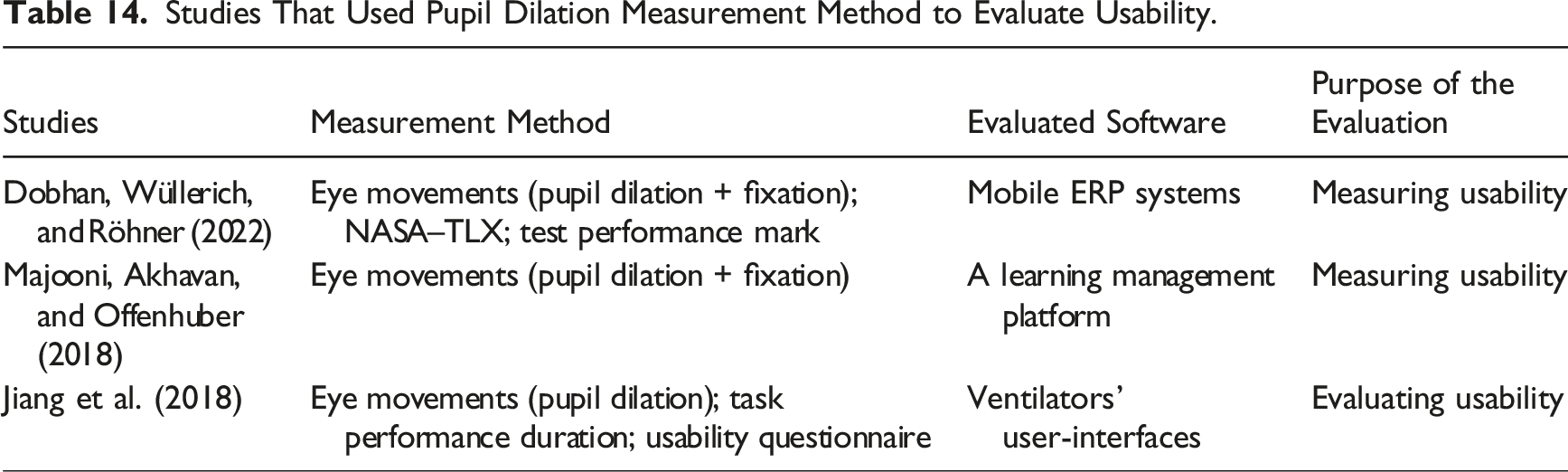

Studies That Used Pupil Dilation Measurement Method to Evaluate Usability.

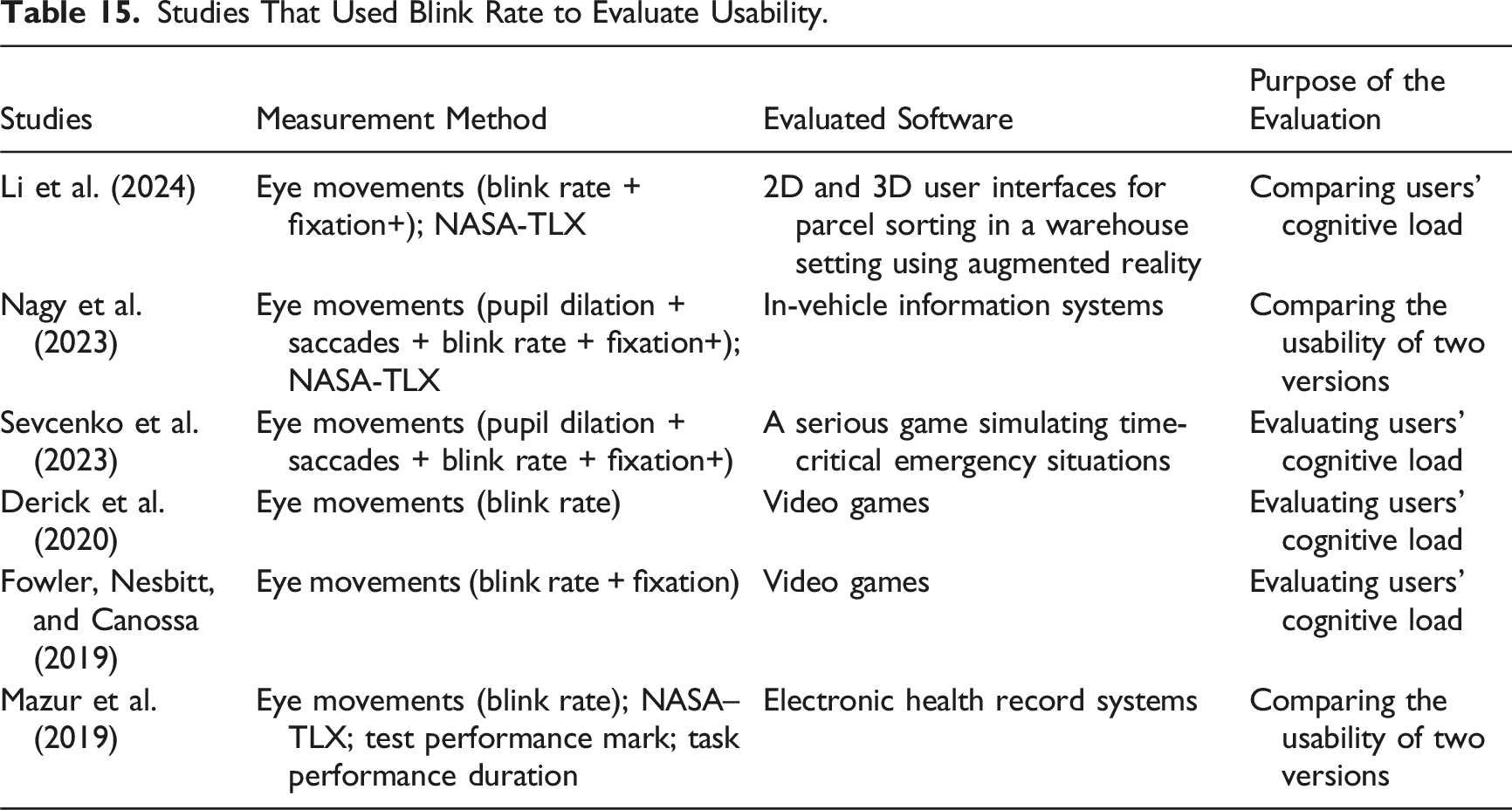

Studies That Used Blink Rate to Evaluate Usability.

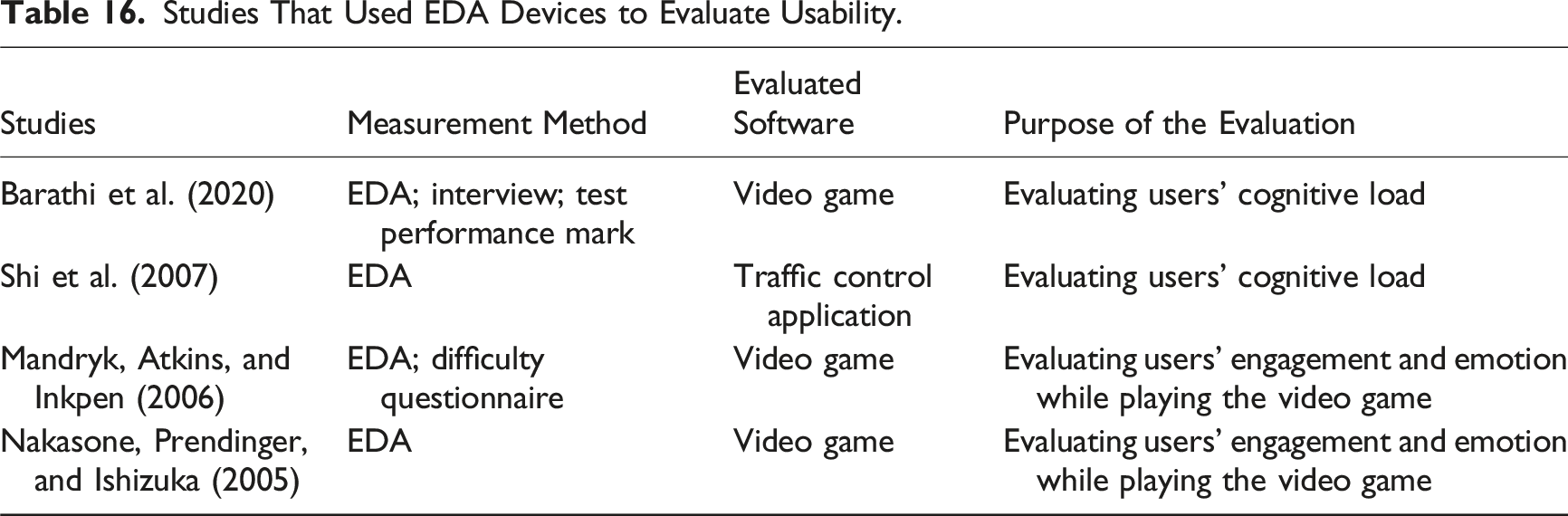

Studies That Used EDA Devices to Evaluate Usability.

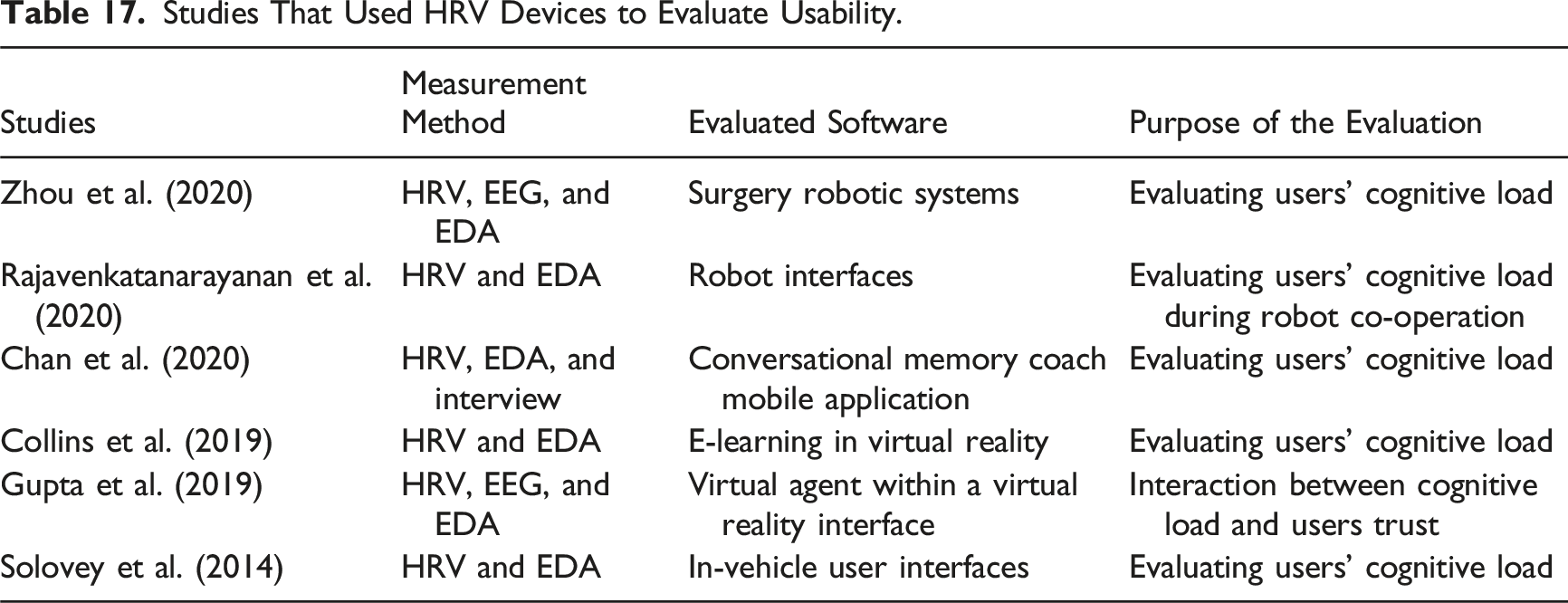

Studies That Used HRV Devices to Evaluate Usability.

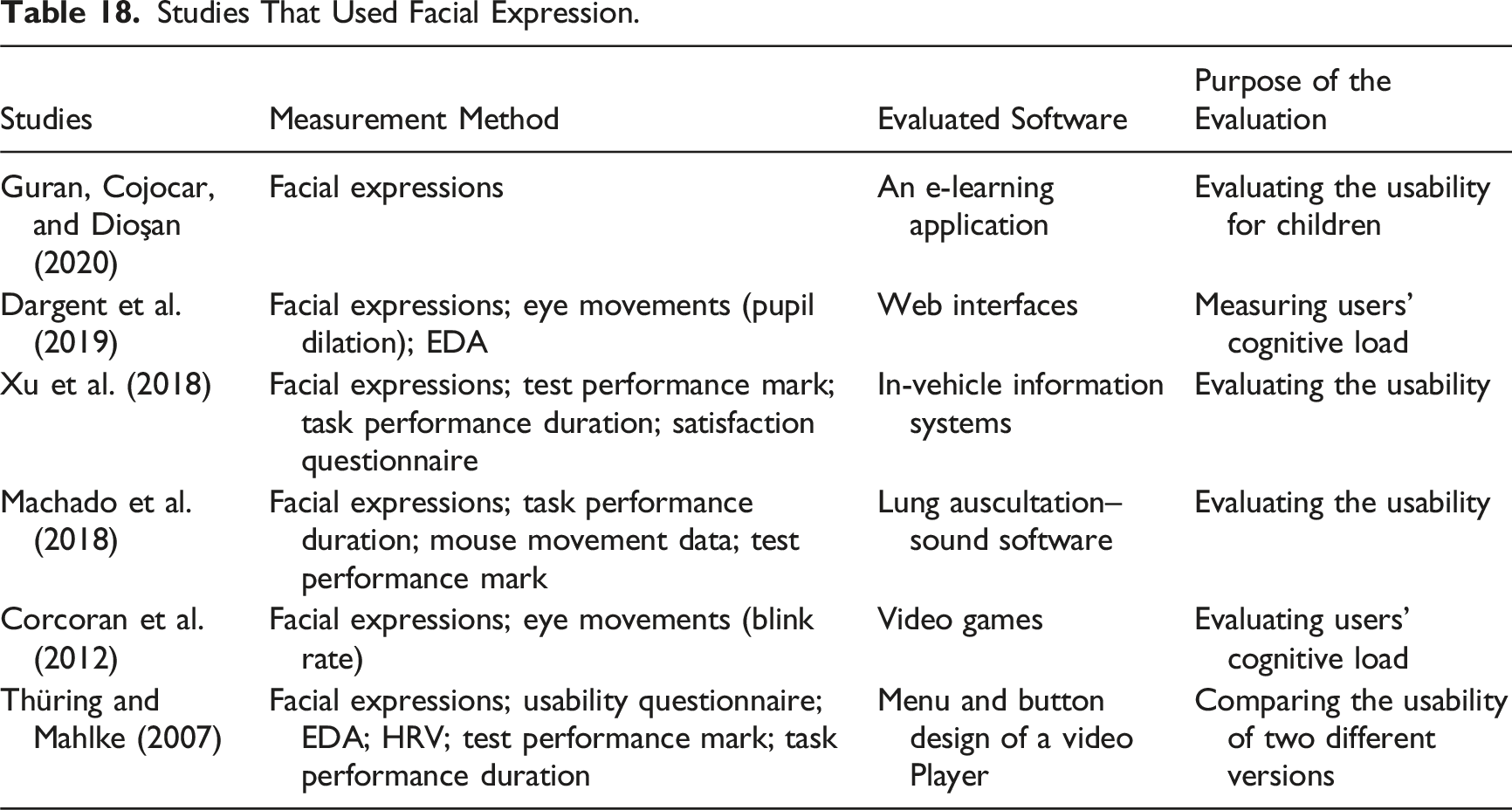

Studies That Used Facial Expression.

Most of the 87 reviewed studies employed cognitive load measurement methods as practical tools for evaluating user-interface usability rather than as instruments undergoing formal psychometric validation, meaning that validity coefficients were rarely reported. Consequently, validity was assessed through convergent validity, following Campbell and Fiske’s (1959) principle that different methods intended to measure the same construct should yield comparable outcomes.

A method was therefore considered to provide evidence of validity when it consistently revealed similar usability-related trends across studies, indicating cross-study robustness, and when its results aligned with those obtained from additional measurement methods within the same study, such as task-performance data, fixation behaviour, or dual-task outcomes. Alignment across different measurement approaches was interpreted as convergent evidence contributing to construct validity (Cronbach & Meehl, 1955; Messick, 1995a, 1995b). In line with contemporary validity theory (Cook & Beckman, 2006; Cronbach & Meehl, 1955; Messick, 1995a, 1995b), such convergent evidence was treated as a key contributor to construct-level validity. To operationalise this, the review examined convergence across subjective, behavioural, and physiological measures when used together. Cross-method convergence, supported by consistent patterns observed across studies, provided applied evidence of validity and enabled a coherent evaluation of the empirical soundness and interpretability of cognitive load measures within usability research, in alignment with Research Question 6.
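Within a single study, one common way to quantify whether two measures of the same construct move together is a correlation coefficient. The sketch below is illustrative only: the review assessed convergence qualitatively across studies rather than by computing coefficients, the `pearson_r` helper is introduced here for illustration, and both data series are hypothetical values invented for the example.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical per-participant data, invented for illustration only.
subjective_workload = [35, 42, 58, 61, 70, 77]   # e.g. a workload rating
completion_time_s = [48, 55, 63, 71, 80, 92]     # task completion time

r = pearson_r(subjective_workload, completion_time_s)
print(f"r = {r:.2f}")
```

A strong positive coefficient between a subjective rating and a performance measure would count as convergent evidence in the sense described above, although any threshold for "strong" is a choice the analyst must justify.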

Results

This section presents the findings of the systematic review based on 87 studies examining cognitive load measurement methods in software usability testing. Results are organised by the dimensions of objectivity (subjective vs. objective) and causal proximity (direct vs. indirect). Each subsection summarises the application, advantages, and limitations of the respective methods.

Subjective Measures

Subjective methods rely on self-report approaches to collect data directly from users after interacting with an interface. Users can rate various factors such as learning, mental effort, or work experience on a 5-, 7-, or 9-point Likert scale ranging, on a 9-point scale, from 1 (extremely easy) to 9 (extremely difficult). For example, in a software usability test, participants may rate how visually complex or confusing an interface layout appears (a direct measure) or how much mental effort was required to complete a task such as navigating menus or interpreting icons (an indirect measure).

According to a review paper by Van Mierlo et al. (2012), in the context of using cognitive load measurement methods for interface and e-learning system design, subjective techniques assume that users can reliably monitor and report their cognitive processes while working with an interface. These methods are widely used to measure cognitive load as they are easy to conduct, they do not interfere with the primary task and they have proven to be reliable methods to indicate the amount of cognitive load (Ayres, 2006). In order to increase the reliability of self-report methods, using multi-question assessment has been suggested (Gerjets et al., 2009).

Subjective Direct Methods

The main direct method of measuring cognitive load is rating the difficulty of interface layout and functionality, after users work with an interface (DeLeeuw & Mayer, 2008; Gerjets et al., 2009; Kalyuga, Chandler, & Sweller, 1999). This scale is very sensitive in identifying differences in the cognitive load of users; however, users’ knowledge, attention, or task difficulty can affect the results as well (Brunken et al., 2003). Some examples of the Likert-scale questions are: ‘I found the difficulty of the interface layout: Easy/Neutral/Difficult’, ‘I found the difficulty of working with feature x: Easy/Neutral/Difficult’, and ‘I found the navigation through the interface pages: Easy/Neutral/Difficult’. See Table 4 for relevant studies that used usability questionnaires.

Studies that employed usability questionnaires, either alone or in combination with cognitive load questionnaires, identified them as effective tools for assessing interface usability and perceived workload. For instance, Koć-Januchta et al. (2022) combined a usability questionnaire with a cognitive load questionnaire to examine the relationship between interface design and users’ mental effort in an e-learning system, demonstrating consistent associations between usability ratings and perceived cognitive load. Across studies, these self-report instruments were used as applied measures of user experience rather than for formal psychometric validation. The consistent correlation between usability ratings and reported mental effort provides applied evidence of their convergent validity as subjective indicators of cognitive load in usability evaluation.

Subjective Indirect Methods

In the direct subjective method, users report the difficulty of the interface layout and functionality, whereas in the indirect methods, they report the amount of mental effort they devoted to understanding the interface (DeLeeuw & Mayer, 2008; Paas, Renkl, & Sweller, 2003). Several studies have demonstrated that these self-reported mental effort ratings are reliable and valid indicators of cognitive load when appropriately designed and administered, showing consistent correlations with objective performance and physiological measures (Brunken et al., 2003; Paas, Renkl, & Sweller, 2003; Xiao et al., 2005). Most researchers agree that low reported effort corresponds to low cognitive load, whereas higher cognitive load requires users to expend more effort when interacting with the interface (Reed, Burton, & Kelly, 1985). Supporting this assumption, effort and difficulty ratings have been found to correlate significantly (DeLeeuw & Mayer, 2008).

The choice between subjective indirect and direct cognitive load measurement methods depends on the specific research question, and the nature of the cognitive task. Subjective direct cognitive load measurement methods can provide more direct insight into the cognitive load experienced by the individual and may be easier to administer. However, the accuracy of subjective direct methods may be limited by individual differences in the perception of task difficulty and mental effort, as well as by self-report bias, such as participants’ tendencies to underestimate or overestimate their workload due to social desirability, misinterpretation of scale items, or inaccurate introspection (Brunken et al., 2003; Yates, Gough, & Brazil, 2022).

A widely used Likert-scale questionnaire for measuring the mental effort of users after interacting with a software application or website is the NASA Task Load Index (TLX). It is widely adopted because it provides a multidimensional assessment of workload across six factors (mental, physical, and temporal demands, performance, effort, and frustration), offering a more nuanced evaluation than single-item scales. The NASA-TLX has demonstrated strong validity, reliability, and cross-cultural robustness. For example, a large-scale Chinese validation study with 1268 mental workers reported both split-half reliability and Cronbach’s α values exceeding 0.80 (Xiao et al., 2005). This instrument has been applied successfully in diverse human–machine environments involving technologies and equipment such as control rooms and laboratory settings (Albers, 2011).
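For readers unfamiliar with the reliability coefficient mentioned above, the following minimal sketch computes Cronbach’s α from item-level responses using the standard formula α = k/(k − 1) × (1 − Σ item variances / variance of total scores). The function name and the response data are hypothetical and are not taken from Xiao et al. (2005).

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of k item scores."""
    if len(responses) < 2:
        raise ValueError("need at least two respondents")
    k = len(responses[0])
    if k < 2 or any(len(row) != k for row in responses):
        raise ValueError("every respondent needs the same number (>= 2) of items")
    # Sample variance of each item across respondents.
    item_variances = [variance([row[i] for row in responses]) for i in range(k)]
    # Sample variance of each respondent's total score.
    total_variance = variance([sum(row) for row in responses])
    if total_variance == 0:
        raise ValueError("alpha is undefined when all total scores are equal")
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


# Hypothetical ratings: four respondents, three questionnaire items.
responses = [[3, 4, 3], [5, 5, 4], [1, 2, 2], [4, 4, 5]]
alpha = cronbach_alpha(responses)
print(f"alpha = {alpha:.2f}")
```

Values closer to 1 indicate that the items vary together across respondents, which is why multi-item instruments are usually reported alongside this coefficient.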

Similar to other subjective methods of measuring cognitive load, NASA-TLX cannot measure cognitive load while users are working with a website or software. The usage of the NASA-TLX occurs after completing each action and relies on users’ opinion of their previous workload. For example, it can be used to evaluate the cognitive load of users after working with different tools of a software application or different parts of a website. However, since users must rely on their memory and remember the process, some details can be forgotten. Also, the default questions of NASA-TLX should be adapted based on the functionality of the software (Pachunka, 2018; Pachunka et al., 2019).

The NASA-TLX test assesses cognitive load based on six scales consisting of: mental demand, temporal demand, physical demand, performance, effort, and frustration. It asks users the following questions: (1) How much mental effort was required? (Mental Demand); (2) How much time pressure did you feel? (Temporal Demand); (3) How much physical effort was required? (Physical Demand); (4) How hard did you work to finalise the task? (Effort); (5) How successful were you in completing the task? (Performance); (6) How disappointed, bored, or annoyed were you while completing the task? (Frustration Level) (Hart & Staveland, 1988; Pandian & Suleri, 2020; Schmutz et al., 2009).
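The six subscale ratings are typically combined into a single workload score. The sketch below shows two common scoring variants: the unweighted mean (often called Raw TLX) and the weighted score based on 15 pairwise comparisons described by Hart and Staveland (1988). It assumes each subscale is rated from 0 to 100 with higher values meaning higher workload; the function names and the example ratings are hypothetical.

```python
SUBSCALES = ("mental", "physical", "temporal", "performance", "effort", "frustration")


def raw_tlx(ratings):
    """Unweighted mean of the six subscale ratings (each assumed to be 0-100)."""
    for name in SUBSCALES:
        if name not in ratings:
            raise ValueError(f"missing subscale: {name}")
        if not 0 <= ratings[name] <= 100:
            raise ValueError(f"rating out of range for {name}")
    return sum(ratings[name] for name in SUBSCALES) / len(SUBSCALES)


def weighted_tlx(ratings, weights):
    """Weighted score; weights count how often each subscale was chosen
    across the 15 pairwise comparisons, so they must sum to 15."""
    if sum(weights[name] for name in SUBSCALES) != 15:
        raise ValueError("weights must sum to 15")
    return sum(ratings[name] * weights[name] for name in SUBSCALES) / 15


# Hypothetical ratings and weights for one participant after one task.
ratings = {"mental": 70, "physical": 10, "temporal": 40,
           "performance": 30, "effort": 60, "frustration": 30}
weights = {"mental": 5, "physical": 0, "temporal": 2,
           "performance": 3, "effort": 4, "frustration": 1}

print(raw_tlx(ratings))
print(round(weighted_tlx(ratings, weights), 1))
```

The unweighted variant is simpler to administer because it omits the pairwise comparison step; which variant a given study used should be checked in its method section.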

There are many instances of the NASA-TLX being used to evaluate usability and cognitive load. See Table 5 for relevant studies that used the NASA-TLX alone or in conjunction with other measurement methods such as usability questionnaires and interviews.

Across multiple usability studies, NASA-TLX has shown strong convergent validity, with scores consistently aligning with behavioural indicators such as task duration and task completion (e.g., Brendle et al., 2020; Galais, Delmas, & Alonso, 2019). Several investigations also combined NASA-TLX with complementary methods, including usability questionnaires and task-performance metrics, producing coherent interpretations of user workload (e.g., Clarke et al., 2020; Pachunka et al., 2019; Richardson et al., 2019; Rockman et al., 2020). This repeated convergence across independent measures provides applied evidence supporting the construct validity of NASA-TLX as a post-test indicator of perceived workload in usability contexts. Although only a small number of studies reported formal validity coefficients (e.g., Xiao et al., 2005), the recurring agreement between NASA-TLX outcomes and behavioural measures supports its interpretive validity for capturing workload demands during software interaction. This evidence reflects the practical value of NASA-TLX in usability evaluation rather than a formal psychometric validation of the instrument.

Objective Measures

Although subjective measures of cognitive load are widely used, objective methods have their own benefits. Objective methods can be categorised into brain activity measures, dual-task paradigms, performance outcome analysis, behavioural patterns, and physiological measures other than brain activity measures. A major benefit of objective methods such as behavioural patterns and physiological measures is that they can provide a continuous measure of cognitive load that enables researchers to collect and analyse fluctuations in a stream of data over time, in contrast with subjective self-report techniques, which only provide a few data points at the end of the usability test. They also do not rely on participants’ subjective assessment of their own load.

Direct Methods

In this category, brain activity measures and dual-task paradigm methods are the common measurement methods.

Brain Activity Measures

Brain activity measurement methods use neuroimaging techniques to evaluate cognitive load based on continuous brain signal measurements while users are performing a task. Neuroimaging techniques can measure brain activity during cognitive tasks by visualising brain region activation (Anderson et al., 2011; Just et al., 2001). These measurement methods have several benefits, including measuring the load continuously with high sensitivity and helping researchers to distinguish stress from mental workload, as both can have the same effect on learning performance. However, these measurement methods are quite intrusive for users, require a time-consuming setup, involve complex data analysis (Baldwin & Cisler, 2017), and are not suitable for routine applications. Different techniques can be used for measuring brain activity and cognitive load, including electroencephalography (EEG), functional near infrared spectroscopy (fNIRS), functional magnetic resonance imaging (fMRI), and magnetoencephalography (MEG). Since EEG and fNIRS have simpler installation processes and more portable equipment than fMRI and MEG, they are the more commonly used methods.

EEG

One of the most popular brain activity measurement methods is EEG, which captures continuous brain activity including alpha, beta, and theta waves. EEG data change with cognitive stimuli and working memory load (Anderson et al., 2011). For example, when task difficulty is increased, alpha and theta bands show more activity (Gevins & Smith, 2003). EEG is performed by placing electrodes on the scalp that measure voltage fluctuations related to ionic current within the brain neurons (Schomer & Da Silva, 2012). These sensors are connected via flexible cables to a portable base, which allows researchers to measure cognitive load while participants perform a task in either a standing or walking position.
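To make the notion of band activity concrete, the sketch below estimates theta, alpha, and beta power for a single-channel signal with a plain discrete Fourier transform. It is a simplified illustration only: real EEG pipelines add filtering, artefact rejection, and windowing, and the band boundaries used here (4–8, 8–13, and 13–30 Hz) are conventional approximations that vary between studies. The function name and the synthetic signal are hypothetical.

```python
import cmath
import math

# Assumed band boundaries in Hz; definitions differ slightly across studies.
BANDS_HZ = {"theta": (4.0, 8.0), "alpha": (8.0, 13.0), "beta": (13.0, 30.0)}


def band_powers(samples, sampling_rate_hz):
    """Sum the squared DFT magnitudes falling inside each frequency band."""
    n = len(samples)
    mean = sum(samples) / n
    centred = [s - mean for s in samples]  # remove the DC offset
    powers = {name: 0.0 for name in BANDS_HZ}
    for k in range(1, n // 2 + 1):
        freq = k * sampling_rate_hz / n
        band = next((name for name, (lo, hi) in BANDS_HZ.items()
                     if lo <= freq < hi), None)
        if band is None:
            continue
        coeff = sum(x * cmath.exp(-2j * math.pi * k * t / n)
                    for t, x in enumerate(centred))
        powers[band] += abs(coeff) ** 2 / n ** 2
    return powers


# Synthetic 2-second signal: a pure 10 Hz sine sampled at 128 Hz.
rate_hz = 128
signal = [math.sin(2 * math.pi * 10 * t / rate_hz) for t in range(2 * rate_hz)]
powers = band_powers(signal, rate_hz)
print(max(powers, key=powers.get))
```

In a usability study, such band powers would be compared between task conditions or interface versions rather than interpreted in isolation.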

There are many instances of EEG devices being used to evaluate usability and cognitive load. See Table 6 for relevant studies that used EEG alone or in conjunction with the other measurement methods.

Studies that employed EEG to assess cognitive load in usability contexts consistently demonstrated its effectiveness as a direct and sensitive indicator of mental workload. Across the reviewed literature, EEG-based measures were frequently used alongside complementary methods such as NASA-TLX, usability questionnaires, and task-performance outcomes (e.g., Baig & Kavakli, 2018; Cano et al., 2021; Cheng & Wei, 2018; Plazak et al., 2019). Findings showed that variations in EEG frequency bands, particularly changes in alpha, beta, and theta activity, systematically corresponded with differences in task difficulty, interface complexity, and user performance. This alignment between neural indicators and subjective, behavioural, and performance-based measures provides applied evidence of the convergent validity of EEG for evaluating cognitive load in usability testing. Although the reviewed studies primarily applied EEG as an evaluative tool rather than conducting formal psychometric validation, the repeated convergence of EEG outcomes with established cognitive load measures supports its construct validity as a direct physiological method for assessing cognitive demand during interaction with software interfaces.

Functional Near-Infrared Spectroscopy (fNIRS)

fNIRS is a portable brain monitoring technology that records changes in cerebral blood flow. It records brain activity using near-infrared spectroscopy through optical sensors placed on the scalp. Similar to EEG, these sensors are connected via flexible cables to a portable base, which allows researchers to measure cognitive load while participants perform a task in either a standing or walking position (Ferrari & Quaresima, 2012). See Table 7 for relevant studies that used fNIRS alone or in conjunction with other measurement methods.

Studies that employed fNIRS to measure cognitive load consistently identified it as an effective method for assessing workload and detecting usability-related challenges. All reviewed studies used fNIRS independently to evaluate interface-related cognitive load rather than to establish formal psychometric validity. Across diverse applications, including virtual-reality learning environments, touchscreen systems, and gaming interfaces, fNIRS reliably detected variations in neural activation that corresponded to differences in task difficulty and user performance (Hirshfield et al., 2011; Karim et al., 2012; Kitabata et al., 2017; Lamb et al., 2018). The alignment between fNIRS activation patterns and behavioural indicators provides applied evidence of convergent validity, suggesting that fNIRS functions as a sensitive physiological indicator of cognitive load in usability evaluation, even though existing studies were designed primarily for performance assessment rather than psychometric validation.

MEG and fMRI

Several studies have indicated that both these technologies can be used effectively to measure cognitive load. However, as far as we are aware, no studies have used them to evaluate interface usability, and their bulky equipment would probably prevent such use, so these techniques are not discussed further.

Dual-Task Paradigm Methods

The dual-task paradigm is a direct, objective method for measuring cognitive load, grounded in the assumption that individuals possess a limited pool of cognitive resources that must be distributed across concurrent tasks (Padilla et al., 2019). The dual-task method can be used with two different approaches. The first approach is adding a secondary task to a primary task to induce a memory load. In this approach the focus is on the primary task, and it is expected that performance in the primary task will be decreased with a dual-task condition in comparison with a single-task condition (Park & Brünken, 2015). For example, the primary task can be opening different pages of a website as fast as possible, and the secondary task can be clicking on a red spot on the screen. Increasing the time duration of opening website pages can be an indicator of increasing cognitive load.

The second approach uses a secondary task to determine the cognitive load imposed by the primary task. Thus, the focus is on the secondary task and based on the amount of load that the primary task imposes, performance of the secondary task will be changed. For example, the primary task can be working with a specific part of an interface and the secondary task can be clicking on a red spot on the screen. Decreasing the number of clicks can be an indicator of increasing cognitive load (Haji et al., 2015; Martin, 2014; Park & Brünken, 2015; Schoor, Bannert, & Brünken, 2012).

Although some scholars argue that dual-task paradigms measure spare attentional capacity rather than cognitive load itself (Park & Brünken, 2015), the two constructs are closely related: as cognitive load increases, the amount of available attentional capacity decreases. Hence, secondary-task performance provides an immediate behavioural indicator of how cognitive resources are allocated during task execution. While the dual-task paradigm may be viewed as indirectly reflecting cognitive load, in this review it is classified as a direct method because it captures real-time performance outcomes that arise from immediate cognitive processing demands.

For usability testing with the dual-task paradigm, the primary task is commonly working or learning with the interface, and the secondary task is a visual observation task, such as remembering a letter or a word, or pressing a key or clicking a button in response to a colour change in a small window or the appearance of a letter or a shape on the screen (DeLeeuw & Mayer, 2008; Lai & McMahan, 2020; Schmutz et al., 2009; Van Nuland, 2017).

One of the most commonly used dual-task methods for measuring users’ cognitive load while interacting with an interface is the Detection Response Task (DRT), in which a secondary manual or tactile response, such as a tapping action, is used to detect changes in attention and cognitive demand (Albers, 2011; Innes et al., 2021; Stojmenova & Sodnik, 2018). In the tapping action method, users are asked to tap (one tap per second) with either their hand or foot while working with a website, and the evaluator counts the taps by recording a video, recording the tapping sound, or attaching hardware to the users’ hand or foot to count the taps automatically (Olive, 2004). Tapping is a secondary task to the main task of working with the website and puts an extra load on users. Depending on the difficulty of different parts of the website, the number of taps can increase or decrease, which can indicate decreasing or increasing cognitive load, respectively.

For example, to evaluate the cognitive load of users while working with a website, we can use the tapping method by asking users to use one of their hands to control the mouse and the other hand to tap on the desk. If users face any usability issue such as an inability to find the desired feature, it is expected that the number of taps will be decreased as they should expend more mental effort to work with the website. After finding the desired feature, the number of taps should return to the normal baseline rate (Miyake, Onishi, & Poppel, 2004).
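The tapping example above can be sketched as a simple windowed analysis: tap timestamps are binned into fixed windows, and windows in which the tapping rate falls well below the baseline are flagged as candidate moments of elevated load. The window length, the 70% threshold, and all names are illustrative assumptions rather than values taken from the cited studies.

```python
def taps_per_second(tap_times_s, session_length_s, window_s=5.0):
    """Tapping rate (taps per second) in consecutive fixed-length windows."""
    n_windows = int(session_length_s // window_s)
    counts = [0] * n_windows
    for t in tap_times_s:
        index = int(t // window_s)
        if 0 <= index < n_windows:
            counts[index] += 1
    return [count / window_s for count in counts]


def flag_low_rate_windows(rates, baseline_rate, threshold=0.7):
    """Indices of windows whose rate is below threshold * baseline."""
    return [i for i, rate in enumerate(rates) if rate < threshold * baseline_rate]


# Hypothetical 20-second session: steady tapping at one tap per second,
# with a slowdown between 10 s and 15 s (e.g. while searching for a feature).
tap_times = ([0.5 + i for i in range(10)]
             + [10.5, 13.0]
             + [15.5 + i for i in range(5)])

rates = taps_per_second(tap_times, session_length_s=20.0)
flagged = flag_low_rate_windows(rates, baseline_rate=1.0)
print(rates, flagged)
```

Flagged windows can then be matched against screen recordings to identify which interface element the user was working with at that moment.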

Since dual-task paradigm methods such as the tapping method measure cognitive load continuously, they can reveal usability issues that cannot be detected with other methods, such as difficulty scale questionnaires, which only measure cognitive load at the conclusion of the task. They can even help to find minor usability issues that may not significantly overload users but whose discovery can help to optimise the design (Albers, 2011). The main drawback of dual-task methods is that the secondary task may influence participants’ performance on the primary task (Van Mierlo et al., 2012), and so some researchers prefer not to use them. See Table 8 for relevant studies that used the dual-task paradigm alone or in conjunction with other measurement methods.

Most of the studies summarised in Table 8 indicated that the dual-task paradigm is an effective method for measuring cognitive load in usability contexts. Several investigations combined the dual-task paradigm with subjective measures such as NASA-TLX (e.g., Albers, 2011; Giraud, Thérouanne & Steiner, 2018; Na, 2021; Schmutz et al., 2009; Shelton et al., 2021; Wenk et al., 2023), while others integrated behavioural indicators such as task completion time and error rates (e.g., King, 2019; Van Nuland, 2017). Across these studies, convergence between subjective (NASA-TLX) and behavioural (dual-task and performance outcomes) measures was consistently observed, providing applied evidence of the convergent validity of the dual-task paradigm for assessing usability-related cognitive load. Two studies (Schmutz et al., 2009; Van Nuland, 2017) did not report significant differences in cognitive load, suggesting that the sensitivity of the dual-task approach may vary depending on task characteristics and experimental design.

Indirect Methods

In this category, performance measures, behavioural measures, and physiological measures are the common measurement methods.

Performance Measures

One of the most common methods of measuring cognitive load is analysing performance outcomes by calculating correct answers, error rates, and the time taken to perform a task. This method is indirect because it depends on mental processing speed and retrieval, which can be affected by cognitive load. To compare the usability of two versions of an interface with this method, the same tasks are assigned to users of each interface, and a performance score is calculated from the number of correct answers, the number of mistakes, or the time taken. Since the tasks are the same, differences in performance outcomes can be expected to reflect the mental load induced by the interface design (Antonenko & Niederhauser, 2010; Brunken et al., 2003; DeLeeuw & Mayer, 2008; Mayer, 2001; Paas, Ayres, & Pachman, 2008; Van Mierlo et al., 2012). For example, to evaluate the usability of the chart-drawing feature of Microsoft Excel compared with the same feature in Apple Numbers, the task completion rate and error rate of both features can be measured and compared.
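The comparison described above can be sketched as a per-interface summary of trial logs. The record layout, the interface labels, and the values below are hypothetical; a real evaluation would also apply an appropriate statistical test before drawing conclusions.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Trial:
    interface: str    # which interface version the participant used
    completed: bool   # whether the task was finished successfully
    errors: int       # number of mistakes made during the task
    seconds: float    # time taken on the task


def summarise(trials):
    """Completion rate, mean errors, and mean task time for each interface."""
    summary = {}
    for name in sorted({t.interface for t in trials}):
        subset = [t for t in trials if t.interface == name]
        summary[name] = {
            "completion_rate": sum(t.completed for t in subset) / len(subset),
            "mean_errors": mean(t.errors for t in subset),
            "mean_seconds": mean(t.seconds for t in subset),
        }
    return summary


# Hypothetical trials: the same task performed on two interface versions.
trials = [
    Trial("A", True, 0, 40.0), Trial("A", True, 1, 50.0),
    Trial("A", False, 3, 90.0), Trial("A", True, 0, 60.0),
    Trial("B", True, 0, 30.0), Trial("B", True, 0, 35.0),
    Trial("B", True, 1, 40.0), Trial("B", True, 1, 35.0),
]

summary = summarise(trials)
for name, stats in summary.items():
    print(name, stats)
```

In this invented data set, version B shows a higher completion rate, fewer errors, and shorter times, which under the reasoning above would be read as lower induced load.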

A key advantage of performance measures is their objectivity and ease of collection, as they do not rely on users’ self-assessment or specialised equipment (DeLeeuw & Mayer, 2008). They are particularly useful for detecting observable consequences of excessive cognitive load, such as slower task completion or increased errors (Antonenko & Niederhauser, 2010; Van Mierlo et al., 2012). However, their major limitation is sensitivity to confounding factors: performance can be affected by factors unrelated to cognitive load, such as prior experience, motivation, fatigue, or task familiarity, making it difficult to isolate cognitive load as the sole cause of performance variation (Paas, Ayres, & Pachman, 2008; Zheng, 2017). Moreover, very high or very low performance may reflect underload (task too easy) or overload (task too demanding), meaning that sound interpretations often require triangulation with subjective or physiological measures (Antonenko & Niederhauser, 2010; Brunken et al., 2003).

Time-on-task is another performance measurement factor that can be calculated based on the amount of time that users spend completing different tasks while working with different elements of an interface (Brunken et al., 2003; Cranford et al., 2014). Extended time spent on a specific part of an interface may indicate suboptimal cognitive load, which can reflect either overload, when users struggle with excessive task demands, or underload, when tasks fail to maintain engagement and attention (Antonenko & Niederhauser, 2010; Brunken et al., 2003; Khawaja, Ruis, & Chen, 2007). For example, in the context of multimedia systems, navigation speed and errors that increase the time duration of the target task completion can be considered to be a result of cognitive load (Astleitner & Leutner, 1996; Yin et al., 2008). See Table 9 for relevant studies that used the performance measurement method alone or in conjunction with other measurement methods.

Overall, the findings indicate that lower error rates, higher test-performance scores, and shorter task durations function as practical indicators of reduced cognitive load and greater usability. In several studies, performance measures were used alongside self-reported tools such as NASA-TLX or usability questionnaires (e.g., Da Costa et al., 2013; Darejeh et al., 2024a, 2024b; Holden et al., 2020; Huang, Eades & Hong, 2009), and the results across these approaches consistently revealed similar cognitive load trends. This convergence between performance-based and subjective indicators provides applied evidence of the convergent validity of performance measures for evaluating cognitive load in usability contexts, even though most investigations aimed to assess interface performance rather than to conduct formal psychometric validation.

Behavioural Measures

Behavioural measurement methods evaluate cognitive load based on users’ behaviour while they are performing a task with a user interface. The most common behavioural measures that are used for usability purposes are evaluating mouse dynamics and linguistic features.

Mouse Dynamics

Mouse dynamics are a behavioural measure of cognitive load grounded in the human motor system. They rely on mouse movement attributes including speed, direction, action, distance, and time (Ahmed & Traore, 2007). From these attributes, higher-level features such as acceleration, angular velocity, scroll wheel activity, clicks/double clicks, drag-and-drop operations, point-and-click operations, and silence can be derived (Ahmed & Traore, 2007; Gamboa & Fred, 2004; Hocquet et al., 2004; Jorgensen & Yu, 2011; Pusara & Brodley, 2004). These features are aggregated and measured in distinct time periods for analysis (Grimes & Valacich, 2015). Applications such as Mouse Tracker and Mousotron can be used to record mouse movement data.
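As a rough illustration of how such features can be derived from raw cursor logs, the sketch below computes distance, speed, acceleration, and silence from timestamped positions. The sample format, the feature names, and the definition of silence as time with no cursor displacement are assumptions made for this example, not the definitions used by the cited tools or studies.

```python
from math import hypot


def mouse_features(samples):
    """Basic movement features from time-ordered (time_s, x_px, y_px) samples."""
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    distance = 0.0
    silence = 0.0
    speeds = []
    midpoints = []
    for (t0, x0, y0), (t1, x1, y1) in zip(samples, samples[1:]):
        dt = t1 - t0
        if dt <= 0:
            raise ValueError("timestamps must be strictly increasing")
        step = hypot(x1 - x0, y1 - y0)
        distance += step
        if step == 0:
            silence += dt  # cursor did not move during this interval
        speeds.append(step / dt)
        midpoints.append((t0 + t1) / 2)
    # Acceleration as the change in speed between consecutive intervals.
    accelerations = [
        (v1 - v0) / (m1 - m0)
        for v0, v1, m0, m1 in zip(speeds, speeds[1:], midpoints, midpoints[1:])
    ]
    duration = samples[-1][0] - samples[0][0]
    mean_abs_accel = (sum(abs(a) for a in accelerations) / len(accelerations)
                      if accelerations else 0.0)
    return {
        "distance_px": distance,
        "mean_speed_px_s": distance / duration,
        "peak_speed_px_s": max(speeds),
        "mean_abs_accel_px_s2": mean_abs_accel,
        "silence_s": silence,
    }


# Hypothetical cursor log: move, pause for one second, then move faster.
samples = [(0.0, 0, 0), (1.0, 3, 4), (2.0, 3, 4), (3.0, 9, 12)]
features = mouse_features(samples)
print(features)
```

Features of this kind are usually computed per time window or per task so that they can be compared across conditions.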

Several studies showed that mouse dynamic attributes decrease with increasing cognitive load, since users have fewer resources available to perform the tasks as load increases and so have fewer resources left to move the mouse (Grimes & Valacich, 2015; Khawaji et al., 2014; Rheem, Verma, & Becker, 2018). In particular, if the mouse movements are redundant to the task at hand, movement decreases as cognitive load increases. However, if the mouse movement is used to complete the main tasks, the movements may increase as task complexity increases, because participants require more effort to find the solution and complete the task, and the mouse movements may support this goal. Whether mouse movement increases or decreases as cognitive load changes therefore depends on the purpose of the movements in the task at hand. See Table 10 for relevant studies that used mouse dynamics in conjunction with other measurement methods.

Mouse-dynamic measures have been recognised as an effective method for assessing cognitive load and identifying usability-related difficulties. Across the reviewed studies, mouse-movement indicators were analysed alongside complementary measures such as task-performance duration, test-performance scores, usability questionnaires, and mental effort ratings (e.g., Darejeh, Marcus & Sweller, 2021, 2022; Fuller et al., 2020; Grimes & Valacich, 2015; Hibbeln et al., 2014; Khawaji et al., 2014; Kortum & Acemyan, 2016). Findings consistently showed that mouse-movement characteristics—such as speed, distance, and acceleration—varied systematically with task difficulty and aligned with subjective and performance-based indicators of cognitive load. Although mouse-dynamics were primarily applied to evaluate interface usability rather than to establish psychometric validity, the convergence observed across multiple measurement methods provides applied evidence of the convergent validity of mouse-dynamic measures for detecting cognitive load variations during interactive tasks.

Linguistic Features

One of the indirect behavioural methods of measuring cognitive load that can be used in evaluating usability is analysing the complexity of users’ spoken language, including sentence length, number of words, punctuation, syllables, use of pauses, repeated words, corrections, complexity, and the comprehensibility of the words. Linguistic complexity is measured by two major factors, syntactic complexity and semantic difficulty. Syntactic complexity considers sentence length, which is the best indicator of language complexity. Semantic difficulty analyses the use of words, their lengths (syllables and letters), and their structure (Lennon & Burdick, 2004).
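A few of these surface features can be extracted from a transcript with very simple text processing, as in the sketch below. It is a deliberately crude illustration: sentence splitting on punctuation, word length in letters as a stand-in for semantic difficulty, and immediate word repetitions as a stand-in for disfluency are all simplifying assumptions, and the function name and transcript are hypothetical.

```python
import re


def linguistic_features(transcript):
    """Simple surface features of a transcribed utterance."""
    sentences = [s for s in re.split(r"[.!?]+", transcript) if s.strip()]
    words = [w.lower()
             for sentence in sentences
             for w in re.findall(r"[A-Za-z']+", sentence)]
    if not words:
        raise ValueError("transcript contains no words")
    # Count a word immediately followed by the same word (e.g. "I I").
    repetitions = sum(1 for a, b in zip(words, words[1:]) if a == b)
    return {
        "sentences": len(sentences),
        "words": len(words),
        "mean_sentence_length": len(words) / len(sentences),
        "mean_word_length": sum(len(w) for w in words) / len(words),
        "immediate_repetitions": repetitions,
    }


# Hypothetical think-aloud transcript from a usability session.
transcript = "I I clicked the menu. Where is the save button? It is hidden."
features = linguistic_features(transcript)
print(features)
```

Prosodic indicators such as pitch or speech rate require the audio signal and are outside the scope of this text-only sketch.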

Different studies showed that linguistic patterns differ with the complexity of the task and the associated cognitive load. Some have indicated that in high-load situations, participants’ speech rate, amplitude, speech energy, and variability increase (Brenner et al., 1985; Lively et al., 1993). Others have reported that peak intonation and pitch range patterns (Kettebekov, 2004; Lively et al., 1993) and pitch variability (Wood et al., 2004) are related to high cognitive load. Also, as cognitive load increases, participants may use significantly longer sentences with more complex structures that can make comprehension more difficult (Khawaja, Chen & Marcus, 2014). Khawaja, Chen and Marcus (2012) also found that as task complexity increased, teams collaborated more and their language patterns changed, as reflected by an increase in speech, use of longer sentences, more disagreement, and more frequent use of plural pronouns such as “we” and “they.”

Across these studies, the recording and analysis of language varied: some examined spoken utterances captured through audio recordings (Khawaja, Chen & Marcus, 2014; Oviatt, 2006), whereas others analysed team dialogues or typed linguistic inputs within interactive or simulation-based environments (Khawaja, Chen & Marcus, 2012; Tuček, Mount & Abbass, 2012). These methodological differences partly explain the variation in reported linguistic indicators of cognitive load. Since linguistic methods can measure the load in real time, they can be an efficient way to find different usability issues, especially if software users need to communicate and speak with each other. See Table 11 for relevant studies that used linguistic features alone or in conjunction with other measurement methods.

The reviewed studies indicate that linguistic features can serve as effective indicators of cognitive load and usability. Linguistic analysis was used either independently or in conjunction with subjective rating questionnaires or performance measures (e.g., Khawaja, Chen & Marcus, 2012, 2014; Oviatt, 2006; Tuček, Mount & Abbass, 2012). Findings consistently showed that increases in task difficulty were associated with reduced speech intensity, higher pitch, and simpler sentence structures, reflecting the diversion of working-memory resources toward task processing. In collaborative contexts, greater cognitive load corresponded with more tightly coupled speech patterns and shared linguistic structures among participants. Although these studies primarily applied linguistic analysis to evaluate user performance rather than to establish formal psychometric validity, the consistent convergence with subjective and other behavioural indicators provides applied evidence of the convergent validity of linguistic features as markers of cognitive load in usability evaluation.

Eye Movements

Eye movements can be measured with cameras and eye-tracking devices without attaching anything to users, which avoids discomfort that could decrease the reliability of the results. Different studies have demonstrated the effectiveness of measuring eye movements in usability testing and in designing adaptive e-learning systems (El Haddioui, 2019). Some researchers found that when self-report questionnaires cannot show an accurate result in the usability evaluation, eye tracking methods can be used as an alternative and reliable solution (Schiessl et al., 2003; Wang et al., 2020).

Compared to EEG or fNIRS, which capture direct neural activity associated with cognitive processing, eye-movement measures provide an indirect but highly interpretable reflection of visual attention and information-processing strategies. While EEG and fNIRS offer higher temporal precision and sensitivity to instantaneous cognitive fluctuations, eye-tracking methods are less intrusive, easier to implement in usability studies, and can reveal how users allocate attention across interface elements in real time. Accordingly, eye-movement data can be used in conjunction with EEG to triangulate cognitive load findings and enhance interpretive validity (Beatty, 1982; Baldwin & Cisler, 2017; Hess & Polt, 1964).

Eye movement measures can be categorised as behavioural (voluntary), including fixations and saccades, or physiological (involuntary), including pupil dilation and blink rate; the latter are discussed in the physiological measures section (Chen et al., 2016; Pfleging et al., 2016; Rudmann, McConkie, & Zheng, 2003).

A fixation is a behavioural (voluntary) eye movement measure that refers to the eyes remaining still for a period of time, from 200 milliseconds to several seconds, while focussed on an area of interest (AOI). In this measurement, the interface element that is relevant for the current cognitive activity is identified based on the gaze direction (Rudmann, McConkie, & Zheng, 2003). A longer fixation duration on an interface element can indicate that users needed to devote more attention because the interface was difficult to understand. It can therefore be an indication of increased processing in working memory and high cognitive load (Chen et al., 2011). See Table 12 for relevant studies that used the fixations measurement method alone or in conjunction with other measurement methods.
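
As an illustration of how fixations might be extracted from raw gaze samples, the following minimal sketch uses a dispersion-threshold approach, which is one common technique and not necessarily the one used in the reviewed studies. The 200 ms minimum duration follows the definition above; the 35-pixel dispersion limit, the `(t_ms, x, y)` sample format, and all function names are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Fixation:
    start_ms: float
    end_ms: float
    x: float  # centroid of the gaze samples, in pixels
    y: float

    @property
    def duration_ms(self) -> float:
        return self.end_ms - self.start_ms


def _dispersion(window):
    """Spread of a gaze window: (max x - min x) + (max y - min y)."""
    xs = [p[1] for p in window]
    ys = [p[2] for p in window]
    return (max(xs) - min(xs)) + (max(ys) - min(ys))


def detect_fixations(samples, max_dispersion_px=35.0, min_duration_ms=200.0):
    """Group time-sorted (t_ms, x, y) gaze samples into fixations.

    Both thresholds are illustrative assumptions and would need tuning
    to the eye tracker, screen size, and viewing distance.
    """
    fixations = []
    i, n = 0, len(samples)
    while i < n:
        # Find the first sample at least min_duration_ms after sample i.
        j = i
        while j < n and samples[j][0] - samples[i][0] < min_duration_ms:
            j += 1
        if j >= n:
            break  # not enough remaining data for another fixation
        if _dispersion(samples[i:j + 1]) <= max_dispersion_px:
            # Extend the window while gaze stays within the dispersion limit.
            while j + 1 < n and _dispersion(samples[i:j + 2]) <= max_dispersion_px:
                j += 1
            window = samples[i:j + 1]
            cx = sum(p[1] for p in window) / len(window)
            cy = sum(p[2] for p in window) / len(window)
            fixations.append(Fixation(window[0][0], window[-1][0], cx, cy))
            i = j + 1
        else:
            i += 1  # gaze is moving; slide the window start forward
    return fixations


def dwell_time_ms(fixations, aoi):
    """Total fixation time inside an AOI given as (left, top, right, bottom)."""
    left, top, right, bottom = aoi
    return sum(
        f.duration_ms
        for f in fixations
        if left <= f.x <= right and top <= f.y <= bottom
    )
```

Comparing `dwell_time_ms` for the same AOI across two interface variants would give one simple way to operationalise the fixation-duration indicator described above.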

The reviewed studies indicate that fixation-based eye-tracking measures are effective indicators of cognitive load and usability. Across investigations, fixation metrics were frequently analysed alongside other measures such as usability questionnaires (Huang & Zhang, 2022; Zardari, Hussain & Arain, 2021), task-performance duration (Calvo et al., 2022; Chynał et al., 2012; Wang et al., 2014; Zardari, Hussain & Arain, 2021), test-performance scores (Calvo et al., 2022; Guerberof, Moorkens & Brien, 2021; Zardari, Hussain & Arain, 2021), EEG (Cheng & Wei, 2018), and EDA (Bruneau et al., 2002). Findings consistently showed that fixation duration and frequency increased with higher task complexity and reduced usability, mirroring patterns observed in error rates and task-completion times. This alignment between visual-attention metrics and subjective, behavioural, and physiological indicators provides applied evidence of the convergent validity of fixation-based measures for evaluating cognitive load. Although most studies focused on applied usability assessment rather than psychometric validation, the consistent fixation-based findings across diverse interfaces underscore their robustness as indicators of attentional demand in usability contexts.
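
One simple way to quantify the kind of convergence described above is a correlation between a fixation metric and another cognitive load indicator across participants. The sketch below computes a Pearson correlation coefficient; it is an illustrative assumption about how such agreement could be checked, not the analysis reported by the reviewed studies, and the function name is hypothetical.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation between two equally long lists of numbers.

    For example, xs could hold each participant's mean fixation duration
    and ys the same participants' subjective workload ratings.
    """
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two lists of equal length with at least 2 values")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / (spread_x * spread_y)
```

A coefficient close to +1 would indicate that participants with longer fixations also reported higher workload, which is the pattern that convergent validity implies; a formal validation would additionally require significance testing and an adequate sample size.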

Another behavioural (voluntary) eye movement measure is the saccade, a rapid eye shift between two locations (from one fixation to another) that reflects users’ visual search behaviour and attentional shifts. The most common measurement method for saccades is observing the patterns and velocity of scan paths, defined as the number and duration of gaze fixations across different AOIs. In usability contexts, increasing saccade velocity or frequency indicates higher cognitive load because users must exert greater mental effort to locate relevant interface elements, suggesting inefficiencies in visual layout or information organisation. When cognitive processing is smooth and the interface supports user expectations, saccades tend to be shorter and more targeted; when users struggle to find information, saccades become faster, more frequent, and less predictable. Conversely, a decrease in saccade velocity can be an indication of tiredness or reduced attentional engagement (Barrios et al., 2004; Chen et al., 2011). See Table 13 for relevant studies that used the saccades measurement method alone or in conjunction with other measurement methods.
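
The saccade velocity and frequency indicators described above could be derived from raw gaze samples roughly as follows. This is a minimal sketch using a velocity-threshold approach, which is one common technique and is offered here as an assumption rather than the method of the reviewed studies. The threshold of 1000 pixels per second, the `(t_ms, x, y)` sample format, and the function names are hypothetical; real studies usually express velocity in degrees of visual angle per second.

```python
import math


def detect_saccades(samples, velocity_threshold=1000.0):
    """Find saccades in time-sorted (t_ms, x, y) gaze samples.

    A saccade is a run of consecutive sample-to-sample movements whose
    velocity (pixels per second) is at or above the threshold.
    """
    saccades = []
    current = None
    for (t0, x0, y0), (t1, x1, y1) in zip(samples, samples[1:]):
        dt_s = (t1 - t0) / 1000.0
        if dt_s <= 0:
            continue  # skip duplicated or out-of-order timestamps
        velocity = math.hypot(x1 - x0, y1 - y0) / dt_s
        if velocity >= velocity_threshold:
            if current is None:
                current = {"start_ms": t0, "start_xy": (x0, y0),
                           "peak_velocity": 0.0}
            current["end_ms"] = t1
            current["end_xy"] = (x1, y1)
            current["peak_velocity"] = max(current["peak_velocity"], velocity)
        elif current is not None:
            saccades.append(current)
            current = None
    if current is not None:
        saccades.append(current)  # recording ended mid-saccade
    for s in saccades:
        # Straight-line distance between where the saccade began and ended.
        s["amplitude_px"] = math.hypot(s["end_xy"][0] - s["start_xy"][0],
                                       s["end_xy"][1] - s["start_xy"][1])
    return saccades


def saccade_rate_per_s(saccades, task_duration_s):
    """Saccade frequency over the task, in saccades per second."""
    return len(saccades) / task_duration_s
```

Peak velocity, amplitude, and rate can then be compared across interface variants or task difficulty levels, keeping in mind that layout and task type also shape saccades independently of cognitive load.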

In the reviewed studies, saccade-based eye-movement measures were recognised as effective indicators of cognitive load and usability. These measures were frequently examined alongside other indicators such as fixation metrics, task-performance duration, error rates, and EEG (Ehmke & Wilson, 2007; Keskin et al., 2020; Renshaw et al., 2003). Across these investigations, increased task complexity and reduced usability were associated with shorter, more frequent saccades and higher fixation counts, reflecting greater visual-search effort. The convergence between saccadic behaviour, performance outcomes, and physiological responses provides applied evidence of the convergent validity of saccade-based measures for detecting cognitive load variations, even though these studies primarily focused on interface evaluation rather than formal psychometric validation.

Physiological Measures

Physiological measures are a group of cognitive load measurement methods based on brain activity and the body’s physiological reactions. As mental processing increases, cortical activity triggers a small nervous response within the body that can affect pupil dilation, blink rate, heart rate, blood pressure, facial muscles, and electrodermal activity (Zagermann, Pfeil & Reiterer, 2016).

Pupil Dilation and Blink Rate

Within the category of physiological cognitive load measures, pupil dilation and blink rate are among the most reliable and widely used indicators, as they can be measured with cameras and eye-tracking devices without attaching anything to users, which avoids the discomfort that can reduce the reliability of the results.

As explained in the previous section, eye movement measures can be categorised into behavioural (voluntary) including fixations and saccades and physiological (involuntary) including pupil dilation and blink rate (Chen et al., 2016; Pfleging et al., 2016; Rudmann, McConkie, & Zheng, 2003). In the next sections the physiological measures of eye movement are discussed.

Pupil dilation is a physiological (involuntary) eye movement measure that is affected by cognitive processes. As cognitive load increases, users’ pupils dilate; when the difficulty of the task decreases, or towards the end of a task, pupil diameter decreases (Chen et al., 2011; Klingner, Kumar, & Hanrahan, 2008; Porta, Ricotti, & Perez, 2012; Rafiqi et al., 2015; Rudmann, McConkie, & Zheng, 2003; Zagermann, Pfeil, & Reiterer, 2016). However, in addition to cognitive processes, pupil diameter also changes with the brightness of the environment: it increases as the environment becomes darker, to admit more light, and decreases as the environment becomes brighter. Therefore, the brightness of both the environment and the display must be controlled, as both can affect pupil size and the reliability of the usability test results. To obtain more accurate results when testing a user interface based on pupil size, the calibration value for the display brightness should be subtracted from the measured pupil size (Liu, Zheng, & Zhou, 2019). See Table 14 for relevant studies that used pupil dilation measurement methods.
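
The brightness correction described above amounts to a simple subtraction. The sketch below assumes that the calibration value is the mean pupil diameter recorded while the participant views the display at the test brightness without performing a task; this interpretation, the millimetre unit, and the function names are assumptions made for illustration.

```python
from statistics import mean


def corrected_pupil_sizes(task_diameters_mm, calibration_diameters_mm):
    """Subtract the brightness calibration value from each task measurement.

    The calibration value is taken here as the mean diameter recorded at
    the display brightness used in the test (an assumption of this sketch).
    """
    calibration_value = mean(calibration_diameters_mm)
    return [d - calibration_value for d in task_diameters_mm]


def mean_pupil_change_mm(task_diameters_mm, calibration_diameters_mm):
    """Average task-related change in pupil diameter after correction."""
    return mean(corrected_pupil_sizes(task_diameters_mm, calibration_diameters_mm))
```

Positive corrected values would then indicate dilation beyond what the display brightness alone produces. If display brightness varies during the task, a single calibration value is no longer sufficient and a brightness-dependent correction would be needed.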

The reviewed studies demonstrate that pupil-dilation measures are effective indicators of cognitive load and usability. Pupil metrics were frequently analysed alongside other measures such as fixation behaviour, task-performance outcomes, usability questionnaires, and NASA-TLX ratings (Dobhan, Wüllerich & Röhner, 2022; Jiang et al., 2018; Majooni, Akhavan & Offenhuber, 2018). Across these investigations, greater task difficulty and reduced interface usability were consistently associated with increased pupil dilation, longer fixation durations, and higher self-reported workload scores. This alignment between physiological, behavioural, and subjective indicators provides applied evidence of the convergent validity of pupil-dilation measures for assessing cognitive load in usability evaluation, even though the reviewed studies focused primarily on applied interface assessment rather than formal psychometric validation.

Another physiological (involuntary) eye movement measure is the rate and latency of blinking, which reflect users’ attention. A high blink latency and a low blink rate indicate a high mental load (Chen et al., 2011). When users must work out how to use an interface element that is not easily comprehensible and need to devote more attention to a task, their blink rate decreases. Therefore, a reduced blink rate is an indication of increasing cognitive load in human-computer interaction (Joseph & Murugesh, 2020). See Table 15 for relevant studies that used the blink rate.
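
As a rough illustration, blink rate can be computed from the blink onset times reported by an eye tracker. The sketch below also returns the mean interval between consecutive blinks as a stand-in for blink latency; the text above does not define latency precisely, so that choice, the input format, and the function name are all assumptions.

```python
def blink_metrics(blink_onsets_s, task_duration_s):
    """Return (blinks per minute, mean inter-blink interval in seconds).

    blink_onsets_s: time-sorted blink onset times in seconds.
    The mean interval is None when fewer than two blinks were recorded.
    """
    if task_duration_s <= 0:
        raise ValueError("task duration must be positive")
    rate_per_min = len(blink_onsets_s) * 60.0 / task_duration_s
    intervals = [b - a for a, b in zip(blink_onsets_s, blink_onsets_s[1:])]
    mean_interval_s = sum(intervals) / len(intervals) if intervals else None
    return rate_per_min, mean_interval_s
```

Under the pattern described above, a more demanding interface would be expected to produce a lower rate and a longer interval than an easier one for the same participant, provided lighting and task duration are comparable.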

The reviewed studies indicate that blink-rate measures are effective indicators of cognitive load and usability. Blink-rate analysis was frequently conducted alongside other physiological and subjective measures such as fixation behaviour (Fowler, Nesbitt & Canossa, 2019; Li et al., 2024; Nagy et al., 2023; Sevcenko et al., 2023), pupil dilation and saccades (Nagy et al., 2023; Sevcenko et al., 2023), NASA-TLX ratings (Li et al., 2024; Mazur et al., 2019; Nagy et al., 2023), and performance outcomes such as task duration and test-score accuracy (Mazur et al., 2019). Across these investigations, higher cognitive load and reduced usability were consistently associated with decreased blink frequency and increased fixation duration, reflecting sustained visual attention and mental effort. The convergence between blink-rate patterns and physiological, behavioural, and self-reported indicators provides applied evidence of the convergent validity of blink-rate measures for assessing cognitive load in usability studies, even though most investigations were designed for applied interface evaluation rather than formal psychometric validation.

When measuring cognitive load with eye movement measurement methods, three factors should be taken into account: individual interaction, social interaction, and environmental factors (Jetter, Reiterer, & Geyer, 2014).

Individual interaction describes the way users interact with a system interface including menus, content, and tools. Based on the system design and the activity that users perform with the system, they will be required to focus on a specific part of the interface which can influence users’ fixations. Thus, interface layout and the visual presentation of content can also influence users’ fixation location, duration, and rate without changing their cognitive load. Also, the interface structure as well as the task at hand can influence saccadic eye movements including length, velocity, and angle of saccades (Zheng, 2017). For example, while users perform a visual analytic task in spreadsheet applications, they need to switch between different tools, worksheets, and charts which can affect fixation and saccadic eye movements. Another example is the difference between eye movements when users are searching for information by reading a text versus checking an image.

Another factor that can influence saccadic eye movements and fixations without affecting users’ cognitive load is the input and output technologies, including mouse, keyboard, and touch screen displays, that are used to interact with the interface. Input devices might require users to fixate on the input in addition to the visual information on a display (Gao & Sun, 2015). For example, graphic designers often need to switch between desktop displays and a digital pen and tablet to draw artwork (Keim et al., 2008). Input and output devices can also affect pupil dilation and blinking behaviour, as the luminance of devices such as mobile phones, tablets, and laptops can change frequently based on the brightness of the environment (Pfleging et al., 2016). Thus, in terms of individual interaction, it is important to consider the software content, the task at hand, and the input and output devices as additional elements that can influence eye movements without changing cognitive load.

Social interaction describes the social aspects that influence how users collaborate through the system. Some systems, such as online games, telecommunications applications, and e-learning platforms, are inherently social, as users need to work with the system in groups and to communicate and coordinate with other users. Depending on the activity, the number of users that interact with each other, and the social roles of users, fixations and saccadic eye movements can be affected without changing cognitive load (Zagermann, Pfeil, & Reiterer, 2016). Switching focus between different users and interactive devices is exhausting for the eyes and can consequently increase blink rate and the velocity of eye movements as well as fixations and saccades. Therefore, the interference with eye movements caused by communication with other users should be considered in order to increase the reliability of usability test results that are measured using eye movements (Zagermann, Pfeil, & Reiterer, 2016).

Environmental factors describe the characteristics of the physical environment, such as the office, lab, class, or conference room, where users interact with the system. Since each environment has its own characteristics, such as temperature, humidity, and luminance, along with different types of furniture, equipment, and devices, users’ attention and consequently their eye movements can change during the usability test without any change in cognitive load. Thus, during a usability test the fixations should be evaluated with respect to the physical surroundings. Environmental characteristics can also exhaust the eyes and increase blinking, and environmental luminance in particular has the highest impact on pupil size. Therefore, environmental factors should be taken into account when conducting a usability test using an eye movement measurement method, as they can interfere with eye tracking measurements in several different ways (Chen et al., 2016).

Electrodermal Activity (EDA)

EDA refers to the continuous changes in the electrical properties of the skin (Boucsein, 2012; Critchley, 2002). EDA theory holds that skin resistance varies with the state of the sweat glands in the skin, and that the sympathetic nervous system is responsible for controlling sweating (Martini & Bartholomew, 2001). When the sympathetic nervous system is aroused, sweat gland activity increases, which raises skin conductance. Therefore, skin conductance can be measured as an indication of physiological arousal (Carlson, 2013). In other words, depending on environmental factors and a person’s cognitive state, changes in emotional arousal can increase sweat gland activity and consequently lead to an increase in skin conductance. It should be noted that the EDA signal represents the intensity of an emotion but not the type of emotion.

In applied usability testing, EDA is measured by attaching very thin sensors around the fingers or wrist; the sensors send the signals wirelessly to a computer, which then quantifies moments of significantly increased arousal (iMotions, 2021). For example, a spike in conductance while a user interacts with a complex or confusing interface element may indicate an increase in cognitive or emotional load at that moment. This allows researchers to align EDA data with specific user interactions, helping identify which interface components contribute to elevated cognitive demand.
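
The alignment of EDA data with user interactions described above can be sketched as follows. This is a deliberately simplified illustration and not the procedure of any particular EDA toolkit: it flags the moments at which skin conductance first rises above a resting baseline by more than `k` standard deviations, then pairs each moment with the most recent logged interaction. The threshold rule, the 5-second lag window, the data formats, and the function names are all assumptions.

```python
from statistics import mean, stdev


def find_arousal_onsets(samples, baseline_count, k=3.0):
    """Return times at which conductance first crosses the threshold.

    samples: time-sorted (t_s, conductance_microsiemens) pairs; the first
    baseline_count samples (at least 2) are treated as a resting baseline.
    """
    baseline = [value for _, value in samples[:baseline_count]]
    threshold = mean(baseline) + k * stdev(baseline)
    onsets = []
    above = False
    for t, value in samples[baseline_count:]:
        if value > threshold and not above:
            onsets.append(t)  # rising edge: a new arousal episode begins
        above = value > threshold
    return onsets


def label_onsets(onsets, events, max_lag_s=5.0):
    """Pair each onset with the latest interaction logged shortly before it.

    events: time-sorted (t_s, label) pairs from the interaction log.
    An onset with no event in the preceding max_lag_s gets the label None.
    """
    labelled = []
    for onset in onsets:
        candidates = [label for t, label in events
                      if 0 <= onset - t <= max_lag_s]
        labelled.append((onset, candidates[-1] if candidates else None))
    return labelled
```

Because skin conductance responses lag the triggering event by a few seconds, the lag window looks backwards from each onset. Interface elements that repeatedly precede arousal onsets across participants would be candidates for closer inspection, although EDA alone cannot distinguish cognitive from emotional arousal.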