Abstract

Objective

This study reports the development and preliminary evaluation of SAAAR, a multimodal system designed to assess and support the development of situation awareness (SA).

Background

SA is critical in anesthesiology, yet existing assessment methods lack standardized tools tailored to the complexities of anesthetic practice. Systems developed in other domains have limited applicability, highlighting the need for a purpose-built approach for anesthesia residents.

Method

The SAAAR comprises two components: a 16-item behavioral marker scale and a structured debriefing with eye-tracking. Thirteen anesthesiology faculty tested interrater and test-retest reliability, while five experts conducted content validation of the scale. Both components were implemented in a simulation-based training program for preliminary system evaluation.

Results

The behavioral marker scale demonstrated moderate content validity and high reliability. Internal consistency was strong (McDonald’s ω = 0.928), test-retest reliability high (Spearman’s ρ = 0.952), and interrater agreement moderate (Kendall’s W = 0.412). Faculty reported the scale to be clear, comprehensive, and easy to use. Pilot implementation showed significant improvements across domains (Wilcoxon signed-rank test), indicating the system’s potential to provide targeted feedback and guide educational interventions.

Conclusions

Grounded in HFE principles, the SAAAR provides a structured approach to assessing SA in anesthesia residents and demonstrates preliminary potential to inform educational strategies. Further research is required to determine its impact on clinical performance.

Application

The SAAAR offers residency programs and human factors experts a practical tool for assessing SA and designing targeted training. Its adaptable framework suggests potential applicability in other high-pressure medical contexts, pending further evaluation.

Introduction

Effective decision making in high-stakes, dynamic environments—such as the operating room—depends on clinicians’ ability to interpret and anticipate evolving scenarios. In this context, situation awareness (SA) is essential to anesthetic practice, enabling professionals to perceive relevant cues, comprehend their significance, and project future states. SA, as defined by Endsley (1995b), comprises three levels: perception, comprehension, and projection. This model has been widely applied in aviation, military operations, and, increasingly, in healthcare, where failures in SA have been linked to adverse events and compromised patient safety (Endsley & Garland, 2000; Schulz et al., 2016).

SA failures have been identified in approximately 81.5% of adverse events in anesthesiology, underscoring its central role in clinical performance (Schulz et al., 2016). Despite this, SA training remains largely informal, relying on experiential learning over standardized tools or pedagogical strategies (Haber et al., 2017). Existing SA assessment systems, developed for other domains, are ill-suited to the complexities of anesthesiology residency education.

Several efforts have been made to adapt SA measurement to medical simulation. Wright et al. (2004) emphasized the need for objective, validated metrics in human patient simulation and identified the Situation Awareness Global Assessment Technique (SAGAT) as an alternative to traditional performance indicators. Crozier et al. (2015) developed the Team Situation Awareness Global Assessment Technique (TSAGAT), demonstrating its reliability in trauma teams. Hogan et al. (2006) validated SAGAT’s ability to distinguish expertise levels in trauma simulations. Shelton et al. (2013) refined the Situation Present Assessment Method (SPAM), enabling real-time SA queries via handheld devices. Schulz et al. (2016) identified SAGAT as the gold standard, SART as a post hoc self-report, and the ANTS behavioral rating scale (Fletcher et al., 2003) as the most common observational method.

While these tools offer valuable insights, their applicability to anesthesia training is limited. The freeze-probe SAGAT interrupts task flow, making it unsuitable for real operating rooms. The SART posttask self-evaluation scale is easier to use but raises validity concerns due to memory bias and limited insight into SA failures (Endsley, 1995a). Observer-based tools such as ANTS can assess nontechnical skills, including SA, but observable behaviors often fail to capture the underlying cognitive processes—particularly at Level 2 (comprehension). Furthermore, most SA tools lack rigorous longitudinal evaluation (Stanton et al., 2013), restricting innovation and pedagogical advancement. Therefore, SA assessment in anesthesiology should aim not only to measure performance but also to foster SA development as an educational competency.

These tools were designed to evaluate expert performance rather than to facilitate learning in formative training contexts. Their limited scope, feasibility, and pedagogical applicability underscore the need for assessments specifically tailored to anesthesiology residency education, where distinctive SA demands arise from the dynamic and complex nature of anesthetic practice. In this setting, residents must integrate physiological data, direct observations, team interactions, equipment function, and environmental cues (perception) into a coherent mental model that anticipates potential complications (comprehension), while forecasting risks, planning contingencies, and managing competing goals under high cognitive load, time constraints, and team demands (Dishman et al., 2020; Fioratou et al., 2010; Gaba et al., 1995). Although simulation-based education effectively improves SA (Walshe et al., 2019), and constructive instructor-led debriefing enhances learning (Savoldelli et al., 2006), anesthesiology training still relies mainly on workplace learning, the backbone of postgraduate education (Olmos-Vega, 2018). Consequently, SA assessment methods must be adaptable to both simulated scenarios and real clinical environments.

This study aimed to design and conduct a preliminary evaluation of the Situation Awareness Assessment for Anesthesia Residents (SAAAR), a multimodal system composed of a behavioral marker scale and a structured debriefing supported by eye-tracking. The system was developed for use in both simulated and clinical environments, aligned with residency education goals and the ACGME Anesthesiology Milestones 2.0—specifically Patient Care 7 (PC7): Situational Awareness and Crisis Management (ACGME, 2021). The SAAAR supports SA as a core competency through assessment, continuous feedback, and progressive skill development.

Methods

Design of the SAAAR System

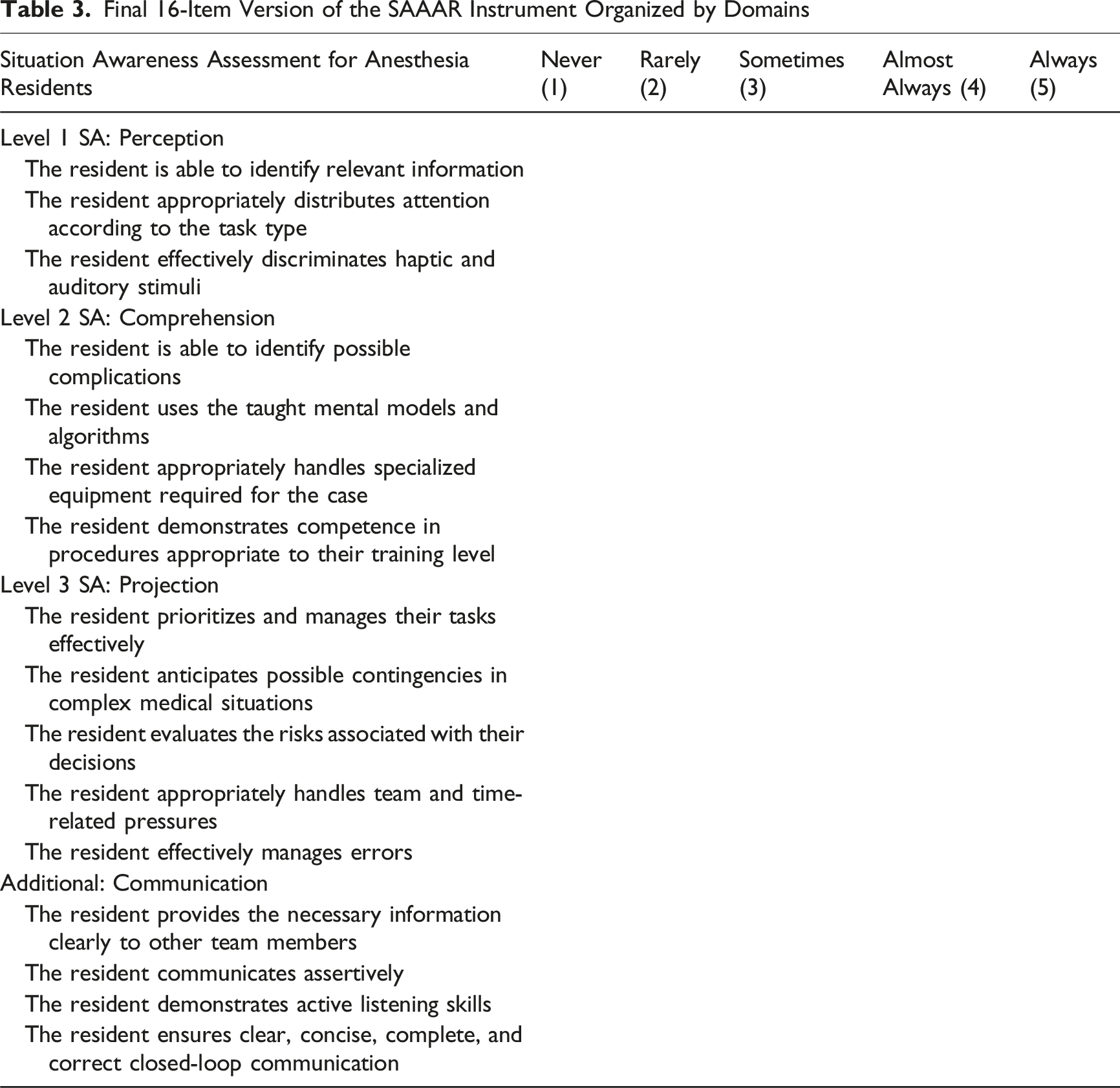

The Situation Awareness Assessment for Anesthesia Residents (SAAAR) was designed from an HFE perspective to support the assessment and development of SA in anesthesiology training. It adopts Endsley’s definition of SA as the perception of environmental elements, their comprehension, and the projection of future status (Endsley, 1995b). The SAAAR comprises two components: a 16-item behavioral marker scale and a structured debriefing supported by eye-tracking. The scale is organized into four domains: three reflecting Endsley’s theoretical levels of SA (perception, comprehension, and projection) and one pedagogical domain, communication.

Although not part of the original SA taxonomy, communication emerged during the formative design process as a key behavioral indicator for making residents’ mental models visible during critical events. A prior study on SA training needs (Daza-Beltrán et al., 2025) found many SA errors stemmed from communication failures. In this context, four observable behaviors were included in the SAAAR—providing necessary information clearly, communicating assertively, demonstrating active listening, and ensuring closed-loop communication—not as a theoretical SA dimension, but as a means to externalize reasoning and enable timely, targeted pedagogical feedback to shape mental models.

The domains targeted for assessment were: perception (Level 1), defined as the ability to detect relevant information and manage attention, measured via gaze overlap in eye-tracking videos; comprehension (Level 2), the development and application of mental models, inferred from performance and verbalizations; and projection (Level 3), anticipating contingencies and responding to errors, evaluated through actions and reasoning. Communication—conveying and obtaining key information—was also targeted for assessment within the system. These behavior-based observations (SA as product) were enriched by structured video debriefing, accessing cognitive processes (SA as process) and supporting pedagogical feedback.

Within this academic context, the SAAAR was developed to: (1) assess SA during both clinical and simulated procedures; (2) identify SA-related behavioral gaps and support the implementation of targeted educational interventions to strengthen SA competencies; (3) foster reflective learning through video-based debriefing supported by eye-tracking; and (4) support the evaluation of the effectiveness of SA-focused instruction.

Its design was informed by previously identified training needs in anesthesiology (Daza-Beltrán et al., 2025), but it was conceived as a flexible formative tool that can be adapted to different educational programs and applied in both clinical and simulated environments. The system provides structured feedback to enhance SA learning, requires minimal observer training, and addresses the limitations of freeze-probe and self-report methods, which are often impractical and lack immediate pedagogical value in clinical settings.

The development of the system was informed by an exploratory literature review on SA-related skills in anesthesiology. This included foundational studies by Gaba et al. (1994, 1995, 1998) on production pressure, SA, and behavioral performance during simulated crises, and studies by Schulz et al. (2011, 2013, 2016, 2018) on SA-related errors. We also reviewed behavioral marker systems for nontechnical skills, particularly ANTS and ANTS-AP (Fletcher et al., 2004; Rutherford et al., 2015). The needs identified in the previous research (Daza-Beltrán et al., 2025) were aligned with the findings in the literature and guided the development of the initial item pool for the SAAAR.

The system includes two components:

Behavioral Marker Evaluation Format

This includes a 16-item behavioral marker scale organized into four domains: three reflecting Endsley’s theoretical levels of SA (perception, comprehension, and projection) and one pedagogical domain, communication. Each item is rated on a 5-point Likert scale (1 = Never, 5 = Always), indicating how often the resident demonstrates the behavior during the scenario.
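The scale structure described above can be sketched in code. The following is a minimal illustration, not the authors' scoring software: the domain sizes (3, 4, 5, and 4 items) are inferred from the domain maxima of 15, 20, 25, and 20 points reported in the Results, and the order of items within the form, the function name, and the example ratings are all assumptions made for illustration.

```python
from typing import Dict, List

# Items per domain, inferred from the reported domain maxima (15, 20, 25, 20)
# on a 5-point scale. The item order within the form is an assumption.
DOMAIN_SIZES: Dict[str, int] = {
    "perception": 3,
    "comprehension": 4,
    "projection": 5,
    "communication": 4,
}


def score_by_domain(ratings: List[int]) -> Dict[str, int]:
    """Sum 16 Likert ratings (1 = Never ... 5 = Always) within each domain."""
    expected = sum(DOMAIN_SIZES.values())
    if len(ratings) != expected:
        raise ValueError(f"expected {expected} ratings, got {len(ratings)}")
    if any(r not in (1, 2, 3, 4, 5) for r in ratings):
        raise ValueError("each rating must be an integer from 1 to 5")
    scores: Dict[str, int] = {}
    start = 0
    for domain, size in DOMAIN_SIZES.items():
        # Consecutive slices of the rating list map to consecutive domains.
        scores[domain] = sum(ratings[start:start + size])
        start += size
    return scores


if __name__ == "__main__":
    # Hypothetical ratings for one resident in one scenario.
    example = [4, 3, 5, 2, 3, 3, 4, 1, 2, 2, 3, 3, 5, 4, 4, 5]
    print(score_by_domain(example))
```

Keeping the domain sizes in a single mapping means a revised item allocation would only require changing that table.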

Supervising professors, acting as expert observers, complete the 16-item format in real time while observing the simulated or clinical case and the live feed from the Tobii Pro Glasses 2 (Tobii Technology, 2014), a wearable eye-tracking device used by the residents. This first-person view helps verify attentional focus and interactions during the task. Combining direct observation with this perspective enhances interpretation of perception and projection behaviors, allows inference of comprehension through verbal reasoning, and provides opportunities to capture communication as a cross-cutting pedagogical complement that externalizes residents’ mental models.

In contrast to previous quantitative studies that used eye-tracking to examine perceptual differences between novice and expert anesthetists in simulated scenarios (Desvergez et al., 2019), our study did not conduct quantitative analysis of eye-tracking data. Instead, gaze-overlay videos were qualitatively reviewed to support formative observation and feedback. This approach aligns with the formative purpose of the SAAAR, enabling immediate interpretation and personalized feedback during debriefing. Quantitative processing, in contrast, requires specialized software and delays analysis, limiting its applicability for real-time reflection—one of the main constraints of current SA assessment methods.

The gaze-overlay recordings helped evaluators identify observed undesirable events (OUEs)—observable deviations from expected behavior that may signal SA failures (Daza-Beltrán et al., 2025)—which guided the debriefing process. The first-person video provided evaluators with access to critical information not visible from their physical position, such as the resident’s gaze direction, missed cues, and visual interactions with equipment or the environment. Faculty consistently described this feature as one of the most valuable components of the system, as it enabled a more accurate interpretation of behavior during high-pressure moments.

Importantly, gaze behaviors were not interpreted in isolation or used as definitive indicators of good or poor situation awareness. Potentially ambiguous behaviors, such as brief glances, were intentionally examined within the video debriefing session, where residents were invited to explain their intentions, reasoning, and contextual constraints at that moment. In this way, eye-tracking data functioned as a prompt for guided reflection rather than as a standalone evaluative metric, helping to mitigate subjective interpretations and support access to situation awareness as a cognitive process.

Video Debriefing Session

Immediately after each simulation, residents participate in individual debriefing sessions structured according to the formative debriefing model by Rudolph et al. (2008). The session begins with participants’ reactions to the scenario, followed by performance analysis using segments from the eye-tracking recordings that capture OUEs, along with results from the Behavioral Marker Evaluation Format (SA as process).

By revisiting OUEs from their own visual field, residents are able to identify discrepancies between perceived and actual performance, reconstruct their decision-making processes, and mitigate memory bias. This process supports guided reflection on their cognitive strategies and enables specific feedback grounded in real, SA-related behaviors.

Integrating real-time observation with self-reflection supported by eye-tracking video ensures that feedback remains timely, individualized, and pedagogically meaningful. It also allows evaluators to assess residents’ SA, from the interpretation of perceived information to the mental models and anticipatory processes that guide their decisions (SA as process). This approach complements the evaluation of SA as a product and concludes with a summary of the key lessons learned by the residents for future performance improvement. This strategy is grounded in a dual understanding of SA: as a process, informing skill development; as a product, enabling performance assessment and gap identification.

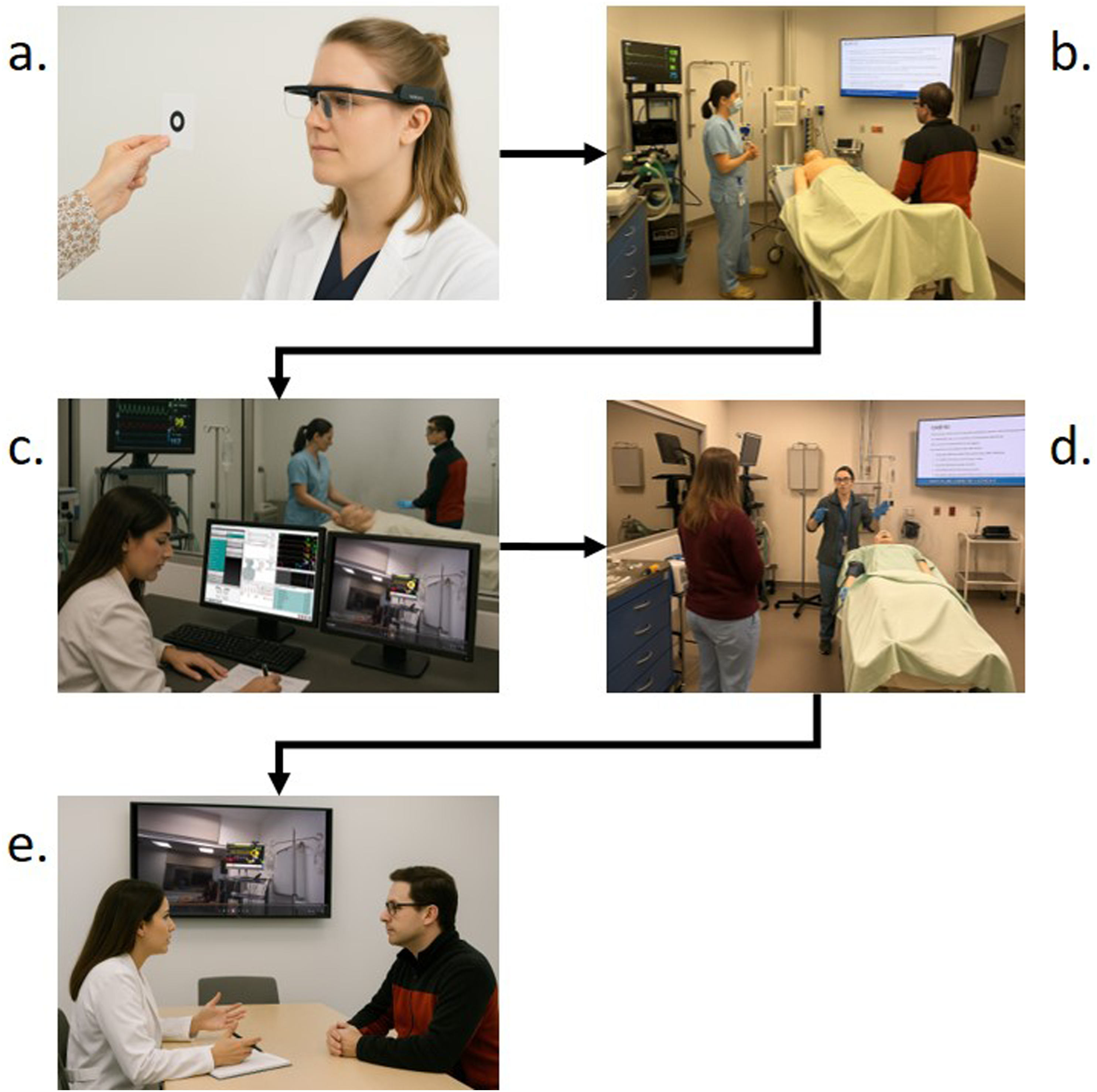

Figure 1 summarizes this process.

Figure 1. Sequential stages of pilot implementation of SAAAR in simulation-based training.

Note. The figure describes the five stages of SAAAR pilot implementation: (a) calibration of eye-tracking glasses, (b) scenario start, (c) live assessment of 16-item behavioral marker format with gaze-overlay, (d) scenario end, and (e) video-based debriefing. AI-generated images were used to protect confidentiality, based on real study scenarios.

Simulation-Based Implementation of the SAAAR

The SAAAR was implemented in a simulation-based setting as part of a pilot study with the full 2023 cohort of anesthesiology residents at Pontificia Universidad Javeriana (18 participants: six per training year). Inclusion criteria were: (1) active enrollment in the residency, (2) full participation in the training module for their level, and (3) signed informed consent. No exclusion criteria were applied, as the intervention was educational and designed for full cohort participation.

The intervention was designed by the research team specifically to train situation awareness (SA) skills across three residency levels (R1–R3). Each group received a training module that included a pretraining simulation scenario (15 min), a theoretical session covering core SA principles for all participants with differentiated emphasis according to residency year (2 h), and a posttraining simulation scenario of similar difficulty conducted approximately eight days later (15 min). No debriefing was conducted between the two simulation scenarios. At completion of the module, participants engaged in a video-based debriefing session (30 min). The topics addressed were aligned with the SA training needs previously identified for each residency level (Daza-Beltrán et al., 2025), and the simulation scenarios were tailored to each group’s clinical responsibilities and experience.

Two structured scenarios—pretraining and posttraining—were conducted in the critical care room of the Clinical Simulation Center. The room was equipped with an Ohmeda Excel 110 anesthesia machine (GE Healthcare, UK), a Laerdal SimMan® 3G mannequin (Laerdal USA), and all necessary medical supplies. Each scenario involved an actor playing a nurse assistant and a biomedical engineer operating the simulator.

Residents wore Tobii Pro Glasses 2 (Tobii Technology, Sweden) to capture visual attention and behavior. Two anesthesiology faculty conducted synchronous SA evaluations using the behavioral marker format. Eye-tracking videos were used in structured debriefing sessions to develop individualized improvement plans. One faculty member led the simulation and assessment; the other coordinated logistics, including actors, equipment, recordings, and informed consent. Assessment data were used to evaluate the SAAAR’s internal reliability.

The implementation involved the complete multimodal SAAAR system, integrating both components: the 16-item behavioral marker scale and the structured debriefing guide supported by eye-tracking, which together provided complementary quantitative and qualitative evidence of residents’ SA performance.

Preliminary Evaluation of the SAAAR Behavioral Marker Format

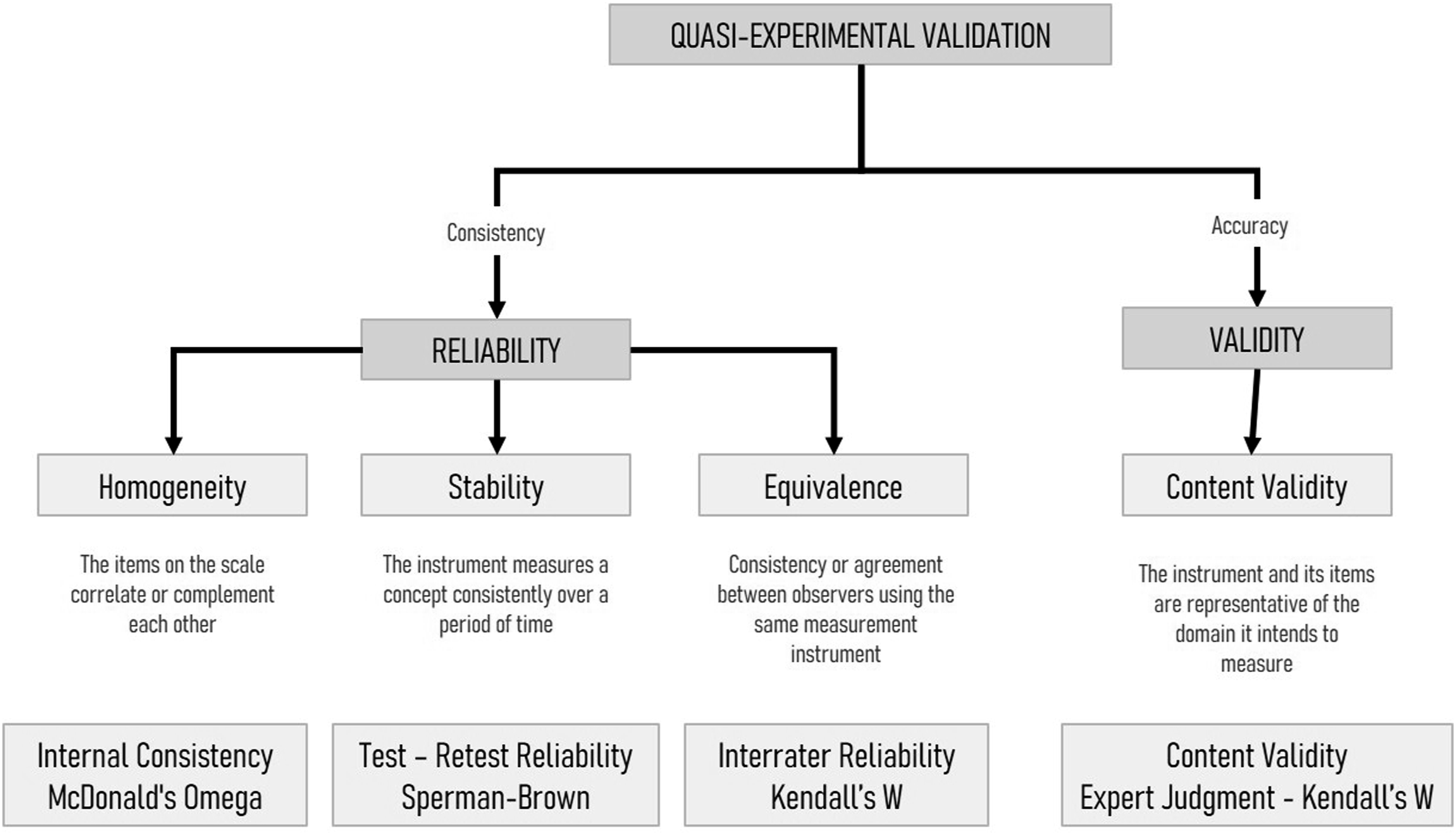

The preliminary evaluation of the SAAAR presented in this section refers exclusively to the 16-item Behavioral Marker Evaluation Format. This component was examined for content validity, internal consistency, temporal stability, and interrater agreement within the context of anesthesiology residency training (see Figure 2). The structured debriefing component, by contrast, was not subjected to psychometric testing, as it serves a qualitative and formative feedback function within the training process. This evaluation represents an initial step in the instrument’s development and does not constitute a full psychometric validation of the complete SAAAR system.

Figure 2. Validation and reliability assessment techniques applied in the study.

Note. This figure includes only the validation techniques implemented and reported in this study.

Content Validity

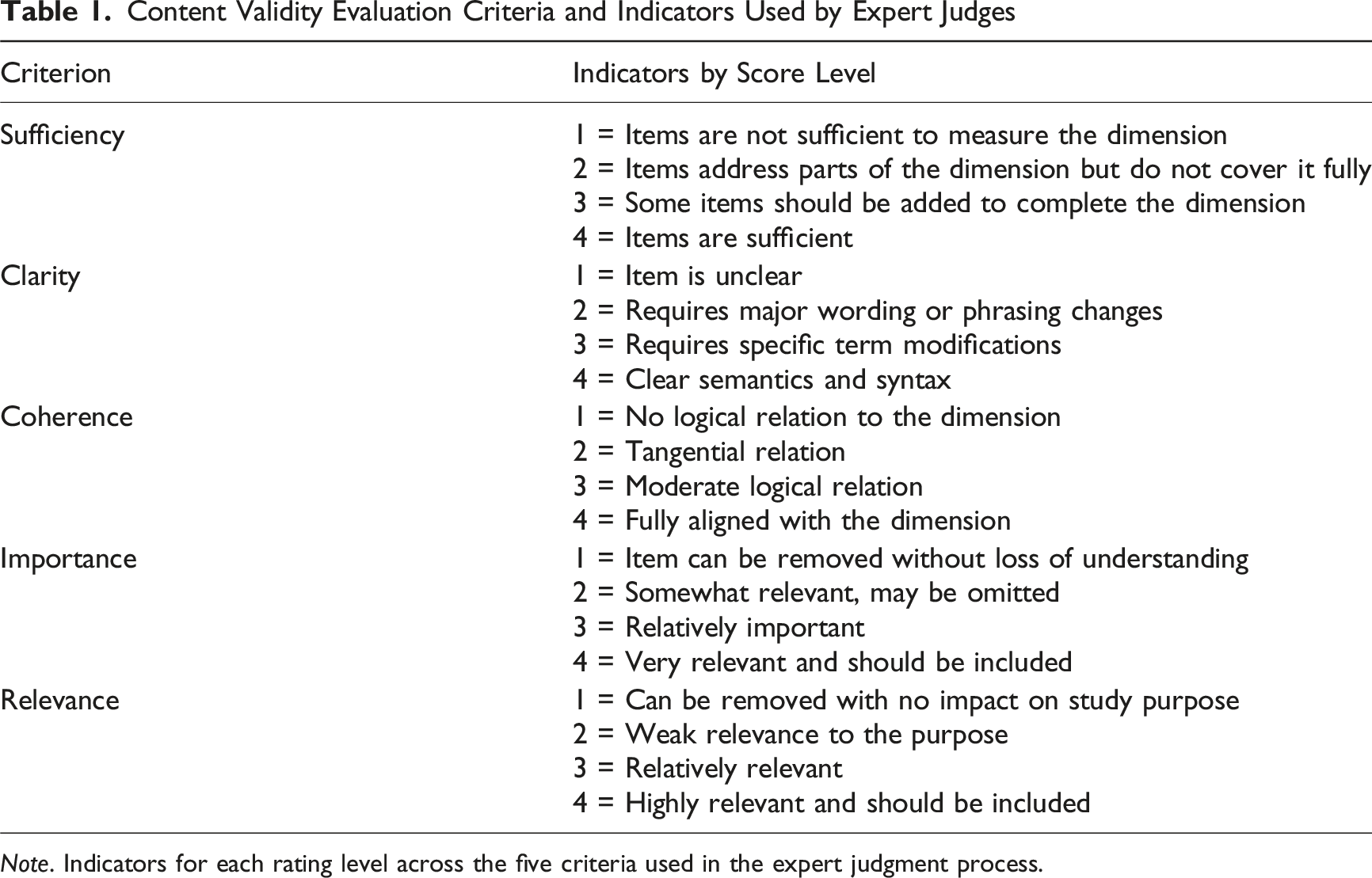

Content Validity Evaluation Criteria and Indicators Used by Expert Judges

Note. Indicators for each rating level across the five criteria used in the expert judgment process.

Kendall’s W was employed to measure interrater agreement. After two evaluation rounds, nine of the sixteen items were revised based on expert feedback. Changes included rewording for clarity, refining behavioral descriptions to reduce ambiguity, and reassigning items to more appropriate SA dimensions. These modifications aimed to strengthen conceptual alignment and ensure that each item reflected a distinct, observable behavior relevant to its target SA component.

Internal Reliability

The internal consistency was evaluated using McDonald’s Omega coefficient to estimate the overall reliability of the SAAAR. The analysis used the 36 evaluations from the simulation-based implementation with the full 2023 cohort of anesthesiology residents (18 participants: six per training year). Each resident was assessed twice—before and after a training session—allowing for the evaluation of the instrument’s reliability across repeated applications and training levels (homogeneity). This structure, including level-specific scenarios and training content, was detailed in the “Simulation-Based Implementation” section.
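For readers unfamiliar with the coefficient, the sketch below shows one common way omega is obtained from a fitted one-factor model. It is an illustration under stated assumptions: the text does not report the estimation method or software used, the function name is hypothetical, and the loadings in the example are invented values, not study data.

```python
from typing import Sequence


def mcdonald_omega(loadings: Sequence[float]) -> float:
    """Omega total for a one-factor model with standardized loadings.

    omega = (sum of loadings)^2 / ((sum of loadings)^2 + sum of uniquenesses),
    where each uniqueness is 1 - loading^2 under standardization.
    """
    if not loadings:
        raise ValueError("at least one loading is required")
    if any(abs(l) > 1 for l in loadings):
        raise ValueError("standardized loadings must lie in [-1, 1]")
    common = sum(loadings) ** 2          # variance attributed to the factor
    unique = sum(1.0 - l * l for l in loadings)  # item-specific variance
    return common / (common + unique)


if __name__ == "__main__":
    # Hypothetical standardized loadings for a four-item domain.
    print(round(mcdonald_omega([0.7, 0.8, 0.6, 0.75]), 3))
```

The loadings themselves would come from a factor analysis of the 36 evaluations, a step this sketch does not perform.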

Test-Retest Reliability

To assess the temporal stability of the SAAAR, test-retest reliability was evaluated using eye-tracking recordings from four first-year residents, collected during the pilot implementation. Faculty assessed the same recordings at two different time points, which ensured identical input across sessions. Thirteen anesthesiology faculty members (all with over ten years of experience) participated in the first evaluation round; nine completed the second round. In both sessions, evaluators independently scored the recordings using the behavioral marker format. Score consistency was analyzed using Spearman’s correlation coefficient. At the end of the second round, evaluators provided open-ended feedback on the format’s clarity and usability.
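The correlation step can be sketched as follows. This is a minimal pure-Python illustration, assuming Spearman's coefficient is computed as the Pearson correlation of average ranks, which is the standard treatment of tied scores. How first-round and second-round scores were paired or aggregated is not specified in the text, so the function names and inputs here are hypothetical.

```python
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # positions i..j share ranks i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman_rho(first: Sequence[float], second: Sequence[float]) -> float:
    """Spearman's rho as the Pearson correlation of the two rank vectors."""
    if len(first) != len(second) or len(first) < 2:
        raise ValueError("need two equally long sequences with n >= 2")
    rx, ry = average_ranks(first), average_ranks(second)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        raise ValueError("rho is undefined when one sequence is constant")
    return cov / (var_x * var_y) ** 0.5


if __name__ == "__main__":
    # Hypothetical round-1 and round-2 scores for the same set of ratings.
    round_1 = [3, 4, 2, 5, 4, 1]
    round_2 = [3, 5, 2, 4, 4, 1]
    print(round(spearman_rho(round_1, round_2), 3))
```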

Interrater Reliability

To assess the equivalence of the SAAAR, interrater reliability was analyzed using the data from the first round of the test-retest evaluation. In that session, thirteen anesthesiology faculty members independently rated four simulation videos, each showing a first-year resident performing a comparable clinical task. Using the 16-item behavioral marker format, each evaluator generated 64 item-level ratings (4 videos × 16 items). Agreement among raters was calculated using Kendall’s coefficient of concordance (W), providing a robust measure of scoring consistency across evaluators observing identical performances.
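A minimal sketch of the coefficient of concordance follows, with the usual correction for tied ranks, which matters for Likert ratings. It is illustrative only: the text does not state how the rating matrix was arranged (which dimension supplied the rankings and which was ranked), so the sketch simply treats each row as one set of rankings over the columns, and the example matrix is hypothetical.

```python
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # positions i..j share ranks i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def kendalls_w(ratings: Sequence[Sequence[float]]) -> float:
    """Tie-corrected Kendall's W for m rows, each ranking the same n columns."""
    m = len(ratings)
    if m < 2:
        raise ValueError("need at least two rows of ratings")
    n = len(ratings[0])
    if n < 2 or any(len(row) != n for row in ratings):
        raise ValueError("need a rectangular matrix with at least two columns")
    rank_sums = [0.0] * n
    tie_term = 0.0
    for row in ratings:
        for j, r in enumerate(average_ranks(row)):
            rank_sums[j] += r
        # Tie correction: sum of (t^3 - t) over groups of tied values in the row.
        for value in set(row):
            t = sum(1 for v in row if v == value)
            tie_term += t ** 3 - t
    mean_sum = m * (n + 1) / 2
    s = sum((r - mean_sum) ** 2 for r in rank_sums)
    denom = m ** 2 * (n ** 3 - n) - m * tie_term
    if denom == 0:
        raise ValueError("W is undefined when every row is entirely tied")
    return 12 * s / denom


if __name__ == "__main__":
    # Hypothetical 3 x 4 matrix of Likert ratings.
    print(round(kendalls_w([[1, 2, 4, 5], [2, 2, 3, 5], [1, 3, 3, 4]]), 3))
```

W ranges from 0 (no agreement in rank order) to 1 (identical rank order in every row).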

Ethical Considerations

The project was approved by the ethics and research committees of the Faculty of Engineering (FID-22-366, November 17, 2022) and the Faculty of Medicine (FM-CIEI-0199-23, March 15, 2023) at Pontificia Universidad Javeriana. In accordance with the Declaration of Helsinki, participant anonymity was guaranteed, and all participants provided written informed consent before participating in the study, including consent for publication and the use of their photographs or other images. Additionally, all collected information has been anonymized. No incentives were offered.

Results

Evaluation System

The SAAAR was successfully implemented as a tool for evaluating and providing feedback on SA skills during simulated scenarios. This instrument integrated behavioral markers and video debriefing sessions, identifying gaps in perception, comprehension, projection, and communication. Faculty highlighted its applicability and usefulness for delivering specific, structured feedback.

Instrument Validation

Content Validity

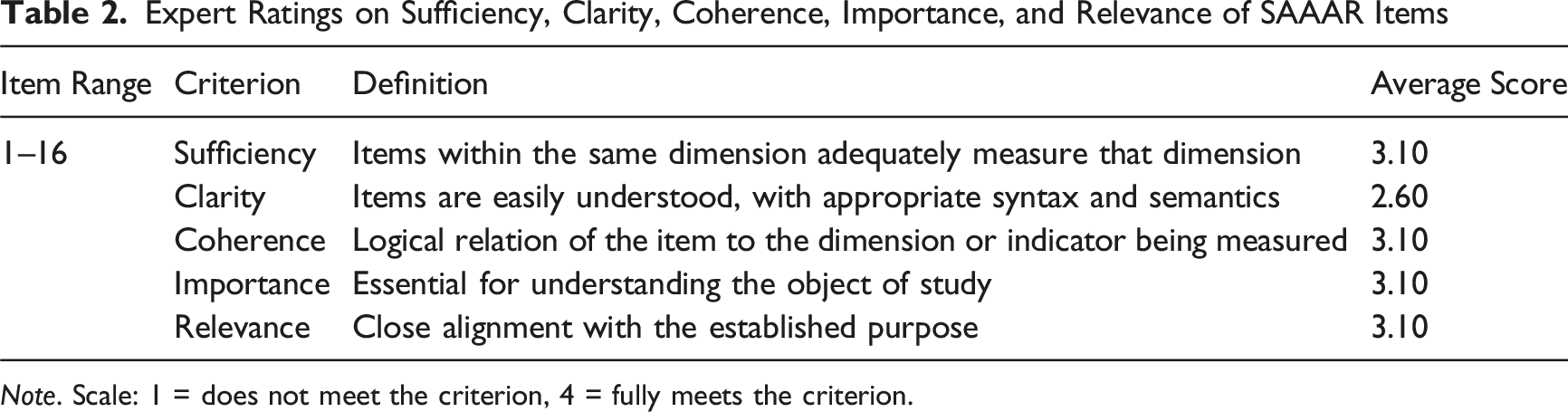

Expert Ratings on Sufficiency, Clarity, Coherence, Importance, and Relevance of SAAAR Items

Note. Scale: 1 = does not meet the criterion, 4 = fully meets the criterion.

Kendall’s W coefficient (W = 0.090) indicated low initial interrater agreement, although the chi-square test (χ² = 28.917, p < 0.001) confirmed that this agreement was statistically significant and unlikely to have occurred by chance. The results suggest a high level of agreement for sufficiency, coherence, importance, and relevance (mean rank = 3.10), and a moderate level of agreement for clarity (mean rank = 2.60).

Qualitative feedback suggested refinements in item clarity and relevance for greater semantic precision and alignment with dimensions, and the reassignment of one task management item from the perception domain to the projection domain. A second round of review was conducted with three of the original five experts. Through iterative discussion, qualitative consensus was achieved on all items; however, no quantitative reassessment of interrater agreement was performed in this round. As a result, while semantic and conceptual improvements, particularly in clarity, coherence, and dimensional alignment, were substantial, the degree of statistical agreement in the final version could not be empirically verified. Additionally, the reduced number of participating experts may have facilitated consensus but limits the generalizability of these results.

Final 16-Item Version of the SAAAR Instrument Organized by Domains

Internal Reliability

Internal consistency, estimated with McDonald’s Omega coefficient (ω = 0.928; 95% CI [0.805, 1.0]), showed high reliability. By domain, values for the three SA levels ranged from 0.844 (Comprehension) to 0.940 (Projection), whereas Communication scored lower (0.671), indicating the need to adjust related items to enhance their contribution to overall consistency.

Test-Retest Reliability

A strong correlation was found between scores from the first and second evaluation rounds using Spearman’s correlation (ρ = 0.952, p < 0.001), indicating excellent test-retest reliability and supporting the temporal stability and robustness of the SAAAR instrument across repeated measures.

Interrater Reliability

Interrater agreement was analyzed using Kendall’s coefficient of concordance (W). The analysis showed moderate agreement among the evaluators (W = 0.412), and the associated chi-square test indicated that this agreement was statistically significant (χ² = 316.579, df = 12, p < 0.001). These results suggest the potential value of future calibration or training efforts to further improve evaluator consistency, particularly if the instrument is scaled beyond pilot use.
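As a rough consistency check on these figures, the large-sample chi-square statistic for Kendall's W equals m(n − 1)W with n − 1 degrees of freedom, where n is the number of ranked columns and m the number of rows supplying rankings. The reported df = 12 implies n = 13. Taking m = 64 (the 4 videos × 16 items) is an assumption about how the data matrix was arranged, which the text does not state, but it reproduces the reported statistic up to rounding of W:

```latex
\chi^2 = m\,(n-1)\,W = 64 \times 12 \times 0.412 \approx 316.4,
\qquad df = n - 1 = 12
```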

Evaluator Feedback on Format Usability

As noted in the Methods section, faculty evaluators who participated in the test-retest phase provided open-ended feedback on the clarity and usability of the SAAAR format. Their comments consistently described it as clear, complete, and easy to use. No suggestions for changes in item structure or content were reported. While not a formal usability study, these practitioner insights offer valuable qualitative evidence of the instrument’s practicality and formative utility in anesthesiology training.

Results of the Simulation-Based Implementation of the SAAAR

To assess the instrument’s utility in detecting meaningful changes in SA performance, the SAAAR was applied before and after a simulation-based training intervention with the full 2023 cohort of anesthesiology residents. Each resident completed a training module that included a pretraining and a posttraining simulation scenario, both evaluated quantitatively with the 16-item behavioral marker format (SA as a product); a theoretical session; and a video-based debriefing session evaluated qualitatively (SA as a process).

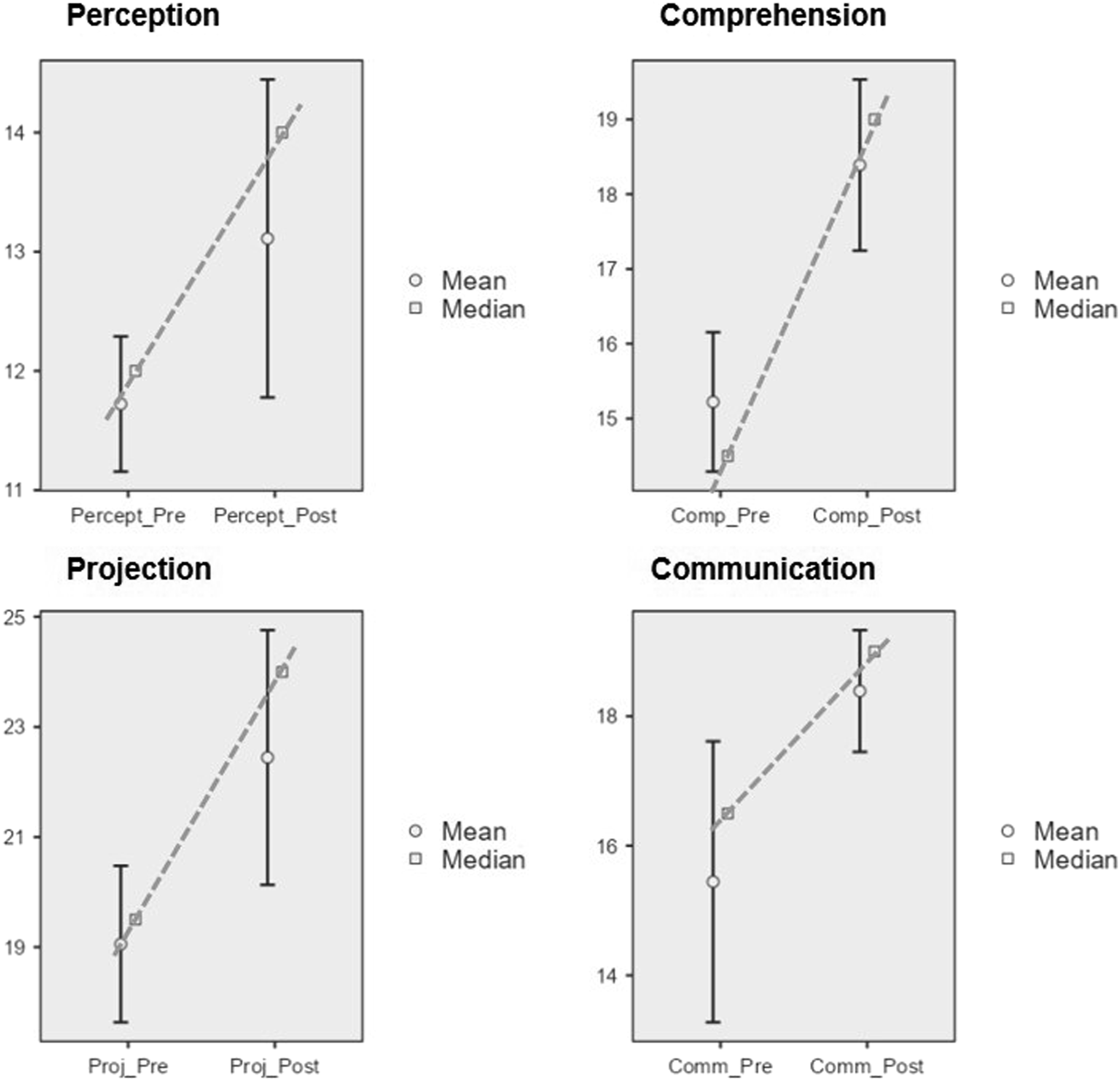

For the quantitative analysis, item scores were summed within each domain of the SAAAR to obtain an aggregated score per domain: perception (maximum 15 points), comprehension (20 points), projection (25 points), and communication (20 points). Using the Wilcoxon signed-rank test with a one-tailed hypothesis, statistically significant improvements were observed across all domains of the SAAAR. Specifically, perception (W = 37.00, p = .031, r = .52), comprehension (W = 5.00, p < .001, r = .93), projection (W = 17.00, p = .001, r = .80), and communication (W = 19.00, p = .003, r = .76) showed consistent increases posttraining.
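The paired comparison can be sketched as below. This is a hedged illustration rather than the authors' analysis: the text does not say which rank sum the reported W denotes or how the effect size r was calculated, so the sketch returns both rank sums and uses the matched-pairs rank-biserial correlation as an assumed effect size. The p-value is omitted, and the example scores are hypothetical.

```python
from typing import List, Sequence, Tuple


def average_ranks(values: Sequence[float]) -> List[float]:
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # positions i..j share ranks i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def wilcoxon_signed_rank(
    pre: Sequence[float], post: Sequence[float]
) -> Tuple[float, float, float]:
    """Return (W_minus, W_plus, rank_biserial_r) for paired pre/post scores.

    Zero differences are dropped; tied absolute differences get average ranks.
    """
    if len(pre) != len(post):
        raise ValueError("pre and post must have the same length")
    diffs = [b - a for a, b in zip(pre, post) if b != a]
    if not diffs:
        raise ValueError("all paired differences are zero")
    ranks = average_ranks([abs(d) for d in diffs])
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    # Rank-biserial: share of rank mass favoring improvement minus the rest.
    r_rb = (w_plus - w_minus) / (w_plus + w_minus)
    return w_minus, w_plus, r_rb


if __name__ == "__main__":
    # Hypothetical domain scores for four residents, before and after training.
    print(wilcoxon_signed_rank([10, 12, 9, 11], [12, 15, 8, 15]))
```

A positive rank-biserial value indicates that posttraining scores tended to exceed pretraining scores.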

These results suggest that the SAAAR system detects improvements in resident performance across SA domains. Figure 3 illustrates the pre- and posttraining scores for each domain.

Figure 3. Pre- and posttraining SAAAR scores by domains.

Note. Each graph compares pre- and posttraining scores for each domain. Data were collected using the SAAAR during the pilot implementation with anesthesiology residents. Bars represent means displayed for descriptive purposes, while squares indicate medians connected by lines that show the direction and magnitude of change per domain. Statistically significant improvements were found across all domains using the Wilcoxon signed-rank test (one-tailed).

During debriefing, instructors used gaze-overlay videos to corroborate the causes of SA errors (OUEs), facilitate reflective discussion, and connect behavioral observations with cognitive processes. Qualitative feedback from residents highlighted the usefulness of this integrated approach, particularly the opportunity to visualize their own decision making, identify overlooked cues, and receive targeted feedback. The satisfaction survey indicated high levels of acceptance and perceived pedagogical value of the training.

These results suggest the potential utility of the SAAAR not only as an evaluation tool but also as a means to guide pedagogical interventions and specific training.

For example, in a simulation scenario involving a blood transfusion, units were intentionally mismatched to simulate a latent error. Most residents failed to verify the blood type, and the patient developed an anaphylactic reaction. Gaze-overlay review during debriefing revealed that residents had not checked the blood unit information themselves. Their verbal explanations showed difficulties anticipating such complications and verifying critical information, indicating a projection failure (Level 3 SA) and incomplete mental models (Level 2 SA). Communication issues also emerged, as verification steps were not confirmed verbally. This example illustrates how the two core components of the SAAAR, the Behavioral Marker Evaluation Format and the Video Debriefing Session supported by eye-tracking, enable evaluators to detect SA deficiencies in residents and reinforce core SA competencies through targeted feedback.

Discussion

Summary of Key Findings and Connection to Study Objective

This study presented the development and preliminary evaluation of the SAAAR, a multimodal system created to assess situation awareness (SA) in anesthesia residents. The system integrates behavioral markers, eye-tracking, and structured debriefing to identify gaps across the three SA levels (perception, comprehension, and projection) while supporting formative feedback. From a Human Factors and Ergonomics perspective, the SAAAR offers a context-adapted approach for simulation-based training, with potential applicability to clinical settings.

Comparison with Prior Research

Existing SA tools such as SAGAT (Endsley, 1988), SART (Taylor, 2017), and behavioral marker systems like ANTS (Fletcher et al., 2003) and ANTS-AP (Rutherford et al., 2015) have advanced the assessment of nontechnical skills in healthcare; however, their application to anesthesiology training remains constrained. These instruments often rely on retrospective or observer-only methods, offering limited access to how residents actually perceive, interpret, and anticipate evolving clinical situations.

In contrast, the SAAAR was designed to both assess and foster SA development by triangulating behavioral markers, first-person eye-tracking, and structured video debriefing. This integration enables the identification of attentional gaps through eye-tracking (Level 1), manifestations of internal comprehension expressed through communication behaviors (Level 2), and OUEs, including omitted or delayed anticipatory actions captured on video (Level 3), while transforming observed errors into opportunities for guided reflection and feedback.

These events are analyzed during debriefing to reveal residents’ reasoning processes, enabling access to Level 2 (comprehension) and Level 3 (projection), and complementing SA evaluation as both product and process. Unlike tools focused on scoring, the SAAAR fosters pedagogical dialogue—transforming errors into learning and feedback into reflection. Faculty reported ease of use, clearer evaluations, and deeper resident reflection. This approach aligns with educational goals and HFE principles by promoting resilience and safety in complex healthcare systems (Carayon et al., 2014).

Theoretical, Methodological, and Practical Implications

From a theoretical perspective, the SAAAR addresses SA as both product and process, treating these dimensions as complementary but distinct. This design responds to concerns raised by Endsley (2007) regarding the risks of inferring cognitive processes solely from observable behavior. By separating performance indicators from reflective access to reasoning, the SAAAR supports a more balanced understanding of how SA is constructed, maintained, and degraded in complex settings.

Methodologically, the SAAAR integrates eye-tracking, behavioral observation, and structured debriefing to infer attentional focus, reasoning, and anticipatory actions, allowing evaluators to contrast observed behaviors with residents’ mental models and thereby support guided reflection, targeted feedback, and learning.

From a practical standpoint, the pilot implementation demonstrated the feasibility and pedagogical value of the SAAAR. Faculty reported clarity in evaluations, acceptable interrater reliability, and strong resident engagement. Whereas prior work often infers SA using quantitative eye-tracking metrics such as saccadic frequency or fixation time (Cha & Yu, 2021), the SAAAR adopts a qualitative approach that facilitates its integration into educational settings. This approach enabled immediate, personalized feedback and meaningful reflection without the complexity of quantitative data analysis, and required minimal faculty training, addressing limitations previously noted in simulation-based SA evaluations (Gaba et al., 1998). Communication, included as a pedagogical complement, further enhanced practicality by making residents’ mental models explicit and guiding supervisory feedback when interpretive errors emerged.

Based on these outcomes, the anesthesiology department and the simulation center where the pilot was conducted have expressed interest in formally incorporating the SAAAR into the residency curriculum, and have begun investing in dedicated infrastructure to support its continued development.

Although tested only in simulation, the SAAAR shows promise for clinical implementation. Its structured format enables identification of perception, comprehension, and projection errors, along with communication breakdowns that may compromise safety. The tool’s adaptability also suggests potential for other high-stakes areas such as emergency medicine, critical care, or perioperative transitions.

Limitations and Future Directions

Despite its strengths, the SAAAR presents several limitations. First, the psychometric evaluation was preliminary and limited to the 16-item behavioral marker format; therefore, the findings should not be interpreted as conclusive. The debriefing component was not subjected to psychometric testing because it is a pedagogical process rather than a measurement instrument. Its purpose is to explore residents’ reasoning, clarify mental models, and facilitate targeted feedback, contributing to learning and access to SA as a cognitive process rather than to the generation of quantitative scores.

The weighting assigned to the Communication component also represents a preliminary design decision. Although Communication is not conceptualized as a dimension of situation awareness, its higher weighting reflects its pedagogical relevance and observability in training contexts and should be considered provisional and subject to refinement in future studies.

Second, interrater reliability in the pilot study was moderate (Kendall’s W = 0.412), suggesting the need to enhance evaluator training. Third, although the system was designed for both simulated and clinical environments, its current evaluation is limited to simulation-based settings and to a single residency cohort, restricting generalizability. Additionally, despite the use of different scenarios separated by approximately eight days, some degree of familiarization with the simulation environment and task structure may have partially influenced performance.

Future research should address these limitations by testing the SAAAR in real clinical contexts, expanding psychometric evaluation to include convergent, discriminant, and predictive validity, and comparing its outcomes with established tools such as SAGAT or ANTS. Beyond individual assessment, the SAAAR could be adapted to team-based SA assessment in high-stakes environments such as emergency medicine or intensive care. Exploring its application in interdisciplinary teams could expand its scope and contribute to a better understanding of shared awareness and collaborative decision making in patient care.

Conclusions

Building on this foundation, the present study introduces and preliminarily evaluates the SAAAR as a structured multimodal system for assessing SA in anesthesiology training. By integrating behavioral observation, verbal reasoning, and eye-tracking evidence, the SAAAR enables a deeper understanding of SA and supports targeted pedagogical feedback. Its design responds to the specific training needs of anesthesia residents and offers a feasible approach for improving SA and patient safety in complex environments.

Key Points

• This study presents the SAAAR, a system for assessing SA in anesthesia residents, and reports its preliminary evaluation in simulated settings.
• It integrates innovative tools such as behavioral markers, eye-tracking, and video debriefing, enabling comprehensive evaluation across three theoretical SA levels (perception, comprehension, and projection), together with communication as a pedagogical component.
• The behavioral marker format demonstrated high internal consistency (ω = 0.928) and test-retest reliability (ρ = 0.952, 95% CI: [0.752, 0.992]), supporting its implementation in training programs.
• The SAAAR’s adaptable design allows for potential applications in clinical environments and other high-complexity medical domains.

Footnotes

Acknowledgments

This study was conducted as part of the first author’s doctoral dissertation. We extend our gratitude to the Department of Anesthesiology at Pontificia Universidad Javeriana and the Hospital Universitario San Ignacio for their collaboration in this research, as well as to the operating room staff, residents, and anesthesia supervisors who generously volunteered their time. Special thanks to the staff at the Clinical Simulation Center at Pontificia Universidad Javeriana and its director, Leonar Aguiar, for providing their resources and time for the tests conducted with residents.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study is supported by Pontificia Universidad Javeriana; 20854.