Abstract

Objective

The impact of the context in which automation is introduced to a decision-making system was analyzed theoretically and empirically.

Background

Previous work dealt with causality and responsibility in human-automation systems without considering the effects of how the automation’s role is presented to users.

Methods

An existing analytical model for predicting the human contribution to outcomes was adapted to accommodate the context of automation. An aided signal detection experiment with 400 participants was conducted to assess the correspondence of observed behavior to model predictions.

Results

The context in which the automation’s role is presented affected users’ tendency to follow its advice. When automation made decisions, and users only supervised it, they tended to contribute less to the outcome than in systems where the automation had an advisory capacity. The adapted theoretical model for human contribution was generally aligned with participants’ behavior.

Conclusion

The specific way automation is integrated into a system affects its use and the perceptions of user involvement, possibly altering overall system performance.

Application

The research can help design systems with automation-assisted decision-making and provide information on regulatory requirements and operational processes for such systems.

Introduction

Human operators collaborate with automated decision support systems to improve task outcomes in complex scenarios in domains such as medical diagnostics (Esteva et al., 2017; Gardezi et al., 2019; Moreira et al., 2019; Rangayyan et al., 2010), ground transportation (Caballero et al., 2021; Lu et al., 2010; SAE, 2016), aviation (Billings, 1996; Pritchett, 2009; C. D. Wickens et al., 1998), military applications (Naseem et al., 2017; Scharre & Horowitz, 2015), and information technology (IT) security (Salloum et al., 2020). Such collaborative systems involve decision-making at various stages of a task, and both the human operators and the automation have important roles in the process. However, what influence does each of them have on the outcome? Who should be held accountable if an incorrect decision is made and the task is deemed a failure? Who contributed more to the result and could therefore be assumed to have caused it, and by how much? Past studies addressed such questions by analyzing specific decisions after they were made. These analyses could not be used to estimate the influence of humans and automation on future decisions. Douer and Meyer (2020, 2021) proposed an information-theory-based model that provided such an a priori analysis. In this model, human causal responsibility is quantified as the proportion of the overall information in the outcome due to the unique information (i.e., reduction in uncertainty) the human contributed.

However, this model does not consider the system configuration and the context in which the automation is presented to the operator, which may affect actual or perceived human causal responsibility. Whether the automation is there to assist humans or to do their jobs, leaving them in supervisory roles, could significantly influence how humans interpret the automation’s recommendations or decisions and how they react to them.

Related Work

In systems where human operators collaborate with automation, the assignment of different activities to the operator and the automation defines the level of automation (LOA) (Endsley, 1987; Endsley & Kaber, 1999; Sheridan, 2012; Sheridan & Verplank, 1978). Using Sheridan’s 8-level scale (Sheridan, 2012), we can categorize the LOAs as either (a) a Decision Support System (DSS), where the automation can suggest an action but not execute it (levels 1–3), (b) Automated Decision-Making (ADM) where the automation can decide and execute the action while allowing the operator to override it (levels 4–5), or (c) an Autonomous System where the automation executes the action without an override option for the operator (levels 6–8). Such systems are sometimes referred to as (a) human in the loop (HITL), (b) human on the loop (HOTL), and (c) human out of the loop (HOOTL), corresponding to the classifications above (Scharre & Horowitz, 2015).
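The grouping of levels described above can be written as a small lookup. This is only an illustrative sketch: the function name `classify_loa` and the returned labels are our own shorthand, not part of Sheridan's scale or of the cited classifications.

```python
def classify_loa(level: int) -> str:
    """Map a level on the 8-level scale to the three categories named in the text.

    Hypothetical helper: the cut-offs (1-3, 4-5, 6-8) follow the paragraph
    above; the label strings are illustrative only.
    """
    if not 1 <= level <= 8:
        raise ValueError("level must be between 1 and 8")
    if level <= 3:
        return "DSS (human in the loop)"
    if level <= 5:
        return "ADM (human on the loop)"
    return "Autonomous (human out of the loop)"


for level in range(1, 9):
    print(level, classify_loa(level))
```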

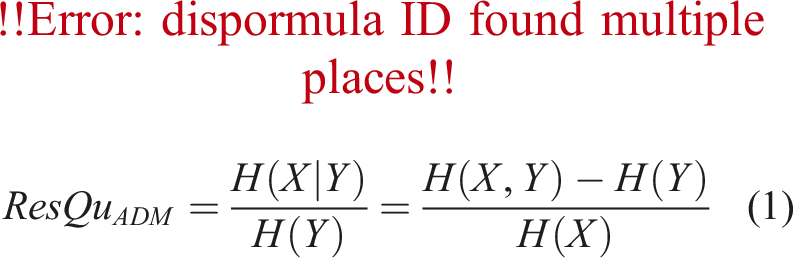

In decision-making processes, when people’s lives, health, or welfare are at stake, it is expected that someone can be held responsible for an incorrect decision that caused harm (Amoroso & Tamburrini, 2020; European Union, 2016; Pritchett, 2024). This is typically required for legal, ethical, and procedural reasons. However, in systems where humans and automation collaborate, it is not easy to attribute responsibility for outcomes. People may tend to ascribe blame to automation, whether it is an artificial intelligence (AI) system, robots, or autonomous cars (Cunningham et al., 2019; Furlough et al., 2021; Kneer & Stuart, 2021; Lima et al., 2023), reflecting concerns about these systems. Regulatory and legal requirements call for meaningful human control (MHC), according to which a human should be held accountable for failed outcomes. Still, automation is increasingly “blamed” for failures, raising the fundamental question of who is responsible for the outcome, the human or the automation, when decisions are made with advanced intelligent systems, and if they share the responsibility, how can it be divided between them? This question has been extensively explored in the literature, but mostly for legal and ethical responsibility (Gerstenberg & Lagnado, 2010; Lagnado et al., 2014; Matthias, 2004). Such an analysis also considers the designers of the automation, the managers or commanders who decided to deploy the system, and the operator’s behavior or decisions beyond the specific use of the system (e.g., if they chose to operate the system while they were tired and unable to function well). However, at the most fundamental level, these analyses of responsibilities do not specify the causal relationships between the different agents and the task outcome, which is better defined by causal responsibility, describing the level of contribution of each agent to the outcome (Hart, 2008). 

Quantifying causal responsibility would help determine humans’ influence on, and control over, the outcome. Douer and Meyer (2020, 2021) proposed such a quantification by defining causal responsibility as an agent’s unique share in determining the probability distribution of the system output (the implemented actions). Their Responsibility Quantification (ResQu) model analyzed a system in which automation provides the initial classification and decision, which the human considers and then accepts or overrides. According to this definition, human responsibility is the uncertainty left in the system after the automation issued its classification and recommendation. The ResQu model expresses this using the conditional entropy of the random variable representing the human’s decision (which in their model also represents the resulting action) given the automation’s recommendation, as a portion of the entropy of the resulting action, such that

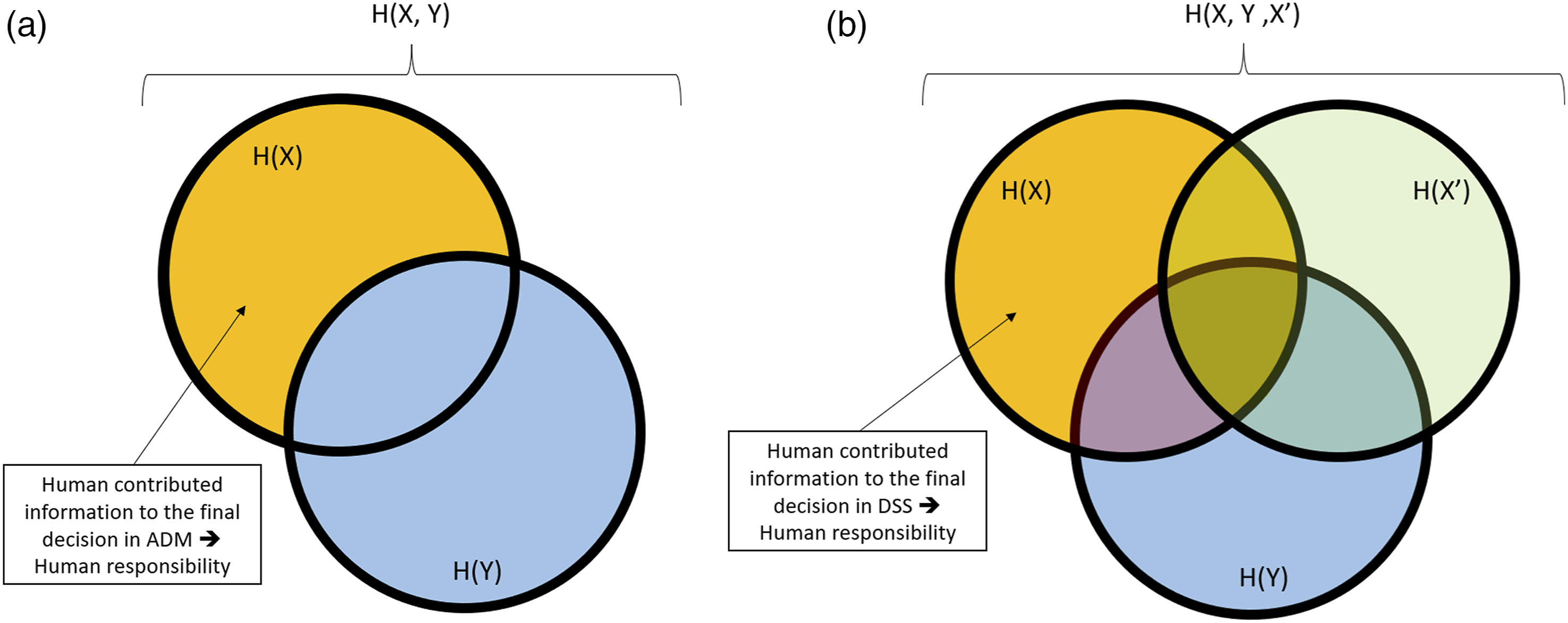

Figure 1. An illustration of the information contributing to the final decision. X, X’, and Y represent the final decision, the human-only decision (without automation), and the automation’s recommendation. The orange-shaded area represents the information attributed to the human, which is translated into responsibility. (a) An ADM system, where the overlapping information is attributed to the automation; (b) a DSS system, where the human is also attributed the information they would have contributed if they were to decide without automation.
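Read literally, the verbal definition of the ResQu measure (conditional entropy of the decision given the automation's recommendation, as a portion of the entropy of the resulting action) corresponds to the ratio below, with X the final decision and Y the automation's recommendation. This is a transcription of the prose under that reading, not a reproduction of the original typeset equation. The identity on the right and the two limiting cases are standard entropy properties, included only as a sanity check, and assume H(X) > 0.

```latex
\mathrm{ResQu} \;=\; \frac{H(X \mid Y)}{H(X)} \;=\; \frac{H(X, Y) - H(Y)}{H(X)},
\qquad 0 \le \mathrm{ResQu} \le 1 .

% Limiting cases:
% 1. X is a deterministic function of Y (the human always follows the automation):
%    H(X | Y) = 0, so ResQu = 0.
% 2. X is independent of Y (the human ignores the automation):
%    H(X | Y) = H(X), so ResQu = 1.
```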

The ResQu model described above provides a quantitative indication of the human’s causal responsibility for ADM systems when the human is added to monitor the automation, which would have decided and acted without them if they were not present. However, such logic is challenging in the case of a DSS system, where humans make the decisions, and automation is added to assist them. To illustrate this situation, we can look at a specific (though extreme) case of all-knowing humans and automation, when both humans and automation are perfectly accurate and can always make the correct decision. In this case, we should consider that causal responsibility is associated with general type-causation in Probabilistic Causation (Eells, 1991; Hitchcock, 2021; Saad & Meyer, 2023). The outcome E is caused by an event C if and only if C raises the probability for E to occur, that is, $P(E \mid C) > P(E \mid \neg C)$.

The above scenario exemplifies how DSS and ADM systems may be viewed differently in the context of human control and responsibility. The difference between them, which is driven by the order in which classifications are made and the role of each agent, influences the decision-making system. This was illustrated, for example, in the European Union’s Artificial Intelligence (AI) Act that allows AI systems to “improve the result of a previously completed human activity” in high-risk systems, but they cannot be the first decision-maker (European Union, 2024). Hence, using a single ResQu model to determine human responsibility in all human and automation collaboration cases may be impossible. However, while it may be simple to determine responsibility in extreme cases, such as the one shown above, in most real-life situations, human and automation accuracy is neither 0 nor absolute, but something in between. Augmenting the ResQu model for quantifying human responsibility in DSS systems is important to allow regulators, managers, commanders, and systems designers to better address the questions of required human control, influence, and responsibility for the outcome.

Based on the above, we suggest that:

H1: Human operators’ contribution to the outcome is seen as lower in ADM than in DSS systems.

H2: Highly accurate humans are seen as contributing more in DSS systems since they can provide more valuable joint information to the decision-making.

H3: Humans view their responsibility to the outcome as higher in DSS than in ADM systems.

Updated Model

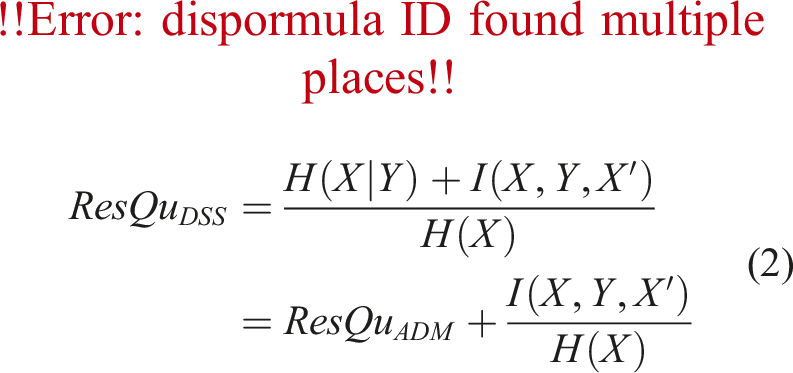

In the ResQu model, the human contribution to the outcome is the remaining uncertainty in the results after the automation has contributed its part. It aligns with ADM system behavior by attributing the shared information entirely to the automation. Suppose that in a certain situation, both the human and automation, when operating independently, would have made the same decision. The ResQu model would assume this was an “automation contribution” and assign it to “automation responsibility.” In DSS systems, however, the definition of human responsibility should be adapted to attribute such human and automation’s joint information to the human. In entropy terms, it means that the human responsibility (denoted in this case as $\mathrm{ResQu}_{\mathrm{DSS}}$) is the portion of the conditional entropy of the final decision

To illustrate how the difference between ADM and DSS systems is presented in realistic systems, we used an example of a uni-dimensional binary decision system. The same scenario will be tested empirically in the experiment described below, and this analysis is shown to predict the actual human behavior in the experiment. In this example, the human should decide whether rods are faulty or intact based on their length. The lengths of rods are normally distributed, with intact rods’ distribution being

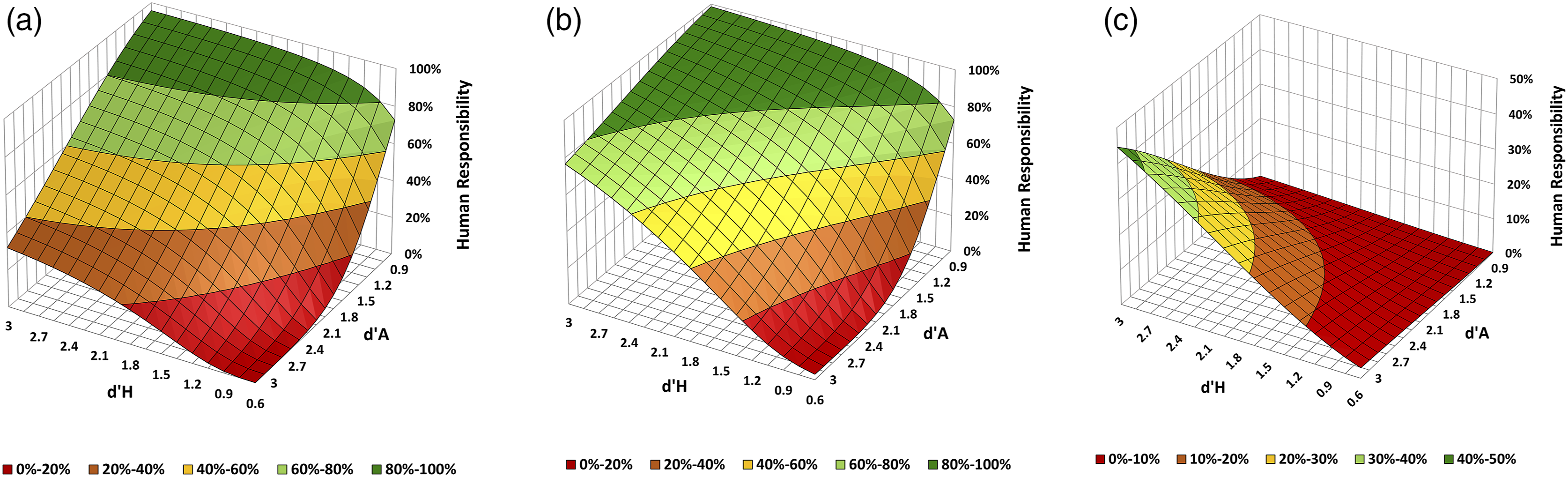

The analysis results, presented in Figure 2, illustrate how the context in which the automation is presented to the human operator can change the human’s causal responsibility for the outcome. When either the automation or the human sensitivity is low, ADM and DSS systems do not significantly differ in human responsibility. However, as human sensitivity increases, the human responsibility in DSS systems exceeds that of ADM systems, and the difference increases with higher automation sensitivity. This observation is important, considering that the development of technology tends to raise automation sensitivity. Furthermore, there is a desire to maintain meaningful human control, so human sensitivity needs to increase, as illustrated in Figure 2(a) and (b). However, when both the human and automation sensitivity increase, the context in which the automation is presented to the user becomes more critical. If the human’s role is to monitor and approve or override the automation’s decisions, as in ADM systems, their responsibility for the outcome can potentially drop below what would be considered the minimal responsibility for MHC, and regulatory requirements may not be met.

Figure 2. Analytical model results of human responsibility for (a) ADM system with the original ResQu model and (b) DSS with the adjusted $\mathrm{ResQu}_{\mathrm{DSS}}$ model. (c) The difference between the models as a function of the human and automation’s detection sensitivities d′. $P_S = 0.3$ and $U = 0.6667$. (a) Responsibility in ADM systems. (b) Responsibility in DSS systems. (c) Difference in responsibility: $\mathrm{ResQu}_{\mathrm{DSS}} - \mathrm{ResQu}_{\mathrm{ADM}}$.
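To make the ADM side of this analysis concrete, the following is a minimal sketch of how the ratio H(X|Y)/H(X) could be computed for a normative human under equal-variance signal detection theory. It is not the authors' implementation and rests on several assumptions of ours: symmetric payoffs (so the optimal likelihood-ratio criterion is simply the prior odds, and the parameter U from the figure is not used), human and automation observations that are conditionally independent given the rod's state, and a human who updates the prior with the automation's output before setting a criterion. The function names `rates`, `entropy`, and `resqu_adm` are hypothetical. The DSS variant is not sketched because its full definition is not recoverable from the text here.

```python
from math import log, log2
from statistics import NormalDist

PHI = NormalDist().cdf  # standard normal CDF


def rates(d_prime: float, p_signal: float) -> tuple[float, float]:
    """Hit and false-alarm rates of an equal-variance SDT observer (d_prime > 0).

    Assumption: symmetric payoffs, so the optimal likelihood-ratio
    criterion is beta = P(noise) / P(signal).
    """
    beta = (1.0 - p_signal) / p_signal
    criterion = d_prime / 2.0 + log(beta) / d_prime  # noise mean 0, signal mean d'
    hit = 1.0 - PHI(criterion - d_prime)
    false_alarm = 1.0 - PHI(criterion)
    return hit, false_alarm


def entropy(probs) -> float:
    """Shannon entropy in bits, skipping zero-probability cells."""
    return -sum(p * log2(p) for p in probs if p > 0.0)


def resqu_adm(d_human: float, d_auto: float, p_signal: float = 0.3) -> float:
    """H(X|Y) / H(X) for a normative human who sees the automation's output Y."""
    hit_a, fa_a = rates(d_auto, p_signal)
    joint = {}  # joint[(x, y)] = P(X = x, Y = y); 1 means "faulty"
    for y, p_y_sig, p_y_noise in ((1, hit_a, fa_a), (0, 1 - hit_a, 1 - fa_a)):
        p_y = p_signal * p_y_sig + (1 - p_signal) * p_y_noise
        posterior = p_signal * p_y_sig / p_y      # P(signal | Y = y)
        hit_h, fa_h = rates(d_human, posterior)   # human criterion shifts with Y
        p_x1 = p_signal * p_y_sig * hit_h + (1 - p_signal) * p_y_noise * fa_h
        joint[(1, y)] = p_x1
        joint[(0, y)] = p_y - p_x1
    h_x = entropy([joint[(1, 0)] + joint[(1, 1)], joint[(0, 0)] + joint[(0, 1)]])
    h_y = entropy([joint[(0, 0)] + joint[(1, 0)], joint[(0, 1)] + joint[(1, 1)]])
    h_x_given_y = entropy(joint.values()) - h_y   # chain rule: H(X,Y) - H(Y)
    return h_x_given_y / h_x


# Illustrative sensitivities only; these are not the values used in the paper.
for d_auto in (1.0, 2.0, 3.0):
    print(d_auto, round(resqu_adm(d_human=2.0, d_auto=d_auto), 3))
```

Under these assumptions, holding the human's sensitivity fixed and raising the automation's sensitivity lowers the computed ratio, which is the qualitative pattern the text describes for ADM systems.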

While this analysis provides important insights, it assumes that the human operator behaves normatively (according to a model of optimal decision-making to maximize the values of outcomes). An empirical study can provide information about the correspondence of model predictions with actual behavior. An experiment was designed to explore such behavior and assess the effects of the decision-making context on decisions and outcomes.

Experiment

We conducted an experiment to study decisions and evaluations of human responsibility with automation advice in DSS and ADM systems. Based on the above analysis, we predicted that human responsibility would be judged as lower in the ADM configuration than in the DSS configuration.

Method

Participants

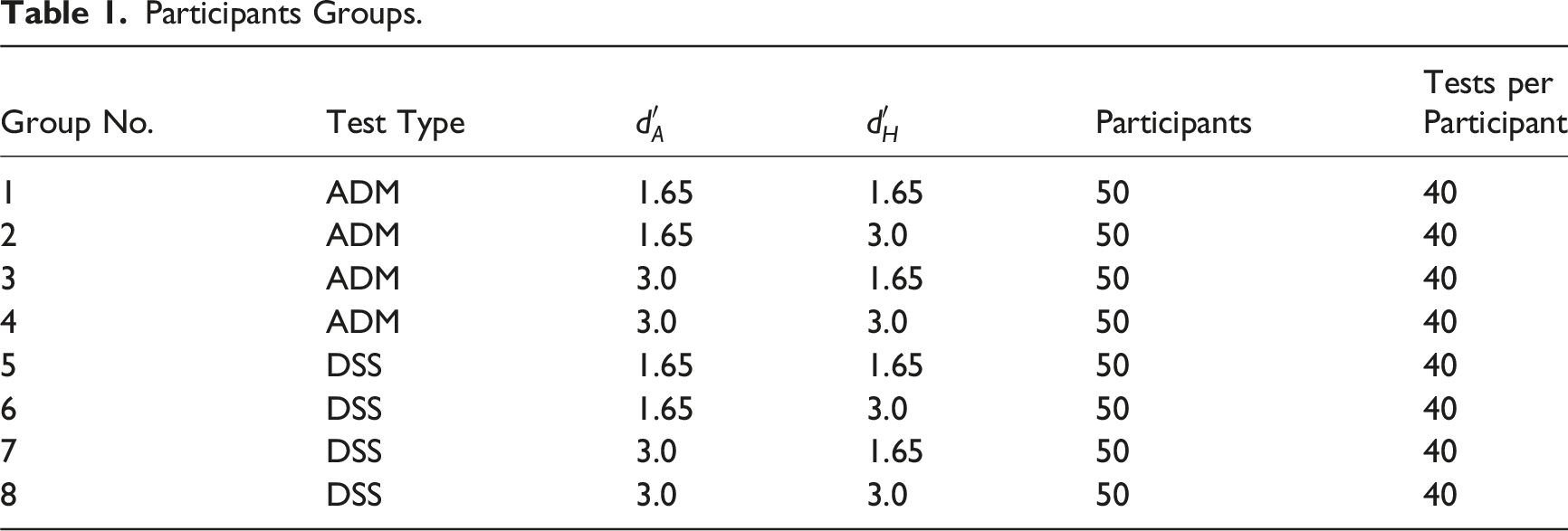

Participants Groups.

Apparatus

The experimental system was a web application written in Python 3.9. The interaction with the application was through a standard web browser running on desktop computers, laptops, and smartphones. The system simulated the manufacturing of titanium rods, where the rods’ lengths were a one-dimensional measure of their quality: intact or faulty. Faulty rods were, on average, longer than intact rods. The participants were asked to determine if the manufactured rods were intact or faulty. Rods were faulty with a probability of $P_S = 0.3$, which means that 4,800 rods out of the total 16,000 rods (400 participants × 40 tests each) were faulty. These were randomly assigned to the tests. Each participant had a certain accuracy level,

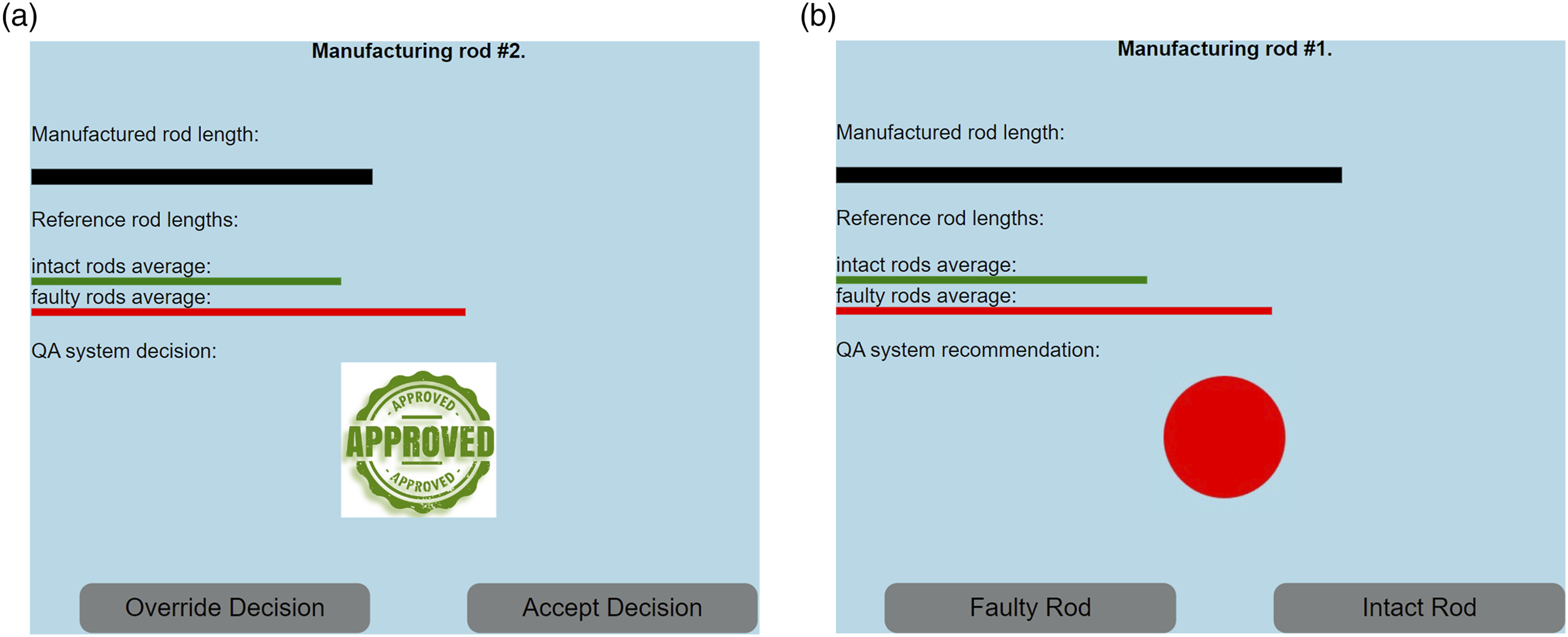
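The text states that the faulty rods were randomly assigned to the tests but does not describe the procedure. One simple way to obtain exactly 4,800 faulty rods among 16,000 tests is to shuffle a pooled list and slice it per participant, as sketched below. The constant names and the fixed seed are our own choices for illustration, and the actual experiment software may have worked differently.

```python
import random

N_PARTICIPANTS = 400
TESTS_PER_PARTICIPANT = 40
P_FAULTY = 0.3

total_rods = N_PARTICIPANTS * TESTS_PER_PARTICIPANT   # 16,000 tests overall
n_faulty = round(P_FAULTY * total_rods)               # 4,800 faulty rods

rng = random.Random(0)  # fixed seed only so the sketch is repeatable
rods = [True] * n_faulty + [False] * (total_rods - n_faulty)  # True = faulty
rng.shuffle(rods)

# Slice the shuffled pool into one block of tests per participant.
assignments = [
    rods[i * TESTS_PER_PARTICIPANT:(i + 1) * TESTS_PER_PARTICIPANT]
    for i in range(N_PARTICIPANTS)
]
```

With a pooled shuffle, the overall count of faulty rods is exact, while the number each participant sees varies around 12 of 40.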

The participants received advice from automation, which was presented as an ADM or a DSS. In an ADM system, the automation’s classification was described as the “QA system decision” with a graphical symbol of a quality seal (“Approved,” “Rejected”), and the participants had to choose between “Override Decision” or “Approve Decision.” For a DSS system, participants saw a “QA system recommendation” with a graphical representation of a simple colored circle, green (Hex color code: #4CAF50) for an intact system or red (#FF0000) for a faulty system. They had to choose whether it was a “Faulty Rod” or “Intact Rod” (Figure 3). Except for the above changes in the way some information was presented to the participants, the system behaved the same in both cases, that is, the system waited for the participants’ decision before moving to the next test, aligned with Sheridan’s Level 4 automation (Sheridan, 2012). The automation was assumed to function according to SDT with an optimal decision threshold and detection sensitivity of

Figure 3. Experiment screenshots for (a) ADM and (b) DSS systems. Text and graphics were differently designed to convey the differences between ADM and DSS system types.

Procedure

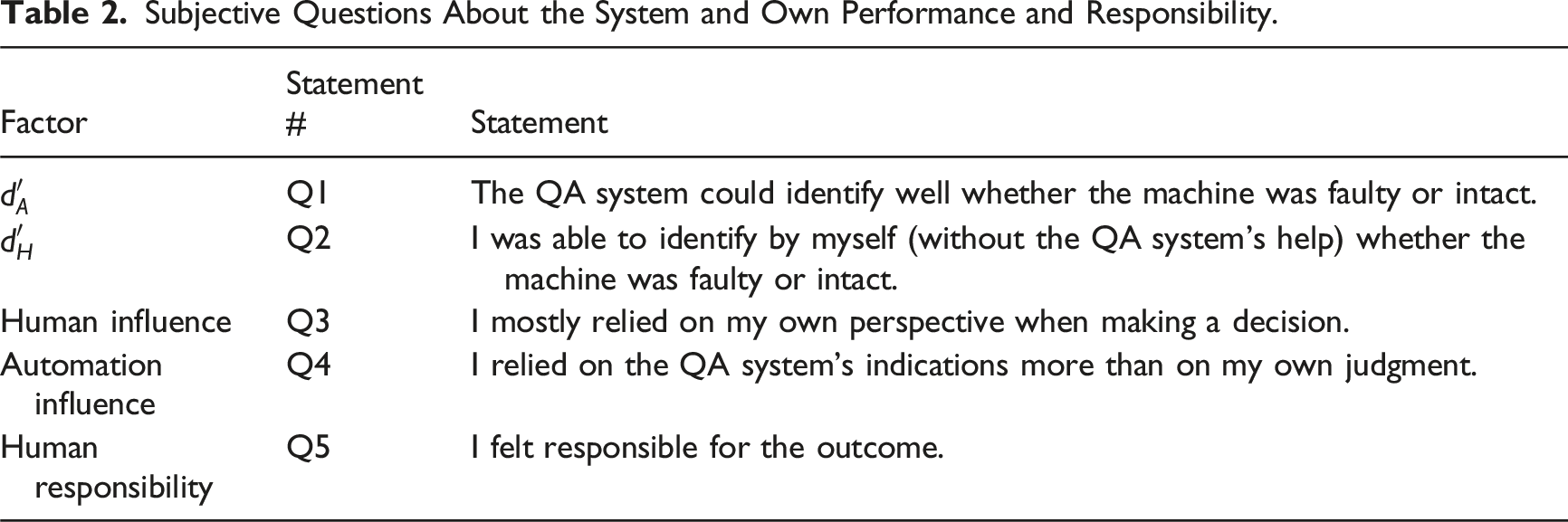

Subjective Questions About the System and Own Performance and Responsibility.

Results

We calculated the probabilities to act and the joint probabilities for human and automation combinations to act. To calculate the entropy values defined above, we also needed the probability for human-only decisions to act (without the automation’s advice) and its joint probabilities with assisted human and automation-only decisions. Since we did not have human-only decisions (all participants were presented with automation advice), we used instead the calculated expected decision of a normative human using optimal decision criteria. The results for the actual task performance are included in the Supplementary Material.
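As an illustration of this step, the sketch below estimates the joint probabilities from recorded trials and turns them into the ratio H(X|Y)/H(X), where Y is the automation's output and X the participant's final decision. It covers only this ADM-form ratio; the human-only term needed for the DSS measure is not included. The record format (pairs of labels) and the function names are assumptions of ours, and the two toy data sets are constructed extremes, not experimental data.

```python
from collections import Counter
from math import log2


def entropy_from_counts(counts) -> float:
    """Shannon entropy (bits) of the empirical distribution given by counts."""
    counts = list(counts)
    total = sum(counts)
    return -sum(c / total * log2(c / total) for c in counts if c > 0)


def measured_resqu(trials) -> float:
    """Estimate H(X|Y) / H(X) from a list of (automation_output, human_decision) pairs.

    Requires that the human decisions are not all identical (H(X) > 0).
    """
    joint = Counter(trials)                 # counts of (y, x) pairs
    auto = Counter(y for y, _ in trials)    # counts of y
    human = Counter(x for _, x in trials)   # counts of x
    h_x = entropy_from_counts(human.values())
    h_x_given_y = (entropy_from_counts(joint.values())
                   - entropy_from_counts(auto.values()))  # H(X,Y) - H(Y)
    return h_x_given_y / h_x


# Toy extremes (constructed, not measured):
always_follows = [("faulty", "faulty"), ("intact", "intact")] * 10
ignores_advice = [(y, x) for y in ("faulty", "intact")
                  for x in ("faulty", "intact")] * 5

print(measured_resqu(always_follows))  # human adds no information beyond Y
print(measured_resqu(ignores_advice))  # human decision is independent of Y
```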

Measured Responsibility

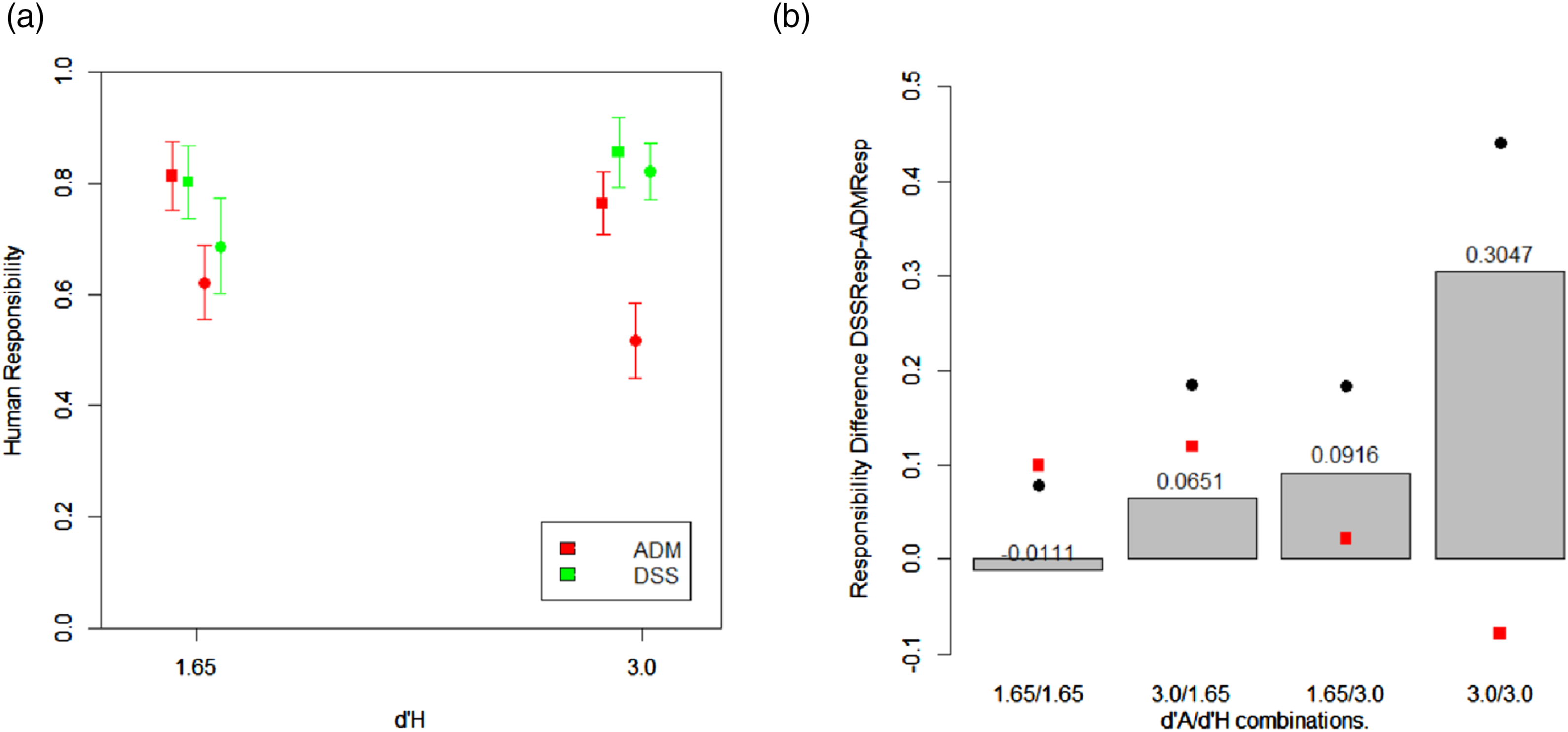

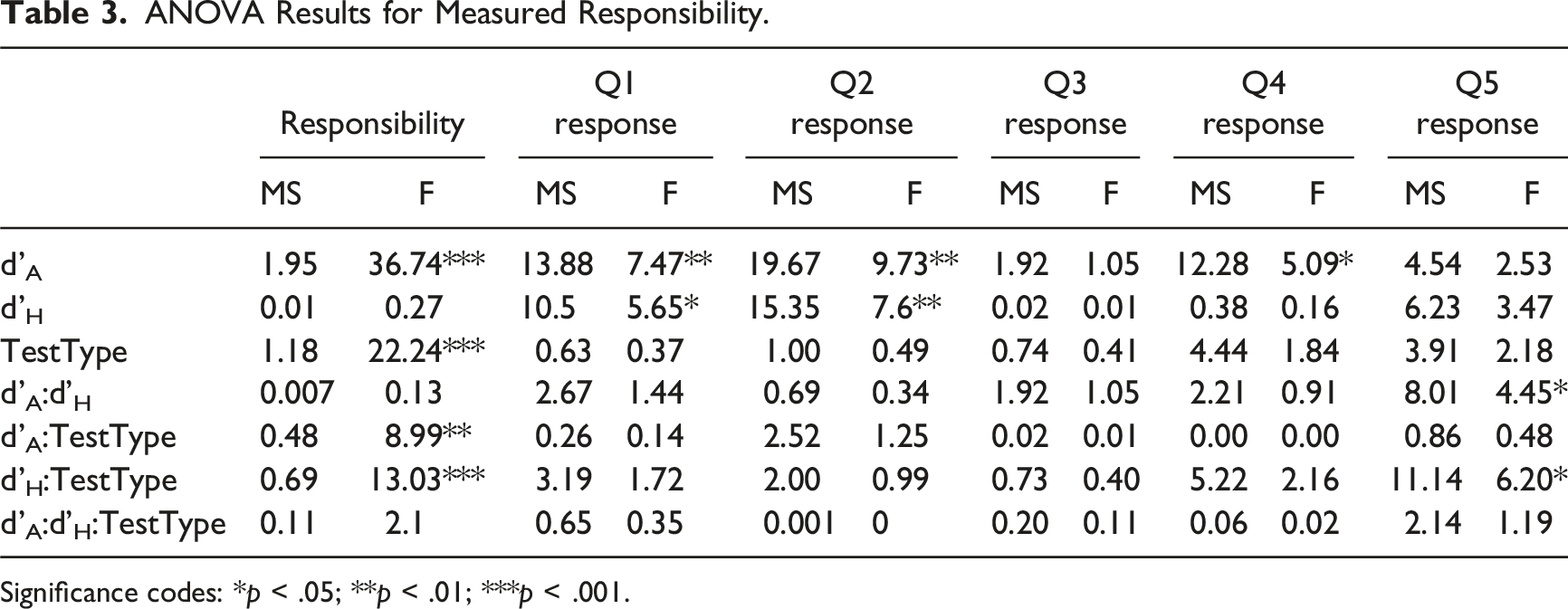

Figure 4(a) presents the measured human responsibility. An Analysis of Variance (ANOVA) of the results (see Table 3) showed that the system type influenced human responsibility, driving higher responsibility in DSS versus ADM systems, F (1,378) = 18.86, p < .001. Additionally, as expected, the human responsibility was lower for more accurate automation, F (1,378) = 33.17, p < .001. However, human accuracy had no significant effect on the responsibility, which could be explained by the significant interaction between human accuracy and the system type, F (1,372) = 13.03, p < .001. For DSS systems, higher human accuracy drove higher mean responsibility, F (1,195) = 7.4, p = .007, while, surprisingly, for ADM systems, the higher human accuracy reduced the mean responsibility, F (1,181) = 4.17, p = .042.

Figure 4. Experiment results. (a) Human responsibility as a function of human accuracy. The automation accuracy is represented by squares

Table 3. ANOVA Results for Measured Responsibility. Significance codes: *p < .05; **p < .01; ***p < .001.

To better observe the differences in responsibility between ADM and DSS systems, the difference between the means of the measured responsibility in both systems ($\mathrm{ResQu}_{\mathrm{DSS}} - \mathrm{ResQu}_{\mathrm{ADM}}$) is presented by the vertical bars in Figure 4(b) for the four tested

The participants’ subjective perceptions of the situation during the experiment were captured by their answers to the survey questions.

Assessing Human and Automation Accuracy

When asked about the accuracy of the automation (Q1), the participants ranked the more accurate automation as higher, F (1,378) = 7.4, p = .007. When they estimated their own accuracy (Q2), the more accurate participants rated themselves higher, F (1,378) = 7.19, p = .0076. Interestingly, we also observed that accurate participants tended to rate the automation higher in Q1, F (1,372) = 5.65, p = .018, and participants rated themselves higher in the presence of accurate automation, F (1,372) = 9.73, p = .002. It appears that the presence of an accurate agent (human or automation) influenced the subjective perception of the accuracy of the other agent.

Estimating Influence

The participants’ responses to Q3, about perceptions of their self-reliance, showed no significant dependency on actual human accuracy. However, their responses increased with their subjective, self-perceived accuracy, F (1,376) = 49.8, p < .001, and decreased with their perception of the automation accuracy, F (1,376) = 8.74, p = .0033. Similarly, while their perceived automation influence (Q4) increased for higher actual automation accuracy, F (1,372) = 5.09, p = .025, we observed a much stronger association with their perception of the automation accuracy as shown in their response to Q1, F (1,376) = 87.1, p < .001.

Determining Responsibility

Finally, the participants were asked about their subjective responsibility for the outcomes (Q5). The mean responses for this question are illustrated as red dots in Figure 4b. Neither the participants’ nor the automation’s actual accuracy had significant effects. However, there was a significant effect of the perceived accuracy of both (responses to Q1 and Q2) on the response to Q5 for both accurate and less accurate participants (for all groups: F (1,182)>14, p < .001). The effect of the system type (ADM or DSS) was only statistically significant for less accurate participants, F (1,182) = 5.85, p = .017. It seems that accurate participants held themselves responsible for the outcome in either system type.

Discussion

Automation is usually added to decision processes to assist humans or even replace them while usually allowing human oversight and the possibility to override the automation’s decisions. In sensitive systems, regulations usually require MHC to prevent possible problems that may arise in fully autonomous decision making. However, having a human operator in the decision-making process will not necessarily be sufficient to address this requirement adequately. Previous studies demonstrated that when the human’s detection sensitivity is inferior to the automation’s, the human’s causal responsibility for outcomes will be low, leading to potentially less-than-meaningful human control (Douer & Meyer, 2020, 2021). We demonstrated here that the human’s causal responsibility for the outcome also depends on the configuration of the context of the human involvement in the decision-making process.

In our model, the context is particularly important when both humans and automation are accurate. When the human is identified as the primary decision-maker and the automation is presented in an advisory capacity (DSS), the human’s causal responsibility is higher than when the automation is presented as the main decision-maker with the human supervising its work and accepting or overriding its decisions (ADM). The experiment showed the predicted results. Even though the participants generally assumed higher responsibility than optimally expected by the theoretical model, the dependency on the context of the automation’s assistance remained, and their actual responsibility was higher in DSS systems than in ADM systems. However, the participants’ average perception of their responsibility in such situations was surprisingly higher in ADM systems than in DSS systems (Fig. 4b), suggesting that humans tend to assume higher responsibility than their actual influence on the outcome.

When either the human or the automation was accurate while the other was not, the theoretical difference between DSS and ADM was much smaller. The actual measured responsibility difference was even smaller. It was almost negligible with more accurate participants who tended to hold themselves responsible regardless of the context. As predicted by the model, no significant differences were observed in the experiment when both human and automation accuracy were low.

The above analyses and findings provide several insights:

Human contribution to the outcome is context-sensitive, especially when both human and automation are accurate. As automation accuracy improves, considering the context in which automation is used in the system is essential to ensure MHC. On the one hand, one should strive to increase the human’s accuracy to maintain more substantial human influence on the outcome, above the minimum needed for MHC; see Figure 2 and Douer and Meyer (2020). On the other hand, as shown here, the system’s context then has a stronger effect. Understanding the context clearly and clarifying it to the human operators becomes imperative to ensure MHC and compliance with regulatory requirements since, as shown in Figure 2, the human responsibility could vary significantly between extreme values (0.32 and 0.76), and could drop below the MHC threshold. This confirms our first hypothesis

Humans overestimate their influence on the outcome, especially when they use accurate automation. This result has been observed in the past (Bartlett & McCarley, 2017; Douer & Meyer, 2021; Maltz & Meyer, 2001; Meyer, 2004), and our experiment helps to identify the behavior that leads to it. In the presence of accurate automation, human operators tend to overestimate their own accuracy and rate themselves higher. Then, since they assume responsibility based on their perceived accuracy (vs. their actual accuracy), they assume higher responsibility and follow the automation’s recommendations less than optimally required. This leads to situations where humans “listen” less to automation, especially when they could most benefit from it. Therefore, systems designers and process managers should clearly communicate to the operators the accuracy of the automation versus their own so that they gain the advantages it can provide. Interestingly though, while less accurate participants estimated their responsibility for the outcomes as higher in DSS than in ADM systems, as anticipated by our hypothesis

Determining human’s causal responsibility should consider the human’s perceptions. The ResQu model computed causal responsibility with a simple analytical model. We have shown here that it is necessary to consider additional factors. The system type determines which information in the outcome was contributed by which agent and, in particular, to whom the joint information that could have been created by each agent independently should be attributed. This is not just a mathematical analysis of the information contributions, but, as was shown empirically, the participants in the experiment changed their behavior based on how they perceived the situation, ADM or DSS. Additionally, their estimate of the automation and their own accuracy were important in their decision to follow the automation. The fact that human operators’ perceptions, determined by how the system is presented to them, influence the level at which they would follow the automation’s advice aligns with findings in a different context, showing that positioning the automation as “AI” or a “Rule-based system” changed how humans reacted to its advice after it was wrong a few times (Candrian & Scherer, 2024).

Limitations and potential further research. Most participants in our study declared that they were older than 65. We do not expect that this impacted the results since the task was very simple, and they were sufficiently tech-savvy to connect and operate the experiment on their device. However, future work can study the potential influence of the participant’s age on their tendency to follow advice from ADM or DSS. The analysis also used the theoretical optimal decision criteria to estimate the human-only (unassisted) decision probabilities. This was done due to practical reasons (it was impossible to have the same person make independent decisions on the same scenario twice, with and then without automation). Still, it may have slightly influenced the results. Additionally, it may be of interest to explore how task complexity and criticality influence users’ following the automation advice by experimenting with more realistic scenarios resembling common high-stakes tasks. In complex decisions with extremely high failure costs, humans may rely more on automation, as it may give them a sense of confidence and transfer of liability.

Conclusions

As automation’s role in decision-making processes grows, the level of human involvement becomes an essential issue when considering where and how to deploy such automation. We demonstrated here that the context in which automation is presented is important to determine humans’ causal responsibility for the outcomes. This context also influences how humans act and make decisions while being advised by automation. Setting the system in the right context is necessary to ensure adequate behavior and proper analyses of human influence, ensuring MHC.

Key Points

• Defining a system as a DSS or ADM affects the human contribution to the process and how humans perceive their influence on the outcome.
• Human contribution is greater in DSS than ADM, especially when human sensitivity is higher.
• Humans overestimate their influence on the outcome, especially when they use accurate automation.

Supplemental Material

Supplemental Material for Context-Based Human Influence and Causal Responsibility for Assisted Decision-Making by Yossef Saad, and Joachim Meyer in Human Factors.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research is part of the first author’s PhD dissertation. This work was partly funded by the Israel Science Foundation Grant 2019/19 to the second author and by a grant from the Tel Aviv University Center for AI and Data Science (TAD).

Supplemental Material

Supplemental material for this article is available online.
