Abstract

Objective

The present scoping review aims to transform the diverse field of research on the effects of mixed reality-based training on performance in manual assembly tasks into comprehensive statements about industrial needs for and effects of mixed reality-based training.

Background

Technologies such as augmented and virtual reality, referred to as mixed reality, are seen as promising media for training manual assembly tasks. Nevertheless, current literature shows partly contradictory results, which is due to the diversity of the hardware used, manual assembly tasks as well as methodological approaches to investigate the effects of mixed reality-based training.

Method

Following the methodological approach of a scoping review, we selected 24 articles according to predefined criteria and analyzed them concerning five key aspects: (1) the needs in the industry for mixed reality-based training, (2) the actual use and classification of mixed reality technologies, (3) defined measures for evaluating the outcomes of mixed reality-based training, (4) findings on objectively measured performance and subjective evaluations, as well as (5) identified research gaps.

Results

Regarding the improvement of performance and effectiveness through mixed reality-based training, promising results were found particularly for augmented reality-based training, while virtual reality-based training is mostly—but not consistently—as good as traditional training.

Application

Mixed reality-based training is still not consistently better, but mostly at least as good as traditional training. However, depending on the use case and technology used, the training outcomes in terms of assembly performance and subjective evaluations show promising results of mixed reality-based training.

Between Potentials and Challenges: Mixed Reality-based Training for Manual Assembly Tasks

Augmented reality (AR)- and virtual reality (VR)-based training is increasingly used in industrial application areas such as product design, production and manufacturing, and health applications (Berg & Vance, 2017; Blattgerste et al., 2017; Horigome et al., 2020). Despite the popularity of AR and VR, also referred to as mixed reality (MR) technologies (Skarbez et al., 2021), the evidence on their effectiveness and efficiency as a training medium is extremely diverse. This holds particularly true for the training of manual assembly tasks in production, although this is considered a highly relevant area for MR-based training (Werrlich, Lorber, et al., 2018). Here, MR-based training is increasingly used to train employees inexperienced in certain assembly tasks, which vary more frequently and more quickly due to the increasing variety and complexity of products compared to the long-prevailing mass or flow production (AlGeddawy & ElMaraghy, 2012). However, the recently published empirical studies on the effects of training quickly make apparent that this is, in many respects, a diverse field of research and that the results on the effects of AR- and VR-based training have been very heterogeneous and partly contradictory. We present a scoping review in order to transform the diverse studies on the effects of MR-based training on performance in manual assembly tasks into comprehensive statements about industrial needs, training outcome measures, effects of training on objectively measured performance, users’ subjective evaluations, and research gaps in the field.

As preparation, we first look at what manual assembly tasks are and what characterizes them. As a next step, the term mixed reality (MR) is presented as an overarching construct to subsume the different instances of AR and VR technologies. Then, an introduction to the research field of training for manual assembly tasks provides insight into the need for and the goal of the current scoping review.

Manual Assembly Tasks

Manual assembly tasks represent a significant part of activities in the production environment, despite the trend toward automation of manual tasks (Kothiyal & Kayis, 1995). Manual assembly activities can be described as the entirety of all operations for the assembly of objects with geometrically determined shape, such as joining (e.g., screwing, nailing, welding, gluing, soldering, clamping), handling (e.g., grab, place, turn over, move, secure), inspecting (e.g., check, control, measure), and adjusting (e.g., setting or auxiliary operations such as caulking, deburring, and (un)packing) (Lotter, 2006). Thus, an assembly process is characterized by activities ranging from providing the material to be assembled to inspection and packaging, whereby the complexity (e.g., number of steps or assembly parts and the difficulty of assembly) of the assembly process of part or whole products can vary greatly (Lotter, 2006).

According to an analysis by Yuviler-Gavish, Krupenia, and Gopher (2013) of what skills underlie assembly tasks, it appeared that while both sensorimotor and cognitive skills are involved, the most important skill required for manual assembly tasks involves procedural skills. According to Koziol and Budding (2012), procedural learning does not only refer to the acquisition of cognitive skills but primarily to motor skills and habits. Specifically, in manual assembly tasks, workers have to be taught on the one hand what actions to perform, in what order, and with which method (cognitive skills). On the other hand, assembly tasks usually consist of a complex sequence of steps and require knowledge of specific procedures and techniques (procedural skills) (Gavish et al., 2015). In contrast to solely cognitive tasks and factual information (e.g., to select, sequence, and use the correct assembly parts), which can be explicitly retrieved, procedural tasks (e.g., routine sequences of actions such as grasping, turning, or pressing) usually need to be repeated and trained several times before the learning outcome is demonstrated through improved task performance.

One potential goal of industry and production is to keep the work cycle time of assembly tasks as short as possible while at the same time ensuring the quality of products assembled (Wang et al., 2009). Nevertheless, important factors have to be considered in the evaluation of this goal, such as the weight of the parts and complexity of the product, the individual work capacity, health and safety of the workers, and appropriate training and qualification (Kothiyal & Kayis, 1995).

Until recently, training for manual assembly tasks has been primarily conveyed by means of paper-, video-, or trainer-based formats. These methods have been established over years and are still widely used today. However, they show significant shortcomings concerning time-efficiency (e.g., plant managers or learning mentors need to take time to train novices, which results in a high expenditure of personnel resources), location independence (e.g., when training is carried out on-site on running machines in the production line), or individual learning requirements (e.g., paper manuals follow the one-size-fits-all approach and have not been adapted to possible prior knowledge) (Hou et al., 2013).

Due to the increasing variety and complexity of products, there has been growing interest in the industry for flexible, effective (i.e., selecting a suitable and successful training method to enhance training outcomes) and efficient (i.e., saving time and financial resources) training methods to provide workers quickly, safely, and reliably with the necessary cognitive and procedural skills (Doolani, Wessels, et al., 2020; Gavish et al., 2011). For this reason, digital training methods such as AR- or VR-based training are increasingly used to train employees inexperienced in a certain assembly task (Guo, 2015; Wang et al., 2016). However, a look into this highly interdisciplinary field of research quickly reveals that there are very heterogeneous and partly inconsistent results on the impact of AR- and VR-based training on training outcomes (Gavish et al., 2015; Loch et al., 2019; Werrlich, Nguyen et al., 2018). In the following, a characterization of AR and VR technologies as instances of the broader term MR is provided in order to classify and understand different findings on the effectiveness of these training means.

MR-Based Training for Manual Assembly Tasks

AR and VR training systems are designed to prepare workers safely and efficiently for a task without, for example, downgrading the cycle times of machines or causing occupational safety risks through mistakes (Sautter & Daling, 2021). While AR-based training is mostly used to display virtual objects in the real world, for example, by overlaying virtual objects or instructions onto the workspace, training in VR environments enables the user to interact in a computer-generated 3D environment (e.g., using a virtual tool to assemble components), while the real world is (partially) hidden (Milgram & Kishino, 1994). In order to research the effectiveness of these training methods, it is essential to understand to what extent AR- and VR-based training are comparable at all.

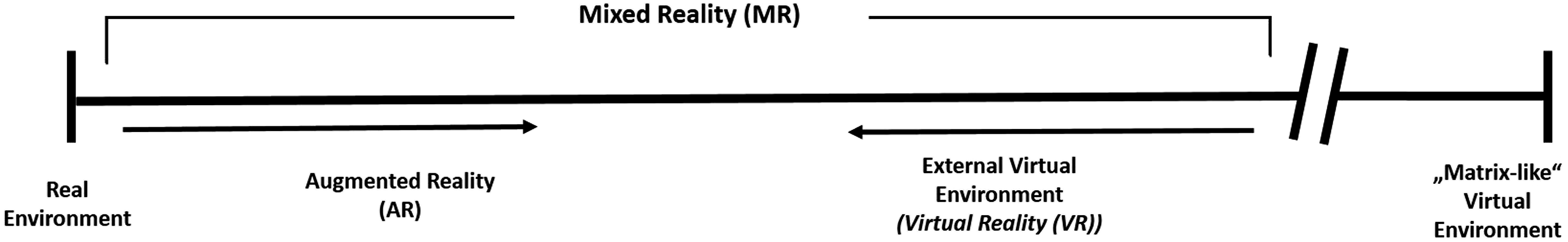

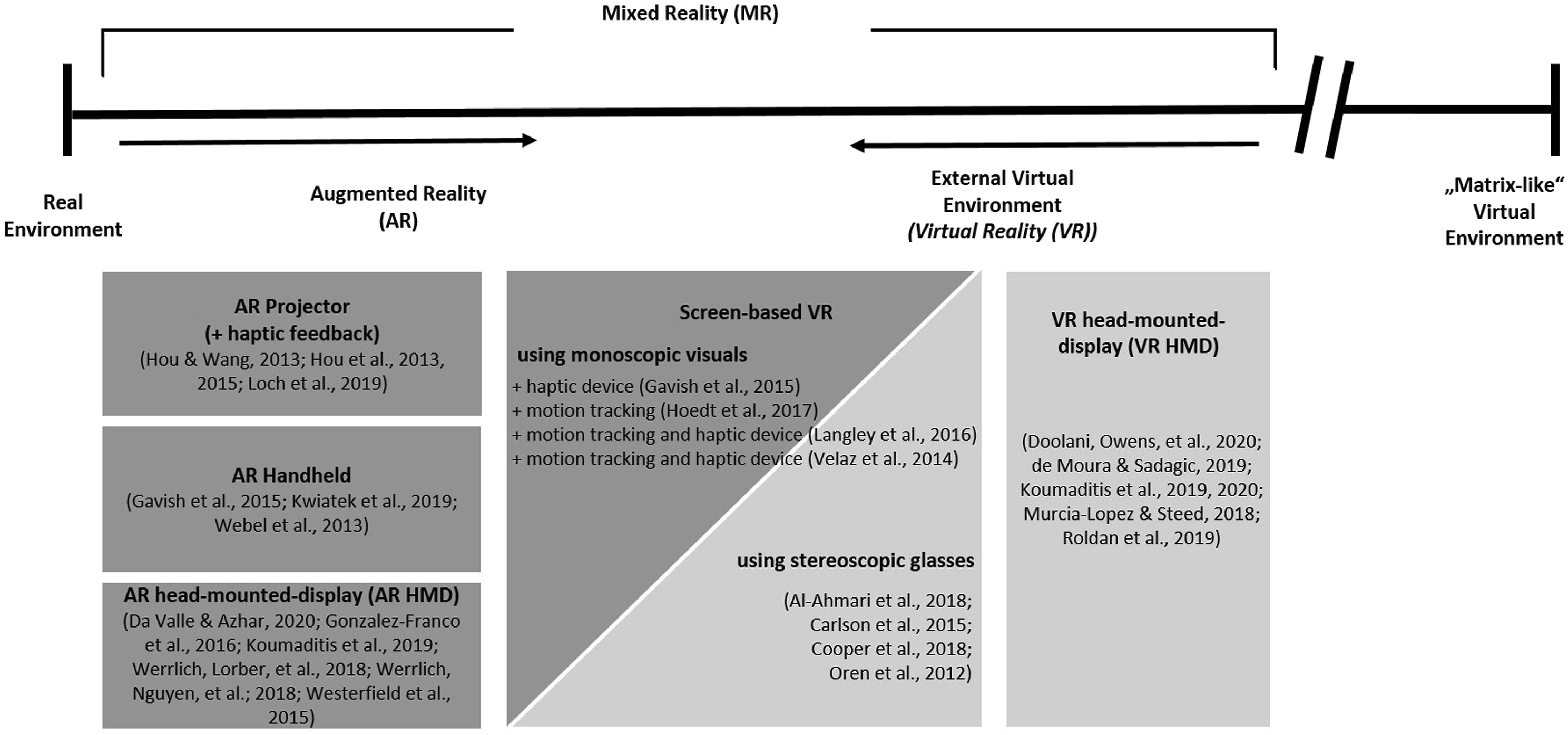

In an early definition, Milgram and Kishino (1994) grouped AR and less-commonly used augmented virtuality (AV) technologies under the term MR. VR, on the other hand, was excluded from this term as a component of virtual environments (Milgram & Kishino, 1994). Since then, there has been much discussion around the development of taxonomies of AR and VR technologies (Lindeman & Noma, 2007; Mackay, 2000; Normand et al., 2012). In this review, we follow the most recent consideration by Skarbez et al. (2021), who proposed MR as a broad umbrella term for both AR and VR and stated that “mixed reality is broader than previously believed, and, in fact, encompasses conventional virtual reality experiences” (p.1). In their definition, VR experiences are classified as external virtual environments, indicating that only users’ exteroceptive senses, that is, sight, hearing, touch, smell, and taste, are controlled by the technology. A state in which both exteroceptive and interoceptive senses are stimulated by technology was excluded from the MR-term and has been described as so-called “Matrix-Like” Virtual Environments (Skarbez et al., 2021).

Within this framework, AR and VR are mainly differentiated according to their degree of immersion and the extent to which the system is, so to speak, aware of its real environment and can respond to changes in that environment (introduced as extent of world knowledge by Milgram & Kishino, 1994). Immersion can be defined as objective parameters of a system that are, on the one hand, displays (in all sensory modalities) and, on the other hand, tracking capabilities that ensure high fidelity and lead to changes in users’ perception of the environment (Slater, 2004). Skarbez et al. (2021) stated that AR systems generally have low or medium immersion, but a higher level of world knowledge, while external virtual reality systems (i.e., VR) generally have high immersion, with little or no world knowledge. Together, they are classified as instances of MR, which is defined as an environment in which the physical (i.e., real) world and virtual objects and stimuli are presented together within a single percept (Skarbez et al., 2021). The joint consideration of AR and VR as part of MR enables the analysis of different training formats with special consideration of additional features, which are used, for example, for the interaction with tools and assembly parts through controllers or haptic devices. Consequently, in this scoping review, we use the term MR for various technologies and hardware from AR to VR, as shown in Figure 1.

Revision of the Reality-Virtuality Continuum (based on Milgram & Kishino, 1994), where Mixed Reality Was Used as Umbrella Term for AR and VR Technologies (based on Skarbez et al., 2021). Note that VR Was Considered as Part of External Virtual Environments in Skarbez et al., 2021.

Although MR as a training medium offers new visualization and learning possibilities, great skepticism has prevailed in the industry for several years, primarily related to the return on the initial investment in terms of the cost of installing the system hardware and software (Gallagher et al., 2005). However, the costs of technologies are subject to constant change, which is why the benefit of using MR must be made clear independently of cost. Despite the identified shortcomings of traditional training methods, it remains unclear to the industry why it should use MR training and what potential outcomes to expect. To increase industry confidence in MR-based training, ongoing research aims to demonstrate the benefits of MR-based training through various performance and accuracy measures.

Two recent reviews have revealed that the success of MR-based training in improving users’ performance varied with several factors, such as task type and the population being trained (Doolani, Wessels, et al., 2020; Kaplan et al., 2021). In their meta-analysis, Kaplan and co-authors (2021) differentiated between cognitive, physical, and mixed tasks and found that MR (described here as XR) is a powerful training medium especially for physical tasks that involved some sort of bodily training, such as aerobic, dancing, or balance activities (Kaplan et al., 2021; Prasertsakul et al., 2018; Rose et al., 2000). Overall, no significant effects on performance measures have been found for cognitive tasks, such as remembering facts or information. In accordance with our previous definition, maintenance or manual assembly tasks have mainly been classified as mixed tasks, meaning that a combination of cognitive and physical tasks was required in training. For those tasks, the picture has been very ambiguous regarding the potential benefits of MR-based training on performance, with d = −.07. While one study found that, compared to no training, training in VR improved the speed of a maintenance task (Ganier et al., 2014), other studies that compared MR-based training with conventional training have not been able to support these superior effects of MR (González-Franco et al., 2016; Webel et al., 2013). However, Kaplan et al. (2021) reported that these findings were not consistent due to different MR technologies used, varying tasks and training methods, as well as different performance measures. Furthermore, they claimed that the sparsity of data made it extremely difficult to perform a meta-analysis. In their review, Kaplan et al. (2021) concluded that further research would need to consider a broader scope of outcome variables to research the benefit of training. Moreover, they considered a wide range of training tasks and populations (e.g., stroke patients, technicians, and students). Thus, an isolated consideration of manual assembly training is indicated to further examine the validity of these results.

The second recent review, by Doolani, Wessels, and co-authors (2020), on the use of various MR technologies in manufacturing, safety, education, military, rehabilitation, and medical training provided insights into the tasks for which MR technologies have been used within various phases of the work and manufacturing process. Here, the overall suitability of MR in all phases of manufacturing processes and first tendencies for the particular suitability of VR in introductory or orientation phases have already become apparent. Although AR has been mentioned to be useful in later phases of inspection or for the use of hand tools or rare machinery, the authors have summarized VR to be superior to AR tools. Overall, the technically oriented review by Doolani, Wessels, et al. (2020) described the shortcomings that still need to be overcome concerning the standardization of hardware and software to investigate MR across different applications. In this context, the authors emphasized the importance of including further interaction modalities in the consideration of MR technologies to gain deeper insights into their effectiveness.

The two reviews have clearly shown that MR-based training has not yet demonstrated a clear advantage over traditional training. Nevertheless, Kaplan et al. (2021) and Doolani, Wessels, et al. (2020) concluded that across all tasks and fields of application, MR-based training did not show any disadvantage either. At this point, it remains to be stated that MR-based training is at least as good as traditional training with regard to performance measures.

For industry practitioners to decide on whether MR-based training might be more suitable than traditional training for the use case of manual assembly tasks, further aspects need to be taken into account beyond the factors considered in the previous reviews. First of all, higher applicability of results needs to be ensured by exclusively evaluating studies that examine MR-based training in the context of manual assembly tasks and compare it to other formats of traditional training. Furthermore, the consideration of the outcomes of MR-based training should go beyond the purely quantitative consideration of performance to other relevant qualitative aspects that might play a role in the decision for or against the suitability of a training medium (e.g., other psychological aspects such as immersion, task load, or user experience) (Kaplan et al., 2021). Finally, it should be taken into account that a broad range of MR technologies (hardware and software) has been used in prevalent studies, which impairs the comparability of their impact on users’ performance and subjective evaluations. Therefore, an in-depth consideration of the respective MR technology and interaction features is needed to make overarching statements about the effects of different MR-based training formats on training outcomes such as performance.

The aspects mentioned are essential extensions of previous reviews to improve the understanding of the impact of MR-based training compared to traditional training in the use case of manual assembly tasks. The present review addresses these aspects. The objectives and guiding questions of the review resulting from the current state of the literature are presented in the following section.

Purpose of the Scoping Review and Review Questions

The present scoping review aimed to provide a comprehensive understanding for researchers and practitioners in the industry on the impact of MR-based training versus traditional training on user performance in manual assembly tasks. Thus, we transformed different research findings on the impact of MR-based training for manual assembly tasks on performance and subjective evaluations into concise and meaningful conclusions and provided a close link between research outcomes and industry needs.

In order to synthesize the wide range of literature in the field, this review followed the methodology of a scoping review (Arksey & O’Malley, 2005). In the case of particularly heterogeneous research evidence on MR-based training for manual assembly tasks, this type of review is suitable for summarizing and disseminating research findings and for describing in more detail the findings and range of research. Thus, it serves as a precursor to a systematic review, aiming at identifying key characteristics or factors related to the concept of MR-based training for manual assembly tasks (Munn et al., 2018).

To ensure the applicability of the research results for the use cases of industry, the first step of the review was to elicit the needs and requirements on the part of the industry and the benefits expected from the use of MR in the context of manual assembly. This topic was examined in review question (RQ) 1. Furthermore, the present review extended existing findings from previous reviews by adding the previously missing in-depth consideration of different MR technologies and their interaction features used in manual assembly training. Here, the focus was on whether training outcomes differ systematically depending on the technology and features used (RQ 2). Moreover, the evaluation of training outcomes went beyond the purely quantitative consideration of performance and thus included relevant qualitative aspects and subjective evaluations of the users. To achieve this, we categorized and compared how different objective measures and subjective evaluations as training outcomes of MR-based training were defined and measured (RQ 3). This in turn will help to make future research in the field comparable with regard to dependent variables.

The central question of the scoping review related to the effects of MR-based training concerning the outcome measures identified in RQ 3. To this end, we analyzed in RQ 4 how different MR-based training formats impact user performance and subjective evaluations compared to traditional training. Finally, current research gaps in the field of MR-based training were analyzed and discussed, and practical implications were derived (RQ 5). Within the scope of this review, all findings from the five review questions listed below were translated into understandable and applicable statements.

RQ 1: What are the industrial needs and expected benefits of using MR-based training in assembly tasks?

RQ 2: What kind of MR technologies are currently used for training procedural assembly tasks in the industrial context?

RQ 3: What measures to capture objective performance effects and subjective evaluations are used to assess the outcomes of MR-based training?

RQ 4: What are the effects of using MR-based training compared to traditional training regarding the different outcome measures?

RQ 5: What research gaps are reported by the authors?

METHOD

The method of this scoping review referred to the iterative approach for scoping reviews proposed by Peters and co-authors (2020), which was based on the five stages framework proposed by Arksey and O’Malley (2005) as well as Levac et al. (2010). In the following, inclusion criteria are described and summarized according to the population, concept, and context (PCC) scheme. Then, based on defined inclusion and exclusion criteria, the search strategy and selected studies with post hoc adjustment of the criteria based on terminologies and content used are presented. The extraction of results was subsequently described using the PRISMA Flow diagram adapted for scoping reviews (Moher et al., 2009). The objectives, inclusion criteria, and methods for this scoping review were specified in advance and documented in a protocol (Daling, 2021).

Inclusion Criteria

The research questions derived through the current state of research provided a clear framework for inclusion criteria related to types of participants, concept, context, and types of sources, which are described in the following.

Types of Participants

MR-based learning systems are particularly used when employees who are inexperienced in a new task or temporary workers need to be trained at short notice. However, even experienced employees can be novices when it comes to a task they have not had to complete before. The prerequisite for the inclusion of participants was therefore that they had no previous experience with the task under investigation. Accordingly, the review included articles examining healthy adult participants who were currently working or being trained for a job, including students or university samples or employees from the industry.

Concept

The scoping review investigated current research on MR-based training for manual assembly tasks, aiming at (1) exploring the needs and expected benefits mentioned in the articles, (2) mapping the technologies and features being used, (3) categorizing relevant outcome measures, (4) analyzing the effects and outcomes of MR-based training in comparison with traditional training as well as (5) identifying relevant research gaps. Thus, the review focused on learning or training systems, which is why on-the-job assistance systems were not included. As a result, only studies were considered in which training of a certain assembly task ranging from simple, more abstract tasks to highly complex assembly tasks was conducted and then actual task performance in performing the respective task was measured. The investigated training had to include one of the core terms of augmented (reality), virtual (reality), or mixed reality or, if these terms were not specifically mentioned in the description of the technology, meet the presented definition of MR (based on Skarbez et al., 2021). Thus, we included trainings in which physical world and virtual objects or stimuli were presented together within a single percept. Moreover, only articles with a comparison or control group that included another form of training (e.g., paper-based, video-based, trainer-based, or other technologies) were included.

Context

The research object of this review referred exclusively to procedural and cognitive training for industrial manual assembly. More abstract tasks (such as assembling Lego parts or 3D puzzles) were considered as long as the task included at least one typical assembly activity (joining, handling, inspecting, or adjusting). Medical training or other procedural tasks were not considered. Furthermore, the tasks to be trained should not include any further cooperation or collaboration with other humans, machines, or robots.

Types of Sources

From the existing literature, only primary studies were included in the scoping review. Meta-analyses or systematic reviews were excluded. To ensure the quality of the empirical results, no gray literature, blogs, or similar were considered. Since technologies are constantly evolving and some are not comparable with very early versions, only studies published between 2010 and 2020 were included. English- and German-language articles were considered, regardless of the authors’ origin or location.

Search Strategy

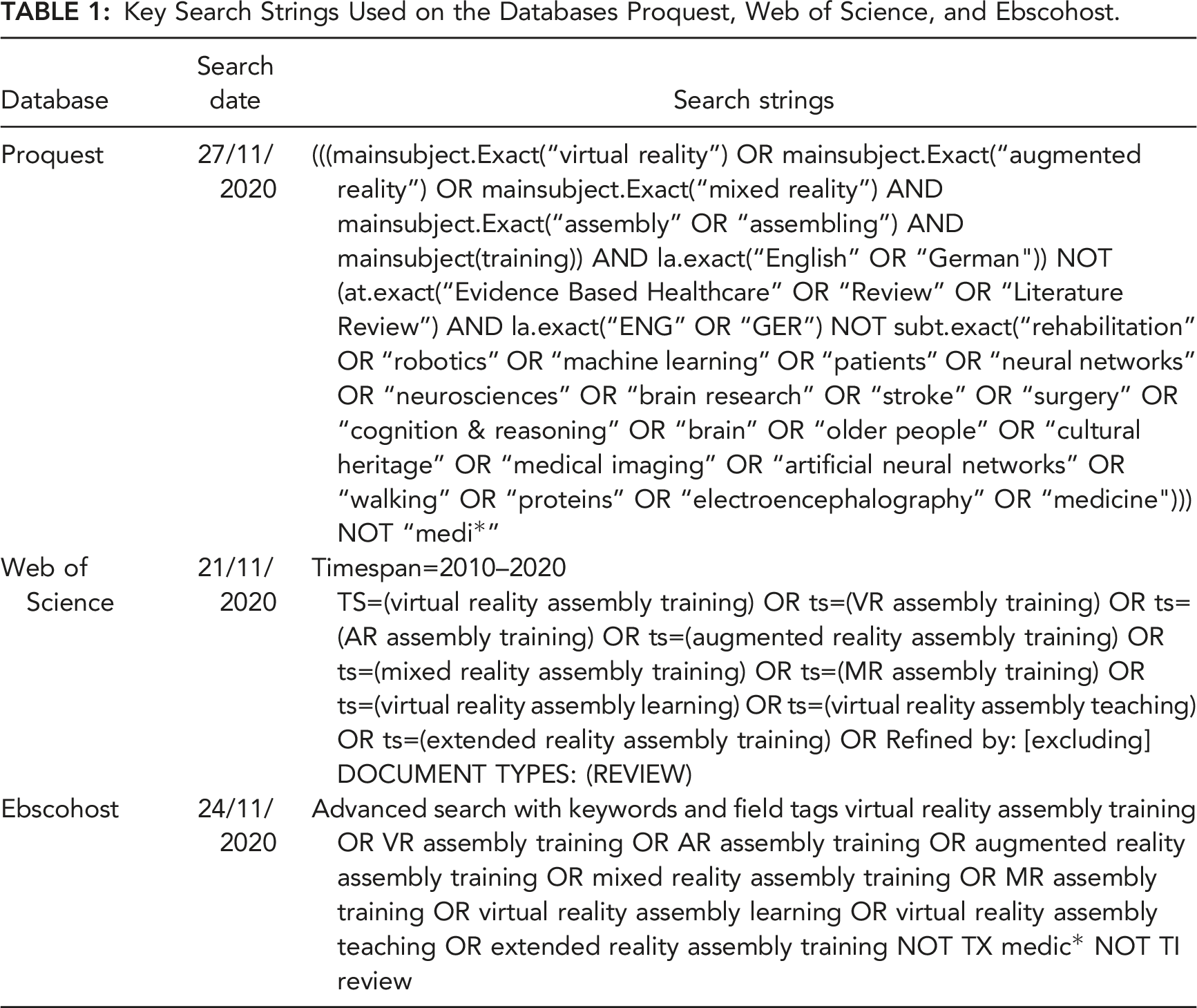

Key Search Strings Used on the Databases Proquest, Web of Science, and Ebscohost.

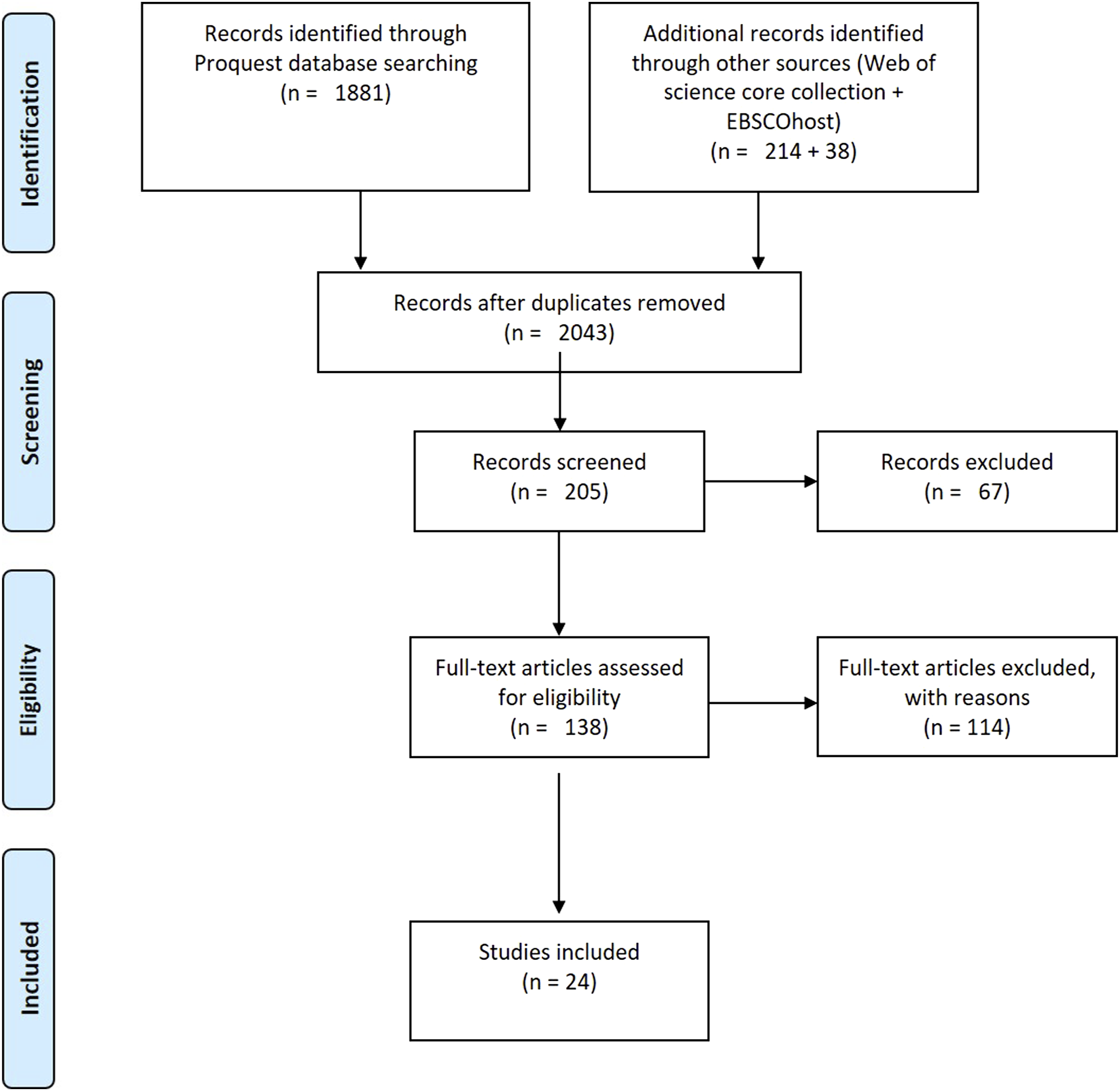

All identified papers were imported to the reference management tool EndNote and the duplicates were deleted, resulting in a total of 2043 articles (see Figure 2). After filtering through the titles, the identified papers were reduced to 205 and yet again narrowed down to 138 after a practical screening of the abstracts.

PRISMA flow diagram for scoping reviews.

Extraction of Results

An overview of the data extraction process is specified and summarized in Figure 2. A random sample of 25 articles was selected out of the 138 articles and full texts were screened by two reviewers using defined inclusion and eligibility criteria. Within this pilot test, the reviewers agreed that usability studies without additional objective performance indicators, concept papers, or articles with a purely technical focus would have to be excluded to filter findings on the effects and outcomes of MR-based training. In order to include studies with reliable and precise estimates, it was also determined that only studies with sample sizes of n ≥ 10 should be considered in the final review, ensuring the statistical foundation of the conclusions (Hackshaw, 2008; Aguinis & Harden, 2008).

After assessing the modified eligibility criteria, 24 studies were included in the review. The most frequent reasons for excluding articles were a lack of content fit to the topic (e.g., remote assistance for maintenance in Wang et al., 2019; or developing models for VR teleoperation in Lipton et al., 2018) (49 articles) and an exclusive technical focus, where the analysis presented was not based on human data (e.g., Leu et al., 2013; Xia et al., 2012) (30 articles). Thirteen articles were excluded because important statistical values (e.g., M, SD/SE, p) were not reported, there was a lack of examination of the requirements for statistical testing of small samples, sample sizes were not reported, or because the chosen level of alpha was set to >.05. Furthermore, 14 concept papers were excluded, as well as three articles with a purely descriptive statistical analysis, three articles with a sample of fewer than 10 participants, and two articles whose dependent variable did not involve any performance measures as outcome.
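The reported screening counts can be cross-checked with simple arithmetic. The following minimal sketch uses only the numbers stated in the text; the stage labels, category labels, and function names are illustrative assumptions and not part of the review protocol. It confirms that the exclusion reasons add up to the difference between the 138 full texts assessed and the 24 studies included.

```python
# Counts as reported in the text; labels are illustrative, not from the protocol.
screening = [
    ("after duplicate removal", 2043),
    ("after title screening", 205),
    ("after abstract screening", 138),
    ("included in review", 24),
]

# Full-text exclusion reasons with the article counts given in the text.
exclusions = {
    "lack of content fit": 49,
    "exclusively technical focus": 30,
    "insufficient statistical reporting": 13,
    "concept paper": 14,
    "purely descriptive analysis": 3,
    "fewer than 10 participants": 3,
    "no performance outcome": 2,
}


def excluded_at_full_text(stages):
    """Number of articles dropped between the last two screening stages."""
    return stages[-2][1] - stages[-1][1]


def retention_rates(stages):
    """Share of articles kept at each step, relative to the previous stage."""
    return [
        (later[0], later[1] / earlier[1])
        for earlier, later in zip(stages, stages[1:])
    ]


total_excluded = sum(exclusions.values())
# The itemized reasons should account for every full text that was dropped.
assert total_excluded == excluded_at_full_text(screening)

for label, rate in retention_rates(screening):
    print(f"{label}: {rate:.1%} retained")
print(f"excluded at full text: {total_excluded}")
```

The check passes for the figures reported above: the seven exclusion categories sum to 114, which equals 138 minus 24.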

A draft charting table was developed in Excel and tested by two reviewers independently screening ten randomly selected papers. The data chart was finally narrowed down to five main categories: overview, background and need for MR, method and measures, results, and identified research gaps, which are described in the following.

Overview

The overview category included the following information: publication year, country of origin, type of material, research design of the study, and whether the paper was published in a peer-reviewed journal. All information was summarized and charted.

Background and Need for MR

In the background and need for MR category, the information regarding what was defined as the need for MR training was collected, and what kind of training task was given in the study. Qualitative content analysis (Mayring, 2014) was conducted to analyze the described needs, requirements, and expected benefits of MR regarding RQ1. The aim of the content analysis was the definition of precise categories that capture the substance of the investigated content. In the first step, approximately half of the selected papers were reread to get an overview of the relevant sections of the papers in preparation for the development of the category system. The focus was on those sections containing descriptions of the general context, specific problems, and the relevance of the topic selected in the article. The central statements from these text sections were paraphrased and tagged with keywords, which were used to classify similar groups and larger thematic areas. After the various content-related aspects of the data were identified in this way, a coding guideline was developed and used to code and subsequently analyze all material.

Method and Measures

In the method and measures category, information about the study design and the MR technologies used in the study was collected. Regarding RQ2, information on MR technologies used was collected, categorized, and sorted along the revised reality-virtuality continuum (based on Skarbez et al., 2021). Furthermore, the experimental data such as the hypothesis, experimental, and control group specifications as well as objective and subjective measurements were collected. Within the scope of RQ3, all measures used in the reviewed articles regarding objective performance and subjective evaluations were classified and frequencies of the identified categories were calculated.

Results of MR-Based Training

The reported results of MR-based training on objective and subjective measures in comparison to respective control groups were summarized and broken down into short and clear statements. Statistically significant results were marked accordingly. Effects were clustered by technology and counted by frequency. Subsequently, a more in-depth analysis of the effects was performed, taking into account the task, type of population, and other study characteristics.

Identified Research Gaps

In the identified research gap category (RQ5), important discussion points were summarized and implications for possible future work were derived, following the same approach of the above-mentioned qualitative content analysis (Mayring, 2014).

RESULTS

All results are presented following the above-mentioned categories and review questions. First, an overview of all reviewed articles is given. Subsequently, the identified industrial needs and expected benefits of using MR-based training in assembly tasks are reported according to RQ1. Then, the results of RQ2 on what kind of MR technologies were currently used for training procedural assembly tasks in the industrial context are presented. Afterward, we present the results of RQ3, revealing which measures were used to capture objective performance and subjective evaluations as outcome measures of MR-based training. In the section of RQ4, the results concerning the effects of using MR-based training compared to traditional training regarding the different outcome measures are presented. Finally, the identified research gaps of the reviewed articles are reported in RQ5.

Overview

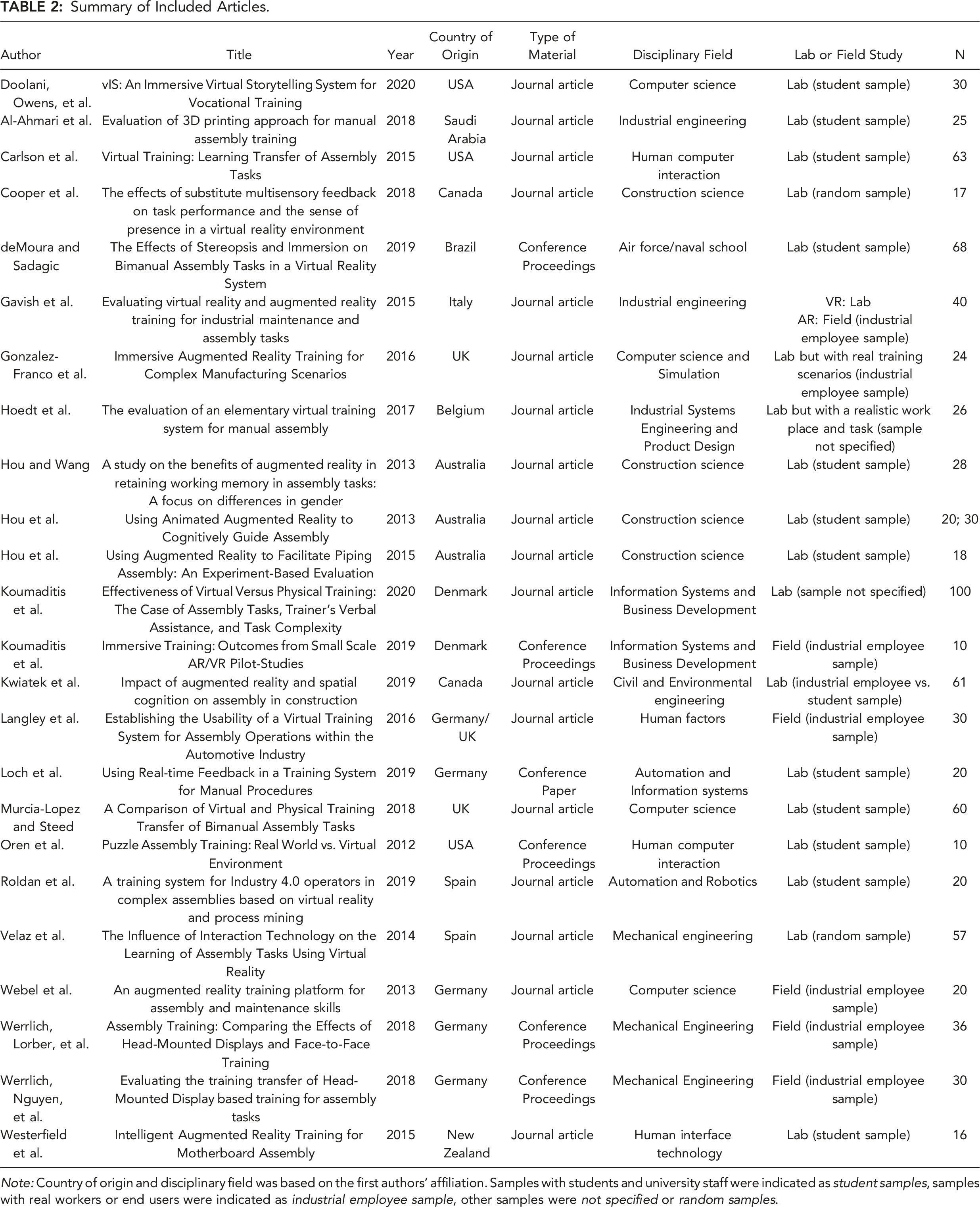

Summary of Included Articles.

Note: Country of origin and disciplinary field were based on the first author’s affiliation. Samples of students and university staff were indicated as student samples, samples of real workers or end users were indicated as industrial employee samples, and all other samples were not specified or random samples.

The included material comprised journal articles (72%) and conference proceedings (28%). Seventeen studies were conducted in a lab environment with student or university samples, three articles used non-specified or random samples in their lab-based studies, and five were conducted as field studies with employees from the industry. At this point, it is important to mention that the article of Gavish et al. (2015) contained two independent experiments, which were analyzed separately and thus counted twice. Different types of conventional training were used as control groups in the articles, as specified in Table 2, and some articles used several comparison groups. Of the articles included, seven used paper-based training as control group (Hou & Wang, 2013; Hou et al., 2013, 2015; Kwiatek et al., 2019; Murcia-Lopez & Steed, 2018; Roldan et al., 2019). Video-based training was used eight times as control group (Doolani, Owens, et al., 2020; Gavish et al., 2015 (2x); Koumaditis et al., 2020; Loch et al., 2019; Murcia-Lopez & Steed, 2018; Webel et al., 2013; Velaz et al., 2014). Trainer-based training, that is, training with real human instructors, was used in six articles (González-Franco et al., 2016; Hoedt et al., 2017; Koumaditis et al., 2019, 2020; Langley et al., 2016; Werrlich, Lorber, et al., 2018). Physical objects (such as 3D prints) were used as control group in four experiments (Al-Ahmari et al., 2018; Carlson et al., 2015; Oren et al., 2012; Murcia-Lopez & Steed, 2018). In five studies, other technologies and interaction features were used as control group (Cooper et al., 2018; deMoura & Sadagic, 2019; Werrlich, Nguyen, et al., 2018; Westerfield et al., 2015; Velaz et al., 2014).

In the following, the results are clustered and analyzed according to the five review questions of the present scoping review, starting with the identified needs of the industry of using MR-based training.

RQ1: What are the Industrial Needs and Expected Benefits of Using MR-Based Trainings in Assembly Tasks?

We conducted a qualitative content analysis based on Mayring’s approach (2014) using the software MAXQDA (Version 2020) on the 24 selected articles to gain a differentiated perspective on the existing needs in the industry context, the main potentials as well as the expectations of using MR in manual assembly training.

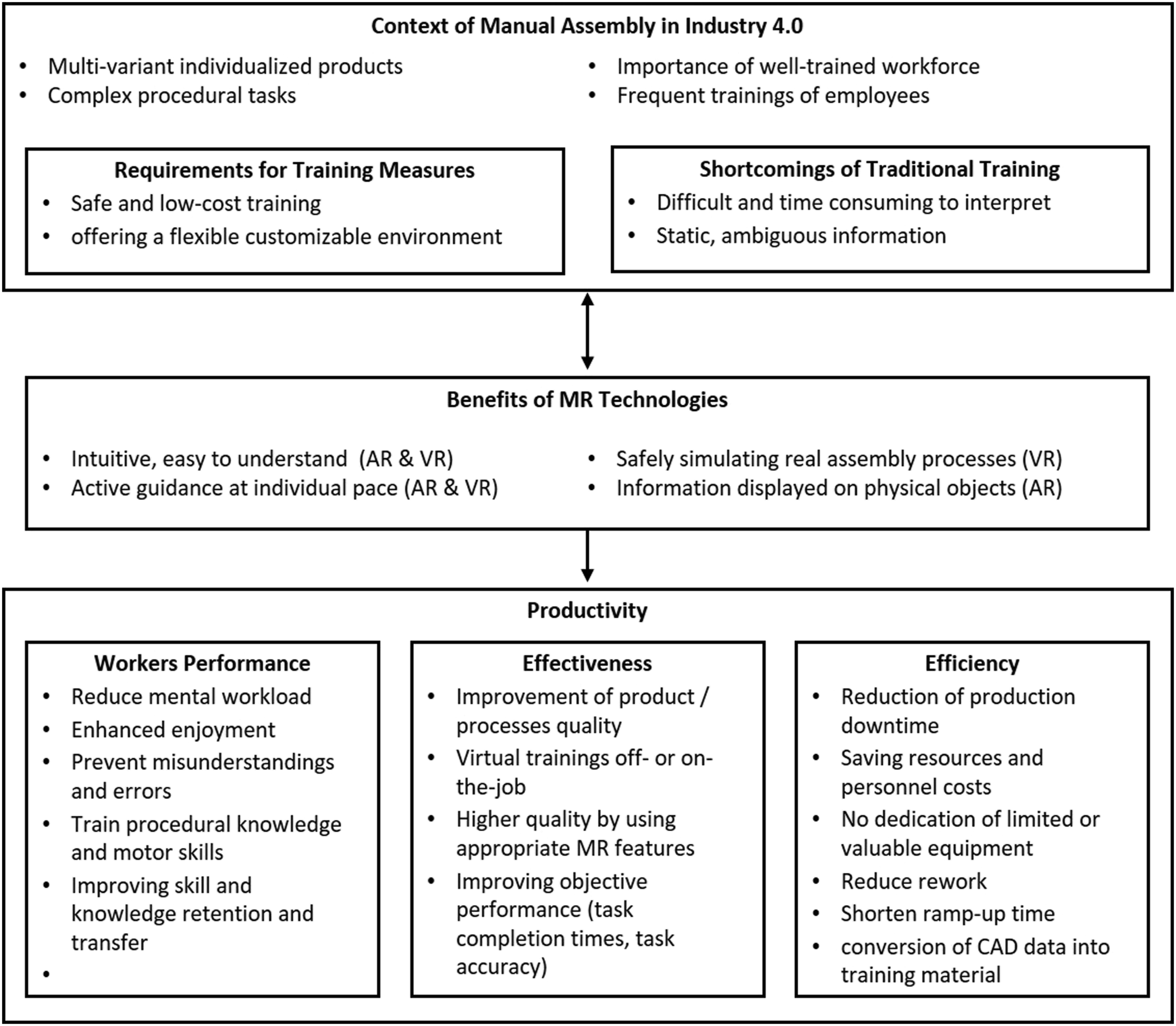

The category system that resulted from the qualitative content analysis comprised various main categories and subcategories as shown in Figure 3. The first block contains different statements on the general context of manual assembly in Industry 4.0. Almost all authors emphasized that the trend toward a high degree of product diversity and customizability increased the complexity of assembly tasks, making employee training a key factor in this context. As a central requirement for the training processes, several authors stated that the training should be as (cost-)efficient as possible (e.g., low costs for hardware and developing or adjusting the software) and should take place in a safe environment that can be easily adapted to the frequently changing products in the assembly line (Doolani, Owens, et al., 2020; deMoura & Sadagic, 2019; González-Franco et al., 2016; Koumaditis et al., 2019; Loch et al., 2019; Murcia-Lopez & Steed, 2018; Oren et al., 2012). Currently, training in the industry was often carried out using paper manuals, which were described as time-consuming to interpret and as a source of misunderstandings and errors due to ambiguous information (Gavish et al., 2015; Hou et al., 2013; Kwiatek et al., 2019; Westerfield et al., 2015). Taking into account the status quo in manual assembly, it became apparent that the benefit of using MR as a training medium was influenced by a reciprocal process of considering the industry needs and unforeseen possibilities offered by technology. The benefits of MR mentioned in the articles were summarized in the second block.
Overall, it was emphasized that through the use of MR, information could be presented in a way that was easier to understand (González-Franco et al., 2016; Hou & Wang, 2013; Kwiatek et al., 2019), for example, because it was displayed as a virtual replica directly on the relevant objects, such as in AR (Hou & Wang, 2013; Webel et al., 2013; Werrlich, Lorber, et al., 2018; Werrlich, Nguyen, et al., 2018; Westerfield et al., 2015). In VR, training of assembly processes could be simulated in a safe and engaging way (Doolani, Owens, et al., 2020; deMoura & Sadagic, 2019; González-Franco et al., 2016; Velaz et al., 2014). Overall, MR systems actively guided the workers at individual pace through the assembly process (Hou & Wang, 2013; Webel et al., 2013; Werrlich, Lorber, et al., 2018).

Identified needs and expected benefits of MR-based trainings for manual assembly tasks in the context of Industry 4.0 (RQ1).

It was found that the concept of productivity in the papers referred to numerous different aspects and was often used non-specifically. Within the framework of the content analysis, three content-related dimensions were identified along which the improvement potential arising from MR was described, namely performance, effectiveness, and efficiency, as indicated in Figure 3. The first dimension referred to the direct influence MR has on employee performance, that is, their cognitive and motor ability to perform assembly procedures quickly and without errors as well as their subjective user experience (Cooper et al., 2018; Doolani, Owens, et al., 2020; Hou et al., 2013; Hou et al., 2015; Kwiatek et al., 2019; Webel et al., 2013). The second dimension, effectiveness, summarized aspects that referred to the success of the training outcome itself by using well-designed MR technologies and features for certain tasks, but also to the organization of other tasks and activities relevant for enterprises. In this context, MR did not appear solely as an alternative training medium, but as a component of the general digitalization of work processes (Hoedt et al., 2017; Langley et al., 2016; Oren et al., 2012). The third dimension, efficiency, referred to the saving of resources. Traditional on-the-job training on the assembly line was usually accompanied by a loss of productivity. This was characterized by the use of personnel or valuable machinery to allow new employees to learn assembly through hands-on practice and to develop an understanding of the work processes. Rework during training and machine downtimes thus conflicted with the aim of using production capacity as fully as possible. MR, in contrast, provides time-, location-, and trainer-independent training that reduces the time required to practice on the machine itself.
Moreover, the overall efficiency could be enhanced by using existing CAD models or by carrying out production planning and training partially in parallel (Hoedt et al., 2017; Hou & Wang, 2013; Oren et al., 2012; Roldan et al., 2019; Velaz et al., 2014). To investigate these identified needs, studies with a wide variety of different MR technologies and features were examined, which are analyzed and classified below.

RQ2: What Kind of MR Technologies is Currently Used for Training Procedural Assembly Tasks in the Industrial Context?

The technologies used in the reviewed articles varied in their hardware, software, and specific functionalities related to their extent of world knowledge, immersion, and fidelity. For the scoping review presented here, we used a consistent categorization of technologies that might have deviated from the authors’ original definition (e.g., if the authors defined Microsoft HoloLens as MR, we categorized it as AR HMD, since MR was used here as umbrella term). In order to form comparable categories, the technical descriptions of the hardware and interaction features used were listed and classified according to the revised reality-virtuality continuum and the presented definition of MR (based on Skarbez et al., 2021). This resulted in the main categories AR-based training, screen-based VR training, and VR head-mounted-display (HMD)–based training (Figure 4). The respective allocations of articles to main- and subcategories are described below. The study of Gavish et al. (2015) is listed twice, since two independent technologies were investigated.

Classification of MR technologies and their interaction features used in the reviewed articles as AR-based training, Screen-based VR training, and VR head-mounted display (HMD)–based training (RQ2) according to the revised reality-virtuality continuum based on Skarbez et al. (2021).

Twelve articles were identified using AR-based formats and thus were allocated to the left side of the continuum, where a high extent of world knowledge and perception of real elements was enabled by the presented MR-based training (i.e., AR-based trainings displaying virtual objects onto the real world). These were assigned to the three subcategories AR projectors (Hou & Wang, 2013; Hou et al., 2013, 2015; Loch et al., 2019), AR handhelds (Gavish et al., 2015; Kwiatek et al., 2019; Webel et al., 2013) and AR head-mounted-displays (HMD) (González-Franco et al., 2016; Koumaditis et al., 2019; Werrlich, Lorber, et al., 2018; Werrlich, Nguyen, et al., 2018; Westerfield et al., 2015). AR projectors were defined as being fixed to the environment (e.g., monitors or fixed projectors above the workstation), whereas AR handhelds were used as a mobile and flexible tool, that is, tablets, connected either to the user or the environment. AR HMD technologies were characterized as displays being permanently attached to the users’ heads while still allowing them a see-through view of the real environment.

Eight articles used screen-based VR training, which was allocated in the middle of the continuum, representing MR solutions between reality and virtuality. These technologies were further differentiated according to their use of monoscopic or stereoscopic visuals. We used the description monoscopic screen-based VR training if the authors indicated that they were presenting 3D-modeled environments on a screen without any use of, for example, stereoscopic glasses. Monoscopic VR can be defined as presenting the same image simultaneously to both eyes (Singer et al., 1995), making it less immersive and providing a relatively high extent of world knowledge. Nevertheless, such systems were mentioned as a relevant form of VR in industry-related assembly training (Gavish et al., 2015; Hoedt et al., 2017; Langley et al., 2016; Velaz et al., 2014). Screen-based VR training using stereoscopic glasses was used in another four studies. Stereoscopic visualization in VR creates an illusion of depth through two two-dimensional images corresponding to the view of a scene from two different angles (Singer et al., 1995). Thus, these technologies were allocated closer to the right side of the revised reality-virtuality continuum, enabling a lower extent of world knowledge and higher immersion (Al-Ahmari et al., 2018; Carlson et al., 2015; Cooper et al., 2018; Oren et al., 2012).

Another five articles used VR HMD-based training, which was allocated close to the right, that is, the external virtual environment (Doolani, Owens, et al., 2020; deMoura & Sadagic, 2019; Koumaditis et al., 2020; Murcia-Lopez & Steed, 2018; Roldan et al., 2019). The category of VR HMD had a low extent of world knowledge, following the definition that the real environment was hidden through closed goggles and the user was completely immersed in the 3D environment (Milgram & Kishino, 1994).

In the course of this review, the selected publications were analyzed regarding their effects on training outcomes in manual assembly tasks and thereby clustered along with the defined technology categories AR projectors, AR handhelds, AR HMD, screen-based VR, and VR HMD. In the following section, we first present an overview and classification of collected training outcome measures to ensure comparability of effects concerning dependent variables.

RQ3: What Measures to Capture Objective Performance Effects and Subjective Evaluations are Used to Assess the Outcomes of MR-Based Training?

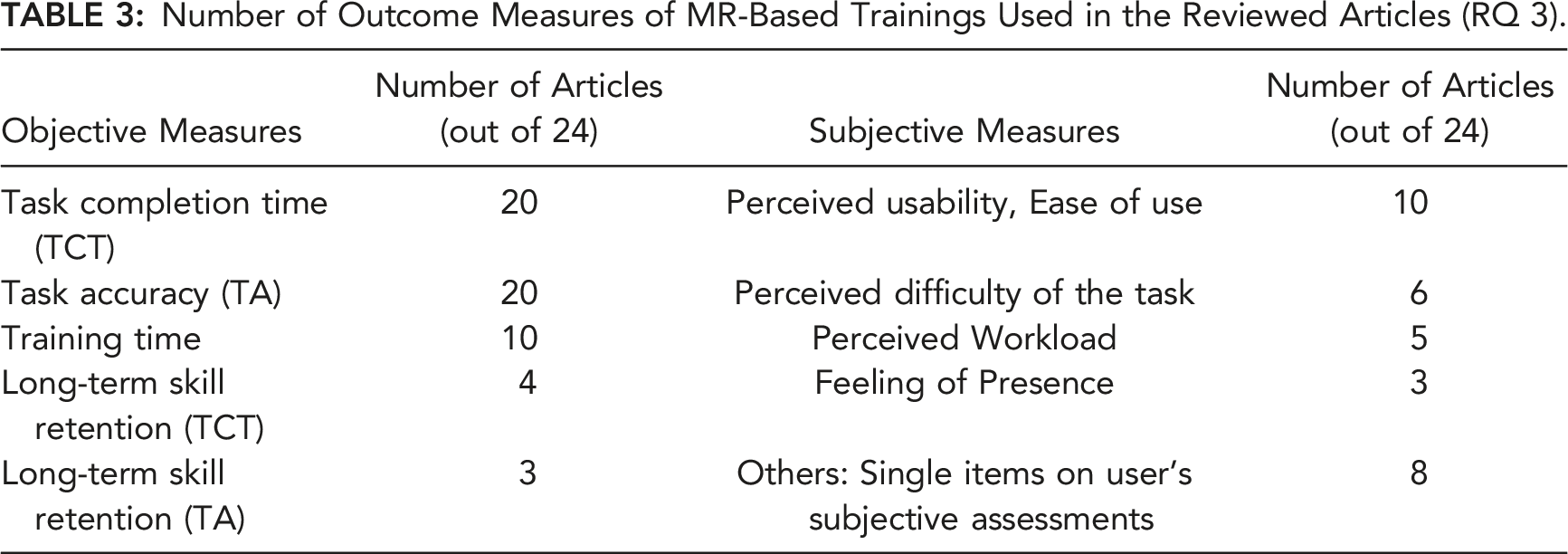

Number of Outcome Measures of MR-Based Trainings Used in the Reviewed Articles (RQ3).

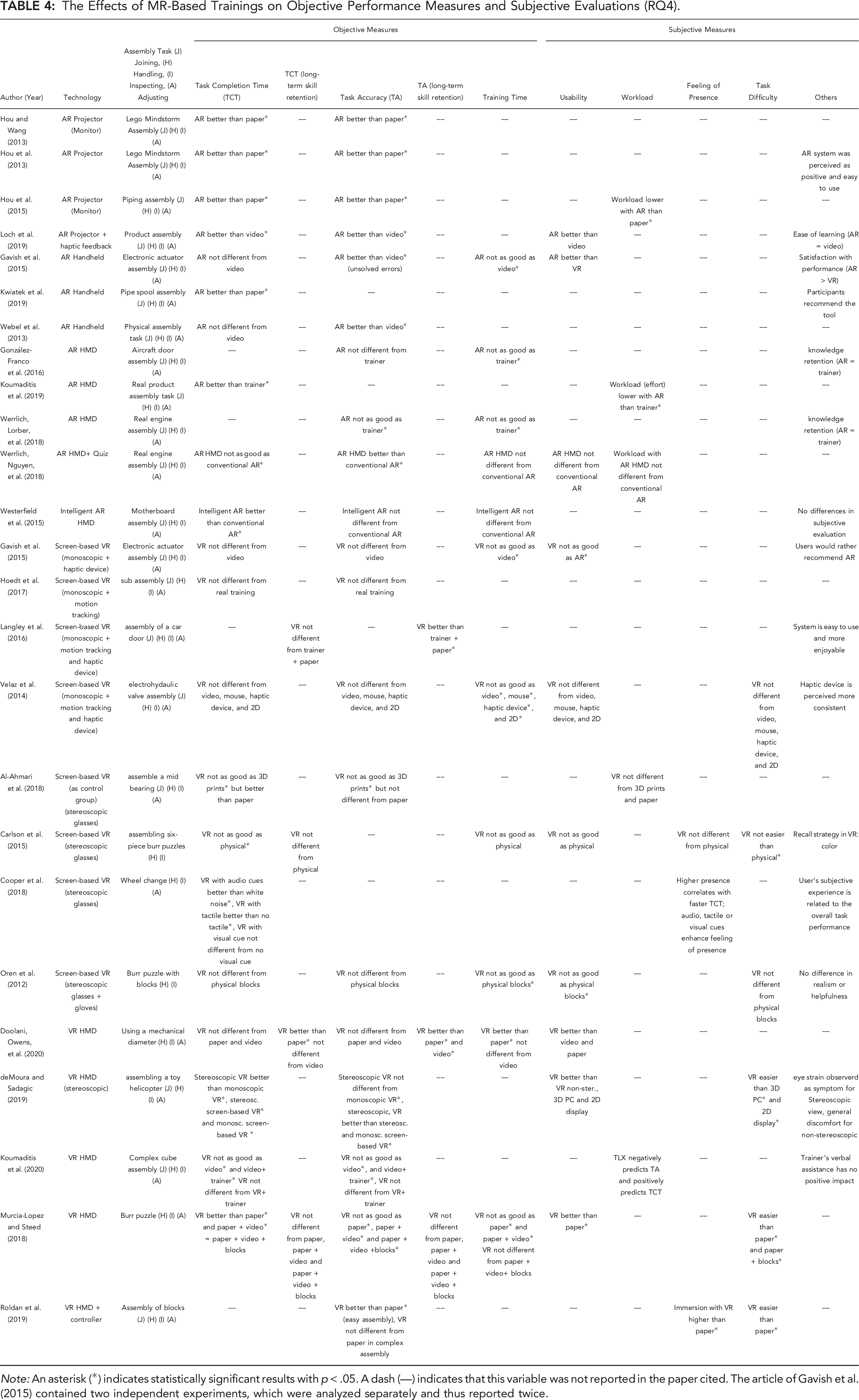

The Effects of MR-Based Trainings on Objective Performance Measures and Subjective Evaluations (RQ4).

Note: An asterisk (*) indicates statistically significant results with p < .05. A dash (––) indicates that this variable was not reported in the paper cited. The article of Gavish et al. (2015) contained two independent experiments, which were analyzed separately and thus reported twice.

The most frequently used objective measure was performance, either the time needed for completing the assembly after training (task completion time) and/or the accuracy in assembling a product, that is, the error rate or quality of task processing (task accuracy). Task completion time (TCT) was measured in 20 out of 24 articles (Doolani, Owens, et al., 2020; Al-Ahmari et al., 2018; Carlson et al., 2015; Cooper et al., 2018; deMoura & Sadagic, 2019; Gavish et al., 2015; Hoedt et al., 2017; Hou & Wang, 2013; Hou et al., 2013, 2015; Koumaditis et al., 2020; Kwiatek et al., 2019; Langley et al., 2016; Loch et al., 2019; Murcia-Lopez & Steed, 2018; Oren et al., 2012; Roldan et al., 2019; Velaz et al., 2014; Webel et al., 2013; Werrlich, Nguyen, et al., 2018; Westerfield et al., 2015). Similarly, task accuracy (TA) was also collected in 20 of the 24 articles (Doolani, Owens, et al., 2020; Al-Ahmari et al., 2018; deMoura & Sadagic, 2019; Gavish et al., 2015; González-Franco et al., 2016; Hoedt et al., 2017; Hou & Wang, 2013; Hou et al., 2013, 2015; Koumaditis et al., 2020; Langley et al., 2016; Loch et al., 2019; Murcia-Lopez & Steed, 2018; Oren et al., 2012; Roldan et al., 2019; Velaz et al., 2014; Webel et al., 2013; Werrlich, Lorber, et al., 2018; Werrlich, Nguyen, et al., 2018; Westerfield et al., 2015). Additionally, in ten articles statements were made about the training time required with the respective technology to learn how to fulfill the defined assembly task (Doolani, Owens, et al., 2020; Carlson et al., 2015; Gavish et al., 2015; González-Franco et al., 2016; Murcia-Lopez & Steed, 2018; Oren et al., 2012; Velaz et al., 2014; Werrlich, Lorber, et al., 2018; Werrlich, Nguyen, et al., 2018; Westerfield et al., 2015). 
To record how well the training content was remembered in the long term, skill retention was assessed in three articles as the assembly success regarding TCT after a certain time period had passed (Doolani, Owens, et al., 2020; Carlson et al., 2015; Murcia-Lopez & Steed, 2018). Long-term skill retention concerning TA was assessed in two articles (Doolani, Owens, et al., 2020; Murcia-Lopez & Steed, 2018).

Subjective evaluations were assessed to complement the findings on objective performance and included all information collected through questionnaires and the subjective assessment of users. These variables were mostly related to usability factors. Of the nine articles that captured usability (Doolani, Owens, et al., 2020; Carlson et al., 2015; deMoura & Sadagic, 2019; Gavish et al., 2015; Loch et al., 2019; Murcia-Lopez & Steed, 2018; Oren et al., 2012; Velaz et al., 2014; Werrlich, Nguyen, et al., 2018), five used the standardized System Usability Scale of Brooke (1996) (Doolani, Owens, et al., 2020; deMoura & Sadagic, 2019; Gavish et al., 2015; Velaz et al., 2014; Werrlich, Nguyen, et al., 2018) and one used the USE questionnaire of Lund (2001) (Loch et al., 2019). The difficulty of the task was measured in six articles (Carlson et al., 2015; deMoura & Sadagic, 2019; Murcia-Lopez & Steed, 2018; Oren et al., 2012; Velaz et al., 2014; Roldan et al., 2019), which was sometimes also operationalized as the difficulty of completing the task while interacting with the device (e.g., Velaz et al., 2014). The workload was assessed in five articles through the NASA TLX of Hart and Staveland (1988) (Al-Ahmari et al., 2018; Hou et al., 2015; Koumaditis et al., 2019, 2020; Werrlich, Nguyen, et al., 2018). In three articles, the feeling of presence was assessed (Carlson et al., 2015; Cooper et al., 2018; Roldan et al., 2019). Only one paper recorded users’ subjective assessment of, or satisfaction with, their own performance (Gavish et al., 2015). Additionally, in seven articles various single items were used to assess users’ perceptions (e.g., stress, frustration, seriousness) (deMoura & Sadagic, 2019; Gavish et al., 2015; Hou et al., 2013; Langley et al., 2016; Oren et al., 2012; Velaz et al., 2014; Westerfield et al., 2015).

The effects of the investigated MR-based training formats regarding the defined performance measures TCT and TA as well as the subjective evaluations are summarized in Table 4 and reported in the following in comparison to traditional training.

RQ4: What are the Effects of Using MR-Based Training Compared to Traditional Training Regarding the Different Outcome Measures?

The effects and outcomes related to MR-based training compared to traditional training were analyzed in terms of the objectively measured performance outcomes (TCT, TA) and subjective evaluations described previously. Looking at the frequencies exclusively, AR-based training (n = 12) led to statistically significantly better performance than traditional training in 7 of 10 studies in which TCT was collected as the dependent variable (Hou & Wang, 2013; Hou et al., 2013, 2015; Koumaditis et al., 2019; Kwiatek et al., 2019; Loch et al., 2019; Westerfield et al., 2015), to equivalent performance in two studies (Gavish et al., 2015; Webel et al., 2013), and to statistically significantly worse performance in one (Werrlich, Nguyen, et al., 2018). In terms of TA, which was measured in 10 AR-based studies, the AR condition performed statistically significantly better in eight studies (Gavish et al., 2015; Hou & Wang, 2013; Hou et al., 2013, 2015; Loch et al., 2019; Webel et al., 2013; Werrlich, Lorber, et al., 2018; Werrlich, Nguyen, et al., 2018) and equally well in two (González-Franco et al., 2016; Westerfield et al., 2015). None of the articles tested long-term skill retention after AR-based training.
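
The frequency count of TCT outcomes for AR-based training reported in the paragraph above can be reproduced with a few lines of code. The following is a minimal sketch, not the procedure used for this review: the outcome labels ("better", "same", "worse") and the helper name `tally` are our own shorthand, and the study-to-outcome mapping is transcribed from the citations in the paragraph above.

```python
from collections import Counter

# TCT outcomes of AR-based training versus the respective control group,
# transcribed from the paragraph above. The labels are illustrative shorthand.
ar_tct_outcomes = {
    "Hou & Wang, 2013": "better",
    "Hou et al., 2013": "better",
    "Hou et al., 2015": "better",
    "Koumaditis et al., 2019": "better",
    "Kwiatek et al., 2019": "better",
    "Loch et al., 2019": "better",
    "Westerfield et al., 2015": "better",
    "Gavish et al., 2015": "same",
    "Webel et al., 2013": "same",
    "Werrlich, Nguyen, et al., 2018": "worse",
}


def tally(outcomes):
    """Return {label: (count, share of all studies)} for a study-to-outcome mapping."""
    counts = Counter(outcomes.values())
    total = sum(counts.values())
    return {label: (n, n / total) for label, n in counts.items()}


summary = tally(ar_tct_outcomes)
for label in ("better", "same", "worse"):
    n, share = summary[label]
    print(f"{label}: {n} of {len(ar_tct_outcomes)} ({share:.0%})")
```

Such a tally only counts the direction of reported effects. It does not weight studies by sample size or effect size, which is why the frequencies are complemented by the in-depth analysis of individual studies.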

Among the different VR-based training methods (n = 13), short-term effects on TCT, that is, effects measured immediately after training, were statistically significantly better in one study using VR HMD compared to paper- and video-based training (Murcia-Lopez & Steed, 2018) and in another when compared to screen-based VR training (deMoura & Sadagic, 2019). In five articles, VR-based training led to equally good results compared to traditional training (Doolani, Owens, et al., 2020; Gavish et al., 2015; Hoedt et al., 2017; Oren et al., 2012; Velaz et al., 2014). Statistically significantly worse results compared to traditional training regarding TCT were found in three articles (Al-Ahmari et al., 2018; Carlson et al., 2015; Koumaditis et al., 2020). Moreover, Cooper et al. (2018) reported positive effects of additional audio and haptic cues on TCT. Regarding TA, VR-based training led to equally good results as traditional training in five studies (Doolani, Owens, et al., 2020; Gavish et al., 2015; Hoedt et al., 2017; Oren et al., 2012; Velaz et al., 2014). In three studies, VR-based training was statistically significantly worse in TA than traditional training (Al-Ahmari et al., 2018; Koumaditis et al., 2020; Murcia-Lopez & Steed, 2018). Roldan et al. (2019) found different results for easy and complex assembly tasks, and deMoura and Sadagic (2019) showed again that VR HMD was statistically significantly better than screen-based VR. With regard to long-term skill retention, four studies showed that VR-based training led to the same or even statistically significantly better results than traditional training, even when the immediate effects were not as good as those of traditional training (Carlson et al., 2015; Doolani, Owens, et al., 2020; Langley et al., 2016; Murcia-Lopez & Steed, 2018).

Beyond the overview presented above, an in-depth look into the study context (i.e., lab vs. field study), task type, and training specifications provided further insights into the effects of MR-based training. In each section, we first summarized the effects of the respective MR technologies AR projectors, AR handhelds, AR HMD, screen-based VR, and VR HMD as training media in comparison to other training formats. Subsequently, we analyzed how the effects related to objective performance measures and subjective evaluations varied depending on task type and training specifications. Main findings on the effects of MR-based training and statistically significant group differences are summarized in Table 4, where the specifications of the assembly task are indicated by stating whether joining, handling, inspecting, and/or adjusting activities were involved.

AR Projectors

AR projectors as MR technologies were located on the revised reality-virtuality continuum on the left side, that is, close to the real environment, indicating a high extent of world knowledge. In total, four articles focused on AR projectors as MR technology to train assembly tasks (Hou et al., 2013; Hou & Wang, 2013; Hou et al., 2015; Loch et al., 2019). Overall, the evaluation and analysis showed that the projector-based AR training led to statistically significantly better results in terms of TCT and TA when compared to paper-based manuals or video-based training. All assembly tasks included joining, handling, inspecting, and adjusting activities. Subjective evaluations showed that AR projectors were perceived as easy to use and statistically significantly reduced workload compared to paper-based training. The individual findings of the studies about objective measures such as TCT, TA, and training time as well as results of subjective evaluations are summarized below.

Effects of AR Projector-based Training on Objective Performance Measures

Hou and co-authors (2013) compared an animated AR projector system with a paper-based manual system in two different experiments (n = 20 and n = 30) to assess performance. In a lab-based Lego® assembly, the authors found that participants made statistically significantly fewer mistakes (TA) and took statistically significantly less time (TCT) to assemble when being trained with the AR-based projection monitor. An improvement in the learning curve was illustrated by the fact that AR-trained participants were able to remember more assembly instructions from the previous training task than those trained with the paper manual. Hou and Wang (2013) further investigated the gender-specific effects of the use of AR (here used on a monitor) on performance (n = 28). The experimental group used AR, while the control group was trained using a paper manual with 3D elements. Both groups consisted of seven males and seven females. In comparison to the paper-manual control group, both male and female participants showed statistically significantly better performance in terms of TCT and TA. A statistically significant gender difference was only found concerning the control group, caused by the fact that manual-based training was more effective for males. In another study, Hou et al. (2015) investigated how AR affected performance and workload in a lab-based construction piping scenario (n = 18). Compared to training with isometric drawings, the assembly novices trained with AR performed statistically significantly better, since they made half the number of errors (TA) and took only half the time to complete the task (TCT) and rework activities. The superior results of AR projectors were also confirmed by the study of Loch et al. (2019), who showed that using AR-based projection on a workbench with physical objects was statistically significantly better in terms of TCT and TA (n = 20) compared to a video-based system. 
In their study, they used a pick-to-light principle that enabled direct feedback for correct or wrong steps in the assembly of a circular flange onto a baseplate.

Effects of AR Projector-based Training on Subjective Evaluations

Overall, AR projectors were rated better in terms of ease of use, usefulness, and workload reduction in the subjective evaluation. Hou and co-authors (2013) showed that the paper-based training was perceived as complicated and cumbersome. However, a statistically significant difference was only reflected in the perceived workload, which was statistically significantly higher in almost all scales of the NASA TLX (Hart & Staveland, 1988) for the paper manual group than in the group guided with AR. No difference was reported for the subscale subjectively perceived performance of the NASA TLX. Hou and Wang (2013) did not include subjective evaluations in their study. In the piping assembly task (Hou et al., 2015), assembly novices reported perceiving the AR system as easier to use and navigate, while experiencing statistically significantly lower workload (NASA TLX; Hart & Staveland, 1988) during the task. Loch et al. (2019) descriptively analyzed the USE questionnaire (Lund, 2001), containing 30 items on usefulness, ease of use, ease of learning, and satisfaction. The authors found that satisfaction and usefulness were rated higher when using the AR projector system compared to the video system. However, statistically significant group differences were not reported. Ease of learning was perceived equally positively for both systems.

AR Handhelds

Similar to AR projectors, AR handhelds (such as tablets) were used to augment a real training environment with virtual objects. Three of the 24 reviewed studies used AR handheld systems for assembly training. This kind of AR-based training led to statistically significantly better results in TCT in comparison to paper-based isometric drawings (Kwiatek et al., 2019), whereas equal performance in TCT was shown in comparison with video-based training (Gavish et al., 2015; Webel et al., 2013). In the latter two studies, statistically significantly better performance was shown in TA using AR handhelds compared with video. In particular, AR led to fewer unsolved errors, that is, errors that were not corrected by the user (Gavish et al., 2015). Overall, all applications were tested in field settings or with employees from the production context using assembly tasks with joining, handling, inspecting, and adjusting activities. Subjective evaluations revealed that AR was perceived to have high usability and that AR-based 3D visualization was assessed as helpful in the assembly process. The following section describes these findings in detail.

Effects of AR Handheld-based Training on Objective Performance Measures

Gavish and co-authors (2015) presented two independent experiments, with one experiment relating to the comparison of an AR system with a video-based control group (n = 20) and another examining a VR system compared to a video-based control group (n = 20). In this section, the results regarding the AR system compared to the control group are reported. Later on, the second study of their article will be reported under VR systems. The tested AR training system consisted of a tablet PC with a touchscreen that was used to work directly on the machine. In terms of training time, the AR-based training took statistically significantly longer than the control group, which was trained with video. The AR group showed statistically significantly fewer unsolved errors, meaning that participants trained with AR identified and corrected errors more often. Otherwise, there were no differences between the groups in terms of TCT and the number of errors solved. Comparable results were also shown in the study of Webel and co-authors (2013), who studied a multimodal AR tablet system compared to video-based training for the assembly of an electro-mechanical actuator with experienced workers (n = 20). While TCT and the number of errors solved were not different from the video control group, the number of unsolved errors (TA) was statistically significantly lower in the AR group compared to the control group. Furthermore, Webel and co-authors (2013) reported that training with AR took slightly longer (851.9 s) than with video-based training (682.0 s).
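
To put the training times reported by Webel and co-authors (2013) into proportion, the relative difference can be computed directly from the two reported values. The short sketch below does so; the function name `relative_increase` is a hypothetical helper of our own and not part of the reviewed study.

```python
def relative_increase(treatment: float, baseline: float) -> float:
    """Return how much larger `treatment` is than `baseline`, as a fraction of `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (treatment - baseline) / baseline


# Training times in seconds as reported by Webel and co-authors (2013).
ar_training_time = 851.9
video_training_time = 682.0

increase = relative_increase(ar_training_time, video_training_time)
print(f"AR training took {increase:.1%} longer than video-based training")
```

With the reported values, the AR-based training took roughly a quarter longer than the video-based training (169.9 s on a baseline of 682.0 s).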

While these two studies showed that using AR handhelds for training led to results comparable to those of video-based training, but statistically significantly reduced unsolved errors, the study by Kwiatek and co-authors (2019) revealed that using AR handhelds led to better results when compared to paper-based training. Here, participants were trained to assemble a complex pipe spool using conventional isometric drawings or AR-based guidance on a tablet. All participants were previously classified into two groups (engineers vs. pipe-fitters) and into high, medium, and low spatial skills. Across both groups, the use of AR on a tablet statistically significantly reduced assembly time (TCT) and rework time. The authors showed that those whose cognitive abilities were considered to be low benefited more from the AR application than the other participants.

Effects of AR Handheld-based Training on Subjective Evaluations

Gavish and co-authors (2015) did not compare the AR group and the control group in the subjective evaluations. Instead, they compared the two experiments on AR and VR, although both systems were evaluated independently. The authors showed that AR was rated with statistically significantly higher usability and better transfer of training compared to VR. Participant feedback in the study by Kwiatek et al. (2019) revealed that 3D design and visualization were perceived as helpful to facilitate the assembly and rework process. It needs to be noted that no standardized questionnaire was used in these studies.

AR HMDs

While AR projectors and handhelds allowed the user to look away from virtual augmentations, the latter were inevitably integrated into the user’s field of view when using AR HMDs. In the following, we analyze the effects of using AR as a head-mounted device as a training medium. The effects of AR HMDs were investigated within five articles and mostly conducted in real training settings covering all assembly activities, that is, joining, handling, inspecting, and adjusting (González-Franco et al., 2016; Koumaditis et al., 2019; Werrlich, Lorber, et al., 2018; Werrlich, Nguyen, et al., 2018; Westerfield et al., 2015). These studies revealed that in comparison to trainer-based formats, training with AR HMD required statistically significantly more time. However, using AR HMDs in assembly training led to statistically significantly higher performance than trainer-based training in terms of TCT, but to the same or even statistically significantly worse accuracy (TA). Additional features in AR (such as quizzes) led to a statistically significant increase in TA. The subjective evaluations showed that AR HMDs were rated as requiring statistically significantly less effort than trainer-based training. All results are further described in the following.

Effects of AR HMD-based Training on Objective Performance Measures

Three studies reported the effects of using AR HMDs for assembly training compared to trainer-based formats. First, the study of González-Franco and co-authors (2016) showed that participants in AR HMD training (using see-through Oculus Rift) achieved the same performance in the assembly of an aircraft maintenance door as participants in traditional face-to-face training (n = 24). Here, the authors assessed both factual knowledge in a knowledge retention multiple-choice test and procedural knowledge in a knowledge interpretation test, where participants were asked to perform the assembly step by step. There was no difference in the scores of knowledge retention and interpretation between the conditions. The latter was treated as the accuracy of the task (TA) in our analysis. Training times were statistically significantly higher in the AR condition compared to a trainer-based format. Second, a comparison between AR HMD (Microsoft HoloLens) and trainer-based training was conducted by Werrlich, Lorber, et al. (2018) in an assembly of a real engine (n = 36). Here, training time was statistically significantly higher in the AR HMD group, too. Although participants in the AR HMD group made 10% fewer picking mistakes, 5% fewer assembly order mistakes, and caused 60% less rework, overall assembly quality and quality per tact were statistically significantly higher in the trainer condition. Similar to González-Franco et al. (2016), Werrlich, Lorber, et al. (2018) decided to use a questionnaire capturing factual knowledge acquisition of the performed assembly task besides measuring TA as an assembly performance indicator. The results showed that participants who trained with AR retained factual knowledge as well as participants who were instructed by a trainer. As a third study comparing AR HMD and trainer-based training, Koumaditis et al. (2019) used a turning table workstation, where a six-part product composed of supports, mechanical elements, and electronic components was assembled by handling and connecting components without the help of other tools. They showed that the group trained with AR HMD performed statistically significantly better than the group trained by a trainer in terms of TCT (n = 10).

Two other studies showed that using AR HMDs with additional features such as intelligent tutors or quizzes in training led to better results than AR HMD-based training without such features. Werrlich, Nguyen, et al. (2018) compared two AR HMD modalities (n = 30), both covering different skill levels from beginner to expert. In one of their AR HMD training conditions, an additional quiz determining participants’ procedural knowledge was implemented before conducting the final assembly of an engine. Here, participants had to select the correct sequence of assembly steps and received immediate feedback from the system. Compared to the AR HMD-based training without the quiz, participants in the quiz condition took statistically significantly longer to complete the final assembly task (TCT) but committed statistically significantly fewer sequence errors (TA). Westerfield and co-authors (2015) integrated an intelligent tutor with feedback functionalities into the AR HMD system and tested its effects against an AR HMD system without immediate feedback (n = 16). Participants were trained to conduct a motherboard assembly, consisting of identifying and installing five motherboard components: memory, processor, graphics card, TV tuner card, and heatsink. Westerfield et al. (2015) found that the group receiving AR-based training with the intelligent tutor was statistically significantly faster in the final assembly task in terms of TCT compared to the group being trained by the conventional AR HMD system without feedback, but both groups made comparable numbers of errors (TA).

Effects of AR HMD-based Training on Subjective Evaluations

Overall, it appeared that the use of AR HMD for assembly training was perceived as positive and, in some cases, even helped to reduce workload. Werrlich, Lorber, et al. (2018) evaluated the usability of the tested system (Microsoft HoloLens), which was assessed with a mean System Usability Scale (SUS) score of 73.5, indicating acceptable to good usability. SUS scores range from 0 to 100 (Brooke, 1996), where 68 is considered an average usability score (Sauro, 2011). However, the authors discussed that making the system more user-friendly would enhance its potential. Koumaditis and co-authors (2019) assessed the NASA TLX and indicated that the participants using AR HMD reported requiring statistically significantly less effort to accomplish the task than the participants guided by a trainer. No differences were found for the other scales of the NASA TLX. Testing the AR HMD system with an additional quiz to ensure training transfer revealed that perceived workload did not differ from that of participants who were trained with the conventional AR HMD method without the quiz (Werrlich, Nguyen, et al., 2018). System usability was rated equally excellent for the AR HMD system without (SUS = 90.50) and with the quiz (SUS = 91.83). The results of subjective evaluations in the study of Westerfield et al. (2015) supported previously reported findings. Here, participants were asked to fill out a questionnaire and indicate whether, for example, they perceived the tutor as effective, and whether they felt physically or mentally stressed during the training process. The authors reported that both AR HMD with an intelligent tutor and conventional AR HMD systems were positively evaluated and showed no differences in these subjective evaluations (e.g., perceived stress, effectiveness, frustration).
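
For readers less familiar with the SUS, the following minimal sketch illustrates how a single participant's score on the 0 to 100 scale is commonly derived from the ten questionnaire items, following the standard scoring procedure described by Brooke (1996). The function name and the example responses are hypothetical and serve illustration only; they are not data from any of the reviewed studies.

```python
def sus_score(responses):
    """Return the SUS score (0-100) for one participant.

    `responses` holds the ten item ratings in questionnaire order,
    each on a 1-5 agreement scale (hypothetical input format).
    """
    if len(responses) != 10:
        raise ValueError("SUS requires exactly ten item responses")
    if any(r < 1 or r > 5 for r in responses):
        raise ValueError("each response must lie between 1 and 5")

    total = 0
    for index, response in enumerate(responses):
        if index % 2 == 0:
            # Items 1, 3, 5, 7, 9 are positively worded: contribution = rating - 1
            total += response - 1
        else:
            # Items 2, 4, 6, 8, 10 are negatively worded: contribution = 5 - rating
            total += 5 - response

    # The summed contributions (0-40) are rescaled to the 0-100 range.
    return total * 2.5


# Hypothetical participant who answers neutrally (3) on every item.
neutral_score = sus_score([3] * 10)
```

A study-level value such as the mean scores reported above would then be the average of these individual scores across participants.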

Screen-Based VR

The category of screen-based VR technologies was characterized by the fact that although virtual, that is, 3D modeled, environments were presented, they were displayed on a screen or monitor. All articles included the four assembly activities joining, handling, inspecting, and adjusting (Al-Ahmari et al., 2018; Carlson et al., 2015; Cooper et al., 2018; Gavish et al., 2015; Hoedt et al., 2017; Langley et al., 2016; Oren et al., 2012; Velaz et al., 2014). The superordinate category screen-based VR was located in the middle of the revised reality-virtuality continuum (based on Skarbez et al., 2021), while the subcategory monoscopic VR (i.e., 3D environments that are presented without stereoscopic visuals) provided a higher extent of world knowledge and could thus be located closer to the real environment. Screen-based VR with stereoscopic glasses, on the other hand, enabled depth perception through the addition of, for example, shutter glasses and thus a more intensive immersion in the 3D environment. However, neither variant excluded users’ perceptions of the environment. Results indicated that monoscopic screen-based VR showed comparable effects on TCT as trainer-based training and video-based training and resulted either in the same (Gavish et al., 2015; Hoedt et al., 2017; Velaz et al., 2014) or statistically significantly higher task accuracy (Langley et al., 2016). Studies in which screen-based VR was used in combination with stereoscopic glasses showed that participants who were trained with physical objects performed assembly tasks statistically significantly faster and more accurately than participants trained with screen-based VR and stereoscopic glasses (Al-Ahmari et al., 2018; Carlson et al., 2015). However, with more experience in VR and the use of additional features and cues, results seemed to improve (Cooper et al., 2018; Oren et al., 2012).

In the following, we will first review the effectiveness of monoscopic screen-based VR training with regard to objective performance measures and subjective evaluations. Second, studies using screen-based VR with stereoscopic visuals will be reviewed. Subsequently, the effects of the latter on subjective evaluations will be examined.

Effects of Monoscopic Screen-Based VR Training on Objective Performance Measures

Four out of eight articles on screen-based VR used monoscopic VR (Gavish et al., 2015; Hoedt et al., 2017; Langley et al., 2016; Velaz et al., 2014). These studies will be described in more detail in the following. Gavish and co-authors (2015) used a VR platform consisting of a screen displaying a 3D graphical scene and a haptic device where trainees were able to manipulate the tools and components of the virtual scene to train the assembly of an electronic actuator of a motorized modulating valve (n = 20), while the control group was instructed via video. Performance was measured as TCT and number of solved and unsolved errors (TA), where no differences between video and non-HMD VR conditions occurred. However, participants took statistically significantly longer to train with the VR system than with the video system. Similar effects were reported by Hoedt and co-authors (2017), who examined a screen-based VR-trained group vs. a non-trained group (n = 26) during an assembly of a medium-complexity MECCANO® subassembly. The screen-based VR training was realized using a tablet, a screen, and a Microsoft® Kinect V2. During the training period, participants’ hands were tracked in real time while assembling the virtual objects. At the first measurement time point (T1), the VR-trained participants showed statistically significantly better scores in terms of TCT. At the second measurement point (T2), the control group was considered “trained” and again compared to the first measurement point of the VR group. We only included T2 in our analysis to ensure comparability of the results with regard to trained control groups. Even though the non-trained control group took slightly longer to complete the T1 assembly and thus the training, there were no differences between screen-based VR and the trained control group from T2 onwards. The overall learning rate was found to be equal, although assembly times were 27% faster in absolute terms in the control group.
As the third study in the field of monoscopic screen-based VR, Langley and co-authors (2016) compared their screen-based VR training system to a trainer-based learning environment and showed that in the long term, their monoscopic VR training resulted in comparable TCT but statistically significantly higher TA. More specifically, they investigated the effectiveness of a virtual training environment on screen using a Wii controller compared to a trainer plus paper-based training procedure for a car door assembly task. After one week, participants (n = 30) were asked to perform the final assembly task without guidance. There were no differences in long-term skill retention concerning TCT, but there was a statistically significant difference for long-term skill retention concerning overall and trainer-corrected errors (TA), indicating that participants who were trained with the screen-based VR system made fewer errors one week after training. No difference for TA was found concerning self-corrected errors. Finally, Velaz et al. (2014) investigated different interaction modes in screen-based VR and compared them with video-based training. Four out of five groups trained to assemble an electrohydraulic valve in VR with either a computer mouse, a haptic device, or one of two configurations of a markerless motion capture system (with 2D or 3D tracking of hands). The screen-based VR + 3D markerless motion capture system was referred to as the experimental group here. The fifth group, that is, the control group, was trained with video. Overall, no difference between the training methods was shown regarding TCT and TA. In terms of training time, the screen-based VR + 3D markerless motion capture system took statistically significantly more time than video training as well as training with the other interaction devices, that is, a mouse, a haptic device, or 2D tracking. In the following, the effects of monoscopic screen-based VR on subjective evaluations will be examined.

Effects of Monoscopic Screen-Based VR Training on Subjective Evaluations

Gavish and co-authors (2015) asked participants whether they thought the training with VR rapidly enhanced their skill level, which was answered with “yes” by all participants. In their study, the authors further examined how the presented VR system was subjectively evaluated in comparison to their AR handheld system presented earlier. In a direct comparison, the AR handheld system was rated statistically significantly higher than the screen-based VR system in terms of satisfaction with performance, usability, and willingness to recommend the system. The evaluation of different VR interaction types in the study of Velaz and co-authors (2014) revealed that usability and interaction difficulty were perceived the same for all tested interaction types (computer mouse, haptic device, 3D, and 2D) and the video control group. Langley and co-authors (2016) assessed the subjective evaluations of their virtual system in an additional evaluation study by observing and interviewing participants and analyzing the results with theme-based content analysis. Three main usability issues were identified accordingly. Participants stated that (1) extra instruction would be required concerning the Wii controller and its use, (2) the instructions for discarding the screw were insufficient, and (3) the instructions for moving the whole body to change the view area were inadequate. In addition, the participants indicated in their comments that the system was easy to use and that it was more enjoyable than trainer-based training.

Effects of Screen-Based VR Training with Stereoscopic Glasses on Objective Performance Measures