Abstract

Educational and psychological tests with an ordered item structure enable efficient test administration procedures and allow for intuitive score interpretation and monitoring. The effectiveness of such a measurement instrument relies largely on the validated strength of its ordering structure. We define three increasingly strict types of ordering for the ordering structure of a measurement instrument with clustered items: a weak and a strong invariant cluster ordering and a clustered invariant item ordering. Following a nonparametric item response theory (IRT) approach, we propose a procedure to evaluate the ordering structure of a clustered item set along this threefold continuum of order invariance. The procedure is based on (a) the local assessment of pairwise conditional expectations at both the cluster and the item level and (b) the global assessment of the number of Guttman errors through new generalizations of the H-coefficient for this item-cluster context. The procedure, readily implemented in R, is illustrated by an application to an empirical example. Suggestions for test practice, further methodological developments, and future research are discussed.

Keywords

A Guttman scale (Guttman, 1950) is an ordered set of items in which a positive (e.g., correct or affirmative) response to any item implies positive responses to all preceding items in the set. This type of scale has attractive interpretive features (e.g., Tucker, 1953): the sum score unambiguously defines a respondent's location on the scale continuum (i.e., the last item answered positively in the ordered set) and determines what the respondent can do (i.e., items at earlier positions in the scale) and cannot do (i.e., items at later positions in the scale). However, a Guttman scale leaves no room for measurement error (i.e., mistakes or inconsistencies in responses), and this deterministic feature makes it practically impossible to construct a working Guttman scale, except for a trivially small number of items within a narrow domain (Kofsky, 1966).
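The deterministic Guttman property can be made concrete by counting Guttman errors, that is, item pairs in which an easier item is failed while a harder item is passed. The following is a minimal Python sketch for a single dichotomous response pattern, assuming the items are already listed from easiest to hardest; the function names are illustrative only and not part of the authors' software.

```python
from itertools import combinations


def guttman_errors(pattern):
    """Count Guttman errors in a 0/1 response pattern.

    `pattern` lists item scores ordered from easiest to hardest item.
    A Guttman error is a pair (i, j), i < j, in which the easier item i
    is failed (0) while the harder item j is passed (1).
    """
    return sum(1 for i, j in combinations(range(len(pattern)), 2)
               if pattern[i] == 0 and pattern[j] == 1)


def is_guttman_pattern(pattern):
    """A perfect Guttman pattern has no errors: all 1s precede all 0s."""
    return guttman_errors(pattern) == 0


print(is_guttman_pattern([1, 1, 1, 0, 0]))  # True: the sum score 3 fixes the pattern
print(guttman_errors([1, 0, 1, 0, 1]))      # 3
```

In a perfect Guttman scale every pattern has zero errors, so the sum score alone reproduces the whole pattern; any measurement error immediately produces a nonzero count.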

With minor modifications to allow for measurement error, however, the core principles underlying a Guttman scale have been readily adopted for test design in educational and psychological measurement: instead of an ordered set of single items, an ordered set of item clusters may be designed, in which items within a cluster are considered approximately equivalent and items between clusters are considered ordered. Clusters of items allow the use of more reliable cluster scores instead of error-prone responses to single items. Tests with such an ordered cluster structure are omnipresent in the behavioral sciences. In the measurement of intelligence and other abilities, such a test represents a progressive continuum that enables practitioners to track atypical development and to test efficiently, for instance, by starting at the item cluster appropriate for the examinee's age or by stopping the test once a child makes many mistakes, so as not to frustrate the child needlessly (e.g., Bishop, 1979; Dunn et al., 1997; Wechsler, 1999). Similar test formats are used for patient-reported outcomes, where the item-cluster order allows for tracking the accumulation of symptoms along a severity ordering and aids in determining relevant treatment options (e.g., Dreyße et al., 2020; Roorda et al., 2005; Watson et al., 2014).

The proper functioning of these tests relies heavily on the strength of their ordering structure. An ordering structure means that there is a meaningful ordering at the cluster or item level and that this ordering is the same for everyone (i.e., invariant). For example, if the clusters increase in difficulty, the first cluster should be the easiest cluster for all respondents, regardless of their individual skill levels. If this does not hold, the ordering structure is violated, which is problematic for the construct validity of the test. Hence, evaluating the ordering structure should be an essential part of investigating the construct validity of a test designed to measure a (theoretically) ordered construct, such as tests that reflect learning progressions or skill development (e.g., Wilson, 2009). However, most of the time the theoretical foundation is only tentative, and researchers lack the knowledge and means to validate the ordered item-cluster structure and the test administration practices and inferences built on that order (Arnesen et al., 2019; Haaf et al., 2020; Huang et al., 2015; Meijer, 2010).

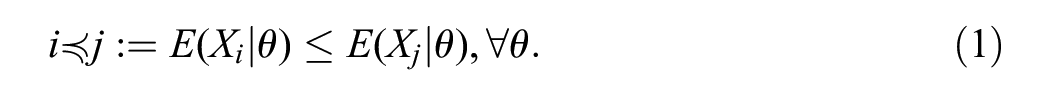

At the core of the validation challenge is the question whether an item (cluster) is indeed more difficult for all respondents than the preceding item (cluster) in the test. More formally, a pair of items

The conditional expectation

If pairwise invariant ordering (Equation 1) holds for all item pairs in an item set, the ordering structure of the item set is an invariant item ordering (IIO). Yet, an IIO is an unrealistic requirement for various scales (Meijer & Egberink, 2012; Sijtsma & Van der Ark, 2022) and is not equipped to handle scales with clustered items. Hence, there is a practical need for ordering properties that are less restrictive than IIO but that nevertheless provide information on the ordering structure of a scale. One promising approach is to focus on the ordering of clusters of items rather than on the ordering of individual items (Ligtvoet et al., 2011; Van der Ark & Van Diem, 2003). However, incorporating the cluster level is challenging for three reasons. First, it is unclear whether a definition of an invariant cluster ordering (ICO) should relate to the expectation of the cluster scores or to the expectation of item scores across clusters. Second, a structured approach is lacking to distinguish violations at the item level, both within and between clusters, from violations at the cluster level. Third, no overall coefficients exist that indicate whether an ordering is better represented at the item level or at the cluster level.

In this article, we develop a procedure to evaluate the ordering structure of a scale with fixed item clusters, building on earlier work in nonparametric item response theory (IRT; Ligtvoet et al., 2010; Sijtsma & Junker, 1996). In the process, we conceptualize different levels of invariant item and cluster orderings, suggest methods for the local evaluation of discrepancies among empirical response functions, and propose a multilevel extension of the

Ordering Structures of Clustered Items

Let an item set consist of

Let the items be divided into

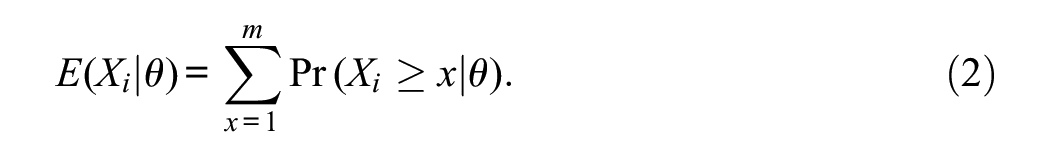

Let the cluster response function be the expected value of the average within-cluster item score:

Let a clustered item set be ordered and numbered according to increasing

This pairwise ICO at the cluster level has only weak implications, as it does not exclude violations of item ordering at the item level. If, for a clustered item set, Equation 4 holds for all cluster pairs for which

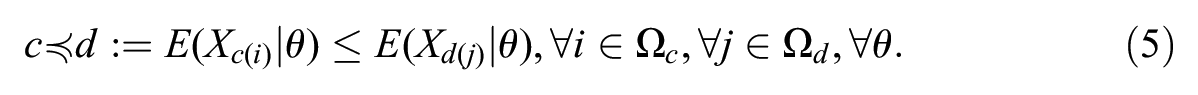

A pair of clusters is invariantly ordered at item level if the expected score on each item

This pairwise invariant ordering at item level has strong implications, as it requires ordering at the cluster level and ordering at the item level for items across different clusters. If, for a clustered item set, Equation 5 holds for all item pairs with

If, for a clustered item set, pairwise IIO (Equation 1) holds for all item pairs, the ordering structure of the item set is an IIO. An IIO has strong implications, as it requires ordering at the cluster level, ordering of the items across different clusters, and ordering of the items within clusters.

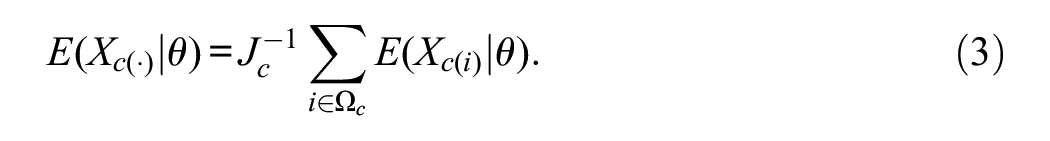

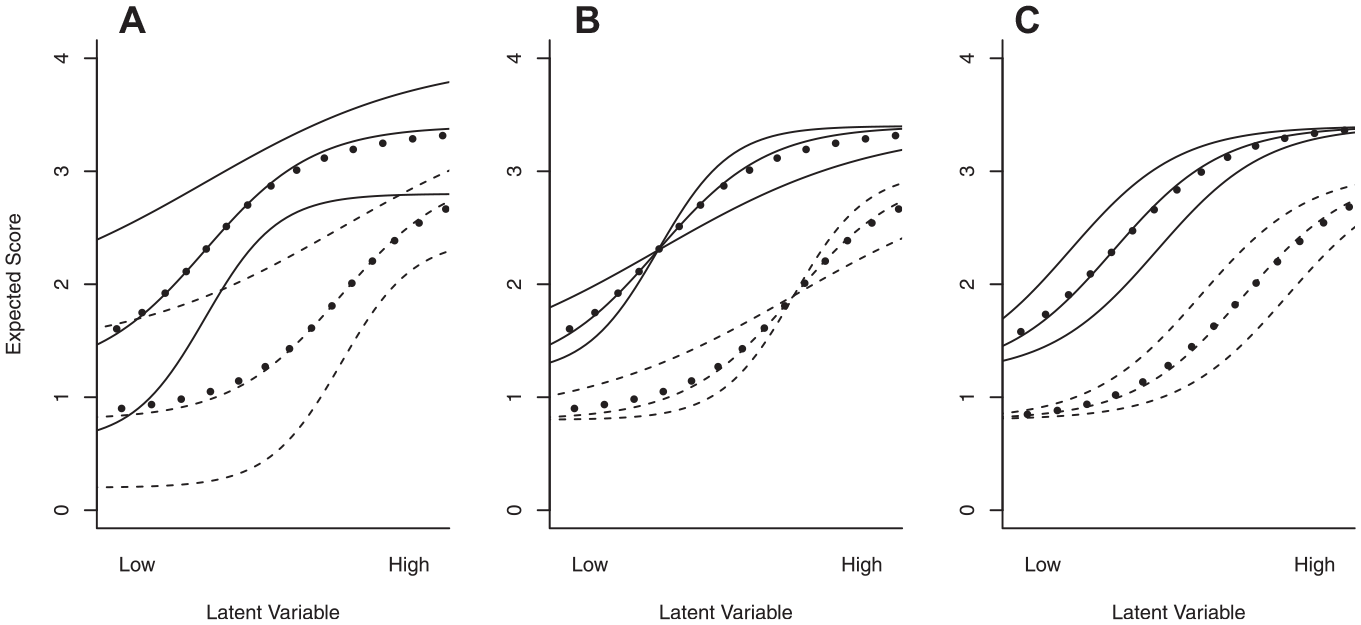

The difference between weak ICO, strong ICO, and IIO is illustrated in Figure 1. Weak ICO (panel (a)) only requires cluster response functions not to intersect and imposes no restrictions at the item level, whereas strong ICO (panel (b)) requires that no item response functions of items in different clusters intersect but imposes no such restriction on item response functions within the same cluster. IIO (panel (c)) requires that no item response functions intersect, within or between clusters. IIO implies strong ICO, which in turn implies weak ICO, but not vice versa, except for the trivial case where

Three Examples of Ordering Structures for Two Clusters of Three Items. Based on the Intersection of the Item Response Functions (Solid for Items in the First Cluster and Dashed for Items in the Second Cluster) and Cluster Response Functions (Dotted), the Item Set Has a (a) Weak ICO, (b) Strong ICO, or an (c) IIO
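To make the three definitions concrete, the following Python sketch classifies a set of clusters by checking the response-function conditions directly. It is an illustration under stated assumptions, not the authors' procedure: the response functions are hypothetical two-parameter logistic curves, the requirement "for all θ" is approximated by a finite grid of θ values, and clusters are listed from easiest to hardest.

```python
import math


def irf(a, b):
    """Hypothetical 2PL response function P(X = 1 | theta), for illustration only."""
    return lambda theta: 1.0 / (1.0 + math.exp(-a * (theta - b)))


def dominates(f, g, grid):
    """True if f(theta) >= g(theta) at every grid point."""
    return all(f(t) >= g(t) for t in grid)


def ordering_structure(clusters, grid):
    """Return 'IIO', 'strong ICO', 'weak ICO', or 'none' for clusters ordered easy -> hard."""
    K = len(clusters)
    # Cluster response function: expected average item score within the cluster.
    crf = [lambda t, c=c: sum(f(t) for f in c) / len(c) for c in clusters]
    later = [(k, l) for k in range(K) for l in range(k + 1, K)]
    if not all(dominates(crf[k], crf[l], grid) for k, l in later):
        return "none"  # cluster response functions intersect
    if not all(dominates(f, g, grid) for k, l in later
               for f in clusters[k] for g in clusters[l]):
        return "weak ICO"  # some between-cluster item pair intersects
    # Within a cluster no direction is prescribed, but two IRFs may not cross.
    if not all(dominates(f, g, grid) or dominates(g, f, grid)
               for c in clusters for i, f in enumerate(c) for g in c[i + 1:]):
        return "strong ICO"
    return "IIO"


grid = [t / 10 for t in range(-30, 31)]  # theta from -3 to 3
iio = [[irf(1, -1), irf(1, -0.5)], [irf(1, 0.5), irf(1, 1)]]
strong = [[irf(0.5, -1), irf(2, -1)], [irf(1, 2), irf(1, 2.5)]]
weak = [[irf(1, -2), irf(1, 0.2)], [irf(1, 0), irf(1, 1)]]
for name, cl in [("iio", iio), ("strong", strong), ("weak", weak), ("reversed", weak[::-1])]:
    print(name, ordering_structure(cl, grid))
```

With parallel curves the item set has an IIO; two crossing curves within the first cluster leave only strong ICO; an item of the easier cluster that is harder than an item of the other cluster leaves only weak ICO; and reversing the cluster order violates even weak ICO.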

Quantifying the Ordering Structure

With its focus on the ordering of persons and the ordering of items, nonparametric IRT has proven to be a useful framework for investigating whether a test (with nonclustered items) has an IIO (Sijtsma & Hemker, 1998; Sijtsma & Junker, 1996). Within this framework, the most general approach to studying IIO, applicable to both dichotomous and polytomous items, was proposed by Ligtvoet et al. (2010) and is based on the pairwise evaluation of item response functions. Once an IIO has been established, the overall consistency of the item ordering by respondents can be evaluated with coefficient

Methods for Nonclustered Items

Local Fit

The procedure by Ligtvoet et al. (2010) is exploratory and involves an iterative heuristic search for an item set that has an IIO. First, items are ordered and numbered according to their manifest marginal item means

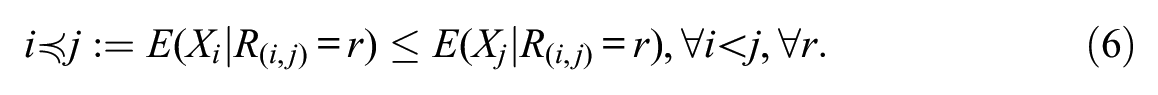

For a locally independent item set, IIO implies manifest IIO (Ligtvoet et al., 2011, Corollary). The expected item scores in Equation 6 are then estimated by their sample equivalents
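A minimal sketch of the manifest check for a single item pair, in the spirit of Ligtvoet et al. (2010): respondents are grouped on the rest score (the total score minus both items), and within each group the conditional item means are compared. Several details are assumptions of this sketch rather than the published method: the threshold `minvi`, the one-sided normal-approximation (z) test on paired differences, and the simple dropping (rather than merging) of rest-score groups smaller than `min_size`.

```python
import math
from collections import defaultdict


def manifest_iio_pair(X, easier, harder, minvi=0.03, min_size=5, alpha=0.05):
    """Check one item pair for significant manifest IIO violations (sketch).

    X: list of per-person item-score lists; `easier` is the item expected to
    have the higher score. Returns (rest_score, mean_difference, p_value)
    for rest-score groups in which X[harder] - X[easier] exceeds `minvi`
    and a one-sided asymptotic z-test on the paired differences is significant.
    """
    groups = defaultdict(list)
    for person in X:
        rest = sum(person) - person[easier] - person[harder]
        groups[rest].append(person[harder] - person[easier])
    violations = []
    for rest, d in sorted(groups.items()):
        n = len(d)
        mean = sum(d) / n
        if n < min_size or mean <= minvi:
            continue  # too small a group, or no violation beyond the threshold
        sd = math.sqrt(sum((x - mean) ** 2 for x in d) / (n - 1))
        z = math.inf if sd == 0 else mean / (sd / math.sqrt(n))
        p = 0.5 * math.erfc(z / math.sqrt(2))  # upper-tail normal probability
        if p < alpha:
            violations.append((rest, mean, p))
    return violations


# Hypothetical data: item 0 is easier overall, but not in rest-score group 0.
X = [[0, 1, 0]] * 8 + [[1, 0, 0]] * 2 + [[1, 0, 1]] * 20
print(manifest_iio_pair(X, easier=0, harder=1))
```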

Global Fit

For an item set that has an IIO, coefficient

For a locally independent item set that satisfies an IIO,
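The global coefficient used by Ligtvoet et al. (2010), H^T, is Loevinger's H computed on the transposed data matrix, so that persons take the role of items. The Python sketch below is restricted to dichotomous scores and does not implement the cluster-level generalizations proposed in this article; the function names are hypothetical.

```python
from itertools import combinations


def loevinger_h(X):
    """Loevinger's H for a persons-by-items 0/1 matrix (list of rows).

    H = sum of pairwise covariances / sum of their maxima given the marginals,
    which equals 1 - (observed Guttman errors / errors expected under
    marginal independence). Undefined (division by zero) if no pair varies.
    """
    n, k = len(X), len(X[0])
    p = [sum(row[j] for row in X) / n for j in range(k)]
    cov_sum = cov_max_sum = 0.0
    for i, j in combinations(range(k), 2):
        p_ij = sum(row[i] * row[j] for row in X) / n
        cov_sum += p_ij - p[i] * p[j]
        cov_max_sum += min(p[i], p[j]) - p[i] * p[j]
    return cov_sum / cov_max_sum


def h_transposed(X):
    """H^T: the same coefficient with persons and items swapped."""
    return loevinger_h([list(col) for col in zip(*X)])


guttman = [[0, 0, 0], [1, 0, 0], [1, 1, 0], [1, 1, 1]]  # perfect Guttman data
noisy = [[1, 0, 0], [1, 1, 0], [0, 1, 1], [1, 1, 1]]
print(loevinger_h(guttman), h_transposed(guttman), loevinger_h(noisy))
```

Persons with all-0 or all-1 patterns contribute nothing to either sum in H^T, because both their covariances and the covariance maxima are zero.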

Generalizations for Clustered Items

Rather than an exploratory approach that uses the empirical item means to order and number the items, a more confirmatory approach may consider an a priori defined, theoretical ordering of clustered items. If no a priori ordering is defined within clusters, we suggest ordering and numbering items within each cluster based on their mean scores. The goal is to assess the structure (local fit) and strength (global fit) of the assumed ordering. In increasing order of strictness, the ordering structures to be assessed for clustered items are weak ICO, strong ICO, and IIO.

Local Fit

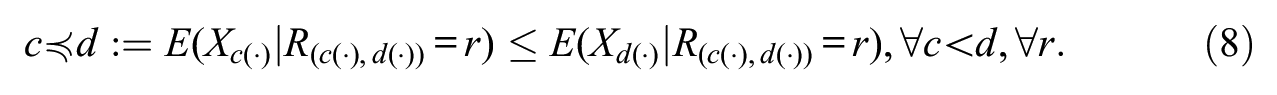

The basis for investigating weak and strong ICO will be corresponding adaptations to the comparison of item response functions as given in Equation 6. To enable between-cluster investigation, we propose using a cluster rest score
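A rough Python sketch of a manifest weak ICO check for one cluster pair compares conditional mean cluster scores (average item scores) within rest-score groups. The exact cluster rest score of the article is not reproduced here: this sketch assumes it is the total score on all items outside the two clusters being compared, which may differ from the authors' definition. Significance testing is omitted, and the function name and the threshold `minvi` are hypothetical.

```python
from collections import defaultdict


def weak_ico_pair(X, clusters, k, l, minvi=0.03):
    """Manifest weak ICO check for cluster k (easier) versus cluster l (harder).

    ASSUMPTION: the cluster rest score is the total score on all items outside
    clusters k and l. Returns (rest_score, mean_difference, group_size) for
    groups where the harder cluster's mean score exceeds the easier one's
    by more than `minvi`.
    """
    excluded = set(clusters[k]) | set(clusters[l])
    groups = defaultdict(list)
    for person in X:
        rest = sum(x for j, x in enumerate(person) if j not in excluded)
        score_k = sum(person[j] for j in clusters[k]) / len(clusters[k])
        score_l = sum(person[j] for j in clusters[l]) / len(clusters[l])
        groups[rest].append(score_l - score_k)
    return [(rest, sum(d) / len(d), len(d))
            for rest, d in sorted(groups.items()) if sum(d) / len(d) > minvi]


# Hypothetical data: three clusters of two items each.
clusters = [[0, 1], [2, 3], [4, 5]]
X = [[1, 1, 0, 0, 0, 0],
     [0, 0, 1, 1, 0, 0],
     [0, 0, 1, 1, 0, 0],
     [1, 1, 1, 1, 1, 1]]
print(weak_ico_pair(X, clusters, 0, 1))  # a violation in rest-score group 0
```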

In contrast, strong ICO requires an investigation at item level and will be accommodated by investigating the manifest order property as in Equation 6, with the modification that an order violation can only occur for items pertaining to a different item cluster. This results in manifest strong ICO:

To evaluate IIO for clustered items, we suggest replacing rest score
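Manifest strong ICO checks only pairs of items that belong to different clusters, and the direction of each such pair is fixed by the cluster order; pairs within a cluster enter only the IIO evaluation. The small Python helper below, whose name is hypothetical, enumerates both kinds of pairs for clusters listed from easiest to hardest.

```python
from itertools import combinations


def classify_item_pairs(clusters):
    """Split all item pairs into between-cluster and within-cluster pairs.

    clusters: list of clusters ordered from easiest to hardest, each a list of
    item labels. Between-cluster pairs are returned as (item of the easier
    cluster, item of the harder cluster), the direction in which violations
    are checked; within-cluster pairs carry no a priori direction.
    """
    between = [(i, j) for a, b in combinations(range(len(clusters)), 2)
               for i in clusters[a] for j in clusters[b]]
    within = [pair for c in clusters for pair in combinations(c, 2)]
    return between, within


between, within = classify_item_pairs([["a1", "a2"], ["b1", "b2", "b3"], ["c1"]])
print(len(between), len(within))  # 11 4
```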

Global Fit

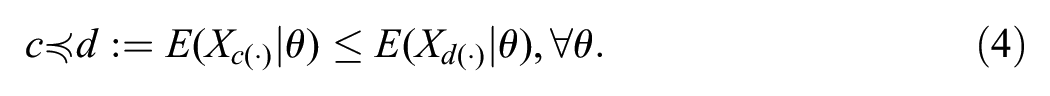

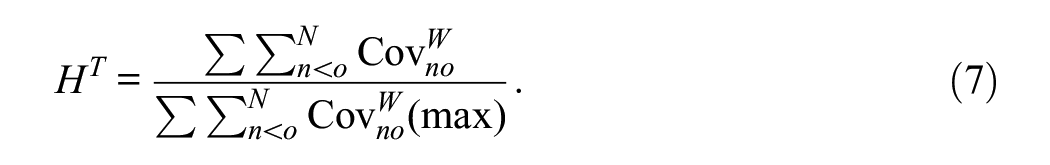

The practical use of coefficient

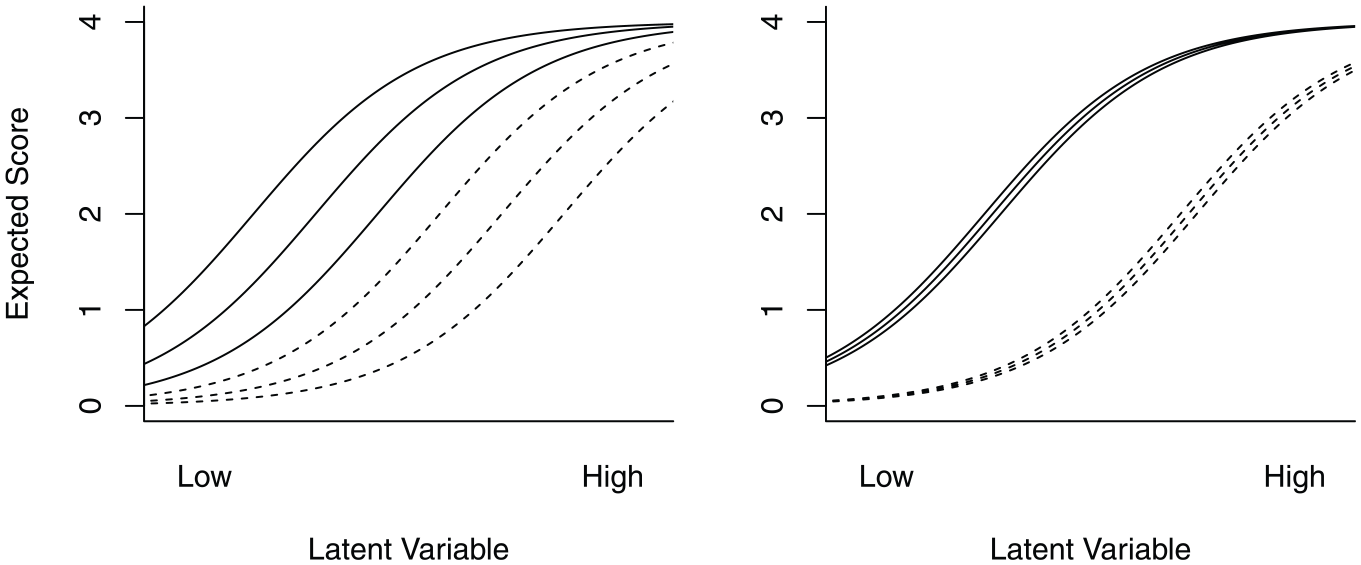

Left Panel: Two Clusters of Three Item Response Functions With Equidistant Difficulty. Right Panel: Two Clusters of Three Item Response Functions With Similar Difficulty. In Both Cases, Coefficient

We aim to distinguish between the ordering consistency of items and the ordering consistency of clusters. This can be achieved by evaluating not only the consistency of respondent scores within items but also the consistency of respondent scores between items within the same cluster. We approach this distinction with a strategy similar to that of Snijders (2001), who generalized

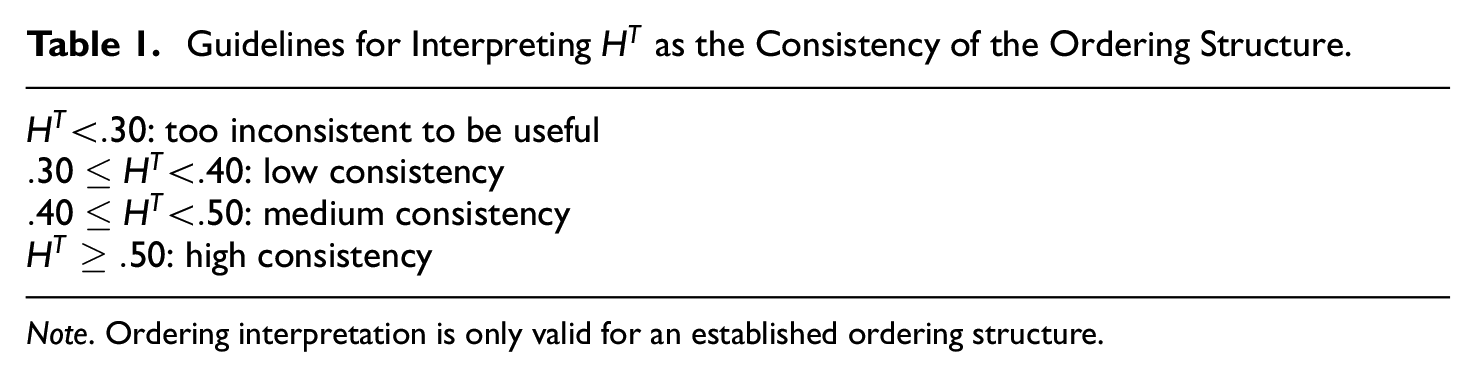

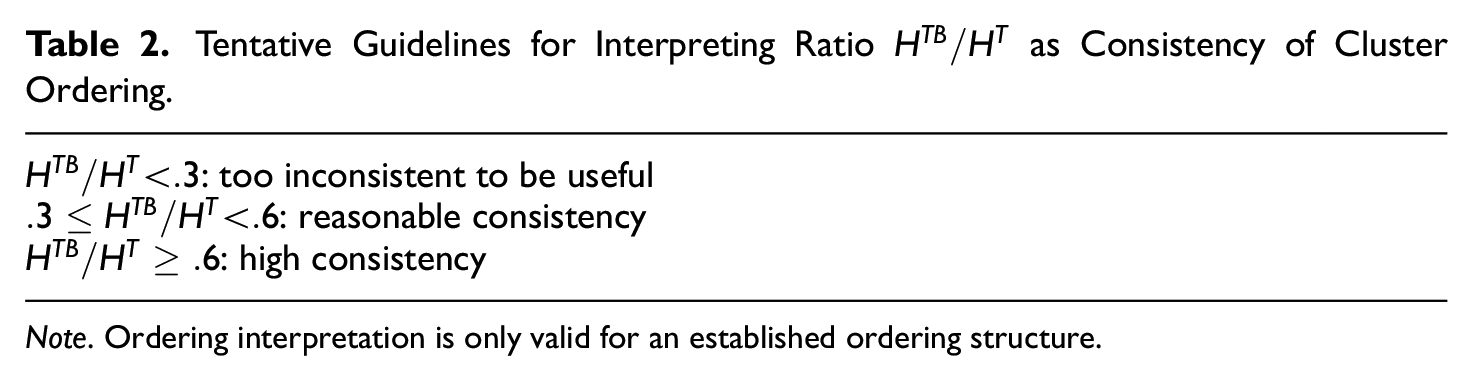

As tentative guidelines, we propose the following. First, we suggest, based on Ligtvoet et al. (2010), to interpret

Guidelines for Interpreting

Note. Ordering interpretation is only valid for an established ordering structure.

Tentative Guidelines for Interpreting Ratio

Note. Ordering interpretation is only valid for an established ordering structure.

Investigating the Ordering Structure of a Clustered Item Set

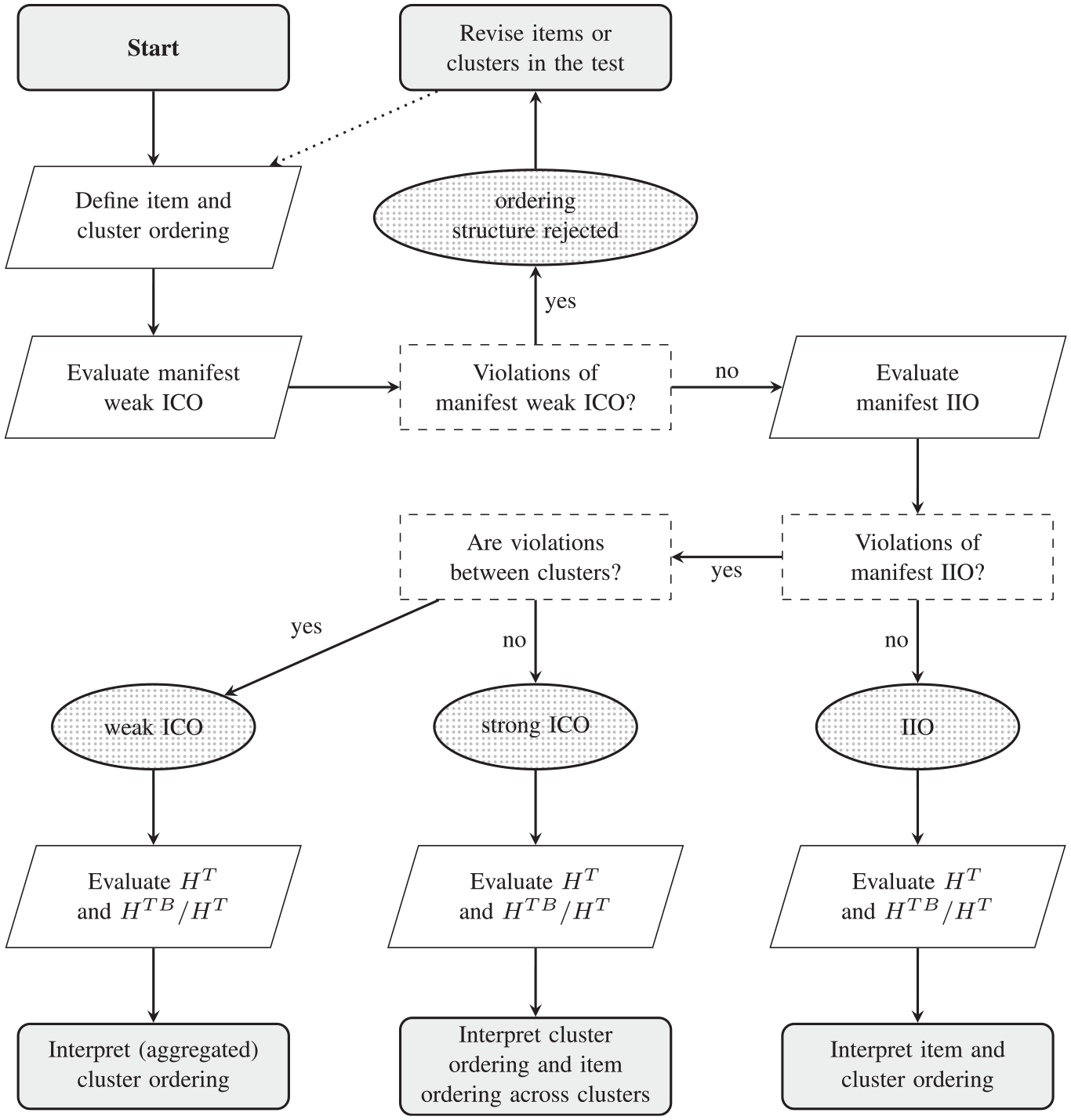

Figure 3 shows a flowchart of a structured procedure for investigating the ordering structure of a test with a given clustering of the items.

Flowchart of the Procedure for Investigating the Ordering Structure for a Given Set of Clustered Items

The procedure starts by defining the cluster ordering for the population. This ordering may be based on theory or estimated from the sample data (e.g., by ordering the clusters from easy to difficult). If desired, an item ordering within clusters may also be defined. If only a cluster ordering is defined, items within clusters are assumed to be unordered, which means that these items may be equally difficult or may violate IIO. Next, manifest weak ICO is evaluated for all cluster pairs. If significant violations of manifest weak ICO are present, any ordering structure is rejected, because a violation of weak ICO implies a violation of strong ICO and of IIO for item clusters. Note that this does not mean that the test has no ordering structure at all, only that the investigated structure does not hold for the a priori defined item and cluster order.

If there are no significant violations of manifest weak ICO, manifest IIO for item clusters is investigated, as this entails both manifest strong ICO and manifest IIO of item pairs within clusters. If significant violations of manifest IIO are revealed between clusters, it is concluded that only weak (and not strong) ICO holds for the test. Coefficient

If significant violations are only present within clusters, it is concluded that a strong ICO holds for the test. Coefficient

If no significant violations of manifest IIO are revealed, it is concluded that an IIO holds for the test. Coefficient

Revising the items, the clustering, or their defined order, or removing items or clusters, may result in a (stronger) ordering structure. The output of the analysis indicates which items or clusters are problematic. For example, clusters, or items within clusters, may subsequently be removed until the test satisfies a desired ordering structure. However, revising and removing items may substantially affect the empirical cluster ordering and may invalidate previously drawn conclusions pertaining to the weak ICO structure. Such an iterative procedure carries the same risks, and offers the same lack of guarantees, as (mis)specification searches in other psychometric contexts (see, e.g., MacCallum, 1986).
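The decision logic of the flowchart can be summarized in a few lines. The Python sketch below, with a hypothetical function name, maps counts of significant violations to the conclusion reached by the procedure; the coefficients computed at each end point and the optional revision loop are not modelled.

```python
def conclude_ordering(weak_ico_violations, between_violations, within_violations):
    """Map significant-violation counts to the conclusion of the procedure (sketch).

    weak_ico_violations: significant manifest weak ICO violations (cluster pairs).
    between_violations / within_violations: significant manifest IIO violations
    for item pairs in different clusters / in the same cluster.
    """
    if weak_ico_violations > 0:
        return "none"        # the defined cluster order is rejected
    if between_violations > 0:
        return "weak ICO"    # regardless of within-cluster violations
    if within_violations > 0:
        return "strong ICO"  # violations only within clusters
    return "IIO"


print(conclude_ordering(0, 0, 2))  # strong ICO
```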

Real-Data Example

As an illustration, we will look at item response data of

We investigated the ordering structure of the TROG using the procedure displayed in Figure 3. We implemented the proposed methodology in the

Descriptives

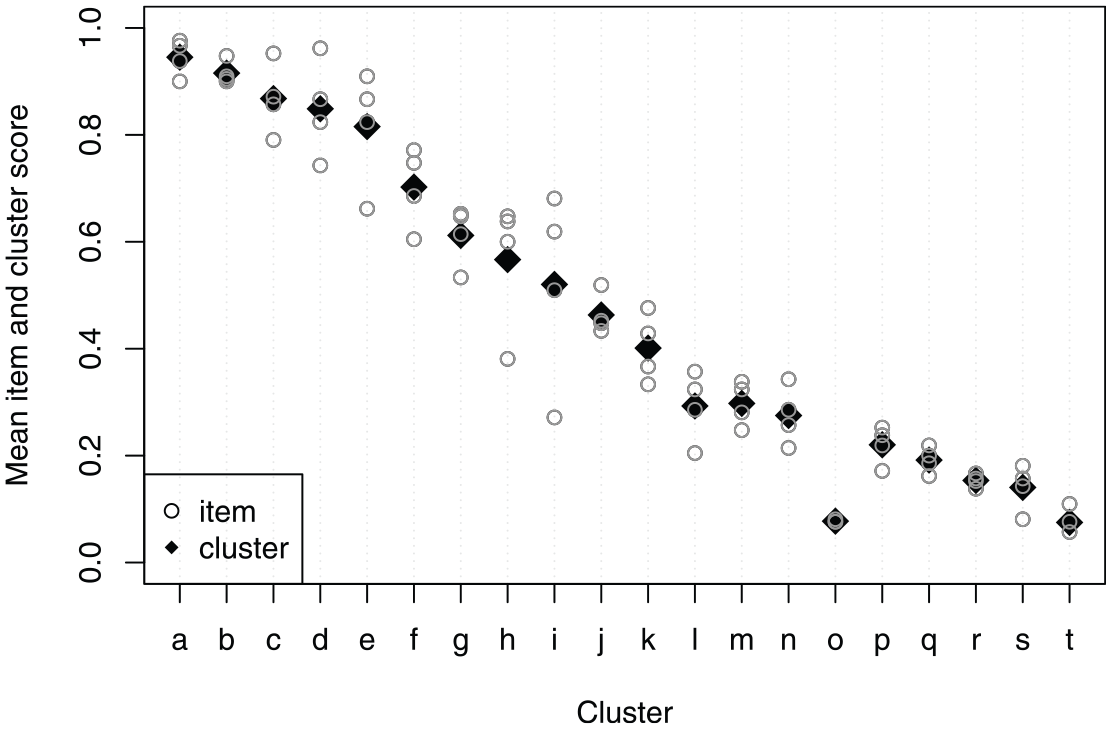

Figure 4 shows the mean item and cluster scores for the theoretically defined cluster ordering. In general, the defined cluster order coincides with the empirical cluster order (i.e., the order in mean cluster scores), but two clusters may be displaced. Cluster

Observed Mean Item Scores (Circles) and Mean Cluster Scores (Diamonds) for the 20 Theoretically Ordered Clusters (4 Items per Cluster) of the TROG in the Total Sample

Investigating the Ordering Structure

Local Fit

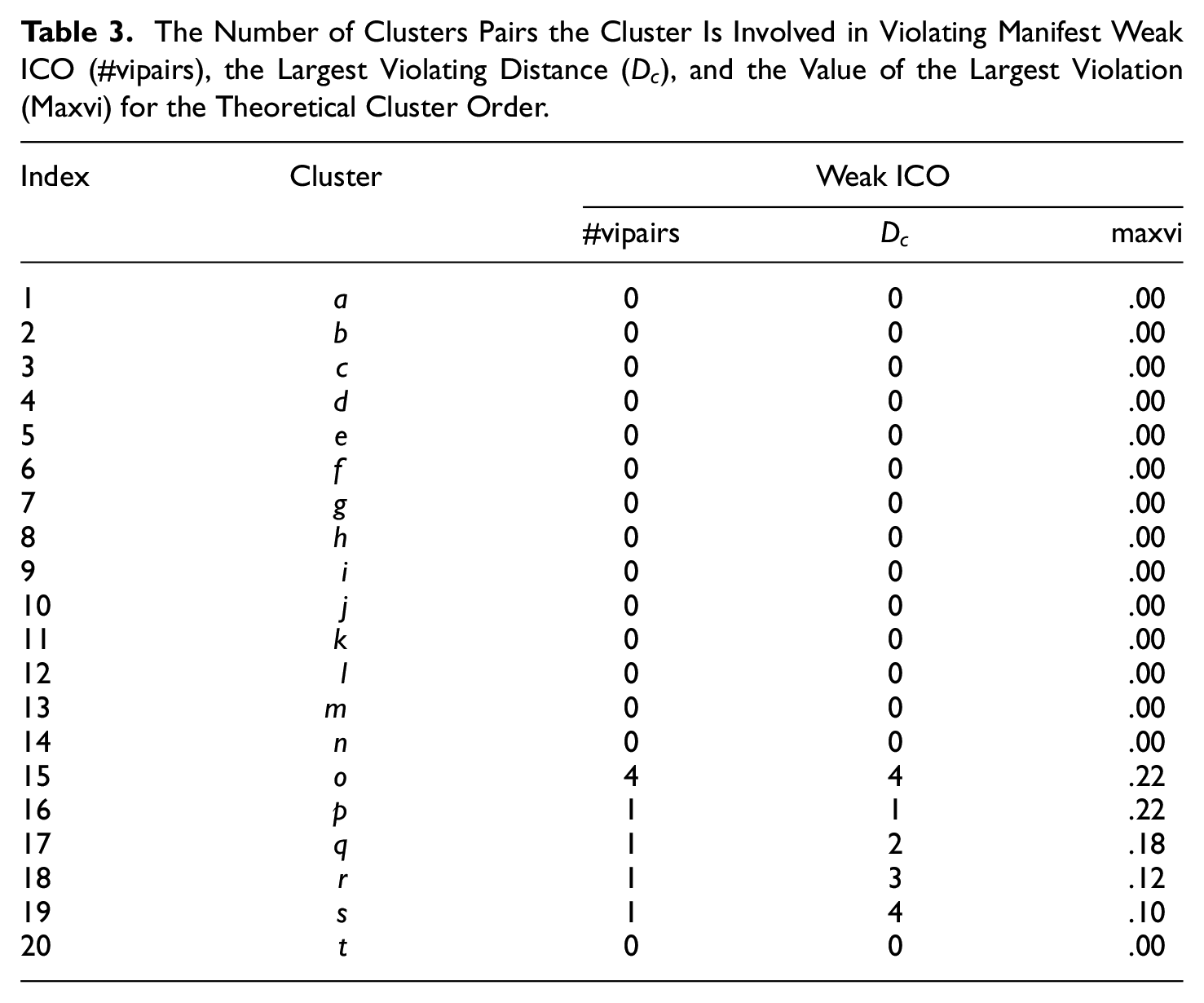

For each cluster, manifest weak ICO was evaluated pairwise against each of the other 19 clusters. Table 3 summarizes the local fit evaluation of the test's theoretical ordering structure and shows, for each cluster, the number of cluster pairs with at least one significant violation of manifest weak ICO (Column 3), the largest violating distance (i.e., the largest absolute distance between the indices of the violating cluster pairs; Column 4), and the value of the largest violation (i.e., the maximum observed pairwise difference between conditional cluster means; Column 5). There was at least one significant violation of manifest weak ICO for cluster
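The three summary columns can be derived from a list of violating cluster pairs. The Python sketch below uses a hypothetical function name and made-up input (not the TROG results); clusters are indexed by their position in the defined order.

```python
def summarize_violations(violations, n_clusters):
    """Per-cluster summary: (#vipairs, largest violating distance, largest violation).

    violations: list of (k, l, max_violation) for cluster pairs with at least
    one significant manifest weak ICO violation.
    """
    summary = [[0, 0, 0.0] for _ in range(n_clusters)]
    for k, l, size in violations:
        for c in (k, l):
            summary[c][0] += 1                           # number of violating pairs
            summary[c][1] = max(summary[c][1], abs(k - l))  # largest index distance
            summary[c][2] = max(summary[c][2], size)     # largest violation
    return [tuple(s) for s in summary]


# Hypothetical input: cluster 2 violates weak ICO with clusters 3 and 5.
print(summarize_violations([(2, 5, 0.12), (2, 3, 0.08)], 6))
```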

The Number of Clusters Pairs the Cluster Is Involved in Violating Manifest Weak ICO (#vipairs), the Largest Violating Distance (

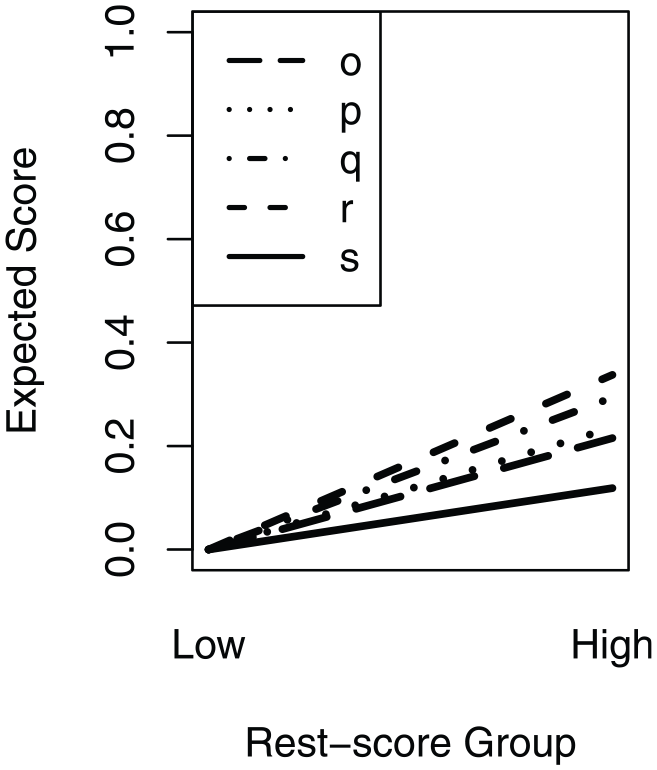

Figure 5 shows the estimated cluster response functions for clusters

Estimated Cluster Response Functions of Clusters

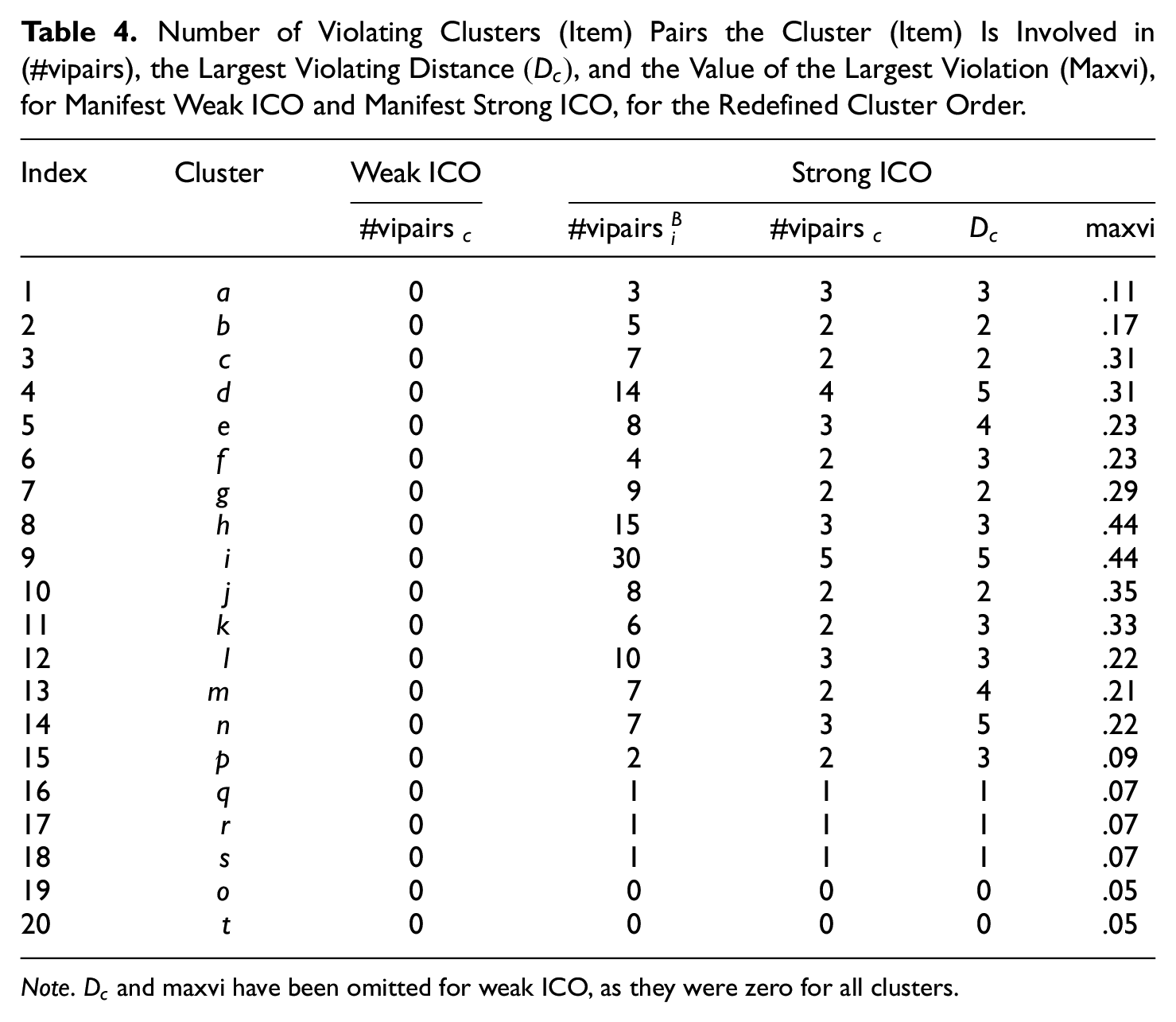

Number of Violating Clusters (Item) Pairs the Cluster (Item) Is Involved in (#vipairs), the Largest Violating Distance

Note.

Evaluating manifest weak ICO for the redefined cluster order revealed no significant violations (Table 4, Column 3). This confirms that the violations in the first manifest weak ICO analysis were due to the cluster being misplaced, rather than to the cluster ordering not being invariant.

We continued the analysis by evaluating manifest IIO for item clusters, as this covers both strong ICO and IIO. Here, we focus only on the results relevant to strong ICO; hence, each item was compared with the 76 items in different clusters (between), ignoring the three items in the same cluster (within). Across all
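The numbers of comparisons in this analysis follow directly from the design of 20 clusters with 4 items each:

```latex
\begin{aligned}
\text{items: } & 20 \times 4 = 80,\\
\text{comparisons per item: } & 80 - 1 = 79 = \underbrace{76}_{\text{between}} + \underbrace{3}_{\text{within}},\\
\text{between-cluster item pairs: } & \tfrac{80 \times 76}{2} = 3040,\\
\text{within-cluster item pairs: } & 20 \times \tbinom{4}{2} = 120,\\
\text{all item pairs: } & 3040 + 120 = 3160 = \tbinom{80}{2},\\
\text{cluster pairs (weak ICO): } & \tbinom{20}{2} = 190.
\end{aligned}
```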

Global Fit

Based on the results, we conclude that the ordering structure of the TROG with the redefined order is a weak ICO. The general consistency of the ordering structure of the test was evaluated with

A weak ICO allows for interpreting the ordering structure at the cluster level, but not at the individual-item level. Hence, even though the clusters are increasing in difficulty, individual items may be more or less difficult than expected based on their cluster. The weak ICO ordering structure supports the practice of administering the test in the theoretical cluster order, for which cluster

Discussion

For clustered items, an invariant ordering can apply at various levels and with various degrees of strictness. Furthermore, a distinction needs to be made between the ordering of items within a cluster, where no a priori order restrictions apply, and the ordering of items between clusters, where an ordering is required. Evaluating the ordering structure of a test benefits the interpretation of the test and its scores and is a prerequisite for various types of test administration, including presenting items in ascending difficulty and using starting and stopping rules in a sequentially ordered test. In this article, we distinguished three increasingly strict types of ordering to describe the ordering structure of a measurement instrument with item clusters. Weak ICO requires an invariant ordering at the aggregate level of cluster scores, whereas strong ICO requires the invariant ordering to apply directly at the item-score level for all pairs of items belonging to different clusters. IIO is the strongest property, requiring all items to be invariantly ordered, both between and within clusters.

Building on nonparametric IRT, we proposed a procedure for evaluating the ordering structure of a clustered item set according to these sequentially stricter invariant ordering properties. These properties are investigated by evaluating violations of manifest weak ICO for all cluster pairs and manifest IIO for all item pairs, where the latter distinguishes between within- and between-cluster violations. To support this investigation of local ordering violations, we introduced coefficient

Besides being intuitive and flexible, the procedure is computationally straightforward, making it an attractive tool for practitioners concerned with the validity of the ordering structure of their measurement instrument. For even better accessibility, we implemented the methodology in function

The suggested analysis is confirmatory in nature: it evaluates the ordering structure of a fixed clustering of items, after which the ordering or clustering may be manually adapted. Hence, this approach is an important part of investigating the construct validity of an instrument that in theory has an ordering structure. Future simulation studies can provide insight into how the method performs under different types and severities of violations and different cluster characteristics, and they may update the suggested guidelines for the global fit coefficients. In addition, items may not be naturally clustered, requiring a more exploratory approach. In that case, items may be ordered by their observed item means, and the manifest IIO method may then be used to combine items into clusters until the desired ordering structure holds (see also Van der Ark & Van Diem, 2003). From this perspective, generalizations of the approach toward more exploratory detection of item clusters, weighting violations between items by neighborhood proximity, could be of interest for future work. Simulation studies could also help to evaluate the performance of different methods, how (well) clusters are constructed, and the preferred number of clusters, items, and respondents. Computationally, the proposed methods work if there are at least three clusters containing at least two items each (although the number of items may vary across clusters). Moreover, future research can investigate the relation between the ordering structure of a test and guidelines for adaptive test administration, such as starting and stopping rules.

We defined the cluster score as the average item score within the cluster, so that the cluster score is on the same scale as the item scores and cluster sizes may vary. Our results and procedures do not translate straightforwardly to other types of cluster scores, such as an “all items correct” or an “at least one item correct” score. For such dichotomized cluster scores, both the expected cluster score and the cluster response function differ, possibly leading to a different observed order of cluster scores.
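A toy Python example shows how the choice of cluster score can reverse the observed order of two clusters. The responses are made up for illustration, and only marginal means are compared, not full cluster response functions.

```python
def mean_cluster_score(responses, score):
    """Mean cluster score over respondents under a given cluster-scoring rule."""
    return sum(score(r) for r in responses) / len(responses)


def average(r):       # definition used in the article: mean item score in the cluster
    return sum(r) / len(r)


def all_correct(r):   # dichotomized: 1 only if every item in the cluster is correct
    return float(all(r))


def at_least_one(r):  # dichotomized: 1 if any item in the cluster is correct
    return float(any(r))


# Hypothetical 0/1 responses of four persons to two clusters of two items each.
cluster_a = [(1, 1), (1, 0), (1, 0), (0, 1)]
cluster_b = [(1, 1), (1, 1), (0, 0), (0, 0)]

for rule in (average, all_correct, at_least_one):
    print(rule.__name__, mean_cluster_score(cluster_a, rule), mean_cluster_score(cluster_b, rule))
# average 0.625 0.5
# all_correct 0.25 0.5
# at_least_one 1.0 0.5
```

Cluster A appears easier under the average and the "at least one item correct" rules but harder under the "all items correct" rule.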

In addition, we pursued a nonparametric IRT approach, placing no restrictions on the item or cluster response functions beyond the ordering structure, and the procedure used standard asymptotic tests for pairwise comparisons. Alternative procedures may be developed along the lines of, for instance, shift-scale parametric item response models (Haaf et al., 2020), resampling techniques such as permutation testing (e.g., Chung & Romano, 2013) for violations of pairwise manifest IIO, or Bayes factors for sets of order hypotheses (Van de Schoot et al., 2012).

The procedure for evaluating the ordering structure of a clustered item set may be used as part of a more elaborate scaling analysis, such as Mokken scale analysis (e.g., Mokken, 1971; Sijtsma & Molenaar, 2002; Sijtsma & Van der Ark, 2017). Other methods in such an analysis provide insight into other test and item characteristics, such as the scalability of items, whether the item response functions are monotonically increasing, or whether items are locally dependent. Furthermore, for a more systematic investigation of construct validity, the proposed method may be complemented with the investigation of other item properties or attributes, for example, by incorporating them into an explanatory (parametric) item response model as proposed by De Boeck and Wilson (2004, Section 1.4). In general, investigating the ordering structure of a test can provide useful information, but the decision to redesign or remove items or clusters should also be based on item content and theoretical considerations, to avoid losing important aspects of the theoretical construct in the measurement instrument.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported by the Gustafsson & Skrondal visiting scholarship 2022 of the Centre for Educational Measurement at the University of Oslo for the first author and a research grant (FINNUT-342925) of the Norwegian Research Council for the second author.