Abstract

Setting cutoff scores is one of the most common practices when using scales to aid in classification purposes. This process is usually done univariately where each optimal cutoff value is decided sequentially, subscale by subscale. While it is widely known that this process necessarily reduces the probability of “passing” such a test, what is not properly recognized is that such a test loses power to meaningfully discriminate between target groups with each new subscale that is introduced. We quantify and describe this property via an analytical exposition highlighting the counterintuitive geometry implied by marginal threshold-setting in multiple dimensions. Recommendations are presented that encourage applied researchers to think jointly, rather than marginally, when setting cutoff scores to ensure an informative test.

Introduction

The use of measures and scales to classify individuals is one of the oldest and perhaps most controversial uses of testing to this day (Kaplan & Saccuzzo, 2017; Loewenthal & Lewis, 2018; Thorndike et al., 1991). From the early days of the Stanford-Binet test for mental capacity to modern computerized adaptive testing, relying on tests to classify people into groups or rank them according to some trait or (latent) ability has been of major interest. To understand the scope of this prevalence, consider the following. According to the College Board (2019), in the United States alone 2.2 million students took the SAT and more than 8 million students took a test from the SAT suite of tests during the 2018-2019 school year. For the GRE, more than a half million people took the test in the past year alone (Educational Testing Services, 2019). Large-scale educational testing has become a critical component of a high school student’s aim to be selected to a prestigious college, university, or other postsecondary program, and the decision hangs, at least in part, on whether the student is above or below a certain cutoff score on those tests, usually derived from their quantile position with respect to other students (Soares, 2015).

Given the relevance that testing has in helping classify, rank, or diagnose individuals, various approaches have been developed to find optimal decision points along a scale above (or below) which an individual must score before she or he is categorized (Cizek, 2006; Habibzadeh et al., 2016; Kaftandjieva, 2010). The immediate question then becomes how should this threshold be determined? Since many of the conclusions derived from a test administration depend on whether or not the threshold has been chosen as correctly as possible to match the intended uses of the test, this question is among the most salient.

In the majority of cases, the process of setting thresholds depends on whether a test is norm-referenced or criterion-referenced (Hambleton & Novick, 1973). Norm-referenced tests compare a test-taker’s responses with the responses of their peers in order to create a distribution of scores along which each respondent can be located. Criterion-referenced tests specify a cutoff score in advance, which does not change irrespective of the performance of the test-takers.

Perhaps one of the most widely used classification systems for cutoff-setting methods is the Jaeger (1989) system, which divides them into test-centered and examinee-centered methods. Test-centered methods seek to establish threshold values based on the characteristics of the test, the items, or the scoring process, whereas examinee-centered methods rely on the particular characteristics of the test-takers to set the cutoff scores. In spite of the importance of considering the characteristics of test-takers during the scoring process, test-centered methods are more widely used, and we will offer a brief summary of some methods that are popular among researchers and test developers (Kane, 1998; Lewis & Lord-Bessen, 2017). For a more extensive discussion of examinee-centered methods, please consult Kaftandjieva (2010).

Judgment-based methods encompass procedures such as those described in Angoff (1971), Ebel (1972), Jaeger (1982), and Nedelsky (1954). The commonality among these (and other) methods is that the impressions of subject-matter experts and experienced researchers in the domain area play a role in deciding which items or test scores should be used as thresholds. They usually intersect with other types of statistical analyses, but the emphasis is placed on the subjective evaluation and agreement among expert judges regarding what the cutoff score should be.

There are also a variety of statistically oriented techniques where the emphasis is on the score distribution and cutoffs are set based on whether or not this distribution has certain properties. One of the oldest and perhaps most popular methods still being used within the applied psychometric literature is selecting the threshold value to correspond to a certain number of standard deviation (SD) units above or below the mean, usually under the assumption of normally distributed data. Traditionally, a value beyond 2 SD units is selected to emphasize the fact that individuals are categorized on the basis of their extreme scores, which differentiates them from what a “typical” score respondent would look like. Scales such as the Ages and Stages Questionnaire (Bricker et al., 1988), the Minnesota Multiphasic Personality Inventory (Schiele et al., 1943) and the Early Development Instrument (Janus & Offord, 2007) have relied on this method, at least in their initial conceptualization. A closely related method that is widely used in the health sciences is setting cutoff values based on percentiles (Loewenthal & Lewis, 2018). In this scenario, the threshold values would also come from a normative sample, but the difference from the SD units described above is that a particular cumulative probability is used as a decision point, as opposed to a value away from the mean. Both methods are intrinsically related, though, since one could switch from one approach to the other under the assumption that the random distribution’s parameters are known. For instance, if

As computational power became more readily available, more sophisticated approaches also became popular among researchers interested in developing new measures. A particularly popular one, which comes from signal detection theory, is the receiver operating characteristic (ROC) curve. In essence, ROC curve analysis attempts to solve a binary classification problem by finding the optimal points that balance the classifier’s true positive rate (also known as the probability of detection, or sensitivity) and its false positive rate (also known as the probability of false alarm, or one minus specificity; Fawcett, 2006; Zou et al., 2016). Ideally, the point on the curve that offers the best balance between sensitivity and specificity provides researchers with an optimal cutoff value to use as a threshold. Since the popularization of ROC curve analysis in psychometrics came after the use of the percentile or the SD method, many scales have collected further validity evidence by analyzing new data using this technique, such as the Beck Depression Inventory-II (Beck et al., 1996) or the revised version of the Ages and Stages Questionnaire (Squires et al., 1997). For a more exhaustive overview of how to use ROC curve analysis, please refer to Fawcett (2006).
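As a minimal sketch of how a cutoff can be read off an ROC analysis, the following Python snippet scans every observed score as a candidate threshold and keeps the one that maximizes Youden's J (sensitivity + specificity - 1). Youden's J is only one of several possible balancing criteria and is an assumption made here for illustration; the toy scores and labels, as well as the function names, are hypothetical and do not come from any of the scales cited above.

```python
def roc_points(scores, labels):
    """Return (threshold, sensitivity, specificity) for each candidate cutoff.

    A respondent is flagged positive when score >= threshold.
    labels: 1 = truly positive, 0 = truly negative.
    """
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    points = []
    for t in sorted(set(scores)):
        tp = sum(1 for s, y in zip(scores, labels) if s >= t and y == 1)
        tn = sum(1 for s, y in zip(scores, labels) if s < t and y == 0)
        points.append((t, tp / n_pos, tn / n_neg))
    return points


def youden_cutoff(scores, labels):
    """Pick the cutoff maximizing Youden's J = sensitivity + specificity - 1."""
    return max(roc_points(scores, labels), key=lambda p: p[1] + p[2] - 1)


# Hypothetical toy data: higher scores indicate the target condition.
scores = [3, 5, 6, 8, 9, 11, 12, 14, 15, 17]
labels = [0, 0, 0, 0, 1, 1, 0, 1, 1, 1]

threshold, sensitivity, specificity = youden_cutoff(scores, labels)
print(threshold, sensitivity, specificity)
```

Note that this procedure operates on a single score at a time, which is exactly the marginal, subscale-by-subscale logic examined in the remainder of the article.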

Item response theory is perhaps the most advanced theoretical framework devoted to the development and analysis of tests and measures. Item response theory approaches attempt to relate the probability of item responses to hypothesized, true latent traits (usually designated by the Greek letter

Irrespective of which method is employed to set the threshold values, their practical and applied use is very similar across scales. Once a respondent scores above or below the predefined cutoff, she or he is assigned to a particular category to complement the process of assessment or to aid in some diagnostic procedure. As we will unpack in the following sections, such a process imposes a particular geometry on the resulting categories. When these categories are the product of cutoff scores from many subscales set independently, we will show that such assessments quickly lose their value; that is, lose their ability to meaningfully discriminate members of one category from another. Even when a categorization is based on as few as 4 subscale scores, as many as 25% of sample subjects will be unreliably classified (see section Empirical Demonstrations). Such tests thus may be considered to have questionable discriminatory reliability simply because of how they create their diagnostic categorizations marginally over multiple dimensions/subscales of the target phenomenon.

Theoretical Framework

Reliably Classified Individuals

Throughout this article, the method of setting threshold values based on being above or below the mean plus or minus a certain number of SD units will be employed as our primary working example, as we consider it to be the easiest one to understand (and one of the oldest ones still in use). Nevertheless, it is important to point out that similar conclusions would be found if different methods, as discussed in the Introduction, were implemented.

Anytime one employs an imperfect measurement (i.e., test) to classify individuals into groups, some sample individuals will eventually be misclassified. Intuitively, the less reliable a test, the less reliable the classifications. We mean to invoke both the intuitive and the technical meaning of reliability here, as defined classically in, for example, Lord and Novick (1968). Indeed, low reliability of a test necessarily implies that individuals with the same (latent) true score will likely be assigned substantially different observed scores by the test. The less reliable the test, the more variation we will observe in these observed scores for otherwise interchangeable sample respondents (interchangeable in that they all share a true score/ability). And by the same token, a perfectly reliable test would perfectly distinguish sample individuals according to their (latent) true scores/abilities; thus, classification arising from such a test would be error-free.

We do not intend to dwell here on the exhausting number of ways to quantify reliability that have been proposed and argued in the literature, as our point only requires the acknowledgment that a test that is not perfectly reliable in the population will inevitably lead to misclassification when people are split into groups that are supposed to reflect some latent ability/trait according to their observed scores on the test. Those individuals whose observed scores fall near the boundary of these group definitions are then the most likely to be misclassified. A few examples will help us illustrate this.

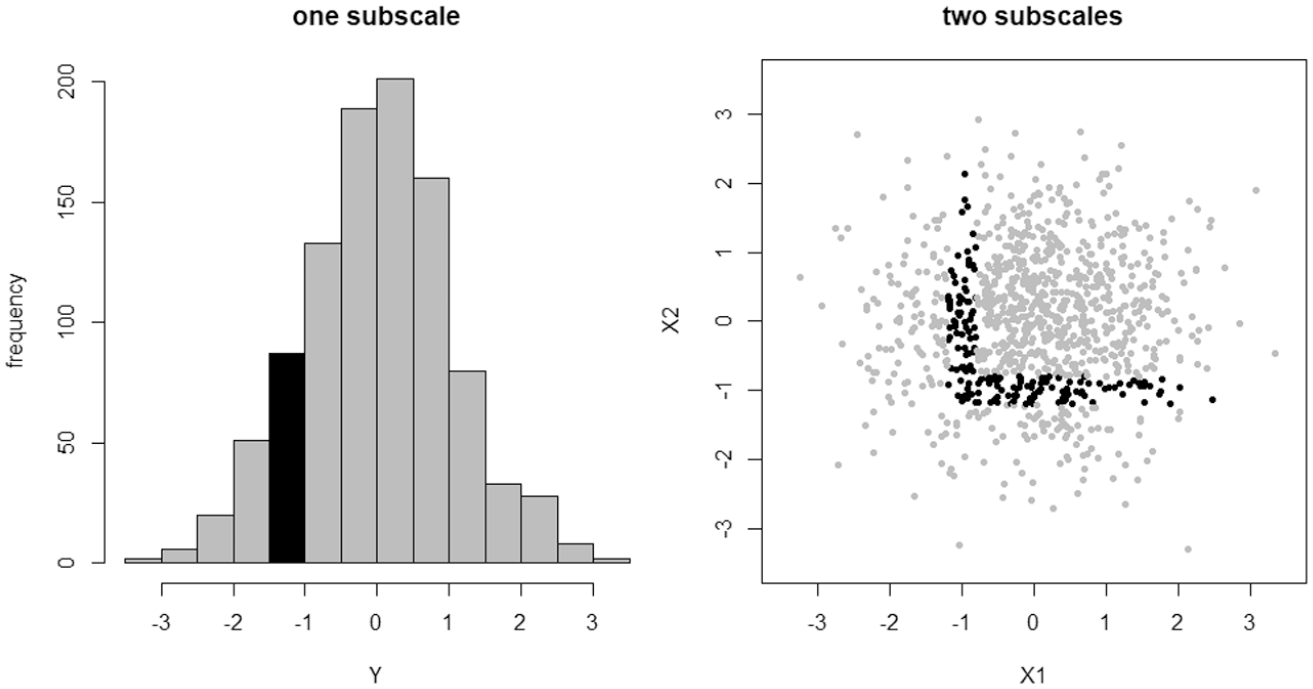

Let

Under test

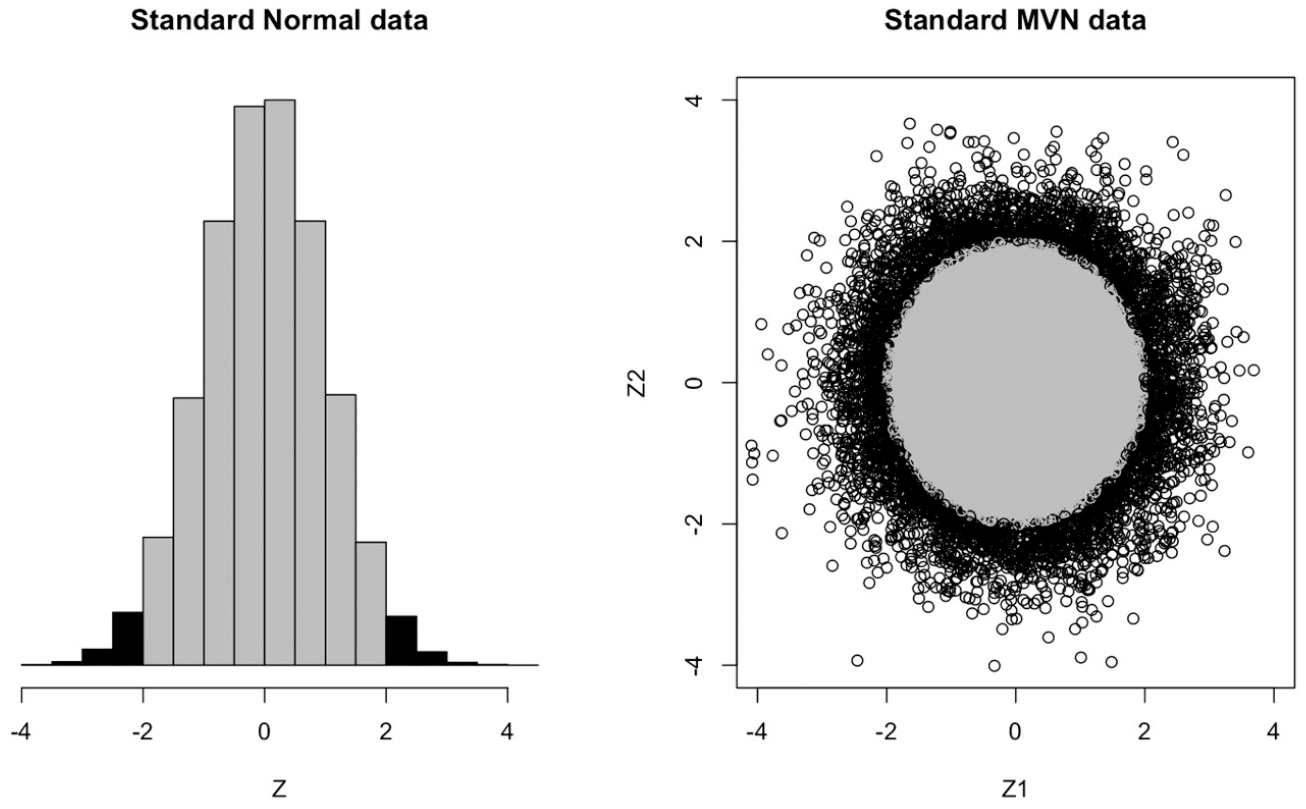

Unreliably classified individuals (black points) from a one subscale or two subscale (uncorrelated) test where cutoffs have been set independently. Approximately 9% of sample individuals are unreliably classified under test

Now consider the analogous situation under test

What we see here is that a test with two subscales and independently set cutoff scores creates a considerably higher proportion of unreliably classified individuals than an analogous test on only one (sub)scale. The situation gets worse as we continue to add subscales (see the next section), until eventually virtually the entire sample will be unreliably classified.

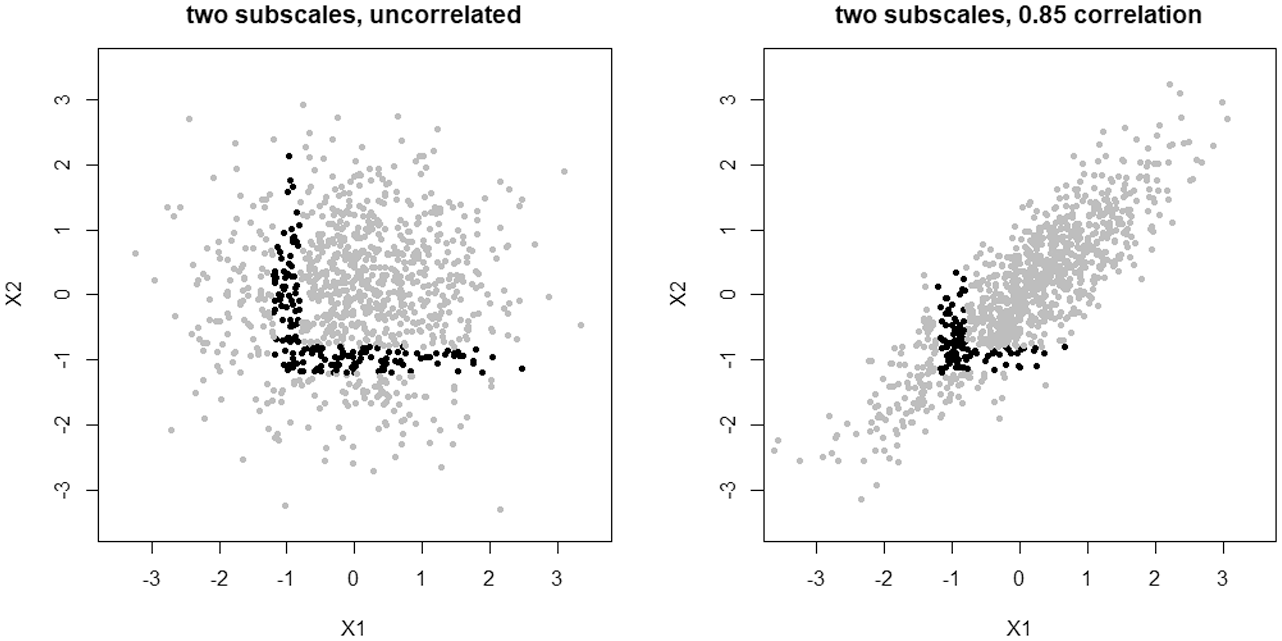

There are two ways one could hope to address this problem. One would change how the subscales relate to each other; the other would change how the pass/fail boundary is defined. Considering the first possibility, we will see in the next section that inducing correlation between subscales can slow but not ultimately prevent the problem. Indeed, this can be visualized as in Figure 2. Here, the previous two subscale test

Two tests, each composed of two subscales with

The second option, then, is where we can hope to avoid this problem. In the next section, we state and prove a theorem that quantifies just how informative a test can be as the number of subscales to be (independently) thresholded increases, assuming normal data. More precisely, we show that as the number of subscales increases, a test determined by independently setting thresholds at each subscale necessarily loses all of its ability to discriminate between “passing” and “failing” individuals; that is, all sample respondents will become unreliably classified, indistinguishable up to ordinary measurement error. This suggests that the way forward requires setting cutoff scores jointly across subscales, rather than marginally/independently.

Theoretical Results

We first present our main result and its proof. In the subsequent subsections, we unpack the practical meaning of this result for applied practice, with a focus on the geometrical implications of threshold-setting in higher dimensions (i.e., with many subscales). We focus on the case of multivariate normality for two important reasons. First, because it appears that (either explicitly or implicitly) this is the standard assumption made regarding the joint distribution of subscale scores in most testing situations. And second, because the requisite mathematics are reasonably accessible under a multivariate normal structure.

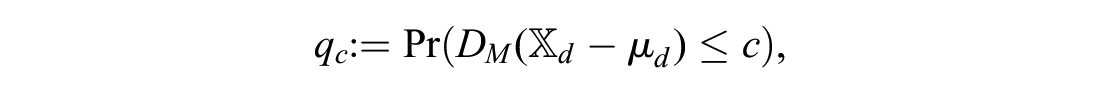

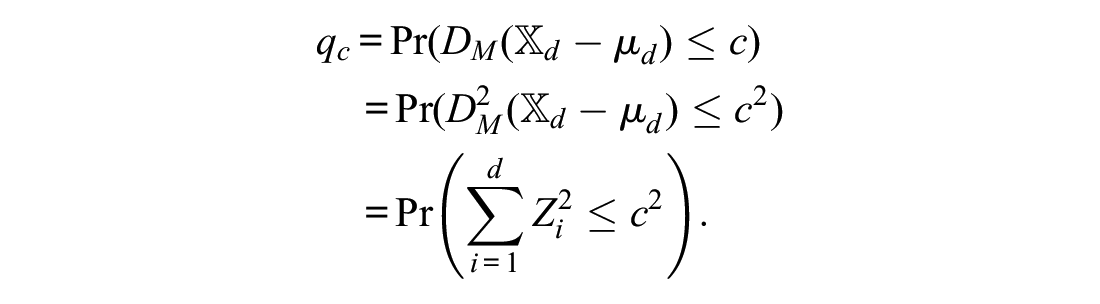

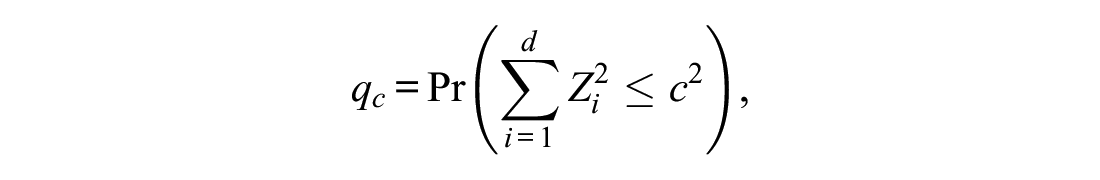

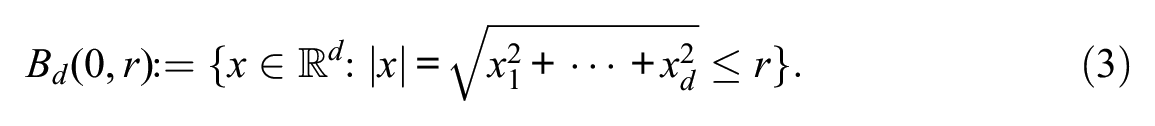

where

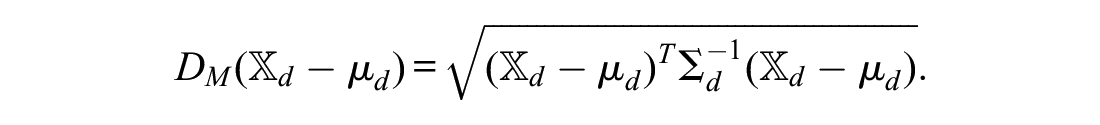

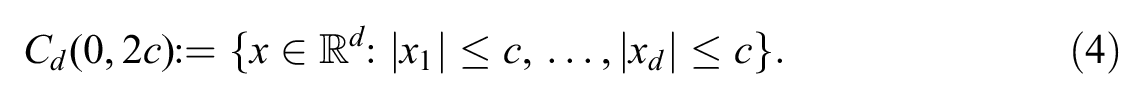

Also denote

where

In one dimension,

At the same time, in one dimension,

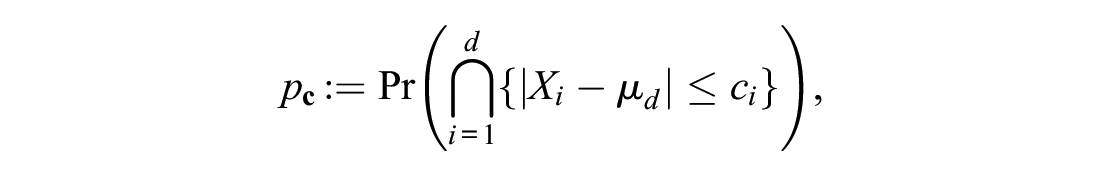

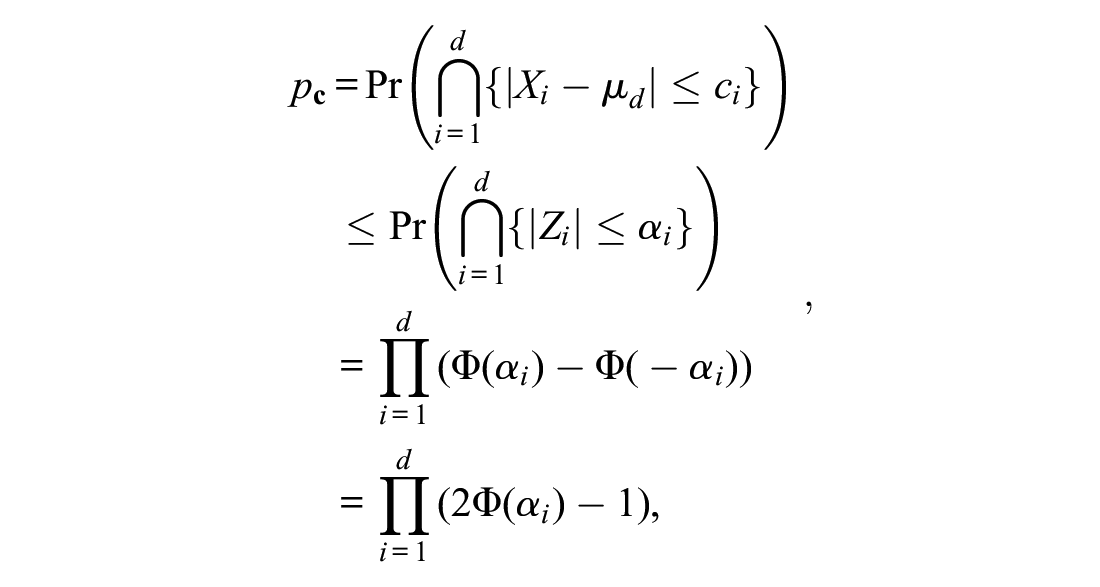

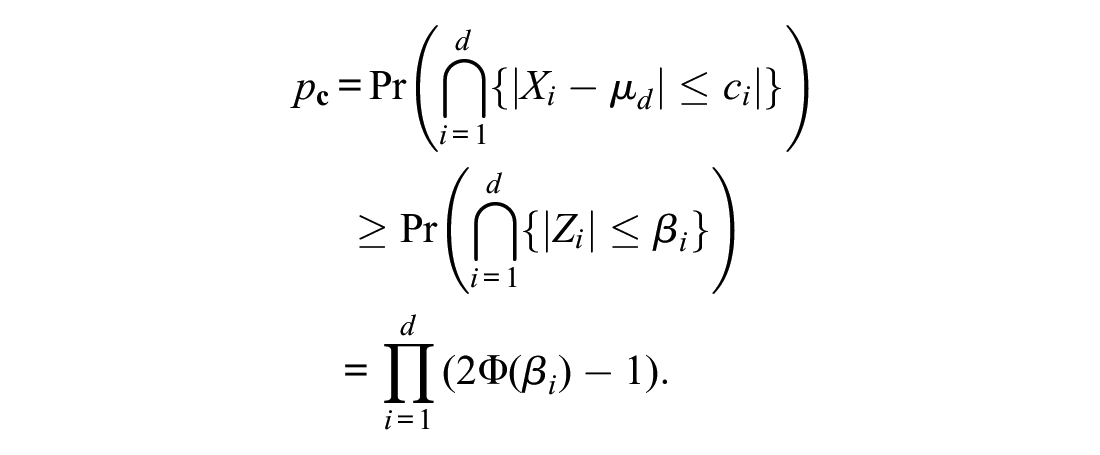

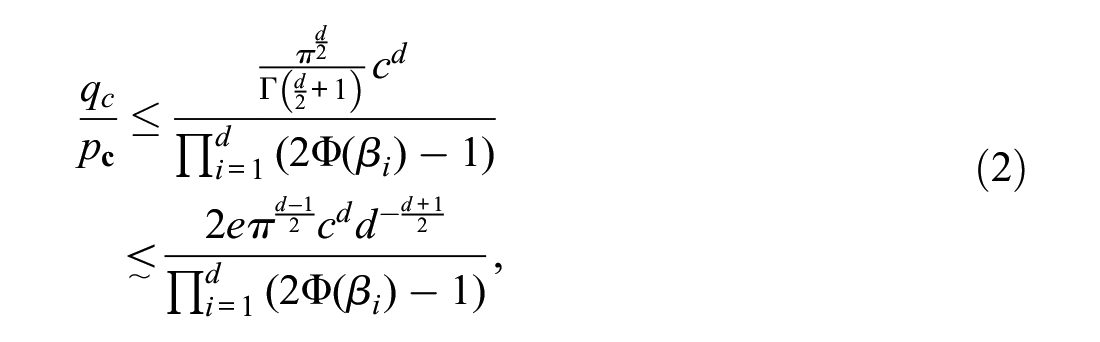

Proof of Theorem 1. We first convert

where

Note that, applying the central limit theorem and then standardizing, one recovers the classical result that

It is in fact also true that

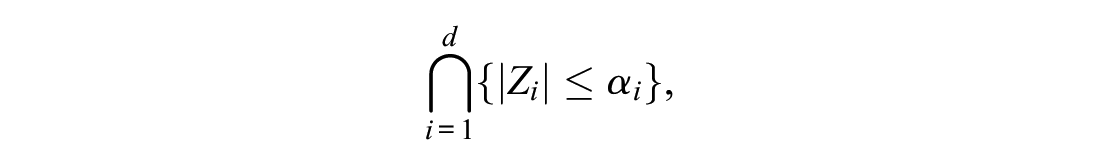

describes a box in

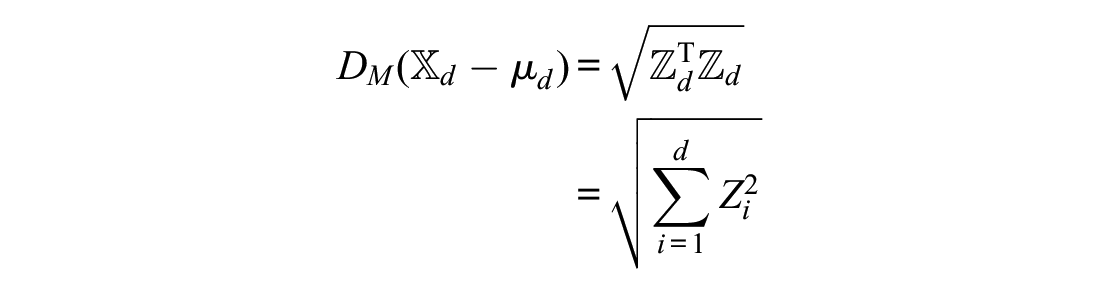

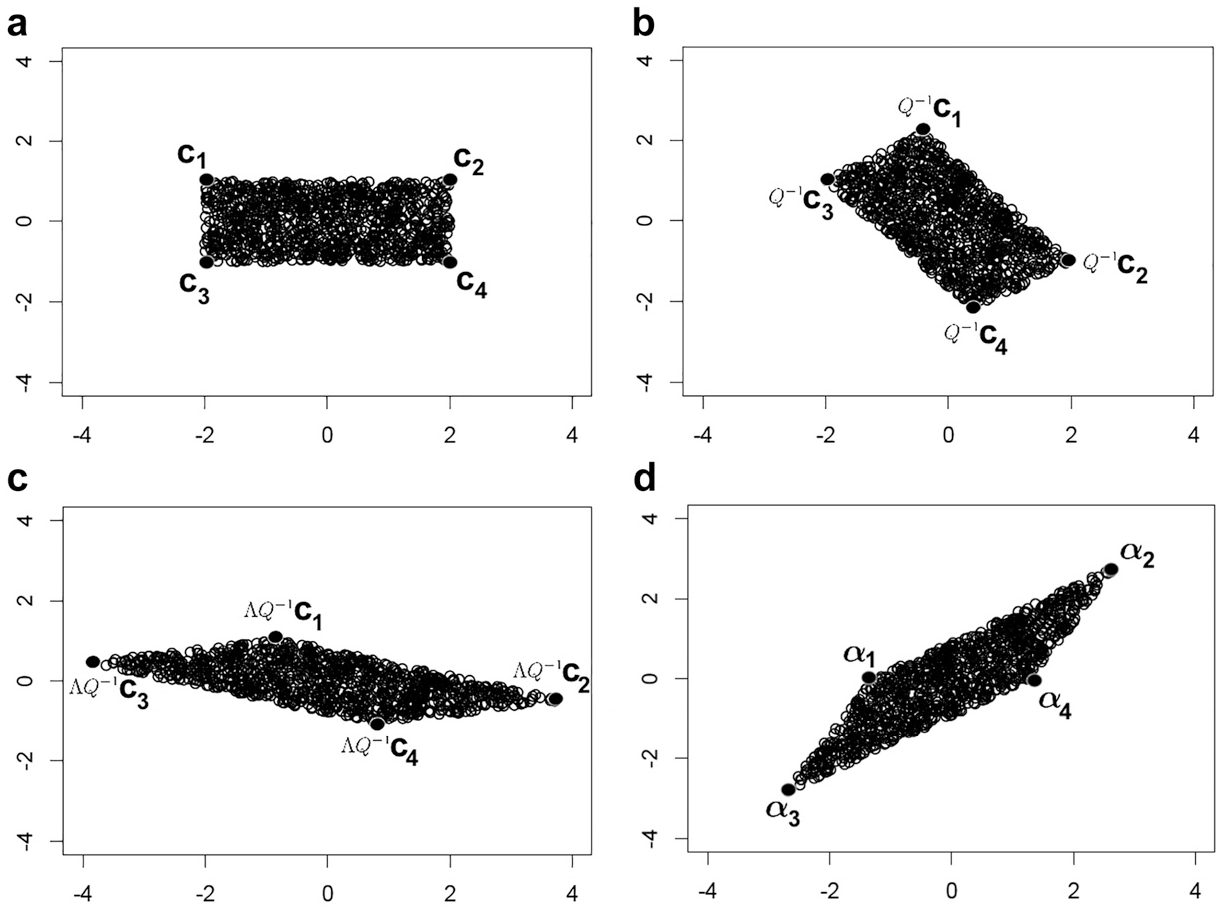

Transforming (a) a box/rectangle into a parallelepiped/parallelogram, via the spectral decomposition of

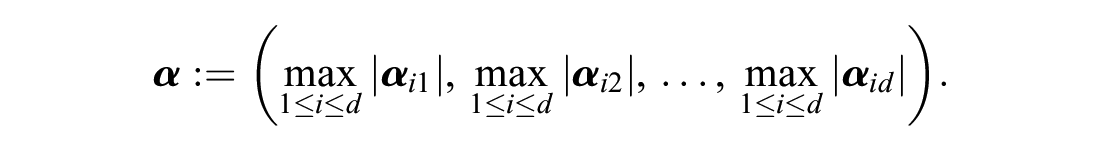

Define

Consider the event

where

and we are now in a position to take advantage of the simple structure of

where

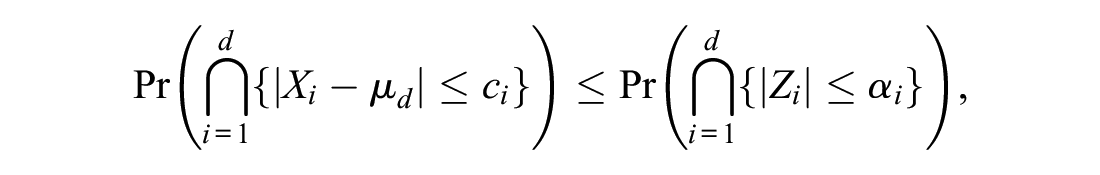

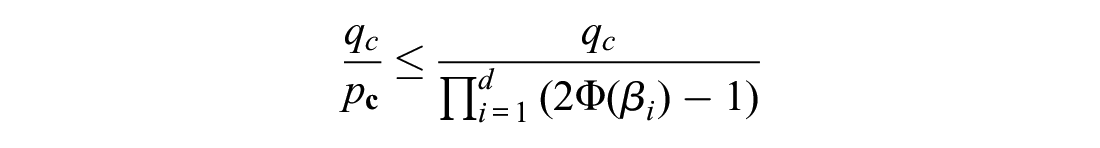

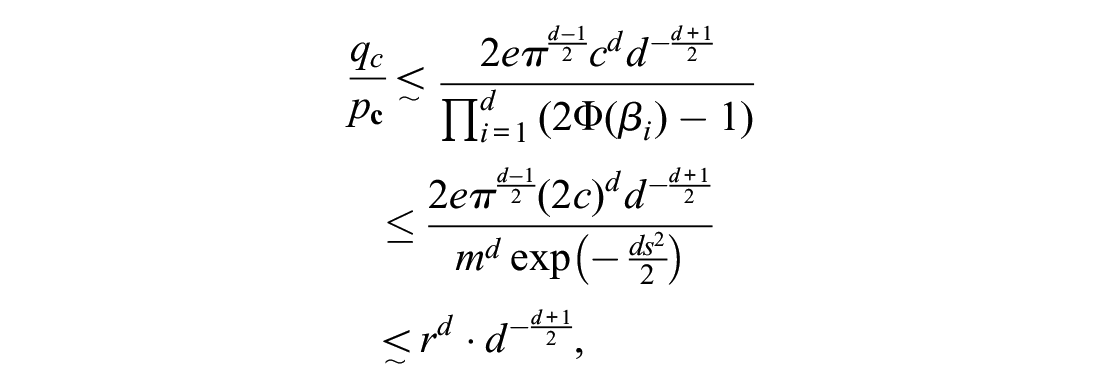

Now, to prove that the ratio

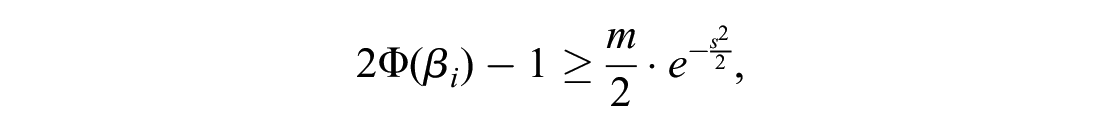

We need to ensure that this box does not degenerate as

Now, we may construct a lower bound on

Note that since

As we have already seen, the numerator can be expressed as

where

where the “

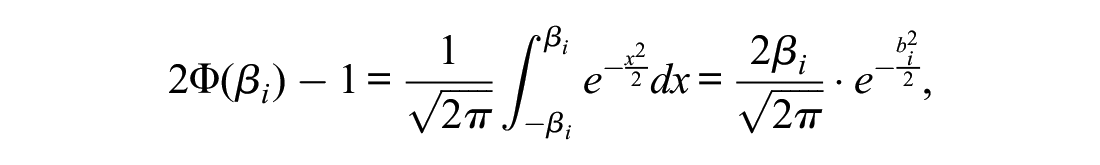

For the denominator of (2), we apply the mean value theorem to find

for some

for each

for some positive constant

Geometry of Cutoff Scores

The consequences of Theorem 1 for the discriminatory power of a test defined by setting cutoff scores in multiple subscales demand a closer consideration of the ambient geometry. While practitioners are generally quite comfortable with intuiting univariate phenomena, this intuition can break down severely in higher dimensions.

We first reiterate that the quantities

In

In two dimensions, this is all points on or in the circle of radius

In two dimensions, this is all points on or in the square of sidelength

Notice that

(a) Approximately 95% of the probability density lies within two units of zero of the univariate standard normal. (b) Approximately 86% of the probability density lies within two units of zero of the bivariate standard normal.
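The two percentages in this caption can be checked directly. The sketch below assumes independent standard normal components, so that the squared distance from zero in two dimensions follows a chi-square distribution with 2 degrees of freedom; the function names are illustrative only.

```python
import math


def prob_within_radius_1d(r):
    """P(|Z| <= r) for a univariate standard normal Z."""
    return math.erf(r / math.sqrt(2))


def prob_within_radius_2d(r):
    """P(Z1^2 + Z2^2 <= r^2) for two independent standard normals.

    The squared distance is chi-square with 2 degrees of freedom,
    whose CDF is 1 - exp(-x / 2).
    """
    return 1 - math.exp(-r**2 / 2)


print(round(prob_within_radius_1d(2), 4))  # 0.9545
print(round(prob_within_radius_2d(2), 4))  # 0.8647
```

The same radius of two units therefore captures noticeably less probability as soon as a second dimension is added.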

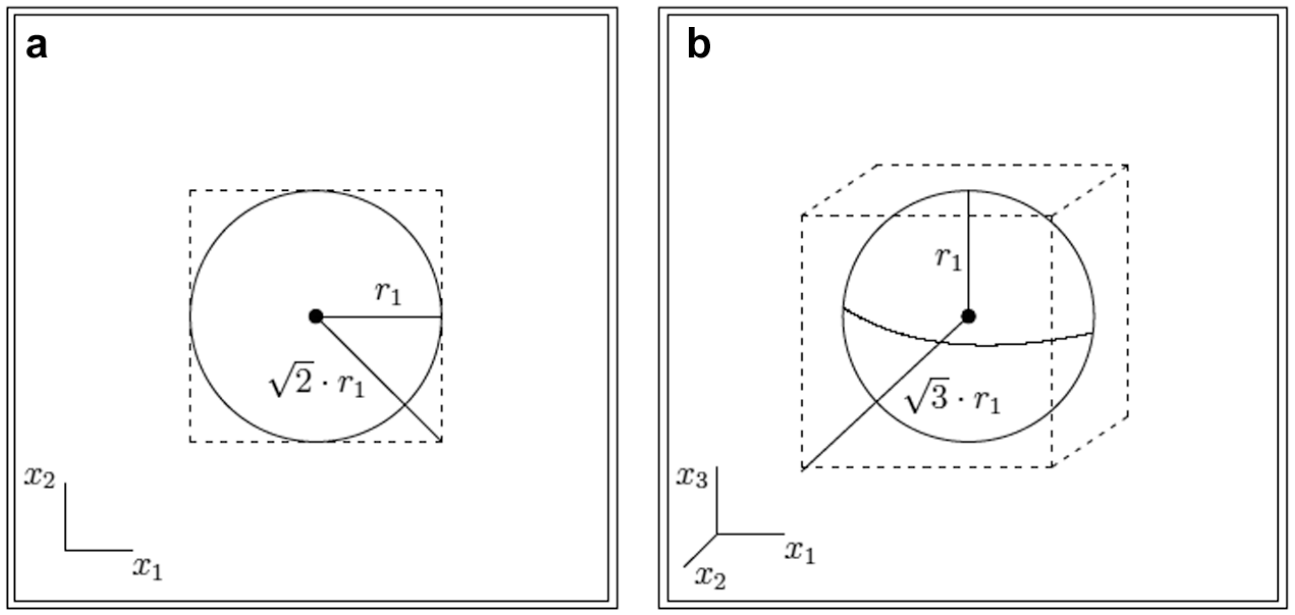

It is a classical fact of analytic geometry that any

Squaring both sides of this inequality, we see that

for all

(a) Circle (2-ball) of radius

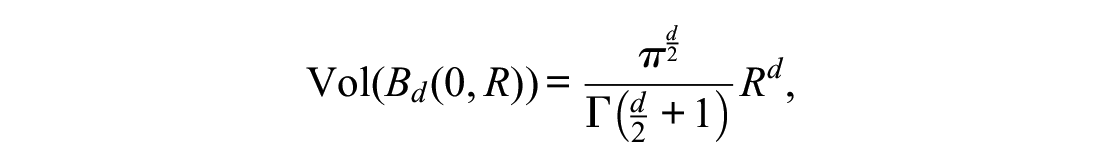

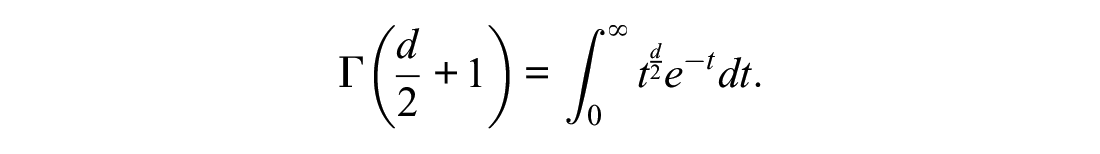

For completeness, we show the classical but rather counterintuitive result in high dimensional geometry that, as the number of dimensions grows, the volume of the unit

where

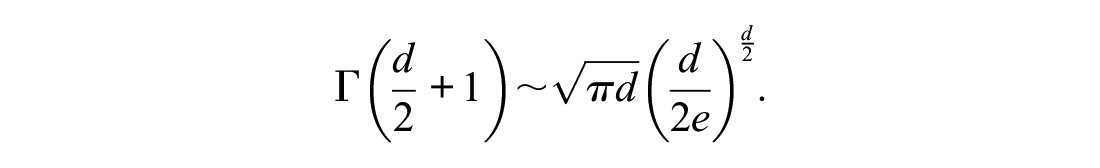

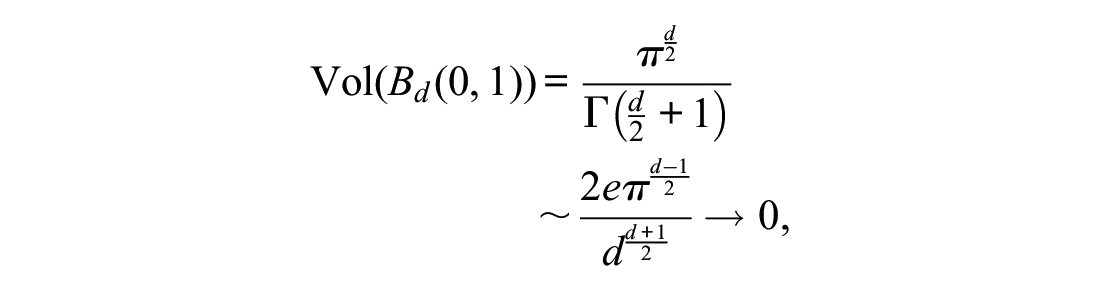

By Stirling’s formula, this function is asymptotically equivalent to

Thus,

since

It is easy to see that these results can be extended to balls and regular boxes of nonunit scale. Specifically,

One major consequence of these facts is that most of the volume of the regular

These results immediately generalize to ellipsoids and irregular boxes, so long as the lengths of their principal axes and, respectively, sidelengths remain uniformly bounded (this was our condition that

What is important for us though, and what Theorem 1 establishes, is that a similar geometry holds when we measure the volume of an ellipsoid or box using a multivariate normal measure, rather than the standard notion of Euclidean volume (i.e., Lebesgue measure). Indeed, Theorem 1 shows that the multivariate normal probability of falling inside a

In direct contrast with the univariate setting, more and more of the sample data from a multivariate normal distribution will fall away from the “typical respondent” (i.e., the

Consequently, if sample individuals are required to satisfy many marginal thresholds simultaneously and independently in order to be classified as belonging to some normative group of interest (i.e., in order to “pass the test”), then most of these individuals will appear near the boundary of at least one of these thresholds.
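To make this concrete, here is a rough sketch under simplifying assumptions that are ours and not part of the formal result: the subscales are independent standard normals, every subscale uses the same symmetric cutoff of 2 SD units, and "near the boundary" means within a hypothetical margin of 0.25 SD of a cutoff. Among respondents who satisfy every marginal threshold, the function returns the share with at least one score inside that margin.

```python
import math


def prob_abs_below(c):
    """P(|Z| <= c) for a standard normal Z."""
    return math.erf(c / math.sqrt(2))


def share_near_boundary(n_subscales, cutoff=2.0, margin=0.25):
    """Share of passing respondents with at least one near-boundary score.

    Conditions on passing every subscale (|Z_i| <= cutoff for all i) and
    assumes independent standard normal subscales. `margin` is a
    hypothetical width for the "near the boundary" zone.
    """
    # Probability that one passing score stays clear of the boundary zone.
    stay_clear = prob_abs_below(cutoff - margin) / prob_abs_below(cutoff)
    return 1 - stay_clear ** n_subscales


for d in (1, 2, 4, 10, 20):
    print(d, round(share_near_boundary(d), 3))
```

Because the per-subscale probability of staying clear of the boundary is strictly below one, raising it to the power of the number of subscales drives it toward zero, so the near-boundary share climbs toward one under these assumptions.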

In the presence of naturally occurring measurement error (or, equivalently, under a less than perfectly reliable test), these individuals inside the normative group and near the boundary are indistinguishable from individuals outside the normative group. Thus, such a test loses all its discriminatory power of classification.

Percentage of reliably classified individuals as a function of the number of subscales. That is, ratios of the multivariate normal probability of falling inside the ellipsoid over the multivariate normal probability of falling inside the smallest box containing the ellipsoid as a function of the number of dimensions. The ellipsoids and boxes are determined by hypothetical threshold

Implication 3 is the most relevant for our practical recommendations. If we imagine a collection of subscales designed to assess aptitude of some particular latent trait

It is important to note that while we have concentrated on normative sets defined by regular, symmetric thresholds, the same results hold for irregular, asymmetric thresholds (e.g.,

Empirical Demonstrations

Theorem 1 demonstrates that any normative set constructed via independent thresholding of subscales will become less discriminating as the number of subscales increases. This is a reflection of the ambient geometry of higher dimensional space, where most of the mass of a normative set is forced to lie near the “corners” of the set, as defined by the subscale thresholds. Consequently, as the number of subscales increases, most individuals become very atypical in the sense that they must occupy a point in space that is far away from the mean/mode for multivariate normal phenomena. Moreover, if the normative set is defined as those individuals who fall within a certain distance from the marginal means of the subscales, then the size of this set will shrink without bound as the number of subscales increases. Take, for instance, a scenario where a battery of psychological screening tests is administered to a group of participants with the aim of grouping them into “at risk” versus “not at risk” categories. One would expect that only a certain percentage of participants would exhibit enough markers or symptoms to be classified as belonging to the clinical group, the “true” population percentage of people exhibiting the pathology or characteristic of interest. If decision thresholds classifying participants as “at risk” versus “not at risk” did not consider how the various tests interact (i.e., setting them scale by scale as opposed to jointly), one would expect to have more participants classified as “at risk” as the number of tests or subscales increases arbitrarily, missing the true population percentage of participants who exhibit the pathology or characteristic of interest.
Counterintuitively, having more tests or more subscales classifying participants does not necessarily imply these participants are being more reliably classified, unless the process of setting thresholds was calibrated with the aim to make one decision based on the joint relationship between tests, as opposed to one decision based on simply their individual, marginal structures.

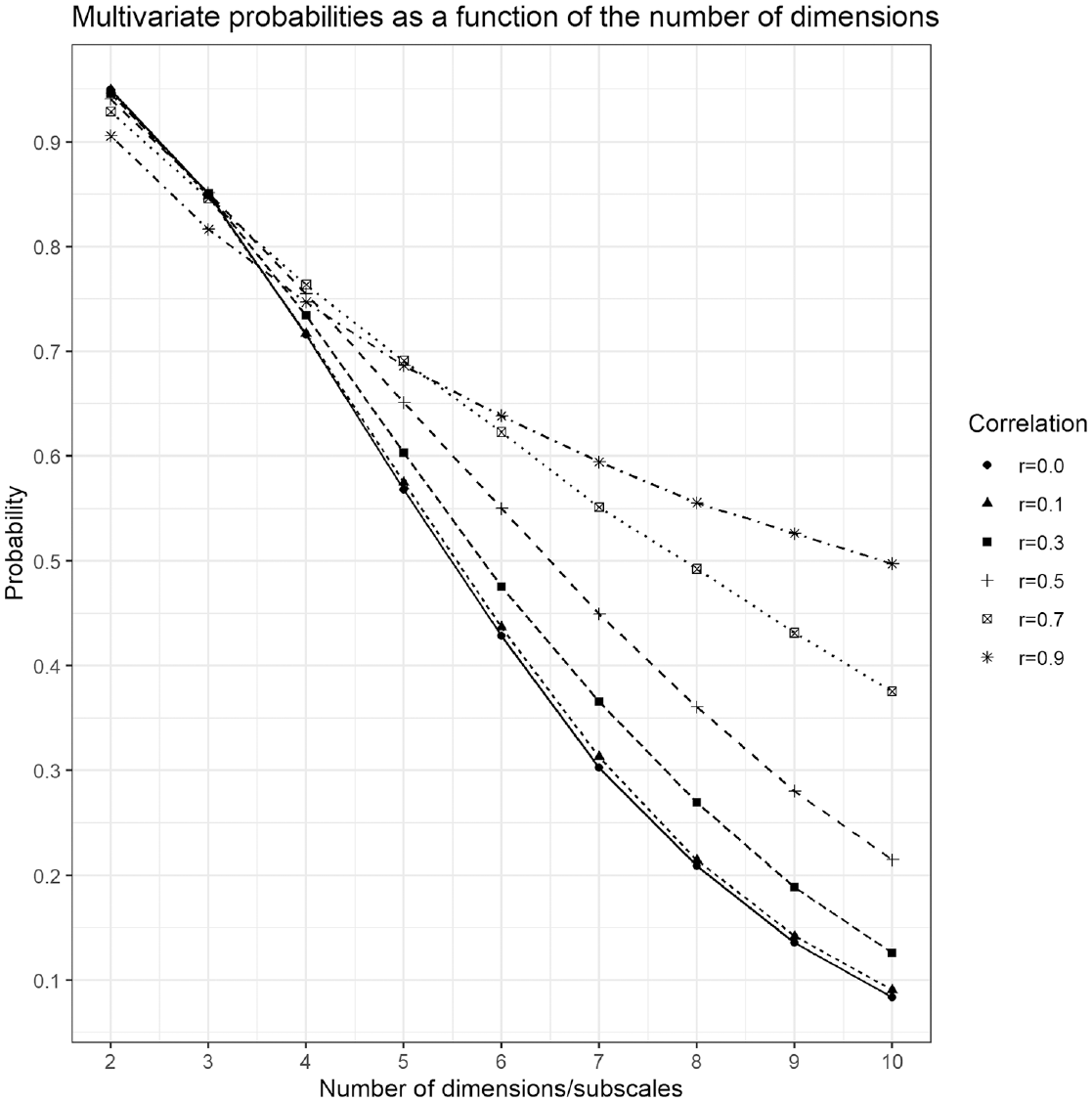

To showcase the practical implications of Theorem 1 and the geometrical explanations that follow, Figure 6 presents the decreasing probabilities of

Figure 6 illustrates the proportion of people in the normative group that are clearly distinguishable (i.e., reliably classified) from those outside the normative group—the multivariate normal volume of the ball divided by the multivariate normal volume of the smallest box containing the ball,

As the number of thresholded subscales increases, Figure 6 clearly illustrates that fewer and fewer people are reliably classified by the test as more respondents will be forced into the “corners” of the “pass” and “fail” groups. These respondents will not be reliably classified when the test is subject to measurement error. Moreover, the lack of discriminatory power for marginally thresholded tests only worsens as test reliability is compromised.
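The ball-to-box ratio described above can be computed in closed form in the simplest case. The sketch below assumes uncorrelated standard normal subscales and a common cutoff of 2 SD units, so it covers only the zero-correlation setting and is not a reproduction of Figure 6. The probability of the ball is a chi-square CDF, and the probability of the enclosing box is a product of univariate normal probabilities; the helper names are ours.

```python
import math


def chi2_cdf(x, df):
    """CDF of the chi-square distribution for a positive integer df."""
    half = x / 2
    if df % 2 == 0:
        # Even df: finite Poisson-type sum.
        tail = sum(half**k / math.factorial(k) for k in range(df // 2))
        return 1 - math.exp(-half) * tail
    # Odd df: start from the df = 1 case and subtract correction terms.
    correction = sum(half ** (k - 0.5) / math.gamma(k + 0.5)
                     for k in range(1, (df - 1) // 2 + 1))
    return math.erf(math.sqrt(half)) - math.exp(-half) * correction


def ball_to_box_ratio(n_subscales, cutoff=2.0):
    """P(||Z|| <= cutoff) / P(|Z_i| <= cutoff for every i).

    Assumes independent standard normal subscales.
    """
    ball = chi2_cdf(cutoff**2, n_subscales)
    box = math.erf(cutoff / math.sqrt(2)) ** n_subscales
    return ball / box


for d in (1, 2, 4, 10):
    print(d, round(ball_to_box_ratio(d), 3))
```

With one subscale the ball and the box coincide, so the ratio is one; under these assumptions it then falls steadily as subscales are added.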

The degree of correlation between subscales also plays an important role in the ability to reliably classify participants, with higher correlations slowing the rate of unreliable classification as the number of subscales grows. This mathematical fact makes good practical sense too, since one can easily argue that a test composed of two highly correlated subscales has about as much ability to reliably classify individuals as a single subscale test. Regardless, Theorem 1 guarantees that no amount of correlation will be enough to overcome the problem eventually, given enough subscales. Figure 6 shows that by the time one reaches 10 subscales, a test with highly correlated subscales (

Conclusions and Recommendations

Given the frequent use of tests and measures as tools that aid in the classification of respondents, we believe it is important to highlight the differences implied by the traditional method of setting thresholds marginally (i.e., each subscale at a time) versus setting them jointly. From the theoretical results presented and the test scenarios explored, we believe a few important lessons should be highlighted and brought into consideration for applied researchers who may be interested in either developing their own scales or interpreting existing ones. We have summarized these lessons in Implications 1, 2, and 3 of section Geometry of Cutoff Scores and reiterate the lessons here.

First, if a scale has no subscales or a joint decision is not needed to aid in classifying or diagnosing, then setting thresholds univariately has little bearing on the final decision and the results presented here do not necessarily apply. The shape and properties of the marginal distributions would be the only relevant ones in this case. If, however, more than one subscale is used in the decision process, then it is important to remind test users and developers that the probability of selection is always less than or equal to the one implied by each subscale independently. Theorem 1 highlights this issue by pointing out the fact that, for example, even for the well-known case where 95% of the probability of a normal distribution is contained within 2 SDs, that probability goes to 0 as the number of dimensions grows. Implications 1 and 2 of section Geometry of Cutoff Scores summarize this problem as the fact that more sample respondents will necessarily fall far away from the “typical” respondent as the dimension increases. Therefore, we would like to encourage researchers to consider the decision process multivariately as opposed to in separate univariate pieces. Marginal thinking is how the majority of cutoff values are currently set, but this necessarily ignores joint dependency between the subscales and creates the possibility of entirely untenable diagnostics.

Moreover, the correlations among the subscales affect the probability of classification. In general, larger correlations among the simulated subscales imply a slower rate of decrease in the probability of reliable classification. Therefore, when deciding on a cutoff value (irrespective of the method by which this cutoff value is selected), it is important to keep in mind the correlations among the subscales and to adjust the thresholds accordingly. A potential approach that could be explored would be to use multivariate generalizations of univariate approaches that consider more than just the marginal structure of the subscales. One could, for instance, rely on the centroid of the distribution and the variance-covariance matrix so that thresholds could be set in terms of Mahalanobis distances and then see which combinations of coordinates (i.e., sample individuals) correspond to the Mahalanobis distance of choice. Methods that incorporate both marginal and joint information simultaneously are welcome to tackle this issue.
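As one possible illustration of the Mahalanobis idea, and not a recommended procedure, the sketch below compares a marginal rule (each subscale within 2 SD of its mean) with a joint rule (Mahalanobis distance within a radius chosen to cover 95% of a bivariate normal population). The means, SDs, correlation of 0.8, and the two respondents are hypothetical values chosen for illustration.

```python
import math


def mahalanobis_2d(x, mean, sd, rho):
    """Mahalanobis distance of a two-subscale score vector from the centroid,
    given subscale SDs `sd` and correlation `rho` between the subscales."""
    z1 = (x[0] - mean[0]) / sd[0]
    z2 = (x[1] - mean[1]) / sd[1]
    # Closed form of z' R^{-1} z for a 2x2 correlation matrix R.
    d2 = (z1**2 - 2 * rho * z1 * z2 + z2**2) / (1 - rho**2)
    return math.sqrt(d2)


def passes_marginal(x, mean, sd, k=2.0):
    """Marginal rule: every subscale within k SDs of its own mean."""
    return all(abs(xi - m) <= k * s for xi, m, s in zip(x, mean, sd))


def passes_joint(x, mean, sd, rho, radius):
    """Joint rule: Mahalanobis distance within the chosen radius."""
    return mahalanobis_2d(x, mean, sd, rho) <= radius


# Hypothetical population parameters for two subscales.
mean, sd, rho = (50, 50), (10, 10), 0.8
# In two dimensions the squared distance is chi-square with 2 df,
# so a 95% region has radius sqrt(-2 * ln(0.05)).
radius = math.sqrt(-2 * math.log(1 - 0.95))

respondent_a = (68, 32)  # high on one subscale, low on the other
respondent_b = (71, 69)  # slightly elevated on both subscales

for x in (respondent_a, respondent_b):
    print(x, passes_marginal(x, mean, sd), passes_joint(x, mean, sd, rho, radius))
```

In this toy setting the first respondent satisfies both marginal thresholds yet is highly atypical given the strong positive correlation, while the second narrowly misses one marginal threshold yet lies well within the joint region, which is the kind of disagreement that marginal thinking cannot detect.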

Applied practitioners should expect an inherent amount of measurement error to be present in any testing situation. Too much measurement error often results in unreliable testing procedures, thus motivating the push for the creation of formally reliable scales. What Theorem 1 demonstrates, however, is that in the context of independent subscale thresholding, such tests will necessarily lose their discriminatory power to meaningfully categorize individuals as the number of subscales increases, regardless of how formally reliable the underlying measurements/subscales are. One natural way to address this intrinsically maladaptive feature is to switch to a more thoughtful process of threshold setting, one that does not always set thresholds on different subscales independently.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.