Abstract

Aftercare seeks to address challenges juveniles face when re-entering the community after residential placement. This study compares changes in recidivism in five Pennsylvania counties that piloted aftercare reforms from 2005 to 2010 as part of Models for Change with changes in counties that did not. The sample includes 3,107 referrals to court in pilot counties and 8,272 in comparison counties. Estimates show no discernible effect of the reforms on recidivism. The estimated change in re-referrals to court lies between a decrease of 4 percentage points and an increase of 14 points. Differences in implementation effectiveness and participation may explain these null results. Although this analysis does not detect changes in recidivism, these pilots laid the groundwork for Pennsylvania’s later juvenile justice reforms.

Criminal offending places juveniles at greater risk of failing to graduate high school, experiencing unemployment, and experiencing incarceration as adults. This makes it essential to find ways to improve juvenile re-entry after placement and encourage desistance from delinquent behavior. Support and supervision for juveniles exiting placement in a juvenile justice facility, known as aftercare, may help address the challenges youth face upon returning to the community.

This study examines the effect of aftercare supports produced by an innovative pilot program in five Pennsylvania counties on recidivism for juvenile offenders. Changes in aftercare practices in these five counties were spurred by Models for Change, an initiative to reform juvenile justice systems that took place in Pennsylvania from 2005 to 2010. This study employs a difference-in-differences design to estimate the effect of changes in aftercare in the five pilot counties, compared with non-participating counties, on juvenile recidivism outcomes (referral to court, adjudication of delinquency, and placement).

Because the lessons from these pilots formed the basis for further aftercare reforms in Pennsylvania, understanding their impact on recidivism sheds light on the potential for statewide reforms in aftercare. In addition, understanding the effect of the pilot programs on recidivism could help juvenile justice professionals make decisions about community-based supervision and support in the current era when fewer juveniles are being sent to residential placement (Hockenberry, 2020).

The Need for Aftercare and Re-Entry Support for Youth

The goals of aftercare are to reduce length of stay in placement, support juvenile reentry into the community, reduce recidivism, and improve juveniles’ connections with school, work, and relevant resources (Dewar, 2009). Aftercare programming ideally starts with consideration of how the services youth receive while in placement prepare them for reentry into the community and continues with both services and supervision after youth exit placement (Joint Policy Statement on Aftercare, 2004; Weaver & Campbell, 2015). In contrast, a traditional juvenile justice approach typically only uses supervision after placement (Weaver & Campbell, 2015). In theory, successful aftercare helps juveniles build the skills and knowledge necessary to avoid future delinquency and improve their lives.

Youth enter placement with both risk factors (such as behavioral disorders, learning disabilities, and history of trauma) and protective factors (bonds with caring adults, prosocial skills, education, and job readiness). The Models for Change aftercare initiative was designed to modify some of the risk factors youth experience and enhance protective factors to ease their transition back into the community.

Transition programs like those that aftercare might provide can help youth build resiliency as they move back into their schools and community (House et al., 2018). At the same time, juvenile justice interventions that increase services to youth carry the potential for net-widening (Mears et al., 2016): with the intention of providing supportive services to youth, justice officials may broaden the reach of the system to involve youth who may have been better off with less contact. Similarly, if aftercare lengthens or intensifies a juvenile’s involvement with the system but does not actually provide youth with the skills to succeed upon exit from the system, the programs may not be a good investment.

Youth may experience a variety of challenges while in placement which may affect how well they re-integrate into their schools and communities after release. For example, the experience of incarceration may negatively affect juveniles’ educational achievement and mental health, and juveniles may or may not receive needed treatment while incarcerated (Lambie & Randell, 2013). Also, a lack of re-entry planning can result in youth experiencing homelessness upon exiting residential facilities (Britton & Pilnik, 2018). Experiencing homelessness may result in youth committing further offenses or violating probation orders (e.g., by missing school), and may increase youths’ risk of victimization.

Youth sentenced to placement tend to enter facilities behind others their age in terms of education, often have special education needs, and have a history of lower academic achievement (Blomberg et al., 2011; Lambie & Randell, 2013). Successful educational transitions upon entering and leaving placement are critical to youths’ eventual educational success. The more academic credits youth earn during incarceration, the less likely they are to recidivate (Blomberg et al., 2011). At the same time, credits earned within a facility, which may be located in a different area than a youth’s local school district, may not transfer easily to the youth’s school upon reenrollment (Goldkind & Hirschfield, 2009). Slow or incomplete sharing of information between placement facilities and youths’ home school districts can impede youths’ educational progress, as can negative attitudes of school staff towards youth when they return to school (Stephens & Arnette, 2000).

Crime and delinquency are more likely to occur when a person’s bonds to society (such as attachments to school, family, and institutions) are weakened (Laub et al., 2006). With strong social bonds, an individual has more to lose by engaging in crime and getting caught. These social bonds can change over a person’s life (Sampson & Laub, 1993): the challenges of placement may weaken youths’ attachment to school, reduce youths’ trust in school staff, or strain their relationships with their family or others in their community. Effective aftercare programming could strengthen youths’ social bonds and help them succeed after placement.

Prior Research on Effectiveness of Aftercare and Re-Entry Programs

Two meta-analyses point to the ability of aftercare programming to reduce recidivism by small but statistically significant amounts. Although the overall effect of the 30 included studies in Weaver and Campbell’s (2015) meta-analysis was not statistically significant, some subsets of the studies did demonstrate reduced recidivism for youth who participated in aftercare programs. Programs that served older youth (risk ratio = 0.79) and youth with violent offenses (risk ratio = 0.67) demonstrated significant treatment effects, as did well-implemented programs (risk ratio = 0.82). Another meta-analysis of 22 studies of aftercare programs (James et al., 2013) found that aftercare produced a small but statistically significant reduction in recidivism (Cohen’s d = 0.12). James et al. (2013) also found that well-implemented aftercare programs, and those that served older youth, youth with violent offenses, and youth at higher risk of recidivism had greater reductions in recidivism. Both meta-analyses only included aftercare programs with some form of support services in the community in addition to any supervision components.

Research has identified practices that can help youth make progress in their education despite the disruption of placement. Hirschfield (2014) describes how in Virginia, designated “Transition Teams” within facilities and reenrollment teams in students’ home school districts work together to transfer the documentation needed for a youth’s smooth reenrollment.

Similarly, an evaluation in Arizona found that being randomly assigned to enhanced transition services—with both a transition specialist and a detailed school portfolio with all possible documentation necessary for re-enrollment—reduced recidivism by 64% after 30 days, compared with having a transition specialist and only a basic school portfolio (Griller Clark et al., 2011). Although the enhanced transition services reduced recidivism for the treatment group relative to the control group, overall recidivism rates were still high: after 90 days, 66% of the treatment group had returned to the detention center, as had 87% of the control group. For many youth, timely re-enrollment in school is a necessary, but potentially insufficient, step towards avoiding recidivism (Hirschfield, 2014).

Intervention: Reforming Aftercare Practices in Pennsylvania

From 2004 to 2011, the MacArthur Foundation invested over $100 million nationwide in juvenile justice reform through the Models for Change initiative. Fifteen percent of the grants given out through this initiative sought to improve aftercare (Griffin, 2011a). In Pennsylvania, aftercare-related grants (including the pilots) totaled approximately $1.2 million as of 2006 (Models for Change Selected Grants List, 2006). Besides supporting the pilots, Models for Change promoted cross-county and cross-state learning and resource development. For example, the Education Law Center in Philadelphia produced an Educational Aftercare and Reintegration Toolkit to aid juvenile probation officers as they help youth reenroll in school after placement; associated trainings were then held for juvenile probation officers around the state. Models for Change aligned with the state’s existing reform efforts; a large part of the funding for aftercare reforms came from the Pennsylvania Commission on Crime and Delinquency as part of the state’s Comprehensive Aftercare Reform initiative (Torbet, 2008).

Aftercare programming can be designed to address a variety of outcomes including length of stay, recidivism, and connections with school, work, and other community resources (Dewar, 2009). The Aftercare pilot counties pursued different ways of improving aftercare services (Griffin, 2011b; Griffin & Hunninen, 2008; Torbet, 2007, 2008). Some approaches emphasized more intensive supervision and support (York and Philadelphia). Specifically, York piloted two intensive supervision programs for higher-risk youth, both of which included referrals to supportive services. Philadelphia introduced the Reintegration Reform Initiative which involved multiple agencies and elements: Reintegration Workers planned youths’ transitions out of placement starting 3 months before release, an oversight committee met with youth and families after violations, and supervision and services were divided into standard and intensive levels based on a risk assessment tool.

Other counties (Allegheny, Cambria, and Lycoming) focused on particular aspects of the transition out of placement: education, employment, and family stability. Allegheny hired three full-time education specialists to assist juvenile probation officers in helping youth return to school, while Cambria created a job training program with Goodwill Industries. Lycoming piloted a number of programs including cognitive-behavioral therapy for youth, a support group for parents, and intensive family counseling via Multisystemic Therapy.

Of the five pilot counties, Allegheny and York directly served the lowest proportions of their counties’ placed youth in their pilots. York focused on the highest risk youth, while Allegheny addressed system-wide issues in addition to working with specific youth. Allegheny’s educational specialists assessed the quality of education provided in placement facilities and therefore could have indirectly affected more youth than those enrolled in programming.

Counties implemented their pilot projects with varying levels of effectiveness, according to a contemporaneous implementation evaluation (Griffin, 2011b) and interviews with state and county staff with knowledge of the initiative. For example, multiple pilots were marked by high staff turnover, a lack of institutional support for newly created staff roles, and poor communication between placement facility staff and community-based staff. The programs’ designs often required multiple organizations (such as juvenile courts, school districts, health care, and community programs) to effectively coordinate to meet juveniles’ needs both while in placement and after returning to their community. However, these entities did not always agree on program design or goals. For example, pilots’ attempts to shorten lengths of stay or institute graduated release policies failed to find support from placement facilities and from some juvenile court judges. Finally, leadership changes in York and Philadelphia resulted in dropping or modifying parts of those pilot programs.

The pilots were part of an existing statewide effort to reform aftercare in Pennsylvania. A multi-agency Aftercare Working Group put a Joint Policy Statement on Aftercare into effect on January 1, 2005, the goal of which was to support counties in developing comprehensive aftercare systems by 2010 (Joint Policy Statement on Aftercare, 2004). According to this joint statement, a model aftercare system should include: public schools that work with placement facilities and juvenile justice representatives (courts and probation) to help youth return to school; coordination between juvenile probation officers and placement staff to plan youths’ experiences and treatment; and consistent services to help youth overcome problems and develop their strengths and competencies, both during and after placement.

An evaluation of aftercare practices in each of Pennsylvania’s 67 counties conducted in the months after the Joint Policy Statement was issued indicated that most of the state’s counties did not reflect the policies and practices laid out in the joint statement and that reform efforts were needed (Griffin et al., 2007; Steele & Franklin, 2006). For example, 66% of counties said that educational transitions were a significant problem for youth after placement.

The Aftercare pilot counties worked together to develop lessons that other counties could learn from as they then sought to improve their own aftercare systems (Griffin, 2009). For example, the All-Sites Group, which included officers from aftercare pilot counties and additional probation administrators, published guidelines and strategies for effective probation case management for youth in placement called Probation Case Management Essentials for Youth In Placement (Torbet, 2008). These strategies include developing a plan that guides youth activities in placement with an eye towards what youth will need to accomplish after they are released and drawing on community resources to support youth after placement.

In addition to sharing lessons from the All-Sites Group, when the Joint Policy Statement was released in 2005, staff from the Juvenile Court Judges’ Commission and the Council of Chief Juvenile Probation Officers visited each county to conduct an initial assessment of aftercare practices and raise awareness of the Policy Statement. Over the course of the Models for Change years, these state leaders visited stakeholders in each county to develop county-specific strategic plans around aftercare reforms modeled on the Joint Policy Statement. Lessons from Models for Change were also shared in annual statewide juvenile justice conferences.

Finally, the aftercare initiative influenced later Pennsylvania reforms: after the initial Models for Change funding ended in 2010, Pennsylvania juvenile justice actors integrated the lessons learned into the state’s Juvenile Justice System Enhancement Strategy, which focuses on integrating evidence-based tools and practices such as structured risk-needs assessment (Youth Level of Service), detention screening tools, motivational interviewing, and a focus on measuring results (Blackburn, 2019; Pennsylvania Commission on Crime and Delinquency, 2012).

Prior evaluations have focused on implementation of pilot programming (Griffin, 2011b) and on systems change across all Models for Change states (Stevens et al., 2016). According to an interview with a state-level administrator familiar with the pilot programs, an evaluation of the pilots’ impacts on recidivism was not conducted at the time because Pennsylvania did not have the data to do so, nor did it have a standardized definition of recidivism. The Models for Change initiative and movements for reform caused the state to begin gathering and organizing data in a way that would enable it to track recidivism. Adding to the existing evaluations of the overall Models for Change initiative, this study measures whether the pilots reduced recidivism during the initiative. Because the pilots were designed to address the risk factors youth experience when re-entering the community after placement, and because prior research has shown that well-implemented aftercare programs can reduce recidivism (James et al., 2013), this study hypothesized that the pilots reduced recidivism.

Method

This study employs a difference-in-differences design to assess relative changes in outcomes in the counties involved in the aftercare pilot compared with non-involved counties. To address the hypothesis that implementing this aftercare programming decreases recidivism, outcomes for the pilot counties are compared with counties that did not participate in the Models for Change aftercare pilot program. By comparing the change in recidivism before and after counties piloted Models for Change to recidivism in counties that did not, this study is able to estimate the effect of the aftercare initiative on recidivism (Angrist & Pischke, 2015). The difference-in-differences method allows this study to account for time trends in recidivism that may have existed independent of the pilots. This design is synonymous with a between-within observational design. Also using a difference-in-differences design, Donnelly (2017) found that Models for Change reforms in five Pennsylvania counties reduced disproportionate minority contact/confinement relative to counties that did not undergo reforms. This study was approved by the University of Pennsylvania’s Institutional Review Board.

Data

De-identified referral-level Pennsylvania juvenile court data from 2000 to 2012 were obtained from the National Juvenile Court Data Archive with permission from the Pennsylvania Juvenile Court Judges’ Commission. Referrals are included in the data if they resulted in an initial case outcome (e.g., probation or placement) in these years. Juvenile county ID variables make it possible to link referrals of the same juvenile to track recidivism.

Outcomes

The outcomes include the following measures of recidivism: whether a juvenile experiences a subsequent referral to court, an adjudication of delinquency, or a placement within 2 years of their initial referral to court. These outcomes are tested for juveniles whose initial referral resulted in placement. The 2-year time frame for recidivism was chosen based on the definition used by the Pennsylvania Juvenile Court Judges’ Commission, which defines recidivism using a 2-year window after case closure (Fowler & Anderson, 2016). (I also examine a 1-year time frame as a robustness check and find similar results.)
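To make the outcome definition concrete, the sketch below flags whether a referral is followed by another referral for the same juvenile within 2 years. It is only an illustration: the record layout (`juvenile_id`, `referral_date`), the function name, and the approximation of 2 years as 730 days are assumptions, not the study's actual data processing.

```python
from datetime import date, timedelta

# Assumption: the 2-year window is approximated as 730 days.
WINDOW = timedelta(days=730)

def flag_rereferrals(referrals):
    """Return a copy of each referral with a boolean `rereferred` flag.

    `referrals` is a list of dicts with the hypothetical keys
    `juvenile_id` and `referral_date` (a datetime.date).
    """
    # Group referral dates by juvenile so referrals can be linked.
    dates_by_juvenile = {}
    for ref in referrals:
        dates_by_juvenile.setdefault(ref["juvenile_id"], []).append(ref["referral_date"])

    flagged = []
    for ref in referrals:
        start = ref["referral_date"]
        # A re-referral is any later referral of the same juvenile inside the window.
        later = [d for d in dates_by_juvenile[ref["juvenile_id"]]
                 if start < d <= start + WINDOW]
        flagged.append({**ref, "rereferred": bool(later)})
    return flagged

# Made-up example records (not study data).
sample = [
    {"juvenile_id": "A", "referral_date": date(2003, 1, 15)},
    {"juvenile_id": "A", "referral_date": date(2004, 6, 1)},
    {"juvenile_id": "B", "referral_date": date(2003, 1, 15)},
    {"juvenile_id": "B", "referral_date": date(2006, 3, 1)},
]
result = flag_rereferrals(sample)
```

The same linking logic could be reused for re-adjudication and re-placement by restricting the later referrals to those with the relevant case outcome.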

Covariates

To improve precision of the difference-in-differences estimate, controls for juvenile, referral, and county characteristics are included. First, the model controls for the race, ethnicity, gender, and age (at the time of the initial referral) of the juvenile, and whether the juvenile had a referral or adjudication of delinquency during the prior year. Controls for characteristics of the initial referral include charge seriousness (felony, misdemeanor, and summary offense) and type (person offenses; property offenses; nonpayment of fines, fees, and restitution; drug; traffic violations; status offenses; and public order offenses). Definitions for the charge type categories are based on those used in the annual juvenile court statistics produced by the National Center for Juvenile Justice (Hockenberry & Puzzanchera, 2020).

County-level demographic and juvenile justice characteristics are included as controls, as in the Donnelly (2017) study of Pennsylvania’s efforts as part of Models for Change to reduce disproportionate minority contact/confinement. The county child poverty rate and school dropout rate are included because of associations between poverty, school dropout, and juvenile delinquency. County child poverty rates (the percent of children ages 0–17 years living in poverty) are drawn from the 2000 Census (U.S. Census Bureau, 2000a). Dropout rates for grades 7 to 12 were available from the Pennsylvania Department of Education for 2005 to 2009; since all years were not available, these rates were averaged into one measure of dropout for each county (Pennsylvania Department of Education, 2009). Other county level characteristics include the percent of the county population living in urban areas (U.S. Census Bureau, 2000b), the youth population ages 10 to 17 years in each year (Puzzanchera et al., 2020), and annual unemployment rates (U.S. Bureau of Labor Statistics, 2010). Finally, to account for the types of cases handled on average in each county per year, county-level means of the age and gender of juveniles referred are included, as are the proportion of referrals with felony and misdemeanor charges (leaving out the proportion of summary charges).

Sample

The study focuses on juveniles in Pennsylvania with at least one residential placement between 2001 and 2009. Data are at the court referral level. The original dataset includes all referrals with initial case outcomes from 2000 to 2012. After calculating priors and recidivism for each referral, the sample is truncated to eliminate the first and last 2 years in the data. (For the first year of data, prior offenses are not available. Similarly, the recidivism window is not captured in the last 2 years of data.) From 2000 to 2009, a total of 420,631 referrals to court were made in Pennsylvania, of which 32,933 (8%) resulted in placement. These referrals involved 244,867 unique juveniles, of whom 21,653 (9%) experienced placement during this time.

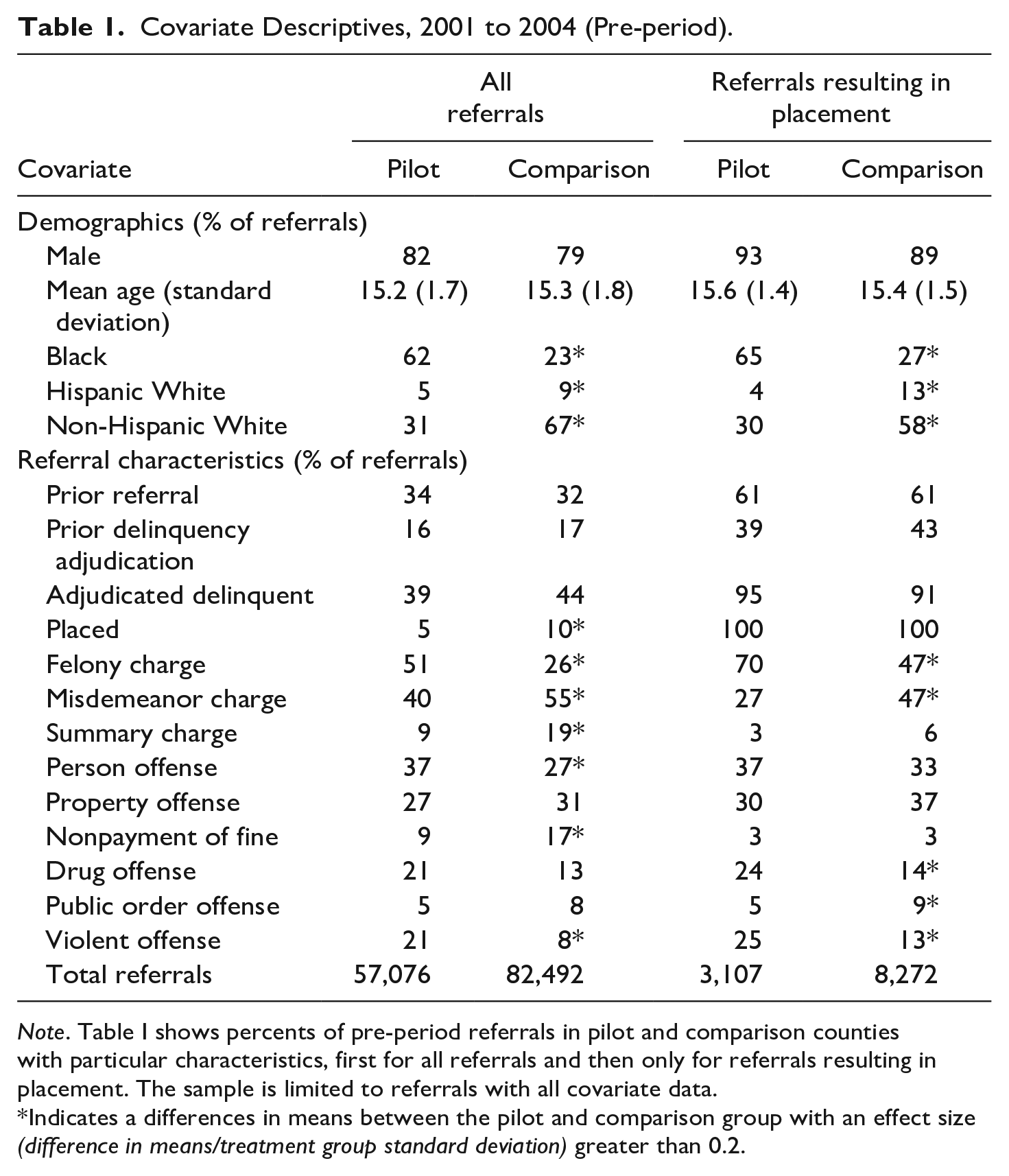

Table 1 shows percents of pre-period covariates in pilot and comparison counties, for all referrals, and for only those referrals that resulted in placement (the focus of this study). Only referrals that have complete data for the covariates in the analysis are included in Table 1. (Missing data were not imputed in analyses. I test models with and without the 6,858 (out of 32,933) referrals with missing covariate data and find that excluding these 21% of referrals does not affect the results.)

Table 1. Covariate Descriptives, 2001 to 2004 (Pre-period).

Note. Table 1 shows percents of pre-period referrals in pilot and comparison counties with particular characteristics, first for all referrals and then only for referrals resulting in placement. The sample is limited to referrals with all covariate data.

A marked entry indicates a difference in means between the pilot and comparison groups with an effect size (difference in means/treatment group standard deviation) greater than 0.2.

Youth in pilot counties’ referrals were more likely to be Black and less likely to be non-Hispanic White. A similar proportion of referrals in pilot and comparison counties involved youth with a prior referral (and with a prior referral resulting in adjudications of delinquency) within the past year.

A greater proportion of pilot counties’ referrals were for felony charges compared with referrals from comparison counties; this is true for all referrals and when limiting to referrals that resulted in placement. Pilot counties’ referrals were also more likely to be for violent offenses, for both referrals overall and referrals resulting in placement. Conversely, a greater proportion of comparison counties’ referrals to court were for misdemeanors (both overall and referrals resulting in placement).

In the pre-period, similar percentages of referrals in pilot and comparison counties were adjudicated delinquent (39% in pilot vs. 45% in comparison counties); however, referrals in comparison counties were more likely to result in placement (5% in pilot counties vs. 10% in comparison).

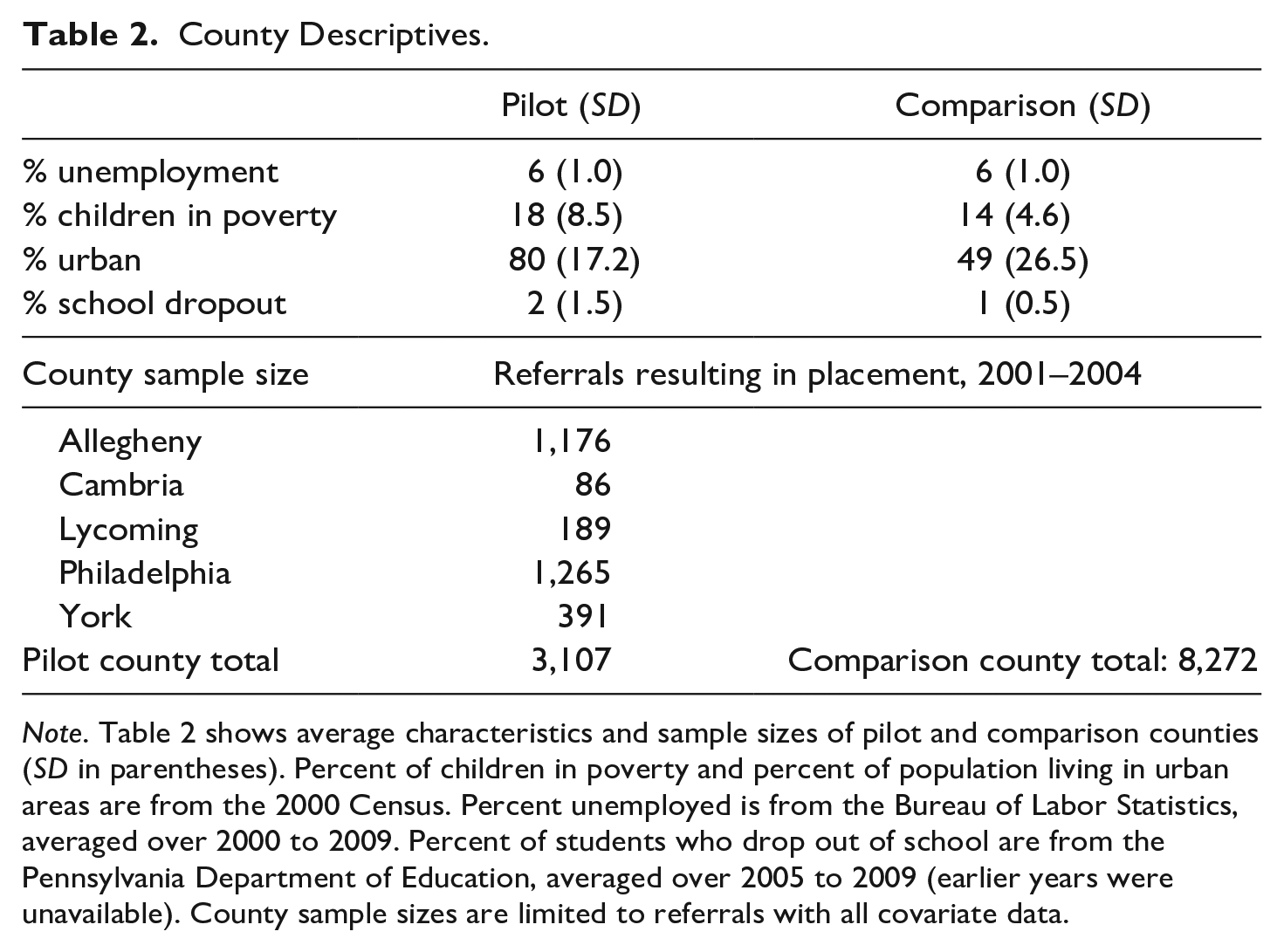

At the county level, child poverty rates were higher in pilot counties (Table 2). Greater proportions of people in pilot counties lived in urban areas: the pilot counties include Pennsylvania’s two largest cities, Philadelphia and Pittsburgh (Allegheny County). Table 2 also shows the size of the sample of referrals in each county.

Table 2. County Descriptives.

Note. Table 2 shows average characteristics and sample sizes of pilot and comparison counties (SD in parentheses). Percent of children in poverty and percent of population living in urban areas are from the 2000 Census. Percent unemployed is from the Bureau of Labor Statistics, averaged over 2000 to 2009. Percent of students who drop out of school is from the Pennsylvania Department of Education, averaged over 2005 to 2009 (earlier years were unavailable). County sample sizes are limited to referrals with all covariate data.

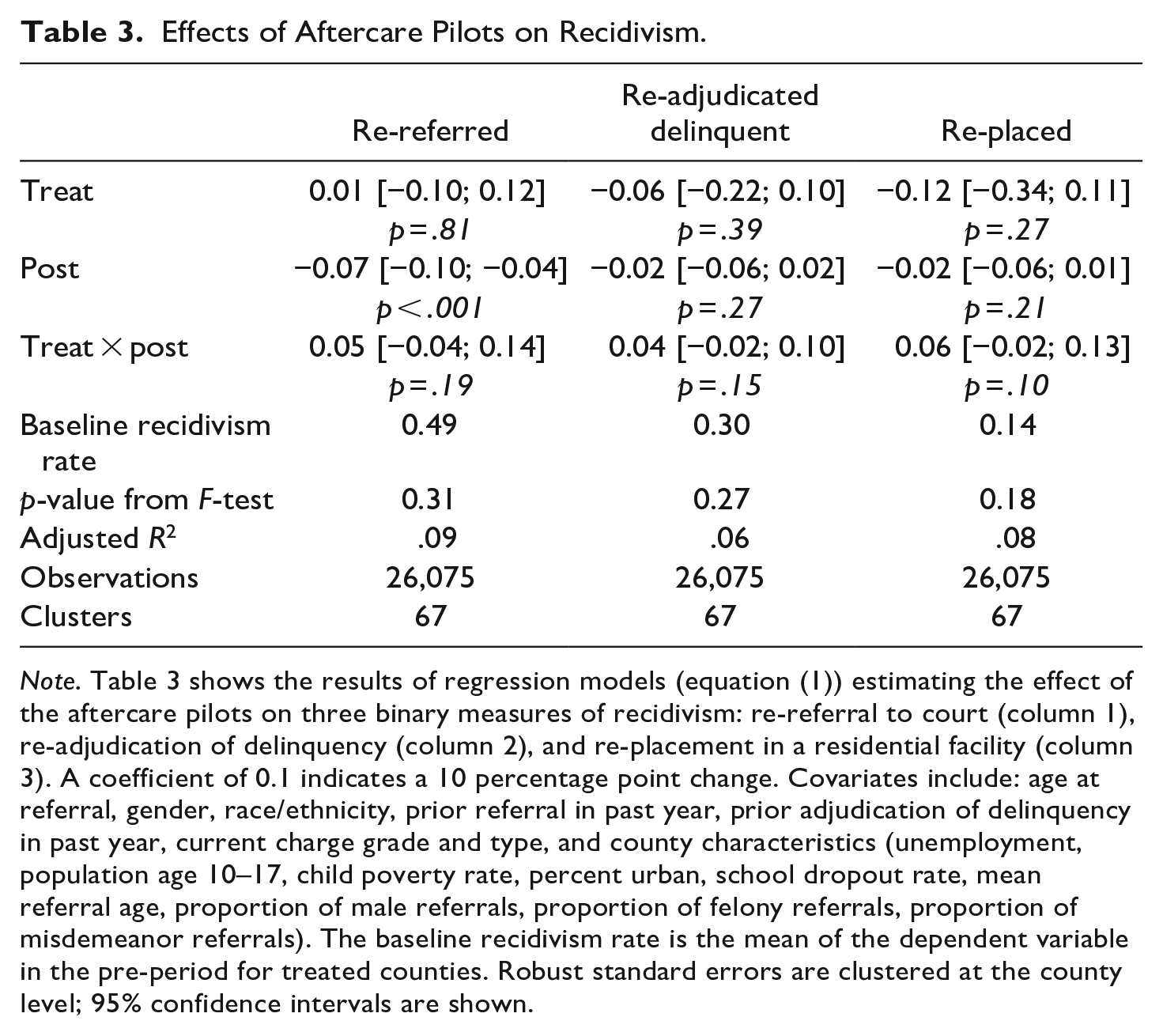

Power calculations were conducted to analyze the study’s ability to detect effects of the intervention by using the standard errors of the regression models to calculate the minimum detectable effect (Bloom, 1995). Statistical power was found to be adequate to detect effects of the magnitude seen in this study (Table 3). Specifically, for re-referrals to court, the study has 80% power to detect a relative difference of 12% of the baseline rate of re-referrals, which (using the 49% re-referral rate for treated counties) is 5.9 percentage points. For re-adjudications of delinquency, the study can detect a relative difference of 8%, which (relative to a baseline re-adjudication rate for treated counties of 30%) is a difference of 2.4 percentage points. For re-placement, the study can detect a difference of 10% relative to the baseline rate of 14%, which is a difference of 1.4 percentage points.
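The arithmetic behind these figures can be sketched as follows. The snippet assumes the standard form of the minimum detectable effect, a multiplier times the standard error, where the multiplier is the sum of two normal quantiles (about 2.8 for a two-tailed 5% test with 80% power). The function names are hypothetical, and the snippet only reproduces the conversion from relative effects to percentage points quoted above; it does not re-derive the study's standard errors.

```python
from statistics import NormalDist

def mde_multiplier(alpha=0.05, power=0.80):
    """Multiplier applied to a standard error to get the minimum detectable effect."""
    z = NormalDist()
    return z.inv_cdf(1 - alpha / 2) + z.inv_cdf(power)

def minimum_detectable_effect(standard_error, alpha=0.05, power=0.80):
    """MDE in the same units as the standard error (two-tailed test)."""
    return mde_multiplier(alpha, power) * standard_error

def relative_to_points(relative_effect, baseline_rate):
    """Convert a relative effect (share of baseline) to percentage points."""
    return relative_effect * baseline_rate * 100

# Conversions using the relative effects and baseline rates reported in the text.
rereferral_pts = relative_to_points(0.12, 0.49)      # about 5.9 points
readjudication_pts = relative_to_points(0.08, 0.30)  # about 2.4 points
replacement_pts = relative_to_points(0.10, 0.14)     # about 1.4 points
```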

Table 3. Effects of Aftercare Pilots on Recidivism.

Note. Table 3 shows the results of regression models (equation (1)) estimating the effect of the aftercare pilots on three binary measures of recidivism: re-referral to court (column 1), re-adjudication of delinquency (column 2), and re-placement in a residential facility (column 3). A coefficient of 0.1 indicates a 10 percentage point change. Covariates include: age at referral, gender, race/ethnicity, prior referral in past year, prior adjudication of delinquency in past year, current charge grade and type, and county characteristics (unemployment, population age 10–17, child poverty rate, percent urban, school dropout rate, mean referral age, proportion of male referrals, proportion of felony referrals, proportion of misdemeanor referrals). The baseline recidivism rate is the mean of the dependent variable in the pre-period for treated counties. Robust standard errors are clustered at the county level; 95% confidence intervals are shown.

Model

This study compares pilot counties’ changes in recidivism before and after the introduction of the pilot program to that of counties that did not adopt this initiative. The effect on recidivism for a juvenile exiting placement in an aftercare pilot county is estimated by the following difference-in-differences equation:

$$Y_{rjc} = \beta_0 + \beta_1 \text{Treat}_{rjc} + \beta_2 \text{Post}_{rjt} + \beta_3 (\text{Treat}_{rjc} \times \text{Post}_{rjt}) + X_{rj}\gamma + Z_{ct}\delta + \varepsilon_{rjc} \qquad (1)$$

where $Y_{rjc}$ is the recidivism outcome for referral r of juvenile j in county c. $\text{Treat}_{rjc}$ equals 1 if the referral that resulted in the juvenile’s placement took place in a county that was part of the aftercare pilot. $\text{Post}_{rjt}$ equals 1 if the referral occurred in the years after the start of the pilot. The coefficient of the Treat × Post interaction term ($\beta_3$) is the difference-in-differences estimate of the effect of the aftercare pilots on recidivism.

$X_{rj}$ is a vector of referral-level controls including: age of the juvenile at time of referral, gender, race, whether the juvenile had a prior referral or adjudication of delinquency in the past year, the type of charge (e.g., person, drug, and property), and the charge grade (felony, misdemeanor, and summary). $Z_{ct}$ is a vector of county-level variables that may affect juvenile outcomes, including: unemployment rate, child poverty rate, percent of residents living in urban areas, and high school dropout rate. Finally, the model controls for the average characteristics of the referrals each county handled in each year: the proportion of referrals that were of males, the average age of juveniles referred, the proportion of referrals with a felony charge, and the proportion with a misdemeanor charge. Errors are clustered by county since the treatment occurs at the county level.
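A stripped-down illustration of this specification may help. The sketch below fits a linear probability model with only an intercept, Treat, Post, and Treat × Post on a handful of made-up referrals, using a small hand-written least-squares solver. It omits the covariates and the county-clustered standard errors described above, and all names and data are hypothetical. With no covariates, the interaction coefficient equals the difference-in-differences of the four cell means.

```python
def ols(X, y):
    """Solve the normal equations (X'X) b = X'y by Gauss-Jordan elimination."""
    k = len(X[0])
    # Augmented matrix [X'X | X'y].
    A = [[sum(row[i] * row[j] for row in X) for j in range(k)]
         + [sum(row[i] * yi for row, yi in zip(X, y))]
         for i in range(k)]
    for col in range(k):
        # Partial pivoting for numerical stability.
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        A[col] = [v / A[col][col] for v in A[col]]
        for r in range(k):
            if r != col:
                factor = A[r][col]
                A[r] = [vr - factor * vc for vr, vc in zip(A[r], A[col])]
    return [A[i][k] for i in range(k)]

# Made-up referrals: (treat, post, recidivated). Not study data.
toy = (
    [(0, 0, v) for v in (1, 1, 1, 0, 0)]   # comparison, pre:  mean 0.60
    + [(0, 1, v) for v in (1, 1, 0, 0)]    # comparison, post: mean 0.50
    + [(1, 0, v) for v in (1, 0)]          # pilot, pre:       mean 0.50
    + [(1, 1, v) for v in (1, 1, 1, 0)]    # pilot, post:      mean 0.75
)
X = [[1, t, p, t * p] for t, p, _ in toy]  # intercept, Treat, Post, Treat x Post
y = [v for _, _, v in toy]

beta = ols(X, y)
did_estimate = beta[3]  # coefficient on Treat x Post
```

In this toy data the pilot group rises by 0.25 while the comparison group falls by 0.10, so the interaction coefficient is 0.35. A full analysis would add the referral- and county-level controls and cluster the errors by county.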

To check the robustness of the results to modeling and sample decisions, additional models are tested that: use a 1-year rather than a 2-year recidivism window; drop one pilot county at a time to make sure one county is not driving results; drop late-starting pilot counties; drop counties that participate in other Models for Change pilots; and use “late adopting” counties as an alternative comparison group.

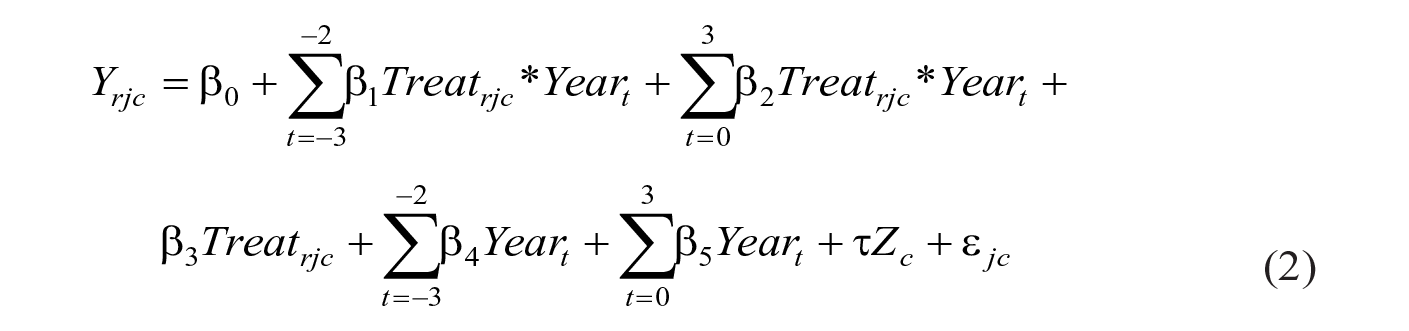

This difference-in-differences analysis assumes that the trend in counties that did not pilot aftercare programming is a valid counterfactual for the pilot counties. In other words, we assume that outcomes in the pilot counties would have followed a similar trend as the comparison counties had they not begun new aftercare programming. This assumption is tested by estimating an event study model according to the following form:

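$$Y_{rjc} = \alpha + \theta\,\text{Treat}_{rjc} + \sum_{t \neq 2004} \lambda_t \text{Year}_t + \sum_{t \neq 2004} \beta_t (\text{Treat}_{rjc} \times \text{Year}_t) + X_{rj}\gamma + Z_{ct}\delta + \varepsilon_{rjc}$$

The model examines pre and post trends by interacting year indicators with the pilot county locations, for years 2001 to 2009. The year before the pilot programs begin (2004) serves as the reference year (t − 1). If the coefficients for the interaction terms in the pre-period ($\beta_t$ for 2001 to 2003) are close to zero, pilot and comparison counties followed similar trends before the pilots began, which supports the parallel-trends assumption.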
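One way to build the interaction terms for such an event study is sketched below. The function and variable names are hypothetical; the only details taken from the text are the 2001 to 2009 range and the omission of 2004 as the reference year.

```python
YEARS = range(2001, 2010)   # 2001 through 2009
REFERENCE_YEAR = 2004       # omitted so its effect is normalized to zero

def event_study_terms(treat, year):
    """Return Treat x Year indicator values for one referral.

    `treat` is 1 for a pilot county and 0 otherwise; `year` is the referral year.
    The reference year has no indicator, so every term is 0 for it.
    """
    return {
        f"treat_x_{y}": int(treat == 1 and year == y)
        for y in YEARS
        if y != REFERENCE_YEAR
    }

terms_2007 = event_study_terms(treat=1, year=2007)
terms_reference = event_study_terms(treat=1, year=2004)
terms_comparison = event_study_terms(treat=0, year=2007)
```

Each referral contributes eight indicators, at most one of which equals 1; the coefficients on the 2001 to 2003 indicators are the ones that speak to pre-period trends.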

Results

Pre-period recidivism rates were lower in pilot counties than in comparison counties: 49% of referrals in pilot counties were re-referred within 2 years, compared with 60% of referrals in comparison counties. In the post period, re-referral rates for pilot counties remained the same (49%), while re-referral rates for comparison counties declined from 60% to 55%. Other outcomes (re-adjudication and re-placement) follow similar patterns.
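As a rough check, the unadjusted difference-in-differences for re-referrals can be computed directly from these four rates. This back-of-the-envelope calculation ignores the covariates in the regression model, so it need not match the adjusted estimates exactly:

$$\widehat{\text{DiD}}_{\text{unadjusted}} = (49 - 49) - (55 - 60) = 0 - (-5) = +5 \text{ percentage points}$$

The sign is positive because re-referral rates held steady in pilot counties while they fell in comparison counties.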

Table 3 shows the regression estimates for equation (1). There are no discernible differences between pilot and comparison counties on recidivism for referrals, adjudications of delinquency, or placement within 2 years of the original referral date. All the coefficients are positive (indicating increases in recidivism) but none are statistically significant. The estimates are imprecise: the 95% confidence interval for the proportion of referrals with a recidivating referral within 2 years is [−0.04, 0.14], meaning that the effect of the program on recidivism likely lies somewhere between a reduction of 4 percentage points and an increase of 14 percentage points. Relative to the baseline of 49% for the pilot group in the pre-period, this means decreases in recidivism greater than 8% and increases in recidivism greater than 28% can be ruled out at the 5% level.

Similarly, for referrals with a recidivating adjudication of delinquency, the effect of the program likely lies somewhere between a decrease of 2 percentage points (7%) and an increase of 10 percentage points (33%). For referrals resulting in another placement, likely effects range from a decrease of 2 percentage points (11%) to an increase of 13 percentage points (90%).

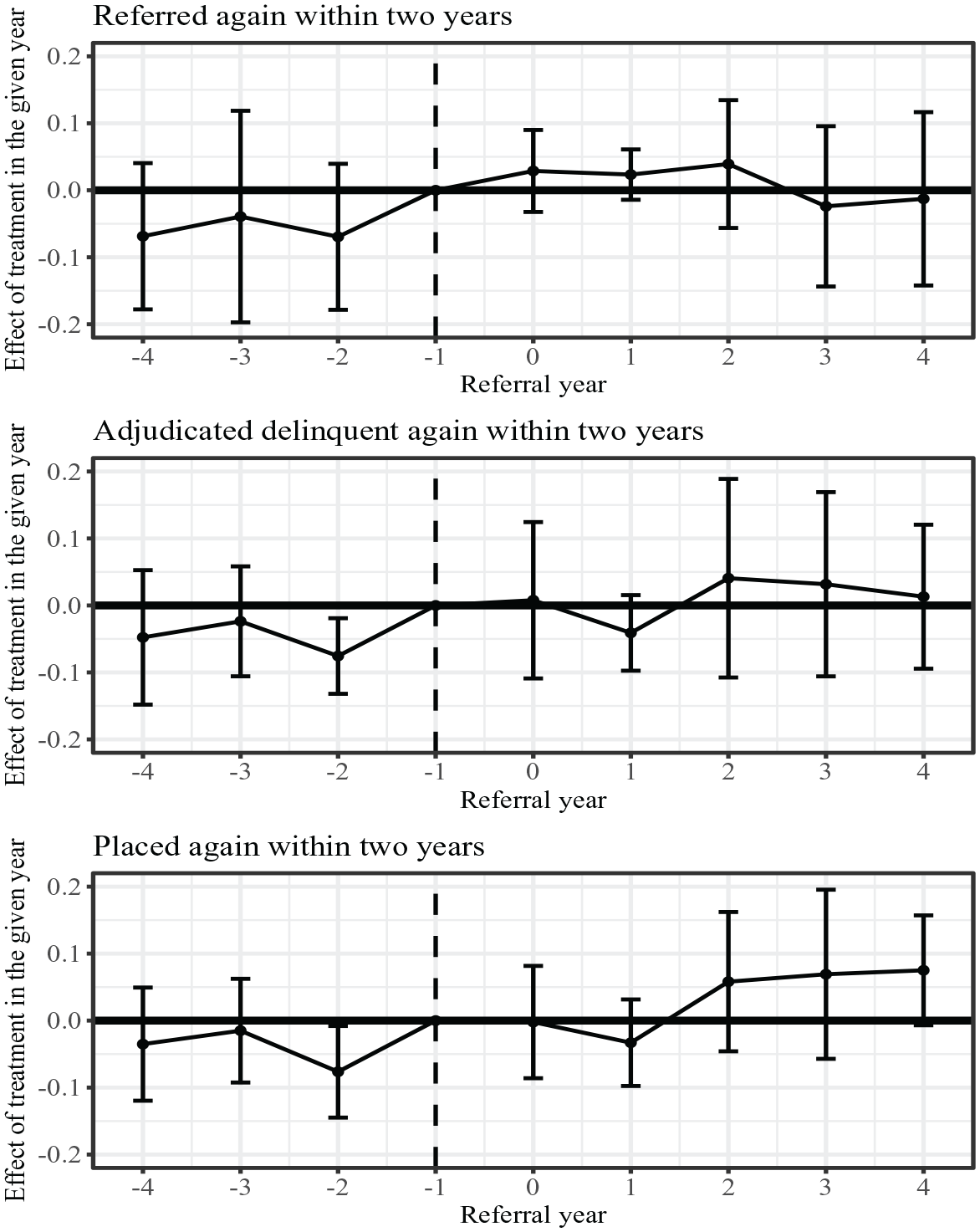

Figure 1 plots estimates of the interaction terms (

Figure 1. Effects of Aftercare Pilots on Recidivism Over Time.

Robustness Checks

I conduct a number of robustness checks to ensure that the estimated effects are not sensitive to small changes in the models. (Results for all robustness checks are available in the Supplemental Appendix.) Outcomes in the main analysis use a 2-year window for recidivism. As an alternative definition, I examine recidivism within 1 year of the initial referral using the same controls as the main specification. As in the main specification, all the coefficients are positive, but none are significantly different from zero. For example, the point estimate for re-referrals to court is the same as in the main model (an increase of 5 percentage points), with the same confidence interval [−0.04, 0.14] (Supplemental Table A1).

To ensure that one county is not driving the results, I remove one pilot county at a time and run the model with the remaining four counties as the treatment group. Point estimates are similar to those in the main model and confidence intervals are similarly wide. For example, point estimates for re-referral to court range from increases of 3 to 8 percentage points, with confidence intervals ranging from a decrease of 10 percentage points to an increase of 22 percentage points (Supplemental Table A2). In another test, I remove the two counties where programming started late in 2005 or in 2006 (Allegheny and York). The point estimates are similar to those in the main model and the confidence intervals also cross zero; for example, re-referrals are estimated to increase by 3 percentage points, with a confidence interval of [−0.14, 0.19] (Supplemental Table A3).
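The leave-one-out logic can be sketched as a loop that drops each pilot county in turn and recomputes the estimate. The sketch below is only an illustration: it substitutes an unadjusted contrast of pooled means for the regression model with controls, and every count, county label, and function name in it is a made-up placeholder rather than data or code from the study.

```python
# Leave-one-out sketch. All counts are MADE-UP placeholders, not study data.
# Each cell is (re-referred, total referrals) for a period.
pilot = {
    "county_A": {"pre": (50, 100), "post": (48, 100)},
    "county_B": {"pre": (20, 40), "post": (22, 40)},
    "county_C": {"pre": (10, 20), "post": (9, 20)},
}
comparison = {"pre": (600, 1000), "post": (550, 1000)}


def pooled_rate(cells):
    """Pooled re-referral rate across (re-referred, total) pairs."""
    events = sum(e for e, _ in cells)
    total = sum(n for _, n in cells)
    return events / total


def raw_did(pilot_counties, comparison_group):
    """Unadjusted DiD: pilot change minus comparison change."""
    pilot_pre = pooled_rate([c["pre"] for c in pilot_counties.values()])
    pilot_post = pooled_rate([c["post"] for c in pilot_counties.values()])
    comp_pre = pooled_rate([comparison_group["pre"]])
    comp_post = pooled_rate([comparison_group["post"]])
    return (pilot_post - pilot_pre) - (comp_post - comp_pre)


# Drop one pilot county at a time and re-estimate with the rest.
leave_one_out = {}
for dropped in pilot:
    remaining = {name: c for name, c in pilot.items() if name != dropped}
    leave_one_out[dropped] = raw_did(remaining, comparison)

for dropped, estimate in leave_one_out.items():
    print(f"without {dropped}: {estimate * 100:+.1f} percentage points")
```

If no single county drives the result, the re-estimated values should stay close to one another and to the full-sample estimate, which is the pattern the check above looks for.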

The Models for Change initiative included other areas of focus besides aftercare: reducing disproportionate minority contact/confinement and improving mental health and juvenile justice collaboration. Two of the five counties piloting aftercare innovations also participated in the other areas of focus: Allegheny and Philadelphia (Schwartz, 2013). The “treatment” for these two counties is difficult to disentangle. I therefore drop these two counties and include only counties with just one area of focus (Cambria, Lycoming, and York). The point estimates are similar to those in the overall model; however, the estimates are less precise because Allegheny and Philadelphia are the two largest counties in the pilot group (for example, re-referrals increased by an estimated 3 percentage points, with a [−0.27, 0.32] confidence interval; Supplemental Table A4).

In addition, five counties in the comparison group were involved in piloting other Models for Change reforms: reducing disproportionate minority confinement/contact in Berks, Dauphin, and Lancaster counties (Griffith et al., 2012), and increasing diversion and improving services for youth with mental health needs in Chester and Erie counties (Griffin, 2009). To understand whether these reforms in comparison counties affected the results, I exclude these counties from the comparison group. The results are very similar to the original models (for example, the point estimate for re-referrals is an increase of 4 percentage points, with a [−0.05, 0.12] confidence interval; Supplemental Table A5).

In 2007, fifteen counties formally agreed to adopt the principles of the Joint Policy Statement on Aftercare (Griffin et al., 2007). These counties could be thought of as “late adopters” of aftercare reforms. Because leaders in these counties sought to improve aftercare practices and signaled their intentions to begin reforms, they may be more like the pilot counties on intangible factors not captured in the data such as attitudes towards reform. Therefore, I use these counties as an alternative comparison group by comparing this subset of counties to the pilot counties for the first 2 years after the pilots began (2005–2006). Again, results are similar to those in the main model (the point estimate for re-referrals is an increase of 5 percentage points, with a [−0.06, 0.16] confidence interval; Supplemental Table A6).

Discussion

The lack of a discernible effect of the aftercare Models for Change pilot programs in Pennsylvania on recidivism is in line with a meta-analysis that found no overall treatment effect of aftercare programs (Weaver & Campbell, 2015), but stands in contrast to another meta-analysis that found that aftercare programs can produce small reductions in recidivism (James et al., 2013). Even though the effect estimates in these meta-analyses did not agree, both found that the quality of program implementation made a difference in the size of the program’s effects on recidivism.

This study has several notable limitations. First, the study estimates the effects of the aftercare pilot on the entire county’s recidivism rate for youth who experienced placement, but in some counties, only a small proportion of placed youth were part of pilot programming. For example, York County’s focus was on serving higher-risk youth more intensively. Accordingly, its programs served a small number of youth relative to the number of youth placed in the county each year. A meta-analysis of aftercare programs (James et al., 2013) found that more intensive treatments produced greater effects. Recidivism among intensively served youth in York may have declined, but their outcomes cannot be isolated from those of other youth exiting placement in York. Future evaluations would benefit from tracking program participation data to support such analyses.

Second, understanding the mechanisms behind changes is not possible with the administrative data collected. The data do not include the treatments, services, or achievements of youth in placement, such as the education credits they earn. This information would have shed light on how the aftercare pilots could have influenced recidivism and, along with program participation data, could be incorporated into future evaluations. In addition, this study is based on administrative court data that, despite best efforts to track youth, may be missing cases. If so, this missingness could impact the results.

Furthermore, ascribing the results of this study to a particular form of aftercare is complicated by the fact that the pilots pursued a variety of aftercare programming. Although the aftercare pilots sought to achieve similar aims laid out by the state’s Joint Policy Statement on Aftercare, the “pilot” aspect of the program—that is, to test out different models of aftercare—makes assessing them as a whole group more difficult. In addition, no systematic information on aftercare practices in comparison counties is available.

Two characteristics of poorly implemented aftercare programs noted in the Weaver and Campbell meta-analysis—staff turnover and low levels of communication between placement facility staff and community-based staff—were present in some of the pilot programs. In some counties, the new staffing roles created for the pilots lacked support. For example, in one county, the expectations and responsibilities placed on staff within the new role created to support youth in aftercare were reportedly out of proportion with the salaries offered, leading to less experienced individuals filling these roles, and to high turnover rates. In aftercare, relationships between placement providers, probation officers, school staff, and community program providers help aftercare programming function effectively; high staff turnover would hinder the development of these relationships. In another county, the roles and responsibilities of the new positions were scaled back when qualified individuals were difficult to find and retain.

Other obstacles to strong implementation included obtaining support for the new aftercare programming from all parties involved in a youth’s case. For example, one of the drawbacks to York’s programming was a lack of support from placement facility staff for the graduated release aspect of the program: facilities were hesitant to change their procedures for one county. Another county that pressed for shorter lengths of stay in placement, or for earlier release so that youth could participate in newly enhanced community-based programming, met with resistance from individual placement facilities and some judges who wanted to keep longer placement stays.

Leadership changes in two counties resulted in pilot projects being partially cut (York) or modified from their original design and eventually discontinued (Philadelphia). With the loss of particular individuals in leadership roles who supported the projects, these efforts were not sustained. An individual involved in the pilots described the importance of institutionalizing programming reforms so that they do not rest on one or two people and can instead become embedded in the organization.

Some pilots focused on systems-level changes and may have taken a while to show any recidivism results. Allegheny, for example, focused on building systems to check the quality of education in placement facilities. These systems-building efforts did alter the placement landscape in Pennsylvania. The aftercare pilots in Allegheny and Philadelphia spurred the creation of new standards and supports for placement education through the creation of the Pennsylvania Academic, Career and Technical Training (PACTT) Alliance in 2008. Similarly, based on the case planning and risk assessment some pilot counties undertook, the state adopted the Youth Level of Service (YLS) risk needs assessment tool to assess youth needs and risk of reoffending. The YLS is conducted before disposition and at multiple timepoints during a youth’s placement, and counties are now required to use the YLS in order to qualify for certain state funding.

Although this analysis does not detect any changes in recidivism outcomes in pilot counties, according to conversations with individuals involved in the pilots and contemporary documentation (Griffin & Hunninen, 2008; Pennsylvania Commission on Crime and Delinquency, 2012), the pilots spurred change in leadership thinking and philosophy and laid the groundwork for later initiatives and reforms, such as the PACTT Alliance (which supports education and job training within placement facilities) and the state’s Juvenile Justice Enhancement Strategy, the next phase of reform in juvenile justice in Pennsylvania.

This study speaks to the difficulty of changing systems like aftercare that involve multiple agencies and actors, and of sustaining these changes. Changes in complex arenas such as juvenile placement and aftercare require sustained staffing and funding commitments from multiple agencies, as well as agreement on common goals such as reducing length of stay and enrolling youth in community-based programming. On the whole, the improvements pilot counties made to their aftercare systems were small, and while some were sustained after the pilot programming ended, most were not. Marginal changes such as those seen here are unlikely to change juvenile offending unless maintained as part of wider systems change. Overall, these pilots helped Pennsylvania counties learn about different techniques for supporting youth and highlighted the challenges of building and delivering successful aftercare programs at scale.

Supplemental Material

sj-docx-1-cad-10.1177_00111287231170118 – Supplemental material for Assessing Aftercare Reforms in Pennsylvania’s Juvenile Justice System

Supplemental material, sj-docx-1-cad-10.1177_00111287231170118 for Assessing Aftercare Reforms in Pennsylvania’s Juvenile Justice System by Monica Mielke in Crime & Delinquency

Footnotes

Acknowledgements

Many thanks to the Pennsylvania Juvenile Court Judges’ Commission for permission to access the data used in this research, and to the National Juvenile Court Data Archive for providing the data.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the Institute of Education Sciences, U.S. Department of Education, through Grant R305B200035 to the University of Pennsylvania. The opinions expressed are those of the author and do not represent views of the Institute or the U.S. Department of Education.
