Abstract

Digital transformation has been changing and will continue to change nearly every aspect of business. As a result, the foundations of entire fields are now being revisited and updated to align with new developments. Corporate responsibility (CR) is one such field, and this article examines how important digital economy developments affect CR. The article uses three core questions—responsible for what? responsible toward whom? who is responsible?—to assess the impacts of digitalization on CR. Then, the article describes the implications of these impacts for managers, CR advocates, management scholars, and policymakers.

Digital technology is transforming the economy, bringing with it tremendous gains for society but also significant challenges. 1 The phrase “everything that can be digital, will be” is often quoted, and the transformations brought by digitalization are described with words such as “seismic,” 2 “sea change,” 3 or “revolutionary” and “disruptive.” 4 The changes have been predicted to be so deep that the foundations of entire fields need to be revisited. Indeed, such research is beginning to emerge: there are now studies about how digitalization affects the fields of economics, 5 strategy, 6 management information systems, 7 accounting, 8 working and organizing, 9 and even moral philosophy. 10 These studies highlight how digitalization calls for re-examining previous assumptions, how those assumptions may or may not continue to apply to a given field, and how those assumptions may need to change to align a field with contemporary developments.

Corporate responsibility (CR) is one of the fields where a digital disruption in the economy can have significant consequences. 11 Yet, an examination of the impact of digitalization on the foundations of CR is notably absent. A large and growing body of literature examines individual ethical issues in the digital economy, an increasingly hot topic not only in academic research but also in policymaking and in corporate practice as the ethical implications of digitalization become evident. There are also more holistic discussions of CR and digitalization 12 and of the dark side of digitalization. 13 Also, “corporate digital responsibility” has recently been proposed as a separate concept (either a subset of or parallel to CR) covering the responsibilities associated with the use of data and digital technology. 14 Still, these studies have not addressed the question of how CR as a field, including its basic foundations and assumptions, may be affected by digitalization. The extent to which CR might experience a disruption because of a digital disruption in the economy depends on the nature of the current understanding of CR and its robustness in the face of the digitalization challenge: in other words, on whether CR can accommodate changes brought by digitalization without having to change any basic assumptions. Thus, the question that calls for examination is: How does digitalization change the field of CR, and how fundamental are those changes?

To address this lack of knowledge on an important topic, we provide a systematic examination of the digital economy and CR from this big-picture perspective. We ask: How may the fundamental changes in the digitalizing economy shape the field of CR and require managers, scholars, CR advocates, and policymakers to think differently about CR? To answer this question, we proceed from digital economy phenomena to their ethical impacts, then to the collective effects of these impacts on the CR field, and finally to an overall evaluation of the changes and their implications. We point out the wide-ranging impacts arising for CR from the digital economy and offer a framework through which those impacts can be understood.

From Digital Technology to Ethical Issues in a Digital Economy

Michael Lenox describes what we are currently observing as the most recent manifestations of a process that has been going on since the first electronic typewriters and digital watches became popular in the 1960s and 1970s. Indeed, digital transformation has proceeded in waves as developments in digital technology have unleashed new opportunities in the economy. First, it was about representation: creating digital artifacts through digitization. Later, it was about connectivity: sharing on the internet and other platforms. Now we have reached the third wave, aggregation, where data are aggregated through advanced technologies to create value. 15

The current wave of digital transformation, however, has seen accelerating and exponential advances, fueled by the dramatic growth in processing power, storage capacity, and bandwidth. 16 This has culminated in the coming together of big data and the power of artificial intelligence (AI) to analyze that data. The term “big data” generally refers to the accumulation of large data masses in a digital environment. Not only are there huge volumes of data, but that data comes in many varieties, at a high velocity, and across broad levels of granularity. 17 Artificial intelligence generally refers to algorithms that undertake problem-solving tasks. Thanks to machine learning, rather than being directly programmed by humans, machines can observe and learn from data given to them for training purposes, or from experience accumulated during use, and generate the algorithms needed to achieve a goal.

Now the field is fast-moving, with huge, disruptive implications. Since the release of the AI-powered chatbot ChatGPT to the public in 2022, there have been remarkable developments, especially with generative AI, which can output text, images, audio, video, music, and code, and thus revolutionize practices in multiple domains. 18 And the evolution certainly does not stop here. These exponential developments give new urgency to examining and understanding the connections of digital transformation with all aspects of business, including CR.

Identifying Digital Economy Phenomena from a CR-Relevant Perspective

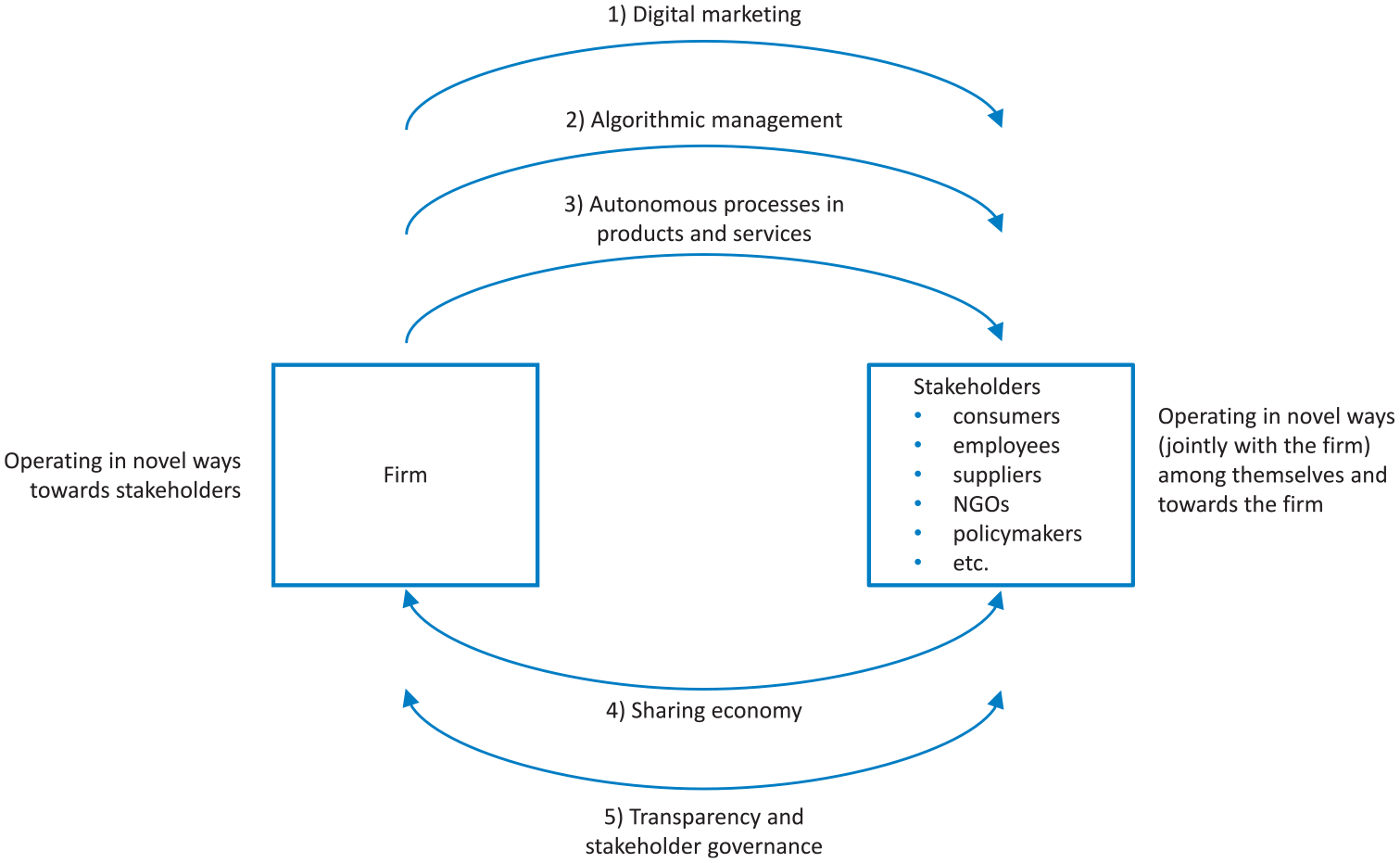

The scope of digital transformation ranges from changes in the processes and operations within a firm, through changes in firm boundaries and inter-firm collaboration, to changes in industry boundaries and economic relations. 19 Across these domains, it was essential for our purposes to identify digital economy phenomena from a CR-relevant perspective. Stakeholder theory is a foundational framework in CR 20 that positions the stakeholder relationship as the central unit of analysis, 21 so this guided our identification process. We began by examining how digitalization affects stakeholder relationships. To ensure a comprehensive perspective, we considered changes from two directions: firms operating in novel ways toward their stakeholders, and stakeholders operating in novel ways among themselves and toward the firm. We then examined what such novel ways could be for key stakeholder groups. Our process of inductive reasoning drew from a broad range of sources, including academic articles, practitioner reports, and news items, and from these we developed a list of five phenomena that capture key changes potentially affecting the CR field (see Figure 1). 22 Each phenomenon is well-established as relevant to CR, as evidenced by the literature we reference in the descriptions. The phenomena are not mutually exclusive: they can co-exist in various combinations, and autonomous processes in particular may intersect with the other phenomena.

Figure 1. Key digital economy phenomena from a CR-relevant perspective.

First, it is possible for a firm to employ digital technology in its various functions and toward its various stakeholders. Key digital economy phenomena resulting from these novel ways of operating include digital marketing toward customers, algorithmic management toward employees (current and prospective) as well as suppliers and business partners, and autonomous processes in products and services.

Second, thanks to digital technology, it is possible for the stakeholders of the firm to operate in novel ways, jointly with the firm. Stakeholders can interact among themselves as participants of the sharing economy, enabled by the platforms set up by firms. Digital technology also enables novel two-way interactions between the firm and its stakeholders (like customers, NGOs, local inhabitants, the general public, and policymakers) through enhanced transparency and stakeholder governance.

Key CR-Relevant Digital Economy Phenomena and Their Ethical Implications

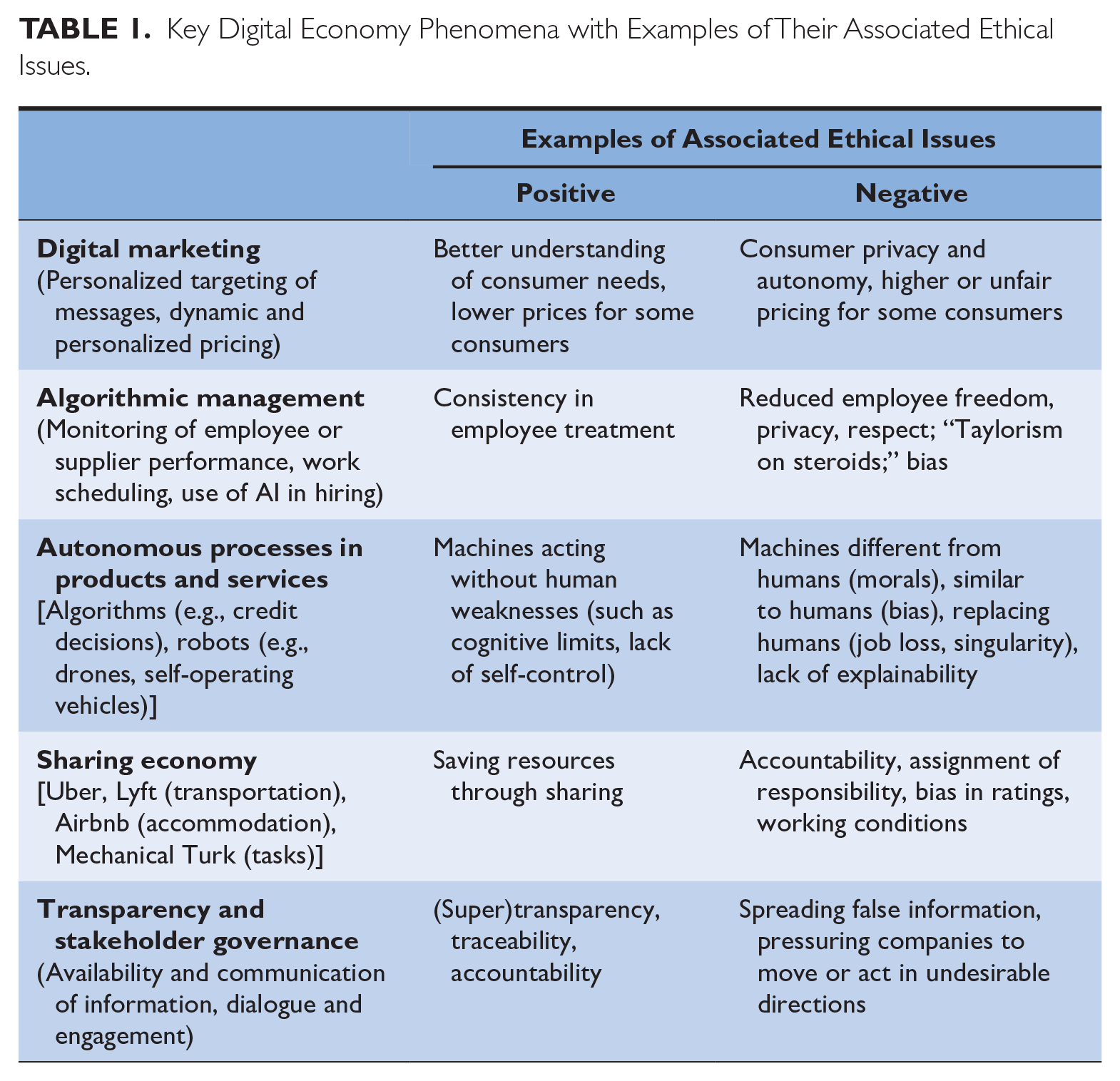

Below we describe these key digital economy phenomena and the kinds of positive and negative ethical impacts that have been identified in connection with them (Table 1 provides a summary with examples).

Table 1. Key Digital Economy Phenomena with Examples of Their Associated Ethical Issues.

Digital Marketing

The term digital marketing captures a wide variety of measures used by firms to market their products and services to customers more effectively with the help of digital media. It can involve the personalized targeting of the timing, content, and form of messages, as well as the dynamic and personalized setting of prices. 23 Digital marketing relies heavily on data about the customer and on algorithms to make sense of these data. 24

Ryan Calo summarizes many of the ethical issues relating to digital marketing in his discussion of “digital market manipulation.” Market manipulation refers to firms using “what they know about human psychology to set prices, draft contracts, minimize perceptions of danger or risk, and otherwise attempt to extract as much rent as possible from their consumers.” 25 In a digital context, the availability of big data creates an information asymmetry that dramatically increases firms’ ability to influence consumers, even at an individual level. As a result, consumers are less able to pursue their own interests through their choices and may be led to purchase products and services they did not need or want or to pay higher prices than necessary. Thus, digital market manipulation can result in economic harm, privacy harm (subjective and objective), and autonomy harm for the consumer. 26 There may also be benefits: when companies better understand individual consumers’ price elasticity of demand and price discriminate accordingly, some consumers pay lower prices. In some ways, these are old concerns about marketing in a digital guise. However, the scope to exploit consumer psychology at massive scale and depth is unprecedented, and even more so with the advent of generative AI. 27

Algorithmic Management

Both within firms and with platform labor, algorithms track people’s performance to optimize organizational structures and human resource management. 28 Algorithmic management can also be used between firms and their suppliers, including by platform firms such as Amazon.com, where algorithmic management combines with the monopolistic power of the company to exert dominance over vendors. 29 Algorithmic control takes place through several mechanisms: restricting and recommending (to direct), recording and rating (to evaluate), and replacing and rewarding (to discipline). 30 When algorithmic management is taken to the extreme, “the core function of management has gone from managing the business to managing the bots that are managing the business.” 31

As the use of algorithmic, data-driven management increases, 32 there is a risk that CR issues may be sidelined from decision-making since CR considerations may not generate data points to the same extent as other considerations. When it comes to employee monitoring and control, the ethical issues revolve around questions that include freedom, privacy, and respect. 33 These issues arise because algorithmic management makes it possible to capture and evaluate employee performance in real time and at a detailed level to the extent that questions of surveillance clearly arise; algorithmic management has been called “Taylorism on steroids.” 34

For instance, the algorithm of the takeaway delivery company Deliveroo systematically monitors all aspects of the tasks of its couriers (time to accept orders, travel time to restaurant and to customer, time at customer, late orders, unassigned orders) and compares them against a benchmark. 35 Amazon’s similar monitoring of its warehouse workers in France was judged “excessive” surveillance and illegal, resulting in a €32 million fine. 36 On the positive side, algorithmic management of employees may guarantee a certain consistency: an algorithm has no good or bad days, nor does it resort to favoritism (although it might be biased in ways that favor particular employees).

Autonomous Processes in Products and Services

A third type of digital economy phenomenon is the emergence of artificial agents that operate autonomously. 37 Such artificial agents can be software programs (bots) making decisions, or hardware-software combinations (robots) carrying out physical activities, and they “perform tasks on behalf of humans and do so without immediate, direct human control or intervention.” 38 Firms can employ artificial agents in their services (e.g., in credit decisions), or the firm’s product can itself be an artificial agent (such as a robot vacuum cleaner or a self-operating vehicle). The recent radical developments in AI technologies, including but not limited to generative AI, have resulted in the integration of autonomous processes across an increasing number of organizations. 39

The relevant ethical issues with autonomous processes are those that have been presented as ethical concerns with AI. 40 Some relate to the inputs needed for running AI systems: for example, the dire working conditions of those doing the data moderation and annotation required for generative AI, and the toll that work takes on their mental health. 41 Others relate to the outputs of AI systems. One concern is the fact that machines are different from humans in their reasoning and operation. Machines lack human moral considerations even though their decisions can have moral consequences. Whereas human action is generally restricted by a moral conscience (albeit to a different degree for different individuals), machines could undertake immoral or illegal actions without the blink of a (mechanical) eye, unless they are programmed or taught to incorporate ethical reasoning, thus becoming so-called Artificial Moral Agents. Ironically, however, another concern relates to the fact that machines might be too much like humans: if they learn by imitating human behavior, they will perpetuate the same biases and repeat the same unethical habits and behaviors as humans. 42 In any case, algorithmic accountability or explainability is a relevant issue because as AI becomes more complex and self-improving, its internal workings become a “black box,” and it is not possible even for experts to know why the algorithm has reached a particular result. A final concern relates to AI replacing humans. In the more immediate future, there is the question of job losses as artificial agents occupy jobs that were, until now, performed by human workers (especially with the advent of generative AI). Then there is the ultimate question, posed by some, of technological singularity, which refers to artificial superintelligence surpassing human intelligence and potentially taking control of itself and our societies.

However, it has been argued that if it were possible to incorporate ethical reasoning into AI so that it would be accompanied by artificial morality, machines might make more ethical decisions than humans. While humans have weaknesses like cognitive limits and biases, visceral reactions, and a lack of self-control, and are therefore not always able to choose the ethical course of action, machines do not have such limitations. That said, developing Artificial Moral Agents still faces crucial challenges, 43 and arguments have also been presented against such efforts. 44

Sharing Economy

The sharing economy, another manifestation of the digital economy, is made possible by digital platforms provided by firms where algorithms connect product and service providers with product and service recipients, and which also include a rating system to build trust between the parties. Four features of sharing economy organizations can be identified: they are organized as digital platforms; they facilitate peer-to-peer transactions; they emphasize temporary access rather than ownership; and they are focused on the use of underutilized capacity. 45 Well-known examples of sharing economy companies include Uber and Lyft (in transportation), Airbnb (accommodation), and Amazon Mechanical Turk (tasks), a division of Amazon.com.

The sharing economy creates the potential to utilize excess capacity and save natural resources. The growth of vehicle sharing in various forms (e.g., Zipcar, Uber, and BlaBlaCar) should in principle reduce demand for vehicle ownership. However, there are also ethical concerns, including questions of accountability and assignment of responsibility. 46 Whether the platform-providing companies should be held accountable for the actions of the individuals (e.g., drivers) offering their services through those platforms has been debated from a legal as well as a CR perspective. Particularly noteworthy are responsibilities that traditional providers in a sector have long assumed but that new entrants avoid or attempt to avoid. For example, Airbnb has been criticized as unfair competition for hotels that must comply with lodging regulations and charge occupancy taxes, while car-share platforms like Uber have been accused of exploiting drivers who they claim are independent contractors rather than employees. Still another concern is that consumer-sourced rating systems might be discriminatory against certain protected groups; while companies are prevented from workplace discrimination by law, discriminatory bias could be present in the ratings by individuals. 47 Even the environmental benefits are questioned. Some people may give up car ownership because of the availability of ride-sharing options, but for others these options may lead to a car purchase as the car becomes an income source. In some cities, lower prices for car-based transportation have reduced demand for public transportation.

Transparency and Stakeholder Governance

Finally, digital technology has enabled the rise of a multi-faceted phenomenon best described as transparency and stakeholder governance. More granular and real-time data are available, including about complex supply chains, which enables greater transparency and traceability. Furthermore, new digital media allow the firm to establish a two-way dialogue with stakeholders, 48 thus creating “dynamic transparency.” 49 Digital media can also empower stakeholders, such as activist networks or the general public, to organize and communicate more effectively 50 and hence influence and pressure organizations about their CR. 51 All this contributes to increasing transparency (even “super-transparency,” 52 or making firms “naked” 53 ) and stronger governance of firms by stakeholders, 54 although it may require a certain convergence of evaluations to be impactful. 55

Of the digital economy phenomena we have identified, transparency and stakeholder governance is the one that relies more on traditional information and communications technology than on the latest developments in big data and AI. (However, it should be noted that blockchain technology is having major implications for the way that records are kept and thus for traceability.) This phenomenon’s associated ethical impacts are also predominantly seen as positive. Transparency is considered important for stakeholders to be able to preserve their autonomy and make informed choices, 56 and it is one of the key principles of CR (e.g., in the ISO 26000 standard on social responsibility). The ability of stakeholders to pressure firms to increase CR efforts is also considered beneficial. However, swift dissemination methods could be misused to spread false information, 57 and the quality of information (e.g., in social media) may be more suspect. 58 Consequently, stakeholders might pressure companies in socially undesirable directions.

Digital Economy and the Changing Landscape of CR

In the preceding section we saw how developments in the digital economy can lead to ethical impacts, both positive and negative. In this section, we examine those positive and negative impacts to identify and describe how they might collectively affect the landscape of the CR field. As a starting point, we characterize the domain and boundaries of CR through three questions. While the field of CR can be seen to contain strategic and operational management topics, it has an important normative core. 59 We focus on this normative core and understand the landscape of CR to cover the questions of assignment of responsibility and the boundaries of this assignment. Thus, our analysis does not discuss questions relating to the measurement and implementation of CR, or other such topics, even though they may also be affected by digitalization. Our interest is more fundamental. For the same reason, we are also focusing primarily on the “inside-out” perspective on CR: that is, the positive and negative impacts that companies have on environmental and social issues, rather than the risks and opportunities that social and environmental issues might present for the company.

Questions Defining the Landscape of CR

Stakeholder theory is at the core of the field of CR. As Donaldson and Preston explain in their seminal paper, 60 while stakeholder theory is descriptive and instrumental, it is more essentially normative. By this, they mean that “the normative basis for stakeholder theory involves its connection with more fundamental and better-accepted philosophical concepts.” 61 Thus, consistent with theorizing in moral philosophy 62 and moral psychology, 63 we argue that the field of CR in relation to stakeholder theory may be analyzed through three key elements: moral agents (performing moral actions), moral patients (upon whom the actions are performed), and the moral actions themselves. These are distinct, underlying dimensions to discussions of CR. Together they capture the scope of the assignment of CR.

First, moral actions are those that “can cause moral good or evil” 64 and which may, therefore, create responsibilities for companies. The contemplation of moral actions in the context of CR thus results in the question of what can be considered moral actions or issues: responsible for what? Second, moral patients consist of all entities that “can in principle qualify as receivers of moral action.” 65 In the context of CR, this poses the question of who are the parties that may experience good or evil, or right or wrong, because of moral actions by companies, and toward which companies may, therefore, have responsibilities. This culminates in: responsible toward whom? Third, moral agents are those entities that “can in principle qualify as sources of moral action.” 66 From a CR perspective, this relates to the point of who are sources of moral action and who might therefore be assigned responsibility for such actions: who is responsible?

Moral Actions: Responsible for What?

The first of our analytical questions asks about the issues for which the firm might be responsible. There have been several attempts to capture the broad, diverse, and somewhat vague domain of CR by dividing it into multiple narrower issues. Among the most comprehensive and authoritative efforts is the ISO 26000 standard, which identified social responsibility issues through an international multi-stakeholder process. 67 It lists seven core social responsibility subjects, which are further divided into thirty-six more specific issues. Parallel and largely compatible issue lists can be found, for example, in the Global Reporting Initiative (GRI) set of universal, sector, and topic standards. 68 The UN Sustainable Development Goals, adopted in 2015, also serve to pinpoint relevant domains for CR. Most recently, the EU has introduced legislation that mandates sustainability reporting (Corporate Sustainability Reporting Directive [CSRD]) and supplemented this with European Sustainability Reporting Standards (ESRS) that specify the information to be reported. 69 The ESRS standards detail more than 80 sustainability matters across ten topical environmental, social, and governance categories. We can examine the changes brought by the digital economy against the CR issues identified in initiatives such as these.

First, the digital economy can make existing CR issues manifest in novel ways. For example, although AI has prompted questions about job replacement by robots and generative AI, the fundamental social responsibility issue of employment is an old one. The same goes for working conditions, questions about which arise anew in the gig economy. Or take the question of big data, digital marketing, and privacy: even though it now comes up in the digital context, the underlying issue of consumer data protection and privacy was there before large-scale digitalization. In a similar vein, the fundamental social responsibility principle of transparency now manifests itself in a novel form with the black box nature of some machine learning algorithms, and the question of protecting intellectual property rights does so with the unauthorized use of the work of artists and writers in training generative AI. While these are old issues in certain respects, they have gained new urgency.

Second, digitalization can help alleviate or solve existing CR issues. At a more technical level, improved transparency both within the firm and externally, brought about by the availability of more data and faster communications around such data, allows firms to track performance in product supply chains and consumers to take informed action. This helps firms better implement CR and provides more incentives for doing so since reputation and legitimacy are more readily at stake. More broadly, great expectations are being placed on the ability of digitalization, and AI in particular, to help solve humanity’s challenges. This includes advances in medicine (e.g., drug discovery, alternatives to animal testing, medical image analysis), agriculture (e.g., weather forecasting for better planning), energy saving (e.g., through real-time monitoring and adaptation), and many other fields. According to one report, AI could be a key enabler for 82% of social sustainability targets, 70% of economic sustainability targets, and 93% of environmental sustainability targets under the UN Sustainable Development Goals. 70

Third, the digital economy can intensify existing CR issues. An obvious example is the hugely increased electricity consumption required for data centers, cloud providers, and training generative AI. 71 Or consider how digitalization has enabled business models that are not merely fast fashion but ultra-fast fashion, with their harmful environmental impacts. Furthermore, the availability of big data makes many data-dependent activities in the consumer and employee domains possible in an unprecedented manner. Marketers have always collected information about customers (or firms about employees), but now this is happening on a different level. Now, not only is there vastly more information, but it is also more granular and originates from surprising sources, such as your robot vacuum cleaner selling your house map to furniture marketers. 72 The information can be combined in imaginative ways, and algorithms can draw sophisticated inferences from it. Airbnb is said to use a trait analyzer to detect dark personality traits, such as narcissism, by evaluating hundreds of signals with predictive analysis and machine learning. 73 Consequently, marketers (or employers) will know more about you, including things you would not want to reveal (e.g., that you really need that flight for next April, no matter the price). They may also know things that you did not even know about yourself, thereby understanding your “most personal motivations and vulnerabilities.” 74

Fourth, the digital economy can open up new CR issues. Think about how digitalization impacts individuals and society, bringing, for example, addiction, mental health effects, and shortened attention spans at the individual level as well as misinformation, deepfakes, surveillance, election influencing, and polarization at the societal level. 75 Consider Sora, the generative AI system announced in 2024 that can produce realistic one-minute videos from text prompts, in combination with the fact that young consumers increasingly get their news from TikTok. Could these profound individual and societal impacts be considered a genuinely new CR issue brought by digitalization? The view that these impacts challenge the traditional understanding of CR finds support in how Trittin-Ulbrich and colleagues 76 summarize certain research findings 77 : “notably . . . responsibility professionals, which previous studies have identified as particularly committed to facilitating responsible business practices . . . reject, somewhat unexpectedly, the idea of organizational responsibility for the social harm of digitalization.”

In addition, the arrival of new issues in the future seems increasingly possible with a topic as transformative as digitalization. The developments of digital technology will not end with what we are witnessing now (e.g., the emergence of generative AI). In fact, in the pipeline, there are applications related to quantum computing, blockchain beyond cryptocurrency, augmented and virtual reality, and others that may come with CR issues of their own. 78

Moral Patients: Responsible toward Whom?

Our second analytical question is about toward whom (or what) the firm is responsible. The established way of approaching this issue is to say that firms are responsible toward their stakeholders: groups and individuals who can affect the company or be affected by it. 79 Besides identifying stakeholders per se, an important consideration is stakeholder salience, which determines the priority awarded to different stakeholder groups by managers. According to the influential framework proposed by Mitchell and colleagues, 80 stakeholder salience is based on the extent to which the stakeholders exhibit the characteristics of power, legitimacy, and urgency. (However, treatment of salience in that framework is highly firm-centric when it relies on power, and only the characteristics of legitimacy and urgency relate to the inside-out view that we are taking in this article.)

The digital economy can change the salience of existing stakeholder groups. Power, legitimacy, and urgency are all socially constructed concepts, and the model is dynamic, meaning that attributions of these characteristics can change with time. 81 Stakeholders can use social media strategically to influence these characteristics and thus build salience. 82 For example, a stakeholder can make visible claims in social media and thus threaten firm reputation 83 or amass the support of other stakeholders even from far-away contexts thanks to fast and cheap communications around the world. In an extreme case, a group could move from being a non-stakeholder with no salience (without any power, legitimacy, or urgency) to being a stakeholder. Note that digital technology could also decrease the salience of a stakeholder group, for example if increased transparency leads to questions about the legitimacy of the group’s claims.

In addition, digitalization may make new stakeholder groups emerge. This means that digitalization may invite us to question the definition of a stakeholder in a fundamental sense. The classic definition of stakeholders as groups and individuals restricts stakeholders to human entities. According to a strict interpretation, even the natural environment is therefore not a stakeholder, but its interests need to be represented by others who are (though not all see it this way 84 ). However, with digital technology, the question has been posed whether algorithms and robots could be regarded as stakeholders. The scope of ethical consideration for centuries only included humans, but as it has become more inclusive (e.g., extending to animals), the next question is: What about the machine? 85 Whether AI could be granted legal personhood was discussed as a theoretical question as early as 1992. 86 It has also been asked whether artificial agents should be granted rights similar to those accorded to group agents. 87

Moral Agents: Who Is Responsible?

The simplest answer to our third analytical question would be that a company is responsible for its own direct actions. However, hand in hand with increased outsourcing and globalization, this scope has considerably expanded. The UN has developed an influential normative framework according to which a company can be responsible for an adverse impact if it causes the impact, contributes to it through actions or omissions, or is linked to it through business relationships with partners, value chain actors, or other entities. 88 Thus, it is no longer sufficient for a firm to limit its responsibility considerations to its own direct actions only. 89 In addition, a legislative initiative in the EU (the Corporate Sustainability Due Diligence Directive) will significantly increase the accountability of firms for environmental and human rights issues arising in their supply chains. Macdonald discusses institutional relationships through which firms may indirectly influence social outcomes: decentralized structures such as joint ventures, participation in business networks and supply chains, and relationships with governments and other actors in host countries. 90 She concludes that “there remain important domains of business influence . . . for which the boundaries of business responsibility remain unclear.” 91

The concept of complicity can also help identify corporate responsibilities beyond the direct actions of the firm itself, and it has received more attention recently. 92 Complicity, which describes how firms may contribute to social impacts through business relationships, has been discussed in the context of human rights, 93 but it has broader applicability. Complicity can be direct (as when a company knowingly assists in a harmful action), beneficial (when a company benefits from the harmful action committed by someone else), or silent (when a company fails to raise the issue with appropriate authorities). 94 Applying similar thinking in the context of online data supply chains, Martin argues that firms should consider their responsibilities in entire systems where they benefit from or could stop harmful practices, or where they played a part in implementing harmful practices. 95

The digital economy can affect the allocation of responsibility among actors in at least three ways. First, it can blur answers to the question of where responsibility resides between firms and individuals in multi-sided markets where individuals outside the firm can participate in multiple roles beyond that of a traditional consumer. For example, in the sharing economy, should the responsible actors be seen to be the firms (operating through platforms) or the citizens participating in peer-to-peer transactions (as potentially both providers and consumers and aided by the platforms provided by firms)? Further, can firms such as Facebook and X (formerly Twitter) be considered only neutral intermediaries for communications, or do they have some responsibility for the content that third parties (including individuals) post on their platforms (e.g., as under the UK Online Safety Act 2023)? What of the growing use of these platforms in human trafficking and ransom demands? 96 And what about the responsibility for mobile applications that are sold through Google Play or App Store but whose development ecosystem may include many non-firms and non-professionals, such as application developers who are hobbyists with little knowledge of privacy considerations? 97

Second, the digital economy can blur answers to the question of where responsibility resides among different firms. An ecosystem can be defined as “a group of interacting firms that depend on each other’s activities.” 98 This might sound familiar—Nespresso is an ecosystem 99 —but there has been a surge of interest in ecosystems in recent years, associated in large part with the growth of the digital economy. Fuller and colleagues speak of the emergence of the digital economy giving rise to “dynamic, multicompany systems as a new way of organizing economic activity.” 100 This is critical in thinking about allocating responsibility because “the firm is no longer an independent strategic actor.” 101 If the firm no longer acts independently and, instead, is acting in concert with multiple parties, then it becomes more difficult to say who is to blame when something goes wrong.

Third, the digital economy can raise novel questions concerning where responsibility resides between humans and machines. Will a firm that is producing or utilizing artificial agents be responsible for the decisions and actions of those agents? What if the artificial agents are self-learning and autonomous, and the firm could not predict how they would behave in a particular situation, for instance because parametric updates take place continuously? 102 And which firm would be held responsible, the developer or the user of the technology? A responsibility gap is created when moral responsibility for the actions of an autonomous system cannot be assigned to any humans or their organizations. 103 But could the machine itself be responsible? Can moral responsibility be assigned to a machine? Humans often tend to do so, especially if the machine is human-like, 104 but whether this is justified now or in the future is the subject of considerable debate. 105

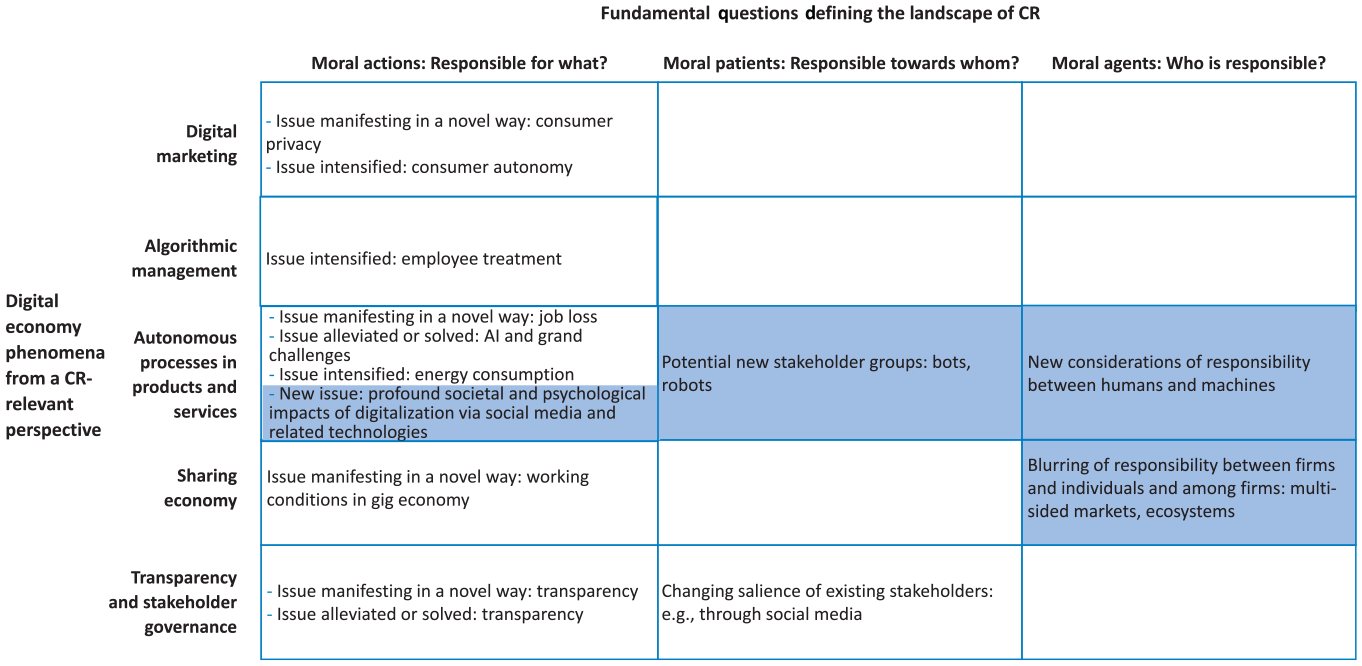

Evaluating the Scope of Changes to the CR Field

We now take an overall look at how digitalization of the economy impacts the domain of CR. As shown in Figure 2, two important conclusions can be drawn. First, while changes are happening across the entire CR field, not everything changes. The empty cells in the figure indicate that not every key digital economy phenomenon affects each of the three core CR questions. The first question about moral actions is widely affected, but the second and third questions about moral patients and moral agents are affected only by some of the phenomena. Second, while there are extensive and important changes arising for CR from digitalization, not all changes are foundational. Our examination of the changes reveals that only some of them (denoted by background shading) challenge basic assumptions in the current theoretical understanding of CR. Overall, therefore, we identify a mix of three potential outcomes from CR meeting the digital economy: no changes to the CR landscape; changes that do not challenge basic assumptions but are still important; and important changes that do challenge basic assumptions of CR.

Figure 2. Illustrating how the digital economy can impact the field of CR.

With the first question about moral actions (“responsible for what?”), CR issues may now be manifest in a different form, or possibly even intensified, and with new urgency. Nonetheless, existing conceptions about what kinds of issues firms are responsible for can still accommodate most of the issues arising with digitalization. Where new issues are ultimately intensified forms of old issues, there is no requirement to re-examine basic assumptions. The sole exceptions we identify are the profound societal and psychological impacts of digitalization via social media and related technologies (e.g., smartphones). 106

As to the second question about moral patients (“responsible toward whom?”), insofar as we are talking about the changed salience of stakeholder groups, this is again fully accounted for within extant theorizing and assumptions. Stakeholder salience is known to be dynamic, 107 and even if the changes in salience now occur because of digitalization, this does not challenge basic assumptions. If, however, we are talking about awarding moral consideration to artificial agents, that would certainly call for a re-examination of the basic assumption concerning who can be a stakeholder because stakeholder theory is currently about human actors and their interactions. 108 It is generally argued that to be deserving of moral consideration, an entity needs to be sentient, that is, able to possess affective mental states. The possibility of artificial agents becoming moral patients and hence stakeholders thus hinges on the question of whether they could at some point become sentient. 109

The third question about moral agents (“who is responsible?”) gives rise to the greatest need to re-examine basic assumptions. We identify two such cases. The first is the need to remove the assumption of human-only actors. The assumption of human-only actors is evident in the literature on corporate moral agency. While there is debate about whether we can ascribe moral responsibility to organizations, as opposed to the individuals that make up those organizations, 110 proponents of both views start from assumptions about human actions and intentions, be they as individuals or as a collective. However, allocating responsibility now requires attention to the decisions and actions of machines in the digital economy.

Machines can be actors with causal responsibility. For example, in March 2018, an autonomous car driving at forty miles per hour in Tempe, Arizona, struck and killed a woman who was pushing her bicycle in the street. 111 The self-driving vehicle was undergoing tests with a backup driver behind the wheel who had not intervened. But what does a case like this one mean for moral responsibility? Does responsibility lie with the backup driver, the developers of the technology, the city government that approved the tests, or, perhaps, with the vehicle itself? It has been postulated that humans are always ultimately responsible (even if they are one or more steps removed and lack awareness of their responsibility), but also that humans cannot be responsible for actions by autonomous machines, that such machines might someday be morally responsible in a narrow sense, or that responsibility should be shared between machines and humans. 112

According to the standard conception, the status of an agent as morally responsible is determined based on the internal properties of the agent, such as intentionality, free will, and a conscious mind. 113 While machines may appear to have goals, and in a sense they do (a chess computer selects moves to win), fundamentally they do not have their own intentions, and their goals are ultimately set by humans. For example, the chess computer does not decide to play poker. This is an area with potential for discrepancy between normative ethical theory and layperson perceptions. The standard conception is currently also being challenged by alternative positions that argue for a conceptual shift in thinking about moral responsibility. 114 Furthermore, questions of legal responsibility, which may not fully overlap with moral responsibility, are also important in shaping the workings of the digital economy. Whether machines are responsible, morally or legally, has implications for the extent to which humans and their organizations are responsible, and which humans or organizations are responsible. In sum, crucial questions about allocating CR arise with the removal of the assumption of human-only actors.

Machines have had potential causal responsibility before the arrival of the digital economy. For example, an automated coffeemaker can be pre-set to brew coffee in the morning, but the machine might also malfunction and set itself on fire. However, the CR literature did not give this much attention because of the limited scope of non-human actors. Examples of machines having control were generally few and far between, the range of activities over which they had control was small, and human oversight was generally present or close at hand and with full understanding of what the machine was doing and how to intervene if need be. This scope has changed out of all recognition with the machines now operating in the digital economy.

Another case where basic assumptions are questioned is the need to remove the assumption of clearly defined entities as actors. By “clearly defined entities,” we mean that an actor-entity is distinct, with clear organizational boundaries, that its role in the action is clear, and that its relationship with other actor-entities is clearly defined. Schrempf-Stirling and colleagues capture the fact that such assumptions are indeed attached to corporate moral agency, summarizing: “Key characteristics of corporate moral agency are that the agent . . . is a single entity or unit to whom responsibilities can be assigned . . . The corporation is a distinct entity . . . scholars routinely take this assumption for granted when discussing responsibilities of corporations.” 115

Yet this characterization of business as involving clearly defined entities is increasingly outdated. First, organizational boundaries become permeable with new organizational forms, such as platforms and ecosystems, including “independent contractors performing contingent, part-time, and temporary work.” 116 Second, the roles of producers versus consumers, or those of employees versus contractors, become fuzzy in multi-sided markets. Third, outcomes tend to arise from interactions of multiple parties—potentially much more numerous than before because of how digitalization enables parties to come together in different ways—though not always with clear governance mechanisms. 117 All this serves to obscure the identification of which actors, with what boundaries, could be assigned responsibility, and what their contribution and role are and thus what share of responsibility could be apportioned to them. Important questions about allocating responsibility arise with the removal of the assumption of clearly defined entities.

Implications for Different Audiences

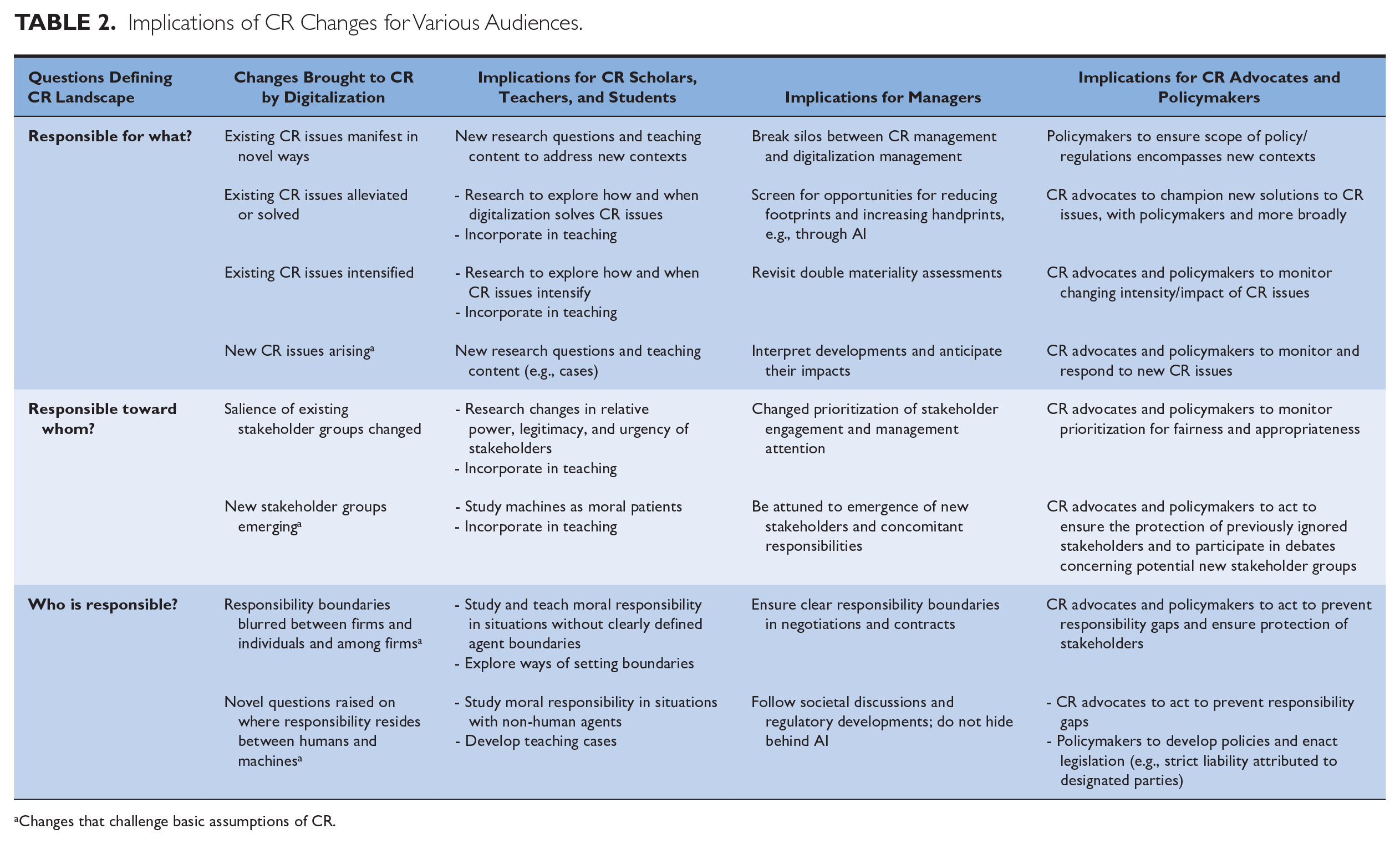

Table 2 summarizes the key implications for various audiences of the significant changes resulting from CR meeting the digital economy.

Table 2. Implications of CR Changes for Various Audiences.

Changes that challenge basic assumptions of CR.

Implications for the CR Field and CR Scholars

Gunkel writes: “The machine question . . . puts in question the entire edifice of ethics [. . . it] not only adds interesting new dimensions to old problems, but leads us to rethink, methodologically, the very grounds on which our ethical positions are based.” 118

Our findings indeed identify interesting new dimensions to old problems. Existing CR issues are manifest in novel ways, are intensifying, or are becoming alleviated or solved; and the salience of existing stakeholder groups is changing. CR scholars need to stay abreast of this changing landscape, even if changes can be accommodated within current assumptions.

Beyond this, exposing the edifice of CR to the challenge of digitalization serves as an ‘acid test’ that can reveal potential gaps or weaknesses. Our findings show four areas where basic assumptions of the current CR field can be brought into question: new CR issues arising, new stakeholder groups emerging, responsibility boundaries blurring between firms and individuals and among firms, and novel questions being raised on where responsibility resides between humans and machines. All have direct implications for future CR research and teaching. We also leave the door open to further, entirely new CR issues emerging, beyond the harmful societal and psychological impacts of social media and related technologies.

For example, changes in stakeholder power, legitimacy, and urgency because of digitalization can change stakeholder salience. Current theories of stakeholder salience implicitly prioritize stakeholders according to the interests of the firm, meaning some stakeholders are discounted or even ignored. Digitalization provides opportunities for these stakeholders to be heard. In this sense, they are new stakeholders. However, genuinely new stakeholders, from the perspective of the CR field, would be robots as new moral patients created by digitalization. While this is controversial, researchers are already exploring robot rights, 119 and robots are already afforded some legal rights (e.g., delivery robots on pavements in some jurisdictions). Changes like these need to be accommodated within research and teaching.

As CR scholars adapt to digital transformation, both the normative and the descriptive perspectives will be essential. The dominant feature of a normative perspective on CR is “its emphasis on formulating prescriptive moral judgments.” 120 It asks where responsibility “correctly” lies and who should be responsible. By contrast, the descriptive perspective aims to “answer questions about what is by attempting to describe, explain, and/or predict phenomena in the empirical world.” 121 It asks where people perceive responsibilities to lie. The normative perspective helps managers and other actors to identify the morally right course of action and to determine their individual moral responsibility for outcomes. The descriptive perspective helps those managers and other actors to understand the perceptions and judgments that stakeholders make about the actions undertaken and who is responsible for those actions. Misalignments between the two are also important to consider.

The normative questions need to be addressed conceptually and cover several topics. First, who or what can be the bearer of moral responsibility: can machines be responsible agents, in what sense, and under what circumstances? Second, how should responsibility be allocated between the many actors involved, like the developers, data providers, users, and potentially the algorithm or robot itself? Third, how is the responsibility of humans affected by the fact that the actions of the machine can be unpredictable (e.g., because of machine learning)? And finally, how could machines be trained to act ethically—and by whose conception of morality?

The descriptive questions require empirical investigation (e.g., experiments) to establish how people understand the allocation and limits of responsibility. Relevant questions include whether people perceive machines as responsible agents, and the factors that influence this perception, such as characteristics of the machine, the context, or the language and framing used (e.g., anthropomorphizing the machine).

Implications for Managers

General managers, managers tasked with digitalization, and CR managers can all benefit from our findings in thinking systematically about CR in a digitalized world. Indeed, when it comes to understanding and managing the interface between CR and digitalization, it is important that there is coordination and integration across different management roles, not silos and fragmentation.

To effectively manage CR today, managers must be digitally literate and understand the ethics-relevant changes brought by digitalization. They need to understand:

How digitalization changes the CR issues the firm needs to address. For example, what are the issues? How do they manifest?

The stakeholders toward whom the firm needs to be responsible. Who/what are they? Which ones are most important?

Who bears responsibility under various scenarios and the limits of the firm’s responsibility.

Deep understanding is required because digitalization brings changes that are not only technical and superficial but that can also fundamentally disrupt business, and hence CR. Moreover, the technologies are developing fast (as witnessed with generative AI), and predicting the future is becoming increasingly difficult. Accordingly, it is crucial that managers have a deep understanding that allows them to judge which developments are significant, foresee what those developments mean for CR management, and build the preparedness and agility to react rapidly when necessary.

Our framework can help managers better navigate CR under digitalization. The question “Responsible for what?” instructs managers to regularly revisit their double materiality assessments while paying attention to the changes brought by digitalization. 122 Something new may emerge on the firm’s materiality matrix, or the position of an existing CR issue may change, as we have discussed. Notably, materiality assessments usually contain a strong stakeholder engagement component, but stakeholders may not immediately grasp the impacts of digitalization and may struggle to anticipate how it affects them, so being attuned to these issues within the firm itself is important. Also, while some issues may not be new per se, they may be new for CR managers—for instance, if topics like CR and AI ethics are compartmentalized in separate silos within the organization. Managers should also explicitly and systematically screen for opportunities for reducing the organization’s footprint, or increasing its handprint, through digital technologies. Whereas a footprint refers to the harmful impacts of a company, a handprint refers to the positive impacts of a company beyond reducing its footprint. Consider, for example, how organizations have found that online meetings eliminate the need for much business travel, cutting both costs and emissions.

The question “Responsible toward whom?” calls on managers to monitor the changing salience of different stakeholders, and to prepare for increased transparency of their activities. The debate on machines as potential stakeholders cannot be ignored, even if the prospect of firms being responsible toward them seems implausible at present.

Finally, the question “Who is responsible?” also comes with strong management implications. Obscured responsibility is problematic if firms cannot be clear about where they may be held responsible. Managers therefore need to follow societal discussions and regulatory developments on the responsibilities of various parties. Given unclear circumstances, it becomes especially important to ensure in negotiations and contracts with suppliers and clients that the boundaries of responsibilities are unambiguous. Equally, managers should confirm that their strategy is not based on trying to avoid responsibilities by hiding behind AI or taking advantage of unclear market roles. Moreover, managers should anticipate legislative changes that attempt to address the responsibility gap, including the possibility of imposing strict legal liability on firms in cases where the responsible parties cannot be readily identified.

Implications for CR Advocates and Policymakers

Policymakers need to stay alert to existing CR issues that become manifest in novel ways or become more intense. As truly new CR issues emerge, policymakers will need to pay attention and may need to update their expertise. The same holds for changes in stakeholder salience and the emergence of new stakeholder groups. Policymakers may, for example, need to adapt information provision to new stakeholders or develop regulations to address new CR issues if firms do not self-regulate. Policymakers will likely be particularly attentive to the challenges of responsibility gaps, where it is uncertain who is responsible. While self-regulatory efforts by firms and business associations will no doubt attempt to establish norms around who assumes responsibility for outcomes under these circumstances, ultimately there may need to be strict legal liability attached to certain parties and prescribed under new legislation. Several regulatory initiatives to address CR-related issues in the digital economy are already underway at various stages. For example, the EU is considering the AI Liability Directive (which includes a new liability regime to cover harms caused by AI systems) and has adopted the Platform Work Directive (which includes classification of employment status for platform work and rules on algorithmic management in the workplace) and the AI Act (which includes safeguards, limitations, and bans on certain uses of AI systems).

Advocates of CR can be expected to direct policymaker attention to CR issues that intensify in impact or are found under new guises, as well as to campaign directly. They are also likely to champion the new solutions to CR issues that digitalization provides. Like policymakers, they are likely to be attentive to changes in the salience of stakeholders, the emergence of new stakeholders, and the responsibility gaps that will emerge as attributing responsibility becomes more opaque, if not impossible.

Conclusion

Speaking about how to improve understanding of CR, Wang and colleagues write that “we need theoretical efforts that either provide precision by being narrowly focused with clearly defined constructs and assumptions . . . or offer an integration of various contingencies and perspectives, providing a comprehensive understanding . . . under one unifying framework.” 123 It is this latter route that we have taken in our paper. We set out to examine how digital transformation in the economy may change the field of CR and whether some of its basic assumptions need to be re-examined.

Mapping the changes against three core questions that capture the basic landscape of the CR field, we found important changes for all three questions. For our first question (“Responsible for what?”), we found that existing CR issues are manifest in novel ways, intensified, alleviated, or solved, and that new CR issues could arise. As to our second question (“Responsible toward whom?”), the salience of existing stakeholder groups is changing, and new stakeholder groups may be emerging. For our third question (“Who is responsible?”), responsibility boundaries are blurring between firms and individuals, as well as among firms, and novel questions are raised on where responsibility resides between humans and machines. These changes have important management implications. Moreover, new CR issues, the possible emergence of new non-human stakeholders, and the allocation of responsibility when machine actors are involved or when human actors and their organizations are no longer clearly defined entities challenge important basic assumptions in the field.

Digital technology is developing rapidly, bringing major transformations in ways of doing business. To remain relevant, the CR field must update accordingly. This extends, as we have shown, to basic assumptions in the field and comes with profound implications for scholars, managers, CR advocates, and policymakers.

Footnotes

Acknowledgements

The authors gratefully acknowledge Dreyfus Sons & Co. Ltd., Banquiers for research support of this project.

Author Biographies

Leena Lankoski is a Senior University Lecturer in Sustainability in Business at Aalto University School of Business and a Visiting Scholar at INSEAD Ethics and Social Responsibility Initiative (ESRI).

N. Craig Smith is the INSEAD Chair in Ethics and Social Responsibility, Director of the INSEAD Ethics and Social Responsibility Initiative (ESRI), and a Visiting Professor at the University of Birmingham.