Abstract

In recent years, attention has turned to the development of evidenced-based learning progressions/trajectories as a means of identifying the likely paths learners might take in developing a deep, well-connected understanding of key aspects of mathematics. However, the extent to which this work influences what happens in mathematics classrooms varies greatly depending on the prevailing relationship between curriculum, pedagogy and assessment. This article will draw on current policy documents and the literature to challenge current assumptions at the national level about what constitutes a learning progression. It will draw briefly on the results of a recently completed, large-scale study on mathematical reasoning in the middle years of schooling to make a case for evidenced-based learning progressions/trajectories as boundary objects in reconnecting and rebalancing the curriculum, pedagogy and assessment relationship to support reform at scale.

Keywords

Learning progressions, learning trajectories, continuums and progress maps are terms that have been used to describe the key steps or pathways learners typically take as they move from naïve to more sophisticated understandings in a particular domain over time (Australian Curriculum, Assessment and Reporting Authority [ACARA], 2019b; Masters & Forster, 1996). However, how these are understood, developed and used varies considerably. This article will briefly review the different ways in which learning progressions/trajectories are understood and used by different communities of practice for different purposes while sharing a common commitment to the importance of identifying and building on what learners already know and what might be within their reach with support from their teachers and peers. It will then explore why the focus of mathematics education reform needs to shift from the classroom to the national arena and make a case for the role of evidenced-based learning progressions/trajectories in supporting reform at scale (Carpenter et al., 2004). To illustrate what this role might entail, the discussion will turn to a description of the Reframing Mathematical Futures II (RMFII) project that developed evidenced-based learning progressions for algebraic, geometrical and statistical reasoning, before making a case to consider the outputs of the RMFII research as boundary objects between different communities of practice to effect reform at scale. The aim is to engage those concerned with national curriculum development, those concerned with the development of assessment frameworks at the national level, and those concerned with improving the quality of mathematics teaching and learning in classrooms, in a discussion about what is important in progressing the mathematics learning of all students.

Understanding learning progressions/trajectories

However learning progressions/trajectories are understood, developed and used, they share a common theoretical premise that has its roots in both cognitive science and social constructivism (e.g. Clements & Sarama, 2004; Confrey, 2019). This shared view recognises learning ‘as both a process of active individual construction and a process of enculturation into the mathematical practices of wider society’ (Cobb, 1994, p. 13). Further, what is learnt and the extent to which it is learnt is a function of the learner’s prior knowledge, past experience of learning, their beliefs and attitudes, and the sociocultural environment in which the opportunity to learn is situated. But common to most, if not all, instantiations of learning progressions/trajectories, is the view that learning takes place over time and that teaching involves understanding where learners are in their learning journey and providing challenging but achievable learning experiences likely to progress that journey (Masters, 2013, 2017; Siemon, 2019).

The term learning progressions/trajectories (LP/Ts) is being used here to recognise that while learning progressions and learning trajectories are often used interchangeably in the mathematics education literature, this is not the case in other fields such as science education and education policy. In what follows, the terms will be used as they are used by the authors whose work is being cited; otherwise, the reference will be to LP/Ts.

LP/Ts in the public domain

Public interest in learning progressions has been significantly heightened in recent years, as seen, for example, in the report prepared by Gonski and colleagues for the Department of Education – Through growth to achievement: Report of the Review to Achieve Educational Excellence in Australian Schools (DET, 2018) – which defines a learning progression as outlining ‘… a sequence of observable and increasingly demanding levels of knowledge, skills and understanding. All levels are defined by criteria that relate to what a child knows, understands, and can do at the time of assessment, independent of year level or age’ (p. 129).

While the shared theoretical premise is evident in this statement, the report does not specify how the learning progressions will be developed beyond acknowledging that further work is needed in areas other than literacy and numeracy, and a reference to ‘drawing on the expertise of the national, state, and territory curriculum authorities and other experts in the field’ (DET, 2018, p. 32). However, the subsequent publication of the learning progressions for literacy and numeracy (ACARA, 2020), and the report’s recommendation that the Australian Curriculum should be progressively revised to ‘present the learning areas and general capabilities as learning progressions’ (DET, 2018, p. xiii), suggest that learning progressions are seen more as an alternative way of presenting the existing curriculum than as an evidenced-based means of questioning current assumptions about what knowledge, skills and understandings should be taught and when they should be taught. This ‘learning progressions as curriculum’ perspective can be seen in the report’s recommendation to develop ‘a new online and on demand student learning assessment tool based on the Australian Curriculum learning progressions’ (p. 66), the purpose of which is to ‘diagnose a student’s current level of knowledge, skill and understanding, to identify the next steps in learning to achieve the next stage in growth, and to track student progress over time’ (p. x).

Not surprisingly, the ‘learning progressions as curriculum’ view is evident in the definition of the National Numeracy Learning Progressions (NLNPs) (ACARA, 2019b) that refers to the ‘learning pathway(s) along which students typically progress in particular aspects of the curriculum regardless of age or year level’ (p. 7). It is also evident in the mapping processes used to develop the final versions of the NLNPs. Five separate mappings were conducted to compare and/or cross-validate the NLNPs in relation to existing curriculum-related learning progressions and assessment items. These included the measures-based scales from the Progressive Achievement Tests for Reading and Mathematics (Australian Council for Education Research [ACER], n.d.) and the National Assessment Program in Literacy and Numeracy (NAPLAN, https://www.nap.edu.au), as well as ‘jurisdictional assessment resources’ (ACARA, 2019a, p. 3), meaning state- or territory-based literacy and/or numeracy assessments. While these mappings provide an empirical basis for the literacy and numeracy progressions, the models of student learning they generate are limited by what has been assessed and the mode of assessment, that is, curriculum-related knowledge, skills and inferred understandings assessed by multiple-choice test items in an online learning environment. This practice assumes that the curriculum as assessed is a fair and reasonable model of the typical paths all students take as they learn in Australian schools over time. This assumption is challenged by declining rates of participation in the more advanced mathematics and science subjects at the senior levels of schooling (Wienk, 2020), the continuing decline in Australian students’ performance on international assessments of mathematical literacy (Thomson et al., 2019, 2020), and evidence to suggest a growing divide between lower and higher achieving students in mathematics (Masters, 2013; Thomson, 2021).

Learning progressions developed in this way cannot claim to be evidenced-based models of students’ thinking and learning derived from ‘theoretically rich design studies’ (Confrey, 2019, p. 22) of students’ learning over time, which is a key characteristic of LP/Ts represented in the research literature (e.g. Baroody et al., 2004; Lehrer et al., 2014).

LP/Ts in the education research literature

Interest in research-based learning progressions evolved in response to calls to ‘build equitable and coherent systems of assessment’ (Shepard et al., 2018, p. 22) that are based on, and consistent with, research-based models of students’ thinking and learning in particular domains over time (Masters, 2013). This reflects the need to align assessment regimes with reform-oriented curriculum and pedagogy at the national level (i.e. reform at scale), a focus that has been shared in both the mathematics and science education research literature (e.g. Black et al., 2011; Duncan & Hmelo-Silver, 2009).

From a science education perspective, Smith et al. (2006) defined learning progressions as ‘descriptions of successively more sophisticated ways of reasoning within a content domain’ (p. 1, emphasis added). Corcoran et al. (2009) described learning progressions in science as ‘empirically grounded and testable hypotheses about how students’ understanding of, and ability to use, core scientific concepts and explanations and related scientific practices grow … over time, with appropriate instruction’ (p. 8, emphases added). The notion of what is meant by ‘core’ is elaborated by Lehrer and Schauble (2015), who describe learning progressions as ‘accounts of the growth of organising domain concepts or “big ideas” … often coordinated with forms of practice that are characteristic of the disciplines’ (p. 433, emphases added). That is, tasks or activities through which students can demonstrate their understanding of the big ideas and scientific reasoning practices.

From a mathematics education perspective, the learning trajectory notion has its origins in Simon’s (1995) conceptualisation and use of a hypothetical learning trajectory (HLT) which he explored in the context of a constructivist teaching experiment involving primary pre-service teachers. In his view, an HLT involves ‘consideration of the learning goal, the learning activities, and the thinking and learning in which students might engage’ (Simon, 1995, p. 133). In this case, the learning goal was to understand the multiplicative relationships involved in determining the area of a rectangle, and while the anticipated model of students’ thinking and learning was informed by research, it was nonetheless hypothetical, to be reviewed and modified as a result of reflection on the students’ thinking and learning as they engaged with the teaching activities. Confrey et al. (2014a) have described this notion of an HLT as a ‘tool for individual teachers to make sense of their students’ day-to-day progress and to frame their moment-to-moment and day-to-day instructional planning’ (p. xiii). Since then, the learning trajectory notion has shifted to a more public space where students’ thinking and learning is based directly on empirical evidence and the purpose is to support the development of an ordered set of instructional materials ‘designed to engender those mental processes or actions hypothesised to move children through a developmental progression of levels of thinking’ (Clements & Sarama, 2004, p. 83) towards the achievement of specific goals (e.g. the Building Blocks programme, Clements & Sarama, 2008). Here, a learning trajectory comprises a (pre-determined) learning goal, a developmental progression (i.e. a research-based model of students’ thinking in relation to the goal) and an ordered set of instructional activities. This progression is based on reviews of the literature and clinical interviews and the hypothetical elements are the tasks and task sequence, which are conjectured to be the most likely to support learning with understanding.

While this work has been shown to be effective at scale when supported by professional development and implemented with fidelity, it has also challenged previously held views about how young children’s mathematical thinking develops over time (Clements & Sarama, 2008, 2014; Sarama & Clements, 2019), suggesting that evidenced-based LP/Ts can do more than inform the development of instructional materials; they can also inform what the learning goals (i.e. standards) should be. For example, Confrey and her colleagues’ work on learning trajectories for rational number reasoning across grades K to 8 (e.g. Confrey & Maloney, 2010; Confrey et al., 2014b) has challenged not only what was previously known about the teaching and learning of fractions, but established equipartitioning as an essential foundation for multiplicative reasoning more generally.

From the perspective of the RMFII research team, a learning trajectory is an integrated, empirically based learning and assessment framework that is focused on the development of one or more big ideas in mathematics and the links between them (Siemon et al., 2006, 2017, 2018; Siemon, 2019). Consistent with more familiar definitions of learning trajectories (e.g. Clements & Sarama, 2004; Confrey & Maloney, 2010), the framework incorporates an evidence-based learning progression that serves as a model of how students’ thinking in a particular domain evolves over time, validated diagnostic assessment tools that identify where students are in relation to the learning progression, and targeted teaching advice to help teachers progress students’ learning from one level/zone of the progression to the next.

While these instantiations of LP/Ts differ in scope and focus, like the ones in science education, they clearly share many of the same components. That is: a learning goal that includes the types of reasoning involved, such as measurement in the early years (e.g. Clements & Sarama, 2008), and big ideas in mathematics over many years (e.g. Confrey & Maloney, 2010; Siemon et al., 2019); evidenced-based developmental progressions indicating key levels of student understanding and possible obstacles (e.g. Confrey et al., 2014b); progression-aligned teaching activities from the highly specified in the US (e.g. Clements & Sarama, 2008; Confrey et al., 2014b, 2018) to indicative teaching advice in Australia (e.g. Siemon et al., 2006; Siemon et al., 2018); and assessment tools that can be used to identify starting points for teaching and track student progress over time (e.g. Confrey, 2019; Siemon et al., 2019).

The need for reform at scale

Efforts to reform the teaching and learning of mathematics since the mid-1980s have their roots in social constructivist principles and research (e.g. Boaler, 2002; Kilpatrick et al., 2001). Reform practices include recognising and building on students’ prior knowledge and strategies, and a greater focus on understanding, making connections, multiple representations, problem solving, collaboration, discussion and reasoning. However, despite the endeavours of reform-oriented mathematics educators in Australia for over 40 years (e.g. Australian Academy of Science, 2021; Australian Association of Mathematics Teachers [AAMT], 2014; Lovitt & Clarke, 1988; Sullivan, 2011, 2017), international assessments of mathematical literacy consistently suggest that problem solving and mathematical reasoning are areas of difficulty for Australian students (OECD, 2019; Thomson et al., 2019; Thomson et al., 2020). Moreover, participation rates in senior science and mathematics courses are declining and there is a growing gap between low achieving students and their peers, which disproportionally impacts those from disadvantaged and lower socio-economic backgrounds (Thomson, 2021).

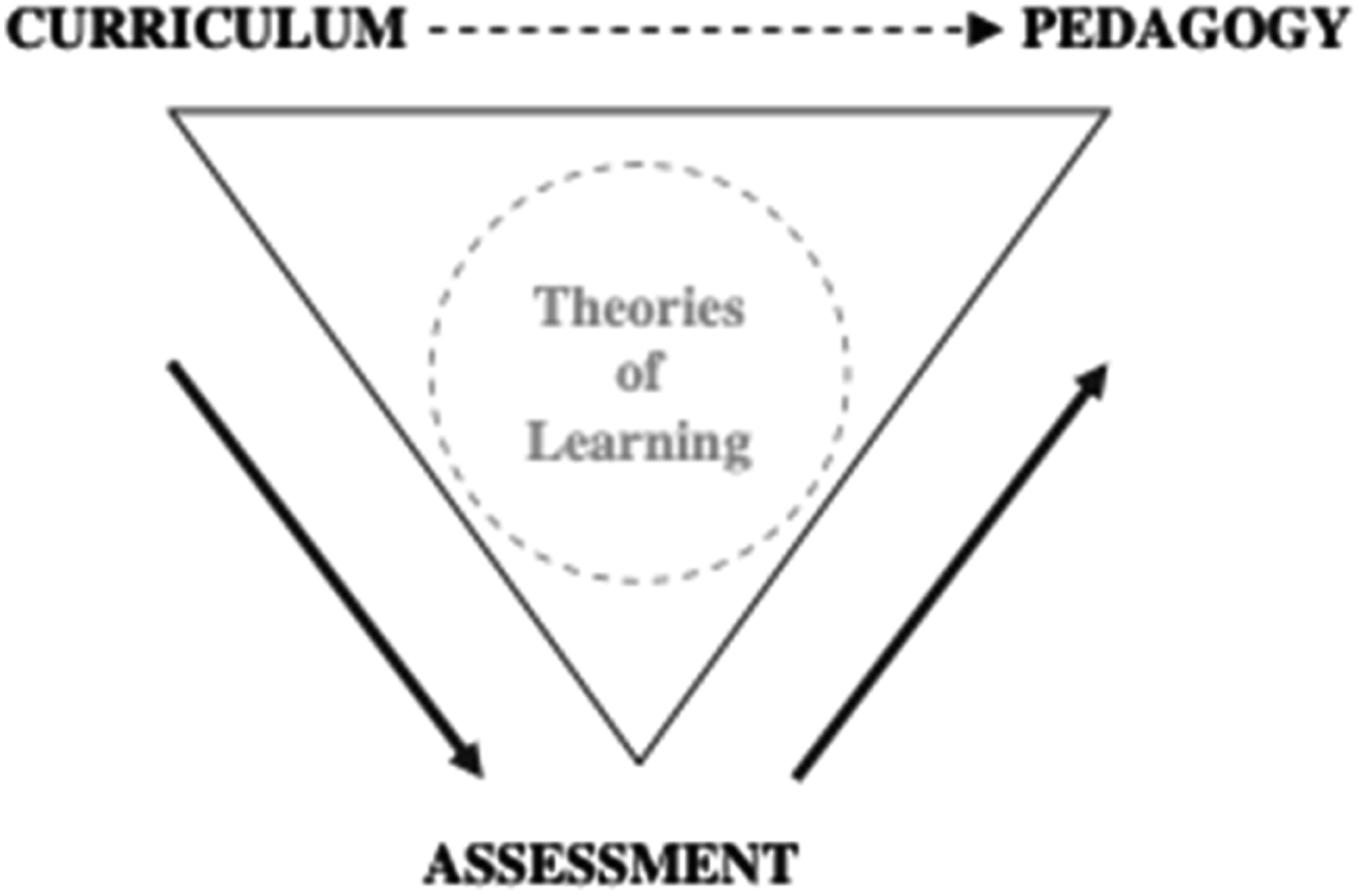

One reason for this is that where the intended/mandated curriculum is described in terms of observable behaviours, assessment tends to value performance over mastery (Klenowski & Wyatt-Smith, 2012; Sullivan, 2011) which, in turn, generates a ‘damaging backwash effect’ (Swan & Burkhardt, 2012, p. 4) on the choices teachers make about content and pedagogy. Black et al. (2011) describe this situation as a breakdown in the interrelationships between curriculum, assessment and pedagogy, which they represented as a ‘vicious triangle’ to make the point that where a curriculum is little more than a list of desired outcomes, little incentive is provided to design pedagogical approaches that value conceptual understanding and assessment does little more than distinguish between ‘got it’ and ‘not got it’.

Figure 1. Black et al.’s (2011) ‘vicious triangle’.

Note. From ‘Road maps for learning: A guide to the navigation of learning trajectories’, by P. Black, M. Wilson, and S. Yao, 2011, Measurement: Interdisciplinary Research & Perspective, 9(2–3), p. 80. DOI: 10.1080/15366367.2011.591654.

This breakdown in the relationship between curriculum, pedagogy and assessment can be seen in the resources developed to support teachers to enact the Australian Curriculum F-10: Mathematics (AC: M) on a day-to-day basis. While these notionally value conceptual understanding and refer to problem solving and reasoning, the dominant pedagogy suggests demonstration and practice rather than discussion, connections and understanding (Shield & Dole, 2013; Siemon et al., 2012; Sullivan, 2011). This breakdown is also evident in system-wide assessments such as NAPLAN that are point-in-time assessments geared to the AC: M which tend to assess observable outcomes rather than the higher order cognitive processes involved in mathematical problem solving and reasoning (e.g. Eacott & Holmes, 2010).

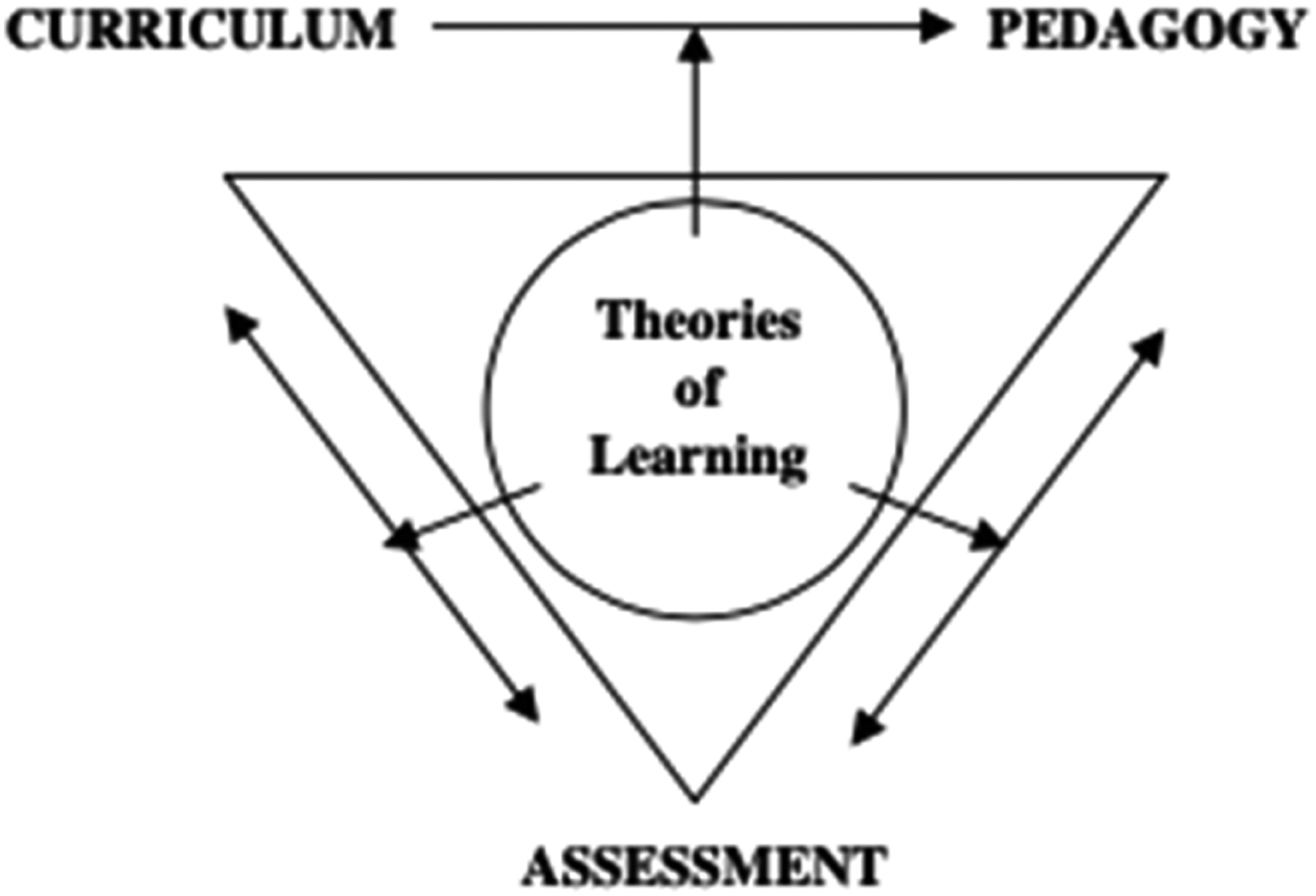

Black et al. (2011) argue that the ‘vicious triangle can be replaced’ (p. 81) by a formative approach to the learning triangle (see Figure 2), to better support and sustain reform at scale by achieving a more coherent relationship between curriculum, assessment, and pedagogy. Like other researchers (e.g. Confrey & Maloney, 2014; Sarama & Clements, 2019; Smith et al., 2006), Black et al. (2011) see a powerful role for research-based LP/Ts in this process.

Figure 2. A formative approach to the learning triangle.

Note. From ‘Road maps for learning: A guide to the navigation of learning trajectories’, by P. Black, M. Wilson, and S. Yao, 2011, Measurement: Interdisciplinary Research & Perspective, 9(2–3), p. 81. DOI: 10.1080/15366367.2011.591654.

To illustrate what the role of research-based LP/Ts in this process might look like, the next section describes a recently completed, large-scale research project that developed LP/Ts for mathematical reasoning (Siemon et al., 2018, 2019).

Building evidenced-based frameworks for mathematical reasoning

The Reframing Mathematical Futures II (RMFII) project worked with industry partners, practitioners and the professional community to build evidence-based learning and assessment frameworks (i.e. LP/Ts) that could function formatively to support the teaching and learning of mathematical reasoning in Years 7–10 (Siemon, 2017). The decision to focus on algebraic, spatial and statistical reasoning across Years 7–10 was ambitious, but felt necessary to provide the sort of evidence and resources needed to support a sustained change in practice, away from low-complexity, procedural exercises, to teaching based on a deeper understanding of the big ideas and the connections between them (Sullivan, 2011).

For the purposes of the RMFII project, mathematical reasoning was defined in terms of: core knowledge needed to recognise, interpret, represent and analyse algebraic, spatial, statistical and probabilistic situations and the relationships/connections between them; an ability to apply that knowledge in unfamiliar situations to solve problems, generate and test conjectures, make and defend generalisations; and a capacity to communicate reasoning and solution strategies in multiple ways (i.e. diagrammatically, symbolically, orally and in writing) (Siemon, 2017).

The project used a design-based research approach (e.g. Barab & Squire, 2004; Cobb et al., 2003) involving iterative cycles of design, enactment, analysis and redesign from late 2014 to early 2018. In the first phase of the project, hypothetical learning trajectories for algebraic, geometrical and statistical reasoning were derived from the literature and used to inform the design of rich assessment tasks comprising multiple items. The tasks were trialled and refined before being tested more widely in the second phase. More than 200 items were tested and analysed using Rasch analysis (Bond & Fox, 2015). The results were used to identify the big ideas in each area and to refine the hypothetical learning trajectories. Subsequent trials and analyses were used in the second phase to validate the progressions, identify developmental zones and inform the development of teaching advice to support teachers in progressing student learning from one zone to the next. A range of validated assessment tools that identify where learners are in terms of the progressions and monitor their progress over time were also finalised during this phase (see Siemon et al., 2018 and 2019). Key project outcomes are briefly described and illustrated below to support the later discussion of the potential role of LP/Ts as boundary objects (Star & Griesemer, 1989).

Identifying the big ideas

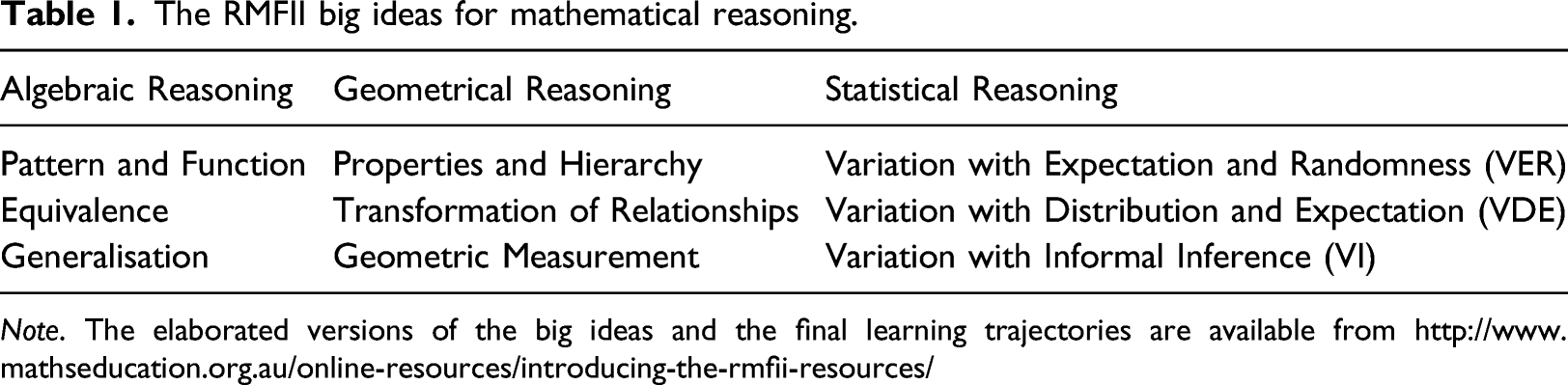

The RMFII big ideas for mathematical reasoning.

Note. The elaborated versions of the big ideas and the final learning trajectories are available from http://www.mathseducation.org.au/online-resources/introducing-the-rmfii-resources/

Developing the tasks

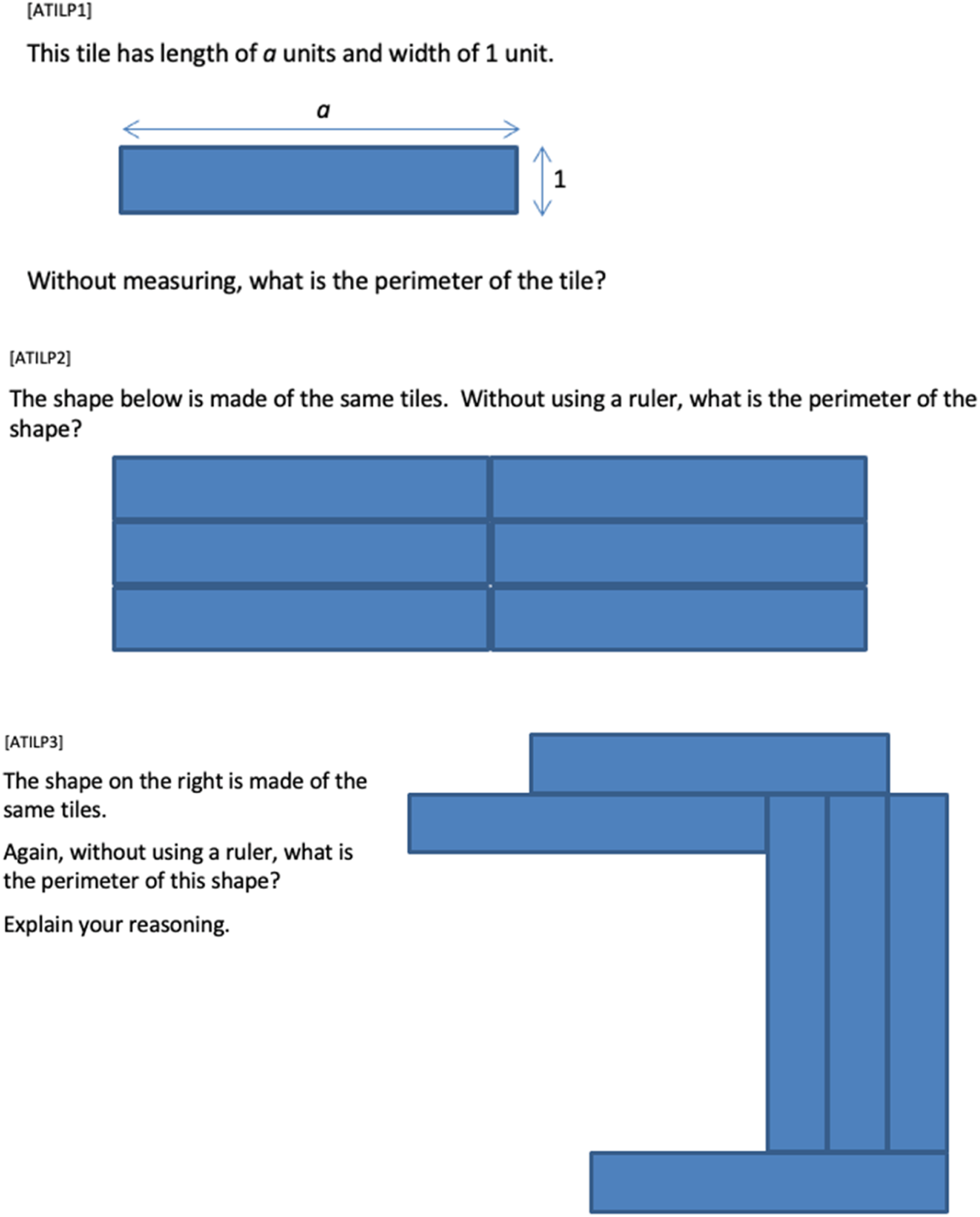

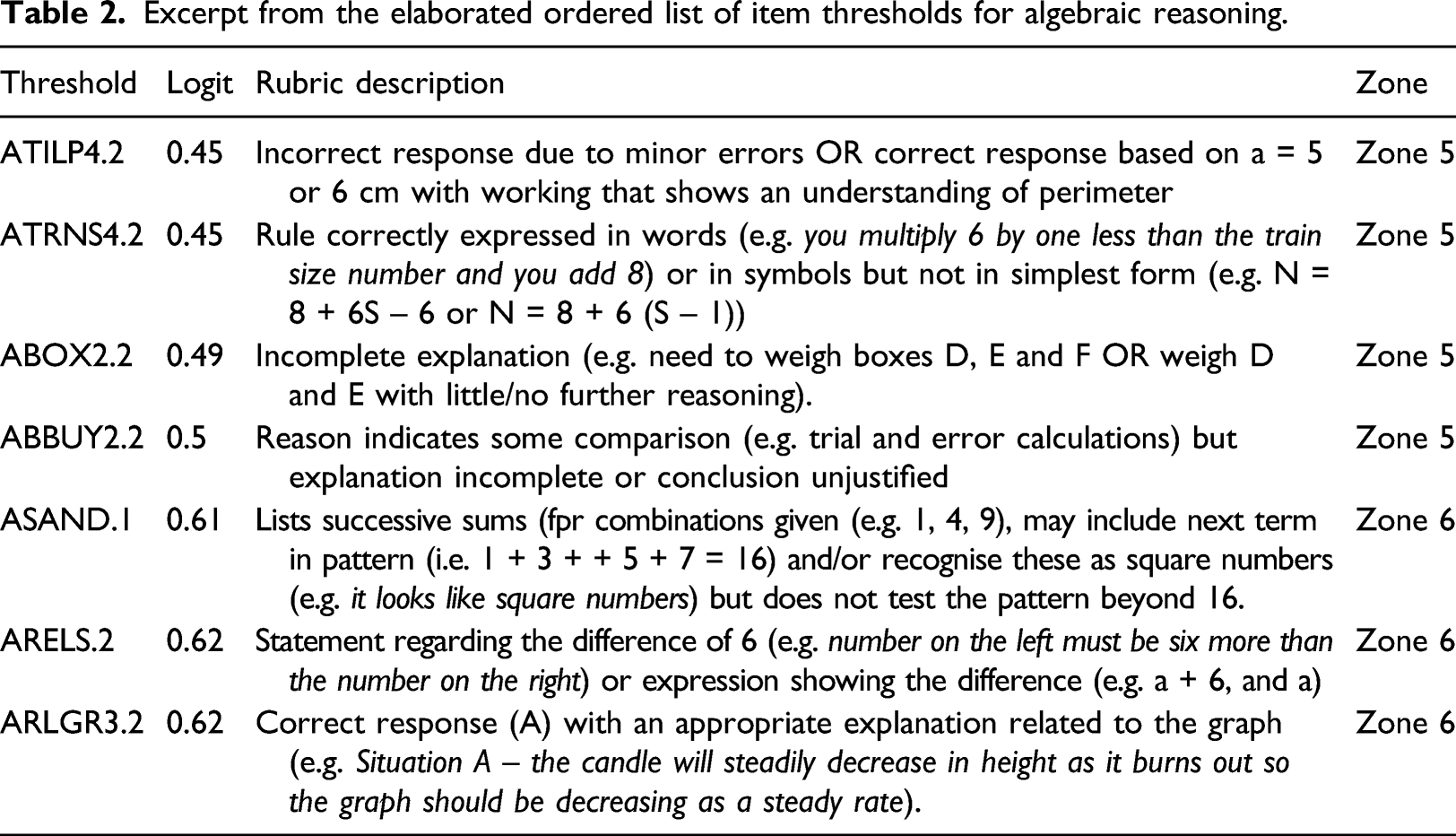

A range of assessment tasks were sourced or created to assess key aspects of the hypothetical learning trajectories and mathematical reasoning as defined above. Where possible, tasks were designed to assess reasoning vertically (i.e. at different levels of complexity within the same hypothetical learning progression) and horizontally (i.e. at similar levels of difficulty across different hypothetical learning progressions). This meant that most tasks had multiple items. Partial credit scoring rubrics (Masters, 1982) were used to evaluate qualitatively different responses (see Figure 3).

Figure 3. Three items and their respective scoring rubrics from the RMFII Algebra Tiles Task (ATILP).

Note. From Algebra Form A Booklet prepared by L. Day, M. Horne, and M. Stephens, July 2018 for the RMFII project. Available from http://www.mathseducation.org.au/online-resources/algebraic-reasoning/
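The idea of awarding partial credit for qualitatively different responses can be made concrete with a small sketch. The code below assumes the partial credit form of the Rasch model commonly associated with Masters (1982); the function name `pcm_probabilities` and the step difficulty values are hypothetical and are not taken from the RMFII analysis.

```python
import math


def pcm_probabilities(ability, step_difficulties):
    """Return the probability of each score 0..m on one item.

    Assumes a partial credit model: `ability` and each entry of
    `step_difficulties` are in logits, and the item has
    m = len(step_difficulties) scored steps above zero.
    """
    # Running sum of (ability - step difficulty); score 0 has an empty sum.
    cumulative = [0.0]
    for step in step_difficulties:
        cumulative.append(cumulative[-1] + (ability - step))
    weights = [math.exp(value) for value in cumulative]
    total = sum(weights)
    return [weight / total for weight in weights]


# Hypothetical item scored 0, 1 or 2, with illustrative step difficulties.
probabilities = pcm_probabilities(ability=0.5, step_difficulties=[-0.5, 1.0])
for score, probability in enumerate(probabilities):
    print(score, round(probability, 3))
```

In this sketch a student of higher ability is more likely to receive the higher rubric scores, which is the property that allows responses of different quality to be placed on a single scale.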

In general, tasks were developed around a meaningful context (e.g. packaging a gift to be sent overseas, finding the best route in an emergency or making sense of statistical claims made in relation to a school-wide survey), but tasks were also set in decontextualized settings (e.g. explaining why a given relationship is true or false). An elaboration of the RMFII tasks and how they were used can be found in Siemon et al. (2019).

Finalising the learning progressions, developing the teaching advice

Excerpt from the elaborated ordered list of item thresholds for algebraic reasoning.

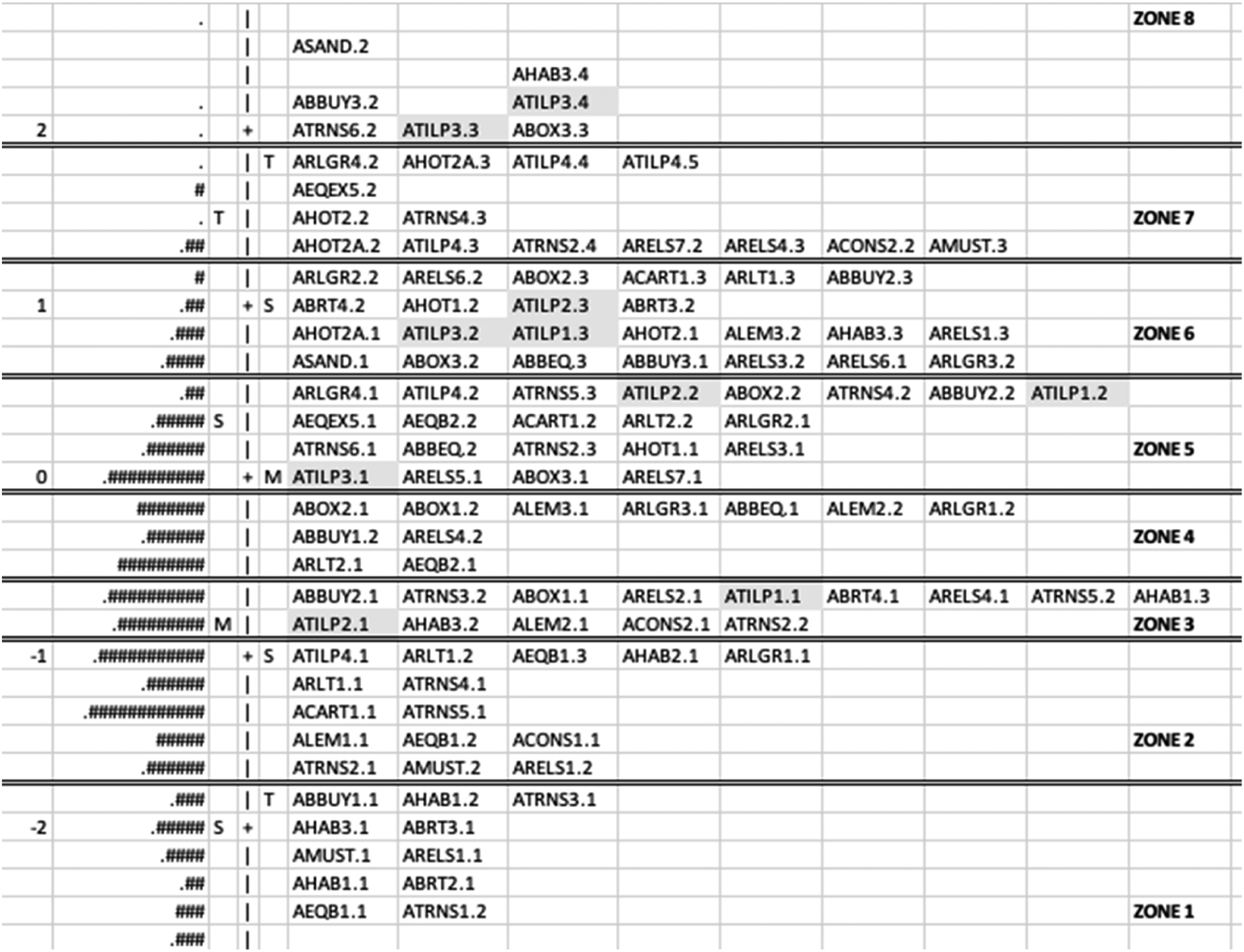

Once the three mathematical reasoning scales were stable, the research team met to interrogate the final ordered list of item thresholds, to identify what they implied about what students were able to do at particular points on each scale, and where they needed to go to next. This analysis was afforded by the Wright maps produced by the Winsteps software that locates persons (from lower to higher achieving), and item thresholds (from easier to harder) on the same scale using the same logit unit (interval level units that are the Rasch-derived estimates of ability and difficulty). The assumption underpinning this is that where persons appear at the same logit value as an item, they have a 50% chance of achieving the score allocated to that item. Figure 4 shows the final Wright map for the algebraic reasoning scale. The ordered logit values are located on the left of the scale, student achievement relative to the scale is indicated by #s (where each # represents 7 students), and the ordered item thresholds are shown on the right.

Figure 4. The final version of the Wright map for the algebraic reasoning scale.

Note. Each # represents seven students. Some items and students at the very top and bottom of the scale have been omitted to save space. The highlighted thresholds are for Item ATILP (presented in Figure 3).
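The 50% interpretation of a shared logit value follows from the form of the Rasch model. The sketch below assumes the simplest (dichotomous) case, in which an item has a single difficulty; the function name `rasch_probability` and the logit values are illustrative only, and items scored with partial credit are located on the scale by thresholds rather than by one difficulty value.

```python
import math


def rasch_probability(ability, difficulty):
    """Probability of success under the dichotomous Rasch model.

    Both arguments are in logits; only their difference matters.
    """
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))


# A person located at the same logit value as an item has an even chance.
print(rasch_probability(0.5, 0.5))            # 0.5
# A person one logit above the item succeeds roughly 73% of the time.
print(round(rasch_probability(1.5, 0.5), 2))  # 0.73
```

Because the probability depends only on the distance between person and item, reading across a Wright map indicates which item thresholds are within, just beyond, or well beyond a student's current reach.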

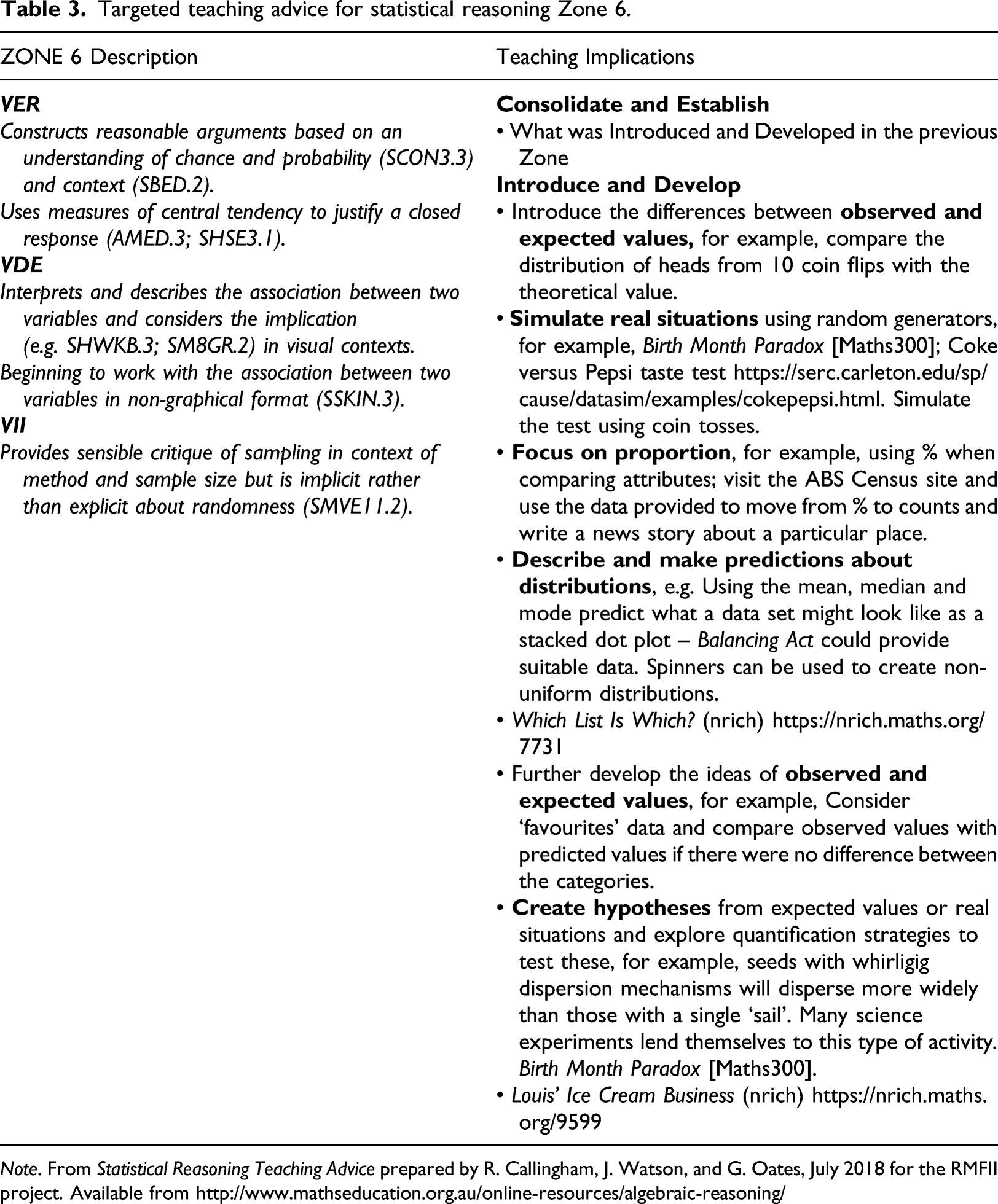

Targeted teaching advice for statistical reasoning Zone 6.

Note. From Statistical Reasoning Teaching Advice prepared by R. Callingham, J. Watson, and G. Oates, July 2018 for the RMFII project. Available from http://www.mathseducation.org.au/online-resources/algebraic-reasoning/

While the RMFII outcomes go some way to addressing the imbalance between curriculum, assessment, and pedagogy described by Black et al. (2011), they sit in a context where curriculum and assessment are developed at the national level using processes that require balancing the views of many stakeholder groups (e.g. Gleeson et al., 2020; Stephens, 2014). While all groups share a mutual interest in improving mathematics education, they can have very different views about how this might be achieved (e.g. Baker & McPhee, 2021). As a result, it is unrealistic to expect particular evidenced-based LP/Ts to be taken up directly. However, it does seem reasonable to suggest that the task of bringing about a more coherent relationship between curriculum, assessment, and pedagogy to support reform at scale could be advanced by considering the products of the LP/Ts as boundary objects (Star & Griesemer, 1989). That is, as objects and processes that can ‘foster co-operation and progress among many of the groups by helping to maintain a focus on the development of children’s mathematical reasoning and skills’ (Confrey & Maloney, 2014, p. 131).

Boundary objects: a means of supporting reform at scale

Several useful concepts have emerged from the need to find shared forms of representation and communication across different but intersecting communities of practice. The notion of boundary objects was proposed by Star and Griesemer (1989) as a means of addressing the question: ‘how do heterogeneity and cooperation coexist?’ (p. 414). They describe boundary objects as abstract or concrete objects that ‘inhabit several intersecting social worlds … They have different meanings in different social worlds, but their structure is common enough to more than one world to make them recognizable’ (p. 393), and thereby available to each as a means of translating and coordinating their respective activities in relation to a joint enterprise. Importantly, they make the point that ‘consensus is not necessary for cooperation nor for the successful conduct of work’ (p. 388) across the different but intersecting communities of practice.

Applying this notion, Lehrer et al. (2014) suggested considering a learning progression as a ‘trading zone in which different realms of educational practice intertwine, much as a cable is constructed’ (p. 54). In this case, the realms comprised learning researchers, assessment specialists and teachers, and the ‘most prominent boundary objects were the construct maps’ (p. 54), although they acknowledge that the assessment items, scoring rubrics and instructional materials also played a part in coordinating the activity of the different realms of professional practice and contributing to the refinement of the learning progression.

Confrey and Maloney (2014) suggested that learning trajectories, representations of learning trajectories and standards be collectively considered as boundary objects that ‘can be shared among an even broader set of communities’ (p. 131). They concluded that learning trajectories provide a ‘new tool for implementing the standards in classrooms, and for discussing, critiquing, adapting, and eventually reviewing them’ (p. 155). But they were careful to point out some important qualifiers mentioned by Star (2010), namely, that for some communities of practice there is an advantage in imprecision in the description of boundary objects, while for others, there will be a need for more detail to ensure they fulfil their function to ‘foster communication and cooperation among the different who have a vested interest in mathematics education’ (Confrey & Maloney, 2014, p. 131).

Boundary objects at the interface of three communities of practice

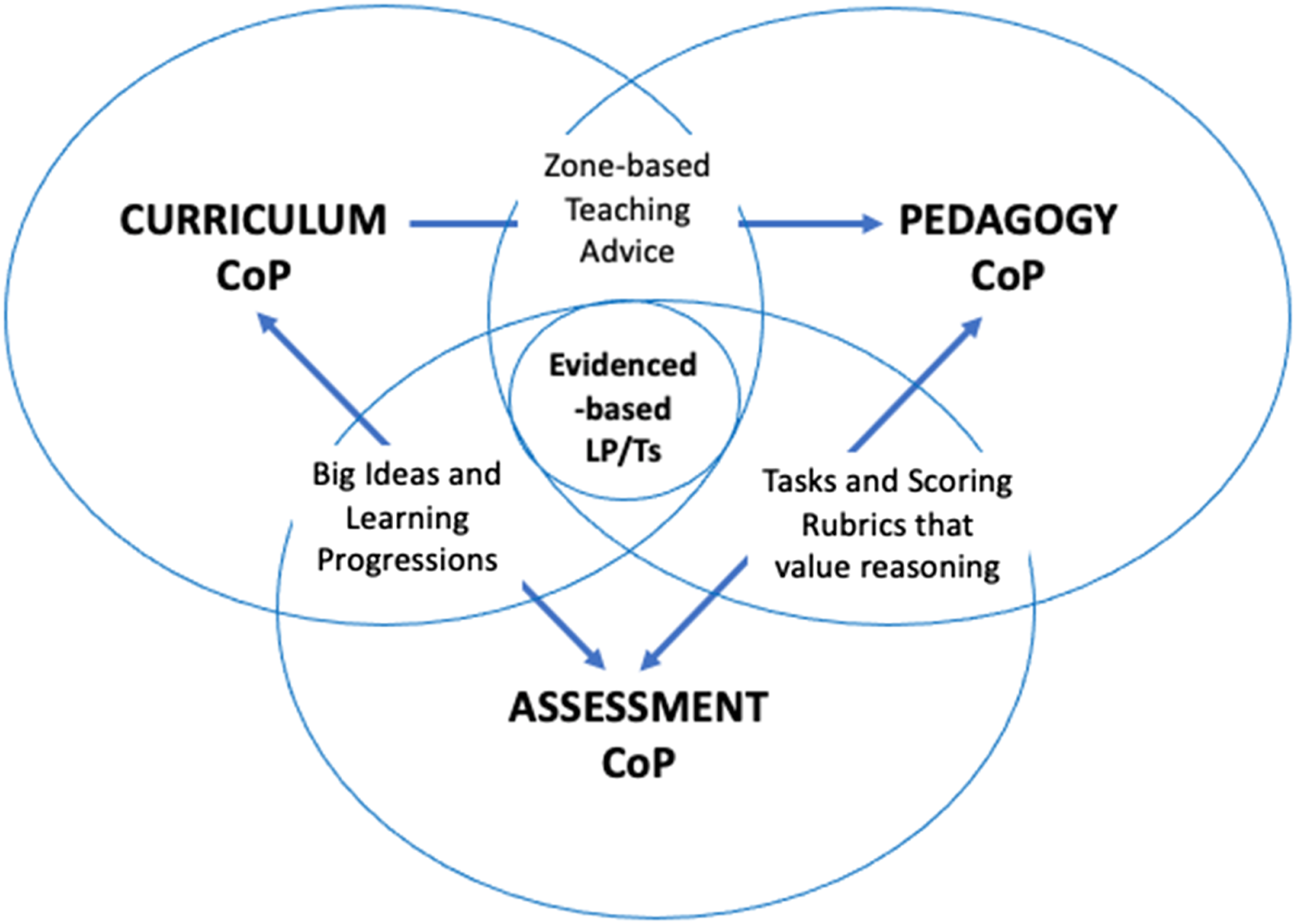

To consider how the products of evidenced-based LP/Ts might act as boundary objects, Black et al.’s (2011) learning triangle (see Figure 5) has been adapted to place the evidenced-based LP/Ts for mathematical reasoning at the centre (i.e. as a trading zone) to emphasise that the elements of the LP/Ts are interwoven, and that progressing students’ mathematical reasoning is central to the work of the three communities of practice concerned (i.e. curriculum, assessment and pedagogy). Although the RMFII outcomes are interdependent co-constructions (i.e. one cannot exist without the others), they are separated in Figure 5 for the purpose of illustrating where they are most likely to be effective as boundary objects to influence reform at scale (i.e. at the national level). Together and/or separately they may serve as boundary objects at different interfaces, keeping in mind Star and Griesemer’s (1989) observation that boundary objects are ‘plastic enough to adapt to local needs and the constraints of the several parties employing them, yet robust enough to maintain a common identity across sites. They are weakly structured in common use and become strongly structured in individual site use’ (p. 393).

Figure 5. A representation of LP/T research products as boundary objects between different communities of practice.

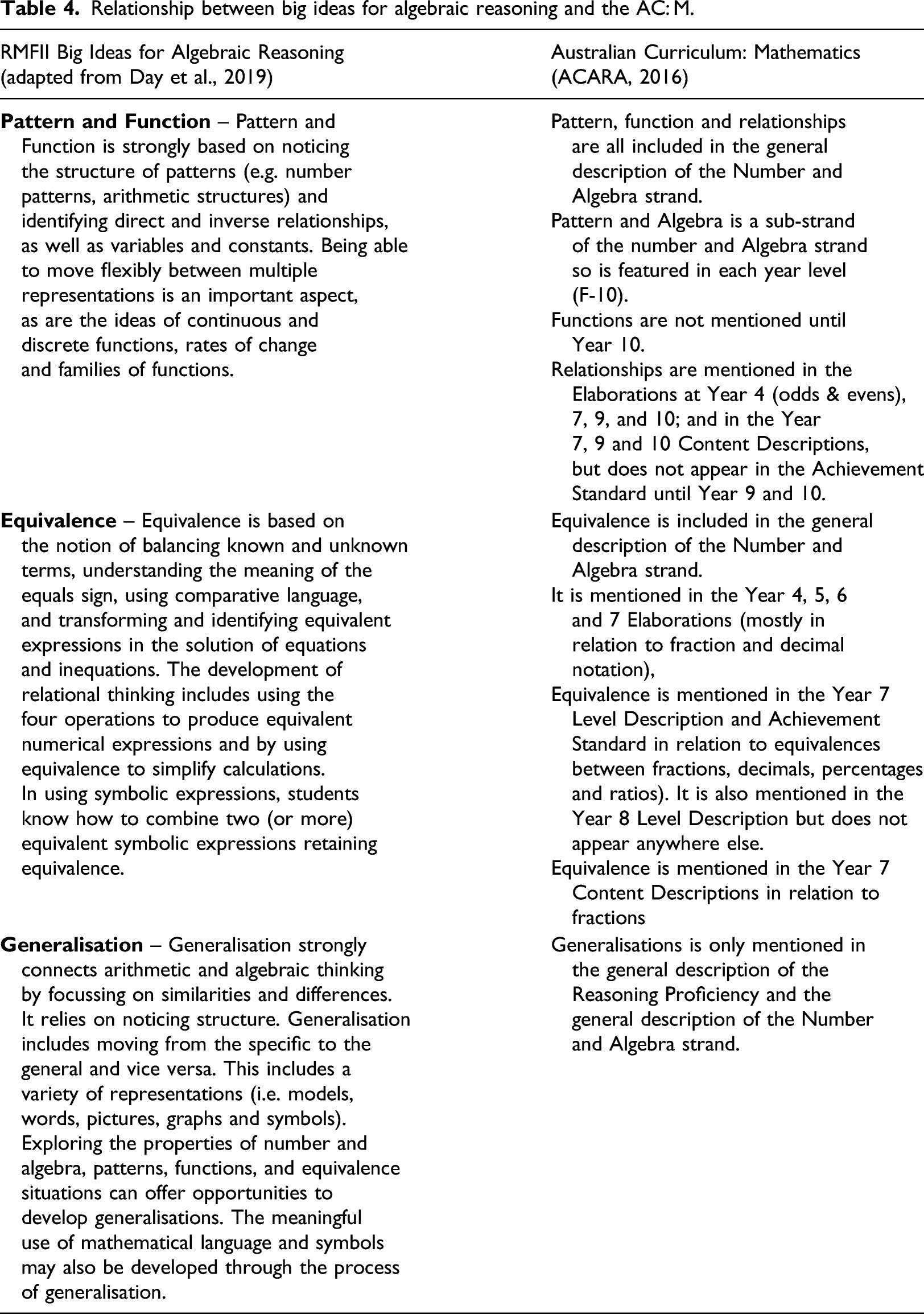

(i) The big ideas and evidenced-based learning progressions produced by the RMFII project are proposed as boundary objects at the interface of the curriculum and assessment communities of practice (Figure 5), as they provide an empirical basis for suggesting what mathematics is important and how it is likely to develop over time. This has implications for those involved with the review of the AC: M, but it is also of interest to those responsible for designing assessment frameworks such as the National Numeracy Learning Progression (ACARA, 2020), particularly where the focus of that assessment is to identify starting points for teaching (Masters, 2013; Wiliam, 2011, 2013). Big ideas and their elaboration over time in the form of evidenced-based learning progressions contribute to reform at scale by providing clear ‘lines of sight’ through the curriculum and helping teachers better understand how the concepts and skills developed at one level are connected, and how these are connected to those that come before and those that come after (Charles, 2005; Siemon, 2010). Highly effective teachers understand these connections and support their students to develop a deeper understanding of mathematics than would otherwise be the case (Askew et al., 1997). Further, because evidenced-based learning progressions focus on important mathematical practices as well as content, they can contribute to the development of national assessment frameworks in areas where there are identified gaps such as in problem solving and reasoning (ACARA, 2019a, Table 11).

Relationship between big ideas for algebraic reasoning and the AC: M.

To the extent that the big ideas and the evidenced-based learning progressions operate at the boundary to establish a ‘communicative connection’ and prompt ‘efforts of translation’ between the two communities of practice, they are an example of coordination as a learning mechanism across boundaries as described by Akkerman and Bakker (2011, p. 144).

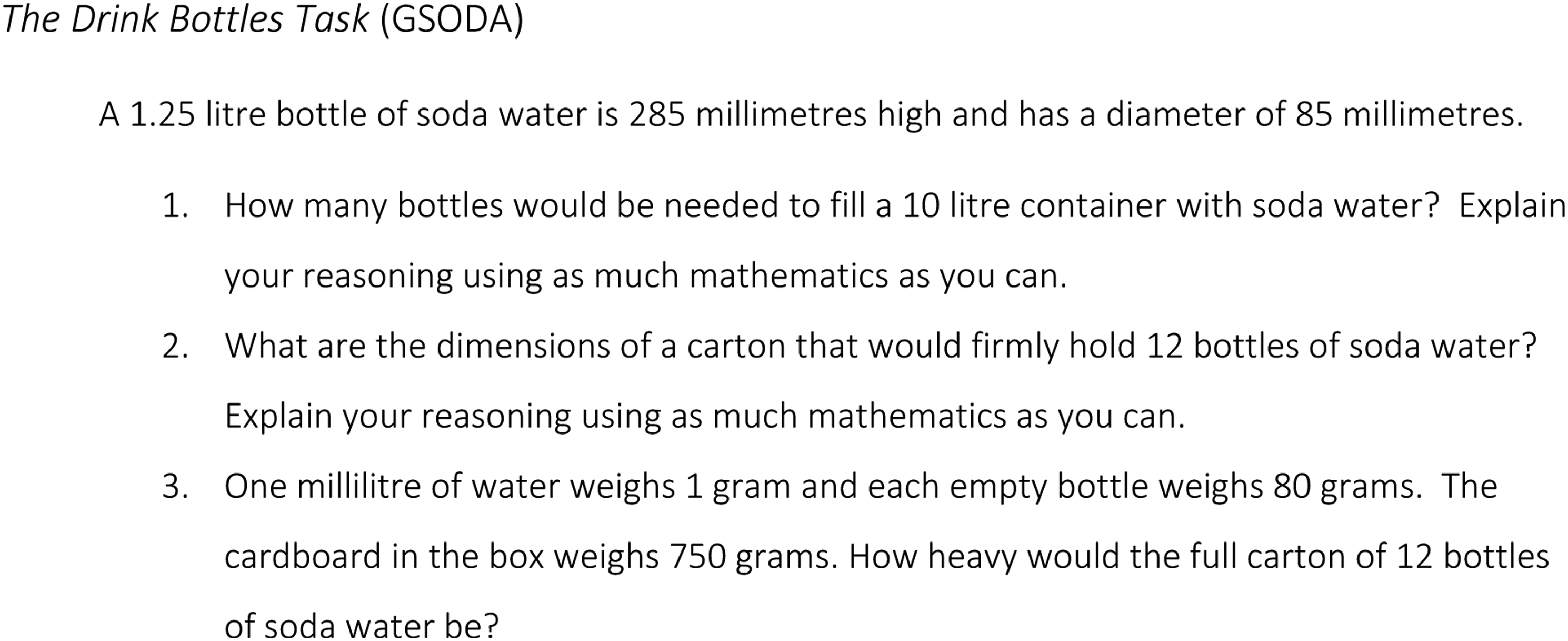

(ii) The assessment tasks and scoring rubrics for each area of mathematical reasoning are proposed as boundary objects between the communities of practice for assessment and pedagogy (Figure 5), as they place a high value on students’ reasoning and provide models of how that reasoning might be elicited and valued, which can then be translated into classroom practice. For example, a number of project schools reported that, as a result of using the RMFII assessment tasks, teachers noticed that students were providing explanations even where these were not specifically required. The assessment tasks and scoring rubrics also have a role in promoting assessment practices at the national level that expose students’ thinking and value the capacity to explain and justify solution strategies. In particular, they can provide models of how different aspects of mathematics can be assessed in the one task. For example, the Algebra Tiles task assesses perimeter and area ideas as well as the use of algebraic notation and the process of generalisation. The geometric reasoning Drink Bottles task (GSODA, Figure 6) integrates number, measurement and geometry ideas and includes two multi-step problems, a type of task that was recognised as a gap in the recent review of the National Numeracy Progression (ACARA, 2019a). The fact that this task was much more difficult than expected confirms this gap, but it also provides a model of the sort of assessment tasks that are needed.

The drink bottles task (GSODA). Note: From Geometrical Reasoning Form A prepared by M. Horne and R. Seah, July 2018 for the RMFII project. Available from http://www.mathseducation.org.au/online-resources/algebraic-reasoning/.

In the RMFII project, the experience of working with other teachers to mark and moderate the assessments not only deepened teachers’ own knowledge for teaching mathematics but also prompted many of the project-school teachers to develop their own scoring rubrics for classroom tasks and assessments that valued reasoning. To the extent that the ‘boundaries between practices are encountered and reconstructed, without necessarily overcoming discontinuities’ (Akkerman & Bakker, 2011, p. 143), this is an example of the identification mechanism for learning at the boundary.

(iii) The zone-based targeted teaching advice for each area of mathematical reasoning and the related, indicative cross-zone teaching activities are proposed as boundary objects between the communities of practice for curriculum and pedagogy (Figure 5). These resources can help those concerned with pedagogical practices translate year-level content descriptors and proficiency descriptions into reform-oriented pedagogical practices that value conceptual understanding, discourse, collaboration, problem solving and mathematical reasoning. For those concerned with curriculum development, the targeted teaching advice and cross-zone activities (e.g. see Table 3) can inform curriculum review processes by pointing to what is important at a level of detail consistent with the content descriptors and elaborations. To the extent that this occurs, it is an example of the third and fourth learning mechanisms reported by Akkerman and Bakker (2011) to explain learning at the boundary, that is, reflection and transformation.

Given that the teaching advice was derived from the evidenced-based learning progressions, the progressions can also play a role between these two communities of practice by providing deeper insight into, and prompting conversations around, ‘lines of sight’ through the curriculum. The resources are far too detailed to consider here, but they can be accessed at: http://www.mathseducation.org.au/online-resources/introducing-the-rmfii-resources/.

Conclusion

This article drew on the outcomes of the RMFII research project to challenge current assumptions at the national level about what constitutes a learning progression. It also made a case for considering the products of evidenced-based learning progressions/trajectories (LP/Ts) as boundary objects in reconnecting and rebalancing the curriculum, assessment and pedagogy relationship to support reform at scale. While there is more work to be done in this regard, there is also more work to be done in theorising the nature of the ongoing relationships with the professional community with whom this work was conducted. As a result of partnerships with AAMT and State and Territory education authorities, the RMFII materials are in the public domain, but it is the processes around these materials that teachers, school leaders and policy makers engage in that will determine the extent to which they impact teachers’ everyday decision making and students’ mathematics learning. Coming to know and understand the big ideas that underpin each of the three reasoning areas can help teachers make connections to other areas of mathematics. The big ideas also provide an organising frame to help teachers make decisions about what mathematics is important and how best to spend their time and the time of their students in mathematics classes. And, by bringing teachers together in collaborative groups to mark and moderate, the assessment forms bring teachers face-to-face with evidence of students’ learning and reasoning to provide a strong basis for school-based professional learning.

At the present time it is not clear what impact, if any, evidenced-based learning progressions like the ones developed by the RMFII project will have on the final version of the AC: M, but there are some promising signs in the National Numeracy Learning Progression V3 (ACARA, 2020) that conceptual understanding and reasoning are recognised and valued.

Acknowledgements

The Reframing Mathematical Futures II (RMFII) Project 2014-18 was funded by the Australian Mathematics and Science Partnership Programme scheme, Australian Government, Canberra. The views expressed here are those of the author and do not necessarily reflect the views of the funding body. I would like to acknowledge the members of the RMFII project team, Rosemary Callingham, Lorraine Day, Marj Horne, Greg Oates, Rebecca Seah, Max Stephens, Jane Watson and Bruce White, for their contributions to the work on mathematical reasoning. I would also like to acknowledge the anonymous reviewers for their very helpful feedback on an earlier version of this paper.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.