Abstract

This article reports on ways in which one Australian independent school seeks to develop and sustain best practice and academic integrity in its programs through a system of ongoing program evaluation, involving a systematic, cyclical appraisal of the school’s suite of six faculties. A number of different evaluation methods have been and continue to be used, each developed to best suit the particular program under evaluation. In order to gain an understanding of the effectiveness of this process, we conducted a study into participants’ perceptions of the strengths and weaknesses of the four program evaluations undertaken between 2009 and 2011. Drawing on documentary analysis of the evaluation reports and analysis of questionnaire data from the study participants, a number of findings were generated. These findings are provided and discussed, together with suggestions about ways in which the conceptualisation and conduct of school program evaluations might be enhanced.

Introduction

The process of systematic program evaluation through formal strategic planning cycles has been a long-established tradition in many schools, at both the school and the system levels (Barber, Chijioke, & Mourshed, 2010). Where there is a purposeful, systematic approach to the management of quality in academic programs, a solid profile of ongoing sustainability and quality has been shown to emerge. For example, through providing a focus on learning and teaching, an effective evaluation process can assist in developing strategies, actions and measurable targets that, in turn, can contribute to new strategic planning cycles. Further, the process can foster the notion of best practice in learning and teaching as a common goal for all members of staff through, for instance, the provision of meaningful feedback to teachers to improve their teaching practice. The literature shows that the provision of such feedback to teachers can have a significant impact on student learning (see, e.g. Hattie, 2009; Leigh, 2010).

Designing and conducting effective school program evaluations has, however, been shown to be problematic. In the case of the denominational school in which this study was located, which functions as an independent entity under the jurisdiction of a School Board, a considerable degree of autonomy exists at the school level. As such, it is not constrained by some of the factors impeding program evaluations in heavily centralised schools. Nevertheless, there are a number of external and internal factors that impact upon, and need to be considered in, any approach to evaluating school program effectiveness. The complex interplay among these factors also needs to be effectively managed (Wikeley, Stoll, Murillo, & De Jong, 2005). Although contextualised in different ways, key factors include community engagement, leadership, management policies and practices, and the school context and culture (McDavid & Hawthorn, 2006).

In the case of the latter, for example, it has been convincingly demonstrated that strong school cultures enhance student performance (see, e.g. Fullan, 2001; Hoy, Tarter, & Hoy, 2006; MacNeil, Prater, & Busch, 2009) and that improvement initiatives in learning and teaching are ineluctably mediated through the climate and culture of the school (Hallinger & Heck, 2011). Lakomski (2001, p. 68) makes the point that healthy organisational cultures are able to embrace improvement processes and change to extend the organisation ‘beyond its currently held understandings of itself and its ways of dealing both with its internal and external reality’. In schools, this means promoting and embracing high academic standards, strong and effective leadership and sustained collegiality, all of which generate a climate conducive to student success and achievement (MacNeil et al., 2009). Barber et al. (2010) argue that successful school systems are able to both navigate challenges of context and also use context to their advantage, provided the following four contextual factors are taken into account: the desired pace of change; whether the desired change is ‘non-negotiable’; the degree to which there are winners and losers as a result of the change; and the credibility/stability of system leadership and governance.

None of this can occur without the presence of effective school leadership, which has been shown to be crucial to any organisation that aspires to cultivate a culture conducive to change and new approaches (see, e.g. Holmes, Clement, & Albright, 2013; Robinson, 2010). Fullan (2002) argues that reforms leading to sustained improvement in student outcomes can only be achieved in schools where there are leaders adept at handling complex and rapidly changing environments. Durlak and DuPre (2008) agree, claiming that strong leaders who lead and promote change agency ‘can do much to help orchestrate an innovation through the entire diffusion process from adoption to sustainability’ (p. 338).

In the school under study, the leadership team established and, since 2009, has progressively implemented a strong evidence-based improvement agenda, couched in terms of amelioration of measurable student outcomes. Clear and explicit school-wide targets for improvement, with accompanying timelines, have been set and communicated to all in the school community. One of the ways in which school leaders have attempted to meet targets in learning and teaching has been through the introduction of a systematic and ongoing program evaluation process across the school’s six teaching faculties. A number of different evaluation methods have been – and continue to be – used, each one developed to best suit the particular program under evaluation.

In order to gain an understanding of the effectiveness of this process, we conducted a study into participants’ perceptions of the strengths and weaknesses of the four program evaluations that were undertaken between 2009 and 2011. Drawing on documentary analysis of the evaluation reports and analysis of questionnaire data from the study participants, we generated a number of findings that are presented and discussed in this paper, together with suggestions about ways in which the conceptualisation and conduct of school program evaluations might be enhanced.

Context

The school that provided the context for the study is a non-selective, urban Australian denominational day and boarding girls’ school. Its mission is to be a learning community that welcomes diversity in an educational environment that is shaped by Christian values. Founded 120 years ago, the school has a strong academic charter, supported by a diverse range of sporting, cultural and co-curricular programs. Located across three campuses (Junior School: Kindergarten to Year 4, Middle School: Years 5 to 8 and Senior School: Years 9 to 12), the school’s focus is on providing an academic, liberal education with an emphasis on intellectual rigour, personal responsibility and commitment to others. A wide range of subjects is offered from Kindergarten onwards and there are active programs for extension and academic support in all sections of the school. Also within the school is a School of Performing Arts (SPA), which is a selective entry specialist school preparing students academically while focussing on specific performing arts areas such as music, dance or theatre performance.

Of the 160 staff members, approximately 100 are part of the teaching faculty working directly with the 850 students. A leadership team comprising the heads of the internal mini-schools and the school chaplain is led by the deputy principal, who in turn reports to the school principal. The deputy principal also has carriage of the whole-of-school curriculum team, which meets fortnightly to progress the academic agenda of the school. Other leadership groups include the pastoral teams, faculty teams and a sports administration team.

The program evaluation process

Framework

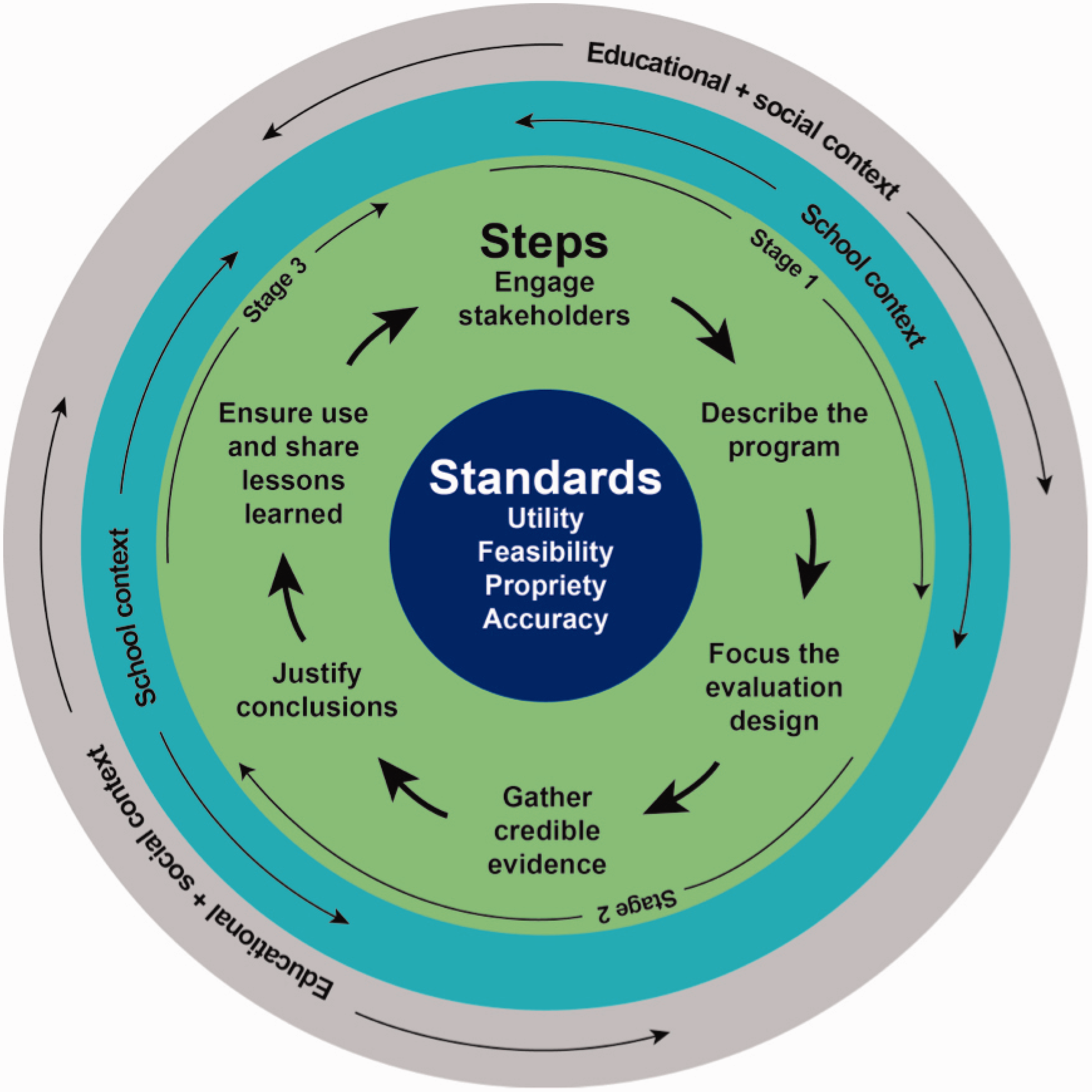

The framework used to conceptualise and design the school’s program evaluation process proved effective in summarising, organising and sequencing the essential stages and features of the process. Presented in Figure 1, the framework is a modification of the public health evaluation framework developed by the Centers for Disease Control and Prevention (CDC) (1999).

Figure 1. Program evaluation framework (adapted from CDC, 1999).

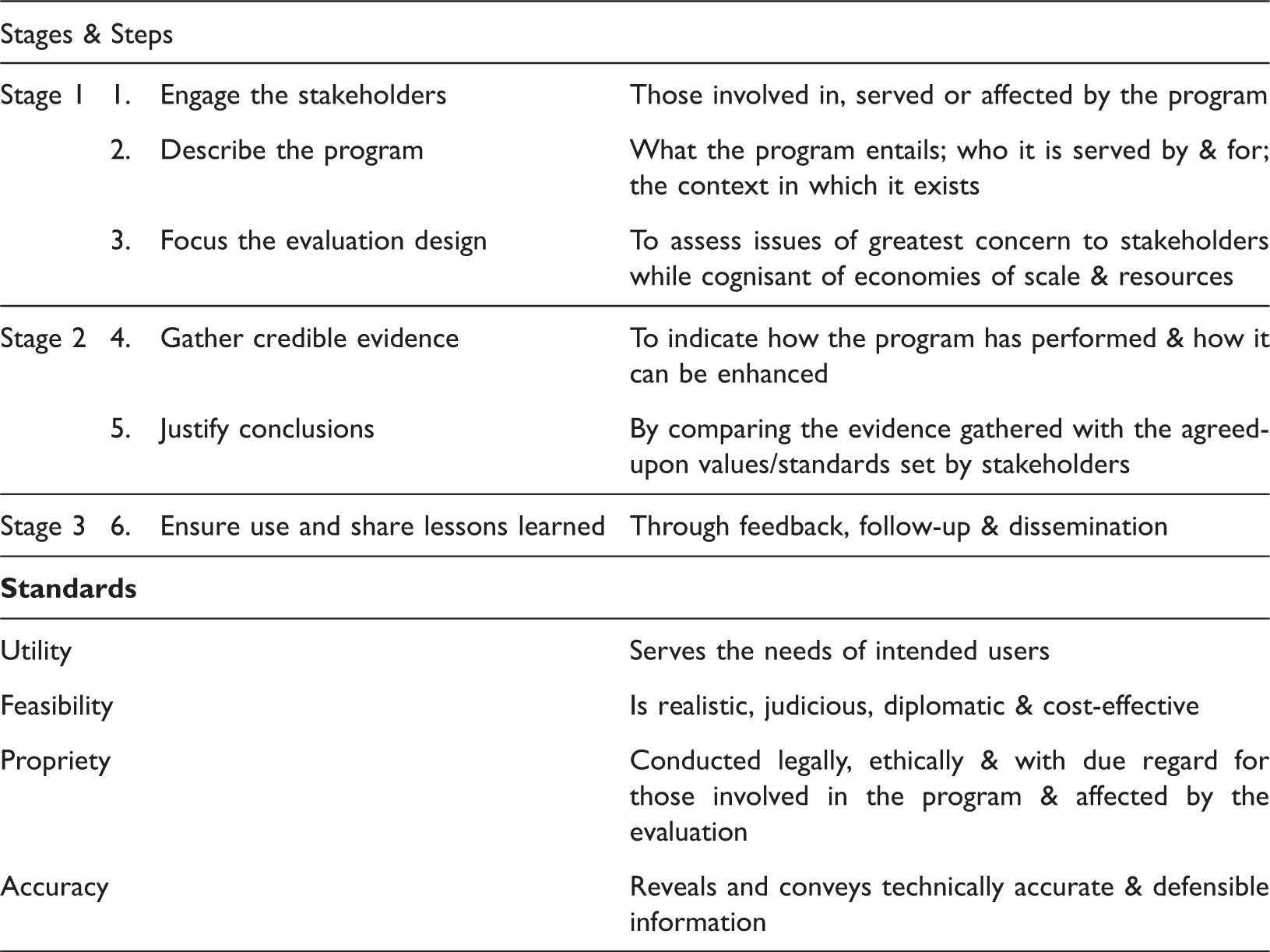

The framework comprises the steps involved in evaluation practice and the standards for effective evaluation, all of which are embedded within, inseparable from, and impacted by both the school context and climate and broader external community and educational factors. The process is further framed in three stages. Although there is some interrelation between the stages, Stage 1 roughly involves the first three steps of engagement, design and focus (Steps 1 to 3), Stage 2 the steps of data collection, analysis and interpretation (Steps 4 and 5), and Stage 3 the sharing and dissemination of findings (Step 6).

Stages, steps & standards of the evaluation framework (adapted from CDC, 1999).

As we now describe, the four program evaluations contextualising this study were couched within this framework.

Design

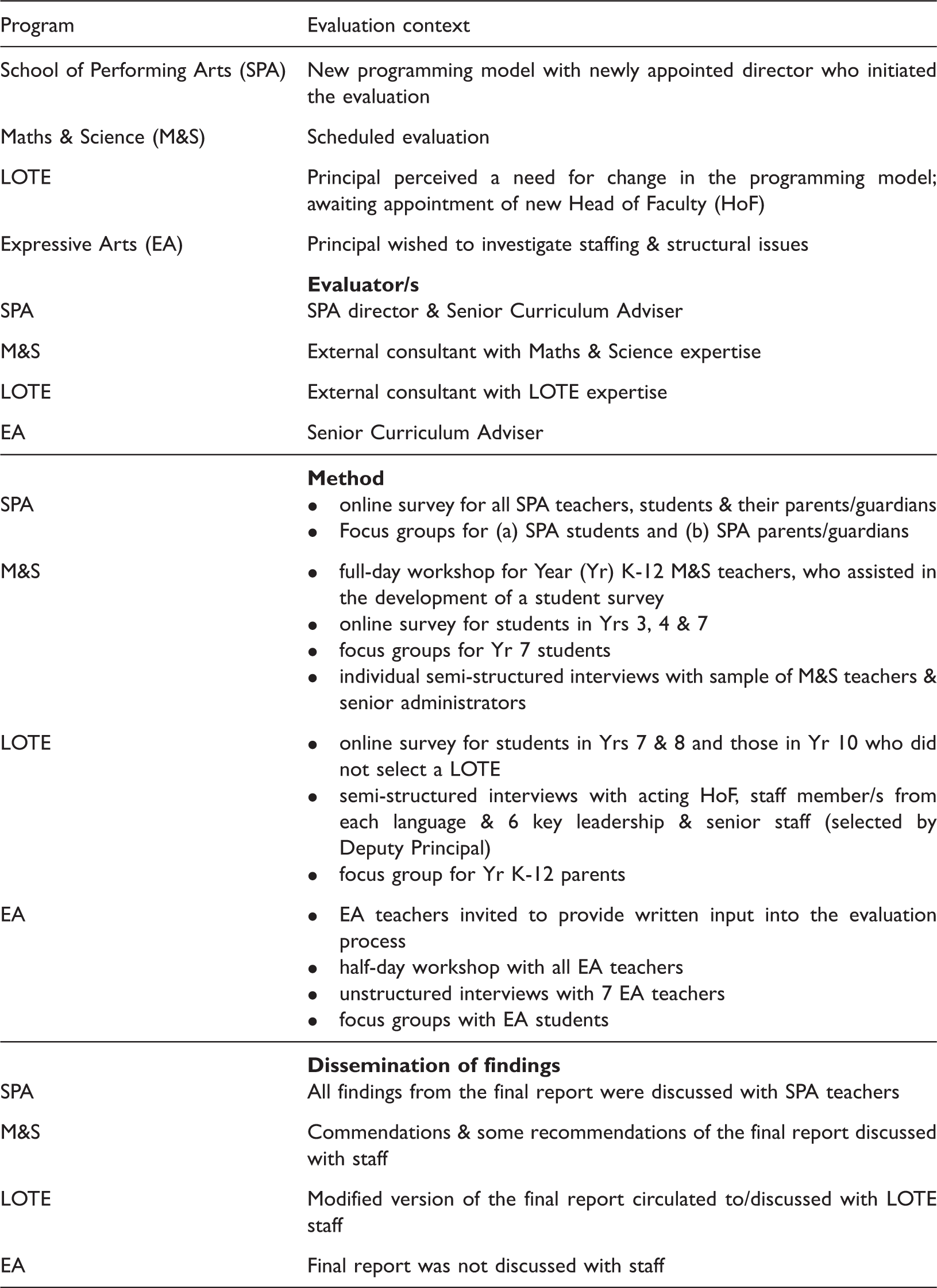

Design approaches of the four evaluations.

The different approaches listed above were tailored to suit each particular program and its context, while meeting the school’s program evaluation standards. It is beyond the scope of this paper to discuss the reasoning behind each set of approaches. Rather, we focus on how teachers responded to the evaluation/s in which they were involved.

The study method

The aim of our study was to gain an understanding of the strengths and limitations of the different school program evaluation methods, from the perspectives of the staff members involved in those evaluations. Thus, the central framing research question was: In the view of participating staff members, what were the strengths and weaknesses of the four program evaluations undertaken between 2009 and 2011? While there was arguably scope to broaden the study to include other stakeholders, such as students and their families, our particular interest was in understanding the views of the teachers who would be predominantly responsible for implementing any program changes recommended in the evaluations. Thus, we limited our study to teaching staff.

The two researchers responsible for designing and conducting the study both played roles in the program evaluations, albeit in different ways. Specifically, one researcher, a key curriculum leader in the school, acted as an (internally based) facilitator in one evaluation (SPA) and as an evaluator in one other (Expressive Arts). She also takes co-responsibility for the oversight of the school’s embedded evaluation process. The other researcher, a university academic, was employed in a consultancy role as an ‘external’ evaluator of the languages other than English (LOTE) program. (A third external evaluator, responsible for the Mathematics and Science evaluation, did not play a role in the study.) Once the school principal had provided consent and the relevant Social Sciences HREC had granted ethics approval (University of Tasmania, 2010), data were collected across two phases, spanning 2009 to 2012.

Phase 1: Documentary analysis

A key principle of the school’s program evaluation design is that it incorporates participation from across the range of school and community stakeholders. As such, and as outlined above, students, their families, school board members, leadership team members, and teaching and administrative staff were invited to participate. From each evaluation, a number of documents, including survey data, field notes taken during workshops, interview transcripts, and final reports were generated. The first phase of our study involved (a) extracting from these documents data provided by teaching staff that related to the evaluation process (as distinct from the program per se), and (b) analysing these data within the frame of our research question.

Following Rapley’s (2007) interpretive approach to documentary analysis, we each examined the documents and document extracts through a series of iterative readings to establish ways in which teachers socially constructed their experiences of engaging in the evaluation process/es. Working collaboratively, we then compared and contrasted our margin notes, applied codes (single words and phrases) to the views and interpretations reported in the data, and finally generated three of the five themes reported in the findings of this paper. These three themes were: understanding the evaluation aim and purpose; staff contribution to the evaluation; and timing, scope and sequencing of the evaluation.

Phase 2: Online questionnaire

In late 2011 and early 2012, when the four program evaluations – and associated documentary analysis – had been completed, we designed and generated an online questionnaire (SurveyMonkey Inc., 2013) with the purpose of further gauging staff members’ views of the evaluation processes. The questionnaire included five open-ended questions that were purposefully designed to collect as broad a range of responses as possible, namely:

1. From your perspective, what were the strengths of the evaluation process and how it was conducted?
2. From your perspective, what were the limitations of the evaluation process and how it was conducted?
3. In what ways, if any, do you believe the evaluation process could have been improved?
4. Do you believe the findings of the evaluation (e.g. Commendations, Recommendations) will be acted on? Why/Why not?
5. In your view, whose responsibility is it to ensure the findings (e.g. Commendations, Recommendations) are acted on? Please explain why.

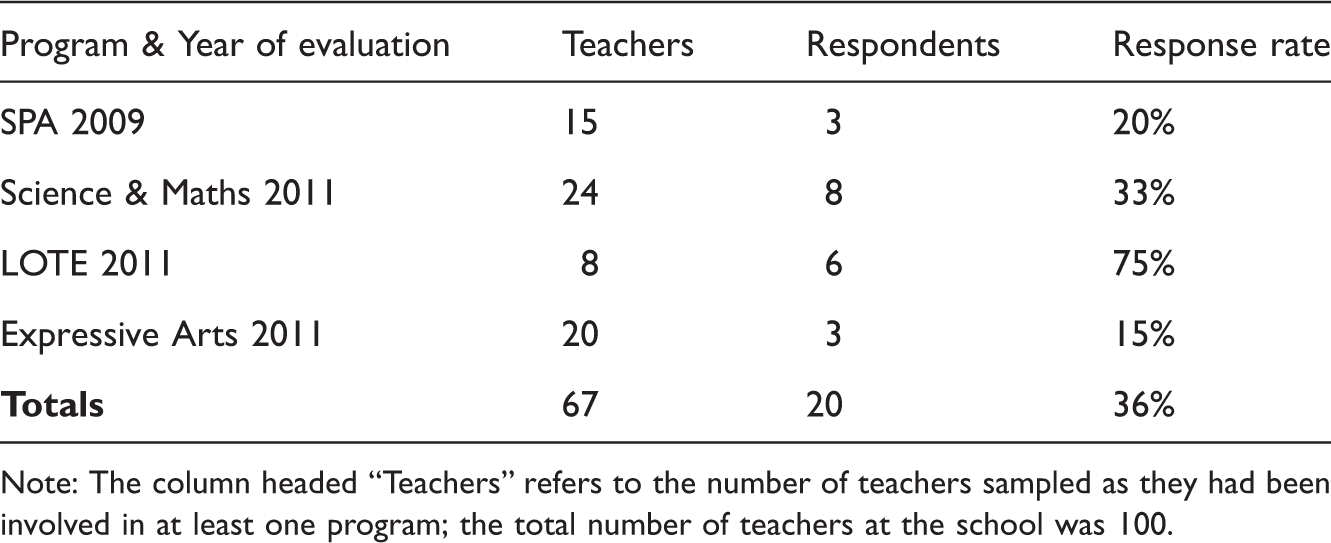

Questionnaire respondent numbers & response rates.

Note: The column headed “Teachers” refers to the number of teachers sampled as they had been involved in at least one program; the total number of teachers at the school was 100.

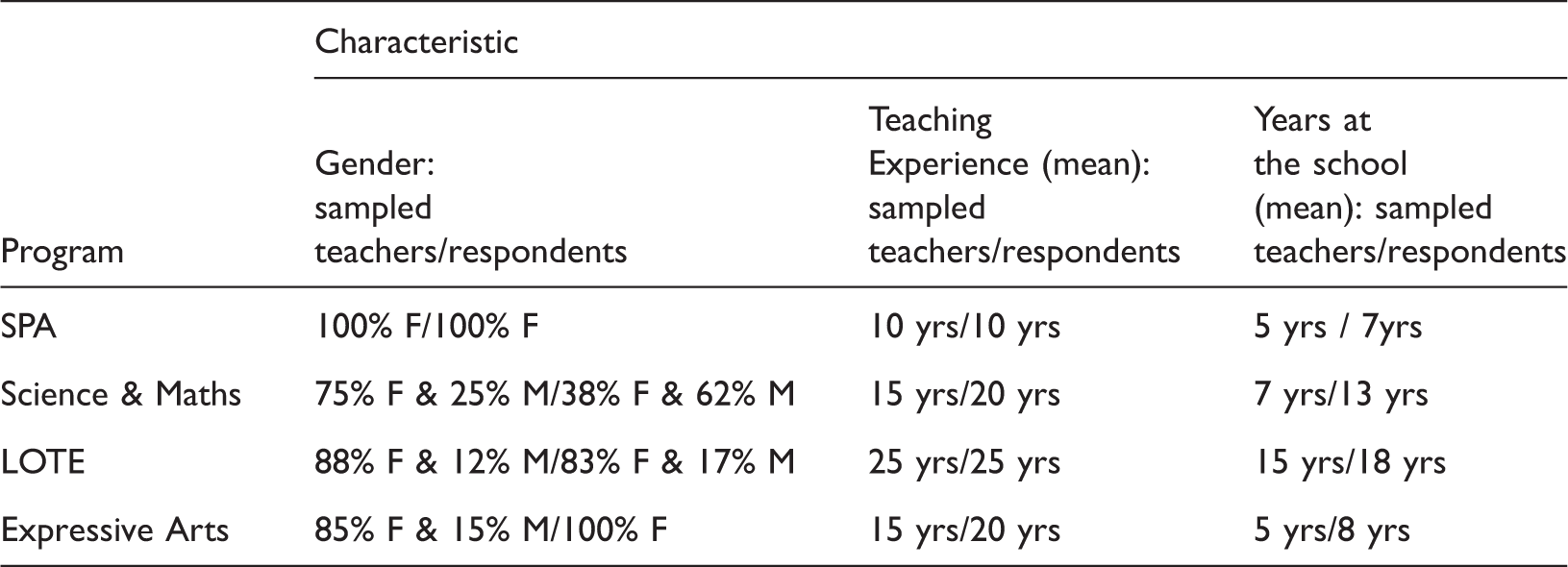

Comparative information for sampled teachers and achieved respondents.

Questionnaire data were analysed using cluster analysis (Miles & Huberman, 1994), which, in a method similar to that used in the documentary analysis, involved the two researchers individually coding and categorising the data, comparing codes and categories, and modifying the final categories. We then conducted a cross analysis with the codes and categories derived in Phase 1 in order to generate the themes that represent the findings of this study.

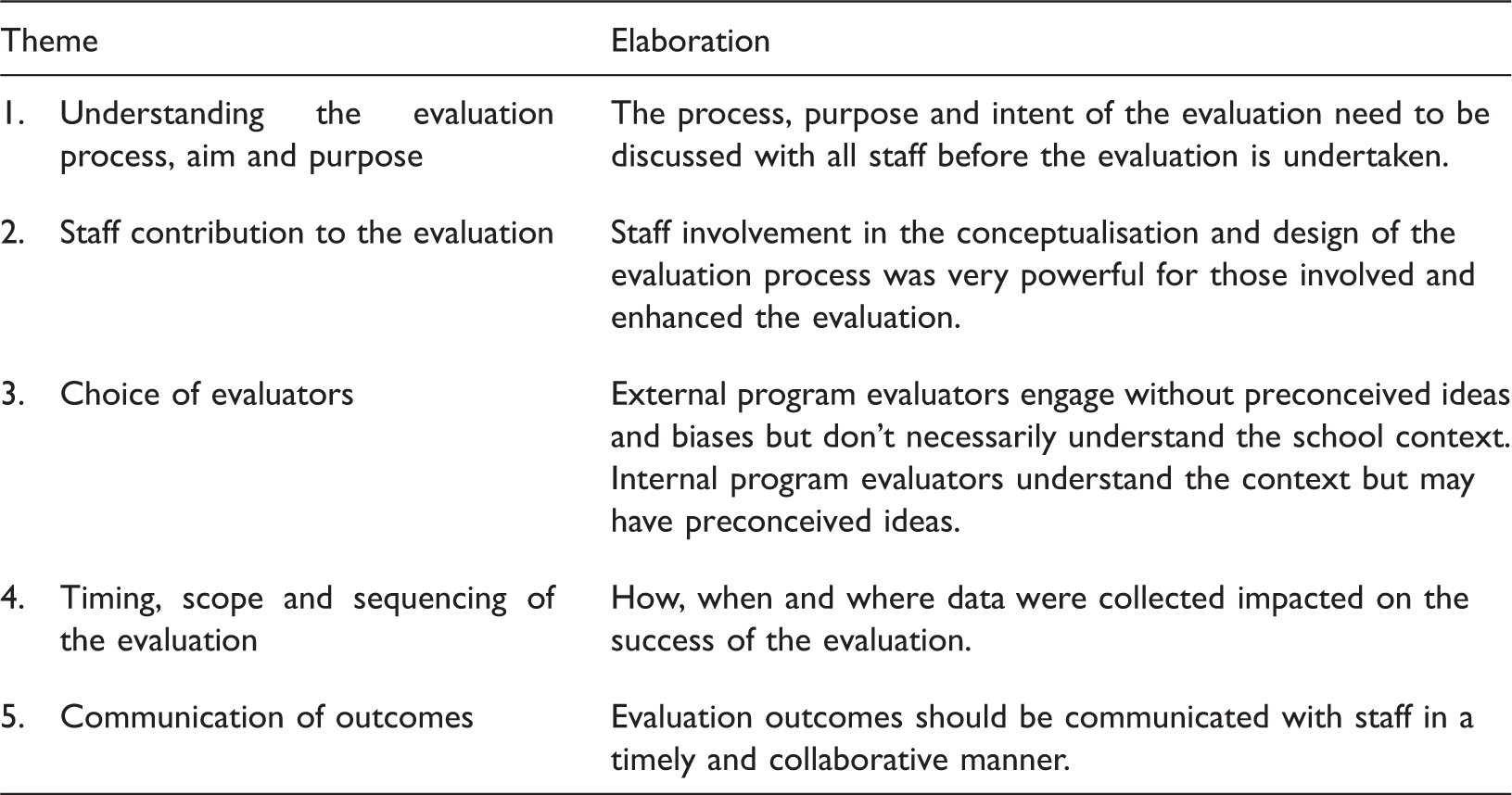

Findings

Five key findings emerged from the data analysis conducted in response to the research question: In the view of participating staff members, what were the strengths and weaknesses of the four program evaluations undertaken between 2009 and 2011? The five themes and a brief elaboration of each are presented in Table 5. Our data analysis draws attention to some key areas, many of which need to be considered in other school program evaluation processes.

Typically, the analysis of data collected in this study has resulted in support or corroboration of the extant literature. However, there are a number of issues raised by participants that add more detail or nuance to what has already been reported. For instance, although each evaluation was designed to suit the particular program and the context within which it was located, a number of teachers reported questioning this design process on the grounds that, for example, there were inequities inherent in the differentiated approaches across the evaluations. This articulated concern points to the lack of understanding that some participants reported having about the evaluation aim and purpose, and to the questions raised about the selection of evaluators. Thus, in many ways, as this example demonstrates, each of the themes is interrelated and interdependent in terms of an overall picture of an effective school program evaluation.

Discussion

Understanding the evaluation aim and purpose

Not surprisingly, there was consensus among teachers that the process and intent of the evaluation need to be preferably shared by, and at the very least clearly articulated to, all staff before it is undertaken. This type of collaborative communication has been shown to be key to effective evaluations (see, e.g. Scheerens & Demeuse, 2005). With regard to the actual conduct of the evaluations, quite disparate views were reported about how well teachers understood the aim and purpose of the process.

Each of the program evaluators had a different agenda in terms of the review’s terms of reference and a different degree of control over how the evaluation aim and purpose were communicated to participants. Even when the aims of the review are articulated to individuals, and in some cases collaboratively created, there is still room for suspicion and ill-feeling to emerge. A lack of trust about possible underlying issues or an alternative agenda can easily arise, especially where there is a lack of transparency in the processes and where the evaluation process may appear to be ‘top-driven’. Respondents’ views reflect these different perceptions. Comments on evaluations in which time was spent in an initial whole-group session were positive:

‘It was an open and transparent review … [and] we knew why we [were] there and what to expect.’

‘The initial meeting gave a good contextual background for the evaluation.’

Other respondents reported being less clear about the aim and process:

‘I arrived at the meeting not knowing what it was really about and what my role might be.’

‘It would have been good to have more time as a group with the facilitator, so that the aims of the evaluation were clear to everyone.’

‘I would like to have known more about the timeline of the process.’

Staff contribution to the evaluation

The conceptualisation and design of the four evaluations involved different levels of engagement by staff, varying from significant input by Mathematics and Science teachers to none at all by LOTE staff. Although staff involvement in these preparatory stages was not initially afforded much consideration by facilitators, it proved quite powerful for those who participated. On the one hand, all Mathematics and Science teachers had the opportunity during a whole-day workshop to discuss the aim, purpose and anticipated outcomes of the evaluation, as well as to contribute to the development of the student questionnaire and student focus group questions. Participants reported this level of staff engagement as one of the overriding strengths of the evaluation, as exemplified in these comments:

‘All teachers of Mathematics and Science were included right from the start in how the evaluation would work. This was refreshing and proved productive.’

‘[The workshop] allowed for an interesting discussion, with all teachers being called upon to contribute. Through this discussion, it was very evident to us that there was work to be done to bring the teaching of science … to the required standard.’

On the other hand, LOTE staff, who had no input at these stages, commented:

‘The terms of reference were very open and generic. … As LOTE teachers, we could have developed more explicit terms of reference instead of wondering what was being reviewed. The way LOTE is taught? The efficiency of LOTE teachers? The scope for LOTE teaching at school?’

‘Even though a portion of the School community was included, maybe not the widest section. Not sure why … it wasn’t our decision to make apparently.’

Further, there was evidence that those involved in the evaluation design process benefited from collaborative engagement and discussion that transcended the level of involvement in planning the procedural requirements of the evaluation:

‘The single session at the start [allowed for] an interesting discussion with all teachers being called upon to contribute.’

‘The way it was set up made it an open and transparent review.’

Choice of evaluators

Two of the four evaluations were undertaken by external consultants and two were undertaken by an internal evaluator. There are benefits and limitations in the two differing approaches (Conley-Tyler, 2005). An internal evaluator can have a deep understanding of the school context and of the project and, through being part of the organisational structure of the school, is familiar with the staff and the community groups involved. Internal evaluators are also less costly. On the downside, there may be perceptions of a lack of objectivity, or of a lack of skills or time to devote to the work. There may also be perceptions that the evaluation and its outcomes are less important to school management because no external evaluator was engaged, and that the recommendations made will hold less weight and credibility. All of these elements were evident in the participants’ responses to the internal evaluator’s work, as evidenced in these comments:

‘[The evaluator] understood the issues we were grappling with.’

‘Some people were concerned about a lack of anonymity.’

‘I’m not sure why we had an internal reviewer. Was it to do with the importance of the review of our program? Finances?’

‘There was no feeling of bias in the way the information was collected and presented.’

‘It is arguable that the facilitator had a view formed before data was readily available.’

‘The review did not occur in the context of the school organisation with all that [entails].’

Timing, scope and sequencing of the evaluation

Despite planning that takes into account the time needed to undertake a review, it has to be recognised that schools are busy places where many teachers work under time pressures and in an unpredictable environment. This busyness invariably leads to issues around the timing, scope and sequence of evaluations.

The overall impact of the evaluation depends on features of the evaluation process such as its timeliness, relevance, quality, and responsiveness to the school environment. There were both positive and negative comments on the processes used and on their timing, as the following indicative statements show:

‘It was terribly disappointing that there were no further meetings or discussions to follow the first meeting.’

‘I would have preferred a written questionnaire where I had time to consider my answers more fully.’

‘It was a very short timeframe.’

Communication of outcomes

School program evaluation comprises an accountability component and a formative component, the latter intended to inform future decision making. One of the key assumptions and expectations of teachers involved in program reviews is that they will be able to use the findings of the program evaluation to improve implementation. When this does not occur, or the dissemination process is delayed, there is a sense of frustration and a lack of faith in the process. In one program evaluation, the evaluator had an opportunity to meet with participants, one of whom noted:

‘It was very good that there was a final meeting with the reviewer and the faculty so that participants could clear up any misunderstandings.’

The Expressive Arts evaluation report had a lengthy gestation period, sitting on the principal’s desk for some months before it was considered. The principal then talked the findings over with the head of faculty but made no further overt moves to release the findings more broadly. This led to dissatisfaction with, and uncertainty about, the review process. Participants commented, for example:

‘I would hope that the findings will be made available to the participants and recommendations brought forward for further discussion.’

‘The review findings have not been discussed with any of the faculty.’

‘To date, nothing has been done. The recommendations … have been ignored.’

‘Who has seen what we wrote? What points were acknowledged as worthy of real consideration?’

Conclusion

This paper provides insight into participants’ perspectives of the strengths and limitations of four differently constructed program evaluation methods in an independent school. The five key findings that were generated through documentary and questionnaire data analysis related to staff members’ understanding of the evaluation aim and purpose and their contribution to the process, the selection of internal and external evaluators, the timing, scope and sequencing of the evaluation, and the communication of outcomes. Interestingly, none of the evaluation methods emerged per se as being perceived as more effective or appropriate than any other. Rather, the study showed that participants overwhelmingly valued being provided with a clear understanding of the nature of and rationale for the evaluation and ‘having a say’ in its conceptualisation, development and conduct.

The importance of shared decision making in organisational practice has been widely acknowledged (see, e.g. Durlak & DuPre, 2008; Wikeley et al., 2005). Durlak and DuPre (2008) note, for example, that situations in which there is collaborative decision making among key stakeholders have consistently led to better implementation of organisational programs. Our study shows that, in the view of participants, shared decision making should extend to the conceptualisation and creation of program evaluation processes, which indicates that they would have welcomed playing a role in all three stages of the evaluation cycle (see Figure 1). Works by Bryson, Patton, and Bowman (2011) and Weiss (1997) highlight the potential value of involving primary users in the evaluation process. Despite the additional work that this entailed for Mathematics and Science teachers in our study, all valued the opportunity to provide input into the design and implementation of the evaluation of their programs. Participants also stressed the importance of receiving timely, ongoing and detailed feedback about the evaluation process, and of knowing that the outcomes of the evaluation would be acted on. In cases where staff considered the above factors to be absent, scepticism, mistrust and a lack of engagement prevailed.

Table 5. Summary of key findings.

Footnotes

Declaration of conflicting interests

None declared.

Funding

This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.