Abstract

Objectives:

A range of communication skills training programmes have been developed targeting trainees in various medical specialties, predominantly in oncology but to a lesser extent in psychiatry. Effective communication is fundamental to the assessment and treatment of psychiatric conditions, but there has been less attention to this in clinical practice for psychiatrists in training. This review examines the outcomes of communication skills training interventions in psychiatric specialty training.

Methods:

The published English-language literature was examined using multiple online databases, grey literature and hand searches. The review was conducted and reported using Preferred Reporting Items for Systematic Reviews and Meta-analyses guidelines. Studies examining the efficacy of communication skills training were included. Randomised controlled trials, pseudo-randomised studies and quasi-experimental studies, as well as observational analytical studies and qualitative studies that met criteria, were selected and critically appraised. No limits were applied for date of publication up until 16 July 2016.

Results:

Total search results yielded 2574 records. Of these, 12 studies were identified and reviewed. Two were randomised controlled trials and the remaining 10 were one-group pretest/posttest designs or posttest-only designs, including self-report evaluations of communication skills training and objective evaluations of trainee skills. There were no studies with outcomes related to behaviour change or patient outcomes. Two randomised controlled trials reported an improvement in clinician empathy and psychotherapeutic interviewing skills due to specific training protocols focused on those areas. Non-randomised studies showed varying levels of skills gains and self-reported trainee satisfaction ratings with programmes, with the intervention being some form of communication skills training.

Conclusion:

The heterogeneity of communication skills training is a barrier to evaluating the efficacy of different communication skills training programmes. Further validation studies examining specific models and frameworks would support a stronger evidence base for communication skills training in psychiatry. It remains a challenge to develop research that investigates behaviour change over time in clinical practice or measures patient outcomes attributable to communication skills training.

Introduction

Rationale

The benefits of effective clinical communication are well established. For example, effective clinical communication leads to better health outcomes, including higher satisfaction, improved illness understanding and adherence to treatment (Maguire and Pitceathly, 2002) and increased clinician confidence and reduced levels of clinician distress (Cegala and Lenzmeier Broz, 2002; Maguire and Pitceathly, 2002). However, evidence shows insufficient communication skills across several clinical fields, including psychiatry (Maguire and Pitceathly, 2002).

It is also well established that communication skills can be learnt, with strong evidence of the efficacy of communication skills training (CST) programmes (Maguire and Pitceathly, 2002) in undergraduate medicine and some specialties (e.g. oncology) (Barth and Lannen, 2011; Uitterhoeve et al., 2010). CST in medical schools often includes generic skills such as active listening, questioning and appraising cues (Kissane et al., 2012), but postgraduate CST assists trainees to apply communication skills relevant to their discipline.

Despite this evidence, the uptake of CST is varied, with research focusing on primary care and oncology (Aspegren, 1999; Barth and Lannen, 2011; Cegala and Lenzmeier Broz, 2002; Delvaux et al., 2005; Kissane et al., 2010; Lienard et al., 2010; Merckaert et al., 2015; Razavi et al., 2003; Uitterhoeve et al., 2010; Van den Eertwegh et al., 2013). However, there is a significant gap in the literature concerning CST for psychiatry, including its impact on clinical practice change and patient outcomes.

In many postgraduate educational programmes, communication skills are considered core curriculum. For example, competency-based requirements in both Canada (Leverette et al., 2009) and the United States emphasise teaching and assessment of communication skills (Rider and Keefer, 2006). Similarly, the new competency-based Royal Australian and New Zealand College of Psychiatrists (RANZCP) Fellowship Training Program identified communication skills among the Entrustable Professional Activities required for progression through training, for example, providing a family member with an explanation about a young adult with a major mental illness. Nevertheless, there is a paucity of teaching tools that target specific skills, such as communicating a diagnosis or prognosis (Seeman, 2010).

Limited empirical research indicates that, like people with other medical conditions, people with psychiatric disorders wish to be informed about their diagnosis (Giacco et al., 2014; Mitchell, 2007). The majority of psychiatric patients (>90%) wish to receive this information through discussion with their treating psychiatrist (Hallett et al., 2013). Although guidelines stating that patients should be informed about the nature of their illness exist (e.g. the National Institute for Health and Care Excellence [NICE, 2014] guideline), fewer than half of psychiatrists explicitly inform patients of their diagnosis (Clafferty, 2001; McDonald-Scott et al., 1992; Magliano et al., 2008; Outram et al., 2014), with rates of disclosure varying across diagnostic conditions. Euphemistic terminology for severe conditions (e.g. ‘psychosis’ for schizophrenia) is frequently used but does not enhance patient understanding (Cleary et al., 2009). Clinicians report having insufficient communication skills for these types of conversations (Levin et al., 2011).

This systematic review provides a rigorous, structured examination of what CST programmes have been conducted in postgraduate psychiatry, the efficacy of these interventions and a critical analysis of the methods and models used in an attempt to determine best practice in this area. To our knowledge, this is a first for the field.

For the purposes of this review, communication skills are defined as the direct or indirect transmission of information between two or more people that is achieved through verbal and non-verbal methods, including speech units, eye contact, body language, gestures and facial expressions, as well as listening methods. Effective use of these skills enables the other party to understand and process the information provided, to share their concerns and to ask questions. Although teaching psychotherapeutic skills or clinical interviewing skills for specific purposes (e.g. risk assessments or mental state examinations) also draws on communication skills, this review focuses primarily on training interventions that explicitly deal with the development of communication skills per se.

Methods and analysis

We used the Preferred Reporting Items for Systematic Reviews and Meta-analyses (PRISMA) statement for reporting systematic reviews and meta-analyses of studies that evaluate healthcare interventions (Liberati et al., 2009). We specified methods and inclusion criteria in advance and registered the protocol with PROSPERO, accessible at https://www.crd.york.ac.uk/PROSPERO/display_record.asp?ID=CRD42016033333.

Eligibility criteria

Population

The study population included medical doctors of any age participating in a postgraduate psychiatry specialty training programme. For this review, ‘residents’ or ‘registrars’ are considered equivalent to postgraduate trainees in a psychiatry specialty training programme. Included studies were English-language only, with no limit on date of publication up to 16 July 2016.

Types of intervention

We included studies examining the efficacy of CST. We excluded studies evaluating training in psychotherapies unless the training also addressed CST, in which case they met the inclusion criteria.

Types of outcome measures

The main outcomes of interest were trainee satisfaction, behaviour change or skill retention over time, and impact on patient health outcome – measured quantitatively or qualitatively. Secondary outcomes included any validated outcome measure of CST in psychiatry, as well as any unintended adverse effects or barriers associated with the intervention, including its effect on patients, clinicians, health services or other health professionals. We took an inclusive approach to allow any study type and any outcome, given the paucity of existing literature and the need to capture all studies available.

Comparator(s)/control

In comparative studies, CST interventions were required to have been tested pre- and post-training, or versus a non-exposed control group, other educational institutions or alternative methods of CST. We also included non-comparative studies.

Types of studies

We included randomised controlled trials (RCTs), pseudo-randomised studies and quasi-experimental studies, as well as observational analytical studies and qualitative studies that met the above criteria. We excluded conference abstracts, unpublished data and clinical trials.

Information sources

We used a snowballing technique to identify studies, by searching electronic databases, scanning reference lists of articles and conducting a grey literature search of Google Scholar (with the first 200 citations examined). We also ran a dedicated search for known authors in the field. Electronic databases provided all results, with no additional studies found using other methods. A search was developed for MEDLINE and adapted and applied to all the following databases: A+ Education (1978+), CINAHL (complete), Cochrane Library, Dissertations & Theses (ProQuest International), Embase, ERIC (ProQuest), Informit Database Collection, MEDLINE (1946+), Mednar, PROSPERO, PsycINFO, PsycEXTRA and Scopus.

Search

We conducted the initial search on 5 February 2016 and set up search alerts to capture any new additions to the literature up until 16 July 2016. No new additions were found. Search criteria and strategies for all databases are provided in Online Supplementary Material 1.

It became apparent after a hand search of citations that the term ‘interview skills’ should also be included in the search strategy. For the most part, this term related to clinical interviewing strategies but was occasionally used interchangeably with ‘communication skills’. We added this term to the keywords and repeated the search in all databases, yielding an additional four papers.

Study selection and data collection process

Two authors (P.D.-P. and C.L.) independently performed initial screening using title and abstract, followed by full-text eligibility assessment in an un-blinded, standardised manner. We resolved disagreements by consensus and, where necessary, another author (B.K.) arbitrated. The lead author (P.D.-P.) extracted data, which were checked by the second author (C.L.).

Data items

Outcomes identified in the data extraction included change in communication skills ability; self-evaluation, including satisfaction with CST; and self-ratings of attitudes. Information extracted from each study included sample size, participant characteristics (i.e. age and gender), the study’s inclusion and exclusion criteria, type of intervention and type of outcome measure.

Risk of bias in individual studies

For further information about some studies, we contacted corresponding authors by email. Authors P.D.-P. and C.L. independently conducted a ‘risk of bias’ assessment using the Cochrane tool (Higgins and Green, 2011) for quality assessment, rating the following domains as high risk, low risk or unclear: sequence generation, allocation concealment, blinding of participants and personnel, blinding of outcome assessors, incomplete outcome data, selective outcome reporting and other sources of bias. However, the Cochrane tool is not optimal for determining the quality of non-randomised studies in the field of medical education and does not take into account the hierarchy of educational outcomes (Kirkpatrick, 1967; Reed et al., 2007; Sullivan, 2011). Therefore, we also used the validated Medical Education Research Study Quality Instrument (MERSQI; Reed et al., 2008).

Summary measures

The principal summary measures varied due to the heterogeneity of reporting and include differences in pre/post means, percentage of respondent ratings, mean change scores and qualitative themes.

Synthesis of results

We decided (a priori, as published in the protocol) not to conduct a meta-analysis in this review as any comparison of effect size could be misleading due to the differences in CST delivery in dose, frequency, duration and methods. Similarly, we did not impute missing standard deviations, p-values or effect sizes in the extracted data because sample sizes were too small. We restricted our analysis to a qualitative overview with critical appraisal of all included citations, presented narratively with a summary of the strength and direction of quantitative evidence.

Results

Study selection

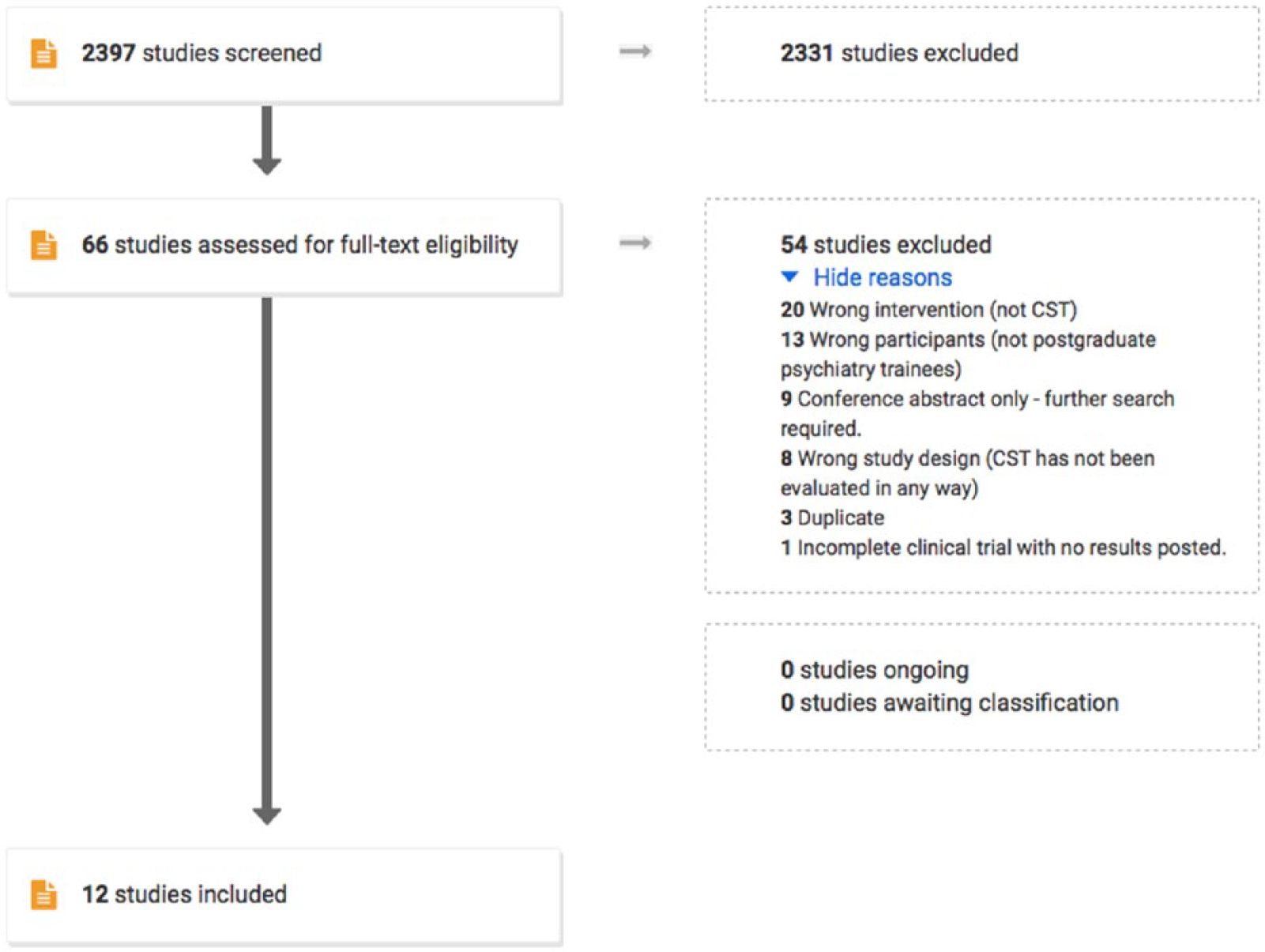

The PRISMA diagram (Figure 1) provides details of screened records.

Figure 1. Flow diagram for systematic review (produced from Covidence software).

The initial search produced 2574 records, which we imported into EndNote. Of these, 177 duplicates were identified and removed, and we imported the remaining 2397 records into Covidence, an online tool for managing systematic reviews. We screened all records by title and abstract, after which 58 conflicts were resolved by consensus. A total of 2331 records were excluded as irrelevant based on the title and abstract because they included the wrong participants (not postgraduate psychiatry trainees), the wrong intervention (not CST) or the wrong study design (CST was not evaluated in any way). The remaining 66 articles were then further assessed for eligibility by reading the full-text versions. Exclusions were based on wrong intervention (n = 20), wrong participant group (n = 13), conference abstracts only (n = 9), wrong study design (n = 8), duplicates (n = 3) and incomplete results (n = 1). The fourth author (B.K.) arbitrated on 2 of the 12 remaining studies, given their focus (psychotherapeutic skills and sexual medicine), and determined they were eligible as they included CST. In total, 12 studies were deemed eligible for inclusion in the review.
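The record counts in this paragraph can be tallied stage by stage as a consistency check. The following is a minimal sketch using only the counts reported above; the function and variable names are illustrative and are not part of the review's methods or of Covidence.

```python
# Counts as reported in the study selection paragraph.
RECORDS_IDENTIFIED = 2574
DUPLICATES_REMOVED = 177
EXCLUDED_ON_TITLE_ABSTRACT = 2331
FULL_TEXT_EXCLUSIONS = {
    "wrong intervention": 20,
    "wrong participant group": 13,
    "conference abstract only": 9,
    "wrong study design": 8,
    "duplicate": 3,
    "incomplete results": 1,
}


def prisma_flow(identified, duplicates, excluded_screening, full_text_reasons):
    """Return the number of records remaining at each stage of the flow."""
    screened = identified - duplicates
    full_text_assessed = screened - excluded_screening
    full_text_excluded = sum(full_text_reasons.values())
    included = full_text_assessed - full_text_excluded
    return {
        "screened": screened,
        "full_text_assessed": full_text_assessed,
        "full_text_excluded": full_text_excluded,
        "included": included,
    }


flow = prisma_flow(
    RECORDS_IDENTIFIED,
    DUPLICATES_REMOVED,
    EXCLUDED_ON_TITLE_ABSTRACT,
    FULL_TEXT_EXCLUSIONS,
)
for stage, count in flow.items():
    print(f"{stage}: {count}")
```

With the reported counts, the tally closes on the 12 included studies.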

Study characteristics

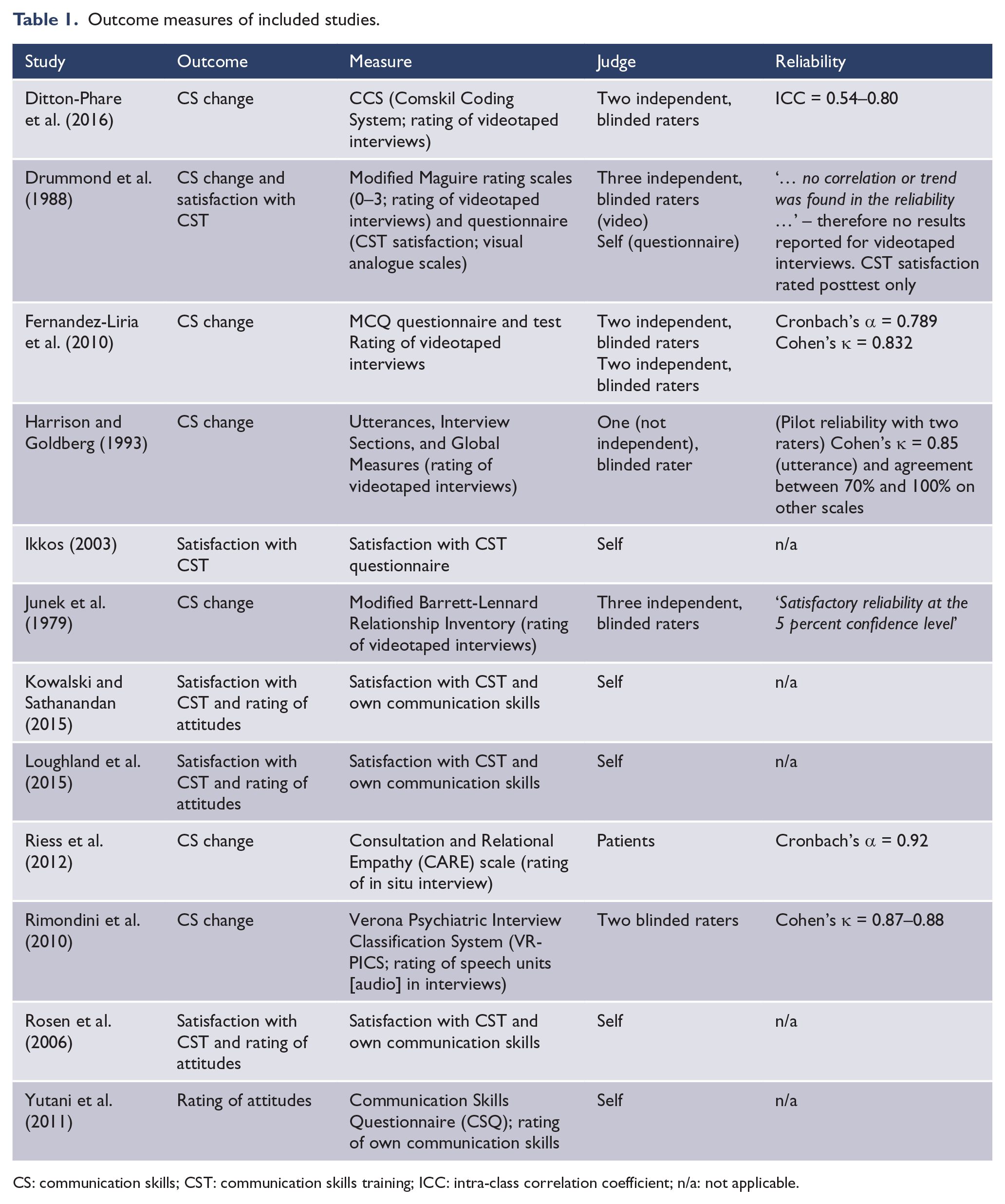

Tables 1 and 2 provide a tabulated summary of included studies’ outcome measures and characteristics.

Table 1. Outcome measures of included studies.

CS: communication skills; CST: communication skills training; ICC: intra-class correlation coefficient; n/a: not applicable.

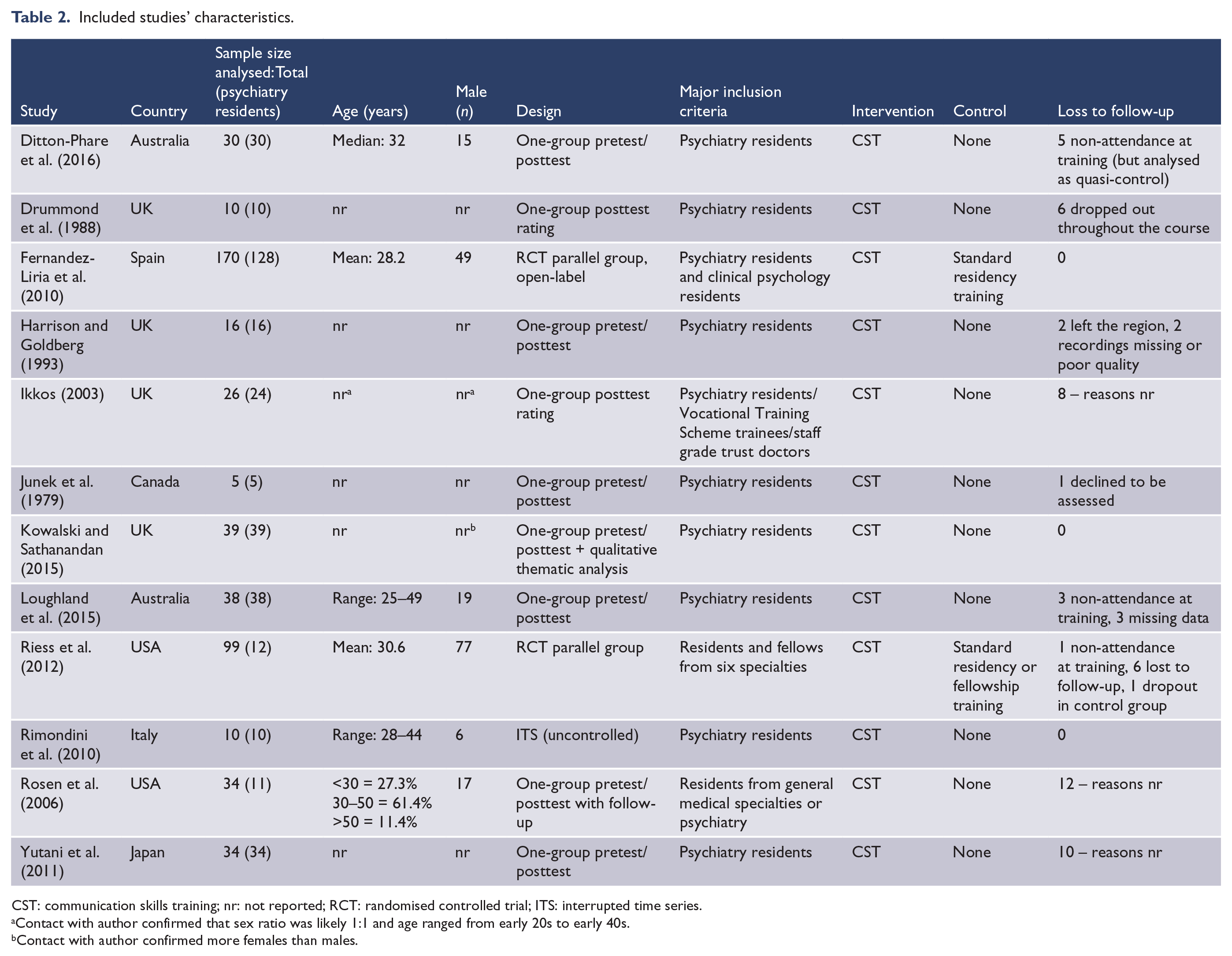

Table 2. Included studies’ characteristics.

CST: communication skills training; nr: not reported; RCT: randomised controlled trial; ITS: interrupted time series.

Contact with author confirmed that sex ratio was likely 1:1 and age ranged from early 20s to early 40s.

Contact with author confirmed more females than males.

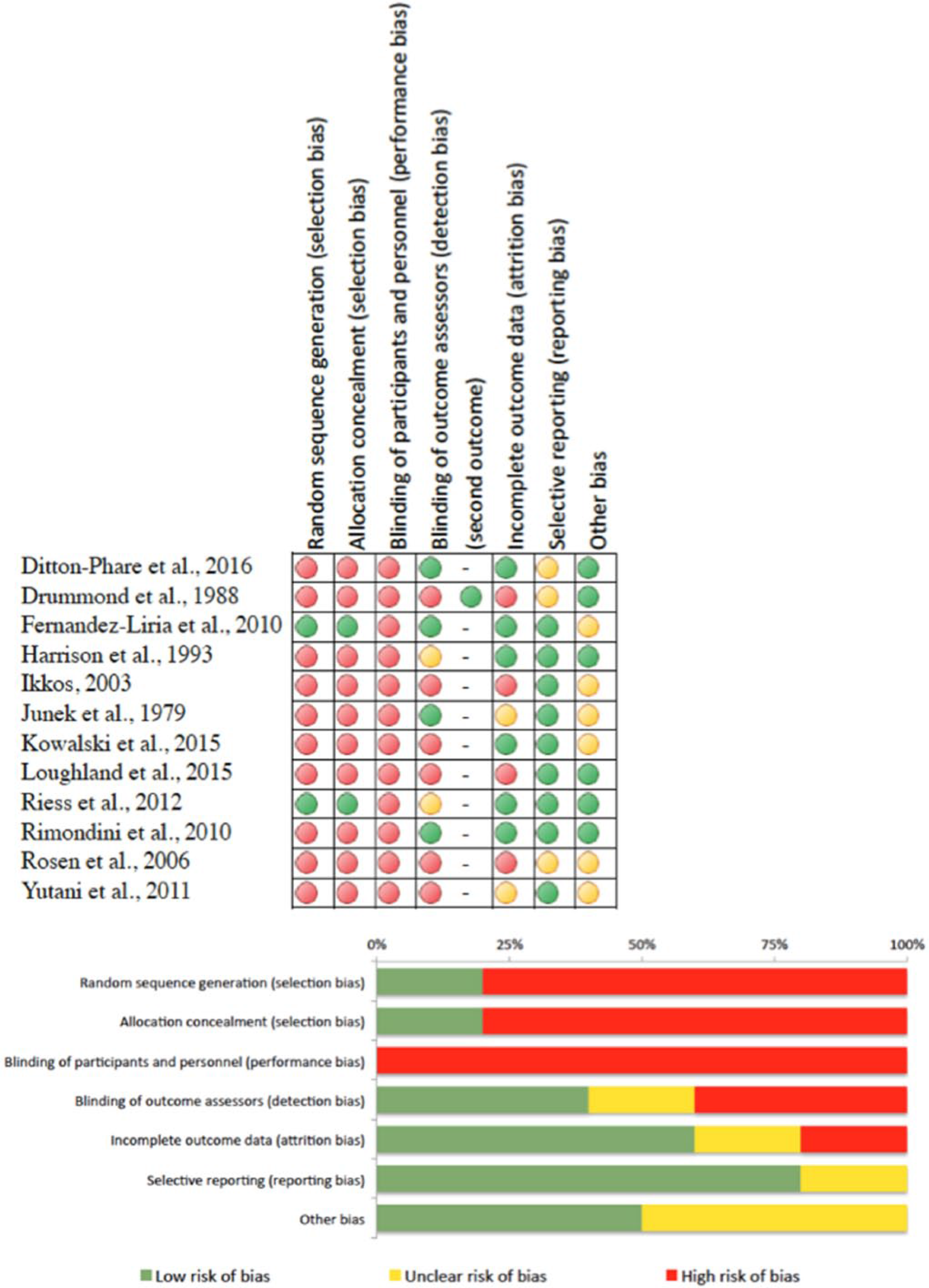

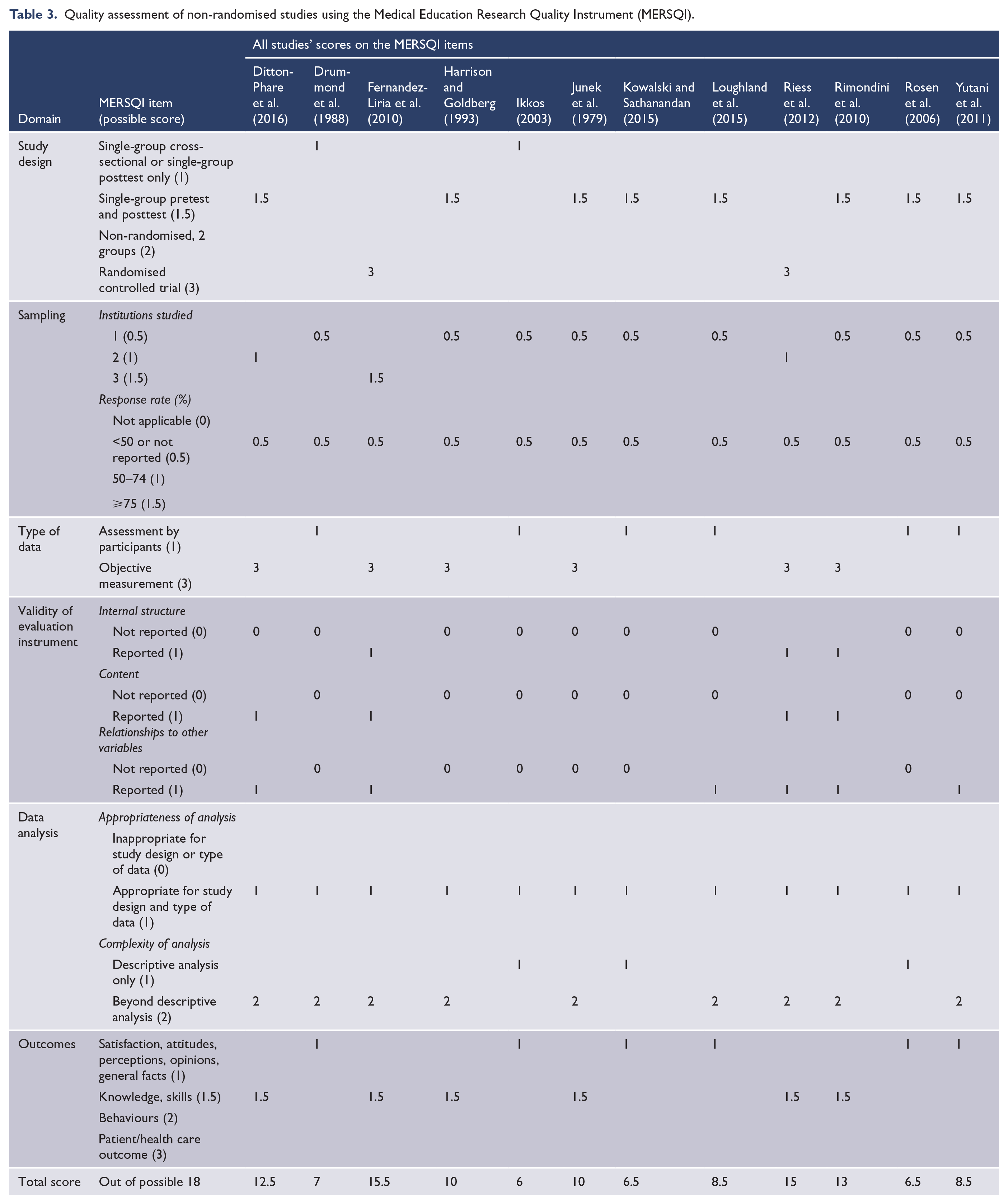

Risk of bias within studies

Figure 2 shows the risk-of-bias assessment for the included studies. Each of the 10 non-randomised studies was at high risk of bias for sequence generation and allocation concealment because it had a single-group pretest/posttest design or a posttest-only design with no comparator or control group allocated. Blinding of outcome assessors was not possible in studies that relied on self-report, and blinding of participants or personnel was not possible where both the trainers and trainees were aware of the training intervention. Therefore, a more meaningful assessment of quality for studies regarding evaluations of medical education in this review is provided by the MERSQI (Table 3). There was also a potential risk of bias because 2 of the 12 (17%) included studies are by the author team conducting this review. However, all reviewers attempted to conduct this review systematically, truthfully and without bias.

Figure 2. Potential sources of bias in included trials using the Cochrane tool for quality assessment.

Table 3. Quality assessment of non-randomised studies using the Medical Education Research Study Quality Instrument (MERSQI).

In a study by the current authors (Ditton-Phare et al., 2016), participants were allocated to the training group across two institutions, without comparison group. Pre- and post-digital recordings of trainee encounters were objectively and independently rated by blinded coders. A quasi-control group of five participants who participated in the pre/post assessments but did not participate in the training were allocated non-randomly with reasons for attrition reported. While not reported in the final publication, two of the empathic communication skills and one Information Organisation skill were removed from analysis due to poor interrater reliability, leaving 17/20 discrete skills taught during the intervention reported. Originally, this was included in a footnote and mistakenly removed during editing.

Drummond et al. (1988) ran a single-group posttest study with three independent raters who were blinded to pre/post using objective ratings of skills change. High dropout rates led to high risk of incomplete outcome data, and outcome data for skills change were not reported due to the unreliability of raters; therefore, only satisfaction self-ratings were reported. The risk of selective outcome reporting was unclear because the number of items and actual questions in the questionnaire were not reported. This article reported satisfaction ratings with predominantly descriptive analysis and one t-test comparison of dropouts and the attending group.

In a study by Fernandez-Liria et al. (2010), the control group (n = 35) was randomly selected from three teaching units using an independent service for randomisation. Two independent raters objectively evaluated the interviews, with two different raters evaluating the questionnaires. All were blinded to the source of the material and whether it was from pre- or post-assessment. No attrition was reported. It is unclear how many people were initially invited to participate (although 170 people are reported to have accepted the invitation).

Harrison and Goldberg (1993) used a one-group pretest/posttest design. One of the outcome assessors was also a trainer and not an independent rater, making it unclear whether this person was truly blinded. Although one of the authors, who was ‘blind to pre/post training status’, and another person randomised the recordings, it is nonetheless possible that the trainer, having provided the training and knowing the trainees, may have been able to tell the difference between pre and post outcomes.

Ikkos (2003) reported a one-group posttest evaluation. Only 26 of the 34 doctors returned completed questionnaires, indicating a high risk of incomplete outcome data. The author confirmed that feedback collection started 1–2 years after training and some doctors had moved on. There was an unclear risk of other bias because the author conceded that he was unable to distinguish between responses from different grades of doctors and that his multiple capacities as teacher and assessor made it possible that respondents did not honestly report their opinions.

Junek et al. (1979) reported a one-group pre- and post-training evaluation. Independent raters rated 30 video segments from various points of the recorded interviews in random order, suggesting that they were blinded to pre- or post-training. It was unclear whether there was incomplete outcome data because although the attrition of one participant was reported, he was referred to as a ‘poor’ performer. Given the small participant pool (n = 6), the inclusion of this participant may have impacted the overall results of this study. A baseline imbalance was found between native English speakers and those from non-English-speaking backgrounds. Because of the small sample size with insufficient power to conclude any reasonable findings, other sources of bias were unclear.

Kowalski and Sathanandan (2015) presented findings from a one-group pretest/posttest study that included a qualitative component. Other potential bias may be present as teachers of the training intervention analysed both the data and the interviews. Reporter bias may therefore have been introduced due to trainees wanting to please the trainers.

The current authors (Loughland et al., 2015) conducted a self-report one-group pretest/posttest study. Thirty-eight participants attended the CST; however, the evaluations reported consisted of only 32 participants. Although three were missing data due to training non-attendance, three other participants did not submit their pre/post self-assessments and thus were excluded from the analysis. The risk for incomplete outcome data is therefore high. One item was removed from analysis because it did not discriminate among respondents, but this was reported adequately.

In a study by Riess et al. (2012), trainee participants from two institutions were randomly assigned in a 1:1 allocation ratio to a training intervention group or a control group (training as usual). A computer-generated number sequence determined allocation, and both participants and patient raters were blinded to the randomisation. The blinding of outcome assessors was not clear as the patients knew that they were rating their doctor and what they were rating them on; however, they were blinded to whether the doctor had completed training.

Rimondini et al. (2010) reported an interrupted time series design with one group and no comparator or control group. Recorded interviews were randomly assigned to two objective raters who were blinded to the purposes of the study and to whether the recordings were pre- or post-training. Missing data were attributed to damaged tapes, not attrition. It was acknowledged that significance testing of pre/post outcomes could not be performed due to the small sample size and regression analyses had to be performed on the units of speech, but this was reported.

Rosen et al. (2006) performed a one-group pretest/posttest study. A total of 46 residents were reported to have attended the programme, but data were only available for 34 of these. It was not reported whether the remaining 12 did not fill in the evaluations or did not stay until the end of the programme. In addition, only 9 of the 34 completed the follow-up questionnaire. The number of trainees who answered each question varied, so there were missing data. While it was reported that 28 participants were residents and 17 were attending/faculty, other students or guests (making a total of 45 participants), the authors reported 46 in the study. Therefore, the risk of incomplete outcome data is high and it remains unclear whether there is bias of selective outcome reporting. Since this research was funded by a grant from a pharmaceutical company (Pfizer, Inc.), there is an unclear risk of bias about whether the CST may have involved discussions about medications. If not, it is unclear why a pharmaceutical company would fund this study.

The Yutani et al. (2011) study was a one-group pretest/posttest self-evaluation. The risk for incomplete outcome data was unclear because although 44 agreed to participate, data were analysed for only 34 participants with no information provided about the 10 who did not respond. Therefore, there is unclear risk of possible bias as to why they did not report their own performance. While other papers in the review indicated their funding source or acknowledgements, this paper did not, so it was unclear whether there was any other source of bias.

MERSQI

Two authors (P.D.-P. and R.D.) independently conducted a MERSQI assessment for each study. Reliability between the ratings was tested using the intra-class correlation coefficient (ICC; two-way, mixed, alpha model using absolute agreement rating, reporting average measures with lower and upper bounds). There was no variance between the raters for the sections of study design, content validity, relationships to other variables, appropriateness of analysis and outcome. Reasonable reliability was found for institutions, ICC(3,2) = 0.88 (95% confidence interval [CI]: [0.60, 0.97]); type of data, ICC(3,2) = 0.91 (95% CI: [0.70, 0.97]); and sophistication of analysis, ICC(3,2) = 0.90 (95% CI: [0.63, 0.97]). However, there was poor reliability regarding response rate and internal structure. The authors met to discuss inconsistencies and clear up any misinterpretation, and unanimous agreement was reached for all sections. It was unclear in all studies whether the intervention groups represented the entire cohort of that type of participant available in the area or institution or whether the group consisted of just those who consented to participate.
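To illustrate what an absolute-agreement, average-measures ICC captures, the following minimal sketch computes it from two-way ANOVA mean squares as (MS_rows − MS_error) / (MS_rows + (MS_columns − MS_error) / n), where n is the number of rated studies. This is the standard textbook estimator and is offered under the assumption that it corresponds to the model described above; it is not the authors' analysis code, the function name is illustrative, and the ratings are hypothetical rather than MERSQI scores from this review.

```python
from statistics import mean


def icc_absolute_agreement_average(ratings):
    """Two-way, absolute-agreement, average-measures ICC.

    ratings: list of rows, one row per rated study, one score per rater.
    """
    n = len(ratings)      # number of rated studies
    k = len(ratings[0])   # number of raters
    grand = mean(x for row in ratings for x in row)
    row_means = [mean(row) for row in ratings]
    col_means = [mean(row[j] for row in ratings) for j in range(k)]

    # Partition the total sum of squares into studies, raters and residual.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    return (ms_rows - ms_error) / (ms_rows + (ms_cols - ms_error) / n)


# Hypothetical scores from two raters for three studies.
identical = [[1, 1], [2, 2], [3, 3]]   # raters agree exactly
offset = [[1, 2], [2, 3], [3, 4]]      # second rater scores one point higher

print(round(icc_absolute_agreement_average(identical), 3))  # 1.0
print(round(icc_absolute_agreement_average(offset), 3))     # 0.8
```

The second example shows why absolute agreement is the stricter criterion: the two raters rank the studies identically, yet the constant one-point offset lowers the coefficient.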

Results of individual studies

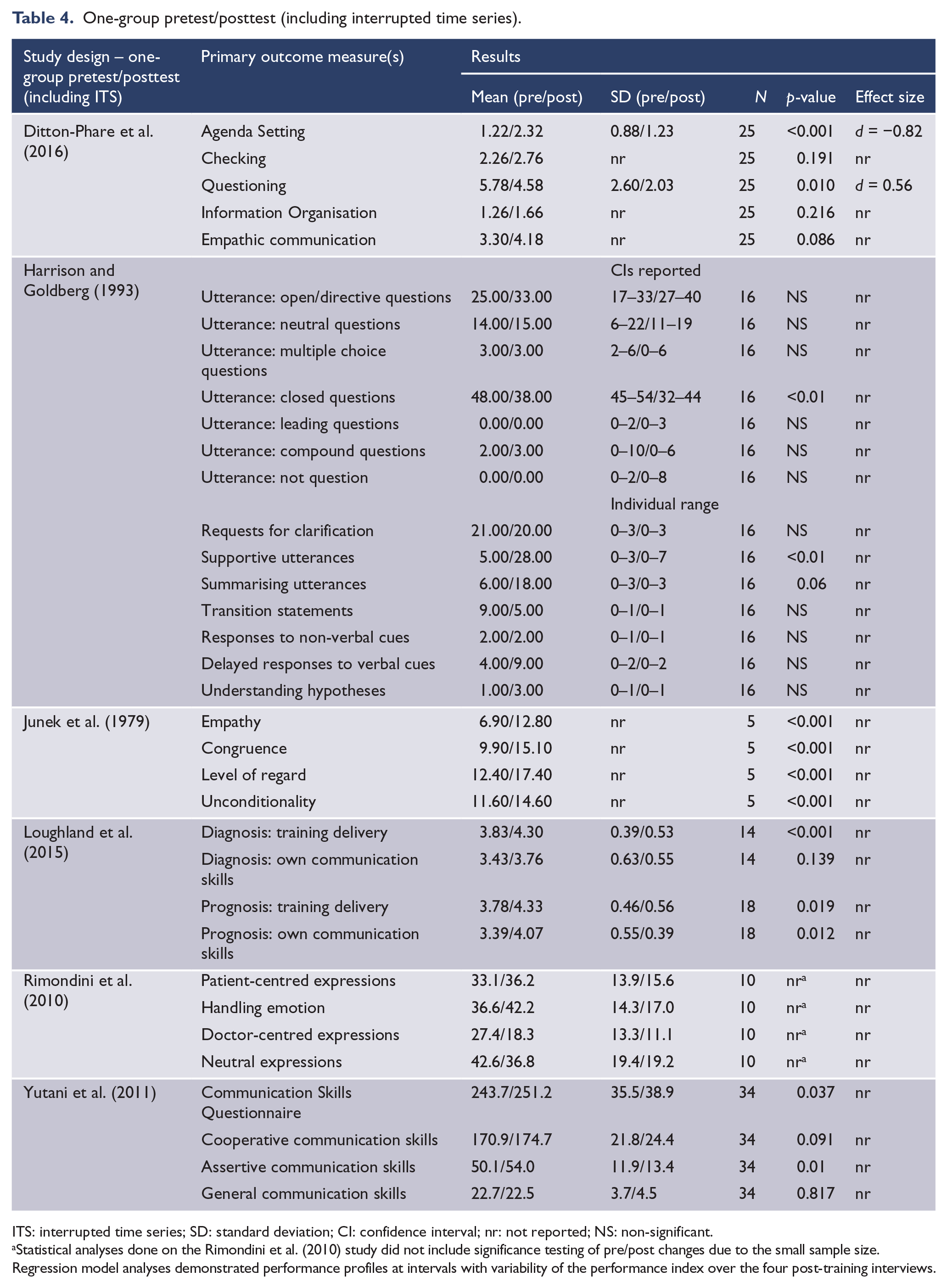

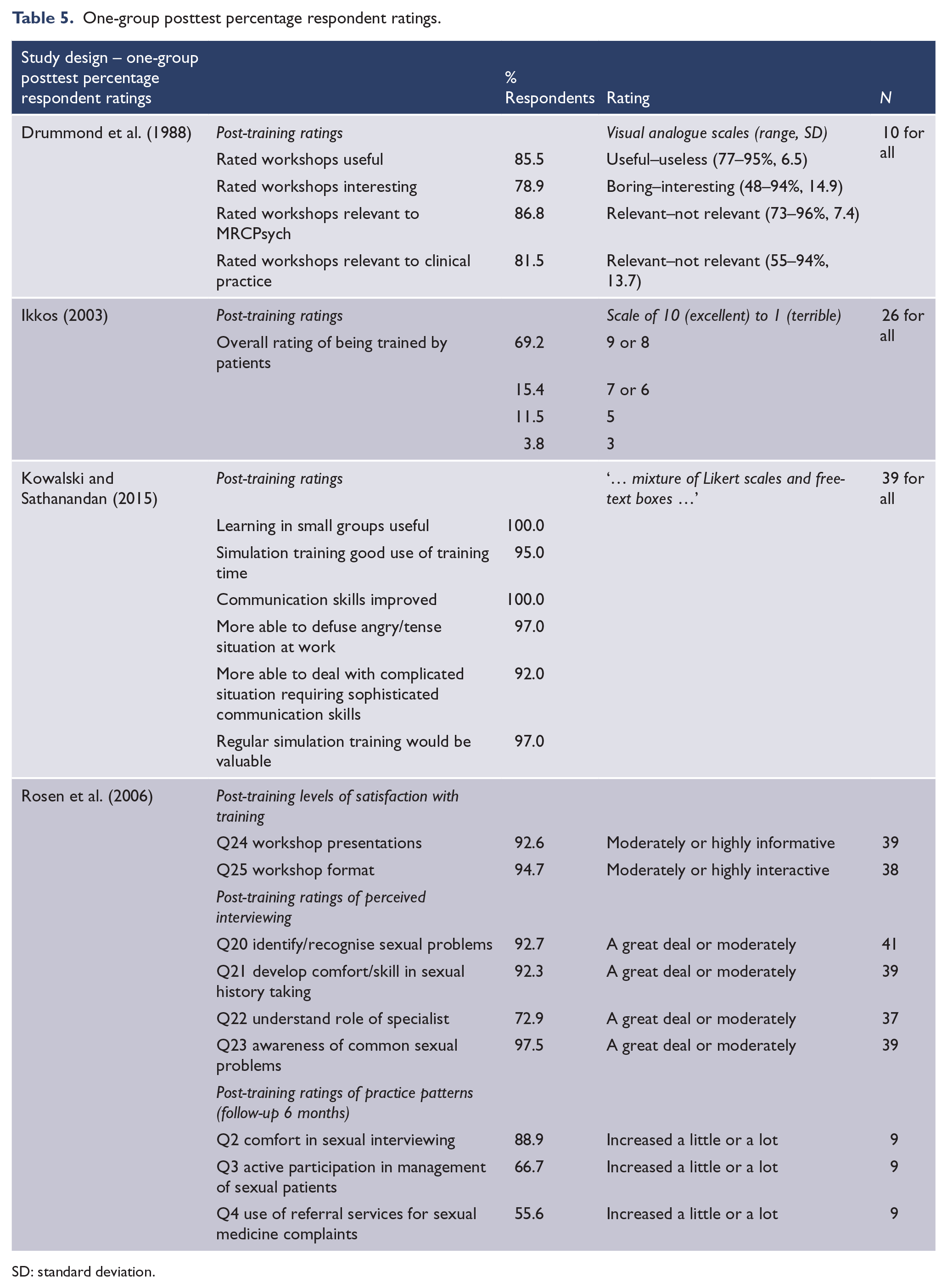

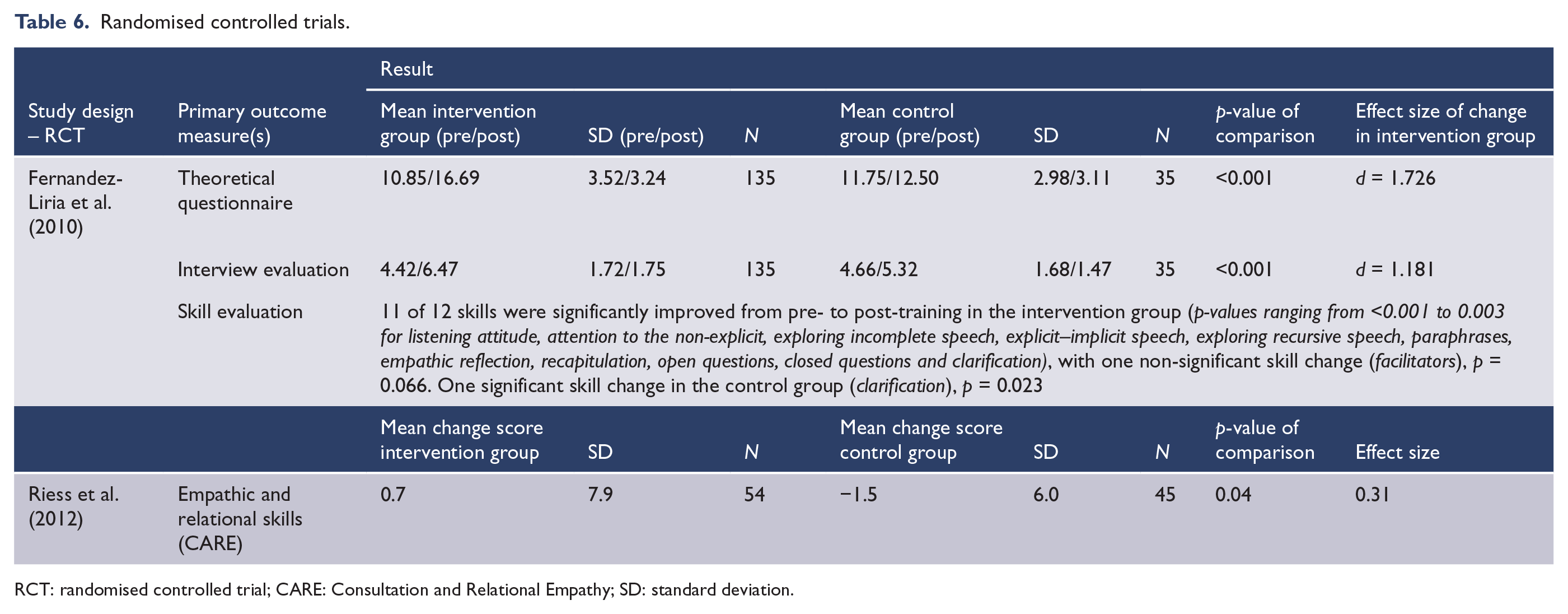

Tables 4–6 provide a tabulated summary of included studies’ quantitative extracted data and results.

Table 4. One-group pretest/posttest (including interrupted time series).

ITS: interrupted time series; SD: standard deviation; CI: confidence interval; nr: not reported; NS: non-significant.

Statistical analyses done on the Rimondini et al. (2010) study did not include significance testing of pre/post changes due to the small sample size. Regression model analyses demonstrated performance profiles at intervals with variability of the performance index over the four post-training interviews.

Table 5. One-group posttest percentage respondent ratings.

SD: standard deviation.

Table 6. Randomised controlled trials.

RCT: randomised controlled trial; CARE: Consultation and Relational Empathy; SD: standard deviation.

In addition to the above quantitative data, qualitative responses were presented in the Kowalski and Sathanandan (2015) study and the Ikkos (2003) study. Neither of these studies employed a stringent qualitative methodology. Participants were asked questions in semi-structured interviews (Kowalski) or on a form (Ikkos). Questionnaire feedback from Ikkos ‘indicated some specific criticisms of a number of participants and dissatisfaction by a small minority of doctors, but the overall evaluation of the experience was positive’ (p. 312). Qualitative analysis of themes in the Kowalski study ‘showed that trainees found the scenarios realistic, that the experience had led to an increased awareness of their communication style and that original improvements in confidence had translated to their clinical work’ (p. 29). Readers are directed to view these papers for narrative summaries of qualitative responses.

Synthesis of results

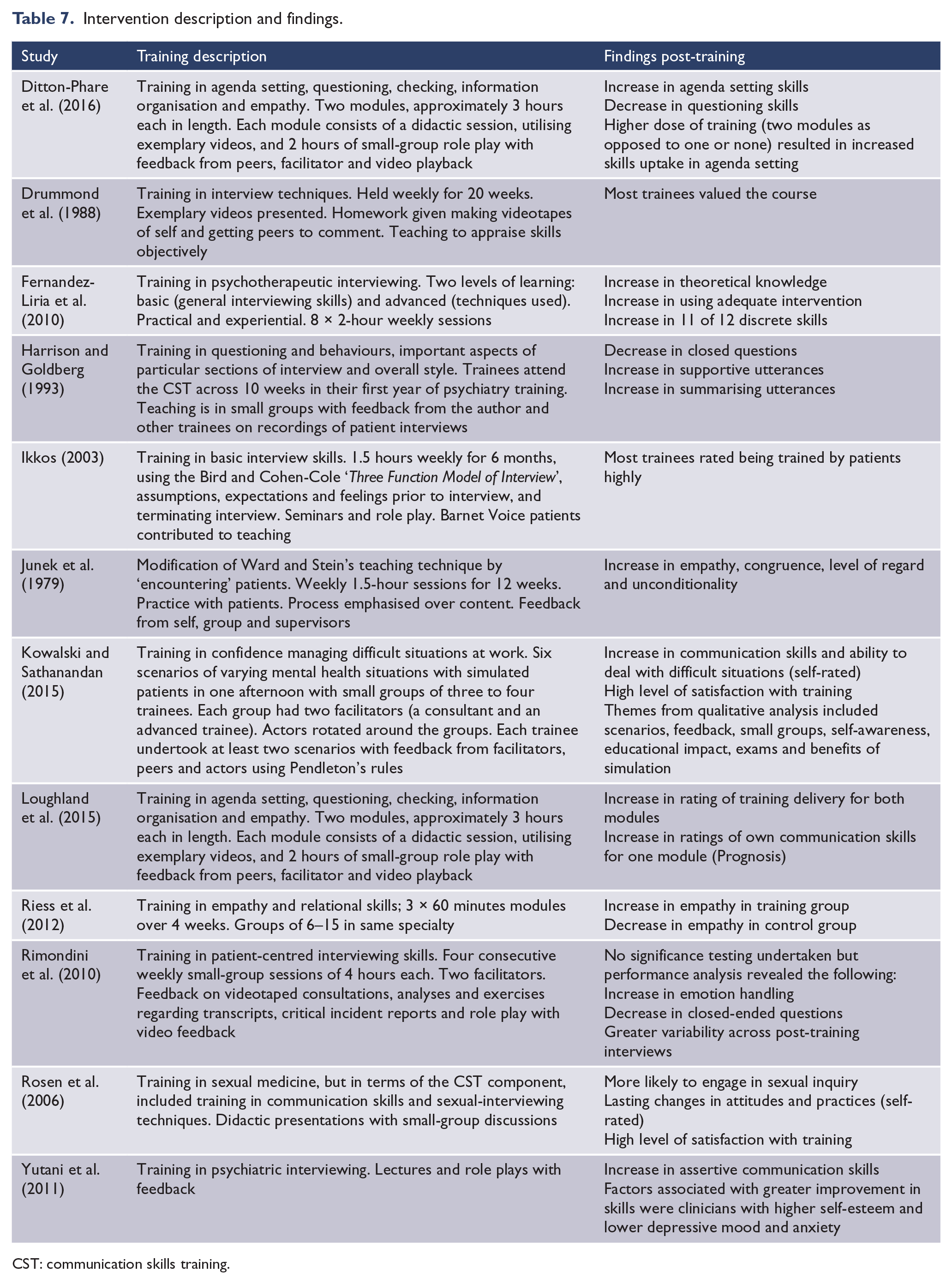

A description of each CST and each study’s findings are reported in Table 7.

Table 7. Intervention description and findings.

CST: communication skills training.

Discussion

Summary of evidence

The aim of this review was to examine the efficacy of CST programmes in postgraduate training in psychiatry. Overall, evidence from self-reported feasibility studies and objective skills evaluations supports the applicability of CST in postgraduate psychiatry; however, no studies measured skill retention over time in clinical practice or patient outcomes. All studies in this review reported either an increase in communication skills following CST or high satisfaction with the CST employed.

The majority of the studies in this review were non-randomised, with small sample sizes (range 4 to 44), and their outcomes showed varying levels of communication skills gains and increased self-reported trainee satisfaction ratings with CST programmes. However, the overall risk of bias and the highly heterogeneous nature of the training interventions, the evaluation tools used and the methodologies employed precluded any useful hypothesising about the efficacy of CST in psychiatry based on these studies. That said, these comprehensively described CST programmes and their training delivery methodologies provided evidence of their feasibility and acceptability and an excellent platform for future replication studies.

The outcomes from two RCTs included an increase in clinician empathy (rated by patients) resulting from training in empathy and relational skills and an increase in psychotherapeutic theoretical knowledge, the ability to choose the right intervention and an increase in communication skills as a result of training in psychotherapeutic interviewing. This tells us that embedding components of CST within specifically focused programmes for empathy development or psychotherapeutic interviewing is effective, inviting future studies to reflect on how CST embedded within skill-specific programmes might enhance CST skill retention as opposed to teaching the skills on their own without a goal-driven focus.

Inherent across most studies reviewed were problems with achieving rigour. These included the following.

Sample size

Due to the population of interest, cohorts were often small. Studies may therefore need to be run over multiple years to obtain larger samples. Fernandez-Liria et al. (2010) achieved a larger sample by recruiting across Spain and trained all 135 participants in the intervention group over an 8-week course with no reported missed sessions. However, most training units that run programmes for a number of weeks may have more difficulty recruiting across such a wide geographical area, with greater dropout rates for some sessions likely. It may be that for most training institutions, dose of training and sample size are a trade-off due to geographical limitations and availability of large cohorts.

Study design

RCTs are inherently problematic to conduct, especially with respect to evaluating CST in postgraduate psychiatry. For example, there is often a strong desire for training by trainees, but the need for a control group may mean withholding access to training for a proportion of trainees, raising concerns about how ethical wait-listing might be. Crossover trials provide a solution of sorts to this issue. However, trainees do not necessarily stay in one service or location for long as they rotate geographically throughout their training years or may leave services. Therefore, the threat of dropout or loss to follow-up over a multi-year crossover trial is high. Rosen et al. (2006), even with a simple pen-and-paper questionnaire, lost 74% of their cohort to follow-up after 6 months (from 34 down to 9). In addition, some programmes run their CST at a certain point in training (e.g. the Ditton-Phare et al. [2016] programme runs CST in the first-year formal education course), making a crossover design impossible in these contexts.
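The loss-to-follow-up figure quoted for Rosen et al. (2006) follows directly from the two cohort sizes. A minimal sketch of the calculation is given here; the function name is illustrative.

```python
def loss_to_follow_up(baseline_n, follow_up_n):
    """Return the percentage of a cohort lost between baseline and follow-up."""
    if baseline_n <= 0:
        raise ValueError("baseline_n must be positive")
    if not 0 <= follow_up_n <= baseline_n:
        raise ValueError("follow_up_n must be between 0 and baseline_n")
    return 100 * (baseline_n - follow_up_n) / baseline_n


# Rosen et al. (2006): 34 participants with data, 9 at 6-month follow-up.
rosen_loss = loss_to_follow_up(34, 9)
print(f"{rosen_loss:.1f}%")  # 73.5%, reported as 74% after rounding
```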

An alternative research design might be a larger interrupted time series. The Rimondini et al. (2010) study offers a model that included a pre-training time series of four assessments and a post-training time series of four assessments, but their uncontrolled study had a small sample size (N = 10), preventing them from conducting any significance testing of before and after effects. Conducting many assessment points over time, however, not only requires a large amount of resources but also faces the same loss to follow-up challenges as a crossover trial if the length of time between the first and last assessment is long. It seems that research groups with greater access to resources, time and available participant cohorts have a better chance of conducting a rigorous study to determine objectively whether communication skills of psychiatry trainees improve due to CST.

Another alternative would be to conduct a study of higher order educational outcomes to examine how CST for psychiatry trainees affects clinical practice, patient perspectives and clinical outcomes. While Riess et al. (2012) asked patients to rate the empathy of their doctors, future studies need to determine patients’ attitudes about their care and whether medication adherence, length of stay in hospital, recurring admissions, suicide rates and other measures of patient outcome are affected by treatment from doctors with better communication skills. How to measure these outcomes within this paradigm has not yet been explored and remains a target for future studies of this type.

While there is very little robust evidence concerning the efficacy of CST in psychiatry, there is a clear need for such training, and there is an abundance of evidence concerning the efficacy of CST in other specialty areas. There is also evidence for its translation into the workplace (Lienard et al., 2010; Merckaert et al., 2015; Van den Eertwegh et al., 2013). In this context, the lack of literature available for CST in psychiatry does not constitute a lack of efficacy. Rather, it reflects how little research has been published to date in this growing field and the need for quality and consistency in reporting. Importantly, there is a need in psychiatry to demonstrate that CST can be translated into clinical practice and better patient outcomes. Although the variability of translation studies in this area has been highlighted by Van den Eertwegh et al. (2013), studies such as these are important for understanding the barriers and enablers to translation of CST to clinical practice (Van den Eertwegh et al., 2014).

Limitations

There are a number of limitations associated with this review, mainly that the training interventions and outcomes differed across studies and that the quality of the studies varied. Restriction of the review to English-language publications may also have been a limitation, and decisions about study selection were sometimes difficult. Publication bias may have influenced the findings of this review, as no studies reported nil significant findings. It is unknown whether, or how many, unpublished studies with no significant findings exist. Six out of 12 studies used self-reporting to ascertain training effects, which may introduce reporter bias due to selective reporting of information, potentially because attitudes towards facilitators and trainers conducting the training might influence reporting of training outcomes. In addition, research into self-assessment suggests that the least skilled residents may be most at risk of inaccurately assessing their abilities (Hodges et al., 2001).

Conclusion

No previous systematic reviews or meta-analyses have examined the effect of CST on postgraduate psychiatry trainees’ communication skills, although 12 different articles were published between 1979 and 2016. All reported increased communication skills ability following a CST intervention. However, the overall impact of CST is impossible to estimate for a variety of reasons, including the lack of statistical power due to small sample sizes, the heterogeneity of training interventions with differing dose, frequency, duration and methods, different methods of measuring the effect of CST, and varying quality and risk of bias of the studies’ methodology.

The next step for future studies would be to conduct either a large-scale RCT or an interrupted time series study (potentially multisite to ensure adequate statistical power) with a follow-up assessment that measures skill retention over time and demonstrates skill retention ‘in vivo’ in clinical practice. There is a particular need to look at how skills translate to real-world practice outside the controlled setting of training, to determine what factors encourage or inhibit implementation in practice, and to assess how doctors trained in communication skills affect patient outcomes. A substantial body of work done in oncology residency demonstrates the need for supplementation of training and follow-up workshops due to high attrition in skills over time if ‘refresher’ sessions are not provided (Delvaux et al., 2005; Razavi et al., 2003; Van den Eertwegh et al., 2013). Other types of studies that should be conducted include assessments of the cost-effectiveness of these training interventions to aid decision-makers in health departments when considering funding programmes of this nature. The heterogeneity of CST is a fundamental reason for the difficulties in comparing the efficacy of different CST programmes with one another. However, further validation studies examining specific models and frameworks would support a stronger evidence base for this component of education.

Footnotes

Acknowledgements

This review was conducted by a PhD student from the University of Newcastle, in conjunction with the student’s supervisors and a senior lecturer at the University of Newcastle. We would like to thank the University of Newcastle librarian, Debbie Booth, for her assistance with search methods and access to databases.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The funding body of the student’s PhD played no role in study design, collection, analysis, interpretation of data, writing the report or in the decision to submit the paper for publication. They accept no responsibility for the contents.

References
