Abstract

In the rapidly advancing landscape of surgical education, the traditional apprenticeship model is being increasingly complemented by individualized learning, competency-based assessment, and data-driven feedback. Work-hour restrictions, administrative burdens, and limited operative exposure have intensified the need for innovative solutions to supplement faculty-led training. Artificial intelligence (AI) has emerged as a promising adjunct, offering scalable platforms for technical skill acquisition, personalized feedback, and structured progress tracking. Early applications include AI-guided simulation, feedback, natural language processing for resident evaluation, and advanced applicant-screening systems, which hold the potential to streamline holistic review while reducing faculty workload. Despite these advances, significant challenges remain, including bias mitigation, ethical data governance, and the need for rigorous outcome-based validation. The greatest promise lies in hybrid models, where AI augments rather than replaces mentorship, freeing faculty for complex, context-dependent teaching. With careful implementation, AI is poised to meaningfully transform surgical education worldwide.

Keywords

Introduction

Surgical education is undergoing a paradigm shift away from sole reliance on the traditional apprenticeship model; while skills are still primarily acquired through observation, repetition, and incremental responsibility, there is now increased emphasis on individualized learning, efficiency, and measurable competency. 1 This shift has been accelerated by regulatory, technological, and cultural changes that challenge how residents acquire operative skills and prepare for independent practice. The Accreditation Council for Graduate Medical Education (ACGME) introduced duty hour restrictions in 2003, capping resident work at 80 h per week. While intended to reduce fatigue and improve patient safety, these reforms have raised concerns about reduced operative exposure and the readiness of trainees for unsupervised surgical practice. 2 Simultaneously, the increasing importance of electronic medical records and the emphasis on administrative documentation have further impeded opportunities for experiential learning in the operating room.3,4

To address these constraints, researchers have begun exploring artificial intelligence (AI) and immersive technologies as supplemental tools for technical surgical training, with promising initial results. Even with emerging evidence for AI as a teaching modality, thoughtful deliberation is imperative on how to integrate these new tools into existing curricula and how to leverage AI to provide more equitable access to surgical education worldwide. AI may additionally aid residency leadership with resident selection, especially in the wake of growing applicant numbers and the desire for holistic review. Alongside the myriad opportunities that AI offers lie ethical and implementation challenges that must be addressed as widespread adoption is considered.

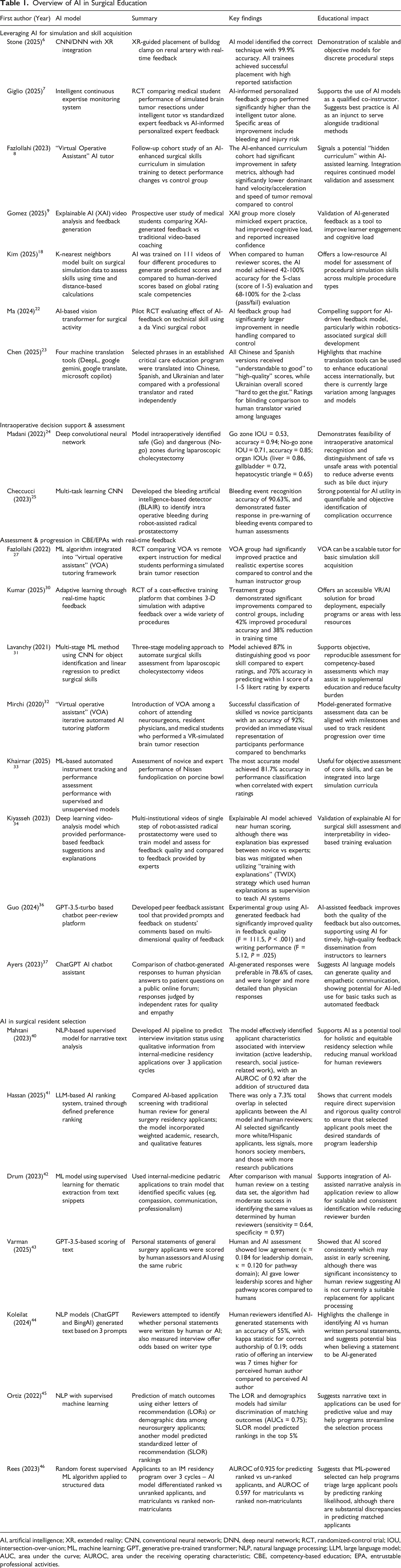

Overview of AI in Surgical Education

AI, artificial intelligence; XR, extended reality; CNN, convolutional neural network; DNN, deep neural network; RCT, randomized controlled trial; IOU, intersection-over-union; ML, machine learning; GPT, generative pre-trained transformer; NLP, natural language processing; LLM, large language model; AUC, area under the curve; AUROC, area under the receiver operating characteristic curve; CBE, competency-based education; EPA, entrustable professional activity.

Leveraging AI for Simulation and Skill Acquisition

The increasing complexity of medical education, rapid innovation in surgical techniques, variability in teaching styles, variable exposure to rare procedures, and increasing demands on teaching faculty create uneven training experiences and potential skill gaps—challenges that could directly impact patient safety. In response, surgical education is shifting toward a CBE framework, which emphasizes measurable milestones. Central to CBE are Entrustable Professional Activities (EPAs), defined as discrete, observable tasks that a trainee may perform independently once competence is demonstrated. 5 Successful implementation of this framework requires longitudinal performance tracking and frequent, individualized feedback—an area where AI can have transformative value.

Unlike traditional “one-size-fits-all” curricula, AI has the potential to dynamically assess each trainee’s performance and tailor learning accordingly. Simulation platforms powered by AI can evaluate progress toward specific EPAs and prescribe targeted practice in safe, low-stakes environments. As an example, Stone et al (2025) introduced an AI-driven extended reality (XR) system that guided trainees through renal artery clamp placement on a kidney phantom. Using deep learning applied to first-person video, the system distinguished between correct and incorrect clamp sites and provided real-time corrective feedback. In this proof-of-concept study, all 17 participants successfully completed the task, with algorithmic classification achieving nearly 100% accuracy and survey feedback showing strong acceptance. 6

At McGill University’s Neurosurgical Simulation and Artificial Intelligence Learning Centre, researchers tested a hybrid model in which 87 medical students practiced neurosurgical tasks under either AI-only instruction, human-only instruction, or human instruction informed by AI feedback. 7 The hybrid group significantly outperformed the others in both technical performance and skill transfer, demonstrating that AI is most effective when

By assessing unique data such as case logs and performance metrics, models may identify knowledge gaps and technical deficiencies and subsequently use this information to prescribe targeted learning activities such as additional dexterity training, anatomy reviews, and reading assignments. 9 This approach not only optimizes and modernizes the learning process but also ensures that available training hours are spent tailored to each trainee’s specific requirements.

Augmented and Virtual Reality Platforms

AI can integrate with augmented reality (AR) and virtual reality (VR) platforms to deliver immersive, adaptive training experiences. AR uses visuospatial technology to overlay computer-generated content onto the real world, while VR creates fully simulated environments. 10 Such systems have already shown measurable benefits. A randomized controlled trial on laparoscopic salpingectomy found that proficiency-based VR training significantly enhanced surgical skills in novice registrars. The VR-trained group achieved a median performance score of 33 points, equivalent to that of an intermediately experienced surgeon, and completed the operation in half the time, with a median of 12 min compared to the control group’s 24 min. 11

AR/VR platforms commonly employ real-time hand and instrument tracking using sensors and cameras, allowing the system to immediately report objective data such as instrument path accuracy or hand stability.12,13 Emerging modalities including eye-tracking and physiological data are becoming increasingly integrated within AR/VR to provide rich assessments of performance.14-16 A randomized-controlled crossover trial investigated the effect of augmented reality telestration on surgical performance and gaze behavior in minimally invasive surgery training. 17 The study compared an AR-based system (iSurgeon) with verbal-only instruction, measuring outcomes in 40 laparoscopically naive medical students. Trainees instructed with the AR system exhibited improved gaze behavior, as evidenced by a substantial reduction in gaze latency and an increase in collaborative gaze convergence with the instructor. This guidance translated to a lower number of errors and higher scores on both the global and task-specific Objective Structured Assessment of Technical Skills (OSATS) scales. The use of AR also reduced the trainees’ cognitive workload, as measured by the NASA Task Load Index and blink rate, suggesting a more efficient and less taxing learning process.

AI for Global Surgical Education through Simulation

AI has the potential to broaden access to surgical education by delivering scalable, consistent learning experiences that do not rely on faculty availability. In low-resource environments, AI-driven systems can provide structured practice and feedback, helping trainees achieve competency benchmarks with limited supervision. Additionally, low-cost platforms have been developed which can facilitate adoption of AI technology to advance global surgical education.

Bridging gaps between high- and low-resource countries requires tools that are designed for low cost, and several recent proof-of-concept and pilot programs have had some success building these types of models. For example, Kim et al (2025) developed an open-source model for laparoscopic simulation, specifically designed for use in low-resource areas. Notably, the authors demonstrated that AI trained on multiple different procedures (eg, appendectomy and salpingectomy) through the African Laparoscopic Learners – Safe Advancement for Ectopic pregnancy (ALL-SAFE) simulation platform could assess a different procedure (eg, enterectomy) within that same platform with moderate accuracy. The study found that AI trained in this manner could most accurately assess performance on laparoscopic appendectomies; this study provides some pilot data towards demonstrating how AI models can be implemented in cost-effective, scalable ways in low-resource settings. 18

Virtual mentoring through AI to personalize learning and assess a trainee’s performance and skill remotely, transcending geographic boundaries, presently remains a theoretical potential which has not been studied.19,20 Proof-of-concept works have evaluated AI-based feedback for skill training. As highlighted in the earlier sections, AI-augmented personalized expert feedback resulted in superior performance on a VR simulation platform at McGill University; this study provides a preliminary impetus for AI guidance in focusing instructor feedback and time towards achieving optimal trainee performance through deliberate practice. 7 The concept of deliberate and directed practice in acquisition of expert performance has been a fundamental time-tested pillar in the domain of surgical education. 21 In the era of AI, the potential for AI-driven automated skills assessment and feedback is enormous as it addresses the key bottleneck of time commitment from faculty in busy clinical practice settings. As an example, authors from the University of Southern California designed an AI-based automated feedback system that assessed novice performance on robotic suturing of a vesicoureteral anastomosis. They noted that the AI-feedback group improved more than the control group (without AI-feedback) in specific domains of robotic suturing, and that AI-feedback most benefitted underperformers and receptive learners, while maintaining concordance with human assessment. 22

AI applications are also being used to address language and cultural barriers inherent to global education. Advances in language processing models have enabled researchers to translate health care curricula into many languages, increasing resource availability among diverse populations. Chen et al (2025) evaluated AI models such as Google Gemini and Microsoft CoPilot for translating critical care education materials from English into Spanish, Chinese, and Ukrainian. 23 Blinded clinical assessments and automated metrics indicated generally satisfactory performance, although specific outcomes varied depending on AI model and target language.

Finally, automated evaluation systems can standardize performance metrics globally, mitigating differences in national standards and supporting universal benchmarks for competency. Such objectivity can enhance patient safety and equity in surgical care worldwide.

Intraoperative Decision Support & Assessment

Beyond simulation, AI has potential intraoperative training applications. For example, Madani et al (2022) developed a deep learning model (GoNoGoNet) to identify anatomy and “safe zones” during laparoscopic cholecystectomy, offering real-time AR guidance. 24 This study highlights AI’s significant value in surgical education by accurately identifying safe and dangerous dissection zones during surgery. This technology can serve as a real-time digital mentor for trainees, enhancing their anatomical understanding and helping prevent critical errors. The model’s strong performance, with a mean Dice (F1) score of 0.80 for No-Go zones, indicates its effectiveness in identifying high-risk areas. Such AI tools can be integrated into simulators and operating rooms to standardize training, objectively assess performance, and ultimately improve patient safety. In 2023, investigators at San Luigi Hospital created and trained a dedicated artificial neural network (NN) to recognize active bleeding during robot-assisted radical prostatectomy (RARP). 25 The software was designed to analyze the video feed from the endoscope in real time, scanning every 3 s to identify and predict the occurrence of bleeding. A confidence score, represented as a percentage, was used to signal the likelihood of an upcoming bleeding event. The software identified active bleeding with an accuracy of 90.6%. Researchers also noted that, on average, the model was able to predict bleeding events 3 s faster than the human surgeon.
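To make the segmentation metric above concrete, the following is a minimal sketch of how a Dice score, and the closely related intersection-over-union (IOU), can be computed for one predicted zone against an expert annotation. It is not the evaluation code used by Madani et al; the function name `dice_and_iou` and the flattened 0/1 mask representation are assumptions made for illustration. For binary masks the Dice coefficient is identical to the F1 score.

```python
def dice_and_iou(pred, truth):
    """Compare two binary masks given as equal-length sequences of 0/1 labels.

    Hypothetical helper for illustration: `pred` is the model's flattened
    mask for one zone (for example, No-Go) and `truth` is the annotation.
    Returns a (dice, iou) tuple.
    """
    if len(pred) != len(truth):
        raise ValueError("masks must have the same number of pixels")

    tp = sum(1 for p, t in zip(pred, truth) if p and t)        # overlap
    fp = sum(1 for p, t in zip(pred, truth) if p and not t)    # predicted only
    fn = sum(1 for p, t in zip(pred, truth) if t and not p)    # annotated only

    if tp + fp + fn == 0:
        # Both masks are empty; treating this as perfect agreement is an
        # assumed convention, not something the source specifies.
        return 1.0, 1.0

    dice = 2 * tp / (2 * tp + fp + fn)   # same value as the F1 score
    iou = tp / (tp + fp + fn)            # intersection over union
    return dice, iou
```

With a six-pixel prediction `[1, 1, 0, 0, 1, 0]` and annotation `[1, 0, 0, 0, 1, 1]`, two pixels overlap and two disagree, so the sketch returns a Dice score of about 0.67 and an IOU of 0.5. A reported mean Dice score would average such values over many frames.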

Such tools foreshadow a future in which AI enhances both surgical training and intraoperative safety. Virtual mentoring can also be useful beyond surgical training; AI-driven telemedicine platforms can allow surgeons in rural settings to connect with specialists and seek real-time guidance on complex anatomy encountered during cases. 19 Although continued development and validation of these tools on larger scales is necessary, implementing AI in such settings could produce performance improvements globally.

Finally, despite the need for significant ongoing investment and early stages of technological development, AI-integrated AR/VR platforms could procedurally generate novel anatomic variations and operative scenarios, reducing practice redundancy and strengthening intraoperative decision-making. Together, these advances highlight AI’s potential to adapt training to each learner, optimize preparation, and reinforce patient safety.

Assessment & Progression in CBE/EPAs with Real-Time Feedback

Perhaps the most transformative role of AI in surgical education lies in performance evaluation and feedback. Traditional assessment relies heavily on faculty observation. While expert evaluators bring invaluable clinical judgment, human assessment is inherently limited by subjectivity, inter-rater variability, and observation time. A 2023 scoping review found that even well-intentioned evaluators may demonstrate implicit bias when rating trainee performance. 26 AI offers a powerful complement by generating objective, continuous, and reproducible reports of technical skill.

This aligns well with competency-based frameworks that emphasize EPAs. AI-enabled simulation platforms can track resident progress toward specific EPAs and prescribe practice regimens to meet performance targets. For example, Fazlollahi et al (2022) performed a randomized-controlled trial among 70 medical students, and the group that received feedback from an AI audiovisual model had greater improvement than students receiving instruction from a human expert, when assessed on a VR neurosurgical model. 27 The model provided goal-oriented, metric-based suggestions, which translate well into today’s EPA-based guidelines. AI could also be leveraged to devise suitable training schedules to achieve proficiency by a target date.

AI additionally offers opportunities to standardize resident assessment beyond written exams such as the In-Training Exam (ITE) or American Board of Surgery Qualifying Exam, which purely measure trainee knowledge.28,29 Using motion-tracking and haptic sensors, AI can evaluate parameters such as smoothness of operative motion, instrument path, and error frequency. 30
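As an illustration of the kinds of motion parameters mentioned above, the sketch below computes two simple kinematic summaries from sampled instrument-tip positions: total path length and mean squared jerk (a common proxy for smoothness, where lower values indicate smoother motion). The choice of these two metrics, the function names, and the assumption of evenly sampled 3D coordinates are illustrative only; the cited work does not prescribe this particular computation.

```python
import math


def path_length(points):
    """Total distance travelled by the instrument tip.

    `points` is a sequence of (x, y, z) positions sampled over time
    (hypothetical tracker output).
    """
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))


def mean_squared_jerk(points, dt):
    """Mean squared jerk estimated with a third-order finite difference.

    `dt` is the assumed constant sampling interval in seconds.
    At least four samples are needed for one jerk estimate.
    """
    if len(points) < 4:
        raise ValueError("need at least four samples")
    if dt <= 0:
        raise ValueError("dt must be positive")

    total = 0.0
    count = 0
    for p0, p1, p2, p3 in zip(points, points[1:], points[2:], points[3:]):
        # Third finite difference per axis approximates the third derivative.
        jerk = [(d - 3 * c + 3 * b - a) / dt ** 3
                for a, b, c, d in zip(p0, p1, p2, p3)]
        total += sum(j * j for j in jerk)
        count += 1
    return total / count
```

A tip moving at constant velocity along a straight line yields zero jerk under this sketch, whereas abrupt accelerations raise the value. In practice such raw metrics would need filtering and validation against expert ratings before being used for assessment.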

Ma et al (2024) found that a group of surgical trainees who received AI-based skill assessment results from suturing tasks using the

Beyond individual assessment, AI enables benchmarking. Trainee performance can be compared against expert surgeon metrics, identifying gaps and prescribing structured training schedules to achieve proficiency within defined timelines. This is demonstrated by Khairnar et al (2025), who applied a machine learning model to videos of novice and expert surgeons performing laparoscopic suturing and found that their model correctly distinguished learner status with 81.7% accuracy. 33 Such stratification tools may allow earlier identification of underperforming learners, ensure equitable training outcomes across programs, and ultimately improve patient safety. By combining objective assessment with personalized feedback, AI has the potential to elevate surgical education into a more transparent, data-driven process.

Curriculum Integration of AI in Surgical Training

Despite AI’s promise, it is not a substitute for human mentorship, which remains the cornerstone of surgical education. Current evidence suggests the greatest gains occur when AI and faculty complement each other. For example, Kiyasseh et al (2023) compared AI-based feedback to expert feedback and found that while AI explanations are often in concordance with those of experts, they can be less reliable for certain learner groups. 34 Given this discrepancy, they proposed the “TWIX” (training with explanations) model, wherein AI systems are trained to mimic human explanations, rather than simply providing a numerical score. Effective integration therefore requires a balanced curriculum where AI augments expert teaching.

Several platforms already demonstrate how AI can be incorporated into surgical training. SIMPL (System for Improving and Measuring Procedural Learning) enables attendings to log resident operative performance in real time via a mobile app. 35 While convenient, its utility is limited by scorer variability and the time burden placed on faculty. AI could enhance such systems by aggregating faculty evaluations, adjusting for rater stringency, and generating concise anonymized reports highlighting resident strengths and weaknesses for clinical competency review. Although such an approach is in its infancy in surgical training, comparable approaches have been shown to be efficient in other educational settings. For example, Guo et al (2024) introduced an AI chatbot designed to evaluate undergraduate student comments on their peers’ essays. Results showed that the integration of AI feedback improved the quality of peer reviews. These findings highlight the benefit of AI-generated feedback within an educational setting. 36
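One simple way to picture "adjusting for rater stringency" is to standardize each faculty member's scores against that rater's own average and spread before aggregating by resident. The sketch below does this with per-rater z-scores. It is an assumed, deliberately simple stand-in rather than a method described for SIMPL or any cited system; the function name and the `(rater, resident, score)` input format are hypothetical, and more principled approaches such as mixed-effects models exist.

```python
from collections import defaultdict
from statistics import mean, pstdev


def adjust_for_stringency(ratings):
    """Average per-rater z-scores for each resident.

    `ratings` is a list of (rater, resident, score) tuples (hypothetical
    format). Each score is standardized against the same rater's mean and
    standard deviation, so a strict rater's 3 and a lenient rater's 4 can
    land on a comparable scale.
    """
    scores_by_rater = defaultdict(list)
    for rater, _resident, score in ratings:
        scores_by_rater[rater].append(score)

    rater_stats = {rater: (mean(scores), pstdev(scores))
                   for rater, scores in scores_by_rater.items()}

    z_by_resident = defaultdict(list)
    for rater, resident, score in ratings:
        mu, sigma = rater_stats[rater]
        # A rater who always gives the same score carries no ranking
        # information; mapping those ratings to 0 is an assumed convention.
        z = (score - mu) / sigma if sigma > 0 else 0.0
        z_by_resident[resident].append(z)

    return {resident: mean(zs) for resident, zs in z_by_resident.items()}
```

A design caveat: this approach assumes each rater sees a broadly comparable mix of residents, which may not hold when senior faculty supervise more advanced cases.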

AI tools are particularly well suited for repetitive technical tasks such as robotic suturing or needle passing, where objective performance metrics can be tracked longitudinally. For nuanced domains including intraoperative judgment, management of complications, or patient communication, human oversight remains the gold standard, although AI may have a place in improving these facets as well. In fact, Ayers et al (2023) found that evaluators preferred AI-generated responses over physician-generated responses to questions from an online medical forum. 37 A practical approach for curricular integration of AI may therefore involve using AI for repetitive skill reinforcement and progress tracking, while reserving faculty expertise for complex, context-dependent teaching, where AI is most likely to falter.

AI in Surgical Resident Selection

With residency applications increasing annually, programs face mounting difficulty in reviewing applicants thoroughly and equitably.38,39 The growing volume often limits holistic review, especially of narrative components such as personal statements, recommendation letters, and descriptions of meaningful activities. AI, long applied to analyzing complex data, is now being tested as a tool to process text-based information at scale, potentially easing this burden.

Mahtani et al (2023) used natural language processing to evaluate over 188 000 narratives from internal-medicine applicants, integrating thematic markers with structured data to predict interview offers with ∼92% accuracy. 40 In contrast, Hassan et al (2025) reported that an AI-based resident selection algorithm aligned with the program director selection in only 7% of cases, with AI-selected applicants being more likely to be White/Hispanic and to have higher standardized exam scores and research metrics. 41 Drum et al (2023) developed a model that identified traits such as compassion, teamwork, and work ethic from residency applications, highlighting the ability to quantify qualities often valued but difficult to measure. 42 These studies underscore both the promise and variability of AI, emphasizing that outcomes depend heavily on model design and oversight. While these examples reflect the early developments of incorporating AI into the residency selection process, care must be taken to avoid over-reliance, which may pave the path for savvy applicants to alter their content towards securing a favorable score on AI-only review. This is highlighted by Varman et al (2025), who found that while AI scores of prospective general surgery resident personal statements were consistent, they differed significantly from those of human reviewers. 43 As AI use becomes more widespread and accessible, there are growing concerns that students may use these tools to generate their personal statements, allowing them to draft and revise their work far more quickly than those who write their essays themselves. To this effect, Koleilat et al (2024) found that surgeons from a resident selection committee were only able to distinguish AI-generated from human-generated personal statements with an accuracy of 55%, reflecting the potential issues that may emerge with broad knowledge and adoption of AI tools. 44

The most compelling role for AI is not to replace human reviewers but to act as an intelligent filter, triaging large applicant pools and flagging candidates for deeper review. Ortiz et al (2022) used 2 natural language models: one that used applicant letters of recommendation and one that used demographic data like honor society membership, standardized examination scores, and number of research publications. 45 Both models were able to discriminate whether applicants did or did not match into a neurosurgical residency program, although the letter of recommendation model was superior (area under the curve = 0.80 vs 0.75). Likewise, Rees and Ryder (2023) used a machine learning algorithm to predict outcomes for 5067 internal-medicine residency applicants, and found high accuracy in distinguishing ranked from unranked applicants (area under the receiver operating characteristic curve [AUROC] = 0.925), but less success in distinguishing matched from unmatched candidates (AUROC = 0.597). 46
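For readers less familiar with the AUC and AUROC values quoted above, the metric can be read as the probability that a randomly chosen positive case (for example, a matched applicant) receives a higher model score than a randomly chosen negative case, so 0.5 corresponds to chance and 1.0 to perfect separation. The sketch below computes it directly from that pairwise definition. The function name and toy data are illustrative assumptions and are not drawn from the cited studies.

```python
def auroc(labels, scores):
    """Area under the ROC curve via pairwise comparison.

    `labels` holds 1 for positive cases and 0 for negative cases;
    `scores` holds the model's predicted score for each case.
    Ties between a positive and a negative score count as half a win.
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative case")

    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))
```

For instance, with labels `[1, 1, 0, 0]` and scores `[0.9, 0.4, 0.5, 0.1]`, three of the four positive-negative pairs are ordered correctly, giving 0.75. The pairwise loop is quadratic in the number of cases, which is acceptable for a teaching example but not for large cohorts.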

In summary, AI models can preserve faculty oversight, reduce time burden while ensuring rigorous standards, and manage repetitive, time-intensive tasks amid ever-growing application volumes. AI also has the potential to enhance holistic review by streamlining the extraction of key data points that programs prioritize, thereby reducing the need to rely on algorithms for interpreting context from letters, personal statements, or narrative experiences, which are areas where AI outputs may be discordant with human assessment. For example, one of the large graduate medical education selection platforms (Thalamus) has pioneered an applicant-screening platform (Cortex) that uses AI, NLP, and transcript normalization to extract relevant application datapoints, which are then aggregated into an interface optimized for the human reviewer. 47 The system claims to increase screening efficiency by 50% and allows programs to customize which datapoints matter by letting faculty define what parameters they consider predictive of success in their residency. Finally, as AI algorithms inevitably reflect the biases present in their training data, it is essential that a diverse group of stakeholders defines which datapoints are incorporated. Such deliberate design is critical to mitigate bias and ensure fair, equitable opportunities that align closely with human judgment.

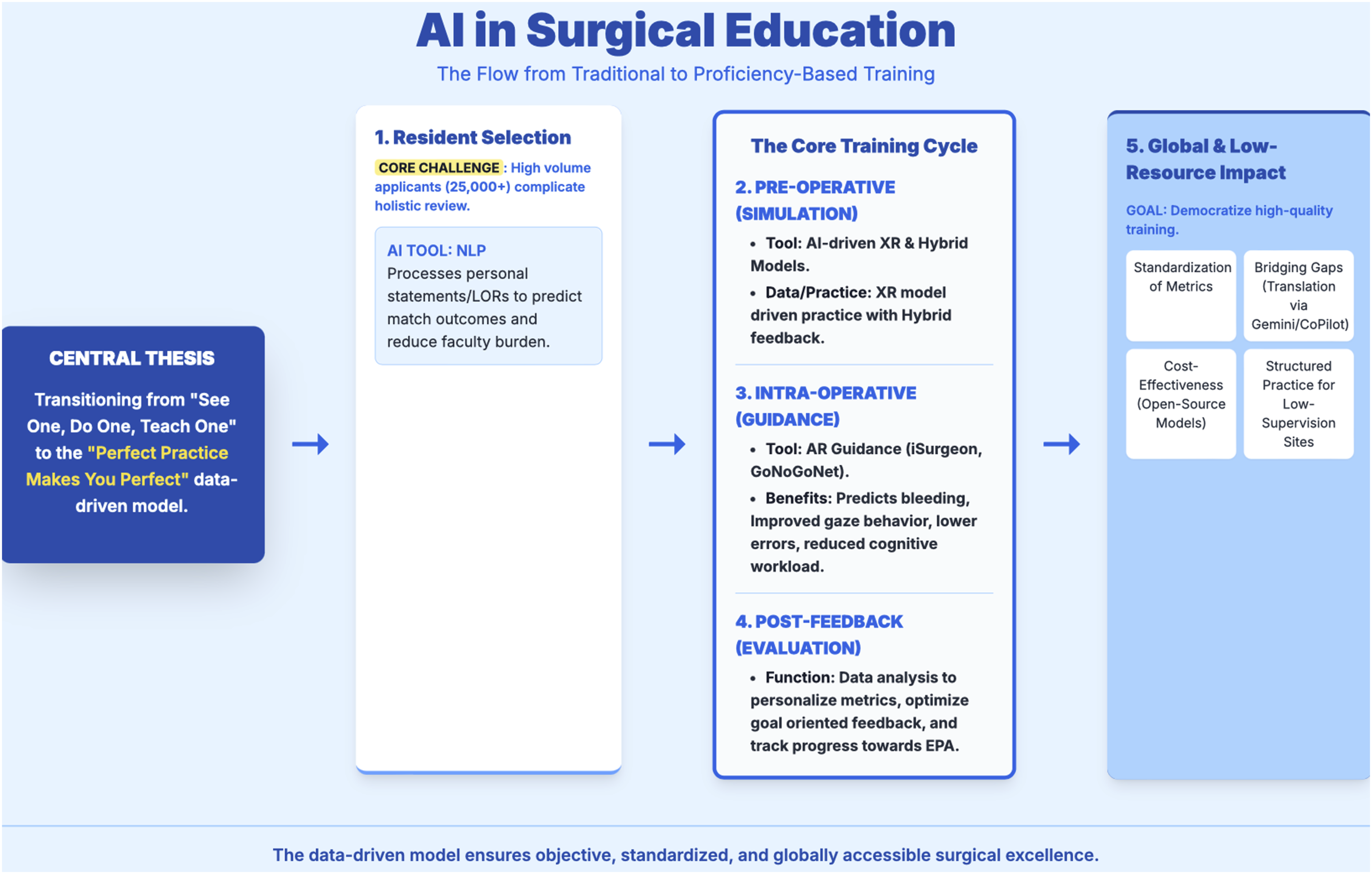

Figure 1. Concept Map Overview of Artificial Intelligence in Surgical Education. NLP: Natural Language Processing; LOR: Letter of Recommendation; AI: Artificial Intelligence; XR: Extended Reality; AR: Augmented Reality; EPA: Entrustable Professional Activity

The overview of AI in surgical education as elaborated in the preceding sections of this article is illustrated as a concept map in Figure 1. The corresponding literature discussed is tabulated in Table 1.

Ethical Considerations of AI in Surgical Education

The adoption of AI raises significant ethical concerns, foremost among them the ownership and use of resident performance data. Many AI systems rely on continuous data collection sent to vendors for model refinement. 48 While useful for optimization, these data sets may include sensitive trainee information, raising risks of privacy breaches, misuse, or even monetization. 49 Public or insurance disclosure of raw performance data could also unfairly influence patient perceptions of individual surgeons. 50 While programs may value such data to identify struggling residents, critics argue that blinded or aggregate use is preferable to avoid discrimination. Clear governance frameworks remain lacking.51,52 Informed consent is critical, and residents and patients should know what data is collected, its storage duration, and how it is shared. Opt-out provisions, institutional review board oversight, and explicit policies for human review and override are necessary safeguards. 53 AI-generated evaluations should be used as adjunctive, not definitive, measurements of performance. Finally, AI use for residency applicant review should avoid bias through input from a diverse group of individuals during model development as well as frequent post hoc analysis and adjustment. Time and effort need to be allocated to these tasks to mitigate risks of homogenization and lack of diverse personnel and perspective during recruitment.

Challenges and Future Directions

Despite early promise, several barriers must be addressed for AI to be integrated into surgical education. Faculty acceptance is pivotal. Resistance often stems from skepticism about validity and concerns that AI undermines traditional mentorship strategies.54,55 Transparent development, pilot programs demonstrating improved trainee performance, and alignment with established simulation guidelines may foster quicker adoption. Data security also remains a critical challenge. Breaches like the 2024 Change Healthcare hack, which exposed data for over 100 million patients, highlight the stakes. 56 Vendors managing trainee or patient data must comply with strict regulations, emphasizing anonymity, secure storage, and scheduled deletion. Future large multicenter trials with long-term follow-up are needed to validate efficacy and generalizability of AI in surgical training and provide the leap from a proof-of-concept to trusted educational infrastructure.

Conclusion

Artificial intelligence has emerged as a powerful adjunct in surgical education, offering personalized feedback, scalable training, and standardized assessment that can bridge institutional and global gaps. The greatest promise lies in hybrid models where AI augments, rather than replaces, traditional mentorship. To realize this potential, rigorous outcome-based research, long-term validation, and careful attention to ethical, technical, and implementation challenges are essential. With deliberate governance and thoughtful integration, AI can become a transformative pillar of surgical training.

Footnotes

Author Contributions

NR, PCP – Conceptualization, draft of preliminary manuscript, revision, approval of final version. DL, MXM, JWK – conceptualization, critical review of manuscript with revision for incorporating intellectual content, approval of final version. AR – senior author, conceptualization, critical review of manuscript with revision for incorporating intellectual content, approval of final version.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.