Abstract

Introduction

A steadily rising opioid pandemic has left the US suffering significant social, economic, and health crises. Machine learning (ML) algorithms have been used to predict prolonged postoperative opioid (PPO) use. This systematic review aims to compile all up-to-date studies addressing the use of such algorithms in clinical practice.

Methods

We searched PubMed/MEDLINE, EMBASE, CINAHL, and Web of Science using the keywords “machine learning,” “opioid,” and “prediction.” The results were limited to human studies with full-text availability in English. We included all peer-reviewed journal articles that addressed an ML model to predict PPO use by adult patients.

Results

Fifteen studies were included, with sample sizes ranging from 381 to 112898; most were orthopedic-surgery-related. Most authors defined prolonged opioid misuse as use extending beyond 90 days postoperatively. The number of input variables ranged from 9 to 23, and these variables were primarily preoperative. Most studies developed and tested at least two algorithms and then enhanced the best-performing model for use retrospectively on electronic medical records. The best-performing models were decision-tree-based boosting algorithms in 5 studies, with AUC ranging from .81 to .66 and Brier scores ranging from .073 to .13, followed by logistic regression classifiers in 5 studies. The topmost contributing variable was preoperative opioid use, followed by depression and antidepressant use, age, and use of instrumentation.

Conclusions

ML algorithms have demonstrated promising potential as a decision-supportive tool in predicting prolonged opioid use in post-surgical patients. Further validation studies would allow for their confident incorporation into daily clinical practice.

Introduction

Over the past two decades, the opioid pandemic has been steadily on the rise. 1 In 2008, US citizens, constituting less than 5% of the world’s population, consumed about 80% of the global opioid supply. 2 Despite declining consumption over the following decade, the US still leads the world in opioid use and its complications. 3 A CDC report in 2021 estimated that total deaths in the US from opioid overdoses alone increased from 56064 to 75673 over the preceding year. 4 Additionally, the devastating social implications of opioid misuse and the related significant morbidity and mortality5-7 cost the US economy around $78.5 billion in 2013,8,9 which increased to $1.02 trillion in 2017. 10 Although illegally manufactured opioid derivatives share the blame for this crisis, their impact is not well tracked or studied. 11 The primary culprit, however, seems to be the increase in pharmaceutically regulated opioid prescription (OP). 12 What started as a sincere effort to manage pain humanely and adequately has turned into a national emergency, and not at the hands of drug dealers.

Understanding pain and determining the best management protocol through opioids or other analgesia is a debatable topic that requires extensive research.13,14 Nevertheless, tackling this issue by analyzing current practices has recognized several factors fueling the increased OP. For example, Kalakoti et al found preoperative opioid dependence to be the most decisive risk factor for postoperative opioid dependence. 15 Orthopedic surgeons rank third among physicians and account for 7.7% of all OP in the US16,17 despite surgery ranking behind cancer pain, backache, and many other rheumatological and musculoskeletal conditions that are top causes linked to an office visit with an OP.18-20 Certainly, the simultaneous presence of such factors increases the risk of prolonged postoperative opioid (PPO) use, predisposing long-term dependence behavior and significant adverse consequences.21-24

Artificial Intelligence (AI) domains, particularly Machine Learning (ML), have been increasingly implemented in health care over the past decade with promising results.25,26 For example, several studies have utilized ML models to predict PPO.27-41 If such an estimation proves accurate, elimination of unindicated OP and interventions to adopt a safer and more conservative OP pattern could be implemented before exposure to a significant risk factor like surgery. Furthermore, such information will help categorize conditions and clinical settings and stratify patients into low and high-risk for opioid misuse. Indeed, doing so will rationalize the use of opioids and offer a more personalized patient-specific treatment approach. Therefore, we have conducted this systematic review by compiling the current evidence to date to answer a specific research question: can ML algorithms accurately predict PPO use?

Methods

Search Strategy

This systematic review was conducted in compliance with the Preferred Reporting Items for Systematic Reviews and Meta-Analysis (PRISMA). 42 We performed an all-time search on May 10th, 2022, utilizing four electronic medical databases: PubMed/MEDLINE, EMBASE, CINAHL, and Web of Science. The following keywords: “Machine Learning,” “Opioid,” and “Prediction” were used to generate the search string: (“Machine Learning” AND opioid AND prediction). In addition, boolean operators, truncation, and MeSH terms were applied where appropriate. The search results were limited to human studies in the English language with available full texts through our institutional access.

Eligibility Criteria

We included all (1) peer-reviewed journal articles that addressed (2) an ML algorithm model to (3) predict (4) long-term (5) opioid use by (6) adult patients in the (7) post-operative setting, (8) while reporting the results for both training and validation cohorts. Both qualitative and quantitative studies were included. We excluded unpublished data, data under review, viewpoints, dissertations, theses, conference proceedings, short surveys, letters to editors, and book chapters. In addition, we excluded studies that predicted pain occurrence instead of an OP and those that predicted short-term opioid use (less than 2 weeks postoperatively).

Study Selection and Collection process

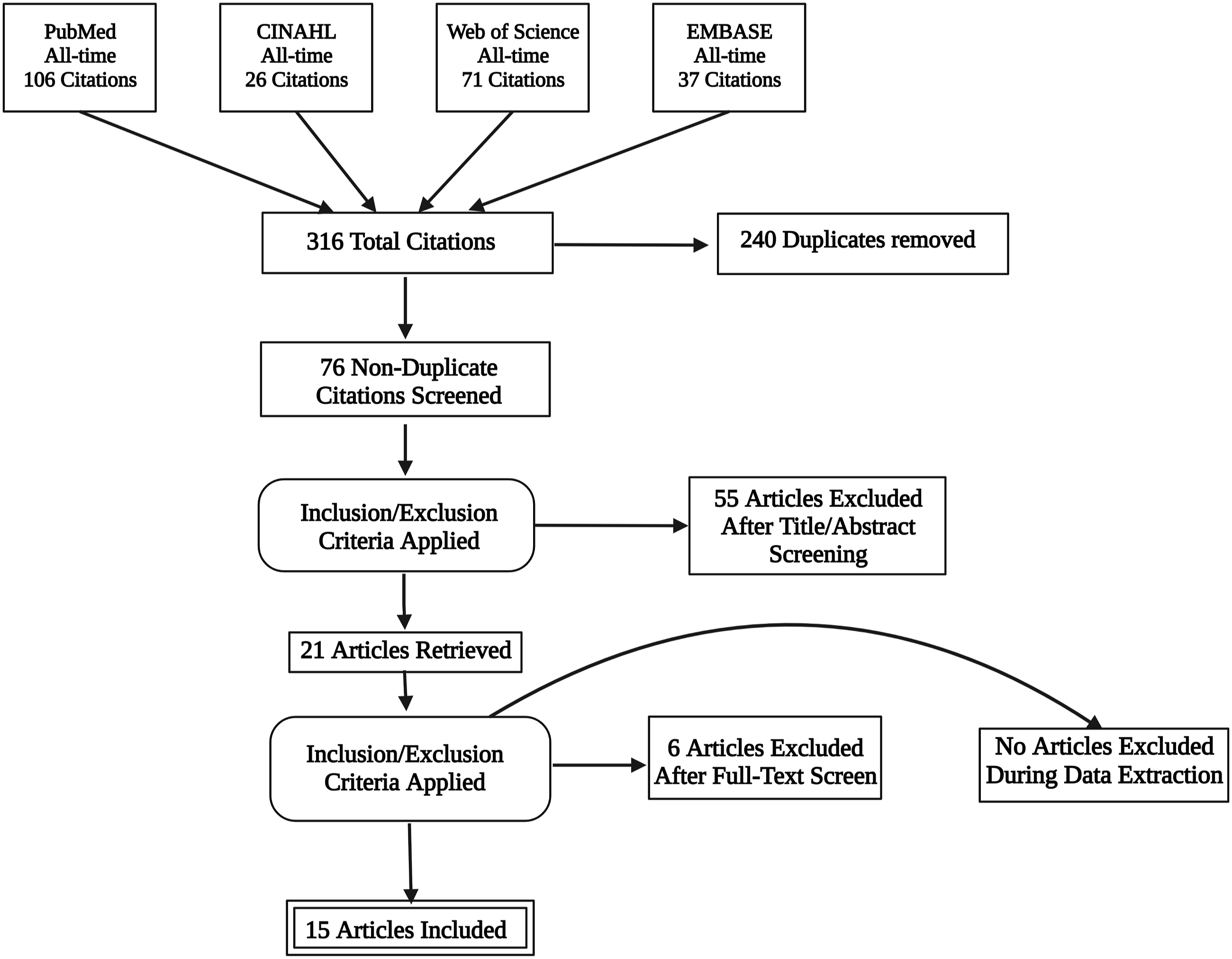

Two authors independently performed the search and imported the results to the EndNote v.20 reference manager, where duplicates were removed. Any conflicts or selection issues were solved by resorting to a third author. No studies were added from references of the included 15 papers. Figure 1 summarizes the search process. A PRISMA flowchart diagram summarizing the database search process.

Quality Assessment

We assessed the potential risk of bias using the MINORS index for non-randomized studies. 43 Inter-rater reliability was ensured by having three authors independently assess each study using the same standardized sheet. In cases of disagreement, the majority vote decided that particular scoring point (see the supplementary materials).

Results

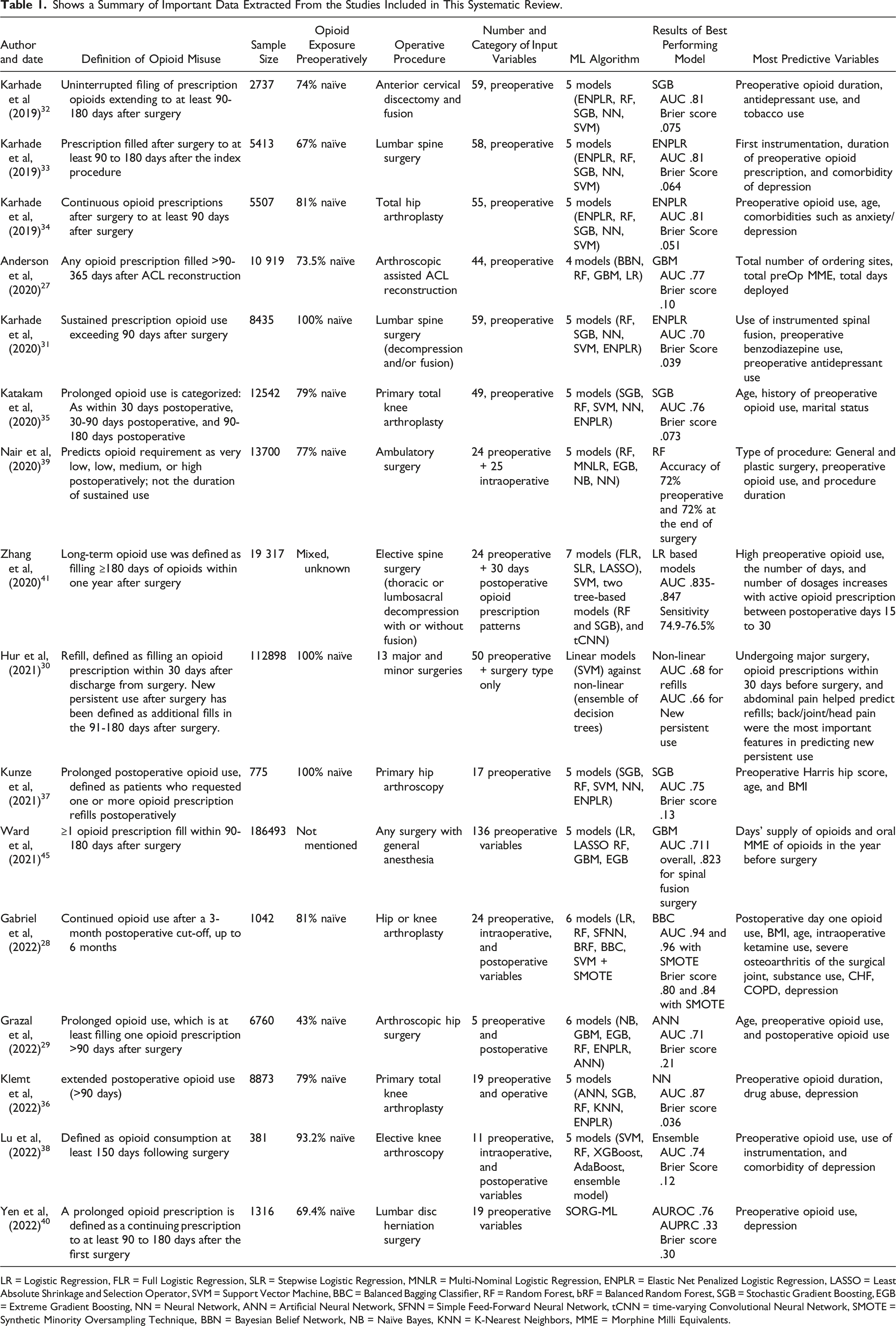

Summary of important data extracted from the studies included in this systematic review.

LR = Logistic Regression, FLR = Full Logistic Regression, SLR = Stepwise Logistic Regression, MNLR = Multinomial Logistic Regression, ENPLR = Elastic Net Penalized Logistic Regression, LASSO = Least Absolute Shrinkage and Selection Operator, SVM = Support Vector Machine, BBC = Balanced Bagging Classifier, RF = Random Forest, bRF = Balanced Random Forest, SGB = Stochastic Gradient Boosting, EGB = Extreme Gradient Boosting, NN = Neural Network, ANN = Artificial Neural Network, SFNN = Simple Feed-Forward Neural Network, tCNN = time-varying Convolutional Neural Network, SMOTE = Synthetic Minority Oversampling Technique, BBN = Bayesian Belief Network, NB = Naïve Bayes, KNN = K-Nearest Neighbors, MME = Morphine Milligram Equivalents.

Synthesis Of Evidence

Defining Opioid Misuse

The definition of long-term opioid misuse varied among the studies. Nair et al predicted the opioid requirement on a very low, low, medium, and high scale, not the duration of use. 39 All other authors used a prolonged period of postoperative use as a marker of misuse. For example, Kunze et al defined “any” patient-requested refill in the postoperative period as prolonged opioid use. 37 Katakam et al categorized postoperative OP into within 30 days, 30-90 days, and beyond 90 days. 35 The other 12 studies used the time point of 90 days postoperatively as a milestone for opioid misuse, whether as sustained use since the operation, refills, or a new OP extending beyond that point.27-34,36,38,41
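The 90-day definition used by most studies can be expressed as a simple rule. The following Python sketch is only an illustration: the function name `is_prolonged_use`, its parameters, and the example dates are hypothetical and are not taken from any of the included studies, which each applied their own operational definition.

```python
from datetime import date

def is_prolonged_use(surgery_date, fill_dates, cutoff_days=90):
    """Return True if any opioid prescription fill occurs more than
    `cutoff_days` after surgery (hypothetical operational definition)."""
    return any((d - surgery_date).days > cutoff_days for d in fill_dates)

# Hypothetical patient: the last fill falls 113 days after surgery.
surgery = date(2021, 1, 10)
fills = [date(2021, 1, 11), date(2021, 2, 20), date(2021, 5, 3)]
print(is_prolonged_use(surgery, fills))
```

Keeping the cutoff as a parameter makes it explicit that changing the definition changes which patients are labeled as cases, which is one reason results are hard to compare across studies.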

Sampling and Datasets

Sample sizes ranged from 381 38 to 112898 30 adult patients who underwent surgery. Fourteen studies looked at patients at either an institutional level 28,31-36,38-40 or a more inclusive database set, for example, M2 Military Health System Data Repository,27,29 Optum's Clinformatics DataMart database, 30 MarketScan Databases (Truven Health), 41 and National Insurance Claims. 44 Only Kunze et al looked at the patients of a single fellowship-trained surgeon. 37

Opioid Exposure vs Naivety

Opioid naïve patients are those who have never been exposed to opioids before the surgery. Karhade et al, 2020, 31 Hur et al, 30 and Kunze et al 37 included only opioid-naïve patients in their studies. Grazal et al included more opioid-exposed (57%) than opioid-naïve patients. 29 The remaining 11 authors had a mixture of both groups, with the opioid-naïve patients being at least 67% of the sample size.27,28,32-36,38-41

Type of Surgery

Most of the included studies investigated the risk of PPO use in patients undergoing orthopedic surgeries. First, Karhade et al,31-33 Zhang et al, 41 and Yen et al 40 looked at patients undergoing spine surgeries, whether cervical, 32 thoracic, 41 or lumbar.31,33,40,41 Second, Anderson et al, 27 Katakam et al, 35 Gabriel et al, 28 Klemt et al, 36 and Lu et al 38 studied knee-related procedures, namely, arthroscopic-assisted ACL reconstruction, 27 primary total knee arthroplasty,28,35,36 or elective knee arthroscopy. 38 The third group of studies looked at either hip arthroplasty28,34 or arthroscopy.29,37 Finally, two studies examined patients undergoing various types of surgeries, whether major or minor.30,39

Input Variables

The number of input variables used by the ML algorithm ranged from 9 27 to 23. 39 These variables were mainly preoperative in all studies; hence, the model prediction could be processed before surgery. Eight studies added intraoperative or post-operative variables to the predictive model,28-30,36,38-41 thereby processing the prediction either immediately at the end of surgery or in the early postoperative period, within 15 to 30 days of the procedure.

Training Sets

Two main training methods were observed across the 14 studies that developed their algorithm, excluding Yen et al, 45 who utilized a readily available model to validate its clinical utility on a different population.

Only Lu et al 46 trained and validated their model via .632 bootstrapping with 1000 resampled datasets. Bootstrapping simulates new data samples by drawing observations from the original sample with replacement, so that each resampled dataset can be as large as the original. It estimates the accuracy of a sample statistic of the author’s choice by calculating its estimate, confidence interval, and standard error. Its advantage lies in providing a more accurate standard error estimate, as it does not assume a particular distribution for the data. However, if a small data set is used, as with Lu et al, the representativeness of the generated samples, and therefore of the training, is questionable.
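The .632 bootstrap can be illustrated with a minimal Python sketch. This is not the code used by Lu et al; the helper names (`bootstrap_632`, `fit_majority`, `misclassification`), the trivial majority-class model, and the toy data are assumptions made purely for illustration. The estimate combines the optimistic error measured on the data used for fitting with the error on the observations left out of each resample, weighted 0.368 and 0.632, respectively.

```python
import random

def bootstrap_632(rows, fit, error, n_boot=1000, seed=0):
    """Return the .632 bootstrap estimate of prediction error.

    rows  : list of (features, label) pairs
    fit   : callable(rows) -> model, where model(features) -> label
    error : callable(model, rows) -> mean error in [0, 1]
    """
    rng = random.Random(seed)
    n = len(rows)
    apparent = error(fit(rows), rows)  # error on the data used for fitting
    oob_errors = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # draw n rows WITH replacement
        drawn = set(idx)
        oob = [rows[i] for i in range(n) if i not in drawn]  # rows never drawn
        if not oob:
            continue  # extremely unlikely unless n is tiny
        model = fit([rows[i] for i in idx])
        oob_errors.append(error(model, oob))
    oob_mean = sum(oob_errors) / len(oob_errors)
    return 0.368 * apparent + 0.632 * oob_mean

def fit_majority(rows):
    """Toy stand-in model: always predict the most common label."""
    labels = [y for _, y in rows]
    majority = max(set(labels), key=labels.count)
    return lambda features: majority

def misclassification(model, rows):
    return sum(model(x) != y for x, y in rows) / len(rows)

# Hypothetical toy data: 40 patients, 10 with the outcome.
toy = [((i,), 1 if i % 4 == 0 else 0) for i in range(40)]
print(bootstrap_632(toy, fit_majority, misclassification))
```

With only a few dozen observations, each resample repeats many of the same patients, which is the concern raised above about small data sets.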

The remaining thirteen authors adopted the more commonly used cross-validation approach, either k-fold or leave-one-out. Karhade et al,31-34 Katakam et al, 47 Kunze et al, 37 and Zhang et al 41 divided the total patient population into training (80%) and testing (20%, also known as hold-out) sets, referred to as a stratified 80:20 split. The subset of variables for final modeling was selected by recursive feature selection with a random forest algorithm. Next, 10-fold cross-validation of the training set, repeated three times, was used to develop the algorithms in each of their studies. However, those authors did not mention other specific criteria.
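A stratified 80:20 split followed by 10-fold cross-validation repeated three times can be sketched in a few lines of standard-library Python. The function names and the 10% outcome rate below are assumptions for illustration; the included studies used their own software and did not publish this code.

```python
import random
from collections import defaultdict

def stratified_split(labels, test_frac=0.2, seed=0):
    """Return (train_idx, test_idx) with a similar outcome rate in both sets."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for i, y in enumerate(labels):
        by_class[y].append(i)
    train, test = [], []
    for idx in by_class.values():
        rng.shuffle(idx)
        cut = round(len(idx) * test_frac)  # hold out test_frac of each class
        test.extend(idx[:cut])
        train.extend(idx[cut:])
    return sorted(train), sorted(test)

def repeated_kfold(indices, k=10, repeats=3, seed=0):
    """Yield (fit_idx, val_idx) pairs for k-fold CV repeated `repeats` times."""
    rng = random.Random(seed)
    for _ in range(repeats):
        shuffled = indices[:]
        rng.shuffle(shuffled)  # a fresh shuffle for every repeat
        folds = [shuffled[i::k] for i in range(k)]
        for j in range(k):
            fit = [i for f, fold in enumerate(folds) if f != j for i in fold]
            yield fit, folds[j]

# Hypothetical cohort: 1000 patients, 10% with prolonged opioid use.
labels = [1] * 100 + [0] * 900
train_idx, test_idx = stratified_split(labels)
splits = list(repeated_kfold(train_idx))
print(len(train_idx), len(test_idx), len(splits))
```

The hold-out indices are never passed to `repeated_kfold`, so the test set stays untouched until the final evaluation.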

On the other hand, Gabriel et al, 28 Klemt et al, 48 and Nair et al 39 specifically mentioned randomizing the master data set before splitting, a critical detail that enhances the validity and accuracy of the model from an engineering perspective. Grazal et al 29 split the data 80:20 but balanced by the outcome variable, keeping the percentage of prolonged post-operative opioid use the same across both sets. Hur et al 30 performed 5-fold instead of 10-fold cross-validation on the training set. They also chose the held-out set to consist mainly of those who underwent surgery most recently, so that the model was trained on older data and then tested on newer data.
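A temporal hold-out of the kind described for Hur et al can be sketched as follows. The record layout, the field name `surgery_date`, and the 20% fraction are hypothetical; the source does not state the exact proportion or procedure used.

```python
from datetime import date

def temporal_holdout(records, test_frac=0.2):
    """Split records so that the most recent surgeries form the hold-out set.

    `test_frac` is an assumed value, not one reported by the study.
    """
    ordered = sorted(records, key=lambda r: r["surgery_date"])
    cut = len(ordered) - round(len(ordered) * test_frac)
    return ordered[:cut], ordered[cut:]

# Hypothetical records, one surgery per month in 2020.
records = [{"id": i, "surgery_date": date(2020, m, 1)}
           for i, m in enumerate([5, 1, 9, 3, 12, 7, 2, 10, 4, 6])]
older, newer = temporal_holdout(records)
print([r["surgery_date"].month for r in newer])
```

Unlike a random split, this design checks whether a model trained on past patients still performs on later ones, at the cost of a hold-out set that may differ systematically from the training data.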

Anderson et al 27 shuffled and split the data into 80% training and 20% hold-out sets, balanced by the outcome variable at 90 days. Next, the training set was divided into training (75%) and validation (25%) subsets. Each model was built on the training data set, tuned with the validation set as applicable, and tested on the separate hold-out data set. Feature selection varied between models. The Boruta algorithm, based on a 100-tree random forest, was used to extract the relevant variables out of 10 possible features: it systematically eliminates irrelevant variables by comparing their calculated importance with the importance obtained from randomized copies of the variables.
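The core idea behind Boruta, comparing each real variable against shuffled "shadow" copies, can be shown with a simplified sketch. Two substitutions are made here and should be read as assumptions: the importance measure is the absolute Pearson correlation with the outcome rather than a random forest importance, and the keep/reject decision uses a plain hit-rate threshold (`keep_frac`) instead of Boruta's statistical test. The data are synthetic.

```python
import random

def pearson(x, y):
    """Pearson correlation; returns 0.0 if either input has zero variance."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return 0.0 if sxx == 0 or syy == 0 else sxy / (sxx * syy) ** 0.5

def shadow_feature_selection(features, y, n_iter=50, keep_frac=0.9, seed=0):
    """features: dict mapping name -> list of values. Returns the kept names."""
    rng = random.Random(seed)
    hits = {name: 0 for name in features}
    for _ in range(n_iter):
        # Shadow features: each real column shuffled, which breaks any link to y.
        shadow_scores = []
        for values in features.values():
            shadow = values[:]
            rng.shuffle(shadow)
            shadow_scores.append(abs(pearson(shadow, y)))
        best_shadow = max(shadow_scores)
        # A real feature scores a hit only if it beats the best shadow.
        for name, values in features.items():
            if abs(pearson(values, y)) > best_shadow:
                hits[name] += 1
    return {name for name, h in hits.items() if h / n_iter >= keep_frac}

# Synthetic example: one informative column and one unrelated column.
rng = random.Random(1)
y = [0, 1] * 100
features = {
    "signal": [v + rng.gauss(0, 0.5) for v in y],
    "noise": [0, 0, 1, 1] * 50,
}
print(shadow_feature_selection(features, y))
```

A variable is retained only if it consistently outperforms what chance alone produces, which is the sense in which irrelevant variables are "systematically eliminated".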

Finally, the performance of all trained models was assessed through discrimination (c-statistic, AUC), calibration (plot, slope, intercept), overall performance (Brier score), and decision curve analysis for clinical utility analysis. Model interpretability and explanation were provided at the global and local levels before the models were deployed to run on the testing sets.
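Decision curve analysis rests on the net benefit of acting on a model's predictions at a chosen risk threshold, compared with simple strategies such as treating every patient as high risk. The sketch below uses the standard net benefit formula; the function names and the ten-patient example are illustrative assumptions, not data from the included studies.

```python
def net_benefit(y_true, y_prob, threshold):
    """Net benefit of flagging patients whose predicted risk >= threshold."""
    n = len(y_true)
    tp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 1)
    fp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 0)
    # False positives are weighted by the odds of the threshold probability.
    return tp / n - fp / n * threshold / (1 - threshold)

def net_benefit_treat_all(y_true, threshold):
    """Net benefit of flagging every patient regardless of predicted risk."""
    prevalence = sum(y_true) / len(y_true)
    return prevalence - (1 - prevalence) * threshold / (1 - threshold)

# Hypothetical outcomes (1 = prolonged use) and predicted risks.
y_true = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
y_prob = [0.9, 0.6, 0.7, 0.2, 0.1, 0.1, 0.3, 0.2, 0.1, 0.05]
for t in (0.1, 0.3, 0.5):
    print(t, net_benefit(y_true, y_prob, t), net_benefit_treat_all(y_true, t))
```

A model is clinically useful at a given threshold only if its net benefit exceeds both the treat-all value and zero, the net benefit of flagging no one.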

Nature of Machine learning Models

Yen et al used only one publicly accessible model, SORG-MLA, to test its clinical applicability in predicting PPO use. 40 The rest of the studies developed several ML models, ranging from 2 30 to 7, 41 with the mode being 5 (n = 9). Supervised ML models included K-nearest neighbor, 36 logistic regression (LR)-based models such as elastic-net penalized LR,29,31-37 multinomial LR, 39 full LR, 41 stepwise LR, 41 Least Absolute Shrinkage and Selection Operator (LASSO), 41 and LR with an L2 penalty and with an L1 LASSO penalty. 28 Decision tree-based models included random forest classifier,27-39,41 stochastic gradient boosting,31-37,41 gradient boosting machine,27,29 extreme gradient boosting,29,39 XGBoost,30,38 and AdaBoost. 38 Other models included naïve Bayes, 29 balanced bagging classifier, 28 and support vector machine.28,30-35,37,38,41 Finally, reinforcement ML algorithms comprised neural network,31-35,37,39 artificial neural network,29,36 simple-feedforward neural network, 28 and time-varying convolutional neural network. 41

Assessment of Machine learning Models

The algorithms varied in nature, and their respective outputs were compared against each other. The best-performing algorithm type was supervised in thirteen studies27,28,30-35,37-41 and of a reinforcement nature in only two studies.29,36 Out of the thirteen supervised ML models, eight were decision-tree-based models/classifiers27,28,30,32,35,37-39 and five were logistic regression-based.31,33,34,40,41 Five of the eight decision-tree-based models were boosting algorithms.27,30,32,35,37

Models were assessed via two primary metrics: the area under the receiver operating characteristic curve (AUC) and the Brier score. An AUC closer to 1 indicates better discrimination. The opposite goes for the Brier score, in which a score of 0 indicates a perfect model, and a score of 1 represents the worst model prediction. Gabriel et al reported the highest AUC of .96 for a balanced-bagging classifier model enhanced with the Synthetic Minority Oversampling Technique (SMOTE). 28 Klemt et al reported the best Brier score of .036 in a neural network model. 36 The 5 best-performing boosting algorithms had an AUC ranging from .81 32 to .66 30 and a Brier score ranging from .073 35 to .13. 37 Only Nair et al assessed the performance using accuracy, where a random forest algorithm scored 72%, both before and at the end of surgery. 39
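Both metrics are straightforward to compute from predicted probabilities and observed outcomes. The sketch below uses the pairwise (Mann-Whitney) formulation of the AUC and the mean squared error definition of the Brier score; the function names and the five-patient example are illustrative assumptions.

```python
def auc(y_true, y_prob):
    """Probability that a random case is ranked above a random non-case."""
    pos = [p for y, p in zip(y_true, y_prob) if y == 1]
    neg = [p for y, p in zip(y_true, y_prob) if y == 0]
    # Ties between a case and a non-case count as half a win.
    wins = sum(1.0 if a > b else 0.5 if a == b else 0.0 for a in pos for b in neg)
    return wins / (len(pos) * len(neg))

def brier(y_true, y_prob):
    """Mean squared difference between predicted risk and observed outcome."""
    return sum((p - y) ** 2 for y, p in zip(y_true, y_prob)) / len(y_true)

# Hypothetical outcomes (1 = prolonged use) and predicted risks.
y_true = [1, 1, 0, 0, 0]
y_prob = [0.9, 0.4, 0.5, 0.2, 0.1]
print(auc(y_true, y_prob), brier(y_true, y_prob))

# Reference point: always predicting the observed prevalence.
prevalence = sum(y_true) / len(y_true)
print(brier(y_true, [prevalence] * len(y_true)))
```

The last line matters when the outcome is uncommon: a model that always predicts the prevalence p already achieves a Brier score of p(1 - p), so a low Brier score is best read against that reference rather than against 1.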

Most Predictive Variables

Several studies reported the top three contributing factors to their model prediction. These variables were the most likely to weigh in on the model's output and result in PPO misuse. Preoperative opioid use was the most reported predictive variable,27,29,30,32-36,39-41,44,49 followed by depression and antidepressant use.31-34,36,38,49 Other predictive variables included age, use of instrumentation, type and duration of surgery, OP pattern in the immediate postoperative period (day 1, first 2 weeks, and days 15-30), and tobacco and drug use.27-41,44,49

Discussion

An Opioid Crisis

One distinctive feature of the opioid epidemic is its emergence from professional clinical settings, with the fighter against substance abuse being the main culprit. 12 Indeed, pharmaceutical campaigns’ misinformation on undertreated pain under the umbrella of ethical humane considerations has pushed for massive regulations to control the practice and is a contributing factor.11,16 The complexity of the dilemma lies in its intertwinement with the delicate issue of appropriate pain management. Overtreatment of pain with potent analgesics ensures the absence of suffering at the expense of long-term consequences of opioid dependence, questioning their pressing indication.4-7 The root of this issue starts with an OP.

The Opioid Prescription and Pain Dilemma

Pain is a mysteriously ambiguous phenomenon with various components, mechanisms for activation, and signaling pathways. 50 Owing to the variability in its subjective experience and the absence of an objective detection method, self-report remains the current gold standard for assessing pain.51,52 This evaluation gives rise to the challenging issue of adequately balancing the potential for analgesic overprescription or abuse while respecting a highly variable subjective feeling and ensuring the absence of any suffering. Accordingly, choosing the best analgesic medication, opioid or non-opioid, is a controversial research subject with substantial evidence backing the different arguments put forward to guide its procedure-specific and multidisciplinary nature.53-57 Perhaps studies predicting the type of analgesic protocol to be prescribed postoperatively may help address the need for opioids to begin with. Timing the administration of analgesia is another vital issue that impacts the pain response and, consequently, the pain experience and the dose of analgesia required. The concept of pre-emptive analgesia, which starts before surgery, aims to minimize the pain response and hypersensitivity that would result from surgery, before any nerve stimulation or activation ensues.58-60 Despite the lack of conclusive evidence on this matter, several animal studies, clinical studies, and meta-analyses have demonstrated many potential benefits, such as decreased dosage and potency of analgesia required.60-65

All these aspects of pain management question the pressing need for high doses of potent analgesics such as opioids. Nonetheless, despite the many regulations governing and controlling OP, opioids remain among the most highly sought strong pain-relieving medications by surgical patients, second to chronic pain and cancer patients.18,66 Indeed, with prolonged use of opioids, more tolerance is built, and dependence behavior becomes more likely to develop. 67 As such, a new OP to a naïve patient is the critical moment to target. This preventive approach is the rationale behind the studies included in this review. They aim to detect high-risk individuals who are more likely to develop any form of opioid overuse after surgery. Treating physicians’ awareness of such a precarious clinical setting will help guide their decisions, along with those of the rest of the health care team, towards an individualized pain management protocol. In addition, early knowledge of such risks would be of great value during preoperative patient counseling, particularly regarding the choice and dose of analgesia as well as the timing of its administration.

Furthermore, identifying the exact factors that are majorly contributing to PPO use would improve future regulation of the issue. For instance, preoperative opioid use was found to be the most critical factor in predicting PPO use.15,68 Any opioid or opioid-derived products used the year before surgery seems to increase PPO use significantly, either as a continued sustained use, higher dosage needs, or increased OP refills. Similarly, O'Connell et al. reported that preoperative depression increased cumulative opioid use and decreased the likelihood of post-operative opioid cessation following lumbar fusion procedures. 69 Accurately identifying such predictive factors was done through the use of ML algorithms.

Machine Learning Models

The past decade has witnessed an increased reliance on AI and ML technologies in medicine and health care.

25

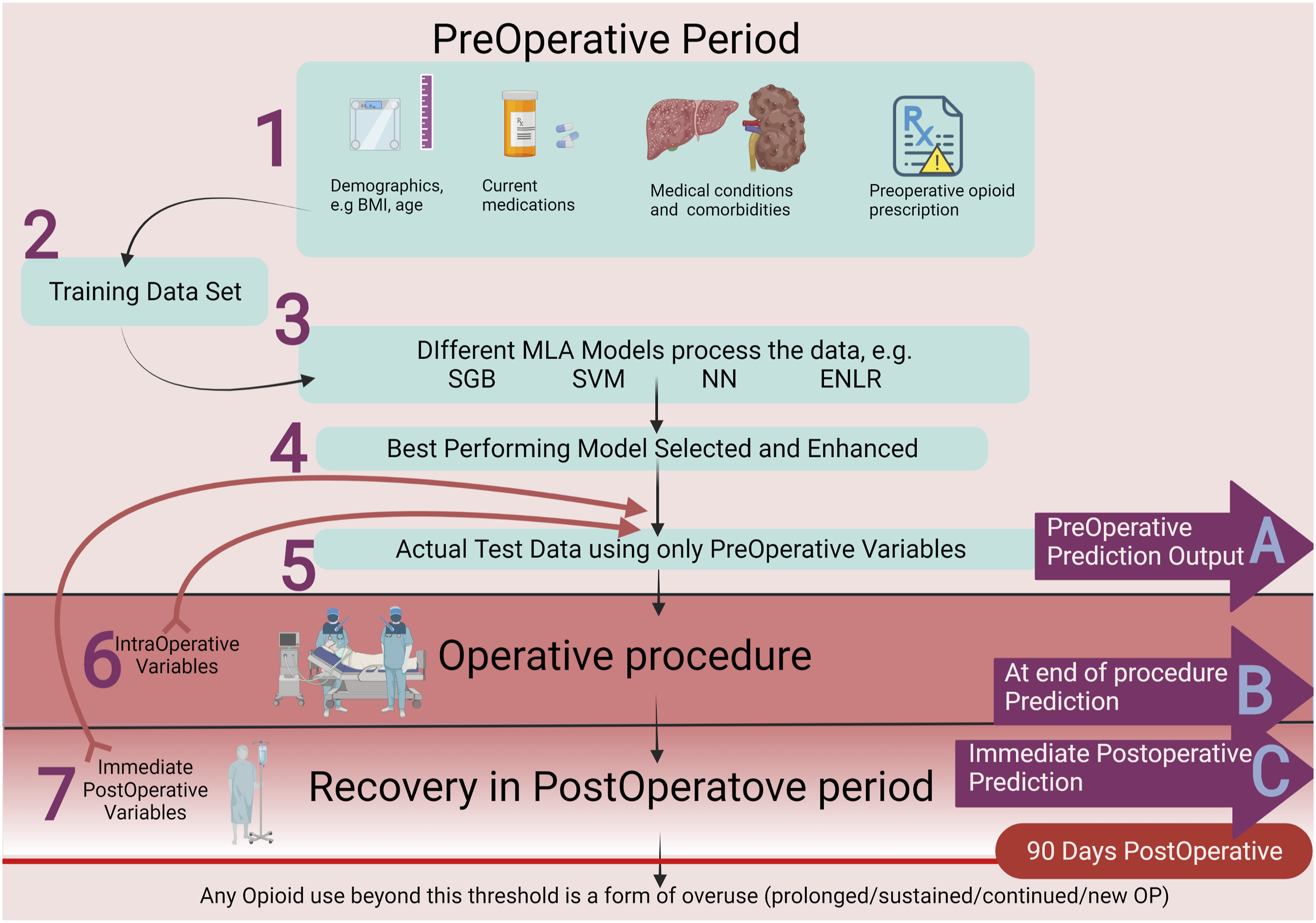

They are more than simple automation tools as they consider the dynamic nature of specific variables and “learn” from such real-time fluctuations and past mistakes to constantly improve future performance. Moreover, their unique coding gives them a superior edge when performing complex tasks, like continued monitoring with real-time feedback processing or analysis of multifaceted problems. For these reasons, various ML algorithms were developed to predict PPO use; see Figure 2. Shows the process of ML model prediction at three different timepoints: (a) Preoperative prediction.27,30-35,37,40 through the following steps

1

Collecting relative preoperative variables, such as patient demographics, medical conditions and comorbidities, and medication taken regularly.

2

Data is split into in two sets where most of it is used for training of the algorithms.

3

Different ML models are developed.

4

Performance of the different algorithms was compared and the best-performing models were then enhanced by considering the weight of top contributing factors to the predicted outcome.

5

Actual test data is entered into the final model to be processed. (b) Prediction immediately at the end of surgery, through steps 1-5 plus a sixth step of adding operative variables to the ML mode, for example, duration of surgery, bleeding amount, tissue manipulation, and intraoperative drug administration.36,39 before processing. (c) Postoperative prediction through steps 1-6 plus a seventh step of adding postoperative variables to the ML model, for example, dosage and frequency of opioids on the first day after surgery and the increase in the dosage over the immediate 2-4 weeks postoperatively28,29,38,41 before processing.
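The three prediction time points differ only in which variables the model is allowed to see. The sketch below makes that explicit; the variable names are hypothetical labels loosely based on the predictors reported in this review, and the helper names `features_at` and `select` are assumptions for illustration.

```python
# Hypothetical variable names, grouped by when they become available.
PREOPERATIVE = ["age", "preop_opioid_use", "depression", "antidepressant_use"]
OPERATIVE = ["surgery_duration_min", "instrumentation_used"]
POSTOPERATIVE = ["day1_opioid_dose", "opioid_dose_days_15_30"]

def features_at(timepoint):
    """Return the variables available at time point 'a', 'b', or 'c'."""
    if timepoint == "a":   # before surgery
        return PREOPERATIVE
    if timepoint == "b":   # immediately at the end of surgery
        return PREOPERATIVE + OPERATIVE
    if timepoint == "c":   # early postoperative period
        return PREOPERATIVE + OPERATIVE + POSTOPERATIVE
    raise ValueError(f"unknown time point: {timepoint!r}")

def select(patient, timepoint):
    """Keep only the fields a model at this time point is allowed to use."""
    return {name: patient[name] for name in features_at(timepoint)}

# Hypothetical patient record.
patient = {
    "age": 61, "preop_opioid_use": True, "depression": False,
    "antidepressant_use": False, "surgery_duration_min": 95,
    "instrumentation_used": True, "day1_opioid_dose": 40,
    "opioid_dose_days_15_30": 10,
}
print(select(patient, "a"))
```

Restricting the inputs in this way prevents a preoperative model from accidentally being trained on information that would not exist yet when the prediction is needed.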

Prediction at any of the three time points outlined provides an estimate of PPO use. However, an early prediction at point A27,30-35,37,40 will have the advantage of sharing these findings with involved health care personnel and patients during preoperative counseling. A postoperative forecast at point C28,29,38,41 may have acquired the most comprehensive data, encompassing possibly significant operative and postoperative factors, and may therefore yield a more accurate prediction. Unfortunately, the output would come at a late point, after the patient had already been exposed to opioids. Prediction at point B36,39 may be more balanced in acquiring the necessary variables while producing output before postoperative analgesia is initiated. Future research is needed to determine the optimal predictive time point and the complex interaction between variables, and to identify the most contributing ones, in order to further enhance the applicability of this technology in clinical practice. Likewise, researchers are encouraged to develop a standardized assessment metric, which is lacking owing to the novel nature of these models.

A few notable challenges are worth mentioning. Firstly, these predictions were based on OP obtained from EMRs. An OP does not necessarily imply precise adherence to the physician’s directions, and seeking opioids from a different source could not be ruled out. Furthermore, every study devised a different approach to deal with missing data and documentation bias, emphasizing the significance of complete and comprehensive medical documentation. Another issue is that pain in the postoperative setting is multicausal, and other non-opioid analgesic protocols, such as NSAIDs or nerve blocks, were not studied. Additionally, many authors excluded possible causes of chronic pain as confounding factors. For example, Katakam et al 35 and Kunze et al 37 did not include revision surgeries for TKA and hip arthroscopy, respectively. Karhade et al excluded patients with preoperative conditions that may cause chronic pain or are likely to complicate the surgical outcome and require extended opioid use, for example, trauma, tumor, and infections.31-34 Another significant issue is that a one-size-fits-all approach is not the way to implement such algorithms, nor should their outcomes be generalized without justification. The variability in ML model performances and most predictive factors highlights the individuality of an algorithm to its respective setting and population. For example, Ward et al investigated similar outcomes in adolescents undergoing surgery. 44 Undoubtedly, their pain experience and response to opioids would be very different from those of the elderly patients undergoing more aggressive orthopedic surgeries studied by Lu et al. 38 Many authors uploaded the final ML model to an online platform as an open-access source for public use. Sharing such vital data is highly encouraged during these early times of AI and ML research to accelerate the utilization of such novel tools.

Conclusion

Machine learning algorithms have demonstrated a promising potential to predict PPO use accurately. Their introduction into the clinical setting is an excellent step toward improved patient-centered care. With more validation studies, prospective cohorts, and application to larger patient populations in different settings, their accuracy and proper implementation in clinical practice would be more reliable. They can be refined into a convenient decision-supportive tool for surgeons, anesthetists, and other physicians involved in patient care. Efficient preoperative counseling and rationalized postoperative consumption of opioids will have an imminent impact on patients directly, physicians, health care institutions, and society.

Supplemental Material

Supplemental Material for Machine Learning Algorithms Predict Long-Term Postoperative Opioid Misuse: A Systematic Review by Omar S. Emam, Abdullah S. Eldaly, Francisco R. Avila, Ricardo A. Torres-Guzman, Karla C. Maita, John P. Garcia, Sally Anne Brown, Clifton R Haider, and Antonio J. Forte in The American Surgeon™.

Footnotes

Acknowledgments

Figures 1 and 2 were created using BioRender.com.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported in part by the Mayo Clinic Clinical Research Operations Group (CROG) and Mayo Clinic Center for Regenerative Medicine (CRM).

Supplemental Material

Supplemental material for this article is available online.

References
