Abstract

Critics of evidence-based crime prevention argue that extant research lacks insight into aspects of crime control and prevention that are critical to policymakers. Systematic reviews and meta-analyses of programs require the use of experimental and quasi-experimental designs, and some scholars argue that relaxing that methodological criterion would result in a larger pool of studies that could better inform policy and practice. We test that proposition using a comprehensive database of more than 160 evaluation studies of public-area video surveillance, measuring their policy relevance on four dimensions: causal mechanisms, moderators, implementation, and economic costs. We find that studies incorporating experimental and high-quality quasi-experimental designs scored significantly higher than studies using less rigorous designs on three of the four dimensions. This suggests that adherence to a high standard of methodological rigor does not compromise the practical value of video surveillance research. We then discuss the implications of this finding.

Researchers have developed a rich body of literature on evidence-based crime and justice policy in recent decades. Within the realm of crime prevention, scientific evidence generated from rigorous evaluation studies has helped build a catalog of effective (and ineffective) programs to inform policy and practice (Sherman et al. 2006; Weisburd et al. 2016; Welsh et al. 2024). Following an evidence-based approach can yield several societal benefits: more effective and fiscally responsible prevention of crime and disorder; reduced citizen concern that crime prevention strategies are wasteful; a lower chance that interventions will cause unintended harm to the community; and less political divisiveness among stakeholders and constituents, because rigorously evaluated interventions are neither conservative nor progressive but, rather, effective or ineffective as determined by the evaluation results (Bueermann 2020). In the lexicon of this volume’s theme, evidence-based crime prevention offers the opportunity to pursue social impact and social justice simultaneously.

For evidence-based crime prevention to realize its full potential, practitioners and policymakers must consult the scientific research (Mitchell and Huey 2019, introduction; Piza and Welsh 2022, ch. 1). Although this is not yet standard practice, there has been growing interest in communicating research findings more effectively to support the development of effective crime prevention practices (Telep 2024). Systematic reviews are perhaps the core strategy for communicating the “best available research” with which to guide policy and practice (Mitchell and Huey 2019, ch. 8). The Campbell Collaboration’s Crime and Justice Coordinating Group, for example, oversaw the completion of 52 systematic reviews between 2000 and 2020, its first 20 years of operation (Wilson et al. 2021).

While systematic reviews vastly increased the amount of scientific knowledge available to policymakers, some scholars criticize the evidence-based movement for not being more policy-relevant (Knutsson and Tompson 2017, ch. 4; Sparrow 2011; Tompson et al. 2021). A main component of this criticism is the primary focus on program outcomes. While outcomes are obviously important to consider, policymakers need information on other factors to successfully develop, deploy, and sustain an effective program (Johnson et al. 2015; Laycock 2012; Piza and Welsh 2022, ch. 5).

Critics argue that a primary reason for the lack of policy-relevant findings is an outsized emphasis on research design. Systematic reviews adhere to strict criteria in selecting studies, foremost among them the use of experimental and high-quality quasi-experimental research designs. As a consequence, only a small proportion of studies are considered in most systematic reviews. Some argue that the excluded studies, while lacking the internal validity to sufficiently measure program effect, may contain valuable information on other programmatic factors that are critical to successful program design and implementation (Eck 2006; Knutsson and Tompson 2017, ch. 4; Sparrow 2011).

Drawing on a comprehensive database of more than 160 evaluation studies of public-area video surveillance (Piza et al. 2019; Thomas et al. 2022; Welsh et al. 2020), the current study investigates the claim that relaxing standards of methodological rigor increases the policy relevance of crime prevention research. We apply the EMMIE framework (Johnson et al. 2015) to compare studies with experimental and high-quality quasi-experimental designs to studies with less rigorous designs on four policy-relevant dimensions: causal mechanisms, moderators, implementation, and economic costs.

Review of Relevant Literature

Situated within the wider context of evidence-based policymaking, this section brings together key research that is informing the ongoing debate on high-quality evaluation designs and the policy relevance of systematic reviews.

Evidence-based crime prevention

Evidence-based crime prevention is committed to ensuring that the best available research evidence is considered in any decision to implement a crime prevention program or policy. Or, to put it more broadly, “An evidence-based approach requires that the results of rigorous evaluation be rationally integrated into decisions about interventions by policymakers and practitioners alike” (Petrosino 2000, 635).

A key output of an evidence-based approach is knowledge dissemination to practitioners. Attempts to develop a research-to-practice framework reflect the core objectives of research translation, which Laub (2012) defines as the communication of science directly to practitioner audiences in order to institutionalize evidence-based practice. This exemplifies the knowledge-to-action model popularized by implementation science, in which a “knowledge creation” cycle is followed by an “action” cycle that applies research to practice (Graham et al. 2006; Milat and Li 2017). To facilitate this cycle, researchers need to create vehicles for knowledge dissemination to practitioners.

One vehicle for translating research into practice is the systematic review. Systematic reviews use rigorous methods for locating, appraising, and synthesizing evidence from prior evaluation studies. A key feature is the use of explicit eligibility criteria: researchers specify in detail why some studies are included and others are excluded. These criteria cover all the variables of interest, including sample, context, evaluation design, and outcome data. Many (if not most) recent systematic reviews incorporate meta-analyses, which quantitatively synthesize data from multiple studies to produce a pooled effect size, a more precise estimate of the overall impact than any single study can provide (Turanovic and Pratt 2021). Although meta-analyses can enrich the analytical depth of a systematic review, their inclusion is not mandatory.
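To make the idea of a pooled effect size concrete, the following Python sketch computes a fixed-effect (inverse-variance) pooled estimate. It is illustrative only: the function name and inputs are hypothetical, effect sizes are assumed to be on an additive scale (such as log odds ratios), and meta-analyses often use random-effects models rather than the fixed-effect model shown here.

```python
import math


def pooled_effect(effects, variances):
    """Fixed-effect (inverse-variance) pooled estimate and its standard error.

    effects   -- per-study effect sizes on an additive scale (e.g., log odds ratios)
    variances -- per-study sampling variances, each greater than zero
    """
    if not effects or len(effects) != len(variances):
        raise ValueError("need non-empty effect and variance lists of equal length")
    if any(v <= 0 for v in variances):
        raise ValueError("sampling variances must be positive")
    weights = [1.0 / v for v in variances]  # more precise studies get more weight
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total_weight
    # The pooled SE is never larger than the SE of any single contributing study
    standard_error = math.sqrt(1.0 / total_weight)
    return pooled, standard_error


# Hypothetical inputs for illustration only; not data from any review.
estimate, se = pooled_effect([0.0, 1.0], [1.0, 0.25])
print(f"pooled = {estimate:.2f}, SE = {se:.3f}")
```

The second hypothetical study has one quarter of the variance of the first, so it receives four times the weight and pulls the pooled estimate toward its own value.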

Important to the present discussion is internal validity: that is, how well an evaluation study unambiguously demonstrates that an intervention (e.g., video surveillance) influenced an outcome (e.g., crime). To ensure the highest degree of confidence in reported effects—whatever those effects may be—only the highest-quality evaluation designs, as measured by overall internal validity, are included in systematic reviews. According to Cook and Campbell (1979), the minimum interpretable design involves measures of crime before and after the intervention in treatment and comparable control conditions.

Critiques of what constitutes evidence

Critics have often chastised the evidence-based movement as being “too purist,” citing the large proportion of studies that are typically excluded from systematic reviews (Ratcliffe 2023). For example, in the first systematic review of problem-oriented policing, only 10 studies met the inclusion criteria after an initial identification of more than 5,000 reports (Weisburd et al. 2010). Similarly, a systematic review of counterterrorism strategies identified more than 20,000 reports but found that only seven studies met the inclusion criteria (Lum et al. 2006). Commenting on the counterterrorism review, Sparrow (2011, 14) lamented the exclusion of such a large proportion of studies, arguing that “one might pay more attention to other forms of evidence or ponder, at least for a moment, the insights and wisdom contained in the other 5,445 reports.” Other researchers have extended similar arguments to interventions aimed at “high-impact low-probability” events or at complex problems, which are unlikely to be evaluated robustly (Tompson et al. 2021). They argue that practical lessons can be drawn beyond effect sizes, for example from the mechanisms through which an intervention works and from how it was implemented. This argument suggests that a sole focus on internal validity may overlook important insights into how and why interventions succeed or fail in practice.

The potential shortcomings of an evaluation framework primarily concerned with internal validity and causal effects are illustrated through Pawson and Tilley’s (1997) realistic evaluation framework. Realistic evaluation argues that programs must be evaluated not only for their outcomes but also for how they work, for whom, and under what conditions. Their emphasis on understanding the interaction among context, mechanism, and outcome demonstrates the importance of considering the varied circumstances in which programs or interventions work. Realist models of evaluation argue that a blind commitment to internal validity may suppress the policy relevance of evaluation research because rigorous methodology requires local conditions unreflective of the operational reality (Eck 2006). As such, realistic evaluation embraces small interventions as a powerful tool for theory testing and aims to triangulate findings from multiple case studies to derive lessons for policy and practice (Pawson and Tilley 2001). In this vein, Pawson and Tilley (2001) argued for an evidence base that was more cumulative, i.e., that drew implications from a wider array of methodologies and intervention types. Interestingly, similar arguments have been offered as ways to advance contemporary crime prevention strategies, such as problem-oriented policing (Knutsson and Tompson 2017, ch. 3) and situational crime prevention (Farrell and Sidebottom 2019, ch. 4).

Others have argued that relaxing the methodological criterion of evidence-based crime prevention would generate a larger pool of studies that could better inform the practical application of programs and practices (Eck 2006; Laycock 2012). In making the case for lowering the evaluation design threshold, Hope (2005, 276) argues that key threats to internal validity (i.e., selection, history), which the experimental method attempts to control, are the “social, collective ‘mechanisms’ that they [the interventions] activate in order to bring about outcomes.” By focusing on maximizing internal validity at the expense of understanding how such mechanisms can bring about change, experimental and quasi-experimental evaluation studies—while effectively identifying “what works” to address specific problems—may do little to help generate new ideas and develop effective interventions (Clear 2010; Kennedy 2020).

It has also been suggested that systematic reviews—given the inclusion criterion of an experimental or quasi-experimental design—can sacrifice generalizability (Eck 2006; Knutsson and Tompson 2017, ch. 4), stripping away valuable knowledge and making it more difficult for practitioners to apply the findings to local initiatives. The source of this critique is often the randomized controlled trial (RCT), but few if any current systematic reviews restrict eligibility to RCTs. RCTs are difficult to carry out, so there are relatively few of them. Research has found that reviews that prioritize RCTs can greatly compromise conclusions and policy recommendations when compared to reviews that also include high-quality quasi-experimental designs (Stockard and Wood 2017).

Toward “second-generation” studies

In their comprehensive assessment of systematic reviews of crime prevention and correctional treatment programs, Weisburd et al. (2016, 2017) argue that it is time for the field to move from “first-generation” studies, with their limited focus on crime effects, to “second-generation” studies, which provide evidence that can give practitioners a higher level of guidance. According to Weisburd et al. (2016, 318), “Second-generation studies would provide specific guidance regarding what types of programs are effective, for which types of offenders, and which types of settings.” To provide more practical guidance to practitioners and policymakers, researchers would need to place implementation science, qualitative research, and cost-benefit analysis at the center of such a “second-generation” paradigm (Weisburd et al. 2017).

There is also a need for greater attention to program sustainability. For example, Pease and Roach (Knutsson and Tompson 2017, ch. 6) reported that not one of the UK projects previously nominated for a Herman Goldstein Award for Excellence in Problem-Oriented Policing still existed as of the date of their writing. Boston’s Focused Deterrence strategy provides a complementary example in the U.S., with the Boston Police Department discontinuing the strategy in 2004 (before reinstating it in 2007), despite the strategy being heralded as a national model for violence prevention (Braga et al. 2019). This suggests that, despite the documented crime prevention effect of problem-oriented policing (Hinkle et al. 2020) and focused deterrence (Braga et al. 2018), there is insufficient research evidence on how to effectively manage and sustain the programs at the local level (for a noteworthy exception, see Ward et al. 2025).

Recognizing the needs of crime prevention practitioners and policymakers and motivated by the realist approach to evaluation, Johnson et al. (2015) developed the EMMIE framework for assessing research evidence, especially the quality of systematic reviews. EMMIE speaks to ongoing debates on how to make systematic reviews and meta-analyses more useful for policymakers in criminal justice (Turanovic and Pratt 2021; Wong and Bouchard 2023) and social science more generally (Littell 2024; Maynard 2024). The EMMIE framework covers five important factors: effects, mechanisms, moderators, implementation, and economics. “Effects” refers to the impact of the intervention on the outcome of interest and is typically the primary focus of evaluation studies. “Mechanisms” refers to how an intervention caused the measured effect; in other words, mechanisms capture the agent of change. “Moderators” refers to the contextual variables that influence the measured effects of a given intervention. “Implementation” refers to a wide array of factors (e.g., staffing, resources, dosage of intervention) that enable an intervention to produce the measured effect. “Economics” refers to the financial costs of operating the program and the financial benefits associated with its effects. The EMMIE framework explicitly reflects the fact that outcome measures (i.e., effect) alone provide insufficient guidance to practitioners, “as success is often contingent on a number of other factors, and is not guaranteed from one setting to the next” (Farrell and Sidebottom 2019, 100).

Several studies have reanalyzed published systematic reviews using the EMMIE framework. In one study of more than 70 systematic reviews, Thornton et al. (2019) found that the reviews scored well on effects but lacked information on the other four dimensions, a pattern also reported in other studies. The gap was especially pronounced for economics: only six of the reviews examined financial costs and benefits. Commenting on the scope of these reviews, Tompson et al. (2021) argued that there is a distinct lack of crime prevention research evidence that currently speaks to the knowledge needs of practitioners.

Scope of the Current Study

This study focuses on a specific research question: Can studies incorporating research designs that do not meet the methodological criterion of systematic reviews (i.e., use of experimental or high-quality quasi-experimental designs) help improve the policy relevance of crime prevention research? For this to be the case, evaluation studies excluded from systematic reviews need to report more often on policy-relevant dimensions than their counterparts do. We explore this research question in the context of public-area video surveillance research (Piza et al. 2019). We code original evaluation studies using the EMMIE framework, comparing evidence ratings of the studies included in the video surveillance review to studies that were excluded on methodological grounds. Each study was scored on four of the EMMIE dimensions: causal mechanisms, moderators of effect, implementation issues, and economic costs. A Q Score (quality score), which was assigned for each dimension, reflected the quality of information reported (Thornton et al. 2019; Tompson et al. 2021). To our knowledge, this is the first application of EMMIE to individual primary evaluation studies. All prior applications used the framework as a rating of evidence contained within systematic reviews, despite the guidance EMMIE can provide in the design and interpretation of primary studies (Piza and Welsh 2022, ch. 5).

Methodology

The population of evaluation studies comes from a database of 165 studies initially compiled for the most recent systematic review and meta-analysis on the effects of video surveillance (also known as closed-circuit television, or CCTV) on crime in public places (Piza et al. 2019). We included studies in the systematic review if they met the following criteria: (a) CCTV was the main focus of the intervention; (b) there was an outcome measure of crime; (c) the research design involved, at minimum, before-and-after measures of crime in treatment and comparable control areas; and (d) both the treatment and control areas experienced at least 20 crimes during the preintervention period. We used a comprehensive set of search strategies to identify studies meeting the inclusion criteria: (a) searches of electronic bibliographic databases, (b) searches of reviews of the literature, (c) searches of bibliographies of included and excluded evaluations, (d) forward citation searches of included evaluations, and (e) contacts with leading researchers. A total of 80 studies met the inclusion criteria. Of the 85 studies that did not, all but six were excluded because they lacked a comparable control area.
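The four inclusion criteria can be read as a screening rule. The Python sketch below encodes them under stated assumptions: the `Evaluation` record and its field names are hypothetical, each criterion is reduced to a simple yes/no flag or crime count, and the hypothetical `require_main_focus` flag mimics relaxing criterion (a) so that CCTV can be one component of a package of interventions. Returning the list of failed criteria, rather than a single yes/no, makes it easy to tally why studies were excluded.

```python
from dataclasses import dataclass


@dataclass
class Evaluation:
    # Hypothetical fields for illustration; not the review's actual coding form.
    cctv_main_focus: bool          # criterion (a)
    has_crime_outcome: bool        # criterion (b)
    has_comparable_control: bool   # criterion (c): before/after data, treatment and control
    pre_crimes_treatment: int      # criterion (d): preintervention crimes, treatment area
    pre_crimes_control: int        # criterion (d): preintervention crimes, control area


def exclusion_reasons(study, min_pre_crimes=20, require_main_focus=True):
    """List every criterion the study fails; an empty list means eligible."""
    reasons = []
    if require_main_focus and not study.cctv_main_focus:
        reasons.append("CCTV not the main focus")
    if not study.has_crime_outcome:
        reasons.append("no crime outcome measure")
    if not study.has_comparable_control:
        reasons.append("no comparable control area")
    elif min(study.pre_crimes_treatment, study.pre_crimes_control) < min_pre_crimes:
        # (d) is only checked when a control area exists in which to count crimes
        reasons.append(f"fewer than {min_pre_crimes} preintervention crimes")
    return reasons


def is_eligible(study, min_pre_crimes=20, require_main_focus=True):
    return not exclusion_reasons(study, min_pre_crimes, require_main_focus)


# Hypothetical study in which CCTV is one part of a package of interventions.
package = Evaluation(False, True, True, 45, 60)
print(is_eligible(package), is_eligible(package, require_main_focus=False))
```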

For the purposes of the current study, we followed the approach used by Thomas et al. (2022) and Welsh et al. (2020) and broadened the inclusion criteria to allow for consideration of evaluation studies in which CCTV was part of a package of interventions but not the main intervention. This change did not affect the original decision to exclude studies that did not use a comparable control group design. Of the 165 studies, 14 were removed because they were written in languages other than English. Our final sample was 151 studies (73 met the inclusion criteria and 78 did not).

We assigned Q Scores to four evidence dimensions for each evaluation study: causal mechanisms, moderators, implementation, and economics. The coding process began with an initial training session involving all authors. We discussed each dimension’s parameters and the level of detail needed when assigning Q Scores. Following the approach of prior applications of EMMIE (Thornton et al. 2019), two members of the research team double-coded all studies to ensure accuracy and consistency. These two researchers first coded studies on their own before meeting to compare their respective scores and discuss any discrepancies. All discrepancies that remained following these meetings were resolved through a discussion among the full research team.
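The double-coding step amounts to comparing two independent score sheets and listing every disagreement for discussion. A minimal Python sketch, assuming each coder's scores are stored in a hypothetical dictionary that maps a study identifier to its four dimension scores (the names here are illustrative, not the team's actual tooling):

```python
DIMENSIONS = ("mechanisms", "moderators", "implementation", "economics")


def find_discrepancies(coder_a, coder_b):
    """Return (study_id, dimension, score_a, score_b) for every disagreement.

    coder_a, coder_b -- hypothetical dicts mapping study_id -> {dimension: Q Score}.
    Only studies scored by both coders are compared.
    """
    disagreements = []
    for study_id in sorted(coder_a.keys() & coder_b.keys()):
        for dim in DIMENSIONS:
            score_a, score_b = coder_a[study_id][dim], coder_b[study_id][dim]
            if score_a != score_b:
                disagreements.append((study_id, dim, score_a, score_b))
    return disagreements
```

Each returned tuple is an item for the coders' reconciliation meeting; anything still unresolved afterward would go to the full team.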

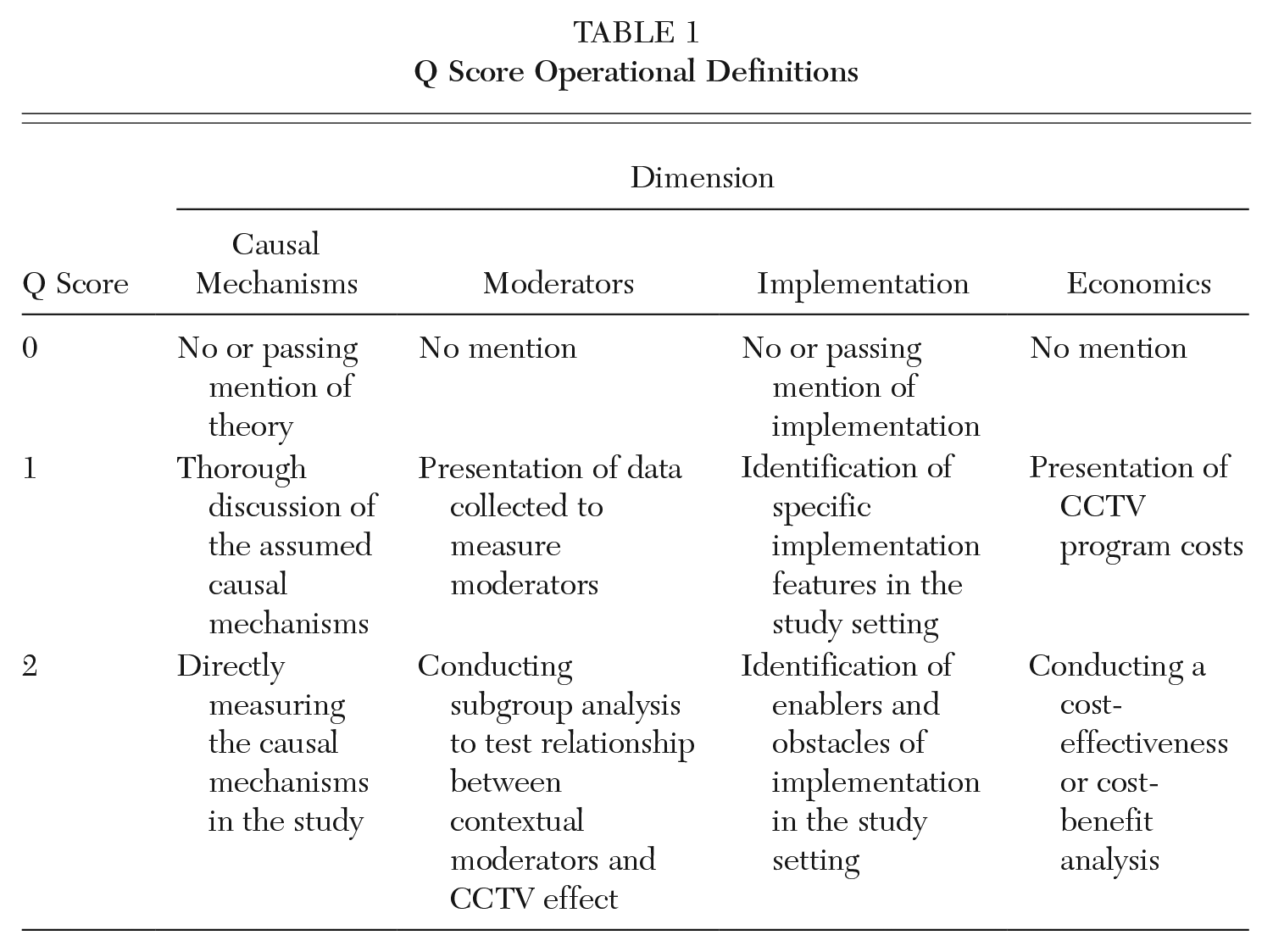

Table 1 describes in detail the operational definitions of the scoring method. We applied a three-point scale ranging from zero to two, with the lowest Q Score (zero) indicating little or no relevant information and the highest Q Score (two) indicating detailed, comprehensive information. These Q Scores quantified the quality of the information each study reported on each of the four dimensions, allowing for a systematic comparison of the evidence across studies.

Table 1. Q Score Operational Definitions

It should be noted that our scale is narrower than the standard five-point (zero to four) EMMIE scale. Using the full EMMIE scale resulted in substantial disagreement between coders in the current study, with interrater reliability statistics consistently falling below acceptable standards for every dimension except economics. Such challenges have been observed previously with the EMMIE framework. Thornton et al. (2019) noted similar disagreement between coders applying the EMMIE coding instrument to systematic reviews. They found that the “complex nature” of the mechanism, moderator, and implementation (MMI) dimensions, and especially the economics (E) dimension, presented difficulties during the coding process.
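The text does not name the interrater reliability statistic that was used. As an assumption for illustration, the following Python sketch computes unweighted Cohen's kappa, a common choice for two coders; on an ordinal scale, a weighted kappa that gives partial credit for near-misses would be a reasonable alternative. No acceptability threshold is assumed.

```python
import math
from collections import Counter


def cohens_kappa(scores_a, scores_b):
    """Unweighted Cohen's kappa for two coders scoring the same items.

    Returns NaN when chance agreement equals 1 (both coders used a single,
    identical category throughout), where kappa is undefined.
    """
    if len(scores_a) != len(scores_b) or not scores_a:
        raise ValueError("need two non-empty score lists of equal length")
    n = len(scores_a)
    observed = sum(a == b for a, b in zip(scores_a, scores_b)) / n
    freq_a, freq_b = Counter(scores_a), Counter(scores_b)
    # Chance agreement: sum over categories of the product of marginal proportions
    categories = set(freq_a) | set(freq_b)
    expected = sum(freq_a[c] * freq_b[c] for c in categories) / n**2
    if expected == 1:
        return math.nan
    return (observed - expected) / (1 - expected)
```

Kappa discounts the agreement two coders would reach by chance alone, which matters on a short scale where chance agreement is already substantial.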

Our experience suggests that interrater reliability issues may be exacerbated when the EMMIE framework is applied to individual studies rather than systematic reviews. Importantly, our compressed scale means that our analysis measures broader categories of each dimension: for example, whether the study conducted a cost-benefit analysis (Q Score = 2), presented only the costs of the program (Q Score = 1), or made no mention of costs (Q Score = 0). This contrasts with the nuanced levels of quality reflected in the original EMMIE coding instrument: for example, whether the study measured the marginal or total opportunity costs/benefits by bearer/recipient (Q Score = 4), the marginal or total opportunity costs/benefits (Q Score = 3), the direct or explicit and indirect and implicit costs (Q Score = 2), only the direct or explicit costs (Q Score = 1), or made no mention of costs (Q Score = 0) (see Piza and Welsh 2022, 78). As a result, the current study may be underestimating differences across evaluation studies. However, Thornton et al. (2019) highlight how frameworks that rely on categorization and quantitative scoring inherently lack flexibility, often forcing coders to make categorical decisions when the evidence does not align neatly with predefined categories. Thus, while our compressed scale allows for a more practical analysis, it also underscores the inherent challenge of balancing nuance with simplicity.

It is also worth highlighting that our study’s compressed scale does not include the effects dimension. Our decision to exclude effects aligns with our goal of focusing on other policy-relevant factors (i.e., the MMIE dimensions) and acknowledges the fact that effect sizes would need to incorporate different formulas for the included (which have a comparable control condition) and excluded (which lack a comparable control condition) studies. This would present complications for comparing the effect dimension across groups.

Differences in Q Scores were compared between the included and excluded categories through the nonparametric Wilcoxon rank-sum test (Wilcoxon 1945), also commonly referred to as the Mann-Whitney test (Mann and Whitney 1947). Analyses were conducted via the ranksum command in Stata 17. By using this approach, we were able to assess whether the differences in Q Scores between the included and excluded studies were statistically significant, thus offering insights into the quality differences within the dataset.
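The rank-sum statistic itself is straightforward to compute. The Python sketch below, which is not the authors' Stata code, pools both groups, assigns average ranks to ties, and compares the first group's rank sum with its expectation under the null hypothesis, using the standard normal approximation with a tie-corrected variance. Because Q Scores take only three values, ties are pervasive and the correction matters. Results should be close to, but are not guaranteed to match, Stata's `ranksum` output.

```python
import math
from collections import Counter


def midranks(values):
    """Return 1-based ranks, giving tied values the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across the run of values tied with values[order[i]]
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    return ranks


def rank_sum_test(group1, group2):
    """Wilcoxon rank-sum z and two-sided p (normal approximation, tie-corrected)."""
    n1, n2 = len(group1), len(group2)
    if n1 == 0 or n2 == 0:
        raise ValueError("both groups need at least one observation")
    pooled = list(group1) + list(group2)
    n = n1 + n2
    ranks = midranks(pooled)
    w = sum(ranks[:n1])               # observed rank sum for group1
    expected_w = n1 * (n + 1) / 2     # rank sum expected under the null hypothesis
    # Ties shrink the variance: subtract the sum of (t^3 - t) over tie groups
    tie_term = sum(t**3 - t for t in Counter(pooled).values())
    variance = n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1)))
    if variance <= 0:
        raise ValueError("all observations are tied; the test is undefined")
    z = (w - expected_w) / math.sqrt(variance)
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided p from the standard normal
    return z, p


# Hypothetical Q Scores for illustration only; not the study's data.
z, p = rank_sum_test([0, 0, 1], [1, 2, 2])
print(f"z = {z:.3f}, p = {p:.3f}")
```

A negative z here means the first group's scores sit lower in the pooled ranking than expected under the null hypothesis.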

Results

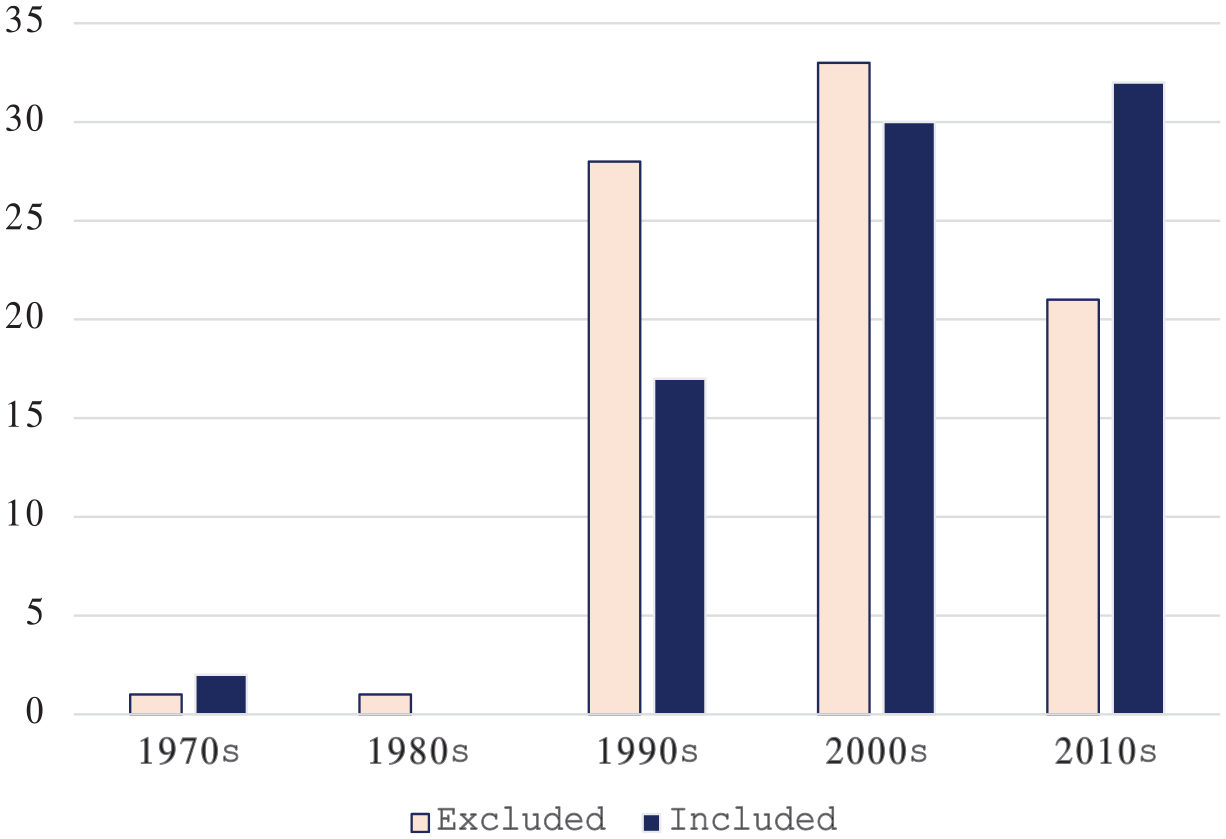

Figure 1 shows the number of included and excluded studies by decade, from 1975 through 2017 (the final year covered in Piza et al. 2019). Evaluation research on video surveillance interventions to prevent crime grew dramatically in the 1990s. The same decade shows a marked gap in evaluation quality, with far more excluded studies (n = 28) than included studies (n = 17). By the 2010s, the quality of evaluation research appears to have improved markedly, with far more included studies (n = 32) than excluded studies (n = 21).

Figure 1. Evaluation Studies Excluded from and Included in the CCTV Systematic Review, by Decade

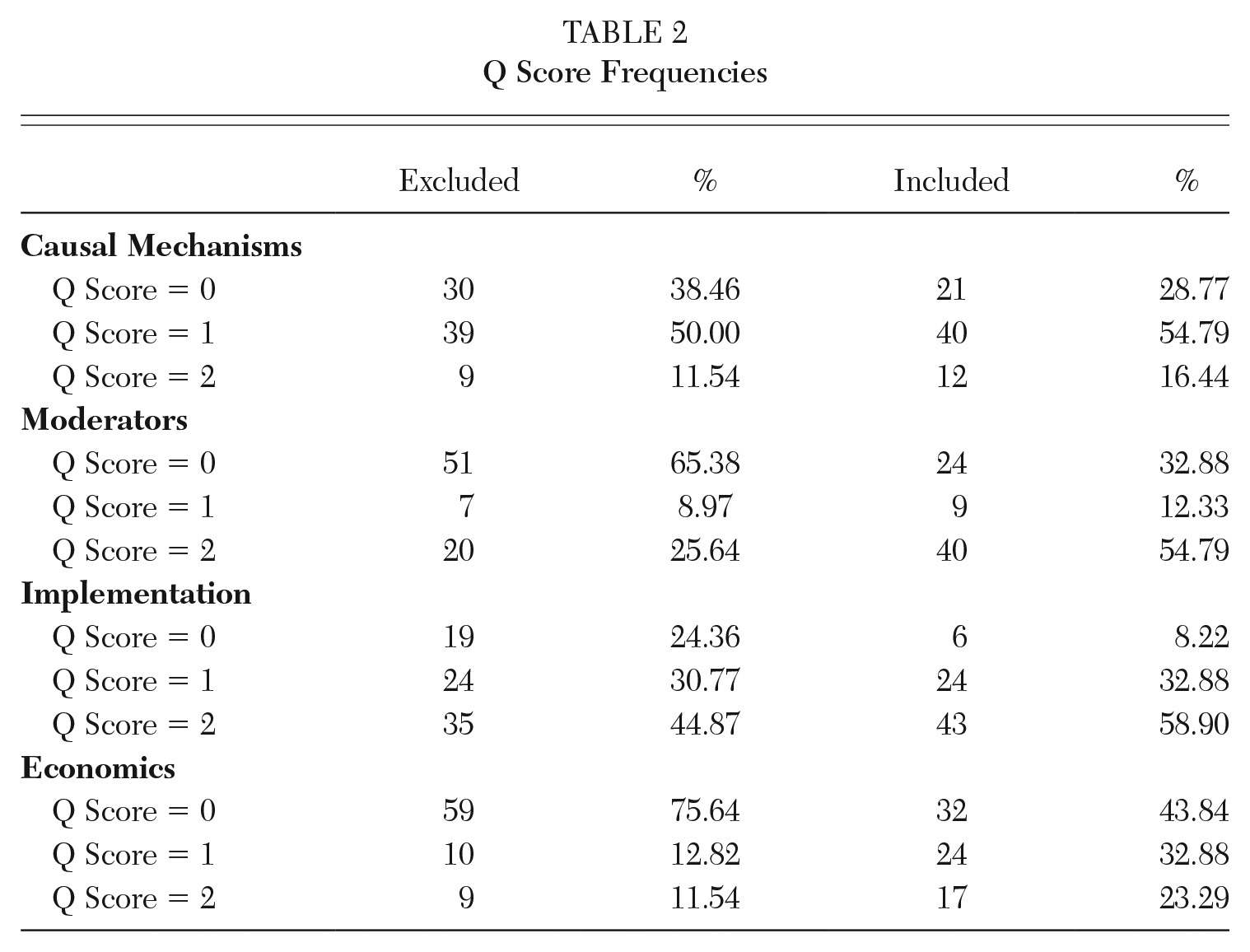

Table 2 presents the raw frequencies of each dimension’s Q Score. For causal mechanisms, 38 percent of excluded studies and 29 percent of included studies mentioned theory in passing or had no mention of theory altogether, thus receiving a Q Score of zero. About half of the included and excluded studies were assigned a Q Score of one. A Q Score of two was rather uncommon for both excluded and included studies (11 percent and 16 percent, respectively).

Table 2. Q Score Frequencies

For moderators, nearly two-thirds (65 percent) of excluded studies received a Q Score of zero, compared with 33 percent of included studies. At the other end of the scale, 26 percent of excluded studies and 55 percent of included studies received a Q Score of two. Relatively few excluded (9 percent) and included (12 percent) studies received a Q Score of one, a smaller share than on any of the other three EMMIE dimensions.

For implementation, 24 percent of excluded studies received a Q Score of zero, compared with only 8 percent of included studies. Included studies also more often provided detailed implementation information, with 59 percent scoring two compared with 45 percent of excluded studies. Similar percentages of excluded and included studies received a Q Score of one.

For economics, 76 percent of excluded studies and 44 percent of included studies received a Q Score of zero. About 13 percent of excluded studies and 33 percent of included studies received a Q Score of one, and about 12 percent of excluded studies and 23 percent of included studies received the highest Q Score of two.

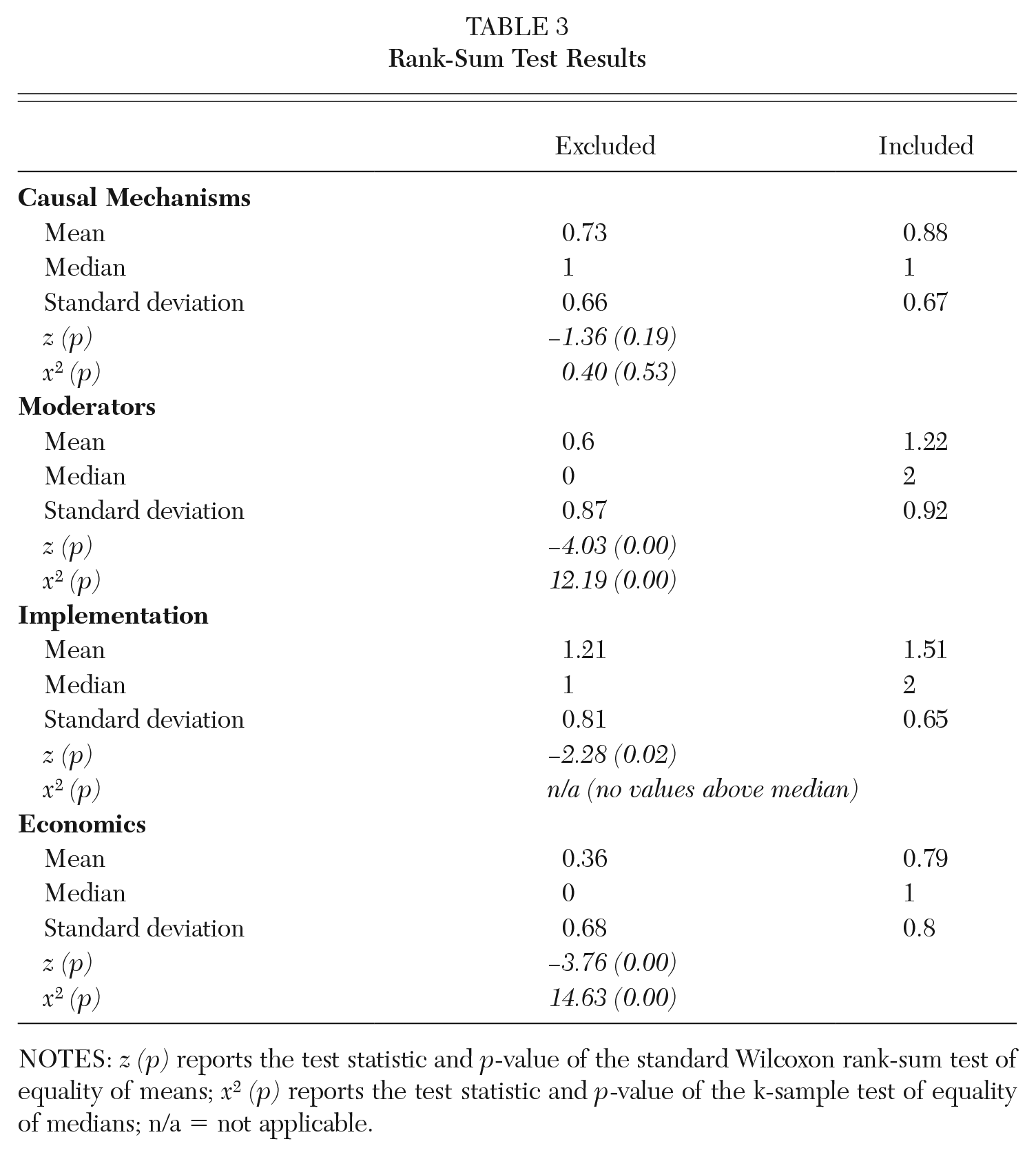

Table 3 presents findings from the rank-sum and median tests for each dimension, comparing the distributions of Q Scores (through a z statistic) and the medians (through a χ² statistic) of excluded and included studies. For the causal mechanisms dimension, there was no significant difference between excluded and included studies. This suggests that the depth of theoretical discussion in these studies is broadly similar regardless of methodological rigor. This finding also points to a potential weakness in the field: a need for deeper engagement with theory to enhance our understanding of how and why video surveillance interventions can be effective.

Table 3. Rank-Sum Test Results

NOTES: z (p) reports the test statistic and p-value of the standard Wilcoxon rank-sum test of equality of distributions; χ² (p) reports the test statistic and p-value of the k-sample test of equality of medians; n/a = not applicable.

For the moderators dimension, there was a significant difference between excluded and included studies, with included studies more likely to consider contextual factors that could influence the effectiveness of video surveillance interventions. There was also a significant difference between excluded and included studies for implementation, again favoring included studies. (The equality-of-medians test was not applicable due to insufficient variation around the median among the excluded studies.) Included studies scored higher on this dimension because they discussed in more detail, and more comprehensively, how interventions were applied in the study setting. Lastly, there was a significant difference between excluded and included studies for economics, with included studies more likely to report details of economic analyses.
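The median test reported in Table 3 cross-tabulates observations above versus at or below the pooled median. A minimal two-group Python sketch follows; it assumes the common convention of splitting at "above" versus "at or below" the pooled median and applies no continuity correction, either of which may differ from the software the authors used. It also shows one way the statistic can become undefined on a zero-to-two scale: if the pooled median equals the top score, no observation lies above it, a column of the table is empty, and the function returns `None`.

```python
import math
import statistics


def median_test(group1, group2):
    """Two-sample median test (Pearson chi-square, 1 df, no continuity correction).

    Each observation is classified as above the pooled median or at/below it.
    Returns (chi2, p), or None when a row or column of the 2x2 table is empty,
    in which case the statistic is undefined.
    """
    pooled_median = statistics.median(list(group1) + list(group2))
    table = [
        [sum(x > pooled_median for x in g), sum(x <= pooled_median for x in g)]
        for g in (group1, group2)
    ]
    row_totals = [sum(row) for row in table]
    col_totals = [table[0][c] + table[1][c] for c in range(2)]
    if 0 in row_totals or 0 in col_totals:
        return None
    n = sum(row_totals)
    chi2 = 0.0
    for r in range(2):
        for c in range(2):
            expected = row_totals[r] * col_totals[c] / n
            chi2 += (table[r][c] - expected) ** 2 / expected
    # With 1 df, P(X > chi2) equals the two-sided normal tail at sqrt(chi2)
    p = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p


# Hypothetical scores: the pooled median is 2, so nothing lies above it.
print(median_test([2, 2, 1], [2, 2, 2]))
```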

Overall, the Q Score frequencies suggest that included studies tend to score higher than excluded studies. The rank-sum test results indicate that these differences were statistically significant for moderators, implementation, and economics. These differences suggest that included studies generally offer a deeper and more nuanced understanding of the factors critical to practitioners and policymakers. The lack of a significant result for causal mechanisms suggests that the quality of theory-related discussion and testing can be improved in both controlled and uncontrolled evaluations.

Discussion and Conclusions

We examined whether relaxing the methodological rigor of evaluation studies could enhance the policy relevance of crime prevention research. Applying the EMMIE framework to a large sample of evaluation studies of video surveillance interventions (n = 151), we compared studies that meet the rigorous criteria of systematic reviews with studies that are excluded for using less rigorous evaluation designs. Experimental and high-quality quasi-experimental studies generated more policy-relevant information than studies with lower methodological quality, scoring significantly higher on three of the four EMMIE dimensions examined (moderators, implementation, and economics) and not differing significantly on the fourth (causal mechanisms). In other words, adhering to a high standard of methodological rigor has not compromised the practical value of evaluation research on video surveillance interventions. For example, the significant difference on the moderators dimension shows that studies with rigorous designs are more likely to account for contextual factors that influence the potential effectiveness of interventions. The higher quality of included studies on this dimension suggests that a more thorough consideration of contextual variables leads to more reliable and robust evidence to inform policy decisions. Evaluation studies using experimental and high-quality quasi-experimental designs similarly provide higher-quality information on implementation and economics than do studies that use weaker designs.

Limitations

The current study has some limitations. As previously mentioned, we encountered difficulty in achieving coder agreement when applying the original EMMIE coding instrument to our sample of studies. This issue has been identified previously, with Thornton et al. (2019, 274) raising fundamental questions about the replicability of EMMIE “given the considerable amount of judgement required at various stages of the review . . . and during the coding phase.” The inherent subjectivity of the EMMIE instrument led us to use a simplified, three-point coding instrument, which provided less nuance in measurement. Additionally, the focus on public-area video surveillance limits the applicability of our results to other crime prevention interventions. (We discuss this point in more detail below.) Further, unlike Piza et al. (2019) in their systematic review, we did not code the 14 non-English-language studies, owing to a lack of funding to translate the full texts. These studies were almost evenly divided between the excluded (n = 6) and included (n = 8) categories.

Implications for policy

Our work here pushes back on recent arguments that deemphasizing research design would increase the policy relevance of evidence-based crime prevention and instead suggests that rigorous methodological standards are necessary to maintain the validity and quality of the evidence used to inform policy decisions. Much work remains to move toward a “second generation” of crime prevention studies (Weisburd et al. 2016, 2017) and to further improve the policy relevance of this research. However, our results indicate that minimizing rigor is not how we advance the practical evidence base. Instead, a balanced approach is needed that integrates both methodological rigor and practical relevance. In terms of systematic reviews, scholars should begin to consider alternative ways to communicate findings to public audiences, including complementary vehicles of research translation. For example, to empower police professionals, the newly developed Applied Police Briefings journal (https://appliedpolicebriefings.com) aims to make research accessible, free of charge, and easy to understand. Such outlets could expand the reach of systematic reviews by communicating their findings to wider audiences.

The findings also underscore several important implications for crime prevention and evidence-based policymaking. Policymakers should emphasize the need for high-quality evaluations when designing interventions, as rigorous studies yield more reliable, valid, and actionable evidence. Importantly, this does not mean a sole reliance on RCTs. It is crucial that the research question dictate the evaluation design to be used—drawing on the wide range of experimental and high-quality quasi-experimental designs.

Additionally, systematic reviews should maintain strict methodological standards for study inclusion; relaxing these standards risks diluting the quality of evidence. Policymakers who uphold high standards make informed policy decisions using the most credible evidence available, which is essential for both program efficacy and public trust. Moreover, beyond this study’s specific findings, there is an overarching need for the highest-quality research to remain central to the policymaking process. This is consistent with the tenets of an evidence-based approach—advocating for policy decisions grounded in robust, comprehensive research rather than anecdotal evidence or lower-quality studies.

Other factors should be more readily considered alongside methodological rigor. For one, the duration of the study period can play a key role in determining the relevance and applicability of findings. Shorter intervention periods may not capture the full range of long-term outcomes, while longer-duration studies can provide valuable insights into the sustainability of program effects. As such, researchers should more readily communicate whether primary evaluation study findings speak to the short-, intermediate-, or long-term effects of the intervention in question—information that can inform decisions on how to best scale programs to achieve population-level impacts (Fagan et al. 2019).

Priorities for research

Our findings draw attention to several priorities for future research. Coding individual CCTV studies according to the EMMIE framework opens new avenues for research evaluation by extending the framework to a more granular level of analysis, one that may enhance our understanding of individual study contexts. Findings from prior EMMIE applications to systematic reviews revealed that evidence on individual dimensions was “noticeably sparse,” a result attributed to researchers’ search strategy and the narrow scope of prior evidence-based policy and practice, which focused on evaluating studies through the usual question of “what works?” (Piza and Welsh 2022, ch. 5). Applying the framework to individual studies allows the EMMIE elements to address key questions about the underlying context associated with intervention effects. As noted above, we cannot claim that our findings are generalizable to other crime prevention interventions. Researchers should carry out replication studies with other situational or environmental crime prevention interventions (e.g., alley gates, street lighting, place managers), proactive policing measures (e.g., problem-oriented, hot-spots, community-based), and interventions associated with other crime prevention strategies, including developmental and community prevention. This effort could begin with existing databases of evaluation studies drawn from systematic reviews published by the Campbell Collaboration, Cochrane (formerly the Cochrane Collaboration), or other agencies, with the aim of continuing to improve the evidence base for crime prevention.

In carrying out evaluations of crime prevention interventions, researchers need to examine and document causal mechanisms, implementation, and moderators. As made clear in the current study and in the work by Johnson et al. (2015), these are not ancillary factors but, rather, crucial ones. They get to the heart of understanding how and why an intervention is effective, ineffective, or harmful in preventing crime, and they provide policymakers and practitioners with the knowledge needed to tailor evidence-based programs to other contexts and conditions. In the reporting of evaluations, failure to document these factors is a sign of weak descriptive validity (Farrington 2003).

There is also a vital need for economic analyses of crime prevention interventions. Ideally, researchers planning an outcome or impact evaluation of a crime prevention program will include plans for a (prospective) benefit-cost analysis that accounts for the full range of monetary costs of the program (i.e., capital and operating) and monetary benefits of program effects (Welsh et al. 2015). Because an economic analysis is only as defensible as the evaluation on which it is based, it has long been recommended that economic analyses be restricted to programs that have been evaluated with an “experimental or strong quasi-experimental design” (Weimer and Friedman 1979, 264).

To improve the policy relevance of evidence-based crime prevention, scholars and academic organizations could consider creating protocols to guide the design of primary evaluation studies. This could follow the design of existing guides, such as the PRISMA statement for systematic reviews (Page et al. 2021) and the CONSORT statement for RCTs (Butcher et al. 2022). While PRISMA and CONSORT are concerned with methodology and transparency around the reporting of results, a policy-relevant checklist could provide guidance on the types of data sources needed to measure the EMMIE dimensions. Such guidance to authors can prove critical to reaching the “second generation” of evidence-based crime prevention (Weisburd et al. 2016, 2017).

Notes

Savannah A. Reid is a doctoral student in criminology and justice policy at Northeastern University. Her research interests include the spatial analysis of crime, evidence-based policing, community engagement, and crime prevention. Her recent scholarship appears in Criminology & Public Policy.

Eric L. Piza is Lipman Family Professor of Criminology and Criminal Justice, director of Crime Analysis Initiatives, and codirector of the Crime Prevention Lab at Northeastern University. His research centers on the spatial analysis of crime patterns, evidence-based policing, crime control technology, and the integration of academic research and police practice.

Brandon C. Welsh is Dean’s Professor of Criminology, director of the Cambridge-Somerville Youth Study, and codirector of the Crime Prevention Lab at Northeastern University. From 2022 to 2024, he was Visiting Professor of Global Health and Social Medicine at Harvard Medical School. His research focuses on the prevention of delinquency, crime, and interpersonal violence and evidence-based social policy.

John P. Moylan is a research assistant for the Crime Prevention Lab at Northeastern University, a research and policy fellow for the City of Boston Mayor’s Office of Returning Citizens, and an incoming crime analyst at the Boston Police Department. His research interests include environmental criminology, evidence-based crime prevention, and carceral policy.