Abstract

Higher Education Evaluation Systems supply information for diverse stakeholders. A “one size fits all” approach in university rankings is not enough. Looking to the future, evaluation may need to take into account criteria such as links with employers, lifelong education, implications of digitization, and interdisciplinary and interinstitutional collaboration across borders. The extensive possibilities of today’s analyses based on research data are examined against the background of a whole industry devoted to them. Shortcomings, challenges and unintended consequences of the current approach are discussed. Impact analyses are seen as one of the ways forward, taking into account contributions to societies and their transformations. Diversity, a “glocal” mindset and international collaboration are suggested as additional criteria for the competitive rankings of the future.

Keywords

Why Look at Higher Education Evaluation Systems?

Common features among the higher education systems in many countries around the world are the recent rapid rise of student enrollment and the growth of diverse higher education providers. About 250 million students are enrolled in more than 20,000 universities and higher education providers around the world. Only about 2 per cent of university students are studying outside their home country. Universities are mainly national in their provision even if they are global in their aspirations and standards. Substantial investment of public funds, as well as the personal incomes of families in the form of student fees, has contributed to the massive expansion of higher education. Accountability, productivity, effectiveness and the greater good of higher education are sought when public funds are used. Quality, relevance, return on investment, and usefulness of higher education are expected when personal incomes are involved. The public good aspect is better acknowledged, via adequate investment in higher education, in some countries than in others. The longer-term social and economic aspects are regarded as the domain of public funding, while personal development is to be paid for by the individual. Hence the evaluation of higher education providers and systems is important to policy makers as well as to the public (Astin & Antonio, 2012). Moreover, the choices and mobility of students are influenced by the publicly known outcomes of evaluations. Employers or recruiters of graduates consider the standards and reputation of their universities. Future funding priorities, strategies and policies of governments rely on the outcomes of evaluation. Donors and philanthropists are cognizant of the needs and purpose as well as the effectiveness of universities. Collaboration and partnership decisions of the private sector are shaped by the performance of universities.
International partnership decisions of universities are influenced by the standing and experiences of collaborating universities. Alumni are always nostalgic about their alma mater, and proud to see the continued progress of their university over the years. Economic growth and social well-being are symbiotic with the quality of the higher education system. Ultimately a better performing higher education system is the pride of a nation: societal expectations include excellence in (1) teaching and learning, (2) research and (3) community engagement. In other words, the stakeholders of higher education evaluation are diverse, and they have different expectations of higher education providers and systems.

Traditionally, higher education evaluation systems are there to protect students and families from malpractices of higher education providers and to ensure quality education. They are the means employed by policy makers to determine the suitability of an institution to award qualifications, degrees, diplomas or certificates. Country-specific accreditation, by definition, is an on/off switch which indicates whether or not a higher education provider has the resources and quality systems needed to educate students in specific courses. Policy makers and experts are looking at ways and means to update and fine-tune higher education evaluation systems so that they are sensitive to disciplines and also relevant to the broader needs of countries. Higher education evaluation systems have always been evolving (Carelli & Sachsenmeier, 1977), but change has sharply accelerated in recent years.

What Is a Higher Education Evaluation System? What Are the Shortcomings?

In most countries, higher education evaluation systems and frameworks exist in terms of national recognition, authorization, certification and attestation, license to operate and receive public funds, and quality assurance and accreditation to ensure higher quality standards. The history, scale, depth, intensity and quality of higher education evaluation systems vary from one country to another. Higher education providers tailor their mission and vision to the different ecosystems in which they operate. Different countries and ecosystems are in different stages of development with varying social and economic needs. No single evaluation system is applicable to all higher education systems, and different countries practice different higher education evaluation systems. The overarching purpose of diverse higher education evaluation systems is to measure a) quality, b) performance and c) impact (Hazelkorn et al., 2018).

In some countries, accreditation is used as the evaluation system of higher education. The review of a higher education provider is conducted by bodies associated with the government or by independent and certified private agencies. They conduct an outside assessment of the quality assurance of higher education institutions. In some cases, they also check the compliance of the higher education provider with national legislation and regulations, with licensing requirements and conditions, and with the required national education quality standards. In the case of professional degree programs, accreditation is done by the respective professional societies or parastatal stakeholder associations. Some countries draw up the framework and methodology for quality assurance of higher education providers and expect the universities to put together dossiers of self-evaluations, case studies, and external reviews and surveys in accordance with local and/or internationally recognized standards. Assessment reports, some for public consumption and some with restricted access, are outcomes of this exercise. The quality assurance and accreditation processes involve time-consuming efforts, and hence are carried out at regular intervals of four or five years.

Perceived shortcomings of accreditation and quality assurance assessments are many. They are time-consuming efforts on the part of higher education providers or universities. Hence they are not conducted every year, i.e. they are summative rather than formative, whereas some stakeholders desire yearly reports. The detailed and voluminous evaluation reports are suitable for the consumption of specialists and policy makers, but they are opaque and vague to students, families and the public. They rely heavily on the peer review of self-assembled dossiers of universities. Peer review is limited to a few select experts who may be biased and concerned only about the few aspects of higher education which they deem very important. Moreover, the accreditation and quality assurance assessment reports conclude that a university or higher education provider either met or did not meet the self-defined expectations and standards. These reports do not provide information on how well a university performed when stacked against others, as they do not conduct extensive benchmarking at national and international levels. Oftentimes, they are not verified extensively and objectively by an independent third party. The information sources are mostly proprietary to the universities, and are not collected and managed by respected independent third parties. For reasons that universities and policy makers consider justifiable, the transparency of information and facts is limited.

Numerous criteria and the language of the reports do not relate to the needs of all stakeholders of universities. Those who consider themselves not well enough served seek data that are publicly available in real time and searchable online. The public and many stakeholders would wish to base their support for higher education on concrete evidence. There is the question of relevance: measure outcomes as well as outputs! Focus on why it matters.

In the slow-moving world of higher education evaluation, four important assessment criteria are slow to emerge: (1) the need for strong links with employers to qualify more knowledge workers, (2) a genuine provision for lifelong education, (3) consequences of digitization for the future of the university, and (4) the necessity for collaboration and interdisciplinarity among subject areas and among institutions. These four quality criteria are currently insufficiently explored and not sufficiently transparent to most types of stakeholders.

Instead, one of the great successes of higher education evaluation systems has also become one of its major shortcomings: Seizing on the opportunities opened by the limitations of historical practices of quality assurance and accreditation evaluation methods, organizations for international ranking of universities emerged in the early 2000s. They started publishing lists of international rankings of universities around the world, which drew instant attention from the media (Ramakrishna, 2010). International rankings are based on research output, education resources, and reputation among peers and employers. These lists allowed policy makers and university leaders to get a sense of the relative performance of universities at national, regional and international levels. In response to strong criticism by experts and other critics, the international ranking organizations subsequently fine-tuned their assessment criteria, indicators, weightings for different parameters, and methodologies regularly, and strengthened their data sources. They made the international rankings more appealing by providing searchable international ranking lists by subject areas and specific fields and disciplines, as well as by geographic region and history of institutions. Moreover, they translated aggregated information into simple numbers to be easily understood by students, families, media and the public. Such international university ranking lists enabled the international mobility of millions of students. About 5 million university students are studying outside their home country. Students can identify better institutions in their areas of interest. Policy makers prioritized funding decisions based on these lists, as they are assumed to be based on the relative performance of universities.

International rankings came up in a time of growing competition among countries around the world. Nations are competing for market share, innovation edge, and sustainable growth. They understand the need for competitive universities producing needed talent and innovations. The international ranking lists served as a useful and convenient tool for policy makers to convey their tough and prioritized funding decisions to the public and academia. Worldwide attention to the international rankings facilitated the growth of a scientific databases industry. The scientific databases industry successfully leveraged advances in the information and communication technologies industry. It is now able to provide customized benchmarking reports and intelligence on scientific areas to national policy makers and university leaders, thus making evidence-based policy making and strategies possible. For example, Clarivate Analytics, formerly known as Thomson Reuters Intellectual Property and Science, provides customized benchmarking reports on university-industry cooperation and international cooperation in addition to the usual reports on research intensity and performance in terms of number of journal papers, citations, H-index, patents, and so on. It is a US$3.5 billion company with over 4,000 employees in more than 100 countries. It owns Web of Science, Cortellis, Derwent Innovation, Derwent World Patents Index, CompuMark, MarkMonitor, Techstreet, Publons, EndNote, Kopernio, Arabic Citation Index, and other companies. Its services are based on big data analytics. The Web of Science focuses on research published in over 12,500 journals and over 170,000 conference proceedings in science, medicine, arts, humanities and social sciences. Derwent World Patents Index is a comprehensive database of more than 30.5 million inventions detailed in more than 65 million patent documents.

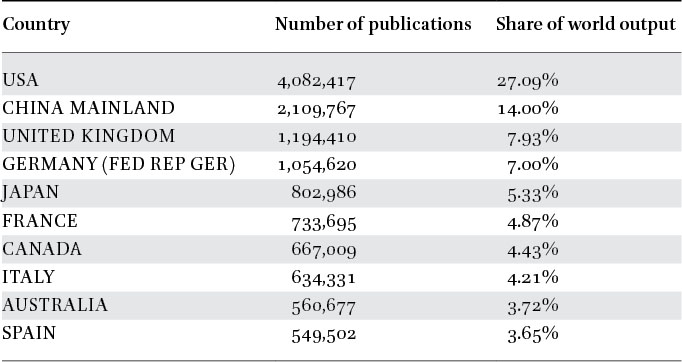

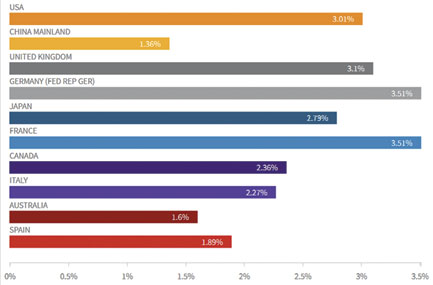

As examples of possible analyses, Table 1 illustrates the number of scientific publications and relative share of world output of the top ten countries in the world during 2008 to 2017. Table 2 provides a summary of the number of citations and world share of citations for the countries in Table 1. Figure 1 illustrates how China is rapidly catching up with the USA in terms of yearly publications output. Figure 2 illustrates average citations, percentage of papers in the first quartile of journals, and percentage of highly cited papers for the top 10 countries in the world. Figures 3 and 4 show the percentage of international collaborative papers and the percentage of industry collaborative papers, respectively, of the top 10 countries/regions. Tables and figures contain 2017 data, unless otherwise stated.

Table 1. Top 10 countries/regions in 2008–2017 based on publication volume

Table 2. Citations performance of top 10 countries/regions

Figure 1. Yearly trend of publications of top 10 countries/regions

Figure 2. Average citations of top 10 countries/regions

Figure 3. Percentage of international collaborative papers of top 10 countries/regions

Figure 4. Percentage of industry collaborative papers of top 10 countries/regions

Scientific publications data analytics companies are also able to provide customized comparisons of select institutions around the world. For example, Figure 5 shows a comparison of the yearly publications output of National University of Singapore and Nanyang Technological University (both in Singapore) and Beijing University and Tsinghua University (in China) over the past ten years. For the same period of assessment, Figures 6, 7, 8 and 9 compare average citations, H-index, percentage of documents in first quartile journals, percentage of highly cited papers, percentage of international collaborative papers, percentage of industry collaborative papers, and patent filings for the four benchmarked universities. Figure 10 provides a star diagram comparison of the profiles of the four target universities in terms of research output (Web of Science documents), research income from public sources, research income from industry, global research reputation and global teaching reputation. Table 3 provides detailed descriptions of the various indicators and sources.
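
To make two of the indicators named above more concrete, the following is a minimal sketch of how an H-index and a percentage share (for example, the percentage of international collaborative papers) could be computed from raw counts. The function names and the sample numbers are hypothetical and chosen only for illustration; they are not data from the tables or figures, and commercial providers may define and normalize their indicators differently.

```python
from typing import Sequence


def h_index(citations: Sequence[int]) -> int:
    """Return the largest h such that at least h papers have >= h citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            h = rank
        else:
            break
    return h


def percentage(part: int, total: int) -> float:
    """Return part as a percentage of total (e.g. collaborative papers / all papers)."""
    if total <= 0:
        raise ValueError("total must be positive")
    return 100.0 * part / total


# Hypothetical citation counts for seven papers of one institution.
sample_citations = [25, 8, 5, 3, 3, 1, 0]
print(h_index(sample_citations))

# Hypothetical counts: 2 of 8 papers have an international co-author.
print(percentage(2, 8))
```

A single number of this kind is easy to benchmark across institutions, which is part of its appeal; it also discards most of the information about the underlying papers.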

Figure 5. Yearly trend of publications of 4 target universities

Figure 6. Average citations performance of 4 target universities

Figure 7. Percentage of highly cited papers

Figure 8. Percentage of international collaborative papers

Figure 9. Percentage of industry collaborative papers

Figure 10. Institution profile of 4 target universities

Table 3. Indicators and descriptions

Another notable example of advanced

There is still room for more fundamental improvements: More needs to be done to help these institutions overcome challenges in making their collections widely available online and free for the future progress of humans. The research publishing landscape is evolving rapidly with growing diversity and expansion of stakeholders. Growing competition among researchers, encouraged by research funding mechanisms and reward systems, has also led to unintended consequences such as compromised research integrity in the case of certain researchers and unethical practices of institutions. This emphasizes the need for updating and raising the standards of scientific publishing and reporting.

The widely popular international university rankings are also not free from shortcomings, challenges and unintended consequences (Ramakrishna, 2011a, 2011b). They are updated annually and announced publicly by international organizations. They are external to the universities, and hence criticized for their limited appreciation of universities’ internal academic processes, which take time to change. The range of areas and parameters used for university performance assessment and comparison is growing. In other words, the ranking methodology has not stabilized, and is causing unintended knee-jerk reactions on the part of some university leaders who are second-guessing policy makers. This results in further stress on the university leadership at various levels, and in turn on the faculty members and resources of the universities. Universities may wish to opt out of global comparisons, but they are no longer in a position to do so. Criticisms leveled against international rankings include a) driving the leaders of universities to take short-term views as opposed to the needed focus on long-term development of the institution, b) defining universities’ performance narrowly and subject to the availability of reliable international databases, c) driving universities to give undue importance to research over education and social engagement (research performance being relatively easier to evaluate and benchmark than teaching), d) driving universities and individual researchers to assume a publish-or-perish attitude, e) constraining the diversity in research domains to those demonstrating research impact, f) favoring short-term applied research over long-term, open-ended basic research, which may contribute more profoundly to the progress of humanity, g) encouraging protective and silo behavior over collaborative and positive behavior, h) encouraging researchers to pursue hot research areas rather than their passions, i) encouraging the chasing of research funds rather than the doing of research, j) encouraging the rehashing of easily achievable and limited quantitative findings as opposed to seeking relevant qualitative solutions, k) focusing excessively on mere institutional performance over research excellence, quality and relevance, and l) failing to appreciate the fact that serendipitous research outcomes may be far more valuable than pre-determined research outcomes. Critics suggest that thought leadership and innovation are far more important than mere rankings since thought leadership ultimately advances any field of study; and that universities and research funding sources ought to encourage researchers to strive for thought leadership in their respective areas of passion. Ranking organizations rebut these criticisms and emphasize that international rankings are fit for a purpose and cannot be for all purposes.

Rankings also differ widely. In the 2018 international ranking of universities by Quacquarelli Symonds (QS), a London based company specializing in higher education, there are six universities from mainland China among the world’s top 100 universities. QS ranked Tsinghua University at 17 and Beijing University at 30. In the same year the Center for World University Rankings (

Common among many international university rankings is their focus on academic research output, more specifically on the number of research papers and articles and the number of citations. This is primarily due to the ready availability of verifiable, global databases of published scientific papers. Companies such as Clarivate Analytics and Elsevier’s Scopus provide large data analytics services based on their respective sources, databases, and analysis tools and methods. These databases cover all areas of scientific research spanning several decades. Databases are updated regularly and automatically, and expanded year on year for wider and deeper coverage of diverse fields of scientific research. Apps for searching and querying databases are fine-tuned and customised to suit the needs of individual researchers, universities, funding agencies, policy makers and international ranking organizations. They enable benchmarking of research output at the levels of individual researchers, subject areas, universities and countries. They also enable benchmarking at the national and international levels for different windows of time. Apps employ advanced digital technologies such as big data analytics, machine learning and artificial intelligence. They are employed to identify trending research areas and researchers. For example, Clarivate Analytics, formerly known as Thomson Reuters IP & Science, publishes an annual list of the World’s Most Influential Scientific Minds, which brings much national and international attention to the identified researchers. Webometrics publishes a list of highly cited researchers in the history of science and technology (

Such comparisons are helpful at the gross level for administrators, funding agencies and policy makers. Critics are of the view that too much importance is placed on these gross-level comparisons by university leaders and researchers, and that this is driving researchers to develop a publish-or-perish syndrome. They emphasise that more attention should be given to the value of original research than to how many papers are published or to research productivity. In their view, researchers and universities are turning into paper mills and drifting away from the original mission of generating new knowledge. Proponents take the view that these pseudo-metrics are the only means of objectively measuring research outputs. Subjective measures and approaches such as peer review are nevertheless necessary to fully appreciate the significance of scientific research. Shortcomings of the peer review process include the bias and limited knowledge of peer reviewers, the development of a sameness of thought among peers (otherwise, no publication!), and its time-consuming nature and limited scope. There have also been whispers about mutually beneficial rank-optimising behaviours in some science research communities, and arts researchers have complained that their slow monograph writing habits cannot compete with the multi-author shorter publications in the sciences. Apps and AI tools that are smart and intelligent enough to deeply appreciate scientific research and make comparisons are yet to be made!

Way Forward

Ongoing debates point to the need to be rational and moderate about international university rankings, and to conduct systematic research on how to evaluate teaching and research contributions and performance, as well as the four other qualitative criteria for university development. Hence there is a pressing need to establish a common understanding of monitoring and evaluating research impact nationally and internationally, as well as of the latest developments in this area; to establish a set of overarching principles and a common language and terminology which underpin research impact measurement and articulation; to identify common data requirements that can be used to verify research impact outcomes; to develop cost-effective and efficient databases and analytical platforms, new measures of impact, and methodologies for collection and reporting; and to share experiences in communication strategies with diverse stakeholders. Research impact, for example, is the contribution that research makes to the economy, society, environment or culture, beyond the contribution to academic research. Excellent research is valuable to the progress of humans and indirectly underpins research impact. Transformative research is at the boundaries and interfaces of different disciplines. Moreover, innovative solutions to the challenges of humanity require advances in multiple disciplines through collaboration.

International Connectivity Should Be Made a Key Measure of Universities’ Evaluation

Policy makers of leading countries understand the need for continued supply of knowledge and innovation for quality growth and competitiveness of economies and for better solutions to the challenges faced by societies. They pay closer attention to fostering a culture of innovation by strengthening innovation systems, protecting intellectual property rights, providing opportunities to test best innovations, and providing incentives for venture capital growth and ease of doing business.

Grand challenges that need better solutions include providing quality living environments, mitigating the effects of climate change, achieving quality urbanization, building circular economies, revitalising rural areas, overcoming labour shortages due to aging populations, closing the gap between human capital skills and technology changes, and meeting the changing expectations of new generations. These challenges are common to most countries around the world. There is much to be gained by finding better solutions through international collaboration and sharing of best practices and experiences (Chandraham & Fallow, 2009).

In contrast, in a hyper-competitive world, the tendency of researchers, academics and universities is to limit openness. Such limitations are counter-productive. Knowledge will not generate new knowledge without the sharing of knowledge and experiences. The diversity inherent in international partnerships is necessary for developing out-of-the-box solutions and breakthroughs.

Pro-activeness on the part of academic leaders is much needed to sustain international cooperation as individual academics and students respond to the rewards and recognition systems within their universities. The intensity and quality of international partnerships should be a key measure of quality, relevance and performance of a university. Open and competitive environments raise the overall standards and enable progress. Therefore, international connectivity should be made a key measure of universities’ evaluation (Ramakrishna, 2015b). The defining trend of higher education in the 21st century is that universities assume a central role in the generation of new knowledge, scientific inventions and innovations, and training of future ready human capital. Public funds are invested into the universities to perform these functions. Entrepreneurs, businesses and companies turn these into new products, services and business processes. This process provides opportunities for economic growth and job creation. The internet is an example of a product of public research and exploitation by the private sector for the benefits of society (Ramakrishna & Ng, 2011; Ramakrishna & Krishna, 2011).

Glocal Mindset and Values Should Also Be Measures of Universities’ Evaluation

Diversity has been recognized as very important for opening minds and seeing new possibilities. Higher education evaluation systems need to encourage a) mixing of diverse students with different social, economic, ethnic, cultural, gender, and nationality backgrounds, and b) academic faculty members drawn from different nationalities and different backgrounds. In Germany, outstanding engineers move fairly easily between enterprise and academe and are often active in both.

The geopolitics of the 21st century are very different from those of the 20th and 19th centuries. Countries around the world have become stronger and more nationalistic, and vigorously guard their industries, businesses, economies, societies and competitive edge. Companies and businesses thrive by their ability to leverage value chains across the world to compete and serve in different markets. They need human capital with deep local and global understanding, i.e. a “glocal” mindset and values (Ramakrishna, 2015a). This can be realised effectively only when university teachers themselves have “glocal” experience and knowledge, and universities prioritise international education, research partnerships and collaboration. The curriculum should be adjusted such that there are more relevant opportunities for students.

Summary

Historically, accreditation and quality assurance are rooted in the concept of graduates as supplicant job seekers. International rankings, by contrast, are the product of an era in which countries, cities, universities and employers are all involved in competition for talent and innovation. New criteria have been suggested to complement existing ones. A passionate plea is made to maintain and expand international collaboration for the sake of innovation and competitiveness.

No higher education evaluation system is perfect. Each evaluation system has positive attributes as well as shortcomings. Given the diversity of higher education providers and diverse needs of students, societies and countries, it is not wise to pursue uniform higher education evaluation systems.

Ever new ways of evaluation will emerge to fit the changing demands made on universities in the years to come.

Footnotes

Acknowledgements

The authors wish to acknowledge the support of Mr David Liu and Ms Wei He of Clarivate Analytics (clarivate.com; ip-science.thomsonreuters.com), Beijing 100190, P.R. China in gathering data and organizing them into infographics, tables and illustrations. The authors also acknowledge the review of the manuscript by Mr Martin Ince, Chair of the Global Academic Advisory Board, QS World University Rankings, and