Abstract

Assessing how students engage and what they know and can do are pressing change frontiers in contemporary higher education. This paper examines large-scale work that has sought to advance the capacity of higher education systems and institutions to engage students through to graduation and ensure they have the capabilities required for future study or work. It reviews the contexts that make engagement and learning outcomes increasingly important, examines two large-scale case studies, and advances a broad model for structuring assessment collaborations that create and deliver new value for higher education. It concludes by discussing implications and opportunities for Chinese higher education and collaborative international partnerships.

Responding to Quality and Productivity Imperatives

Fireside chats remain part of higher education, but this growing industry is subject to increasing quantification, assessment and review. Australian higher education provides an interesting case of a system at the forefront of such change, with the 2008 Bradley Review ushering in reforms that have prompted new forms of expansion and accountability. 2 The highly innovative and internationalised nature of Australian higher education provides a useful point of reference for analysing emerging system- and institution-level trends of significant and growing relevance to China and the broader international context.

As we discuss below, Australian higher education, along with that of other leading nations, is moving into an era that places greater emphasis on keeping students engaged and ensuring they achieve high-quality outcomes. As participation in higher education expands, quality assumes fresh momentum and it becomes essential to find effective ways to engage and retain students. Similarly, as learning and curriculum resources become more openly and universally available, outcomes assessment assumes much of the gateway role that admissions testing played in more elite eras. The key challenge for higher education has switched from selecting who gets in to supporting and grading who gets out.

This paper examines large-scale work that has sought to advance the capacity of higher education systems and institutions to engage students through to graduation and ensure they have the capabilities required for future study or work. We begin our analysis in the next section by reviewing the contexts driving increased interest in engagement and learning outcomes, and lending weight to the need for new international collaborations. From there, we review two large case study initiatives, namely the Australasian Survey of Student Engagement (AUSSE) and the Assessment of Higher Education Learning Outcomes (AHELO). These provide a basis for advancing a broad model for guiding assessment collaborations that are creating and delivering new value for higher education. We close by considering the broad implications these ideas have for Chinese higher education and international collaboration.

It is important to note at the outset that these ideas are explored not because engagement or learning outcomes are handled poorly at Australian or foreign universities, but rather because they are handled in considered and reflective ways that are open to critique and improvement. Indeed, the need for more peer review and collaboration is the broader argument of this paper. Continuous improvement matters, for improving students’ engagement and outcomes has the potential to substantially enhance the quality and productivity of higher education.

Unfolding Pressures Shaping Higher Education

As Coates and Mahat have discussed, significant forces are reshaping core facets of higher education, many of which cannot be ignored even in the most conservative environments. 3 Key forces include those associated with cost and pricing, transparency and privatisation, diversification and stratification, curriculum and provision, and students and academics. We analyse these forces in turn.

Even among service industries, higher education stands out as being particularly afflicted by what Baumol described as the cost disease. 4 Universities have large infrastructure costs, large labour costs and a reliance on expensive face-to-face provision. This underpins high fixed and variable costs, and limited economies of scale. The model cannot readily be expanded without incurring diseconomies of scale, particularly in a highly person-centric services sector that manifests several growth-inhibiting factors. This puts increasing pressure on institutions to explore revised cost structures. The urgent need to boost university productivity has been noted by many. 5

Revenue as well as expenditure is being squeezed. Coupled with cost pressures, universities typically have only limited capacity to set prices. In domestic markets, regulation and subsidisation tend to nourish elite oligopolistic clubs, which would enable universities to function as ‘price makers’ were it not for the usual imposition of tuition price ceilings. Internationally, most of the world’s universities tend to be ‘price takers’, like firms in any competitive market, competing on the open market for student enrolments. Compounding these pricing pressures is the emergence of new institutional players offering higher education services at substantially lower cost.

Higher education is also encountering transparency forces the likes of which have never been seen before. The proliferation of institution and program rankings flags and fuels this thirst for information. But more broadly, governments are demanding that institutions detail activity, and prove performance and standards. 6 Potential students and their families are seeking information to guide investments in learning. Business is seeking reliable data to guide graduate recruitment and research partnerships. Many of these transparency developments are international, working off ‘found data’ and restricting the capacity of institutions or governments to establish or control reporting. Increasingly, institutions are attempting to manage and assure the data that feeds into such processes (in Australia, for instance, institutions have hired ‘rankings coordinators’ and consultants to assist with positioning), but much can already be sourced passively by third parties.

At the same time, universities are confronting new commercial constraints. Though various facets of university research have long had commercial flavours, the new pressures surround the core education business. New streams of often private finance are flowing into higher education, seemingly in loose counterpoint to the diminution of government subsidy. This kind of money can create problems for universities, imposing new obligations (for instance, around intellectual property and disclosure) that mix uneasily with basic tenets of scholarly work and with the transparency demands discussed above. As has long been recognised with research, but now increasingly with institutional governance and management, ‘commercial transparency’ and ‘scholarly openness’ differ in theory and practice. This situation puts pressure on universities to rethink collegial conventions regarding knowledge creation and dissemination, many of which are tacit and somewhat uncontrollable. Who owns knowledge, for instance, and how freely can it be accessed and shared?

Amid all this, institutions are facing enormous stratification pressures in new global ecosystems. National systems are not knowledge islands: academics, students and ideas travel widely among systems. In many advanced systems it seems increasingly fruitless to seek ‘national sense’ out of either research or education, for so much of higher education is international in scope. The national barriers that protected universities are being eroded by new international hierarchies. Institutions are situating themselves, though mostly being ‘situated’, in emerging borderless orders partly driven by student markets and preferences. Price and Kennie, for instance, detail one taxonomy of an emerging international ecosystem, which structures the landscape by selectivity (open/elite) and funding (public/private). 7 Van Vught and Ziegele write of a hierarchy consisting of the top echelon, international research universities, a range of niche/specialised institutions, a plethora of local teaching institutions, and a set of virtual global players. 8 Barber, Donnelly and Rizvi propose another taxonomy: the elite university, the mass university, the niche university, the local university, and the lifelong learning mechanism. 9

Against these stratification pressures sits a host of policy and strategic desires for a diverse higher education system. Systems desire policies that maximise the value and reach of scarcer public dollars. 10 Institutions seek ‘blue oceans’ 11 that deliver alpha performance in increasingly contested terrains. Both eschew isomorphism that leads to structural inertia. Finding and establishing difference gets harder just as it becomes more important.

And knowledge is being flatpacked. Universities are facing business pressures arising from the promulgation of online open-access and proprietary curriculum products. Protecting access to knowledge resources once gave higher education a strategic edge. Until very recently, universities could distinguish themselves through the substance and quality of curriculum materials. Institutions with access to leading professors and experts, with ownership of distinctive technologies, and with expensive facilities had relatively exclusive access to knowledge. In many areas of higher education this differentiation parameter has gone, with the internet and the global flow of talent servicing what several major United States research institutions referred to nearly a decade ago as the ‘open courseware initiative’. 12 The new knowledge architectures lead to the reconceptualisation and reform of how higher education is conducted. Providers can recode and recompile information, repackaging it in myriad ways to suit different individuals and groups.

A decade ago, the aspirations shaping books on ‘virtual universities’ 13 may have outpaced what technology could deliver, but such literature is being reprinted now that software services those expectations. What higher education once ostracised as ‘programmed learning’ may today constitute ‘authentic pedagogy’. The physical university has not died, but virtual learning has proliferated and been incorporated within existing institutions. Despite persistent shortcomings, 14 learning management systems have automated many core teaching functions. Far from being a backward slide, however, this refiguring of teaching creates space for innovation, positioning and diversification. The same ‘Accounting 101’ may be ‘implemented’ by a robotic algorithm, a fully tenured professor or a sessional lecturer, each with different financial structures, market potentials and intellectual textures. Institutions gain more degrees of freedom to locate themselves in the market. The disruptive consequences for higher education are well documented, 15 even though sustainable business models for these new forms of provision are yet to be established.

These new access dynamics (relatively open curriculum and automated provision) enable higher education to reach more, and more diverse, learners than ever before. 16 As economies mature, few countries are shrinking higher education, and even where there is unmet demand, or massive unmet demand as in China, it can be serviced by global online providers. The student body is growing and diversifying, ramping up pressures on universities around provision and support.

While learner demand grows, the supply of teachers is stressed or bottlenecking. In many systems universities are facing workforce pressures such as increasing international competition for talent, coupled with a looming tranche of retirements. 17 This matters, for even in the most programmed context highly skilled people are needed to create curriculum and teach. Teachers and other professionals need to support students. Academics need to produce research, and to integrate and synthesise information into knowledge. This basic restatement of core academic work is required because many of the most vital facets of academic work are intangible, and all knowledge has a half-life, even in highly digitised environments. Displacing core teaching work to people on contingent contracts is symptomatic, not curative.

Of course, anyone working in or around universities recognises that these pressures play out in different ways at different moments. Invariably, these contexts raise questions, and pressures, about standards and about how institutions monitor and enhance what teachers and students know and can do. Many pressure points stem from the change forces explored above. Ultimately, however, as we flagged in the introduction, there are two critical areas that, while already reasonably well established in certain contexts, need even more sustained attention: student engagement and learning outcomes. 18 In key respects, these are pressing change frontiers. It would be too radical, if only just, to claim that due to the increased delegation of education to learners, institutions can no longer be held to account. But how students engage is undoubtedly even more instrumental to educational production. Likewise, assessing what students know and can do takes on new stakes in post-compulsory environments in which knowledge resources are quite freely available. Finding effective ways to structure collaborations in these areas is becoming critical.

Data-Driven Collaborations—AUSSE and AHELO

Two large-scale data collections illustrate Australian-led innovation over the last few years to produce data that sheds light on university education and, ultimately, helps improve student engagement and learning outcomes. In this section we review the AUSSE and AHELO. These two case studies highlight the breadth and depth of the work progressed to date; another example of potential interest is the Australian Medical Assessment Collaboration (AMAC). 19 In a longer analysis, we could consider a range of other work on matters such as student admissions, teaching standards or leadership effectiveness. Each of the projects reviewed here highlights aspects of the collaborative model we present in the following section, and the broad value derived from structured international collaboration.

The AUSSE produces data on how students are engaging in education and on students’ perceptions of institutional support. Use of common survey materials and processes helps institutions benchmark internally and with other institutions. Through its foundation in the United States National Survey of Student Engagement (NSSE), the AUSSE offers Australasian institutions international benchmarking options.

Directed by Hamish Coates, the AUSSE is managed by the Australian Council for Educational Research (ACER) and run in collaboration with Australian and New Zealand higher education institutions. Foundations were laid in late 2006 through conversations between ACER and interested institutions. 20 The methodology and materials were developed in early 2007, and a pilot collection with 25 Australian and New Zealand universities followed later that year. Institutional participation expanded considerably as the survey developed. Thirty-nine of Australia’s 40 universities have participated in the AUSSE at least once, along with a range of non-university higher education providers. Since 2007, over 600,000 Australasian students have been sampled to participate in the AUSSE.

The AUSSE measures student engagement by administering the Student Engagement Questionnaire (SEQ) to a representative sample of first- and later-year students. The SEQ is based on NSSE’s College Student Report (CSR) and is used under licence. The CSR flows from decades of scholarly research, and since 1999 has been deployed at almost 1,500 institutions and subjected to numerous tests and improvements. The CSR was extensively revised, developed and validated for Australasian higher education before being used with Australian and New Zealand students.

While the SEQ measures many of the same aspects of engagement and includes many of the same engagement scales as the CSR (Academic Challenge, Active Learning, Student and Staff Interactions, Enriching Educational Experience and Supportive Learning Environment), it also provides data on an additional engagement scale, Work Integrated Learning. In addition, the SEQ measures seven outcomes: Higher Order Thinking, General Learning Outcomes, General Development Outcomes, Career Readiness, Average Overall Grade, Departure Intention and Overall Satisfaction.

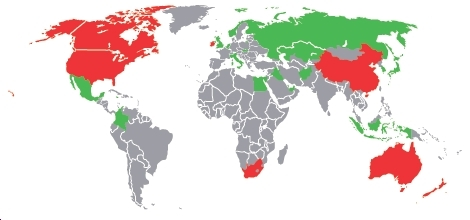

Clearly, these are core education concepts, and they are gaining increasing traction internationally. Figure 1 shows the large (and still expanding) scope of international collaboration around the NSSE. 21 In the map, red shading indicates large-scale use and green indicates more ad hoc use by a handful of institutions. Partly for this reason, the Bradley Review 22 recommended national administration of the AUSSE, a policy implemented via development of the Australian Government’s University Experience Survey. 23 Given its expanding use over a decade, there are likely thousands of institutions around the world that can use data produced via the NSSE or an international derivative for powerful benchmarking.

International adaptations of the United States NSSE

By way of example, Figure 2 shows a sample benchmark report that includes first- and later-year results for four universities from Australia, New Zealand, Canada and the United States. Although these are high-level institutional summary estimates, comparison against broadly similar (here de-identified) institutions spotlights areas for improvement. These figures, particularly the student/staff interaction results, underpinned recommendations made in a national review to revise base funding to universities. 24 The analysis is relatively simple, underlining the practical relevance and applicability of data on student engagement. In brief, students’ interactions with staff are a major determinant of students’ engagement and outcomes. Yet staff costs, and faculty salaries in particular, are among the main cost drivers in higher education. Hence enhancing student/staff interaction goes directly to core discussions about cost, revenue and productivity.

Sample benchmark comparisons between four universities.

As this brief example conveys, the AUSSE has stimulated a considerable amount of higher education research and development since its inception. Over several years the concept of student engagement has been embedded in strategic and operational plans at many Australian institutions. Incorporation into governance and leadership arrangements, including in senior executive role titles, signals particular recognition of the significance of the phenomenon. As well, AUSSE data has been used by institutions to conduct internal quality audits and reviews of teaching, learning and curriculum. Outside institutions, information on student engagement has influenced government discussions and policy. 25 Findings from the AUSSE have been cited in myriad external quality audits, used in institutional marketing campaigns, filtered into institutional and scholarly research, and used to report quality on websites and information materials. This snapshot signals the kind of multifaceted impact that a collection such as this can sustain.

The second case study, OECD AHELO, constituted a pioneering multinational data collection in higher education. In 2008, the OECD’s thematic review of tertiary education identified a set of key issues shaping higher education. Somewhat analogous to our context review above, these issues included expansion of systems, diversification of provision, more heterogeneous student bodies, new funding arrangements, an increasing focus on accountability and performance, new forms of institutional governance, global networking, mobility, and collaboration. Combined, these forces drive demand from a variety of stakeholders for transparent information on the success of higher education.

Over the last few decades a suite of quality initiatives has attempted to address the paucity of information on university education. Despite significant gains, each of these initiatives has been limited in its own way. Rankings address partial performance in specific contexts but tend to focus on research productivity and impact. 26 Competency-based approaches such as the Tuning Project 27 have considerable merit but are limited to framing competencies as expected outcomes that graduates should have. National qualification frameworks began as a move towards competency-based education 28 but have become policy instruments that often underemphasise their specific contexts. 29 Measures of student engagement such as the NSSE and its various international derivatives 30 are insightful but deliver only proxy information on student learning. AHELO was established to fill this gap by developing and testing assessment instruments across countries and institutions. 31

As a core facet of academic standards, assessment in higher education has traditionally been a matter for individual institutions and faculty. As a result, student knowledge and skill are often measured using uncalibrated tasks that are scored normatively by different markers using unstandardised rubrics and then, often with little moderation, adjusted to fit prescribed percentile distributions. 32 It is certainly important that assessment of student learning is localised to take account of specific contexts. But there is an emerging realisation of the need to complement (and also validate and stimulate) such everyday practice with more generalisable forms of assessment and data. Of course, well-designed generalisable assessment can itself be made highly relevant to local contexts, and a considerable amount of research and development is seeking to produce approaches that offer an optimal blend of ‘top down’ and ‘bottom up’. 33

Distribution of country participants in OECD AHELO.

Transcending these localised approaches, AHELO thus far reflects the most advanced international manifestation of a scalable graduate test that provides independent insights into learners’ capacity to apply knowledge and skill to solve real-world problems. With policy leadership by the OECD and international directorship by Hamish Coates, AHELO was designed and implemented by an international consortium of expert agencies. Governance took place through a group of national experts (national ministry representatives), with advice from a stakeholders consultative committee. Specialist oversight and input came from expert groups, including a group of eminent technical advisors.

AHELO ran between 2010 and 2012, and there were 17 country participants (see Figure 3). Regionally, these included seven from Europe (Belgium, Finland, Italy, Netherlands, Norway, Russian Federation and Slovak Republic), three from the Middle East (Egypt, Kuwait and Abu Dhabi in the United Arab Emirates), four from the Americas (Canada, Colombia, Mexico and the United States) and three from the Asia-Pacific (Australia, Japan and South Korea). Each participating country established a national centre with one or more national project managers who coordinated all activities in their jurisdiction. Each participating institution was represented by an institution coordinator who reported directly to the national centre.

AHELO was divided into two phases. Designs and infrastructure were developed in the first phase. Assessment frameworks and instruments were developed, localised and validated in each of the three selected testing strands—generic skills, engineering and economics. These materials were pitched at students in the final year of bachelor degrees, and comprised both production-focused constructed response tasks and multiple choice questions. The assessment instruments sought to assess students’ capacity to apply their skills and knowledge to real-world problems. They were designed to collect information on learning across a wide range of institution types, countries and languages.

The second phase incorporated fieldwork, analysis and reporting. Lists of student populations were validated, students were sampled, and all assessment and context instruments were delivered online. Test data on students’ learning outcomes were collected from 23,000 students across 250 institutions in the 17 countries. Data were also collected on a wide range of contextual factors by means of questionnaires completed by students, faculty, institution representatives and national coordinators. Equivalence in national and institutional procedures was controlled through extensive documentation of processes, as well as international and national training for core personnel. A practice audit was conducted, 34 which found that stakeholders at both the national and international levels reported widespread adherence to protocols.

As an indication of the high-level output from this work, Figure 4 shows a sample chart from a Generic Skills Strand Institution Report. The international mean of all institutions is shown (set at 500), as is the cumulative distribution for one sample institution and for all other institutions (about 100). The chart shows that results for the sample institution are slightly higher than the international figures. Although high-level, this unparalleled information can be used by institutions for a wide range of quality monitoring and improvement purposes. The AHELO Institution Reports included many more detailed breakdowns to support institutional benchmarking.

Sample cumulative student score distributions.

The focus of AHELO was not only to test whether it was technically and operationally feasible to design, develop and implement common assessments with international scope, but also to position AHELO in ways that provided robust foundations for future cross-national development. Establishing and maintaining equivalence was central to all activities. The study set new standards for the scale and quality of empirical studies of higher education. Numerous technical and practical challenges were met and overcome as the study progressed. While AHELO has been progressed to date as a feasibility study, it has already signalled major governmental interest in progressing this field of work. As the above report affirms, the work proved that it is possible to define learning outcomes internationally, that it is possible to build assessment instruments to measure these outcomes, that it is possible to implement these instruments online on a global scale, and that informative reports can be produced for institutions and governments alike. While it is early days for such a large-scale initiative, unprecedented and robust data on such a core facet of university education as student learning has evident potential to yield substantial new value for institutions and stakeholders alike.

A Generalizable Model for Structuring Assessment Collaborations

As we flagged at the outset, these contexts and case studies provide substantial insight into the rationales and prospects for work that seeks to improve student engagement and learning outcomes. They also affirm the broad value of international collaboration in these core areas, and highlight the rudiments of an architecture for advancing partnerships. We provide an overview of this collaborative structure here, adopting a methodological stance and noting that specifics about scheduling, governance, management and intellectual property would be defined in any particular implementation.

While collaboration in scholarly research has a long history, collaboration in other aspects of higher education is a recent phenomenon. Due to the change forces described above, however, the tide appears to be turning. The above case studies provide empirical evidence of how institutions are working collaboratively at national and international levels. The benefits are numerous, not only for individual institutions, but for systems generally. An enduring definition of collaboration, developed through a synthesis of a number of theories and definitions of the construct, is the process that occurs “when a group of autonomous stakeholders of a problem domain engage in an interactive process, using shared rules, norms, and structures, to act or decide on issues related to that domain”. 35 This definition encompasses elements that answer the following questions: Who is doing what, with what means, toward which ends?

As noted above, work in this field has adopted either a ‘bottom-up’ or ‘top-down’ approach. Both carry benefits and limitations. While a bottom-up approach (working primarily with teachers and institutions) is essential to engaging practitioners and ensuring local relevance, under the cloak of ‘academic freedom’ it can spawn conceptual and operational relativism that is expensive and inhibits the formulation of generalizable assessment practices or outcomes. Top-down work (in which specialists engage in the production of assessment infrastructure for ministry or institution leaders, independent of practitioners), by contrast, yields efficiencies inherent in a collaborative approach yet can be too far divorced from everyday educational contexts and realities. The key, we contend, is a blended controlled collaboration 36 that moves beyond the artificial bifurcation inherent in bottom-up or top-down approaches.

A four-stage model of controlled collaboration.

How does this controlled collaboration work? Broadly, it involves mirroring collaborative processes used in the creation and review of scholarly research. More specifically, it results from setting up collaborative assessment communities that provide instruments for switching on and tuning various forms of collegial sharing. Figure 5 advances a four-stage model of controlled collaboration. Briefly, the model encapsulates: establishing assessment partnerships; producing assessment tasks; developing shared processes; and reporting. The model also responds to the three broad questions posed by Wood and Gray: 37 Who is doing what, with what means, toward which ends? The arrows depict the collaborative nature of the model, which we unpack below.

The first stage involves establishing an assessment framework, which clarifies the focus, scope and approach to be used for assessment. As a minimum first step, a sufficient, though not comprehensive, amount of definitional sharing is a necessary condition for any subsequent empirical comparability. Normally, assessment frameworks go beyond curriculum statements to provide sufficient detail for item generation and mapping. 38 This task is made complex by the diverse and nuanced nature of many higher education curricula, yet might reasonably be expected with large early-year subjects and in certain final-year programs. A certain amount of central steering is required to achieve consensus among expert contributors, making a facilitated collaboration an ideal organisational structure for progressing this phase of the work. Collaboration at this level has been progressed in Australia on a wide scale by industry groups, in work sponsored by the now-disbanded Australian Learning and Teaching Council (ALTC) to produce ‘standards frameworks’ for key disciplines.

The second stage of sharing involves collaboration around the production of assessment tasks. Broadly, this involves collaboration for the design, sourcing, development, validation and review of materials. This could be progressed in several ways to optimise the scope and quality of material generated. One approach is to gather materials already in use within the field—the world is replete with test items in many fields—and review and compile these into a quality-assured task library. Another approach is to design specifications and train educators in basic principles of item production, then to seek contributions from practitioners. Both approaches and other variants are used in the field, and invariably a combination is deployed.

The third stage along the ‘sharing spectrum’ involves sharing of assessment processes. This means sharing facets of the administration, analysis and reporting processes. Such sharing does not necessarily imply a centralised approach, but it does require coordination of key steps in the process. For instance, academics or departments may outsource assessment work to professional organisations, something that in certain basic respects is already widespread given the uptake of assessment tools within learning management systems over the last decade. Online deployment and external marking of assessments may facilitate quality assurance and efficiencies, and support more elaborate forms of moderation and reporting.

The fourth and most extensive level of sharing, usually though not necessarily contingent on the earlier three stages, pertains to sharing of data and results. Even here, sharing can take different formats, which depend for the most part on the extent of de-identification involved. Depending on context and need, different groups and individuals will be comfortable with different levels and varieties of sharing. In certain cases, sharing of de-identified aggregate results may be sufficient. One example is AMAC, mentioned above, 39 in which results from student assessments are benchmarked in a variety of ways by participating institutions. At the other end of the spectrum sit comprehensive licensing examinations, in which all data and scores are compiled in a uniform fashion, though typically dispersed to only a very few parties.

Almost regardless of the level of sharing or collaboration adopted, formalising assessment collaborations that facilitate forms of sharing such as these yields compound benefits for higher education. It concentrates educational energy on core facets of learning and teaching, building institutional and system capacity. It directs improvement activity towards an area in which many university teaching staff, even those with teaching qualifications, are likely to have had little training. It opens up the development and use of assessment resources to collaboration, realising the opportunities, efficiencies and quality checks that collaboration brings. At the most basic level it offers added assurance that the assessment of student learning and development is done in ways more likely to yield valid and reliable results that mean something beyond the local context in which people have learned.

Collaborative Partnerships with Chinese Higher Education

China is growing by leaps and bounds. Its share of the world’s gross domestic product was less than five per cent in the late 1970s and is set to reach 25 per cent by 2030. 40 Investment in research and development rose from 0.6 per cent of GDP in 1996 to 1.7 per cent in 2009. 41 China’s aspirations for world-class universities, signalled by the development of the Academic Ranking of World Universities, 42 are gaining momentum. China’s research output has made significant strides in the last decade. Specifically, China’s production of scientific papers expanded by 14 per cent annually in the ten-year period to 2008, placing it second behind the United States. 43 The mass expansion of the postsecondary sector since 2000 has led to an almost fivefold increase in undergraduate enrolment. 44 Amid all this, there are growing concerns about student and faculty recruitment, retention and outcomes. 45

Assuring and improving quality in Chinese higher education assumes greater importance given substantial growth in scope and scale. This is affirmed by the ‘30 clauses’ policy paper published in 2012 by China’s Ministry of Education. 46 Much can be achieved within institutions and the system, but as China continues to grow in strength, international collaboration would seem to have an essential role to play. Such collaborations can be calibrated according to the settings modelled in this paper.

China’s participation in the NSSE-China/CCSS project is an example of effective international collaboration. Through the leadership of Tsinghua University, the NSSE-China/CCSS is now the largest and most systematic college student survey in China. But much more can be done. As argued in this paper, the principles of sound assessment can, and should, be generalised across contexts. Doing this furnishes information and perspective that can transform conceptions of the quality and productivity of university education. The rudiments are in place to enact the assessment strategy sketched above.

Increasingly, ‘quality improvement’, ‘quality reform’, and ‘quality assessment’ have become watchwords in China’s higher education reforms. These terms and the discourses that underpin them are global in nature, though they play out differently in local contexts. The assessment collaboration model sketched above can provide the required assurance regardless of the level of sharing. Sharing definitions or tasks (and, by extension, processes or results) provides added confidence in the generalizability of local assessment data. Sharing processes or results enables genuine external verification of local practice, either by building strength into the local assessments or by supplementing local assessments with independent cross-checks.

Forming New Approaches to Quality

In this paper we have discussed how building structured assessment collaborations can advance the capacity of higher education systems and institutions to engage students through to graduation and ensure learners have the capabilities required for future study or work. Of course, work on these fronts can and should be advanced by individual faculty, departments and institutions, but given the contemporary challenges of engaging students in ways that achieve high-quality learning outcomes, substantial value derives from collaborative international approaches. The two case studies illustrate different ways in which such work can proceed, and provide the seeds for the more generalizable model. As the analysis suggests, we see this as an effective means of advancing partnerships.

The above discussion, on building assessment collaborations and assessing learning outcomes, flows into consideration of broader forms of higher education performance assessment. While these two areas have led independent lives for much of the history of higher education, they are increasingly being synthesised into new approaches to assuring higher education quality and productivity. 47 This flows from the need, within an expanding and diversifying systemic and institutional environment, for new and more effective approaches to leading, managing and regulating higher education.

In any country, experts and the public alike tend to answer the question ‘What is the best university?’ by pointing to the university that is hardest to get into: ‘the most elite’, the one that ‘takes the best students’, the one that ‘has the highest Gaokao, SAT, ATAR, GRE . . .’. But which university produces the best graduates? Likely, the same institutions are listed. Why? Because ‘they take the best students’, they ‘do more research’, they are ‘preferred by employers’, and so on. These kinds of answers speak to unverified assumptions 48 about relations between learner inputs, engagement and outcomes (which are complex and non-linear), and the links between research and teaching (which are small and indeterminate). Implementing international collaborations that produce better insights into engagement and learning has an important role to play in advancing conceptions and perceptions of quality.

Such large-scale agendas, of course, confront orthodoxies and stir the pedagogical, political and cultural challenges that shape and stimulate any new assessment paradigm. Education can be a conservative activity constrained by cultures, technologies, epistemologies, generations and curriculum. Simply providing data on student performance has an immediate and almost telic impact on teaching, and careful consideration must be given to designing against adverse outcomes such as curriculum standardisation. Moving focus onto student engagement and outcomes is a major shift that must be owned and progressed by the academic profession. At the same time, it sends clear policy and cultural signals about the importance placed on knowledge and human capital.

Footnotes

5 For example: B.G. Auguste et al., Winning by Degrees: The Strategies of Highly Productive Higher-Education Institutions (New York: McKinsey and Company, 2010); W.F. Massy, Initiatives for Containing the Cost of Higher Education (Washington, D.C.: American Enterprise Institute, 2013); Teresa Sullivan et al., eds., Improving Measurement of Productivity in Higher Education (Washington, D.C.: The National Academies Press).

6 For example: European Commission, “European Commission,” accessed 2 November 2013, http://ec.europa.eu/index_en.htm; Tertiary Education Quality and Standards Agency (TEQSA), “Tertiary Education Quality and Standards Agency,” accessed 5 December.

7 Ilfryn Price and Tom Kennie, Disruptive Innovation and the Higher Education ‘Eco-System’ Post …: Discussion Paper (London: Leadership Foundation for Higher Education, 2012).

13 For example: K. Robins and F. Webster, eds., The Virtual University? Knowledge, Markets and Management (Oxford, UK: Oxford University Press, 2002); H.J. van der Molen, ed., Virtual University? Educational Environments of the Future (London: Portland Press).

16 OECD, Education at a Glance (Paris: OECD, 2012).

18 Hamish Coates, Student Engagement in Campus-based and Online Education: University Connections (London: Taylor and Francis, 2006); Hamish Coates, “Defining and monitoring academic standards in Australian higher education,” Higher Education Management and Policy 22, no. 1: 1-17.

22 Bradley et al., Review of Australian Higher Education.

23 A. Radloff et al., Development of the University Experience Survey (UES) (Canberra: Department of Education, Employment and Workplace Relations, 2012); A. Radloff et al., UES National Report (Canberra: Department of Industry, Innovation, Science, Research and Tertiary Education).

25 Bradley et al., Review of Australian Higher Education; Lomax-Smith, Watson, and Webster, Higher Education Base Funding Review; Radloff et al., Development of the University Experience Survey (UES).

32 Hamish Coates, “Defining and monitoring academic standards in Australian higher education.”

33 Australian Medical Assessment Alliance, “Australian Medical Assessment Collaboration,” Australian Council for Educational Research, accessed 5 December 2013, http://www.acer.edu.au/amac; Medical Schools Council Assessment Alliance, “Medical Schools Council Assessment Alliance,” accessed 5 December 2013.

37 Wood and Gray, “Toward a comprehensive theory of collaboration.”

38 ACER, NIER, and EUGENE, AHELO Feasibility Study Engineering Assessment Framework (Paris: OECD, 2011).

39 Wilkinson et al., “Assessment of medical students’ learning outcomes in Australia: Current practice, future possibilities.”

45 Ibid.

46 Ibid.

48 Hamish Coates and Goedegebuure, “Recasting the academic workforce: why the attractiveness of the academic profession needs to be increased and eight possible strategies for how to go about this from an Australian perspective.”