Abstract

Accelerating energy consumption and increasing data traffic have become prominent in large-scale wireless sensor networks (WSNs). Compressive sensing (CS) can recover data through the collection of a small number of samples with energy efficiency. General CS theory has several limitations when applied to WSNs because of the high complexity of its

1. Introduction

A wireless sensor network (WSN) is a system that involves information collection, transmission, and processing. It is widely utilized in environmental monitoring, military defense, health monitoring, smart homes, and many other important fields. WSNs have attracted an increasing amount of attention from the academia because of their extensive application. As the scale of WSNs continues to expand, energy consumption and transmission costs increase as well. There are some methods to decrease the energy consumption and transmission costs. One way is to increase the number of sinks during the deployment. Another alternative way is to design the compressed algorithm during the transmission. We apply compressive sensing (CS) to WSNs in this study to address this problem. CS technology [1, 2] employs a much lower sampling rate than the frequency present in the original signal to sample signals; it is far more effective than the Nyquist sampling theorem [3]. Compressive sampling can reduce the amount of data sent, received, and processed by WSN nodes to save energy.

Over the past few years, many scholars have studied the application of CS in WSNs. The authors in [4] employed principal component analysis (PCA) [5] to capture the spatial and temporal characteristics of real signals and analyze the combined use of CS and PCA with a Bayesian framework. Reference [6] investigated the adoption of CS in WSNs by considering network topology and routing, which is utilized to transport random projections of the sensed data to the sink. However, the performance was not as good as expected [6]. Conventional

In this study, we focus on how to approximately perform signal recovery with high accuracy (over 90%) and reduce the energy consumption at the same time. Considering that the greedy algorithm, which is a heuristic algorithm that uses the locally optimal choice at each stage rather than a global optimum, has the advantages of low computational complexity and high computational speed [11], we apply this algorithm to WSNs to approximate data recovery. Moreover, we utilize the spatial correlation among WSN nodes in combination with LEACH method to design a measurement matrix and conduct compressed sampling and approximate reconstruction in an energy-efficient manner. Lastly, we design and implement a new framework called data acquisition framework of compressive sampling and online recovery (DAF_CSOR). We conduct a detailed analysis and comparison of three different algorithms under DAF_CSOR in actual WSN scenarios and conclude that DAF_CSOR is relatively optimal. The main contributions of this study are as follows:

In view of the real signal in WSNs, we design DAF_CSOR and construct the observation matrix through LEACH clustering. We study three different recovery methods based on the greedy algorithm to optimize DAF_CSOR. We conducted a detailed analysis and performed a comparison of three different algorithms under the DAF_CSOR framework in real WSN scenarios. We selected DAF_CSOR_SAMP as a signal recovery algorithm due to its sparsity-adaptive capability. It not only has high recovery accuracy but also guarantees extended WSN lifetime.

This paper is structured as follows. The traditional CS framework of sensing, compression, and recovery is presented in Section 2. DAF_CSOR for compressive sampling and online recovery is proposed in Section 3, and three different recovery methods are investigated based on the greedy algorithm. The distributed signal model and simulation results are presented in Section 4. Here, we confirm that the assumptions under DAF_CSOR are effective for real-world signals. The conclusions of the study are presented in Section 5.

2. CS Basics

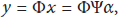

In conventional CS theory, the natural physical signal is represented as vector

Through random sampling of the sparse transform coefficients of the original signal, we write

Measurement matrix

Commonly utilized measurement matrices include Gaussian white noise matrix [13], ±1 Bernoulli matrix, and sub-Gaussian random measurement matrix. The common characteristic of these measurement matrices is that the matrix elements independently obey a certain distribution. Such measurement matrices are incoherent with most sparse signals; thus, precise reconstruction requires a small number of observations. However, these matrices do not have the characteristics of distributed networks and often require large storage space and high computational complexity. Therefore, adopting this type of measurement matrix in data compression is unsuitable for WSNs. Consequently, we designed the Gaussian random measurement matrix based on clustering.

Finally, the estimation of sparse coefficient α is recovered via sample vector y and the measurement matrix. This problem must be solved with a recovery algorithm that can be described as

3. DAF_CSOR and Recovery Method Based on the Greedy Algorithm

DAF_CSOR is presented in this section. The structure of the measurement matrix based on LEACH algorithm is investigated, and the coherence of the measurement matrix is analyzed. Finally, we focus on three different recovery methods based on the greedy algorithm (CoSaMP, ROMP, and SAMP) to optimize DAF_CSOR.

3.1. DAF_CSOR

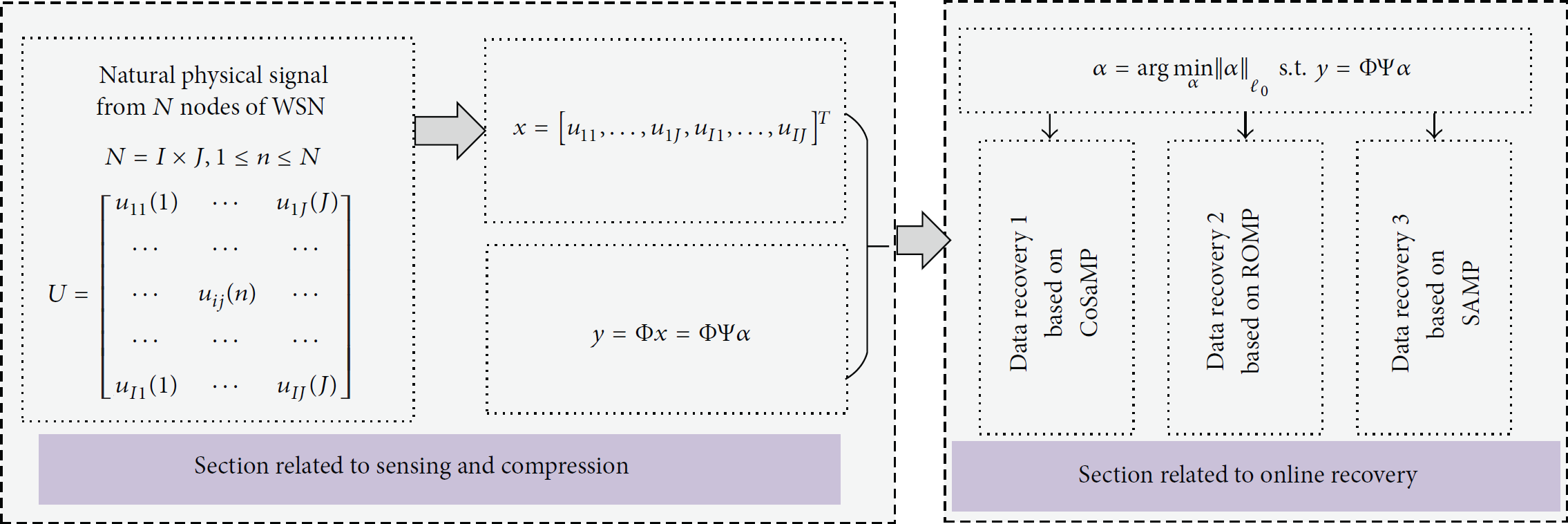

As shown in Figure 1, DAF_CSOR includes three parts, namely, sensing, compression, and online recovery. We performed sensing and compression of the original physical signals by using measurement matrix Φ and sparse matrix Ψ. We selected DCT as a sparse matrix, utilized LEACH algorithm for node clustering in WSNs, and constructed the measurement matrix based on the clustering (Section 3.2). Online recovery is the focus of our study, and three recovery methods, namely, DAF_CSOR_CoSaMP, DAF_CSOR_ROMP, and DAF_CSOR_SAMP, were investigated based on the greedy algorithm (Section 3.3). We investigated each of the components of the framework.

DAF_CSOR.

3.2. Design of the Measurement Matrix

The CS technique and the LEACH method are both well known techniques. However, they cannot be used well if we combine them in their native forms [6]. To use the CS technique and construct an appropriate measurement matrix, we modified the LEACH method. Unlike the original LEACH method, where there was no need to consider the number, randomness, and coherence of the cluster head selection, we select the cluster heads based on the following three points.

During the cluster election phase, the selection of each node as a cluster head or not depends on the percentage of cluster heads in WSNs and the number of times of it being a cluster head. If the node's remaining energy is smaller than the threshold, then the node no longer qualifies as a cluster head. Unlike the general LEACH algorithm, the number of cluster heads depends on the number of rows of observation vector based on the combination of CS theory, which should be at least

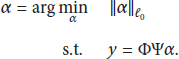

Each node generates a random number ranging from 0 to 1. If the number is smaller than the threshold

Finally, a measurement matrix of size

The probability of “0” or “1” appearing in each row of the Gaussian random measurement matrix is fixed; thus, the coherence calculations of the Gaussian random measurement matrix generated in each experiment show minimal change. As the number of cluster heads M increases, the number of “1” in the measurement matrix also increases. The coherence formula indicates that the correlation decreases while the corresponding recovery stability increases. DAF_CSOR focuses on stable recovery with low coherence.

3.3. Energy-Efficient Data Recovery via the Greedy Algorithm

Energy-efficient data recovery is a key step of the application of CS in WSNs. On the one hand, according to the Section 3.2, there is a subset of sensor nodes that participate in the CS process, so that the method can cut the number of transmitted data and reduce the overall energy cost. On the other hand, it is true that energy consumption must exist for the data recovery of CS. However, taking inspiration from references [4, 14], we let the data recovery process by using greedy algorithm occur at the sink node; that is, we only perform the computation required for compressive sensing at the sink node, where there are no stringent energy requirements. The

3.3.1. DAF_CSOR_CoSaMP

The algorithm utilized in DAF_CSOR_CoSaMP method is derived from RIP. Assuming that

Initialization: Initial approximation

( ( ( ( (

3.3.2. DAF_CSOR_ROMP

This method calculates correlation coefficient

Initialization:

( ( ( select index values satisfying ( ( (

3.3.3. DAF_CSOR_SAMP

DAF_CSOR_SAMP is based on a sparsity-adaptive greedy algorithm. The entire algorithm is divided into several phases, and the size of the support set is fixed in each phase. The algorithm follows the atom selection strategy in the matching pursuit algorithm and introduces the idea of backtracking. The candidate set is selected according to relevant guidelines, and the atomic support set is obtained by screening the candidate set to improve the accuracy of the algorithm. In addition to its capability to improve atom selection, this algorithm is sparse adaptive and is thus able to approximately evaluate the original signal when sparsity k is unknown. The key feature of the algorithm is the selection of step size s to approximate the actual signal sparsity as close as possible. A small s requires a big number of iterations which result in increased computation time. By contrast, a large s requires less computation time. DAF_CSOR_SAMP only requires

Initialization: Residual

( ( ( (

The abovementioned three methods under the DAF_CSOR framework indicate that because the algorithm in DAF_CSOR_CoSaMP is derived from RIP, it eliminates the limit on threshold parameters and ensures the accuracy of positioning through the RIP of the measurement matrix. Thus, the overall speed of this algorithm is improved. The algorithm in DAF_CSOR_ROMP adds the regularization process to the OMP algorithm and thus makes the atom selection process simple and fast. However, these two algorithms require sparsity k to be known in advance. The most significant difference of DAF_CSOR_SAMP is that it can recover the original signal without knowing sparsity k. Moreover, with the design of backtracking and the improvement in the atomic selection method, the accuracy of recovery is improved. Overall, in the proposed DAF_CSOR, DAF_CSOR_SAMP is expected to perform excellently.

4. Experiment and Performance Evaluation

Referring to references [14, 15], we can assume that all nodes are roughly synchronized to perform the compressive sampling process. We let the sink node collect the data from the cluster head nodes, while the cluster head nodes collect the data from the sensing nodes in each round via the MAC mechanism – IEEE Std 802.15.4™-2006 [14]. The basic idea of our experiments and evaluation may be divided into two major steps. Firstly, we represent the network topology of our experiment and optimize the design of measurement matrix, which has large influence on the network lifetime. And then, under the same optimized measurement matrix (i.e., CS_LEACH_Method), we focus on the relative error, running time, and energy efficiency of data recovery instead of the network lifetime again. Based on the numerous experiments using real data sets, we evaluate the three types of energy-efficient recovery methods.

4.1. Experimental Data and Distributed Network Model

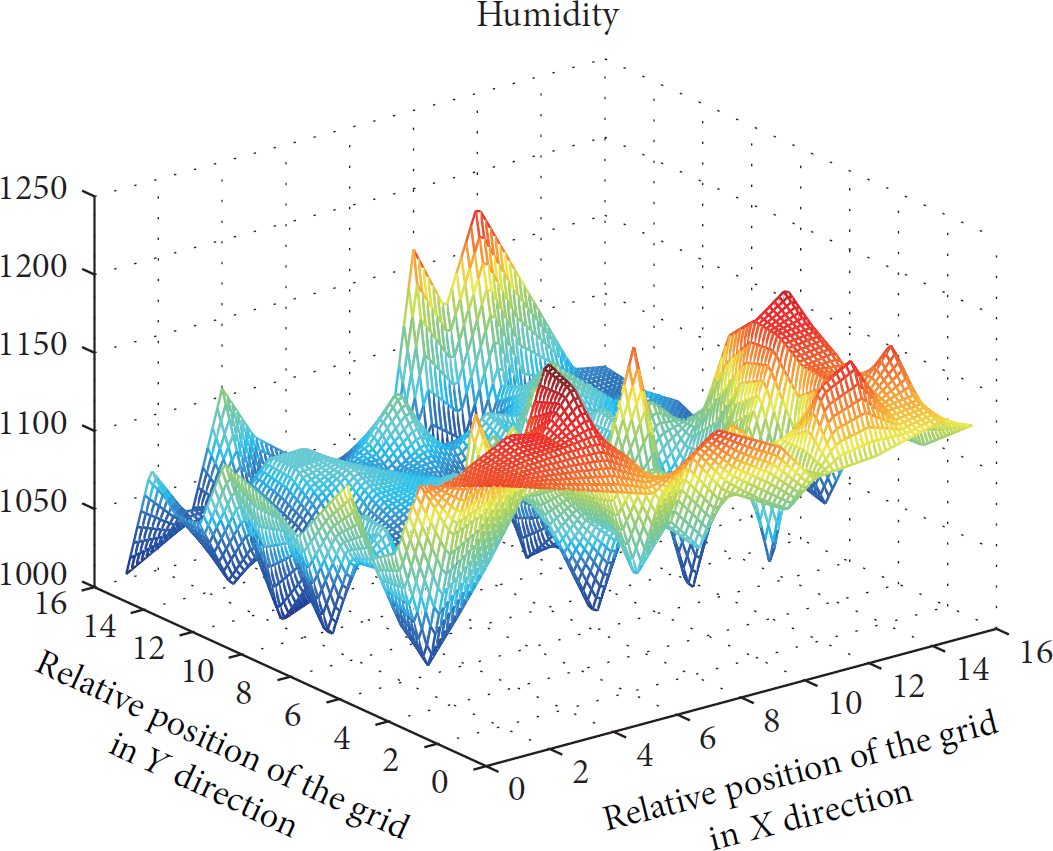

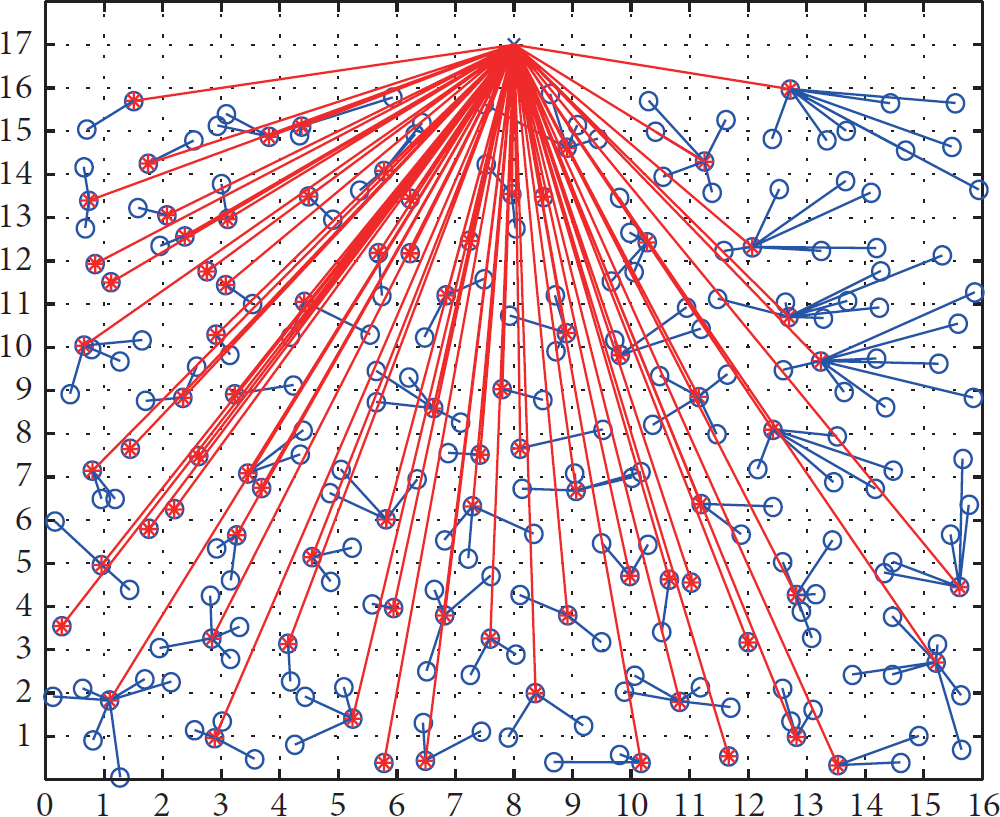

Deployment was performed with GreenOrbs [16], and the humidity signal of the most densely distributed nodes was selected as the experimental data. The relative position is depicted on the x- and y-axes according to grid structure preprocessing, and the monitored humidity readings are on the z-axis as shown in 3D in Figure 2. As shown in Figure 3, a square area of size 16 × 16 contains 256 sensor nodes and 64 cluster heads. The blue circle represents the observed sensor node, and the red star represents the cluster head node. The figure shows a simulation of a distributed WSN. Information on noncluster heads is sent to the cluster heads initially and then delivered to the sink node. Considering the influence of number of cluster heads on the measurement construction, according to the findings in the references [1–3], the number of cluster heads should be larger than

Real monitoring data values displayed in 3D.

Simulated distributed WSN.

Number of cluster heads versus network lifetime.

Number of cluster heads versus recovery precision.

4.2. Network Lifetime Based on Different Measurement Matrices

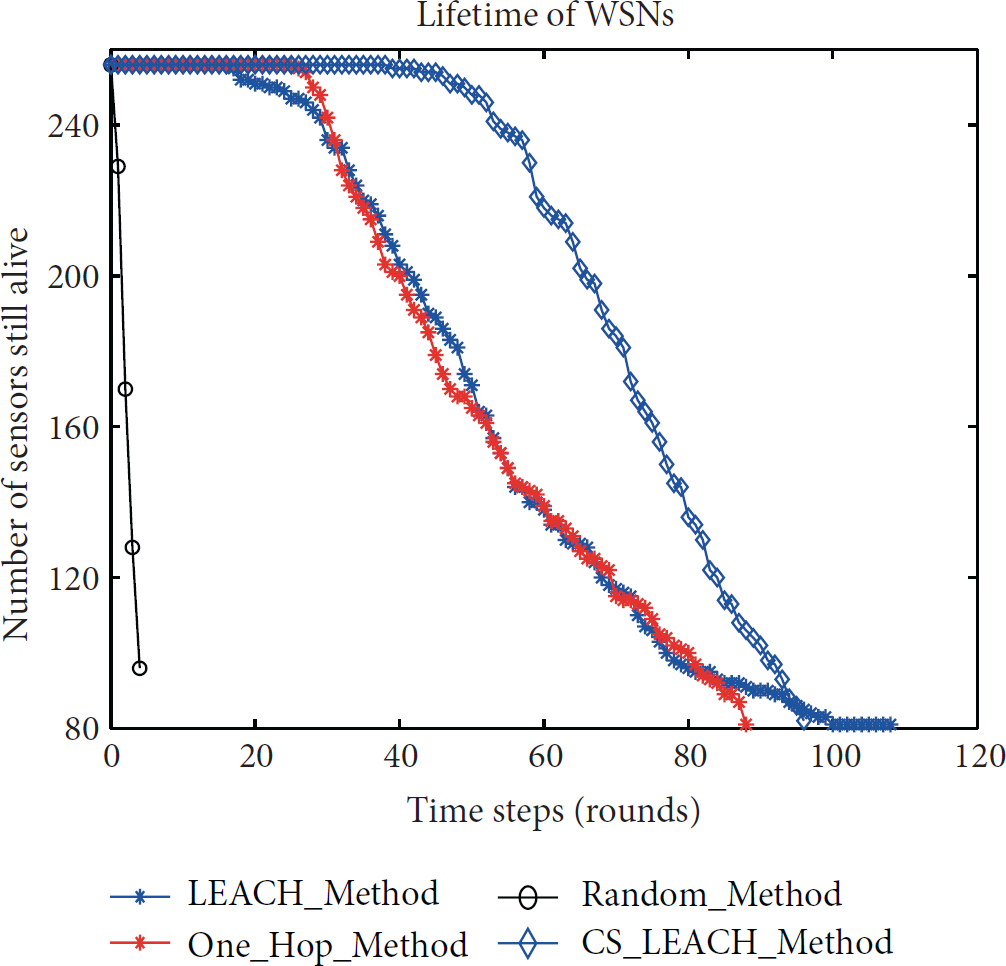

Our experimental simulation results show that although the Gaussian random measurement matrix is widely utilized in image processing, it does not have the characteristic of distributed networks and often requires large storage space and high computational complexity. Thus, it is unsuitable for WSNs. The proposed measurement matrix design based on LEACH clustering can effectively prolong the lifetime of WSNs. Figure 6 shows the network's lifetime based on four different methods, namely, traditional LEACH clustering method without CS (LEACH_Method), Gaussian random measurement (Random_Method) [6], measurement based on single-hop routing policy (One_Hop_Method) [17], and our proposed measurement based on LEACH clustering method (CS_LEACH_Method) [18]. Note that One_Hop_Method assumes that all the nodes can send messages to the sink node within one hop. As shown in Figure 4, our solution CS_LEACH_Method is superior to the other methods because of 2 main facts: (1) in both methods of Random_Method and One_Hop_Method, there is large transmission cost because there are no data convergence and compression during the data acquisition; (2) in the method of Gaussian random, there exists higher uncertainty that will lead some nodes unexpectedly to be dead because it fails to consider the nodes' remaining energy while we evenly distribute the energy load among the sensors in the network in the CS_LEACH_Method [18]. We developed a clustering scheme by considering the remaining energy of each node and compressing the data in the head node of each cluster, which, as a result, decreases the number of communication packets and prolongs the lifetime. Thus, in the subsequent data recovery experiments, we employed CS_LEACH_Method to construct the measurement matrix for DAF_CSOR.

Lifetime of different methods.

4.3. Performance Analysis

The performance of the three recovery methods under the DAF_CSOR framework is shown in Figure 7. The blue marks represent the original signal, and the red ones represent the recovered signal. The three methods exhibit different performances and features because of their different atomic selection strategies and iteration stop conditions. In this section, we present the comparison and analysis of our experimental results and measure the performance of the three recovery methods in terms of running time and relative error.

Performance of different recovery methods.

4.3.1. Error Comparison

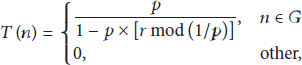

We utilized the following formula to calculate the relative recovery error:

Error of the recovery algorithm under the DAF_CSOR.

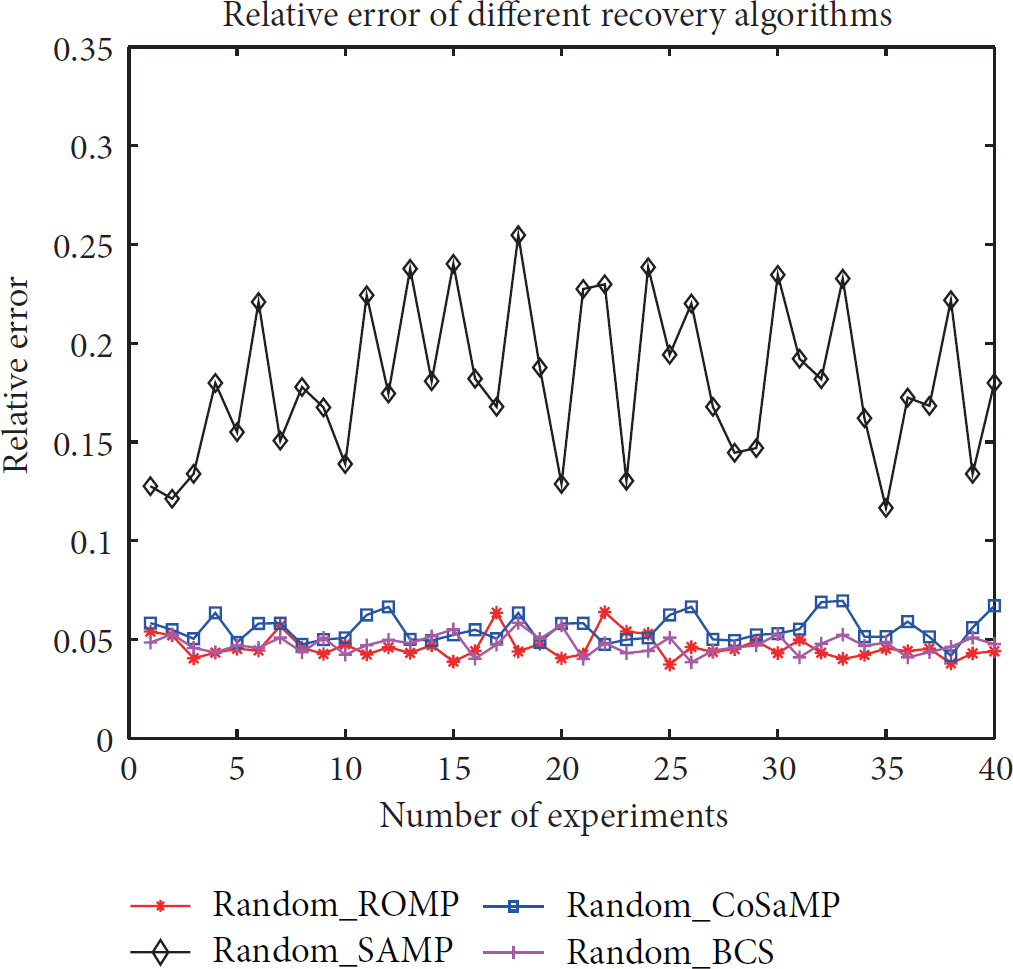

The experiments in Figure 9 are conducted under our framework DAF_CSOR. In order to compare with the other framework, we conducted with the 4 different recovery algorithms under the framework with Gaussian random observation matrix. Figure 9 shows the recovery error of four different algorithms. We can see that the recovery algorithm SAMP is inferior to the other 3 recovery algorithms, which have similar result as Figure 8. Overall, DAF_CSOR is superior to Gaussian random and DAF_CSOR_SAMP is more suitable for signal recovery in WSNs when sparsity k is unknown.

Error of the recovery algorithm under the Gaussian random.

4.3.2. Running Time of the Recovery Algorithms

Figure 10 shows that DAF_CSOR_ROMP is superior in terms of running time. DAF_CSOR_CoSaMP performs excellently in terms of recovery speed. Given that the running time of DAF_CSOR_SAMP is related to the step length, we modified the step length in some of the experiments. As a result, running time changed obviously with different step lengths. The closer the sparsity is to the real value, the shorter the running time is. Network lifetime is unaffected because online recovery is performed in the sink node, which has no energy constraints. Although the speed of DAF_CSOR_SAMP is slightly inferior to that of the other three methods, it is still very fast such that it can meet the needs of WSN applications, such as environmental monitoring.

Running time of the recovery algorithms.

5. Conclusions and Future Work

In this paper, we combined CS theory with WSNs in view of

Footnotes

Conflict of Interests

The authors declare that there is no conflict of interests regarding the publication of this paper.

Acknowledgments

This work was supported by the National Natural Science Foundation of China (nos. 61572260, 61373137, 61472193, and 61401221), Six Industries Talent Peaks Plan of Jiangsu Province (no. 2013-DZXX-014), Natural Science Foundation of Jiangsu Province (nos. BK2012436 and BK20141429), Scientific and Technological Support Project (Society) of Jiangsu Province (nos. BE2014718 and BE2013666), Natural Science Key Fund for Colleges and Universities in Jiangsu Province (nos. 11KJA520001 and 12KJA520002), Jiangsu High Technology Research Key Laboratory for Wireless Sensor Networks (nos. WSNLBZY201519), and NJUPT Natural Science Foundation (nos. NY213157 and NY213037). In addition, the authors of this paper are grateful to Professor A-Xing Zhu in Department of Geography, University of Wisconsin-Madison, for his suggestions.