Abstract

Mobile crowd photographing has become a major crowd sensing paradigm, which allows people to use cameras on smart devices for local sensing. In MCP, pictures taken by different people in close proximity or time period can be highly similar and different MCP tasks have diverse constraints or needs to deal with such duplicate data. In order to save the network cost and improve the transmitting efficiency, pictures will be preselected by mobile clients and then uploaded to the server in an opportunistic manner. In this paper, CooperSense, a multitask MCP framework for cooperative and selective picture forwarding, was designed. Based on the sensing context of pictures and task constraints, CooperSense structures sequenced pictures into a hierarchical context tree. When two participants encounter, their mobile clients will just exchange their context trees and at the same time automatically accomplish forwarding high-quality pictures to each other via a tree fusion mechanism. Via virtual or real pruning and grafting, mobile clients learn which picture should be sent to the encounter and which one should be abandoned. Our experimental results indicate that the transmission and storage cost of CooperSense are much lower comparing with the traditional Epidemic Routing (ER) method, while their efficiency is almost the same.

1. Introduction

People using cameras to get pictures is a typical application of mobile crowd sensing. Nowadays, widespread smart devices are driving more and more people to use cameras as visual sensors to relook at this world. It raised a particular type of participatory sensing paradigm: Mobile Crowd Photographing (MCP) [1, 2].

MCP applications collect uploaded pictures and send them to the backend server for further collective knowledge mining. Typical MCP achievements include monitoring the pollution of creeks [3], reposting and sharing fliers distributed in urban areas [4], and gathering pictures of buildings for 3D city modeling [5]. These MCP applications assume that transmission is available anytime and anywhere. However, communication will become unavailable and the internet will become inaccessible in some cases, such as an emergency. For example, 36 cellular base stations were out of service due to the power cut caused by the Tianjin explosion accident in 2015 [6]. Then some MCP applications were developed to report scenes of emergency situations (e.g., disasters or fire) [7–9]. Also, because pictures are of large size (around 200 KB for a useable zoomed-out picture to 3 MB for a full-size picture taken by an iPhone 6S), they cost much network flow and money (about 0.29 RMB for 1 MB on average in China) when the commercial network is connected (e.g., 3/4G). Therefore, some researchers studied opportunistically forwarding pictures [10] with ER (Epidemic Routing) [11] based on some free and high-speed transmissions like Bluetooth, WiFi, and so on. Researches on Delay Tolerant Network (DTN) [9, 12] and Mobile Opportunistic Network (MON) [13, 14] are typical examples.

MON and DTN utilize cooperative Work Nodes (WN) to store, carry, and forward pictures, which has been mentioned by Uddin et al. [7], Weinsberg et al. [12], Jiang et al. [15], Zhao et al. [16], and Yu et al. [17]. Their works leverage the cooperative sensing scheme to eliminate sampling redundancy so as to save energy. Cooperative WNs refer to those participants who join in to collect or forward pictures in this paper.

In MCP, it is possible that participants perform the same task and collect similar pictures. As a result, not all WN-carried pictures are valuable. Then what kind of pictures are valuable? Different applications give different answers. For instance, in the disaster area after an earthquake, pictures of buildings taken from different directions and in different places might be more valuable than those taken with the same spatial context [7]. Valuable pictures refer to those about-to-receive ones that are different from carried ones. Therefore, in order to select valuable pictures, MCP applications will keep additional context information on saved pictures. However, existing picture dissemination schemes in MON/DTN are not sufficient since the utilization of photographing contexts has not been effectively explored.

Based on requirements of forwarding pictures through an opportunistic way, five important constraints for the solution are summarized as follows.

(1) Self-Driven Delivery. Under the submitting permission from participants, the self-driven picture delivery is more applicable comparing with the manual transmission. Therefore, opportunistic forwarding is applicable.

(2) Energy-Saving. Energy limitation has been stated in all mobile applications, and thus our method must keep balance between the energy-saving and performance.

(3) Coverage Assurance. Selecting representative high-quality data and then forwarding or uploading them is a more effective way to decrease the traffic and storage consumption, but only on the condition that the selection method guarantees the coverage of the selected pictures from those raw ones.

(4) Low Delay. People out of the observation area need pictures on the scene to know about the situation, so the receiving delay of these pictures must be as minor as possible.

(5) Useless Epidemic Termination. Whenever WNs encounter, they exchanges their pictures via an epidemic way. Once a picture is uploaded to the data center, those duplications of this picture carried by WNs are useless and thus should not be forwarded any more. In this case, useless epidemic must be terminated as soon as possible.

In order to address these constraints mentioned above, we will use data fusion or aggregation in our picture forwarding method. Picture data fusion in MCP is different from numeric data fusion (e.g., an average value of temperature) [18, 19] or splicing pictures in wireless sensor network [20], and it usually relies on a data selection scheme [7, 9, 15]. In other words, based on some selection constraints, the WN will only forward valuable pictures selected from a picture set union of this WN and others’ picture collections.

CooperSense is a novel cooperative and selective picture forwarding framework for varied MCP tasks. It leverages the cooperation of opportunistically encountered participants to decrease the delay of uploading pictures and utilizes epidemic picture routing with fusion to eliminate sampling redundancy. Specifically, the following contributions are mainly made:

Proposing a generic framework based on a cooperative data fusion to address the problem of how to select and forward high-utility picture for MCP tasks; we, especially, are the first to consider useless epidemic termination for energy-saving in its cooperative picture forwarding framework. Developing a novel and efficient tree model to structure the information of picture collection, named picture tree (PicTree), which satisfies various MCP task needs and constraints for data fusion; photographing contexts as locality, timestamp, and shooting angle are used to build this tree structure. Proposing a PicTree fusion based method for picture selection; without image processing, PicTree fusion can efficiently select valuable pictures and abandon useless ones.

We have developed a visualized simulator to simulate and analyze forwarding behaviors of WNs, including PicTree fusion based and Epidemic Routing based [11] (ER-based) picture forwarding schemes. Results show that CooperSense saves over 50% traffic and storage space comparing with the traditional ER-based method.

The rest of the paper will be organized as follows. Section 2 outlines the related work on common data fusion and picture selection and fusion methods for MCP. Section 3 presents the CooperSense framework and data models followed by PicTree-based picture set fusion method in Section 4. We will present and discuss experimental results in Section 5, and we conclude the paper and speculate about the future work in Section 6.

2. Related Works

CooperSense well addressed problems in the cooperative picture forwarding procedure in MON/DTN, which utilizes a data fusion method and a data selection method. In this section, we will introduce some related works about these two methods.

2.1. Data Fusion

Studies focusing on the issue of data forwarding in MON/DTN during a disaster/emergency either introduces DTN architecture to an efficient disaster response network [21] or eliminates redundancies in disaster related content [7] for fast message delivery out of the disaster zone. The performance of simple routing protocols (e.g., flooding) for data forwarding is generally poor [10]. Many generic opportunistic forwarding protocols, such as Epidemic Routing (ER) and its variations, for example, Epidemic Routing with Fusion (ERF) [16], have been proposed to route data among mobile nodes.

Picture data cannot be fused as numeric data can. For the visual sensor network, Chow et al. proposed the method to process and combine overlapping portions of pictures in neighbor sensors so as to reduce the energy spent on image transmission [20]. But, in MCP, battery capacity of mobile devices is limited. In order to save the power used on image processing, the representative pictures should be simply selected in the first place by mobile devices as a fusion result. Picture set fusion is regarded as picture selection, and we will introduce some related works in the following.

2.2. Picture Selection

MCP applications can measure similarity of pictures based on different features due to their various knowledge mining purposes. Available features include both visual features and photographing contexts [2], such as the shooting direction, the timestamp, the location, and the ambient light. For example, FlierMeet [4] collects crowdsourced photos of fliers for cross-space public information reposting, tagging, and sharing, and it only selects one picture of every flier based on the visual feature. PhotoCity [5] leverages a game-based incentive way to collect photos of buildings in cities for 3D city modeling, and it selects pictures of buildings taken from different directions. Photonet [7] and the work in [9] are disaster response applications, by using which a group of survivors and first responders may be able to survey the damage and send images to a rescue center without the access to functional communication infrastructure. These two applications focus on collecting visually different pictures based on the location and visual features. Therefore, in order to satisfy various situations of MCP applications, CooperSense will measure similarity of pictures based on the location, the shooting direction, and the timestamp for picture selection.

In order to save traffic and decrease the communication overhead, some MCP applications use a preselection way to forward valuable pictures, for example, PhotoNet [7] and MediaScope [15]. Preselection means that valuable pictures are selected by mobile clients before they are uploaded. However, those applications are application-specific and cannot address varied needs/constraints (e.g., spatiotemporal contexts, single or multiple shooting angles to a sensing target). COUPON [16] addresses data fusion in MON, but it is a generic and formal solution and needs further improvement for MCP. Therefore, CooperSense can address data fusion issue for MCP in MON and utilizes a data fusion for the preselection process. For MCP, data fusion is actually merging picture sets of different WNs.

2.3. Picture Set Fusion

For MCP, most applications will focus on how to efficiently assess similarity of pictures and select diverse pictures. For example, WNs of PhotoNet [7] a priori forward other pictures that can increase content-diversity of others’ picture sets. The work in [9] detects critical content (e.g., fires, road blocks) on the images through image processing, and those detected images with critical content can be forwarded faster than those without critical content in order to quickly address critical events. Mediascope [15] also selects different pictures based on their locations. Although they tried to decrease duplicate picture transmission through picture set fusion, the comparing algorithm to select pictures is queue-based, which is time-consuming. In order to improve the efficiency, CooperSense uses a faster tree-based algorithm to aggregate picture sets, which will be introduced in the following sections.

3. CooperSense Framework

3.1. An Overview

CooperSense aims to satisfy the sensing requirements of various MCP tasks. It uses an efficient and effective picture set fusion method and an overhead-saving selective forwarding method based on the picture set fusion. In this section, we will present three data models and the framework of CooperSense.

As shown in Figure 1, CooperSense has three-stage workflow: (1) the initiation stage, (2) the photographing and forwarding stage, and (3) the picture uploading stage. We will introduce the mechanism via the workflow at the WN side.

The framework of CooperSense contains three stages.

Firstly, in the initiation stage, when a person is entering the observed area, he/she will download task requirements to his/her smart device, namely, the WN. Since some tasks may be long-term, the WN can download requirements of both new tasks and old unexpired tasks. Meanwhile, in order to terminate the epidemic of useless pictures in time, the collection log of unexpired old tasks is also downloaded. The collection log is a PicTree structure and contains the information of carried and uploaded pictures. Both MCP server and WNs have collection logs. According to the collection log contributed by other WNs, any WN can delete useless pictures and forward useful pictures to other WNs in the next stage, that is, the photographing and forwarding stage. Here, useless pictures refer to WN-carried duplicates of uploaded pictures, so deleting them means terminating useless epidemic.

Secondly, in the photographing and forwarding stage, people take pictures according to tasks’ requirements, and WNs automatically forward valuable pictures to other WNs when they encounter. For instance, assuming that the WN wn1 encounters a WN wn2, then the forwarding consists of two steps: (i) wn1 sends its collection log to wn2 and wn2 merges wn1's collection log with its own, named PicTree fusion; (ii) wn2 can select pictures during the PicTree fusion procedure and send them back to wn1, named PicTree fusion based forwarding. Also, using this two-step procedure, wn2 sends pictures to wn1.

Finally, in the picture uploading stage, the WN leaves the observed area and uploads picture collections when the data center becomes accessible. Also, uploading picture is based on PicTree fusion to save traffic.

3.2. Data Modeling

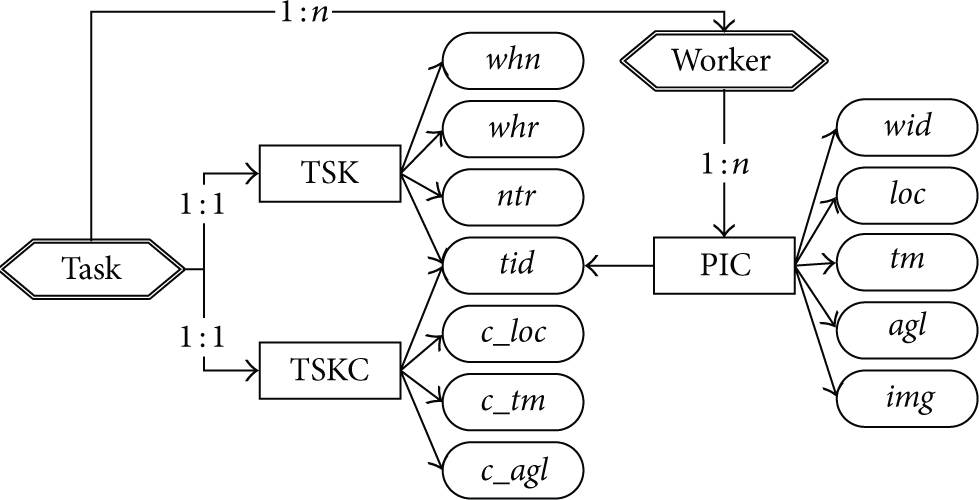

3.2.1. Task Model

An MCP task is denoted by TSK:

Since pictures taken in close proximity or time period may be highly similar, the implicit task constraints are defined for the picture selection in most MCP applications, for example, PhotoNet [7], Smartphoto [22], and Fliermeet [4]. The task constraint is denoted by TSKC:

3.2.2. Picture Model

A picture captured by a smart device is denoted with PIC:

3.2.3. PicTree Model

The picture set carried by a WN is denoted by

Layers and tree nodes’ structures.

Each layer of the PicTree is mapped onto a feature of the picture, so properties of tree nodes in different layers are denoted by different vectors. As shown in Table 1, most attributes of tree nodes correspond to attributes of both the task and the picture shown in Figure 2. Attributes (ts, te) in the layer

Data model relationship between pictures and a task.

Traditional tree fusion utilizes two operations, pruning and grafting. In order to terminate useless epidemic, we proposed four operations for PicTree fusion shown in Figure 3, namely, virtual or real grafting and virtual or real pruning. The tree node whose vLink is False means that it is virtual pruned, and its offspring tree nodes can be removed, that is, real pruned. Consequently, if vLink of the tree node is False, then, the fact that this node is grafted is called virtual grafting, and if not, the grafting is called real grafting.

Four kinds of basic operations for PicTree fusion.

4. Methodology

We use the PicTree structure to store the collection log, including all the context information of carried pictures. Through merging two PicTrees of two WNs, useful pictures can be selected while useless ones can be deleted. Next, we will introduce PicTree fusion followed by PicTree fusion based picture forwarding.

4.1. PicTree Fusion

4.1.1. Tree Node Fusion

PicTree fusion is a procedure of merging one PicTree with another PicTree through merging or grafting tree nodes. The root node is meaningless and tree nodes mentioned in this paper do not include the root node.

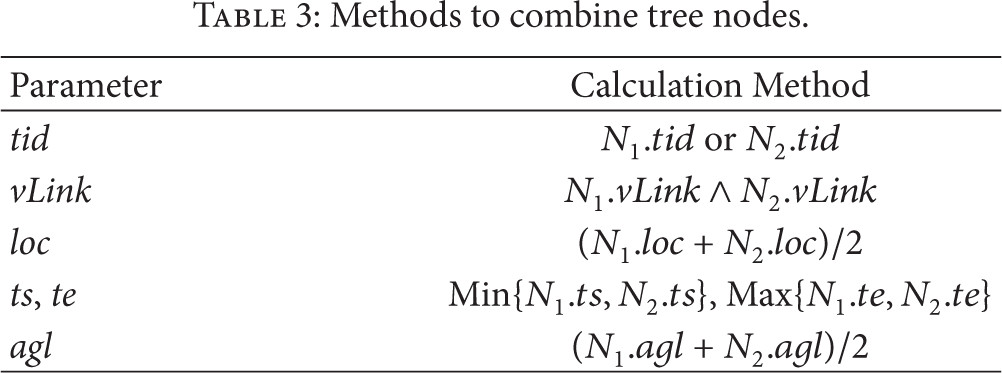

In order to merge PicTrees, two methods are proposed as follows. A method to assess the similarity of two tree nodes: as shown in Table 2, A method to value parameters of the merged tree node: if two tree nodes are merged, parameters of the new tree node will be valued with methods shown in Table 3.

Conditions for accessing similarity of two tree nodes

Methods to combine tree nodes.

Further, there are seven basic fusion rules for tree node fusion as follows: Any nonleaf tree nodes can be merged if they are similar. If a picture is uploaded to the data center, its container leaf node's parent node will be virtually pruned. If two leaf nodes are similar, then the older one (i.e., the stored picture's timestamp is earlier) will be abandoned. So the leaf node has no siblings. Child nodes of a virtually pruned tree node is useless, and they will be really pruned, that is, deleted. The virtually pruned node (i.e., vLink = False) has the strongest epidemic capability. Any node can be grafted to a PicTree only if it is different from other nodes of this PicTree. Nodes of two branches are merged from top to bottom like a zip until nodes on a certain layer cannot be merged.

According to the conditions and valuation methods shown in Tables 2 and 3, we will use an example to show the PicTree fusion based on fusion rules mentioned above as well as to explain two operations: pruning and grafting. As shown in Figure 4, the WN wn2 and the WN wn3 combine their PicTrees with the PicTree Pruning. The node Grafting. Nodes

PicTree fusion of CooperSense. The dashed line means vLink = False. The left fusion is a pruning process while the right fusion is a grafting process.

Tree node fusion is a fundamental process in PicTree fusion. Next we will explain depth-first PicTree fusion algorithm.

4.1.2. Depth-First PicTree Fusion Algorithm

PicTree fusion uses the depth-first search. Given two PicTrees

Calculate other parameters of

haveCombined = False;

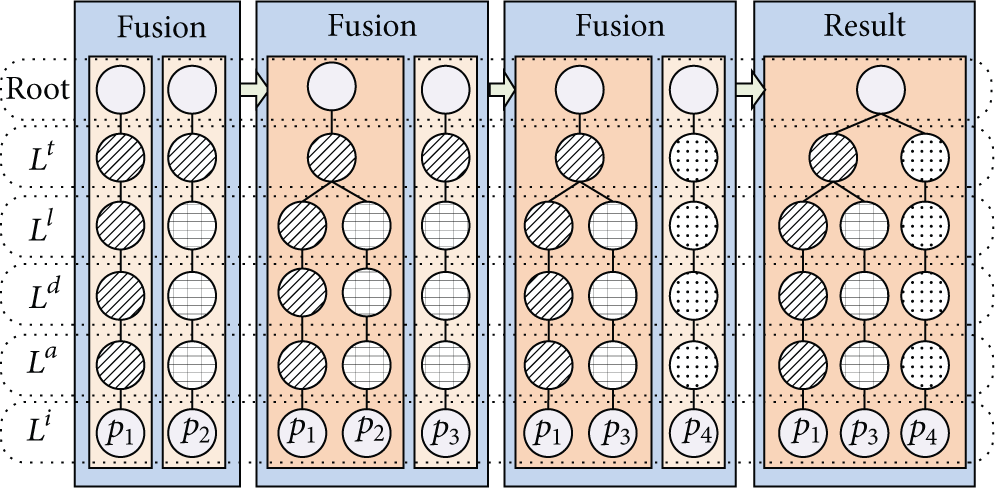

4.1.3. Fusion-Based PicTree Generation

PicTree fusion can be used not only for multi-WNs but also for single WN. Each WN has a PicTree, which is initially copied from the data center and further grows during the picture-taking and picture forwarding procedures.

Given a task (TSK) tsk and its task constraints (TSKC) tc, when a picture PIC p of this task is taken, a single-branch PicTree will be generated. Tree nodes of this new one-branch PicTree are initiated according to Table 1. vLinks of all tree nodes are valued True, L

d

.ts = p.tm, and L

d

.te = p.tm. Other parameters of tree nodes are equal to attributes of tsk, tc, and p, such as L

l

.loc = p.loc, L

l

.c_loc = tc.c_loc. The PicTree will grow by merging the old PicTree and the new one-branch PicTree. If there are multitasks and more than two pictures, a complex multibranch PicTree will be generated through the PicTree fusion. As shown in Figure 5, the PicTree is generated with

A PicTree is generated through three fusions from left to right with four pictures. Same colored nodes in the same layer are similar nodes.

4.2. Fusion-Based Picture Forwarding

In this section, how to utilize PicTree fusion to forward useful picture and terminate useless epidemic will be explained.

4.2.1. A PicTree Fusion Example

Both virtual/real grafting and virtual/real pruning are utilized for three purposes. (i) The picture file will be forwarded only if there is a real grafting and the picture is sent from the subbranch provider to the subbranch receiver. (ii) The accomplished task and copies of uploaded pictures will be eliminated through the virtual grafting and the virtual pruning. (iii) The storage space is saved through the real pruning.

Figure 4 basically explained the PicTree fusion, but how to utilize this fusion to forward pictures is still a question. Another PicTree fusion example was shown in Figure 6. wn1 and wn2 are two working WNs. Picture sets carried by them are

PicTree fusion of CooperSense. There are 3 WNs

4.2.2. Picture Forwarding

When two WNs encounter, they would like to receive pictures that they do not have. In order to select pictures, each WN costs a very small amount of traffic to receive the collection log from the other WN, and according to the collection log, the WN knows which picture needs be sent to and received from the other.

As shown in Figure 6, before these three WNs encounter, the effective pictures carried by wn1, wn2, and wn3 are

4.2.3. Useless Epidemic Termination

The information of both accomplished and unaccomplished tasks is downloaded by WNs. In order to mark the accomplished task, a leaf tree node in the layer L t corresponding to the task is created and its vLink is set to False. By the aid of the PicTree fusion, other WNs in the observed area will prune the branch mapped with this accomplished task. Furthermore, the WN will download the PicTree of uploaded pictures, whose vLinks of all tree nodes on the L a layer are valued False. Here those virtually pruned nodes mean that the picture with the context (denoted by tree nodes of this branch) has been uploaded to the data center already.

As shown in Figure 6, the task

5. Performance Evaluation

In this section, we will evaluate the performance of CooperSense from different facets, including overhead, efficiency, traffic, and storage.

5.1. Simulation Setup

The Legion Studio [23] traces we use in this study are available at CRAWDAD [20], which are microsimulation of pedestrian mobility Contributed in the Web. They are simulated data set by the work in [13]. These traces are from a simulation of walkers in a part of downtown Stockholm in which several parameters are varied. Sparse and medium-density dataset are used here. The Ostermalm area consists of a grid of interconnected streets shown in Figure 7 and 4 MCP tasks are defined with 4 interesting points. Twelve entrances connect the observed area with the outside world. The active area of the outdoor scenario is 5,872 m2.

Simulation scenarios. (a) The electronic map of the observed area. It is Ostermalm area in downtown Stockholm. (b) Simulated moving WNs and interesting points. And medium-density WNs are simulated and shown in the map.

We started collecting data after a warm-up period because the system starts empty. After that we kept collecting observations until no WN forwarded pictures any more. The reason for that is a WN will not take and forward pictures when a WN learns that the task is accomplished from others through exchanging collection logs, and some WNs will still perform tasks when the data center has already got plenty of pictures for the task. The simulator of CooperSense is shown in Figure 8. In the simulation zone, the WN denoted by a color circle is moving according to the trajectory dataset of CRAWDAD [24].

The interface of the simulator of CooperSense (CS: CooperSense; ER: Epidemic Routing). Medium-density WNs are used.

In order to simulate the forwarding behavior of the WNs, we define four rules as follows:

The data and task centers are connected with the outside world, so the data center can receive task requirements from the task center. If a WN is close to the interesting point (i.e., whr of a task) enough, then it will take pictures as a working WN. A WN might take multiple pictures from different positions for one task, and shooting angles will be computed based on this WN's trajectory with the range When enough pictures have been uploaded to the data center, the corresponding task will be marked as accomplished, but the WN might still upload pictures. Once two WNs are close enough, only if the link duration time is larger than the transmission time, then data delivery can be finished.

5.2. Baseline and Metrics

ER (Epidemic Routing) [11] is a popular opportunistic forwarding approach, and we will compare CooperSense with it. In the simulation, each WN of ER has a set of pictures and a set of tasks. The flag of the accomplished task is set to False, all unexpired tasks are downloaded like CooperSense, and each WN forwards pictures and tasks’ flags.

Different metrics are defined in this paper to evaluate the method, including traffic-saving, storage-saving, efficiency, effectiveness, and coverage. Detailed metrics are defined in the following sections along with the experimental results.

5.3. Experimental Result

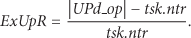

5.3.1. Energy-Saving Evaluation

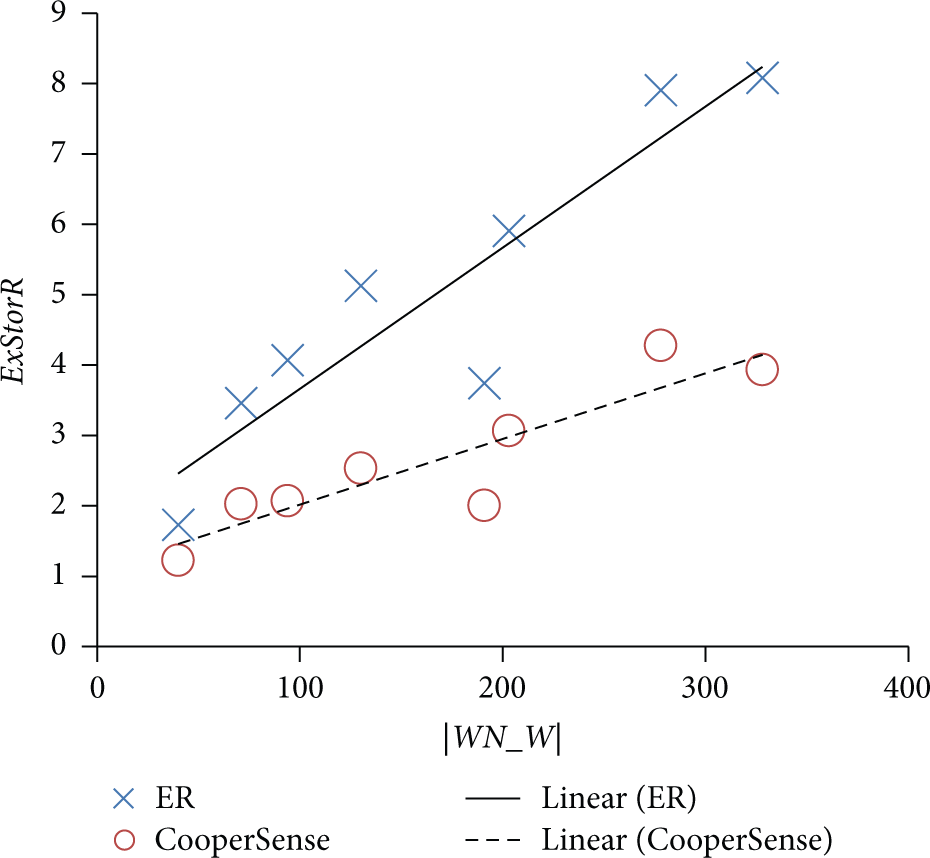

Data transmission is the most energy-consuming process, so we use traffics of forwarding pictures (denoted by TffC) and ratio of extra uploaded pictures (denoted by ExUpR) to evaluate the energy-saving performance. ExUpR denotes the ratio of extra uploaded pictures calculated with (1), where UPd denotes the set of uploaded pictures, UPd_op denotes the optimal diversified subset [2] of UPd that contains valuable pictures, and tsk is a task TSK:

WN_W denotes the set of working WNs in the observed area. Because different WN enters and leaves uncertainly, WN_W at different time points are different. So |WN_W| in the experiment has an average value.

As shown in Figure 9, TffC of ER is much higher than that of CooperSense, especially when |WN_W| is high. This is due to the fact that more pictures will be forwarded when the density of WN increases and ER creates more useless forwarding.

Experimental result of comparing the traffic-saving performance of CooperSense and ER. All tasks are accomplished.

ExUpR are utilized to evaluate the extra overhead. Epidemic termination is the way to decrease useless forwarding, which is evaluated by the metric ExUpR. As shown in Figure 10, most ExUpRs of both ER and CooperSense are close and less than 50%, but sometimes ExUpR of CooperSense is much lower than that of ER.

Experimental result of comparing the traffic-saving performance of CooperSense and ER. All tasks are accomplished.

Through comparisons of TffC and ExUpR between ER and CooperSense, it is obvious that the latter can save more traffic overhead.

5.3.2. Storage-Saving Evaluation

ExStorR denotes extra storage overhead ratio calculated with (2), where RP refers to the set of raw pictures taken by cameras and CP denotes to the set of pictures (including copies) carried by all WNs:

As shown in Figure 11, ExStorR of ER is much higher than that of CooperSense. Therefore, CooperSense saves storage overhead more effectively. CooperSense uses PTree-fusion to stop forwarding redundant pictures, so the storage overhead and the traffic overhead are saved. Although ER can also use variables to mark already uploaded pictures and find duplicate pictures, for MCP, its traffic overhead is still higher than CooperSense's because WNs of ER must send complete PICs while WNs of CooperSense only need to send a certain part of PICs according to the PTree-fusion context.

Experimental result of comparing the storage-saving performance of CooperSense and ER. All tasks are accomplished.

5.3.3. Efficiency Evaluation

Delay of uploading pictures is used to evaluate the efficiency of opportunistic forwarding method. AvgDelay refers to the average delay of uploaded pictures calculated with (3), where tArr denotes the timestamp of the picture being uploaded to the data center. TmC denotes average time-consumption of a task:

Efficiency evaluation is shown in Figure 12, where both AvgDelay and TmC of ER and CooperSense are close. Generally, ER is an efficient way to upload pictures, but the result shows that CooperSense provides a similar efficiency as ER can do. On the one hand, although WNs of CooperSense forward fewer pictures via the opportunistic network than those of ER do, CooperSense's efficiency for task performing is not influenced. On the other hand, both AvgDelay and TmC decline as |WN_W| increases, so the efficiency of opportunistic forwarding method cannot be improved by increasing the number of PIC copies but by involving more WNs. Hence the selective forwarding mode utilized by CooperSense consumes less resource on the promise of efficiently accomplishing tasks.

Experimental results of the efficiency of CooperSense and ER.

5.3.4. Effectiveness Evaluation

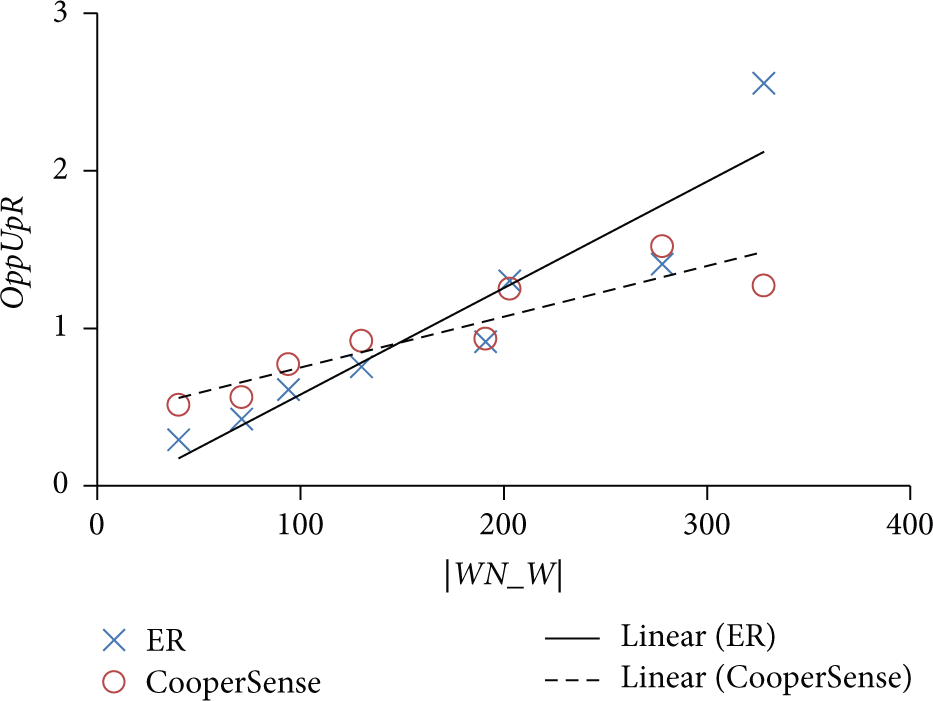

In order to evaluate the effectiveness of our method, we define metrics to indicate how much opportunity of uploading pictures our method can utilize. OppUpR denotes the ratio of opportunistically uploaded pictures to the data center calculated by (4), where the picture set UPo (UPo ⊆ UPd) consists of pictures that are not uploaded by their photo-takers:

Effectiveness evaluation result is shown in Figure 13. OppUpR of them have no clear difference; therefore, CooperSense and ER have similar effectiveness of opportunistically uploading pictures. Additionally, but when |WN_W| increases, OppUpR of them increases because more people could opportunistically carry pictures.

Comparison between ER and CooperSense on the ratio of opportunistically uploaded pictures.

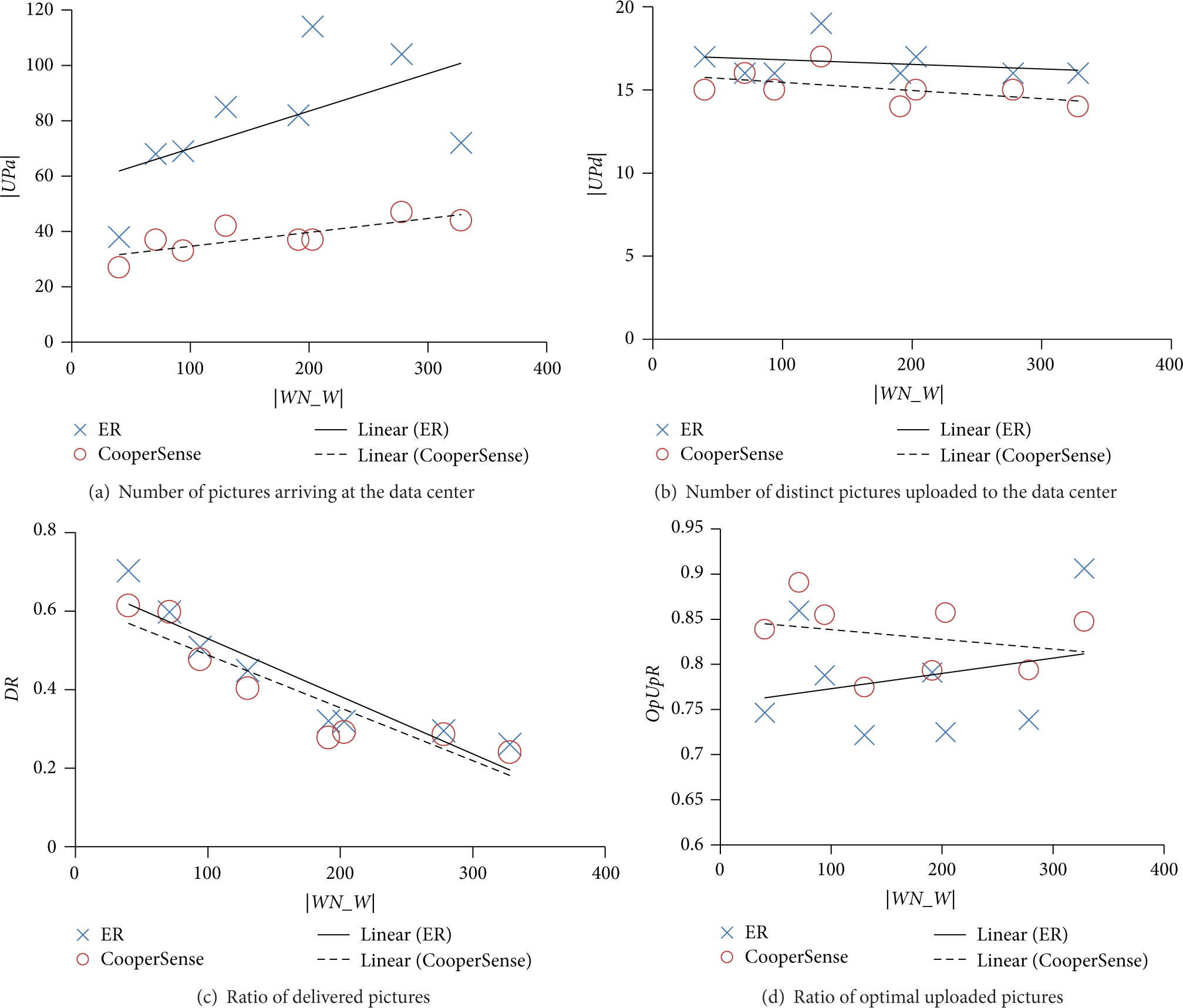

5.3.5. Coverage Evaluation

Coverage evaluation is based on four metrics: |UPa| is the number of those pictures that are carried out of the observed area, |UPd| is the number of uploaded pictures, DR denotes the ratio of delivered pictures calculated with (5), and OpUpR denotes the ratio of optimal uploaded pictures calculated by (6):

The comparison results are shown in Figure 14. |UPa| of ER are much larger than that of CooperSense and |UPd| are similar, which proofs that CooperSense can save storage overhead comparing with ER. Additionally, since |UPa| of CooperSense is less than that of ER but OpUpR of CooperSense is a little higher than that of ER, this means that UPd of CooperSense is more diverse than that of ER.

Experimental result of comparing the performance of CooperSense and ER.

As experimental results show, CooperSense almost has the same picture collection capability of ER while it saves much traffic and storage overhead comparing to ER. The PTree-fusion method utilizes a tree structure to store picture collection logs and PTree-fusion is an efficient way to exchange different data among WNs for MCP in MON. Therefore, our method is flexible, which can selectively forward valuable pictures without complex computations.

5.4. Discussion

CooperSense forwards pictures through exchanging PicTree of encountered worker nodes, and there are still some issues that are not explained in this paper, some of which are listed below:

The visual feature is not used during the PicTree fusion. In this paper, we do not include the visual feature of pictures to assess the similarity because the visual similarity measurement is highly related to the application. However, the image layer can be easily inserted when it is needed in real applications because of the flexibility of PicTree construction. Some works on opportunistic forwarding leverage the prediction of people's position to select the right people to carry and forward data, which is more adaptable for delay tolerant tasks. Since reducing delay is the goal of this scenario, we do not use this method in this paper, but it may be utilized in our future work. Although an emergency scenario is used to test our work, cooperative crowd sensing is also applicable in some crowed spots, such as in a thousand-attendee conference, a football match, or a singer show. Defining a task and its task constraint, using CooperSense, people can receive various pictures shared by others nearby who have better or different views without using the limited and narrow-bandwidth cellular communication.

6. Conclusion and Future Work

Above all, CooperSense is a novel cooperative and selective data forwarding framework for MCP. In this paper, the PicTree model and its fusion method were proposed for participant collaboration and selective data forwarding. Experiment results indicate that CooperSense has higher performance in terms of storage and network cost than ER. It is planned to be used in city-sense project for large-scale user study, and all the methods will be further improved accordingly.

Footnotes

Competing Interests

The authors declare that they have no competing interests.

Acknowledgments

This work was partially supported by the National Basic Research Program of China (no. 2015CB352400) and the National Natural Science Foundation of China (no. 61332005, 61373119, 61222209).