Abstract

Diverse applications urgently require novel and effective engines for data analysis in integrated information systems, where massive business data must be analyzed to capture various situations in real time. Existing engines have limited data processing capacity in distributed computing. Although Complex Event Processing (CEP) has enhanced the capacity for data analysis in information systems, it remains a challenging task because the number of events is growing rapidly across applications. This paper introduces a lightweight intelligent data analysis system with a novel CEP engine named LIDA-E, which employs a knowledge base with rules and an event processing algorithm for analysis. Event models and operators support the rules for event selection and aggregation. These operators and rules are used to construct a new CEP system architecture that combines expressiveness and efficiency in analysis. The architecture explicitly adopts the concepts of agents and a filter to provide an efficient event transmission mechanism. Finally, a comparison between the proposed engine and an existing engine shows that LIDA-E reduces time cost by 48.65% on average across different tests. The experimental results demonstrate that the developed architecture performs better in both transmitting and analyzing a large number of events.

1. Introduction

In recent years, the capacity and usage of information systems have been increasing, and these systems are integrated primarily to support business processes [1]. The volume of data generated by a variety of heterogeneous sources has increased worldwide [2, 3]. As a result, systems need to collect and analyze huge amounts of data and events efficiently and in real time to discover meaningful results.

Data analysis is a process of inspecting, cleaning, transforming, and modeling data with the goal of discovering useful information, suggesting conclusions, and supporting decision-making [4]. It is increasingly vital to the success of business information systems. Therefore, extremely efficient and flexible data analysis platforms are needed to manage and process such data sets [5]. Traditional methods are not suited to the volume and variety of the data, so a new efficient solution for data processing is urgently needed.

Some reliable approaches are available for data processing [6, 7]. The well-known MapReduce paradigm has been widely used in both academia and industry. It is a programming model and software framework developed by Google that simplifies the processing of vast amounts of data in parallel on large clusters of commodity hardware in a reliable, fault-tolerant manner [6]. However, Hadoop and MapReduce are not suitable for processing event streams. They were designed for offline batch processing of static data, in which all input data must be stored on a distributed file system in advance. Storm is another efficient solution [7]. It is a distributed, reliable, and fault-tolerant system used particularly for processing continuous real-time data streams. Its default scheduler employs a simple round-robin algorithm across the cluster without considering internode and interprocess traffic, which may have a significant impact on performance [8]. In addition, the default scheduler in Storm often uses all available nodes in a cluster regardless of workload, whereas for a light input stream workload the operational cost can be reduced by using a single node [9].

Therefore, a solution for real-time processing of large-scale web data stream with less cost should satisfy four requirements. They are as follows.

However, the volume of data created by integrated information systems is enormous and exceeds the processing capabilities of such systems. A new solution is needed to process the continuous data in real time and to discover the exceptions, threats, and actionable information behind the data, and Complex Event Processing (CEP) is a good candidate. Applying the CEP approach could provide an adequate solution: it enables access to real-time information while providing advanced query capabilities and minimal perturbation [10, 11]. Therefore, CEP could be used in intelligent data analysis to enable real-time processing and situation detection, leading to a new quality of system.

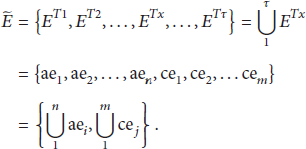

In general CEP applications, the engine processes events according to rules and detects different situations among the events. Rules are defined by the system administrator at build time; they form the component of the CEP engine that stores the composite constraints of complex events. The general architecture of such CEP applications is shown in Figure 1. It works in three steps. First, the streams of incoming events are captured by the CEP engine (run time). Second, event patterns and CEP rules defined by users (build time) are applied in the CEP engine to analyze the event streams and detect meaningful data (run time). Finally, immediate actions are triggered by the CEP engine (run time).
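The three steps can be sketched in Java as follows. This is only a minimal illustration under stated assumptions, not the design of any particular engine: the names `SimpleEvent`, `CepRule`, and `MiniCepEngine` are hypothetical, rules are plain predicates, and an "action" is simply recorded as a string. It assumes Java 16 or later for `record`.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Predicate;

// Hypothetical event: a type label plus a numeric payload.
record SimpleEvent(String type, double value) {}

// Hypothetical rule defined at build time: a name and the pattern it matches.
record CepRule(String name, Predicate<SimpleEvent> pattern) {}

class MiniCepEngine {
    private final List<CepRule> rules;                          // loaded at build time
    private final List<String> triggered = new ArrayList<>();   // actions fired at run time

    MiniCepEngine(List<CepRule> rules) {
        this.rules = List.copyOf(rules);
    }

    // Step 1: capture an incoming event.
    // Step 2: match it against every rule.
    // Step 3: trigger an action (here only recorded) for each matching rule.
    void onEvent(SimpleEvent event) {
        for (CepRule rule : rules) {
            if (rule.pattern().test(event)) {
                triggered.add(rule.name() + ":" + event.type());
            }
        }
    }

    List<String> triggeredActions() {
        return List.copyOf(triggered);
    }
}
```

A real engine would also keep state across events (windows, sequences); this sketch evaluates each event in isolation.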

Figure 1: General architecture of CEP application.

Building on traditional CEP technology, we aim to build a lightweight, modularized, and extensible architecture that improves the data analysis capability of integrated information systems through the CEP approach. In particular, a novel CEP engine is clearly needed to deal with the large number of input events from integrated systems. Starting from these premises, a lightweight intelligent data analysis system (LIDAS) is proposed. Its CEP engine, LIDA-E, is developed to provide event processing services. To represent the relationships and operations of events, we propose event models with operators that describe event rules through an Event Processing Language (EPL). The CEP engine is an important component that adopts an efficient event processing algorithm and translates rule statements into rule instances for processing input events. Inside the system, we introduce a data knowledge base for storing and presenting business data knowledge, as well as a rule base for storing rules. A distributed event transmission mechanism is presented for event delivery from information systems (agents) to the CEP engine (filter).

As a solution, we propose LIDAS so that the administrator, who may have no knowledge of CEP, can concentrate on defining event rules without writing any code by hand. The contributions of this paper can be summarized as follows:

(1) Event models define the semantics of both atomic and complex events. They allow easy adaptation to information system needs and are used to describe event aggregation in event processing rules.

(2) The high-performance CEP engine LIDA-E, which employs an event processing algorithm and a knowledge base, is introduced for intelligent data analysis in real time.

(3) A distributed event transmission mechanism is proposed, based on agents (deployed in information systems) and a filter (deployed in the LIDA-E engine), explicitly conceived to realize easy and efficient event delivery.

(4) We discuss the design and implementation of the LIDAS architecture, which integrates the LIDA-E engine and the event transmission mechanism.

The rest of the paper is organized as follows. In Section 2, we discuss related works of CEP technology. Section 3 describes the relevant concepts of LIDAS, such as event model, operators, and SQL-like Event Processing Language. The proposed architecture is presented in Section 4. Section 5 shows the experimental results. Finally, in the last section, we summarize our research work.

2. Related Work

CEP is regarded as the extraction of complex situations from massive events and the reaction to them [12]. CEP is not a new term; it was first introduced by Luckham [13]. It has been widely exploited in recent years, mostly in big data analytics [5], RFID [14], Internet of Things (IoT) [15], failure prediction systems [16], real-time grid monitoring [11], and healthcare [17]. Building on CEP, different algorithms have been proposed to increase Complex Event Processing capability. Some works adopt an existing CEP engine (such as Storm [7], Drools [18], and Esper [10, 19]) in their applications, while others design new event processing engines and system architectures [11, 12, 15, 16, 20] and evaluate them to show their usefulness and scalability.

Storm is a free and open source distributed real-time computation system that makes it easy to reliably process unbounded streams of data in real time [7]. It includes spouts and bolts, which can be executed as many tasks in parallel on multiple machines (worker nodes) in a cluster [8]. Storm is designed to process unbounded streams of data in a Storm cluster (a master node and worker nodes). However, as discussed by Xu et al. [8], Storm pays little attention to performance and job assignment under different workloads. Moreover, deploying Storm requires more nodes.

In contrast to Storm, Drools [18] and Esper [10, 19] are CEP engines that include a module providing native support for event evaluation and temporal logic analysis on a single worker node. Yao et al. [18] presented a Drools-based CEP framework that was used to process surgical events and provided sense-and-response capability for hospitals. The authors note that the knowledge involved in CEP is quite complicated: most of it comes from experts, is fuzzy, and is hard to verify for accuracy. To combine knowledge with a CEP system, Bruns et al. [10] describe a novel event-driven architecture for a decision support system, which leads to a new quality of intelligent and flexible M2M systems. The system adopts Esper as the core event processing component and employs a rule base to explicitly represent the M2M knowledge of domain experts. Therefore, CEP combined with a rule base is an appropriate candidate for intelligent data analysis.

However, with the development of web technology, the open source engines have become less efficient at dealing with huge volumes of data. To obtain higher performance, some specialized CEP engines have been developed for particular domains (LiSEP [12], DPCEP [15], and RTE-CEP [20]). Zappia et al. [12] described the design and implementation of a lightweight and extensible Complex Event Processing engine, called LiSEP, for sensing and responding applications. The authors adopted the Staged Event-Driven Architecture (SEDA) principles to clearly separate core event processing logic from lower level resource management issues. However, neither an event model with operators nor event collection is designed for LiSEP, and its event processing method is not clearly described.

Combining CEP with distributed systems, GEMINI2 [11] was proposed as a custom framework dedicated to CEP-based real-time monitoring of grid infrastructures. In GEMINI2, 100 sensors loaded 400 events in one second, and the performance of the CEP engine was not evaluated. The performance of working nodes and servers could not support massive event transmission.

In summary, most CEP system architectures used in existing distributed environments are not oriented towards real-time processing for integrated information systems. Work on the prevailing CEP engines tends to present event processing methods rather than explore event processing performance; these engines are suitable for processing event streams but are not well suited for analyzing massive data in integrated systems. The work described here builds on previous investigations of CEP and intelligent data analysis in distributed networks.

3. CEP for Intelligent Data Analysis

3.1. Application Scenario

A Project Management Information System (PMIS) is one important type of Computer-Based Information Systems (CBIS) and is used to collect, process, analyze, and publish the data for a particular purpose [21]. Project management (PM) is a knowledge-centric and experience-driven activity supported by an appropriate PMIS [22]. PMIS is an integrated information system that helps project manager to carry out projects systematically and monitor progress of projects closely.

A project life cycle is divided into many states, such as proposal, review, implementation, and acceptance states. Starting from project proposal to implementation, Taghavi et al. [23] proposed a web based project management functional model that separates project work progress management into time, fund, quality, contract, bidding, demand, integrity, comprehensiveness, handover, and accomplishment. Each project state is supported by different subsystems, which are combined together as a PMIS. According to project states, we propose a project state model which shows the state changes in a project life cycle. In Figure 2, “−” is a start state and “+” is an end state; others are middle states (proposal, review, implementation, and acceptance); they represent the whole life cycle of a project. In different project states, systems verify (

Figure 2: Project state model.

Given the event attributes in PMIS, constraints are necessary for analyzing the availability and validity of data when events are triggered by user activities. Some rules for data analysis are elaborated in the following. Rule 1: monitor the active state of a subsystem. Rule 2: examine the number of document examinations of a project in one day. Rule 3: examine whether the number of projects of a manager is above a defined value.

3.2. Event Model

Following the definition of an event by Luckham and Schulte [24], a large number of events occur inside or outside a system during multiple phases, such as making a business transaction, receiving an email, creating a sales report, or uploading a file. Events are extracted from web services, user activities, system logs, databases, and so on. They are divided into atom events and complex events: atom events are identified through data collection, and complex events are detected by analyzing the atom event stream [25, 26].

Definition 1 (atom event).

An atom event denotes a specific activity or data transition that occurs at a point in time. It is represented by a six-tuple, $(AEid, Pid, A, S, T, t)$, whose elements are as follows:

AEid is the identifier of an atom event. Pid is the identifier of a project. A is the set of project attributes and data. S is the source from which the event is generated. T is the event type, and t is the timestamp that identifies when the atom event occurs. In the project management system example, each user activity or operation in the system generates an atom event. Atom events usually represent the state of a business attribute or a change to it.
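As an illustration, the six elements of Definition 1 could be carried by a small immutable Java type. This is a sketch only: the field types (strings, a map for A, epoch milliseconds for t) are assumptions, since the definition does not fix them, and `AtomEvent` is a hypothetical name. It assumes Java 16 or later for `record`.

```java
import java.util.Map;

// Sketch of the atom event six-tuple; all field types are assumptions.
record AtomEvent(
        String aeId,                     // AEid: identifier of the atom event
        String pid,                      // Pid: identifier of the project
        Map<String, Object> attributes,  // A: project attributes and data
        String source,                   // S: source that generated the event
        String type,                     // T: event type
        long timestamp) {                // t: time of occurrence (epoch millis assumed)

    AtomEvent {
        // Defensive copy so the event stays immutable after creation.
        attributes = Map.copyOf(attributes);
    }
}
```

For example, a document examination in a review subsystem might be written as `new AtomEvent("ae-1", "p-42", Map.of("doc", "plan.pdf"), "PMIS-review", "DocExamine", 1000L)`; all of these values are made up for illustration.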

Definition 2 (complex event).

A complex event is a combination of atom events or complex events formed according to predefined rules, such as constraints, logical relationships, and time sequence combinations. It is also denoted by a six-tuple:

CEid is the identification of a complex event. C is a combination of the atom events and complex events that trigger this event to happen, where

Definition 3 (event type).

The set of all similar events is called the event type T. It shows the object which triggers the event. Hence,

Events in an event type have the same attributes of T. For instance, the type may refer to events regarding information verification; however, the other event properties may be different (e.g., source or attribute). An example is the type of all events that denote the submitted information.

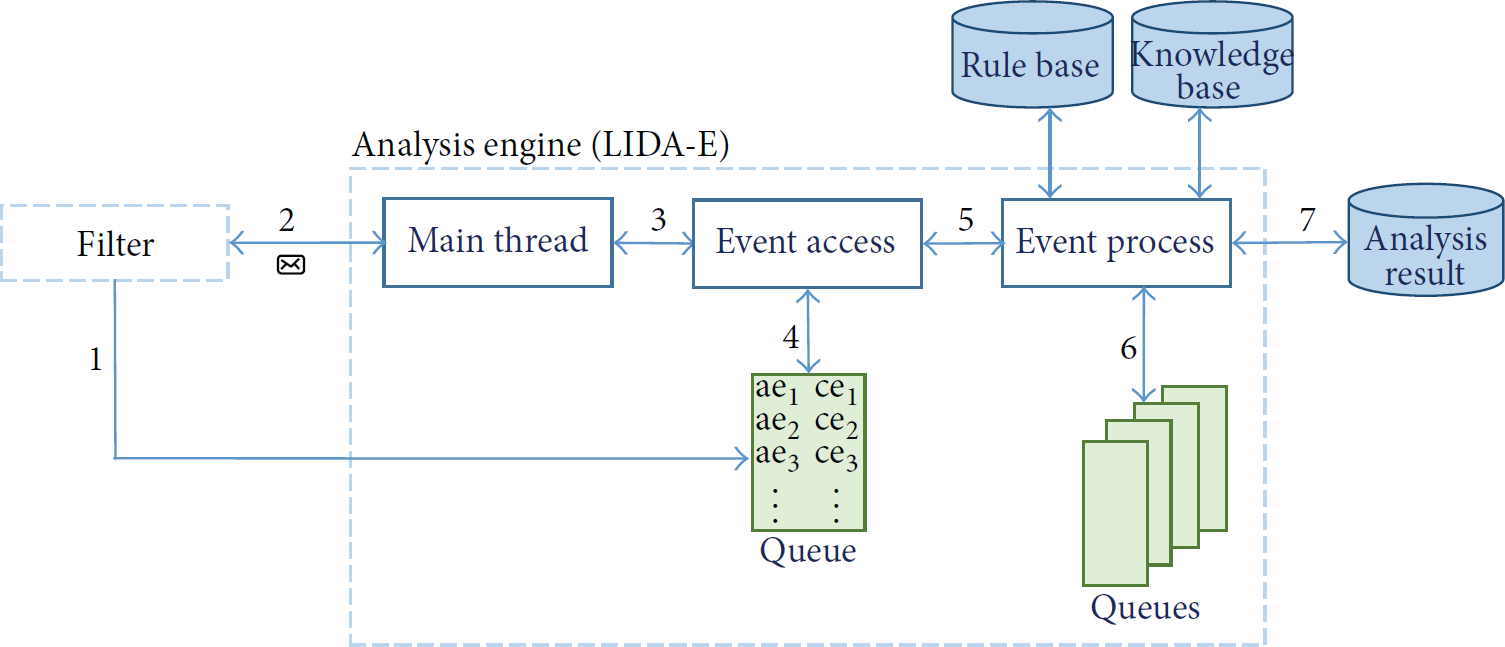

Definition 4 (event space).

The set of all possible events known from a certain information system is called the event space

The event space is formed by all the sets of event types, as well as all the atomic events and complex events. For example,

3.3. Operators

This section describes the operators for aggregating events. They are used to show the relationship among detected events. We extend them from event constructors [18, 27], which consider the aggregation demands on event composition. We present three types of operators: logical operator, mathematical operator, and temporal operator. Table 1 shows the most frequently used operators for event detection. Atom and complex events are both denoted by e.

Table 1: Event processing operators.

These operators are used to aggregate events and detect complex patterns that catch the meaningful information from real-time data streams. For example, an event pattern WITHIN

3.4. Event Processing Language and Rules

Event Processing Language (EPL) provides a set of patterns such as filtering, correlation, applying constraints, and aggregation. These patterns define how events can be queried and filtered. An EPL statement is a description of the event operators that are used to derive and aggregate information from event streams. The authors in [27] examined twenty EPLs to compare their operators and consumption modes. In line with the proposed event operators and consumption modes, we employ the Esper EPL syntax [28] for specifying event processing rules supported by event operators in this investigation. The EPL syntax is presented in the following:

    select select_list
    from stream_def [as name] [, stream_def [as name]] [,…]
    [where search_conditions]
    [group by grouping_expression_list]
    [having grouping_search_conditions]
    [output output_specification]
    [order by order_by_expression_list]
    [limit num_rows]

The above EPL syntax includes some query clauses that are introduced by Ahmad et al. [29]. The select clauses are used to select all properties or to specify the list of event properties and expressions. The “from clause” indicates event stream name. The “where clause” is an optional clause used to join and correlate event streams. The “group by clause” separates the output events into groups. The “having clause” is used in combination with the “group by clause” to restrict the groups of returned rows to only those whose condition is true. Adopting EPL syntax, rules declared in Section 3.1 can be represented in a standard structure as follows:

Rule 1: MissingEventAgent

SELECT COUNT(e.Sid) WITHIN(Require Time) IF (COUNT(e.Sid) = 0) THEN

Rule 2: UserRepeatDocExamine

SELECT COUNT(e.Pid) WHERE e.TYPE = ‘DocExamine’ WITHIN(1 day) IF ANY(e.COUNT(e.Pid)) > LIMIT_DOCEXAMINE THEN

Rule 3: ProjectManagerExamine

SELECT COUNT(e.Manager) WHERE e.TYPE = ‘SubmitProjectInfo’ ∧ e.Manager = IF COUNT(e.Manager) > LIMIT_MANAGER THEN

We assume that the above rules tell the analysis engine what to do with event streams. To this end, the engine executes rules intelligently by adopting a knowledge base. The knowledge base provides intelligence for the engine, as it contains rich parameters for both the engine and the rules.

For example, LIMIT_DOCEXAMINE in Rule 2 is defined by the LIDAS administrator. Its value is set according to the runtime state of the document examination server. Rule 2 is constructed to balance the capacity of the server across projects and to detect malicious document examination requests. It specifies that the trustworthiness of document examination requests should be continuously monitored. A notification is generated as soon as the value rises above a given threshold. Rule 3 analyzes how many uncompleted or submitted projects a manager has in the system. If the number of a manager's projects exceeds the limit, the analysis engine creates an error action.
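To make the intent of Rule 2 concrete, the following Java sketch counts DocExamine events per project over a one-day window and reports when the count rises above LIMIT_DOCEXAMINE. It is one possible reading of the rule, not the LIDA-E implementation: the class name `DocExamineRule` is hypothetical, the window is interpreted as a sliding window, and events are assumed to arrive in timestamp order.

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.HashMap;
import java.util.Map;

class DocExamineRule {
    private static final long ONE_DAY_MS = 24L * 60 * 60 * 1000;

    // LIMIT_DOCEXAMINE; in LIDAS this parameter would come from the knowledge base.
    private final int limitDocExamine;
    // Timestamps of recent DocExamine events, kept per project (Pid).
    private final Map<String, Deque<Long>> timestampsByProject = new HashMap<>();

    DocExamineRule(int limitDocExamine) {
        this.limitDocExamine = limitDocExamine;
    }

    // Returns true when the project has more than LIMIT_DOCEXAMINE
    // DocExamine events within the last day. Assumes non-decreasing timestamps.
    boolean onEvent(String pid, String type, long timestampMs) {
        if (!"DocExamine".equals(type)) {
            return false; // the WHERE clause only selects DocExamine events
        }
        Deque<Long> window = timestampsByProject.computeIfAbsent(pid, k -> new ArrayDeque<>());
        window.addLast(timestampMs);
        // Evict timestamps that have fallen out of the one-day window.
        while (!window.isEmpty() && window.peekFirst() <= timestampMs - ONE_DAY_MS) {
            window.removeFirst();
        }
        return window.size() > limitDocExamine;
    }
}
```

Keeping one deque per project makes each update cheap, at the cost of holding up to one day of timestamps per project in memory.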

4. The Architecture of LIDAS

4.1. Architecture Design of LIDAS

We extend the capability of integrated information systems towards intelligent data analysis by taking advantage of event processing technologies, keeping the particularities of each CEP component clearly separated so that even deep changes can be made without affecting the related integrated systems. In designing the intelligent data analysis architecture, we started by specifying the CEP components that interact with the technological architecture and traditional integrated systems.

As shown in Figure 3, LIDAS is a lightweight and modularized framework designed to connect integrated systems for intelligent data analysis. At the bottom of the framework are agents, which are deployed in the traditional integrated information systems to extract data from complicated business processes. The upper part is the intelligent data analysis component with CEP, which deals with event processing and data analysis. It is implemented as an intelligent processing center connected to explicit event queues in accordance with predefined event rules. System administrators define event processing rules and knowledge through the user admin board at system build time. Users get analysis results from the user dashboard in real time.

Figure 3: LIDAS modularized architecture with distributed systems.

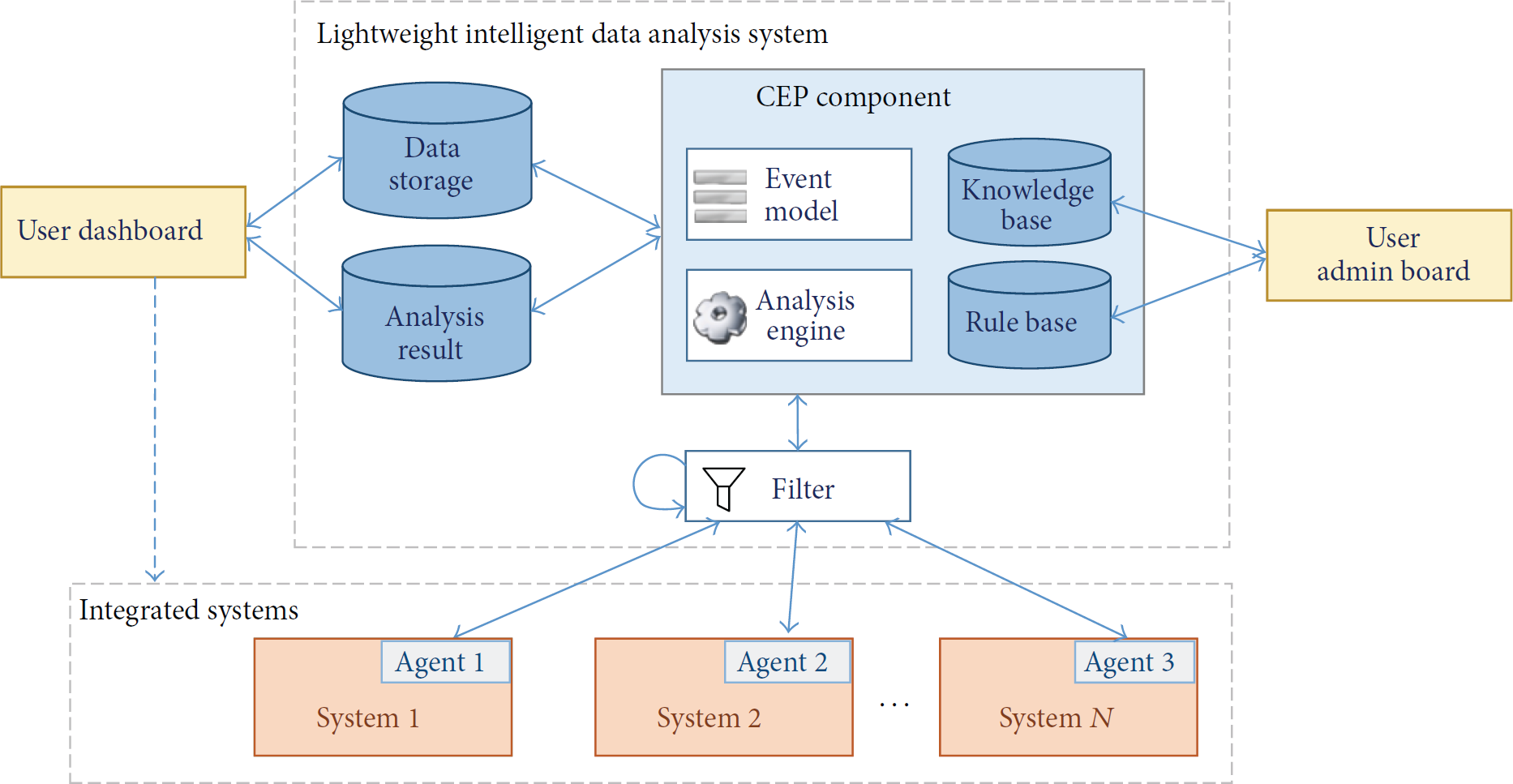

To show the workflow of the LIDAS framework, we introduce the agent, filter, and analysis engine to illustrate event transmission and processing (see Figure 4). First, agents send atom events to the listener module in the filter. Then the preprocess module reads events from the listener, stores them in a queue, and sends an event-arrival message to the analysis engine. When the engine receives the message from the filter, it enables the processing thread to access the new events in the queue. The workflow is illustrated in Figure 4 and described in detail in the following subsections.

Figure 4: The event transmission and process workflow of LIDAS.

4.2. Event Transmission Component

Almost all current event processing frameworks are based on CEP in order to improve scalability in large-scale integrated systems and reduce the network delay caused by online event stream delivery. However, event receiving and dispatching problems are commonly ignored. Figure 3 gives a simplified view of the LIDAS architecture in distributed systems. The combination of filter and agent works as a bridge that transmits events from information systems to LIDA-E. These basic components act as an event provider to the CEP engine.

We use an agent, similar to the sensor [11] and adapter [30] used in monitoring systems, to collect information from the distributed environment. The agent is responsible for extracting real-time business data and generating atom events. It is the event source and is deployed in an integrated system to generate massive events carrying business data. Using the proposed event model, the agent reads data from an interface of the integrated system and translates them into atom events. According to the event model (Definition 1), the agent puts the data into A as a list of attributes. It generates an atom event immediately when data is detected in the system.

Collaborating with CEP technology, the filter detects composite events from different information systems [27]. The filter is an event receiving and preprocessing module that handles subscriptions related to control messages coming from the analysis engine and processes the high volumes of event objects received from agents. According to the availability of business data, the filter pushes each valid atom event into a map held in memory and notifies the analysis engine that a new event has arrived and been stored in the map. We summarize the functions of the filter as follows: (1) receive events from distributed agents; (2) preprocess event streams according to event availability; (3) push events into memory and notify the analysis engine.
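The three filter functions can be sketched in Java as below. This is a simplified illustration rather than the LIDAS filter itself: `EventFilter` and its notification hook are hypothetical, events are plain maps, the in-memory store is assumed to be a queue, and the validity check (presence of the keys "Pid" and "T") is an invented stand-in for the availability check described above. Network reception is left out.

```java
import java.util.Map;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.LinkedBlockingQueue;

class EventFilter {
    // In-memory store shared with the analysis engine (a queue is assumed here).
    private final BlockingQueue<Map<String, Object>> queue = new LinkedBlockingQueue<>();
    // Hypothetical hook standing in for the "event arrived" message to the engine.
    private final Runnable onEventArrive;

    EventFilter(Runnable onEventArrive) {
        this.onEventArrive = onEventArrive;
    }

    // (1) receive an event from an agent, (2) preprocess it by checking validity,
    // (3) push it into memory and notify the analysis engine.
    boolean receive(Map<String, Object> event) {
        if (event == null || !event.containsKey("Pid") || !event.containsKey("T")) {
            return false; // invalid events are dropped
        }
        queue.add(event);
        onEventArrive.run();
        return true;
    }

    // Lets the engine take the oldest stored event, or null if none is pending.
    Map<String, Object> poll() {
        return queue.poll();
    }

    int pending() {
        return queue.size();
    }
}
```

A thread-safe queue is used because agents and the engine would normally run on different threads.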

Several protocols, for example, TCP, FTP, SMTP, HTTP, and TELNET, can be used to transfer events between filter and agent. However, we use Netty, a New IO (NIO) client-server framework, in our event transmission mechanism to achieve ease of development, performance, stability, and flexibility with high throughput, low latency, low resource consumption, and minimal unnecessary memory copying. We have developed a socket client and server with Netty; the client is deployed in the agent, and the server is adopted by the filter. Through the socket communication protocol, the filter can listen on a system port and receive events from different agents. The event transmission mechanism is the first step, which collects real-time data from integrated systems; the CEP-based event processing method is introduced in the next subsection.

4.3. LIDA-E Engine

The analysis engine, called LIDA-E, is the core component of the LIDAS architecture; it is responsible for processing the event queue and judging whether a complex event can be inferred [25]. It provides effective queuing, scheduling, time and count-window support, and fast in-memory processing of high-speed, continuous, unbounded data streams. To divide the functions of the engine, we design three modules in LIDA-E: main thread, event access, and event process. The main thread module monitors the filter and controls the event access module. The event access module is dedicated to accessing events from the queue written by the filter. The event process module executes rules and processes all the events. It is a linear task controller that deploys rules one by one and manages the events to be processed through them.

The CEP component comprises a set of rules and knowledge to detect a predefined group of anomalies on the basis of the attributes of received events, as in [31–33]. The rule base specifies how to infer complex events from the event stream. The knowledge base, in turn, provides the link between known information (the antecedent) and the information to be deduced (the consequent) or actions to be executed. It is a useful supplement to the rule base for processing events. Both rules and knowledge are defined at build time and loaded when LIDAS starts to work.

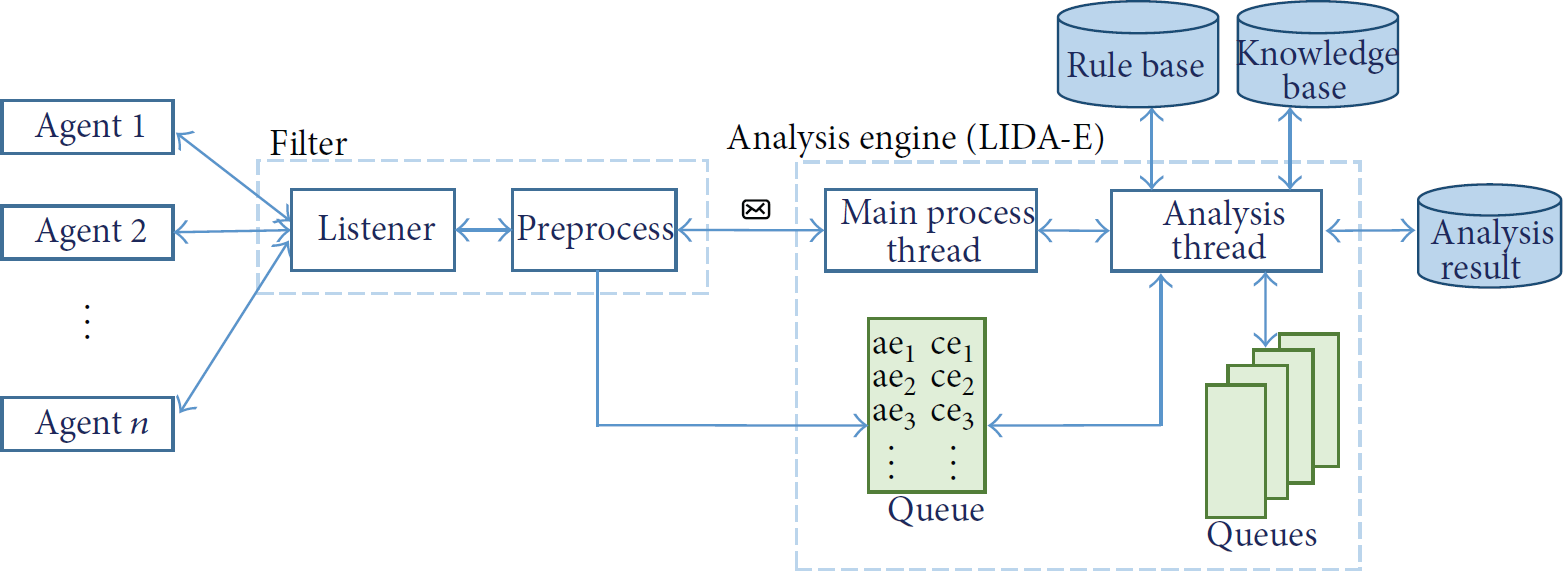

The LIDA-E workflow is shown in Figure 5; it is a runtime workflow in which the analysis engine, waiting for events to arrive, has already executed rules with knowledge in the event process module. The workflow proceeds as follows: (1) the filter pushes events into the queue as soon as it receives them from different agents; (2) the filter sends a message to the analysis engine to notify it that a new event has arrived; (3) the main thread module receives the message and notifies the event access module that new events have been pushed into the queue; (4) the event access module reads events from the queue and prepares their submission to the event process module; (5) the event access module sends each event to the event process module immediately; (6) the event process module accesses queues for event processing, where each event is processed by the rules in order and each rule is provided with at least one queue for runtime event storage; (7) after processing, analysis results are submitted to LIDAS users.

Figure 5: The working pipeline of LIDA-E.

4.4. The Proposed Event Processing Algorithm

In this section, we present a simplified version of the new Complex Event Processing algorithm that has been implemented in LIDA-E. Each rule is translated into a rule instance and applied in the event process module. Additional constraints are applied through parameters stored in the knowledge base, such as LIMIT_DOCEXAMINE in Rule 2, which specifies the maximum number of document examinations. Rule instances are used to process the input events instead of the automaton instances introduced in the AIP algorithm [34]. The detailed steps of the engine algorithm are shown in Algorithm 1.

    (1) Load_Rules();
    (2) Load_Knowledge();
    (3)
    (4) AtomEventItem ae = EventQueue.shift();
    (5)
    (6) Thread.sleep(10);
    (7)
    (8)
    (9)
    (10) Rule[i].getParament;
    (11) ComplexEventItem ce = Rule[i].excute(ae);
    (12)
    (13) Action(ce);
    (14) AnalysisResult.add(ce);
    (15) EventStorage.add(ae, ce);

Rule instances, which are used to detect complex events in LIDA-E, play the key role in our approach. The event queue stores the unprocessed events in LIDA-E. At the beginning, LIDA-E loads the predefined rules and knowledge (lines 1 and 2), which remain in the initial state awaiting the arrival of appropriate events. The engine then gets an “EventArrive” message from the filter when a new event arrives (line 3). If the EventQueue is not null (line 3), a variable ae (atom event) is defined and assigned an event from EventQueue (line 4). The shift function of EventQueue not only reads an event from EventQueue but also deletes the event that is read (line 4). If ae is null (line 5), the processing thread sleeps for 10 milliseconds and then goes on working (lines 6 and 7). If ae is not null, it is processed by every rule (line 8). In each rule instance, once a complex event is detected, it is assigned to a variable ce (complex event) (line 11). After the validity of ce is checked, an action for ce is generated for the system user (line 13), and the detected event is added to the processing result (line 14). Finally, all events are stored in a database as history records (line 15).
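The loop described above can be sketched in Java as follows. This is an illustrative reading of Algorithm 1 under simplifying assumptions, not the LIDA-E source: `RuleInstance` and `EngineLoop` are hypothetical names, events are plain strings, and the sketch drains the queue once and returns instead of sleeping for 10 milliseconds and polling again. Knowledge-base parameters, user actions, and history storage are omitted.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Optional;
import java.util.Queue;
import java.util.concurrent.ConcurrentLinkedQueue;

// Hypothetical rule instance: yields a complex event when one is detected.
interface RuleInstance {
    Optional<String> execute(String atomEvent);
}

class EngineLoop {
    private final Queue<String> eventQueue = new ConcurrentLinkedQueue<>();
    private final List<RuleInstance> rules;                        // loaded once at start
    private final List<String> analysisResult = new ArrayList<>();

    EngineLoop(List<RuleInstance> rules) {
        this.rules = List.copyOf(rules);
    }

    // Called on behalf of the filter when a new atom event arrives.
    void enqueue(String atomEvent) {
        eventQueue.add(atomEvent);
    }

    // Processes every queued event with every rule, in rule order.
    void drain() {
        String ae;
        // poll() reads and removes the head, like shift() in Algorithm 1.
        while ((ae = eventQueue.poll()) != null) {
            for (RuleInstance rule : rules) {
                // A detected complex event is added to the analysis result.
                rule.execute(ae).ifPresent(analysisResult::add);
            }
        }
    }

    List<String> results() {
        return List.copyOf(analysisResult);
    }
}
```

In a long-running engine, `drain()` would sit inside a loop that sleeps briefly when the queue is empty, as the description of lines 5 to 7 suggests.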

A graphical representation of the algorithm is shown in Figure 6. Input events are processed by rules 1 to N; a complex event is sent to the result once it is detected, and otherwise the event is marked as passed. Each rule instance maintains its own event processing status in a private queue. Finally, the complex event results are collected from all rule instances.

Figure 6: The graphical representation of the event processing algorithm.

5. Experimental Evaluations

To perform functional validation and performance evaluation of the proposed LIDAS architecture with LIDA-E, we developed a simple proof-of-concept prototype system that supports both the event transmission and data analysis capabilities, using currently available open source components. Evaluating the performance of the proposed LIDAS framework is not easy, as it is strongly influenced by the workload. The number of rules and events to be processed is considered in each test case [34]. Performance is also limited by the event transmission capability of the network environment. In the experiments, we evaluated both the event transmission mechanism and the analysis engine, which together show the performance of the LIDAS architecture. All tests were run on a notebook computer with an Intel Core i5-3210M CPU at 2.50 GHz and 4 GB of RAM, running 32-bit Windows 7 Professional.

Our evaluation had two main goals: (1) studying the performance of event transmission mechanism in our system architecture and (2) comparing engine LIDA-E with another common processing engine that could handle some rules described in Section 3.

5.1. Event Transmission

Event transmission refers to the procedure from the moment an event is sent by an agent until it is received by the filter. We started by using HTTP requests to send data from agent to filter. First, we used PHP on an Apache server as the filter. In the experiment, the Apache server (versions 2.2 and 2.4) could receive no more than 2000 HTTP requests per second. Next, we built the filter as a Servlet, a Java component that extends the capabilities of a server. The performance of the Servlet service was similar to that of the PHP service: it received about 1500 events per second on a Tomcat 6.0 server. Additionally, we used Node.js [35] in an attempt to improve the performance of the filter. However, its performance was worse than that of the filters built with PHP and Servlet.

Unfortunately, the main technologies for accepting HTTP requests did not meet the requirement of massive event transmission in the LIDAS architecture. This limitation stems from the complex structure of HTTP requests, as demonstrated by the investigation of HTTP request message headers [36]. To address this issue, we decided to use sockets to transmit event data, with a socket client and server based on Netty 4.0.40.Final. We implemented the proposed event transmission mechanism in a prototype based on Netty, which provides a popular and powerful asynchronous event-driven network application framework [37, 38].

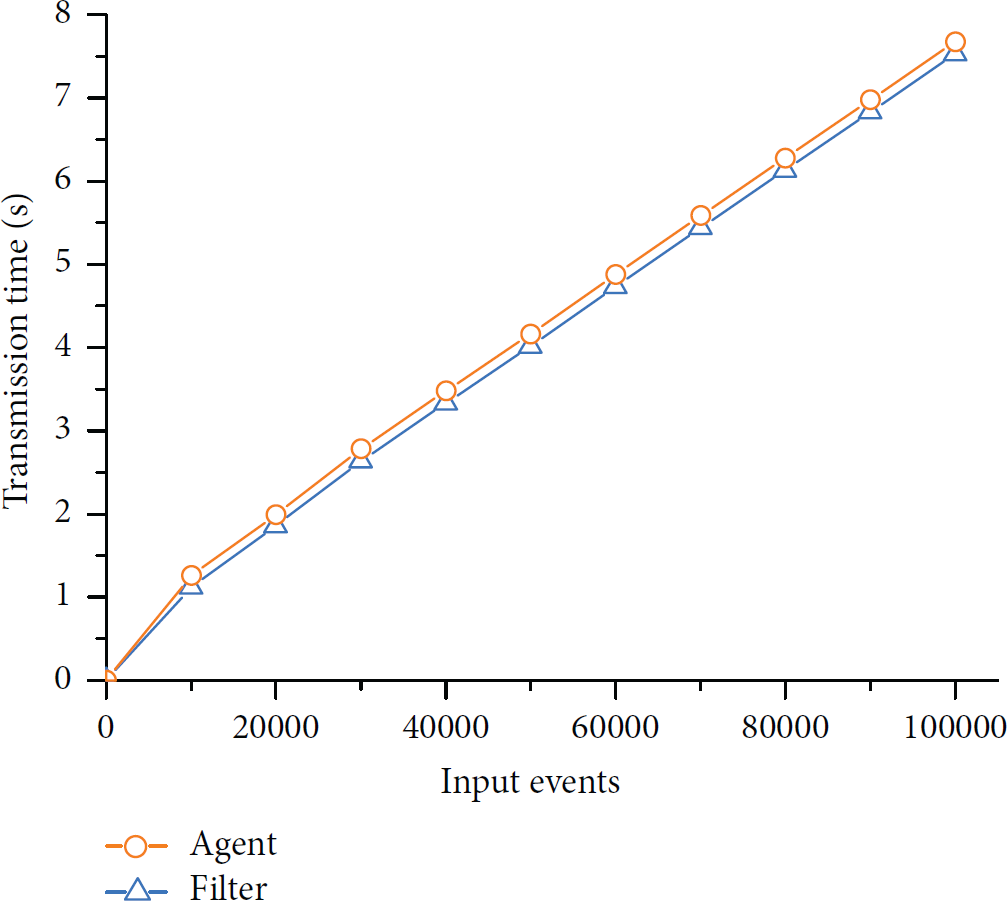

The Netty client and server were built into the agent and the filter, respectively, which ran on two notebook computers to measure the event transmission capability. During our tests, the number of events sent by the agent was increased from 10,000 to 100,000, and we recorded the time cost on both the agent side and the filter side. Figure 7 shows the time cost of event transmission for the agent and the filter.

The event transmission time cost of agent and filter.

In terms of the number of events transmitted, there is no apparent difference between the agent and the filter in event transmission time cost, although the event receiving time cost of the filter is slightly lower than the event sending time cost of the agent. Our experimental results show that the agent and the filter can transmit a large volume of events in a short time.
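The measurement described above can be approximated with a small loopback experiment. The sketch below is not the authors' Netty implementation. It swaps in plain blocking `java.net` sockets, runs the agent and the filter in one process rather than on two notebooks, and uses a hypothetical length-prefixed event encoding. Its timings are therefore not comparable to Figure 7. It only illustrates how the sending and receiving time costs can be recorded separately.

```java
import java.io.BufferedInputStream;
import java.io.BufferedOutputStream;
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.EOFException;
import java.net.InetAddress;
import java.net.ServerSocket;
import java.net.Socket;
import java.nio.charset.StandardCharsets;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

final class TransmissionSketch {
    /** Sends count framed events over loopback; returns {received, agentNanos, filterNanos}. */
    static long[] run(int count) {
        ExecutorService pool = Executors.newSingleThreadExecutor();
        try (ServerSocket server = new ServerSocket(0, 50, InetAddress.getLoopbackAddress())) {
            // Filter side: read length-prefixed frames until the agent closes the stream.
            Future<long[]> filter = pool.submit(() -> {
                try (Socket s = server.accept();
                     DataInputStream in = new DataInputStream(
                             new BufferedInputStream(s.getInputStream()))) {
                    long start = System.nanoTime();
                    long received = 0;
                    try {
                        while (true) {
                            byte[] frame = new byte[in.readInt()];
                            in.readFully(frame);
                            received++;
                        }
                    } catch (EOFException endOfStream) {
                        // The agent has finished sending.
                    }
                    return new long[] {received, System.nanoTime() - start};
                }
            });

            // Agent side: write the same hypothetical event count times.
            byte[] event = "CPU_Usage,87.5".getBytes(StandardCharsets.UTF_8);
            long start = System.nanoTime();
            try (Socket s = new Socket(server.getInetAddress(), server.getLocalPort());
                 DataOutputStream out = new DataOutputStream(
                         new BufferedOutputStream(s.getOutputStream()))) {
                for (int i = 0; i < count; i++) {
                    out.writeInt(event.length);
                    out.write(event);
                }
                out.flush();
            }
            long agentNanos = System.nanoTime() - start;

            long[] f = filter.get();
            return new long[] {f[0], agentNanos, f[1]};
        } catch (Exception e) {
            throw new IllegalStateException("loopback transfer failed", e);
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        for (int n = 10_000; n <= 100_000; n += 30_000) {
            long[] r = run(n);
            System.out.printf("%d events: agent %.1f ms, filter %.1f ms%n",
                    r[0], r[1] / 1e6, r[2] / 1e6);
        }
    }
}
```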

5.2. Event Processing Capability

We evaluated the event processing capability of our engine by comparing it with the Esper [39] engine. Esper offers many features, including high scalability, memory efficiency, in-memory computing, an SQL-standard-based query language, minimal latency, and historical event analysis. It is a streaming-capable engine for processing varied data arriving in real time. It can be embedded in Java, which makes the comparison with our engine easier.

The LIDA-E prototype was implemented in Java, which was the most popular programming language in 2015 [40]. The applicability and usefulness of our approach were evaluated with a sample implementation of LIDA-E that processes events sent by the filter (see Figure 5).

5.2.1. Event Filtering

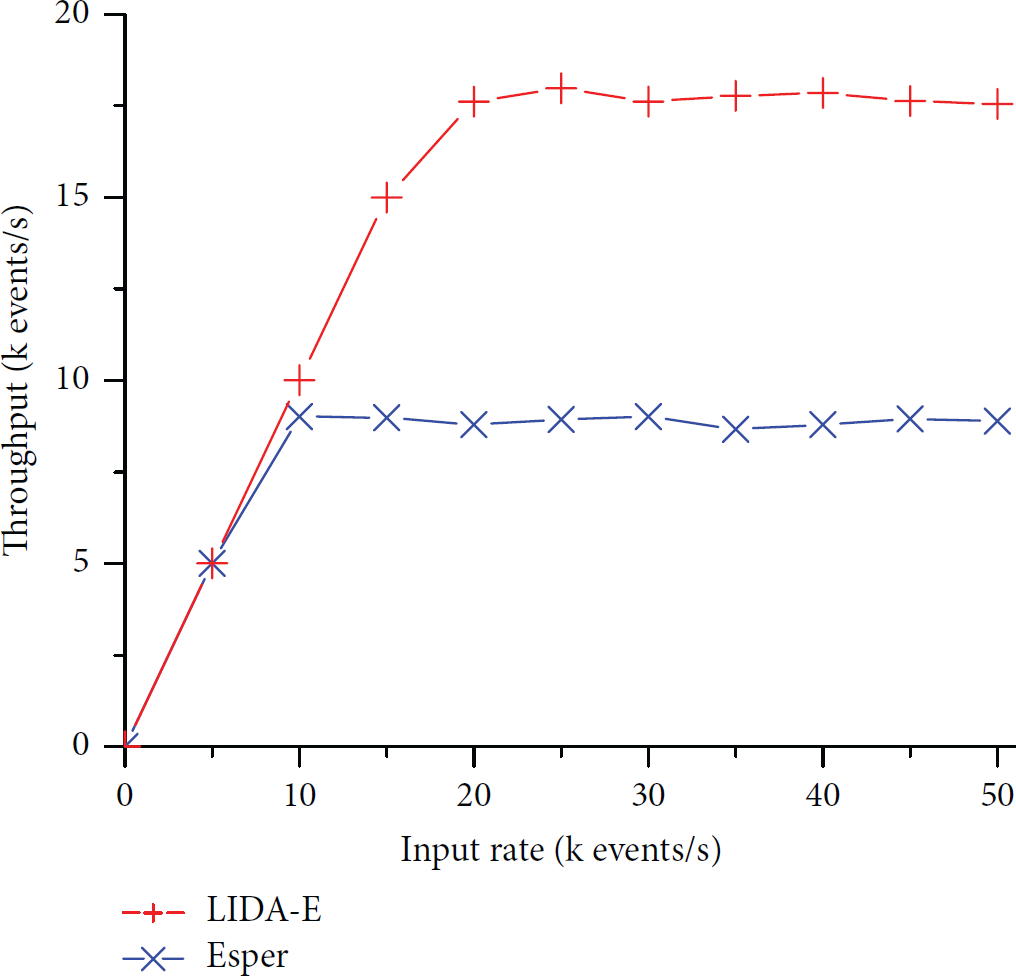

The basic operation of a CEP engine is selecting input events as soon as the listener module receives them. For this reason, the evaluation starts with a comparison of filtering capability. We deployed 100 different rules in both engines. The rules selected input events according to their attributes and generated new events containing the same attributes. Each event was therefore filtered by 100 rules, which meant that the event input rate should equal the output rate. The filtering results of the two CEP engines are shown in Figure 8.

The performance comparison of filtering between LIDA-E and Esper.

This evaluation shows that LIDA-E processes input events faster than Esper with the same input events and deployed rules. Because the workloads of the two engines are identical and are processed by a similar set of rules, the throughput should theoretically be the same. We observe that both engines process large numbers of events in a short time as the input rate increases from 5,000 to 50,000 events per second, but the throughput of LIDA-E is higher than that of Esper. Esper handles at most about 9,000 composite events per second. LIDA-E, in contrast, only starts to drop input events at a rate of 15,000 events per second, and its maximum throughput is 18,000 composite events per second.
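The filtering workload can be pictured with the following Java sketch, in which every event is checked against every deployed rule and a match produces a new event with the same attributes. This is a naive illustration of the test setup, not the LIDA-E or Esper algorithm. The `Event` class, the `Type<i>` naming scheme, and the assumption that each event matches exactly one rule (so that output count equals input count) are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Predicate;

final class FilteringSketch {
    /** Minimal event with two attributes; the field names are illustrative. */
    static final class Event {
        final String type;
        final double value;
        Event(String type, double value) {
            this.type = type;
            this.value = value;
        }
    }

    /** Builds n selection rules; rule i matches events whose type is "Type<i>". */
    static List<Predicate<Event>> buildRules(int n) {
        List<Predicate<Event>> rules = new ArrayList<>();
        for (int i = 0; i < n; i++) {
            final String wanted = "Type" + i;
            rules.add(e -> e.type.equals(wanted));
        }
        return rules;
    }

    /** Checks each event against each rule; a match yields a new event with the same attributes. */
    static List<Event> filter(List<Event> input, List<Predicate<Event>> rules) {
        List<Event> output = new ArrayList<>();
        for (Event e : input) {
            for (Predicate<Event> rule : rules) {
                if (rule.test(e)) {
                    output.add(new Event(e.type, e.value));
                }
            }
        }
        return output;
    }

    /** Throughput in events per second for count events processed in nanos nanoseconds. */
    static double eventsPerSecond(long count, long nanos) {
        return count * 1e9 / nanos;
    }

    public static void main(String[] args) {
        List<Event> input = new ArrayList<>();
        for (int i = 0; i < 50_000; i++) {
            input.add(new Event("Type" + (i % 100), i));
        }
        long start = System.nanoTime();
        List<Event> output = filter(input, buildRules(100));
        long elapsed = System.nanoTime() - start;
        System.out.println("output events: " + output.size());
        System.out.println("events/s: " + eventsPerSecond(output.size(), elapsed));
    }
}
```

Because each event is tested against all 100 rules, the work per event grows linearly with the number of rules, which is why the rule count matters for the maximum sustainable input rate.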

5.2.2. Data Analysis

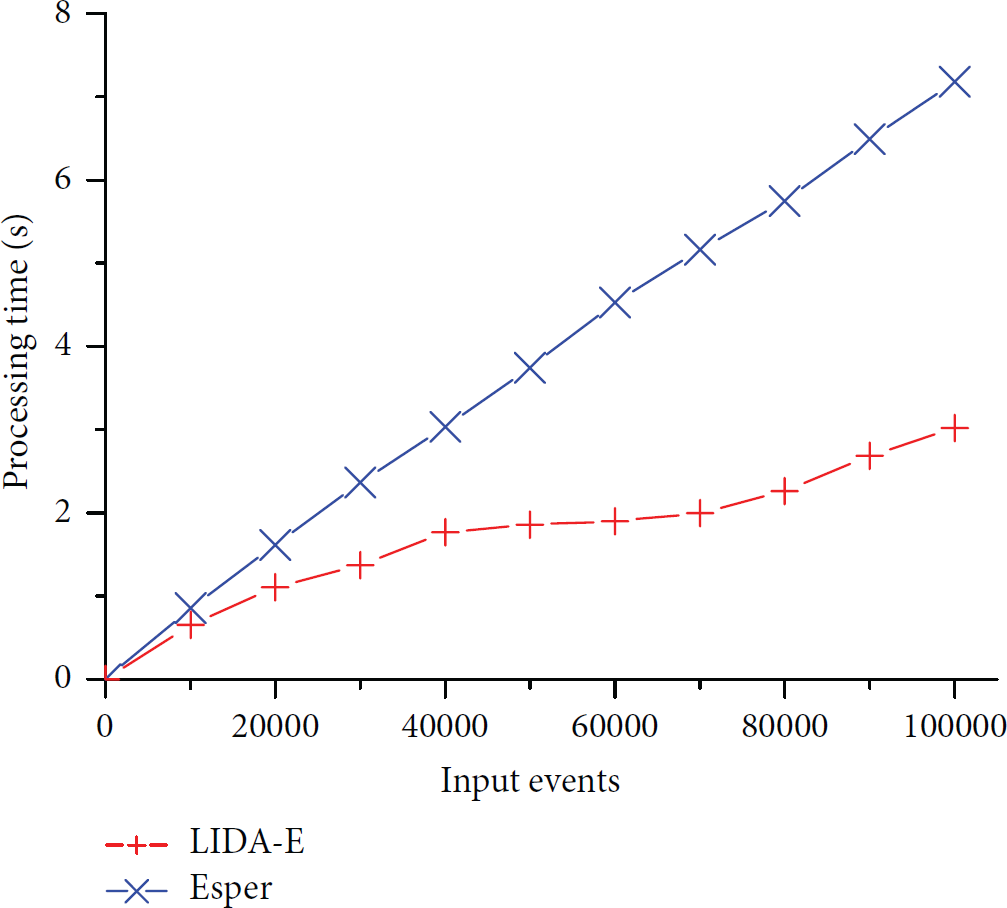

Processing input events with rule instances is the main task of a CEP engine, so we continued the experiments by comparing the event processing capability of LIDA-E and Esper in complex operations. To demonstrate the performance of event processing without temporal operation, we defined Rule

The time cost comparison between LIDA-E and Esper using Rule

These measurements show that LIDA-E processes events faster than Esper when both engines deploy the same rules. Compared with Esper, LIDA-E reduces the time cost by 48.65% on average across the different tests. Its advantage over Esper is attributable to its event processing algorithm. When the number of atomic events was increased from 10,000 to 100,000, the event processing time of LIDA-E increased from 0.65 to 3.02 seconds, whereas that of Esper increased from 0.86 to 7.18 seconds.
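As a worked check on the two endpoint measurements quoted above, the relative reduction can be computed as (Esper time − LIDA-E time) / Esper time. The sketch below uses only the four values given in the text. The intermediate data points behind the 48.65% average are not listed here, so the average itself is not recomputed.

```java
final class TimeCostReduction {
    /** Relative time-cost reduction of the candidate versus the baseline, in percent. */
    static double reductionPercent(double baselineSeconds, double candidateSeconds) {
        return (baselineSeconds - candidateSeconds) / baselineSeconds * 100.0;
    }

    public static void main(String[] args) {
        // Endpoint measurements quoted in the text: Esper as baseline, LIDA-E as candidate.
        System.out.printf("10,000 events:  %.1f%%%n", reductionPercent(0.86, 0.65));
        System.out.printf("100,000 events: %.1f%%%n", reductionPercent(7.18, 3.02));
    }
}
```

The reduction is roughly 24% at 10,000 events and roughly 58% at 100,000 events, so the gap between the two engines widens as the workload grows, and the reported average lies between these two endpoints.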

Second, we compared the performance of LIDA-E with different numbers of rules. This step used the definitions of Rule

LIDA-E time cost comparison between 5 and 10 rules.

5.2.3. Sliding Window

The capability of the two engines in processing sliding windows is compared here. The third case of LIDA-E is based on a simple test rule that computes the value of the selected events under two strict constraints. Rule

To evaluate the capability of event processing, LIDA-E and Esper were each deployed with the two rules below.

SELECT EVERY(e.Value) WHERE e.TYPE = ‘CPU_Usage’ ∧ e.Value > Selectivity WITHIN(0.1 sec) THEN

SELECT EVERY(e.Value) WHERE e.TYPE = ‘CPU_Usage’ ∧ e.Value > Selectivity BATCH(10) THEN
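The two window constraints in the rules above, a 0.1-second time window and a batch of 10 events, can be sketched in Java as follows. This is a minimal illustration under stated assumptions, not the LIDA-E or Esper implementation. It assumes that events arrive in timestamp order, that an event exactly 0.1 seconds old is still inside the time window, and that a batch is released as soon as its tenth event arrives. The class and field names are hypothetical.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Collections;
import java.util.Deque;
import java.util.List;

final class WindowSketch {
    /** Event with a timestamp in milliseconds; the field names are illustrative. */
    static final class Event {
        final String type;
        final double value;
        final long timeMs;
        Event(String type, double value, long timeMs) {
            this.type = type;
            this.value = value;
            this.timeMs = timeMs;
        }
    }

    /** Selection condition shared by both rules: CPU_Usage events above the threshold. */
    static boolean selected(Event e, double selectivity) {
        return e.type.equals("CPU_Usage") && e.value > selectivity;
    }

    /** Sliding time window: keeps events no older than windowMs relative to the newest one. */
    static final class TimeWindow {
        private final long windowMs;
        private final Deque<Event> events = new ArrayDeque<>();
        TimeWindow(long windowMs) {
            this.windowMs = windowMs;
        }

        /** Adds an event, evicts expired ones, and returns the current window size. */
        int add(Event e) {
            events.addLast(e);
            while (e.timeMs - events.peekFirst().timeMs > windowMs) {
                events.removeFirst();
            }
            return events.size();
        }
    }

    /** Batch window: collects events and releases them in groups of a fixed size. */
    static final class BatchWindow {
        private final int size;
        private List<Event> pending = new ArrayList<>();
        BatchWindow(int size) {
            this.size = size;
        }

        /** Returns the full batch when it is complete, otherwise an empty list. */
        List<Event> add(Event e) {
            pending.add(e);
            if (pending.size() < size) {
                return Collections.emptyList();
            }
            List<Event> full = pending;
            pending = new ArrayList<>();
            return full;
        }
    }
}
```

In use, only events for which `selected` returns true would be passed to `new TimeWindow(100)` or `new BatchWindow(10)`. The time window changes on every arrival, while the batch window produces output only once per ten selected events, which is one reason the two rules stress an engine differently.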

First of all, Rule

Comparison between LIDA-E and Esper using Rule

Second, Rule

Comparison between LIDA-E and Esper using Rule

In general, our experimental results confirm the improvement in real-time event processing. The implementation of LIDAS with LIDA-E was able to handle large volumes of events throughout the experiments. It therefore provides an efficient and reliable real-time delivery and analysis service for high-frequency business data streams.

6. Conclusion

In this paper, we presented a novel and effective architecture that combines a lightweight, intelligent data analytics system (LIDAS) with a CEP engine (LIDA-E). In LIDAS, event models (atomic and complex) and operators (logical, mathematical, and temporal) are formulated for event detection and analysis. LIDAS is based on a modular architecture that clearly separates the core logic devoted to event transmission from agent to filter from the event processing handled by the LIDA-E engine. Its knowledge base of rules, together with the proposed event processing algorithm, is responsible for intelligent data analysis. The event transmission component is explicitly designed for applications that have to cope with event generation and transmission, and it is paired with the LIDA-E engine, which is capable of analyzing large volumes of input data efficiently.

A comparison of LIDA-E and Esper, which is the most widely used commercial solution for CEP and is known for its expressiveness and efficiency, shows that LIDA-E performs better than Esper in the different tests. In event filtering, the throughput of LIDA-E is almost twice that of Esper when the input rate is above 20k events/s. Compared with Esper, LIDA-E also decreases the processing time at the different selectivity values when dealing with sliding windows. The comparative tests thus consistently show the better performance of LIDA-E.

Footnotes

Competing Interests

The authors declare that they have no competing interests.

Acknowledgments

This work was supported by the Research on Key Technology of Virtual Restoration Mosaic in Terracotta Army (20136101110019), Research on the Method of Virtual Restoration of Damaged Terracotta Army Based on Global Optimization (61373117), and Web Services Monitoring Technology in Distributed Network Environment Based on CEP (YZZ14119).