Abstract

Distributed camera networks play an important role in public security surveillance. Analyzing video sequences from cameras set at different angles can improve the detection of abnormal events. In this paper, an abnormal event detection algorithm is proposed to identify unusual events captured by multiple cameras. The visual event is summarized and represented by the histogram of the optical flow orientation descriptor, and a multikernel strategy that takes the multiview scenes into account is then proposed to improve the detection accuracy. A nonlinear one-class SVM algorithm with the constructed kernel is trained to detect abnormal frames of video sequences. We validate and evaluate the proposed method on the video surveillance dataset PETS.

1. Introduction

Detecting abnormal events via video sequence analysis is crucial for public security management, and distributed camera networks are now widely used in security surveillance applications. Compared with a single-camera setting, distributed camera networks with overlapping views provide additional information for monitoring crowd movement. We illustrate a multicamera setting with the Performance Evaluation of Tracking and Surveillance (PETS) dataset [1]. This dataset was collected for testing existing or new systems for detecting one or more of three types of crowd surveillance characteristics/events within a real-world environment. The scenarios are filmed from multiple cameras and involve up to approximately forty actors. The scene and the camera locations are shown in Figure 1. Several normal and abnormal scenes from different viewing angles are shown in Figure 2. In Figures 2(a), 2(c), and 2(e), people are walking in random directions, which is considered normal. In Figures 2(b), 2(d), and 2(f), people are walking or running in the same direction. This group behavior implies that they were attracted by some particular event; consequently, these scenes are considered abnormal. Note that the scenes were captured by different cameras. Figures 2(a) and 2(b) were captured by camera 1, which was placed at the side of the road, so the movement of the people can be easily identified. Figures 2(c) and 2(d) were captured by camera 2, which faces the direction of movement, so individuals occlude one another in this view. Figures 2(e) and 2(f) were captured by camera 3, which was also placed at the side of the road but at a larger distance. The purpose of distributed camera surveillance is to detect abnormal events by exploiting the information conveyed by the multiview video sequences.

Figure 1: Plan of the camera locations in the PETS dataset. Three cameras are placed on the campus as marked.

Figure 2: Examples of normal and abnormal scenes captured by the distributed cameras of the PETS dataset. (a, c, e) People are walking in different directions: normal scenes from sequence Time 14–55; (b, d, f) people are walking in the same direction: abnormal scenes from sequence Time 14–17; (a, b) scenes captured by camera 1; (c, d) scenes captured by camera 2; (e, f) scenes captured by camera 3.

In [2, 3], a framework for tracking multiple pedestrians with overlapping cameras was presented. In [4], several major challenges in distributed video processing were discussed, including robust and computationally efficient inference and opportunistic and parsimonious sensing. This progress coincides with large-scale video networks starting to play an important role in video surveillance, object recognition, abnormal event detection, and people tracking in crowded environments. Modeling the movement of pixels is fundamental for detecting abnormal events. In [5], a method that tracked local spatiotemporal interest points was proposed, and abnormal activity was indicated by uncommon energy-velocity of the feature points. In [6], a spatiotemporal descriptor was computed from the histograms of optical flow in the neighborhood of detected points. In [7], dense points were sampled from each frame and tracked based on displacement information from an optical flow field. For crowd event analysis, however, it is difficult to obtain the predetected pixels of a blob because individuals occlude one another. To deal with the uncertainty of observations in video events, Bayesian modeling approaches such as hierarchical Dirichlet processes were used in [8], and probabilistic latent semantic analysis was used in [9]. A method based on the variable-duration hidden Markov model was proposed in [10], where the durations of states were modeled in addition to the transitions between states, and the temporal structure of complex events was addressed. Latent Dirichlet allocation (LDA) is another standard topic model, which was used to model video clips as being derived from a bag of topics drawn from a fixed set of proportions [11]. In [12], a covariance matrix descriptor fusing the optical flow to encode the motion information of a frame was presented.
In the above literature, feature extraction methods and event models were studied intensively for abnormal event detection; however, only single-view video sequences were considered. Abnormal event detection via distributed camera networks is an emerging problem that is attracting growing research interest. In this paper, we propose an abnormal event detection algorithm to identify unusual events captured by multiple cameras. The visual event is represented by the histogram of the optical flow orientation descriptor, and a nonlinear one-class SVM algorithm using a multikernel strategy that takes the multiview scenes into account is then proposed to improve the detection accuracy. Experiments illustrate the effectiveness of the proposed scheme.

The rest of the paper is organized as follows. In Section 2, the optical flow-based feature for video analysis is introduced. In Section 3, the abnormal detection framework based on one-class SVM classification method is presented, and then a multikernel strategy is proposed to deal with the abnormal event detection problem for distributed camera networks. In Section 4, the experimental results are illustrated and discussed. Finally, Section 5 concludes the paper and gives a perspective of future work.

2. Feature Selection for Abnormal Detection

The Horn-Schunck (HS) method is chosen to compute the optical flow, which represents the movement information. The HS method formulates the optical flow as a global energy functional for the gray image sequence:
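For reference, the Horn-Schunck energy functional is commonly written in the form below. The notation is an assumption rather than the paper's own: $I_x$, $I_y$, and $I_t$ denote the partial derivatives of the image intensity with respect to the spatial coordinates and time, $(u, v)$ is the flow field to be estimated, and $\alpha$ is the weight of the smoothness term.

$$
E(u, v) = \iint \left[ \left( I_x u + I_y v + I_t \right)^2 + \alpha^2 \left( \lVert \nabla u \rVert^2 + \lVert \nabla v \rVert^2 \right) \right] \mathrm{d}x \, \mathrm{d}y
$$

The first term penalizes violations of brightness constancy, and the second term favors a smooth flow field; the flow is obtained by minimizing $E$ over $(u, v)$.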

Based on the optical flow, the histogram of the optical flow orientation (HOFO) [13] is computed to fuse the movement information into a high-dimensional vector. A

Figure 3: Histogram of the optical flow orientation (HOFO) feature descriptor of the image.
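As an illustration of how an orientation histogram can be built from a flow field, the following is a minimal sketch, not the descriptor of [13] itself. It assumes the flow components `u` and `v` are already available as 2D arrays, splits the frame into a coarse grid of cells, and accumulates a magnitude-weighted orientation histogram per cell. The function name `hofo_descriptor`, the grid size, the number of bins, and the L2 normalization are all illustrative assumptions.

```python
import numpy as np


def hofo_descriptor(u, v, grid=(2, 2), n_bins=9, eps=1e-12):
    """Simplified orientation histogram of an optical flow field.

    Assumption: a plain cell grid with one global L2 normalization;
    the block layout of the original HOFO descriptor is not reproduced.
    """
    u = np.asarray(u, dtype=float)
    v = np.asarray(v, dtype=float)
    magnitude = np.hypot(u, v)
    # Map flow orientation to [0, 2*pi).
    angle = np.mod(np.arctan2(v, u), 2.0 * np.pi)

    cells = []
    row_blocks = zip(np.array_split(magnitude, grid[0], axis=0),
                     np.array_split(angle, grid[0], axis=0))
    for mag_rows, ang_rows in row_blocks:
        col_blocks = zip(np.array_split(mag_rows, grid[1], axis=1),
                         np.array_split(ang_rows, grid[1], axis=1))
        for mag_cell, ang_cell in col_blocks:
            # Each pixel votes for its orientation bin, weighted by magnitude.
            hist, _ = np.histogram(ang_cell, bins=n_bins,
                                   range=(0.0, 2.0 * np.pi),
                                   weights=mag_cell)
            cells.append(hist)

    feature = np.concatenate(cells)
    return feature / (np.linalg.norm(feature) + eps)
```

With a 2 x 2 grid and 9 bins, each frame yields a 36-dimensional vector; a finer grid gives a higher-dimensional descriptor at the cost of more sensitivity to small shifts.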

3. One-Class SVM with Multiple Kernels

In practice, most data belong to the normal frames of video sequences. It is reasonable to assume that these data lie in the high-density zone of the underlying data distribution, so that data from an abnormal frame appear as outliers of this distribution. Thus, one-class classification methods, such as the one-class neighbor machine (OCNM) and the one-class support vector machine (OCSVM), are appropriate for abnormal event detection: they check whether a data point lies in the characteristic region constructed from normal data. Specifically, OCNM, which asymptotically converges to the exact minimum volume set, was proposed in [14–16]. OCNM has been reported to perform consistently better than OCSVM, whose suboptimal performance may arise from the fact that its decision function is not based on neighborhood measures. In this paper, we focus on verifying the advantage of representing an action with features from multiview cameras; thus, OCSVM is chosen as the baseline. OCNM, which potentially performs better, will be studied in future work.

The problem of nonlinear one-class SVM [17, 18] can be cast as a quadratic programming problem:
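For reference, the standard $\nu$-formulation of the one-class SVM is usually stated as below. The notation is an assumption and may differ from the paper's: $x_1, \dots, x_n$ are the training samples, $\Phi$ is the feature map induced by the kernel $\kappa$, $\xi_i$ are slack variables, $\rho$ is the offset, and $\nu \in (0, 1]$ bounds the fraction of training samples treated as outliers.

$$
\min_{w,\, \xi,\, \rho} \;\; \frac{1}{2} \lVert w \rVert^2 + \frac{1}{\nu n} \sum_{i=1}^{n} \xi_i - \rho
\quad \text{subject to} \quad
\langle w, \Phi(x_i) \rangle \ge \rho - \xi_i, \;\; \xi_i \ge 0, \;\; i = 1, \dots, n
$$

With dual coefficients $\alpha_i$, the resulting decision function is

$$
f(x) = \operatorname{sgn}\!\left( \sum_{i=1}^{n} \alpha_i \, \kappa(x_i, x) - \rho \right),
$$

where samples with $\alpha_i > 0$ are the support vectors and a negative value marks $x$ as an outlier.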

To construct a more representative and discriminative feature descriptor for distributed camera networks, we take the scene captured by each view as a partial feature. The multikernel strategy considers a linear combination of candidate kernels:
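A linear combination of per-view kernels can be sketched as follows. This is a minimal illustration under stated assumptions: each view uses a Gaussian kernel with a shared bandwidth `sigma`, and the weights are nonnegative so that the combination remains a valid kernel. The function names `gaussian_kernel` and `multiview_kernel` and the example weights are hypothetical, not taken from the paper.

```python
import numpy as np


def gaussian_kernel(X, Y, sigma):
    """Gaussian kernel matrix between the rows of X and the rows of Y."""
    X = np.asarray(X, dtype=float)
    Y = np.asarray(Y, dtype=float)
    sq_dist = (np.sum(X ** 2, axis=1)[:, None]
               + np.sum(Y ** 2, axis=1)[None, :]
               - 2.0 * X @ Y.T)
    # Clip tiny negative values caused by floating-point rounding.
    return np.exp(-np.maximum(sq_dist, 0.0) / (2.0 * sigma ** 2))


def multiview_kernel(views_a, views_b, weights, sigma):
    """Weighted sum of per-view kernels: K = sum_c weights[c] * K_c.

    views_a, views_b: one feature matrix per camera view.
    weights: one nonnegative coefficient per view (assumed preset).
    """
    if not (len(views_a) == len(views_b) == len(weights)):
        raise ValueError("one feature matrix and one weight per view required")
    if any(w < 0 for w in weights):
        raise ValueError("weights must be nonnegative")
    return sum(w * gaussian_kernel(Xa, Xb, sigma)
               for w, Xa, Xb in zip(weights, views_a, views_b))
```

For three cameras, `multiview_kernel([X1, X2, X3], [X1, X2, X3], (0.5, 0.3, 0.2), sigma=1.0)` would give the training Gram matrix, where `X1`, `X2`, and `X3` hold the per-view descriptors of the same frames in the same row order.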

Each sample vector

For a given scene monitored by multiple cameras, suppose we can obtain a set of training frames. Based on the one-class SVM hypothesis, the abnormal behavior is the sample deviating from the training set. Take the plaza monitored by the three cameras shown in Figure 2; for example, if

Algorithm 1 (abnormal event detection algorithm).

Input. Image set captured by the cameras.

Algorithm. (1) Compute the optical flow of the training frame set

(2) Compute the histogram of the optical flow orientation (HOFO) of the image in different views:

(3) Construct the feature samples of the image k under c for the distributed camera network:

(4) Obtain the support vectors by training the feature samples with nonlinear one-class SVM method:

(5) Each incoming frame

(6) The normal event or abnormal event is detected.

Step 1.

The optical flow feature is computed. The training frame set

Step 2.

The second step consists of calculating the histogram of optical flow orientation (HOFO) of the training frames. It can be generalized as

Step 3.

The third step consists of fusing the

Step 4.

Nonlinear one-class SVM is applied to the training frame HOFO descriptor in multiview to obtain the support vectors. It is described as follows:

Step 5.

In the online detection phase, based on the support vectors obtained in the training step, one-class SVM classifies each incoming frame feature
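The online detection step can be sketched as below, assuming the standard one-class SVM decision rule, in which a frame is scored by a weighted sum of kernel values against the support vectors minus an offset. The function name `ocsvm_decision` and the argument names `alpha` and `rho` are illustrative assumptions; the kernel values would come from the kernel used during training.

```python
import numpy as np


def ocsvm_decision(k_sv_x, alpha, rho):
    """Score incoming frames with a trained one-class SVM.

    k_sv_x: array of shape (n_support_vectors, n_frames) holding the
            kernel values between each support vector and each frame.
    alpha:  dual coefficients of the support vectors.
    rho:    offset learned during training.
    Returns the raw scores and labels (+1 normal, -1 abnormal).
    """
    k_sv_x = np.asarray(k_sv_x, dtype=float)
    alpha = np.asarray(alpha, dtype=float)
    scores = alpha @ k_sv_x - rho
    labels = np.where(scores >= 0.0, 1, -1)
    return scores, labels
```

A frame that is similar to the normal training data produces large kernel values and a nonnegative score; a frame far from all support vectors falls below the offset and is flagged as abnormal.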

4. Abnormal Events Detection Results

We conduct experiments to evaluate the performance of the one-class SVM classification method for abnormal frame event detection with the distributed camera network of the PETS dataset [1]. In these experiments, each event is captured as 3 separate scenes; thus, the event is described by a set of 3 separate HOFO features. We denote the use of these three features as “3 HOFO” and the use of a single feature as “1 HOFO.” If the multikernel strategy is used, we denote it as “3 kernels”; otherwise, the single-kernel strategy is denoted as “1 kernel.” Detection accuracy is reported for each experiment.

The normal and abnormal events of sequence Time 14–17 in the PETS dataset are shown in Figure 4. The training samples and the normal samples for testing are the frames in 3 views chosen from the sequence (Time 14–55) where the people were walking in different directions. 400 training frames (Frame0000 to Frame0399) and 90 normal testing frames (Frame0400 to Frame0489) were selected from Time 14–55. The abnormal testing samples were selected from the sequence (Time 14–17) where the people were walking or running in the same direction. 89 abnormal testing frames (Frame0000 to Frame0089) were selected from Time 14–17.

Figure 4: Detection results of the normal and abnormal scenes of Time 14–17 captured by the distributed camera network. (a, c, e) The detection results of one normal frame. (b, d, f) The detection results of one abnormal frame.

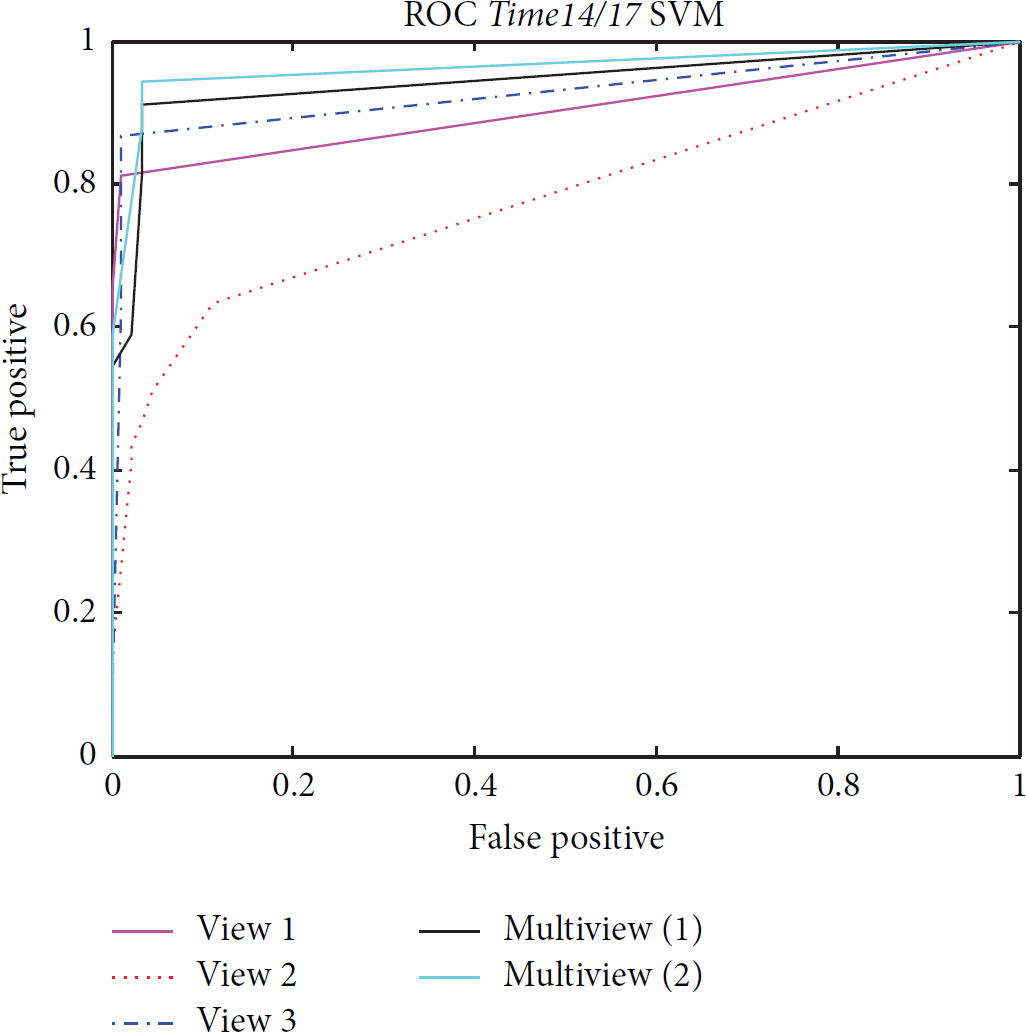

In the experiments, the parameters of the one-class SVM, ν and σ, were selected from a set of preassigned values in order to plot the ROC (receiver operating characteristic) curves and compare the resulting AUC (area under the curve) values. The AUC values of the abnormal detection results for different views and different multikernel strategies are shown in Table 1. “Single view” means the “1 HOFO 1 kernel” strategy, and “multiview” means “3 HOFO 3 kernels.” “View 1” denotes the scene monitored by camera 1, and the abnormal detection results are shown in Figures 4(a) and 4(b). “View 2” denotes the scene monitored by camera 2, as shown in Figures 4(c) and 4(d). “View 3” denotes the scene monitored by camera 3, as shown in Figures 4(e) and 4(f). “Multiview (1)” denotes the multikernel strategy with the parameter setting

Table 1: Comparison of the abnormal detection AUC values with the single view and with the distributed cameras using the multikernel strategy for sequence Time 14–17.

Figure 5: ROC of abnormal detection results of sequence Time 14–17 under different views and different kernel strategies.
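The AUC used to compare views and kernel strategies can be computed directly from per-frame scores. The sketch below uses the rank-based (Mann-Whitney) formulation, which equals the area under the ROC curve; it assumes that a higher score means "more abnormal", so for a one-class SVM the decision value would be negated first. The function name `roc_auc` is an illustrative assumption.

```python
import numpy as np


def roc_auc(scores, is_abnormal):
    """Area under the ROC curve from abnormality scores.

    Equals the probability that a randomly chosen abnormal frame
    scores higher than a randomly chosen normal frame (ties count half).
    """
    scores = np.asarray(scores, dtype=float)
    is_abnormal = np.asarray(is_abnormal, dtype=bool)
    pos = scores[is_abnormal]
    neg = scores[~is_abnormal]
    if pos.size == 0 or neg.size == 0:
        raise ValueError("need at least one normal and one abnormal frame")
    diff = pos[:, None] - neg[None, :]
    wins = np.sum(diff > 0) + 0.5 * np.sum(diff == 0)
    return float(wins / (pos.size * neg.size))
```

An AUC of 1.0 means every abnormal frame is ranked above every normal frame, while 0.5 corresponds to a random ranking.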

View 2 has the lowest area under the ROC curve because camera 2 faces the direction of crowd movement, and occlusion affects the computation of the optical flow. As a result, the HOFO feature based on the optical flow cannot accurately represent the movement information in this view. The results show that the HOFO-based abnormal detection algorithm obtains satisfactory detection results and that the multikernel strategy generally improves the performance.

The experiment in which running is detected as the abnormal event is shown in Figures 6 and 7. The normal event corresponds to frames where people are walking. The training data are selected from sequences Time 14–17 and Time 14–31 of the PETS dataset, in which individuals walk in one direction. In this experiment, 61 frames (Frame0000 to Frame0060) of sequence Time 14–17, where people walk from left to right, and 50 frames (Frame0000 to Frame0049) of sequence Time 14–31, where individuals walk from right to left, are chosen as training samples. Correspondingly, 104 normal frames and 118 abnormal frames from sequence Time 14–16 are tested. The multikernel strategy again improves the abnormal detection performance. The AUC values of the detection results are shown in Table 2.

Table 2: The abnormal detection results of sequence Time 14–16. Comparison of the abnormal frame event detection results in the single-view scene and in the distributed camera scenes via multikernel strategies.

Figure 6: Detection results of the normal and abnormal scenes of Time 14–16 captured by the distributed camera network: the individuals are moving from right to left. (a, c, e) The detection result of one normal frame: the individuals are walking in one direction. (b, d, f) The detection result of one abnormal frame: the individuals are running in one direction.

Figure 7: Detection results of the normal and abnormal scenes of Time 14–16 captured by the distributed camera network: the individuals are moving from left to right. (a, c, e) The detection result of one normal frame: the individuals are walking in one direction. (b, d, f) The detection result of one abnormal frame: the individuals are running in one direction.

5. Conclusions

In this paper, we have proposed a method for abnormal frame event detection with distributed camera networks. The histogram of the optical flow orientation was computed as the descriptor representing the movement in a frame. A multikernel strategy was presented to benefit from the multiple views captured by the distributed camera network. The method was evaluated on the PETS benchmark dataset to demonstrate the effectiveness of the proposed algorithm.

In future work, the optimal coefficients of the multikernel strategy should be obtained automatically based on the scene; in the current work, these parameters were preset according to the importance of each view. Additional application scenarios, such as single-person action recognition or action tracking in multiview scenes, will be considered to show the advantages of distributed camera networks. Finally, advanced one-class classification techniques such as OCNM will also be investigated for video anomaly detection.

Footnotes

Conflict of Interests

The authors declare that there is no conflict of interests regarding the publication of this paper.

Acknowledgments

This work is partially supported by the SURECAP CPER Project (fonction de surveillance dans les réseaux de capteurs sans fil via contrat de plan Etat-Région) and the Platform CAPSEC (capteurs pour la sécurité) funded by Région Champagne-Ardenne and FEDER (fonds européen de développement régional), the Fundamental Research Funds for the Central Universities, and the National Natural Science Foundation of China (Grants nos. U1435220 and 61503017).