Abstract

We are concerned with the problem of trust evaluation in the generic context of large-scale open-ended systems. In such systems, truster agents have to interact with trustee peers to achieve their goals, while the trustees may not behave as required in practice. The truster therefore has to predict the behavior of potential trustees, based on past interaction experience, in order to identify reliable ones. Due to the size of the system, there is often little or no past interaction between the truster and the trustee; in that case the truster has to resort to third-party agents, termed advisors here, and inquire about the reputation of the trustee. The problem is complicated by the possibility that the advisors may deliberately provide inaccurate or even misleading reputation reports to the truster. To this end, we develop techniques, based on the Bayesian formalism, that take account of inaccurate reputations in modeling the behavior of the trustee. The core of the techniques is a proposed notion, termed the Advisor-to-Truster relevance measure, based on which incorrect reputation reports are rectified for use in the trust evaluation process. The benefit of the proposed techniques is verified by simulated experiments.

1. Introduction

Nowadays, many computational systems, such as peer-to-peer networks, e-commerce, and the Grid, are moving toward open, large-scale, dynamic, and distributed architectures [1–3]. In such open-ended systems, an agent often has to interact with other peers to achieve its goal, while the partner agents may be malicious and thus may not behave as required [4–9]. The new features of these systems, for example, scalability, mobility, autonomy, ubiquity, incomplete information, and global connectivity, imply that traditional security mechanisms are no longer adequate for finding malicious agents or controlling their behavior [9, 10]. One of the alternatives currently being investigated is an approach based on the notion of trust. The basic idea is to provide a quantitative evaluation of the trust in each possible participating agent using the history of its behavior [5–9]. The trust value here is a number expressing the level of trustworthiness, and this view is known as computational trust. Based on the output of this trust evaluation process, the truster agent can choose its interaction partners.

Due to the size of the system, there is often little or no past interaction between the truster and the trustee, which prevents the truster from evaluating the trustee's trustworthiness precisely enough. In this case, the truster can resort to third-party agents, termed advisors here, in order to collect more historical observations of the trustee's behavior [7–9]. The advisors are expected to provide honest reports about the trustee's behavior to the truster; in practice, however, some advisors may deliberately provide inaccurate or even misleading reports. Thus, an efficient trust model that takes account of possible inaccuracies in the advisors’ reputation reports is required.

To this end, we develop techniques to take account of the aforementioned issues in modeling the behaviors of the trustee. The core of the techniques is a proposed notion, termed Advisor-to-Truster relevance (ATTR) measure, based on which the incorrect reputation reports are rectified for use in the trust evaluation process.

The remainder of the paper is organized as follows. In Section 2, we formalise the general notion of probabilistic trust by introducing the typical beta trust model, and we state the issues related to inaccurate reputations in precise mathematical terms. In Section 3, we propose the ATTR measure and present the trust evaluation method that uses it. In Section 4, we test the performance of the proposed method via simulation experiments. Section 5 concludes the paper.

2. Beta Trust Model

In this section, we define the basic notion and mathematically formulate the problem illustrated in the Introduction. We use the same notion as in [9] and focus on the perspective of probabilistic trust. The task is then to build probabilistic models for agents’ behaviors using the outcomes of historical interactions. Using these models, a truster can estimate the probability of particular outcomes of the next interaction with a trustee te. Such a probability defines the trust of the truster tr in the trustee te. This notion of trust mimics the trusting relationship between human beings illustrated in [11].

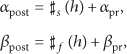

The crucial part of any probabilistic trust model is a behaviour model, which is used to estimate the probabilities of future outcomes of the next interaction. Assume that the outcomes are either success s or failure f; a beta trust model is introduced in [8] and then followed by other works, for example, in [6, 7, 12]. The idea is to model the behavior of a trustee te by a beta probability distribution over possible outcomes, that is, success s or failure f, of an interaction with te. Given a sequence of outcomes

The outcomes are binary here with the beta trust model. We therefore focus on the single probability

Here the estimate for

As mentioned in the Introduction, the truster tr has to consider reputation reports provided by other peers, termed advisors here, about the trustee te under consideration to enhance the trust evaluation process, especially when there is little or no past interaction experience about the trustee. The beta trust model encompasses a mechanism for handling the reputation reports. It treats each interaction with the trustee as a Bernoulli trial regardless of the interacting partner. Given the representation of te's behavior by the beta probability distribution (parametrised by

Both the beta trust model and the Dirichlet trust model process the reputation reports provided by the advisors in the same way as the outcomes of direct interactions of the truster with the trustee. In other words, they do not take account of the possibility that the reputation reports, that is,

3. Handling Inaccurate Reputations Using Relevance Information

As mentioned above, the advisor peers may provide inaccurate or even misleading reputation reports about the trustee to the truster, while many existing trust models, for example, the beta trust model and the Dirichlet trust model, do not take account of this. In this section, the notion of an Advisor-to-Truster relevance (ATTR) measure is proposed, based on which we develop techniques for handling inaccurate reputation reports in modeling the trust of the truster in the trustee.

3.1. Advisor-to-Truster Relevance (ATTR) Measure

The ATTR measure is used to quantify the extent to which the reputation report provided by an advisor

To begin with, suppose that we have a trustee set

3.1.1. The Cosine ATTR Measure

Here the taxonomy of the ATTR measure is estimated as the cosine between the corresponding vectors,

Observe that this cosine measure is a dot product scaled by magnitude and that, because of this scaling, it is normalized to lie between 0 and 1 for vectors with non-negative components (between −1 and 1 in general). For a pair of two-dimensional vectors, the cosine measure depends only on the included angle between the two vectors, regardless of the vectors’ magnitudes.
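The magnitude-invariance noted above can be checked numerically. The following is a minimal sketch of a plain cosine measure; the function name `cosine` and the example vectors are hypothetical illustrations and are not taken from the paper's own examples.

```python
import math


def cosine(u, v):
    """Cosine of the angle between two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cosine is undefined for a zero vector")
    return dot / (norm_u * norm_v)


# Hypothetical vectors: same direction, different magnitudes.
parallel = cosine([0.2, 0.4], [0.4, 0.8])

# Hypothetical vectors with non-negative components that "disagree":
# the value is small but still cannot drop below zero.
opposed = cosine([0.9, 0.1], [0.1, 0.9])
```

Here `parallel` is 1 up to rounding, since scaling a vector does not change the angle, while `opposed` is about 0.22: with non-negative components the plain cosine never becomes negative, however strongly the two vectors disagree.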

To further corroborate the above statement, consider two example cases. In the former one, we have

3.1.2. Adjusted Cosine ATTR Measure

To deal with the aforementioned counterintuitive property of the cosine ATTR measure, we propose here an adjusted cosine ATTR measure, which is defined to be

Now let us reconsider the example cases presented above; that is, we have

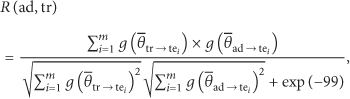

3.2. Trust Evaluation Using ATTR

Here, we present our approach to trust evaluation using the proposed notion of ATTR measure.

The assumption behind the approach is that a given malicious advisor

We present the proposed approach within the framework of beta trust model introduced in Section 2. To make the description as clear as possible, we rewrite the beta pdf here

In this approach, the sign of the ATTR determines whether the counts of successful and failing outcomes in a reputation report are reversed, and its magnitude determines the extent to which the reported counts are discounted when updating the a posteriori pdf.
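The role of the sign and the magnitude described above can be sketched as follows. This is only an illustration under stated assumptions: the ATTR value is taken as a given number in [-1, 1], the discount is assumed to be linear in its magnitude, and the function name `update_beta_with_attr` is hypothetical. The paper's exact update equation is not reproduced here.

```python
def update_beta_with_attr(alpha, beta, s_reported, f_reported, attr):
    """Fold one reputation report into beta parameters (alpha, beta).

    Assumptions (not confirmed by the source): attr lies in [-1, 1] and
    the reported counts are discounted linearly by abs(attr).
    """
    if not -1.0 <= attr <= 1.0:
        raise ValueError("attr is assumed to lie in [-1, 1]")
    if attr < 0:
        # Negative relevance: treat the report as reversed.
        s_reported, f_reported = f_reported, s_reported
    weight = abs(attr)  # magnitude controls how much the report counts
    return alpha + weight * s_reported, beta + weight * f_reported


# Hypothetical report of 8 successes and 2 failures, uniform prior (1, 1).
trusted = update_beta_with_attr(1.0, 1.0, 8, 2, 1.0)     # counts used as reported
reversed_ = update_beta_with_attr(1.0, 1.0, 8, 2, -1.0)  # counts swapped
ignored = update_beta_with_attr(1.0, 1.0, 8, 2, 0.0)     # report discarded
```

With an ATTR of 1 the update coincides with the ordinary beta update from direct observations, and with an ATTR of 0 the report leaves the parameters unchanged.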

4. Experiments

In this section, we design simulated experiments to examine how effectively we could cope with inaccurate reputation reports using the proposed notion of ATTR measure. We compare two implementations of the ATTR measure, based on the cosine measure and the adjusted cosine measure described in Section 3, respectively. The beta trust modeling approach described in Section 2 is included as the benchmark for performance comparison.

The objective here is to demonstrate that the proposed ATTR measure works. A comparative study of our method with other related methods, for example, TRAVOS [7] and BLADE [18], is certainly interesting but has not yet been performed and is not the intention here.

We first consider the ability of our methods to deal with deceptive advisors. A deceptive advisor reports the reversed counts of successful and failing interactions with a trustee: if the true counts of successful and failing interactions are s and f, respectively, it reports them as f and s, respectively. We simulated 20 deceptive advisors, each sharing a common training set of trustees with the truster. Each training trustee has interacted with both the truster and the advisor in the past, and the number of past interactions is randomly drawn from a uniform discrete distribution over the range

We consider the process of aggregating the reports of the 20 deceptive advisors one by one. This process is initialized by a truster that knows nothing about the behavior of the potential trustee, and thus we use a beta pdf with chosen parameters

Figure 1: The mean errors of the trust predictions versus the number of deceptive advisors whose reputation reports have been aggregated.

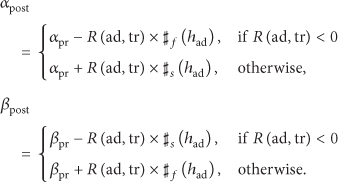

Figure 2 plots the change in the mean error of each method as the percentage of deceptive advisors grows. This result is obtained by a Monte Carlo simulation consisting of 100 independent runs of each method for each specific percentage value.

Figure 2: The mean errors of the trust predictions versus the percentage of deceptive advisors in the deception experiment.

The results show that the method based on the proposed adjusted cosine ATTR measure copes well with deceptive reputation reports.
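For the benchmark alone, the shape of the deception experiment can be sketched as a small Monte Carlo simulation. This is a rough sketch under assumptions that are not taken from the paper: a uniform Beta(1, 1) prior, interaction counts drawn from 1 to 20, a ground-truth success probability drawn uniformly per run, and the hypothetical helper names `run_once` and `mean_error`. It covers only the plain beta aggregation, not the ATTR-based methods.

```python
import random


def run_once(rng, p_true, n_advisors, frac_deceptive, max_interactions=20):
    """Absolute error of the plain beta estimate after aggregating all reports."""
    alpha, beta = 1.0, 1.0  # assumed uniform prior
    n_deceptive = round(frac_deceptive * n_advisors)
    for i in range(n_advisors):
        n = rng.randint(1, max_interactions)  # assumed range of interaction counts
        s = sum(rng.random() < p_true for _ in range(n))
        f = n - s
        if i < n_deceptive:
            s, f = f, s  # a deceptive advisor reports reversed counts
        alpha += s
        beta += f
    return abs(alpha / (alpha + beta) - p_true)


def mean_error(frac_deceptive, runs=100, n_advisors=20, seed=0):
    """Mean absolute error over independent runs for one deception percentage."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(runs):
        p_true = rng.random()  # hypothetical ground-truth success probability
        total += run_once(rng, p_true, n_advisors, frac_deceptive)
    return total / runs


errors = {frac: mean_error(frac) for frac in (0.0, 0.5, 1.0)}
```

Under these assumptions the benchmark's error is far larger when every advisor is deceptive than when none is, because reversed counts are folded in exactly as if they were direct observations.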

We now evaluate whether the ATTR measure affects the efficiency of the trust evaluation process when the reputation reports are all accurate, namely, when they are all provided by honest advisors. We use the same setting as in the deception experiment above, except that the advisors now report the true interaction data honestly to the truster. The simulation result is shown in Figure 3, which reveals that all the methods involved perform equally well in this case. This suggests that, when the reputation reports are accurate, the ATTR-based method approximately degenerates into the traditional beta trust model approach.

Figure 3: Experiment with honest advisors.

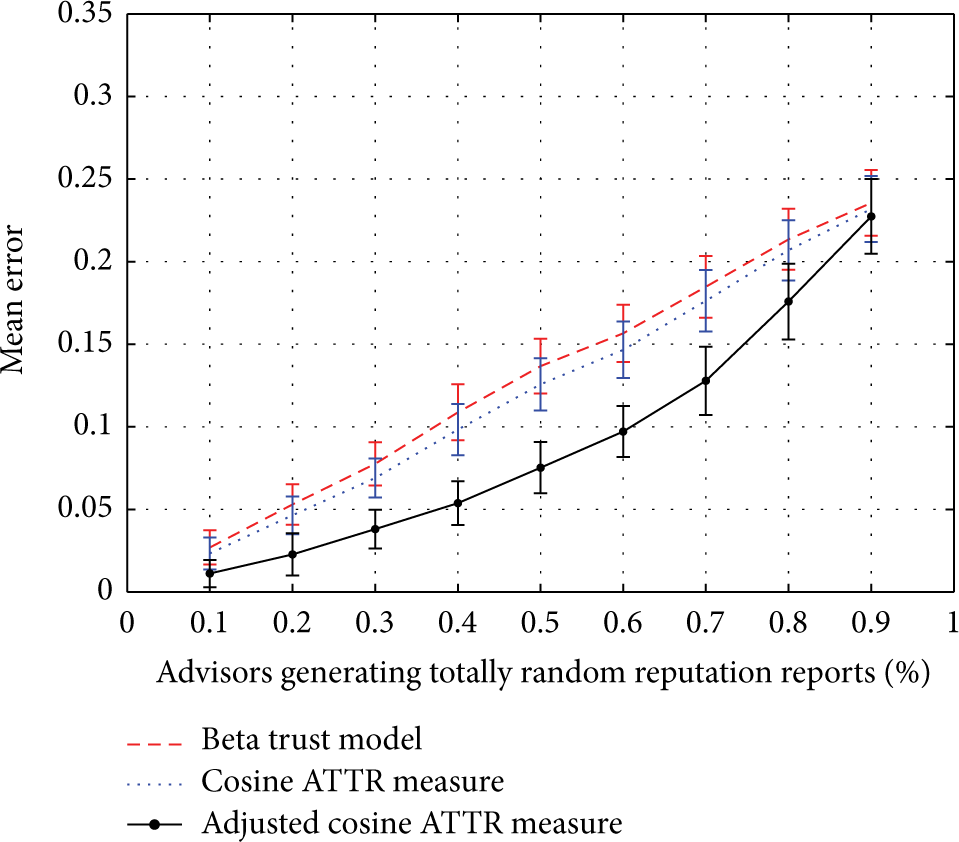

The same experimental setup is used to examine how the totally random reputation reports will affect the trust evaluation methods under consideration. The totally random reputation reports represent the reports in which the counts of successful and failing interactions are determined in a totally random manner regardless of the truth. In the simulation, the count of successful interactions is drawn from a uniform discrete distribution over

Figure 4: Experiment with totally randomly generated reputation reports.

Figure 5 plots the change in the mean error of each method as the percentage of abnormal advisors, which produce totally randomly generated reputation reports, grows. As before, this result is obtained by a Monte Carlo simulation consisting of 100 independent runs of each method for each specific percentage value. It is shown that the mean error of each method grows as the percentage of abnormal advisors grows, while the method based on the adjusted cosine ATTR measure performs better than the others. Specifically, as this percentage is approaching the point

Figure 5: The mean errors of the trust predictions versus the percentage of abnormal advisors which produce totally randomly generated reputation reports.

5. Conclusions

In this paper, we proposed techniques to cope with the problem of trust evaluation involving inaccurate reputation reports for large scale open-ended systems. The basic idea is to utilize the relevance information between the truster agent and the advisor agents. We proposed a notion termed ATTR measure to quantify such relevance information and developed two implementations of the ATTR measure. We demonstrated that one of the proposed implementations, namely, the adjusted cosine ATTR measure, could provide promising solutions to complex trust evaluation problems involving inaccurate reputation reports.

Simulated experiments show that the proposed techniques perform well in dealing with deceptive advisors and perform better than the other methods considered in dealing with inaccurate, totally randomly generated reputation reports.

The notion of ATTR measure is proposed here within the framework of beta trust model. It is also feasible to use this notion and develop corresponding techniques based on other probabilistic trust models, for example, the Dirichlet trust model [5, 10], which is a generalization of the beta trust model, in order to cope with inaccurate reputation reports.

Footnotes

Conflict of Interests

The authors declare that there is no conflict of interests regarding the publication of this paper.

Acknowledgments

This work was partly supported by the National Natural Science Foundation (NSF) of China under Grant no. 61302158, the NSF of Jiangsu province under Grant no. BK20130869, the Natural Science Research Project of Department of Education of Jiangsu Province under Grant no. 13KJB520019, and the Scientific Research Foundation of Nanjing University of Posts and Telecommunications under Grant no. NY213030.