Abstract

This paper explores inner-knuckle-print (IKP) biometric recognition on mobile phones. Because IKP characteristics are captured with a mobile phone camera, the greatest challenge is that IKP images of the same hand vary in illumination, posture, and background. To build an autonomous and robust recognition system, we present the following techniques. Firstly, the hand region is extracted by using mean shift (MS) and K-means clustering. Secondly, the region of interest (ROI) of the IKP is segmented and normalized. Thirdly, the IKP feature is extracted by using a 2D Gabor filter with a suitable orientation and frequency. Finally, the histogram of oriented gradients (HOG) algorithm is applied for matching. According to the experimental results, the proposed method achieves a best identification rate of 98.2% and an equal error rate of 6.5% with integrated 8-ROI matching.

1. Introduction

Recently, biometric recognition on mobile devices has drawn significant attention from both academia and industry. Mobile devices (smartphones, etc.) are widely used in daily life for shopping, payment, and social networking. However, the rapid growth in the deployment of mobile applications brings not only promising opportunities but also security challenges. People often worry about the exposure of sensitive and private information stored on their mobile phones. Although a PIN (personal identification number) is commonly used, it is at risk of being cracked or forgotten. Biometric recognition is a key to mobile security, but it is limited by the computing power and sensors of mobile devices.

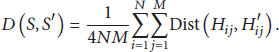

In this paper, we propose a robust IKP-based biometric recognition method that uses a mobile phone camera. Our goal is to cope with a mobile acquisition environment in which illumination, hand position, and background are usually uncontrolled. First, to extract the hand region accurately from a complex background under varying illumination, we develop a hand detector that integrates the mean shift (MS) and K-means clustering methods. Moreover, to segment the fingers, we formulate new equations based on prior knowledge of normal hand geometry. In addition, to locate the region of interest (ROI) of the IKP accurately, we apply the Radon projection, which is very sensitive to line patterns such as those shown in Figure 1. More importantly, to extract stable and unique IKP features under different illumination conditions, we employ the Gabor filter, which has been shown to be robust to illumination, and further improve it by tuning a single optimal scale and orientation, since the lines in an IKP usually have similar scales and directions. Lastly, to tolerate deformation when matching IKP features, we apply the histogram of oriented gradients (HOG) algorithm, which has been shown to be tolerant to local deformation in human detection.

Figure 1: The inner-knuckle-print (IKP) image.

Experiments show that our method achieves a best identification rate (BIR) of 96.8% and an equal error rate (EER) of 8.9% in single ROI matching. Furthermore, integrated 8-ROI matching improves the accuracy to a BIR of 98.2% and an EER of 6.5%. The experimental results demonstrate that our method is robust to variations in background and illumination and tolerant to local deformations caused by changes in hand position.

The rest of this paper is organized as follows. Section 2 describes related work, Section 3 presents the details of the proposed processing method, Section 4 reports the experimental results, and Section 5 draws the conclusion.

2. Related Works

The best-known case of mobile biometric recognition is the “Touch ID” technique, which uses a fingerprint as a passcode with just a touch on the Home button of the iPhone 5s [1]. Fingerprint recognition has been applied successfully for many years. However, it requires a dedicated sensor IC in the mobile device. Moreover, moisture or finger scars can noticeably distort the fingerprint pattern [2]. Besides fingerprint, potential mobile biometric modalities include iris and vein. Iris recognition needs an ultrahigh-resolution camera [3], which is too expensive for mobile devices. Furthermore, certain factors can degrade the performance of iris recognition, such as eyelashes [4], glass reflections [5], and eyelids [6]. Methods using the vein patterns of the palm or finger have been widely discussed [7]. However, vein patterns are acquired with a near-infrared (NIR) camera, which is expensive and uncommon on mobile devices. Furthermore, an NIR camera cannot work in sunlight, because the sun is a strong near-infrared light source and seriously interferes with vein recognition.

Face recognition is comparatively convenient in terms of usability, since faces have larger dimensional characteristics [8, 9] and can be acquired with the common camera on a mobile device [10]. However, several factors can degrade the accuracy of common face recognition systems, such as facial expressions [11], illumination variations [12], and interference from wearing masks or glasses [13].

Compared with the above biometric methods, the inner-knuckle-print (IKP) is quite attractive for mobile biometric recognition, as shown in Figure 1. Firstly, it has rich texture information in a small region of the finger, which can be acquired with a common mobile camera. Secondly, the IKP pattern is unique to each individual from birth. Thirdly, the IKP texture lies on a smooth surface and is less distorted under illumination and posture variation.

Some previous works based on IKP have been explored [14–17]. Li et al. [14] proposed applying the Hausdorff distance, since it is an effective way to measure shape similarity and can take deformation into account. However, it risks finding wrong matches, since a pixel-based descriptor (e.g., position) may not be sufficient to reflect the real structures correctly. For example, one image containing two close lines and another containing a single line would be considered very similar. Other works attempt to handle pose variation by applying BLPOC [15, 16], the reconstruction of the affine transformation [16], and image-based reconstruction [17]. However, BLPOC can only achieve translation invariance when images are captured under the same illumination, since it is sensitive to illumination changes. The reconstruction of the homography matrix or the affine transformation matrix carries the risk that the corresponding feature points are unreliable, which is quite likely in IKP recognition, since an IKP usually lacks valid feature points. Image-based reconstruction is quite robust, but it relies on a complete training database, which may be impractical, since enormous numbers of samples may be needed to cover all possible cases.

Overall, existing IKP methods achieve illumination robustness and deformation tolerance only within a small range. They are still far from satisfactory for the uncontrolled acquisition environments of mobile phone cameras.

3. Proposed Processing Method

Figure 2 shows the pipeline of the proposed system, which is composed of six steps: data acquisition, hand extraction, finger segmentation, ROI location, feature extraction, and matching.

Figure 2: The flow of the proposed IKP-based recognition system.

3.1. Data Acquisition

The hand images used in our system are acquired by the ordinary cameras of mobile devices. We describe the uncontrolled acquisition environment as follows. First, the lighting condition and the camera exposure have no special restrictions, but there should be no severe overexposure or underexposure, since little recognizable structure information can be acquired under these two conditions. Second, the hand should be naturally stretched out without any occlusion among the fingers. Third, the acquired image should cover the whole palm, and the palm should occupy as much of the image as possible. Fourth, the captured image should show clear hand boundaries, while the background may contain variations in texture, color, or illumination. In our experience, these restrictions are mild and are easily satisfied by most mobile devices in practice.

3.2. Hand Extraction

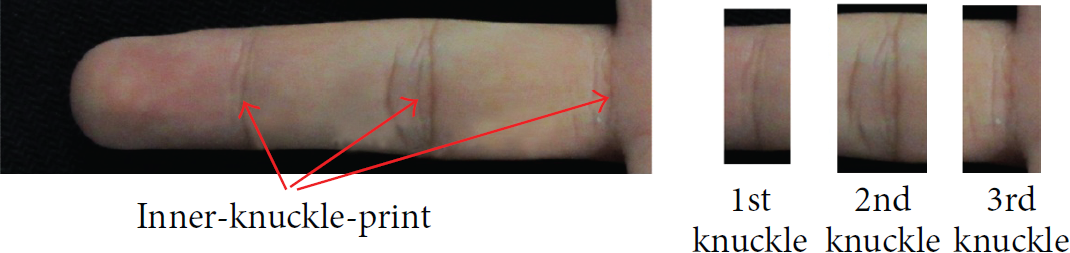

As shown in Figures 3(a) and 4(a), the background of a captured image contains textures, color variation, and smooth changes in luminance. The mean shift (MS) algorithm [18, 19] is a well-known nonparametric feature-space clustering method that smooths texture and illumination variation within a region while preserving edge information. Thus, we propose an MS preprocessing step, as shown in Figure 3(b). The MS procedure starts with mapping all data points into an

Figure 3: The proposed hand extraction method. (a) Captured original image. (b) Mean shift preprocessing. (c) K-means clustering. (d) Contour line.

Figure 4: Comparison of segmentation methods. (a) The original image with texture in the background. (b) OTSU. (c) Canny operator. (d) Grab cut. (e) The proposed method.

Here, n is the number of data points.

Further, as shown in Figure 3(c), a simple K-means clustering method [20, 21] is applied to divide the captured image into two regions: background and hand. Finally, the hand contour is extracted by using the Canny operator for finger segmentation, as shown in Figure 3(d).
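The two-region clustering step can be illustrated with a minimal sketch. It covers only the K-means part (K = 2) and assumes the pixels have already been smoothed by mean shift. The function names, the darkest/brightest initialisation, and the sample pixel values are assumptions made for illustration, not details taken from the paper.

```python
def squared_distance(p, q):
    """Squared Euclidean distance between two colour tuples."""
    return sum((a - b) ** 2 for a, b in zip(p, q))


def two_means(pixels, max_iters=20):
    """Cluster colour tuples into two groups with plain K-means (K = 2).

    Returns (labels, centers). Initialising from the darkest and brightest
    pixels is an assumption made here so that the result is deterministic.
    """
    centers = [min(pixels, key=sum), max(pixels, key=sum)]
    labels = [0] * len(pixels)
    for _ in range(max_iters):
        # Assignment step: each pixel joins its nearest centre.
        labels = [
            0 if squared_distance(p, centers[0]) <= squared_distance(p, centers[1]) else 1
            for p in pixels
        ]
        # Update step: each centre moves to the mean of its members.
        new_centers = []
        for k in (0, 1):
            members = [p for p, lab in zip(pixels, labels) if lab == k]
            if members:
                new_centers.append(tuple(sum(ch) / len(members) for ch in zip(*members)))
            else:
                new_centers.append(centers[k])  # keep the centre of an empty cluster
        if new_centers == centers:
            break
        centers = new_centers
    return labels, centers


# Hypothetical smoothed pixels: two skin-like colours and two dark background colours.
pixels = [(200, 150, 130), (210, 160, 140), (30, 40, 50), (20, 35, 45)]
labels, centers = two_means(pixels)
print(labels, centers)
```

Deciding which of the two clusters is the hand (for example, by comparing the cluster centres with a skin-colour prior) is left open here, since the text does not specify it.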

In Figure 4, we compare our method with other segmentation methods: a thresholding technique (OTSU [22]), a boundary-based technique (the Canny operator [23]), and the Grab cut method [22]. OTSU and the Canny operator are commonly used to extract the hand shape, but they are suited to images captured in a strictly controlled environment with a black background, uniform illumination, and a fixed pose. They cannot handle our captured images, which have textured backgrounds and smooth illumination variation, as shown in Figures 4(b) and 4(c). The Grab cut method extracts the hand region well, as shown in Figure 4(d), but its repeated iterations are slow: it takes 7 to 15 s to process the image in Figure 4(a), whereas our method needs only 2.56 s. Compared with the above methods, our method is both robust and efficient in hand extraction.

3.3. Finger Segmentation

After hand extraction, the datum points on the hand contour must be located for finger segmentation. The general segmentation method is based on maximum and minimum curvature [22]. However, it needs to calculate the curvature of all the points on the contour twice, which is time-consuming. In fact, accurate datum points are not necessary, since they are only used to indicate the rough region of each finger.

In this paper, we simplify finger segmentation into two steps. Firstly, as shown in Figure 5(a), we sample points along the contour at intervals of 10 units of Euclidean distance. Secondly, instead of searching for the maximum and minimum curvature, we calculate the curvature at each sample point by using

Figure 5: (a) Marking of sample points. (b) Calculation of the angle θ at sample points. (c) Location of datum points. (d) Finger axis line. (e) Rectangular finger-strip regions.

As shown in Figure 5(b), a, b correspond to the two adjacent vectors

Finally, we normalize the finger images to a uniform scale and orientation. We first estimate the axis of each finger. Take the middle finger, shown in Figure 5(d), as an example. We mark the point M1 at one-third and the point M3 at two-thirds of the length along the contour between T3 and B4. Similarly, we find the points M2 and M4 along the contour between T3 and B3. The point V3 is defined as the midpoint of the line segment B3-B4, and the line segment T3-V3 passes through the middle of the line segments M1-M2 and M3-M4. Thus, T3-V3 defines the axis of the finger. Since the middle part of the finger is stable and clear, we segment it with a small rectangle along the axis, starting at one-sixth and ending at five-sixths of the length of T3-V3. As shown in Figure 5(e), the four finger-strip regions are all normalized to a size of 340 pixels by the finger width, with the finger axis horizontal.
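The geometry in this section can be sketched in a few lines. The sketch below assumes that the angle θ at a sample point is measured between the vectors from that point to its two neighbouring sample points, computed with the usual dot-product formula. This is an assumption, since the paper's own curvature equation is not reproduced here. The strip endpoints follow the one-sixth and five-sixths rule stated above. All function names are hypothetical.

```python
import math


def angle_at(prev_pt, pt, next_pt):
    """Angle in radians at `pt` between the vectors to its two neighbours.

    Assumes the three points are distinct (the contour is sampled at a
    fixed spacing), so neither vector has zero length.
    """
    ax, ay = prev_pt[0] - pt[0], prev_pt[1] - pt[1]
    bx, by = next_pt[0] - pt[0], next_pt[1] - pt[1]
    cos_theta = (ax * bx + ay * by) / (math.hypot(ax, ay) * math.hypot(bx, by))
    return math.acos(max(-1.0, min(1.0, cos_theta)))  # clamp rounding error


def finger_strip(tip, base_a, base_b):
    """Endpoints of the middle strip on the axis from the tip T to V.

    V is the midpoint of the two base datum points; the strip runs from
    one-sixth to five-sixths of the T-V length.
    """
    vx, vy = (base_a[0] + base_b[0]) / 2, (base_a[1] + base_b[1]) / 2

    def along(t):
        # Point at fraction t of the way from the tip to V.
        return (tip[0] + t * (vx - tip[0]), tip[1] + t * (vy - tip[1]))

    return along(1 / 6), along(5 / 6)


# A straight stretch of contour gives an angle near pi, a sharp turn a small one.
print(angle_at((-1, 0), (0, 0), (1, 0)), angle_at((1, 0), (0, 0), (1, 1)))
print(finger_strip((0, 0), (-1, 6), (1, 6)))
```

Under this assumption, fingertips and finger valleys show up as sample points with small angles, while points along the sides of a finger have angles close to π.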

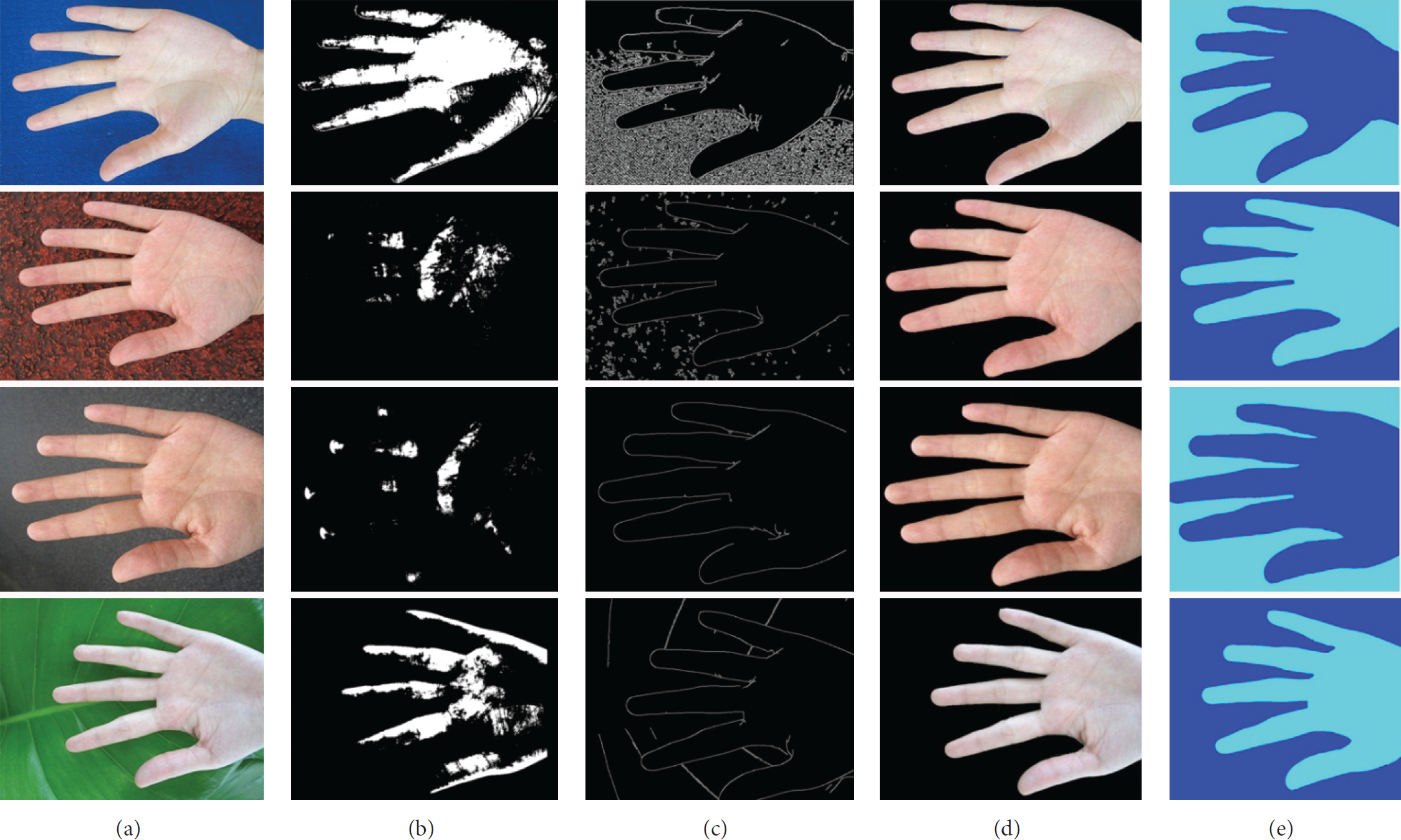

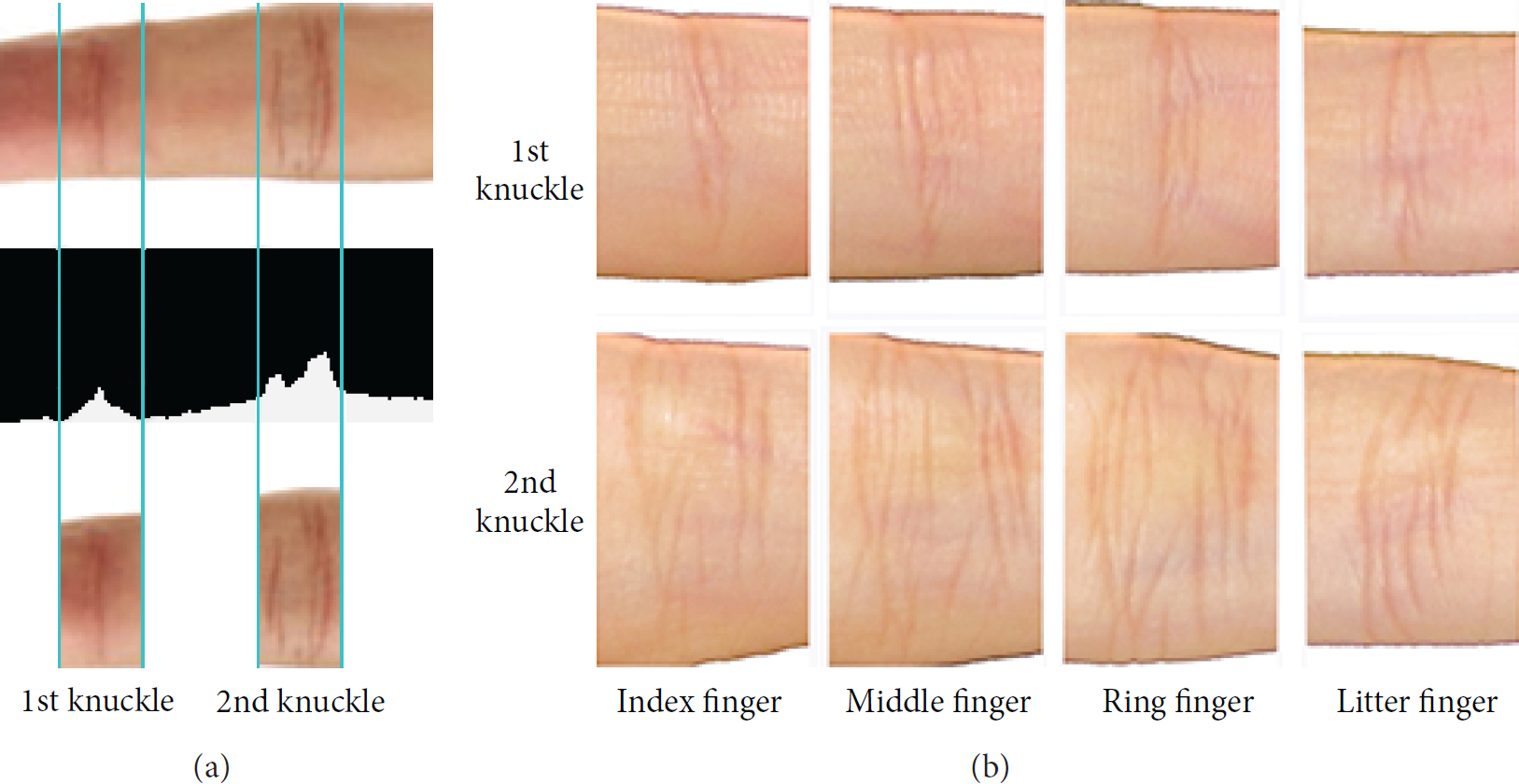

3.4. ROI Location

After finger segmentation, we use the Radon projection to locate the ROI for recognition. To highlight the IKP features and reduce the effect of variable illumination, we preprocess the segmented finger images with contrast-limited adaptive histogram equalization [24, 25]. The Radon projection is then applied along the orientation of the segmented finger images. As shown in Figure 6(a), we define the centroid line of each knuckle from the peak-to-average of the Radon projection results. We segment the two knuckles with a length of 200 pixels on both sides of the centroid line and use a low-pass filter to obtain an accurate ROI for each. Figure 6(b) shows the eight ROIs extracted from four segmented finger images with the same translation, scaling, and orientation. Note that we only consider eight regions, on the 1st and 2nd knuckles of the four fingers other than the thumb (Figure 2).
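For a finger strip that is already aligned horizontally, a Radon projection at a single angle reduces to summing intensities along each column. The sketch below illustrates this idea only. It assumes the knuckle creases appear as high values in the profile (for example, on an inverted or edge-enhanced image), and the peak-selection rule (above the profile mean, with a minimum gap between peaks) is a hypothetical stand-in for the peak-to-average criterion mentioned above.

```python
def column_profile(image):
    """Sum intensities down each column (a Radon projection at one angle)."""
    return [sum(col) for col in zip(*image)]


def knuckle_columns(profile, num_peaks=2, min_gap=3):
    """Pick the strongest, well-separated peaks that exceed the profile mean.

    `num_peaks` and `min_gap` are illustrative parameters, not values
    taken from the paper.
    """
    mean = sum(profile) / len(profile)
    order = sorted(range(len(profile)), key=lambda i: profile[i], reverse=True)
    peaks = []
    for i in order:
        if profile[i] <= mean:
            break  # remaining columns are no stronger than the average
        if all(abs(i - p) >= min_gap for p in peaks):
            peaks.append(i)
        if len(peaks) == num_peaks:
            break
    return sorted(peaks)


# Toy strip: two crease-like columns at x = 2 and x = 7.
strip = [[0, 0, 9, 0, 0, 0, 0, 8, 0, 0] for _ in range(3)]
profile = column_profile(strip)
print(profile, knuckle_columns(profile))
```

Each returned column index plays the role of a knuckle centroid line, around which a fixed-width window could then be cropped as the ROI.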

Figure 6: (a) Radon projection result. (b) Extraction of the 8 ROIs from one hand.

3.5. Feature Extraction

Before feature extraction, we first need to reduce the noise in the ROI images described above. Figure 7 shows that the IKP features become clearer and easier to extract after Gaussian blur filtering.

Figure 7: (a) Image before Gaussian blur. (b) Image after Gaussian blur.

Since orientation-based features can capture line-like structures in an image with high accuracy and robustness to illumination variation [26], we extract and match IKP features according to orientation rather than luminance or color information. Because Gabor filtering is widely used in texture feature extraction [27–29], we chose Lee's 2D Gabor filter [30] with variable orientation for extraction, and the formulas are shown as follows:

Here,

(a) Original ROI. (b)
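The orientation selectivity that motivates this step can be illustrated with a generic Gabor kernel. The sketch below is not Lee's parameterization. It assumes a simple real-valued form, an isotropic Gaussian envelope multiplied by a cosine carrier, with the mean subtracted so that uniform brightness gives no response. The names `gabor_kernel` and `response` and all parameter values are hypothetical.

```python
import math


def gabor_kernel(size, sigma, theta, freq):
    """Real-valued Gabor kernel of odd `size`, with zero mean (no DC response).

    theta is the carrier direction in radians; freq is in cycles per pixel.
    """
    half = size // 2
    kernel = []
    for y in range(-half, half + 1):
        row = []
        for x in range(-half, half + 1):
            # Coordinate along the carrier direction.
            xr = x * math.cos(theta) + y * math.sin(theta)
            envelope = math.exp(-(x * x + y * y) / (2 * sigma * sigma))
            row.append(envelope * math.cos(2 * math.pi * freq * xr))
        kernel.append(row)
    mean = sum(map(sum, kernel)) / (size * size)
    return [[v - mean for v in row] for row in kernel]


def response(kernel, patch):
    """Inner product of a kernel with an image patch of the same size."""
    return sum(k * p for krow, prow in zip(kernel, patch) for k, p in zip(krow, prow))


# A patch of vertical stripes with a period of 8 pixels.
patch = [[math.cos(2 * math.pi * 0.125 * x) for x in range(-4, 5)] for _ in range(9)]
k0 = gabor_kernel(9, 2.0, 0.0, 0.125)           # carrier across the stripes
k90 = gabor_kernel(9, 2.0, math.pi / 2, 0.125)  # carrier along the stripes
print(response(k0, patch), response(k90, patch))
```

The kernel whose carrier matches the stripe direction and frequency responds much more strongly, which is why tuning a single orientation and frequency suits IKP lines that share similar directions and scales.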

3.6. IKP Matching

After feature extraction, the final step of the proposed recognition method is IKP matching. Although the IKP feature images have been normalized to a uniform translation, scale, and rotation, they still contain misalignment due to different acquisition environments, so the matching method should be misalignment-tolerant. Several structure-aware image matching methods are in common use, such as SIFT, AISS, CW-SSIM, and HOG. SIFT [31] takes a certain degree of structure information into account, but it is primarily based on corner points and is not misalignment-tolerant. AISS [32] can effectively tolerate misalignment, but it focuses on line width rather than orientation. That is, all matched lines should have the same line width, so it only works on line drawings. The orientation-based feature shown in Figure 8 is a gray image, in which lines rarely have the same width, so AISS has poor matching accuracy in our recognition system. CW-SSIM [33] is based on SSIM [34] and is robust to translation. However, its tolerance is too small, and a misalignment of two pixels may cause a large error.

Lastly, HOG [35] describes image structure by using gradient information and applies block-based histograms to the gradient information to achieve misalignment tolerance. Thus, we adopt the HOG algorithm for matching and calculate the gradient maps (orientation and response) of the normalized IKP feature images. Firstly, we compute histograms on the gradient map using a block diagram. As shown in Figure 9(a), each block contains

Figure 9: HOG eigenvector. (a) Block diagram. (b) Calculation of pixel energy value. (c) Scanning process. (d) Histogram sequence.

Thirdly, as shown in Figure 9(c), we scan the whole gradient map by moving the block diagram from left to right and from top to bottom, with stride of

Hence, we concatenate all the histograms, and a high-dimensional eigenvector is obtained to represent the image. By using it, we can evaluate the matching level between captured IKP features and corresponding features from database, as shown in Figure 10. The dissimilarity of HOG eigenvector between two IKP features can be presented as follows:

Figure 10: HOG matching between captured IKP features and corresponding features from database.

Here, S and
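The block-histogram idea behind HOG matching can be sketched as follows. This is a simplified illustration rather than the paper's exact procedure. The block size, stride, number of orientation bins, per-block L2 normalization, and the Euclidean distance used as the dissimilarity are all assumptions, since the corresponding values and formula are not reproduced here.

```python
import math


def gradients(img):
    """Central-difference gradient magnitude and unsigned orientation in [0, pi)."""
    h, w = len(img), len(img[0])
    mag = [[0.0] * w for _ in range(h)]
    ori = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            gx = img[y][x + 1] - img[y][x - 1]
            gy = img[y + 1][x] - img[y - 1][x]
            mag[y][x] = math.hypot(gx, gy)
            ori[y][x] = math.atan2(gy, gx) % math.pi
    return mag, ori


def hog_vector(img, block=4, stride=2, bins=8):
    """Concatenate magnitude-weighted orientation histograms of sliding blocks."""
    mag, ori = gradients(img)
    h, w = len(img), len(img[0])
    feat = []
    for by in range(0, h - block + 1, stride):      # top to bottom
        for bx in range(0, w - block + 1, stride):  # left to right
            hist = [0.0] * bins
            for y in range(by, by + block):
                for x in range(bx, bx + block):
                    b = min(int(ori[y][x] / math.pi * bins), bins - 1)
                    hist[b] += mag[y][x]
            norm = math.sqrt(sum(v * v for v in hist)) or 1.0  # avoid dividing by 0
            feat.extend(v / norm for v in hist)
    return feat


def dissimilarity(f1, f2):
    """Euclidean distance between two HOG vectors (an assumed choice)."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(f1, f2)))


# Toy 8x8 images: a vertical edge, the same edge shifted by one pixel,
# and a horizontal edge.
vertical = [[1 if x >= 4 else 0 for x in range(8)] for _ in range(8)]
shifted = [[1 if x >= 5 else 0 for x in range(8)] for _ in range(8)]
horizontal = [[1 if y >= 4 else 0 for _ in range(8)] for y in range(8)]
fv, fs, fh = hog_vector(vertical), hog_vector(shifted), hog_vector(horizontal)
print(dissimilarity(fv, fs), dissimilarity(fv, fh))
```

Because each histogram pools gradients over a whole block, a one-pixel shift of the same edge changes the vector far less than a change of edge orientation does, which is the misalignment tolerance the text relies on.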

4. Experiments

As shown in Figure 11, we built a basic database of 4000 ROIs processed by our method. The ROIs were captured by mobile phone cameras from 500 human hand samples under various illumination, posture, and background conditions. Using this database, we conducted single ROI matching and multiple ROI matching experiments. The former tests the matching accuracy of our method on a single ROI at the same position of each hand sample, and the latter integrates the 8 ROIs of each hand sample. For each experiment, both verification and identification modes are tested, and the nearest neighbor rule is used for classification.

Figure 11: Samples of different hand images under various environments in our database.

Generally, verification is a one-to-one comparison against a single stored template, which aims to determine “whether the person is whom he claims to be.” The equal error rate (EER) is adopted to evaluate verification performance. It is defined as the error rate at the operating point where the false accept rate (FAR) equals the false reject rate (FRR), that is, EER = FAR = FRR. Here FAR = FA/(GR + FA) and FRR = FR/(GA + FR), where FA, FR, GA, and GR represent the proportions of false acceptances, false rejections, genuine acceptances, and genuine rejections, respectively. On the other hand, identification is a one-to-many comparison against all stored templates, which aims to determine “who is this person.” The best identification rate (BIR), also known as the rank-1 recognition rate, is adopted to evaluate identification performance. For a one-to-many comparison, if the database sample with the best similarity to the query sample is the genuine one, we call it a best identification. BIR is the fraction of best identifications over the comparisons of all the samples in the database. In this paper, we present the EER and BIR performances of representative methods, including CompCode [36], PCA [37], BLPOC [38], and our method. Meanwhile, we illustrate the receiver operating characteristic (ROC) curves, which present the false accept rate (FAR) against the genuine accept rate (GAR) which is defined as GAR =
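The two evaluation measures can be made concrete with a short sketch. It assumes that matching scores are dissimilarities (a claim is accepted when the distance is at most a threshold), uses counts in place of proportions in the FAR and FRR formulas above, and approximates the EER at the candidate threshold where FAR and FRR are closest. The function names and toy scores are hypothetical.

```python
def far_frr(genuine, impostor, threshold):
    """FAR = FA/(GR + FA) and FRR = FR/(GA + FR) at one distance threshold."""
    fa = sum(d <= threshold for d in impostor)  # impostors wrongly accepted
    gr = len(impostor) - fa                     # impostors correctly rejected
    fr = sum(d > threshold for d in genuine)    # genuine users wrongly rejected
    ga = len(genuine) - fr                      # genuine users correctly accepted
    return fa / (gr + fa), fr / (ga + fr)


def equal_error_rate(genuine, impostor):
    """Approximate EER: mean of FAR and FRR where their gap is smallest."""
    best_gap, best_eer = None, None
    for t in sorted(set(genuine) | set(impostor)):
        far, frr = far_frr(genuine, impostor, t)
        gap = abs(far - frr)
        if best_gap is None or gap < best_gap:
            best_gap, best_eer = gap, (far + frr) / 2
    return best_eer


def rank1_rate(queries, gallery, distance):
    """Fraction of queries whose nearest gallery template has the same identity.

    `queries` and `gallery` are lists of (identity, feature) pairs.
    """
    hits = 0
    for query_id, query_feat in queries:
        best_id, _ = min(gallery, key=lambda g: distance(query_feat, g[1]))
        hits += best_id == query_id
    return hits / len(queries)


# Toy distances for genuine and impostor comparisons.
genuine = [0.1, 0.2, 0.3, 0.6]
impostor = [0.4, 0.7, 0.8, 0.9]
print(equal_error_rate(genuine, impostor))

# Toy identification with scalar features and absolute difference as distance.
gallery = [("a", 0.0), ("b", 1.0)]
queries = [("a", 0.1), ("b", 0.9), ("a", 0.8)]
print(rank1_rate(queries, gallery, lambda u, v: abs(u - v)))
```

With a finite set of scores, FAR and FRR rarely cross exactly, so reporting the midpoint at the closest threshold is a common practical approximation rather than a definition taken from the paper.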

4.1. Single ROI Matching

In this experiment, we randomly chose 100 pairs of ROIs in the same position from different hands for matching. The BIR and EER of BLPOC, CompCode, PCA, and our method are listed in Table 1. CompCode and our method clearly perform better than BLPOC and PCA. Our method achieves a BIR of 96.8% and an EER of 8.9%. The ROC curves of CompCode and our method are presented in Figure 12. Since BLPOC and PCA were originally proposed for controlled acquisition environments and are inherently sensitive to variations in illumination and deformation, their ROC curves are much lower than those of our method and CompCode and can hardly be shown in the same figure, so only two curves are kept in Figure 12. The curves show that our method is superior to CompCode. Although CompCode extracts image orientation and is insensitive to illumination variation, our method addresses illumination robustness and misalignment tolerance at the same time.

Table 1: The comparison of representative methods on single ROI.

Figure 12: The ROC curves of CompCode and our method on single ROI.

4.2. Integrated 8-ROI Matching

In this experiment, we repeatedly chose 100 hand samples at random, each with 8 integrated ROIs, for matching. The BIR and EER of BLPOC, CompCode, PCA, and our method are listed in Table 2. The performance of all methods improves over single ROI matching, and our method remains the best. With 8-ROI matching, our method achieves a BIR of 98.2% and an EER of 6.5%, better than the results of single ROI matching. The ROC curves of CompCode and our method are presented in Figure 13, which shows that our method is still superior to CompCode.

Table 2: The comparison of representative methods on integrated 8 ROIs.

Figure 13: The ROC curves of CompCode and our method on integrated 8 ROIs.

4.3. Time Performance

We carried out all the experiments on a workstation with an Intel Core i7 3770K 3.5 GHz CPU and 8 GB of RAM. Table 1 shows the time consumed in the single matching experiments. We use multithreaded acceleration to improve the performance of the integrated matching experiments, as shown in Table 2. Although our method is a little slower than the others, it is still efficient enough for real-time processing.

5. Conclusion

In this paper, a novel mobile biometric recognition method is presented, which uses mobile computing and a camera sensor to capture the IKP characteristics of the human hand. Compared with previous works, the proposed method is robust to variations in illumination, posture, and background texture. Its main contributions are as follows. Firstly, the hand extraction algorithm combines the advantages of mean shift and K-means color image clustering. Secondly, the IKP features are extracted by using a 2D Gabor filter with a specially tuned orientation and frequency. Thirdly, the HOG algorithm is used in the feature matching process. Finally, we have built a basic IKP database including 4000 ROIs from 500 hand samples. Experiments show robust recognition performance in a complex mobile environment. Although our system achieves good performance and efficiency in many cases, it still has some limitations. First, hand extraction is still affected by complex backgrounds, especially when the background has a color similar to skin. Moreover, extreme illumination (e.g., overexposure and underexposure) and large deformation of the hand pose can significantly reduce the performance of feature extraction. In addition, the number of samples in the database needs to be increased. In future work, we will further focus on robustness to environmental variation and build a better self-adaptive system.

Footnotes

Conflict of Interests

The authors declare no conflict of interests.

Acknowledgments

This work was supported by the funding from Guangzhou Novo Program of Science and Technology (Grant no. 0501-330), the National Natural Science Foundation of China (Grants nos. 61103120, 61472145, 61272293, and 61233012), the Research Fund for the Doctoral Program of Higher Education of China (Grant no. 20110172120026), the Shenzhen Nanshan Innovative Institution Establishment Fund (Grant no. KC2013ZDZJ0007A), the Shenzhen Basic Research Project (Project no. JCYJ20120619152326448), the Natural Science Foundation of Guangdong (Project no. S2013010014973), a grant of RGC General Research Fund (Grant no. 417913), and the Fundamental Research Funds for the Central Universities (for students, no. 10561201475).