Abstract

Advances in wireless sensor technologies have drawn the attention of many laboratories to the field of networked embedded systems. Many researchers seek to exploit these advances to develop technological assistance for frail people in smart homes. However, to reach the full potential of applications that use networked embedded systems, such as assistive smart homes, the first challenge to overcome is recognizing the inhabitant's ongoing activity of daily living (ADL). Moreover, to provide adequate assistance, it is essential to detect every perceptible error. Such an approach uses ubiquitous sensors hidden in the environment to monitor and detect behavioral abnormalities associated with cognitive deficits, and then guides the resident by giving advice through different kinds of effectors (screen, light, sound, etc.). In this paper, we present an affordable system that combines passive RFID with the load signatures of appliances to assist elders and to detect errors related to cognitive impairment. The entire multi-sensor system has been implemented and deployed in a real prototype smart home. We present the promising results of our experiments on real daily routines.

1. Introduction

Rising life expectancy and a falling birth rate are causing population ageing [1]. Independence becomes a critical issue not only for older adults who wish to remain in the residence of their choice but also for society. To satisfy the desire of seniors and people with cognitive disorders (e.g., Alzheimer's disease) to remain independent at home, the scientific community has proposed the smart home as a promising solution [2]. Over the years, scientists have developed assistive technologies to help people with cognitive deficits complete their ADLs. In fact, once the ongoing activity is recognized, the real challenge is to detect erratic behavior from the raw input data. This detection is essential for providing appropriate instructions, through a suggestion or reminder that increases the probability of obtaining correct behavior [3]. In this regard, one of the new features of our hybrid system is appropriate cognitive assistance based on a well-established test, the Naturalistic Action Test (NAT) [4] (step omission, step inversion, perseverance, temporal constraint, and cognitive overload).

Currently, most systems designed to assist patients are intrusive and complex to adjust and control [5–12]. These systems mainly rely on cameras, binary sensors, or wearable devices. Vision-based solutions are commonly used [13] because they provide accurate information. Although this information is rich, using this type of sensor in a smart home raises an ethical issue: cameras are intrusive and infringe on the privacy of residents. In contrast, other research teams have addressed the ADL recognition problem with binary sensors such as movement detectors, electromagnetic contacts, or other basic sensors that do not raise the intrusiveness issue [14, 15]. Some of these works performed well despite the low-quality information provided by this type of sensor. However, for lack of information, these approaches remain limited in their ability to recognize complex scenarios. Other systems require wearing gloves or bracelets, which is itself intrusive. Moreover, there is no guarantee that a patient will actually wear this kind of sensor.

Alternatives such as RFID technology [16] and the analysis of the electrical signatures of appliances [17] have also been considered. Both technologies have already proven to be effective and nonintrusive. RFID can acquire rich information such as the position of objects and their spatial relationships. This technology is robust and inexpensive, and it does not need any battery. Electrical analysis systems, for their part, are innovative: in the field of smart homes, almost no team has worked on the subject from a human activity recognition (HAR) point of view. Such a system is inexpensive, requires only one sensor, and needs no maintenance. In addition, the information it provides is valuable because it is complementary to that provided by RFID technology. Although these technologies are attractive for recognizing ADLs, each has important limitations. Take the classic example of preparing a coffee. With RFID technology, we can recognize the movements of objects and understand the overall activity, but it is impossible to detect whether the resident turned on the coffee maker. Conversely, if we use only the analysis of electrical signals, vital information such as the position of objects is missing. That is why, in this paper, we propose an affordable and nonintrusive system that combines RFID and the load signatures of appliances to assist cognitively impaired people in their ADLs. This combination allows us to detect new kinds of abnormal behavior. It is achieved with our new step-by-step assistive multi-agent system, in which each agent (sensor interpretation agents, action recognition agent, behavior recognition agent, and assistive agent) has a predetermined role in the guidance process. Furthermore, our model implements a Bayesian network, which increases the potential of the whole system.
Our practical contribution consists in the implementation of the whole system within a real smart environment. Finally, we show through realistic scenarios that the assistive system can be used to support a cognitively impaired patient.

The rest of this paper is organized as follows. Section 2 describes existing approaches to recognition and assistance in smart homes and gives a brief overview of the literature on HAR models. Section 3 briefly presents the assistive multi-agent system. Section 4 describes our localization model based on passive RFID technology. Section 5 details our load signature identification system for electrical devices. Section 6 presents in detail the intelligent agents that combine the two systems using a probabilistic model. Section 7 specifies the step-by-step error detection used to provide guidance while the activity is carried out. Section 8 presents the implementation in our prototype smart home infrastructure and discusses the results of a first set of experiments. Finally, Section 9 concludes and outlines our future work.

2. Related Work

Since this study is the culmination of our research on various aspects of recognition in smart homes, the literature review is divided into two main areas. First, we present work on real assistive systems developed specifically for smart environments; that subsection describes the different technologies used and the well-known approaches. The second subsection discusses classical activity recognition and learning techniques. For each, we discuss advantages and limitations in turn.

2.1. Literature on Assistive Approaches

In this section, we give an overview of notable works that can be compared to our assistive model. These models combine ideas from sensor networks, ubiquitous computing, artificial intelligence (AI), and human-machine interfaces to achieve their goals by sending prompts and giving appropriate reminders. To highlight why most of the proposed systems are not suitable for our research context, we present the limitations of each infrastructure. The literature contains four types of approaches: camera based approaches, binary sensors approaches, RFID based approaches, and electrical load analysis approaches. In this subsection, we briefly describe each type and assess its advantages and disadvantages.

2.1.1. Camera Based Approaches

One of the best known systems that extracts information from visual data is COACH (cognitive orthosis for assisting activities in the home), developed by Mihailidis et al. [5]. The objective of this system is to monitor a patient performing a specific task of everyday life, for instance, washing their hands, and to offer assistance (i.e., a voice warning or guidance) in the most appropriate way, only when the situation requires it. To do so, COACH uses a single camera as its sensor and a screen and speakers as effectors. The COACH inference engine relies on a partially observable Markov decision process (POMDP) [18], which models each step of the ongoing activity. It uses the Viterbi algorithm [19] to determine the phase of the ADL currently underway. COACH applies an image processing algorithm to extract the high-level information necessary for assistance: the position of the hands, towel, and soap, and the valve status (open/closed). Positions are inferred coarsely, in terms of large predefined areas. The designers conducted an extensive experimental evaluation of their support system using six scenarios and patients with dementia. A second vision-based assistance system is that of Aghajan et al. [20]. Their system analyzes information from multiple cameras to detect the occupant's posture in order to anticipate problems, assist vulnerable people, and reduce accidents at home.

However, this type of approach has several limitations. The main one is the intrusion on the person's privacy caused by the presence of cameras in the home. In addition, although the video signal contains a lot of information, extracting it can be difficult (even more so in real time), and cameras are very sensitive to changes in brightness and to variations in the color of objects. Indeed, changes in the color and shape of objects interfere with recognition by image processing, and the brightness level of the room also has a great influence on performance. To maximize the effectiveness of such a system, designers need to fix the brightness and select objects of different colors and sizes specifically to achieve better recognition. Although this approach might be used in smart homes, it is important to keep in mind that cameras are expensive and fragile.

2.1.2. Binary Sensors Approaches

The second type of assistive technology relies on distributed binary sensors (movement detectors, electromagnetic contacts, etc.). Jakkula and Cook [21] obtained good results with this type of sensor. Their binary data collection system consists of an array of motion sensors, which collect information using X10 devices and the in-house sensor network. Their laboratory consists of a presentation area, a kitchen, a student desk, and a faculty room. Over 100 sensors are deployed, including light, temperature, and humidity sensors and reed switches. Their learning model relies heavily on time to perform temporal recognition of activities. It requires the analysis of large volumes of data to automatically detect interesting patterns or relationships that allow better understanding. Another system implemented with basic sensors is the Independent Lifestyle Assistant (ILA) [8]. This multi-agent system integrates a unified activity detection model, situation assessment, response planning, instantaneous response generation, and data mining to configure its settings automatically. Concretely, its task tracking component is based on the Probabilistic Hostile Agent Task Tracker (PHATT) [22]. The system focuses on monitoring medication intake and the mobility of elders. However, the components and sensors involved in tracking these patients can only recognize low-level errors and require many hours of testing, active debugging, and maintenance. Helal et al. [23], for their part, used automatic service detection to allow caregivers to remotely monitor and intervene with elderly people living in the house. Their system uses a large number of embedded sensors, actuators, processors, and networks in an apartment, and relies on fuzzy logic rules to recognize the activities of residents.
Another binary approach is the Autominder system [7], a cognitive assistance application deployed in prototype form on a mobile robot assistant. It uses artificial intelligence (AI) techniques to infer which activities a person is performing and to model their daily schedule. It then reasons about these daily plans using Quantitative Temporal Bayesian Networks (QTBNs) [24] in order to decide when to give reminders, given the temporal constraints that have been set. This approach is severely limited by the fact that it does not differentiate between types of errors, information that is crucial for proper assistance. Finally, in the study by Patterson et al. [10], patients are tracked using various sensors within the smart environment, and a hierarchical Bayesian model is used to determine the user's plans and goals.

These types of approaches have been used for many years and have proven their robustness. However, binary sensors provide insufficient information to monitor complex situations with the aim of detecting cognitive errors. During an ADL such as preparing a meal, for example, binary sensors cannot detect a realization error because they say too little about the progress of the activity: a movement detector reports only the presence or absence of the person in the kitchen, and electromagnetic contact sensors report only the opening and closing of cabinets. Furthermore, deploying this technology is very complex, since it requires a large number of sensors.

2.1.3. RFID Based Approaches

The third type of guidance system exploits RFID sensors. RFID technology has regained popularity in the scientific community in recent years. In short, these systems use antennas and tags: the antennas emit signals, the tags pick up these signals and send back a signal containing their identification numbers, and the antennas receive the answers. For example, the Barista system developed by Patterson et al. [9] uses RFID tags on items and a wearable RFID sensor to obtain the identifiers of the objects manipulated by the resident. To perform activity recognition, they used a probabilistic generative framework based on Hidden Markov Models (HMMs) and a Dynamic Bayes Net (DBN). However, the system must be trained for a very long period of time. Chu et al. [6] presented another assistance system that uses wearable RFID sensors to support people with cognitive impairment. In this case, a partially observable Markov decision process (POMDP) is used to interpret user interactions. Unfortunately, these systems require wearing an RFID reader on gloves or bracelets, which is significantly intrusive, and we cannot assume that a person with cognitive impairment will remember to wear the RFID equipment [15].

Because of the imprecision and other disadvantages of the first RFID-based approaches, many research teams tried to use other technologies, or to combine two or more technologies, to perform indoor localization. Ultrasonic sensors are often exploited for this purpose [25]. These systems can generally achieve very high precision but are completely blocked by any object or person that obstructs their line of sight. Choi and Lee [25] combine ultrasonic technology with passive RFID tags to localize a mobile robot, thus compensating for the line-of-sight problems. Their model yields an impressive accuracy (1–3 cm) when not obstructed. However, their hardware is too big to be mounted on objects.

At first glance, RFID is of little interest in smart homes if it requires a bracelet or a portable sensor. However, other research teams have developed localization algorithms based on this technology. A large part of them derive directly or indirectly from the well-known Landmark system [26], which introduced the concept of reference tags placed at strategic locations. The work of Vorst et al. [27] is one example. Their model uses passive RFID tags and an onboard reader to localize mobile objects in an environment. A prerequisite learning step is required to define a probabilistic model, which is then exploited by a particle filter (PF) technique to estimate the position. Another model, by Joho et al. [28], uses reference tags in combination with different parameters; in particular, it is based on both the received signal strength indicator (RSSI) and the orientation of the antennas. Some of these approaches provide very good results, more than sufficient for assistance services in smart homes, but they all rely on the large-scale deployment of reference tags. This type of approach is not well suited to the smart home context, nor always possible there. To address this issue, some research teams have developed new approaches that locate objects precisely based on the strength of the received signals. In our previous work [16], we developed an algorithm that uses elliptical trilateration to achieve very good positioning results. Moreover, our work does not stop at positioning: we also presented a step-by-step assistance model that uses the position of objects and their spatial relations.

To conclude, this technology is increasingly used in intelligent environments because of its low cost, its robustness, and the quality of the information it provides. However, it is limited in scenarios that include steps it cannot detect, because it cannot provide the necessary information.

2.1.4. Electrical Load Signature Approaches

The last type of guidance system exploits nonintrusive appliance load monitoring (NIALM), a method for detecting fluctuations in the voltage and current supplied to a house or a building, which directly influence the power drawn. Electric meters with NIALM technology are widely used by utilities to examine the specific uses of electricity in different houses [29, 30]. In general, the equipment and meters used to monitor the behavior of devices are transparent to end users, since measurements are usually taken at the entrance of the facility (e.g., the main electrical service entrance). With NIALM, there are fewer components to install, maintain, and remove. In addition, as noted in [31], NIALM procedures fall into two groups: those that analyze the steady state and those that focus on transient detection. Although NIALM is used in many areas, it is not very widespread in the field of smart homes. Only a few teams have worked on analyzing the variation of electrical signals to recognize the signatures of devices. Among these, Belley et al. [17] implemented such a system in a real smart home infrastructure. In their approach, each device was first analyzed and assigned a specific load signature. Thereafter, when a signature is recognized, the algorithm can identify which device was turned on or off. They evaluated the system thoroughly and achieved impressive results. In addition to identifying signatures, their work includes an additional activity recognition layer for simple, predefined scenarios. The algorithm can recognize the different steps of the current activity and detect certain errors. Moreover, Camier et al. [32] have developed other algorithms for recognizing electrical signatures along the same lines.

However, these works were only preliminary. Although this approach is robust and well suited to smart homes, it is also limited by the amount of information it provides. In fact, it can only recognize activities involving electrical appliances, which is far from sufficient.

2.2. Human Activity Recognition

The problem of HAR has been widely covered due to its importance in pervasive environments. Despite this, many challenges still remain and the problem is far from being solved. Our aim is to exploit smart home technology to assist frail and cognitively impaired people. In this subsection, we briefly describe each class of HAR algorithms.

2.2.1. Logical HAR

The formal theory of plan recognition of Kautz [33] constitutes a foundation for logic-based HAR algorithms and is still one of the most important theories in this branch. Kautz's theory formalizes the inference of the ongoing activity using first-order logic. It is limited by the assumptions that all possible activities are known and that basic actions can be observed directly. Chen et al. [34] recently proposed a new system that exploits ontologies for explicit activity and context modeling. Their approach is very comprehensive and partially addresses the real-time recognition dilemma. As with other purely logical approaches, the way they model ADLs and perform inference is elegant and natural for a human being to understand. However, logical approaches to HAR mostly suffer from the tedious work required to model ADLs correctly, which not only results in high overhead but also greatly limits their real-world applicability.

2.2.2. Probabilistic HAR

Many teams have explored the use of probabilistic theories such as Markovian and Bayesian models [9, 35] for HAR. These algorithms provide a good recognition rate (RR) and are usually combined with learning techniques. These approaches are simpler to implement than those based on formal logic but suffer from many drawbacks. In particular, building large activity libraries is very tedious even with the help of learning methods. Additionally, inferring with them requires high computation (resp.,

2.2.3. Learning Based HAR

To address the difficulty of building a library of activities, many researchers have worked toward the development of learning schemes. In recent years, a plethora of supervised approaches have been developed, such as that of Van Kasteren et al. [36]. In their work, they exploit a learned Markovian model and a conditional random field that achieve a recognition rate of 79.4–95.6%. There are also some completely unsupervised methods in the literature. For instance, Palmes et al. [37] scour the Internet to obtain models based on object relevance weights. This technique assigns an influence score to each object that is part of an activity and selects the object with the highest weight as the key object. Though their approach offers great scalability, it is limited by the fact that two activities cannot share the same key object.

To conclude, state-of-the-art algorithms have many problems. First, most existing approaches only recognize coarse-grained activities (cooking, toileting, etc.) and cannot detect the individual steps needed to complete them. Second, a majority of these approaches do not report their performance for real-time recognition or for the detection of errors in ADLs. Third, many proposals are intrusive and costly, and their installations are complex and difficult to deploy in an existing building. Finally, most existing systems are based on information gathered through a complex network of distributed sensors [6–10, 12] or on cameras [5], and some of them require a long period of training to be effective [8, 9, 12]. In contrast, systems based on RFID technology and those based on electrical load signatures are well adapted to smart environments, but they lack information when used alone. That is why, in our new system, electrical load analysis is combined with RFID to form a hybrid system in which each technology provides the information the other is missing.

3. New Recognition Model

To address the limitations of both RFID and electrical systems, this paper presents a new hybrid approach that combines RFID and the electrical load signatures of appliances. In the context of smart homes, the combination of these technologies offers many advantages. First, both technologies are very robust and easy to deploy. Furthermore, the information they provide is complementary, which allows a wide variety of scenarios to be recognized. It is also worth noting that passive RFID sensors and an electrical sensor at the main panel are not intrusive, which is essential in this context. This section introduces our new model of step-by-step assistance, implemented as a multi-agent system (illustrated in Figure 1). This assistance model adheres to the recent paradigm of ubiquitous computing [38–40], which seeks to create augmented environments in which distributed agents communicate with each other in order to provide services. Starting from this vision, and in light of our work on ambient assistive environments, we propose a system that makes the environment act as a rational agent. In addition, this kind of architecture allows new agents to be added dynamically without redesigning the entire system. The recognition process is organized as a multi-agent system in which one or more agents are responsible for each task and agents can communicate with each other. In this model, the process is divided among four types of agents, responsible for (1) interpreting and merging raw data into more useful information, (2) identifying basic actions, (3) recognizing the current activity from hypotheses about the most plausible goal, and (4) providing support by intervening adequately in case of erratic behavior. As shown in Figure 1, each agent addresses a portion of the problem, and the agents communicate their information and coordinate their actions to accomplish the overall goal (step-by-step assistance).

Figure 1: Schema of the new activity recognition system.

Before we can offer assistance to a patient with cognitive disorders, we must be able to infer their goal. This is why we use an activity recognition algorithm that exploits the real-time elliptic trilateration of objects [41], enhanced with the identification of electrical devices based on their electrical signatures [42]. The first important modification is this combination, which allows us to know both the position of objects and the state of the electrical equipment used during an activity, and thus to improve support. Our HAR algorithm was designed to distinguish each individual step (action) of an ADL. To show the general operation of the system, take as an example a resident who interacts with a sensor-equipped smart environment with the intention of cooking spaghetti. First, the resident changes the state of the sensors by moving an object and turning on a stove burner. Second, the agents that interpret the sensors extract useful information from the raw sensor data. Third, an action is deduced from these interpreted facts. Finally, we deduce the most plausible activities using a Bayesian network, in order to provide assistance so that the resident reaches the inferred goal. In the next sections, we will see how the raw data from the sensors are interpreted.
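To make the last step of this example concrete, the following minimal sketch applies Bayes' rule over a set of candidate activities. It is only an illustration under stated assumptions: the activity and action names, the probability values, and the conditional-independence (naive Bayes) structure are hypothetical and are not the network presented in Section 6.

```python
def posterior(prior, likelihood, observed_actions, floor=1e-3):
    """Posterior over activities given the actions observed so far.

    Assumption: actions are conditionally independent given the activity
    (a naive Bayes structure, the simplest form of Bayesian network).
    `floor` is a hypothetical small probability for actions that an
    activity model does not list.
    """
    scores = {}
    for activity, p in prior.items():
        for action in observed_actions:
            p *= likelihood[activity].get(action, floor)
        scores[activity] = p
    total = sum(scores.values())
    return {activity: s / total for activity, s in scores.items()}


# Hypothetical models for two activities.
PRIOR = {"cook_spaghetti": 0.5, "make_coffee": 0.5}
LIKELIHOOD = {
    "cook_spaghetti": {"move_pot_to_stove": 0.8, "turn_on_burner": 0.9},
    "make_coffee": {
        "move_cup_to_counter": 0.8,
        "turn_on_coffee_maker": 0.9,
        "move_pot_to_stove": 0.05,
    },
}

# One RFID-derived action and one electrical action, as in the spaghetti example.
beliefs = posterior(PRIOR, LIKELIHOOD, ["move_pot_to_stove", "turn_on_burner"])
most_plausible = max(beliefs, key=beliefs.get)
```

In this sketch the action derived from RFID (a pot moved to the stove) and the action derived from the load signature (a burner turned on) both contribute to the same posterior, which is the benefit expected from combining the two sources.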

4. RFID Based Localisation

In this section, we present our localization agent, which is an adaptation of our previous work [43]. Specifically, the algorithm uses reinforced elliptic trilateration based on passive RFID technology. This new adaptation is faster and provides better real-time tracking of objects in the smart home. Moreover, it can now export relevant data to a database, where other agents can reuse them for recognition and assistance.

4.1. Signals Filtering

The positioning system uses the received signal strength indicator (RSSI) provided by the passive RFID technology. However, these data are highly variable, and without any preprocessing it is difficult to localize objects correctly. We therefore had to find ways to address the imprecision of the data provided by the sensors. We have developed several filters that significantly improve positioning accuracy, as shown in our previous work. First, we use an iteration-based filter that eliminates the false readings that occur in a noisy environment such as a smart home. The main idea of this type of filter is that a change of state must be confirmed over a number of iterations before it is accepted. Second, we introduced an RSSI variation filter to reduce large fluctuations. The RSSI collected by an antenna changes constantly, which often causes the tracked object to jump randomly from one place to another between two iterations. Although there is no way to avoid this issue completely, we introduced a Gaussian weighted average that processes the received signals and significantly reduces flicker.
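The two filters described above can be sketched as follows. This is a minimal illustration rather than the implementation used in our system: the class and function names, the default of three confirming iterations, the value of `sigma`, and the choice of weighting samples by their age are all assumptions made for the example.

```python
import math


class IterationFilter:
    """Accept a change of state only after `needed` consecutive confirmations."""

    def __init__(self, needed=3):
        self.needed = needed      # hypothetical default
        self.state = None         # last accepted state (e.g. tag seen or not)
        self.candidate = None     # new state waiting for confirmation
        self.count = 0

    def update(self, reading):
        if reading == self.state:
            # The accepted state is observed again: drop any pending change.
            self.candidate, self.count = None, 0
            return self.state
        if reading == self.candidate:
            self.count += 1
        else:
            self.candidate, self.count = reading, 1
        if self.count >= self.needed:
            self.state, self.candidate, self.count = reading, None, 0
        return self.state


def gaussian_weighted_rssi(samples, sigma=2.0):
    """Gaussian weighted average of recent RSSI samples (oldest first).

    Assumption: the weight of a sample decreases with its age, so the
    newest sample has weight 1 and older ones decay as a Gaussian.
    """
    n = len(samples)
    weights = [math.exp(-((n - 1 - i) ** 2) / (2.0 * sigma ** 2)) for i in range(n)]
    return sum(w * s for w, s in zip(weights, samples)) / sum(weights)
```

A single spurious reading never changes the accepted state, and a sudden RSSI jump moves the smoothed value only part of the way toward the new reading.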

4.2. Elliptic Trilateration

One of the difficulties in implementing a trilateration method is that most indoor RFID antennas are directional. Therefore, the wave propagation does not follow the circle used in classical trilateration. After a rigorous series of experiments, we found that the loss of signal is higher when the object moves away from the side of the antenna than when it moves perpendicularly. We also found that the wave fronts emitted by the antenna look more like ellipses. Therefore, we decided to use ellipses instead of circles. An ellipse is characterized by two independent axes. The equation of the ellipse is given by

Based on the concept that the signal strength decreases quadratically with distance, we decided to use polynomial regression (degree 2). We determined (2) and (3) that respectively return the distance of the major axis (

The next step would simply consist in finding the intersection point of at least three ellipses (or two, if they are on the same wall). However, in a realistic context we almost never find a single intersection point. To address this issue, we use a classical method that relies on the area common to the ellipses. If the ellipses do not intersect, or if the common area is too big, they are automatically adjusted in inverse proportion to the strength of the signal (the stronger the signal, the more accurate the ellipse, and the less we modify it). Otherwise, the resulting position is the center of the common area. Figure 2 illustrates the real-time localization of multiple objects from four antennas in our smart home kitchen with this method.

Figure 2: Real-time positioning of multiple objects. The gray ellipses are calculated from the RSSI and the dotted ellipses are corrected by the positioning algorithm. The triangle is the real position of the object.
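The common-area step can be illustrated with a short sketch. It rests on several assumptions that are not taken from the paper: ellipses are axis-aligned and centered on the antenna position, the regression coefficients are placeholders, the common area is approximated by sampling a regular grid, and the automatic adjustment of the ellipses is left out (the function simply returns `None` when there is no common area).

```python
def axis_length(rssi, coeffs):
    """Degree-2 polynomial mapping an RSSI value to a semi-axis length.

    `coeffs` = (a, b, c) are hypothetical regression coefficients.
    """
    a, b, c = coeffs
    return a * rssi ** 2 + b * rssi + c


def inside(ellipse, x, y):
    """True if (x, y) lies in the axis-aligned ellipse (cx, cy, semi_x, semi_y)."""
    cx, cy, semi_x, semi_y = ellipse
    return ((x - cx) / semi_x) ** 2 + ((y - cy) / semi_y) ** 2 <= 1.0


def common_area_center(ellipses, bounds, step=0.05):
    """Center of the area shared by all ellipses, estimated on a grid.

    `bounds` = (x_min, y_min, x_max, y_max). Returns None when no grid
    point belongs to every ellipse.
    """
    x_min, y_min, x_max, y_max = bounds
    nx = int(round((x_max - x_min) / step))
    ny = int(round((y_max - y_min) / step))
    sum_x = sum_y = 0.0
    count = 0
    for i in range(nx + 1):
        for j in range(ny + 1):
            x, y = x_min + i * step, y_min + j * step
            if all(inside(e, x, y) for e in ellipses):
                sum_x += x
                sum_y += y
                count += 1
    if count == 0:
        return None
    return sum_x / count, sum_y / count
```

A grid is used here only because it keeps the example short; an analytical intersection or a finer step would be needed for the accuracy discussed in this section.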

4.3. Steering Behavior

Once localization is done, the jerky movement of an object in motion is another problem we faced. Although this is a minor problem for simple real-time localization, it can cause errors in HAR. For example, a step of an activity could be to move an object from point A to point B, and this variation could prevent us from correctly identifying the trajectory. In addition, it often makes it very difficult to distinguish between a moving and a stationary object. The steering behavior we present makes objects move in a physically plausible way (speed, acceleration, and mass). The algorithm takes into account the maximum speed at which the object is expected to move, as well as its acceleration and deceleration, so that the direction of movement stabilizes over time. Specifically, this filter is based on three force vectors: the speed, the direction, and the desired speed. Using these vectors, we can calculate a realistic path, as shown in Figure 3.

Figure 3: The steering behavior and the three forces involved.

In Algorithm 1, the forces are calculated as follows. The current velocity is calculated from the distance between the positions given by trilateration at two successive iterations. The target position is the last position given by trilateration. There are, however, two constants in the process that must be determined. The first is the maximum velocity of the tracked object. It can be set simply by estimating the normal maximum speed at which a human moves an object during ADLs. The second is the size of the arrival radius, which determines where the object should begin to slow down. We suggest using the average error of the localization method as the deceleration zone. With these parameters, the arrival behavior stabilizes the position of a static object. In conclusion, the steering behavior creates a coherent trajectory rather than simply teleporting the object from one point to another between two readings.
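As an illustration of this arrival behavior, the sketch below performs one update of a generic steering filter in two dimensions. It is not Algorithm 1 itself: the function name, the acceleration limit `max_accel`, the time step `dt`, and the linear slow-down inside the arrival radius are assumptions chosen for the example.

```python
import math


def steer_towards(position, velocity, target, max_speed, arrival_radius,
                  max_accel, dt):
    """One update of an arrival steering behavior.

    position, velocity, target: (x, y) tuples. Returns (new_position,
    new_velocity). All parameter names are hypothetical.
    """
    dx, dy = target[0] - position[0], target[1] - position[1]
    distance = math.hypot(dx, dy)
    if distance < 1e-9:
        return position, (0.0, 0.0)
    # Desired velocity: full speed far from the target, slowing down
    # linearly once inside the arrival radius.
    speed = max_speed * min(1.0, distance / arrival_radius)
    desired = (dx / distance * speed, dy / distance * speed)
    # Steering = desired velocity minus current velocity, with the
    # change per update limited by the maximum acceleration.
    sx, sy = desired[0] - velocity[0], desired[1] - velocity[1]
    norm = math.hypot(sx, sy)
    limit = max_accel * dt
    if norm > limit:
        sx, sy = sx / norm * limit, sy / norm * limit
    new_velocity = (velocity[0] + sx, velocity[1] + sy)
    new_position = (position[0] + new_velocity[0] * dt,
                    position[1] + new_velocity[1] * dt)
    return new_position, new_velocity
```

Called once per reading with the latest trilaterated position as `target`, the displayed object accelerates, travels at a bounded speed, and settles on the target instead of jumping to it.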

4.4. Spatial Modeling

The RFID agent uses a data model that supports the construction of the activity library. In our model, each object is located over time on a grid representing the area covered by the smart home. Each object has a specific size, is associated with 1–4 RFID tags, has a type (e.g., cup, plate, etc.), and has a shape (circular or rectangular). Objects are positioned directly at their last-known position. In addition, fixed elements (e.g., oven, table, etc.) are modeled according to their precise shape and position. The topological relations between these entities are one of the bases of the reasoning processes of the intelligent agents for recognition and assistance. In fact, the goal of the algorithm is to observe the relationships between objects in the smart home and to track them over time. These topological relationships are taken from the well-known framework of Egenhofer and Franzosa [44], which is based on whether the set intersections of the boundaries and interiors of two entities are empty. The framework defines the possible relationships between two entities.

Topological relationships between physical entities A and B.
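As an illustration of how such relationships might be computed for the circular objects of the model, the following sketch classifies two discs into the eight relations commonly associated with this framework. It is an assumption-laden simplification: the function name `circle_relation`, the tuple layout `(x, y, r)`, and the tolerance `eps` are hypothetical, and rectangular shapes are not handled.

```python
import math

def circle_relation(a, b, eps=1e-6):
    """Classify the topological relation of disc a with respect to disc b.

    a and b are (x, y, radius) tuples; eps is a hypothetical tolerance that a
    real system would set according to the localization error.
    """
    (ax, ay, ar), (bx, by, br) = a, b
    d = math.hypot(bx - ax, by - ay)
    if d <= eps and abs(ar - br) <= eps:
        return "equal"
    if d > ar + br + eps:
        return "disjoint"            # no common point
    if abs(d - (ar + br)) <= eps:
        return "meet"                # boundaries touch, interiors do not
    if abs(d - abs(ar - br)) <= eps:
        # One disc lies within the other and their boundaries touch.
        return "covers" if ar > br else "coveredBy"
    if d < abs(ar - br):
        return "contains" if ar > br else "inside"
    return "overlap"                 # interiors intersect partially

# Example: a cup (radius 4) placed well within a round tray (radius 20).
relation = circle_relation((52.0, 50.0, 4.0), (50.0, 50.0, 20.0))
```

With noisy RFID positions, the exact-contact relations (meet, covers, coveredBy) would rarely be observed unless `eps` is widened, so a practical system might merge them with their neighbors.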

5. Home Appliances Identification

To perform electrical action recognition, we use an algorithm that detects when each appliance in the house is turned on and off. This part of our system is based on the algorithm described in our previous work [42]. In summary, the key is to detect the load signature of an appliance when it is in operation. Typically, the variables considered are the voltage, the current, and the power. In this way, each appliance is represented by its own waveform of power consumption over time. With this procedure, the electrical signal identification agent associates a detected event with an appliance based on its load signature.

5.1. Formal Definitions of Loading Capabilities

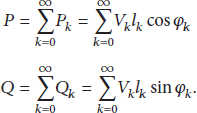

Each appliance has specific operating characteristics. In our case, we focused on two main features related to power consumption: we studied the active and reactive power of each appliance used within the house. Here are the formulas of the active power (P), expressed in watts, and the reactive power (Q), expressed in VAR:
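For a sinusoidal single-phase load, the standard textbook definitions are given below. They are stated here as an assumption about the intended form, with $V_{\mathrm{rms}}$ and $I_{\mathrm{rms}}$ the RMS voltage and current and $\varphi$ the phase angle between them (symbols chosen for illustration):

$$P = V_{\mathrm{rms}}\, I_{\mathrm{rms}} \cos\varphi, \qquad Q = V_{\mathrm{rms}}\, I_{\mathrm{rms}} \sin\varphi$$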

5.2. Identifying Device Status

An algorithm has been implemented whose main purpose is to detect when appliances are turned on and off, so that scenarios can later be added and recognized effectively and the patient can be guided to complete what he began. The algorithm studies load signatures in a three-dimensional space, and a representative load signature database has been established for this purpose. The system is based on the algorithm described in Belley et al. [17], which we modified in order to apply it to assistance. The first part of the system to be developed is the database, which is fundamental for activity recognition; the quality of the assistance offered is closely linked to the efficiency of this recognition.

5.3. Extraction of Load Signatures

To build a data sheet for household devices, we used a nonintrusive intelligent module that measures only the RMS values of active and reactive power in (4) (the number of samples per second depends on the frequency: 50 Hz or 60 Hz). Consequently, obtaining a complete power consumption waveform for each appliance was ruled out because of the limited number of measurement samples provided by the smart modular power analyzer used in the smart home prototype. Although the complete waveform cannot be analyzed, it is still possible to base our reasoning on the variations of active power (P) and reactive power (Q). The algorithm that describes the load signature of each appliance is therefore based on the following features:

the active and reactive power variations during an on/off event; the number of the line-to-neutral that supplies the appliance.

To create the database containing the features of each household appliance used within the smart home, an algorithm was used to extract the load signature. Therefore, this algorithm (see Algorithm 2) reads an instantaneous measurement of the active (P) and reactive (Q) power on each line-to-neutral of three-phase lines at time

There is an appliance switched off.
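The extraction step can be illustrated with the sketch below, which compares two consecutive readings and reports the line-to-neutral with the largest power variation. It is not Algorithm 2 itself: the names `Reading` and `extract_event`, the use of |ΔP| + |ΔQ| as the magnitude, and the 15-unit noise threshold are assumptions made for the example.

```python
from typing import Dict, Optional, Tuple

# Hypothetical layout: line-to-neutral number -> (P in W, Q in VAR)
Reading = Dict[int, Tuple[float, float]]

def extract_event(prev: Reading, curr: Reading,
                  threshold: float = 15.0) -> Optional[Tuple[int, float, float]]:
    """Return (line, dP, dQ) for the line with the largest variation.

    Returns None when no line changes by at least `threshold`, which is an
    assumed noise floor and not a value from the paper.
    """
    best = None
    for line in curr:
        dp = curr[line][0] - prev[line][0]
        dq = curr[line][1] - prev[line][1]
        magnitude = abs(dp) + abs(dq)
        if magnitude >= threshold and (best is None or magnitude > best[0]):
            best = (magnitude, line, dp, dq)
    return None if best is None else best[1:]

def event_kind(dp: float) -> str:
    """A rise in active power is read as ON, a drop as OFF."""
    return "ON" if dp > 0 else "OFF"

# Example with made-up readings on a three-phase supply.
before = {1: (100.0, 10.0), 2: (50.0, 5.0), 3: (0.0, 0.0)}
after = {1: (100.0, 10.0), 2: (1250.0, 35.0), 3: (0.0, 0.0)}
event = extract_event(before, after)
```

Storing the extracted tuple for each appliance during a supervised session would yield the kind of signature database described in this section.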

5.4. Device State Recognition

The resulting database allows the identification of the appliances in operation. Algorithm 3 continuously reads the data from the power analyzer at the main electrical panel of the smart home to track the variation of P and Q on each line-to-neutral (three-phase system). Accordingly, when an appliance is used within the smart home, the algorithm detects it by the maximum variation of active and reactive power as well as by the number of the line-to-neutral where these changes are observed. A range of power variations (P and Q) is set for each appliance in the database. When the difference detected between two consecutive readings can be associated with at least one appliance in the database, the algorithm adds it to the monitoring report with the times of the “ON” and “OFF” events and the names of the possible appliances in operation. Generally, only one appliance is recorded for a given time, but in the case of the stove burners, two appliances with similar features may be considered. In certain cases, however, appliances are simply misidentified. This kind of approach has considerable advantages. It uses nonintrusive equipment (i.e., a power analyzer at the electrical panel). Indeed, contrary to other systems that require the installation of many sensors [45–47], which is not necessarily convenient for end users, this NIALM system measures the electric current and voltage at the input of the main electrical panel, which limits the number of sensors to monitor in the smart home.
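The range-based lookup described above might look like the following sketch. The `Signature` record, the function `match_appliances`, and every numeric range are hypothetical and serve only to show how one event can map to zero, one, or several candidate appliances.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass(frozen=True)
class Signature:
    name: str
    line: int                       # line-to-neutral supplying the appliance
    dp_range: Tuple[float, float]   # accepted ON variation of P, in W
    dq_range: Tuple[float, float]   # accepted ON variation of Q, in VAR

def match_appliances(db: List[Signature], line: int,
                     dp: float, dq: float) -> List[str]:
    """Return the names of all appliances whose ranges contain the event.

    An OFF event is assumed to mirror the ON event, so both variations are
    sign-flipped when P drops.
    """
    sign = 1.0 if dp >= 0 else -1.0
    p, q = dp * sign, dq * sign
    return [s.name for s in db
            if s.line == line
            and s.dp_range[0] <= p <= s.dp_range[1]
            and s.dq_range[0] <= q <= s.dq_range[1]]

# Made-up database: two stove burners have overlapping ranges.
db = [
    Signature("kettle", 1, (1400.0, 1600.0), (-20.0, 20.0)),
    Signature("burner_small", 2, (1100.0, 1300.0), (0.0, 50.0)),
    Signature("burner_large", 2, (1200.0, 1500.0), (0.0, 50.0)),
]
candidates = match_appliances(db, 2, 1250.0, 10.0)
```

Returning a list rather than a single name keeps the ambiguity between similar appliances visible, so a later reasoning stage could resolve it with other evidence such as object positions.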


6. Activity Recognition

It is essential to build a robust computational intelligence system that is as scalable as possible, because it is sometimes difficult to adjust or modify the system. As presented in Figure 5, to increase the scalability of our system, we clearly separated the identification of actions from the recognition of activities. An agent is responsible for recognizing actions that have been learned: when the various relationships that constitute an action are recognized at the same instant, the action is detected. Specifically, each action in our system is modeled as a set of topological relationships, which are provided by the RFID agent, or object states (on/off), which are provided by the electrical analysis agent.

Activity recognition model based on Bayesian network.

An action recognition agent identifies the basic actions performed by the resident during his activities. In our system, to allow an agent to identify the action “put water on to boil,” its structure is described by a set of conditions (e.g., the boiling pan must be on the oven when the burner is started). A condition is thus a spatial relationship between two objects or an electrical state of an appliance. From this, we can infer the action from the facts that describe the environment at a precise moment. Finally, an action starts when all its conditions are detected and ends as soon as one of them no longer holds.
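The start and end rule for an action can be sketched as a subset test over the facts observed at each instant. The fact encoding (tuples such as `("on", "pan", "burner")`) and the names `BOIL_WATER` and `track_action` are illustrative assumptions, not the representation used by the agents.

```python
from typing import FrozenSet, List, Set, Tuple

Fact = Tuple[str, ...]

# Hypothetical conditions for "put water on to boil": one spatial
# relationship and one electrical state.
BOIL_WATER: FrozenSet[Fact] = frozenset({
    ("on", "pan", "burner"),
    ("state", "burner", "on"),
})

def track_action(conditions: FrozenSet[Fact],
                 snapshots: List[Set[Fact]]) -> List[Tuple[int, int]]:
    """Return (start, end) snapshot indices during which the action holds.

    The action is active exactly while every condition is among the facts;
    `end` is the first index at which a condition is missing.
    """
    intervals = []
    start = None
    for i, facts in enumerate(snapshots):
        active = conditions <= facts
        if active and start is None:
            start = i
        elif not active and start is not None:
            intervals.append((start, i))
            start = None
    if start is not None:
        intervals.append((start, len(snapshots)))
    return intervals

# Example: the pan is placed, the burner is switched on, then the pan is removed.
snapshots = [
    {("on", "pan", "burner")},
    {("on", "pan", "burner"), ("state", "burner", "on")},
    {("on", "pan", "burner"), ("state", "burner", "on")},
    {("state", "burner", "on")},
]
intervals = track_action(BOIL_WATER, snapshots)
```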

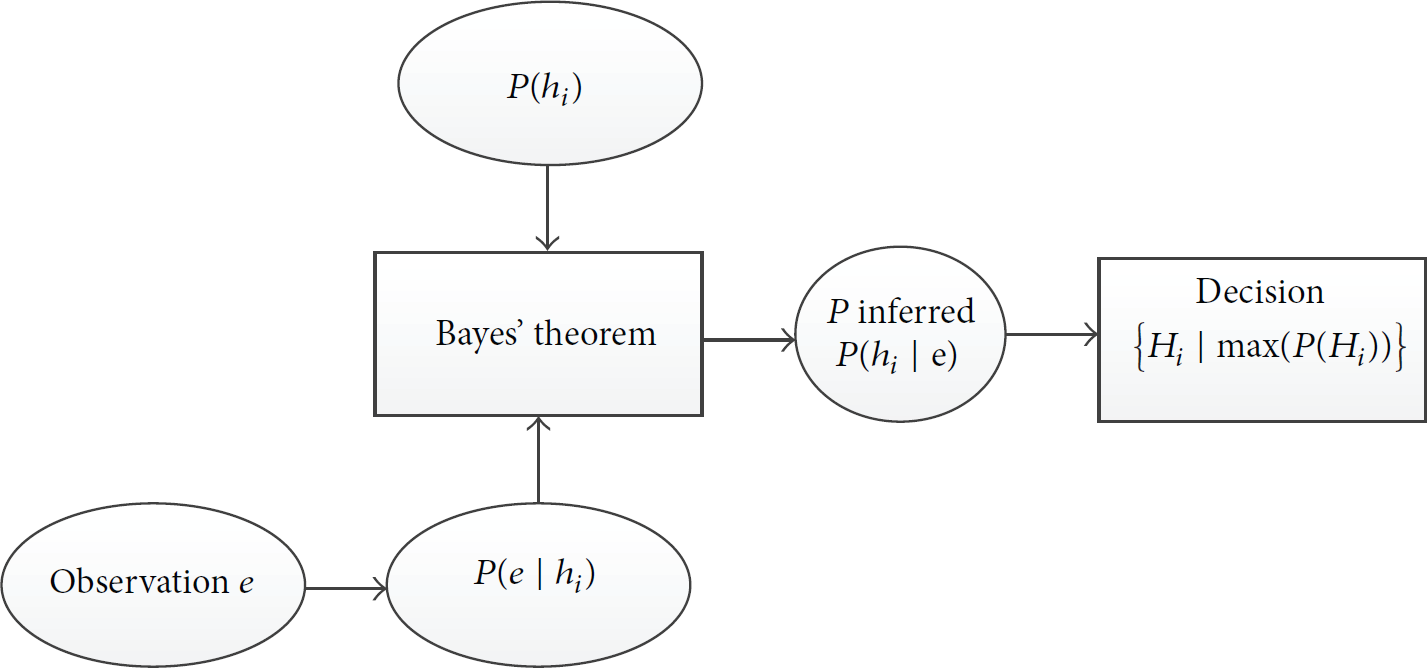

An activity recognition agent interprets the behavior of the resident from the actions, assuming they were correctly identified. More precisely, this agent deduces the most plausible activities from the results of a Bayesian recognition process, represented by a set of possible activities associated with likelihoods. As we can see in Figure 6, the Bayesian decision process takes as input one or more observations e. The observations correspond to nonroot nodes of the network.

Bayesian recognition process.
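To make the inference step concrete, the sketch below ranks activities from observed actions with a naive Bayes simplification, in which observations are treated as conditionally independent given the activity. This is a stand-in for illustration and not the network of Figure 6; the function `posterior`, the `default` likelihood for unlisted actions, and all probabilities are assumptions.

```python
from typing import Dict, List

def posterior(priors: Dict[str, float],
              likelihoods: Dict[str, Dict[str, float]],
              observations: List[str],
              default: float = 0.01) -> Dict[str, float]:
    """Return P(activity | observations) under a naive Bayes assumption.

    likelihoods[a][e] is P(e | a); actions not listed for an activity get
    the small `default` probability instead of zero.
    """
    scores = {}
    for activity, prior in priors.items():
        score = prior
        for obs in observations:
            score *= likelihoods[activity].get(obs, default)
        scores[activity] = score
    total = sum(scores.values())
    return {a: s / total for a, s in scores.items()}

# Made-up model: both activities start by boiling water.
priors = {"MakeCoffee": 0.5, "MakeTea": 0.5}
likelihoods = {
    "MakeCoffee": {"BoilWater": 0.9, "TakeCoffeeJar": 0.8},
    "MakeTea": {"BoilWater": 0.9, "TakeTeaBag": 0.8},
}
after_one = posterior(priors, likelihoods, ["BoilWater"])
after_two = posterior(priors, likelihoods, ["BoilWater", "TakeCoffeeJar"])
```

In this example the first observation leaves the two activities tied, and the second one separates them, which mirrors how shared steps delay a confident decision.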

7. Assistance

After the ongoing activity is detected with a high success rate, an assistive agent analyzes the actions in real time and intervenes in case of erratic behavior. When an anomaly occurs, the assistive agent uses effectors (screen, speaker, etc.) to provide support appropriate to the kind of error (step omission, step inversion, perseveration, temporal constraint, and cognitive overload). An algorithm (see Algorithm 4) determines the errors that can occur in the scenario and gives advice to help the patient perform a specific activity sequence.

7.1. Errors Detection

The first part of this assistive algorithm detects errors and anomalies in a sequence of steps (actions) performed by a person with cognitive impairment, based on an activity definition. Each scenario (activity) was defined by a human expert, but we believe that the library could be built automatically. To define the categories of errors that are often observed, we surveyed the neuropsychology literature on cognitively impaired patients and found a well-established cognitive test named the Naturalistic Action Test (NAT) [4]. From it, we chose to focus on five errors that can easily be detected with the developed method: step omission, step inversion, perseveration, temporal constraint, and cognitive overload.

A step omission occurs when a person forgets an essential step of an activity during its execution. For instance, in the preparation of spaghetti, if the patient never adds water to the boiling pan, it is considered an omission. Moreover, an error of abandonment or renunciation occurs when the duration of a step is too short. This can be considered an error of omission in the sense that the step was not accomplished. For example, the resident may leave the pan on the oven for too short a time for the water to boil. Consequently, an omission is observed when a patient skips one or several steps of a scenario described by a sequence of tasks in which some tasks require the completion of another before they can properly start.

An inversion of steps occurs when two or more steps are not performed in the right order. For example, when preparing a coffee, the resident could start by putting milk and sugar in the cup. An omission can also lead to an inversion if the resident corrects his mistake by himself or following the prompt sent by the system. In every case, an inversion is observed when the tasks are not performed in the appropriate order.

An error of perseveration occurs when a patient persists on the same step of an activity for an excessive time. For example, if the time taken to boil water greatly exceeds the usual average time, this is considered a perseveration error.

A temporal error occurs when, for some reason, the resident is not making any progress in the ADL or when the time elapsed since the last step is excessive in comparison to the temporal constraint. It is therefore essential to estimate, for each patient, the maximum acceptable time between two actions according to the speed at which he usually performs each task.

Finally, the fifth type of error is cognitive overload, which occurs when a patient tries to perform too many tasks at the same time; this can cause distraction and predispose the person to make errors. Thus, the number of simultaneous operations should be restricted for people suffering from cognitive impairment.
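The five error types can be expressed as simple checks over a timed sequence of observed steps. The sketch below is a hedged illustration and not Algorithm 4: the `Step` record, the `detect_errors` function, the duration bounds, and the parameters `max_gap` and `max_parallel` are all assumptions, and each step is assumed to be observed at most once.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass(frozen=True)
class Step:
    name: str
    min_s: float   # shorter than this counts as an abandoned (omitted) step
    max_s: float   # longer than this counts as perseveration

Observed = Tuple[str, float, float]   # (step name, start, end) in seconds

def detect_errors(plan: List[Step], observed: List[Observed],
                  max_gap: float, max_parallel: int = 1) -> List[Tuple[str, str]]:
    """Return (error type, step name) pairs found in one execution."""
    errors = []
    order = {s.name: i for i, s in enumerate(plan)}
    seen = {name: (start, end) for name, start, end in observed}
    for step in plan:
        if step.name not in seen:
            errors.append(("omission", step.name))
            continue
        start, end = seen[step.name]
        if end - start < step.min_s:
            errors.append(("omission", step.name))       # abandoned too early
        elif end - start > step.max_s:
            errors.append(("perseveration", step.name))
    timeline = sorted((o for o in observed if o[0] in order), key=lambda o: o[1])
    for prev, nxt in zip(timeline, timeline[1:]):
        if order[nxt[0]] < order[prev[0]]:
            errors.append(("inversion", nxt[0]))         # done after a later step
        if nxt[1] - prev[2] > max_gap:
            errors.append(("temporal", nxt[0]))          # no progress for too long
    for name, start, _ in timeline:
        running = sum(1 for _, s, e in timeline if s <= start < e)
        if running > max_parallel:
            errors.append(("overload", name))            # too many steps at once
    return errors

# Made-up spaghetti scenario with durations in seconds.
plan = [Step("fill_pan", 5, 60), Step("boil_water", 240, 600),
        Step("add_pasta", 300, 900)]
run = [("boil_water", 0, 100), ("fill_pan", 110, 130)]
found = detect_errors(plan, run, max_gap=120)
```

In a deployed system the bounds would presumably be calibrated per patient, in line with the remark above that acceptable delays depend on the usual speed of execution.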

7.2. Providing Guidance

The main objective of our work is to provide real-time support services for semiautonomous persons in smart environments in order to automate and replace caregiver services. Therefore, after detecting an erratic behavior, the agent must provide appropriate assistance. When an error occurs, the assistive agent guides the patient in the completion of his routine through instructions (prompts) delivered by the multimedia devices that equip the smart environment for visual and auditory communication. These devices do not disturb the person, because they are usually common devices that can be used for activities other than assistance, contrary to the sensors, which are introduced only to continuously monitor the behavior and activities of the patient. Finally, the algorithm evaluates the message to send and finds the most appropriate way [50] to communicate with the patient living in the house, using an interactive display for less critical problems or an audio message for more critical errors.

Once an activity is recognized by the recognition agent, it is handed over to the support agent. The global objective of this agent is to detect errors in the realization of ADLs. To develop our method, we first examined the neuropsychology literature to find the mistakes commonly associated with cognitive disorders. From the different errors listed in the Naturalistic Action Test (NAT) [27], we selected the most frequent ones. An omission occurs when, for some reason, the resident forgets to perform a step of the current ADL. An inversion occurs when two or more steps are not performed in the correct order. An omission may lead to an inversion if the resident corrects the mistake (if possible), ideally following the prompt sent by the system. An initiation (inactivity) problem occurs when, for some reason, the resident does not make progress in the ADL. It is detected by checking whether, some time after the completion of a step, all the objects are at rest, so that there is no change in the set of spatial relationships. A perseveration problem occurs when the resident performs a task or part of a task repeatedly for some time. This error is easy for us to detect because the step is repeated over time. Our support system will be detailed in future work.

8. Experimentation

For this research, we conducted different sets of experiments at our laboratory. First, we discuss the efficacy of the RFID system and the electrical load analysis in Section 8.1; larger experiments have been published in [16, 17]. Second, we describe in Section 8.2 the experiments conducted to test our ADL recognition method. Third, we describe the experiments conducted on our assistance system.

The tests described in Section 8.2 aimed to demonstrate the efficacy of the combined recognition method. To validate our new hybrid recognition and assistance model, all experiments were conducted in the modern infrastructure of the LIARA smart home (see Figure 7). Our prototype apartment uses more than a hundred sensors, hidden as much as possible to keep the environment similar to a real apartment. Among the sensors are RFID technology (antennas and tags) and a modular power analyzer, but also electromagnetic sensors, accelerometers, force sensors, ultrasonic sensors, and many more. We have integrated all these technologies for prototyping and developing algorithms. Many effectors are also strategically placed around the smart home to quickly provide our support services to the resident when needed. For example, there are screens to show orientation videos, and IP speakers are installed in all corners. Specifically, for the RFID agent, two series of experiments were conducted at the same time.

The LIARA's smart home.

The experimental space was the kitchen, where four RFID A-PATCH 0025 antennas are installed on the walls. These antennas are circularly polarized for better indoor coverage. They were originally placed there to implement a proximity-based localization algorithm, which we still often use for quick demonstrations, and they are positioned so that trilateration can be achieved. Also, given the positioning of the antennas, the presence of a human does not significantly influence the efficiency of the system. A human usually affects readings when standing between tags and antennas, but with this setup, it is rare that more than one antenna is affected at the same time. For the analysis of electrical signatures, we set up a system in the laboratory that monitors power consumption at a single point, the main electrical panel of the laboratory at the university, which lowers installation and maintenance costs. Specifically, an intelligent modular power analyzer (model WM30 96) from Carlo Gavazzi was installed. The kitchen was also the ideal choice for our tests: most cognitive tests concern kitchen activities, and the most difficult tasks of a normal day are usually cooking ADLs. Finally, during the experiments, we assumed that the person performing the task was located in the kitchen. We do not need the precise position of the person's body or hands, because we base the reasoning on the position and movement of objects. Therefore, human subjects did not need to wear any bracelet, which is an important advantage of our system.

8.1. Experiments on RFID and Electrical Load

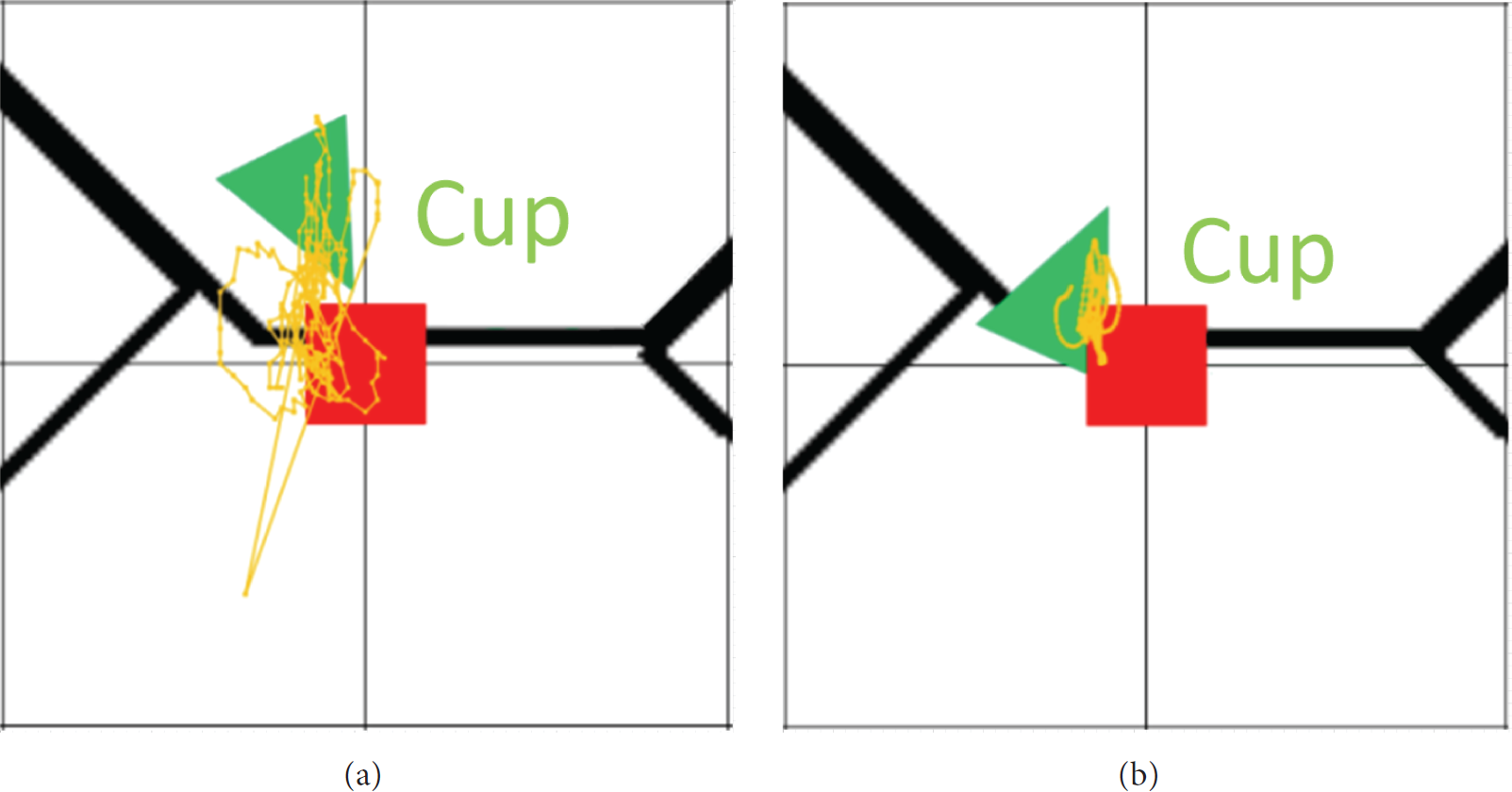

In our previous works [16, 17], the RFID positioning and the electrical signature analysis were tested for robustness. Our RFID positioning algorithm obtained excellent results, with an average accuracy of ±11.64 cm. In addition, with the use of the steering behavior, trajectory and stability improved significantly, as shown in Figure 8.

The image on the left is obtained without the movement behavior and the right image is obtained by using it.

Moreover, the steering behavior is particularly useful for detecting whether an object is active or inactive. False movements are greatly reduced, which makes it easier to detect whether an object is moving. We also conducted extensive experiments on the analysis of electrical signals and obtained a detection rate of approximately 98.3% for on/off events.

8.2. Experiments on Activity Recognition

To challenge our new activity recognition system and test its effectiveness, we established an experimental protocol that faithfully represents real smart environments. We wanted to cover a wide variety of scenarios related to cooking activities and to develop a detailed experimental protocol in order to obtain significant results. First, we tested the effectiveness of our hybrid system in recognizing the steps of the activities. A human subject performed 125 tests on five distinct kitchen ADL scenarios (MakeCoffee, MakeTea, MakeSpaghetti, PrepareToast, and PrepareHotChocolate), each scenario being performed 25 times. The algorithm correctly identified the activities in detail 96.9% of the time (recognition rate). As presented in Figure 9, the errors of our hybrid recognition system are mainly caused by the inaccuracy of the RFID sensors, whose readings sometimes fluctuate. At certain moments, topological relationships are not correctly identified, which causes recognition errors. Although these results are impressive, it must be kept in mind that the activities were simulated by people without any cognitive impairment.

Step recognition rates.

Second, we tested the effectiveness of the dynamic probabilities of our system. We selected two ADLs (MakeCoffee and MakeTea) that share common steps. We performed each scenario several times, and over time the system became better at identifying the ongoing activity corresponding to the profile of the resident. Admittedly, the experiments were controlled, but we are confident that the results would be equally impressive in a real context. In the near future, we plan to test the system with patients with cognitive impairment in order to place it in a more realistic context.
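One plausible way to obtain such profile-dependent probabilities, shown here purely as an assumption since the update rule is not detailed in this section, is to re-estimate the prior of each activity from the history of activities already recognized for the resident. The function name `updated_priors` and the `smoothing` parameter are hypothetical.

```python
from collections import Counter
from typing import Dict, List

def updated_priors(history: List[str], activities: List[str],
                   smoothing: float = 1.0) -> Dict[str, float]:
    """Estimate activity priors from past recognitions.

    Additive smoothing keeps a nonzero prior for activities that the
    resident has not performed yet. Every entry of `history` is assumed
    to be one of `activities`.
    """
    counts = Counter(history)
    total = len(history) + smoothing * len(activities)
    return {a: (counts[a] + smoothing) / total for a in activities}

# Example: a resident who prepared coffee 8 times and tea twice.
history = ["MakeCoffee"] * 8 + ["MakeTea"] * 2
priors = updated_priors(history, ["MakeCoffee", "MakeTea"])
```

With such priors, two activities that share their first steps would no longer be tied at the start of an execution: the habitual one would be favored until a distinguishing action is observed.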

Third, to confirm the contribution of our hybrid system (combining RFID and electrical sensors), we performed additional experiments. We conducted several common scenarios, simulated 20 times each, and compared the systems. For example, we completed the preparation of a coffee using only RFID technology. We then repeated the same scenario using only the electrical analysis and, finally, using the hybrid recognition system. We proceeded in the same way to compare the efficiency of the hybrid system on other scenarios (e.g., preparing toast, morning routine, etc.). Figure 10 shows the recognition rates of each system in two specific scenarios, which clearly show a gain with the hybrid system.

Comparison of single sensor system versus hybrid.

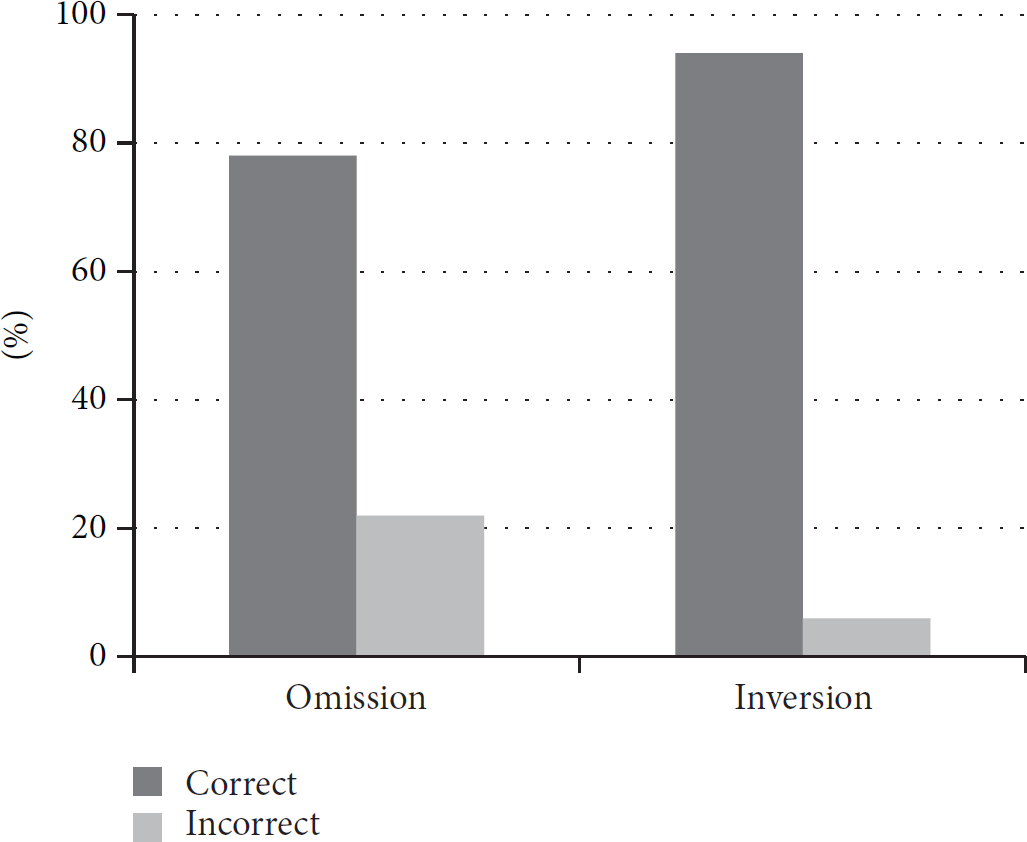

8.2.1. Experiments with Erroneous Executions

We also conducted other experiments to assess the reaction of the recognition system during an erroneous execution. We used the same scenarios as in the previous phase but introduced typical cognitive errors during the execution. For these experiments, we carried out 50 erroneous executions (omission and inversion) and analyzed which scenario was the most likely. Figure 11 shows the results obtained.

Efficacy of the recognition system during an erroneous execution.

Because of the introduced errors, the system was not always able to identify the current activity without doubt. However, with the probabilities provided by the Bayesian network, it was often (86%) possible to correctly identify the most likely activity as the current one. These results are quite surprising. Admittedly, the scenarios were performed by human subjects without cognitive disorders, but the introduced errors were not determined in advance.

8.2.2. Comparison with Other Technologies

To compare different technologies, we analyzed various classical activity steps. We examined several common activities from the literature to obtain a representative overview of usual steps. Then, according to the literature and our own experiments, we identified the limitations of each family of recognition systems based on a particular technology. Table 1 shows the comparative analysis. It is interesting to note that our hybrid recognition system was able to identify the majority of the steps of an activity. However, our system still has limitations in the recognition of specific events that are not based on electrical activity or on object movements. Nevertheless, our system easily recognizes a wide variety of scenarios compared to other technologies.

Comparison of different technologies of recognition with typical activity steps.

8.3. Experiments on Assistance

In addition, we conducted other experiments to test and assess the efficiency of our assistance and guidance. We used the same real scenarios as in the previous phase. This time, we especially wanted to test the step-by-step identification and the error detection. These ADLs were again simulated fifteen times each by a human subject in our smart environment, but during the execution, the subject introduced typical cognitive errors that had been previously defined. Each erroneous scenario was tested using different systems (RFID only, load analysis only, and the combined system of the two technologies). A total of 75 erroneous executions were performed, and our new combined system correctly identified almost all errors. However, due to the inaccuracy of the sensors, the system had difficulty accurately detecting the duration of each action and, by extension, the errors related to the temporal aspect. Figure 12 shows the detection rate of each system for the five types of errors, which clearly shows the gains of the hybrid system.

Comparison of the hybrid sensor system.

9. Conclusion and Future Works

In this paper, we described our recent progress towards the development of a real-time assistance system based on passive RFID technology and electrical device identification. We showed that the use of multiple sensors can significantly increase the accuracy of activity recognition, which is useful for assistance. Moreover, this new Bayesian model has several advantages for smart home assistance because it does not rely on expensive, invasive, or difficult-to-deploy technologies. Also, our new algorithm performs step-by-step assistance for fine-grained ADLs, enabling it to detect at least five types of errors. In addition, our model separates each layer of the recognition with a multi-agent approach, so it is easy to add new sensors, modify the algorithms of each agent, and adapt the recognition system.

Despite our interesting results, there are still some limitations that we must work on in the near future. First, we need to test our system on a larger scale, with more scenarios, with a greater number of subjects, and with patients suffering from cognitive disabilities. These additional experiments will allow us to validate the effectiveness of the cognitive error recognition and of the assistance offered by the system. These two elements are essential in a context of assistance for elderly patients. Second, it would be interesting to integrate a learning mechanism, based on recent advances in data mining, which would make it easier to extend the activity library. In addition, we are presently working to improve the accuracy of our RFID model by including filters (Kalman, Monte-Carlo, etc.) for signal processing. We are also working with other technologies in order to integrate them into our multi-sensor model.

Footnotes

Conflict of Interests

The authors declare that there is no conflict of interests regarding the publication of this paper.

Acknowledgments

The authors would like to thank their main financial sponsors: the Natural Sciences and Engineering Research Council of Canada, the Quebec Research Fund on Nature and Technologies, and the Canadian Foundation for Innovation.