Abstract

A sensor-rich mobile video is a new type of video acquired with modern smartphones. During video recording, various kinds of sensor data are also collected from the embedded sensors. Unlike conventional videos acquired with proprietary capturing devices, these videos allow enriched reconstruction of their surrounding environments while remaining easy for users to record. In this paper, we examine the robustness of existing device orientation detection methods by analyzing the motion sensor samples that are publicly available from a sensor-rich mobile video hosting website, and we discuss our observations and the potential problems in computing the device orientation of georeferential mobile videos.

1. Introduction

When you take photos with a mobile phone and view them later, you may be annoyed to find some of them upside down or sideways. Such wrongly oriented photos are common even on recently launched smartphones. You then reluctantly rotate the photos in a photo editing utility, wondering why a modern smartphone equipped with decent microelectromechanical systems (MEMS) sensors fails to detect the right orientation. Correcting the orientation of mobile videos is even more tedious than correcting photos, since the fix requires expensive reencoding.

Knowing the device orientation of a mobile video is vital to the user's viewing experience. Without a proper orientation clue, a user is bothered by incorrectly positioned videos, which are not rare among user-generated mobile videos. Fortunately, recent standardization efforts on HTML5 video support make on-the-fly rotation of mobile videos in a web browser feasible, which may make reencoding unnecessary. Therefore, as long as a mobile video carries its orientation information and is playable in a web browser, changing its orientation in real time costs little. Owing to the widespread use of the MP4 format as a mobile video container, some mobile videos are annotated with a rotation tag in their metadata, and video hosting services attempt to recognize and apply it during playback. The existing metadata tagging (including the Exif-based model) may include “rotation” information when the device orientation was available at the very beginning of the recording. The difficulty, however, is that many videos undergo multiple orientation changes during shooting. Therefore, it is necessary to correctly identify the device orientation and its changes over time during the recording.

Recent advances in smart device technologies enable new types of media presentations. One such type is the sensor-rich mobile video. With the embedded sensors available on modern smart devices, a mobile video can be acquired with a commodity smartphone and accompanied by various raw sensor samples. The appearance of such sensor-embedded mobile videos, however, poses new challenges. For example, the magnetometer is known to be very noisy when any magnet is nearby. Identifying the correct orientation from images or videos is widely known to be a very challenging task if no auxiliary information is available. Even humans can fail to determine the correct orientation from images alone.

The sensor-rich (or georeferential) mobile video service at http://geovid.org [1] aims to collect various pieces of sensing information from the motion, location, position, and environment sensors embedded in a mobile device during video recording, index them for spatiotemporal query support, and synchronize video playback with the sensor data. According to a quantitative analysis of the measured sensor values from the sensor-rich video dataset, however, compass directions computed from raw sensor samples are error-prone and thus lead to incorrect search results [2]. Therefore, extra postprocessing, such as computer-vision-aided augmentation, is required. In this paper, we examine how robustly device orientation can be decided from raw sensor samples alone and discuss our observations on the test dataset.

Wang et al. [2] explored a content-based orientation correction method assisted by a location sensor, that is, a GPS sensor. Before the correction, they first identified the user's location and then searched for popular objects or landmarks near that location. Once a landmark was located in the first video frame in which it appeared, they applied the Kanade-Lucas-Tomasi feature tracking algorithm to it over the following frames. Their method, however, relies heavily on the existence of a location sensor. It is also doubtful whether location information can be provided instantly, and the method may not work in less popular areas.

Several studies have estimated device orientation without relying on embedded sensors. Appia and Narasimha [3] suggest a computer-vision-aided, low-complexity technique to determine the orientation mode of a photo, using the classified category of an image and some intuitive assumptions. For example, they assume that an image boundary with a smoother region and a higher mean intensity is more likely to point towards North. Although this method may work for some still images, no extensive evaluation has been reported. Moreover, there are many commonly known situations where the assumptions do not hold. Ciocca et al. [4] developed image orientation detection based on Local Binary Patterns (LBP). To lower the computational complexity, they relied only on low-level features and showed that their detection accuracy is comparable with that of ordinary human observers.

In this paper, we would like to answer the following three questions.

(1) Which sensors are the key contributors to device orientation decisions? (2) How accurate and reliable are device orientation decisions on mobile devices? (3) Why does device orientation detection fail for some mobile videos during recording?

The organization of this paper is as follows. In Section 2, we introduce the problem of detecting the device orientation from embedded motion sensors and various popularly known orientation computation methodologies used in many existing systems. The section also covers our sensor acquisition methods and necessary post-processing steps. Section 3 describes the observation results of the given computation methodologies and concludes which methodologies and which motion sensors are dominant factors. Using the collected mobile video dataset, we evaluate several performance metrics of the device orientation decisions and discuss their implications. Finally, Section 4 summarizes all our observation results and recommendations on the orientation correction of mobile videos.

2. Materials and Methods

Device orientation describes the physical orientation of a device and is expressed as a three-dimensional (3D) rotational angular vector from a device coordinate system. In this paper, we term the rotation angles yaw

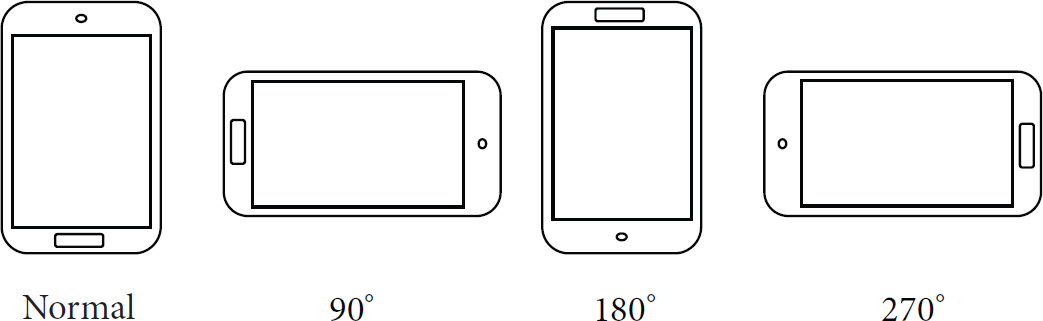

The orientation vector is derived from the underlying sensor samples. We tested several derivation methodologies in our testbed; these are covered in Section 2.3. After obtaining the orientation angles, we employed a straightforward device orientation mode categorization based on the pitch and roll values. As shown in Table 1, a simplified categorization algorithm assigns the given device orientation vector to one of the following: 0°, 90°, 180°, 270°, and undetermined. The device orientation mode is the closest of four representative rotation angles in the given device coordinate frame, measured positive in the clockwise direction. That is, 0° is normal, 90° is the rotation by 90 degrees from the normal mode vertically, 180° is the horizontal mirroring of the normal, and 270° is the reverse of the 90° mode. The “undetermined” mode means that an orientation value does not belong to any of the given degree categories. For example, a phone lying face up on a table cannot be assigned to one of the degree categories, since its orientation mode depends on the user's actual viewing position. Figure 1 illustrates this categorization concept.

A simplified device orientation mode decision from two orientation values of a mobile phone.

Four different device orientation modes of a mobile phone.
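The categorization described above can be sketched in a few lines of Python. Table 1 is not reproduced in this text, so the sketch below is only an illustration under stated assumptions: angles are in degrees, an upright portrait device reports a pitch near −90°, the sign of roll distinguishes the 90° and 270° modes, and a hypothetical `min_tilt_deg` threshold of 45° marks a device lying nearly flat as undetermined. None of these conventions is confirmed by the paper.

```python
def orientation_mode(pitch_deg, roll_deg, min_tilt_deg=45.0):
    """Map (pitch, roll) in degrees to 0, 90, 180, 270, or None (undetermined).

    Assumed conventions (not taken from Table 1):
      - pitch near -90 means the device is held upright in its normal mode,
      - positive roll maps to the 90 degree mode, negative roll to 270,
      - if neither angle reaches min_tilt_deg, the device is treated as
        lying flat and the mode is undetermined.
    """
    if abs(pitch_deg) < min_tilt_deg and abs(roll_deg) < min_tilt_deg:
        return None  # e.g. a phone lying face up on a table
    if abs(pitch_deg) >= abs(roll_deg):
        # The pitch axis dominates: normal or upside down.
        return 0 if pitch_deg < 0 else 180
    # The roll axis dominates: rotated to one side or the other.
    return 90 if roll_deg > 0 else 270
```

With these assumptions, `orientation_mode(-80, 5)` yields the normal mode and `orientation_mode(2, 3)` is undetermined. A decision that flips at a fixed boundary like this is sensitive to a single degree of difference near the boundary.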

2.1. Sensor Data Acquisition

We used the GeoVid web services to collect all georeferential mobile videos and their corresponding metadata through APIs [6]. Since we collaborated with its development team, we could learn the implementation specifics and their unique approaches. The GeoVid system consists of a client-side component and a server-side component. The client-side sensor acquisition module, implemented as a mobile app running on both iOS and Android, records various kinds of sensor data (GPS, compass, WIFI fingerprints, light level, motion sensors, etc.) during video recording. Once recorded, a video is uploaded, along with the collected sensor data, to the video hosting server (the GeoVid server) via the upload API. The server-side software component handles GeoVid API traffic coming from various places. It consists of four classes of API services: search, geovideo-specific services, upload service, and various utility services. Among these, the geovideo-specific services allow users to directly access various georeferenced data. For example, they may retrieve all metadata, or GPS only, compass only, WIFI only, and so forth. The results are encapsulated as either JSON (JavaScript Object Notation) or CSV (Comma Separated Values). Figure 2 shows a sample raw sensor dataset in JSON format.

A screenshot of sample raw sensor dataset collected from GeoVid API. You may access the dataset, using http://api.geovid.org/v1.0/raw/index/3adc2cf85d754117325799bfa68d2a46bfc6a098.

The iOS mobile apps (GeoVid for iPhone and GeoVid HD for iPad) and the Android mobile app (GeoVid Recorder) can record videos and their sensor data. Every time-stamped sensor sample is recorded in a separate file whenever a change is reported during the video recording. That is, the app does not collect sensor samples if they do not change over time; this policy lowers the sampling rate significantly. Since the compass value changes very frequently, we limited its sampling period to 200 milliseconds in earlier releases of the app, avoiding noticeable performance degradation from overly frequent sampling. Later, we shortened the sampling period to 20 milliseconds if the underlying mobile device is capable of measuring the sensors at such a fast rate.

As of July 22, 2013, we extracted 1886 sensor-rich videos via APIs and stored all datasets to our local data storage system for further processing.

2.2. Characteristics of Sensor Datasets and Their Limitation

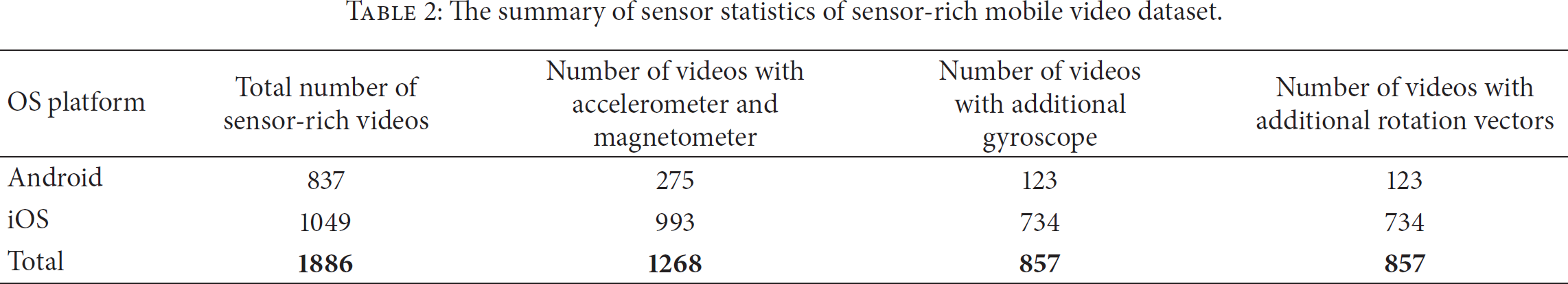

Table 2 summarizes the sensor-related statistics of the mobile videos hosted at GeoVid. We identified a total of 1886 mobile videos: 837 uploaded from Android devices and 1049 from iOS devices. About one-third of the videos (618), however, did not contain any samples from motion sensors such as the accelerometer and the magnetometer. We then further filtered out the videos that had no gyroscope or sensor-fused rotation vector samples.

The summary of sensor statistics of sensor-rich mobile video dataset.

From the table, we found that the rotation vector sensor, a synthesized composite sensor, is always obtainable as long as a gyroscope is embedded in the mobile device. This means that the rotation vector sensor may be unnecessary once the three primary motion sensors (accelerometer, magnetometer, and gyroscope) are all available. We nevertheless use it on purpose in our later experiments, to evaluate how reliable the rotation vector is when used alone, without the primary sensors. If it proved reliable, sensor maintenance for device orientation detection could be simplified to managing a single sensor. On the contrary, it turned out to be very noisy and less usable than the other motion sensors, as detailed in Section 3.1. After filtering, we obtained a total of 857 sensor datasets, 123 from Android and 734 from iOS, and we use them throughout the paper.

Table 3 shows the number of samples in the 857 filtered sensor datasets. Interestingly, relative to the number of filtered videos on each platform, the number of accelerometer samples from iOS is an order of magnitude smaller than that from Android, while the other motion samples from iOS are also fewer but by less than a factor of two. Careful inspection revealed that the iOS acquisition app still records accelerometer samples in the outdated manner (a sampling period of around 200 milliseconds), while the other motion samples are logged in the up-to-date manner (as fast as the device can support). Since the longer sampling period is still tolerable, we use the less frequently sampled dataset in our analysis.

The total number of sensor samples of 857 filtered sensor datasets.

The 123 filtered videos from Android had a total duration of 6 hours, 26 minutes, and 20 seconds (around 3 minutes per video on average); similarly, the 734 videos from iOS had a total duration of 22 hours, 25 minutes, and 6 seconds (about 1 minute and 50 seconds per video).

The collected dataset showed that the individual sensors are sampled independently. Whenever a new value is read from a sensor module, a dedicated thread per sensor type usually calibrates the incoming sample to minimize the effect of noise. Then, the corresponding arrival event is delivered to the mobile system, and any interested user thread can read the calibrated sample. Given this event-driven nature, it is reasonable to treat all incoming sensor acquisition events as asynchronous. In our postprocessing step, we want to compute the device orientation at a single time instant, so we need to align the individual sensor values to common time instants by interpolation. Throughout the rest of this paper, all device orientation computation is executed on such interpolated sensor values. As shown in Table 3, the number of interpolated samples is twice that of the raw samples. Therefore, any performance metric in this paper tends to be overestimated by a factor of two.
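The alignment step can be illustrated with a minimal Python sketch. The paper does not state which interpolation scheme was used, so linear interpolation with clamping at both ends is an assumption here, as are the function names `interpolate_at` and `align`. The sketch is meant for raw vector components (for example, one accelerometer axis); interpolating angles directly would additionally need wraparound handling.

```python
from bisect import bisect_left


def interpolate_at(timestamps, values, t):
    """Linearly interpolate one sensor component at time t.

    timestamps must be strictly increasing; values before the first or
    after the last sample are clamped to the nearest sample (assumption).
    """
    if t <= timestamps[0]:
        return values[0]
    if t >= timestamps[-1]:
        return values[-1]
    i = bisect_left(timestamps, t)      # first index with timestamps[i] >= t
    t0, t1 = timestamps[i - 1], timestamps[i]
    v0, v1 = values[i - 1], values[i]
    w = (t - t0) / (t1 - t0)            # fraction of the way from t0 to t1
    return v0 + w * (v1 - v0)


def align(reference_ts, timestamps, values):
    """Resample one sensor stream onto the reference time instants."""
    return [interpolate_at(timestamps, values, t) for t in reference_ts]
```

Choosing the timestamps of one sensor (for instance, the gyroscope) as `reference_ts` and resampling the other streams onto them gives one complete set of sensor values per time instant.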

2.3. Orientation Vector Derivation Methods

For every time-aligned interpolated sensor sample, we first compute its corresponding orientation vector. In our analysis, we use four different methodologies that have been widely used in existing solutions (see their detailed description in [7]). In this experiment, we deliberately analyze only the dataset collected from Android devices, because Android manufacturers use a variety of cheap and expensive embedded sensors to target low-end and high-end markets. We evaluate the performance of the following four derivation methods: (1) the use of the original orientation sensor (“simple”), (2) derivation from the accelerometer and the magnetometer (called the geomagnetic field sensor in earlier literature) (“acc + mag”), (3) derivation from the rotation vector (“rotation”), and (4) computation by the balance filter (“acc + mag + gyro”). Among them, the balance filter, proposed by Colton [8], uses all primitive motion sensors (accelerometer, magnetometer, and gyroscope). The basic idea of this filter is that it requires less processing power than more complex filtering mechanisms while still removing noise from sensor samples well.
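As an illustration of the acc + mag derivation, the following Python sketch builds an orientation from a gravity vector and a magnetic field vector using the conventional cross-product construction (an East axis from mag × acc, then a North axis from acc × East). It is a sketch under assumptions rather than the implementation evaluated in the paper: the function name, the degenerate-case threshold, and the angle conventions (yaw 0° towards magnetic north, pitch near −90° for an upright device) are choices made for this example.

```python
import math


def orientation_from_acc_mag(acc, mag):
    """Return (yaw, pitch, roll) in degrees from accelerometer and magnetometer.

    acc and mag are (x, y, z) tuples in the device coordinate frame.
    Returns None when the vectors are nearly parallel or the device is in
    free fall, since no heading can be derived then (threshold is assumed).
    """
    ax, ay, az = acc
    ex, ey, ez = mag
    # H = mag x acc points roughly East in device coordinates.
    hx = ey * az - ez * ay
    hy = ez * ax - ex * az
    hz = ex * ay - ey * ax
    h_norm = math.sqrt(hx * hx + hy * hy + hz * hz)
    a_norm = math.sqrt(ax * ax + ay * ay + az * az)
    if h_norm < 1e-6 or a_norm < 1e-6:
        return None
    hx, hy, hz = hx / h_norm, hy / h_norm, hz / h_norm
    ax, ay, az = ax / a_norm, ay / a_norm, az / a_norm
    # The y component of M = acc x H (roughly North) is all yaw needs.
    my = az * hx - ax * hz
    yaw = math.atan2(hy, my)
    pitch = math.asin(max(-1.0, min(1.0, -ay)))  # clamp against rounding
    roll = math.atan2(-ax, az)
    return tuple(math.degrees(v) for v in (yaw, pitch, roll))
```

Because every output depends directly on the instantaneous sensor readings, any high-frequency noise in the accelerometer or magnetometer passes straight into the computed angles.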

The simple method reads data from an orientation sensor, whose use was later deprecated due to its flawed design. The combination of two motion sensors, acc + mag, is the version recommended by Google in their earlier Android SDK, and it was widely used by many earlier Augmented Reality Android app developers as a replacement for the simple method. The rotation vector sensor was introduced in a later Android version (Android platform version 2.3 or above). The gyroscope-assisted version, the balance filter, has been possible since Android version 1.5, but not many Android devices were equipped with a gyroscope until Android version 2.3. We are aware of the newer Android APIs, available from Android version 4.3, that allow users to obtain uncalibrated raw sensor samples. Unfortunately, the majority of our Android dataset, collected over the last two years, does not contain such raw samples, so we limit our preliminary experiments to the calibrated sensor values.
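The balance filter is commonly written as a first-order complementary filter: the gyroscope rate is integrated for short-term accuracy, and the result is blended with a drift-free reference angle. The Python sketch below shows this form for a single angle. The weight `alpha = 0.98`, the function names, and the use of the acc + mag angle as the reference are assumptions for illustration, not parameters reported in the paper. Angle wraparound (for example, yaw crossing ±180°) is deliberately not handled.

```python
def balance_filter_step(prev_angle_deg, gyro_rate_dps, reference_angle_deg,
                        dt_s, alpha=0.98):
    """One update of a first-order complementary ("balance") filter."""
    # Integrating the gyroscope rate is smooth but drifts over time.
    predicted = prev_angle_deg + gyro_rate_dps * dt_s
    # The reference angle (e.g. from acc + mag) is noisy but drift-free.
    return alpha * predicted + (1.0 - alpha) * reference_angle_deg


def balance_filter(samples, dt_s, alpha=0.98):
    """Filter a sequence of (gyro_rate_dps, reference_angle_deg) pairs."""
    if not samples:
        return []
    angle = samples[0][1]  # initialize from the first reference angle
    filtered = []
    for gyro_rate, reference in samples:
        angle = balance_filter_step(angle, gyro_rate, reference, dt_s, alpha)
        filtered.append(angle)
    return filtered
```

Each update costs two multiplications and two additions, which reflects the low processing requirement noted above. A larger `alpha` trusts the gyroscope more and suppresses more of the high-frequency noise in the reference angle.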

So far, we have described our sensor data acquisition methods, the characteristics of the collected samples and their limitations, and our preprocessing before the evaluation. In the following section, we use three performance metrics to answer the questions in Section 1.

3. Results and Discussion

To answer the questions in the introduction, we report our observations on three performance metrics in the first three subsections. In Section 3.1, we show the accuracy and reliability of the four orientation computation methods, to determine which motion sensors are the dominant factors in deciding the correct device orientation. In Section 3.2, we report the results of our simplified device orientation mode categorization for every orientation vector. These results show the quality of our simplified decision model and its accuracy. Where detection fails, we investigate why. In Section 3.3, we show the startup delay for every dataset. This metric may be associated with the behavior observed in many photo-taking mobile applications, and it reveals how long it takes to obtain a convincing orientation mode. In the final subsection, we present our observations on multiple orientation mode changes during a single video shooting, with examples.

3.1. Dominant Sensor Types for Accurate Orientation Decisions

In this experiment, we use the dataset collected from Android devices. The reasoning is that little information is available on iOS sensor acquisition methodologies, and iOS sensor types vary much less than those in Android environments. The analysis of the Android sensor dataset is therefore expected to reveal more interesting observations. As described in Section 2.3, four different orientation vector derivation methodologies are used for the evaluation. Among the orientation values, we chose the yaw value because its correctness is much easier to verify by visual inspection.

Figure 3 depicts three stereotypical matching results of the four different methods. Figure 3(a) shows that four methods will result in the same computation results as long as sensor values are obtained reliably with little noise. Such well-matching results, especially, are commonly observable at mobile devices equipped with a relatively faster processor than cheap mobile phones. It implies that the accuracy and the reliability of sensor acquisitions are quite dependent on the processing power. Since we are using high sampling rate, low-powered processor tends to cause variable sensor acquisition delays and late arrivals due to frequent heavy processing loads. Therefore, it is recommended to avoid any cheap, low-powered processor equipped devices for the sensor acquisition.

Various orientation vector computation results. For a given dataset, orientation angles (yaw value) in degrees (y-axis) computed from different methods are depicted on their elapsed timestamp (x-axis in milliseconds).

Figure 3(b) shows the case where the simple method is a timestamped version of the acc + mag method. In fact, the simple method, being obsolete and outdated, used a lower sampling rate than the acc + mag method, and some device manufacturers seem to have used the results of the acc + mag derivation to support the orientation sensor model. While the simple and the acc + mag methods match well in the figure, the rotation and the balance filter methods also show a similar trend to each other, since the gyroscope compensates for glitches during sample acquisition and reliably produces a low-pass filtering effect. Figure 3(c) reveals that the simple method, if used alone, causes a high bias (more than 10 degrees of difference from the other methods). As Google's deprecation suggests, using the orientation sensor alone is discouraged. The acc + mag and the balance filter methods follow a statistically similar trend, although the acc + mag method showed a lot of noise during collection. One interesting observation from Figure 3(c) is that there is a statistically meaningful disparity between the balance filter and the rotation methods. This offset bias persisted for a long period of time.

Figure 4 shows some errors observed with the rotation vector sensor. Compared with the other sensors, this sensor-fused synthetic sensor is exposed to various kinds of noise and error. In some cases, temporary noise was introduced and the signal then returned to normal (see Figure 4(a)); in others, the error stayed for a long period of time without any recovery (see Figure 4(b)). We also observed the drift effect shown in Figure 4(c). For a phone placed on a flat surface, where the rotation vector should be zero, the reported value may not be near 0, which causes integration error during the computation. Unfortunately, the actual cause of this symptom is still unknown, considering that the rotation vector is a synthetic sensor computed from other real sensors, while the computations from those real sensors agree closely with each other. Such phenomena occurred on many outdated devices. Therefore, we recommend not using the rotation vector for any orientation detection task.

Various error types observed when the rotation vector sensor is used.

In summary, we recommend using the acc + mag and the balance filter among the four methods. Although acc + mag method is exposed to high-frequency noises, its smoothed version, the balance filter, will easily remove such high-frequency noises. In the following subsection, we further investigate whether two chosen methods result in the same degree of accuracy and reliability during the orientation mode decision.

3.2. Accuracy and Reliability of Orientation Mode Decision

Once the orientation vector is computed from the two methods, we apply the straightforward orientation categorization, whose algorithmic description is presented in Table 1. During the orientation mode detection, we observed that there are many orientation vectors that are categorized as “undetermined.” Specifically, 40339 discrete sensor samples out of 441883 gyroscope aligned sensor samples from 857 valid videos (9.1%) could not be determined on their device orientation modes. Such high percentage is understandable since our straightforward decision method is applied in a strict way.

To compensate the strictness, we convert all undetermined periods to the modes that are later determined correctly. For example, if the orientation mode computed from consecutive orientation vectors has the following sequence, {undetermined, undetermined, 0, 0, undetermined, 90}, the sequence will then be remapped to {

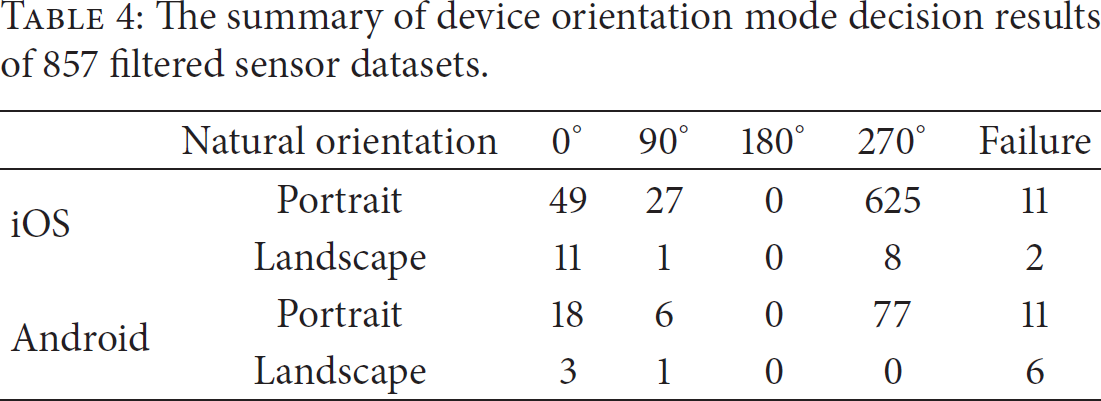
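The remapping of undetermined periods can be sketched as a backward fill over the mode sequence. This is a minimal illustration, assuming that "later determined" means the nearest following determined mode and that `None` stands for the undetermined mode; the function name `fill_undetermined` is hypothetical.

```python
def fill_undetermined(modes):
    """Replace each undetermined entry (None) with the next determined mode.

    Trailing undetermined entries, and sequences that never reach a
    determined mode, are left as None.
    """
    filled = list(modes)
    next_mode = None
    # Walk backwards so each None can take the mode that follows it.
    for i in range(len(filled) - 1, -1, -1):
        if filled[i] is None:
            filled[i] = next_mode
        else:
            next_mode = filled[i]
    return filled
```

A sequence that contains only undetermined values stays unchanged under this remapping, so it can still be recognized as a failed detection afterwards.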

Table 4 shows the overall decision results of the categorization method. In the table, we employ the concept of “natural orientation,” which is proposed in the Android community and in a W3C working draft [5]. The natural orientation indicates whether a device is preferably viewed in portrait mode or in landscape mode. For example, if your device has a small form factor, it is natural to view it in portrait mode, since the portrait mode can show more content than the landscape mode. On the other hand, a large form-factor device such as a tablet may be better viewed in landscape mode, since it is too big to hold with a single hand. Since iOS has no such concept, we consider the natural orientation of all iPad devices to be landscape. For example, portrait 0° mode means that the video is taken with a single hand in portrait mode; portrait 270° mode means that the video is taken with two hands in landscape mode; landscape 270° mode actually corresponds to portrait 0° mode, meaning that the video is taken in normal portrait mode although the device is too big to hold in a single hand.

The summary of device orientation mode decision results of 857 filtered sensor datasets.

Among the 857 test videos, 87.4% were recorded in landscape mode and 9.0% in portrait mode. This observation implies that conventional landscape recording, which has been widely used by professional and nonprofessional video shooters since the camcorder age, is still dominant in the mobile age, although taking a video in portrait mode is very handy nowadays. Still, the non-negligible number of portrait videos indicates that portrait recording is starting to become popular. One interesting observation from Table 4 is that no video was taken fully upside down relative to its natural orientation, that is, in landscape 180° or portrait 180°. We believe the reason is that the recording button ends up in an awkward position when the device is held upside down, which video takers find uncomfortable.

The remaining 30 videos (3.5%) were reported as failed videos. A failed video is one whose orientation mode sequence contains only undetermined values, such as {undetermined, undetermined, undetermined, undetermined}. Among the failed videos, 17 were from Android and 13 were from iOS. Of the 17 failed Android videos, 6 were from the ASUS Transformer, 6 from the Samsung Galaxy Note, and 5 from the Samsung Galaxy S2. Of the 13 failed iOS videos, 2 were from iPad and 11 were from iPhone. For 20 of the 30 failed videos, our manual inspection could not determine the orientation either, since it was difficult to judge it visually from the video content. In 18 of these 20 videos, the device was positioned either in front of a black keyboard or towards a monitor at a slant and appeared to stand still until the end of the video. The remaining 2 videos pointed at a black screen, with some noise audible in the background, and offered no visual clue to their orientation mode. The other 10 videos were truly faulty: they were seemingly ordinary videos taken on a bus, on a subway, or on foot, yet their orientation mode could not be determined.

Finally, we compared the degree of the accuracy and the reliability of the orientation mode decision of the two methods (acc + mag and balance filter). Other than 30 totally failed videos, the rest of the videos matched their orientation decision well with our manual visual observation. The undetermined mode typically happened during transient periods, when orientation mode starts to change, which seems also acceptable to us. The two methods led to the same results, while their decision behaviors are quite different during the video transient periods. Such oddness was due to the different handling of high noises that are frequently observable during the transient time, but the final decision results are quite similar. This observation also reveals that orientation mode detection, actually, does not need gyroscope. The combination of accelerometer and magnetometer is sufficient for the accurate and reliable orientation mode decisions.

It is worth noting that there was a single case of wrong orientation mode detection. As depicted in Figure 5(b), the video actually captured the object (“Singapore Flyer at Marina Bay”) from a person positioned at the bottom left and facing north-east. Due to the incorrect orientation mode detection, the field of view of the video (depicted as a pie slice in the figure) pointed towards north; see Figure 5(a). This happened because the orientation value was near the 45 or −45 degree boundary in this particular situation, where a difference of a single degree can cause a significant orientation error. Although such an error happened only once in our 857 video datasets, it matters to ordinary users. We need a finer-grained algorithm to handle such boundary situations.

A single case of wrong orientation mode detection.

3.3. Startup Latency

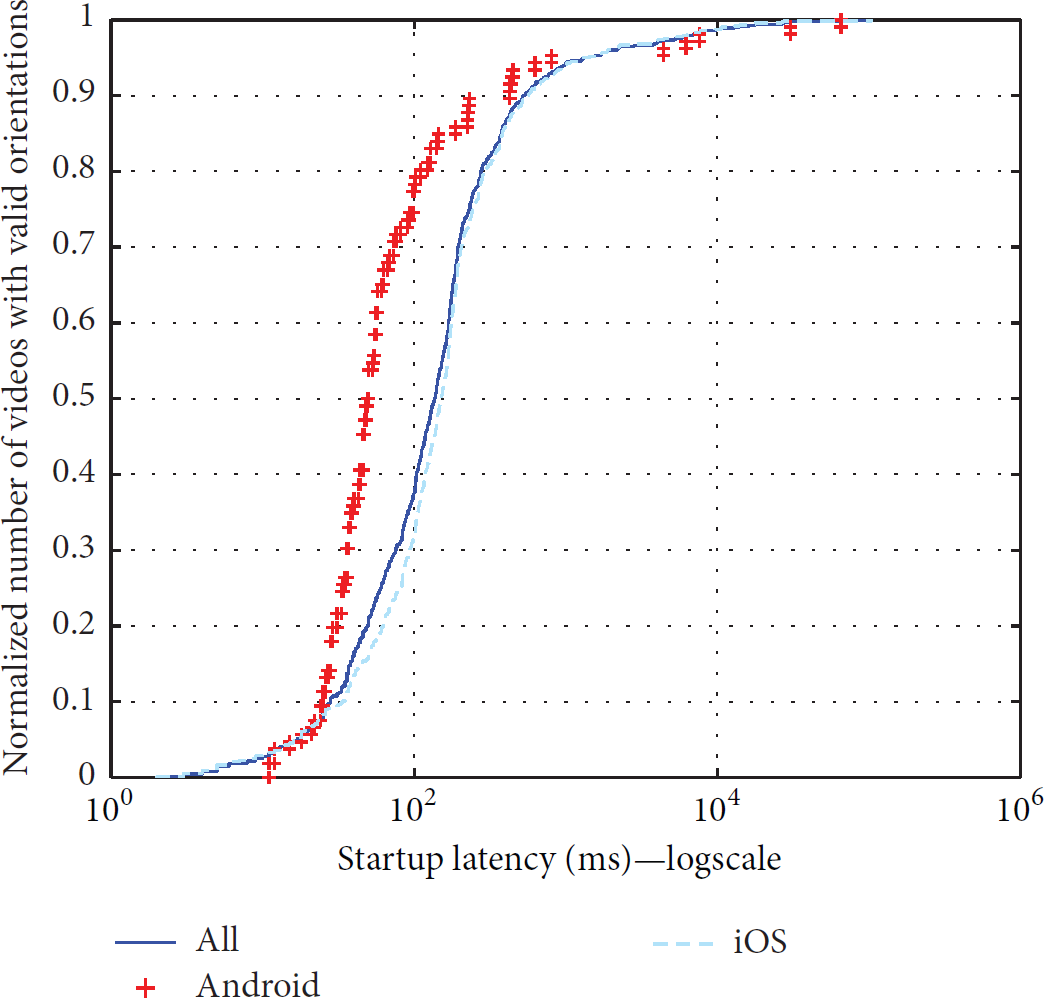

One of the key performance metrics is startup latency. The startup latency of a video, in our context, is the time elapsed from the start of video recording until the first orientation decision is made. In an ideal situation, it should be zero or negative; that is, the device orientation should be known at the moment recording starts or earlier. Otherwise, the device orientation remains undetermined for the duration of the startup latency. The larger this metric, the higher the chance that a user experiences wrongly oriented photos or videos. The startup latency is computed as follows: the sensor datasets of a video are aligned and interpolated over discrete time instants. From the interpolated sensor samples, we compute the orientation vector at every time instant and categorize each orientation vector into one of the orientation modes. In many cases, the orientation mode cannot be identified immediately. Figure 6 depicts the distribution of the startup latency of the 827 videos (excluding the 30 failed videos) whose orientation mode was successfully identified. The median latency is 151 ms for iOS and 49 ms for Android. Although the medians differ by a factor of three, this discrepancy is largely a side effect of the different sampling rates on the two mobile platforms.

The CDF of startup latency from valid mobile videos. The startup latency is expressed in milliseconds.

Typically, most valid videos quickly reached a stable detection state (more than 80% of the videos were determined within 200 milliseconds), while some had an intolerable detection latency. The three outliers depicted in the figure (54 sec, 65 sec, and 159 sec) occurred because the sensor recording started long after the video recording began, which was frequently observed on many earlier Android devices (due to performance glitches) but not on up-to-date phones. Wrongly oriented photos taken with mobile devices may be associated with this headroom time. Although rare, such incorrect image orientation is unavoidable when the orientation decision depends purely on the underlying sensors.
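The startup latency computation described in this subsection can be sketched as follows. The sketch assumes that each video is represented by a list of time instants in milliseconds with one categorized mode per instant, that `None` denotes the undetermined mode, and that the recording start time is known; the function names are hypothetical.

```python
from statistics import median


def startup_latency_ms(timestamps_ms, modes, recording_start_ms):
    """Time from the start of recording to the first determined mode.

    Returns None for a failed video whose modes are all undetermined.
    """
    for t, mode in zip(timestamps_ms, modes):
        if mode is not None:
            return t - recording_start_ms
    return None


def median_latency_ms(latencies):
    """Median over the videos whose orientation mode was identified."""
    valid = [x for x in latencies if x is not None]
    return median(valid) if valid else None
```

Since the first decision can only be made at a sample instant, the measured latency is bounded below by the sampling period, which is consistent with the platform difference noted above.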

3.4. Multiple Orientation Changes

We also observed that 4.6% of the videos (39 out of 857) had multiple orientation changes: 14 from Android and 25 from iOS. The number of orientation switches seems to follow a long-tail distribution: the majority of such videos had at most 4 switches (typically one or two), but a few videos had more than 10.
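Counting the orientation switches of a video can be sketched as below. The paper does not spell out how switches were counted, so this assumes that a switch is any change between two consecutive determined modes and that undetermined entries (`None`) are skipped; the function name is hypothetical.

```python
def count_mode_changes(modes):
    """Count changes between consecutive determined orientation modes."""
    determined = [m for m in modes if m is not None]
    # Compare each determined mode with its successor.
    return sum(1 for a, b in zip(determined, determined[1:]) if a != b)
```

For example, a video whose mode sequence goes from 0° to 90° and back to 0° counts as two switches under this definition.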

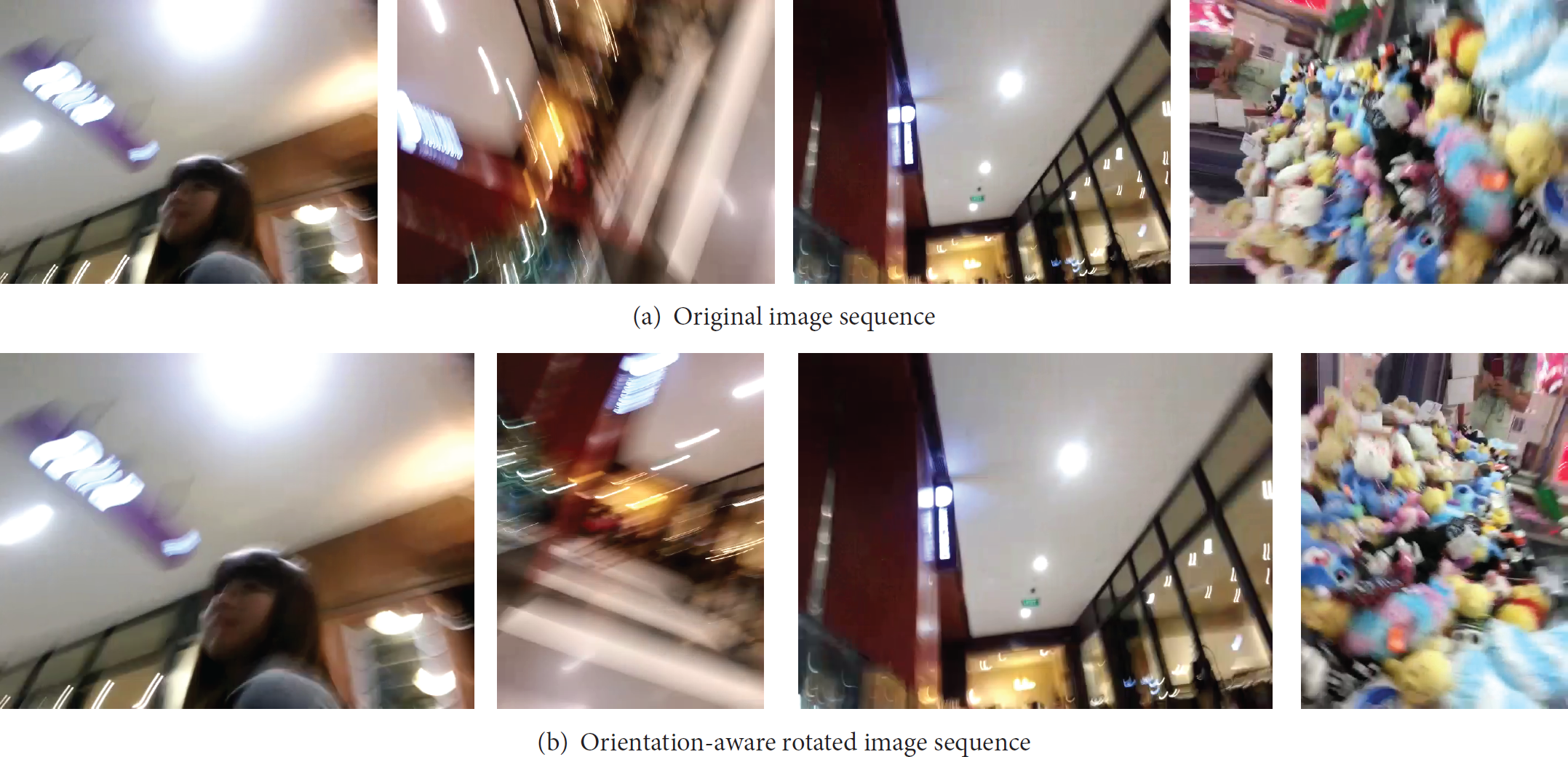

With modern handheld mobile video, such multiple orientation changes are unavoidable. If they are not corrected, the viewer may feel disoriented during playback. As depicted in Figure 7(a), a fixed orientation mode is insufficient for handheld mobile videos. If the orientation change information is available, we have a better chance of watching the video as the shooter intended (see Figure 7(b)). Embedding sensor data in a mobile video can be an immediate solution. We hope that sensor-rich videos can handle such unusual shooting situations well.

Sample screenshot of multiple orientation mode changes during a single video shooting.

4. Conclusions

Modern smart devices are equipped with various sensors, which are extremely useful for motion-based applications. Because such devices are so handy, they have become a necessity in modern society, and their technology has advanced rapidly since their first appearance. Compared with these dramatic changes, however, the mobile video viewing experience still has room for improvement.

In summary, we evaluated the usefulness of motion sensors for orientation mode detection. Using the sensor dataset publicly available from the GeoVid web services, we found that the combination of accelerometer and magnetometer, although these motion sensors are exposed to various random noises, is sufficient for accurate and reliable orientation mode detection. On the other hand, a sensor-fused composite sensor such as the rotation vector sensor is error-prone, so we recommend not using such composite sensors. Identifying a device's orientation mode incurs some initial startup latency. In our experiments, the modes of the majority of mobile videos could be decided within one second. A small number of mobile videos, however, are still exposed to sensor acquisition failures, which eventually hurt users' video watching experience.

As presented in this paper, orientation detection is relatively stable and reliable in spite of the potential presence of various sensor noises. Moreover, we found that a mobile video can no longer be assumed to be stationary; it can be rotated, swirled, or thrown into the sky for vivid presentations. Although the dataset we tested in this paper is relatively small, it hints at the potential of next-generation mobile videos; we expect such videos to be fully annotated with sensor information and, using those sensor measurements, to be capable of regenerating the environment in which the video was taken. Existing MEMS-based sensor acquisition methods are still vulnerable to various environmental noises and intrinsic errors, but they are good enough to estimate the device orientation.

We also showed that there are a number of situations where the device orientation is ambiguous, which remains a challenging problem. To better understand the environment, we may need more contextual information; for example, a front-facing camera may help detect the user's eye gaze, allowing the system to infer the preferred orientation. We will continue to examine other videos taken on different mobile platforms and also plan to investigate the robustness of mobile videos recorded with only a partial set of sensors.

Footnotes

Conflict of Interests

The authors have read and understood the policy on declaration of interests and declare that they have no conflict of interests.

Acknowledgment

This work was supported by the Hongik University New Faculty Research Support Fund.