Abstract

To meet the practical requirements of Cognitive Wireless Sensor Network applications, this paper proposes an innovative fast channel selection algorithm. It addresses three shortcomings of the original Experience-Weighted Attraction (EWA) algorithm: high complexity, high energy consumption, and the limited real-time data processing capability of node hardware. The algorithm is evaluated by comparing its channel selection behavior and timeliness with those of traditional EWA learning and Q learning. Although it is not as stable as traditional EWA learning, the fast channel selection algorithm effectively reduces the complexity of the original algorithm and outperforms Q learning.

1. Introduction

Traditionally, licensed radio spectrum allocations are regulated by official authorities. In the USA, federal government use of radio spectrum is managed by the National Telecommunications and Information Administration (NTIA), while the Federal Communications Commission (FCC) is in charge of non-federal, including commercial, radio resources. With the proliferation of wireless devices, rapidly increasing demand for spectrum licenses has led to the current shortage of available spectrum and placed a heavy burden on its regulators. In fact, recent FCC studies have shown that many statically allocated frequency channels are idle most of the time; spectrum is underutilized in both time and geography. By exploiting "spectrum holes" (unused frequency channels), Cognitive Radio (CR) can greatly improve spectrum efficiency and address these problems through "secondary utilization", that is, access with lower priority than legacy users. First introduced by Mitola III [1], CR is often considered an extension of Soft Radio (SR), which is built on general-purpose hardware and can be programmed to transmit and receive a variety of radio waveforms.

There has already been a great deal of research on many aspects of CR. In spectrum sensing, Panahi and Ohtsuki [2] presented a Fuzzy Q Learning (FQL) based scheme for channel sensing in CR networks. Zhang et al. [3] proposed a detection algorithm that uses the fractal box dimension when the Signal-to-Noise Ratio (SNR) is high and an improved TCC algorithm when the SNR is low, and Khalaf [4] formulated the detection problem using the eigendecomposition technique. Hossain et al. [5] evaluated the performance of cooperative spectrum sensing with the OR, AND, and MAJORITY hard-combination rules. Bkassiny et al. [6] presented an autonomous CR architecture, referred to as the Radiobot, that detects and identifies sensed signals. Lunden et al. [7] proposed distributed multiuser multiband spectrum sensing policies for CR networks based on multiagent reinforcement learning, and a Reinforcement Learning-Based Cooperative Sensing (RLCS) method was proposed to address the cooperation overhead problem and improve cooperative gain in CR ad hoc networks [8]. In channel allocation, Gállego et al. [9] presented a game-theoretic solution for joint channel allocation and power control in CR networks, analyzed under the physical interference model. In channel access, Teng et al. [10] demonstrated a reinforcement learning-based double auction algorithm that aims to improve the performance of dynamic spectrum access in CR networks. In security, Wang et al. [11] proposed a Four-Dimensional Continuous Time Markov Chain model to analyze the communication performance of normal Secondary Users under Primary User Emulation Attacks (PUEAs), typically launched by SMUs, and compared several PUEA detection schemes.

As a revolutionary development of Intelligent Radio (IR), CR extends Soft Radio with a Knowledge Base, a Reasoning Engine, and a Learning Engine, which together form an independent Cognitive Engine (CE) that enables the radio to learn about and adapt to its surrounding radio environment [12]. The Knowledge Base, which stores a variety of cases, relations, and rules, plays the role of memory in the human brain and is common in Artificial Intelligence (AI) logic planning. Like an expert system in AI, the Reasoning Engine applies logical inference to incoming state information with reference to the Knowledge Base and then generates results or actions that drive the Soft Radio to change its parameter settings as the environment changes. As the core component and key feature of a CR implementation, the Learning Engine keeps the Knowledge Base up to date by turning newly accumulated environmental experience into new knowledge; this is what distinguishes CR from traditional preprogrammed radios.

A variety of learning algorithms are available for CR, including neural networks, genetic models, and hidden Markov models [13]. Bkassiny et al. characterized the learning problem in CR, stated the importance of Artificial Intelligence in achieving truly cognitive communication systems [14], and proposed a Bayesian nonparametric signal classification approach for spectrum sensing in CR [15]. Bizhani and Ghasemi [16] used Multiresponse Learning Automata (MRLA) to control how Secondary Users access the licensed primary channels in CR networks. Tsagkaris et al. [17] used neural network-based learning to predict the data bit rate of CR. Galindo-Serrano and Giupponi [18] proposed a form of real-time decentralized Q learning to manage the aggregated interference generated by multiple WRAN systems. Li [19] applied Multiagent Reinforcement Learning (MARL) so that Secondary Users learn good channel selection strategies. Chen et al. [20] presented an intelligent policy based on reinforcement learning to learn the stochastic behavior of Primary Users (PUs). Zhang and Liu [21] used a Reinforcement Learning (RL) algorithm to learn environment performance iteratively and online, given the variability and uncertainty of heterogeneous wireless networks. Gállego et al. [9] provided no-regret learning algorithms that perform joint channel and power allocation and overcome the convergence limitations of the local game. Zhu et al. [22] employed an RL approach to find a near-optimal policy in an unknown environment. Torkestani and Meybodi [23] proposed learning automata-based CR to address spectrum scarcity in wireless ad hoc networks. Yang and Grace [24] improved channel assignment in multicast terrestrial communication systems with distributed channel occupancy detection by using reinforcement learning-based intelligence and transmitter power adjustment. Zhou et al. [25] designed a robust distributed power control algorithm with low implementation complexity for CR networks through reinforcement learning; it requires no information about the interference channels or power strategies among Secondary Users (SUs) or between SUs and PUs.

However, to the best of our knowledge, little attention has been paid to implementing the Learning Engine of CR with Experience-Weighted Attraction (EWA) algorithms. Our channel selection algorithm based on EWA learning [26, 27] allows a cognitive radio to learn the characteristics of the communication channels in its radio environment online. By accumulating channel history, it can predict, select, and switch to the currently optimal communication channel, dynamically maintain the quality of communication links, and ultimately reduce the system's communication outage probability. The effectiveness of this algorithm has been validated with a simple probability method [26] and with a handoff scheme [27] in our preliminary studies. However, because of the original EWA algorithm's high complexity and energy consumption, it is not suitable for the processing- and power-constrained nodes of Wireless Sensor Networks (WSNs). Building on our earlier research, we have therefore shifted our focus to EWAS, a fast channel selection algorithm with low complexity and low energy consumption. The rest of this paper is organized as follows. Section 2 introduces the EWAS algorithm in detail, Section 3 presents and analyzes the simulation results, and Section 4 concludes the paper.

2. Fast Cognitive Channel Selection Model

In radio channel selection, different wireless channels generally have different availabilities; that is, the idle probabilities α of different channels are not the same for a CR. Assuming the radio propagation environment can be divided into $n$ channels, each channel $i$ ($i = 1, 2, \ldots, n$) has its own idle probability $\alpha_i$.
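To make the channel model concrete, the following Python sketch draws one round of channel states and estimates each channel's idle rate from many rounds. It assumes that each channel is idle with its own probability independently of the other channels and of earlier rounds; that independence assumption, the function names, and the example probabilities are illustrative and not taken from the paper, whose own random-number procedure may differ.

```python
import random


def sample_availability(alphas, rng):
    """Return one round of channel states: True means channel i is idle.

    Assumption: channel i is idle with probability alphas[i], independently
    of the other channels and of previous rounds.
    """
    return [rng.random() < a for a in alphas]


def empirical_idle_rates(alphas, rounds, seed=0):
    """Estimate each channel's idle probability from simulated rounds."""
    rng = random.Random(seed)
    counts = [0] * len(alphas)
    for _ in range(rounds):
        for i, idle in enumerate(sample_availability(alphas, rng)):
            counts[i] += idle  # True counts as 1
    return [c / rounds for c in counts]


if __name__ == "__main__":
    # Hypothetical idle probabilities for five channels (not the paper's values).
    alphas = [0.3, 0.6, 0.5, 0.7, 0.4]
    print(empirical_idle_rates(alphas, rounds=10_000))
```

The estimated rates should approach the assumed probabilities as the number of rounds grows.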

To reduce the complexity of the channel selection strategy based on EWA learning, the exponential operations should be avoided first. Next, the fast algorithm should simplify the calculation procedure and, ideally, optimize and update the objective function directly. This paper therefore performs the iterative update directly on the channel selection probabilities and proposes a fast, simplified cognitive channel selection algorithm, EWAS.
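For context, the exponential operations mentioned above come from the logit (softmax) choice rule of the standard EWA model of Camerer and Ho. There, each strategy $j$ carries an attraction $A_j$, and an experience weight $N$ is shared: $N(t) = \rho N(t-1) + 1$, $A_j(t) = [\phi N(t-1) A_j(t-1) + (\delta + (1-\delta) I_j(t)) \pi_j(t)] / N(t)$, and $P_j(t+1) = e^{\lambda A_j(t)} / \sum_k e^{\lambda A_k(t)}$. Here $I_j(t)$ is 1 if $j$ was chosen and 0 otherwise, and $\pi_j(t)$ is the payoff $j$ gave or would have given. The Python sketch below implements these textbook equations. It is not necessarily the exact EWA formulation used in [26, 27], and the parameter values and the 0/1 payoff mapping (1 if the channel was idle) are illustrative assumptions.

```python
import math


def ewa_update(attractions, experience, chosen, payoffs,
               phi=0.9, delta=0.5, rho=0.9):
    """One step of the standard (Camerer-Ho) EWA attraction update.

    attractions: A_j(t-1) for every channel j
    experience:  N(t-1)
    chosen:      index of the channel actually used this round
    payoffs:     pi_j(t), the payoff each channel gave or would have given
    Returns (A(t), N(t)).
    """
    new_experience = rho * experience + 1.0
    new_attractions = []
    for j, (a, pi) in enumerate(zip(attractions, payoffs)):
        # Realized payoffs get weight 1, foregone payoffs get weight delta.
        weight = 1.0 if j == chosen else delta
        new_attractions.append((phi * experience * a + weight * pi) / new_experience)
    return new_attractions, new_experience


def logit_probabilities(attractions, lam=2.0):
    """Logit choice rule; these exponentials are what EWAS is designed to avoid."""
    m = max(attractions)  # shift by the maximum for numerical stability
    exps = [math.exp(lam * (a - m)) for a in attractions]
    total = sum(exps)
    return [e / total for e in exps]


if __name__ == "__main__":
    A, N = [0.0] * 5, 0.0
    # Hypothetical round: channel index 1 chosen; indices 1 and 3 were idle.
    A, N = ewa_update(A, N, chosen=1, payoffs=[0, 1, 0, 1, 0])
    print(A, N)
    print(logit_probabilities(A))
```

Every round costs one exponential per channel plus a normalization over all channels, which is the overhead the fast algorithm aims to remove.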

Define the probability of selecting channel j under the preferred-channel selection policy

Parameters σ and τ are attenuation coefficients of probability and

These available channels are the candidate channels for CR channel selection, and the candidate channel with the highest selection probability is chosen for transmission (if more than one channel reaches the highest selection probability, one of them is selected at random). After successful transmission, the selection probability of this channel will go up to
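The candidate-selection rule described above (restrict the choice to available channels, take the highest selection probability, break ties at random) can be sketched in Python as follows. The function name `select_channel` is hypothetical. The probability update that follows a transmission is deliberately left out because its exact form, governed by σ and τ, is not reproduced here. Returning 0 when no channel is available mirrors the convention used in Section 3, where channel 0 denotes full blocking.

```python
import random


def select_channel(probs, available, rng):
    """Choose a transmission channel among the currently available ones.

    probs:     per-channel selection probabilities (index 0 = channel 1);
               these are per-channel scores and need not sum to 1
    available: per-channel booleans from spectrum sensing
    Returns a 1-based channel number, or 0 if every channel is busy.
    """
    candidates = [i for i, idle in enumerate(available) if idle]
    if not candidates:
        return 0  # full channel blocking
    best = max(probs[i] for i in candidates)
    # If several candidates share the highest probability, pick one at random.
    ties = [i for i in candidates if probs[i] == best]
    return rng.choice(ties) + 1


if __name__ == "__main__":
    rng = random.Random(42)
    probs = [0.20, 0.87, 0.35, 0.60, 0.20]  # hypothetical values
    print(select_channel(probs, [True, False, True, True, True], rng))  # prints 4
    print(select_channel(probs, [False] * 5, rng))                      # prints 0
```

In the first call, channel 2 has the highest probability but is busy, so the rule falls back to channel 4, the best of the remaining candidates. A single pass over the channels suffices, with no exponentials or normalization.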

At this point, it can be seen that the complexity of the EWAS fast channel selection algorithm is

3. Results and Discussion

Assume the number of channels in the simulation environment is 5, or

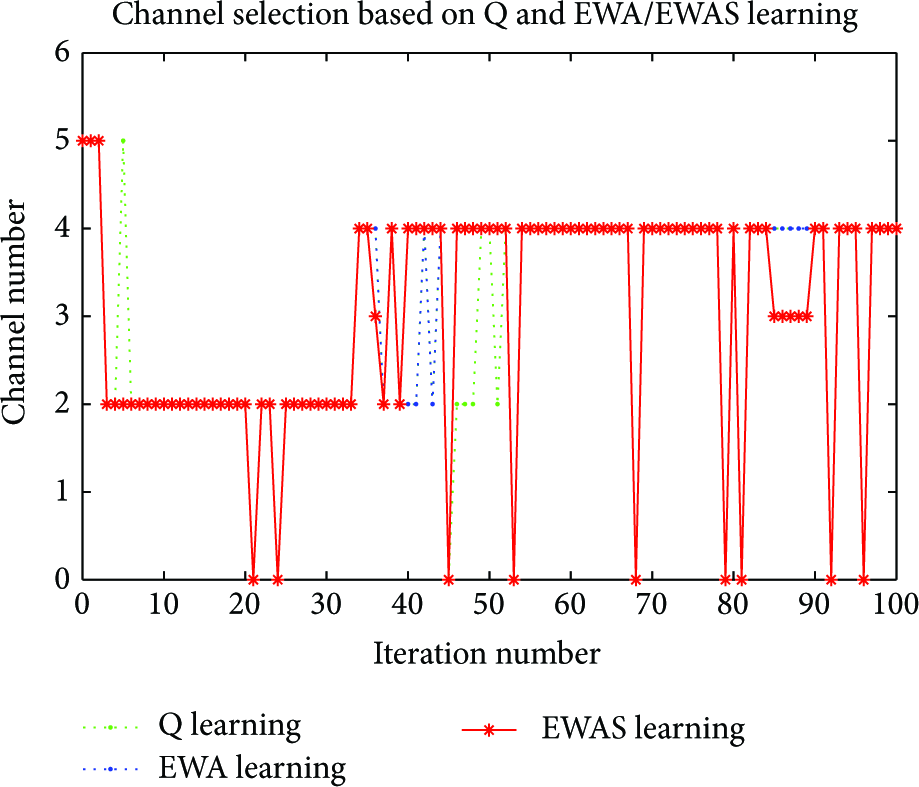

In this paper, a simple repeated-trial method is applied to verify the effectiveness of the channel selection algorithm based on EWA learning. That is, in the Turn-Based Strategy (TBS), a single uniformly distributed random number within range

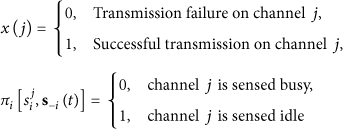

With the parameters above, the recorded channel selection probabilities based on EWA learning are shown in Figure 1.

Channel selection probability based on EWA learning.

In Figure 1, starting from identical initial channel selection probabilities, the EWAS learning algorithm randomly selects channel 5 as the access channel. After a short initialization process, EWAS successfully tracks and locks onto channel 2 as its preferred channel, whose selection probability then fluctuates slightly around 0.87. Because the channel availability probabilities change after the 36th round, the selection probability of channel 2 falls dramatically while that of channel 4 increases, steadily overtaking channel 2 after 40 rounds. Channel 4 eventually replaces channel 2 as the optimal access channel under the new channel availability conditions.

To highlight the advantage of channel selection based on EWA learning over traditional radios with a fixed transmission channel, two counts are collected over 100 rounds: the number of rounds in which the channel could be accessed and the number of successfully completed transmissions. These counts are collected for five strategies: fixed channel 2, fixed channel 4, and channel selection based on Q learning, EWA learning, and EWAS learning. The statistics are compared in Figure 2.

Comparison between EWA/EWAS learning and reference ploys.

With fixed channel 2 as the transmission channel, the channel could be accessed in 63 rounds and 54 transmissions were completed successfully. With fixed channel 4, the channel could be accessed in 74 rounds and 65 transmissions were completed successfully. With channel selection based on Q, EWA, and EWAS learning, a channel could be accessed in all 100 rounds with no blocking, and the numbers of successfully completed transmissions were 80 for Q learning, 81 for EWAS, and 82 for EWA learning. The probability of successful transmission with channel selection based on EWAS learning is therefore 81%, much higher than that of fixed channel 2 (54%) or fixed channel 4 (65%). This comparison shows that channel selection based on EWAS learning can greatly improve the probabilities of successful channel access and transmission completion, performing nearly the same as Q (80%) and EWA (82%) learning. Its advantage over Q learning is reflected more clearly in the comparison of real-time channel selection tracks below.
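The percentages above follow directly from the reported counts. The Python sketch below recomputes them. It also reports a second ratio, successes per accessible round, which the text does not state; this ratio is included only to separate access failures from transmission failures. For the learning-based strategies, the two ratios coincide because the text reports 100 accessible rounds for them.

```python
# Counts reported in the text for 100 rounds: (rounds accessible, transmissions completed).
ROUNDS = 100
RESULTS = {
    "fixed channel 2": (63, 54),
    "fixed channel 4": (74, 65),
    "Q learning": (100, 80),
    "EWAS learning": (100, 81),
    "EWA learning": (100, 82),
}


def rates(accessible, completed, rounds=ROUNDS):
    """Return (overall success rate, success rate given the channel was accessible)."""
    return completed / rounds, completed / accessible


if __name__ == "__main__":
    for name, (accessible, completed) in RESULTS.items():
        overall, conditional = rates(accessible, completed)
        print(f"{name:16s} overall {overall:.0%}  given access {conditional:.1%}")
```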

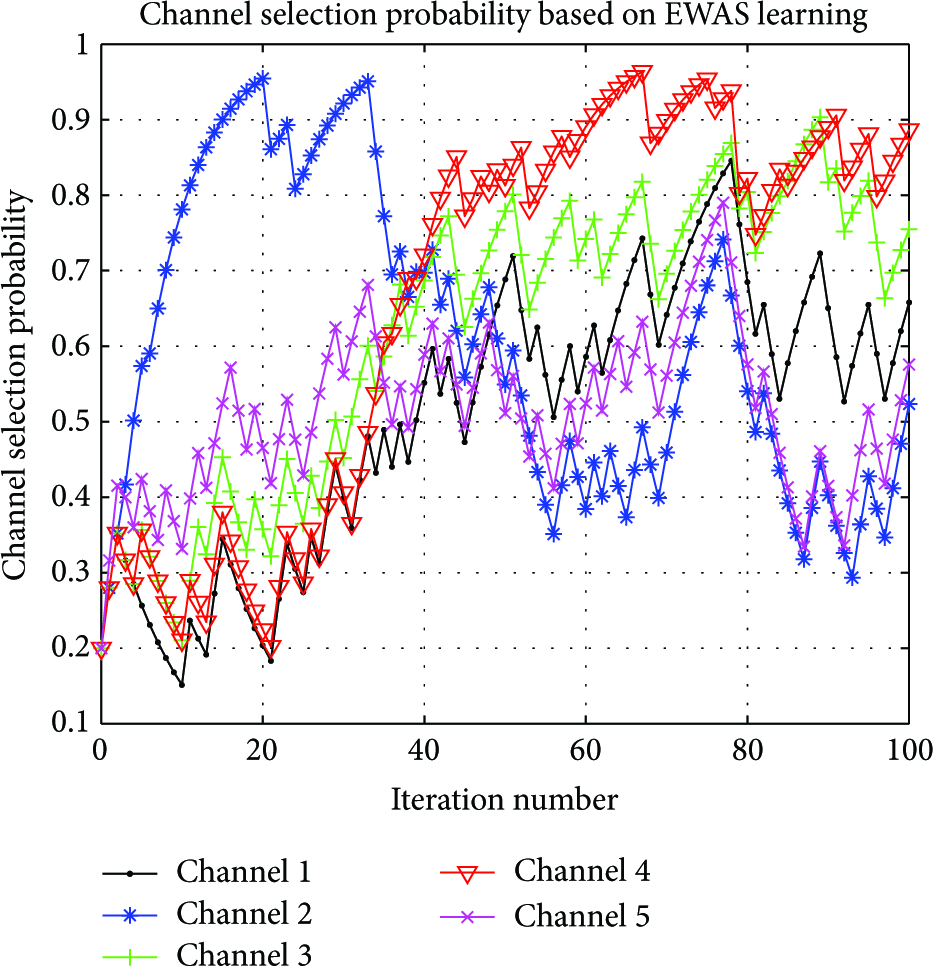

To highlight the advantage of channel selection based on EWAS learning over Q learning, the channel selection tracks of both learning algorithms are recorded under the same initial states and radio environments. The results are illustrated in Figure 3.
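The paper does not specify the configuration of its Q-learning baseline. As an assumption-laden illustration only, a common minimal form for single-state channel selection keeps one value $Q_j$ per channel, chooses a channel ε-greedily, and updates $Q_j \leftarrow Q_j + \eta (r - Q_j)$. The reward is $r = 1$ if the chosen channel turns out to be idle and $r = 0$ otherwise. The learning rate η, the exploration rate ε, the reward definition, the idle probabilities, and the function names are all hypothetical. Unlike the EWAS rule, this sketch chooses before observing which channels are available.

```python
import random


def q_select(q, epsilon, rng):
    """Epsilon-greedy choice over all channels; returns a 0-based index."""
    if rng.random() < epsilon:
        return rng.randrange(len(q))  # explore a random channel
    best = max(q)
    # Exploit: break ties among the best-valued channels at random.
    return rng.choice([i for i, v in enumerate(q) if v == best])


def q_update(q, channel, reward, eta):
    """Stateless Q-learning step: move Q[channel] toward the observed reward."""
    q[channel] += eta * (reward - q[channel])


if __name__ == "__main__":
    rng = random.Random(7)
    alphas = [0.3, 0.8, 0.5, 0.4, 0.2]  # hypothetical idle probabilities
    q = [0.0] * len(alphas)
    successes = 0
    for _ in range(1000):
        ch = q_select(q, epsilon=0.1, rng=rng)
        # Hypothetical reward: 1 if the chosen channel happens to be idle.
        reward = 1.0 if rng.random() < alphas[ch] else 0.0
        q_update(q, ch, reward, eta=0.1)
        successes += int(reward)
    print(successes, [round(v, 2) for v in q])
```

With a constant η, each $Q_j$ behaves like an exponential moving average of recent rewards on that channel, so it can follow changes in channel availability at the cost of some noise.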

The tracks of channel selection based on EWA learning and Q learning.

Note that channel number 0 indicates full channel blocking, meaning that all channels are busy and none is available for communication, as happens in the 21st round. The differences between the EWAS and EWA learning algorithms lie mainly in the transition period (36th–45th round), when the selection switches from the previously selected channel 2 to the new optimal channel 4. However, the sudden channel change made by the fast algorithm in the 86th round is of particular interest. To analyze the cause of this behavior, the channel selection probabilities recorded after each round are shown in Tables 1 and 2.

The probability table of channel selection based on EWAS learning.

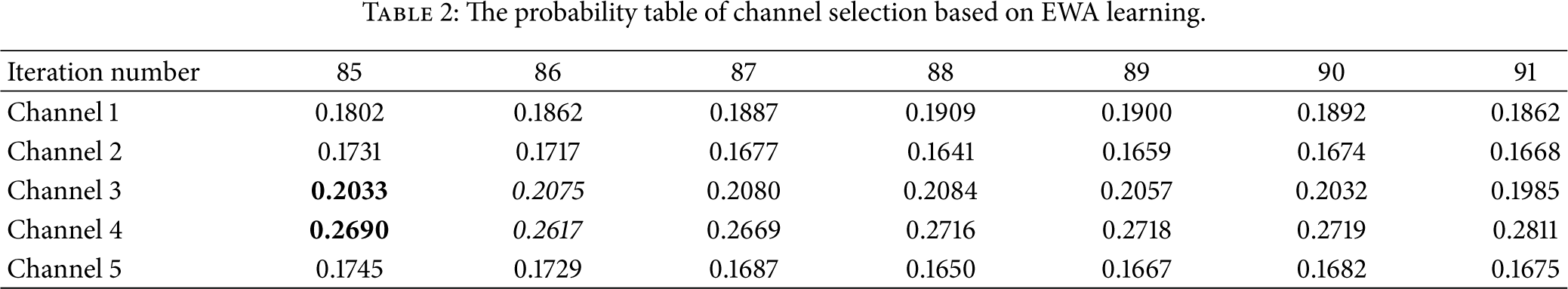

The probability table of channel selection based on EWA learning.

Table 1 records the channel selection probabilities calculated by the EWAS algorithm after each round, while Table 2 presents those based on EWA learning. Table 1 shows that the selection probabilities of channels 3 and 4 are very close to each other from the 85th to the 91st round. After the transmission on preferred channel 4 fails in the 85th round, the selection probability of channel 4 falls from 0.8341 to 0.8131, while that of channel 3 increases from 0.7985 to 0.8187. Channel 3 thus narrowly overtakes channel 4 and becomes the new transmission channel selected by the EWAS fast algorithm. Although the probabilities computed by EWA learning follow the same trend, the selection probability of channel 4 is still the largest of all in the 86th round, so channel 4 remains the optimal transmission channel. In summary, channel selection based on the EWAS fast algorithm matches EWA in quickly tracking, locking onto, and switching to the currently optimal channel in a changing communication environment. It is also superior to the Q learning algorithm, although it is not as stable as the original EWA algorithm.
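The switch in the 86th round can be checked directly from the Table 1 values quoted above. Ranking the two competing channels before and after the update shows the preference flipping from channel 4 to channel 3 by a margin of only about 0.0056. The sketch assumes, as the text implies, that both channels are available in that round and that the remaining channels have lower selection probabilities.

```python
# EWAS selection probabilities of the two competing channels, as quoted from Table 1.
before_update = {3: 0.7985, 4: 0.8341}  # before the failed transmission in round 85
after_update = {3: 0.8187, 4: 0.8131}   # after it, used for the selection in round 86


def preferred(probs):
    """Channel with the highest selection probability."""
    return max(probs, key=probs.get)


print(preferred(before_update))  # 4
print(preferred(after_update))   # 3
print(round(after_update[3] - after_update[4], 4))  # 0.0056
```

A margin this small means that a single failed transmission is enough to reorder the two channels, which is consistent with the slightly lower stability of EWAS relative to EWA described above.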

4. Conclusion

In this paper, an innovative fast channel selection algorithm, EWAS, is proposed to meet the practical requirements of Cognitive Wireless Sensor Network (CWSN) applications. It addresses three shortcomings of the original EWA algorithm: high complexity, high energy consumption, and the limited real-time data processing capability of node hardware. The algorithm is evaluated by comparing its channel selection behavior and timeliness with those of traditional Q learning and the EWA algorithm. Although it is not as stable as EWA learning, EWAS effectively reduces the complexity of the original algorithm and outperforms Q learning. However, EWAS performs only passive channel detection and access; future research will focus on active channel state prediction and reallocation in Wireless Sensor Network (WSN) applications.

Footnotes

Conflict of Interests

The authors declare that there is no conflict of interests regarding the publication of this paper.

Acknowledgment

This work was supported by the National High Technology Research and Development Program of China (863 Program) (no. 2012AA062103).